

Cassandra: The Definitive Guide

Eben Hewitt

Beijing • Cambridge • Farnham • Köln • Sebastopol • Tokyo

Cassandra: The Definitive Guide

by Eben Hewitt

Copyright © 2011 Eben Hewitt. All rights reserved.

Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles (http://my.safaribooksonline.com). For more information, contact our corporate/institutional sales department: (800) 998-9938 or corporate@oreilly.com.

Editor: Mike Loukides

Production Editor: Holly Bauer

Copyeditor: Genevieve d’Entremont

Proofreader: Emily Quill

Indexer: Ellen Troutman Zaig

Cover Designer: Karen Montgomery

Interior Designer: David Futato

Illustrator: Robert Romano

Printing History:

November 2010: First Edition.

Nutshell Handbook, the Nutshell Handbook logo, and the O’Reilly logo are registered trademarks of O’Reilly Media, Inc. Cassandra: The Definitive Guide, the image of a Paradise flycatcher, and related trade dress are trademarks of O’Reilly Media, Inc.

Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in this book, and O’Reilly Media, Inc. was aware of a trademark claim, the designations have been printed in caps or initial caps.

While every precaution has been taken in the preparation of this book, the publisher and author assume no responsibility for errors or omissions, or for damages resulting from the use of the information contained herein.

This book uses RepKover™, a durable and flexible lay-flat binding.

ISBN: 978-1-449-39041-9

[M]

1289577822

This book is dedicated to my sweetheart,

Alison Brown. I can hear the sound of violins,

long before it begins.

Table of Contents

Foreword

Preface

1. Introducing Cassandra ................................................... 1

What’s Wrong with Relational Databases? 1

A Quick Review of Relational Databases 6

RDBMS: The Awesome and the Not-So-Much 6

Web Scale 12

The Cassandra Elevator Pitch 14

Cassandra in 50 Words or Less 14

Distributed and Decentralized 14

Elastic Scalability 16

High Availability and Fault Tolerance 16

Tuneable Consistency 17

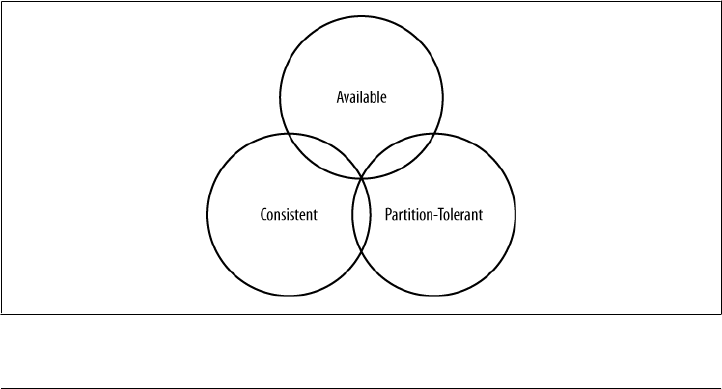

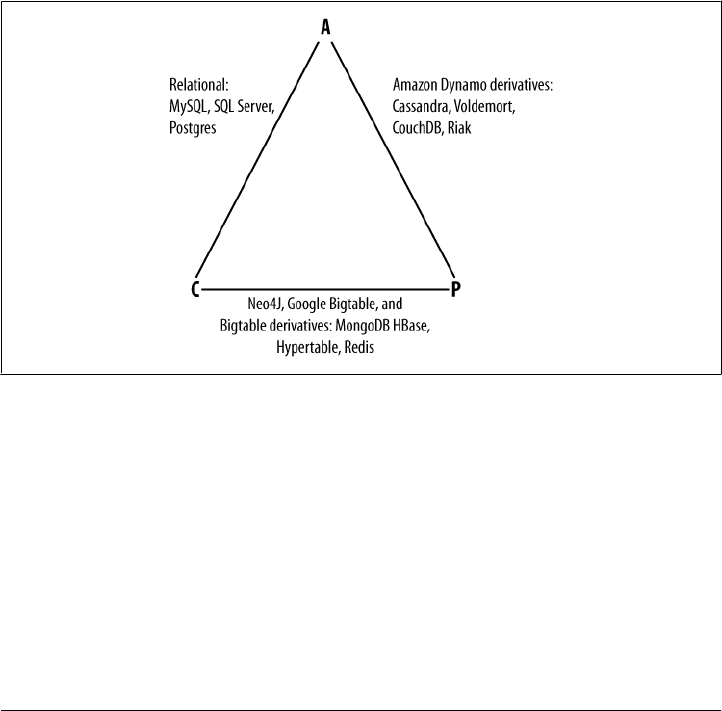

Brewer’s CAP Theorem 19

Row-Oriented 23

Schema-Free 24

High Performance 24

Where Did Cassandra Come From? 24

Use Cases for Cassandra 25

Large Deployments 25

Lots of Writes, Statistics, and Analysis 26

Geographical Distribution 26

Evolving Applications 26

Who Is Using Cassandra? 26

Summary 28

2. Installing Cassandra

- Installing the Binary
- Extracting the Download
- What’s In There?
- Building from Source
- Additional Build Targets
- Building with Maven
- Running Cassandra
- On Windows
- On Linux
- Starting the Server
- Running the Command-Line Client Interface
- Basic CLI Commands
- Help
- Connecting to a Server
- Describing the Environment
- Creating a Keyspace and Column Family
- Writing and Reading Data
- Summary

3. The Cassandra Data Model . . ............................................. 41

The Relational Data Model 41

A Simple Introduction 42

Clusters 45

Keyspaces 46

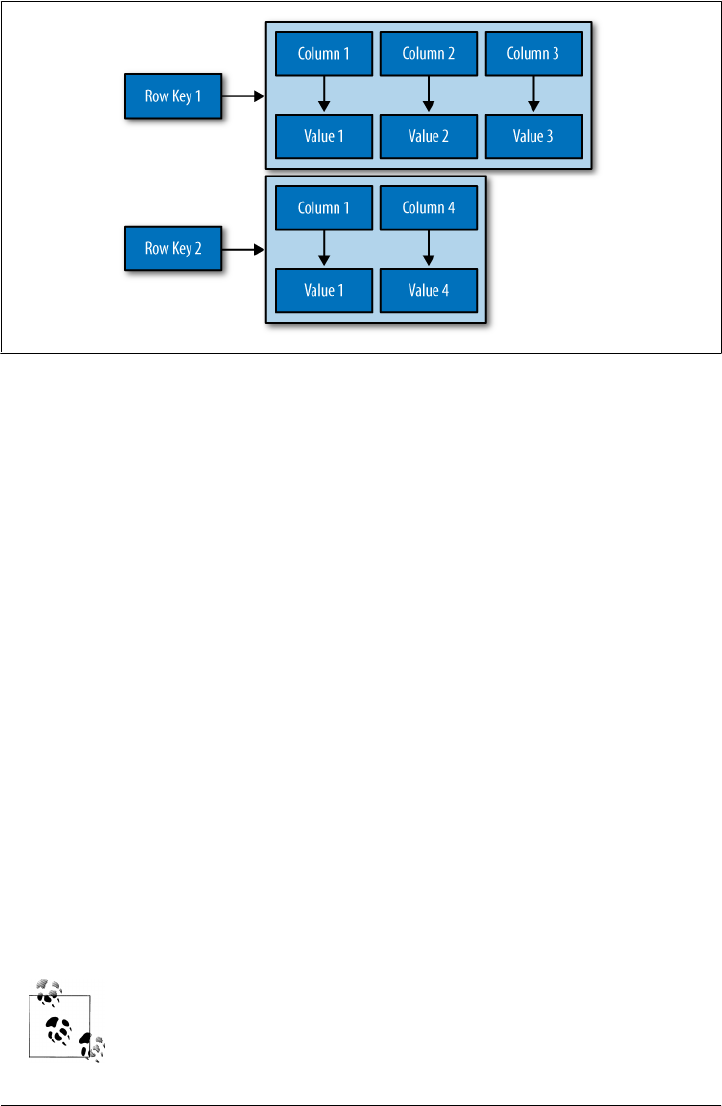

Column Families 47

Column Family Options 49

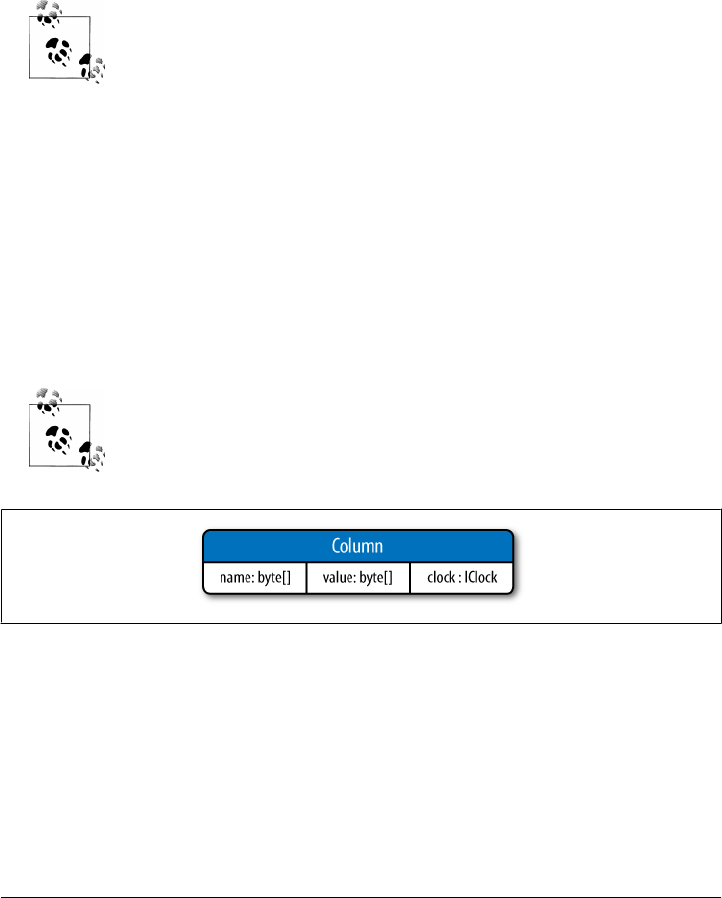

Columns 49

Wide Rows, Skinny Rows 51

Column Sorting 52

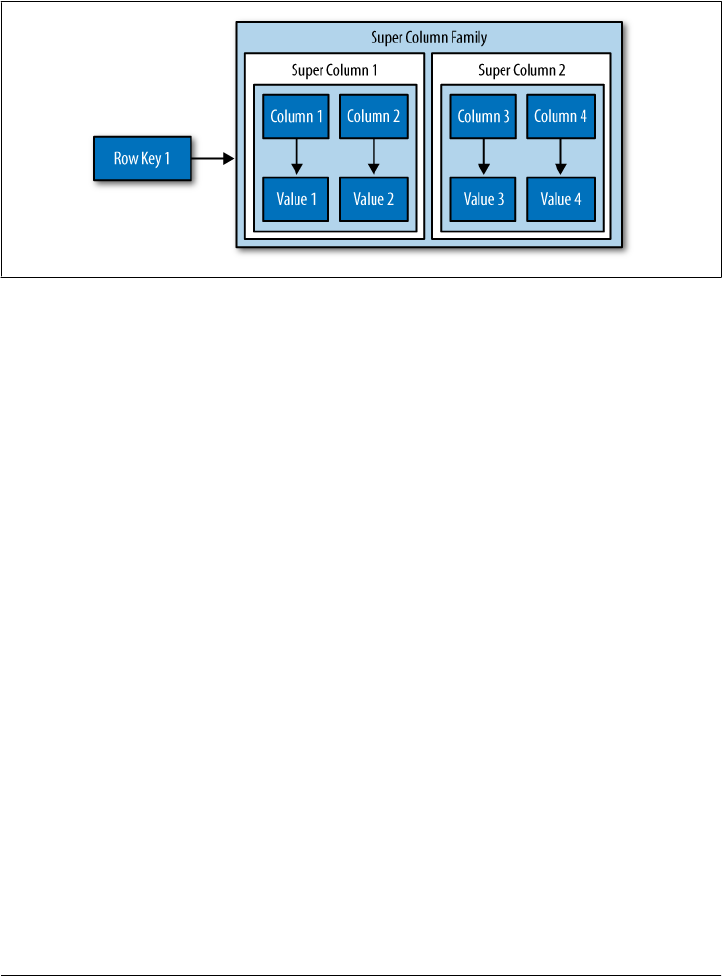

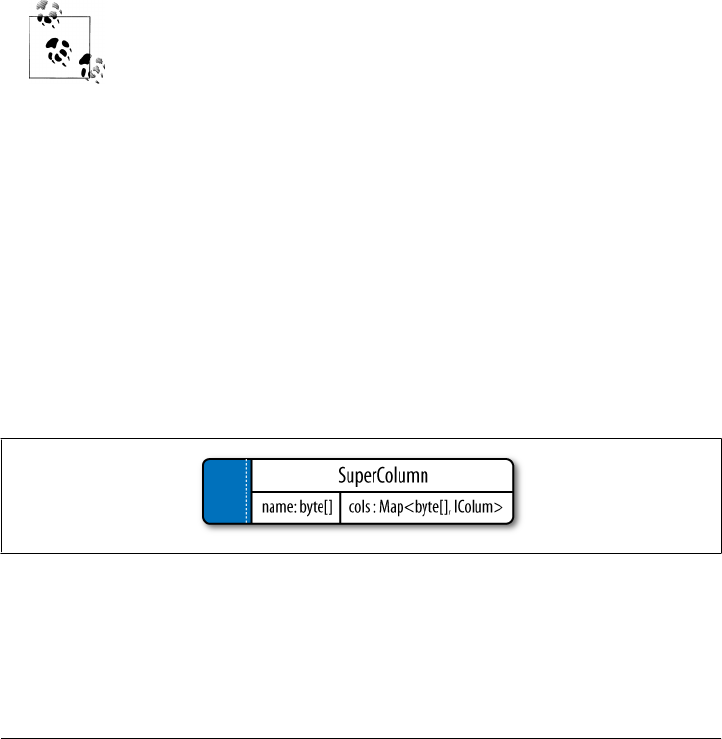

Super Columns 53

Composite Keys 55

Design Differences Between RDBMS and Cassandra 56

No Query Language 56

No Referential Integrity 56

Secondary Indexes 56

Sorting Is a Design Decision 57

Denormalization 57

Design Patterns 58

Materialized View 59

Valueless Column 59

Aggregate Key 59

Some Things to Keep in Mind 60

Summary 60

viii | Table of Contents

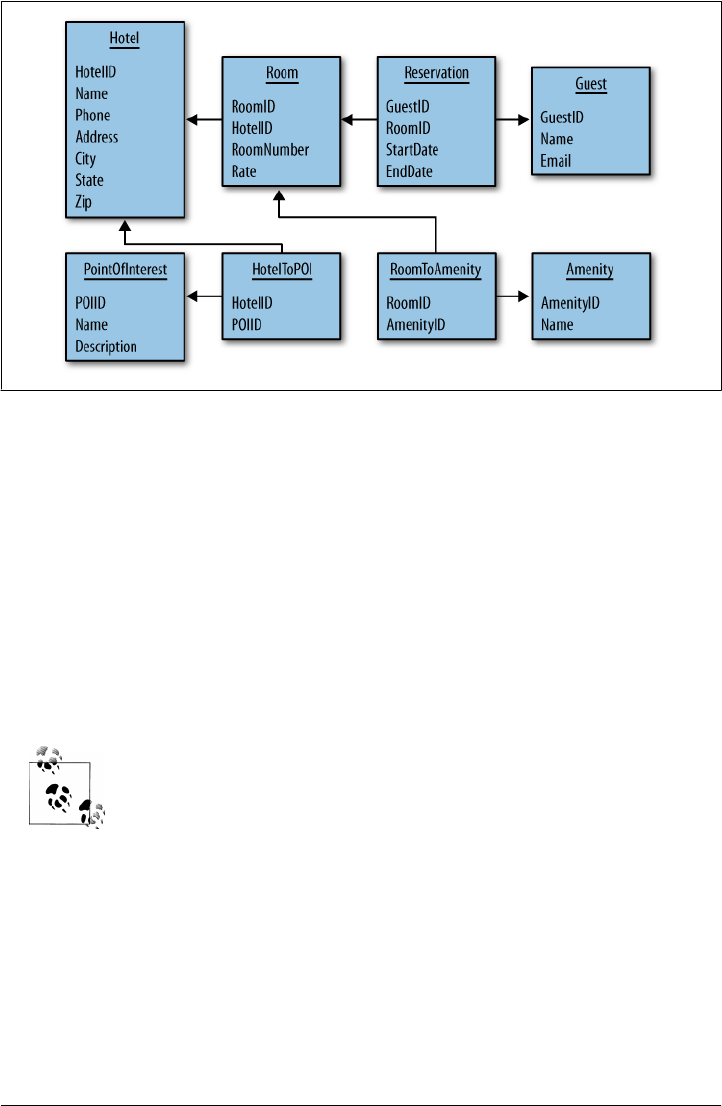

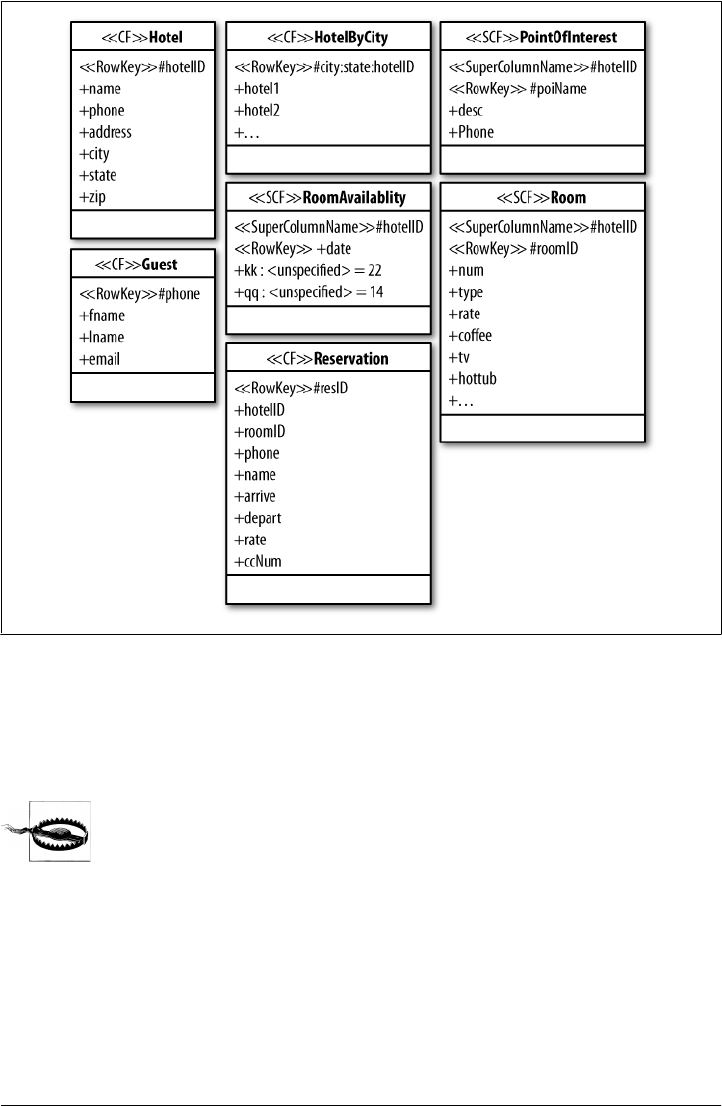

4. Sample Application

- Data Design
- Hotel App RDBMS Design
- Hotel App Cassandra Design
- Hotel Application Code
- Creating the Database
- Data Structures
- Getting a Connection
- Prepopulating the Database
- The Search Application
- Twissandra
- Summary

5. The Cassandra Architecture

- System Keyspace
- Peer-to-Peer
- Gossip and Failure Detection
- Anti-Entropy and Read Repair
- Memtables, SSTables, and Commit Logs
- Hinted Handoff
- Compaction
- Bloom Filters
- Tombstones
- Staged Event-Driven Architecture (SEDA)
- Managers and Services
- Cassandra Daemon
- Storage Service
- Messaging Service
- Hinted Handoff Manager
- Summary

6. Configuring Cassandra

- Keyspaces
- Creating a Column Family
- Transitioning from 0.6 to 0.7
- Replicas
- Replica Placement Strategies
- Simple Strategy
- Old Network Topology Strategy
- Network Topology Strategy
- Replication Factor
- Increasing the Replication Factor
- Partitioners
- Random Partitioner
- Order-Preserving Partitioner
- Collating Order-Preserving Partitioner
- Byte-Ordered Partitioner
- Snitches
- Simple Snitch
- PropertyFileSnitch
- Creating a Cluster
- Changing the Cluster Name
- Adding Nodes to a Cluster
- Multiple Seed Nodes
- Dynamic Ring Participation
- Security
- Using SimpleAuthenticator
- Programmatic Authentication
- Using MD5 Encryption
- Providing Your Own Authentication
- Miscellaneous Settings
- Additional Tools
- Viewing Keys
- Importing Previous Configurations
- Summary

7. Reading and Writing Data

- Query Differences Between RDBMS and Cassandra
- No Update Query
- Record-Level Atomicity on Writes
- No Server-Side Transaction Support
- No Duplicate Keys
- Basic Write Properties
- Consistency Levels
- Basic Read Properties
- The API
- Ranges and Slices
- Setup and Inserting Data
- Using a Simple Get
- Seeding Some Values
- Slice Predicate
- Getting Particular Column Names with Get Slice
- Getting a Set of Columns with Slice Range
- Getting All Columns in a Row
- Get Range Slices
- Multiget Slice
- Deleting
- Batch Mutates
- Batch Deletes
- Range Ghosts
- Programmatically Defining Keyspaces and Column Families
- Summary

8. Clients .............................................................. 155

Basic Client API 156

Thrift 156

Thrift Support for Java 159

Exceptions 159

Thrift Summary 160

Avro 160

Avro Ant Targets 162

Avro Specification 163

Avro Summary 164

A Bit of Git 164

Connecting Client Nodes 165

Client List 165

Round-Robin DNS 165

Load Balancer 165

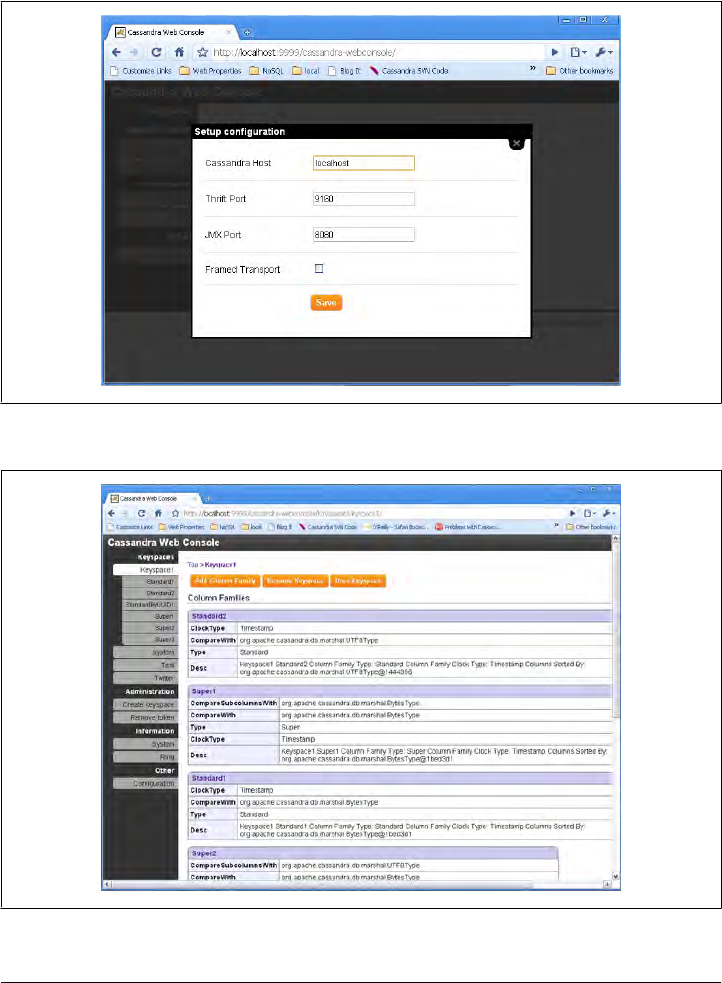

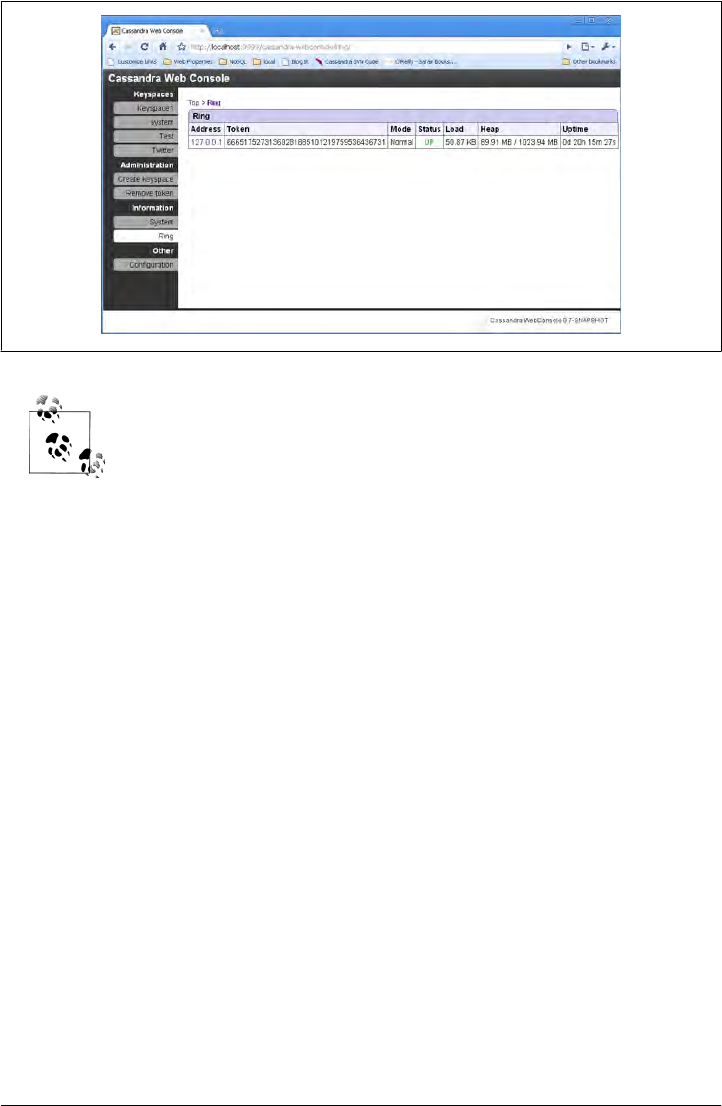

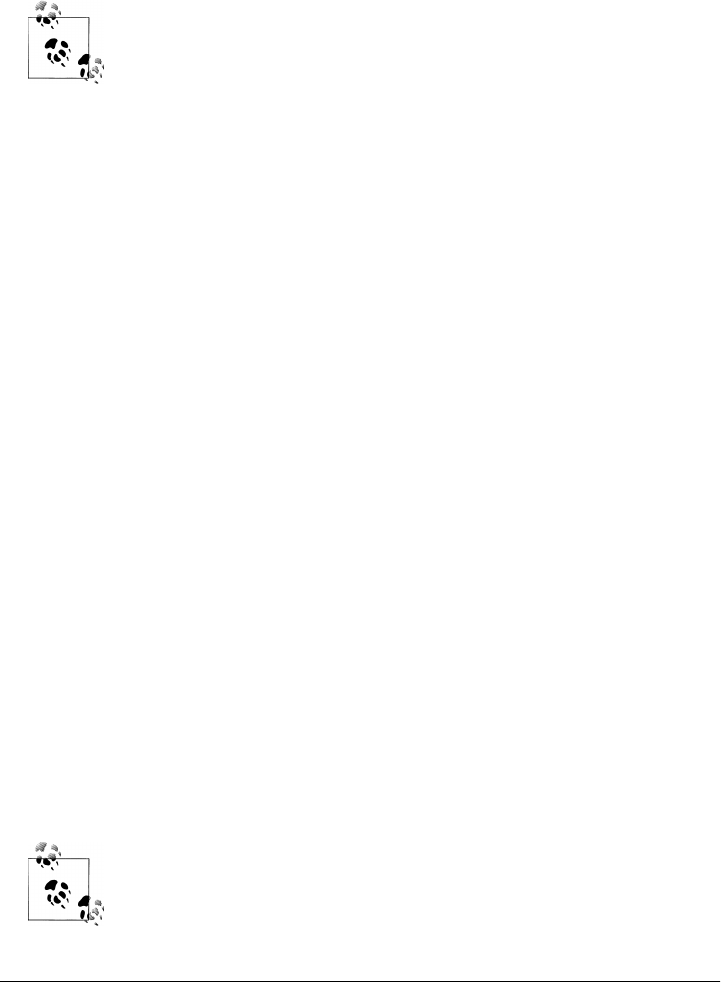

Cassandra Web Console 165

Hector (Java) 168

Features 169

The Hector API 170

HectorSharp (C#) 170

Chirper 175

Chiton (Python) 175

Pelops (Java) 176

Kundera (Java ORM) 176

Fauna (Ruby) 177

Summary 177

9. Monitoring . ......................................................... 179

Logging 179

Tailing 181

General Tips 182

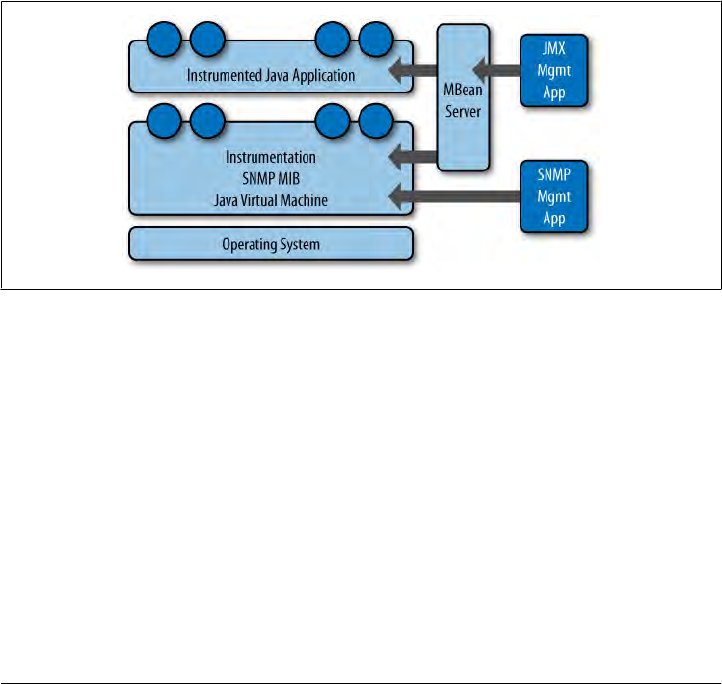

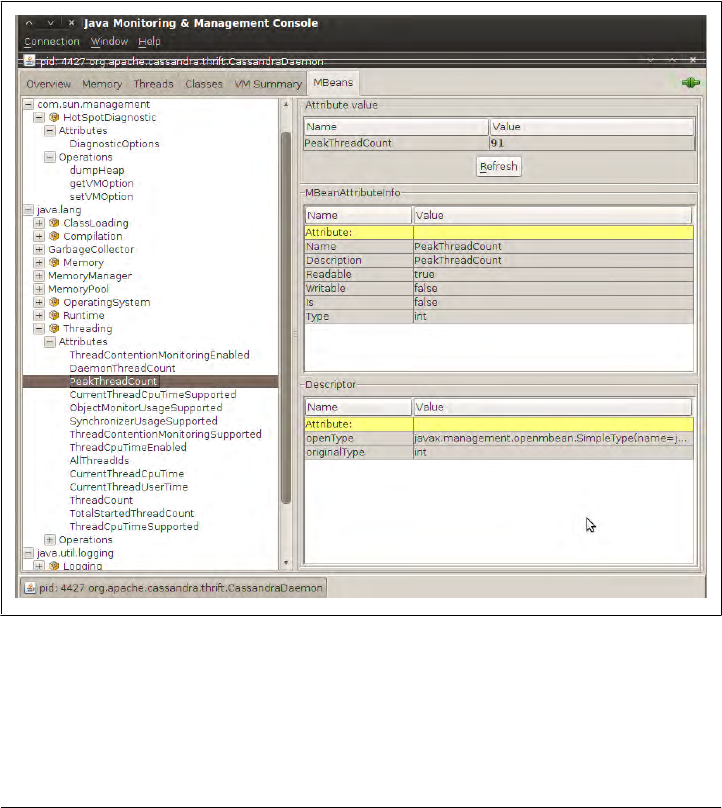

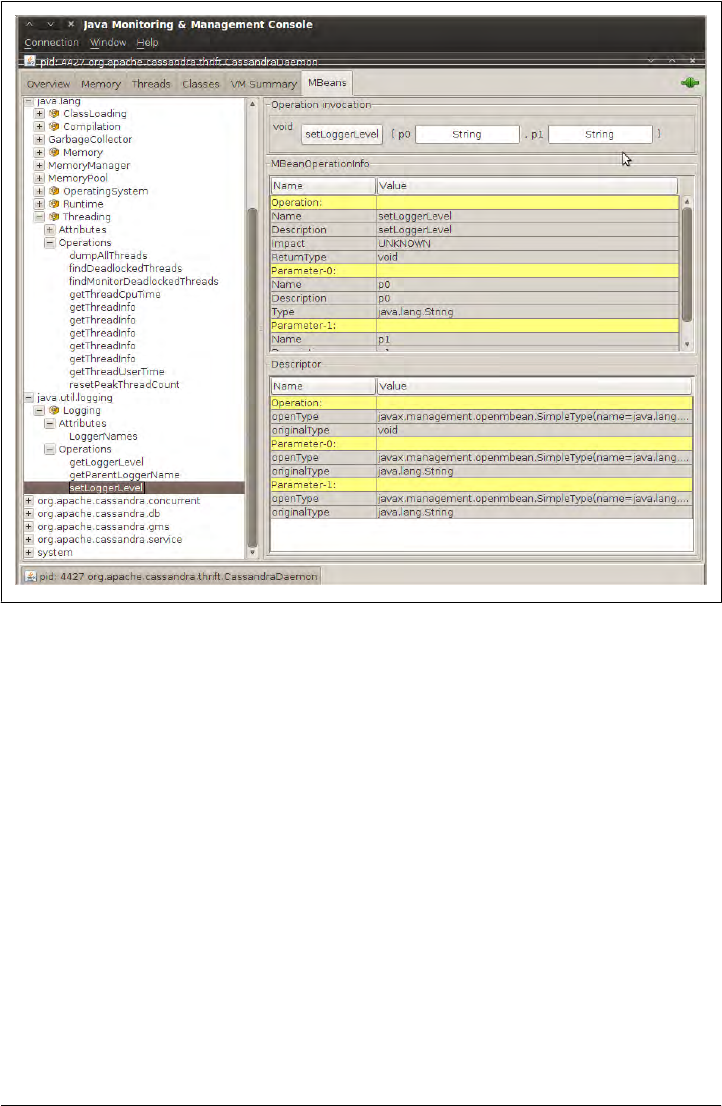

Overview of JMX and MBeans 183

MBeans 185

Integrating JMX 187

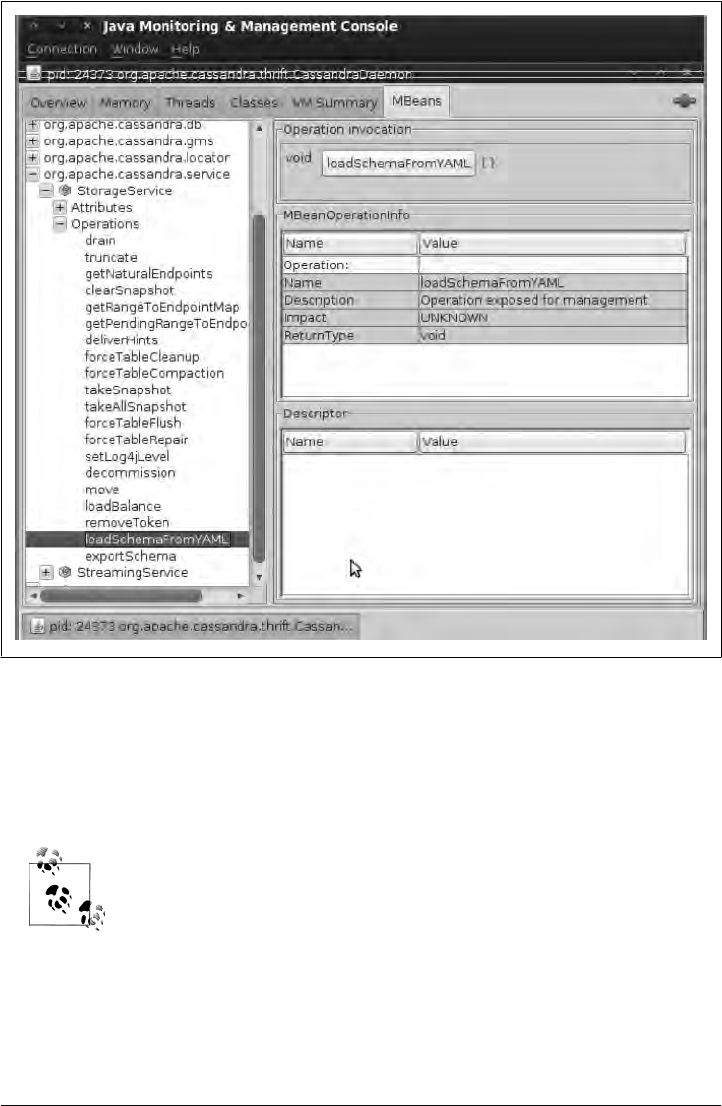

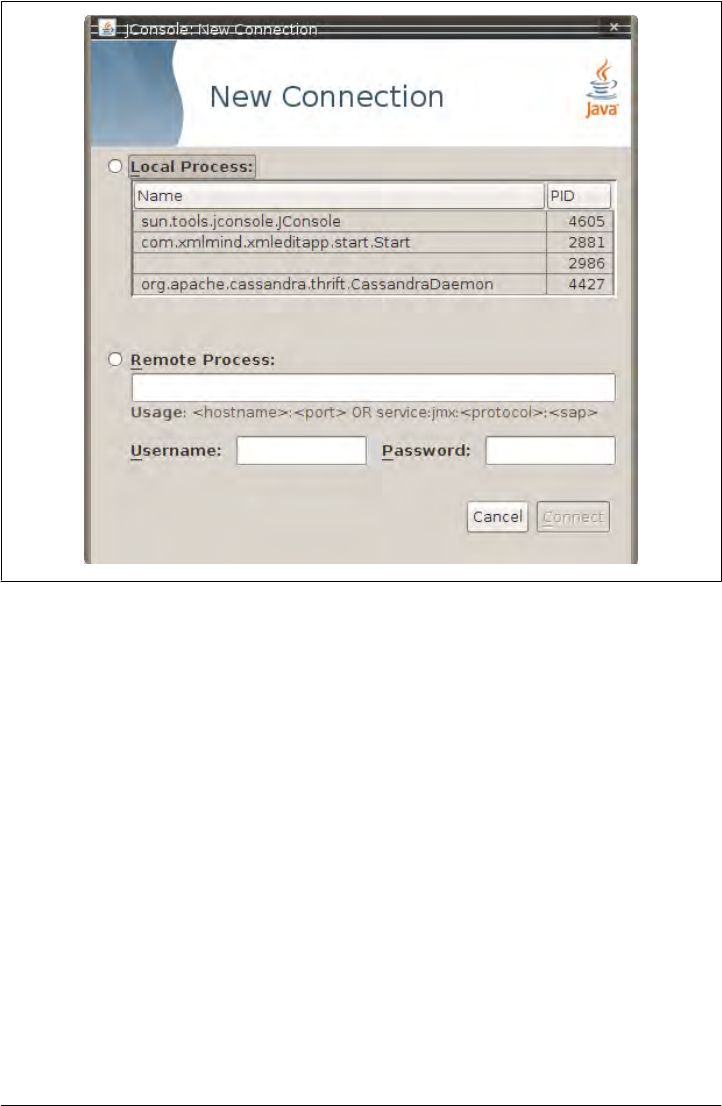

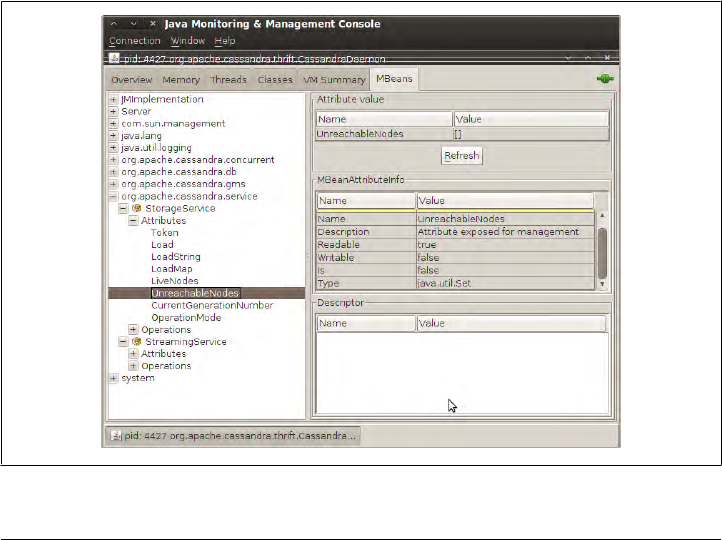

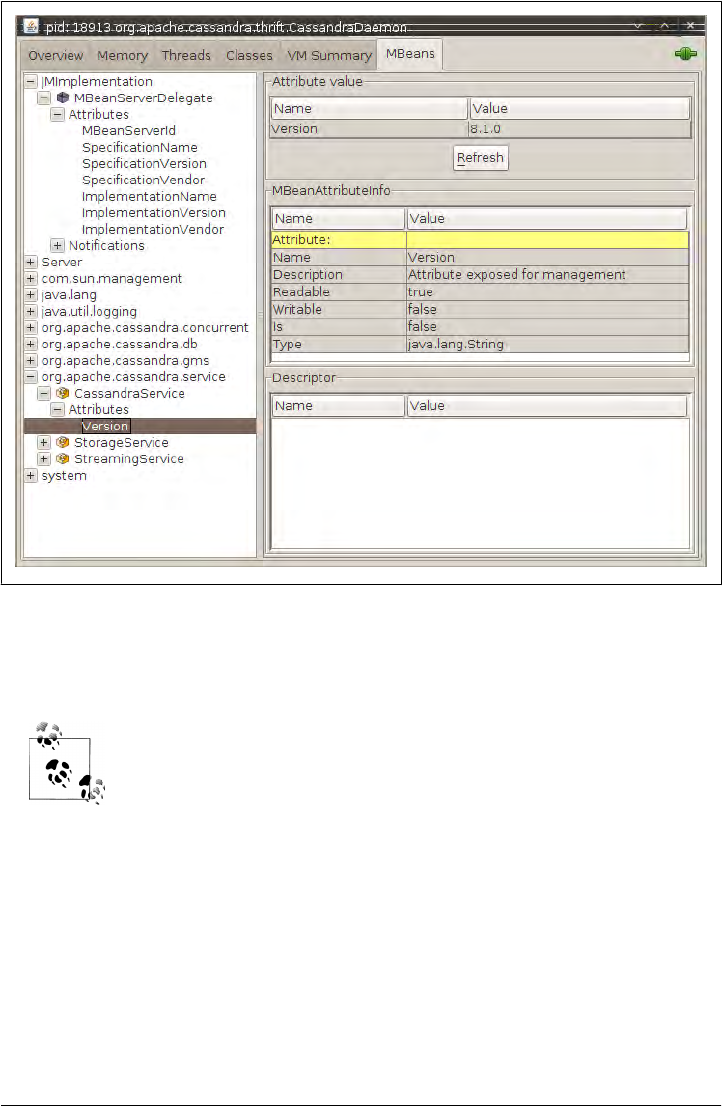

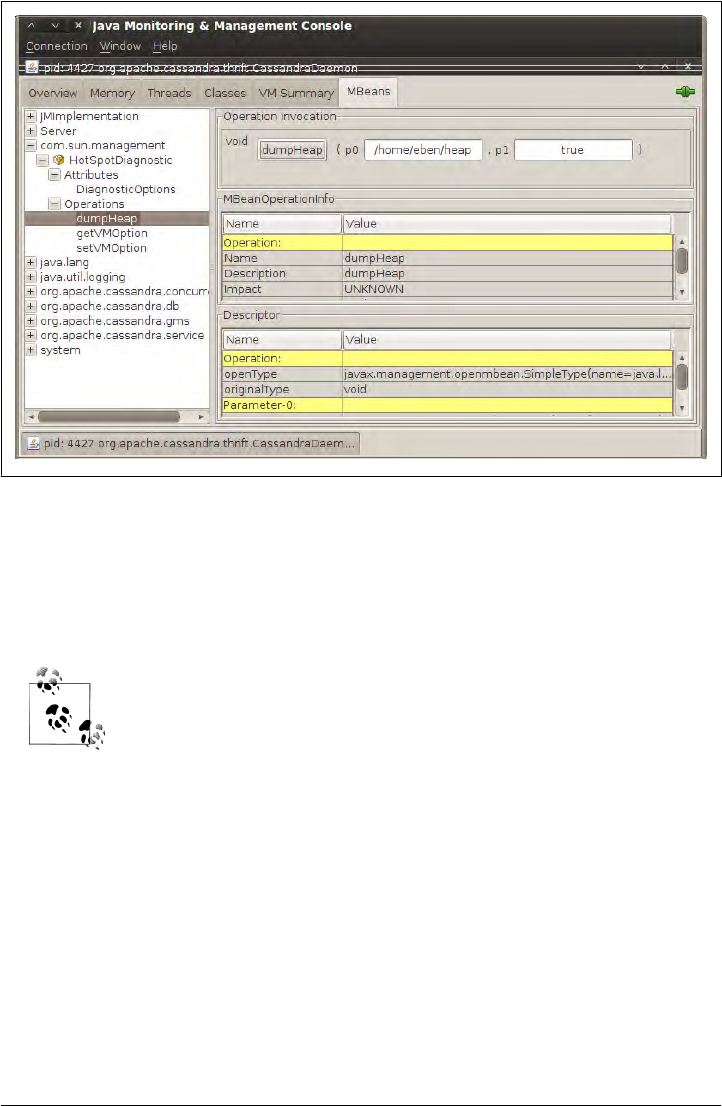

Interacting with Cassandra via JMX 188

Cassandra’s MBeans 190

Table of Contents | xi

org.apache.cassandra.concurrent 193

org.apache.cassandra.db 193

org.apache.cassandra.gms 194

org.apache.cassandra.service 194

Custom Cassandra MBeans 196

Runtime Analysis Tools 199

Heap Analysis with JMX and JHAT 199

Detecting Thread Problems 203

Health Check 204

Summary 204

10. Maintenance

- Getting Ring Information
- Info
- Ring
- Getting Statistics
- Using cfstats
- Using tpstats
- Basic Maintenance
- Repair
- Flush
- Cleanup
- Snapshots
- Taking a Snapshot
- Clearing a Snapshot
- Load-Balancing the Cluster
- loadbalance and streams
- Decommissioning a Node
- Updating Nodes
- Removing Tokens
- Compaction Threshold
- Changing Column Families in a Working Cluster
- Summary

11. Performance Tuning

- Data Storage
- Reply Timeout
- Commit Logs
- Memtables
- Concurrency
- Caching
- Buffer Sizes
- Using the Python Stress Test
- Generating the Python Thrift Interfaces
- Running the Python Stress Test
- Startup and JVM Settings
- Tuning the JVM
- Summary

12. Integrating Hadoop

- What Is Hadoop?
- Working with MapReduce
- Cassandra Hadoop Source Package
- Running the Word Count Example
- Outputting Data to Cassandra
- Hadoop Streaming
- Tools Above MapReduce
- Pig
- Hive
- Cluster Configuration
- Use Cases
- Raptr.com: Keith Thornhill
- Imagini: Dave Gardner
- Summary

Appendix: The Nonrelational Landscape

Glossary

Index

Foreword

Cassandra was open-sourced by Facebook in July 2008. This original version of Cassandra was written primarily by an ex-employee from Amazon and one from Microsoft. It was strongly influenced by Dynamo, Amazon’s pioneering distributed key/value database. Cassandra implements a Dynamo-style replication model with no single point of failure, but adds a more powerful “column family” data model.

I became involved in December of that year, when Rackspace asked me to build them a scalable database. This was good timing, because all of today’s important open source scalable databases were available for evaluation. Despite initially having only a single major use case, Cassandra’s underlying architecture was the strongest, and I directed my efforts toward improving the code and building a community.

Cassandra was accepted into the Apache Incubator, and by the time it graduated in March 2010, it had become a true open source success story, with committers from Rackspace, Digg, Twitter, and other companies that wouldn’t have written their own database from scratch, but together built something important.

Today’s Cassandra is much more than the early system that powered (and still powers) Facebook’s inbox search; it has become “the hands down winner for transaction processing performance,” to quote Tony Bain, with a deserved reputation for reliability and performance at scale.

As Cassandra matured and began attracting more mainstream users, it became clear that there was a need for commercial support; thus, Matt Pfeil and I cofounded Riptano in April 2010. Helping drive Cassandra adoption has been very rewarding, especially seeing the uses that don’t get discussed in public.

Another need has been a book like this one. Like many open source projects, Cassandra’s documentation has historically been weak. And even when the documentation ultimately improves, a book-length treatment like this will remain useful.

Thanks to Eben for tackling the difficult task of distilling the art and science of developing against and deploying Cassandra. You, the reader, have the opportunity to learn these new concepts in an organized fashion.

—Jonathan Ellis

Project Chair, Apache Cassandra, and Cofounder, Riptano

Preface

Why Apache Cassandra?

Apache Cassandra is a free, open source, distributed data storage system that differs sharply from relational database management systems.

Cassandra first started as an incubation project at Apache in January of 2009. Shortly thereafter, the committers, led by Apache Cassandra Project Chair Jonathan Ellis, released version 0.3 of Cassandra, and have steadily made minor releases since that time. Though as of this writing it has not yet reached a 1.0 release, Cassandra is being used in production by some of the biggest properties on the Web, including Facebook, Twitter, Cisco, Rackspace, Digg, Cloudkick, Reddit, and more.

Cassandra has become so popular because of its outstanding technical features. It is durable, seamlessly scalable, and tuneably consistent. It performs blazingly fast writes, can store hundreds of terabytes of data, and is decentralized and symmetrical so there’s no single point of failure. It is highly available and offers a schema-free data model.

Is This Book for You?

This book is intended for a variety of audiences. It should be useful to you if you are:

• A developer working with large-scale, high-volume websites, such as Web 2.0 social applications

• An application architect or data architect who needs to understand the available options for high-performance, decentralized, elastic data stores

• A database administrator or database developer currently working with standard relational database systems who needs to understand how to implement a fault-tolerant, eventually consistent data store

• A manager who wants to understand the advantages (and disadvantages) of Cassandra and related columnar databases to help make decisions about technology strategy

• A student, analyst, or researcher who is designing a project related to Cassandra or other non-relational data store options

This book is a technical guide. In many ways, Cassandra represents a new way of thinking about data. Many developers who gained their professional chops in the last 15–20 years have become well-versed in thinking about data in purely relational or object-oriented terms. Cassandra’s data model is very different and can be difficult to wrap your mind around at first, especially for those of us with entrenched ideas about what a database is (and should be).

Using Cassandra does not mean that you have to be a Java developer. However, Cassandra is written in Java, so if you’re going to dive into the source code, a solid understanding of Java is crucial. Although it’s not strictly necessary to know Java, it can help you to better understand exceptions, how to build the source code, and how to use some of the popular clients. Many of the examples in this book are in Java. But because of the interface used to access Cassandra, you can use Cassandra from a wide variety of languages, including C#, Scala, Python, and Ruby.

Finally, it is assumed that you have a good understanding of how the Web works, can use an integrated development environment (IDE), and are somewhat familiar with the typical concerns of data-driven applications. You might be a well-seasoned developer or administrator but still, on occasion, encounter tools used in the Cassandra world that you’re not familiar with. For example, Apache Ivy is used to build Cassandra, and a popular client (Hector) is available via Git. In cases where I speculate that you’ll need to do a little setup of your own in order to work with the examples, I try to support that.

What’s in This Book?

This book is designed with the chapters acting, to a reasonable extent, as standalone guides. This is important for a book on Cassandra, which has a variety of audiences and is changing rapidly. To borrow from the software world, I wanted the book to be “modular” (sort of). If you’re new to Cassandra, it makes sense to read the book in order; if you’ve passed the introductory stages, you will still find value in later chapters, which you can read as standalone guides.

Here is how the book is organized:

Chapter 1, Introducing Cassandra

This chapter introduces Cassandra and discusses what’s exciting and different about it, who is using it, and what its advantages are.

Chapter 2, Installing Cassandra

This chapter walks you through installing Cassandra on a variety of platforms.

Chapter 3, The Cassandra Data Model

Here we look at Cassandra’s data model to understand what columns, super columns, and rows are. Special care is taken to bridge the gap between the relational database world and Cassandra’s world.

Chapter 4, Sample Application

This chapter presents a complete working application that translates from a relational model in a well-understood domain to Cassandra’s data model.

Chapter 5, The Cassandra Architecture

This chapter helps you understand what happens during read and write operations and how the database accomplishes some of its notable aspects, such as durability and high availability. We go under the hood to understand some of the more complex inner workings, such as the gossip protocol, hinted handoffs, read repairs, Merkle trees, and more.

Chapter 6, Configuring Cassandra

This chapter shows you how to specify partitioners, replica placement strategies, and snitches. We set up a cluster and see the implications of different configuration choices.

Chapter 7, Reading and Writing Data

This is the moment we’ve been waiting for. We present an overview of what’s different about Cassandra’s model for querying and updating data, and then get to work using the API.

Chapter 8, Clients

There are a variety of clients that third-party developers have created for many different languages, including Java, C#, Ruby, and Python, in order to abstract Cassandra’s lower-level API. We help you understand this landscape so you can choose one that’s right for you.

Chapter 9, Monitoring

Once your cluster is up and running, you’ll want to monitor its usage, memory patterns, and thread patterns, and understand its general activity. Cassandra has a rich Java Management Extensions (JMX) interface baked in, which we put to use to monitor all of these and more.

Chapter 10, Maintenance

The ongoing maintenance of a Cassandra cluster is made somewhat easier by some tools that ship with the server. We see how to decommission a node, load-balance the cluster, get statistics, and perform other routine operational tasks.

Chapter 11, Performance Tuning

One of Cassandra’s most notable features is its speed: it’s very fast. But there are a number of things, including memory settings, data storage, hardware choices, caching, and buffer sizes, that you can tune to squeeze out even more performance.

Chapter 12, Integrating Hadoop

In this chapter, written by Jeremy Hanna, we put Cassandra in a larger context and see how to integrate it with the popular implementation of Google’s Map/Reduce algorithm, Hadoop.

Appendix

Many new databases have cropped up in response to the need to scale at Big Data levels, or to take advantage of a “schema-free” model, or to support more recent initiatives such as the Semantic Web. Here we contextualize Cassandra against a variety of the more popular nonrelational databases, examining document-oriented databases, distributed hashtables, and graph databases, to better understand Cassandra’s offerings.

Glossary

It can be difficult to understand something that’s really new, and Cassandra has many terms that might be unfamiliar to developers or DBAs coming from the relational application development world, so I’ve included this glossary to make it easier to read the rest of the book. If you’re stuck on a certain concept, you can flip to the glossary to help clarify things such as Merkle trees, vector clocks, hinted handoffs, read repairs, and other exotic terms.

This book is developed against Cassandra 0.6 and 0.7. The project team is working hard on Cassandra, and new minor releases and bug fix releases come out frequently. Where possible, I have tried to call out relevant differences, but you might be using a different version by the time you read this, and the implementation may have changed.

Finding Out More

If you’d like to find out more about Cassandra, and to get the latest updates, visit this book’s companion website at http://www.cassandraguide.com.

It’s also an excellent idea to follow me on Twitter at @ebenhewitt.

Conventions Used in This Book

The following typographical conventions are used in this book:

Italic

Indicates new terms, URLs, email addresses, filenames, and file extensions.

Constant width

Used for program listings, as well as within paragraphs to refer to program elements such as variable or function names, databases, data types, environment variables, statements, and keywords.

Constant width bold

Shows commands or other text that should be typed literally by the user.

Constant width italic

Shows text that should be replaced with user-supplied values or by values determined by context.

This icon signifies a tip, suggestion, or general note.

This icon indicates a warning or caution.

Using Code Examples

This book is here to help you get your job done. In general, you may use the code in this book in your programs and documentation. You do not need to contact us for permission unless you’re reproducing a significant portion of the code. For example, writing a program that uses several chunks of code from this book does not require permission. Selling or distributing a CD-ROM of examples from O’Reilly books does require permission. Answering a question by citing this book and quoting example code does not require permission. Incorporating a significant amount of example code from this book into your product’s documentation does require permission.

We appreciate, but do not require, attribution. An attribution usually includes the title, author, publisher, and ISBN. For example: “Cassandra: The Definitive Guide by Eben Hewitt. Copyright 2011 Eben Hewitt, 978-1-449-39041-9.”

If you feel your use of code examples falls outside fair use or the permission given here, feel free to contact us at permissions@oreilly.com.

Safari® Enabled

Safari Books Online is an on-demand digital library that lets you easily search over 7,500 technology and creative reference books and videos to find the answers you need quickly.

With a subscription, you can read any page and watch any video from our library online. Read books on your cell phone and mobile devices. Access new titles before they are available for print, and get exclusive access to manuscripts in development and post feedback for the authors. Copy and paste code samples, organize your favorites, download chapters, bookmark key sections, create notes, print out pages, and benefit from tons of other time-saving features.

O’Reilly Media has uploaded this book to the Safari Books Online service. To have full digital access to this book and others on similar topics from O’Reilly and other publishers, sign up for free at http://my.safaribooksonline.com.

How to Contact Us

Please address comments and questions concerning this book to the publisher:

O’Reilly Media, Inc.

1005 Gravenstein Highway North

Sebastopol, CA 95472

800-998-9938 (in the United States or Canada)

707-829-0515 (international or local)

707 829-0104 (fax)

We have a web page for this book, where we list errata, examples, and any additional information. You can access this page at:

http://oreilly.com/catalog/0636920010852/

To comment or ask technical questions about this book, send email to:

bookquestions@oreilly.com

For more information about our books, conferences, Resource Centers, and the O’Reilly Network, see our website at:

http://www.oreilly.com

Acknowledgments

There are many wonderful people to whom I am grateful for helping bring this book to life.

Thanks to Jeremy Hanna, for writing the Hadoop chapter, and for being so easy to work with.

Thank you to my technical reviewers. Stu Hood’s insightful comments in particular really improved the book. Robert Schneider and Gary Dusbabek contributed thoughtful reviews.

Thank you to Jonathan Ellis for writing the foreword.

Thanks to my editor, Mike Loukides, for being a charming conversationalist at dinner in San Francisco.

Thank you to Rain Fletcher for supporting and encouraging this book.

I’m inspired by the many terrific developers who have contributed to Cassandra. Hats off for making such a pretty and powerful database.

As always, thank you to Alison Brown, who read drafts, gave me notes, and made sure that I had time to work; this book would not have happened without you.

CHAPTER 1

Introducing Cassandra

If at first the idea is not absurd,

then there is no hope for it.

—Albert Einstein

Welcome to Cassandra: The Definitive Guide. The aim of this book is to help developers and database administrators understand this important new database, explore how it compares to the relational database management systems we’re used to, and help you put it to work in your own environment.

What’s Wrong with Relational Databases?

If I had asked people what they wanted, they

would have said faster horses.

—Henry Ford

I ask you to consider a certain model for data, invented by a small team at a company with thousands of employees. It is accessible over a TCP/IP interface and is available from a variety of languages, including Java and web services. This model was difficult at first for all but the most advanced computer scientists to understand, until broader adoption helped make the concepts clearer. Using the database built around this model required learning new terms and thinking about data storage in a different way. But as products sprang up around it, more businesses and government agencies put it to use, in no small part because it was fast, capable of processing thousands of operations a second. The revenue it generated was tremendous.

And then a new model came along.

The new model was threatening, chiefly for two reasons. First, the new model was very different from the old model, which it pointedly controverted. It was threatening because it can be hard to understand something different and new. Ensuing debates can help entrench people stubbornly further in their views, views that might have been largely inherited from the climate in which they learned their craft and the circumstances in which they work. Second, and perhaps more importantly, as a barrier, the new model was threatening because businesses had made considerable investments in the old model and were making lots of money with it. Changing course seemed ridiculous, even impossible.

Of course I’m talking about the Information Management System (IMS) hierarchical database, invented in 1966 at IBM.

IMS was built for use in the Saturn V moon rocket. Its architect was Vern Watts, who dedicated his career to it. Many of us are familiar with IBM’s database DB2. IBM’s wildly popular DB2 database gets its name as the successor to DB1, the product built around the hierarchical data model IMS. IMS was released in 1968, and subsequently enjoyed success in Customer Information Control System (CICS) and other applications. It is still used today.

But in the years following the invention of IMS, the new model, the disruptive model, the threatening model, was the relational database.

In his 1970 paper “A Relational Model of Data for Large Shared Data Banks,” Dr. Edgar F. Codd, also at IBM, advanced his theory of the relational model for data while working at IBM’s San Jose research laboratory. This paper, still available at http://www.seas.upenn.edu/~zives/03f/cis550/codd.pdf, became the foundational work for relational database management systems.

Codd’s work was antithetical to the hierarchical structure of IMS. Understanding and working with a relational database required learning new terms that must have sounded very strange indeed to users of IMS. It presented certain advantages over its predecessor, in part because giants are almost always standing on the shoulders of other giants.

While these ideas and their application have evolved in four decades, the relational database is still clearly one of the most successful software applications in history. It’s used in the form of Microsoft Access in sole proprietorships, and in giant multinational corporations with clusters of hundreds of finely tuned instances representing multi-terabyte data warehouses. Relational databases store invoices, customer records, product catalogues, accounting ledgers, user authentication schemes: the very world, it might appear. There is no question that the relational database is a key facet of the modern technology and business landscape, and one that will be with us in its various forms for many years to come, as will IMS in its various forms. The relational model presented an alternative to IMS, and each has its uses.

So the short answer to the question, “What’s wrong with relational databases?” is “Nothing.”

There is, however, a rather longer answer that I gently encourage you to consider. This answer takes the long view, which says that every once in a while an idea is born that ostensibly changes things, and engenders a revolution of sorts. And yet, in another way, such revolutions, viewed structurally, are simply history’s business as usual. IMS, RDBMS, NoSQL. The horse, the car, the plane. They each build on prior art, they each attempt to solve certain problems, and so they’re each good at certain things, and less good at others. They each coexist, even now.

So let’s examine for a moment why, at this point, we might consider an alternative to the relational database, just as Codd himself four decades ago looked at the Information Management System and thought that maybe it wasn’t the only legitimate way of organizing information and solving data problems, and that maybe, for certain problems, it might prove fruitful to consider an alternative.

We encounter scalability problems when our relational applications become successful and usage goes up. Joins are inherent in any relatively normalized relational database of even modest size, and joins can be slow. The way that databases gain consistency is typically through the use of transactions, which require locking some portion of the database so it’s not available to other clients. This can become untenable under very heavy loads, as the locks mean that competing users start queuing up, waiting for their turn to read or write the data.

We typically address these problems in one or more of the following ways, sometimes

in this order:

• Throw hardware at the problem by adding more memory, adding faster processors,

and upgrading disks. This is known as vertical scaling. This can relieve you for a

time.

• When the problems arise again, the answer appears to be similar: now that one

box is maxed out, you add hardware in the form of additional boxes in a database

cluster. Now you have the problem of data replication and consistency during

regular usage and in failover scenarios. You didn’t have that problem before.

• Now we need to update the configuration of the database management system.

This might mean optimizing the channels the database uses to write to the under-

lying filesystem. We turn off logging or journaling, which frequently is not a

desirable (or, depending on your situation, legal) option.

• Having put what attention we could into the database system, we turn to our ap-

plication. We try to improve our indexes. We optimize the queries. But presumably

at this scale we weren’t wholly ignorant of index and query optimization, and

already had them in pretty good shape. So this becomes a painful process of picking

through the data access code to find any opportunities for fine tuning. This might

include reducing or reorganizing joins, throwing out resource-intensive features

such as XML processing within a stored procedure, and so forth. Of course, pre-

sumably we were doing that XML processing for a reason, so if we have to do it

somewhere, we move that problem to the application layer, hoping to solve it there

and crossing our fingers that we don’t break something else in the meantime.

• We employ a caching layer. For larger systems, this might include distributed

caches such as memcached, EHCache, Oracle Coherence, or other related prod-

ucts. Now we have a consistency problem between updates in the cache and

updates in the database, which is exacerbated over a cluster.

• We turn our attention to the database again and decide that, now that the appli-

cation is built and we understand the primary query paths, we can duplicate some

of the data to make it look more like the queries that access it. This process, called

denormalization, is antithetical to the five normal forms that characterize the relational model, and violates Codd’s 12 Commandments for relational data. We

remind ourselves that we live in this world, and not in some theoretical cloud, and

then undertake to do what we must to make the application start responding at

acceptable levels again, even if it’s no longer “pure.”
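To make the caching step above concrete, here is a minimal sketch of the consistency problem it introduces. Everything in it is an assumption made for illustration: the class name, the method names, and the two in-memory maps standing in for the database and for a cache such as memcached. It is not the API of any particular caching product.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical cache-aside sketch; the two maps stand in for a real database and cache. */
class CacheAsideSketch {
    final Map<String, String> database = new HashMap<>(); // stands in for the RDBMS
    final Map<String, String> cache = new HashMap<>();    // stands in for the caching layer

    /** Read path: serve from the cache, falling back to the database on a miss. */
    String read(String key) {
        String value = cache.get(key);
        if (value == null) {
            value = database.get(key);
            if (value != null) {
                cache.put(key, value); // remember the value for later reads
            }
        }
        return value;
    }

    /** Write path that forgets the cache: later reads can still return the old value. */
    void writeDatabaseOnly(String key, String value) {
        database.put(key, value);
    }

    /** Write path that drops the cached copy so the next read reloads it. */
    void writeAndInvalidate(String key, String value) {
        database.put(key, value);
        cache.remove(key);
    }

    public static void main(String[] args) {
        CacheAsideSketch store = new CacheAsideSketch();
        store.database.put("customer:42", "Alice");
        System.out.println(store.read("customer:42"));   // loads the value into the cache
        store.writeDatabaseOnly("customer:42", "Alicia");
        System.out.println(store.read("customer:42"));   // stale: the cache was never told
        store.writeAndInvalidate("customer:42", "Alicia");
        System.out.println(store.read("customer:42"));   // fresh again
    }
}
```

On a single machine the invalidating write is easy to get right. Across a cluster, every cache node holding a copy has to hear about the update, which is where the problem becomes exacerbated.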

I imagine that this sounds familiar to you. At web scale, engineers have started to won-

der whether this situation isn’t similar to Henry Ford’s assertion that at a certain point,

it’s not simply a faster horse that you want. And they’ve done some impressive, inter-

esting work.

We must therefore begin here in recognition that the relational model is simply a

model. That is, it’s intended to be a useful way of looking at the world, applicable to

certain problems. It does not purport to be exhaustive, closing the case on all other

ways of representing data, never again to be examined, leaving no room for alternatives.

If we take the long view of history, Dr. Codd’s model was a rather disruptive one in its

time. It was new, with strange new vocabulary and terms such as “tuples”—familiar

words used in a new and different manner. The relational model was held up to sus-

picion, and doubtless suffered its vehement detractors. It encountered opposition even

in the form of Dr. Codd’s own employer, IBM, which had a very lucrative product set

around IMS and didn’t need a young upstart cutting into its pie.

But the relational model now arguably enjoys the best seat in the house within the data

world. SQL is widely supported and well understood. It is taught in introductory uni-

versity courses. There are free databases that come installed and ready to use with a

$4.95 monthly web hosting plan. Often the database we end up using is dictated to us

by architectural standards within our organization. Even absent such standards, it’s

prudent to learn whatever your organization already has for a database platform. Our

colleagues in development and infrastructure have considerable hard-won knowledge.

If by nothing more than osmosis—or inertia—we have learned over the years that a

relational database is a one-size-fits-all solution.

So perhaps the real question is not, “What’s wrong with relational databases?” but

rather, “What problem do you have?”

That is, you want to ensure that your solution matches the problem that you have.

There are certain problems that relational databases solve very well.

If massive, elastic scalability is not an issue for you, the trade-offs in relative complexity

of a system such as Cassandra may simply not be worth it. No proponent of Cassandra

that I know of is asking anyone to throw out everything they’ve learned about relational

databases, surrender their years of hard-won knowledge around such systems, and

unnecessarily jeopardize their employer’s carefully constructed systems in favor of the

flavor of the month.

Relational data has served all of us developers and DBAs well. But the explosion of the

Web, and in particular social networks, means a corresponding explosion in the sheer

volume of data we must deal with. When Tim Berners-Lee first worked on the Web in

the early 1990s, it was for the purpose of exchanging scientific documents between

PhDs at a physics laboratory. Now, of course, the Web has become so ubiquitous that

it’s used by everyone, from those same scientists to legions of five-year-olds exchanging

emoticons about kittens. That means in part that it must support enormous volumes

of data; the fact that it does stands as a monument to the ingenious architecture of the

Web.

But some of this infrastructure is starting to bend under the weight.

In 1966, a company like IBM was in a position to really make people listen to their

innovations. They had the problems, and they had the brain power to solve them.

As we enter the second decade of the 21st century, we’re starting to see similar inno-

vations, even from young companies such as Facebook and Twitter.

So perhaps the real question, then, is not “What problem do I have?” but rather, “What

kinds of things would I do with data if it wasn’t a problem?” What if you could easily

achieve fault tolerance, availability across multiple data centers, consistency that you

tune, and massive scalability even to the hundreds of terabytes, all from a client lan-

guage of your choosing? Perhaps, you say, you don’t need that kind of availability or

that level of scalability. And you know best. You’re certainly right, in fact, because if

your current database didn’t suit your current database needs, you’d have a nonfunc-

tioning system.

It is not my intention to convince you by clever argument to adopt a non-relational

database such as Apache Cassandra. It is only my intention to present what Cassandra

can do and how it does it so that you can make an informed decision and get started

working with it in practical ways if you find it applies. Only you know what your data

needs are. I do not ask you to reconsider your database—unless you’re miserable with

your current database, or you can’t scale how you need to already, or your data model

isn’t mapping to your application in a way that’s flexible enough for you. I don’t ask

you to consider your database, but rather to consider your organization, its dreams for

the future, and its emerging problems. Would you collect more information about your

business objects if you could?

Don’t ask how to make Cassandra fit into your existing environment. Ask what kinds

of data problems you’d like to have instead of the ones you have today. Ask what new

kinds of data you would like. What understanding of your organization would you like

to have, if only you could enable it?

A Quick Review of Relational Databases

Though you are likely familiar with them, let’s briefly turn our attention to some of the

foundational concepts in relational databases. This will give us a basis on which to

consider more recent advances in thought around the trade-offs inherent in distributed

data systems, especially very large distributed data systems, such as those that are

required at web scale.

RDBMS: The Awesome and the Not-So-Much

There are many reasons that the relational database has become so overwhelmingly

popular over the last four decades. An important one is the Structured Query Language

(SQL), which is feature-rich and uses a simple, declarative syntax. SQL was first offi-

cially adopted as an ANSI standard in 1986; since that time it’s gone through several

revisions and has also been extended with vendor proprietary syntax such as Micro-

soft’s T-SQL and Oracle’s PL/SQL to provide additional implementation-specific

features.

SQL is powerful for a variety of reasons. It allows the user to represent complex rela-

tionships with the data, using statements that form the Data Manipulation Language

(DML) to insert, select, update, delete, truncate, and merge data. You can perform a

rich variety of operations using functions based on relational algebra to find a maximum

or minimum value in a set, for example, or to filter and order results. SQL statements

support grouping aggregate values and executing summary functions. SQL provides a

means of directly creating, altering, and dropping schema structures at runtime using

Data Definition Language (DDL). SQL also allows you to grant and revoke rights for

users and groups of users using the same syntax.

SQL is easy to use. The basic syntax can be learned quickly, and conceptually SQL and

RDBMS offer a low barrier to entry. Junior developers can become proficient readily,

and as is often the case in an industry beset by rapid changes, tight deadlines, and

exploding budgets, ease of use can be very important. And it’s not just the syntax that’s

easy to use; there are many robust tools that include intuitive graphical interfaces for

viewing and working with your database.

In part because it’s a standard, SQL allows you to easily integrate your RDBMS with a

wide variety of systems. All you need is a driver for your application language, and

you’re off to the races in a very portable way. If you decide to change your application

implementation language (or your RDBMS vendor), you can often do that painlessly,

assuming you haven’t backed yourself into a corner using lots of proprietary extensions.

Transactions, ACID-ity, and two-phase commit

In addition to the features mentioned already, RDBMS and SQL also support transac-

tions. A database transaction is, as Jim Gray puts it, “a transformation of state” that

has the ACID properties (see http://research.microsoft.com/en-us/um/people/gray/papers/theTransactionConcept.pdf). A key feature of transactions is that they execute virtually at first, allowing the programmer to undo (using ROLLBACK) any changes that

may have gone awry during execution; if all has gone well, the transaction can be reli-

ably committed. The debate about support for transactions comes up very quickly as

a sore spot in conversations around non-relational data stores, so let’s take a moment

to revisit what this really means.

ACID is an acronym for Atomic, Consistent, Isolated, Durable, which are the gauges

we can use to assess that a transaction has executed properly and that it was successful:

Atomic

Atomic means “all or nothing”; that is, when a statement is executed, every update within the

transaction must succeed in order to be called successful. There is no partial failure where one

update was successful and another related update failed. The common example here is with

monetary transfers at an ATM: the transfer requires subtracting money from one account and

adding it to another account. This operation cannot be subdivided; both updates must succeed.

Consistent

Consistent means that data moves from one correct state to another correct state, with no

possibility that readers could view different values that don’t make sense together. For example,

if a transaction attempts to delete a Customer and her Order history, it cannot leave Order rows

that reference the deleted customer’s primary key; this is an inconsistent state that would cause

errors if someone tried to read those Order records.

Isolated

Isolated means that transactions executing concurrently will not become entangled with each

other; they each execute in their own space. That is, if two different transactions attempt to

modify the same data at the same time, then one of them will have to wait for the other to

complete.

Durable

Once a transaction has succeeded, the changes will not be lost. This doesn’t imply another

transaction won’t later modify the same data; it just means that writers can be confident that

the changes are available for the next transaction to work with as necessary.
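The ATM example used for atomicity can be sketched in a few lines of Java. This is only an illustration under stated assumptions: the `AtomicTransferSketch` class and its in-memory map are hypothetical stand-ins for a database, and the snapshot-and-restore logic plays the role that ROLLBACK plays in a real RDBMS. It demonstrates atomicity only; it says nothing about durability.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical in-memory "bank" used only to illustrate atomicity; not a real database API. */
class AtomicTransferSketch {
    private final Map<String, Long> balances = new HashMap<>();

    void open(String account, long cents) {
        balances.put(account, cents);
    }

    long balanceOf(String account) {
        return balances.get(account);
    }

    /**
     * Moves money between two accounts as one unit of work.
     * Returns true if both updates were applied, false if the whole transfer was undone.
     */
    synchronized boolean transfer(String from, String to, long cents) {
        // Snapshot the state so that a failure can undo every update (the "rollback").
        Map<String, Long> snapshot = new HashMap<>(balances);
        try {
            debit(from, cents);
            credit(to, cents);
            return true;               // both updates succeeded (the "commit")
        } catch (RuntimeException e) {
            balances.clear();
            balances.putAll(snapshot); // restore the snapshot: no partial result survives
            return false;
        }
    }

    private void debit(String account, long cents) {
        Long current = balances.get(account);
        if (current == null || current < cents) {
            throw new IllegalStateException("cannot debit " + account);
        }
        balances.put(account, current - cents);
    }

    private void credit(String account, long cents) {
        Long current = balances.get(account);
        if (current == null) {
            throw new IllegalStateException("unknown account " + account);
        }
        balances.put(account, current + cents);
    }

    public static void main(String[] args) {
        AtomicTransferSketch bank = new AtomicTransferSketch();
        bank.open("checking", 10_000);
        bank.open("savings", 0);
        System.out.println(bank.transfer("checking", "savings", 2_500)); // both updates applied
        System.out.println(bank.transfer("checking", "missing", 2_500)); // debit is undone
        System.out.println(bank.balanceOf("checking"));
    }
}
```

The second transfer fails after the debit has already been applied, and the restore step is what keeps the money from vanishing. The `synchronized` keyword is a crude form of isolation: a competing transfer simply waits its turn, which is exactly the queuing behavior that becomes costly under heavy load.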

On the surface, these properties seem so obviously desirable as to not even merit con-

versation. Presumably no one who runs a database would suggest that data updates

don’t have to endure for some length of time; that’s the very point of making updates—

that they’re there for others to read. However, a more subtle examination might lead

us to want to find a way to tune these properties a bit and control them slightly. There

is, as they say, no free lunch on the Internet, and once we see how we’re paying for our

transactions, we may start to wonder whether there’s an alternative.

Transactions become difficult under heavy load. When you first attempt to horizontally

scale a relational database, making it distributed, you must now account for distributed

transactions, where the transaction isn’t simply operating inside a single table or a single

database, but is spread across multiple systems. In order to continue to honor the ACID

properties of transactions, you now need a transaction manager to orchestrate across

the multiple nodes.

In order to account for successful completion across multiple hosts, the idea of a two-

phase commit (sometimes referred to as “2PC”) is introduced. But then, because

two-phase commit locks all associated resources, it is useful only for operations that can

complete very quickly. Although it may often be the case that your distributed opera-

tions can complete in sub-second time, it is certainly not always the case. Some use

cases require coordination between multiple hosts that you may not control yourself.

Operations coordinating several different but related activities can take hours to complete.

Two-phase commit blocks; that is, clients (“competing consumers”) must wait for a

prior transaction to finish before they can access the blocked resource. The protocol

will wait for a node to respond, even if it has died. It’s possible to avoid waiting forever

in this event, because a timeout can be set that allows the transaction coordinator node

to decide that the node isn’t going to respond and that it should abort the transaction.

However, an indefinite wait is still possible with 2PC; that’s because a node can send a

message to the transaction coordinator node agreeing that it’s OK for the coordinator

to commit the entire transaction. The node will then wait for the coordinator to send

a commit response (or a rollback response if, say, a different node can’t commit); if the

coordinator is down in this scenario, that node conceivably will wait forever.
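The coordinator's side of this protocol can be sketched as follows. This is a simplified illustration, not a production transaction manager: the `Participant` interface, the `SimpleParticipant` class, and the `run` method are hypothetical names invented for the example, and participants are plain in-process objects rather than remote nodes. The sketch covers the voting phase, the timeout that treats a silent node as a "no" vote, and the outcome phase. It does not model the opposite failure described above, in which a prepared node waits on a coordinator that has died.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

/** Hypothetical participant contract; real resource managers expose something richer. */
interface Participant {
    boolean prepare();   // phase 1: vote yes (true) or no (false), holding locks if yes
    void commit();       // phase 2: make the change permanent and release locks
    void rollback();     // phase 2: undo the change and release locks
}

/** Trivial participant that always casts the same vote and records its last state. */
class SimpleParticipant implements Participant {
    private final boolean vote;
    volatile String state = "idle";

    SimpleParticipant(boolean vote) { this.vote = vote; }

    public boolean prepare() { state = "prepared"; return vote; }
    public void commit() { state = "committed"; }
    public void rollback() { state = "rolled back"; }
}

class TwoPhaseCommitSketch {
    /** Returns true if every participant committed, false if the transaction was aborted. */
    static boolean run(List<? extends Participant> participants, long voteTimeoutMillis) {
        ExecutorService pool = Executors.newCachedThreadPool();
        try {
            // Phase 1: ask every participant to prepare, then collect the votes.
            List<Future<Boolean>> votes = new ArrayList<>();
            for (Participant p : participants) {
                Callable<Boolean> ask = p::prepare;
                votes.add(pool.submit(ask));
            }
            boolean allYes = true;
            for (Future<Boolean> vote : votes) {
                try {
                    boolean yes = vote.get(voteTimeoutMillis, TimeUnit.MILLISECONDS);
                    allYes = allYes && yes;
                } catch (Exception e) {
                    allYes = false; // a timeout or failure is treated as a "no" vote
                }
            }
            // Phase 2: tell every participant the outcome.
            for (Participant p : participants) {
                if (allYes) {
                    p.commit();
                } else {
                    p.rollback();
                }
            }
            return allYes;
        } finally {
            pool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        SimpleParticipant orders = new SimpleParticipant(true);
        SimpleParticipant inventory = new SimpleParticipant(false); // this node cannot commit
        System.out.println(run(Arrays.asList(orders, inventory), 500));
        System.out.println(orders.state);
    }
}
```

Notice that between a participant's "yes" vote and the arrival of the outcome, that participant must keep its resources locked. The longer the slowest participant takes, the longer everyone else waits.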

So in order to account for these shortcomings in two-phase commit of distributed

transactions, the database world turned to the idea of compensation. Compensation,

often used in web services, means in simple terms that the operation is immediately

committed, and then in the event that some error is reported, a new operation is invoked

to restore proper state.
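A compensating approach might look something like the following sketch. The `Step` class, the `runAll` method, and the sample order steps are all hypothetical and exist only to illustrate the idea: each operation takes effect immediately, and each is paired with an action that restores proper state if a later operation reports an error.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

class CompensationSketch {
    /** A hypothetical unit of work paired with the action that undoes it. */
    static class Step {
        final Runnable action;
        final Runnable compensation;

        Step(Runnable action, Runnable compensation) {
            this.action = action;
            this.compensation = compensation;
        }
    }

    /** Runs steps in order; returns true if all succeeded, false if compensations were run. */
    static boolean runAll(List<Step> steps) {
        Deque<Step> completed = new ArrayDeque<>();
        for (Step step : steps) {
            try {
                step.action.run();      // takes effect immediately; no locks are held
                completed.push(step);
            } catch (RuntimeException e) {
                // Undo the steps that were already applied, most recent first.
                while (!completed.isEmpty()) {
                    completed.pop().compensation.run();
                }
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        List<Step> order = new ArrayList<>();
        order.add(new Step(() -> log.add("charge card"), () -> log.add("refund card")));
        order.add(new Step(() -> log.add("reserve seat"), () -> log.add("release seat")));
        order.add(new Step(() -> { throw new IllegalStateException("email service down"); },
                           () -> { }));
        System.out.println(runAll(order));
        System.out.println(log);
    }
}
```

The trade-off is visible in the log: for a short time the card really was charged and the seat really was reserved, so other readers could have observed state that was later undone. Whether that is acceptable depends on the application.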

There are a few basic, well-known patterns for compensatory action that architects

frequently have to consider as an alternative to two-phase commit. These include writing off the transaction if it fails, or deciding to discard erroneous transactions and reconcile later. Another alternative is to retry failed operations later on notification.

In a reservation system or a stock sales ticker, these are not likely to meet your require-

ments. For other kinds of applications, such as billing or ticketing applications, this

can be acceptable.

Gregor Hohpe, a Google architect, wrote a wonderful and often-cited

blog entry called “Starbucks Does Not Use Two-Phase Commit.” It

shows in real-world terms how difficult it is to scale two-phase commit

and highlights some of the alternatives that are mentioned here. Check

it out at http://www.eaipatterns.com/ramblings/18_starbucks.html. It’s

an easy, fun, and enlightening read.

The problems that 2PC introduces for application developers include loss of availability

and higher latency during partial failures. Neither of these is desirable. So once you’ve

had the good fortune of being successful enough to necessitate scaling your database

past a single machine, you now have to figure out how to handle transactions across

multiple machines and still make the ACID properties apply. Whether you have 10 or

100 or 1,000 database machines, atomicity is still required in transactions as if you were

working on a single node. But it’s now a much, much bigger pill to swallow.

Schema

One often-lauded feature of relational database systems is the rich schemas they afford.

You can represent your domain objects in a relational model. A whole industry has

sprung up around (expensive) tools such as the CA ERWin Data Modeler to support

this effort. In order to create a properly normalized schema, however, you are forced

to create tables that don’t exist as business objects in your domain. For example, a

schema for a university database might require a Student table and a Course table. But

because of the “many-to-many” relationship here (one student can take many courses

at the same time, and one course has many students at the same time), you have to

create a join table. This pollutes a pristine data model, where we’d prefer to just have

students and courses. It also forces us to create more complex SQL statements to join

these tables together. The join statements, in turn, can be slow.
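To see why the join table feels like an intruder, consider this small in-memory sketch of the university example. The data, the class name, and the `coursesFor` method are invented for illustration; the maps and the list stand in for the Student table, the Course table, and the join table that normalization forces on us.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class JoinTableSketch {
    // Rows of the two "business" tables, keyed by primary key (sample data is made up).
    static final Map<Integer, String> STUDENT = new HashMap<>();
    static final Map<Integer, String> COURSE = new HashMap<>();
    // The join table: each row is {student_id, course_id} and models nothing in the domain.
    static final List<int[]> STUDENT_COURSE = new ArrayList<>();

    static {
        STUDENT.put(1, "Alice");
        STUDENT.put(2, "Bob");
        COURSE.put(10, "Databases");
        COURSE.put(20, "Networks");
        STUDENT_COURSE.add(new int[] {1, 10});
        STUDENT_COURSE.add(new int[] {1, 20});
        STUDENT_COURSE.add(new int[] {2, 10});
    }

    /** Plays the role of a SQL join from the join table to the Course table for one student. */
    static List<String> coursesFor(int studentId) {
        List<String> names = new ArrayList<>();
        for (int[] row : STUDENT_COURSE) {      // scan the join table...
            if (row[0] == studentId) {
                names.add(COURSE.get(row[1]));  // ...then look up the second table
            }
        }
        return names;
    }

    public static void main(String[] args) {
        System.out.println(STUDENT.get(1) + " takes " + coursesFor(1));
    }
}
```

Answering even this simple question touches two structures, one of which exists only to connect the others. A real database would use indexes rather than a linear scan, but the extra hop remains.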

Again, in a system of modest size, this isn’t much of a problem. But complex queries

and multiple joins can become burdensomely slow once you have a large number of

rows in many tables to handle.

Finally, not all schemas map well to the relational model. One type of system that has

risen in popularity in the last decade is the complex event processing system, which

represents state changes in a very fast stream. It’s often useful to contextualize events

at runtime against other events that might be related in order to infer some conclusion

to support business decision making. Although event streams could be represented in

terms of a relational database, it is an uncomfortable stretch.

And if you’re an application developer, you’ll no doubt be familiar with the many

object-relational mapping (ORM) frameworks that have sprung up in recent years to

help ease the difficulty in mapping application objects to a relational model. Again, for

small systems, ORM can be a relief. But it also introduces new problems of its own,

such as extended memory requirements, and it often pollutes the application code with

increasingly unwieldy mapping code. Here’s an example of a Java method using

Hibernate to “ease the burden” of having to write the SQL code:

    @CollectionOfElements
    @JoinTable(name="store_description",
        joinColumns = @JoinColumn(name="store_code"))
    @MapKey(columns={@Column(name="for_store",length=3)})
    @Column(name="description")
    private Map<String, String> getMap() {
        return this.map;
    }
    //... etc.

Is it certain that we’ve done anything but move the problem here? Of course, with some

systems, such as those that make extensive use of document exchange, as with services

or XML-based applications, there are not always clear mappings to a relational data-

base. This exacerbates the problem.

Sharding and shared-nothing architecture

If you can’t split it, you can’t scale it.

—Randy Shoup, Distinguished Architect, eBay

Another way to attempt to scale a relational database is to introduce sharding to your

architecture. This has been used to good effect at large websites such as eBay, which

supports billions of SQL queries a day, and in other Web 2.0 applications. The idea

here is that you split the data so that instead of hosting all of it on a single server or

replicating all of the data on all of the servers in a cluster, you divide up portions of the

data horizontally and host them each separately.

For example, consider a large customer table in a relational database. The least dis-

ruptive thing (for the programming staff, anyway) is to vertically scale by adding CPU,

adding memory, and getting faster hard drives, but if you continue to be successful and

add more customers, at some point (perhaps into the tens of millions of rows), you’ll

likely have to start thinking about how you can add more machines. When you do so,

do you just copy the data so that all of the machines have it? Or do you instead divide

up that single customer table so that each database has only some of the records, with

their order preserved? Then, when clients execute queries, they put load only on the

machine that has the record they’re looking for, with no load on the other machines.

It seems clear that in order to shard, you need to find a good key by which to order

your records. For example, you could divide your customer records across 26 machines,

one for each letter of the alphabet, with each hosting only the records for customers

whose last names start with that particular letter. It’s likely this is not a good strategy,

however—there probably aren’t many last names that begin with “Q” or “Z,” so those

machines will sit idle while the “J,” “M,” and “S” machines spike. You could shard

according to something else, such as phone number, “member since” date, or the

name of the customer’s state. It all depends on how your specific data is likely to be

distributed.

There are three basic strategies for determining shard structure:

Feature-based shard or functional segmentation

This is the approach taken by Randy Shoup, Distinguished Architect at eBay, who

in 2006 helped bring their architecture into maturity to support many billions of

queries per day. Using this strategy, the data is split not by dividing records in a

single table (as in the customer example discussed earlier), but rather by splitting

into separate databases the features that don’t overlap with each other very much.

For example, at eBay, the users are in one shard, and the items for sale are in

another. At Flixster, movie ratings are in one shard and comments are in another.

This approach depends on understanding your domain so that you can segment

data cleanly.

Key-based sharding

In this approach, you find a key in your data that will evenly distribute it across

shards. So instead of simply storing one letter of the alphabet for each server as in

the (naive and improper) earlier example, you use a one-way hash on a key data

element and distribute data across machines according to the hash. It is common

in this strategy to find time-based or numeric keys to hash on.

Lookup table

In this approach, one of the nodes in the cluster acts as a “yellow pages” directory

and looks up which node has the data you’re trying to access. This has two obvious

disadvantages. The first is that you’ll take a performance hit every time you have

to go through the lookup table as an additional hop. The second is that the lookup

table becomes not only a bottleneck but also a single point of failure.
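The difference between the naive alphabet scheme and key-based sharding can be sketched in a few lines. This is an illustration under stated assumptions: the class and method names are hypothetical, MD5 is just one convenient one-way hash available in the standard Java library, and the first-letter method assumes last names that begin with an ASCII letter.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

class ShardRouterSketch {
    /** Naive scheme from the earlier example: one shard per first letter (A=0 ... Z=25). */
    static int shardByFirstLetter(String lastName) {
        return Character.toUpperCase(lastName.charAt(0)) - 'A';
    }

    /** Key-based scheme: hash the key one way, then take the result modulo the shard count. */
    static int shardByHash(String key, int shardCount) {
        try {
            byte[] digest = MessageDigest.getInstance("MD5")
                    .digest(key.getBytes(StandardCharsets.UTF_8));
            // Fold the first four digest bytes into an int.
            int h = ((digest[0] & 0xFF) << 24) | ((digest[1] & 0xFF) << 16)
                  | ((digest[2] & 0xFF) << 8) | (digest[3] & 0xFF);
            // floorMod keeps the result in [0, shardCount) even when h is negative.
            return Math.floorMod(h, shardCount);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is expected on every Java platform", e);
        }
    }

    public static void main(String[] args) {
        String[] customers = {"Smith", "Sanchez", "Suzuki", "Schmidt", "Quinn"};
        for (String name : customers) {
            System.out.println(name + ": letter shard " + shardByFirstLetter(name)
                    + ", hash shard " + shardByHash(name, 4));
        }
    }
}
```

Four of the five sample customers land on the same letter shard, while the hash spreads keys without regard to how the names happen to be spelled. One caveat about this particular sketch: with simple modulo placement, changing the shard count reassigns most keys, so adding a machine means moving a great deal of data.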

To read about how they used data sharding strategies to improve per-

formance at Flixster, see http://lsvp.wordpress.com/2008/06/20.

Sharding can minimize contention depending on your strategy and allows you not just

to scale horizontally, but then to scale more precisely, as you can add power to the

particular shards that need it.

Sharding could be termed a kind of “shared-nothing” architecture that’s specific to

databases. A shared-nothing architecture is one in which there is no centralized (shared)

state, but each node in a distributed system is independent, so there is no client con-

tention for shared resources. The term was first coined by Michael Stonebraker at

University of California at Berkeley in his 1986 paper “The Case for Shared Nothing.”

Shared Nothing was more recently popularized by Google, which has written systems

such as its Bigtable database and its MapReduce implementation that do not share

state, and are therefore capable of near-infinite scaling. The Cassandra database is a

shared-nothing architecture, as it has no central controller and no notion of master/

slave; all of its nodes are the same.

You can read the 1986 paper “The Case for Shared Nothing” online at

http://db.cs.berkeley.edu/papers/hpts85-nothing.pdf. It’s only a few pa-

ges. If you take a look, you’ll see that many of the features of shared-

nothing distributed data architecture, such as ease of high availability

and the ability to scale to a very large number of machines, are the very

things that Cassandra excels at.

MongoDB also provides auto-sharding capabilities to manage failover and node bal-

ancing. That many nonrelational databases offer this automatically and out of the box

is very handy; creating and maintaining custom data shards by hand is a wicked proposition. It’s good to understand sharding in terms of data architecture in general, but especially in terms of Cassandra, as it can take an approach similar to key-based sharding to distribute data across nodes, but does so automatically.

Summary

In summary, relational databases are very good at solving certain data storage problems,

but because of their focus, they also can create problems of their own when it’s time

to scale. Then, you often need to find a way to get rid of your joins, which means

denormalizing the data, which means maintaining multiple copies of data and seriously

disrupting your design, both in the database and in your application. Further, you

almost certainly need to find a way around distributed transactions, which will quickly

become a bottleneck. These compensatory actions are not directly supported in any

but the most expensive RDBMS. And even if you can write such a huge check, you still

need to carefully choose partitioning keys to the point where you can never entirely

ignore the limitation.

Perhaps more importantly, as we see some of the limitations of RDBMS and conse-

quently some of the strategies that architects have used to mitigate their scaling issues,

a picture slowly starts to emerge. It’s a picture that makes some NoSQL solutions seem

perhaps less radical and less scary than we may have thought at first, and more like a

natural expression and encapsulation of some of the work that was already being done

to manage very large databases.

Web Scale

An invention has to make sense in the world in which it

is finished, not the world in which it is started.

—Ray Kurzweil

Because of some of the inherent design decisions in RDBMS, it is not always as easy to

scale as some other, more recent possibilities that take the structure of the Web into

consideration. But it’s not only the structure of the Web we need to consider, but also

its phenomenal growth, because as more and more data becomes available, we need

architectures that allow our organizations to take advantage of this data in near real time

to support decision making and to offer new and more powerful features and

capabilities to our customers.

It has been said, though it is hard to verify, that the 17th-century English

poet John Milton had actually read every published book on the face of

the earth. Milton knew many languages (he was even learning Navajo

at the time of his death), and given that the total number of published

books at that time was in the thousands, this would have been possible.

The size of the world’s data stores has grown somewhat since then.

We all know the Web is growing. But let’s take a moment to consider some numbers

from the IDC research paper “The Expanding Digital Universe.” (The complete

paper is available at http://www.emc.com/collateral/analyst-reports/expanding-digital-idc-white-paper.pdf.)

• YouTube serves 100 million videos every day.

• Chevron accumulates 2TB of data every day.

• In 2006, the amount of data on the Internet was approximately 166 exabytes

(166EB). In 2010, that number reached nearly 1,000 exabytes. An exabyte is one

quintillion bytes, or one million terabytes. To put this statistic in perspective, 1EB

is roughly the equivalent of 50,000 years of DVD-quality video. 166EB is approx-

imately three million times the amount of information contained in all the books

ever written.

• Wal-Mart’s database of customer transactions is reputed to have stored 110 tera-

bytes in 2000, recording tens of millions of transactions per day. By 2004, it had

grown to half a petabyte.

• The movie Avatar required 1PB storage space, or the equivalent of a single MP3

song—if that MP3 were 32 years long (source: http://bit.ly/736XCz).

• As of May 2010, Google was provisioning 100,000 Android phones every day, all

of which have Internet access as a foundational service.

• In 1998, the number of email accounts was approximately 253 million. By 2010,

that number is closer to 2 billion.

As you can see, there is great variety to the kinds of data that need to be stored, pro-

cessed, and queried, and some variety to the businesses that use such data. Consider

not only customer data at familiar retailers or suppliers, and not only digital video

content, but also the required move to digital television and the explosive growth of

email, messaging, mobile phones, RFID, Voice Over IP (VoIP) usage, and more. We

now have Blu-ray players that stream movies and music. As we begin departing from

physical consumer media storage, the companies that provide that content—and the

third-party value-add businesses built around them—will require very scalable data

solutions. Consider too that as typical business application developers or database

administrators, we may be used to thinking of relational databases as the center of our

universe. You might then be surprised to learn that within corporations, around 80%

of data is unstructured.

Or perhaps you think the kind of scale afforded by NoSQL solutions such as Cassandra

doesn’t apply to you. And maybe it doesn’t. It’s very possible that you simply don’t have

a problem that Cassandra can help you with. But I’m not asking you to envision your

database and its data as they exist today and figure out ways to migrate to Cassandra.

That would be a very difficult exercise, with a payoff that might be hard to see. It’s

almost analytic that the database you have today is exactly the right one for your ap-

plication of today. But if you could incorporate a wider array of rich data sets to help

improve your applications, what kinds of qualities would you then be looking for in a

database? The question becomes: what kind of application would you want to have if

durability, elastic scalability, vast storage, and blazing-fast writes weren’t a problem?

In a world now working at web scale and looking to the future, Apache Cassandra

might be one part of the answer.

The Cassandra Elevator Pitch

Hollywood screenwriters and software startups are often advised to have their “elevator

pitch” ready. This is a summary of exactly what their product is all about—concise,

clear, and brief enough to deliver in just a minute or two, in the lucky event that they

find themselves sharing an elevator with an executive or agent or investor who might

consider funding their project. Cassandra has a compelling story, so let's boil it down

to an elevator pitch that you can present to your manager or colleagues should the

occasion arise.

Cassandra in 50 Words or Less

“Apache Cassandra is an open source, distributed, decentralized, elastically scalable,

highly available, fault-tolerant, tuneably consistent, column-oriented database that

bases its distribution design on Amazon’s Dynamo and its data model on Google’s

Bigtable. Created at Facebook, it is now used at some of the most popular sites on the

Web.” That’s exactly 50 words.

Of course, if you were to recite that to your boss in the elevator, you'd probably get a

blank look in return. So let's break down the key points in the following sections.

Distributed and Decentralized

Cassandra is distributed, which means that it is capable of running on multiple

machines while appearing to users as a unified whole. In fact, there is little point in

running a single Cassandra node. Although you can do it, and that’s acceptable for

getting up to speed on how it works, you quickly realize that you’ll need multiple

machines to realize any real benefit from running Cassandra. Much of its design and

code base is specifically engineered not only to make it work across many different

machines, but also to optimize performance across multiple data center racks, and

even across geographically dispersed data centers within a single Cassandra cluster.

You can confidently write data anywhere in the cluster and Cassandra will

get it.

Once you start to scale many other data stores (MySQL, Bigtable), some nodes need

to be set up as masters in order to organize other nodes, which are set up as slaves.

Cassandra, however, is decentralized, meaning that every node is identical; no Cas-

sandra node performs certain organizing operations distinct from any other node.

Instead, Cassandra features a peer-to-peer protocol and uses gossip to maintain and

keep in sync a list of nodes that are alive or dead.
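
To make the idea of gossip a little more concrete, here is a minimal Python sketch of peers trading membership information. It is an illustration only and not Cassandra's implementation: the names Node, gossip_round, and suspected_dead are hypothetical, the heartbeat counters are a simplifying assumption, and the sketch rotates through peers in a fixed order so that the run is repeatable, whereas a real gossiper would choose its peer at random.

```python
class Node:
    """A toy peer. Every node runs the same code; there is no master."""

    def __init__(self, name):
        self.name = name
        self.alive = True
        # Membership view: peer name -> highest heartbeat counter seen so far.
        self.heartbeats = {name: 0}

    def tick(self):
        """Bump our own heartbeat to signal that we are still running."""
        self.heartbeats[self.name] += 1

    def merge(self, other_view):
        """Keep the freshest heartbeat known for each peer."""
        for peer, beat in other_view.items():
            if beat > self.heartbeats.get(peer, -1):
                self.heartbeats[peer] = beat


def gossip_round(nodes, round_no):
    """Run one round: live nodes bump heartbeats, then swap views pairwise."""
    live = [n for n in nodes if n.alive]
    for node in live:
        node.tick()
    for node in live:
        peers = [n for n in live if n is not node]
        if not peers:
            continue
        # Assumption: rotate through peers so the run is repeatable.
        # A real gossiper would pick the peer at random.
        peer = peers[round_no % len(peers)]
        peer.merge(node.heartbeats)
        node.merge(peer.heartbeats)


def suspected_dead(observer, earlier_view):
    """Peers whose heartbeat has not advanced since an earlier snapshot."""
    return sorted(
        peer for peer, beat in observer.heartbeats.items()
        if peer != observer.name and beat <= earlier_view.get(peer, -1)
    )


nodes = [Node(f"node{i}") for i in range(5)]

for round_no in range(10):
    gossip_round(nodes, round_no)
all_known = all(len(n.heartbeats) == len(nodes) for n in nodes)

nodes[4].alive = False                     # node4 stops gossiping
for round_no in range(10, 15):
    gossip_round(nodes, round_no)
snapshot = dict(nodes[0].heartbeats)       # what node0 believed at this point
for round_no in range(15, 20):
    gossip_round(nodes, round_no)

print(all_known)                           # True
print(suspected_dead(nodes[0], snapshot))  # ['node4']
```

Notice that no node is special here: node0 works out that node4 has gone quiet purely from the views it has swapped with its peers, without asking a coordinator.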

The fact that Cassandra is decentralized means that there is no single point of failure.

All of the nodes in a Cassandra cluster function exactly the same. This is sometimes

referred to as “server symmetry.” Because they are all doing the same thing, by defini-

tion there can’t be a special host that is coordinating activities, as with the master/slave

setup that you see in MySQL, Bigtable, and so many others.

In many distributed data solutions (such as RDBMS clusters), you set up multiple cop-

ies of data on different servers in a process called replication, which copies the data to

multiple machines so that they can all serve simultaneous requests and improve

performance. Unlike in Cassandra, this process is typically not decentralized, but is

instead performed by defining a master/slave relationship. That is, not all of the servers

in this kind of cluster function in the same way. You configure your cluster by designating

one server as the master and others as slaves. The master acts as the authoritative

source of the data, and operates in a unidirectional relationship with the slave nodes,

which must synchronize their copies. If the master node fails, the whole database is in

jeopardy. The decentralized design is therefore one of the keys to Cassandra’s high

availability. Note that while we most often associate master/slave replication with the

RDBMS world, there are NoSQL databases such as MongoDB that follow the master/

slave scheme as well.

Decentralization, therefore, has two key advantages: it’s simpler to use than master/

slave, and it helps you avoid outages. It can be easier to operate and maintain a decen-

tralized store than a master/slave store because all nodes are the same. That means that

you don’t need any special knowledge to scale; setting up 50 nodes isn’t much different

from setting up one. There’s next to no configuration required to support it. Moreover,

in a master/slave setup, the master can become a single point of failure (SPOF). To

avoid this, you often need to add some complexity to the environment in the form of

multiple masters. Because all of the replicas in Cassandra are identical, failures of a

node won’t disrupt service.

In short, because Cassandra is distributed and decentralized, there is no single point

of failure, which supports high availability.

Elastic Scalability

Scalability is an architectural feature of a system that can continue serving a greater

number of requests with little degradation in performance. Vertical scaling—simply

adding more hardware capacity and memory to your existing machine—is the easiest

way to achieve this. Horizontal scaling means adding more machines that have all or

some of the data on them so that no one machine has to bear the entire burden of

serving requests. But then the software itself must have an internal mechanism for

keeping its data in sync with the other nodes in the cluster.

Elastic scalability refers to a special property of horizontal scalability. It means that

your cluster can seamlessly scale up and scale back down. To do this, the cluster must

be able to accept new nodes that can begin participating by getting a copy of some or

all of the data and start serving new user requests without major disruption or recon-

figuration of the entire cluster. You don’t have to restart your process. You don’t have

to change your application queries. You don’t have to manually rebalance the data

yourself. Just add another machine—Cassandra will find it and start sending it work.
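
One way to see why a new machine can join without reshuffling the whole cluster is to sketch a hash ring. The Python below is a rough illustration under stated assumptions, not Cassandra's partitioner: the Ring class and the token and owner functions are hypothetical names, each node gets exactly one position on the ring, and SHA-256 is used only as a convenient stable hash.

```python
import bisect
import hashlib


def token(value):
    """Place a string on the ring as a large integer.

    SHA-256 is used here only as a convenient, stable hash for the illustration.
    """
    return int(hashlib.sha256(value.encode("utf-8")).hexdigest(), 16)


class Ring:
    """A toy token ring with one token per node."""

    def __init__(self, node_names=()):
        self._tokens = []   # node tokens, kept sorted
        self._owners = {}   # token -> node name
        for name in node_names:
            self.add_node(name)

    def add_node(self, name):
        t = token(name)
        bisect.insort(self._tokens, t)
        self._owners[t] = name

    def remove_node(self, name):
        t = token(name)
        self._tokens.remove(t)
        del self._owners[t]

    def owner(self, key):
        """Return the node with the first token at or after the key's token."""
        i = bisect.bisect_left(self._tokens, token(key))
        # Keys past the largest token wrap around to the smallest one.
        return self._owners[self._tokens[i % len(self._tokens)]]


keys = [f"user{i}" for i in range(1000)]
ring = Ring(["node1", "node2", "node3"])
before = {k: ring.owner(k) for k in keys}

ring.add_node("node4")            # scale up
after = {k: ring.owner(k) for k in keys}
moved = [k for k in keys if before[k] != after[k]]

ring.remove_node("node4")         # scale back down
restored = {k: ring.owner(k) for k in keys}

print(len(moved), "of", len(keys), "keys changed owner")
print(all(after[k] == "node4" for k in moved))   # True
print(restored == before)                         # True
```

The only keys that change owner when node4 joins are the ones node4 takes over; everything else stays where it was, and removing node4 puts the ring back exactly as it started. That is the property that lets a cluster grow or shrink without a full rebalance.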

Scaling down, of course, means removing some of the processing capacity from your

cluster. You might have to do this if you move parts of your application to another

platform, or if your application loses users and you need to start selling off hardware.

Let’s hope that doesn’t happen. But if it does, you won’t need to upset the entire apple

cart to scale back.

High Availability and Fault Tolerance

In general architecture terms, the availability of a system is measured according to its

ability to fulfill requests. But computers can experience all manner of failure, from

hardware component failure to network disruption to data corruption. Any computer is

susceptible to these kinds of failure. There are of course very sophisticated (and often

prohibitively expensive) computers that can themselves mitigate many of these cir-

cumstances, as they include internal hardware redundancies and facilities to send

notification of failure events and hot swap components. But anyone can accidentally

break an Ethernet cable, and catastrophic events can beset a single data center. So for

a system to be highly available, it must typically include multiple networked computers,

and the software they’re running must then be capable of operating in a cluster and

have some facility for recognizing node failures and failing over requests to another part

of the system.

Cassandra is highly available. You can replace failed nodes in the cluster with no

downtime, and you can replicate data to multiple data centers to offer improved local

performance and prevent downtime if one data center experiences a catastrophe such

as fire or flood.

Tuneable Consistency

Consistency essentially means that a read always returns the most recently written

value. Consider two customers who are attempting to put the same item into their shopping

carts on an ecommerce site. If I place the last item in stock into my cart an instant after

you do, you should get the item added to your cart, and I should be informed that the

item is no longer available for purchase. This is guaranteed to happen when the state

of a write is consistent among all nodes that have that data.

But there’s no free lunch, and as we’ll see later, scaling data stores means making certain

trade-offs between data consistency, node availability, and partition tolerance. Cas-

sandra is frequently called “eventually consistent,” which is a bit misleading. Out of

the box, Cassandra trades some consistency in order to achieve total availability. But

Cassandra is more accurately termed “tuneably consistent,” which means it allows

you to easily decide the level of consistency you require, in balance with the level of

availability.
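
A small sketch may help show what "tuneable" means in practice. The Python below assumes the usual quorum arithmetic of Dynamo-style stores, which this section has not yet introduced: with N replicas, a write acknowledged by W of them, and a read that consults R of them, the read is certain to see the latest write whenever R + W > N. The ReplicaSet class and its methods are hypothetical, and the sketch deliberately freezes the worst case, in which the read consults the replicas least likely to have the new value and no background propagation has happened yet.

```python
from dataclasses import dataclass


@dataclass
class Versioned:
    value: str
    timestamp: int


class ReplicaSet:
    """N copies of one value, each tagged with the timestamp of its last write."""

    def __init__(self, n):
        self.replicas = [Versioned("", 0) for _ in range(n)]

    def write(self, value, timestamp, w):
        """Acknowledge the write once the first w replicas have stored it."""
        for i in range(w):
            self.replicas[i] = Versioned(value, timestamp)

    def read(self, r):
        """Consult the LAST r replicas (the worst case) and return the newest value."""
        answers = self.replicas[-r:]
        return max(answers, key=lambda v: v.timestamp).value


def read_after_write(n, w, r):
    """Write "old" everywhere, then "new" to w replicas, then read from r replicas."""
    replicas = ReplicaSet(n)
    replicas.write("old", timestamp=1, w=n)
    replicas.write("new", timestamp=2, w=w)
    return replicas.read(r)


# R + W > N: the read and write sets must overlap, so the read sees "new".
print(read_after_write(n=3, w=2, r=2))   # new
print(read_after_write(n=3, w=3, r=1))   # new

# R + W <= N: the sets can miss each other, so a stale "old" is possible.
print(read_after_write(n=3, w=1, r=1))   # old
```

The trade-off is visible in the parameters: raising W or R buys stronger consistency but requires more replicas to be reachable for each request, while lowering them keeps the system answering even when some replicas are down.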

Let’s take a moment to unpack this, as the term “eventual consistency” has caused

some uproar in the industry. Some practitioners hesitate to use a system that is descri-

bed as “eventually consistent.”

For detractors of eventual consistency, the broad argument goes something like this:

eventual consistency is maybe OK for social web applications where data doesn’t

really matter. After all, you’re just posting to mom what little Billy ate for breakfast,

and if it gets lost, it doesn’t really matter. But the data I have is actually really