Guide EC2020 Vle

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 355 [warning: Documents this large are best viewed by clicking the View PDF Link!]

Elements of econometrics

C. Dougherty

EC2020

2016

Undergraduate study in

Economics, Management,

Finance and the Social Sciences

This subject guide is for a 200 course offered as part of the University of London

International Programmes in Economics, Management, Finance and the Social Sciences.

This is equivalent to Level 5 within the Framework for Higher Education Qualifications in

England, Wales and Northern Ireland (FHEQ).

For more information about the University of London International Programmes

undergraduate study in Economics, Management, Finance and the Social Sciences, see:

www.londoninternational.ac.uk

This guide was prepared for the University of London International Programmes by:

Dr. C. Dougherty, Senior Lecturer, Department of Economics, London School of Economics

and Political Science.

With typesetting and proof-reading provided by:

James S. Abdey, BA (Hons), MSc, PGCertHE, PhD, Department of Statistics, London School

of Economics and Political Science.

This is one of a series of subject guides published by the University. We regret that due

to pressure of work the author is unable to enter into any correspondence relating to, or

arising from, the guide. If you have any comments on this subject guide, favourable or

unfavourable, please use the form at the back of this guide.

University of London International Programmes

Publications Office

Stewart House

32 Russell Square

London WC1B 5DN

United Kingdom

www.londoninternational.ac.uk

Published by: University of London

© University of London 2011

Reprinted with minor revisions 2016

The University of London asserts copyright over all material in this subject guide except

where otherwise indicated. All rights reserved. No part of this work may be reproduced

in any form, or by any means, without permission in writing from the publisher. We make

every effort to respect copyright. If you think we have inadvertently used your copyright

material, please let us know.

Contents

Contents

Preface 1

0.1 Introduction.................................. 1

0.2 What is econometrics, and why study it? . . . . . . . . . . . . . . . . . . 1

0.3 Aims...................................... 1

0.4 Learningoutcomes .............................. 2

0.5 How to make use of the textbook . . . . . . . . . . . . . . . . . . . . . . 3

0.6 How to make use of this subject guide . . . . . . . . . . . . . . . . . . . 3

0.7 How to make use of the website . . . . . . . . . . . . . . . . . . . . . . . 4

0.7.1 Slideshows............................... 4

0.7.2 Datasets ............................... 4

0.8 Online study resources . . . . . . . . . . . . . . . . . . . . . . . . . . . . 5

0.8.1 TheVLE ............................... 5

0.8.2 Making use of the Online Library . . . . . . . . . . . . . . . . . . 6

0.9 Prerequisite for studying this subject . . . . . . . . . . . . . . . . . . . . 6

0.10 Application of linear algebra to econometrics . . . . . . . . . . . . . . . . 7

0.11Theexamination ............................... 7

0.12Overview.................................... 9

0.13Learningoutcomes .............................. 10

0.14Additionalexercises.............................. 10

0.15 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 11

0.16 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 22

1 Simple regression analysis 27

1.1 Overview.................................... 27

1.2 Learningoutcomes .............................. 27

1.3 Additionalexercises.............................. 28

1.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 30

1.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 35

2 Properties of the regression coefficients and hypothesis testing 41

2.1 Overview.................................... 41

i

Contents

2.2 Learningoutcomes .............................. 41

2.3 Furthermaterial................................ 42

2.4 Additionalexercises.............................. 43

2.5 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 48

2.6 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 53

3 Multiple regression analysis 59

3.1 Overview.................................... 59

3.2 Learningoutcomes .............................. 59

3.3 Additionalexercises.............................. 60

3.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 63

3.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 64

4 Transformations of variables 69

4.1 Overview.................................... 69

4.2 Learningoutcomes .............................. 69

4.3 Furthermaterial................................ 70

4.4 Additionalexercises.............................. 72

4.5 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 74

4.6 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 77

5 Dummy variables 85

5.1 Overview.................................... 85

5.2 Learningoutcomes .............................. 85

5.3 Additionalexercises.............................. 85

5.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 94

5.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 100

6 Specification of regression variables 115

6.1 Overview.................................... 115

6.2 Learningoutcomes .............................. 115

6.3 Additionalexercises.............................. 116

6.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 123

6.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 129

7 Heteroskedasticity 145

7.1 Overview.................................... 145

7.2 Learningoutcomes .............................. 145

ii

Contents

7.3 Additionalexercises.............................. 145

7.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 152

7.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 159

8 Stochastic regressors and measurement errors 169

8.1 Overview.................................... 169

8.2 Learningoutcomes .............................. 169

8.3 Additionalexercises.............................. 170

8.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 172

8.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 180

9 Simultaneous equations estimation 185

9.1 Overview.................................... 185

9.2 Learningoutcomes .............................. 185

9.3 Furthermaterial................................ 186

9.4 Additionalexercises.............................. 187

9.5 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 194

9.6 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 199

10 Binary choice and limited dependent variable models, and maximum

likelihood estimation 213

10.1Overview.................................... 213

10.2Learningoutcomes .............................. 213

10.3Furthermaterial................................ 214

10.4Additionalexercises.............................. 219

10.5 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 225

10.6 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 231

11 Models using time series data 239

11.1Overview.................................... 239

11.2Learningoutcomes .............................. 239

11.3Additionalexercises.............................. 240

11.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 245

11.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 250

12 Properties of regression models with time series data 261

12.1Overview.................................... 261

12.2Learningoutcomes .............................. 261

iii

Contents

12.3Additionalexercises.............................. 262

12.4 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 269

12.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 273

13 Introduction to nonstationary time series 285

13.1Overview.................................... 285

13.2Learningoutcomes .............................. 285

13.3Furthermaterial................................ 286

13.4Additionalexercises.............................. 287

13.5 Answers to the starred exercises in the textbook . . . . . . . . . . . . . . 291

13.6 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 295

14 Introduction to panel data 299

14.1Overview.................................... 299

14.2Learningoutcomes .............................. 299

14.3Additionalexercises.............................. 300

14.4 Answer to the starred exercise in the textbook . . . . . . . . . . . . . . . 304

14.5 Answers to the additional exercises . . . . . . . . . . . . . . . . . . . . . 306

15 Regression analysis with linear algebra primer 313

15.1Overview.................................... 313

15.2Notation.................................... 314

15.3Testexercises ................................. 314

15.4 The multiple regression model . . . . . . . . . . . . . . . . . . . . . . . . 314

15.5 The intercept in a regression model . . . . . . . . . . . . . . . . . . . . . 315

15.6 The OLS regression coefficients . . . . . . . . . . . . . . . . . . . . . . . 316

15.7 Unbiasedness of the OLS regression coefficients . . . . . . . . . . . . . . . 317

15.8 The variance-covariance matrix of the OLS regression coefficients . . . . 317

15.9 The Gauss–Markov theorem . . . . . . . . . . . . . . . . . . . . . . . . . 319

15.10 Consistency of the OLS regression coefficients . . . . . . . . . . . . . . 319

15.11 Frisch–Waugh–Lovell theorem . . . . . . . . . . . . . . . . . . . . . . . 320

15.12 Exact multicollinearity . . . . . . . . . . . . . . . . . . . . . . . . . . . 323

15.13 Estimation of a linear combination of regression coefficients . . . . . . . 324

15.14 Testing linear restrictions . . . . . . . . . . . . . . . . . . . . . . . . . . 325

15.15 Weighted least squares and heteroskedasticity . . . . . . . . . . . . . . 325

15.16 IV estimators and TSLS . . . . . . . . . . . . . . . . . . . . . . . . . . 327

15.17 Generalised least squares . . . . . . . . . . . . . . . . . . . . . . . . . . 329

iv

Contents

15.18 Appendix A: Derivation of the normal equations . . . . . . . . . . . . . 330

15.19 Appendix B: Demonstration that bu0bu/(n−k) is an unbiased estimator

of σ2

u...................................... 332

15.20 Appendix C: Answers to the exercises . . . . . . . . . . . . . . . . . . . 334

A Syllabus for the EC2020 Elements of econometrics examination 341

A.1 Review: Random variables and sampling theory . . . . . . . . . . . . . . 341

A.2 Chapter 1 Simple regression analysis . . . . . . . . . . . . . . . . . . . . 341

A.3 Chapter 2 Properties of the regression coefficients . . . . . . . . . . . . . 342

A.4 Chapter 3 Multiple regression analysis . . . . . . . . . . . . . . . . . . . 342

A.5 Chapter 4 Transformation of variables . . . . . . . . . . . . . . . . . . . 343

A.6 Chapter 5 Dummy variables . . . . . . . . . . . . . . . . . . . . . . . . . 343

A.7 Chapter 6 Specification of regression variables . . . . . . . . . . . . . . . 343

A.8 Chapter 7 Heteroskedasticity . . . . . . . . . . . . . . . . . . . . . . . . . 343

A.9 Chapter 8 Stochastic regressors and measurement errors . . . . . . . . . 344

A.10 Chapter 9 Simultaneous equations estimation . . . . . . . . . . . . . . . 344

A.11 Chapter 10 Binary choice models and maximum likelihood estimation . . 344

A.12 Chapter 11 Models using time series data . . . . . . . . . . . . . . . . . . 345

A.13 Chapter 12 Autocorrelation . . . . . . . . . . . . . . . . . . . . . . . . . 345

A.14 Chapter 13 Introduction to nonstationary processes . . . . . . . . . . . . 346

v

Contents

vi

Preface

0.1 Introduction

0.2 What is econometrics, and why study it?

Econometrics is the application of statistical methods to the quantification and critical

assessment of hypothetical economic relationships using data. It is with the aid of

econometrics that we discriminate between competing economic theories and put

numerical clothing onto the successful ones. Econometric analysis may be motivated by

a simple desire to improve our understanding of how the economy works, at either the

microeconomic or macroeconomic level, but more often it is undertaken with a specific

objective in mind. In the private sector, the financial benefits that accrue from a

sophisticated understanding of relevant markets and an ability to predict change may

be the driving factor. In the public sector, the impetus may come from an awareness

that evidence-based policy initiatives are likely to have the greatest impact.

It is now generally recognised that nearly all professional economists, not just those

actually working with data, should have a basic understanding of econometrics. There

are two major benefits. One is that it facilitates communication between

econometricians and the users of their work. The other is the development of the ability

to obtain a perspective on econometric work and to make a critical evaluation of it.

Econometric work is more robust in some contexts than in others. Experience with the

practice of econometrics and a knowledge of the potential problems that can arise are

essential for developing an instinct for judging how much confidence should be placed

on the findings of a particular study.

Such is the importance of econometrics that, in common with intermediate

macroeconomics and microeconomics, an introductory course forms part of the core of

any serious undergraduate degree in economics and is a prerequisite for admission to a

serious Master’s level course in economics or finance.

0.3 Aims

The aim of EC2020 Elements of econometrics is to give you an opportunity to

develop an understanding of econometrics to a standard that will equip you to

understand and evaluate most applied analysis of cross-sectional data and to be able to

undertake such analysis yourself. The restriction to cross-sectional data (data raised at

one moment in time, often through a survey of households, individuals, or enterprises)

should be emphasised because the analysis of time series data (observations on a set of

variables over a period of time) is much more complex. Chapters 11 to 13 of the

textbook, Introduction to econometrics, and this subject guide are devoted to the

1

Preface

analysis of time series data, but, beyond very simple applications, the objectives are

confined to giving you an understanding of the problems involved and making you

aware of the need for a Master’s level course if you intend to work with such data.

Specifically the aims of the course are to:

develop an understanding of the use of regression analysis and related techniques

for quantifying economic relationships and testing economic theories

equip you to read and evaluate empirical papers in professional journals

provide you with practical experience of using mainstream regression programmes

to fit economic models.

0.4 Learning outcomes

By the end of this course, and having completed the Essential reading and activities,

you should be able to:

describe and apply the classical regression model and its application to

cross-sectional data

describe and apply the:

•Gauss–Markov conditions and other assumptions required in the application of

the classical regression model

•reasons for expecting violations of these assumptions in certain circumstances

•tests for violations

•potential remedial measures, including, where appropriate, the use of

instrumental variables

recognise and apply the advantages of logit, probit and similar models over

regression analysis when fitting binary choice models

competently use regression, logit and probit analysis to quantify economic

relationships using standard regression programmes (Stata and EViews) in simple

applications

describe and explain the principles underlying the use of maximum likelihood

estimation

apply regression analysis to fit time-series models using stationary time series, with

awareness of some of the econometric problems specific to time-series applications

(for example, autocorrelation) and remedial measures

recognise the difficulties that arise in the application of regression analysis to

nonstationary time series, know how to test for unit roots, and know what is meant

by cointegration.

2

0.5. How to make use of the textbook

0.5 How to make use of the textbook

The only reading required for this course is my textbook:

C. Dougherty, Introduction to econometrics (Oxford: Oxford University Press,

2016) fifth edition [ISBN 9780199676828].

The syllabus is the same as that for EC220 Introduction to econometrics, the

corresponding internal course at the London School of Economics. The textbook has

been written to cover it with very little added and nothing subtracted.

When writing a textbook, there is a temptation to include a large amount of non-core

material that may potentially be of use or interest to students. There is much to be said

for this, since it allows the same textbook to be used to some extent for reference as

well as a vehicle for a taught course. However, my textbook is stripped down to nearly

the bare minimum for two reasons. First, the core material provides quite enough

content for an introductory year-long course and I think that students should initially

concentrate on gaining a good understanding of it. Second, if the textbook is focused

narrowly on the syllabus, students can read through it as a continuous narrative

without a need for directional guidance. Obviously, this is particularly important for

those who are studying the subject on their own, as is the case for most of those

enrolled on EC2020 Elements of econometrics.

An examination syllabus is provided as an appendix to this subject guide, but its

function is mostly to indicate the expected depth of understanding of each topic, rather

than the selection of the topics themselves.

0.6 How to make use of this subject guide

The function of this subject guide differs from that of other subject guides you may be

using. Unlike those for other courses, this subject guide acts as a supplementary

resource, with the textbook as the main resource. Each chapter forms an extension to a

corresponding chapter in the textbook with the same title. You must have a copy of the

textbook to be able to study this course. The textbook will give you the information you

need to carry out the activities and achieve the learning outcomes in the subject guide.

The main purpose of the subject guide is to provide you with opportunities to gain

experience with econometrics through practice with exercises. Each chapter of the

subject guide falls into two parts. The first part begins with an overview of the

corresponding chapter in the textbook. Then there is a checklist of learning outcomes

anticipated as a result of studying the chapter in the textbook, doing the exercises in

the subject guide, and making use of the corresponding resources on the website.

Finally, in some of the chapters, comes a section headed ‘Further material’. This

consists of new topics that may be included in the next edition of the textbook. The

second part of each chapter consists of additional exercises, followed by answers to the

starred exercises in the text and answers to the additional exercises.

You should organise your studies in the following way:

first read this introductory chapter

3

Preface

read the Overview section from the Review chapter of the subject guide

read the Review chapter of the textbook and do the starred exercises

refer to the subject guide for answers to the starred exercises in the text and for

additional exercises

check that you have covered all the items in the learning outcomes section in the

subject guide.

You should repeat this process for each of the numbered chapters. Note that the subject

guide chapters have the same titles as the chapters in the text. In those chapters where

there is a ‘Further material’ section in the subject guide, this should be read after

reading the chapter in the textbook.

0.7 How to make use of the website

You should make full use of the resources available at the Online Resource Centre

maintained by the publisher, Oxford University Press (OUP):

www.oup.com/uk/orc/bin/9780199567089. Here you will find PowerPoint slideshows

that provide a graphical treatment of the topics covered in the textbook, data sets for

practical work and statistical tables.

0.7.1 Slideshows

In principle you will be able to acquire mastery of the subject by studying the contents

of the textbook with the support of this subject guide and doing the exercises

conscientiously. However, I strongly recommend that you do study all the slideshows as

well. Some do not add much to the material in the textbook, and these you can skim

through quickly. Some, however, provide a much more graphical treatment than is

possible with print and they should improve your understanding. Some present and

discuss regression results and other hands-on material that could not be included in the

text for lack of space, and they likewise should be helpful.

0.7.2 Data sets

To use the data sets, you must have access to a proper statistics application with

facilities for regression analysis, such as Stata or EViews. The student versions of such

applications are adequate for doing all, or almost all, the exercises and of course are

much cheaper than the professional ones. Product and pricing information can be

obtained from the applications’ websites, the URL usually being the name of the

application sandwiched between ‘www.’ and ‘.com’.

If you do not have access to a commercial econometrics application, you should use

gretl. This is a sophisticated application almost as powerful as the commercial ones, and

it is free. See the gretl manual on the OUP website for further information.

Whatever you do, do not be tempted to try to get by with the regression engines built

into some spreadsheet applications, such as Microsoft Excel. They are not remotely

4

0.8. Online study resources

adequate for your needs.

There are three major data sets on the website. The most important one, for the

purposes of this subject guide, is the Consumer Expenditure Survey (CES) data set.

You will find on the website versions in the formats used by Stata, EViews and gretl. If

you are using some other application, you should download the text version

(comma-delimited ASCII) and import it. Answers to all of the exercises are provided in

the relevant chapters of this subject guide.

The exercises for the CES data set cover Chapters 1–10 of the text. For Chapters

11–13, you should use the Demand Functions data set, another major data set, to do

the additional exercises in the corresponding chapters of this subject guide. Again you

should download the data set in the appropriate format. For these exercises, also,

answers are provided.

The third major data set on the website is the Educational Attainment and Earnings

Function data set, which provides practical work for the first 10 chapters of the text

and Chapter 14. No answers are provided, but many parallel examples will be found in

the text.

0.8 Online study resources

In addition to the subject guide and the Essential reading, it is crucial that you take

advantage of the study resources that are available online for this course, including the

VLE and the Online Library.

You can access the VLE, the Online Library and your University of London email

account via the Student Portal at: http://my.londoninternational.ac.uk

You should have received your login details for the Student Portal with your official

offer, which was emailed to the address that you gave on your application form. You

have probably already logged into the Student Portal in order to register! As soon as

you registered, you will automatically have been granted access to the VLE, Online

Library and your fully functional University of London email account.

If you forget your login details at any point, please email uolia.support@london.ac.uk

quoting your student number.

0.8.1 The VLE

The VLE, which complements this subject guide, has been designed to enhance your

learning experience, providing additional support and a sense of community. It forms an

important part of your study experience with the University of London and you should

access it regularly.

The VLE provides a range of resources for EMFSS courses:

Electronic study materials: All of the printed materials which you receive from

the University of London are available to download, to give you flexibility in how

and where you study.

Discussion forums: An open space for you to discuss interests and seek support

5

Preface

from your peers, working collaboratively to solve problems and discuss subject

material. Some forums are moderated by an LSE academic.

Videos: Recorded academic introductions to many subjects; interviews and

debates with academics who have designed the courses and teach similar ones at

LSE.

Recorded lectures: For a few subjects, where appropriate, various teaching

sessions of the course have been recorded and made available online via the VLE.

Audio-visual tutorials and solutions: For some of the first year and larger later

courses such as Introduction to Economics, Statistics, Mathematics and Principles

of Banking and Accounting, audio-visual tutorials are available to help you work

through key concepts and to show the standard expected in examinations.

Self-testing activities: Allowing you to test your own understanding of subject

material.

Study skills: Expert advice on getting started with your studies, preparing for

examinations and developing your digital literacy skills.

Note: Students registered for Laws courses also receive access to the dedicated Laws

VLE.

Some of these resources are available for certain courses only, but we are expanding our

provision all the time and you should check the VLE regularly for updates.

0.8.2 Making use of the Online Library

The Online Library (http://onlinelibrary.london.ac.uk) contains a huge array of journal

articles and other resources to help you read widely and extensively.

To access the majority of resources via the Online Library you will either need to use

your University of London Student Portal login details, or you will be required to

register and use an Athens login.

The easiest way to locate relevant content and journal articles in the Online Library is

to use the Summon search engine.

If you are having trouble finding an article listed in a reading list, try removing any

punctuation from the title, such as single quotation marks, question marks and colons.

For further advice, please use the online help pages

(http://onlinelibrary.london.ac.uk/resources/summon) or contact the Online Library

team: onlinelibrary@shl.london.ac.uk

0.9 Prerequisite for studying this subject

The prerequisite for studying this subject is a solid background in mathematics and

elementary statistical theory. The mathematics requirement is a basic understanding of

multivariate differential calculus. With regard to statistics, you must have a clear

understanding of what is meant by the sampling distribution of an estimator, and of the

6

0.10. Application of linear algebra to econometrics

principles of statistical inference and hypothesis testing. This is absolutely essential. I

find that most problems that students have with introductory econometrics are not

econometric problems at all but problems with statistics, or rather, a lack of

understanding of statistics. There are no short cuts. If you do not have this background

knowledge, you should put your study of econometrics on hold and study statistics first.

Otherwise there will be core parts of the econometrics syllabus that you do not begin to

understand.

In addition, it would be helpful if you have some knowledge of economics. However,

although the examples and exercises relate to economics, most of them are so

straightforward that a previous study of economics is not a requirement.

0.10 Application of linear algebra to econometrics

At the end of this subject guide you will find a primer on the application of linear

algebra (matrix algebra) to econometrics. It is not part of the syllabus for the

examination, and studying it is unlikely to confer any advantage for the examination. It

is provided for the benefit of those students who intend to take a further course in

econometrics, especially at the Master’s level. The present course is ambitious, by

undergraduate standards, in terms of its coverage of concepts and, above all, its focus

on the development of an intuitive understanding. For its purposes, it has been quite

sufficient and appropriate to work with uncomplicated regression models, typically with

no more than two explanatory variables.

However, when you progress to the next level, it is necessary to generalise the theory to

cover multiple regression models with many explanatory variables, and linear algebra is

ideal for this purpose. The primer does not attempt to teach it. There are many

excellent texts and there is no point in duplicating them. The primer assumes that such

basic study has already been undertaken, probably taking about 20 to 50 hours,

depending on the individual. It is intended to show how the econometric theory in the

text can be handled with this more advanced mathematical approach, thus serving as

preparation for the higher-level course.

0.11 The examination

Important: the information and advice given here are based on the examination

structure used at the time this subject guide was written. Please note that subject

guides may be used for several years. Because of this we strongly advise you to always

check both the current Programme regulations for relevant information about the

examination, and the VLE where you should be advised of any forthcoming changes.

You should also carefully check the rubric/instructions on the paper you actually sit

and follow those instructions.

Candidates should answer eight out of 10 questions in three hours: all of the questions

in Section A (8 marks each) and three questions from Section B (20 marks each).

A calculator may be used when answering questions on this paper and it must comply

in all respects with the specification given with your Admission Notice.

7

Preface

Remember, it is important to check the VLE for:

up-to-date information on examination and assessment arrangements for this course

where available, past examination papers and Examiners’ commentaries for the

course which give advice on how each question might best be answered.

8

0.12. Overview

Review: Random variables and

sampling theory

0.12 Overview

The textbook and this subject guide assume that you have previously studied basic

statistical theory and have a sound understanding of the following topics:

descriptive statistics (mean, median, quartile, variance, etc.)

random variables and probability

expectations and expected value rules

population variance, covariance, and correlation

sampling theory and estimation

unbiasedness and efficiency

loss functions and mean square error

normal distribution

hypothesis testing, including:

•ttests

•Type I and Type II error

•the significance level and power of a ttest

•one-sided versus two-sided ttests

confidence intervals

convergence in probability, consistency, and plim rules

convergence in distribution and central limit theorems.

There are many excellent textbooks that offer a first course in statistics. The Review

chapter of my textbook is not a substitute. It has the much more limited objective of

providing an opportunity for revising some key statistical concepts and results that will

be used time and time again in the course. They are central to econometric analysis and

if you have not encountered them before, you should postpone your study of

econometrics and study statistics first.

9

Preface

0.13 Learning outcomes

After working through the corresponding chapter in the textbook, studying the

corresponding slideshows, and doing the starred exercises in the textbook and the

additional exercises in this subject guide, you should be able to explain what is meant

by all of the items listed in the Overview. You should also be able to explain why they

are important. The concepts of efficiency, consistency, and power are often

misunderstood by students taking an introductory econometrics course, so make sure

that you aware of their precise meanings.

0.14 Additional exercises

[Note: Each chapter has a set of additional exercises. The answers to them are

provided at the end of the chapter after the answers to the starred exercises in the text.]

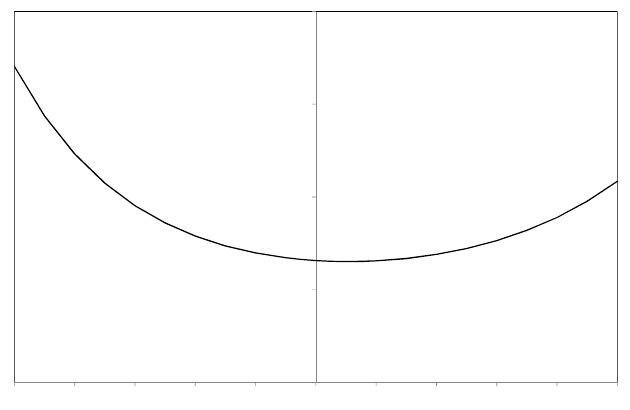

AR.1 A random variable Xhas a continuous uniform distribution from 0 to 2. Define its

probability density function.

X

( )

s.d. s.d.

acceptance regionrejection region rejection region

2.5%

2.5%

probability

density

0

2

X

AR.2 Find the expected value of Xin Exercise AR.1, using the expression given in Box

R.1 in the text.

AR.3 Derive E(X2) for Xdefined in Exercise AR.1, using the expression given in Box

R.1.

AR.4 Derive the population variance and the standard deviation of Xas defined in

Exercise AR.1, using the expression given in Box R.1.

AR.5 Using equation (R.9), find the variance of the random variable Xdefined in

Exercise AR.1 and show that the answer is the same as that obtained in Exercise

AR.4. (Note: You have already calculated E(X) in Exercise AR.2 and E(X2) in

Exercise AR.3.)

AR.6 In Table R.6, µ0and µ1were three standard deviations apart. Construct a similar

table for the case where they are two standard deviations apart.

10

0.15. Answers to the starred exercises in the textbook

AR.7 Suppose that a random variable Xhas a normal distribution with unknown mean µ

and variance σ2. To simplify the analysis, we shall assume that σ2is known. Given

a sample of observations, an estimator of µis the sample mean, X. An investigator

wishes to test H0:µ= 0 and believes that the true value cannot be negative. The

appropriate alternative hypothesis is therefore H1:µ > 0 and the investigator

decides to perform a one-sided test. However, the investigator is mistaken because

µcould in fact be negative. What are the consequences of erroneously performing a

one-sided test when a two-sided test would have been appropriate?

AR.8 Suppose that a random variable Xhas a normal distribution with mean µand

variance σ2. Given a sample of nindependent observations, it can be shown that:

bσ2=1

n−1XXi−X2

is an unbiased estimator of σ2. Is √bσ2either an unbiased or a consistent estimator

of σ?

0.15 Answers to the starred exercises in the textbook

R.2 A random variable Xis defined to be the larger of the two values when two dice

are thrown, or the value if the values are the same. Find the probability

distribution for X.

Answer:

The table shows the 36 possible outcomes. The probability distribution is derived

by counting the number of times each outcome occurs and dividing by 36. The

probabilities have been written as fractions, but they could equally well have been

written as decimals.

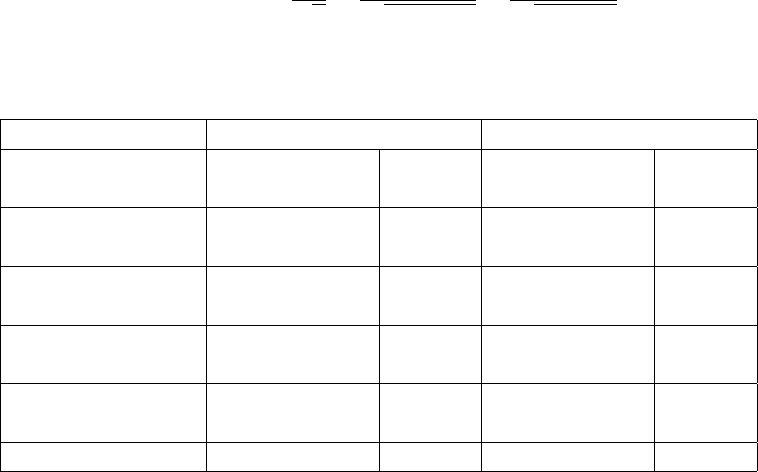

red123456

green

1 123456

2 223456

3 333456

4 444456

5 555556

6 666666

Value of X1 2 3 4 5 6

Frequency 1 3 5 7 9 11

Probability 1/36 3/36 5/36 7/36 9/36 11/36

11

Preface

R.4 Find the expected value of Xin Exercise R.2.

Answer:

The table is based on Table R.2 in the text. It is a good idea to guess the outcome

before doing the arithmetic. In this case, since the higher numbers have the largest

probabilities, the expected value should clearly lie between 4 and 5. If the

calculated value does not conform with the guess, it is possible that this is because

the guess was poor. However, it may be because there is an error in the arithmetic,

and this is one way of catching such errors.

X p Xp

1 1/36 1/36

2 3/36 6/36

3 5/36 15/36

4 7/36 28/36

5 9/36 45/36

6 11/36 66/36

Total 161/36 = 4.4722

R.7 Calculate E(X2) for Xdefined in Exercise R.2.

Answer:

The table is based on Table R.3 in the text. Given that the largest values of X2

have the highest probabilities, it is reasonable to suppose that the answer lies

somewhere in the range 15–30. The actual figure is 21.97.

X X2p X2p

1 1 1/36 1/36

2 4 3/36 12/36

3 9 5/36 45/36

4 16 7/36 112/36

5 25 9/36 225/36

6 36 11/36 396/36

Total 791/36 = 21.9722

R.10 Calculate the population variance and the standard deviation of Xas defined in

Exercise R.2, using the definition given by equation (R.8).

Answer:

The table is based on Table R.4 in the textbook. In this case it is not easy to make

a guess. The population variance is 1.97, and the standard deviation, its square

root, is 1.40. Note that four decimal places have been used in the working, even

though the estimate is reported to only two. This is to eliminate the possibility of

the estimate being affected by rounding error.

12

0.15. Answers to the starred exercises in the textbook

X p X −µX(X−µX)2(X−µX)2p

1 1/36 −3.4722 12.0563 0.3349

2 3/36 −2.4722 6.1119 0.5093

3 5/36 −1.4722 2.1674 0.3010

4 7/36 −0.4722 0.2230 0.0434

5 9/36 0.5278 0.2785 0.0696

6 11/36 1.5278 2.3341 0.7132

Total 1.9715

R.12 Using equation (R.9), find the variance of the random variable Xdefined in

Exercise R.2 and show that the answer is the same as that obtained in Exercise

R.10. (Note: You have already calculated µXin Exercise R.4 and E(X2) in

Exercise R.7.)

Answer:

E(X2) is 21.9722 (Exercise R.7). E(X) is 4.4722 (Exercise R.4), so µ2

Xis 20.0006.

Thus the variance is 21.9722 −20.0006 = 1.9716. The last-digit discrepancy

between this figure and that in Exercise R.10 is due to rounding error.

R.14 Suppose a variable Yis an exact linear function of X:

Y=λ+µX

where λand µare constants, and suppose that Zis a third variable. Show that

ρXZ =ρY Z

Answer:

We start by noting that Yi−Y=µXi−X. Then:

ρY Z =

EhYi−YZi−Zi

sEYi−Y2EZi−Z2

=

EhµXi−XZi−Zi

sEµ2Xi−X2Eµ2Zi−Z2

=

µE hXi−XZi−Zi

sµ2EXi−X2EZi−Z2

=ρXZ .

R.16 Show that, when you have nobservations, the condition that the generalised

estimator (λ1X1+··· +λnXn) should be an unbiased estimator of µXis

λ1+··· +λn= 1.

13

Preface

Answer:

E(Z) = E(λ1X1+··· +λnXn)

=E(λ1X1) + ··· +E(λnXn)

=λ1E(X1) + ··· +λnE(Xn)

=λ1µX+··· +λnµX

= (λ1+··· +λn)µX.

Thus E(Z) = µXrequires λ1+··· +λn= 1.

R.19 In general, the variance of the distribution of an estimator decreases when the

sample size is increased. Is it correct to describe the estimator as becoming more

efficient?

Answer:

No, it is incorrect. When the sample size increases, the variance of the estimator

decreases, and as a consequence it is more likely to give accurate results. Because it

is improving in this important sense, it is very tempting to describe the estimator

as becoming more efficient. But it is the wrong use of the term. Efficiency is a

comparative concept that is used when you are comparing two or more alternative

estimators, all of them being applied to the same data set with the same sample

size. The estimator with the smallest variance is said to be the most efficient. You

cannot use efficiency as suggested in the question because you are comparing the

variances of the same estimator with different sample sizes.

R.21 Suppose that you have observations on three variables X,Y, and Z, and suppose

that Yis an exact linear function of Z:

Y=λ+µZ

where λand µare constants. Show that bρXZ =bρXY . (This is the counterpart of

Exercise R.14.)

Answer:

We start by noting that Yi−Y=µZi−Z. Then:

bρXY =PXi−XYi−Y

rPXi−X2PYi−Y2

=PXi−XµZi−Z

rPXi−X2Pµ2Zi−Z2

=PXi−XZi−Z

rPXi−X2PZi−Z2

=bρXZ

14

0.15. Answers to the starred exercises in the textbook

R.26 Show that, in Figures R.18 and R.22, the probabilities of a Type II error are 0.15

in the case of a 5 per cent significance test and 0.34 in the case of a 1 per cent test.

Note that the distance between µ0and µ1is three standard deviations. Hence the

right-hand 5 per cent rejection region begins 1.96 standard deviations to the right

of µ0. This means that it is located 1.04 standard deviations to the left of µ1.

Similarly, for a 1 per cent test, the right-hand rejection region starts 2.58 standard

deviations to the right of µ0, which is 0.42 standard deviations to the left of µ1.

Answer:

For the 5 per cent test, the rejection region starts 3 −1.96 = 1.04 standard

deviations below µ1, given that the distance between µ1and µ0is 3 standard

deviations. See Figure R.18. According to the standard normal distribution table,

the cumulative probability of a random variable lying 1.04 standard deviations (or

less) above the mean is 0.8508. This implies that the probability of it lying 1.04

standard deviations below the mean is 0.1492. For the 1 per cent test, the rejection

region starts 3 −2.58 = 0.42 standard deviations below the mean. See Figure R.22.

The cumulative probability for 0.42 in the standard normal distribution table is

0.6628, so the probability of a Type II error is 0.3372.

R.27 Explain why the difference in the power of a 5 per cent test and a 1 per cent test

becomes small when the distance between µ0and µ1becomes large.

Answer:

The powers of both tests tend to one as the distance between µ0and µ1becomes

large. The difference in their powers must therefore tend to zero.

R.28 A random variable Xhas unknown population mean µ. A researcher has a sample

of observations with sample mean X. He wishes to test the null hypothesis

H0:µ=µ0. The figure shows the potential distribution of Xconditional on H0

being true. It may be assumed that the distribution is known to have variance

equal to one.

0

0

5% rejection region

X

f(X)

µ

0

The researcher decides to implement an unorthodox (and unwise) decision rule. He

decides to reject H0if Xlies in the central 5 per cent of the distribution (the tinted

area in the figure).

(a) Explain why his test is a 5 per cent significance test.

15

Preface

(b) Explain in intuitive terms why his test is unwise.

(c) Explain in technical terms why his test is unwise.

Answer:

The following discussion assumes that you are performing a 5 per cent significance

test, but it applies to any significance level.

If the null hypothesis is true, it does not matter how you define the 5 per cent

rejection region. By construction, the risk of making a Type I error will be 5 per

cent. Issues relating to Type II errors are irrelevant when the null hypothesis is true.

The reason that the central part of the conditional distribution is not used as a

rejection region is that it leads to problems when the null hypothesis is false. The

probability of not rejecting H0when it is false will be lower. To use the obvious

technical term, the power of the test will be lower.

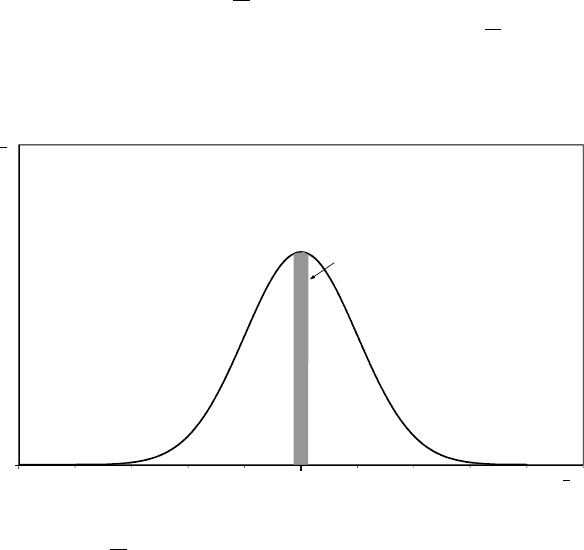

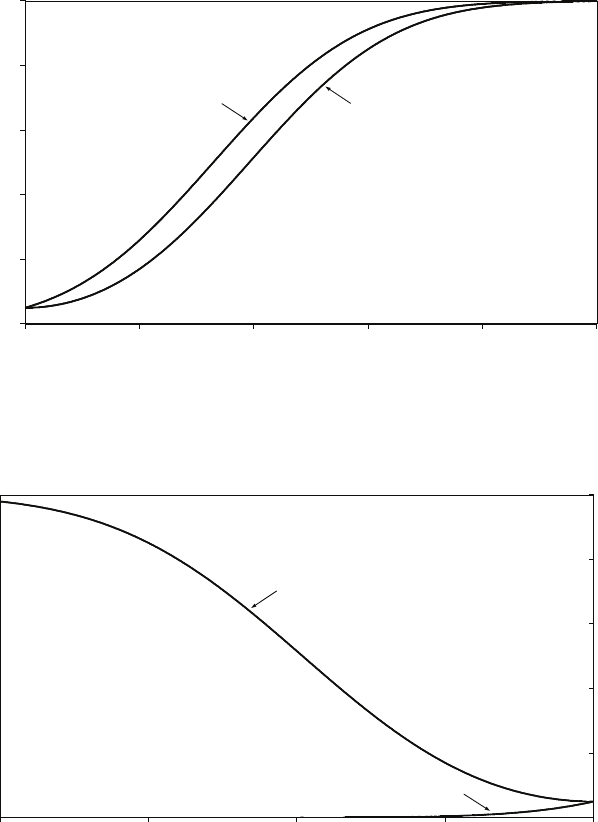

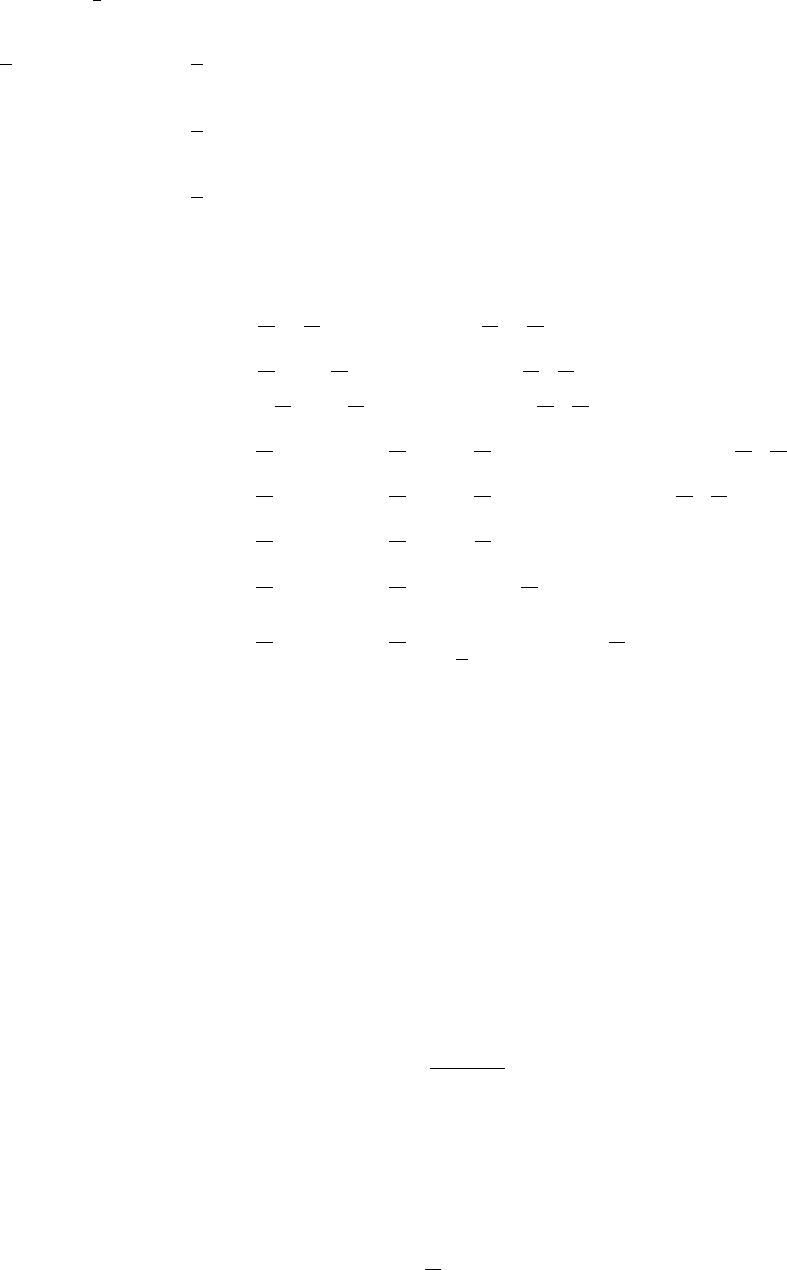

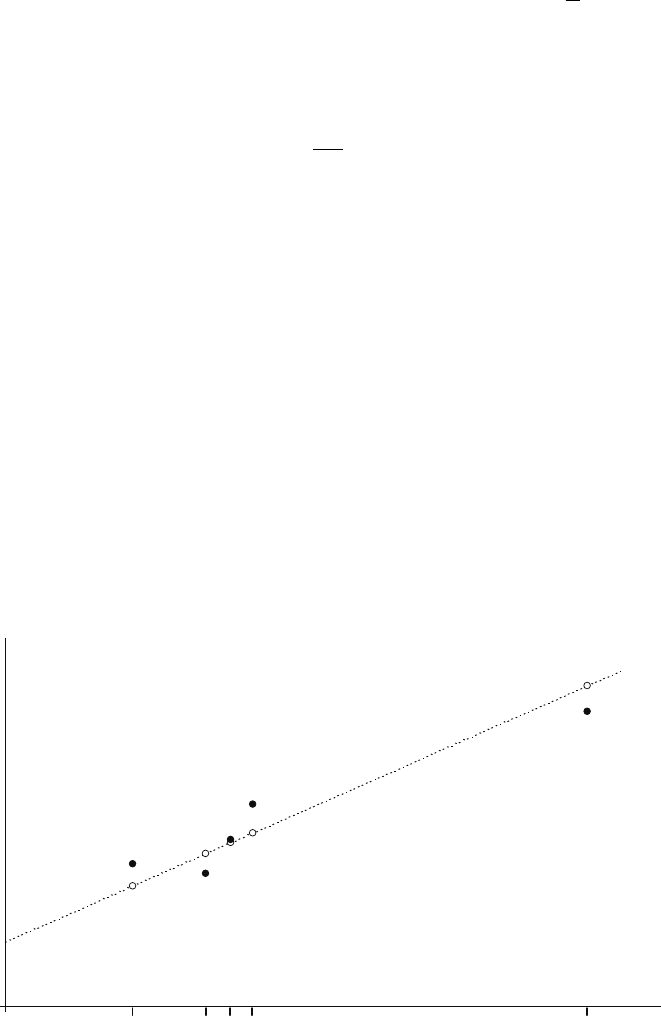

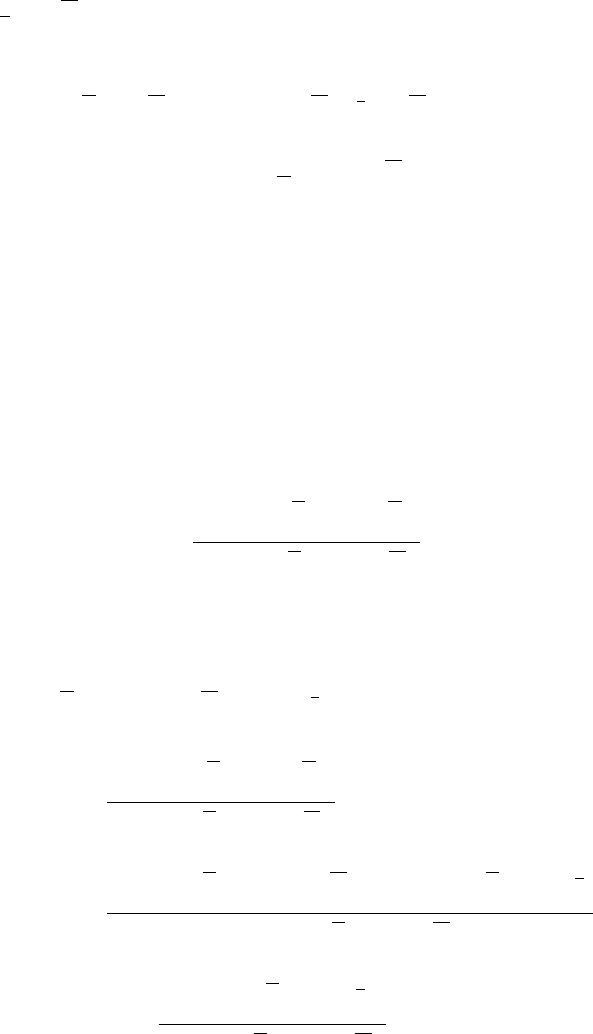

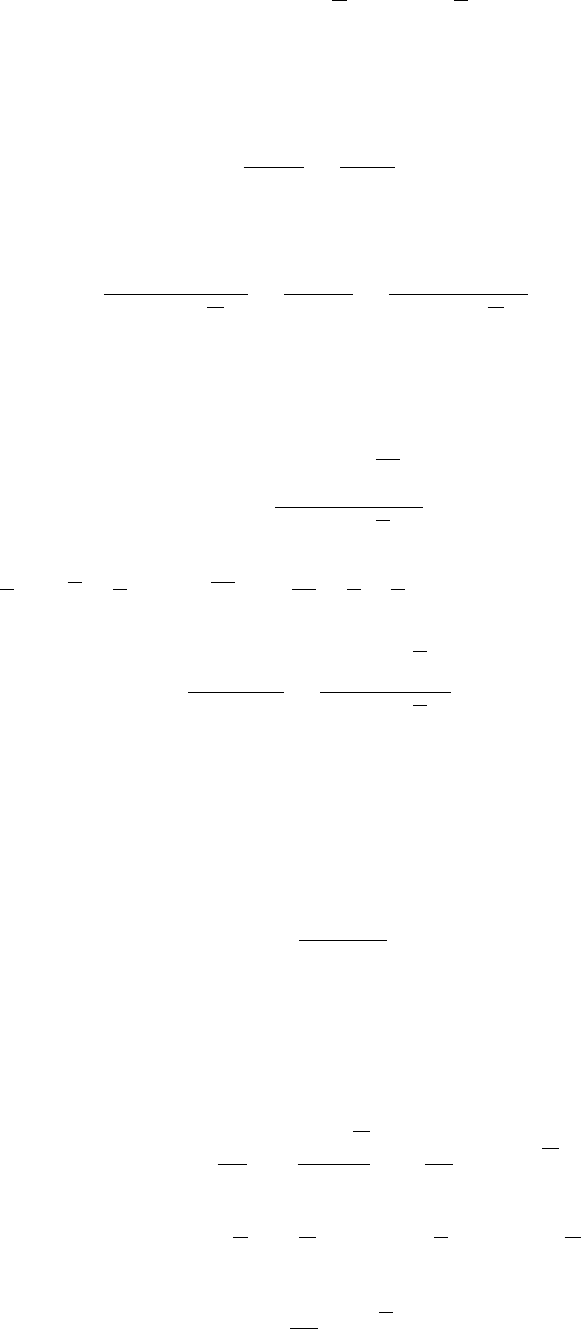

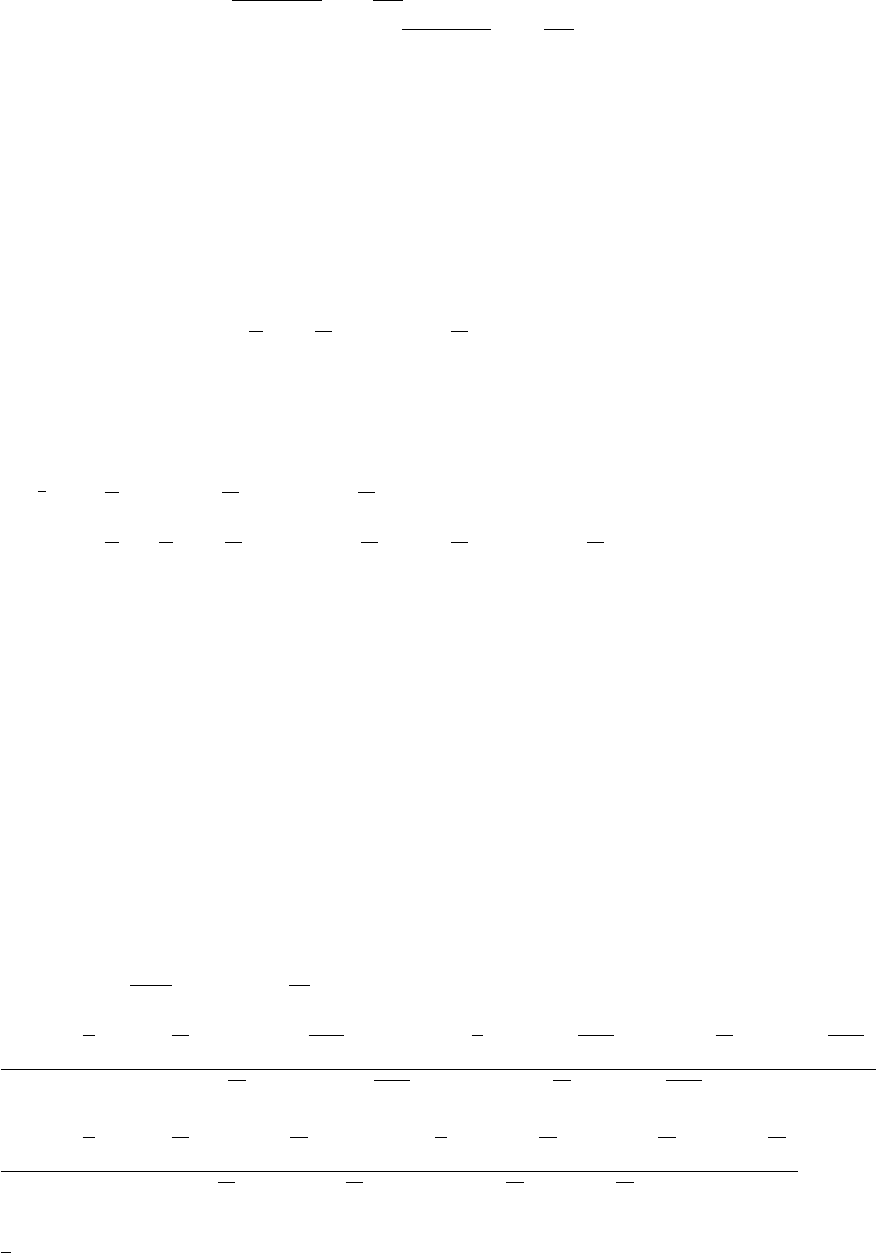

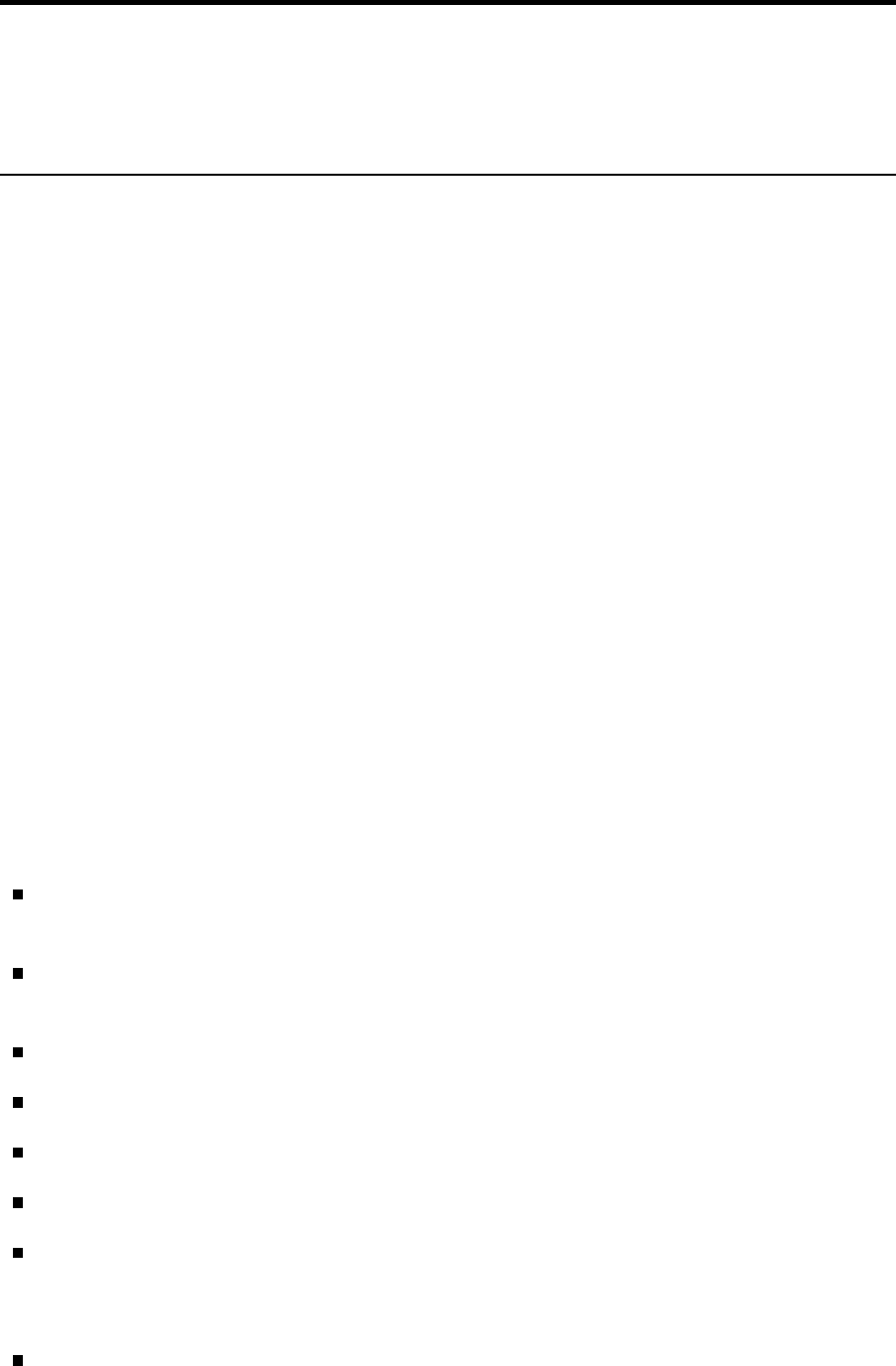

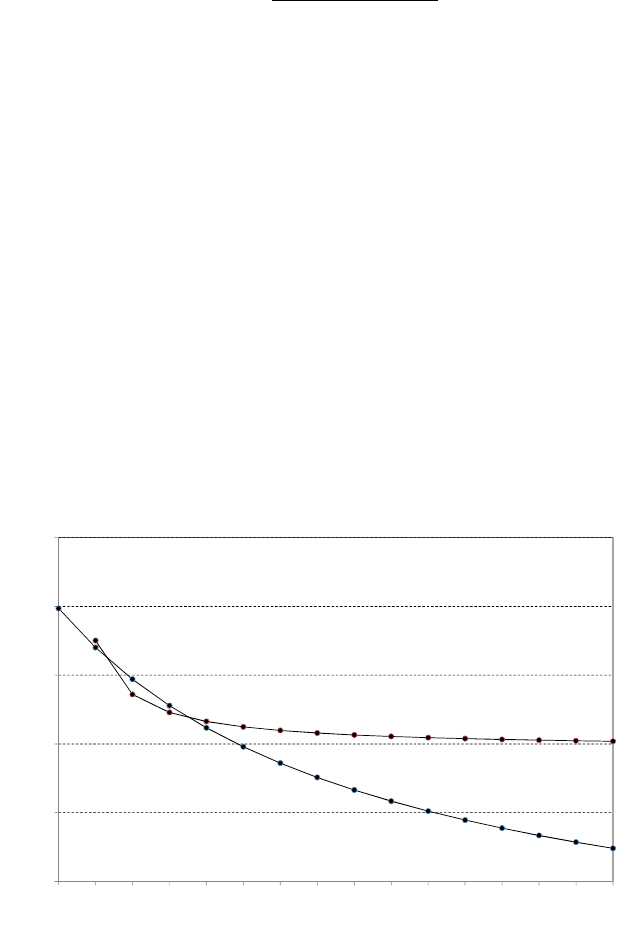

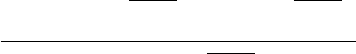

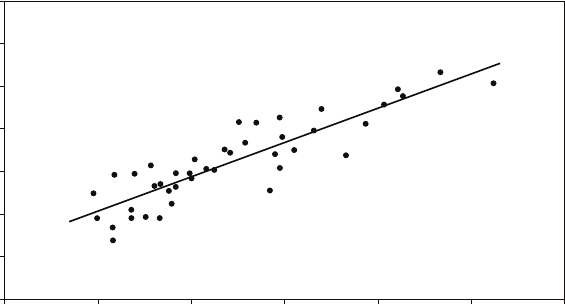

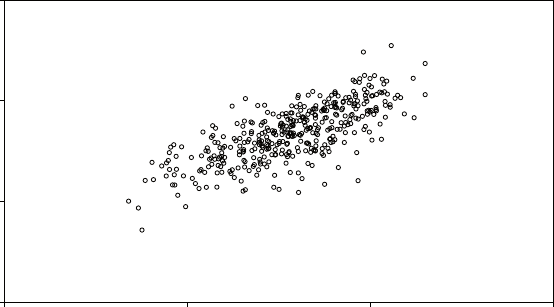

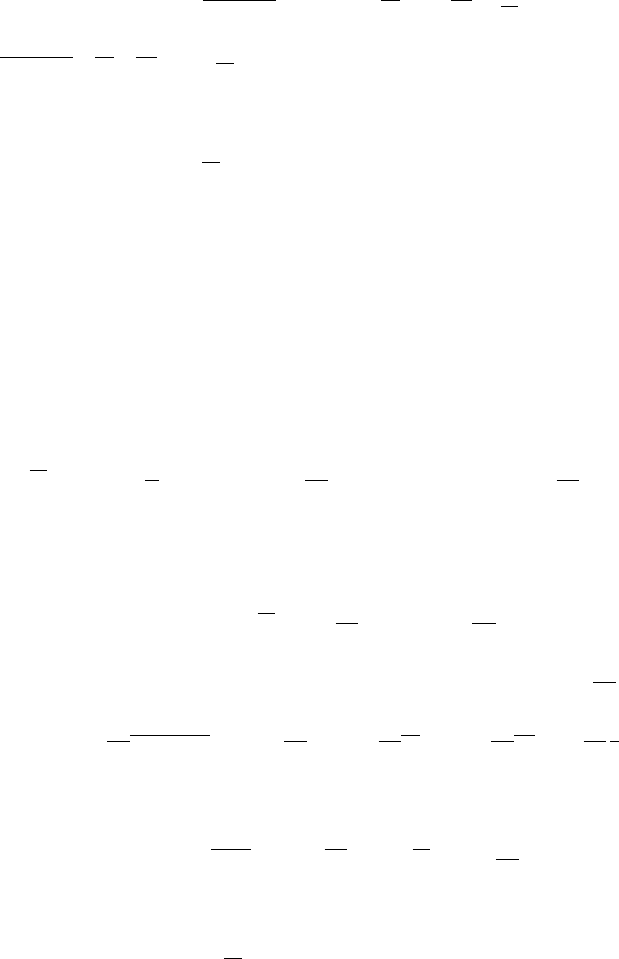

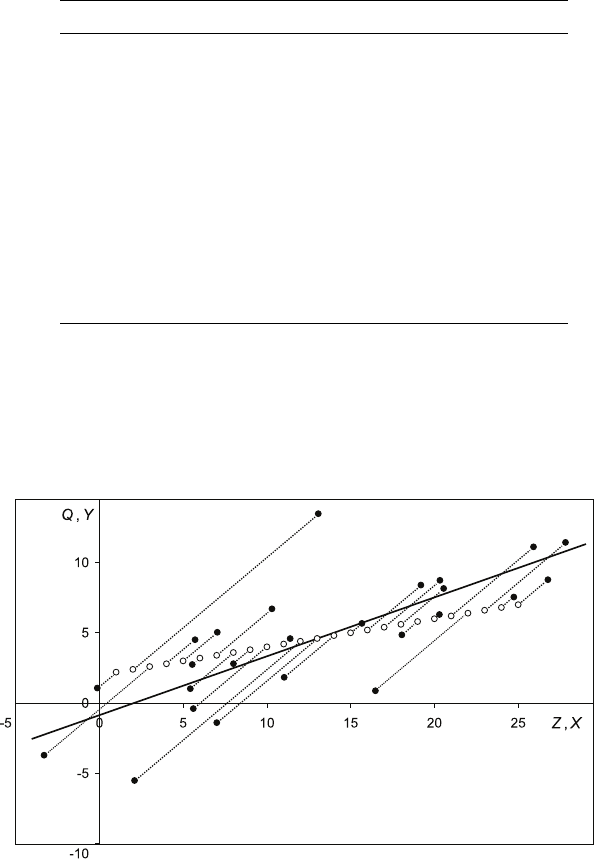

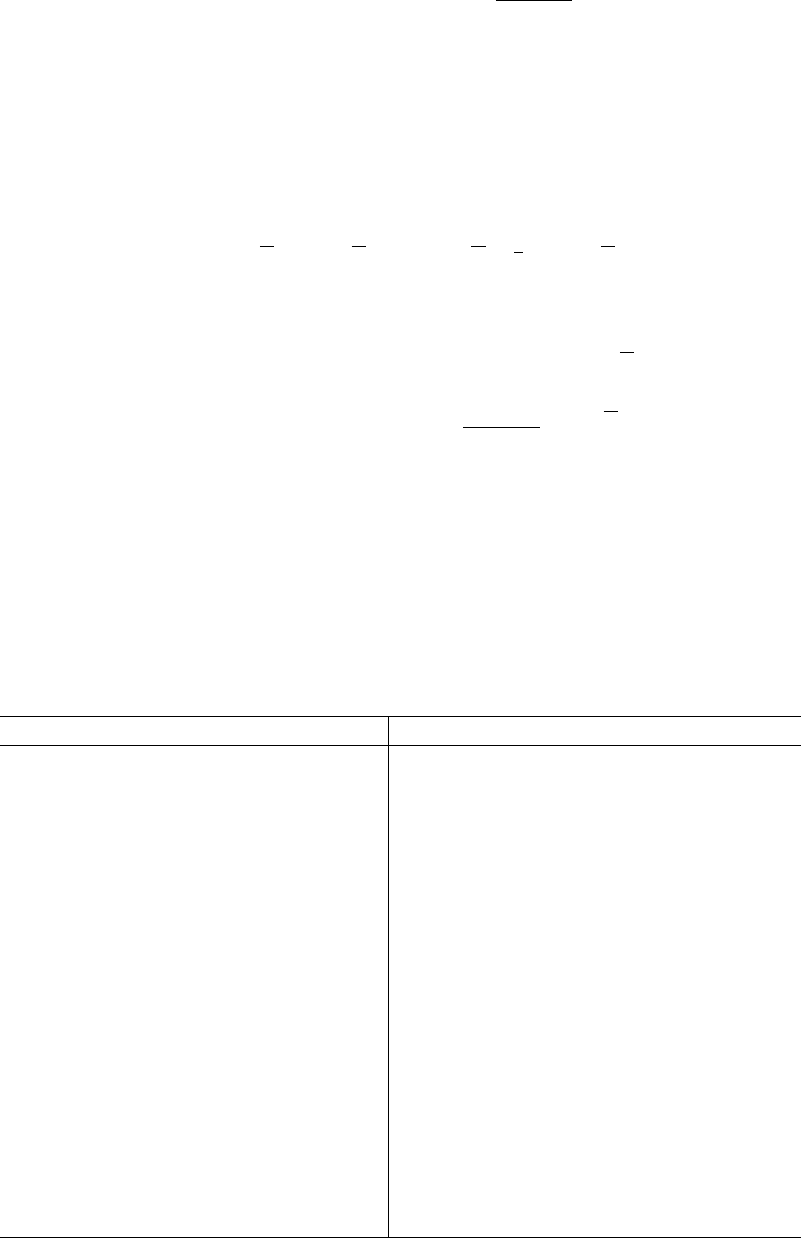

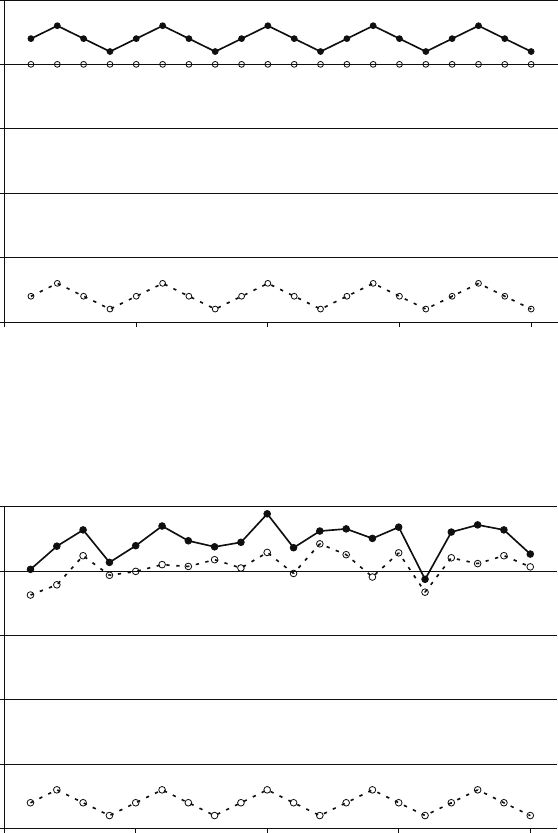

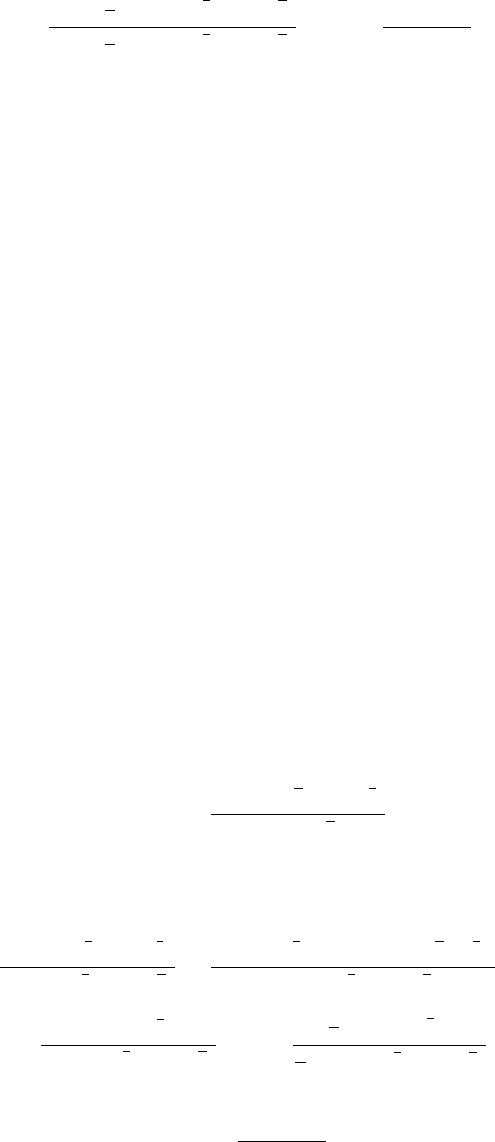

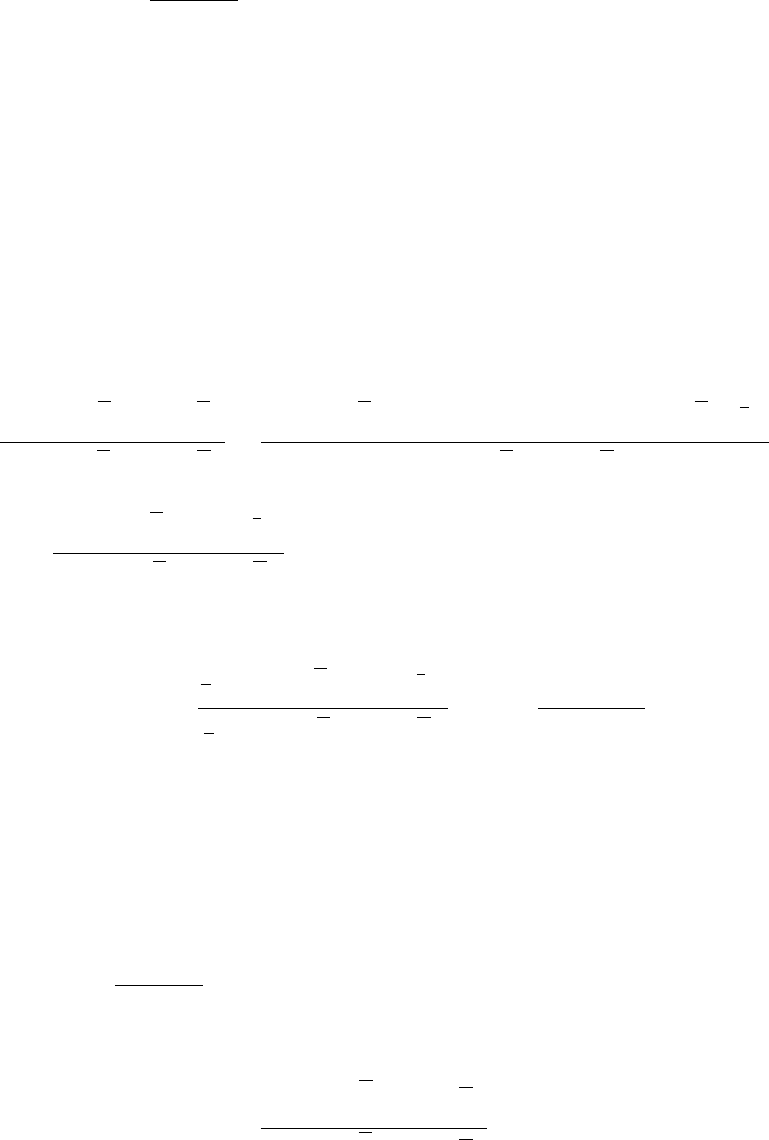

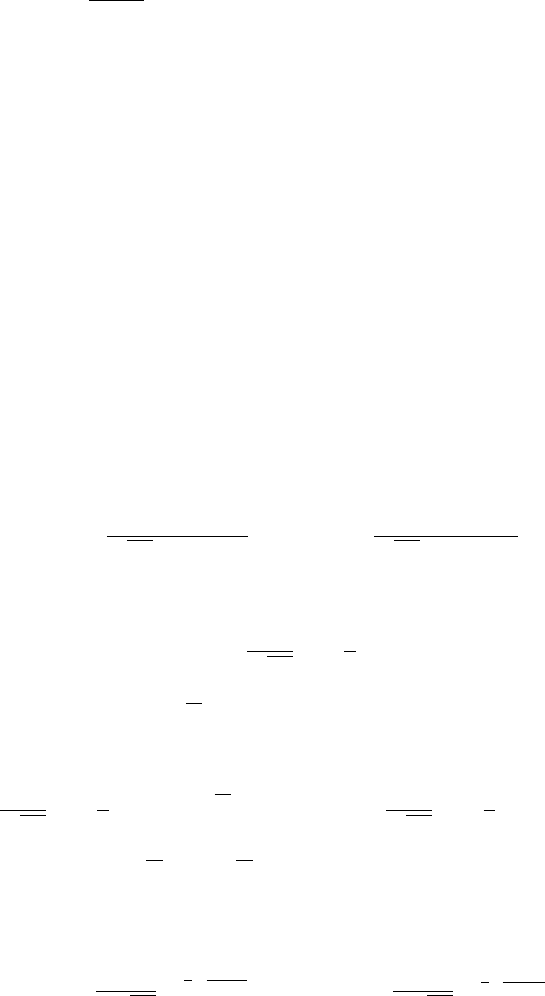

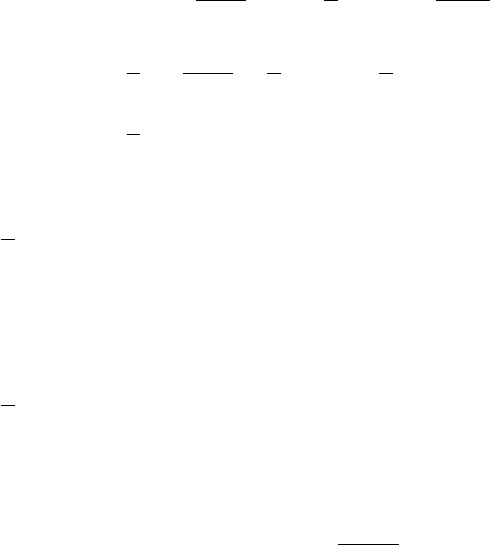

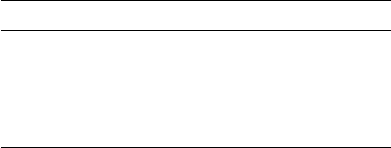

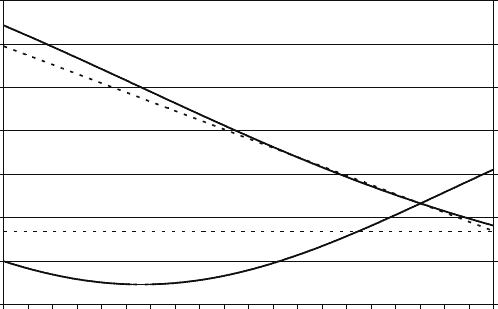

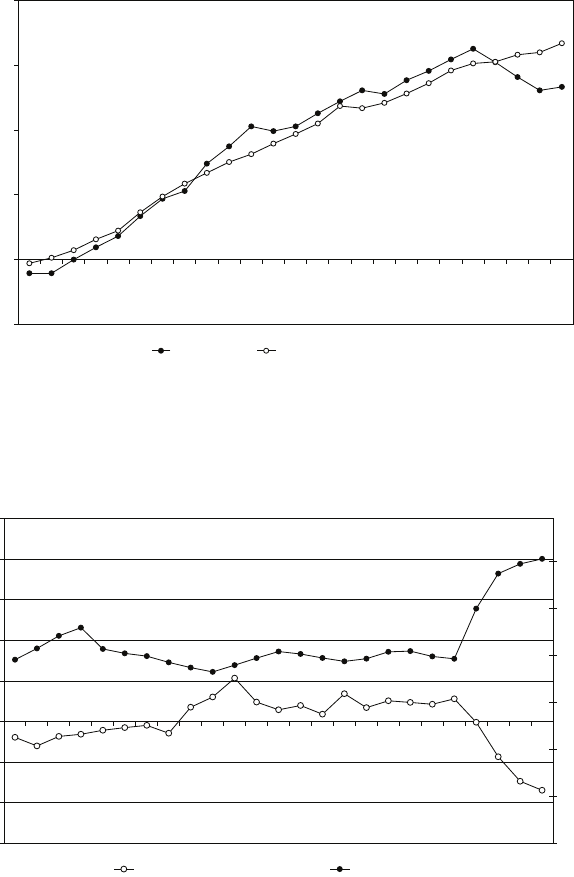

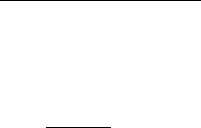

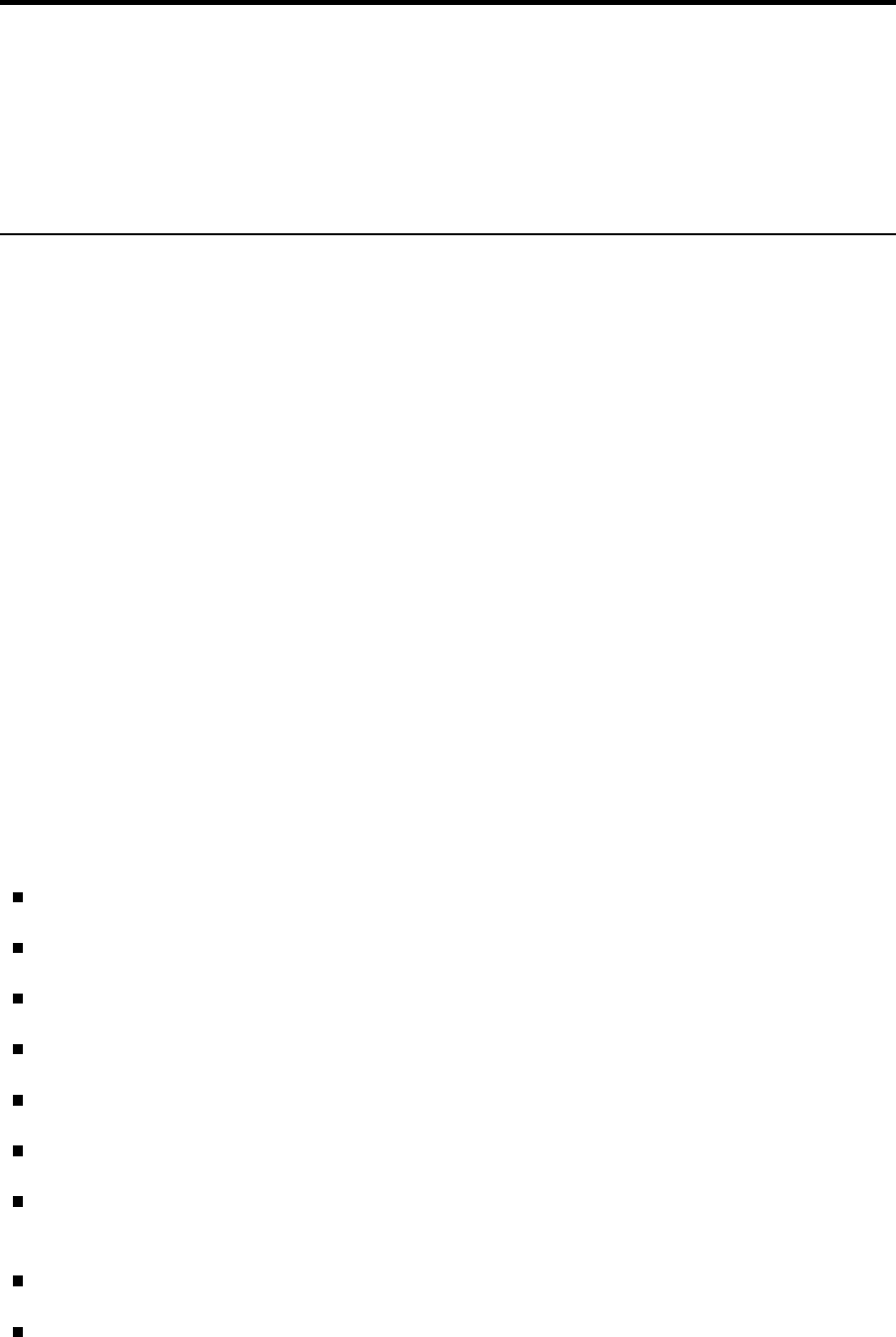

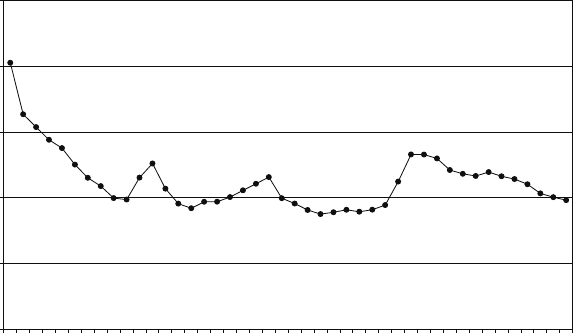

The figure shows the power functions for the test using the conventional upper and

lower 2.5 per cent tails and the test using the central region. The horizontal axis is

the difference between the true value and the hypothetical value µ0in terms of

standard deviations. The vertical axis is the power of the test. The first figure has

been drawn for the case where the true value is greater than the hypothetical value.

The second figure is for the case where the true value is lower than the hypothetical

value. It is the same, but reflected horizontally.

The greater the difference between the true value and the hypothetical mean, the

more likely is it that the sample mean will lie in the right tail of the distribution

conditional on H0being true, and so the more likely is it that the null hypothesis

will be rejected by the conventional test. The figure shows that the power of the

test approaches one asymptotically. However, if the central region of the

distribution is used as the rejection region, the probability of the sample mean

lying in it will diminish as the difference between the true and hypothetical values

increases, and the power of the test approaches zero asymptotically. This is an

extreme example of a very bad test procedure.

0.0

0.2

0.4

0.6

0.8

1.0

01234

conventional rejection region

(upper and lower 2.5% tails)

rejection region central 5%

Figure 1: Power functions of a conventional 5 per cent test and one using the central

region (true value > µ0).

16

0.15. Answers to the starred exercises in the textbook

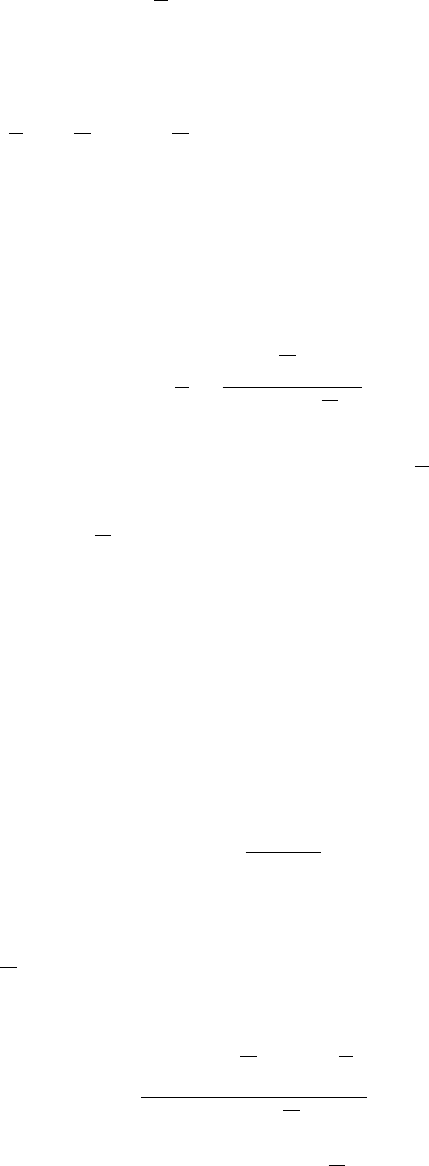

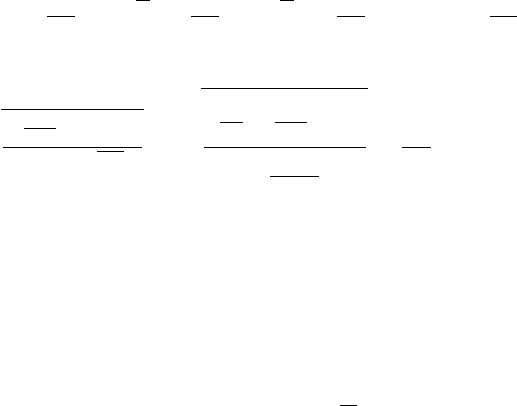

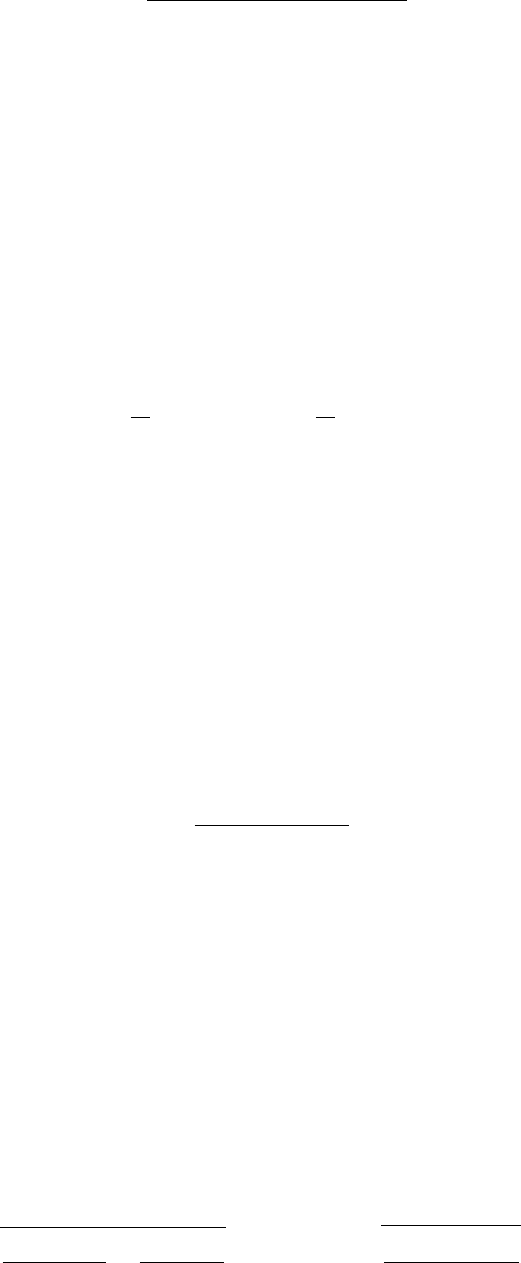

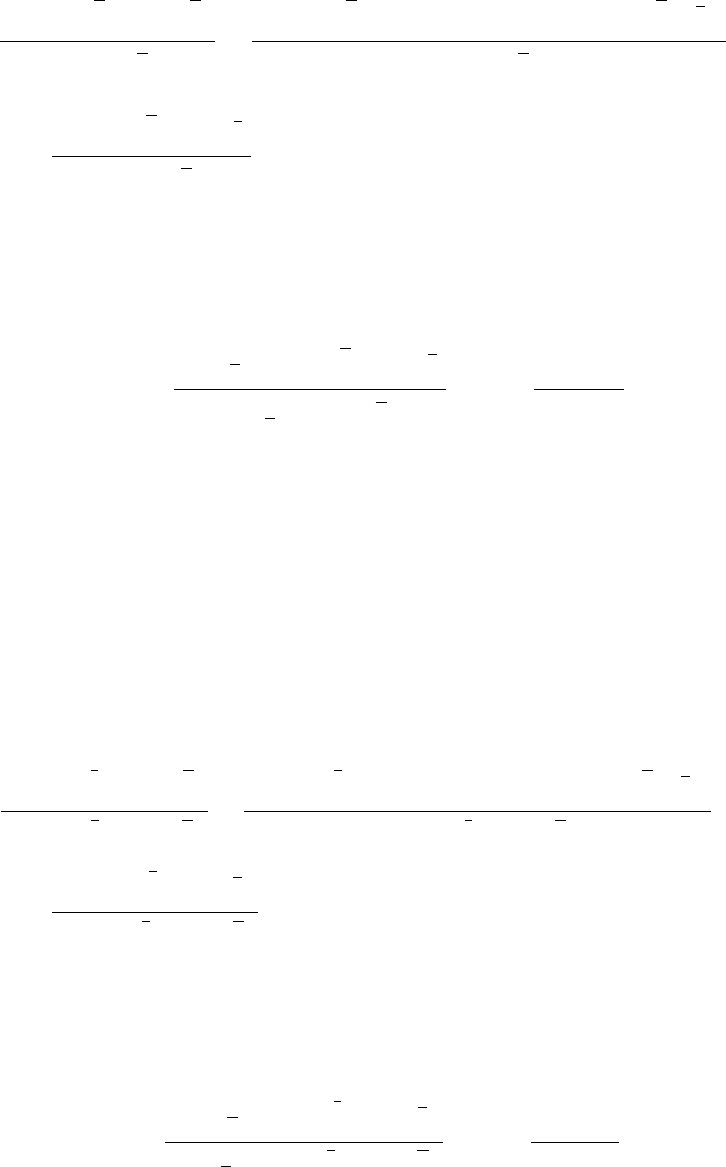

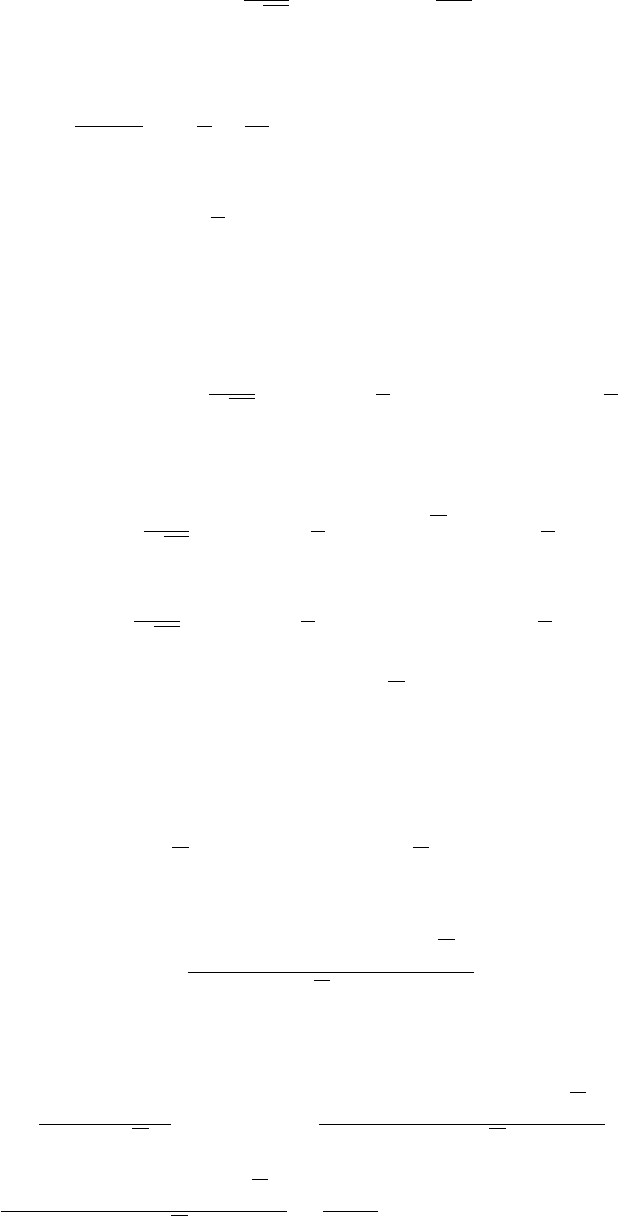

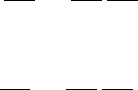

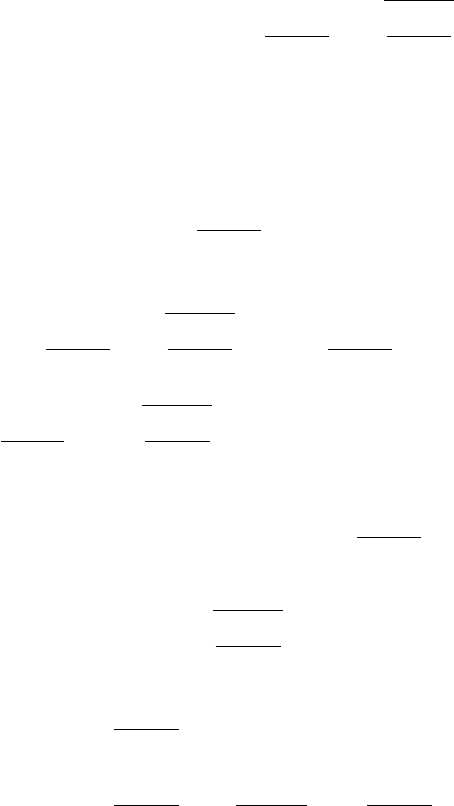

0.0

0.2

0.4

0.6

0.8

1.0

-4 -3 -2 -1 0

conventional rejection region

(upper and lower 2.5% tails)

rejection region central 5%

Figure 2: Power functions of a conventional 5 per cent test and one using the central

region (true value < µ0).

R.29 A researcher is evaluating whether an increase in the minimum hourly wage has

had an effect on employment in the manufacturing industry in the following three

months. Taking a sample of 25 firms, what should she conclude if:

(a) the mean decrease in employment is 9 per cent, and the standard error of the

mean is 5 per cent

(b) the mean decrease is 12 per cent, and the standard error is 5 per cent

(c) the mean decrease is 20 per cent, and the standard error is 5 per cent

(d) there is a mean increase of 11 per cent, and the standard error is 5 per cent?

Answer:

There are 24 degrees of freedom, and hence the critical values of tat the 5 per cent,

1 per cent, and 0.1 per cent levels are 2.06, 2.80, and 3.75, respectively.

(a) The tstatistic is −1.80. Fail to reject H0at the 5 per cent level.

(b) t=−2.40. Reject H0at the 5 per cent level but not the 1 per cent level.

(c) t=−4.00. Reject H0at the 1 per cent level. Better, reject at the 0.1 per cent

level.

(d) t= 2.20. This would be a surprising outcome, but if one is performing a

two-sided test, then reject H0at the 5 per cent level but not the 1 per cent

level.

R3.33 Demonstrate that the 95 per cent confidence interval defined by equation (R.89)

has a 95 per cent probability of capturing µ0if H0is true.

Answer:

If H0is true, there is 95 per cent probability that:

X−µ0

s.e.(X)< tcrit.

17

Preface

Hence there is 95 per cent probability that |X−µ0|< tcrit ×s.e.(X). Hence there is

95 per cent probability that (a) X−µ0< tcrit ×s.e.(X) and (b)

µ0−X < tcrit ×s.e.(X).

(a) can be rewritten X−tcrit ×s.e.(X)< µ0, giving the lower limit of the confidence

interval.

(b) can be rewritten X−µ0>−tcrit ×s.e.(X) and hence X+tcrit ×s.e.(X)> µ0,

giving the upper limit of the confidence interval.

Hence there is 95 per cent probability that µ0will lie in the confidence interval.

R.34 In Exercise R.29, a researcher was evaluating whether an increase in the minimum

hourly wage has had an effect on employment in the manufacturing industry.

Explain whether she might have been justified in performing one-sided tests in

cases (a) – (d), and determine whether her conclusions would have been different.

Answer:

First, there should be a discussion of whether the effect of an increase in the

minimum wage could have a positive effect on employment. If it is decided that it

cannot, we can use a one-sided test and the critical values of tat the 5 per cent, 1

per cent, and 0.1 per cent levels become 1.71, 2.49, and 3.47, respectively.

1. The tstatistic is −1.80. We can now reject H0at the 5 per cent level.

2. t=−2.40. No change, but much closer to rejecting at the 1 per cent level.

3. t=−4.00. No change. Reject at the 1 per cent level (and 0.1 per cent level).

4. t= 2.20. Here there is a problem because the coefficient has the unexpected

sign. In principle we should stick to our guns and fail to reject H0. However,

we should consider two further possibilities. One is that the justification for a

one-sided test is incorrect (not very likely in this case). The other is that the

model is misspecified in some way and the misspecification is responsible for

the unexpected sign. For example, the coefficient might be distorted by

omitted variable bias, to be discussed in Chapter 6.

R.37 A random variable Xhas population mean µXand population variance σ2

X. A

sample of nobservations {X1, . . . , Xn}is generated. Using the plim rules,

demonstrate that, subject to a certain condition that should be stated:

plim 1

X=1

µX

.

Answer:

plim X=µXby the weak law of large numbers. Provided that µX6= 0, we are

entitled to use the plim quotient rule, so:

plim 1

X=plim 1

plim X=1

µX

.

18

0.15. Answers to the starred exercises in the textbook

R.39 A random variable Xhas unknown population mean µXand population variance

σ2

X. A sample of nobservations {X1, . . . , Xn}is generated. Show that:

Z=1

2X1+1

4X2+1

8X3+··· +1

2n−1+1

2n−1Xn

is an unbiased estimator of µX. Show that the variance of Zdoes not tend to zero

as ntends to infinity and that therefore Zis an inconsistent estimator, despite

being unbiased.

Answer:

The weights sum to unity, so the estimator is unbiased. However, its variance is:

σ2

Z=1

4+1

16 +··· +1

4n−1+1

4n−1σ2

X.

This tends to σ2

X/3 as nbecomes large, not zero, so the estimator is inconsistent.

Note: the sum of a geometric progression is given by:

1 + a+a2+··· +an=1−an+1

1−a.

Hence:

1

2+1

4+1

8+··· +1

2n−1+1

2n−1=1

21 + 1

2+··· +1

2n−2+1

2n−1

=1

2×1−1

2n−1

1−1

2

+1

2n−1

= 1 −1

2n−1+1

2n−1= 1

and:

1

4+1

16 +··· +1

4n−1+1

4n−1=1

41 + 1

4+··· +1

4n−2+1

4n−1

=1

4×1−1

4n−1

1−1

4

+1

4n−1

=1

3 1−1

4n−1!+1

4n−1→1

3

as nbecomes large.

R.41 A random variable Xhas a continuous uniform distribution over the interval from

0 to θ, where θis an unknown parameter.

19

Preface

0

0

θ

X

f (X)

The following three estimators are used to estimate θ, given a sample of n

observations on X:

(a) twice the sample mean

(b) the largest value of Xin the sample

(c) the sum of the largest and smallest values of Xin the sample.

Explain verbally whether or not each estimator is (1) unbiased, and (2) consistent.

Answer:

(a) It is evident that E(X) = E(X) = θ/2. Hence 2Xis an unbiased estimator of θ.

The variance of Xis σ2

X/n. The variance of 2Xis therefore 4σ2

X/n. This will

tend to zero as ntends to infinity. Thus the distribution of 2Xwill collapse to

a spike at θand the estimator is consistent.

(b) The estimator will be biased downwards since the highest value of Xin the

sample will always be less than θ. However, as nincreases, the distribution of

the estimator will be increasingly concentrated in a narrow range just below θ.

To put it formally, the probability of the highest value being more than

below θwill be 1−

θnand this will tend to zero, no matter how small is,

as ntends to infinity. The estimator is therefore consistent. It can in fact be

shown that the expected value of the estimator is n

n+1 θand this tends to θas n

becomes large.

(c) The estimator will be unbiased. Call the maximum value of Xin the sample

Xmax and the minimum value Xmin. Given the symmetry of the distribution of

X, the distributions of Xmax and Xmin will be identical, except that that of

Xmin will be to the right of 0 and that of Xmax will be to the left of θ. Hence,

for any n,E(Xmin)−0 = θ−E(Xmax) and the expected value of their sum is

equal to θ. The estimator will be consistent for the same reason as explained in

(b).

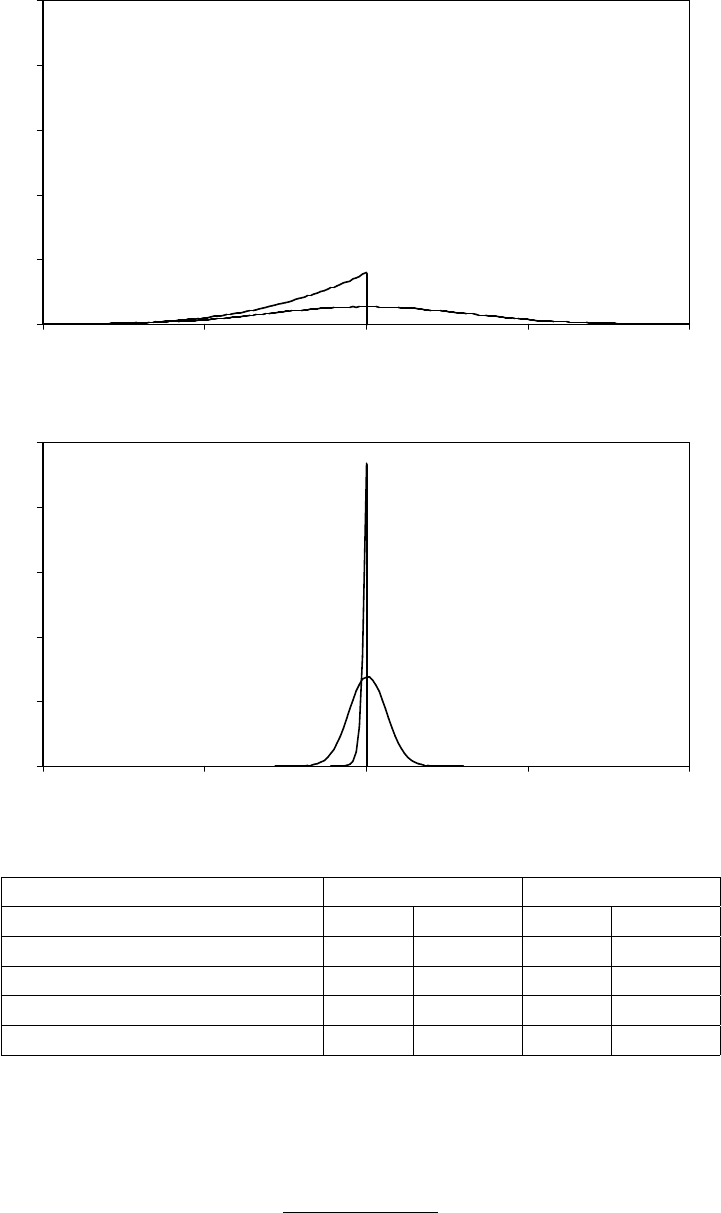

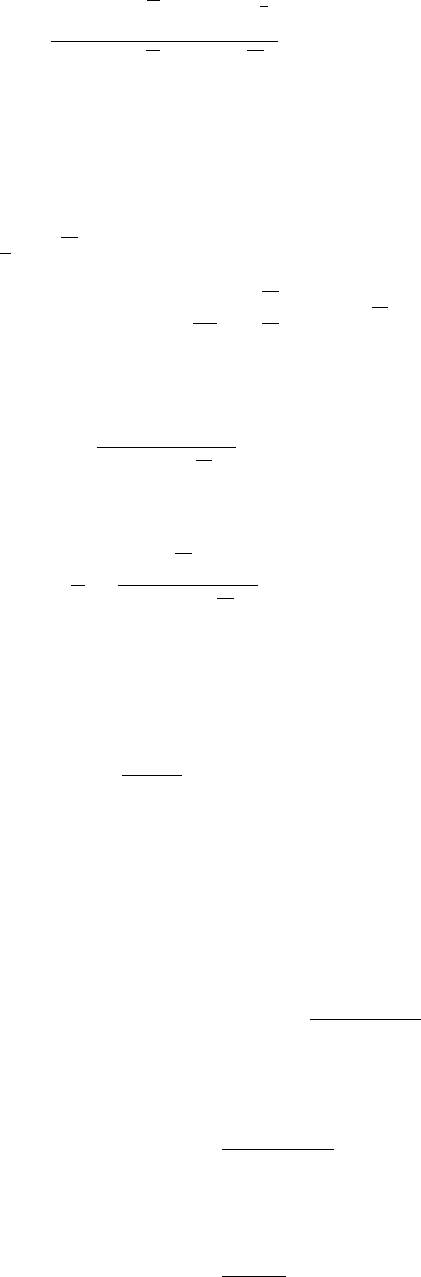

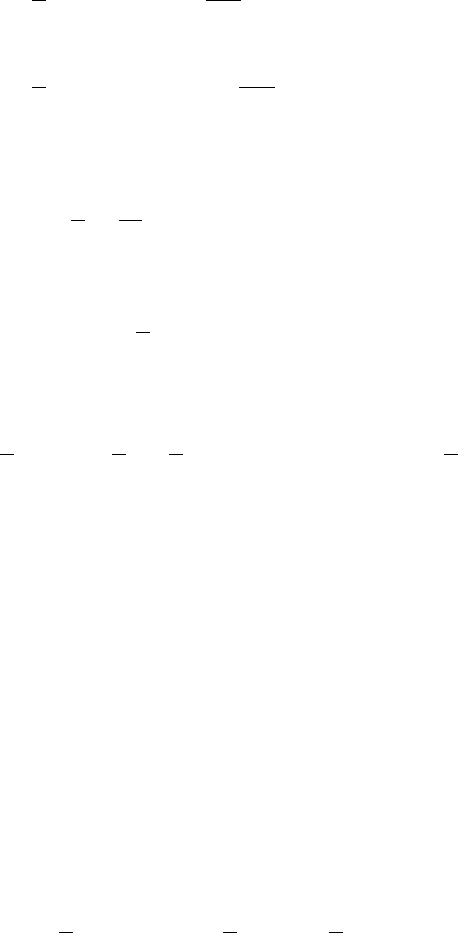

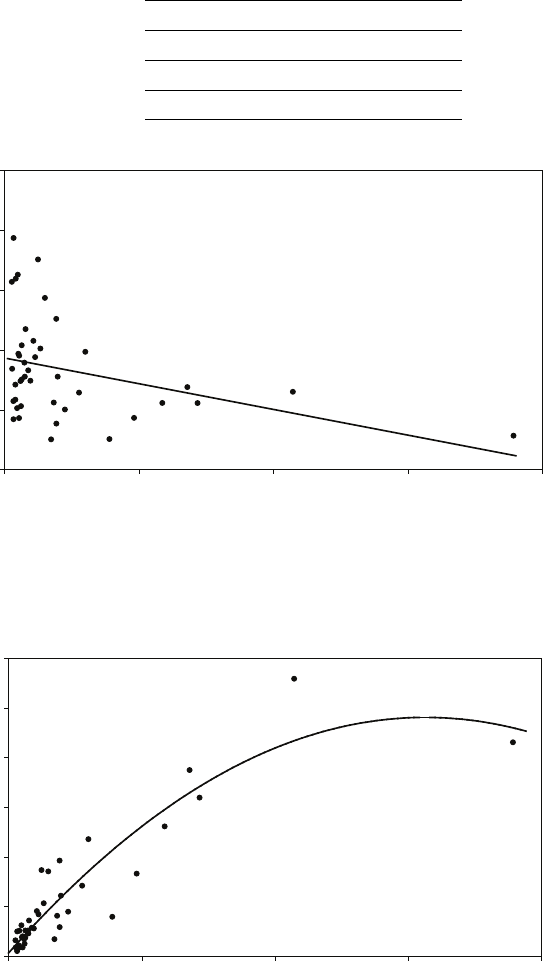

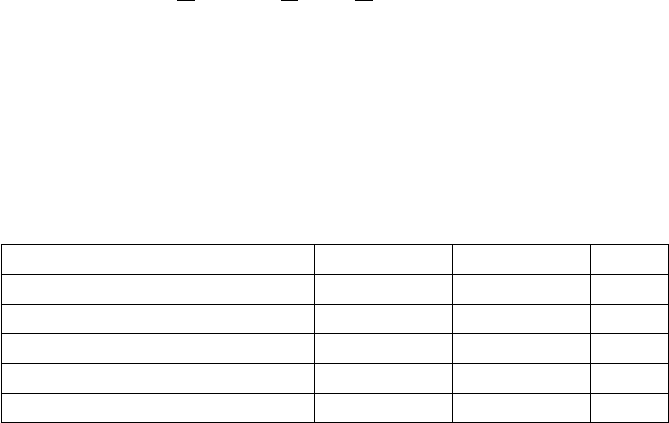

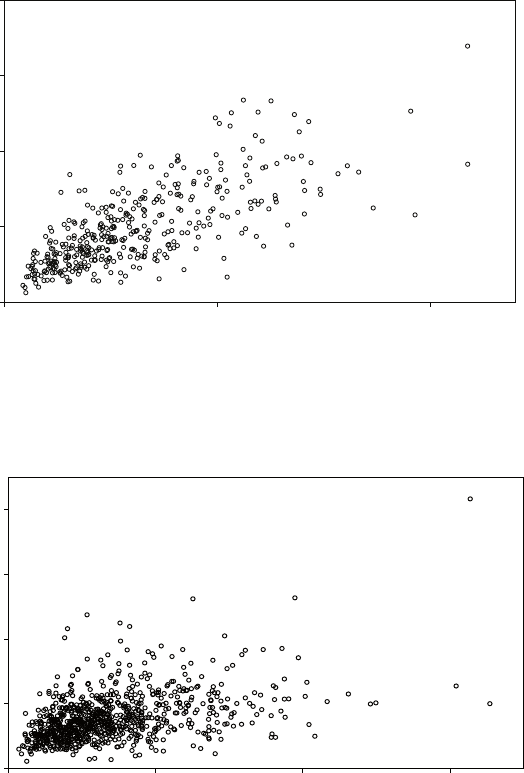

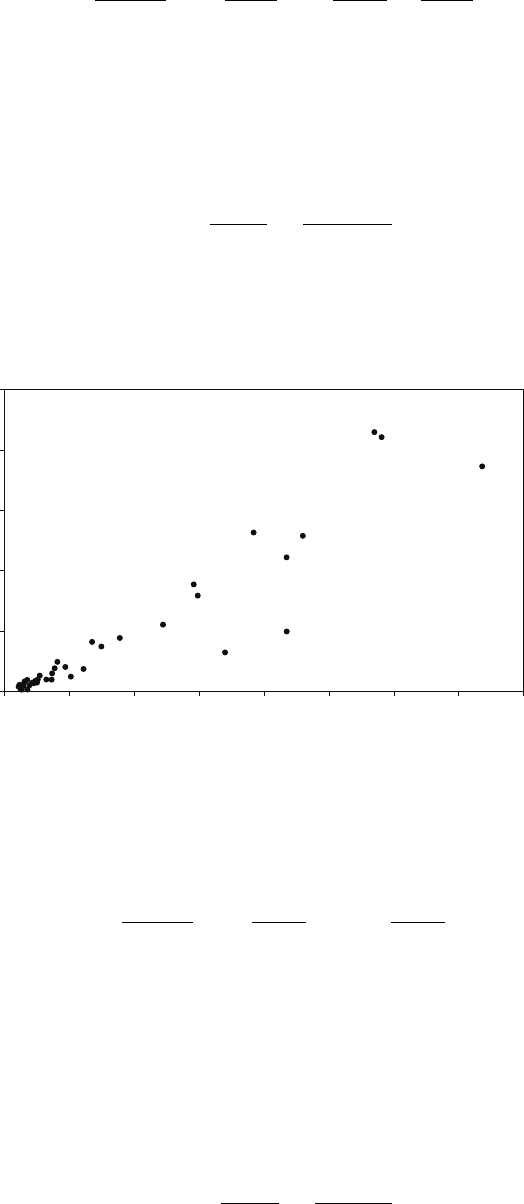

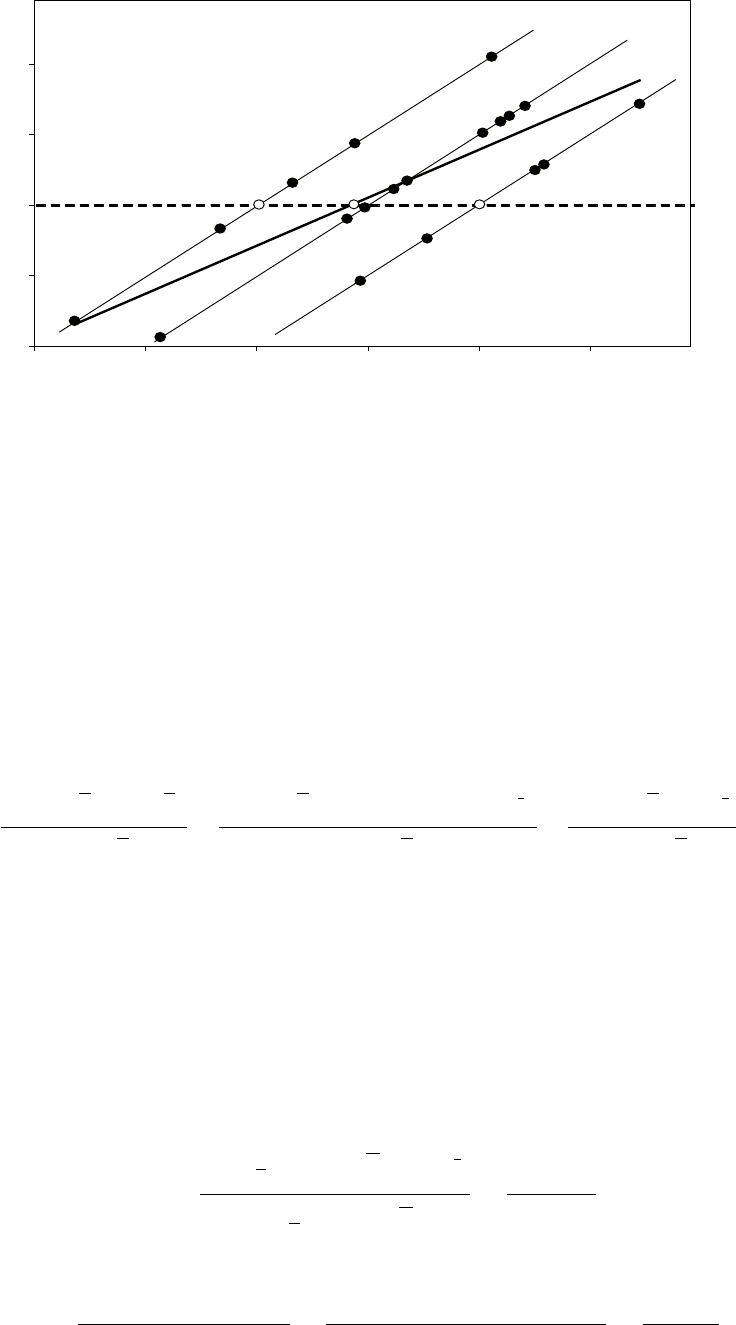

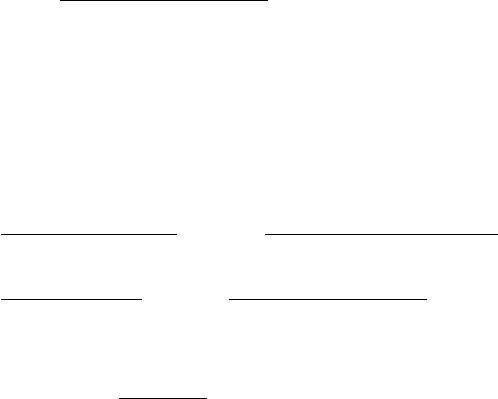

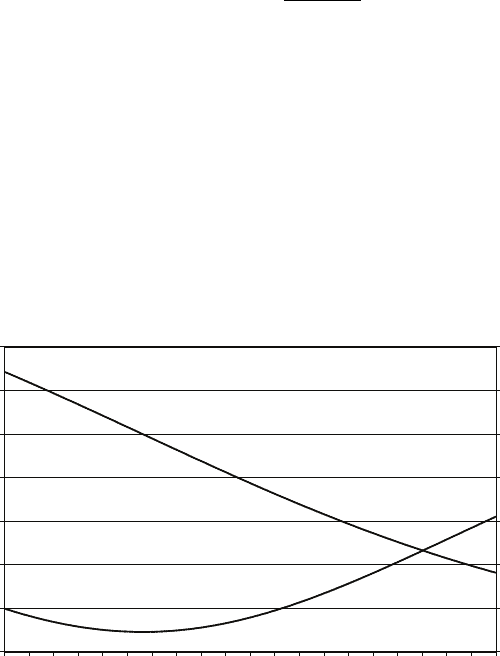

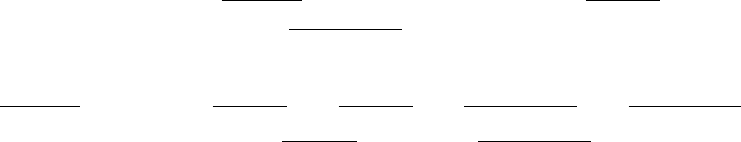

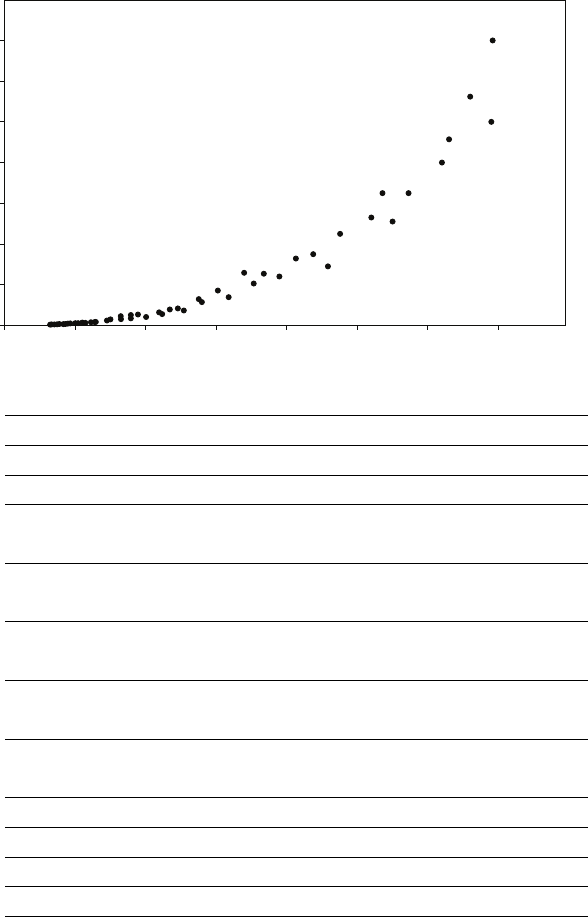

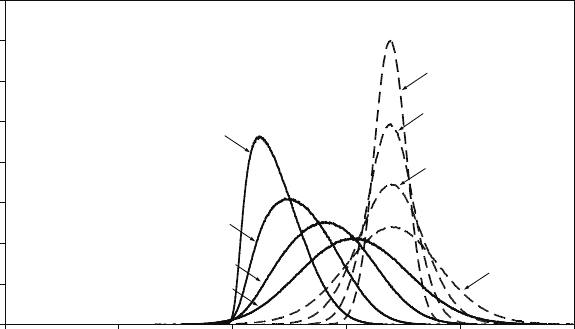

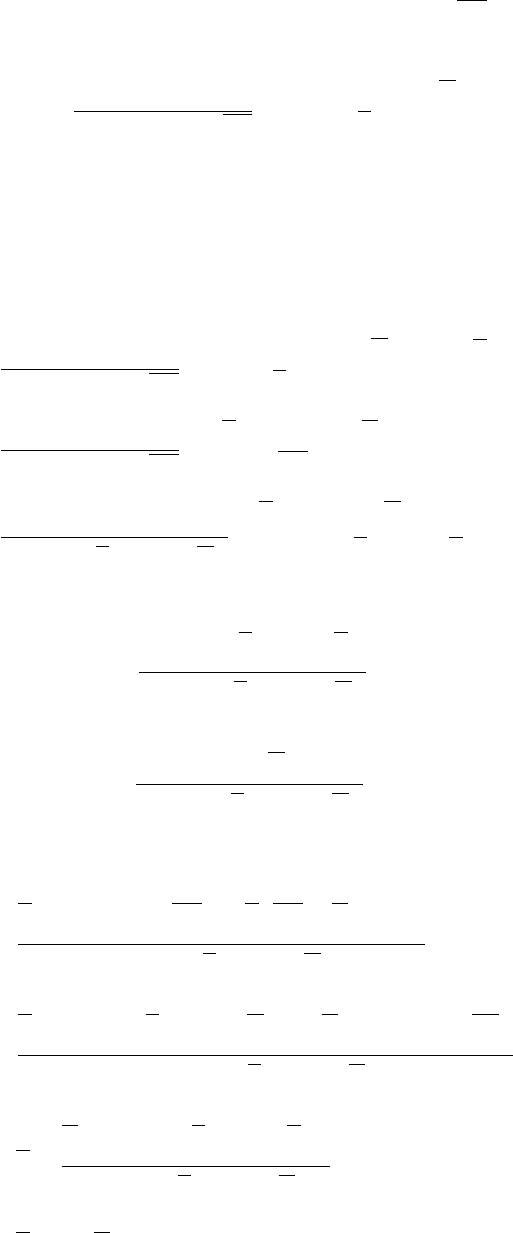

The first figure shows the distributions of the estimators (a) and (b) for 1,000,000

samples with only four observations in each sample, with θ= 1. The second figure

shows the distributions when the number of observations in each sample is equal to

20

0.15. Answers to the starred exercises in the textbook

100. The table gives the means and variances of the distributions as computed from

the results of the simulations. If the mean square error is used to compare the

estimators, which should be preferred for sample size 4? For sample size 100?

0

5

10

15

20

25

0 0.5 1 1.5 2

0

5

10

15

20

25

0 0.5 1 1.5 2

(b)

(b)

(a)

(a)

Sample size = 4

Sample size = 100

Sample size 4 Sample size 100

(a) (b) (a) (b)

Mean 1.0000 0.8001 1.0000 0.9901

Variance 0.0833 0.0267 0.0033 0.0001

Estimated bias 0.0000 −0.1999 0.0000 −0.0099

Estimated mean square error 0.0833 0.0667 0.0033 0.0002

It can be shown (Larsen and Marx, An Introduction to Mathematical Statistics and

Its Applications, p.382, that estimator (b) is biased downwards by an amount

θ/(n+ 1) and that its variance is:

nθ2

(n+ 1)2(n+ 2)

21

Preface

while estimator (a) has variance θ2/3n. How large does nhave to be for (b) to be

preferred to (a) using the mean square error criterion?

The crushing superiority of (b) over (a) may come as a surprise, so accustomed are

we to finding that the sample mean in the best estimator of a parameter. The

underlying reason in this case is that we are estimating a boundary parameter,

which, as its name implies, defines the limit of a distribution. In such a case the

optimal properties of the sample mean are no longer guaranteed and it may be

eclipsed by a score statistic such as the largest observation in the sample. Note that

the standard deviation of the sample mean is inversely proportional to √n, while

that of (b) is inversely proportional to n(disregarding the differences between n,

n+ 1, and n+ 2). (b) therefore approaches its limiting (asymptotically unbiased)

value much faster than (a) and is said to be superconsistent. We will encounter

superconsistent estimators again when we come to cointegration in Chapter 13.

Note that if we multiply (b) by (n+ 1)/n, it is unbiased for finite samples as well

as superconsistent.

0.16 Answers to the additional exercises

AR.1 The total area under the function over the interval [0,2] must be equal to 1. Since

the length of the rectangle is 2, its height must be 0.5. Hence f(X) = 0.5 for

0≤X≤2, and f(X) = 0 for X < 0 and X > 2.

AR.2 Obviously, since the distribution is uniform, the expected value of Xis 1. However

we will derive this formally.

E(X) = Z2

0

Xf(X) dX=Z2

0

0.5XdX=X2

42

0

=22

4−02

4= 1.

AR.3 The expected value of X2is given by:

E(X2) = Z2

0

X2f(X) dX=Z2

0

0.5X2dX=X3

62

0

=23

6−03

6= 1.3333.

AR.4 The variance of Xis given by:

E[X−µX]2=Z2

0

[X−µX]2f(X) dX=Z2

0

0.5[X−1]2dX

=Z2

0

(0.5X2−X+ 0.5) dX

=X3

6−X2

2+X

22

0

=8

6−2+1−[0] = 0.3333.

The standard deviation is equal to the square root, 0.5774.

22

0.16. Answers to the additional exercises

AR.5 From Exercise AR.3, E(X2)=1.3333. From Exercise AR.2, the square of E(X) is

1. Hence the variance is 0.3333, as in Exercise AR.4.

AR.6 Table R.6 is reproduced for reference:

Table R.6 Trade-off between Type I and Type II errors, one-sided and two-sided tests

Probability of Type II error if µ=µ1

One-sided test Two-sided test

5 per cent significance test 0.09 0.15

2.5 per cent significance test 0.15 (not investigated)

1 per cent significance test 0.25 0.34

Note: The distance between µ1and µ0in this example was 3 standard deviations.

Two-sided tests

Under the (false) H0:µ=µ0, the right rejection region for a two-sided 5 per cent

significance test starts 1.96 standard deviations above µ0, which is 0.04 standard

deviations below µ1. A Type II error therefore occurs if Xis more than 0.04

standard deviations to the left of µ1. Under H1:µ=µ1, the probability is 0.48.

Under H0, the right rejection region for a two-sided 1 per cent significance test

starts 2.58 standard deviations above µ0, which is 0.58 standard deviations above

µ1. A Type II error therefore occurs if Xis less than 0.58 standard deviations to

the right of µ1. Under H1:µ=µ1, the probability is 0.72.

One-sided tests

Under H0:µ=µ0, the right rejection region for a one-sided 5 per cent significance

test starts 1.65 standard deviations above µ0, which is 0.35 standard deviations

below µ1. A Type II error therefore occurs if Xis more than 0.35 standard

deviations to the left of µ1. Under H1:µ=µ1, the probability is 0.36.

Under H0, the right rejection region for a one-sided 1 per cent significance test

starts 2.33 standard deviations above µ0, which is 0.33 standard deviations above

µ1. A Type II error therefore occurs if Xis less than 0.33 standard deviations to

the right of µ1. Under H1:µ=µ1, the probability is 0.63.

Hence the table is:

Trade-off between Type I and Type II errors, one-sided and two-sided tests

Probability of Type II error if µ=µ1

One-sided test Two-sided test

5 per cent significance test 0.36 0.48

1 per cent significance test 0.63 0.72

AR.7 We will assume for sake of argument that the investigator is performing a 5 per

cent significance test, but the conclusions apply to all significance levels.

If the true value is 0, the null hypothesis is true. The risk of a Type I error is, by

construction, 5 per cent for both one-sided and two-sided tests. Issues relating to

Type II error do not arise because the null hypothesis is true.

23

Preface

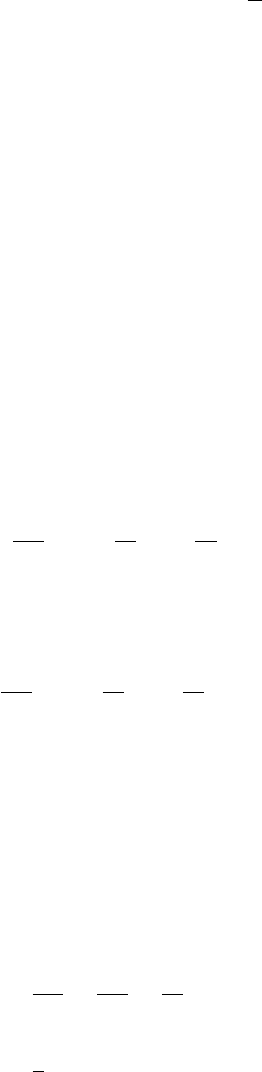

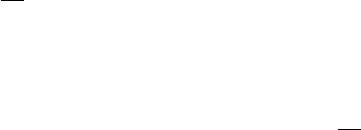

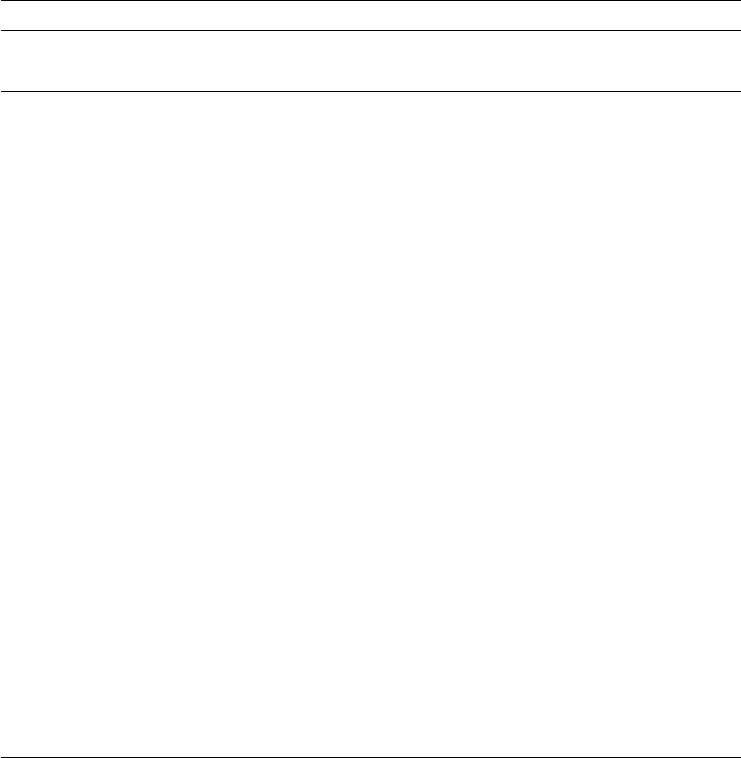

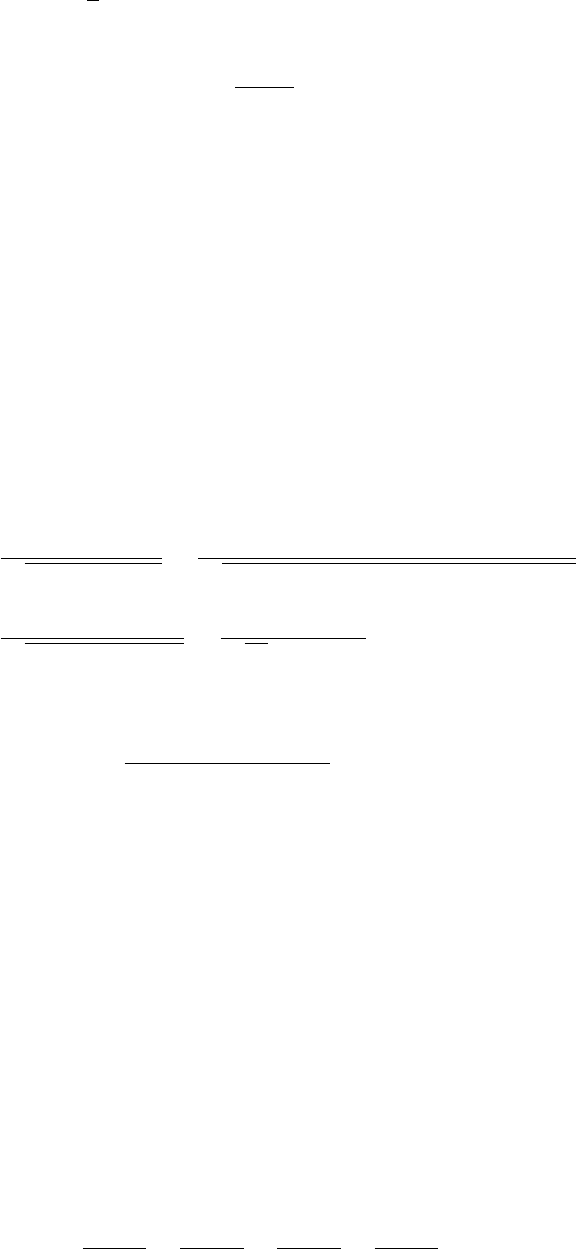

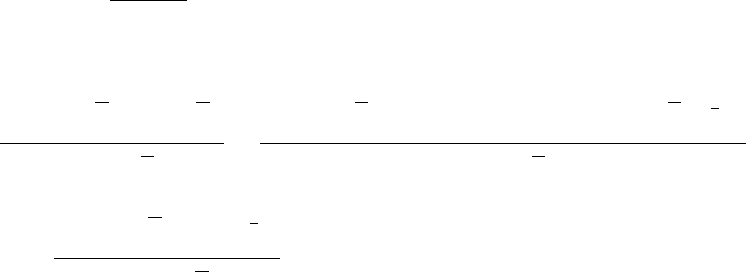

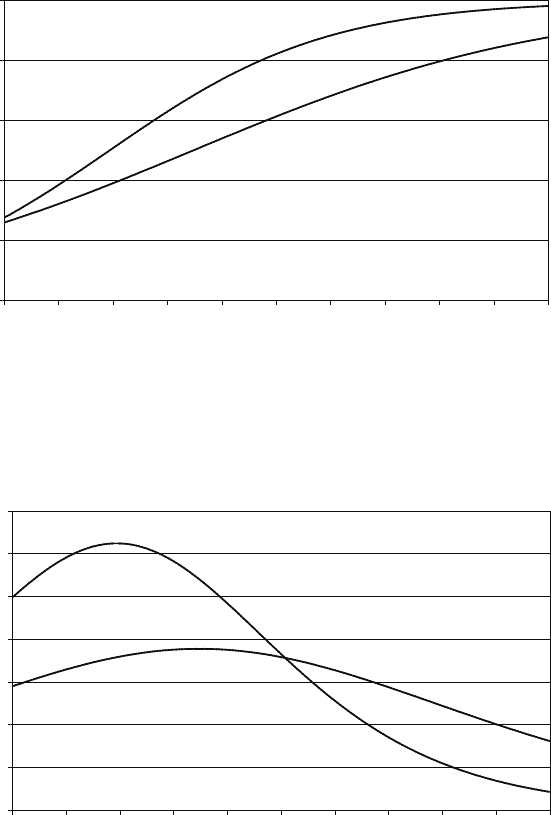

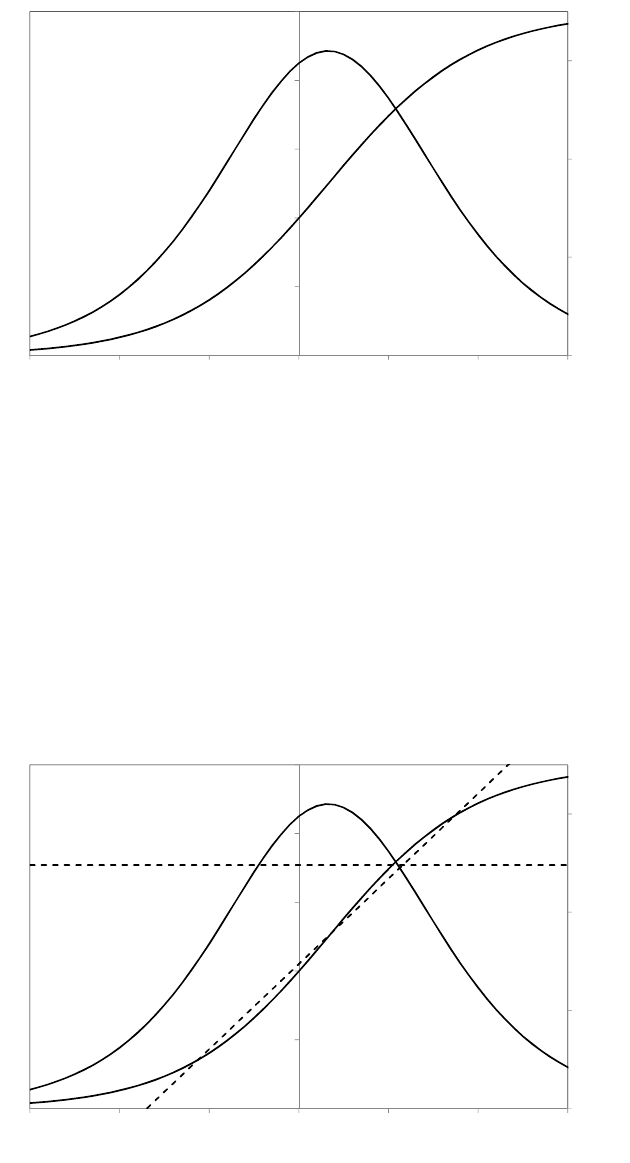

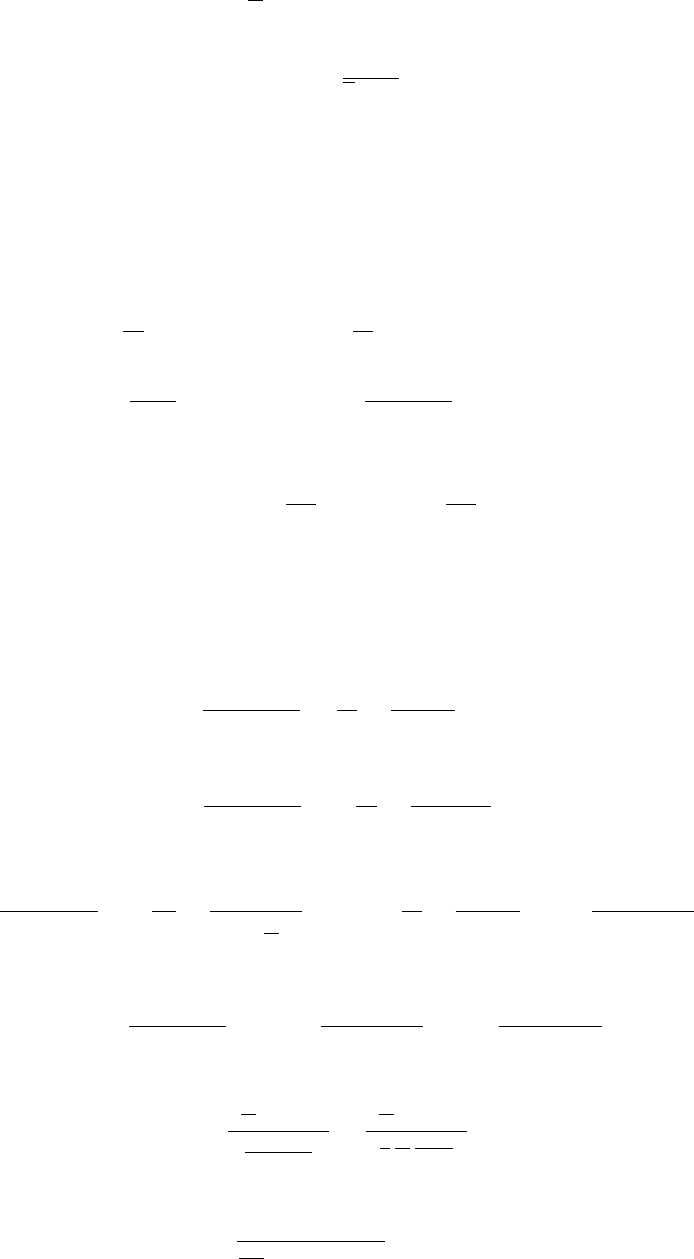

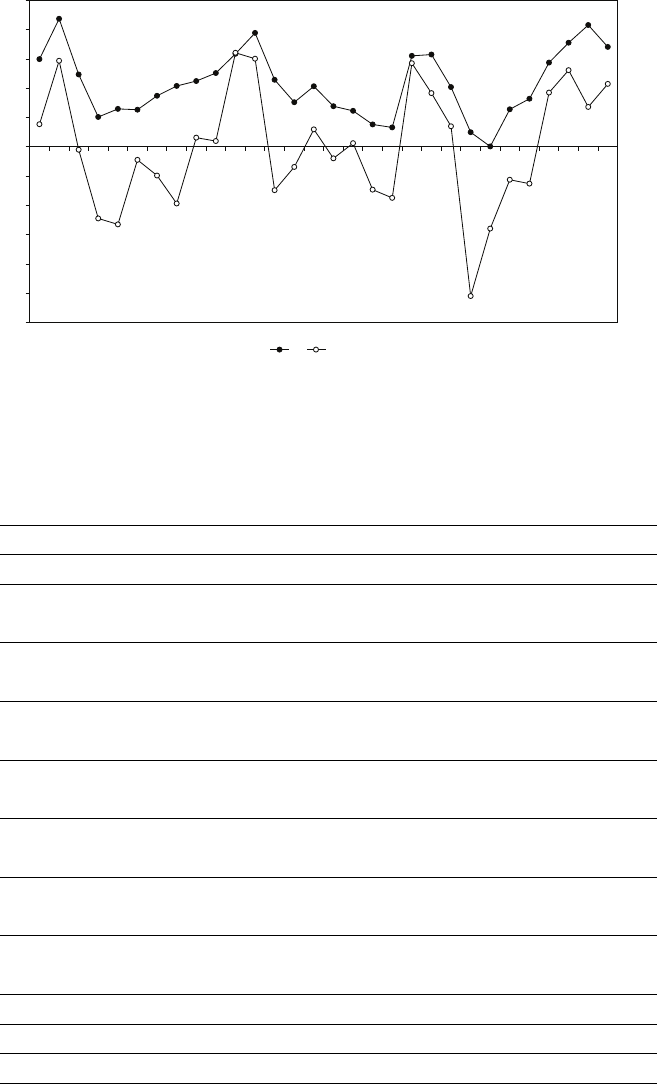

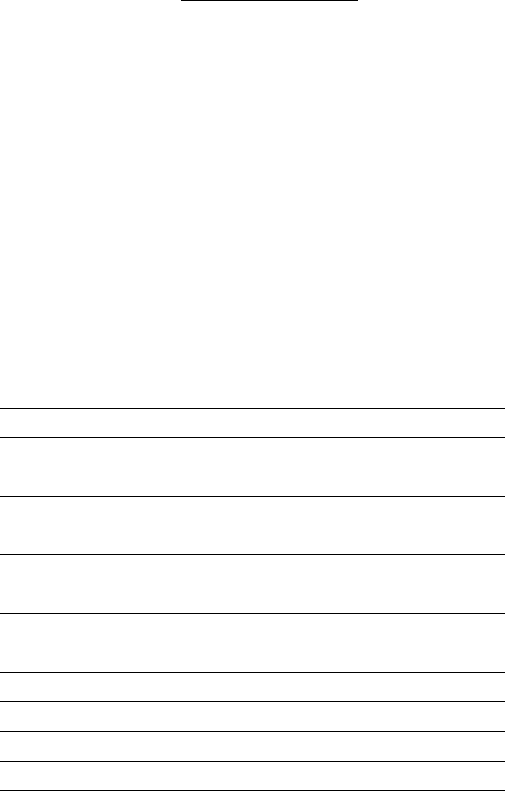

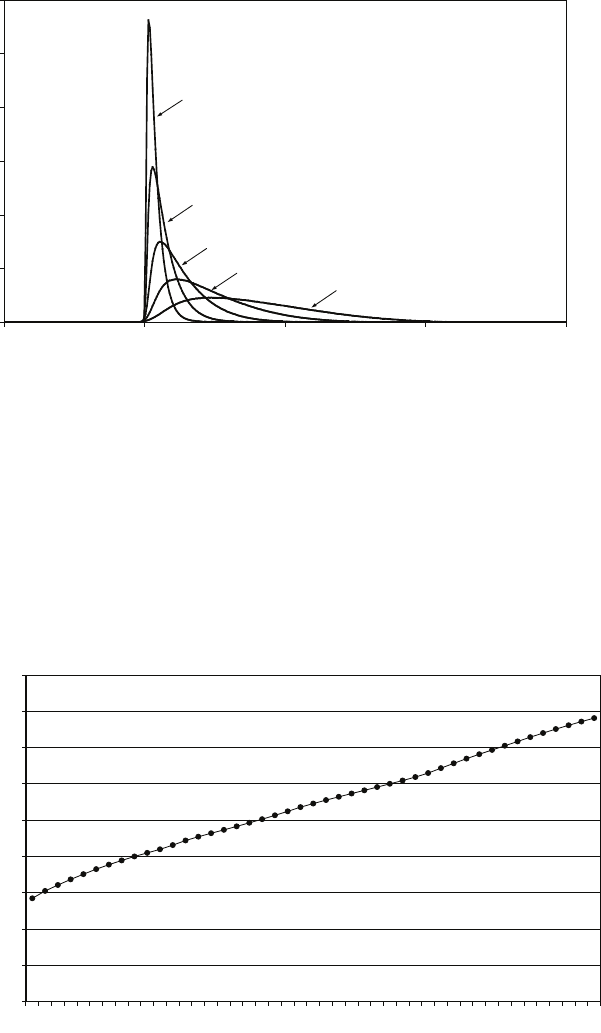

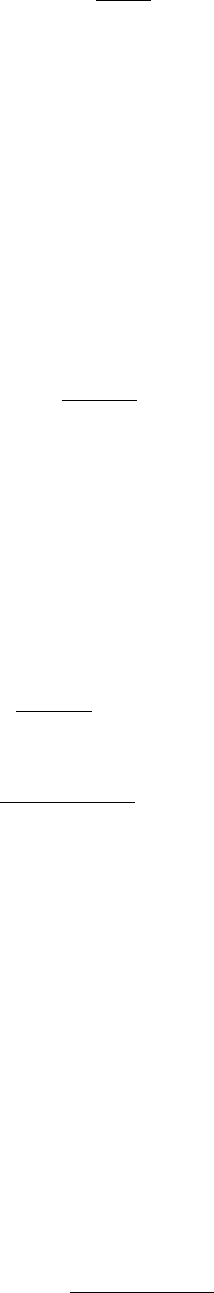

If the true value is positive, the investigator is lucky and makes the gain associated

with a one-sided test. Namely, the power of the test is uniformly higher than that

for a two-sided test for all positive values of µ. The power functions for one-sided

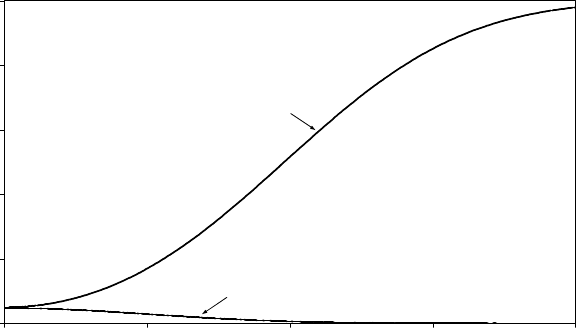

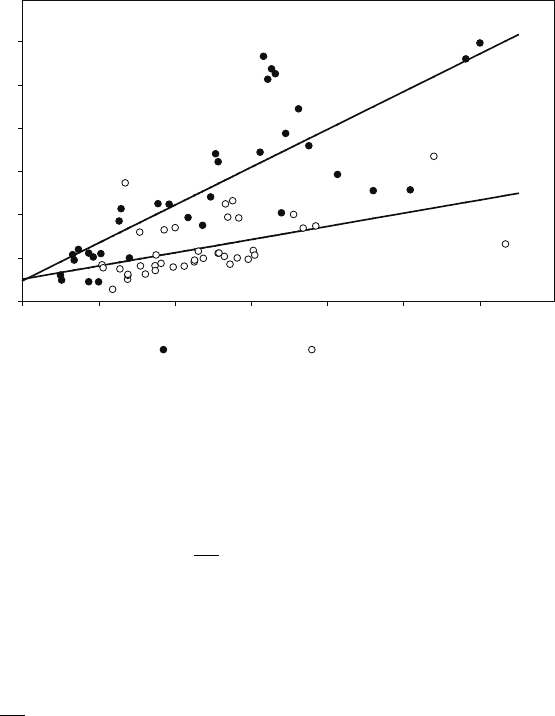

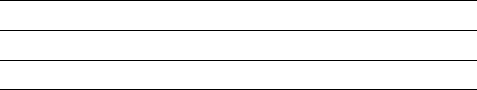

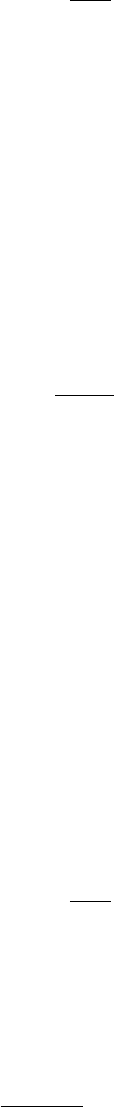

and two-sided tests are shown in the first figure below.

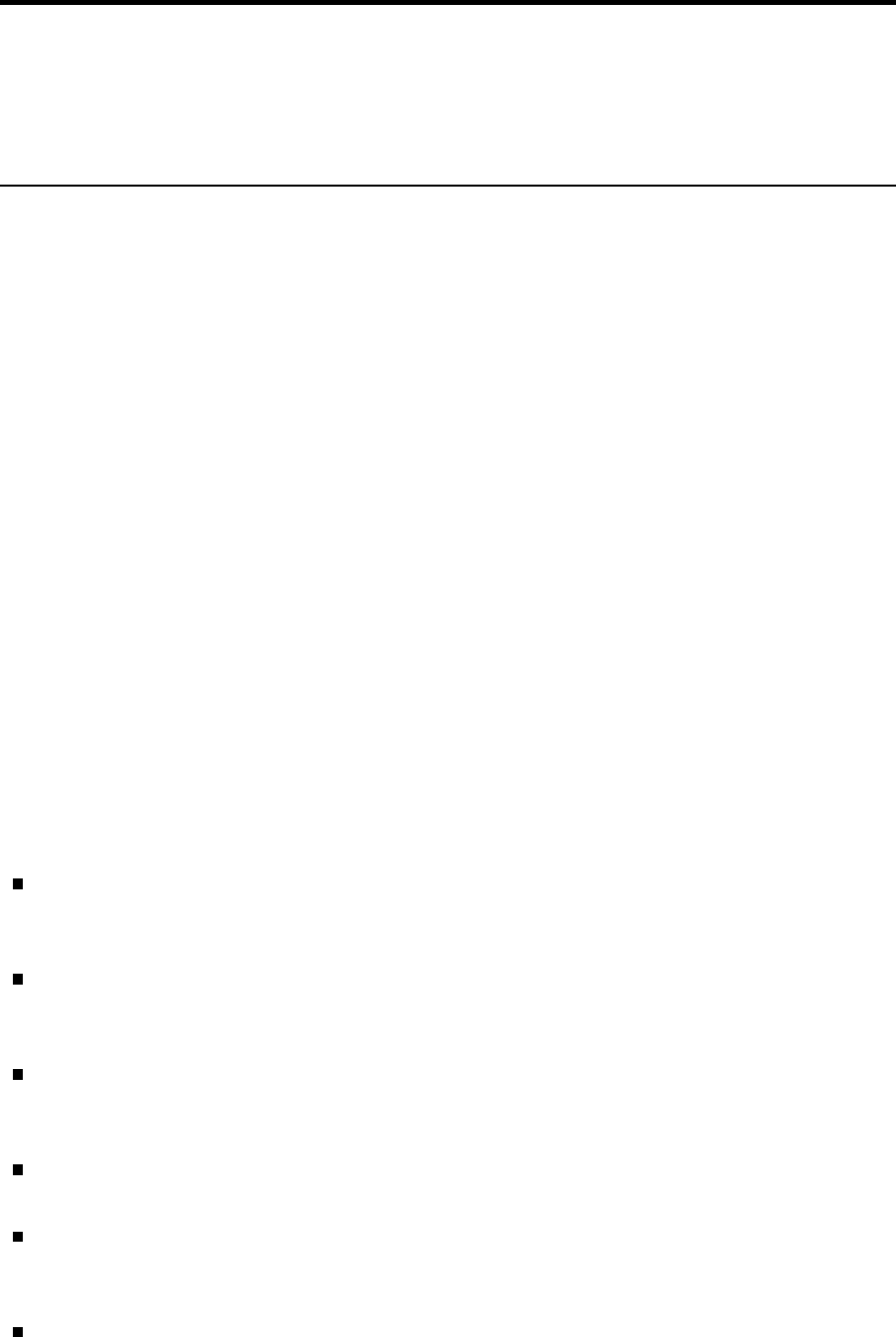

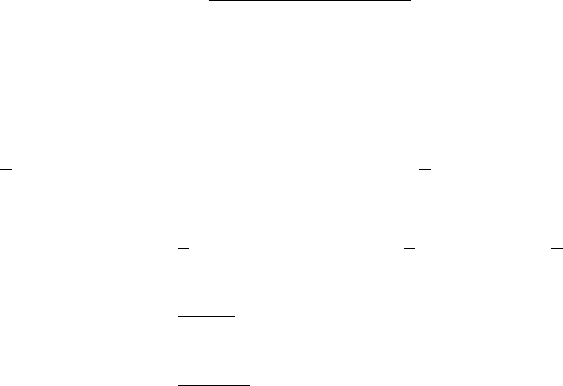

If the true value is negative, the power functions are as shown in the second figure.

That for the two-sided test is the same as that in the first figure, but reflected

horizontally. The larger (negatively) is the true value of µ, the greater will be the

probability of rejecting H0and the power approaches 1 asymptotically. However,

with a one-sided test, the power function will decrease from its already very low

value. The power is not automatically zero for true values that are negative because

even for these it is possible that a sample might have a mean that lies in the right

tail of the distribution under the null hypothesis. But the probability rapidly falls

to zero as the (negative) size of µgrows.

0.0

0.2

0.4

0.6

0.8

1.0

0 1 2 3 4 5

one-sided 5% test

two-sided 5% test

Figure 3: Power functions of one-sided and two-sided 5 per cent tests (true value >0).

0.0

0.2

0.4

0.6

0.8

1.0

-4 -3 -2 -1 0

one-sided 5% test

two-sided 5% test

!

Figure 4: Power functions of one-sided and two-sided 5 per cent tests (true value <0).

24

0.16. Answers to the additional exercises

AR.8 We will refute the unbiasedness proposition by considering the more general case

where Z2is an unbiased estimator of θ2. We know that:

E(Z−θ)2=E(Z2)−2θE(Z) + θ2= 2θ2−2θE(Z).

Hence:

E(Z) = θ−1

2θE(Z−θ)2.

Zis therefore a biased estimator of θexcept for the special case where Zis equal

to θfor all samples, that is, in the trivial case where there is no sampling error.

Nevertheless, since a function of a consistent estimator will, under quite general

conditions, be a consistent estimator of the function of the parameter, √bσ2will be

a consistent estimator of σ.

25

Preface

26

Chapter 1

Simple regression analysis

1.1 Overview

This chapter introduces the least squares criterion of goodness of fit and demonstrates,

first through examples and then in the general case, how it may be used to develop

expressions for the coefficients that quantify the relationship when a dependent variable

is assumed to be determined by one explanatory variable. The chapter continues by

showing how the coefficients should be interpreted when the variables are measured in

natural units, and it concludes by introducing R2, a second criterion of goodness of fit,

and showing how it is related to the least squares criterion and the correlation between

the fitted and actual values of the dependent variable.

1.2 Learning outcomes

After working through the corresponding chapter in the text, studying the

corresponding slideshows, and doing the starred exercises in the text and the additional

exercises in this subject guide, you should be able to explain what is meant by:

dependent variable

explanatory variable (independent variable, regressor)

parameter of a regression model

the nonstochastic component of a true relationship

the disturbance term

the least squares criterion of goodness of fit

ordinary least squares (OLS)

the regression line

fitted model

fitted values (of the dependent variable)

residuals

total sum of squares, explained sum of squares, residual sum of squares

R2.

27

1. Simple regression analysis

In addition, you should be able to explain the difference between:

the nonstochastic component of a true relationship and a fitted regression line, and

the values of the disturbance term and the residuals.

1.3 Additional exercises

A1.1 The output below gives the result of regressing FDHO, annual household

expenditure on food consumed at home, on EXP, total annual household

expenditure, both measured in dollars, using the Consumer Expenditure Survey

data set. Give an interpretation of the coefficients.

. reg FDHO EXP if FDHO>0

Source | SS df MS Number of obs = 6334

-------------+------------------------------ F( 1, 6332) = 3431.01

Model | 972602566 1 972602566 Prob > F = 0.0000

Residual | 1.7950e+09 6332 283474.003 R-squared = 0.3514

-------------+------------------------------ Adj R-squared = 0.3513

Total | 2.7676e+09 6333 437006.15 Root MSE = 532.42

------------------------------------------------------------------------------

FDHO | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

EXP | .0627099 .0010706 58.57 0.000 .0606112 .0648086

_cons | 369.4418 10.65718 34.67 0.000 348.5501 390.3334

------------------------------------------------------------------------------

A1.2 Download the CES data set from the website (see Appendix B of the text),

perform a regression parallel to that in Exercise A1.1 for your category of

expenditure, and provide an interpretation of the regression coefficients.

A1.3 The output shows the result of regressing the weight of the respondent, in pounds,

in 2011 on the weight in 2004, using EAWE Data Set 22. Provide an interpretation

of the coefficients. Summary statistics for the data are also provided.

. reg WEIGHT11 WEIGHT04

Source | SS df MS Number of obs = 500

-------------+------------------------------ F( 1, 498) = 1207.55

Model | 769248.875 1 769248.875 Prob > F = 0.0000

Residual | 317241.693 498 637.031513 R-squared = 0.7080

-------------+------------------------------ Adj R-squared = 0.7074

Total | 1086490.57 499 2177.33581 Root MSE = 25.239

------------------------------------------------------------------------------

WEIGHT11 | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

WEIGHT04 | .9739736 .0280281 34.75 0.000 .9189056 1.029042

_cons | 17.42232 4.888091 3.56 0.000 7.818493 27.02614

------------------------------------------------------------------------------

28

1.3. Additional exercises

. sum WEIGHT04 WEIGHT11

Variable | Obs Mean Std. Dev. Min Max

-------------+--------------------------------------------------------

WEIGHT04 | 500 169.686 40.31215 95 330

WEIGHT11 | 500 182.692 46.66193 95 370

A1.4 The output shows the result of regressing the hourly earnings of the respondent, in

dollars, in 2011 on height in 2004, measured in inches, using EAWE Data Set 22.

Provide an interpretation of the coefficients, comment on the plausibility of the

interpretation, and attempt to give an explanation.

. reg EARNINGS HEIGHT

Source | SS df MS Number of obs = 500

-------------+------------------------------ F( 1, 498) = 9.23

Model | 1393.77592 1 1393.77592 Prob > F = 0.0025

Residual | 75171.3726 498 150.946531 R-squared = 0.0182

-------------+------------------------------ Adj R-squared = 0.0162

Total | 76565.1485 499 153.437171 Root MSE = 12.286

------------------------------------------------------------------------------

EARNINGS | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

HEIGHT | .4087231 .1345068 3.04 0.003 .1444523 .6729938

_cons | -9.26923 9.125089 -1.02 0.310 -27.19765 8.659188

------------------------------------------------------------------------------

A1.5 A researcher has data for 50 countries on N, the average number of newspapers

purchased per adult in one year, and G, GDP per capita, measured in US $, and

fits the following regression (RSS = residual sum of squares):

b

N= 25.0+0.020G R2= 0.06,RSS = 4,000.0

The researcher realises that GDP has been underestimated by $100 in every

country and that Nshould have been regressed on G∗, where G∗=G+ 100.

Explain, with mathematical proofs, how the following components of the output

would have differed:

•the coefficient of GDP

•the intercept

•RSS

•R2.

A1.6 A researcher with the same model and data as in Exercise A1.5 believes that GDP

in each country has been underestimated by 50 per cent and that Nshould have

been regressed on G∗, where G∗= 2G. Explain, with mathematical proofs, how the

following components of the output would have differed:

•the coefficient of GDP

•the intercept

•RSS

•R2.

29

1. Simple regression analysis

A1.7 Some practitioners of econometrics advocate ‘standardising’ each variable in a

regression by subtracting its sample mean and dividing by its sample standard

deviation. Thus, if the original regression specification is:

Yi=β1+β2Xi+ui

the revised specification is:

Y∗

i=β∗

1+β∗

2X∗

i+vi

where:

Y∗

i=Yi−Y

bσY

and X∗

i=Xi−X

bσX

Yand Xare the sample means of Yand X,bσYand bσXare the estimators of the

standard deviations of Yand X, defined as the square roots of the estimated

variances:

bσ2

Y=1

n−1

n

X

i=1

(Yi−Y)2and bσ2

X=1

n−1

n

X

i=1

(Xi−X)2

and nis the number of observations in the sample. We will write the fitted models

for the two specifications as: b

Yi=b

β1+b

β2Xi

and: b

Y∗

i=b

β∗

1+b

β∗

2X∗

i.

Taking account of the definitions of Y∗and X∗, show that b

β∗

1= 0 and that

b

β∗

2=bσX

bσYb

β2. Provide an interpretation of b

β∗

2.

A1.8 For the model described in Exercise A1.7, suppose that Y∗is regressed on X∗

without an intercept: b

Y∗

i=b

β∗∗

2X∗

i.

Determine how b

β∗∗

2is related to b

β∗

2.

A1.9 A variable Yiis generated as:

Yi=β1+ui(1.1)

where β1is a fixed parameter and uiis a disturbance term that is independently

and identically distributed with expected value 0 and population variance σ2

u. The

least squares estimator of β1is Y, the sample mean of Y. Give a mathematical

demonstration that the value of R2in such a regression is zero.

1.4 Answers to the starred exercises in the textbook

1.9 The output shows the result of regressing the weight of the respondent in 2004,

measured in pounds, on his or her height, measured in inches, using EAWE Data

Set 21. Provide an interpretation of the coefficients.

30

1.4. Answers to the starred exercises in the textbook

. reg WEIGHT04 HEIGHT

Source | SS df MS Number of obs = 500

-------------+------------------------------ F( 1, 498) = 176.74

Model | 211309 1 211309 Prob > F = 0.0000

Residual | 595389.95 498 1195.56215 R-squared = 0.2619

-------------+------------------------------ Adj R-squared = 0.2605

Total | 806698.98 499 1616.63116 Root MSE = 34.577

------------------------------------------------------------------------------

WEIGHT04 | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

HEIGHT | 5.073711 .381639 13.29 0.000 4.32389 5.823532

_cons | -177.1703 25.93501 -6.83 0.000 -228.1258 -126.2147

------------------------------------------------------------------------------

Answer:

Literally the regression implies that, for every extra inch of height, an individual

tends to weigh an extra 5.1 pounds. The intercept, which literally suggests that an

individual with no height would weigh −177 pounds, has no meaning.

1.11 A researcher has international cross-sectional data on aggregate wages, W,

aggregate profits, P, and aggregate income, Y, for a sample of ncountries. By

definition:

Yi=Wi+Pi.

The regressions:

c

Wi=bα1+bα2Yi

b

Pi=b

β1+b

β2Yi

are fitted using OLS regression analysis. Show that the regression coefficients will

automatically satisfy the following equations:

bα2+b

β2= 1

bα1+b

β1= 0.

Explain intuitively why this should be so.

Answer:

bα2+b

β2=PYi−YWi−W

PYi−Y2+PYi−YPi−P

PYi−Y2

=PYi−YWi+Pi−W−P

PYi−Y2

=PYi−YYi−Y

PYi−Y2

= 1

31

1. Simple regression analysis

bα1+b

β1=W−bα2Y+P−b

β2Y=W+P−(bα2+b

β2)Y=Y−Y= 0.

The intuitive explanation is that the regressions break down income into predicted

wages and profits and one would expect the sum of the predicted components of

income to be equal to its actual level. The sum of the predicted components is

c

Wi+b

Pi= (bα1+bα2Yi)+(b

β1+b

β2Yi), and in general this will be equal to Yionly if

the two conditions are satisfied.

1.13 Suppose that the units of measurement of Xare changed so that the new measure,

X∗, is related to the original one by X∗

i=µ2Xi. Show that the new estimate of the

slope coefficient is b

β2/µ2, where b

β2is the slope coefficient in the original regression.

Answer:

b

β∗

2=PX∗

i−X∗Yi−Y

PX∗

i−X∗2

=Pµ2Xi−µ2XYi−Y

Pµ2Xi−µ2X2

=

µ2PXi−XYi−Y

µ2

2PXi−X2

=b

β2

µ2

.

1.14 Demonstrate that if Xis demeaned but Yis left in its original units, the intercept

in a regression of Yon demeaned Xwill be equal to Y.

Answer:

Let X∗

i=Xi−Xand b

β∗

1and b

β∗

2be the intercept and slope coefficient in a

regression of Yon X∗. Note that X∗= 0. Then:

b

β∗

1=Y−b

β∗

2X∗=Y.

The slope coefficient is not affected by demeaning:

b

β∗

2=PX∗

i−X∗Yi−Y

PX∗

i−X∗2=P[Xi−X]−0Yi−Y

P[Xi−X]−02=b

β2.

1.15 The regression output shows the result of regressing weight on height using the

same sample as in Exercise 1.9, but with weight and height measured in kilos and

centimetres: WMETRIC = 0.454 ∗WEIGHT04 and HMETRIC = 2.54 ∗HEIGHT .

Confirm that the estimates of the intercept and slope coefficient are as should be

expected from the changes in the units of measurement.

32

1.4. Answers to the starred exercises in the textbook

. gen WTMETRIC = 0.454*WEIGHT04

. gen HMETRIC = 2.54*HEIGHT

. reg WTMETRIC HMETRIC

Source | SS df MS Number of obs = 500

-------------+------------------------------ F( 1, 498) = 176.74

Model | 43554.1641 1 43554.1641 Prob > F = 0.0000

Residual | 122719.394 498 246.424486 R-squared = 0.2619

-------------+------------------------------ Adj R-squared = 0.2605

Total | 166273.558 499 333.213544 Root MSE = 15.698

------------------------------------------------------------------------------

WMETRIC | Coef. Std. Err. t P>|t| [95% Conf. Interval]

-------------+----------------------------------------------------------------

HMETRIC | .9068758 .0682142 13.29 0.000 .7728527 1.040899

_cons | -80.43529 11.77449 -6.83 0.000 -103.5691 -57.30148

------------------------------------------------------------------------------

Answer:

Abbreviate WEIGHT04 to W,HEIGHT to H,WMETRIC to W M, and

HMETRIC to HM.W M = 0.454Wand HM = 2.54H. The slope coefficient and

intercept for the regression in metric units, b

βM

2and b

βM

1, are then given by:

b

βM