The Hitchhiker’s Guide To Python Kenneth Reitz, Tanya Schlusser Best Practices For Development

the-hitchhikers-guide-to-python-kenneth-reitz

Kenneth%20Reitz%2C%20Tanya%20Schlusser-The%20Hitchhiker%E2%80%99s%20Guide%20to%20Python_%20Best%20Practices%20for%20Development

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 322 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Copyright

- Table of Contents

- Preface

- Part I. Getting Started

- Part II. Getting Down to Business

- Part III. Scenario Guide

- Appendix A. Additional Notes

- Index

- About the Authors

- Colophon

Kenneth Reitz & Tanya Schlusser

The

H i t c h h i k e r ’ s

Guide to

P y t h o n

BEST PRACTICES FOR DEVELOPMENT

Kenneth Reitz and Tanya Schlusser

The Hitchhiker’s Guide to Python

Best Practices for Development

Boston Farnham Sebastopol Tokyo

Beijing Boston Farnham Sebastopol Tokyo

Beijing

978-1-491-93317-6

[LSI]

The Hitchhiker’s Guide to Python

by Kenneth Reitz and Tanya Schlusser

Copyright © 2016 Kenneth Reitz, Tanya Schlusser. All rights reserved.

Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are

also available for most titles (http://safaribooksonline.com). For more information, contact our corporate/

institutional sales department: 800-998-9938 or corporate@oreilly.com.

Editor: Dawn Schanafelt

Production Editor: Nicole Shelby, Nicholas Adams

Copyeditor: Jasmine Kwityn

Proofreader: Amanda Kersey

Indexer: WordCo Indexing Services, Inc.

Interior Designer: David Futato

Cover Designer: Randy Comer

Illustrator: Rebecca Demarest

September 2016: First Edition

Revision History for the First Edition

2016-08-26: First Release

See http://oreilly.com/catalog/errata.csp?isbn=9781491933176 for release details.

The O’Reilly logo is a registered trademark of O’Reilly Media, Inc. e Hitchhiker’s Guide to Python, the

cover image, and related trade dress are trademarks of O’Reilly Media, Inc.

While the publisher and the authors have used good faith efforts to ensure that the information and

instructions contained in this work are accurate, the publisher and the authors disclaim all responsibility

for errors or omissions, including without limitation responsibility for damages resulting from the use of

or reliance on this work. Use of the information and instructions contained in this work is at your own

risk. If any code samples or other technology this work contains or describes is subject to open source

licenses or the intellectual property rights of others, it is your responsibility to ensure that your use

thereof complies with such licenses and/or rights.

Dedicated to you

Table of Contents

Preface. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . xi

Part I. Getting Started

1. Picking an Interpreter. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 3

The State of Python 2 Versus Python 3 3

Recommendations 4

So…3? 4

Implementations 5

CPython 5

Stackless 5

PyPy 6

Jython 6

IronPython 6

PythonNet 6

Skulpt 7

MicroPython 7

2. Properly Installing Python. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

Installing Python on Mac OS X 9

Setuptools and pip 11

virtualenv 11

Installing Python on Linux 12

Setuptools and pip 12

Development Tools 13

virtualenv 14

Installing Python on Windows 15

v

Setuptools and pip 17

virtualenv 18

Commercial Python Redistributions 18

3. Your Development Environment. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

Text Editors 22

Sublime Text 23

Vim 23

Emacs 25

TextMate 26

Atom 26

Code 27

IDEs 27

PyCharm/IntelliJ IDEA 29

Aptana Studio 3/Eclipse + LiClipse + PyDev 29

WingIDE 30

Spyder 30

NINJA-IDE 30

Komodo IDE 31

Eric (the Eric Python IDE) 31

Visual Studio 32

Enhanced Interactive Tools 32

IDLE 32

IPython 33

bpython 33

Isolation Tools 33

Virtual Environments 34

pyenv 36

Autoenv 36

virtualenvwrapper 37

Buildout 38

Conda 38

Docker 39

Part II. Getting Down to Business

4. Writing Great Code. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

Code Style 43

PEP 8 44

PEP 20 (a.k.a. The Zen of Python) 45

General Advice 46

vi | Table of Contents

Conventions 52

Idioms 54

Common Gotchas 58

Structuring Your Project 61

Modules 61

Packages 65

Object-Oriented Programming 66

Decorators 67

Dynamic Typing 68

Mutable and Immutable Types 69

Vendorizing Dependencies 71

Testing Your Code 72

Testing Basics 74

Examples 76

Other Popular Tools 80

Documentation 82

Project Documentation 82

Project Publication 83

Docstring Versus Block Comments 84

Logging 84

Logging in a Library 85

Logging in an Application 86

Choosing a License 88

Upstream Licenses 88

Options 88

Licensing Resources 90

5. Reading Great Code. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 91

Common Features 92

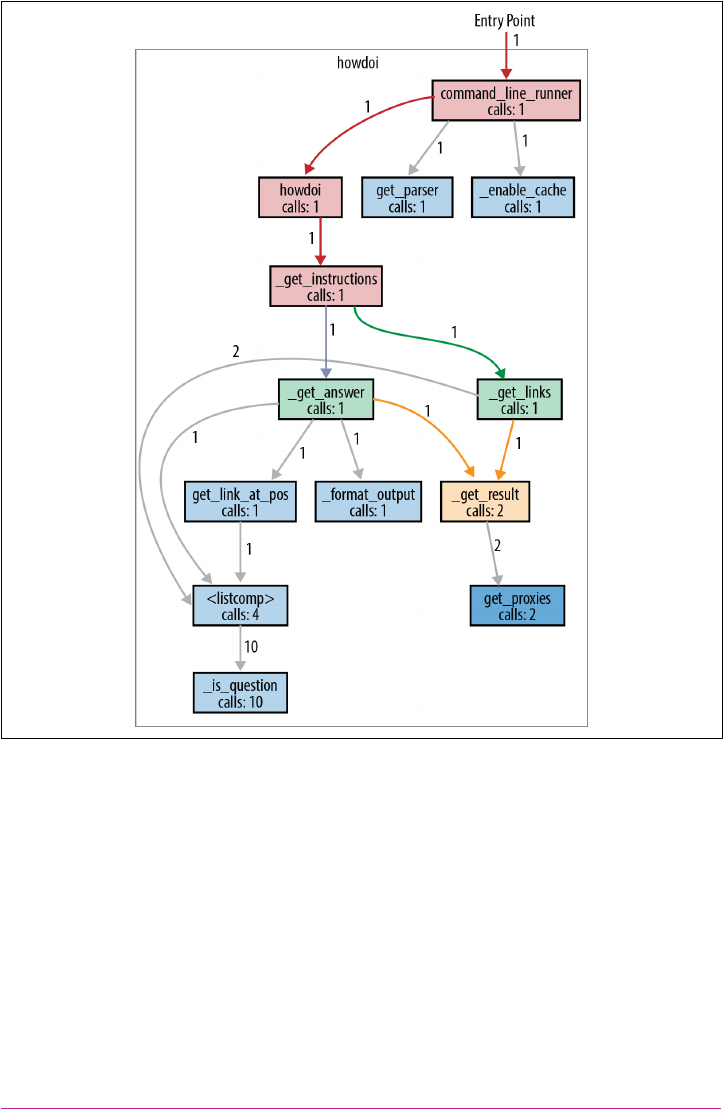

HowDoI 93

Reading a Single-File Script 93

Structure Examples from HowDoI 96

Style Examples from HowDoI 97

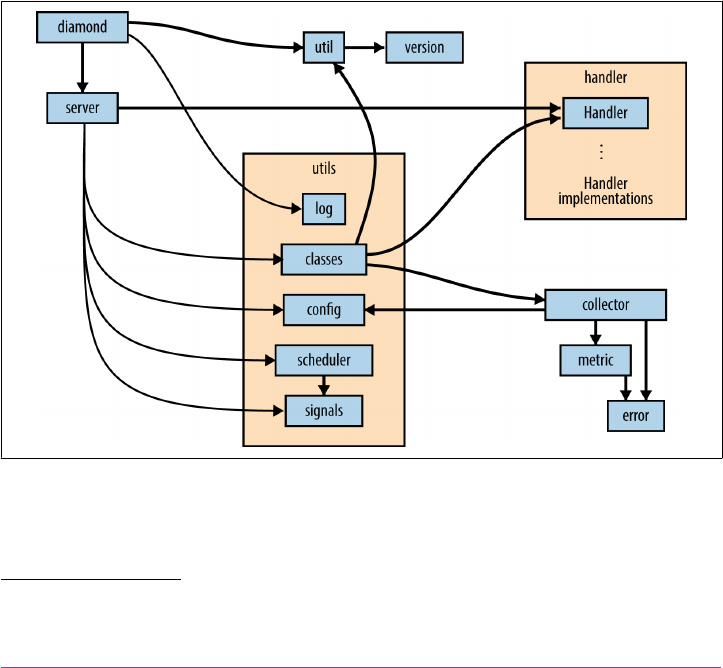

Diamond 99

Reading a Larger Application 100

Structure Examples from Diamond 105

Style Examples from Diamond 109

Tablib 112

Reading a Small Library 112

Structure Examples from Tablib 116

Style Examples from Tablib 124

Requests 126

Table of Contents | vii

Reading a Larger Library 126

Structure Examples from Requests 130

Style Examples from Requests 135

Werkzeug 140

Reading Code in a Toolkit 141

Style Examples from Werkzeug 148

Structure Examples from Werkzeug 149

Flask 155

Reading Code in a Framework 156

Style Examples from Flask 162

Structure Examples from Flask 163

6. Shipping Great Code. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

Useful Vocabulary and Concepts 168

Packaging Your Code 169

Conda 169

PyPI 170

Freezing Your Code 172

PyInstaller 174

cx_Freeze 176

py2app 177

py2exe 178

bbFreeze 178

Packaging for Linux-Built Distributions 179

Executable ZIP Files 180

Part III. Scenario Guide

7. User Interaction. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 185

Jupyter Notebooks 185

Command-Line Applications 186

GUI Applications 194

Widget Libraries 194

Game Development 200

Web Applications 200

Web Frameworks/Microframeworks 201

Web Template Engines 204

Web Deployment 209

8. Code Management and Improvement. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 213

Continuous Integration 213

viii | Table of Contents

System Administration 214

Server Automation 216

System and Task Monitoring 220

Speed 223

Interfacing with C/C++/FORTRAN Libraries 232

9. Software Interfaces. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 237

Web Clients 238

Web APIs 238

Data Serialization 243

Distributed Systems 246

Networking 246

Cryptography 251

10. Data Manipulation. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 259

Scientific Applications 260

Text Manipulation and Text Mining 264

String Tools in Python’s Standard Library 264

Image Manipulation 267

11. Data Persistence. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 271

Structured Files 271

Database Libraries 272

A. Additional Notes. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 287

Index. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 295

Table of Contents | ix

Preface

Python is big. Really big. You just won’t believe how vastly hugely mind-bogglingly

big it is.

This guide is not intended to teach you the Python language (we cite lots of great

resources that do that) but is rather an (opinionated) insider’s guide to our communi‐

ty’s favorite tools and best practices. The primary audience is new to mid-level

Python programmers who are interested in contributing to open source or in begin‐

ning a career or starting a company using Python, although casual Python users

should also find Part I and Chapter 5 helpful.

The first part will help you choose the text editor or interactive development environ‐

ment that fits your situation (for example, those using Java frequently may prefer

Eclipse with a Python plug-in) and surveys options for other interpreters that may

meet needs you don’t yet know Python could address (e.g., there’s a MicroPython

implementation based around the ARM Cortex-M4 chip). The second section dem‐

onstrates Pythonic style by highlighting exemplary code in the open source commu‐

nity that will hopefully encourage more in-depth reading and experimentation with

open source code. The final section briefly surveys the vast galaxy of libraries most

commonly used in the Python community—providing an idea of the scope of what

Python can do right now.

All of the royalties from the print version of this book will be directly donated to the

Django Girls, a giddily joyous global organization dedicated to organizing free

Django and Python workshops, creating open-sourced online tutorials, and curating

amazing first experiences with technology. Those who wish to contribute to the

online version can read more about how to do it at our website.

xi

Conventions Used in This Book

The following typographical conventions are used in this book:

Italic

Indicates new terms, URLs, email addresses, filenames, and file extensions.

Constant width

Used for program listings, as well as within paragraphs to refer to program ele‐

ments such as variable or function names, databases, data types, environment

variables, statements, and keywords.

Constant width bold

Shows commands or other text that should be typed literally by the user.

Constant width italic

Shows text that should be replaced with user-supplied values or by values deter‐

mined by context.

This element signifies a tip or suggestion.

This element signifies a general note.

This element indicates a warning or caution.

Safari® Books Online

Safari Books Online is an on-demand digital library that deliv‐

ers expert content in both book and video form from the

world’s leading authors in technology and business.

xii | Preface

Technology professionals, software developers, web designers, and business and crea‐

tive professionals use Safari Books Online as their primary resource for research,

problem solving, learning, and certification training.

Safari Books Online offers a range of plans and pricing for enterprise, government,

education, and individuals.

Members have access to thousands of books, training videos, and prepublication

manuscripts in one fully searchable database from publishers like O’Reilly Media,

Prentice Hall Professional, Addison-Wesley Professional, Microsoft Press, Sams, Que,

Peachpit Press, Focal Press, Cisco Press, John Wiley & Sons, Syngress, Morgan Kauf‐

mann, IBM Redbooks, Packt, Adobe Press, FT Press, Apress, Manning, New Riders,

McGraw-Hill, Jones & Bartlett, Course Technology, and hundreds more. For more

information about Safari Books Online, please visit us online.

How to Contact Us

Please address comments and questions concerning this book to the publisher:

O’Reilly Media, Inc.

1005 Gravenstein Highway North

Sebastopol, CA 95472

800-998-9938 (in the United States or Canada)

707-829-0515 (international or local)

707-829-0104 (fax)

We have a web page for this book, where we list errata, examples, and any additional

information. You can access this page at http://bit.ly/the-hitchhikers-guide-to-python.

To comment or ask technical questions about this book, send email to bookques‐

tions@oreilly.com.

For more information about our books, courses, conferences, and news, see our web‐

site at http://www.oreilly.com.

Find us on Facebook: http://facebook.com/oreilly

Follow us on Twitter: http://twitter.com/oreillymedia

Watch us on YouTube: http://www.youtube.com/oreillymedia

Preface | xiii

Acknowledgments

Welcome, friends, to e Hitchhiker’s Guide to Python.

This book is, to the best of my knowledge, the first of its kind: designed and curated

by a single author (myself—Kenneth), with the majority of the content provided by

hundreds of people from all over the world, for free. Never before in the history of

mankind has the technology been available to allow a beautiful collaboration of this

size and scale.

This book was made possible with:

Community

Love brings us together to conquer all obstacles.

Soware projects

Python, Sphinx, Alabaster, and Git.

Services

GitHub and Read the Docs.

Lastly, I’d like to extend a personal thank you to Tanya, who did all the hard work of

converting this work into book form and preparing it for publication, and the incred‐

ible O’Reilly team—Dawn, Jasmine, Nick, Heather, Nicole, Meg, and the dozens of

other people who worked behind the scenes to make this book the best it could be.

xiv | Preface

PART I

Getting Started

This part of the guide focuses on setting up a Python environment. It was inspired by

Stuart Ellis’s guide for Python on Windows, and consists of the following chapters

and topics:

Chapter 1, Picking an Interpreter

We compare Python 2 and Python 3, and share some interpreter options other

than CPython.

Chapter 2, Properly Installing Python

We show how to get Python, pip, and virtualenv.

Chapter 3, Your Development Environment

We describe our favorite text editors and IDEs for Python development.

1If you don’t do much low-level networking programming, the change was barely noticeable outside of the

print statement becoming a function. Otherwise, “unhappy with” is kind of a polite understatement—devel‐

opers responsible for large, popular web, socket, or networking libraries that deal with unicode and byte

strings had (or still have) extensive changes to make. Details about the change, direct from the first introduc‐

tion of Python 3 to the world, start off with: “Everything you thought you knew about binary data and Uni‐

code has changed.”

CHAPTER 1

Picking an Interpreter

The State of Python 2 Versus Python 3

When choosing a Python interpreter, one looming question is always present:

“Should I choose Python 2 or Python 3?” The answer is not as obvious as one might

think (although 3 is becoming more compelling every day).

Here is the state of things:

• Python 2.7 has been the standard for a long time.

•Python 3 introduced major changes to the language, which some developers are

unhappy with.1

•Python 2.7 will receive necessary security updates until 2020.

• Python 3 is continually evolving, like Python 2 did in years past.

You can now see why this is not such an easy decision.

3

2Someone who’s really amazingly together. We mean, who really knows where their towel is.

3Here’s a link to a high-level list of changes to Python’s Standard Library.

Recommendations

The way we see it, a truly hoopy frood2 would use Python 3. But if you can only use

Python 2, at least you’re still using Python. These are our recommendations:

Use Python 3 if…

•You love Python 3.

• You don’t know which one to use.

•You embrace change.

Use Python 2 if…

• You love Python 2 and are saddened by the future being Python 3.

• The stability requirements of your software would be impacted.3

•Software that you depend on requires it.

So…3?

If you’re choosing a Python interpreter to use, and aren’t opinionated, then use the

newest Python 3.x—every version brings new and improved standard library mod‐

ules, security, and bug fixes. Progress is progress. So only use Python 2 if you have a

strong reason to, such as a Python 2–exclusive library that has no adequate Python 3–

ready alternative, a need for a specific implementation (see “Implementations” on

page 5), or you (like some of us) love and are inspired by Python 2.

Check out Can I Use Python 3? to see whether any Python projects you’re depending

on will block adoption of Python 3.

For further reading, try Python2orPython3, which lays out some of the reasoning

behind a backward-incompatible break in the language specification, and links to

detailed specifications of the differences.

If you’re a beginner, there are far more important things to worry about than cross-

compatibility between all of the Python versions. Just get something working for the

system you’ve got, and cross this bridge later.

4 | Chapter 1: Picking an Interpreter

4The reference implementation accurately reflects the language’s definition. Its behavior is how all other imple‐

mentations should behave.

5C extension modules are written in C for use in Python.

Implementations

When people speak of Python, they often mean not just the language but also the

CPython implementation. Python is actually a specification for a language that can be

implemented in many different ways.

The different implementations may be for compatibility with other libraries, or

maybe for a little speed. Pure Python libraries should work regardless of your Python

implementation, but those built on C (like NumPy) won’t. This section provides a

quick rundown on the most popular implementations.

This guide presumes you’re working with the standard CPython

implementation of Python 3, although we’ll frequently add notes

when relevant for Python 2.

CPython

CPython is the reference implementation4 of Python, written in C. It compiles Python

code to intermediate bytecode which is then interpreted by a virtual machine. CPy‐

thon provides the highest level of compatibility with Python packages and C exten‐

sion modules.5

If you are writing open source Python code and want to reach the widest possible

audience, use CPython. To use packages that rely on C extensions to function, CPy‐

thon is your only implementation option.

All versions of the Python language are implemented in C because CPython is the

reference implementation.

Stackless

Stackless Python is regular CPython (so it should work with all of the libraries that

CPython can use), but with a patch that decouples the Python interpreter from the

call stack, making it possible to change the order of execution of code. Stackless intro‐

duces the contepts of tasklets, which can wrap functions and turn them into “micro-

threads” that can be serialized to disk for future execution and scheduled, by default

in round-robin execution.

Implementations | 5

The greenlet library implements this same stack-switching functionality for CPython

users. Much of the functionality has also been implemented in PyPy.

PyPy

PyPy is a Python interpreter implemented in a restricted statically typed subset of the

Python language called RPython, making certain kinds of optimization possible. The

interpreter features a just-in-time compiler and supports multiple backends, such as

C, Common Intermediate Language (CIL), and Java Virtual Machine (JVM) byte‐

code.

PyPy aims for maximum compatibility with the reference CPython implementation

while improving performance. If you are looking to increase performance of your

Python code, it’s worth giving PyPy a try. On a suite of benchmarks, it’s currently over

five times faster than CPython.

It supports Python 2.7, and PyPy3 targets Python 3. Both versions are available from

the PyPy download page.

Jython

Jython is a Python interpreter implementation that compiles Python code to Java

bytecode which is then executed by the JVM. Additionally, it is able to import and use

any Java class like a Python module.

If you need to interface with an existing Java code base or have other reasons for

needing to write Python code for the JVM, Jython is the best choice.

Jython currently supports up to Python 2.7.

IronPython

IronPython is an implementation of Python for the .NET framework. It can use both

Python and .NET framework libraries, and can also expose Python code to other lan‐

guages in the .NET framework.

Python Tools for Visual Studio integrates IronPython directly into the Visual Studio

development environment, making it an ideal choice for Windows developers.

IronPython supports Python2.7.

PythonNet

Python for .NET is a package that provides near seamless integration of a natively

installed Python installation with the .NET Common Language Runtime (CLR). This

is the inverse approach to that taken by IronPython, meaning PythonNet and IronPy‐

thon complement rather than compete with each other.

6 | Chapter 1: Picking an Interpreter

In conjunction with Mono, PythonNet enables native Python installations on non-

Windows operating systems, such as OS X and Linux, to operate within the .NET

framework. It can be run in addition to IronPython without conflict.

PythonNet supports from Python 2.3 up to Python 2.7; the installation instructions

are on the PythonNet readme page.

Skulpt

Skulpt is a JavaScript implementation of Python. It has not ported all of the CPython

standard library; the library has the modules math, random, turtle, image, unittest,

and parts of time, urllib, DOM, and re. It is intended for use in teaching. There is also

a way to add your own modules.

Notable examples of its use are Interactive Python and CodeSkulptor.

Skulpt supports most of Python 2.7 and Python 3.3. See the Skulpt GitHub page for

details.

MicroPython

MicroPython is an implementation of Python 3 optimized to run on a microcontrol‐

ler; it supports 32-bit ARM processors with the Thumb v2 instruction set, such as the

Cortex-M range used in low-cost microcontrollers. It includes these modules from

Python’s Standard Library, plus a few MicroPython-specific libraries for board details,

memory information, network access, and a modified version of the ctypes optimized

for smaller size. It is not the same as the Raspberry Pi, which has a Debian or other C-

based operating system, with Python installed. The pyboard actually uses MicroPy‐

thon as its operating system.

From here on out, we’re using CPython on a Unix-like system, on

OS X, or on a Windows system.

On to installation—grab your towel!

Implementations | 7

CHAPTER 2

Properly Installing Python

This chapter walks through CPython installation on the Mac OS X, Linux, and Win‐

dows platforms. Sections on packaging tools (like Setuptools and pip) are repetitive,

so you should skip straight to the section for your particular operating system, and

ignore the others.

If you are part of an organization that recommends you use a commercial Python

distribution, such as Anaconda or Canopy, you should follow your vendor’s instruc‐

tions. There is also a small note for you in “Commercial Python Redistributions” on

page 18.

If Python already exists on your system, do not, on any account,

allow anybody to change the symbolic link to the python exe‐

cutable to point at anything other than what it is already pointing

at. That would be almost as bad as reading Vogon poetry out loud.

(Think of the system-installed code that depends on a specific

Python in a specific place…)

Installing Python on Mac OS X

The latest version of Mac OS X, El Capitan, comes with its own Mac-specific imple‐

mentation of Python 2.7.

You don’t need to install or configure anything else to use Python. But we strongly

recommend installing Setuptools, pip, and virtualenv before you start building

Python applications for real-world use (i.e., contributing to collaborative projects).

You’ll learn more about these tools and how to install them later in this section. In

particular, you should always install Setuptools, as it makes it much easier for you to

use other third-party Python libraries.

9

1Other people have different opinions. The OS X Python implementation is not the same. It even has some

separate OS X–specific libraries. A small rant on this subject criticizing our recommendation is at the Stupid

Python Ideas blog. It raises valid concerns about collision of some names for people who switch-hit between

OS X’s CPython 2.7 and the canonical CPython 2.7. If this is a concern, use a virtual environment. Or, at the

very least, leave the OS X Python 2.7 where it is so that the system runs smoothly, install the standard Python

2.7 implemented in CPython, modify your path, and never use the OS X version. Then everything works fine,

including products that rely on Apple’s OS X–specific version.

2The best option is to pick Python 3, honestly, or to use virtual environments from the start and install nothing

but virtualenv and maybe virtualenvwrapper according to the advice of Hynek Schlawack.

3This will ensure that the Python you use is the one Homebrew just installed, while leaving the system’s origi‐

nal Python exactly as it is.

The version of Python that ships with OS X is great for learning, but it’s not good for

collaborative development. The version shipped with OS X may also be out of date

from the official current Python release, which is considered the stable production

version.1 So, if all you want to do is write scripts for yourself to pull information from

websites, or process data, you don’t need anything else. But if you are contributing to

open source projects, or working on a team with people that may have other operat‐

ing systems (or ever intend to in the future2), use the CPython release.

Before you download anything, read through the end of the next few paragraphs for

notes and warnings. Before installing Python, you’ll need to install GCC. It can be

obtained by downloading Xcode, the smaller Command-Line Tools (you need an

Apple account to download it), or the even smaller osx-gcc-installer package.

If you already have Xcode installed, do not install osx-gcc-installer.

In combination, the software can cause issues that are difficult to

diagnose.

While OS X comes with a large number of Unix utilities, those familiar with Linux

systems will notice one key component missing: a decent package manager. Home‐

brew fills this void.

To install Homebrew, open Terminal or your favorite OS X terminal emulator and

run the following code:

$ BREW_URI=https://raw.githubusercontent.com/Homebrew/install/master/install

$ ruby -e "$(curl -fsSL ${BREW_URI})"

The script will explain what changes it will make and prompt you before the installa‐

tion begins. Once you’ve installed Homebrew, insert the Homebrew directory at the

top of your PATH environment variable.3 You can do this by adding the following line

at the bottom of your ~/.prole file:

export PATH=/usr/local/bin:/usr/local/sbin:$PATH

10 | Chapter 2: Properly Installing Python

4A symbolic link is a pointer to the actual file location. You can confirm where the link points to by typing, for

example, ls -l /usr/local/bin/python3 at the command prompt.

5Packages that are compliant with Setuptools at a minimum provide enough information for the library to

identify and obtain all package dependencies. For more information, see the documentation for Packaging

and Distributing Python Projects, PEP 302, and PEP 241.

And then to install Python, run this once in a terminal:

$ brew install python3

Or for Python 2:

$ brew install python

By default, Python will then be installed in /usr/local/Cellar/python3/ or /usr/local/

Cellar/python/ with symbolic links4 to the interpreter at /usr/local/python3 or /usr/

local/python. People who use the --user option to pip install will need to work

around a bug involving distutils and the Homebrew configuration. We recommend

just using virtual environments, described in “virtualenv” on page 11.

Setuptools and pip

Homebrew installs Setuptools and pip for you. The executable installed with pip will

be mapped to pip3 if you are using Python 3 or to pip if you are using Python 2.

With Setuptools, you can download and install any compliant5 Python software over

a network (usually the Internet) with a single command (easy_install). It also ena‐

bles you to add this network installation capability to your own Python software with

very little work.

Both pip’s pip command and Setuptools’s easy_install command are tools to install

and manage Python packages. pip is recommended over easy_install because it

can also uninstall packages, its error messages are more digestible, and partial pack‐

age installs can’t happen (installs that fail partway through will unwind everything

that happened so far). For a more nuanced discussion, see pip vs easy_install in the

Python Packaging User Guide, which should be your first reference for current pack‐

aging information.

To upgrade your installation of pip, type the following in a shell:

$ pip install --upgrade pip

virtualenv

virtualenv creates isolated Python environments. It creates a folder containing all

the necessary executables to use the packages that a Python project would need. Some

Installing Python on Mac OS X | 11

6Advocates of this practice say it is the only way to ensure nothing ever overwrites an existing installed library

with a new version that could break other version-dependent code in the OS.

7For additional details, see the pip installation instructions.

people believe best practice is to install nothing except virtualenv and Setuptools and

to then always use virtual environments.6

To install virtualenv via pip, run pip at the command line of a terminal shell:

$ pip3 install virtualenv

or if you are using Python 2:

$ pip install virtualenv

Once you are in a virtual environment, you can always use the command pip,

whether you are working with Python 2 or Python 3, so that is what we will do in the

rest of this guide. “Virtual Environments” on page 34 describes usage and motivation

in more detail.

Installing Python on Linux

Ubuntu started releasing with only Python 3 installed (and Python 2 available via

apt-get) as of Wily Werewolf (Ubuntu 15.10). All of the details are on Ubuntu’s

Python page. Fedora’s release 23 is the first with only Python 3 (both Python 2.7 and 3

are available on releases 20–22), and otherwise Python 2.7 will be available via its

package manager.

Most parallel installations of Python 2 and Python 3 make a symbolic link from

python2 to a Python 2 interpreter and from python3 to a Python 3 interpreter. If you

decide to use Python 2, the current recommendation on Unix-like systems (see

Python Enhancement Proposal [PEP 394]) is to explicitly specify python2 in your

shebang notation (e.g., #!/usr/bin/env python2 as the first line in the file) rather

than rely on the environment python pointing where you expect.

Although not in PEP 394, it has also become convention to use pip2 and pip3 to link

to the respective pip package installers.

Setuptools and pip

Even if pip is available through a package installer on your system, to ensure you get

the most recent version, follow these steps.

First, download get-pip.py.7

Next, open a shell, change directories to the same location as get-pip.py, and type:

12 | Chapter 2: Properly Installing Python

8Packages that are compliant with Setuptools at a minimum provide enough information for it to identify and

obtain all package dependencies. For more information, see the documentation for Packaging and Distribut‐

ing Python Projects, PEP 302, and PEP 241.

$ wget https://bootstrap.pypa.io/get-pip.py

$ sudo python3 get-pip.py

or for Python 2:

$ wget https://bootstrap.pypa.io/get-pip.py

$ sudo python get-pip.py

This will also install Setuptools.

With the easy_install command that’s installed with Setuptools, you can download

and install any compliant8 Python software over a network (usually the Internet). It

also enables you to add this network installation capability to your own Python soft‐

ware with very little work.

pip is a tool that helps you easily install and manage Python packages. It is recom‐

mended over easy_install because it can also uninstall packages, its error messages

are more digestible, and partial package installs can’t happen (installs that fail partway

through will unwind everything that happened so far). For a more nuanced discus‐

sion, see “pip vs easy_install” in the Python Packaging User Guide, which should

be your first reference for current packaging information.

Development Tools

Almost everyone will at some point want to use Python libraries that depend on C

extensions. Sometimes your package manager will have these, prebuilt, so you can

check first (using yum search or apt-cache search); and with the newer wheels for‐

mat (precompiled, platform-specific binary files), you may be able to get binaries

directly from PyPI, using pip. But if you expect to create C extensions in the future,

or if the people maintaining your library haven’t made wheels for your platform, you

will need the development tools for Python: various C libraries, make, and the GCC

compiler. The following are some useful packages that use C libraries:

Concurrency tools

• The threading library threading

• The event-handling library (Python 3.4+) asyncio

• The coroutine-based networking library curio

•The coroutine-based networking library gevent

• The event-driven networking library Twisted

Installing Python on Linux | 13

Scientic analysis

• The linear algebra library NumPy

• The numerical toolkit SciPy

•The machine learning library scikit-learn

• The plotting library Matplotlib

Data/database interface

• The interface to the HDF5 data format h5py

• The PostgreSQL database adapter Psycopg

•The database abstraction and object-relational mapper SQLAlchemy

On Ubuntu, in a terminal shell, type:

$ sudo apt-get update --fix-missing

$ sudo apt-get install python3-dev # For Python 3

$ sudo apt-get install python-dev # For Python 2

Or on Fedora, in a terminal shell, type:

$ sudo yum update

$ sudo yum install gcc

$ sudo yum install python3-devel # For Python 3

$ sudo yum install python2-devel # For Python 2

and then pip3 install --user desired-package will be able to build tools that

must be compiled. (Or pip install --user desired-package for Python 2.) You

also will need the tool itself installed (for details on how to do this, see the HDF5

installation documentation). For PostgreSQL on Ubuntu, you’d type this in a terminal

shell:

$ sudo apt-get install libpq-dev

or on Fedora:

$ sudo yum install postgresql-devel

virtualenv

virtualenv is a command installed with the virtualenv package that creates isolated

Python environments. It creates a folder containing all the necessary executables to

use the packages that a Python project would need.

To install virtualenv using Ubuntu’s package manager, type:

$ sudo apt-get install python-virtualenv

or on Fedora:

14 | Chapter 2: Properly Installing Python

9Or consider IronPython (discussed in “IronPython” on page 6) if you want to integrate Python with the .NET

framework. But if you’re a beginner, this should probably not be your first Python interpreter. This whole

book talks about CPython.

$ sudo yum install python-virtualenv

Or via pip, run pip at the command line of a terminal shell, and use the --user

option to install it locally for yourself rather than doing a system install:

$ pip3 install --user virtualenv

or if you are using Python 2:

$ sudo pip install --user virtualenv

Once you are in a virtual environment, you can always use the command pip,

whether you are working with Python 2 or Python 3, so that is what we will do in the

rest of this guide. “Virtual Environments” on page 34 describes usage and motivation

in more detail.

Installing Python on Windows

Windows users have it harder than other Pythonistas—because it’s harder to compile

anything on Windows, and many Python libraries use C extensions under the hood.

Thanks to wheels, binaries can be downloaded from PyPI using pip (if they exist), so

things have gotten a little easier.

There are two paths here: a commercial distribution (discussed in “Commercial

Python Redistributions” on page 18) or straight-up CPython. Anaconda is much eas‐

ier, especially when you’re going to do scientific work. Actually, pretty much everyone

who does scientific computing on Windows with Python (except those developing C-

based Python libraries of their own) will recommend Anaconda. But if you know

your way around compiling and linking, if you want to contribute to open source

projects that use C code, or if you just don’t want a commercial distribution (what

you need is free), we hope you consider installing straight-up CPython.9

As time progresses, more and more packages with C libraries will have wheels on

PyPI, and so can be obtained via pip. The trouble comes when required C library

dependencies are not bundled with the wheel. This dependency problem is another

reason you may prefer commercial Python redistributions like Anaconda.

Use CPython if you are the kind of Windows user who:

• Doesn’t need Python libraries that rely on C extensions

•Owns a Visual C++ compiler (not the free one)

• Can handle setting up MinGW

Installing Python on Windows | 15

10 You must know at least what version of Python you’re using and whether you selected 32-bit or 64-bit Python.

We recommend 32-bit, as every third-party DLL will have a 32-bit version and some may not have 64-bit

versions. The most widely cited location to obtain compiled binaries is Christoph Gohlke’s resource site. For

scikit-learn, Carl Kleffner is building binaries using MinGW in preparation for eventual release on PyPI.

11 Anaconda has more free stuff, and comes bundled with Spyder, a better IDE. If you use Anaconda, you’ll find

Anaconda’s free package index and Canopy’s package index to be helpful.

12 Meaning you are 100% certain that any Dynamically Linked Libraries (DLLs) and drivers you need are avail‐

able in 64 bit.

13 The PATH lists every location the operating system will look to find executable programs, like Python and

Python scripts like pip. Each entry is separated by a semicolon.

• Is game to download binaries by hand10 and then pip install the binary

If you will use Python as a substitute for R or MATLAB, or just want to get up to

speed quickly and will install CPython later if necessary (see “Commercial Python

Redistributions” on page 18 for some tips), use Anaconda.11

If you want your interface to be mostly graphical (point-and-click), or if Python is

your first language and this is your first install, use Canopy.

If your entire team has already committed to one of these options, then you should

go with whatever is currently being used.

To install the standard CPython implementation on Windows, you first need to

download the latest version of Python 3 or Python 2.7 from the official website. If you

want to be sure you are installing a fully up-to-date version (or are certain you really,

really want the 64-bit installer12), then use the Python Releases for Windows site to

find the release you need.

The Windows version is provided as an MSI package. This format allows Windows

administrators to automate installation with their standard tools. To install the pack‐

age manually, just double-click the file.

By design, Python installs to a directory with the version number embedded (e.g.,

Python version 3.5 will install at C:\Python35\) so that you can have multiple versions

of Python on the same system without conflicts. Of course, only one interpreter can

be the default application for Python file types. The installer does not automatically

modify the PATH environment variable,13 so that you always have control over which

copy of Python is run.

Typing the full path name for a Python interpreter each time quickly gets tedious, so

add the directories for your default Python version to the PATH. Assuming that the

Python installation you want to use is in C:\Python35\, you will want to add this to

your PATH:

C:\Python35;C:\Python35\Scripts\

16 | Chapter 2: Properly Installing Python

14 Windows PowerShell provides a command-line shell and scripting language that is similar enough to Unix

shells that Unix users will be able to function without reading a manual, but with features specifically for use

with Windows. It is built on the .NET Framework. For more information, see Microsoft’s “Using Windows

PowerShell.”

15 The installer will prompt you whether it’s OK to overwrite the existing installation. Say yes; releases in the

same minor version are backward-compatible.

16 For additional details, see the pip installation instructions.

17 Packages that are compliant with Setuptools at a minimum provide enough information for the library to

identify and obtain all package dependencies. For more information, see the documentation for “Packaging

and Distributing Python Projects,” PEP 302, and PEP 241.

You can do this easily by running the following in PowerShell:14

PS C:\> [Environment]::SetEnvironmentVariable(

"Path",

"$env:Path;C:\Python35\;C:\Python35\Scripts\",

"User")

The second directory (Scripts) receives command files when certain packages are

installed, so it is a very useful addition. You do not need to install or configure any‐

thing else to use Python.

Having said that, we strongly recommend installing Setuptools, pip, and virtualenv

before you start building Python applications for real-world use (i.e., contributing to

collaborative projects). You’ll learn more about these tools and how to install them

later in this section. In particular, you should always install Setuptools, as it makes it

much easier for you to use other third-party Python libraries.

Setuptools and pip

The current MSI packaged installers install Setuptools and pip for you with Python,

so if you are following along with this book and just installed now, you have them

already. Otherwise, the best way to get them with Python 2.7 installed is to upgrade to

the newest release.15 For Python 3, in versions 3.3 and prior, download the script get-

pip.py,16 and run it. Open a shell, change directories to the same location as get-pip.py,

and then type:

PS C:\> python get-pip.py

With Setuptools, you can download and install any compliant17 Python software over

a network (usually the Internet) with a single command (easy_install). It also ena‐

bles you to add this network installation capability to your own Python software with

very little work.

Both pip’s pip command and Setuptools’s easy_install command are tools to install

and manage Python packages. pip is recommended over easy_install because it

can also uninstall packages, its error messages are more digestible, and partial pack‐

Installing Python on Windows | 17

age installs can’t happen (installs that fail partway through will unwind everything

that happened so far). For a more nuanced discussion, see “pip vs easy_install” in

the Python Packaging User Guide, which should be your first reference for current

packaging information.

virtualenv

The virtualenv command creates isolated Python environments. It creates a folder

containing all the necessary executables to use the packages that a Python project

would need. Then, when you activate the environment using a command in the new

folder, it prepends that folder to your PATH environment variable—the Python in the

new folder becomes the first one found, and the packages in its subfolders are the

ones used.

To install virtualenv via pip, run pip at the command line of a PowerShell terminal:

PS C:\> pip install virtualenv

“Virtual Environments” on page 34 describes usage and motivation in more detail.

On OS X and Linux, because Python comes installed for use by system or third-party

software, they must specifically distinguish between the Python 2 and Python 3 ver‐

sions of pip. On Windows, there is no need to do this, so whenever we say pip3, we

mean pip for Windows users. Regardless of OS, once you are in a virtual environ‐

ment, you can always use the command pip, whether you are working with Python 2

or Python 3, so that is what we will do in the rest of this guide.

Commercial Python Redistributions

Your IT department or classroom teaching assistant may have asked you to install a

commercial redistribution of Python. This is intended to simplify the work an orga‐

nization needs to do to maintain a consistent environment for multiple users. All of

the ones listed here provide the C implementation of Python (CPython).

A technical reviewer for the first draft of this chapter said we massively understated

the trouble it is to use a regular CPython installation on Windows for most users: that

even with wheels, compiling and/or linking to external C libraries is painful for

everyone but seasoned developers. We have a bias toward plain CPython, but the

truth is if you’re going to be a consumer of libraries and packages (as opposed to a

creator or contributor), you should just download a commercial redistribution and

get on with your life—doubly so if you’re a Windows user. Later, when you want to

contribute to open source, you can install the regular distribution of CPython.

18 | Chapter 2: Properly Installing Python

18 Intel and Anaconda have a partnership, and all of the Intel accelerated packages are only available using

conda. However, you can always conda install pip and use pip (or pip install conda and use conda)

when you want to.

It is easier to go back to a standard Python installation if you do

not alter the default settings in vendor-specific installations.

Here’s what these commercial distributions have to offer:

e Intel Distribution for Python

The purpose of the Intel Distribution for Python is to deliver high-performance

Python in an easy-to-access, free package. The primary boost to performance

comes from linking Python packages with native libraries such as the Intel Math

Kernel Library (MKL), and enhanced threading capabilities that include the Intel

Threading Building Blocks (TBB) library. It relies on Continuum’s conda for

package management, but also comes with pip. It can be downloaded by itself or

installed from https://anaconda.org/ in a conda environment.18

It provides the SciPy stack and the other common libraries listed in the release

notes (PDF). Customers of Intel Parallel Studio XE get commercial support and

everyone else can use the forums for help. So, this option gives you the scientific

libraries without too much fuss, and otherwise is a regular Python distribution.

Continuum Analytics’ Anaconda

Continuum Analytics’ distribution of Python is released under the BSD license

and provides tons of precompiled science and math binaries on its free package

index. It has a different package manager than pip, called conda, that also man‐

ages virtual environments, but acts more like Buildout (discussed in “Buildout”

on page 38) than like virtualenv—managing libraries and other external depen‐

dencies for the user. The package formats are incompatibile, so each installer

can’t install from the other’s package index.

The Anaconda distribution comes with the SciPy stack and other tools. Ana‐

conda has the best license and the most stuff for free; if you’re going to use a

commercial distribution—especially if you’re already comfortable working with

the command line already and like R or Scala (also bundled)—choose this. If you

don’t need all of those other things, use the miniconda distribution instead. Cus‐

tomers get various levels of indemnification (related to open source licenses, and

who can use what when, or whom gets sued for what), commercial support, and

extra Python libraries.

Commercial Python Redistributions | 19

ActiveState’s ActivePython

ActiveState’s distribution is released under the ActiveState Community License

and is free for evaluation only; otherwise it requires a license. ActiveState also

provides solutions for Perl and Tcl. The main selling point of this distribution is

broad indemnification (again related to open source licenses) for the more than

7,000 packages in its cultivated package index, reachable using the ActiveState

pypm tool, a replacement for pip.

Enthought’s Canopy

Enthought’s distribution is released under the Canopy Software License, with a

package manager, enpkg, that is used in place of pip to connect to Canopy’s pack‐

age index.

Enthought provides free academic licenses to students and staff from degree-

granting institutions. Distinguishing features from Enthought’s distribution are

graphical tools to interact with Python, including its own IDE that resembles

MATLAB, a graphical package manager, a graphical debugger, and a graphical

data manipulation tool. Like the other commercial redistributors, there is indem‐

nification and commercial support, in addition to more packages for customers.

20 | Chapter 2: Properly Installing Python

1If at some point you want to build C extensions for Python, check out “Extending Python with C or C++.” For

more details, see Chapter 15 of Python Cookbook.

CHAPTER 3

Your Development Environment

This chapter provides an overview of the text editors, integrated development envi‐

ronments (IDEs), and other development tools currently popular in the Python edit

→ test → debug cycle.

We unabashedly prefer Sublime Text (discussed in “Sublime Text” on page 23) as an

editor and PyCharm/IntelliJ IDEA (discussed in “PyCharm/IntelliJ IDEA” on page

29) as an IDE but recognize that the best option depends on the type of coding you

do and the other languages you use. This chapter lists a number of the most popular

ones and reasons for choosing them.

Python does not need build tools like Make or Java’s Ant or Maven because it is inter‐

preted, not compiled,1 so we do not discuss them here. But in Chapter 6, we’ll

describe how to use Setuptools to package projects and Sphinx to build documenta‐

tion.

We also won’t cover version control systems, as these are language-independent, but

the people who maintain the C (reference) implementation of Python just moved

from Mercurial to Git (see PEP 512). The original justification to use Mercurial, in

PEP 374, contains a small but useful comparison between today’s top four options:

Subversion, Bazaar, Git, and Mercurial.

This chapter concludes with a brief review of the current ways to manage different

interpreters to replicate different deployment situations while coding.

21

Text Editors

Just about anything that can edit plain text will work for writing Python code; how‐

ever, choosing the right editor can save you hours per week. All of the text editors

listed in this section support syntax highlighting and can be extended via plug-ins to

use static code checkers (linters) and debuggers.

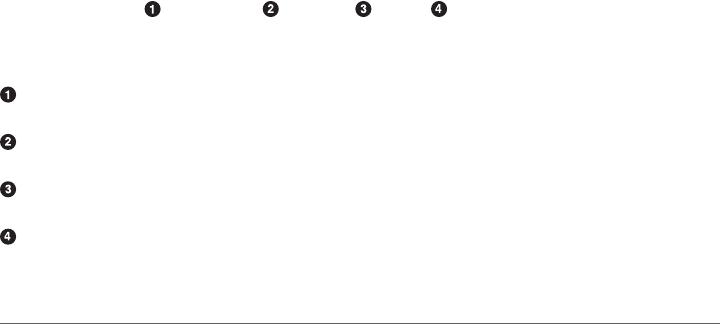

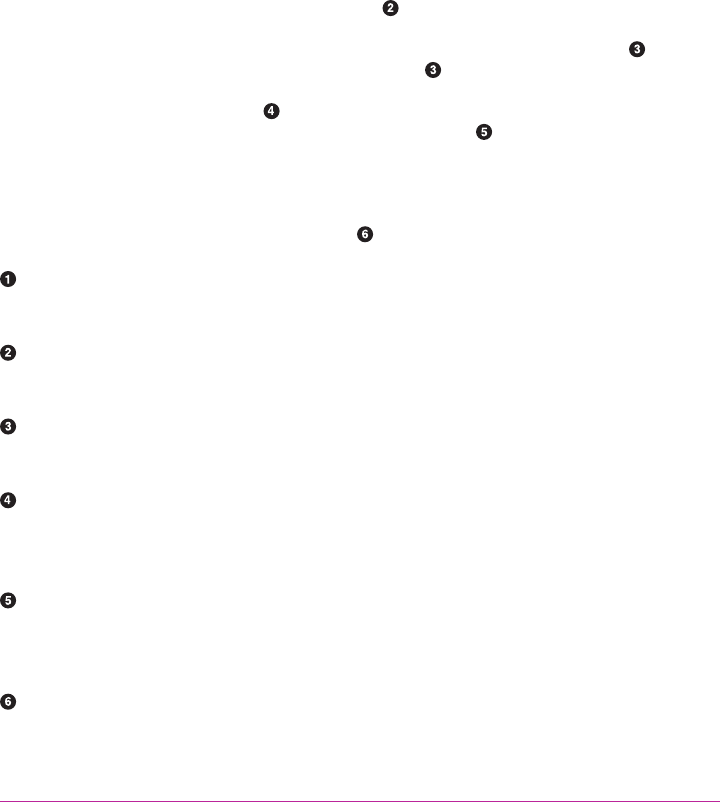

Table 3-1 lists our favorite text editors in descending order of preference and articu‐

lates why a developer would choose one over the other. The rest of the chapter briefly

elaborates on each editor. Wikipedia has a very detailed text editor comparison chart

for those who need to check for specific features.

Table 3-1. Text editors at a glance

Tool Availability Reason to use

Sublime

Text • Open API/has free trial

• OS X, Linux, Windows

• It’s fast, with a small footprint.

• It handles large (> 2 GB) les well.

• Extensions are written in Python.

Vim • Open source/donations appreciated

• OS X, Linux, Windows, Unix

• You already love Vi/Vim.

• It (or at least Vi) is preinstalled on every OS except Windows.

• It can be a console application.

Emacs • Open source/donations appreciated

• OS X, Linux, Windows, Unix

• You already love Emacs.

• Extensions are written in Lisp.

• It can be a console application.

TextMate • Open source/need a license

• OS X only

• Great UI.

• Nearly all interfaces (static code check/debug/test) come

preinstalled.

• Good Apple tools—for example, the interface to xcodebuild (via

the Xcode bundle).

Atom • Open source/free

• OS X, Linux, Windows

• Extensions are written in JavaScript/HTML/CSS.

• Very nice GitHub integration.

Code • Open API (eventually)/free

• OS X, Linux, Windows (but Visual

Studio, the corresponding IDE, only

works on Windows)

• IntelliSense (code completion) worthy of Microsoft’s VisualStudio.

• Good for Windows devs, with support for .Net, C#, and F#.

• Caveat: not yet extensible (to come).

22 | Chapter 3: Your Development Environment

2Snippets are sets of frequently typed code, like CSS styles or class definitions, that can be autocompleted if you

type a few charaters and then hit the Tab key.

Sublime Text

Sublime Text is our recommended text editor for code, markup, and prose. Its speed

is the first thing cited when people recommend it; the number of packages available

(3,000+) is next.

Sublime Text was first released in 2008 by Jon Skinner. Written in Python, it has

excellent support for editing Python code and uses Python for its package extension

API. A “Projects” feature allows the user to add/remove files or folders—these can

then be searched via the “Goto Anything” function, which identifies locations within

the project that contain the search term(s).

You need PackageControl to access the Sublime Text package repository. Popular

packages include SublimeLinter, an interface to the user’s selection of installed static

code checkers; Emmett for web development snippets;2 and Sublime SFTP for remote

editing via FTP.

Anaconda (no relation to the commercial Python distribution of the same name),

released in 2013, by itself turns Sublime almost into an IDE, complete with static code

checks, docstring checks, a test runner, and capability to look up the definition of or

locate uses of highlighted objects.

Vim

Vim is a console-based text editor (with optional GUI) that uses keyboard shortcuts

for editing instead of menus or icons. It was first released in 1991 by Bram Moole‐

naar, and its predecessor, Vi, was released in 1976 by Bill Joy. Both are written in C.

Vim is extensible via vimscript, a simple scripting language. There are options to use

other languages: to enable Python scripting, set the build configuration flags when

building from the C source to --enable-pythoninterp and/or --enable-

python3interp before you build from source. To check whether Python or Python3

are enabled, type :echo has("python") or :echo has("python3"); the result will be

“1” if True or “0” if False.

Vi (and frequently Vim) is available out of the box on pretty much every system but

Windows, and there is an executable installer for Vim on Windows. Users who can

tolerate the learning curve will become extremely efficient; so much that the basic Vi

key bindings are available as a configuration option in most other editors and IDEs.

Text Editors | 23

3Just open the editor by typing vi (or vim) then Enter on the command line, and once inside, type :help then

Enter for the tutorial.

4To locate your home directory on Windows, open Vim and type :echo $HOME.

If you want to work for a large company in any sort of IT role, a

functioning awareness of Vi is necessary.3 Vim is much more fea‐

tureful than Vi, but is close enough that a Vim user can function in

Vi.

If you only develop in Python, you can set the default settings for indentation and

line wrapping to values compliant with PEP 8. To do that, create a file called .vimrc in

your home directory,4 and add the following:

set textwidth=79 " lines longer than 79 columns will be broken

set shiftwidth=4 " operation >> indents 4 columns; << unindents 4 columns

set tabstop=4 " a hard TAB displays as 4 columns

set expandtab " insert spaces when hitting TABs

set softtabstop=4 " insert/delete 4 spaces when hitting a TAB/BACKSPACE

set shiftround " round indent to multiple of 'shiftwidth'

set autoindent " align the new line indent with the previous line

With these settings, newlines are inserted after 79 characters and indentation is set to

four spaces per tab, and if you are inside an indented statement, your next line will

also be indented to the same level.

There is also a syntax plug-in called python.vim that features some improvements

over the syntax file included in Vim 6.1, and a small plug-in, SuperTab, that makes

code completion more convenient by using the Tab key or any other customized keys.

If you also use Vim for other languages, there is a handy plug-in called indent, which

handles indentation settings for Python source files.

These plug-ins supply you with a basic environment for developing in Python. If your

Vim is compiled with +python (the default for Vim 7 and newer), you can also use the

plug-in vim-flake8 to do static code checks from within the editor. It provides the

function Flake8, which runs PEP8 and Pyflakes, and can be mapped to any hotkey or

action you want in Vim. The plug-in will display errors at the bottom of the screen

and provide an easy way to jump to the corresponding line.

If you think it’s handy, you can make Vim call Flake8 every time you save a Python

file by adding the following line to your .vimrc:

autocmd BufWritePost *.py call Flake8()

Or, if you are already using syntastic, you can set it to run Pyflakes on write and show

errors and warnings in the quickfix window. Here’s an example configuration to do

that and also show status and warning messages in the status bar:

24 | Chapter 3: Your Development Environment

5We love Raymond Hettinger. If everyone coded the way he recommends, the world would be a much better

place.

set statusline+=%#warningmsg#

set statusline+=%{SyntasticStatuslineFlag()}

set statusline+=%*

let g:syntastic_auto_loc_list=1

let g:syntastic_loc_list_height=5

Python-mode

Python-mode is a complex solution for working with Python code in Vim. If you like

any of the features listed here, use it (but be aware it will slow down Vim’s launch a

little bit):

•Asynchronous Python code checking (pylint, pyflakes, pep8, mccabe), in any

combination

•Code refactoring and autocompletion with rope

• Fast Python folding (you can hide and show code within indents)

• Support for virtualenv

• The ability to search through Python documentation and run Python code

• Auto PEP8 error fixes

Emacs

Emacs is another powerful text editor. It now has a GUI but can still be run directly in

the console. It is fully programmable (Lisp), and with a little work can be wired up as

a Python IDE. Masochists and Raymond Hettinger5 use it.

Emacs is written in Lisp and was first released in 1976 by Richard Stallman and Guy

L. Steele, Jr. Built-in features include remote edit (via FTP), a calendar, mail send/

read, and even a shrink (Esc, then x, then doctor). Popular plug-ins include

YASnippet to map custom code snippets to keystrokes, and Tramp for debugging. It

is extensible via its own dialect of Lisp, elisp plus.

If you are already an Emacs user, EmacsWiki’s “Python Programming in Emacs” has

the best advice for Python packages and configuration. Those new to Emacs can get

started with the official Emacs tutorial.

There are three major Python modes for Emacs right now:

Text Editors | 25

6Electron is a platform to build cross-platform desktop applications using HTML, CSS, and JavaScript.

•Fabián Ezequiel Gallina’s python.el, now bundled with Emacs (version 24.3+),

implements syntax highlighting, indentation, movement, shell interaction, and a

number of other common Emacs edit-mode features.

•Jorgen Schäfer’s Elpy aims to provide a full-featured interative development envi‐

ronment within Emacs, including debugging, linters, and code completion.

•Python’s source distribution ships with an alternate version in the directory Misc/

python-mode.el. You can download it from the Web as a separate file from

launchpad. It has some tools for programming by speech, additional keystroke

shortcuts, and allows you to set up a complete Python IDE.

TextMate

TextMate is a GUI with Emacs roots that works only on OS X. It has a truly Apple-

worthy user interface that somehow manages to be unobtrusive while exposing all of

the commands with minimal discovery effort.

TextMate is written in C++ and was first released in 2004 by Allan Oddgard and

Ciarán Walsh. Sublime Text (discussed in “Sublime Text” on page 23) can directly

import TextMate snippets, and Microsoft’s Code (discussed in “Code” on page 27) can

directly import TextMate syntax highlighting.

Snippets in any language can be added in bundled groups, and it can otherwise be

extended with shell scripts: the user can highlight some text and pipe it as standard

input through the script using the Cmd+| (pipe) key combination. The script output

replaces the highlighted text.

It has built-in syntax highlighting for Apple’s Swift and Objective C, and (via the

Xcode bundle) an interface to xcodebuild. A veteran TextMate user will not have

problems coding in Python using this editor. New users who don’t spend much time

coding for Apple products are probably better off with the newer cross-platform edi‐

tors that borrow heavily from TextMate’s best-loved features.

Atom

Atom is a “hackable text editor for the 21st century,” according to the folks at GitHub

who created it. It was first released in 2014, is written in CoffeeScript (JavaScript) and

Less (CSS), and is built on top of Electron (formerly Atom Shell),6 which is GitHub’s

application shell based on io.js and Chromium.

26 | Chapter 3: Your Development Environment

7https://github.com/Microso/PTVS/wiki/Features-Matrix

Atom is extensible via JavaScript and CSS, and users can add snippets in any language

(including TextMate-style snippet definitions). As you’d expect, it has very nice Git‐

Hub integration. It comes with native package control and a plethora of packages

(2,000+). Recommended for Python development is Linter combined with linter-

flake8. Web developers may also like the Atom development server, which runs a

small HTTP server and can display the HTML preview within Atom.

Code

Microsoft announced Code in 2015. It is a free, closed source text editor in the Visual

Studio family, also built on GitHub’s Electron. It is cross-platform and has key bind‐

ings just like TextMate.

It comes with an extension API—check out the VS Code Extension Marketplace to

browse existing extensions—and merges what its developers thought were the best

parts of TextMate and Atom with Microsoft. It has IntelliSense (code completion)

worthy of VisualStudio, and good support for .Net, C#, and F#.

Visual Studio (the sister IDE to the Code text editor) still only works on Windows,

even though Code is cross-platform.

IDEs

Many developers use both a text editor and an IDE, switching to the IDE for larger,

more complex, or more collaborative projects. Table 3-2 highlights the distinguishing

features of some popular IDEs, and the sections that follow provide more in-depth

information on each one.

One feature frequently cited as a reason to go to a full IDE (outside of great code

completion and debugging tools) is the ability to quickly switch between Python

interpreters (e.g., from Python 2 to Python 3 to IronPython); this is available in the

free version of all of the IDEs listed in Table 3-2, Visual Studio now offers this at all

levels.7

Additional features that may or may not come free are tools that interface with ticket‐

ing systems, deployment tools (e.g., Heroku or Google App Engine), collaboration

tools, remote debugging, and extra features for using web development frameworks

such as Django.

IDEs | 27

Table 3-2. IDEs at a glance

Tool Availability Reason to use

PyCharm/Intellij

IDEA • Open API/paid professional edition

• Open source/free community

edition

• OS X, Linux, Windows

• Nearly perfect code completion.

• Good support for virtual environments.

• Good support for web frameworks (in the paid version).

Aptana Studio 3 /

Eclipse + LiClipse +

PyDev

• Open source/free

• OS X, Linux, Windows

• You already love Eclipse.

• Java support (LiClipse/Eclipse).

WingIDE • Open API/free trial

• OS X, Linux, Windows

• Great debugger (web)—best of the IDEs listed here.

• Extensible via Python.

Spyder • Open source/free

• OS X, Linux, Windows

• Data science: IPython integrated, and it is bundled with

NumPy, SciPy, and matplotlib.

• The default IDE in popular scientic Python distributions:

Anaconda, Python(x,y), and WinPython.

NINJA-IDE • Open source/donations appreciated

• OS X, Linux, Windows

• Intentionally lightweight.

• Strong Python focus.

Komodo IDE • Open API/text editor (Komodo

Edit) is open source

• OS X, Linux, Windows

• Python, PHP, Perl, Ruby, Node.

• Extensions are based on Mozilla add-ons.

Eric (the Eric

Python IDE) • Open source/donations appreciated

• OS X, Linux, Windows

• Ruby + Python.

• Intentionally lightweight.

• Great debugger (scientic)—can debug one thread while

others continue.

Visual Studio

(Community) • Open API/free community edition

• Paid professional or enterprise

edition

• Windows only

• Great integration with Microsoft languages and tools.

• IntelliSense (code completion) is fantastic.

• Project management and deployment assistance, including

sprint planning tools and manifest templates in the

Enterprise edition.

• Caveat: cannot use virtual environments except in the

Enterprise (most expensive) edition.

28 | Chapter 3: Your Development Environment

PyCharm/IntelliJ IDEA

PyCharm is our favorite Python IDE. The top reasons are its nearly perfect code

completion tools, and the quality of its tools for web development. Those in the sci‐

entific community recommend the free edition (which doesn’t have the web develop‐

ment tools) as just fine for their needs, but not as often as they choose Spyder

(discussed in “Spyder” on page 30).

PyCharm is developed by JetBrains, also known for IntelliJ IDEA, a proprietary Java

IDE that competes with Eclipse. PyCharm (first released in 2010) and IntelliJ IDEA

(first released in 2001) share the same code base, and most of PyCharm’s features can

be brought to IntelliJ with the free Python plug-in.

JetBrains recommends PyCharm for a simpler UI, or IntelliJ IDEA if you want to

introspect into Jython functions, perform cross-language navigation, or do Java-to-

Python refactoring. (PyCharm works with Jython but only as a possible choice for

interpreter; the introspection tools aren’t there.) The two are licensed separately—so

choose before you buy.

The IntelliJ Community Edition and PyCharm Commuity Edition are open sourced

(Apache 2.0 License) and free.

Aptana Studio 3/Eclipse + LiClipse + PyDev

Eclipse is written in Java and was first released in 2001 by IBM as an open, versatile

Java IDE. PyDev, the Eclipse plug-in for Python development, was released in 2003

by Aleks Totic, who later passed the torch to Fabio Zadrozny. It is the most popular

Eclipse plug-in for Python development.

Although the Eclipse community doesn’t push back online when people advocate for

IntelliJ IDEA in forums comparing the two, Eclipse is still the most commonly used

Java IDE. This is relevant for Python developers who interface with tools written in

Java, as many popular ones (e.g., Hadoop, Spark, and proprietary versions of these)

come with instructions and plug-ins for development with Eclipse.

A fork of PyDev is baked into Aptana’s Studio 3, which is an open source suite of

plug-ins bundled with Eclipse that provide an IDE for Python (and Django), Ruby

(and Rails), HTML, CSS, and PHP. The primary focus of Aptanta’s owner, Appcelera‐

tor, is the Appcelerator Studio, a proprietary mobile platform for HTML, CSS, and

JavaScript that requires a monthly license (once your app goes live). General PyDev

and Python support is there, but is not a priority. That said, if you like Eclipse and are

primarily a JavaScript developer making apps for mobile platforms with occasional

forays into Python, especially if you use Appcelerator at work, Aptana’s Studio 3 is a

good choice.

IDEs | 29

LiClipse was born out of a desire to have a better multilanguge experience in Eclipse,

and easy access to fully dark themes (i.e., in addition to the text background, menus

and borders will also be dark). It is a proprietary suite of Eclipse plug-ins written by

Zadrozny; part of the license fees (optional) go to keeping PyDev totally free and

open source (EPL License; the same as Eclipse). It comes bundled with PyDev, so

Python users don’t need to install it themselves.

WingIDE

WingIDE is a Python-specific IDE; probably the second most popular Python IDE

after PyCharm. It runs on Linux, Windows, and OS X.

Its debugging tools are very good and include tools to debug Django templates.

WingIDE users cite its debugger, the quick learning curve, and a lightweight footprint

as reasons they prefer this IDE.

Wing was released in 2000 by Wingware and is written in Python, C, and C++. It

supports extensions but does not have a plug-in repository yet, so users have to

search for others’ blogs or GitHub accounts to find existing packages.

Spyder

Spyder (an abbreviation of Scientific PYthon Development EnviRonment) is an IDE

specifically geared toward working with scientific Python libraries.

Spyder is written in Python by Carlos Córdoba. It is open source (MIT License), and

offers code completion, syntax highlighting, a class and function browser, and object

inspection. Other features are available via community plug-ins.

Spyder includes integration with pyflakes, pylint, and rope, and comes bundled with

NumPy, SciPy, IPython, and Matplotlib. It is itself bundled with the popular Scientific

Python distributions Anaconda, Python(x, y), and WinPython.

NINJA-IDE

NINJA-IDE (from the recursive acronym: “Ninja-IDE Is Not Just Another IDE”) is a

cross-platform IDE designed to build Python applications. It runs on Linux/X11, Mac

OS X, and Windows. Installers for these platforms can be downloaded from NINJA-

IDE’s website.

NINJA-IDE is developed in Python and Qt, open sourced (GPLv3 License), and is

intentionally lightweight. Out of the box, its best-liked feature is that it highlights

problem code when running static code checkers or debugging, and the ability to pre‐

view web pages in-browser. It is extensible via Python, and has a plug-in repository.

The idea is that users will add only the tools they need.

30 | Chapter 3: Your Development Environment

Development slowed for a while, but a new NINJA-IDE v3 is planned for some time

in 2016, and there is still active communication on the NINJA-IDE listserv. The com‐

munity has many native Spanish speakers, including the core development team.

Komodo IDE

Komodo IDE is developed by ActiveState and is a commercial IDE for Windows,

Mac, and Linux. KomodoEdit, the IDE’s text editor, is the open source (Mozilla pub‐

lic license) alternative.

Komodo was first released in 2000 by ActiveState and uses the Mozilla and Scintilla

code base. It is extensible via Mozilla add-ons. It suports Python, Perl, Ruby, PHP, Tcl,

SQL, Smarty, CSS, HTML, and XML. Komodo Edit does not have a debugger, but one

is available as a plug-in. The IDE does not support virtual environments, but does

allow the user to select which Python interpreter to use. Django support is not as

extensive as in WingIDE, PyCharm, or Eclipse + PyDev.

Eric (the Eric Python IDE)

Eric is open source (GPLv3 licence) with more than 10 years of active development. It

is written in Python and based on the Qt GUI toolkit, integrating the Scintilla editor

control. It is named after Eric Idle, a member of the Monty Python troupe, and in

homage to the IDLE IDE, bundled with Python distributions.

Its features include source code autocompletion, syntax highlighting, support for ver‐

sion control systems, Python 3 support, an integrated web browser, a Python shell, an

integrated debugger, and a flexible plug-in system. It does not have extra tools for web

frameworks.

Like NINJA-IDE and Komodo IDE, it is intentionally lightweight. Faithful users

believe it has the best debugging tools around, including the ability to stop and debug

one thread while others continue to run. If you wish to use Matplotlib for interactive

plotting in this IDE, you must use the Qt4 backend:

# This must come first:

import matplotlib

matplotlib.use('Qt4Agg')

# And then pyplot will use the Qt4 backend:

import matplotlib.pyplot as plt

This link is to the most recent documentation for the Eric IDE. Users leaving positive

notes on Eric IDE’s web page are almost all from the scientific computation (e.g.,

weather models, or computational fluid dynamics) community.

IDEs | 31

Visual Studio

Professional programmers who work with Microsoft products on Windows will want

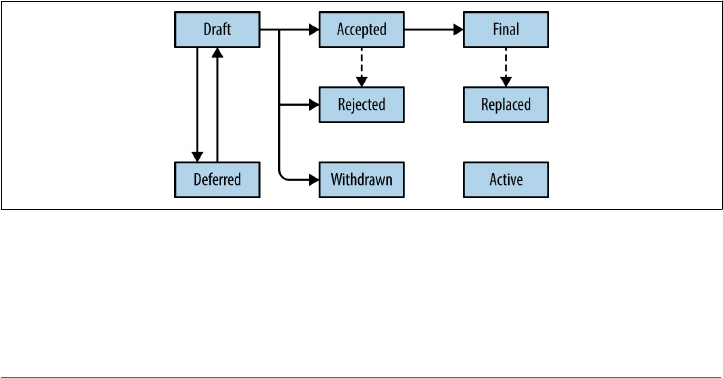

Visual Studio. It is written in C++ and C#, and its first version appeared in 1995. In