NIST Information Security Guide For IT Systems


Archived NIST Technical Series Publication

The attached publication has been archived (withdrawn), and is provided solely for historical purposes.

It may have been superseded by another publication (indicated below).

Archived Publication

Series/Number: NIST Special Publication 800-30

Title: Risk Management Guide for Information Technology Systems

Publication Date(s): July 2002

Withdrawal Date: September 2012

Withdrawal Note: SP 800-30 is superseded in its entirety by the publication of SP 800-30 Revision 1 (September 2012).

Superseding Publication(s)

The attached publication has been superseded by the following publication(s):

Series/Number: NIST Special Publication 800-30 Revision 1

Title: Guide for Conducting Risk Assessments

Author(s): Joint Task Force Transformation Initiative

Publication Date(s): September 2012

URL/DOI: http://dx.doi.org/10.6028/NIST.SP.800-30r1

Additional Information (if applicable)

Contact: Computer Security Division (Information Technology Lab)

Latest revision of the attached publication: SP 800-30 Revision 1 (as of June 19, 2015)

Related information: http://csrc.nist.gov/

Withdrawal announcement (link): N/A

Date updated: June 19, 2015


National Institute of

Standards and Technology

Technology Administration

U.S. Department of Commerce

NIST Special Publication

800-30

Risk Management Guide

for Information Technology

Systems

Recommendations of the National Institute

of Standards and Technology

Gary Stoneburner, Alice Goguen, and

Alexis Feringa

COMPUTER SECURITY

The National Institute of Standards and Technology was established in 1988 by Congress to "assist

industry in the development of technology ... needed to improve product quality, to modernize manufacturing

processes, to ensure product reliability ... and to facilitate rapid commercialization ... of products based on new scientific

discoveries."

NIST, originally founded as the National Bureau of Standards in 1901, works to strengthen U.S. industry's

competitiveness; advance science and engineering; and improve public health, safety, and the environment. One of the

agency's basic functions is to develop, maintain, and retain custody of the national standards of measurement, and provide

the means and methods for comparing standards used in science, engineering, manufacturing, commerce, industry, and

education with the standards adopted or recognized by the Federal Government.

As an agency of the U.S. Commerce Department's Technology Administration, NIST conducts basic and

applied research in the physical sciences and engineering, and develops measurement techniques, test

methods, standards, and related services. The Institute does generic and precompetitive work on new and

advanced technologies. NIST's research facilities are located at Gaithersburg, MD 20899, and at Boulder, CO 80303.

Major technical operating units and their principal activities are listed below. For more information contact the Publications

and Program Inquiries Desk, 301-975-3058.

Office of the Director

•National Quality Program

•International and Academic Affairs

Technology Services

•Standards Services

•Technology Partnerships

•Measurement Services

•Information Services

Advanced Technology Program

•Economic Assessment

•Information Technology and Applications

•Chemistry and Life Sciences

•Materials and Manufacturing Technology

•Electronics and Photonics Technology

Manufacturing Extension Partnership

Program

•Regional Programs

•National Programs

•Program Development

Electronics and Electrical Engineering

Laboratory

•Microelectronics

•Law Enforcement Standards

•Electricity

•Semiconductor Electronics

•Radio-Frequency Technology¹

•Electromagnetic Technology¹

•Optoelectronics¹

Materials Science and Engineering

Laboratory

•Theoretical and Computational Materials Science

•Materials Reliability¹

•Ceramics

•Polymers

•Metallurgy

•NIST Center for Neutron Research

Chemical Science and Technology

Laboratory

•Biotechnology

•Physical and Chemical Properties'

•Analytical Chemistry

•Process Measurements

•Surface and Microanalysis Science

Physics Laboratory

•Electron and Optical Physics

•Atomic Physics

•Optical Technology

•Ionizing Radiation

•Time and Frequency¹

•Quantum Physics¹

Manufacturing Engineering

Laboratory

•Precision Engineering

•Automated Production Technology

•Intelligent Systems

•Fabrication Technology

•Manufacturing Systems Integration

Building and Fire Research Laboratory

•Applied Economics

•Structures

•Building Materials

•Building Environment

•Fire Safety Engineering

•Fire Science

Information Technology Laboratory

•Mathematical and Computational Sciences²

•Advanced Network Technologies

•Computer Security

•Information Access and User Interfaces

•High Performance Systems and Services

•Distributed Computing and Information Services

•Software Diagnostics and Conformance Testing

•Statistical Engineering

¹At Boulder, CO 80303.

²Some elements at Boulder, CO.


Computer Security Division

Information Technology Laboratory

National Institute of Standards and Technology

Gaithersburg, MD 20899-8930

July 2002

U.S. Department of Commerce

Donald L. Evans, Secretary

Technology Administration

Phillip J. Bond, Under Secretary of Commerce for Technology

National Institute of Standards and Technology

Arden L. Bement, Jr., Director

Reports on Information Security Technology

The Information Technology Laboratory (ITL) at the National Institute of Standards and Technology (NIST)

promotes the U.S. economy and public welfare by providing technical leadership for the Nation's measurement

and standards infrastructure. ITL develops tests, test methods, reference data, proof of concept

implementations, and technical analyses to advance the development and productive use of information

technology. ITL's responsibilities include the development of technical, physical, administrative, and

management standards and guidelines for the cost-effective security and privacy of sensitive unclassified

information in Federal computer systems. This Special Publication 800-series reports on ITL's research,

guidance, and outreach efforts in computer security, and its collaborative activities with industry, government,

and academic organizations.

Certain commercial entities, equipment, or materials may be identified in this document in order to describe an

experimental procedure or concept adequately. Such identification is not intended to imply recommendation or

endorsement by the National Institute of Standards and Technology, nor is it intended to imply that the entities,

materials, or equipment are necessarily the best available for the purpose.

National Institute of Standards and Technology Special Publication 800-30

Natl. Inst. Stand. Technol. Spec. Publ. 800-30, 54 pages (July 2002)

CODEN: NSPUE2

U.S. GOVERNMENT PRINTING OFFICE

WASHINGTON: 2002

For sale by the Superintendent of Documents, U.S. Government Printing Office

Internet: bookstore.gpo.gov; Phone: (202) 512-1800; Fax: (202) 512-2250

Mail: Stop SSOP, Washington, DC 20402-0001

Acknowledgements

The authors, Gary Stoneburner from NIST and Alice Goguen and Alexis Feringa from Booz

Allen Hamilton, wish to express their thanks to their colleagues at both organizations who

reviewed drafts of this document. In particular, Timothy Grance, Marianne Swanson, and Joan

Hash from NIST and Debra L. Banning, Jeffrey Confer, Randall K. Ewell, and Waseem

Mamlouk from Booz Allen Hamilton, provided valuable insights that contributed substantially to

the technical content of this document. Moreover, we gratefully acknowledge and appreciate the

many comments from the public and private sectors whose thoughtful and constructive

comments improved the quality and utility of this publication.

SP 800-30 Page iii

TABLE OF CONTENTS

1. INTRODUCTION

1.1 Authority

1.2 Purpose

1.3 Objective

1.4 Target Audience

1.5 Related References

1.6 Guide Structure

2. RISK MANAGEMENT OVERVIEW

2.1 Importance of Risk Management

2.2 Integration of Risk Management into SDLC ..

2.3 Key Roles

3. RISK ASSESSMENT

3.1 Step 1:System Characterization

3.1.1 System-Related Information

3.1.2 Information-Gathering Techniques

3.2 Step 2: Threat Identification

3.2.1 Threat-Source Identification

3.2.2 Motivation and Threat Actions

3.3 Step 3: Vulnerability Identification

3.3.1 Vulnerability Sources

3.3.2 System Security Testing ,

3.3.3 Development ofSecurity Requirements Checklist.

3.4 Step 4: Control Analysis

3.4.1 Control Methods

3.4.2 Control Categories

3.4.3 Control Analysis Technique

3.5 Step 5:Likelihood Determination

3.6 Step 6: Impact Analysis

3.7 Step?: Risk Determination

3.7.1 Risk-Level Matrix

3.7.2 Description ofRisk Level

3.8 Step 8: Control Recommendations

3.9 Step 9: Results Documentation

4. RISK MITIGATION

4.1 Risk Mitigation Options

4.2 Risk Mitigation Strategy

4.3 Approach for Control Implementation ,

4.4 Control Categories

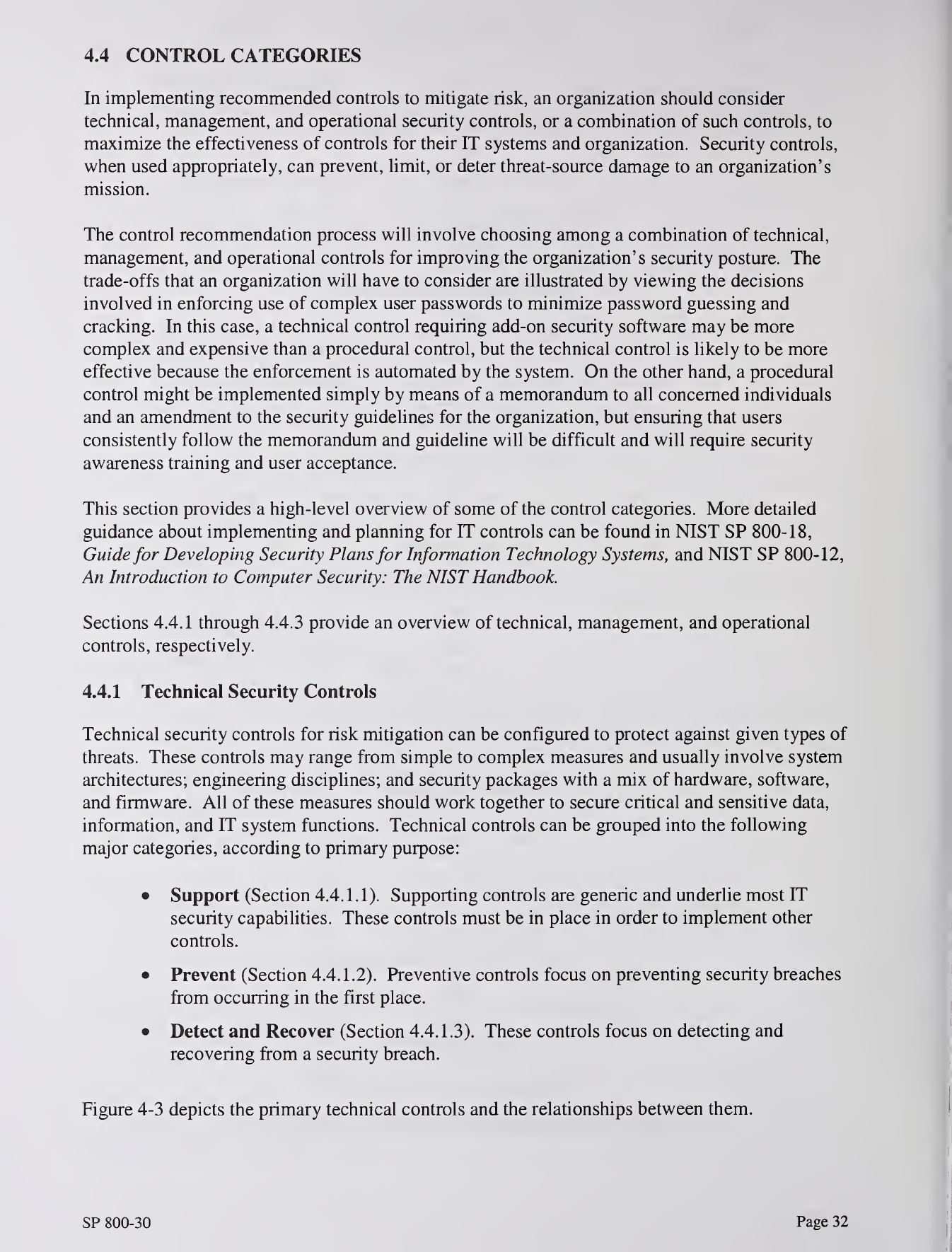

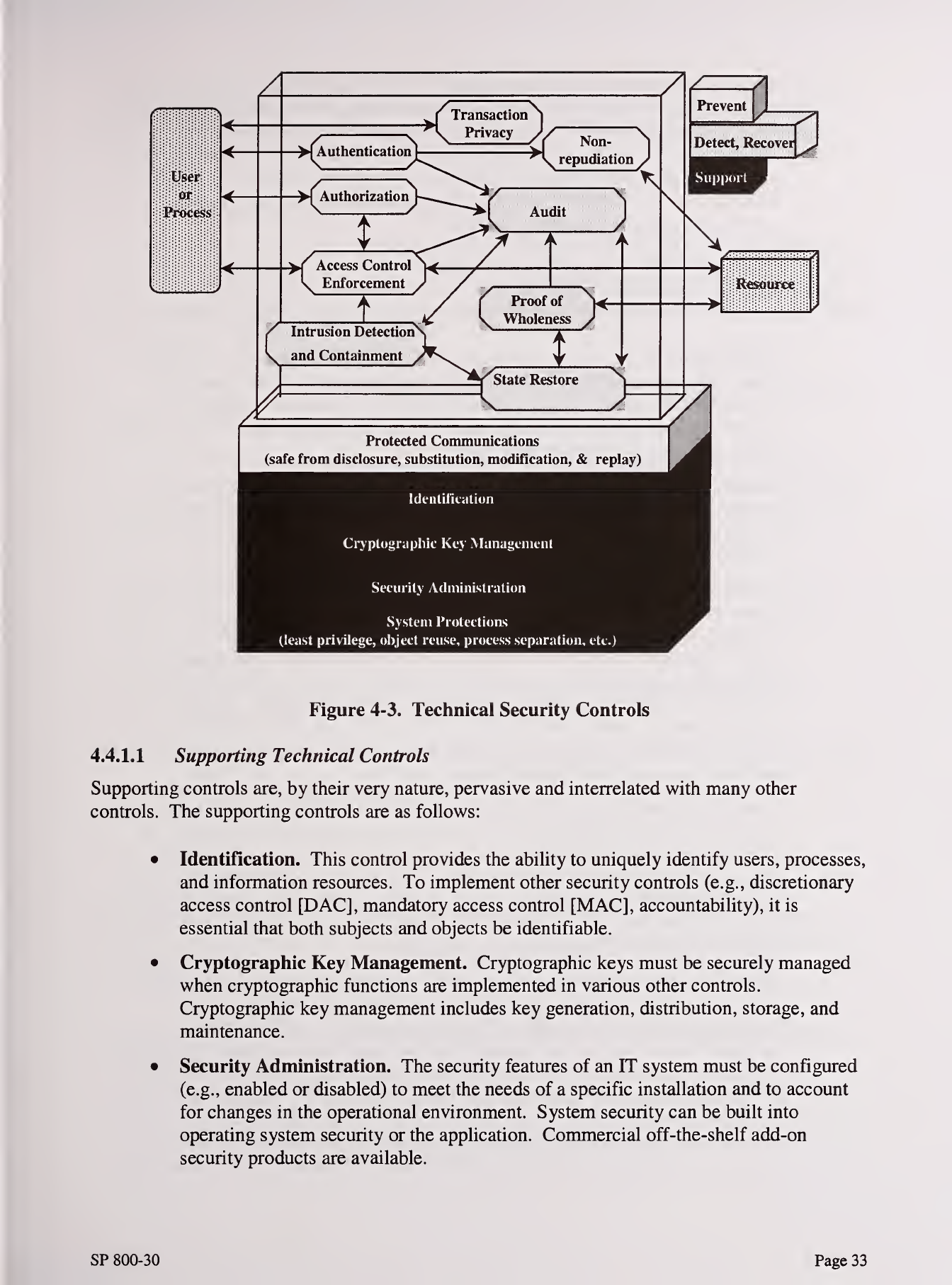

4.4.1 Technical Security Controls

4.4.2 Management Security Controls

4.4.3 Operational Security Controls

4.5 Cost-Benefit Analysis

4.6 Residual Risk

5. EVALUATION AND ASSESSMENT

5.1 Ciood Security Practice

5.2 Keys for Success

Appendix A—Sample Interview Questions A-

Appendix B—Sample Risk Assessment Report Outline B-

SP 800-30 Page

Appendix C—Sample Implementation Safeguard Plan Summary Table C-1

Appendix D—Acronyms D-1

Appendix E—Glossary E-1

Appendix F—References F-

1

LIST OF FIGURES

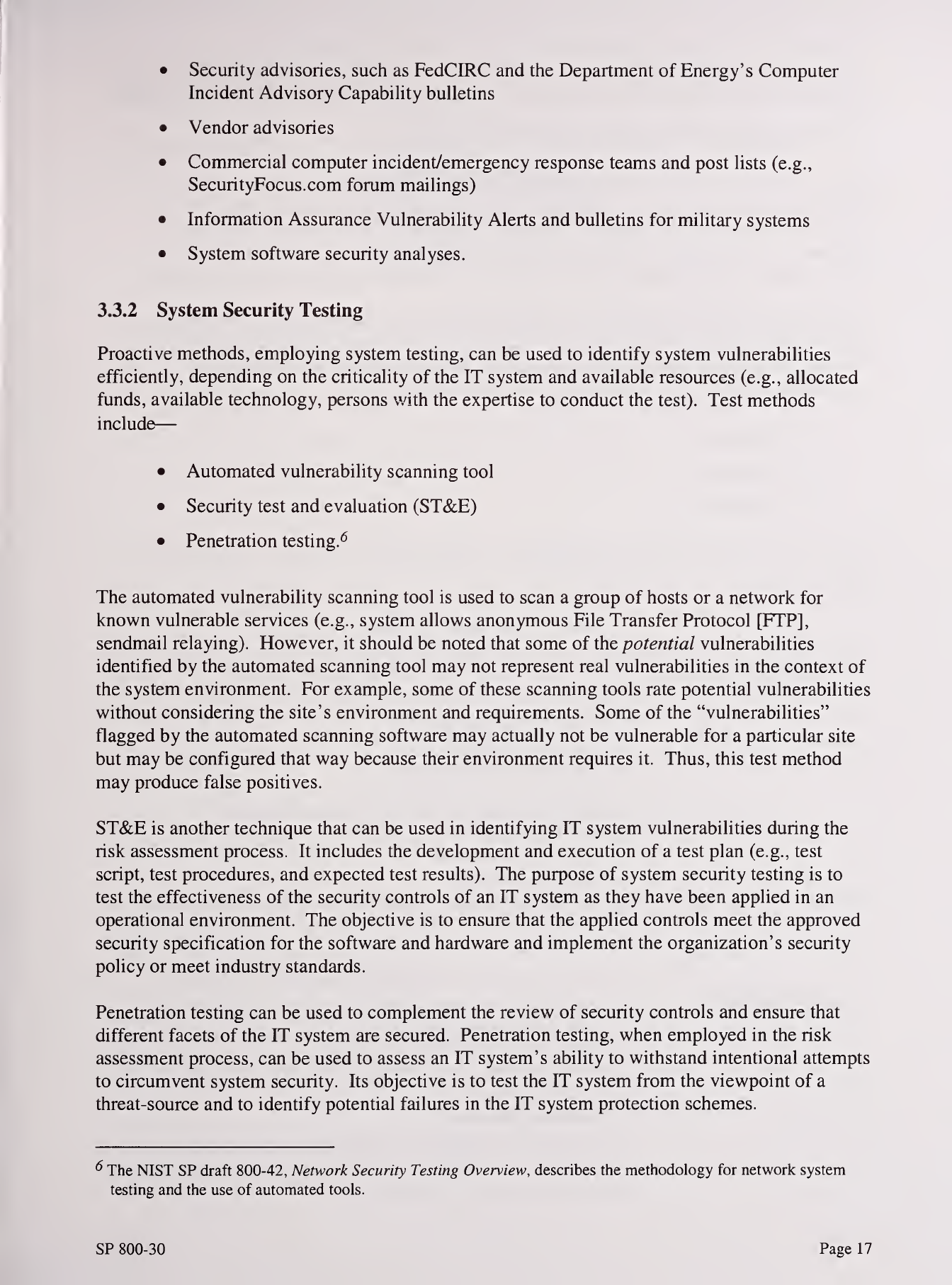

Figure 3-1 Risk Assessment Methodology Flowchart 9

Figure 4-1 Risk Mitigation Action Points 28

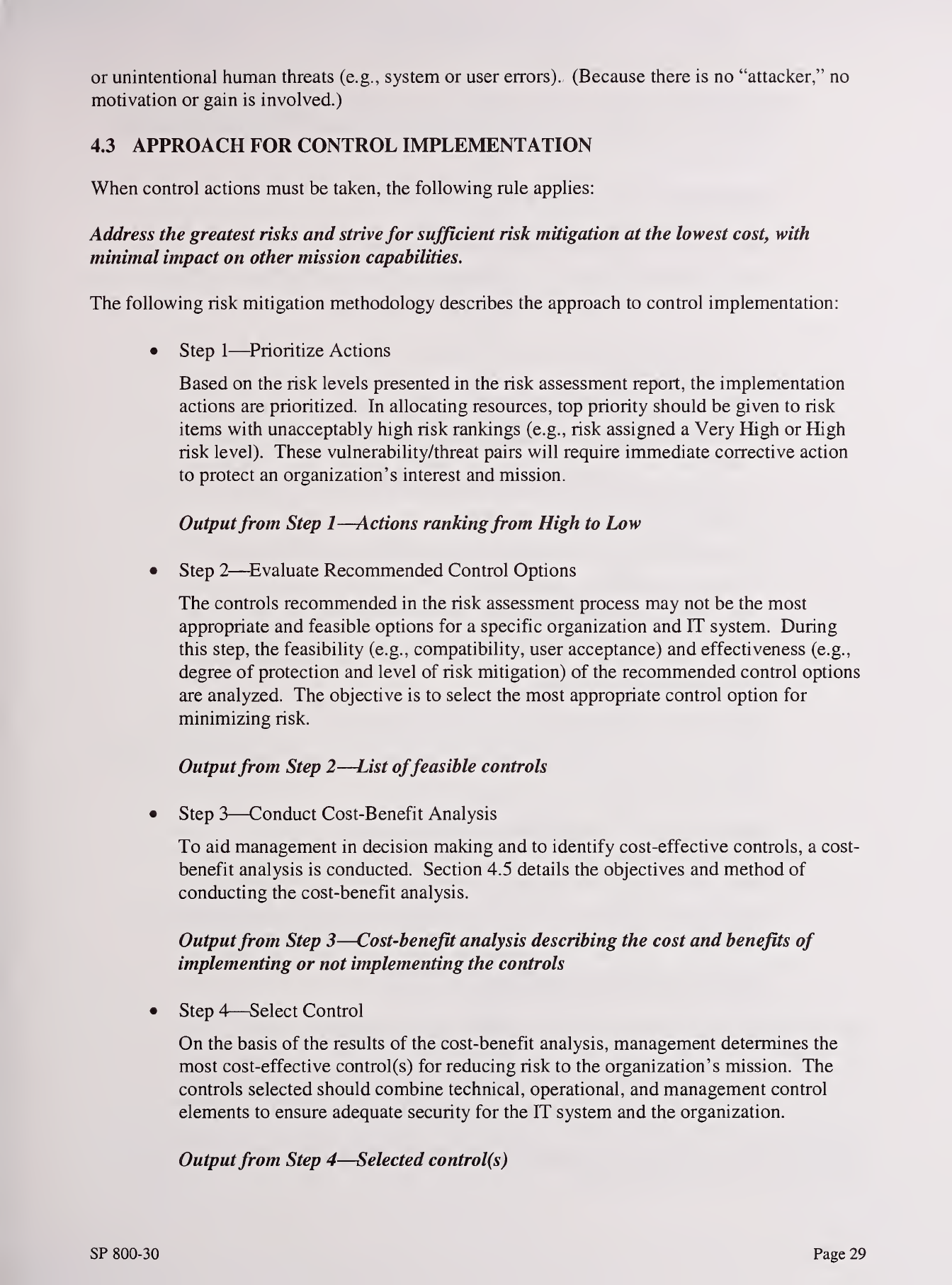

Figure 4-2 Risk Mitigation Methodology Flowchart 3

1

Figure 4-3 Technical Security Controls 33

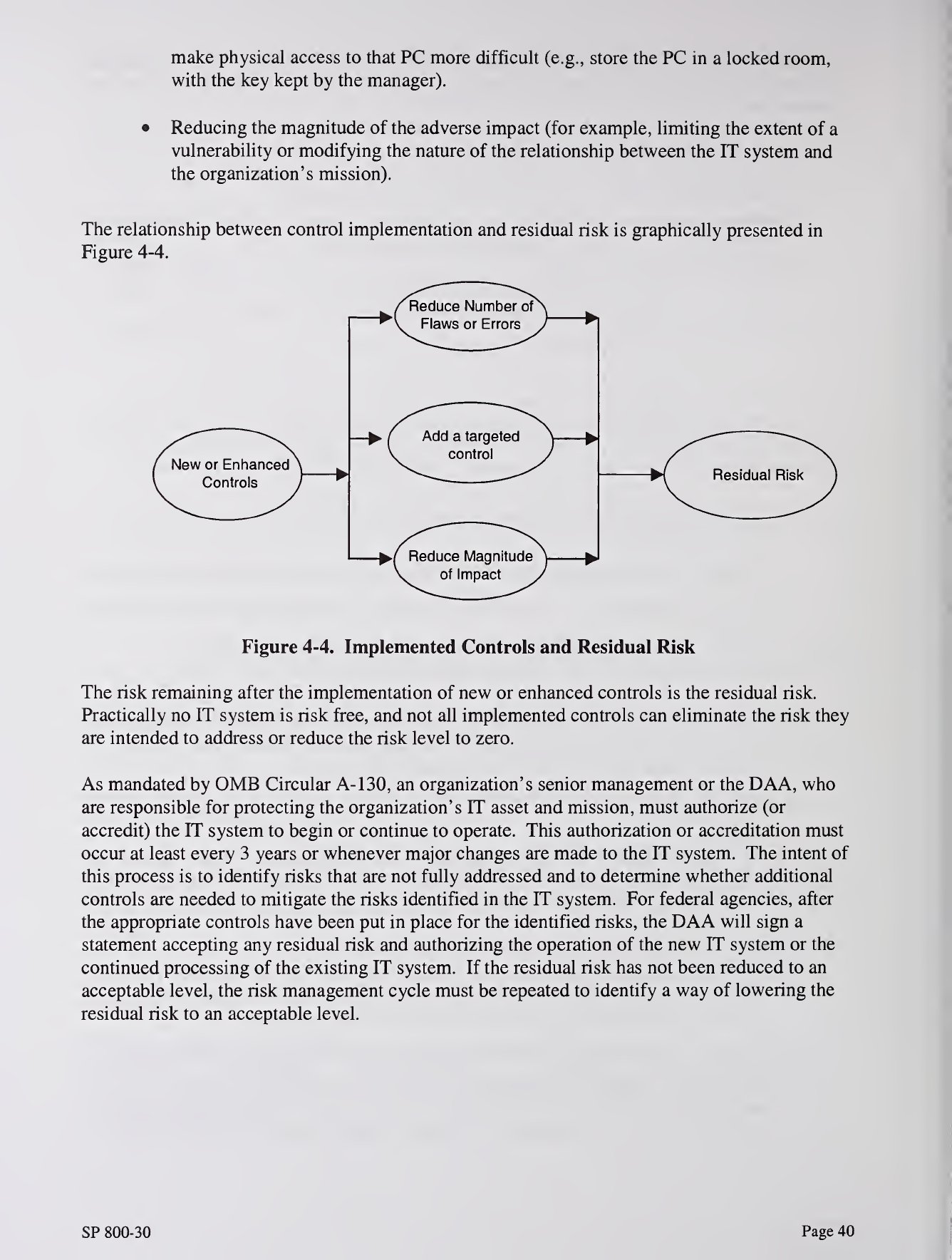

Figure 4-4 Control Implementation and Residual Risk 40

LIST OF TABLES

Table 2-1 Integration of Risk Management to the SDLC 5

Table 3-1 Human Threats: Threat-Source, Motivation, and Threat Actions 14

Table 3-2 Vulnerability/Threat Pairs 15

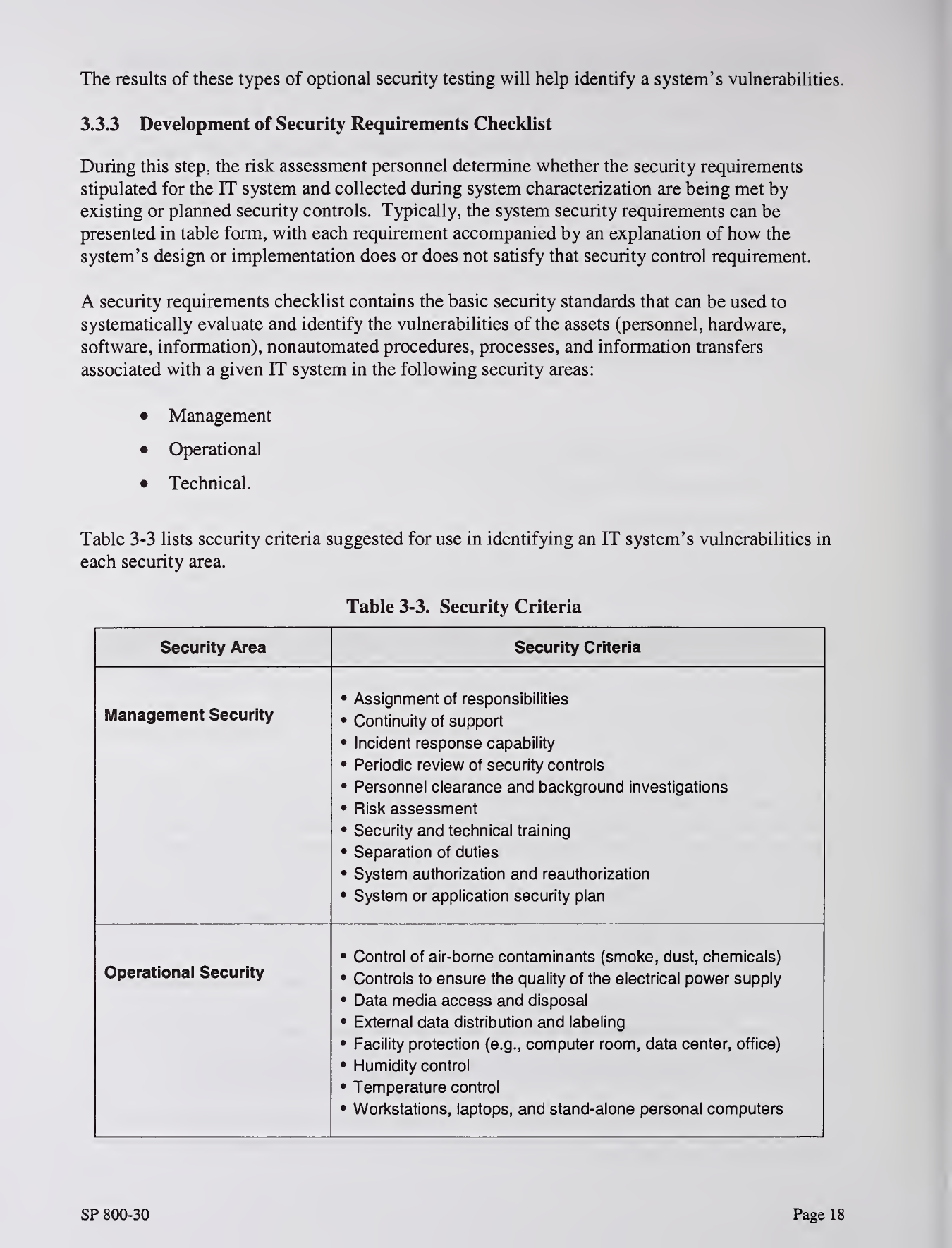

Table 3-3 Security Criteria 18

Table 3-4 Likelihood Definitions 21

Table 3-5 Magnitude of Impact Definitions 23

Table 3-6 Risk-Level Matrix 25

Table 3-7 Risk Scale and Necessary Actions 25


1. INTRODUCTION

Every organization has a mission. In this digital era, as organizations use automated information

technology (IT) systems¹ to process their information for better support of their missions, risk

management plays a critical role in protecting an organization's information assets, and therefore

its mission, from IT-related risk.

An effective risk management process is an important component of a successful IT security

program. The principal goal of an organization's risk management process should be to protect

the organization and its ability to perform its mission, not just its IT assets. Therefore, the risk

management process should not be treated primarily as a technical function carried out by the IT

experts who operate and manage the IT system, but as an essential management function of the

organization.

1.1 AUTHORITY

This document has been developed by NIST in furtherance of its statutory responsibilities under

the Computer Security Act of 1987 and the Information Technology Management Reform Act of

1996 (specifically 15 United States Code (U.S.C.) 278 g-3 (a)(5)). This is not a guideline within

the meaning of 15 U.S.C. 278 g-3 (a)(3).

These guidelines are for use by Federal organizations which process sensitive information.

They are consistent with the requirements of OMB Circular A-130, Appendix III.

The guidelines herein are not mandatory and binding standards. This document may be used by

non-governmental organizations on a voluntary basis. It is not subject to copyright.

Nothing in this document should be taken to contradict standards and guidelines made

mandatory and binding upon Federal agencies by the Secretary of Commerce under his statutory

authority. Nor should these guidelines be interpreted as altering or superseding the existing

authorities of the Secretary of Commerce, the Director of the Office of Management and Budget,

or any other Federal official.

1.2 PURPOSE

Risk is the net negative impact of the exercise of a vulnerability, considering both the probability

and the impact of occurrence. Risk management is the process of identifying risk, assessing risk,

and taking steps to reduce risk to an acceptable level. This guide provides a foundation for the

development of an effective risk management program, containing both the definitions and the

practical guidance necessary for assessing and mitigating risks identified within IT systems. The

ultimate goal is to help organizations to better manage IT-related mission risks.

¹ The term "IT system" refers to a general support system (e.g., mainframe computer, mid-range computer, local

area network, agencywide backbone) or a major application that can run on a general support system and whose

use of information resources satisfies a specific set of user requirements.


In addition, this guide provides information on the selection of cost-effective security controls.²

These controls can be used to mitigate risk for the better protection of mission-critical

information and the IT systems that process, store, and carry this information.

Organizations may choose to expand or abbreviate the comprehensive processes and steps

suggested in this guide and tailor them to their environment in managing IT-related mission

risks.

1.3 OBJECTIVE

The objective of performing risk management is to enable the organization to accomplish its

mission(s) (1) by better securing the IT systems that store, process, or transmit organizational

information; (2) by enabling management to make well-informed risk management decisions to

justify the expenditures that are part of an IT budget; and (3) by assisting management in

authorizing (or accrediting) the IT systems³ on the basis of the supporting documentation

resulting from the performance of risk management.

1.4 TARGET AUDIENCE

This guide provides a common foundation for experienced and inexperienced, technical and

non-technical personnel who support or use the risk management process for their IT systems.

These personnel include:

•Senior management, the mission owners, who make decisions about the IT security

budget.

•Federal Chief Information Officers, who ensure the implementation of risk

management for agency IT systems and the security provided for these IT systems

•The Designated Approving Authority (DAA), who is responsible for the final

decision on whether to allow operation of an IT system

•The IT security program manager, who implements the security program

•Information system security officers (ISSO), who are responsible for IT security

•IT system owners of system software and/or hardware used to support IT functions.

•Information owners of data stored, processed, and transmitted by the IT systems

•Business or functional managers, who are responsible for the IT procurement process

•Technical support personnel (e.g., network, system, application, and database

administrators; computer specialists; data security analysts), who manage and

administer security for the IT systems

•IT system and application programmers, who develop and maintain code that could

affect system and data integrity

² The terms "safeguards" and "controls" refer to risk-reducing measures; these terms are used interchangeably in

this guidance document.

³ Office of Management and Budget's November 2000 Circular A-130, the Computer Security Act of 1987, and the

Government Information Security Reform Act of October 2000 require that an IT system be authorized prior to

operation and reauthorized at least every 3 years thereafter.


•IT quality assurance personnel, who test and ensure the integrity of the IT systems

and data

•Information system auditors, who audit IT systems

•IT consultants, who support clients in risk management.

1.5 RELATED REFERENCES

This guide is based on the general concepts presented in National Institute of Standards and

Technology (NIST) Special Publication (SP) 800-27, Engineering Principles for IT Security,

along with the principles and practices in NIST SP 800-14, Generally Accepted Principles and

Practices for Securing Information Technology Systems. In addition, it is consistent with the

policies presented in Office of Management and Budget (OMB) Circular A-130, Appendix III,

"Security of Federal Automated Information Resources"; the Computer Security Act (CSA) of

1987; and the Government Information Security Reform Act of October 2000.

1.6 GUIDE STRUCTURE

The remaining sections of this guide discuss the following:

•Section 2 provides an overview of risk management, how it fits into the system

development life cycle (SDLC), and the roles of individuals who support and use this

process.

•Section 3 describes the risk assessment methodology and the nine primary steps in

conducting a risk assessment of an IT system.

•Section 4 describes the risk mitigation process, including risk mitigation options and

strategy, approach for control implementation, control categories, cost-benefit

analysis, and residual risk.

•Section 5 discusses good practice, the need for ongoing risk evaluation and

assessment, and the factors that will lead to a successful risk management program.

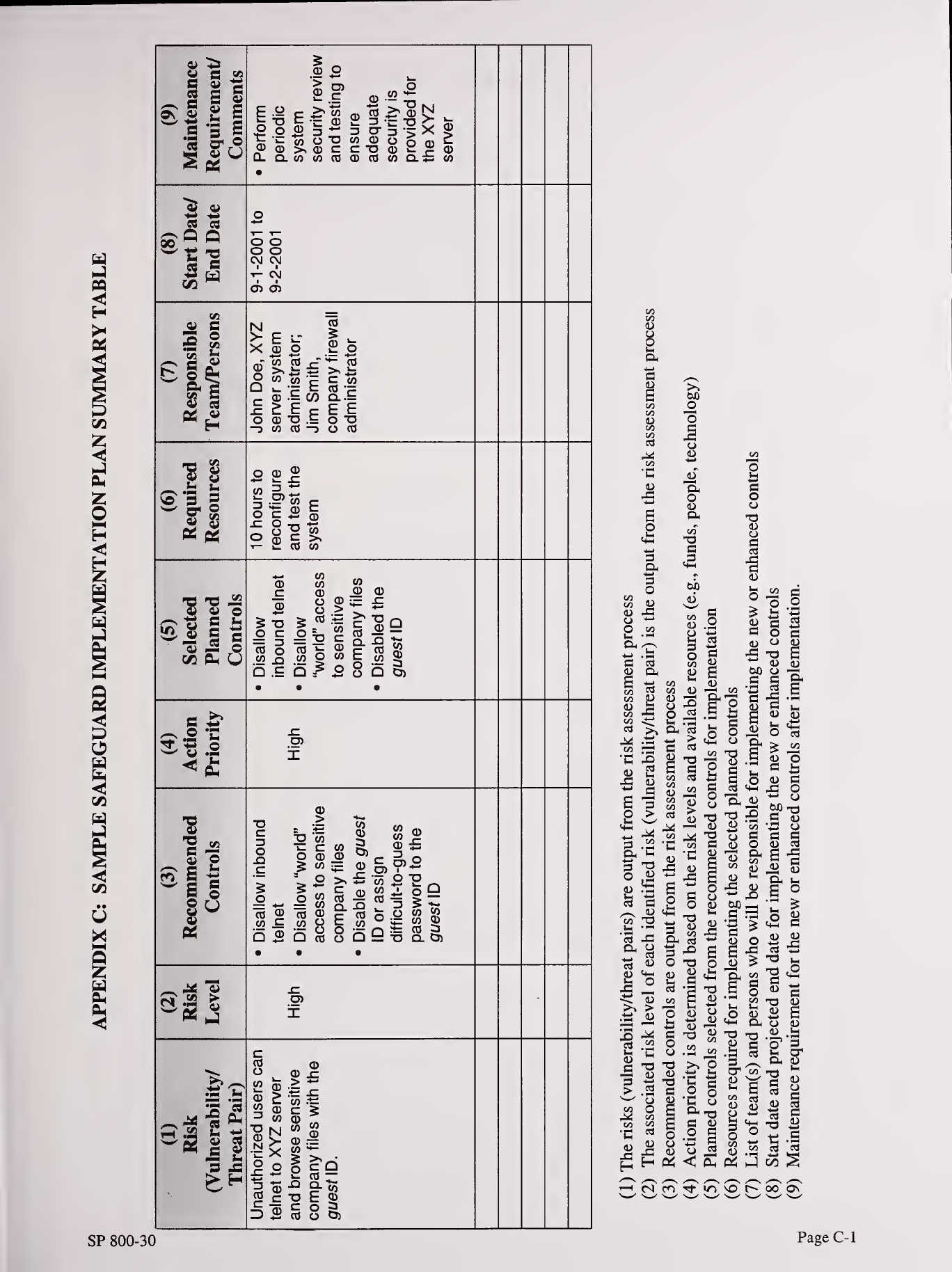

This guide also contains six appendixes. Appendix A provides sample interview questions.

Appendix B provides a sample outline for use in documenting risk assessment results. Appendix

C contains a sample table for the safeguard implementation plan. Appendix D provides a list of

the acronyms used in this document. Appendix E contains a glossary of terms used frequently in

this guide. Appendix F lists references.


2. RISK MANAGEMENT OVERVIEW

This guide describes the risk management methodology, how it fits into each phase of the SDLC,

and how the risk management process is tied to the process of system authorization (or

accreditation).

2.1 IMPORTANCE OF RISK MANAGEMENT

Risk management encompasses three processes: risk assessment, risk mitigation, and evaluation

and assessment. Section 3 of this guide describes the risk assessment process, which includes

identification and evaluation of risks and risk impacts, and recommendation of risk-reducing

measures. Section 4 describes risk mitigation, which refers to prioritizing, implementing, and

maintaining the appropriate risk-reducing measures recommended from the risk assessment

process. Section 5 discusses the continual evaluation process and keys for implementing a

successful risk management program. The DAA or system authorizing official is responsible for

determining whether the remaining risk is at an acceptable level or whether additional security

controls should be implemented to further reduce or eliminate the residual risk before

authorizing (or accrediting) the IT system for operation.

Risk management is the process that allows IT managers to balance the operational and

economic costs of protective measures and achieve gains in mission capability by protecting the

IT systems and data that support their organizations' missions. This process is not unique to the

IT environment; indeed it pervades decision-making in all areas of our daily lives. Take the case

of home security, for example. Many people decide to have home security systems installed and

pay a monthly fee to a service provider to have these systems monitored for the better protection

of their property. Presumably, the homeowners have weighed the cost of system installation and

monitoring against the value of their household goods and their family's safety, a fundamental

"mission" need.

The head of an organizational unit must ensure that the organization has the capabilities needed

to accomplish its mission. These mission owners must determine the security capabilities that

their IT systems must have to provide the desired level of mission support in the face of real-world

threats. Most organizations have tight budgets for IT security; therefore, IT security

spending must be reviewed as thoroughly as other management decisions. A well-structured risk

management methodology, when used effectively, can help management identify appropriate

controls for providing the mission-essential security capabilities.

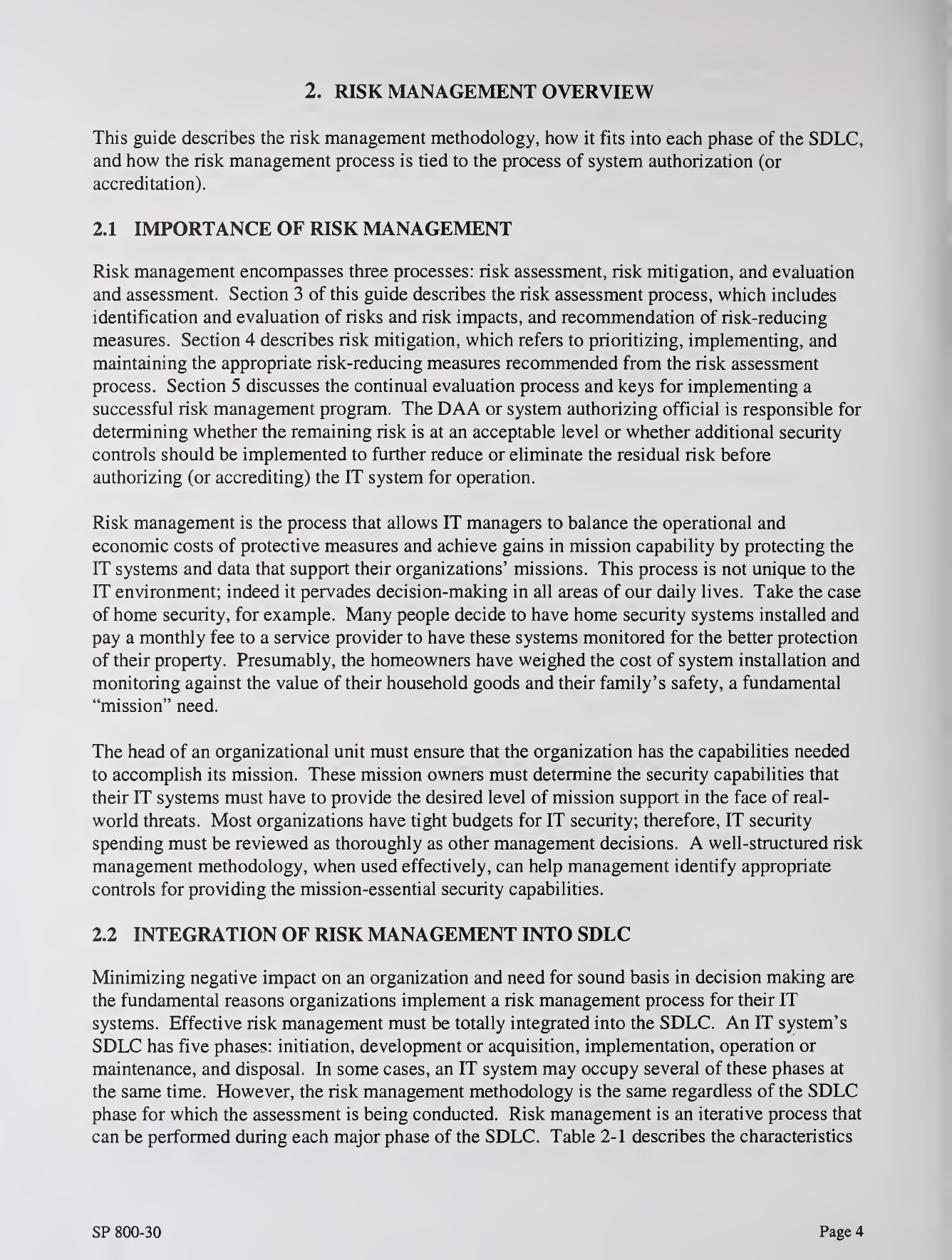

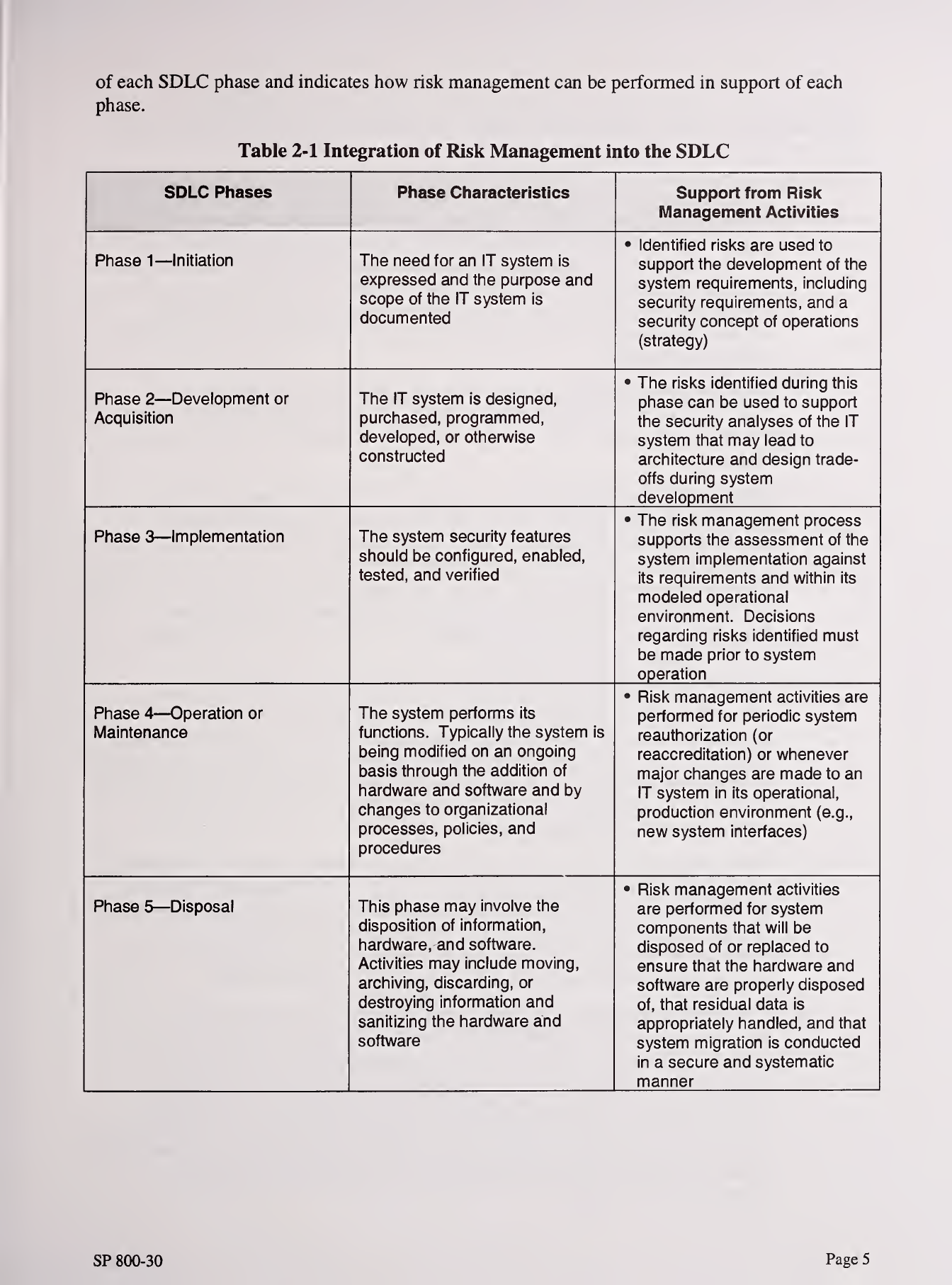

2.2 INTEGRATION OF RISK MANAGEMENT INTO SDLC

Minimizing negative impact on the organization and the need for a sound basis in decision making are

the fundamental reasons organizations implement a risk management process for their IT

systems. Effective risk management must be totally integrated into the SDLC. An IT system's

SDLC has five phases: initiation, development or acquisition, implementation, operation or

maintenance, and disposal. In some cases, an IT system may occupy several of these phases at

the same time. However, the risk management methodology is the same regardless of the SDLC

phase for which the assessment is being conducted. Risk management is an iterative process that

can be performed during each major phase of the SDLC. Table 2-1 describes the characteristics

of each SDLC phase and indicates how risk management can be performed in support of each

phase.

Table 2-1 Integration of Risk Management into the SDLC

| SDLC Phases | Phase Characteristics | Support from Risk Management Activities |
|---|---|---|
| Phase 1: Initiation | The need for an IT system is expressed and the purpose and scope of the IT system is documented | Identified risks are used to support the development of the system requirements, including security requirements, and a security concept of operations (strategy) |
| Phase 2: Development or Acquisition | The IT system is designed, purchased, programmed, developed, or otherwise constructed | The risks identified during this phase can be used to support the security analyses of the IT system that may lead to architecture and design trade-offs during system development |
| Phase 3: Implementation | The system security features should be configured, enabled, tested, and verified | The risk management process supports the assessment of the system implementation against its requirements and within its modeled operational environment. Decisions regarding risks identified must be made prior to system operation |
| Phase 4: Operation or Maintenance | The system performs its functions. Typically the system is being modified on an ongoing basis through the addition of hardware and software and by changes to organizational processes, policies, and procedures | Risk management activities are performed for periodic system reauthorization (or reaccreditation) or whenever major changes are made to an IT system in its operational, production environment (e.g., new system interfaces) |
| Phase 5: Disposal | This phase may involve the disposition of information, hardware, and software. Activities may include moving, archiving, discarding, or destroying information and sanitizing the hardware and software | Risk management activities are performed for system components that will be disposed of or replaced to ensure that the hardware and software are properly disposed of, that residual data is appropriately handled, and that system migration is conducted in a secure and systematic manner |
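One way to make Table 2-1 usable in tooling is a simple lookup from SDLC phase to the kind of risk management support expected in that phase. The sketch below is illustrative only: the `SdlcPhase` enum, the `RISK_SUPPORT` mapping, and the shortened summaries are hypothetical names and paraphrases, not part of this guide.

```python
from enum import Enum


class SdlcPhase(Enum):
    """The five SDLC phases named in Section 2.2 (identifiers are hypothetical)."""
    INITIATION = 1
    DEVELOPMENT_OR_ACQUISITION = 2
    IMPLEMENTATION = 3
    OPERATION_OR_MAINTENANCE = 4
    DISPOSAL = 5


# Shortened paraphrases of the third column of Table 2-1.
RISK_SUPPORT = {
    SdlcPhase.INITIATION: "Use identified risks to shape system and security requirements.",
    SdlcPhase.DEVELOPMENT_OR_ACQUISITION: "Use identified risks in security analyses and design trade-offs.",
    SdlcPhase.IMPLEMENTATION: "Assess the implementation against requirements before operation.",
    SdlcPhase.OPERATION_OR_MAINTENANCE: "Reassess for reauthorization or after major changes.",
    SdlcPhase.DISPOSAL: "Ensure proper disposal, residual data handling, and secure migration.",
}


def risk_support(phase: SdlcPhase) -> str:
    """Return the summary of risk management support for one phase."""
    return RISK_SUPPORT[phase]


if __name__ == "__main__":
    for phase in SdlcPhase:
        print(f"Phase {phase.value}: {risk_support(phase)}")
```

Because the methodology is the same in every phase, only the description changes per phase; the lookup carries no phase-specific logic.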


2.3 KEY ROLES

Risk management is a management responsibility. This section describes the key roles of the

personnel who should support and participate in the risk management process.

•Senior Management. Senior management, under the standard of due care and

ultimate responsibility for mission accomplishment, must ensure that the necessary

resources are effectively applied to develop the capabilities needed to accomplish the

mission. They must also assess and incorporate results of the risk assessment activity

into the decision making process. An effective risk management program that

assesses and mitigates IT-related mission risks requires the support and involvement

of senior management.

•Chief Information Officer (CIO). The CIO is responsible for the agency's IT

planning, budgeting, and performance including its information security components.

Decisions made in these areas should be based on an effective risk management

program.

•System and Information Owners. The system and information owners are

responsible for ensuring that proper controls are in place to address integrity,

confidentiality, and availability of the IT systems and data they own. Typically the

system and information owners are responsible for changes to their IT systems. Thus,

they usually have to approve and sign off on changes to their IT systems (e.g., system

enhancement, major changes to the software and hardware). The system and

information owners must therefore understand their role in the risk management

process and fully support this process.

•Business and Functional Managers. The managers responsible for business

operations and the IT procurement process must take an active role in the risk

management process. These managers are the individuals with the authority and

responsibility for making the trade-off decisions essential to mission accomplishment.

Their involvement in the risk management process enables the achievement of proper

security for the IT systems, which, if managed properly, will provide mission

effectiveness with a minimal expenditure of resources.

•ISSO. IT security program managers and computer security officers are responsible

for their organizations' security programs, including risk management. Therefore,

they play a leading role in introducing an appropriate, structured methodology to help

identify, evaluate, and minimize risks to the IT systems that support their

organizations' missions. ISSOs also act as major consultants in support of senior

management to ensure that this activity takes place on an ongoing basis.

•IT Security Practitioners. IT security practitioners (e.g., network, system,

application, and database administrators; computer specialists; security analysts;

security consultants) are responsible for proper implementation of security

requirements in their IT systems. As changes occur in the existing IT system

environment (e.g., expansion in network connectivity, changes to the existing

infrastructure and organizational policies, introduction of new technologies), the IT

security practitioners must support or use the risk management process to identify and

assess new potential risks and implement new security controls as needed to

safeguard their IT systems.


•Security Awareness Trainers (Security/Subject Matter Professionals). The

organization's personnel are the users of the IT systems. Use of the IT systems and

data according to an organization's policies, guidelines, and rules of behavior is

critical to mitigating risk and protecting the organization's IT resources. To minimize

risk to the IT systems, it is essential that system and application users be provided

with security awareness training. Therefore, the IT security trainers or

security/subject matter professionals must understand the risk management process so

that they can develop appropriate training materials and incorporate risk assessment

into training programs to educate the end users.


3. RISK ASSESSMENT

Risk assessment is the first process in the risk management methodology. Organizations use risk

assessment to determine the extent of the potential threat and the risk associated with an IT

system throughout its SDLC. The output of this process helps to identify appropriate controls for

reducing or eliminating risk during the risk mitigation process, as discussed in Section 4.

Risk is a function of the likelihood of a given threat-source's exercising a particular potential

vulnerability, and the resulting impact of that adverse event on the organization.
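As a minimal sketch of this relationship, the Python below scores risk as the product of a likelihood weight and an impact weight. The three-level scales, the numeric weights, and the level cut-offs are illustrative assumptions rather than values defined in this section, and the `risk_score` and `risk_level` names are hypothetical.

```python
# Illustrative only: the weights and cut-offs below are assumed placeholders.
LIKELIHOOD_WEIGHT = {"high": 1.0, "medium": 0.5, "low": 0.1}
IMPACT_WEIGHT = {"high": 100, "medium": 50, "low": 10}


def risk_score(likelihood: str, impact: str) -> float:
    """Combine a likelihood rating and an impact rating into one score."""
    return LIKELIHOOD_WEIGHT[likelihood] * IMPACT_WEIGHT[impact]


def risk_level(score: float) -> str:
    """Map a numeric score onto a coarse level (assumed cut-offs)."""
    if score > 50:
        return "high"
    if score > 10:
        return "medium"
    return "low"


if __name__ == "__main__":
    for likelihood in LIKELIHOOD_WEIGHT:
        for impact in IMPACT_WEIGHT:
            score = risk_score(likelihood, impact)
            print(f"{likelihood:>6} x {impact:<6} -> {score:6.1f} ({risk_level(score)})")
```

A product is only one possible way to combine the two ratings; an organization may prefer a lookup matrix or a different scale.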

To determine the likelihood of a future adverse event, threats to an IT system must be analyzed

in conjunction with the potential vulnerabilities and the controls in place for the IT system.

Impact refers to the magnitude of harm that could be caused by a threat's exercise of a

vulnerability. The level of impact is governed by the potential mission impacts and in turn

produces a relative value for the IT assets and resources affected (e.g., the criticality and

sensitivity of the IT system components and data). The risk assessment methodology

encompasses nine primary steps, which are described in Sections 3.1 through 3.9:

•Step 1: System Characterization (Section 3.1)

•Step 2: Threat Identification (Section 3.2)

•Step 3: Vulnerability Identification (Section 3.3)

•Step 4: Control Analysis (Section 3.4)

•Step 5: Likelihood Determination (Section 3.5)

•Step 6: Impact Analysis (Section 3.6)

•Step 7: Risk Determination (Section 3.7)

•Step 8: Control Recommendations (Section 3.8)

•Step 9: Results Documentation (Section 3.9).

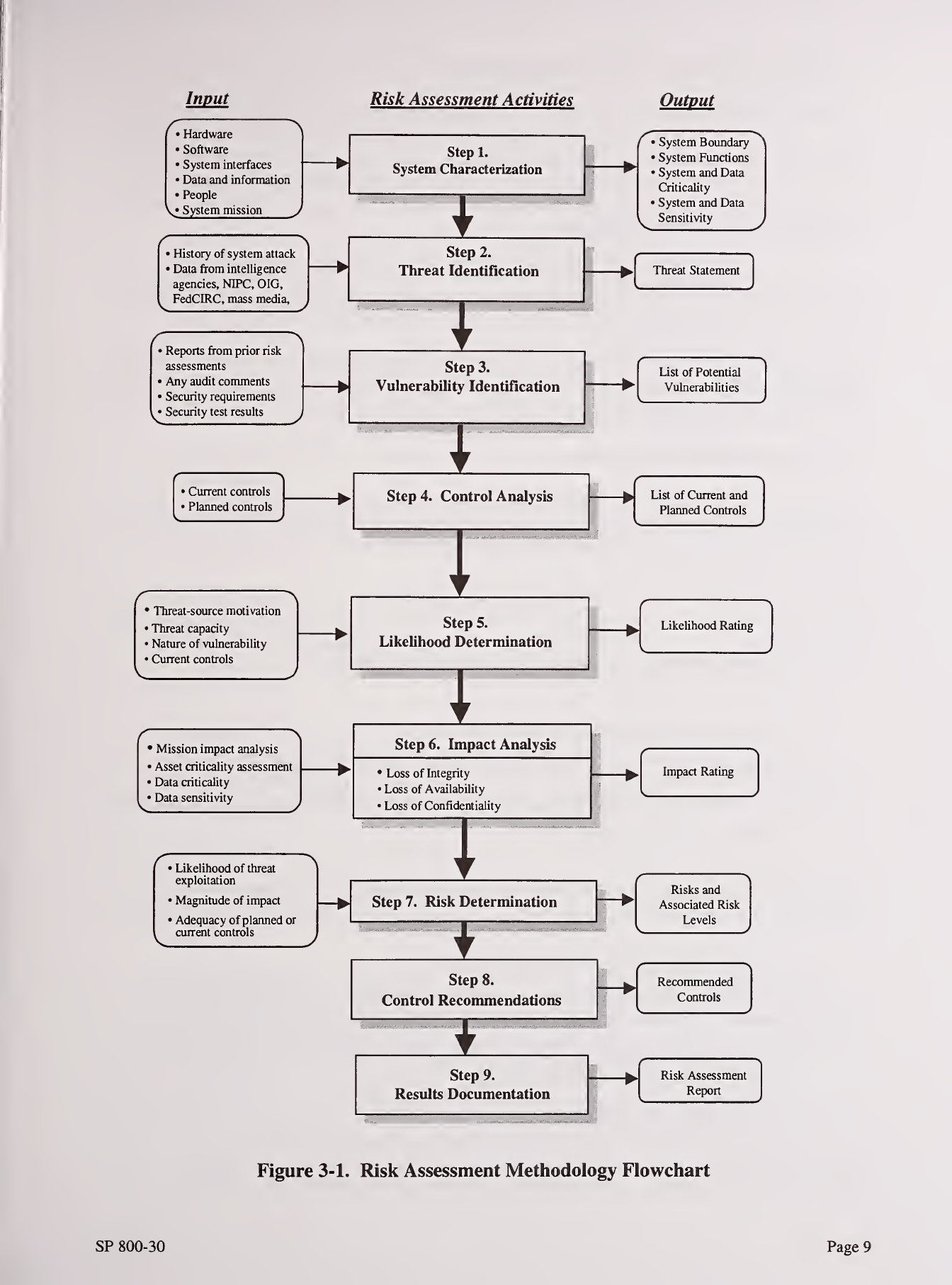

Steps 2, 3, 4, and 6 can be conducted in parallel after Step 1 has been completed. Figure 3-1

depicts these steps and the inputs to and outputs from each step.
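The ordering constraint above can be sketched as a small dependency graph. Only the rule that Steps 2, 3, 4, and 6 follow Step 1 is stated explicitly; the remaining edges are inferred from the definitions of likelihood and risk given earlier in this section and from the step numbering, so treat them as assumptions. The `DEPENDS_ON` table and `parallel_batches` function are hypothetical names; `graphlib` is in the Python standard library from version 3.9.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Map each step to the steps it waits on. Only "2, 3, 4, and 6 follow 1"
# is stated outright in the text; the other edges are inferred assumptions.
DEPENDS_ON = {
    1: set(),
    2: {1}, 3: {1}, 4: {1}, 6: {1},
    5: {2, 3, 4},  # likelihood draws on threats, vulnerabilities, controls
    7: {5, 6},     # risk combines likelihood and impact
    8: {7},        # assumed from step order
    9: {8},        # assumed from step order
}


def parallel_batches(depends_on):
    """Return batches of steps; steps within one batch can run concurrently."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    for number, batch in enumerate(parallel_batches(DEPENDS_ON), start=1):
        print(f"batch {number}: steps {batch}")
```

Under these assumed edges, the second batch contains Steps 2, 3, 4, and 6, matching the statement that they can proceed in parallel once Step 1 is complete.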


| Input | Risk Assessment Activities | Output |
|---|---|---|
| Hardware; software; system interfaces; data and information; people; system mission | Step 1. System Characterization | System boundary; system functions; system and data criticality; system and data sensitivity |
| History of system attack; data from intelligence agencies, NIPC, OIG, FedCIRC, mass media | Step 2. Threat Identification | Threat statement |
| Reports from prior risk assessments; any audit comments; security requirements; security test results | Step 3. Vulnerability Identification | List of potential vulnerabilities |
| Current controls; planned controls | Step 4. Control Analysis | List of current and planned controls |
| Threat-source motivation; threat capacity; nature of vulnerability; current controls | Step 5. Likelihood Determination | Likelihood rating |
| Mission impact analysis; asset criticality assessment; data criticality; data sensitivity | Step 6. Impact Analysis (loss of integrity, loss of availability, loss of confidentiality) | Impact rating |
| Likelihood of threat exploitation; magnitude of impact; adequacy of planned or current controls | Step 7. Risk Determination | Risks and associated risk levels |
| | Step 8. Control Recommendations | Recommended controls |
| | Step 9. Results Documentation | Risk assessment report |

Figure 3-1. Risk Assessment Methodology Flowchart


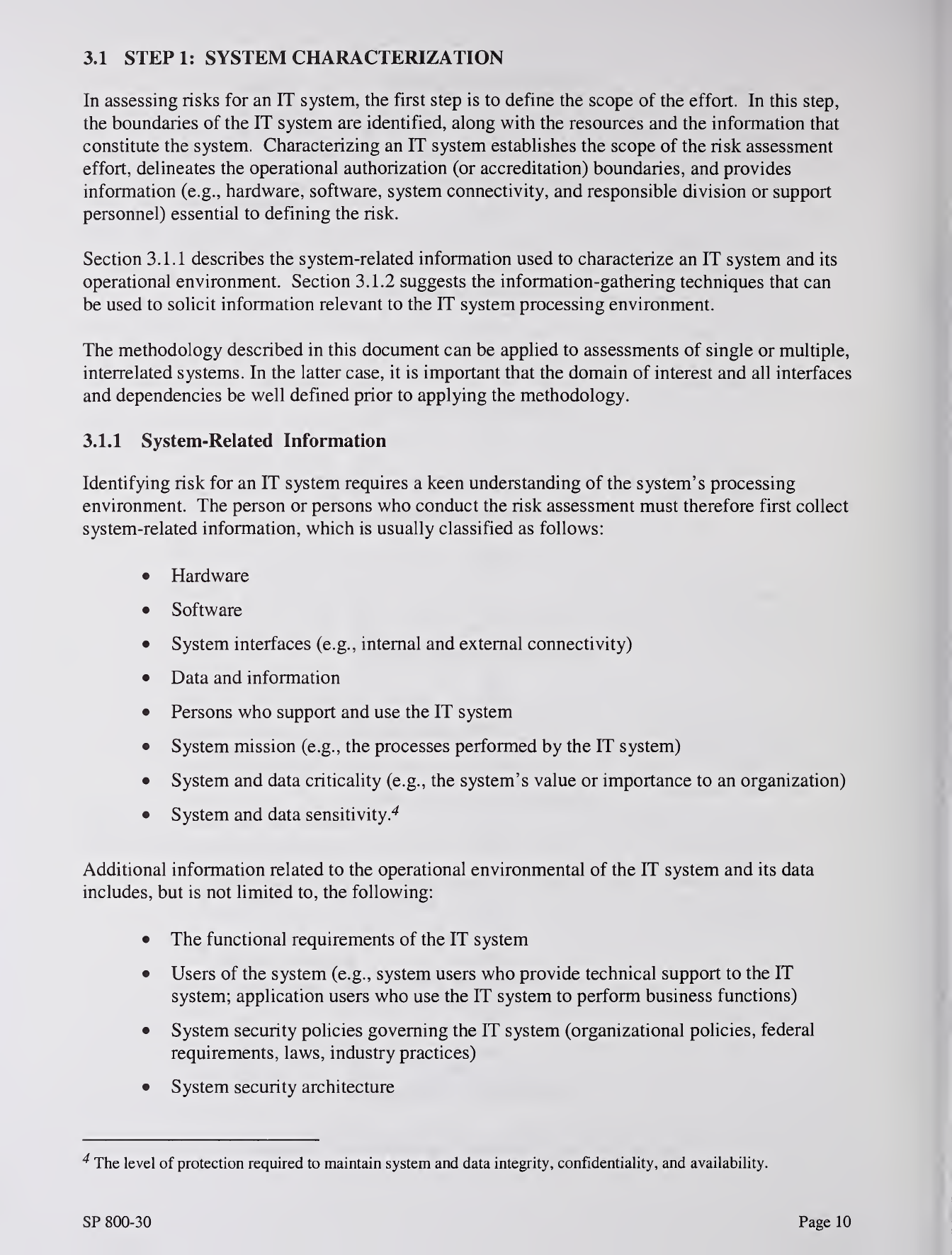

3.1 STEP 1: SYSTEM CHARACTERIZATION

In assessing risks for an IT system, the first step is to define the scope of the effort. In this step,

the boundaries of the IT system are identified, along with the resources and the information that

constitute the system. Characterizing an IT system establishes the scope of the risk assessment

effort, delineates the operational authorization (or accreditation) boundaries, and provides

information (e.g., hardware, software, system connectivity, and responsible division or support

personnel) essential to defining the risk.

Section 3.1.1 describes the system-related information used to characterize an IT system and its

operational environment. Section 3.1.2 suggests the information-gathering techniques that can

be used to solicit information relevant to the IT system processing environment.

The methodology described in this document can be applied to assessments of single or multiple,

interrelated systems. In the latter case, it is important that the domain of interest and all interfaces

and dependencies be well defined prior to applying the methodology.

3.1.1 System-Related Information

Identifying risk for an IT system requires a keen understanding of the system's processing

environment. The person or persons who conduct the risk assessment must therefore first collect

system-related information, which is usually classified as follows:

•Hardware

•Software

•System interfaces (e.g., internal and external connectivity)

•Data and information

•Persons who support and use the IT system

•System mission (e.g., the processes performed by the IT system)

•System and data criticality (e.g., the system's value or importance to an organization)

•System and data sensitivity.^

^The level of protection required to maintain system and data integrity, confidentiality, and availability.

Additional information related to the operational environment of the IT system and its data

includes, but is not limited to, the following:

•The functional requirements of the IT system

•Users of the system (e.g., system users who provide technical support to the IT

system; application users who use the IT system to perform business functions)

•System security policies governing the IT system (organizational policies, federal

requirements, laws, industry practices)

•System security architecture

•Current network topology (e.g., network diagram)

•Information storage protection that safeguards system and data availability, integrity,

and confidentiality

•Flow of information pertaining to the IT system (e.g., system interfaces, system input

and output flowchart)

•Technical controls used for the IT system (e.g., built-in or add-on security product

that supports identification and authentication, discretionary or mandatory access

control, audit, residual information protection, encryption methods)

•Management controls used for the IT system (e.g., rules of behavior, security

planning)

•Operational controls used for the IT system (e.g., personnel security, backup,

contingency, and resumption and recovery operations; system maintenance; off-site

storage; user account establishment and deletion procedures; controls for segregation

of user functions, such as privileged user access versus standard user access)

•Physical security environment of the IT system (e.g., facility security, data center

policies)

•Environmental security implemented for the IT system processing environment (e.g.,

controls for humidity, water, power, pollution, temperature, and chemicals).

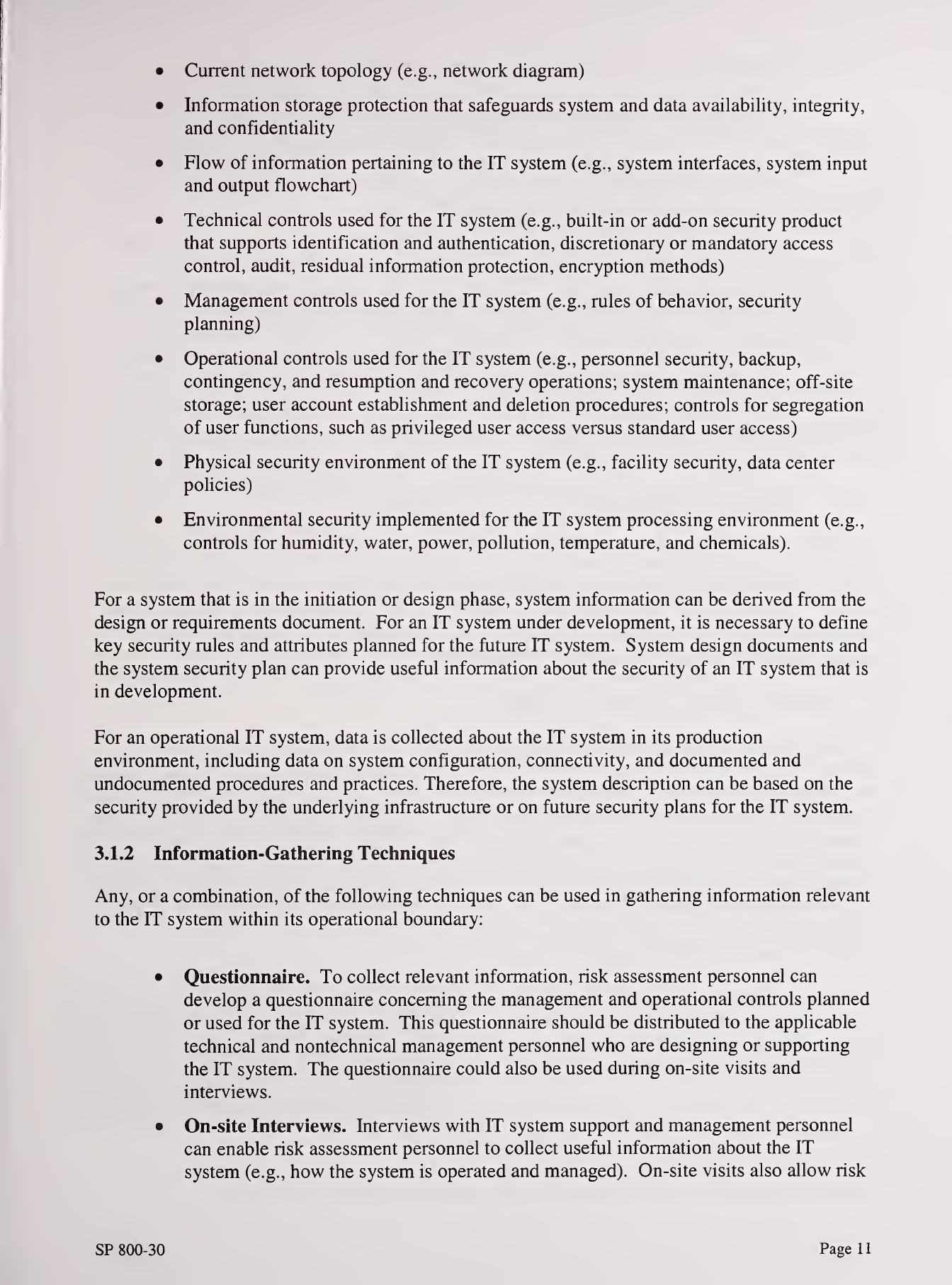

For a system that is in the initiation or design phase, system information can be derived from the

design or requirements document. For an IT system under development, it is necessary to define

key security rules and attributes planned for the future IT system. System design documents and

the system security plan can provide useful information about the security of an IT system that is

in development.

For an operational IT system, data is collected about the IT system in its production

environment, including data on system configuration, connectivity, and documented and

undocumented procedures and practices. Therefore, the system description can be based on the

security provided by the underlying infrastructure or on future security plans for the IT system.
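
The categories of system-related information listed in this section can be captured in a simple record while the data is being collected. The following is a minimal sketch, not part of the methodology itself: the `SystemProfile` class, its field names, and the `missing_categories` helper are assumptions made for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SystemProfile:
    """Hypothetical record of the Section 3.1.1 information categories."""
    hardware: list[str] = field(default_factory=list)
    software: list[str] = field(default_factory=list)
    system_interfaces: list[str] = field(default_factory=list)
    data_and_information: list[str] = field(default_factory=list)
    personnel: list[str] = field(default_factory=list)
    system_mission: str = ""
    criticality: str = ""   # e.g., "high", "medium", or "low"
    sensitivity: str = ""


def missing_categories(profile: SystemProfile) -> list[str]:
    """Return the names of categories for which nothing has been collected yet."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


# Example: only two categories have been filled in so far.
profile = SystemProfile(hardware=["web server"], system_mission="payroll processing")
still_needed = missing_categories(profile)
```

A list of the categories still missing gives the assessment team a quick view of what remains to be gathered before the system boundary can be considered characterized.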

3.1.2 Information-Gathering Techniques

Any, or a combination, of the following techniques can be used in gathering information relevant

to the IT system within its operational boundary:

•Questionnaire. To collect relevant information, risk assessment personnel can

develop a questionnaire concerning the management and operational controls planned

or used for the IT system. This questionnaire should be distributed to the applicable

technical and nontechnical management personnel who are designing or supporting

the IT system. The questionnaire could also be used during on-site visits and

interviews.

•On-site Interviews. Interviews with IT system support and management personnel

can enable risk assessment personnel to collect useful information about the IT

system (e.g., how the system is operated and managed). On-site visits also allow risk

assessment personnel to observe and gather information about the physical,

environmental, and operational security of the IT system. Appendix A contains

sample interview questions asked during interviews with site personnel to achieve a

better understanding of the operational characteristics of an organization. For

systems still in the design phase, on-site visits would be face-to-face data-gathering

exercises and could provide the opportunity to evaluate the physical environment in

which the IT system will operate.

•Document Review. Policy documents (e.g., legislative documentation, directives),

system documentation (e.g., system user guide, system administrative manual,

system design and requirement document, acquisition document), and security-related

documentation (e.g., previous audit report, risk assessment report, system test results,

system security plan^, security policies) can provide good information about the

security controls used by and planned for the IT system. An organization's mission

impact analysis or asset criticality assessment provides information regarding system

and data criticality and sensitivity.

•Use of Automated Scanning Tool. Proactive technical methods can be used to

collect system information efficiently. For example, a network mapping tool can

identify the services that run on a large group of hosts and provide a quick way of

building individual profiles of the target IT system(s).
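
As an illustration of how output from a network mapping tool might be turned into individual host profiles, the sketch below groups service observations by host. It assumes the tool's results are already available as `(host, port, service)` tuples; that format, the `build_host_profiles` function, and the sample addresses are hypothetical and do not correspond to any particular product.

```python
from collections import defaultdict


def build_host_profiles(observations):
    """Group (host, port, service) observations into one sorted service list per host."""
    profiles = defaultdict(set)
    for host, port, service in observations:
        profiles[host].add((port, service))
    return {host: sorted(services) for host, services in profiles.items()}


# Made-up observations standing in for the output of a network mapping tool.
observations = [
    ("10.0.0.5", 23, "telnet"),
    ("10.0.0.5", 21, "ftp"),
    ("10.0.0.9", 25, "smtp"),
    ("10.0.0.5", 21, "ftp"),  # a duplicate sighting is collapsed
]
profiles = build_host_profiles(observations)
```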

Information gathering can be conducted throughout the risk assessment process, from Step 1

(System Characterization) through Step 9 (Results Documentation).

Output from Step 1: Characterization of the IT system assessed, a good picture of the IT system environment, and delineation of system boundary

3.2 STEP 2: THREAT IDENTIFICATION

A threat is the potential for a particular threat-source to successfully exercise a particular vulnerability. A vulnerability is a weakness that can be accidentally triggered or intentionally exploited. A threat-source does not present a risk when there is no vulnerability that can be exercised. In determining the likelihood of a threat (Section 3.5), one must consider threat-sources, potential vulnerabilities (Section 3.3), and existing controls (Section 3.4).

Threat: The potential for a threat-source to exercise (accidentally trigger or intentionally exploit) a specific vulnerability.

3.2.1 Threat-Source Identification

The goal of this step is to identify the potential threat-sources and compile a threat statement listing potential threat-sources that are applicable to the IT system being evaluated.

Threat-Source: Either (1) intent and method targeted at the intentional exploitation of a vulnerability or (2) a situation and method that may accidentally trigger a vulnerability.

^During the initial phase, a risk assessment could be used to develop the initial system security plan.

SP 800-30 Page 12

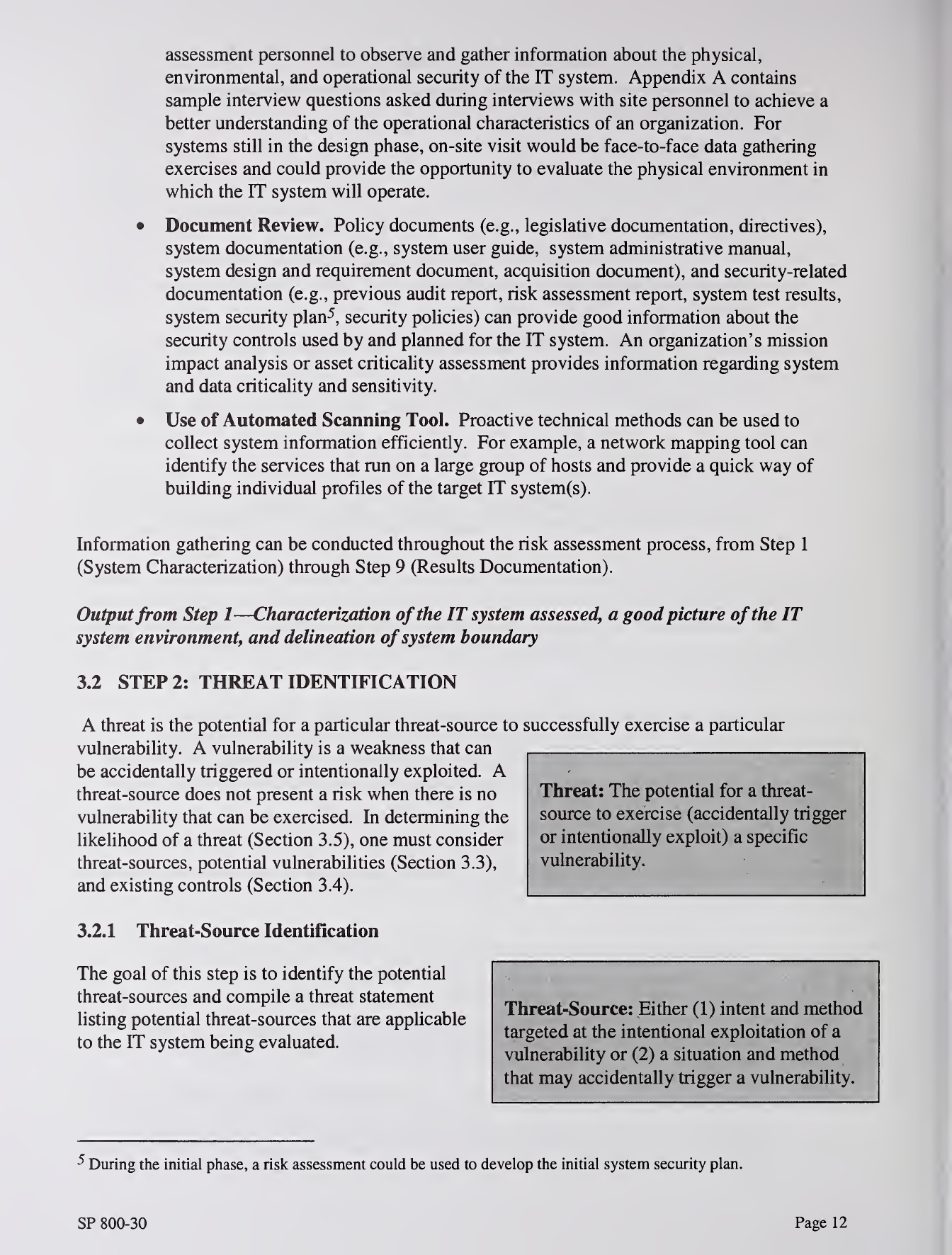

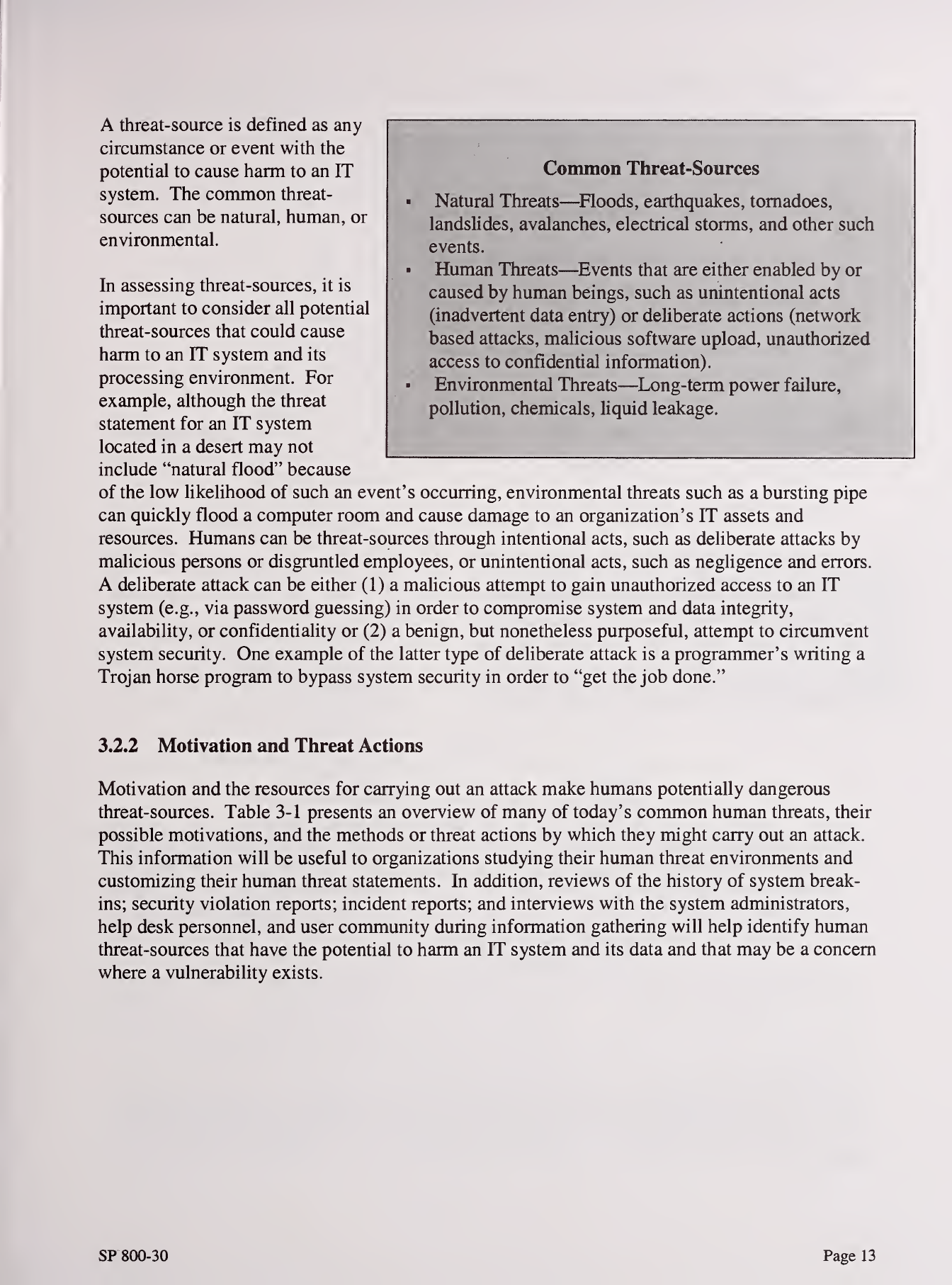

A threat-source is defined as any circumstance or event with the potential to cause harm to an IT system. The common threat-sources can be natural, human, or environmental.

In assessing threat-sources, it is important to consider all potential threat-sources that could cause harm to an IT system and its processing environment. For example, although the threat statement for an IT system located in a desert may not include "natural flood" because of the low likelihood of such an event's occurring, environmental threats such as a bursting pipe can quickly flood a computer room and cause damage to an organization's IT assets and resources. Humans can be threat-sources through intentional acts, such as deliberate attacks by malicious persons or disgruntled employees, or unintentional acts, such as negligence and errors. A deliberate attack can be either (1) a malicious attempt to gain unauthorized access to an IT system (e.g., via password guessing) in order to compromise system and data integrity, availability, or confidentiality or (2) a benign, but nonetheless purposeful, attempt to circumvent system security. One example of the latter type of deliberate attack is a programmer's writing a Trojan horse program to bypass system security in order to "get the job done."

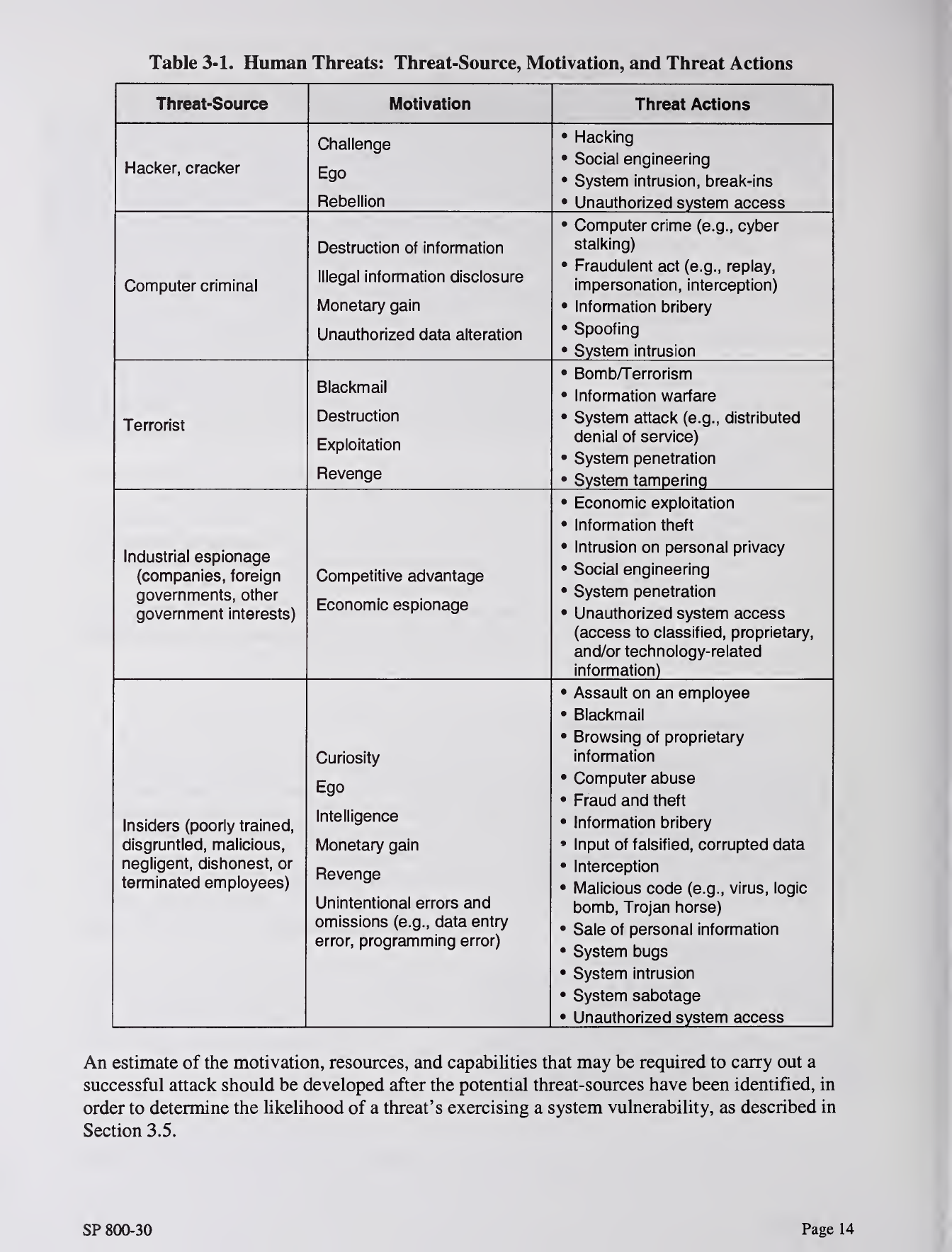

3.2.2 Motivation and Threat Actions

Motivation and the resources for carrying out an attack make humans potentially dangerous

threat-sources. Table 3-1 presents an overview of many of today's common human threats, their

possible motivations, and the methods or threat actions by which they might carry out an attack.

This information will be useful to organizations studying their human threat environments and

customizing their human threat statements. In addition, reviews of the history of system break-

ins; security violation reports; incident reports; and interviews with the system administrators,

help desk personnel, and user community during information gathering will help identify human

threat-sources that have the potential to harm an IT system and its data and that may be a concern

where a vulnerability exists.

Common Threat-Sources

•Natural Threats: Floods, earthquakes, tornadoes, landslides, avalanches, electrical storms, and other such events.

•Human Threats: Events that are either enabled by or caused by human beings, such as unintentional acts (inadvertent data entry) or deliberate actions (network-based attacks, malicious software upload, unauthorized access to confidential information).

•Environmental Threats: Long-term power failure, pollution, chemicals, liquid leakage.


Table 3-1. Human Threats: Threat-Source, Motivation, and Threat Actions

Threat-Source: Hacker, cracker
Motivation: Challenge; ego; rebellion
Threat Actions:
•Hacking
•Social engineering
•System intrusion, break-ins
•Unauthorized system access

Threat-Source: Computer criminal
Motivation: Destruction of information; illegal information disclosure; monetary gain; unauthorized data alteration
Threat Actions:
•Computer crime (e.g., cyber stalking)
•Fraudulent act (e.g., replay, impersonation, interception)
•Information bribery
•Spoofing
•System intrusion

Threat-Source: Terrorist
Motivation: Blackmail; destruction; exploitation; revenge
Threat Actions:
•Bomb/Terrorism
•Information warfare
•System attack (e.g., distributed denial of service)
•System penetration
•System tampering

Threat-Source: Industrial espionage (companies, foreign governments, other government interests)
Motivation: Competitive advantage; economic espionage
Threat Actions:
•Economic exploitation
•Information theft
•Intrusion on personal privacy
•Social engineering
•System penetration
•Unauthorized system access (access to classified, proprietary, and/or technology-related information)

Threat-Source: Insiders (poorly trained, disgruntled, malicious, negligent, dishonest, or terminated employees)
Motivation: Curiosity; ego; intelligence; monetary gain; revenge; unintentional errors and omissions (e.g., data entry error, programming error)
Threat Actions:
•Assault on an employee
•Blackmail
•Browsing of proprietary information
•Computer abuse
•Fraud and theft
•Information bribery
•Input of falsified, corrupted data
•Interception
•Malicious code (e.g., virus, logic bomb, Trojan horse)
•Sale of personal information
•System bugs
•System intrusion
•System sabotage
•Unauthorized system access

An estimate of the motivation, resources, and capabilities that may be required to carry out a

successful attack should be developed after the potential threat-sources have been identified, in

order to determine the likelihood of a threat's exercising a system vulnerability, as described in

Section 3.5.


The threat statement, or the list of potential threat-sources, should be tailored to the individual

organization and its processing environment (e.g., end-user computing habits). In general,

information on natural threats (e.g., floods, earthquakes, storms) should be readily available.

Known threats have been identified by many government and private sector organizations.

Intrusion detection tools also are becoming more prevalent, and government and industry

organizations continually collect data on security events, thereby improving the ability to

realistically assess threats. Sources of information include, but are not limited to, the following:

•Intelligence agencies (for example, the Federal Bureau of Investigation's National

Infrastructure Protection Center)

•Federal Computer Incident Response Center (FedCIRC)

•Mass media, particularly Web-based resources such as SecurityFocus.com,

SecurityWatch.com, SecurityPortal.com, and SANS.org.

Output from Step 2: A threat statement containing a list of threat-sources that could exploit system vulnerabilities
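
One way to picture a threat statement is as a tailored list of threat-source records. The sketch below is illustrative only: the `ThreatKind` and `ThreatSource` types and the `threat_statement` function are hypothetical names, and the decision about which sources apply is supplied by the assessor rather than computed.

```python
from dataclasses import dataclass
from enum import Enum


class ThreatKind(Enum):
    NATURAL = "natural"
    HUMAN = "human"
    ENVIRONMENTAL = "environmental"


@dataclass(frozen=True)
class ThreatSource:
    name: str
    kind: ThreatKind
    motivations: tuple[str, ...] = ()
    threat_actions: tuple[str, ...] = ()


def threat_statement(candidates, applicable_names):
    """Keep only the candidate threat-sources judged applicable to this system."""
    return [t for t in candidates if t.name in applicable_names]


candidates = [
    ThreatSource("Flood", ThreatKind.NATURAL),
    ThreatSource("Burst pipe", ThreatKind.ENVIRONMENTAL),
    ThreatSource("Hacker, cracker", ThreatKind.HUMAN,
                 ("Challenge", "Ego", "Rebellion"),
                 ("Hacking", "Social engineering")),
]
# A desert site might drop "Flood" but keep the burst pipe, as in Section 3.2.1.
statement = threat_statement(candidates, {"Burst pipe", "Hacker, cracker"})
```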

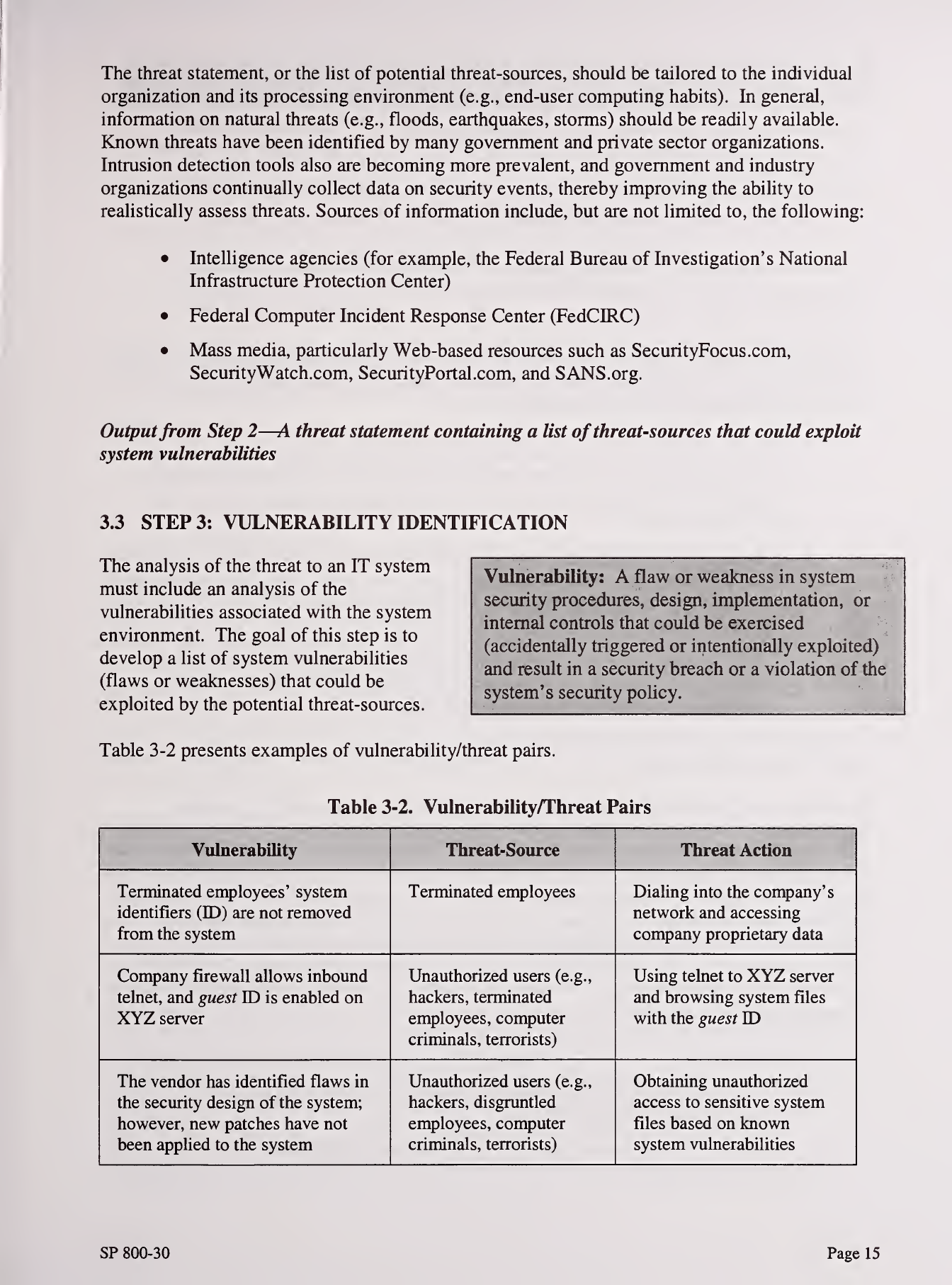

3.3 STEP 3: VULNERABILITY IDENTIFICATION

The analysis of the threat to an IT system must include an analysis of the vulnerabilities associated with the system environment. The goal of this step is to develop a list of system vulnerabilities (flaws or weaknesses) that could be exploited by the potential threat-sources.

Vulnerability: A flaw or weakness in system security procedures, design, implementation, or internal controls that could be exercised (accidentally triggered or intentionally exploited) and result in a security breach or a violation of the system's security policy.

Table 3-2 presents examples of vulnerability/threat pairs.

Table 3-2. Vulnerability/Threat Pairs

Vulnerability: Terminated employees' system identifiers (ID) are not removed from the system
Threat-Source: Terminated employees
Threat Action: Dialing into the company's network and accessing company proprietary data

Vulnerability: Company firewall allows inbound telnet, and guest ID is enabled on XYZ server
Threat-Source: Unauthorized users (e.g., hackers, terminated employees, computer criminals, terrorists)
Threat Action: Using telnet to XYZ server and browsing system files with the guest ID

Vulnerability: The vendor has identified flaws in the security design of the system; however, new patches have not been applied to the system
Threat-Source: Unauthorized users (e.g., hackers, disgruntled employees, computer criminals, terrorists)
Threat Action: Obtaining unauthorized access to sensitive system files based on known system vulnerabilities

Vulnerability: Data center uses water sprinklers to suppress fire; tarpaulins to protect hardware and equipment from water damage are not in place
Threat-Source: Fire, negligent persons
Threat Action: Water sprinklers being turned on in the data center
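
The pairing in Table 3-2 also reflects the earlier point that a threat-source presents no risk when there is no vulnerability it can exercise. The following sketch, with hypothetical names (`VulnerabilityThreatPair`, `sources_presenting_risk`) and abbreviated table entries, filters a list of threat-sources down to those that appear in at least one pair.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VulnerabilityThreatPair:
    vulnerability: str
    threat_source: str
    threat_action: str


pairs = [
    VulnerabilityThreatPair(
        "Terminated employees' system IDs are not removed",
        "Terminated employees",
        "Dialing into the network and accessing proprietary data"),
    VulnerabilityThreatPair(
        "Firewall allows inbound telnet; guest ID enabled on XYZ server",
        "Unauthorized users",
        "Using telnet to XYZ server and browsing files with the guest ID"),
]


def sources_presenting_risk(threat_sources, pairs):
    """Return the threat-sources that have at least one vulnerability to exercise."""
    paired = {p.threat_source for p in pairs}
    return [s for s in threat_sources if s in paired]


# "Flood" has no paired vulnerability in this example, so it is filtered out.
risky = sources_presenting_risk(
    ["Terminated employees", "Flood", "Unauthorized users"], pairs)
```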

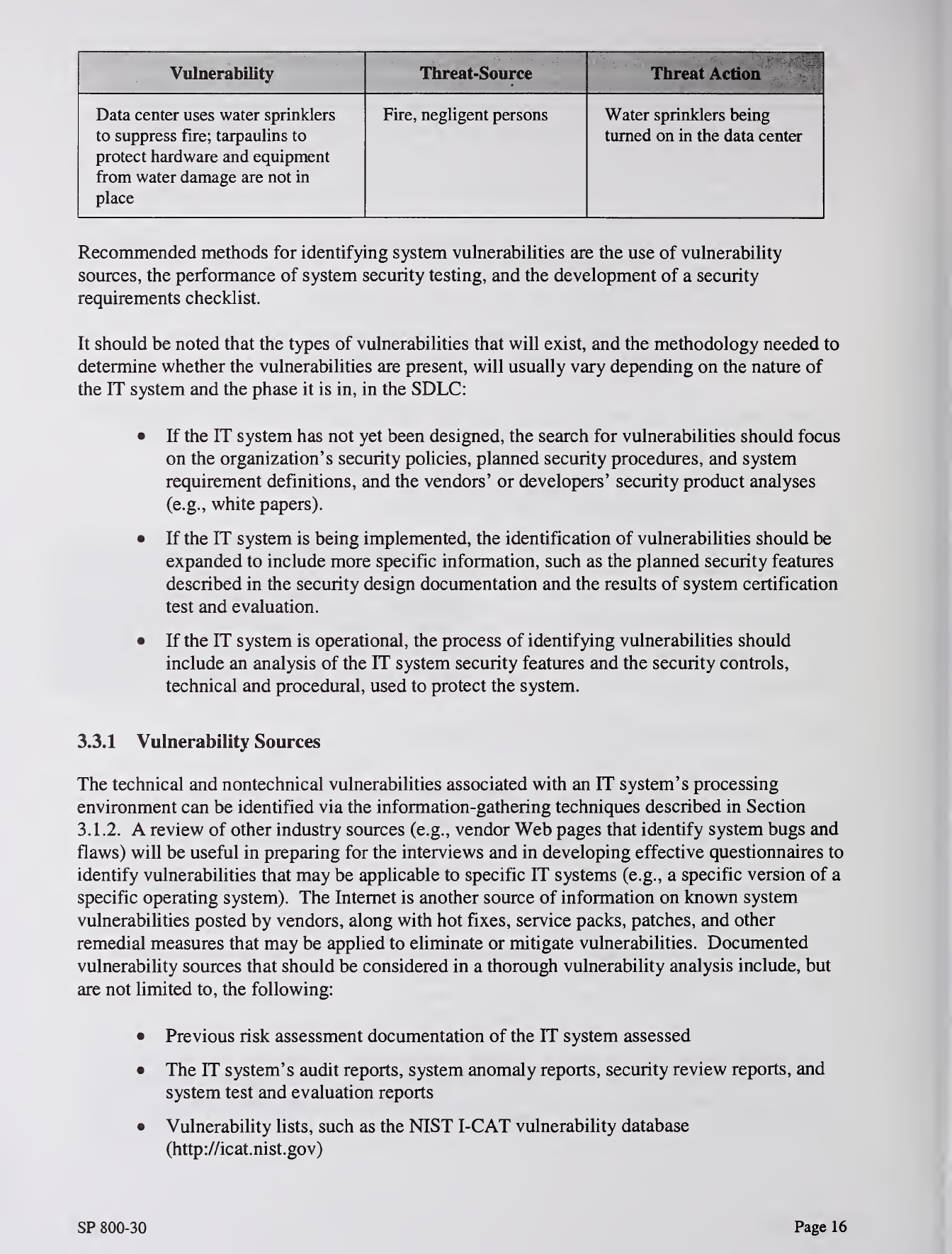

Recommended methods for identifying system vulnerabilities are the use of vulnerability

sources, the performance of system security testing, and the development of a security

requirements checklist.

It should be noted that the types of vulnerabilities that will exist, and the methodology needed to

determine whether the vulnerabilities are present, will usually vary depending on the nature of

the IT system and its current phase in the SDLC:

•If the IT system has not yet been designed, the search for vulnerabilities should focus

on the organization's security policies, planned security procedures, and system

requirement definitions, and the vendors' or developers' security product analyses

(e.g., white papers).

•If the IT system is being implemented, the identification of vulnerabilities should be

expanded to include more specific information, such as the planned security features

described in the security design documentation and the results of system certification

test and evaluation.

•If the IT system is operational, the process of identifying vulnerabilities should

include an analysis of the IT system security features and the security controls,

technical and procedural, used to protect the system.

3.3.1 Vulnerability Sources

The technical and nontechnical vulnerabilities associated with an IT system's processing

environment can be identified via the information-gathering techniques described in Section

3.1.2. A review of other industry sources (e.g., vendor Web pages that identify system bugs and

flaws) will be useful in preparing for the interviews and in developing effective questionnaires to

identify vulnerabilities that may be applicable to specific IT systems (e.g., a specific version of a

specific operating system). The Internet is another source of information on known system

vulnerabilities posted by vendors, along with hot fixes, service packs, patches, and other

remedial measures that may be applied to eliminate or mitigate vulnerabilities. Documented

vulnerability sources that should be considered in a thorough vulnerability analysis include, but

are not limited to, the following:

•Previous risk assessment documentation of the IT system assessed

•The IT system's audit reports, system anomaly reports, security review reports, and

system test and evaluation reports

•Vulnerability lists, such as the NIST I-CAT vulnerability database

(http://icat.nist.gov)


•Security advisories, such as FedCIRC and the Department of Energy's Computer

Incident Advisory Capability bulletins

•Vendor advisories

•Commercial computer incident/emergency response teams and post lists (e.g.,

SecurityFocus.com forum mailings)

•Information Assurance Vulnerability Alerts and bulletins for military systems

•System software security analyses.

3.3.2 System Security Testing

Proactive methods, employing system testing, can be used to identify system vulnerabilities

efficiently, depending on the criticality of the IT system and available resources (e.g., allocated

funds, available technology, persons with the expertise to conduct the test). Test methods

include the following:

•Automated vulnerability scanning tool

•Security test and evaluation (ST&E)

•Penetration testing.^

The automated vulnerability scanning tool is used to scan a group of hosts or a network for

known vulnerable services (e.g., system allows anonymous File Transfer Protocol [FTP],

sendmail relaying). However, it should be noted that some of the potential vulnerabilities

identified by the automated scanning tool may not represent real vulnerabilities in the context of

the system environment. For example, some of these scanning tools rate potential vulnerabilities

without considering the site's environment and requirements. Some of the "vulnerabilities"

flagged by the automated scanning software may not actually be vulnerabilities for a particular site;

the flagged systems may be configured that way because the site's environment requires it. Thus, this test method

may produce false positives.
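
A simple way to handle such false positives is to compare scanner findings against configurations the site has documented as required. The sketch below assumes findings are available as `(host, issue)` tuples; the `triage_findings` function, the host names, and the site decision shown are hypothetical.

```python
def triage_findings(findings, accepted_by_site):
    """Split scanner findings into those needing review and those the site accepts.

    findings: iterable of (host, issue) tuples reported by a scanning tool.
    accepted_by_site: set of (host, issue) tuples the site has documented as
    required by its environment.
    """
    to_review, accepted = [], []
    for finding in findings:
        (accepted if finding in accepted_by_site else to_review).append(finding)
    return to_review, accepted


findings = [
    ("ftp01", "anonymous FTP enabled"),
    ("mail01", "sendmail relaying allowed"),
]
# Hypothetical site decision: ftp01 is a public download server.
accepted_by_site = {("ftp01", "anonymous FTP enabled")}
to_review, accepted = triage_findings(findings, accepted_by_site)
```

Keeping the accepted list separate, rather than discarding it, leaves a record of why a flagged item was not treated as a vulnerability.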

ST&E is another technique that can be used in identifying IT system vulnerabilities during the

risk assessment process. It includes the development and execution of a test plan (e.g., test

script, test procedures, and expected test results). The purpose of system security testing is to

test the effectiveness of the security controls of an IT system as they have been applied in an

operational environment. The objective is to ensure that the applied controls meet the approved

security specification for the software and hardware and implement the organization's security

policy or meet industry standards.

Penetration testing can be used to complement the review of security controls and ensure that

different facets of the IT system are secured. Penetration testing, when employed in the risk

assessment process, can be used to assess an IT system's ability to withstand intentional attempts

to circumvent system security. Its objective is to test the IT system from the viewpoint of a

threat-source and to identify potential failures in the IT system protection schemes.

^The NIST SP draft 800-42, Network Security Testing Overview, describes the methodology for network system testing and the use of automated tools.

The results of these types of optional security testing will help identify a system's vulnerabilities.

3.3.3 Development of Security Requirements Checklist

During this step, the risk assessment personnel determine whether the security requirements

stipulated for the IT system and collected during system characterization are being met by

existing or planned security controls. Typically, the system security requirements can be

presented in table form, with each requirement accompanied by an explanation of how the

system's design or implementation does or does not satisfy that security control requirement.

A security requirements checklist contains the basic security standards that can be used to

systematically evaluate and identify the vulnerabilities of the assets (personnel, hardware,

software, information), nonautomated procedures, processes, and information transfers

associated with a given IT system in the following security areas:

•Management

•Operational

•Technical.

Table 3-3 lists security criteria suggested for use in identifying an IT system's vulnerabilities in

each security area.

Table 3-3. Security Criteria

Security Area Security Criteria

Management Security

•Assignment of responsibilities

•Continuity of support

•Incident response capability

•Periodic review of security controls

•Personnel clearance and background investigations

•Risk assessment

•Security and technical training

•Separation of duties

•System authorization and reauthorization

•System or application security plan

Operational Security

•Control of air-borne contaminants (smoke, dust, chemicals)

•Controls to ensure the quality of the electrical power supply

•Data media access and disposal

•External data distribution and labeling

•Facility protection (e.g., computer room, data center, office)

•Humidity control

•Temperature control

•Workstations, laptops, and stand-alone personal computers

SP 800-30 Page 18

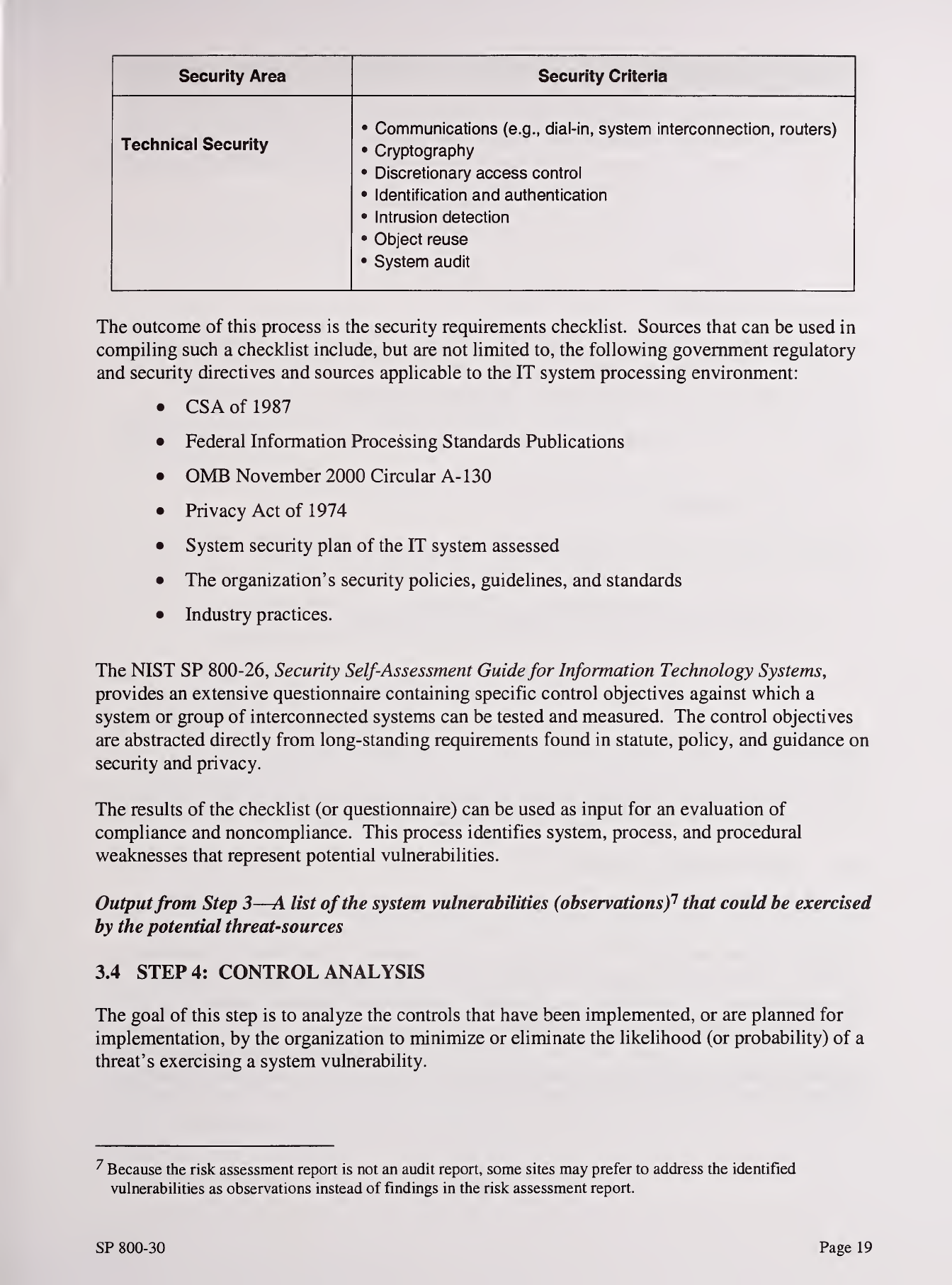

Security Area Security Criteria

Technical Security

•Communications (e.g., dial-in, system interconnection, routers)

•Cryptography

•Discretionary access control

•Identification and authentication

•Intrusion detection

•Object reuse

•System audit

The outcome of this process is the security requirements checklist. Sources that can be used in

compiling such a checklist include, but are not limited to, the following government regulatory

and security directives and sources applicable to the IT system processing environment:

•CSA of 1987

•Federal Information Processing Standards Publications

•OMB November 2000 Circular A-130

•Privacy Act of 1974

•System security plan of the IT system assessed

•The organization's security policies, guidelines, and standards

•Industry practices.

The NIST SP 800-26, Security Self-Assessment Guide for Information Technology Systems,

provides an extensive questionnaire containing specific control objectives against which a

system or group of interconnected systems can be tested and measured. The control objectives

are abstracted directly from long-standing requirements found in statute, policy, and guidance on

security and privacy.

The results of the checklist (or questionnaire) can be used as input for an evaluation of

compliance and noncompliance. This process identifies system, process, and procedural

weaknesses that represent potential vulnerabilities.
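
The evaluation of checklist results can be sketched as a filter over requirement records. This is a minimal illustration under stated assumptions: the `ChecklistItem` fields and the `potential_vulnerabilities` function are hypothetical, and the two sample entries are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistItem:
    area: str          # "Management", "Operational", or "Technical"
    criterion: str     # e.g., a Table 3-3 security criterion
    satisfied: bool
    explanation: str   # how the design or implementation does or does not comply


def potential_vulnerabilities(checklist):
    """Return the unmet items; each is a weakness to consider as a vulnerability."""
    return [item for item in checklist if not item.satisfied]


checklist = [
    ChecklistItem("Management", "Separation of duties", True,
                  "Administrator and auditor roles are held by different staff"),
    ChecklistItem("Technical", "Identification and authentication", False,
                  "Guest ID is enabled on XYZ server"),
]
gaps = potential_vulnerabilities(checklist)
```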

Output from Step 3: A list of the system vulnerabilities (observations)^ that could be exercised by the potential threat-sources

3.4 STEP 4: CONTROL ANALYSIS

The goal of this step is to analyze the controls that have been implemented, or are planned for

implementation, by the organization to minimize or eliminate the likelihood (or probability) of a

threat's exercising a system vulnerability.

^Because the risk assessment report is not an audit report, some sites may prefer to address the identified vulnerabilities as observations instead of findings in the risk assessment report.

To derive an overall likelihood rating that indicates the probability that a potential vulnerability

may be exercised within the construct of the associated threat environment (Step 5 below), the

implementation of current or planned controls must be considered. For example, a vulnerability

(e.g., system or procedural weakness) is not likely to be exercised or the likelihood is low if there

is a low level of threat-source interest or capability or if there are effective security controls that

can eliminate, or reduce the magnitude of, harm.

Sections 3.4.1 through 3.4.3, respectively, discuss control methods, control categories, and the

control analysis technique.

3.4.1 Control Methods

Security controls encompass the use of technical and nontechnical methods. Technical controls

are safeguards that are incorporated into computer hardware, software, or firmware (e.g., access

control mechanisms, identification and authentication mechanisms, encryption methods,

intrusion detection software). Nontechnical controls are management and operational controls,

such as security policies; operational procedures; and personnel, physical, and environmental

security.

3.4.2 Control Categories

The control categories for both technical and nontechnical control methods can be further

classified as either preventive or detective. These two subcategories are explained as follows:

•Preventive controls inhibit attempts to violate security policy and include such

controls as access control enforcement, encryption, and authentication.

•Detective controls warn of violations or attempted violations of security policy and

include such controls as audit trails, intrusion detection methods, and checksums.

Section 4.4 further explains these controls from the implementation standpoint. The

implementation of such controls during the risk mitigation process is the direct result of the

identification of deficiencies in current or planned controls during the risk assessment process

(e.g., controls are not in place or controls are not properly implemented).
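
The two classifications described above (technical versus nontechnical method, preventive versus detective category) can be recorded side by side for each control. The sketch below is one possible representation; the `Method`, `Category`, and `Control` types and the `select` helper are hypothetical, and the classification of the sample policy entry is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Method(Enum):
    TECHNICAL = "technical"
    NONTECHNICAL = "nontechnical"


class Category(Enum):
    PREVENTIVE = "preventive"
    DETECTIVE = "detective"


@dataclass(frozen=True)
class Control:
    name: str
    method: Method
    category: Category
    implemented: bool  # False means planned rather than in place


def select(controls, *, method=None, category=None):
    """Filter controls by method and/or category; None means 'any'."""
    return [
        c for c in controls
        if (method is None or c.method is method)
        and (category is None or c.category is category)
    ]


controls = [
    Control("Access control enforcement", Method.TECHNICAL, Category.PREVENTIVE, True),
    Control("Intrusion detection software", Method.TECHNICAL, Category.DETECTIVE, False),
    # Classifying a policy as preventive is an assumption made for this example.
    Control("Security policy", Method.NONTECHNICAL, Category.PREVENTIVE, True),
]
detective = select(controls, category=Category.DETECTIVE)
```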

3.4.3 Control Analysis Technique

As discussed in Section 3.3.3, development of a security requirements checklist or use of an

available checklist will be helpful in analyzing controls in an efficient and systematic manner.

The security requirements checklist can be used to validate security noncompliance as well as

compliance. Therefore, it is essential to update such checklists to reflect changes in an

organization's control environment (e.g., changes in security policies, methods, and

requirements) to ensure the checklist's validity.

Output from Step 4: List of current or planned controls used for the IT system to mitigate the likelihood of a vulnerability's being exercised and reduce the impact of such an adverse event


3.5 STEP 5: LIKELIHOOD DETERMINATION

To derive an overall likelihood rating that indicates the probability that a potential vulnerability

may be exercised within the construct of the associated threat environment, the following

governing factors must be considered:

•Threat-source motivation and capability

•Nature of the vulnerability

•Existence and effectiveness of current controls.

The likelihood that a potential vulnerability could be exercised by a given threat-source can be

described as high, medium, or low. Table 3-4 below describes these three likelihood levels.

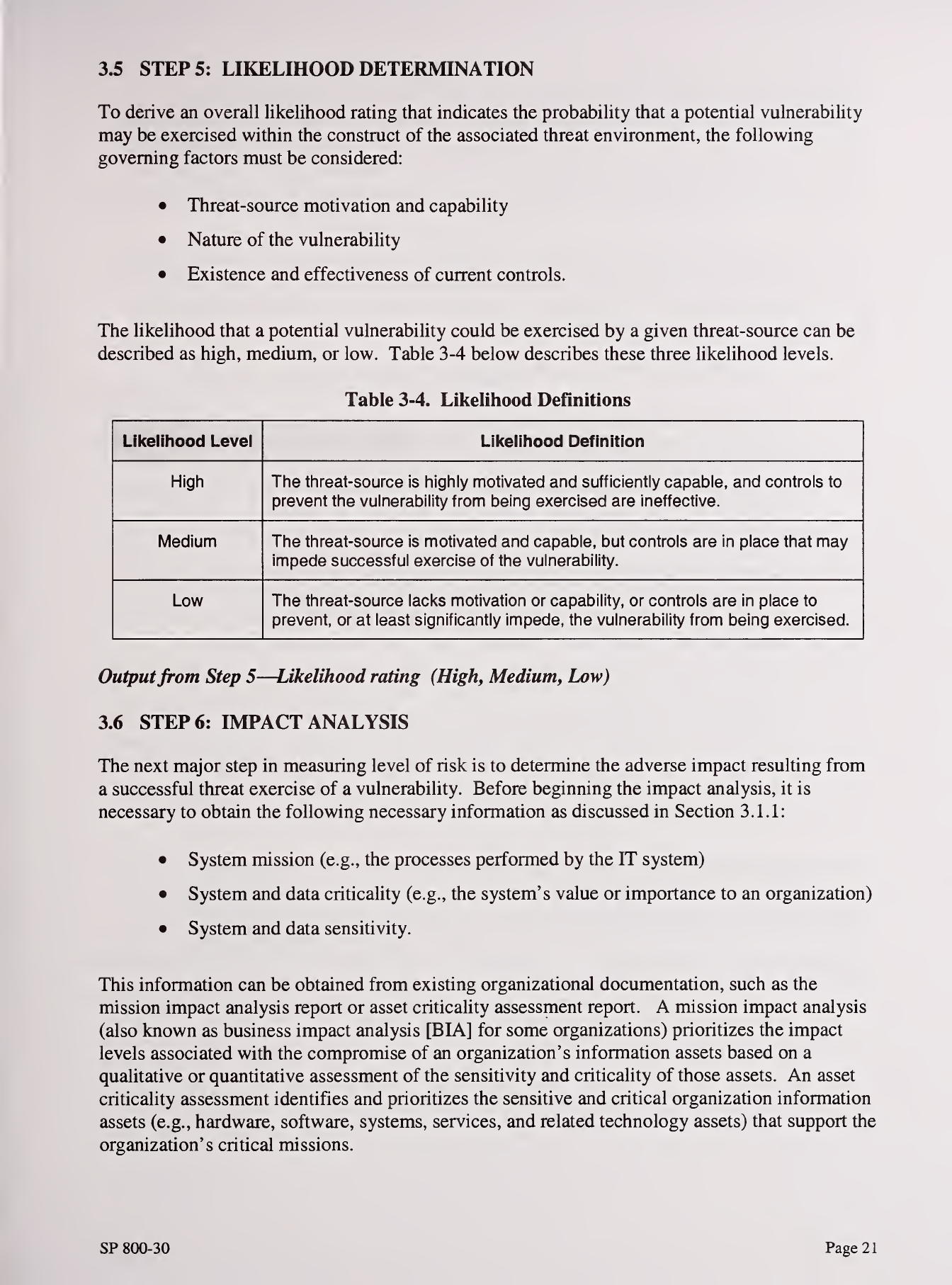

Table 3-4. Likelihood Definitions

Likelihood Level: Likelihood Definition

High: The threat-source is highly motivated and sufficiently capable, and controls to prevent the vulnerability from being exercised are ineffective.

Medium: The threat-source is motivated and capable, but controls are in place that may impede successful exercise of the vulnerability.

Low: The threat-source lacks motivation or capability, or controls are in place to prevent, or at least significantly impede, the vulnerability from being exercised.

Output from Step 5: Likelihood rating (High, Medium, Low)
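
The likelihood definitions in Table 3-4 can be read as a small decision rule. The sketch below is one possible simplification, not a definitive encoding: it collapses "highly motivated and sufficiently capable" and "motivated and capable" into a single yes/no input, and the function name and the three control-effectiveness labels are assumptions made for illustration.

```python
CONTROL_LEVELS = ("ineffective", "may_impede", "prevents_or_significantly_impedes")


def likelihood_rating(motivated_and_capable: bool, controls: str) -> str:
    """Map threat-source capability and control effectiveness to High/Medium/Low.

    Simplified reading of Table 3-4: a threat-source lacking motivation or
    capability, or controls that prevent or significantly impede exercise of
    the vulnerability, yields "Low" regardless of the other factor.
    """
    if controls not in CONTROL_LEVELS:
        raise ValueError(f"unknown control effectiveness: {controls!r}")
    if not motivated_and_capable or controls == "prevents_or_significantly_impedes":
        return "Low"
    return "High" if controls == "ineffective" else "Medium"
```

In practice the rating remains a judgment made by the assessor; a rule of this kind only helps apply the same reading of the table consistently across many vulnerability/threat pairs.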

3.6 STEP 6: IMPACT ANALYSIS

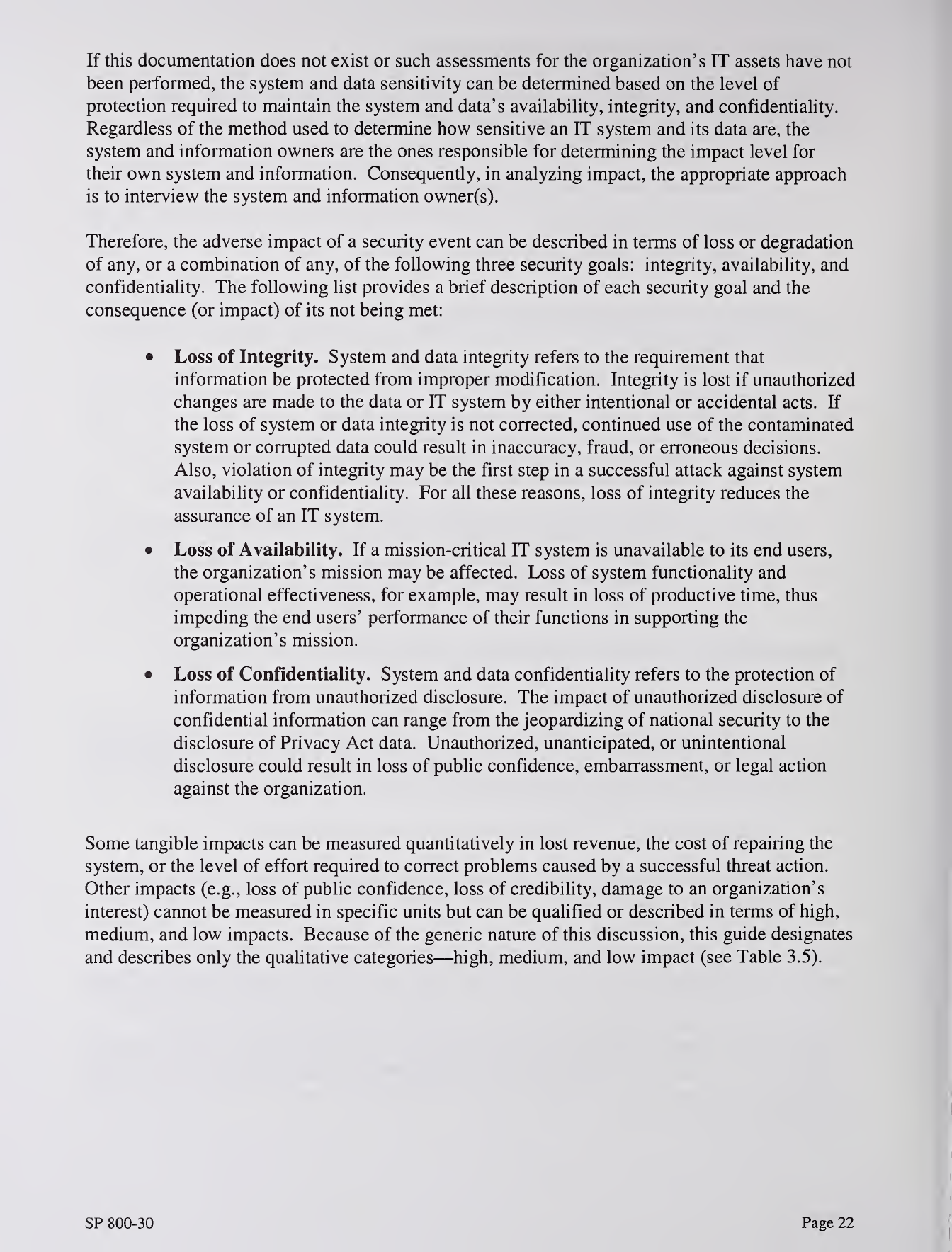

The next major step in measuring level of risk is to determine the adverse impact resulting from

a successful threat exercise of a vulnerability. Before beginning the impact analysis, it is

necessary to obtain the following information, as discussed in Section 3.1.1:

•System mission (e.g., the processes performed by the IT system)

•System and data criticality (e.g., the system's value or importance to an organization)

•System and data sensitivity.

This information can be obtained from existing organizational documentation, such as the

mission impact analysis report or asset criticality assessment report. A mission impact analysis

(also known as business impact analysis [BIA] for some organizations) prioritizes the impact

levels associated with the compromise of an organization's information assets based on a

qualitative or quantitative assessment of the sensitivity and criticality of those assets. An asset

criticality assessment identifies and prioritizes the sensitive and critical organization information

assets (e.g., hardware, software, systems, services, and related technology assets) that support the

organization's critical missions.


If this documentation does not exist or such assessments for the organization's IT assets have not

been performed, the system and data sensitivity can be determined based on the level of

protection required to maintain the system and data's availability, integrity, and confidentiality.

Regardless of the method used to determine how sensitive an IT system and its data are, the

system and information owners are the ones responsible for determining the impact level for

their own system and information. Consequently, in analyzing impact, the appropriate approach

is to interview the system and information owner(s).

Therefore, the adverse impact of a security event can be described in terms of loss or degradation

of any, or a combination of any, of the following three security goals: integrity, availability, and

confidentiality. The following list provides a brief description of each security goal and the

consequence (or impact) of its not being met:

•Loss of Integrity. System and data integrity refers to the requirement that

information be protected from improper modification. Integrity is lost if unauthorized

changes are made to the data or IT system by either intentional or accidental acts. If

the loss of system or data integrity is not corrected, continued use of the contaminated

system or corrupted data could result in inaccuracy, fraud, or erroneous decisions.

Also, violation of integrity may be the first step in a successful attack against system

availability or confidentiality. For all these reasons, loss of integrity reduces the

assurance of an IT system.

•Loss of Availability. If a mission-critical IT system is unavailable to its end users,

the organization's mission may be affected. Loss of system functionality and

operational effectiveness, for example, may result in loss of productive time, thus

impeding the end users' performance of their functions in supporting the

organization's mission.

•Loss of Confidentiality. System and data confidentiality refers to the protection of

information from unauthorized disclosure. The impact of unauthorized disclosure of

confidential information can range from the jeopardizing of national security to the

disclosure of Privacy Act data. Unauthorized, unanticipated, or unintentional

disclosure could result in loss of public confidence, embarrassment, or legal action

against the organization.

Some tangible impacts can be measured quantitatively in lost revenue, the cost of repairing the

system, or the level of effort required to correct problems caused by a successful threat action.

Other impacts (e.g., loss of public confidence, loss of credibility, damage to an organization's

interest) cannot be measured in specific units but can be qualified or described in terms of high,

medium, and low impacts. Because of the generic nature of this discussion, this guide designates

and describes only the qualitative categories: high, medium, and low impact (see Table 3-5).

SP 800-30 Page 22

\

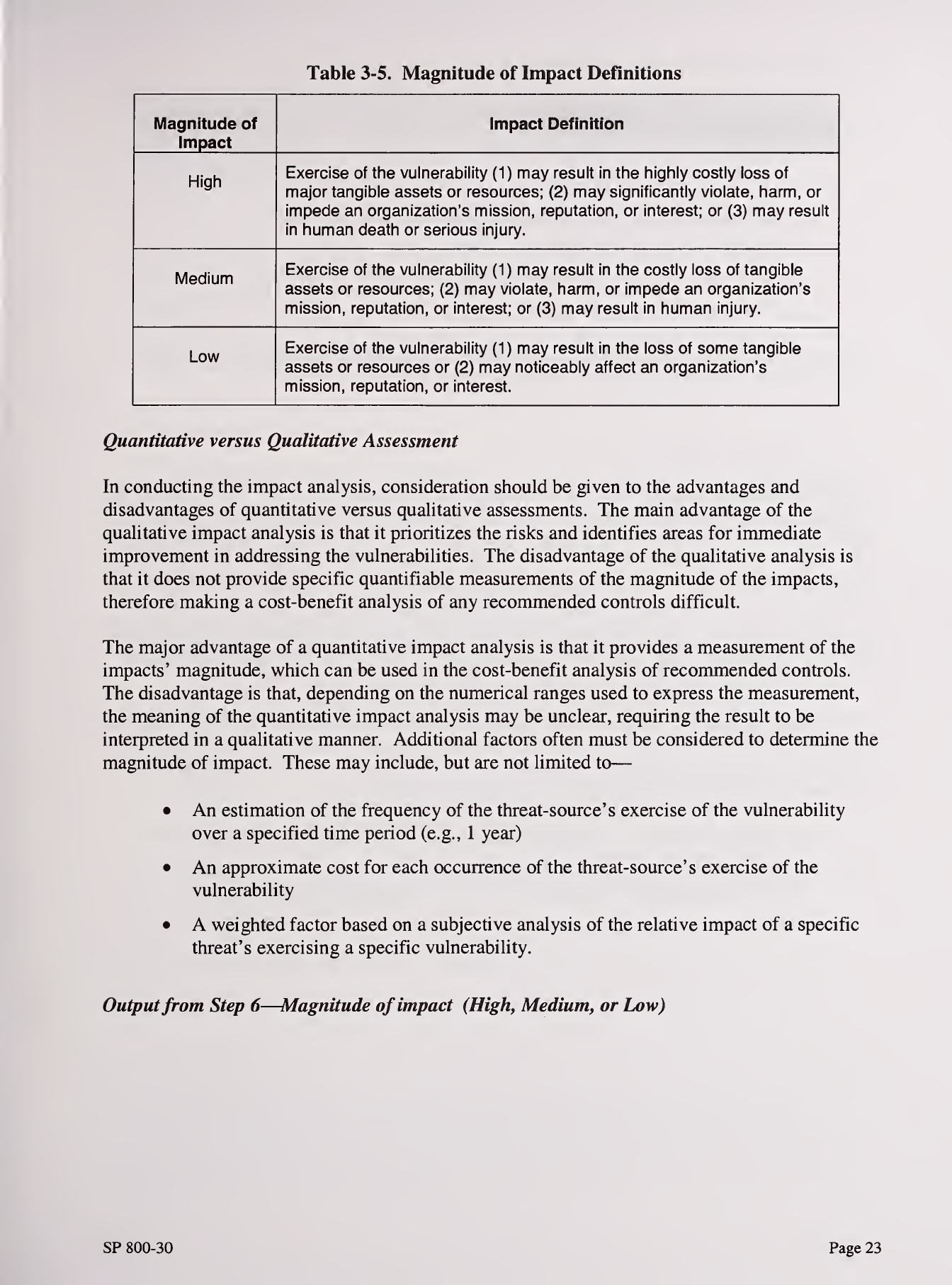

Table 3-5. Magnitude of Impact Definitions

| Magnitude of Impact | Impact Definition |
| --- | --- |
| High | Exercise of the vulnerability (1) may result in the highly costly loss of major tangible assets or resources; (2) may significantly violate, harm, or impede an organization's mission, reputation, or interest; or (3) may result in human death or serious injury. |
| Medium | Exercise of the vulnerability (1) may result in the costly loss of tangible assets or resources; (2) may violate, harm, or impede an organization's mission, reputation, or interest; or (3) may result in human injury. |
| Low | Exercise of the vulnerability (1) may result in the loss of some tangible assets or resources or (2) may noticeably affect an organization's mission, reputation, or interest. |
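One way to record the owners' answers from the impact interviews is sketched below in Python. The class and field names are hypothetical, and taking the worst of the three per-goal ratings as the overall magnitude is an assumption made for illustration; the guide itself does not prescribe how ratings for the three security goals are combined.

```python
from dataclasses import dataclass
from enum import IntEnum


class Impact(IntEnum):
    """Qualitative impact categories from Table 3-5, ordered by severity."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ImpactAssessment:
    """Owner-supplied impact ratings for one system, one per security goal."""
    integrity: Impact
    availability: Impact
    confidentiality: Impact

    def overall(self) -> Impact:
        # Assumption: the overall magnitude is the worst of the three ratings.
        return max(self.integrity, self.availability, self.confidentiality)


# Hypothetical ratings gathered from a system owner.
payroll = ImpactAssessment(
    integrity=Impact.HIGH,
    availability=Impact.MEDIUM,
    confidentiality=Impact.MEDIUM,
)
print(payroll.overall().name)
```

Keeping the three ratings separate, rather than storing only the overall value, preserves the reasoning behind the final magnitude for the results documentation.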

Quantitative versus Qualitative Assessment

In conducting the impact analysis, consideration should be given to the advantages and

disadvantages of quantitative versus qualitative assessments. The main advantage of the

qualitative impact analysis is that it prioritizes the risks and identifies areas for immediate

improvement in addressing the vulnerabilities. The disadvantage of the qualitative analysis is

that it does not provide specific quantifiable measurements of the magnitude of the impacts,

therefore making a cost-benefit analysis of any recommended controls difficult.

The major advantage of a quantitative impact analysis is that it provides a measurement of the

impacts' magnitude, which can be used in the cost-benefit analysis of recommended controls.

The disadvantage is that, depending on the numerical ranges used to express the measurement,

the meaning of the quantitative impact analysis may be unclear, requiring the result to be

interpreted in a qualitative manner. Additional factors often must be considered to determine the

magnitude of impact. These may include, but are not limited to:

• An estimation of the frequency of the threat-source's exercise of the vulnerability

over a specified time period (e.g., 1 year)

• An approximate cost for each occurrence of the threat-source's exercise of the

vulnerability

• A weighted factor based on a subjective analysis of the relative impact of a specific

threat's exercising a specific vulnerability.
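The three factors above can be combined into a single yearly estimate. The Python sketch below multiplies them together; this product is an illustrative assumption, since the guide lists the factors but does not give a formula, and the function name, parameter names, and figures are hypothetical.

```python
def expected_annual_impact(occurrences_per_year: float,
                           cost_per_occurrence: float,
                           weight: float = 1.0) -> float:
    """Estimate the yearly impact of one threat/vulnerability pair.

    occurrences_per_year: estimated frequency over the chosen period (1 year).
    cost_per_occurrence:  approximate cost of each occurrence.
    weight:               subjective weighting of relative impact (assumed
                          here to be a simple multiplier).
    """
    if occurrences_per_year < 0 or cost_per_occurrence < 0 or weight < 0:
        raise ValueError("inputs must be non-negative")
    return occurrences_per_year * cost_per_occurrence * weight


# Hypothetical figures: 4 occurrences a year, 2,500 per occurrence, weight 0.5.
estimate = expected_annual_impact(4, 2500.0, weight=0.5)
print(estimate)  # 5000.0
```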

Output from Step 6: Magnitude of impact (High, Medium, or Low)

SP 800-30 Page 23

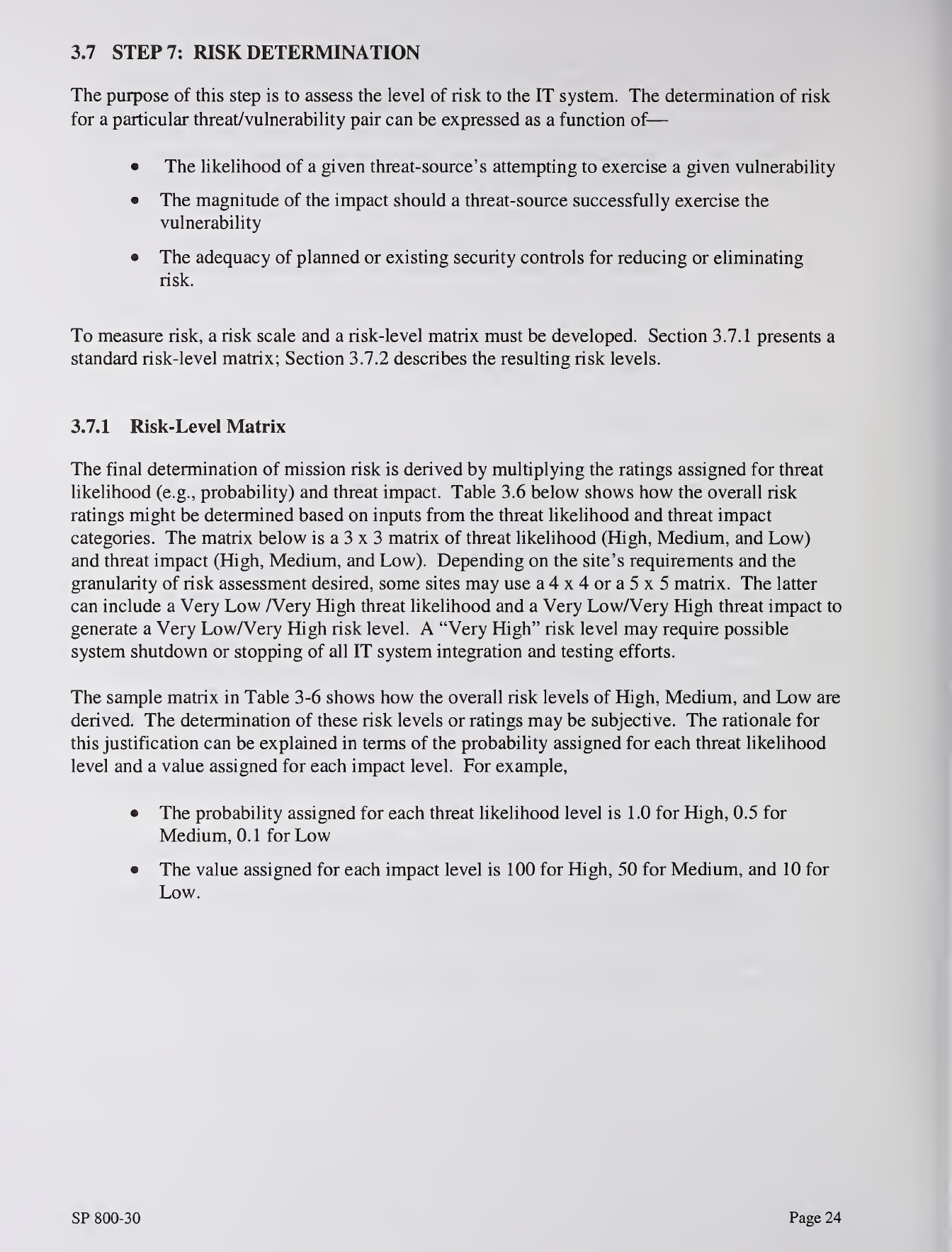

3.7 STEP 7: RISK DETERMINATION

The purpose of this step is to assess the level of risk to the IT system. The determination of risk

for a particular threat/vulnerability pair can be expressed as a function of:

• The likelihood of a given threat-source's attempting to exercise a given vulnerability

• The magnitude of the impact should a threat-source successfully exercise the

vulnerability

• The adequacy of planned or existing security controls for reducing or eliminating

risk.

To measure risk, a risk scale and a risk-level matrix must be developed. Section 3.7.1 presents a

standard risk-level matrix; Section 3.7.2 describes the resulting risk levels.

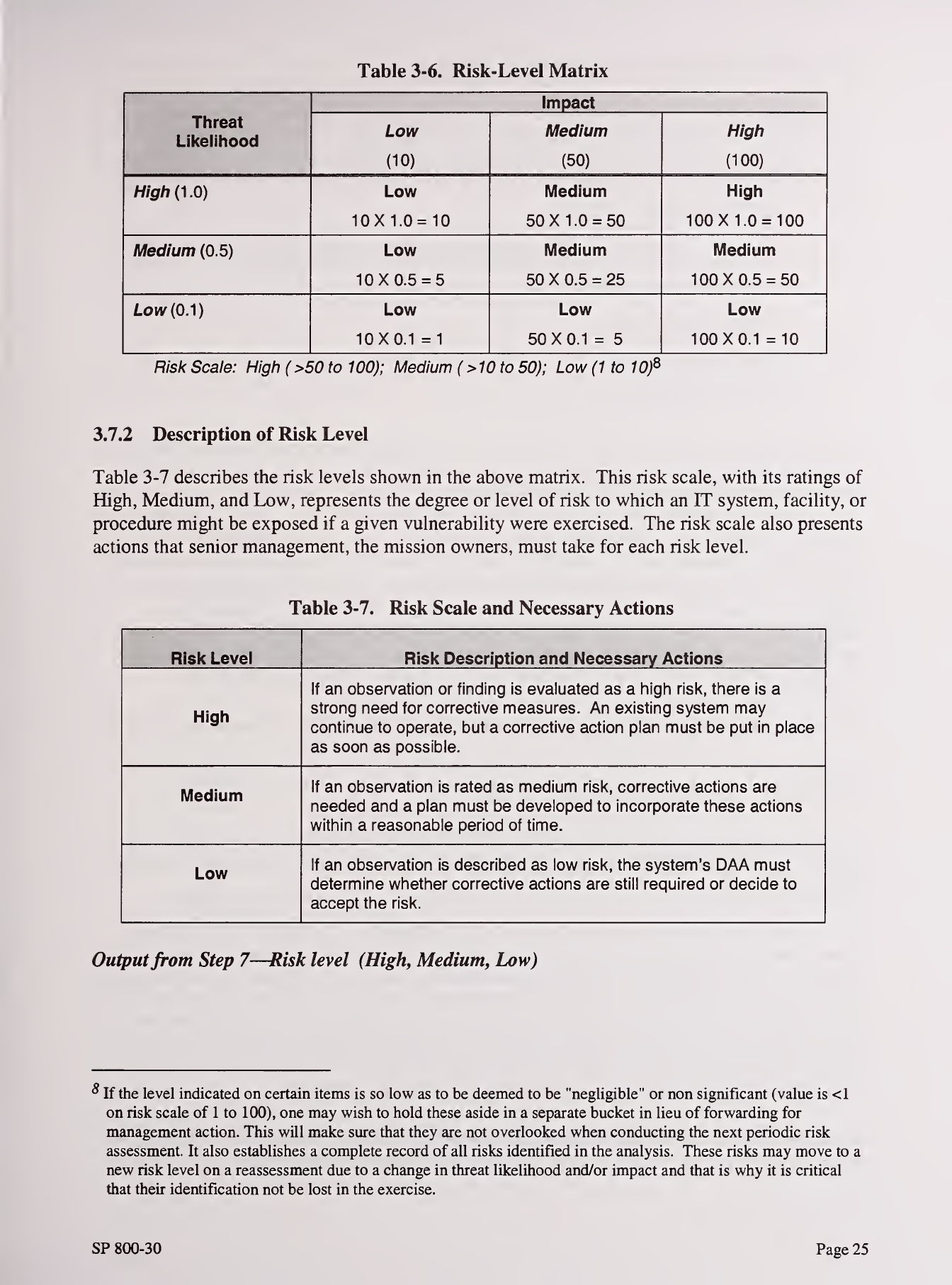

3.7.1 Risk-Level Matrix

The final determination of mission risk is derived by multiplying the ratings assigned for threat

likelihood (e.g., probability) and threat impact. Table 3-6 below shows how the overall risk

ratings might be determined based on inputs from the threat likelihood and threat impact

categories. The matrix below is a 3 x 3 matrix of threat likelihood (High, Medium, and Low)

and threat impact (High, Medium, and Low). Depending on the site's requirements and the

granularity of risk assessment desired, some sites may use a 4 x 4 or a 5 x 5 matrix. The latter

can include a Very Low/Very High threat likelihood and a Very Low/Very High threat impact to

generate a Very Low/Very High risk level. A "Very High" risk level may require possible

system shutdown or stopping of all IT system integration and testing efforts.

The sample matrix in Table 3-6 shows how the overall risk levels of High, Medium, and Low are

derived. The determination of these risk levels or ratings may be subjective. The rationale for

this justification can be explained in terms of the probability assigned for each threat likelihood

level and a value assigned for each impact level. For example,

• The probability assigned for each threat likelihood level is 1.0 for High, 0.5 for

Medium, and 0.1 for Low

• The value assigned for each impact level is 100 for High, 50 for Medium, and 10 for

Low.

Table 3-6. Risk-Level Matrix

| Threat Likelihood | Impact: Low (10) | Impact: Medium (50) | Impact: High (100) |
| --- | --- | --- | --- |
| High (1.0) | Low: 10 x 1.0 = 10 | Medium: 50 x 1.0 = 50 | High: 100 x 1.0 = 100 |
| Medium (0.5) | Low: 10 x 0.5 = 5 | Medium: 50 x 0.5 = 25 | Medium: 100 x 0.5 = 50 |
| Low (0.1) | Low: 10 x 0.1 = 1 | Low: 50 x 0.1 = 5 | Low: 100 x 0.1 = 10 |

Risk Scale: High (>50 to 100); Medium (>10 to 50); Low (1 to 10)*
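The arithmetic behind Table 3-6 can be sketched in a few lines of Python. The likelihood and impact values and the risk-scale thresholds come from the sample matrix; the dictionary and function names are illustrative only, and a site using a 4 x 4 or 5 x 5 matrix would need to supply its own values.

```python
# Sample values from the text: probability per likelihood level,
# and value per impact level.
LIKELIHOOD = {"High": 1.0, "Medium": 0.5, "Low": 0.1}
IMPACT = {"High": 100, "Medium": 50, "Low": 10}


def risk_score(likelihood: str, impact: str) -> float:
    """Multiply the likelihood probability by the impact value."""
    return LIKELIHOOD[likelihood] * IMPACT[impact]


def risk_level(score: float) -> str:
    """Map a score onto the risk scale: High (>50 to 100),
    Medium (>10 to 50), Low (1 to 10)."""
    if score > 50:
        return "High"
    if score > 10:
        return "Medium"
    return "Low"


# Rebuild the matrix row by row, with impact columns ordered Low, Medium, High.
for likelihood in ("High", "Medium", "Low"):
    row = [risk_level(risk_score(likelihood, impact))
           for impact in ("Low", "Medium", "High")]
    print(f"{likelihood:<6} {row}")
```

Note that the boundary scores of 10 and 50 fall into the lower band, which is why a Medium likelihood combined with a High impact (100 x 0.5 = 50) is rated Medium rather than High.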

3.7.2 Description of Risk Level

Table 3-7 describes the risk levels shown in the above matrix. This risk scale, with its ratings of

High, Medium, and Low, represents the degree or level of risk to which an IT system, facility, or

procedure might be exposed if a given vulnerability were exercised. The risk scale also presents

actions that senior management, the mission owners, must take for each risk level.

Table 3-7. Risk Scale and Necessary Actions

| Risk Level | Risk Description and Necessary Actions |
| --- | --- |
| High | If an observation or finding is evaluated as a high risk, there is a strong need for corrective measures. An existing system may continue to operate, but a corrective action plan must be put in place as soon as possible. |
| Medium | If an observation is rated as medium risk, corrective actions are needed and a plan must be developed to incorporate these actions within a reasonable period of time. |
| Low | If an observation is described as low risk, the system's DAA must determine whether corrective actions are still required or decide to accept the risk. |

Output from Step 7: Risk level (High, Medium, Low)

* Footnote to the risk scale under Table 3-6: If the level indicated on certain items is so low as to be deemed to be "negligible" or nonsignificant (value is <1

on risk scale of 1 to 100), one may wish to hold these aside in a separate bucket in lieu of forwarding for

management action. This will make sure that they are not overlooked when conducting the next periodic risk

assessment. It also establishes a complete record of all risks identified in the analysis. These risks may move to a

new risk level on a reassessment due to a change in threat likelihood and/or impact and that is why it is critical

that their identification not be lost in the exercise.
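The "separate bucket" for negligible items can be sketched as a simple partition in Python. The function name, the finding names, and the scores below are hypothetical; a score under 1 cannot arise from the sample values in Table 3-6 and is assumed here to come from a finer-grained scale.

```python
def triage(scored_findings, negligible_below=1.0):
    """Split (name, score) pairs into findings forwarded for management
    action and negligible findings held for the next periodic assessment."""
    forward, hold = [], []
    for name, score in scored_findings:
        if score < negligible_below:
            hold.append((name, score))      # kept on record, not forwarded
        else:
            forward.append((name, score))   # forwarded for management action
    return forward, hold


# Hypothetical findings with scores on the 1-to-100 risk scale.
findings = [
    ("Unpatched web server", 50.0),
    ("Shared console password", 25.0),
    ("Outdated banner text", 0.5),
]
forward, hold = triage(findings)
print("Forward:", forward)
print("Hold for reassessment:", hold)
```

Returning the held items rather than discarding them keeps a complete record, so a later change in likelihood or impact can move them to a new risk level.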

3.8 STEP 8: CONTROL RECOMMENDATIONS

During this step of the process, controls that could mitigate or eliminate the identified risks, as

appropriate to the organization's operations, are provided. The goal of the recommended

controls is to reduce the level of risk to the IT system and its data to an acceptable level. The

following factors should be considered in recommending controls and alternative solutions to

minimize or eliminate identified risks:

• Effectiveness of recommended options (e.g., system compatibility)

• Legislation and regulation

• Organizational policy

• Operational impact

• Safety and reliability.

The control recommendations are the results of the risk assessment process and provide input to

the risk mitigation process, during which the recommended procedural and technical security

controls are evaluated, prioritized, and implemented.

It should be noted that not all possible recommended controls can be implemented to reduce loss.

To determine which ones are required and appropriate for a specific organization, a cost-benefit

analysis, as discussed in Section 4.6, should be conducted for the proposed recommended

controls, to demonstrate that the costs of implementing the controls can be justified by the

reduction in the level of risk. In addition, the operational impact (e.g., effect on system

performance) and feasibility (e.g., technical requirements, user acceptance) of introducing the

recommended option should be evaluated carefully during the risk mitigation process.
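As a rough illustration of the cost justification described above, the Python sketch below compares each control's annual cost with the reduction in expected annual loss it is estimated to achieve. This is not the cost-benefit procedure of Section 4.6; the class, its fields, the decision rule, and all figures are assumptions made for the example, and operational impact and feasibility would still need separate review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlOption:
    """A candidate control with hypothetical yearly cost and loss estimates."""
    name: str
    annual_cost: float
    loss_before: float   # expected annual loss without the control
    loss_after: float    # expected annual loss with the control in place

    @property
    def risk_reduction(self) -> float:
        return self.loss_before - self.loss_after

    @property
    def net_benefit(self) -> float:
        # Positive when the risk reduction outweighs the cost of the control.
        return self.risk_reduction - self.annual_cost


options = [
    ControlOption("Patch management", 2000.0, 10000.0, 3000.0),
    ControlOption("Redundant site", 50000.0, 10000.0, 1000.0),
]

# Keep only controls whose cost is justified, best net benefit first.
justified = sorted(
    (o for o in options if o.net_benefit > 0),
    key=lambda o: o.net_benefit,
    reverse=True,
)
for option in justified:
    print(option.name, option.net_benefit)
```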

Output from Step 8: Recommendation of control(s) and alternative solutions to mitigate risk

3.9 STEP 9: RESULTS DOCUMENTATION

Once the risk assessment has been completed (threat-sources and vulnerabilities identified, risks

assessed, and recommended controls provided), the results should be documented in an official

report or briefing.

A risk assessment report is a management report that helps senior management, the mission

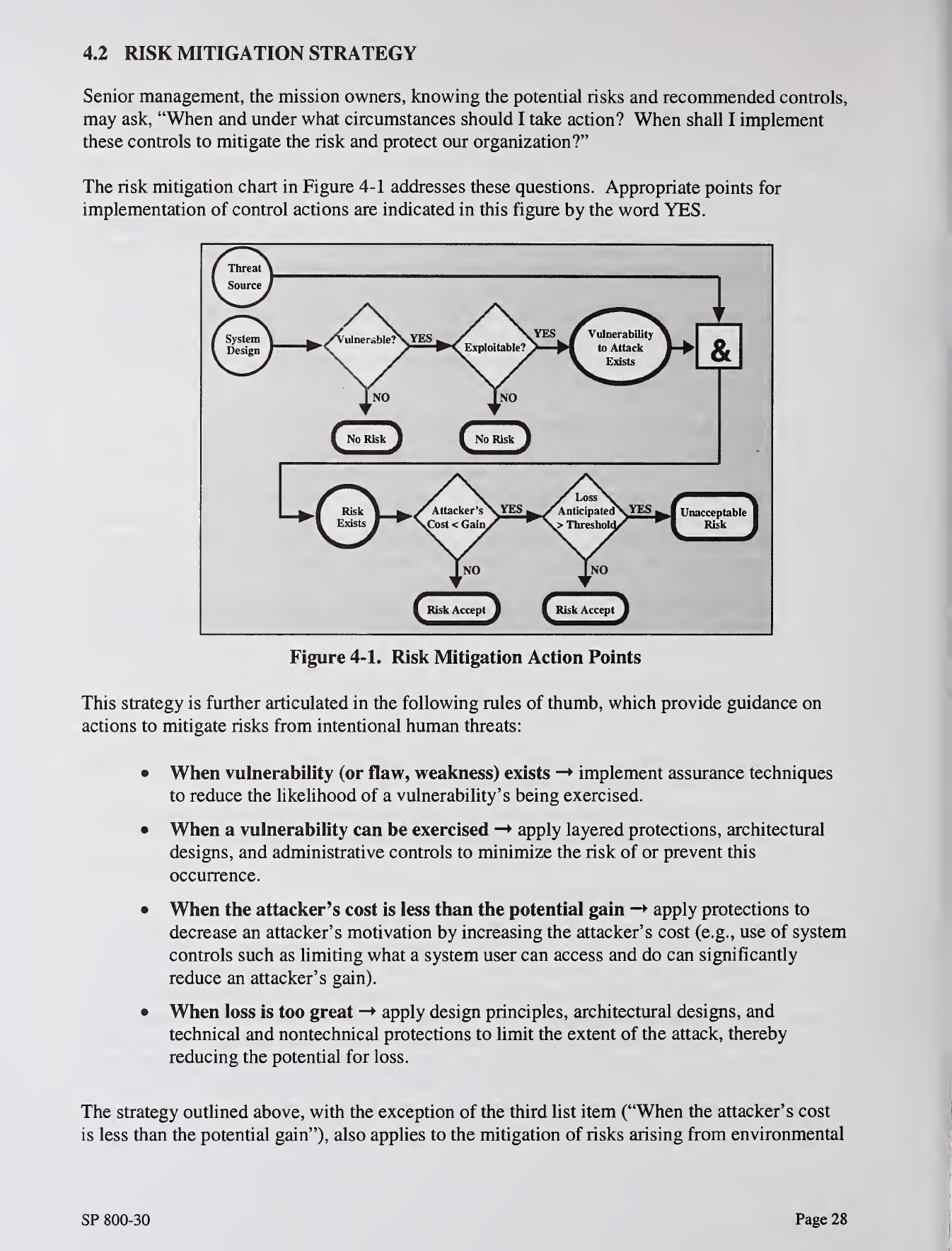

owners, make decisions on policy, procedural, budget, and system operational and management