Packtpub.Pentaho.3.2.Data.Integration.Beginners.Guide.Apr.2010

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 493 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Cover

- Copyright

- Credits

- Foreword

- The Kettle Project

- About the Author

- About the Reviewers

- Table of Contents

- Preface

- Chapter 1: Getting started with Pentaho Data Integration

- Pentaho Data Integration and Pentaho BI Suite

- Pentaho Data Integration

- Installing PDI

- Time for action – installing PDI

- Launching the PDI graphical designer: Spoon

- Time for action – starting and customizing Spoon

- Time for action – creating a hello world transformation

- Time for action – running and previewing the hello_world

- transformation

- Installing MySQL

- Time for action – installing MySQL on Windows

- Time for action – installing MySQL on Ubuntu

- Summary

- Chapter 2: Getting Started with Transformations

- Reading data from files

- Time for action – reading results of football matches from files

- Time for action – reading all your files at a time using a single

- Text file input step

- Time for action – reading all your files at a time using a single

- Text file input step and regular expressions

- Sending data to files

- Time for action – sending the results of matches to a plain file

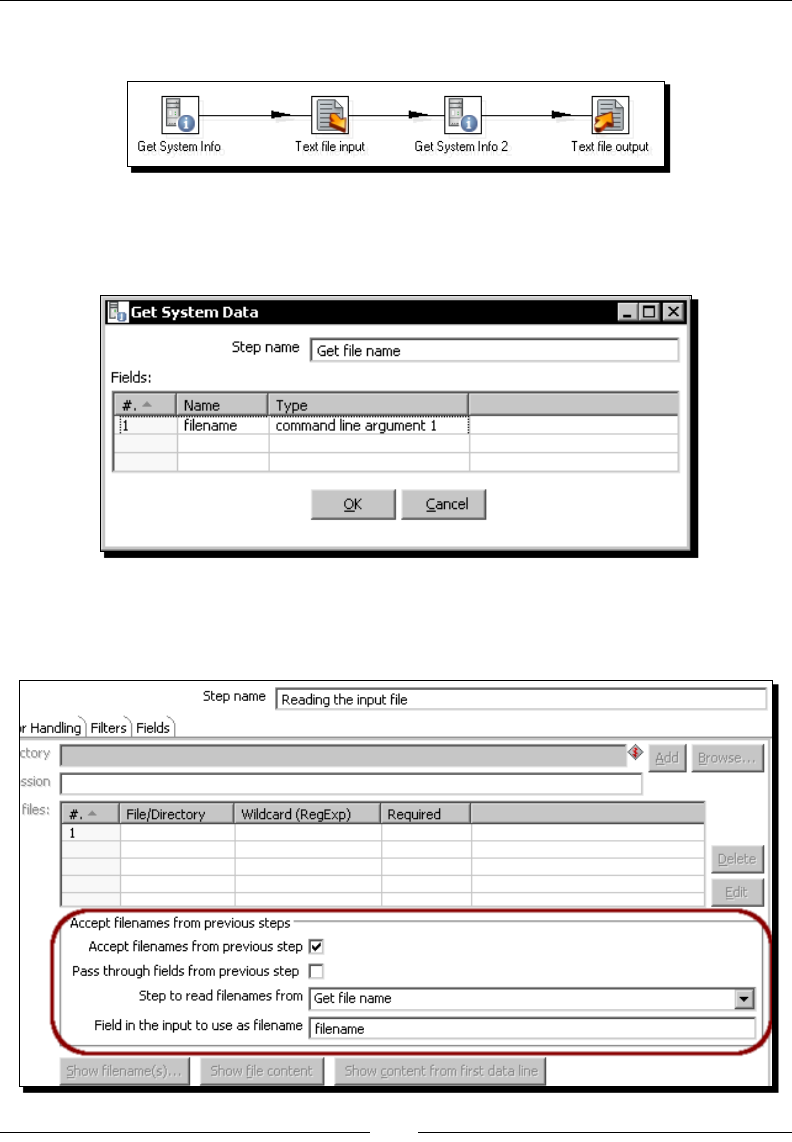

- Getting system information

- Time for action – updating a file with news about examinations

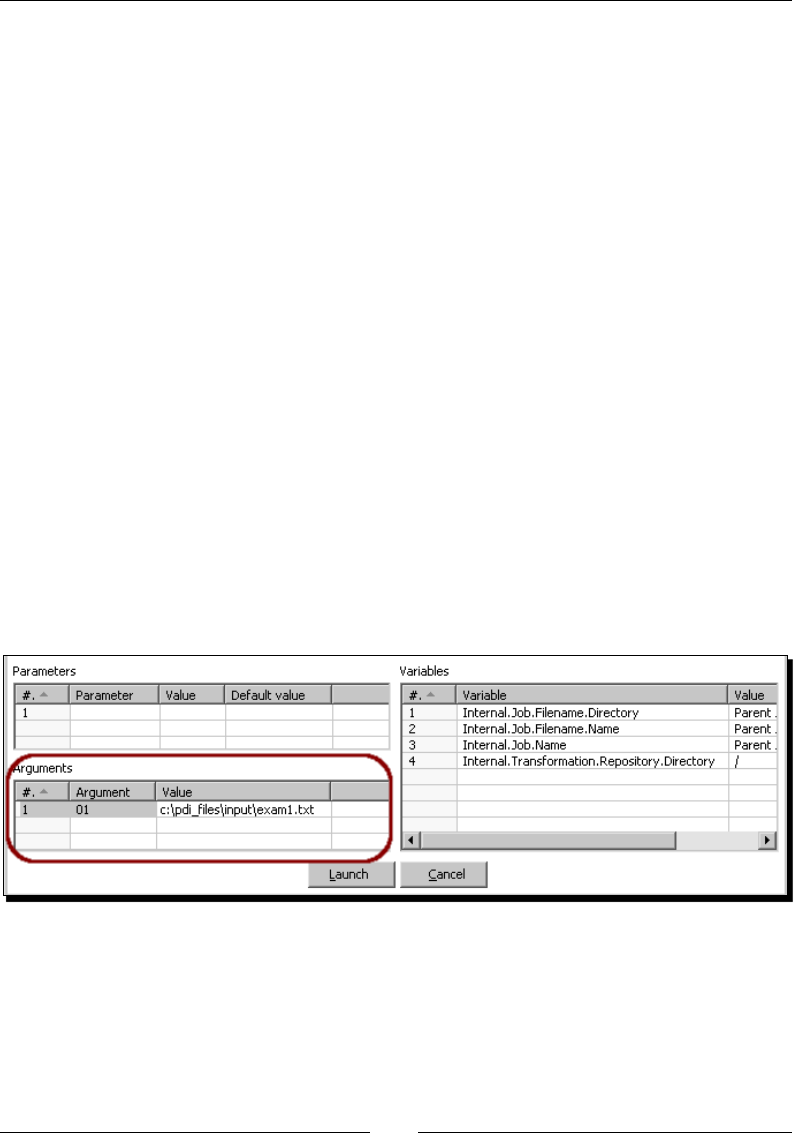

- Time for action – running the examination transformation from

- a terminal window

- XML files

- Time for action – getting data from an XML file with information

- about countries

- Summary

- Chapter 3: Basic data manipulation

- Basic calculations

- Time for action – reviewing examinations by using the

- Calculator step

- Time for action – reviewing examinations by using the

- Formula step

- Calculations on groups of rows

- Time for action – calculating World Cup statistics by

- grouping data

- Filtering

- Time for action – counting frequent words by filtering

- Looking up data

- Time for action – finding out which language people speak

- Summary

- Chapter 4: Controlling the Flow of Data

- Splitting streams

- Time for action – browsing new PDI features by copying

- a dataset

- Time for action – assigning tasks by distributing

- Splitting the stream based on conditions

- Time for action – assigning tasks by filtering priorities with the

- Filter rows step

- Time for action – assigning tasks by filtering priorities with the

- Switch/ Case step

- Merging streams

- Time for action – gathering progress and merging all together

- Time for action – giving priority to Bouchard by using

- Append Stream

- Summary

- Chapter 5: Transforming Your Data with JavaScript Code and the JavaScript Step

- Doing simple tasks with the JavaScript step

- Time for action – calculating scores with JavaScript

- Time for action – testing the calculation of averages

- Enriching the code

- Time for action – calculating flexible scores by using variables

- Reading and parsing unstructured files

- Time for action – changing a list of house descriptions with

- JavaScript

- Avoiding coding by using purpose-built steps

- Summary

- Chapter 6: Transforming the Row Set

- Converting rows to columns

- Time for action – enhancing a films file by converting

- rows to columns

- Time for action – calculating total scores by performances

- by country

- Normalizing data

- Time for action – enhancing the matches file by normalizing

- the dataset

- Generating a custom time dimension dataset by using Kettle variables

- Time for action – creating the time dimension dataset

- Time for action – getting variables for setting the default

- starting date

- Summary

- Chapter 7: Validating Data and Handling Errors

- Capturing errors

- Time for action – capturing errors while calculating the age

- of a film

- Time for action – aborting when there are too many errors

- Time for action – treating errors that may appear

- Avoiding unexpected errors by validating data

- Time for action – validating genres with a Regex Evaluation step

- Time for action – checking films file with the Data Validator

- Summary

- Chapter 8: Working with Databases

- Introducing the Steel Wheels sample database

- Time for action – creating a connection with the Steel Wheels

- database

- Time for action – exploring the sample database

- Querying a database

- Time for action – getting data about shipped orders

- Time for action – getting orders in a range of dates by using

- parameters

- Time for action – getting orders in a range of dates by using

- variables

- Sending data to a database

- Time for action – loading a table with a list of manufacturers

- Time for action – inserting new products or updating

- existent ones

- Time for action – testing the update of existing products

- Eliminating data from a database

- Time for action – deleting data about discontinued items

- Summary

- Chapter 9: Performing Advanced Operations with Databases

- Preparing the environment

- Time for action – populating the Jigsaw database

- Looking up data in a database

- Time for action – using a Database lookup step to create a list

- of products to buy

- Time for action – using a Database join step to create a list of

- suggested products to buy

- Introducing dimensional modeling

- Loading dimensions with data

- Time for action – loading a region dimension with a

- Combination lookup/update step

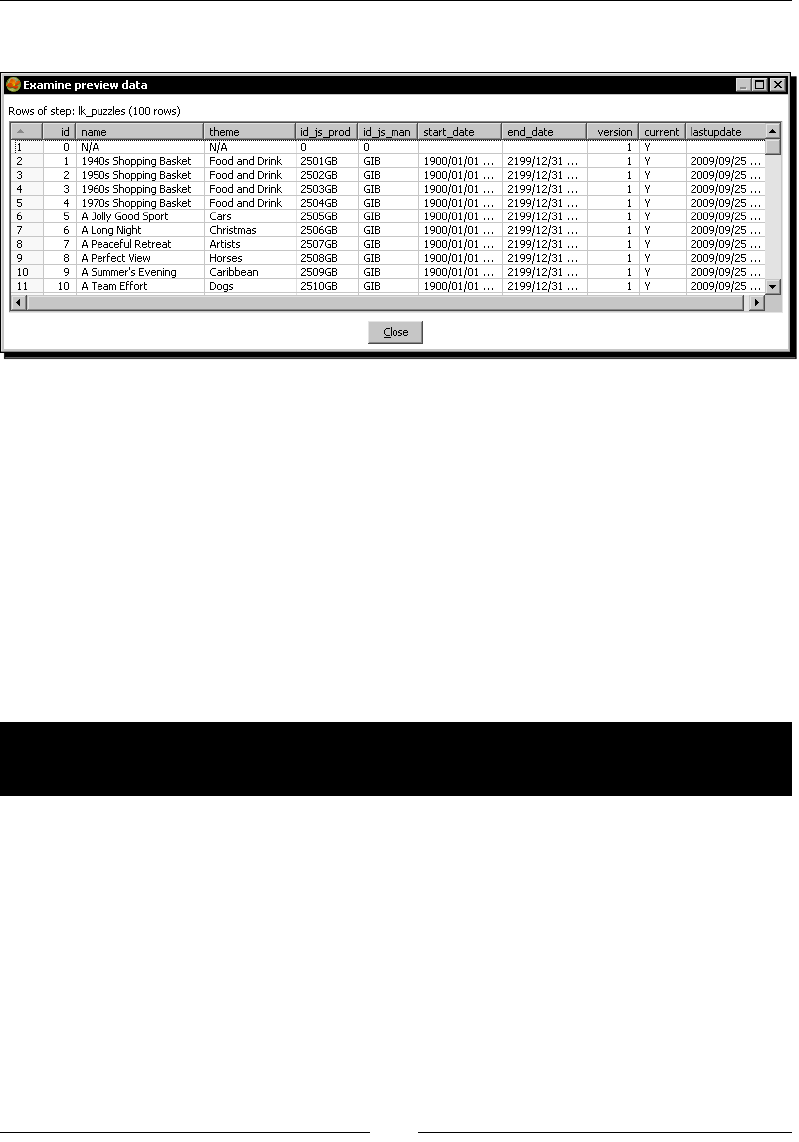

- Time for action – testing the transformation that loads the

- region dimension

- Time for action – keeping a history of product changes with the

- Dimension lookup/update step

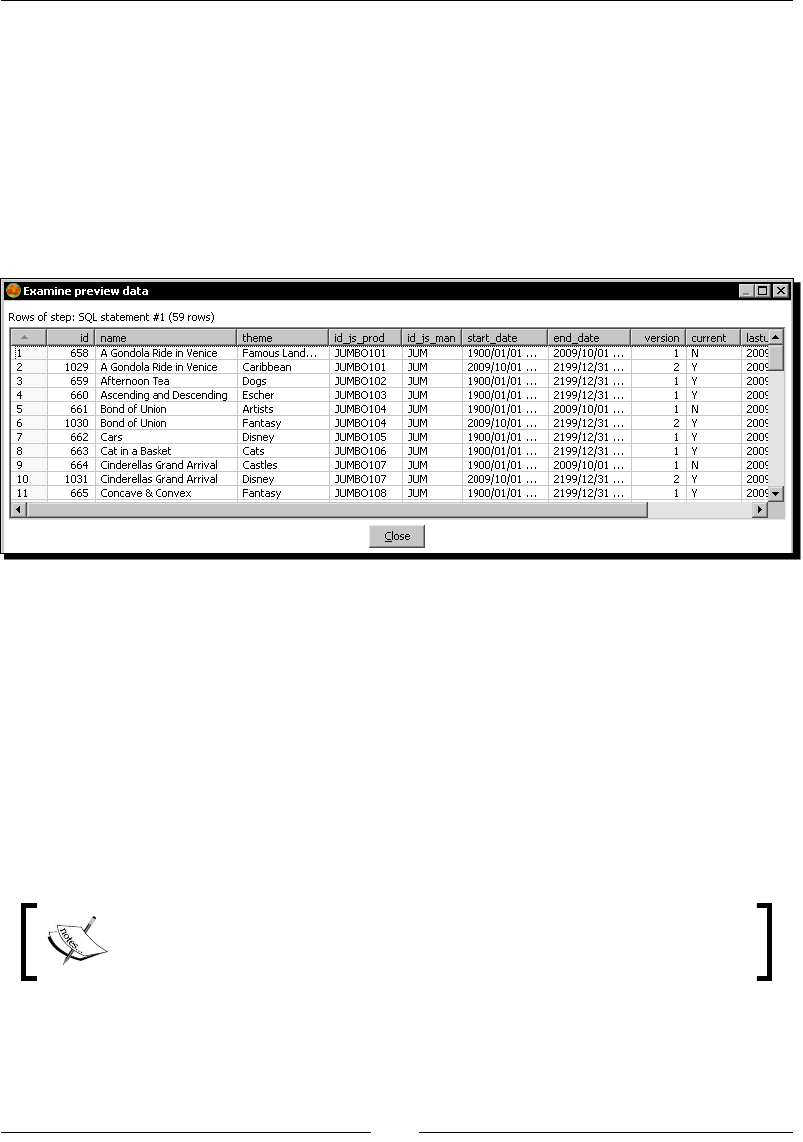

- Time for action – testing the transformation that keeps a history

- of product changes

- Summary

- Chapter 10: Creating Basic Task Flows

- Introducing PDI jobs

- Time for action – creating a simple hello world job

- Receiving arguments and parameters in a job

- Time for action – customizing the hello world file with

- arguments and parameters

- Running jobs from a terminal window

- Time for action – executing the hello world job from a terminal

- window

- Using named parameters and command-line arguments in transformations

- Time for action – calling the hello world transformation with

- fixed arguments and parameters

- Deciding between the use of a command-line argument and a named parameter

- Running job entries under conditions

- Time for action – sending a sales report and warning the

- administrator if something is wrong

- Summary

- Chapter 11: Creating Advanced Transformations and Jobs

- Enhancing your processes with the use of variables

- Time for action – updating a file with news about examinations

- by setting a variable with the name of the file

- Enhancing the design of your processes

- Time for action – generating files with top scores

- Time for action – calculating the top scores with a

- subtransformation

- Time for action – splitting the generation of top scores by

- copying and getting rows

- Time for action – generating the files with top scores by

- nesting jobs

- Iterating jobs and transformations

- Time for action – generating custom files by executing a

- transformation for every input row

- Summary

- Chapter 12: Developing and Implementing a Simple Datamart

- Exploring the sales datamart

- Loading the dimensions

- Time for action – loading dimensions for the sales datamart

- Extending the sales datamart model

- Loading a fact table with aggregated data

- Time for action – loading the sales fact table by looking up

- dimensions

- Getting facts and dimensions together

- Time for action – loading the fact table using a range of dates

- obtained from the command line

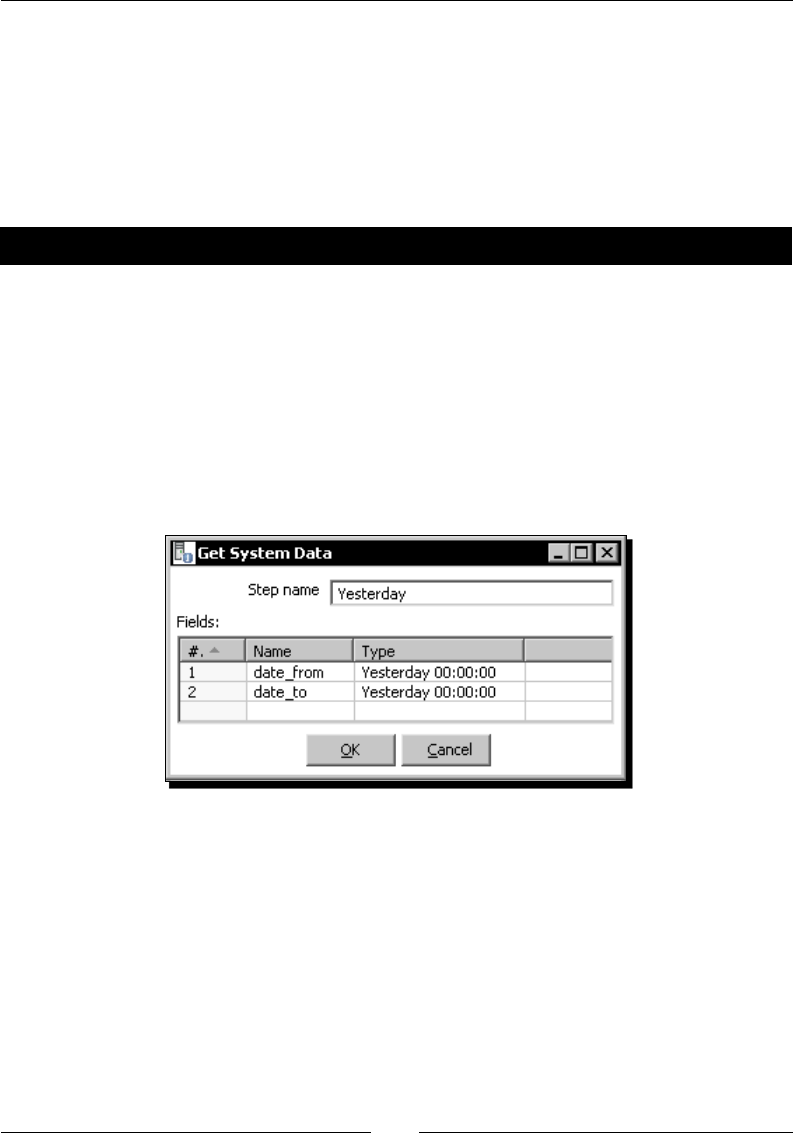

- Time for action – loading the sales star

- Getting rid of administrative tasks

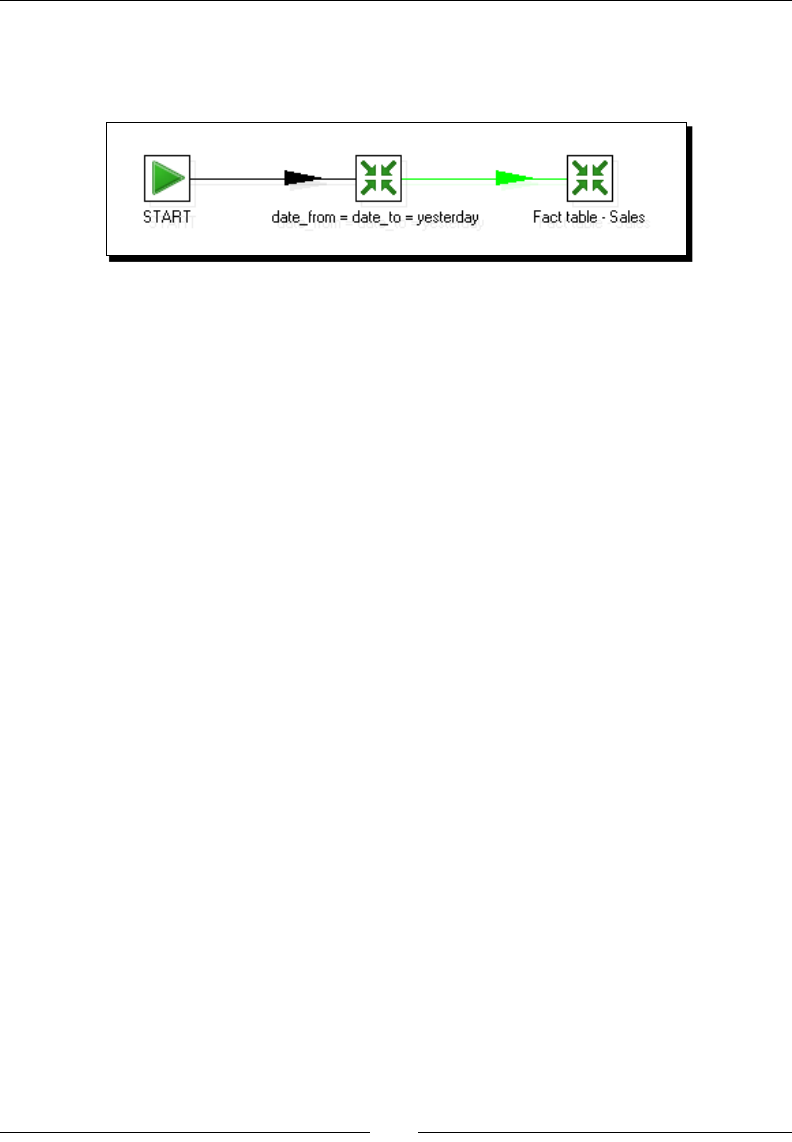

- Time for action – automating the loading of the sales datamart

- Summary

- Chapter 13: Taking it Further

- Appendix A: Working with Repositories

- Creating a repository

- Time for action – creating a PDI repository

- Working with the repository storage system

- Time for action – logging into a repository

- Examining and modifying the contents of a repository with the Repository explorer

- Migrating from a file-based system to a repository-based system and vice-versa

- Summary

- Appendix B: Pan and Kitchen: Launching Transformations and Jobs from the Command Line

- Appendix C: Quick Reference: Steps and Job Entries

- Appendix D: Spoon Shortcuts

- Appendix E: Introducing PDI 4 Features

- Appendix F: Pop Quiz Answers

- Index

Pentaho 3.2 Data Integration

Beginner's Guide

Explore, transform, validate, and integrate your data with ease

María Carina Roldán

BIRMINGHAM - MUMBAI

Pentaho 3.2 Data Integration

Beginner's Guide

Copyright © 2010 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system,

or transmied in any form or by any means, without the prior wrien permission of the

publisher, except in the case of brief quotaons embedded in crical arcles or reviews.

Every eort has been made in the preparaon of this book to ensure the accuracy of the

informaon presented. However, the informaon contained in this book is sold without

warranty, either express or implied. Neither the author, Packt Publishing, nor its dealers or

distributors will be held liable for any damages caused or alleged to be caused directly or

indirectly by this book.

Packt Publishing has endeavored to provide trademark informaon about all the companies

and products menoned in this book by the appropriate use of capitals. However, Packt

Publishing cannot guarantee the accuracy of this informaon.

First published: April 2010

Producon Reference: 1050410

Published by Packt Publishing Ltd.

32 Lincoln Road

Olton

Birmingham, B27 6PA, UK.

ISBN 978-1-847199-54-6

www.packtpub.com

Cover Image by Parag Kadam (paragvkadam@gmail.com)

Credits

Author

María Carina Roldán

Reviewers

Jens Bleuel

Roland Bouman

Ma Casters

James Dixon

Will Gorman

Gretchen Moran

Acquision Editor

Usha Iyer

Development Editor

Reshma Sundaresan

Technical Editors

Gaurav Datar

Rukhsana Khambaa

Copy Editor

Sanchari Mukherjee

Editorial Team Leader

Gagandeep Singh

Project Team Leader

Lata Basantani

Project Coordinator

Poorvi Nair

Proofreader

Sandra Hopper

Indexer

Rekha Nair

Graphics

Geetanjali Sawant

Producon Coordinator

Shantanu Zagade

Cover Work

Shantanu Zagade

Foreword

If we look back at what has happened in the data integraon market over the last 10

years we can see a lot of change. In the rst half of that decade there was an explosion

in the number of data integraon tools and in the second half there was a big wave of

consolidaons. This consolidaon wave put an ever growing amount of data integraon

power in the hands of only a few large billion dollar companies. For any person, company

or project in need of data integraon, this meant either paying large amounts of money or

doing hand-coding of their soluon.

During that exact same period, we saw web servers, programming languages, operang

systems, and even relaonal databases turn into a commodity in the ICT market place. This

was driven among other things by the availability of open source soware such as Apache,

GNU, Linux, MySQL, and many others. For the ICT market, this meant that more services

could be deployed at a lower cost. If you look closely at what has been going on in those last

10 years, you will noce that most companies increasingly deployed more ICT services to

end-users. These services get more and more connected over an ever growing network.

Prey much anything ranging from ny mobile devices to huge cloud-based infrastructure

is being deployed and all those can contain data that is valuable to an organizaon.

The job of any person that needs to integrate all this data is not easy. Complexity of

informaon services technology usually increases exponenally with the number of systems

involved. Because of this, integrang all these systems can be a daunng and scary task that

is never complete. Any piece of code lives in what can be described as a soware ecosystem

that is always in a state of ux. Like in nature, certain ecosystems evolve extremely fast

where others change very slowly over me. However, like in nature all ICT systems change.

What is needed is another wave of commodicaon in the area of data integraon and

business intelligence in general. This is where Pentaho comes in.

Pentaho tries to provide answers to these problems by making the integraon soware

available as open source, accessible, easy to use, and easy to maintain for users and

developers alike. Every release of our soware we try to make things easier, beer, and

faster. However, even if things can be done with nice user interfaces, there are sll a huge

amount of possibilies and opons to choose from.

As the founder of the project I've always liked the fact that Kele users had a lot of choice.

Choice translates into creavity, and creavity oen delivers good soluons that are

comfortable to the person implemenng them. However, this choice can be daunng to any

beginning Kele developer. With thousands of opons to choose from, it can be very hard to

get started.

This is above all others the reason why I'm very happy to see this book come to life. It will

be a great and indispensable help for everyone that is taking steps into the wonderful world

of data integraon with Kele. As such, I hope you see this book as an open invitaon to get

started with Kele in the wonderful world of data integraon.

Ma Casters

Chief Data Integraon at Pentaho

Kele founder

The Kettle Project

Whether there is a migraon to do, an ETL process to run, or a need for massively loading

data into a database, you have several soware tools, ranging from expensive and

sophiscated to free open source and friendly ones, which help you accomplish the task.

Ten years ago, the scenario was clearly dierent. By 2000, Ma Casters, a Belgian business

intelligent consultant, had been working for a while as a datawarehouse architect and

administrator. As such, he was one of quite a number of people who, no maer if the

company they worked for was big or small, had to deal with the dicules that involve

bridging the gap between informaon technology and business needs. What made it even

worse at that me was that ETL tools were prohibively expensive and everything had to

be craed done. The last employer he worked for, didn't think that wring a new ETL tool

would be a good idea. This was one of the movaons for Ma to become an independent

contractor and to start his own company. That was in June 2001.

At the end of that year, he told his wife that he was going to write a new piece of soware

for himself to do ETL tasks. It was going to take up some me le and right in the evenings

and weekends. Surprised, she asked how long it would take you to get it done. He replied

that it would probably take ve years and that he perhaps would have something working

in three.

Working on that started in early 2003. Ma's main goals for wring the soware included

learning about databases, ETL processes, and data warehousing. This would in turn improve

his chances on a job market that was prey volale. Ulmately, it would allow him to work

full me on the soware.

Another important goal was to understand what the tool had to do. Ma wanted a scalable

and parallel tool, and wanted to isolate rows of data as much as possible.

The last but not least goal was to pick the right technology that would support the tool. The

rst idea was to build it on top of KDE, the popular Unix desktop environment. Trolltech, the

people behind Qt, the core UI library of KDE, had released database plans to create drivers

for popular databases. However, the lack of decent drivers for those databases drove Ma

to change plans and use Java. He picked Java because he had some prior experience as he

had wrien a Japanese Chess (Shogi) database program when Java 1.0 was released. To

Sun's credit, this soware sll runs and is available at http://ibridge.be/shogi/.

Aer a year of development, the tool was capable of reading text les, reading from

databases, wring to databases and it was very exible. The experience with Java was not

100% posive though. The code had grown unstructured, crashes occurred all too oen, and

it was hard to get something going with the Java graphic library used at that moment, the

Abstract Window Toolkit (AWT); it looked bad and it was slow.

As for the library, Ma decided to start using the newly released Standard Widget Toolkit

(SWT), which helped solve part of the problem. As for the rest, Kele was a complete mess.

It was me to ask for help. The help came in hands of Wim De Clercq, a senior enterprise

Java architect, co-owner of Ixor (www.ixor.be) and also friend of Ma. At various intervals

over the next few years, Wim involved himself in the project, giving advices to Ma about

good pracces in Java programming. Listening to that advice meant performing massive

amounts of code changes. As a consequence, it was not unusual to spend weekends doing

nothing but refactoring code and xing thousands of errors because of that. But, bit by bit,

things kept going in the right direcon.

At that same me, Ma also showed the results to his peers, colleagues, and other senior

BI consultants to hear what they thought of Kele. That was how he got in touch with the

Flemish Trac Centre (www.verkeerscentrum.be/verkeersinfo/kaart) where billions

of rows of data had to be integrated from thousands of data sources all over Belgium. All of

a sudden, he was being paid to deploy and improve Kele to handle that job. The diversity of

test cases at the trac center helped to improve Kele dramacally. That was somewhere in

2004 and Kele was by its version 1.2.

While working at Flemish, Ma also posted messages on Javaforge (www.javaforge.com)

to let people know they could download a free copy of Kele for their own use. He got a

few reacons. Despite some of them being remarkably negave, most were posive. The

most interesng response came from a nice guy called Jens Bleuel in Germany who asked if

it was possible to integrate third-party soware into Kele. In his specic case, he needed a

connector to link Kele with the German SAP soware (www.sap.com). Kele didn't have a

plugin architecture, so Jens' queson made Ma think about a plugin system, and that was

the main movaon for developing version 2.0.

For various reasons including the birth of Ma's son Sam and a lot of consultancy work,

it took around a year to release Kele version 2.0. It was a fairly complete release with

advanced support for slowly changing dimensions and junk dimensions (Chapter 9 explains

those concepts), ability to connect to thirteen dierent databases, and the most important

fact being support for plugins. Ma contacted Jens to let him know the news and Jens was

really interested. It was a very memorable moment for Ma and Jens as it took them only a

few hours to get a new plugin going that read data from an SAP/R3 server. There was a lot

of excitement, and they agreed to start promong the sales of Kele from the Kettle.be

website and from Prorao (www.proratio.de), the company Jens worked for.

Those were days of improvements, requests, people interested in the project. However, it

became too much to handle. Doing development and sales all by themselves was no fun

aer a while. As such, Ma thought about open sourcing Kele early in 2005 and by late

summer he made his decision. Jens and Prorao didn't mind and the decision was nal.

When they nally open sourced Kele on December 2005, the response was massive. The

downloadable package put up on Javaforge got downloaded around 35000 mes during rst

week only. The news got spread all over the world prey quickly.

What followed was a ood of messages, both private and on the forum. At its peak in March

2006, Ma got over 300 messages a day concerning Kele.

In no me, he was answering quesons like crazy, allowing people to join the development

team and working as a consultant at the same me. Added to this, the birth of his daughter

Hannelore in February 2006 was too much to deal with.

Fortunately, good mes came. While Ma was trying to handle all that, a discussion was

taking place at the Pentaho forum (http://forums.pentaho.org/) concerning the ETL

tool that Pentaho should support. They had selected Enhydra Octopus, a Java-based ETL

soware, but they didn't have a strong reliance on a specic tool.

While Jens was evaluang all sorts of open source BI packages, he came across that thread.

Ma replied immediately persuading people at Pentaho to consider including Kele. And

he must be convincing because the answer came quickly and was posive. James Dixon,

Pentaho founder and CTO, opened Kele the possibility to be the premier and only ETL

tool supported by Pentaho. Later on, Ma came in touch with one of the other Pentaho

founders, Richard Daley, who oered him a job. That allowed Ma to focus full-me on

Kele. Four years later, he's sll happily working for Pentaho as chief architect for data

integraon, doing the best eort to deliver Kele 4.0. Jens Bleuel, who collaborated with

Ma since the early versions, is now also part of the Pentaho team.

About the Author

María Carina was born in a small town in the Patagonia region in Argenna. She earned

her Bachelor degree in Computer Science at UNLP in La Plata and then moved to Buenos

Aires where she has lived since 1994 working in IT.

She has been working as a BI consultant for the last 10 years. At the beginning she worked

with Cognos suite. However, over the last three years, she has been dedicated, full me, to

developing Pentaho BI soluons both for local and several Lan-American companies, as well

as for a French automove company in the last months.

She is also an acve contributor to the Pentaho community.

At present, she lives in Buenos Aires, Argenna, with her husband Adrián and children

Camila and Nicolás.

Wring my rst book in a foreign language and working on a full me job

at the same me, not to menon the upbringing of two small kids, was

denitely a big challenge. Now I can tell that it's not impossible.

I dedicate this book to my husband and kids; I'd like to thank them for all

their support and tolerance over the last year. I'd also like to thank my

colleagues and friends who gave me encouraging words throughout the

wring process.

Special thanks to the people at Packt; working with them has been

really pleasant.

I'd also like to thank the Pentaho community and developers for making

Kele the incredible tool it is. Thanks to the technical reviewers who,

with their very crical eye, contributed to make this a book suited to

the audience.

Finally, I'd like to thank Ma Casters who, despite his busy schedule, was

willing to help me from the rst moment he knew about this book.

About the Reviewers

Jens Bleuel is a Senior Consultant and Engineer at Pentaho. He is also working as a project

leader, trainer, and product specialist in the services and support department. Before he

joined Pentaho in mid 2007, he was soware developer and project leader, and his main

business was Data Warehousing and the architecture along with designing and developing of

user friendly tools. He studied business economics, was on a grammar school for electronics,

and has been programming in a wide area of environments such as Assembler, C, Visual

Basic, Delphi, .NET, and these days mainly in Java. His customer focus is on the wholesale

market and consumer goods industries. Jens is 40 years old and lives with his wife and two

boys in Mainz, Germany (near the nice Rhine river). In his spare me, he pracces Tai-Chi,

Qigong, and photography.

Roland Bouman has been working in the IT industry since 1998, mostly as a database and

web applicaon developer. He has also worked for MySQL AB (later Sun Microsystems) as

cercaon developer and as curriculum developer.

Roland mainly focuses on open source web technology, databases, and Business Intelligence.

He's an acve member of the MySQL and Pentaho communies and can oen be found

speaking at worldwide conferences and events such as the MySQL user conference, the

O'Reilly Open Source conference (OSCON), and at Pentaho community events.

Roland is co-author of the MySQL 5.1 Cluster DBA Cercaon Study Guide (Vervante,

ISBN: 595352502) and Pentaho Soluons: Business Intelligence and Data Warehousing with

Pentaho and MySQL (Wiley, ISBN: 978-0-470-48432-6). He also writes on a regular basis for

the Dutch Database Magazine (DBM).

Roland is @rolandbouman on Twier and maintains a blog at

http://rpbouman.blogspot.com/.

Ma Casters has been an independent senior BI consultant for almost two decades. In that

period he led, designed, and implemented numerous data warehouses and BI soluons for

large and small companies. In that capacity, he always had the need for ETL in some form

or another. Almost out of pure necessity, he has been busy wring the ETL tool called Kele

(a.k.a. Pentaho Data Integraon) for the past eight years. First, he developed the tool mostly

on his own. Since the end of 2005 when Kele was declared an open source technology,

development took place with the help of a large community.

Since the Kele project was acquired by Pentaho in early 2006, he has been Chief of Data

Integraon at Pentaho as the lead architect, head of development, and spokesperson for the

Kele community.

I would like to personally thank the complete community for their help

in making Kele the success it is today. In parcular, I would like to thank

Maria for taking the me to write this nice book as well as the many

arcles on the Pentaho wiki (for example, the Kele tutorials), and her

appreciated parcipaon on the forum. Many thanks also go to my

employer Pentaho, for their large investment in open source BI in

general and Kele in parcular.

James Dixon is the Chief Geek and one of the co-founders of Pentaho Corporaon—the

leading commercial open source Business Intelligence company. He has worked in the

business intelligence market since graduang in 1992 from Southampton University with a

degree in Computer Science. He has served as Soware Engineer, Development Manager,

Engineering VP, and CTO at mulple business intelligence soware companies. He regularly

uses Pentaho Data Integraon for internal projects and was involved in the architectural

design of PDI V3.0.

He lives in Orlando, Florida, with his wife Tami and son Samuel.

I would like to thank my co-founders, my parents, and my wife Tami for all

their support and tolerance of my odd working hours.

I would like to thank my son Samuel for all the opportunies he gives me to

prove I'm not as clever as I think I am.

Will Gorman is an Engineering Team Lead at Pentaho. He works on a variety of Pentaho's

products, including Reporng, Analysis, Dashboards, Metadata, and the BI Server. Will

started his career at GE Research and earned his Masters degree in Computer Science at

Rensselaer Polytechnic Instute in Troy, New York. Will is the author of Pentaho Reporng

3.5 for Java Developers (ISBN: 3193), published by Packt Publishing.

Gretchen Moran is a graduate of University of Wisconsin – Stevens Point with a Bachelor's

degree in Computer Informaon Systems with a minor in Data Communicaons. Gretchen

began her career as a corporate data warehouse developer in the insurance industry and

joined Arbor Soware/Hyperion Soluons in 1999 as a commercial developer for the

Hyperion Analyzer and Web Analycs team. Gretchen has been a key player with Pentaho

Corporaon since its incepon in 2004. As Community Leader and core developer, Gretchen

managed the explosive growth of Pentaho's open source community for her rst 2 years

with the company. Gretchen has contributed to many of the Pentaho projects, including the

Pentaho BI Server, Pentaho Data Integraon, Pentaho Metadata Editor, Pentaho Reporng,

Pentaho Charng, and others.

Thanks Doug, Anthony, Isabella and Baby Jack for giving me my favorite

challenges and crowning achievements—being a wife and mom.

Table of Contents

Preface 1

Chapter 1: Geng started with Pentaho Data Integraon 7

Pentaho Data Integraon and Pentaho BI Suite 7

Exploring the Pentaho Demo 9

Pentaho Data Integraon 9

Using PDI in real world scenarios 11

Loading data warehouses or data marts 11

Integrang data 12

Data cleansing 12

Migrang informaon 13

Exporng data 13

Integrang PDI using Pentaho BI 13

Installing PDI 14

Time for acon – installing PDI 14

Launching the PDI graphical designer: Spoon 15

Time for acon – starng and customizing Spoon 15

Spoon 18

Seng preferences in the Opons window 18

Storing transformaons and jobs in a repository 19

Creang your rst transformaon 20

Time for acon – creang a hello world transformaon 20

Direcng the Kele engine with transformaons 25

Exploring the Spoon interface 26

Running and previewing the transformaon 27

Time for acon – running and previewing the

hello_world transformaon 27

Installing MySQL 29

Time for acon – installing MySQL on Windows 29

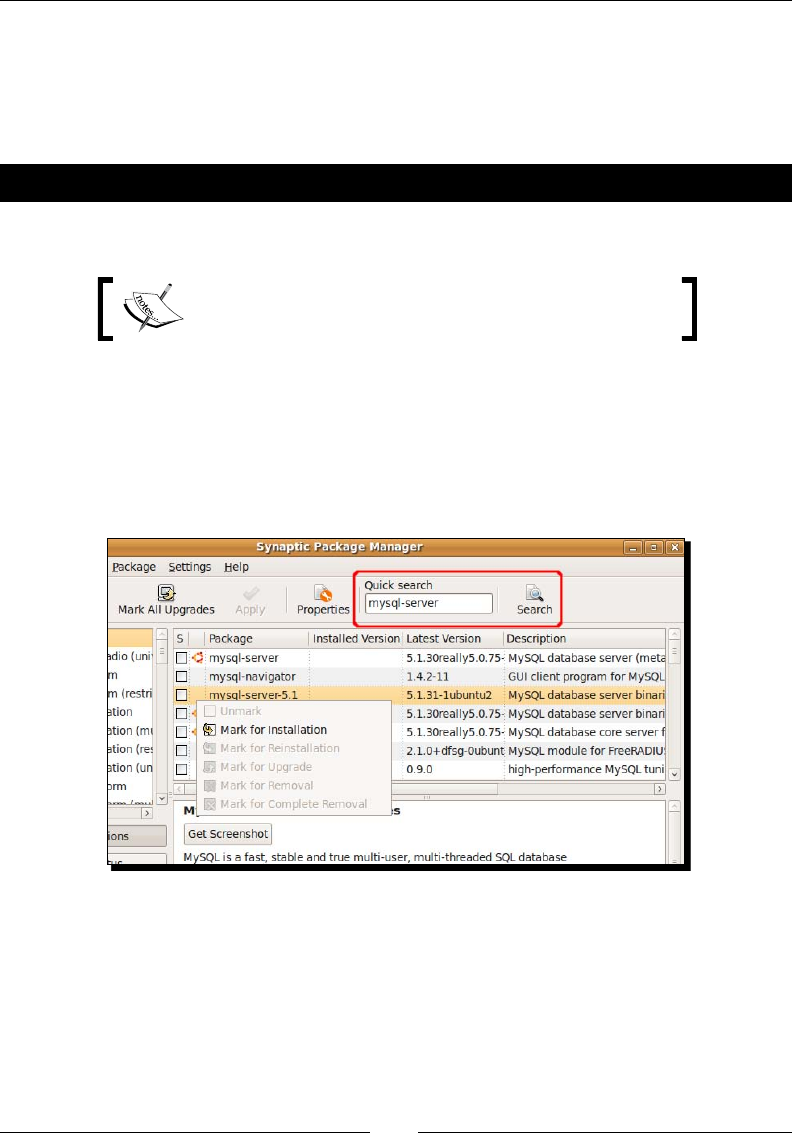

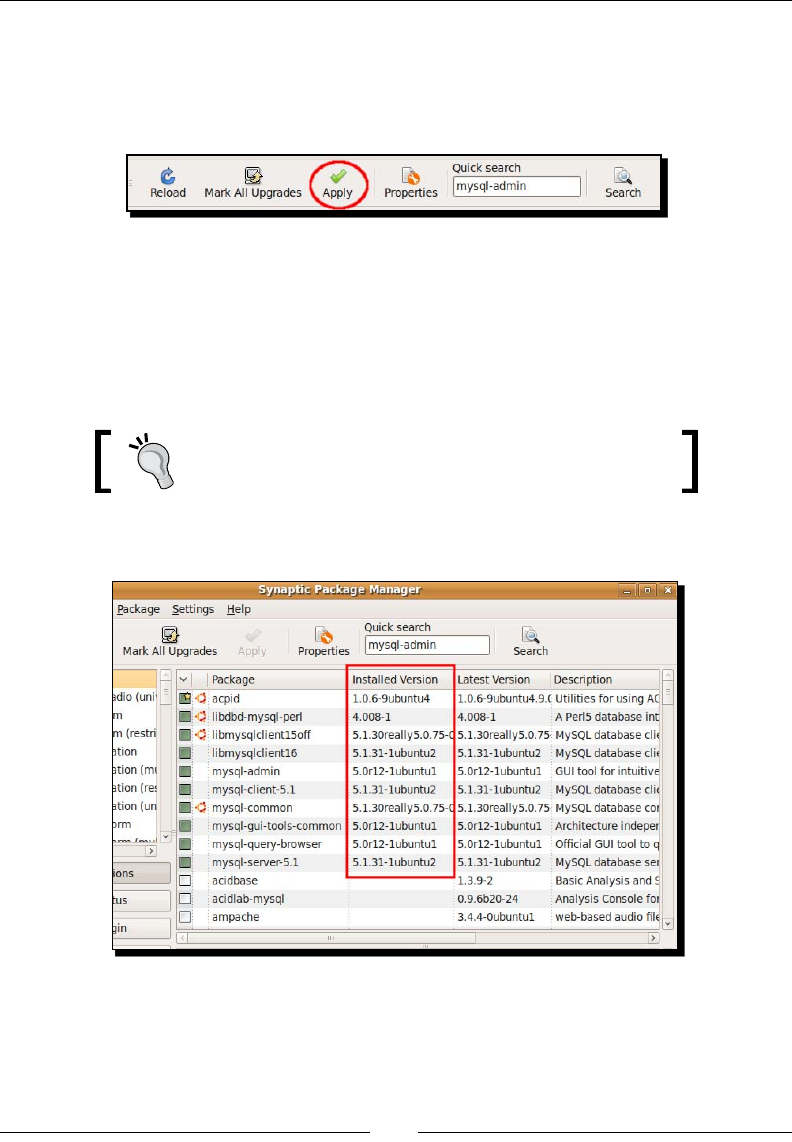

Time for acon – installing MySQL on Ubuntu 32

Summary 34

Table of Contents

[ ii ]

Chapter 2: Geng Started with Transformaons 35

Reading data from les 35

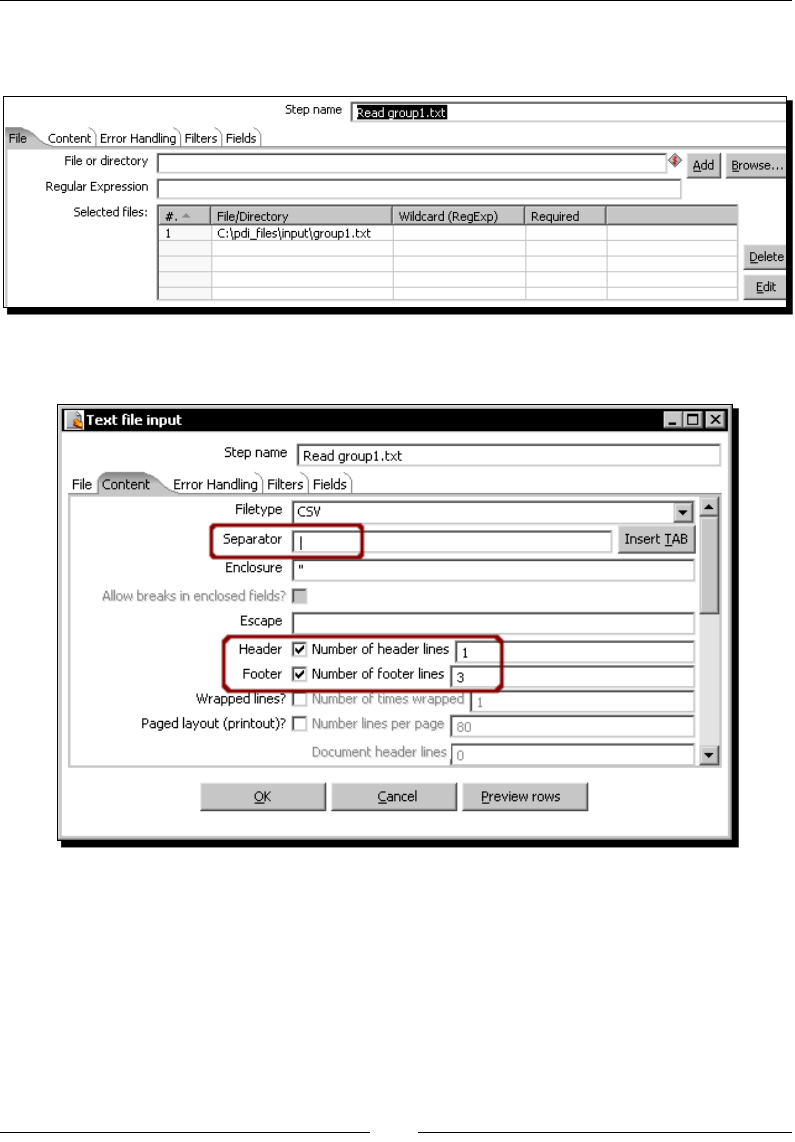

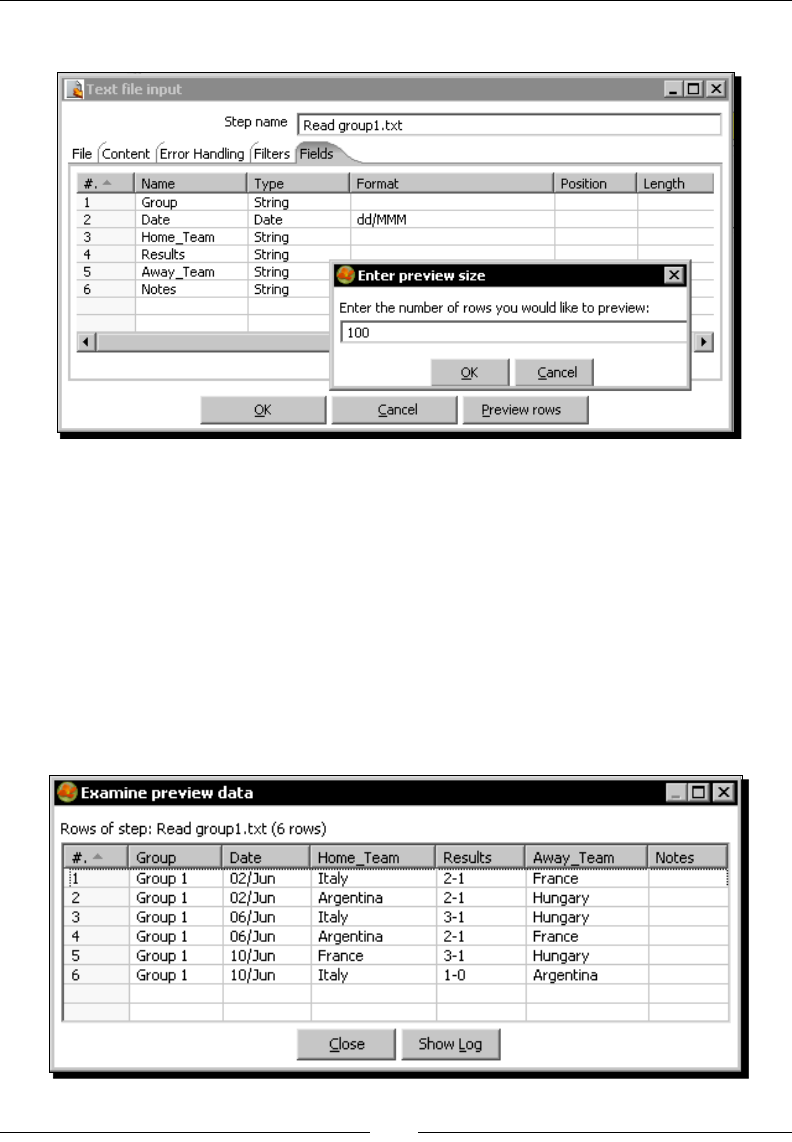

Time for acon – reading results of football matches from les 36

Input les 41

Input steps 41

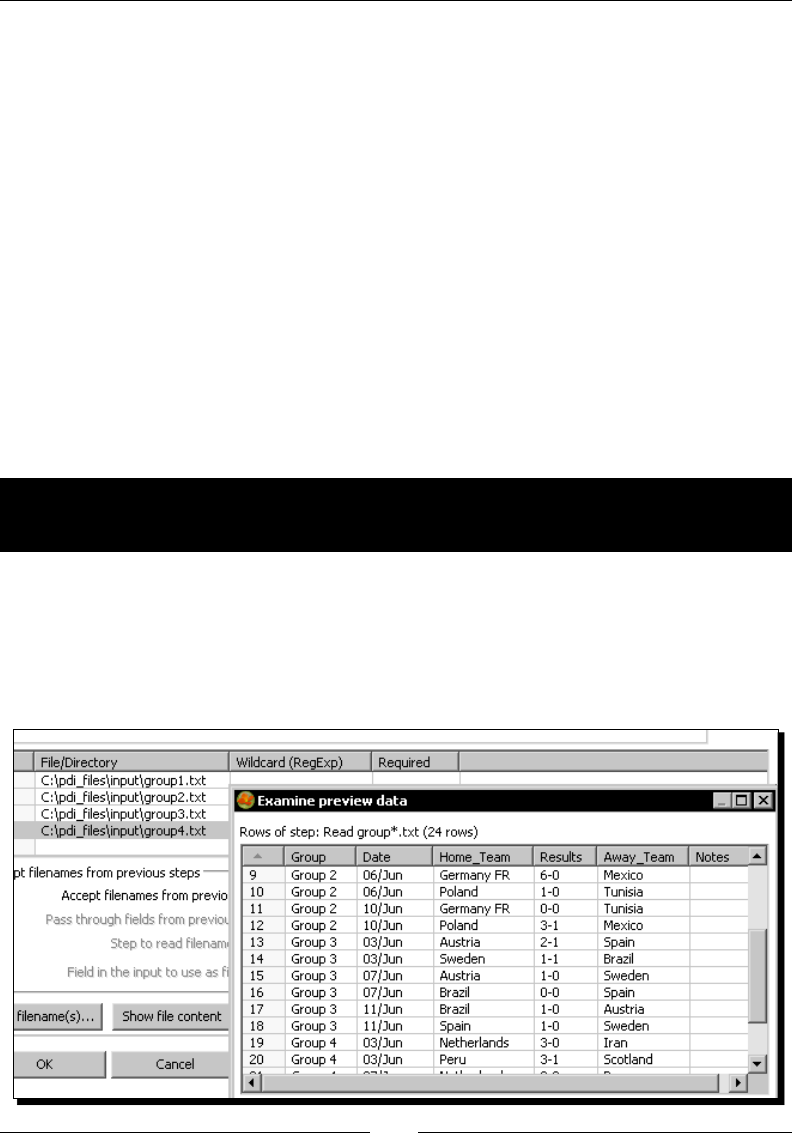

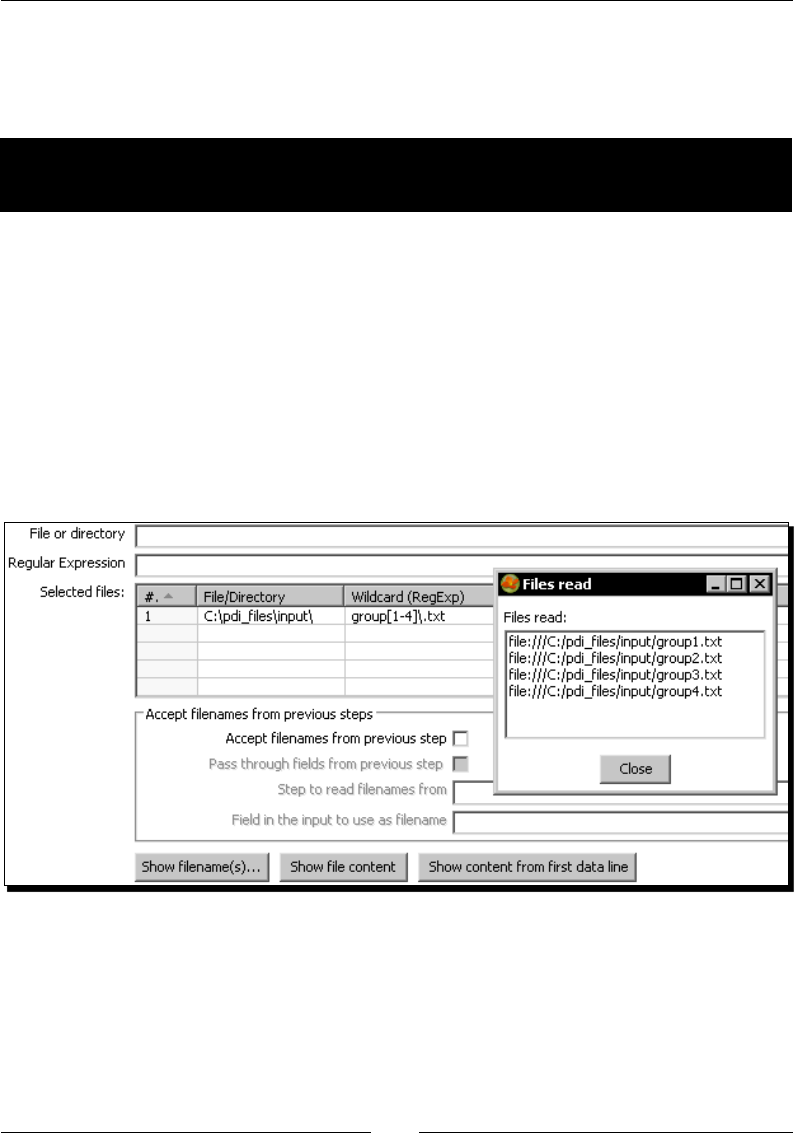

Reading several les at once 42

Time for acon – reading all your les at a me using a single

Text le input step 42

Time for acon – reading all your les at a me using a single

Text le input step and regular expressions 43

Regular expressions 44

Grids 46

Sending data to les 47

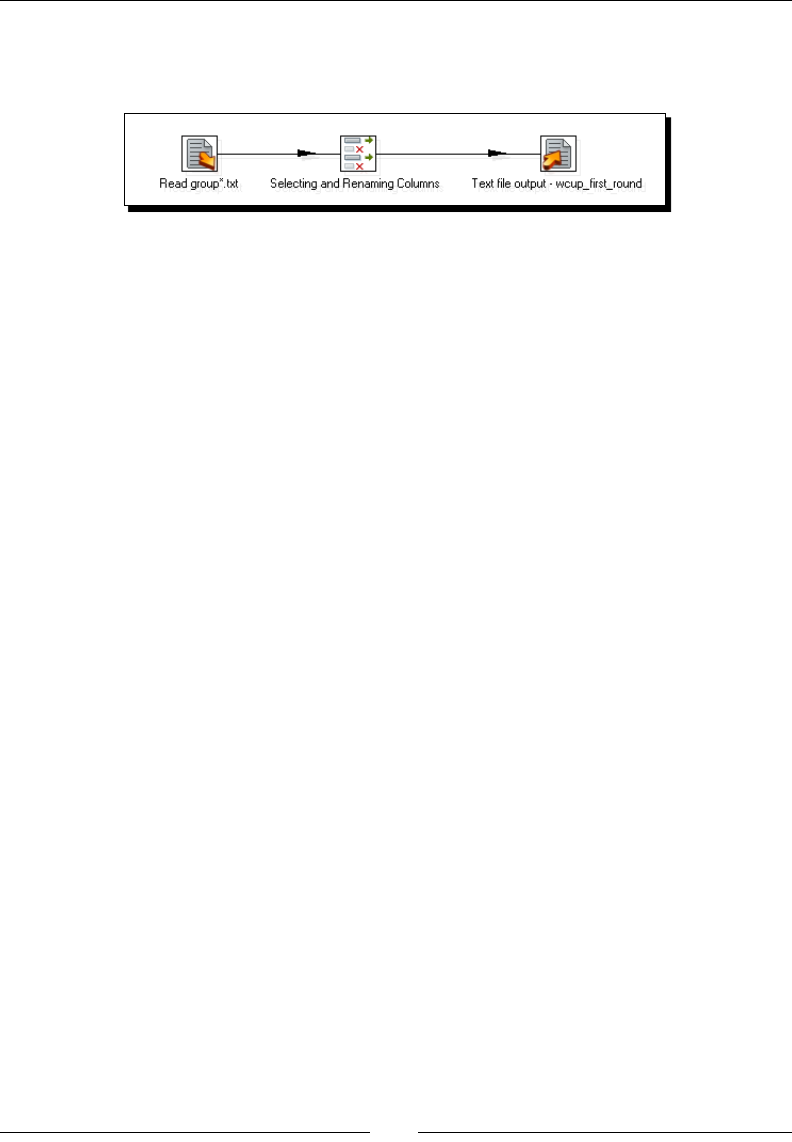

Time for acon – sending the results of matches to a plain le 47

Output les 49

Output steps 50

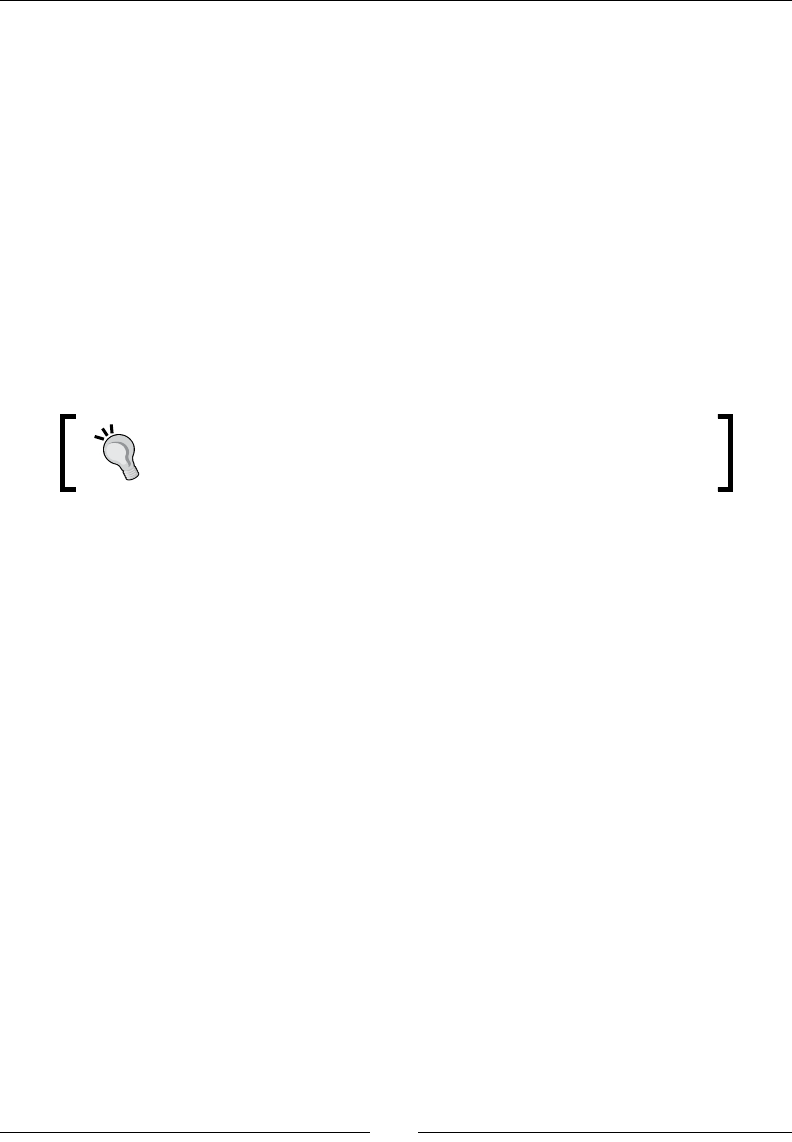

Some data denions 50

Rowset 50

Streams 51

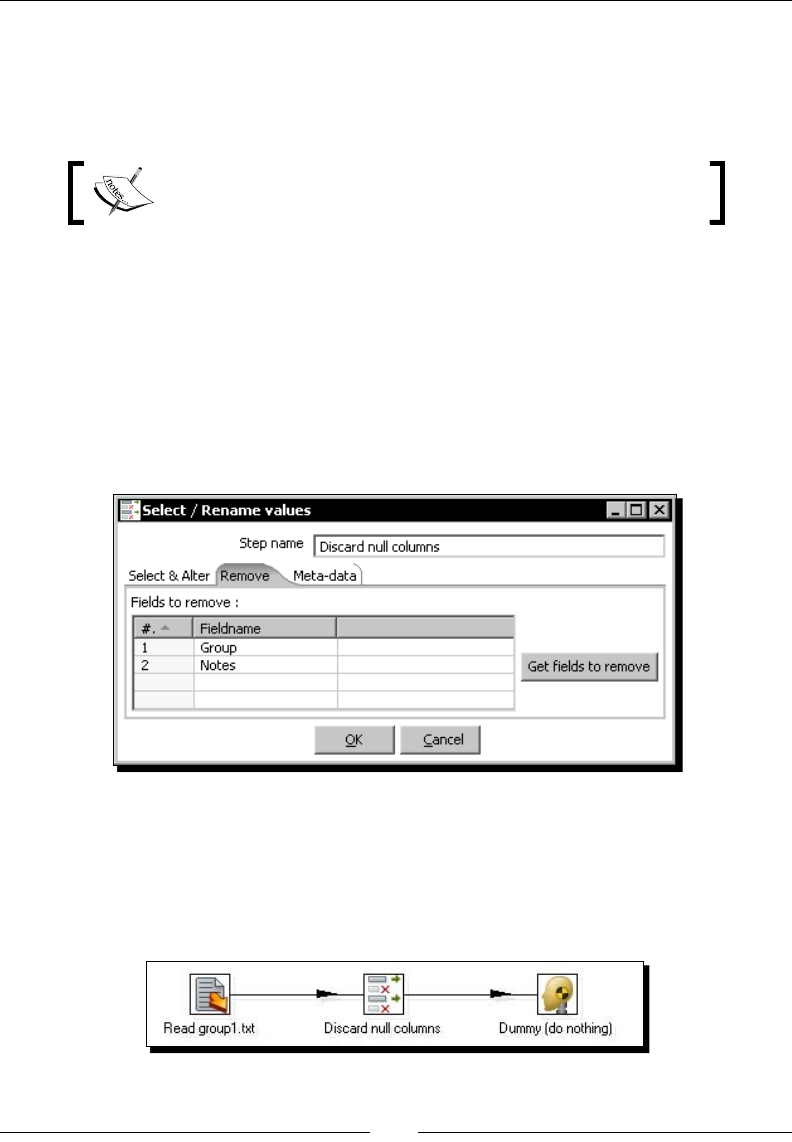

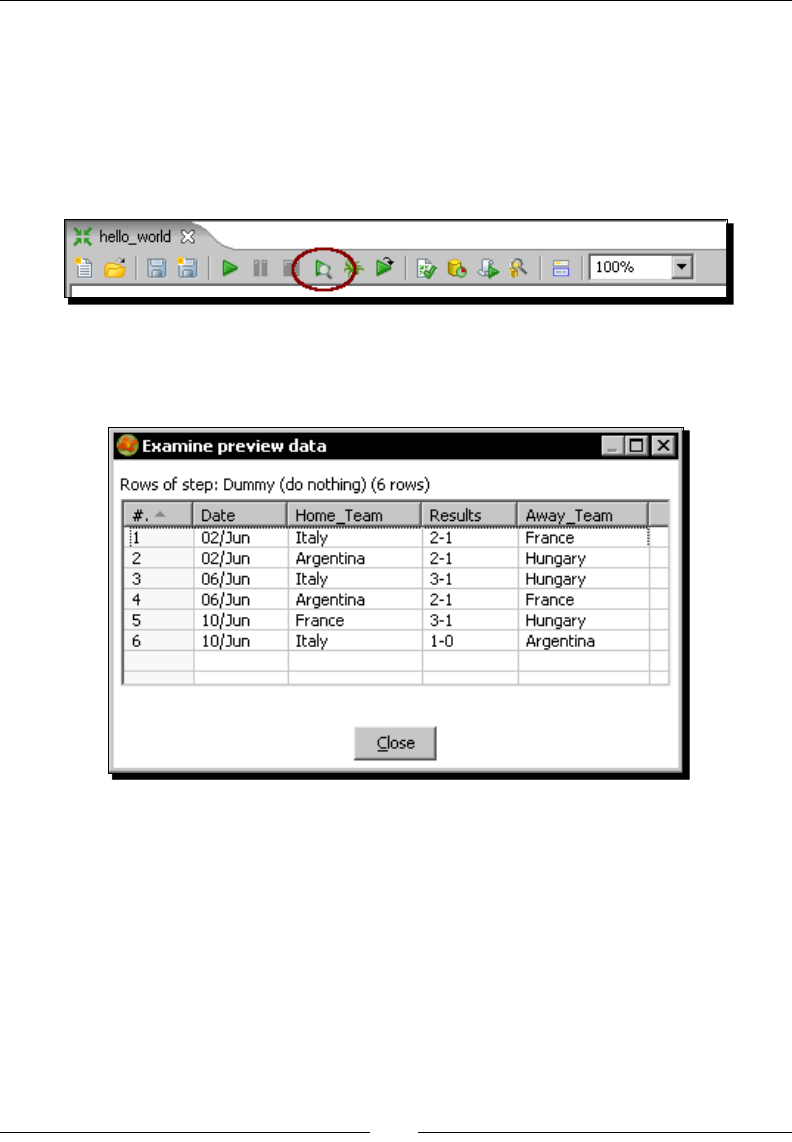

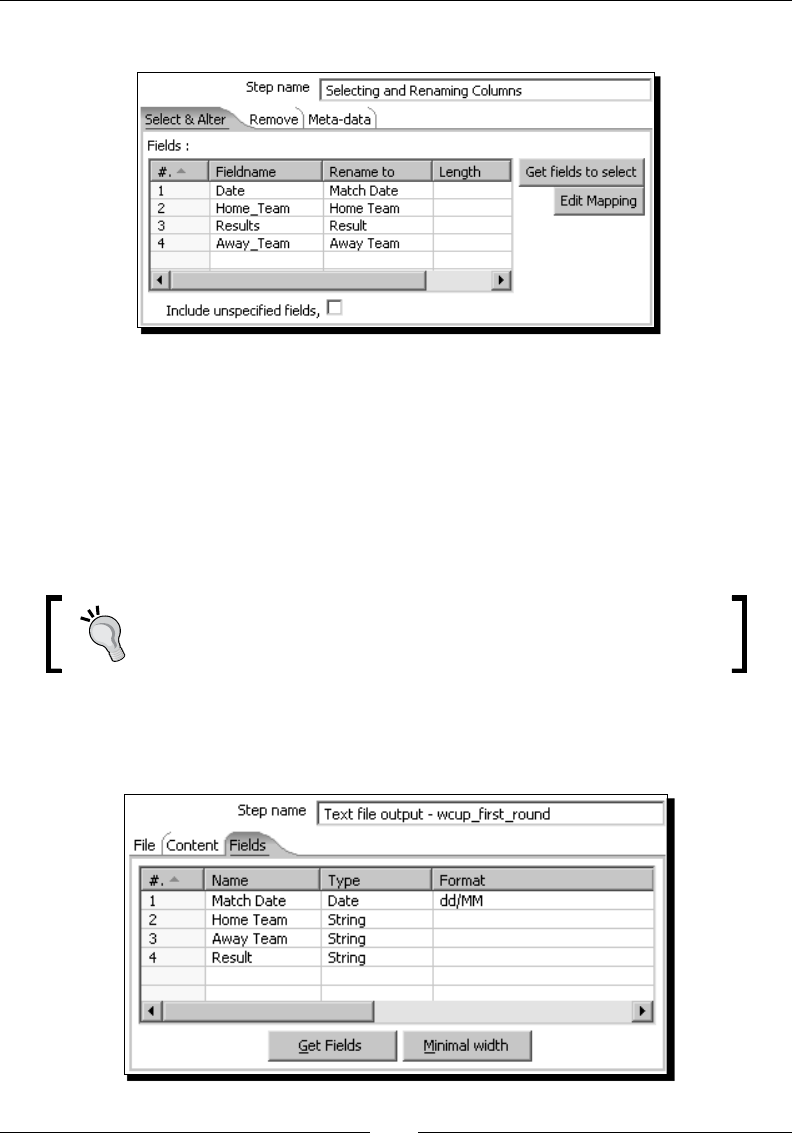

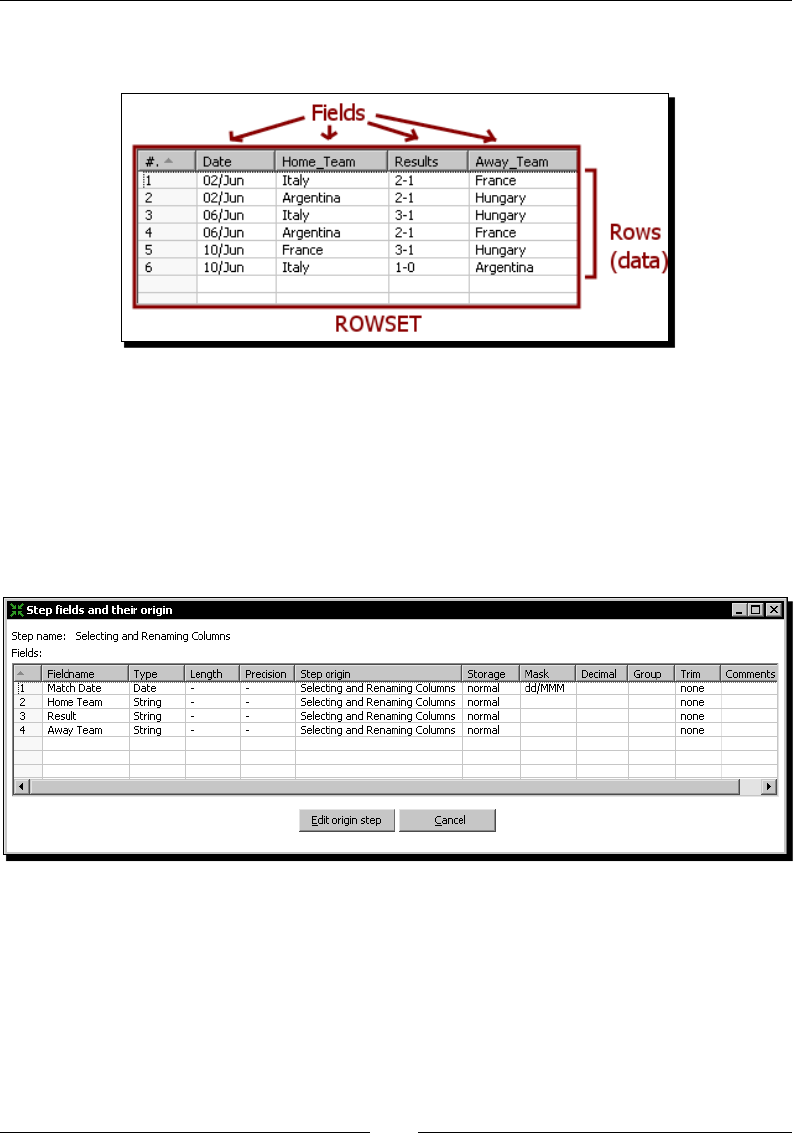

The Select values step 52

Geng system informaon 52

Time for acon – updang a le with news about examinaons 53

Geng informaon by using Get System Info step 57

Data types 58

Date elds 58

Numeric elds 59

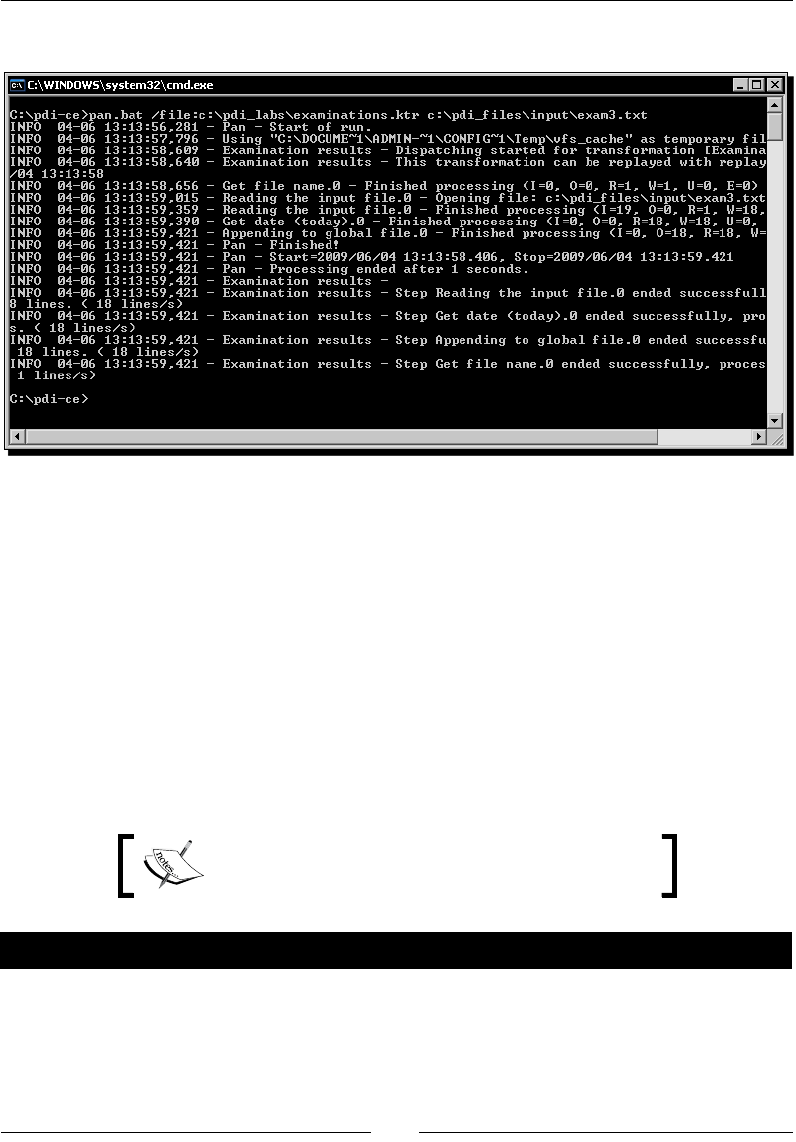

Running transformaons from a terminal window 60

Time for acon – running the examinaon transformaon from

a terminal window 60

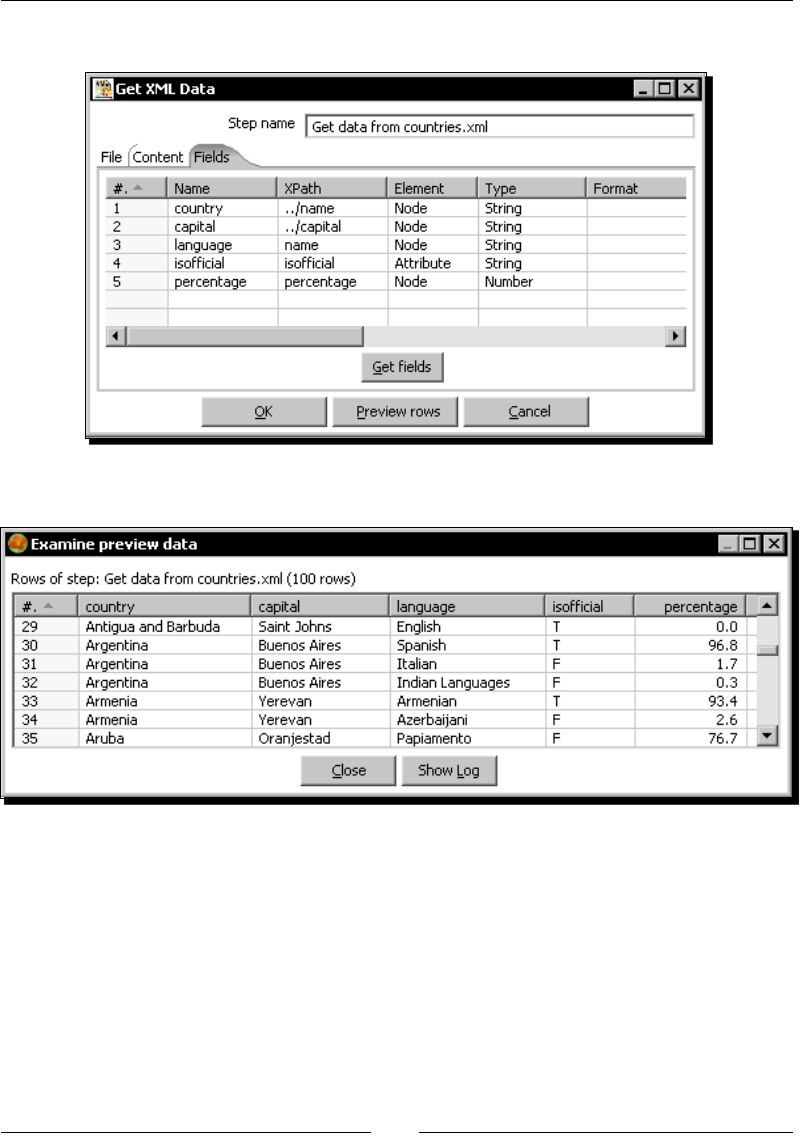

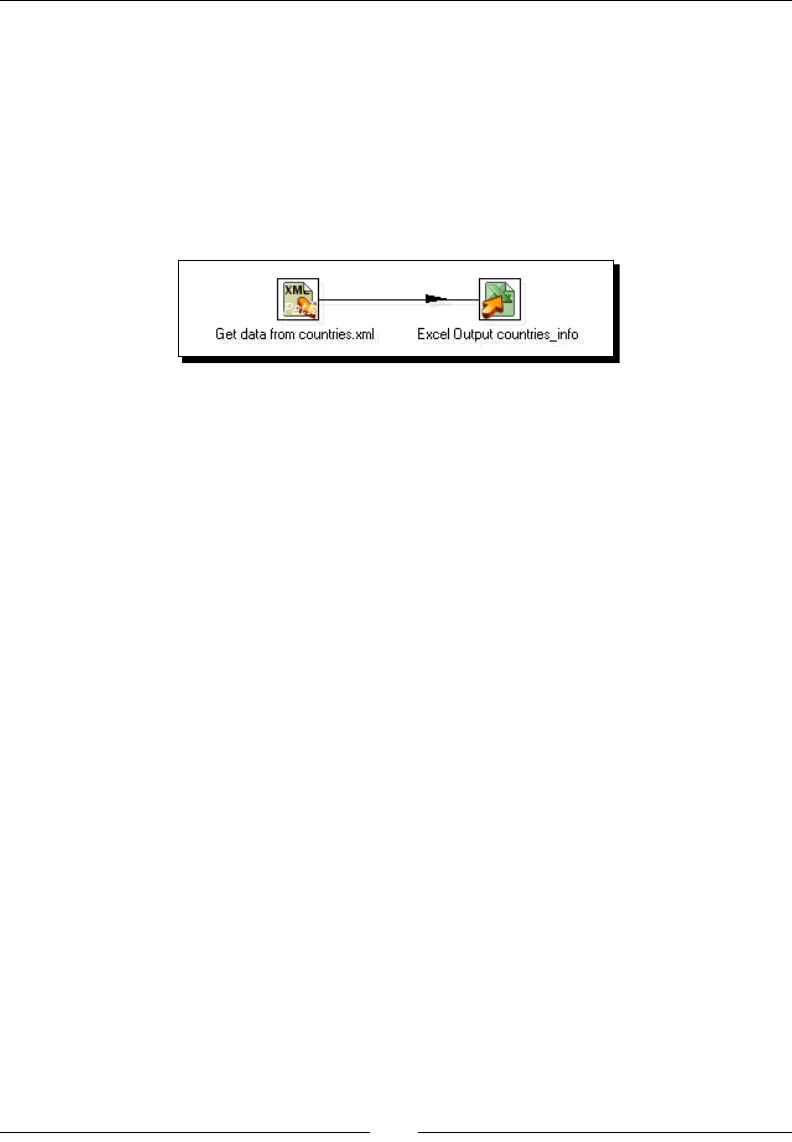

XML les 62

Time for acon – geng data from an XML le with informaon

about countries 62

What is XML 67

PDI transformaon les 68

Geng data from XML les 68

XPath 68

Conguring the Get data from XML step 69

Kele variables 70

How and when you can use variables 70

Summary 72

Table of Contents

[ iii ]

Chapter 3: Basic data manipulaon 73

Basic calculaons 73

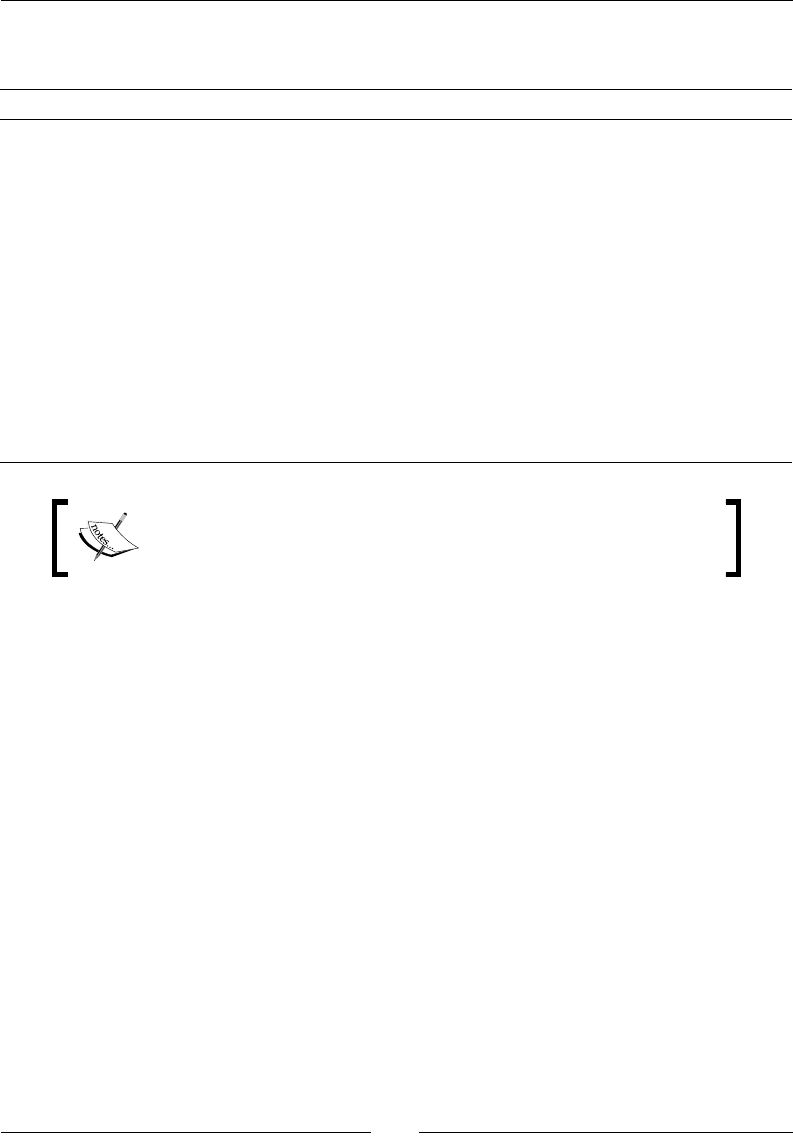

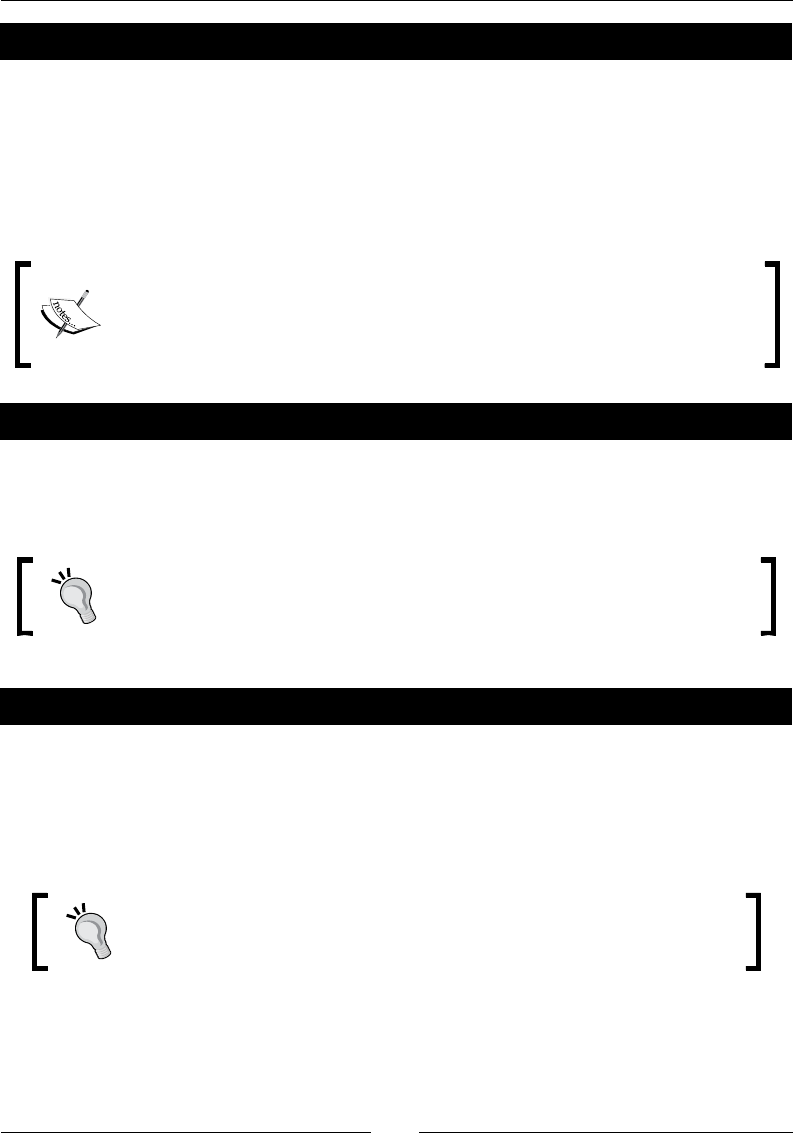

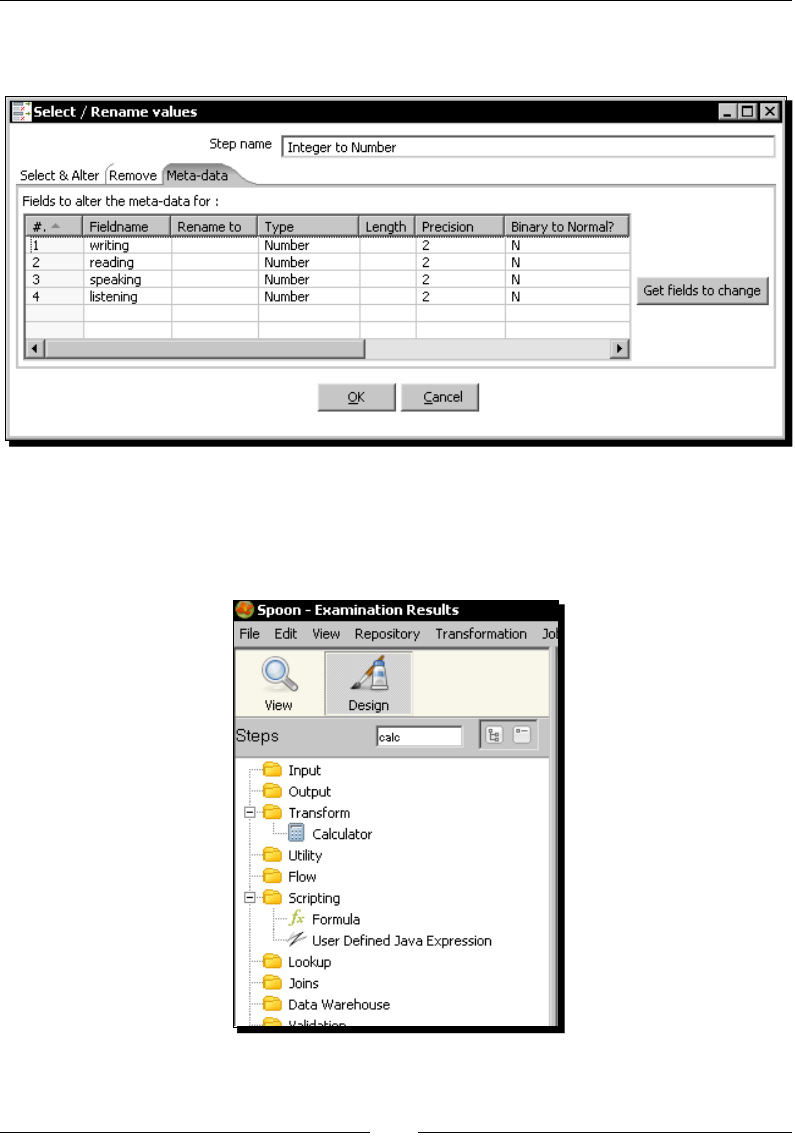

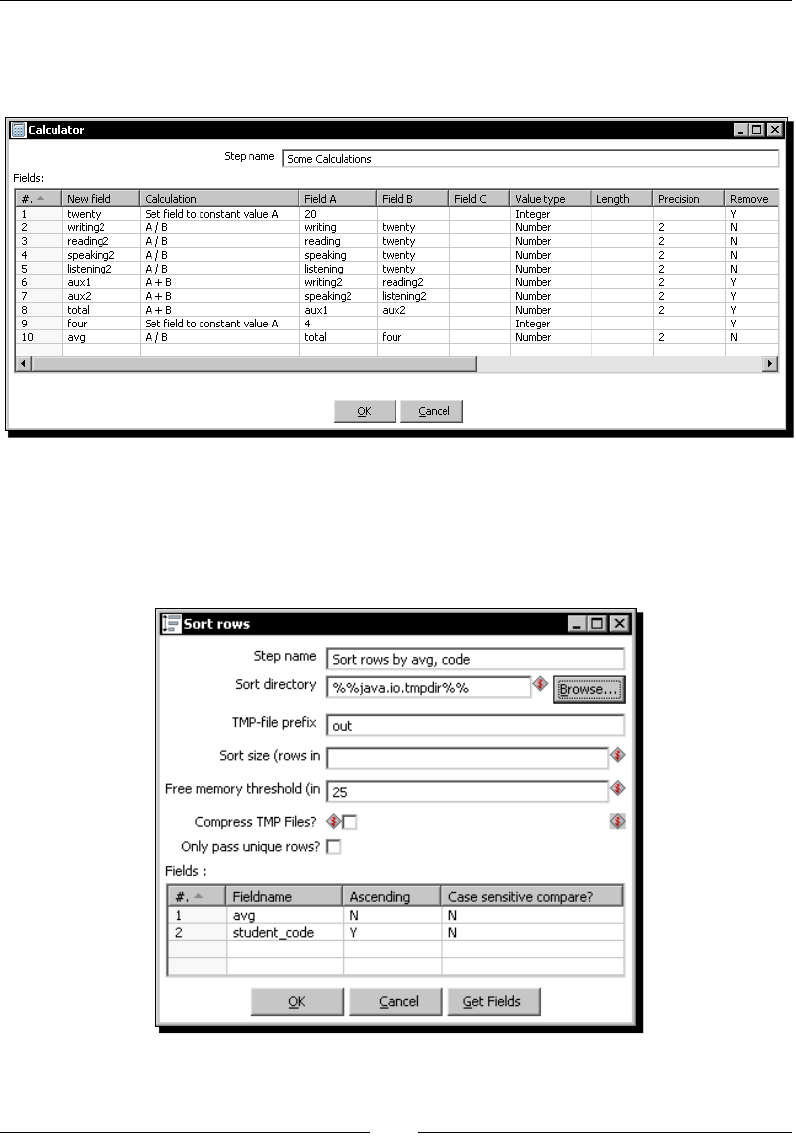

Time for acon – reviewing examinaons by using the Calculator step 74

Adding or modifying elds by using dierent PDI steps 82

The Calculator step 83

The Formula step 84

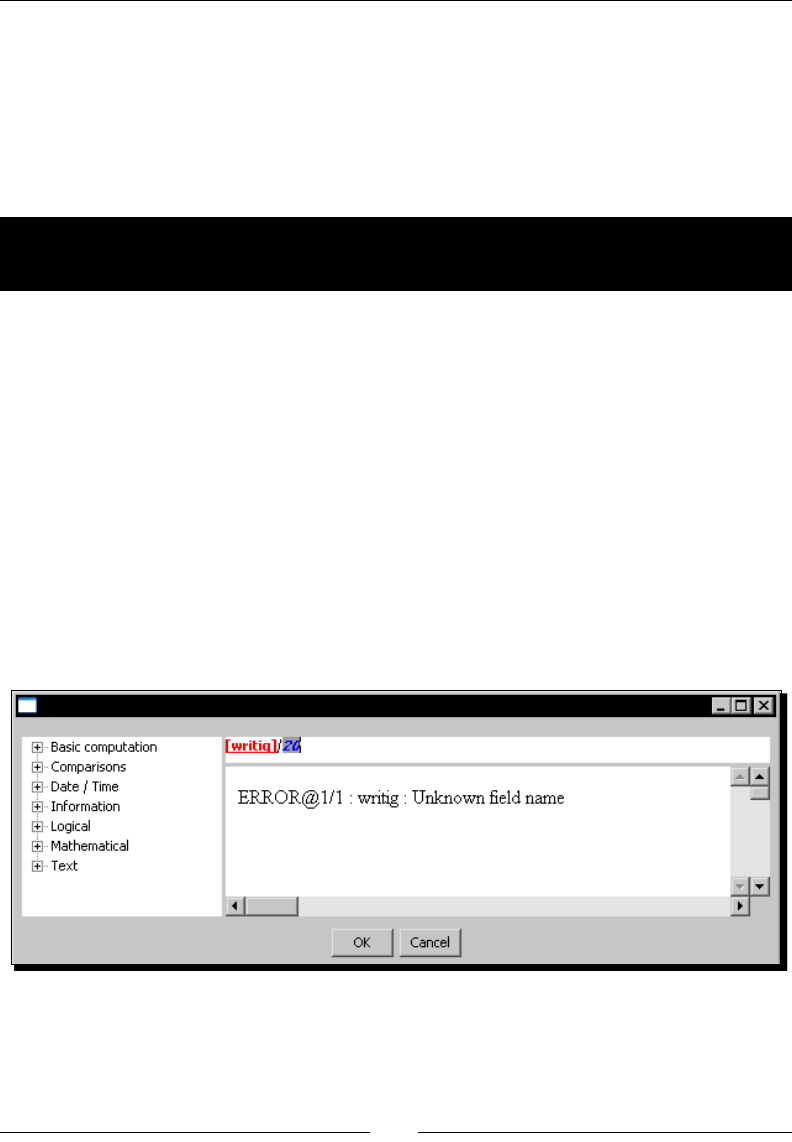

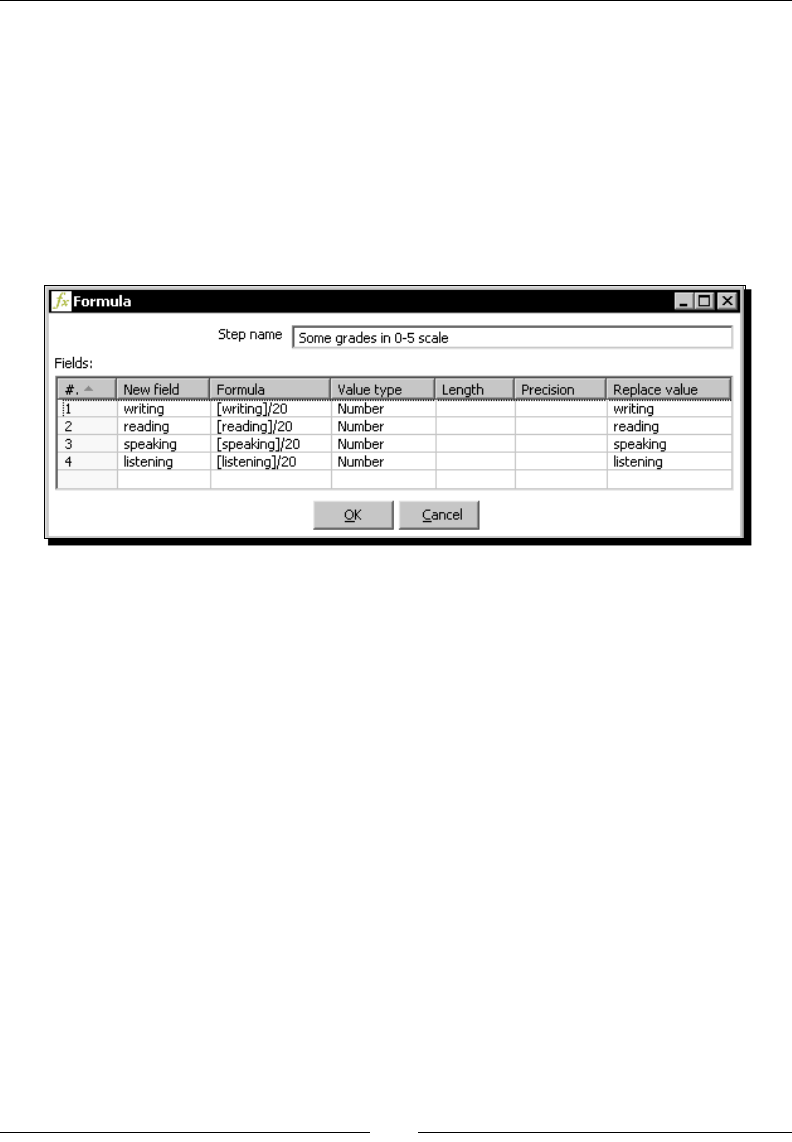

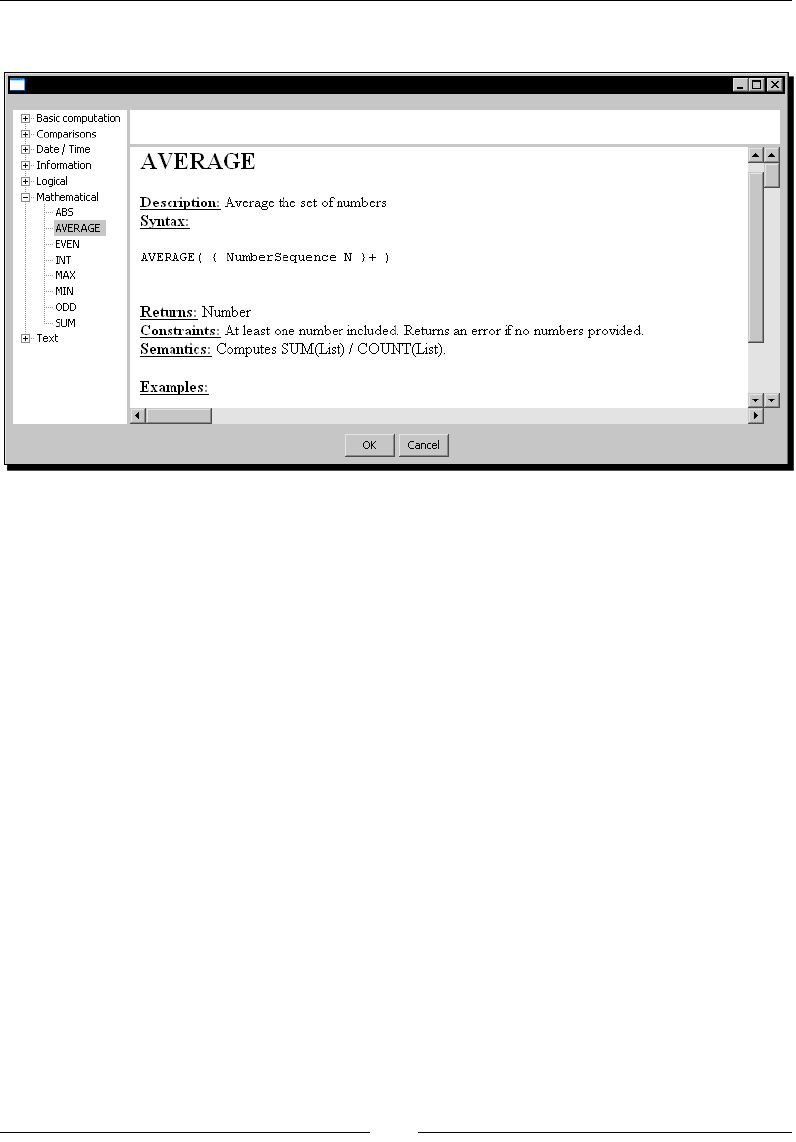

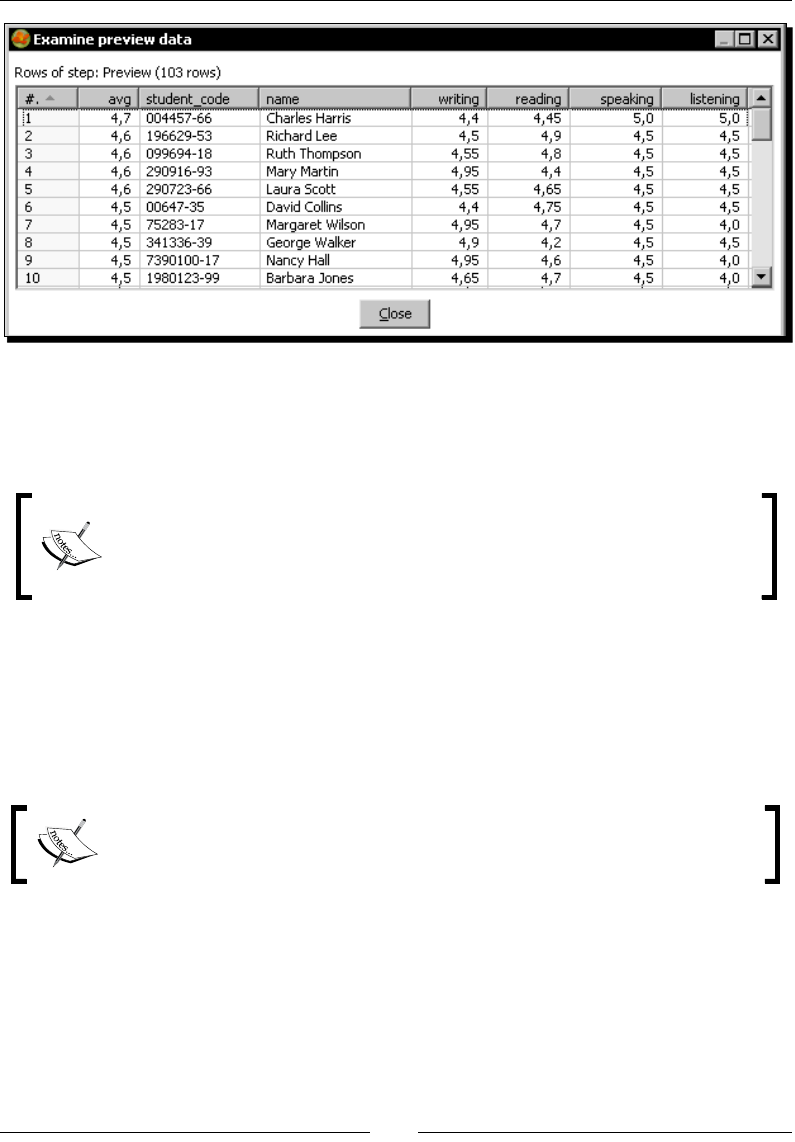

Time for acon – reviewing examinaons by using the Formula step 84

Calculaons on groups of rows 88

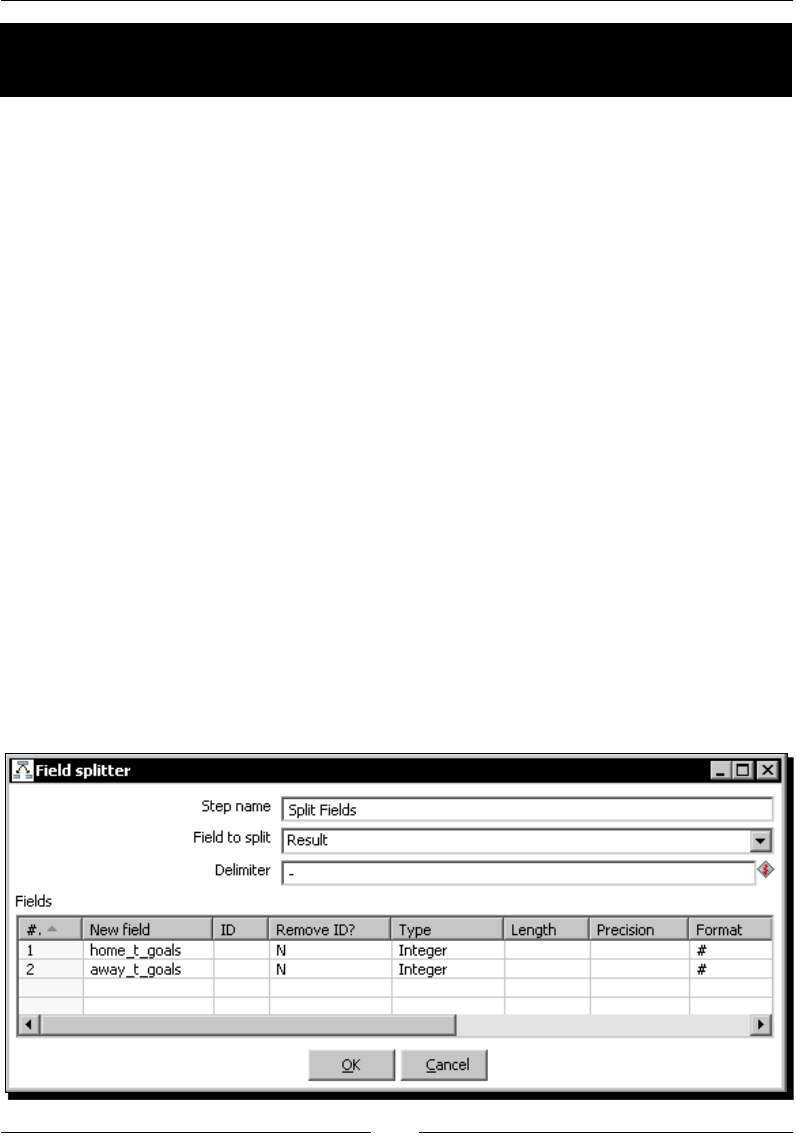

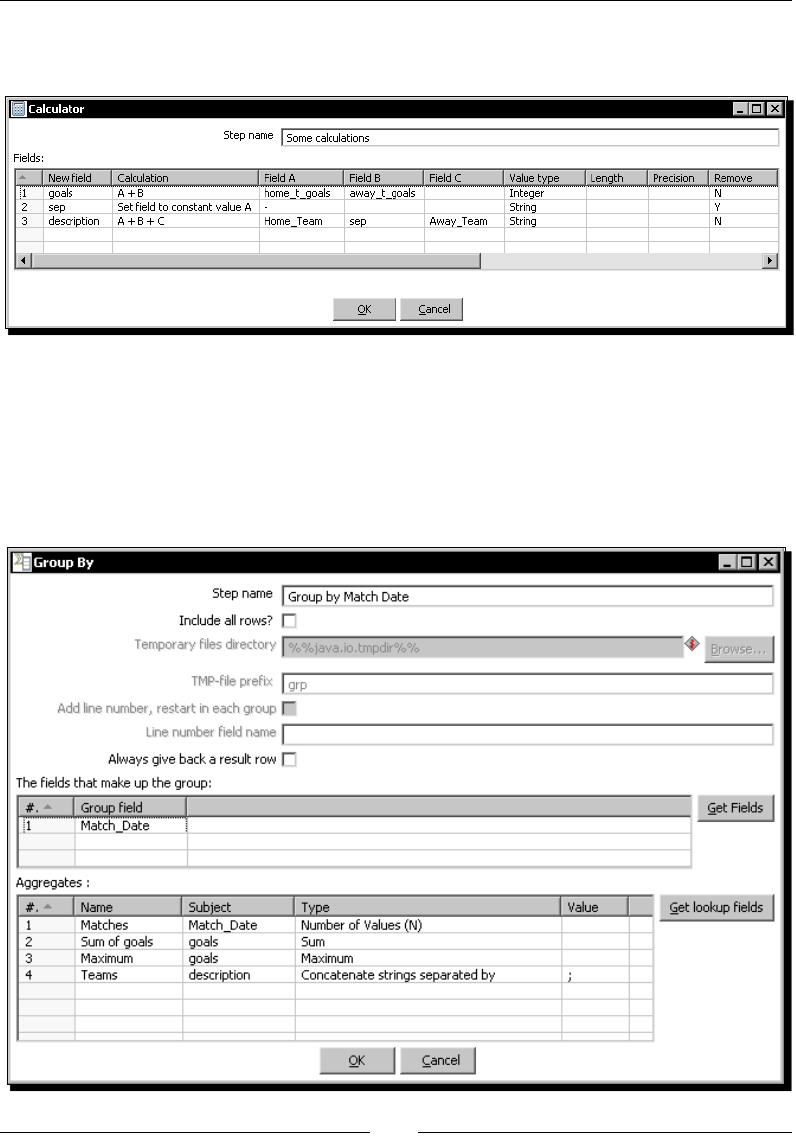

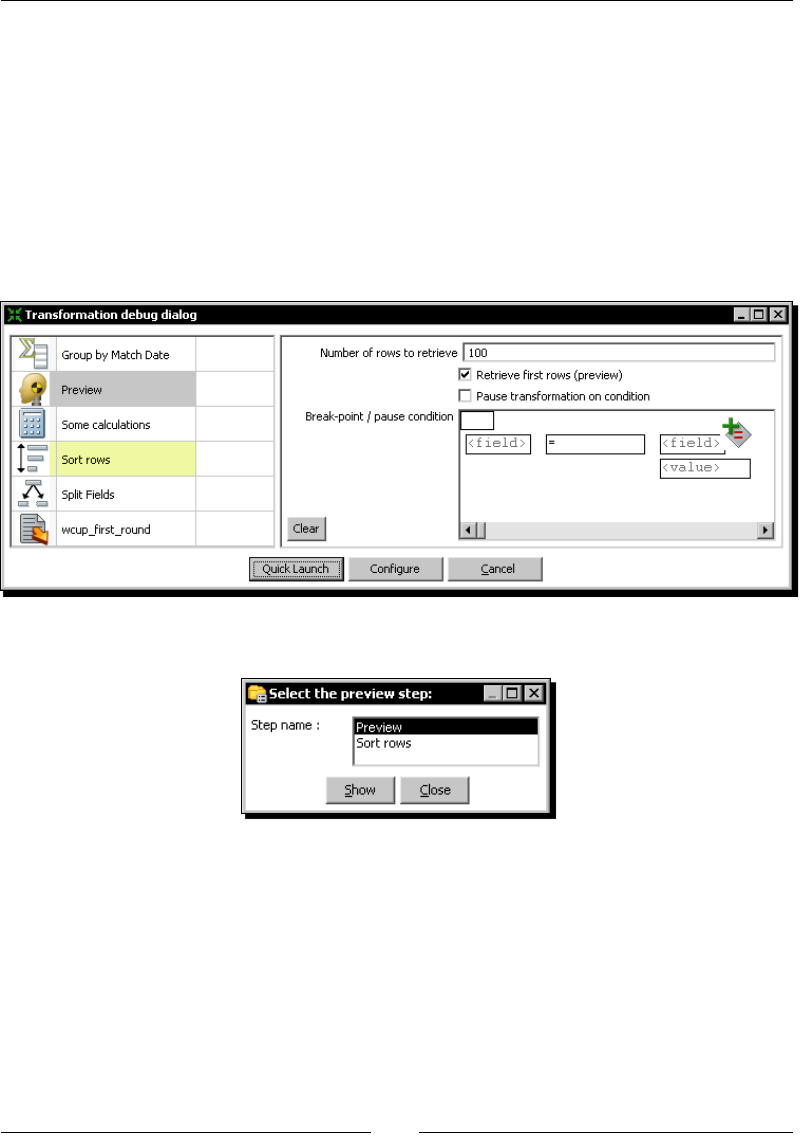

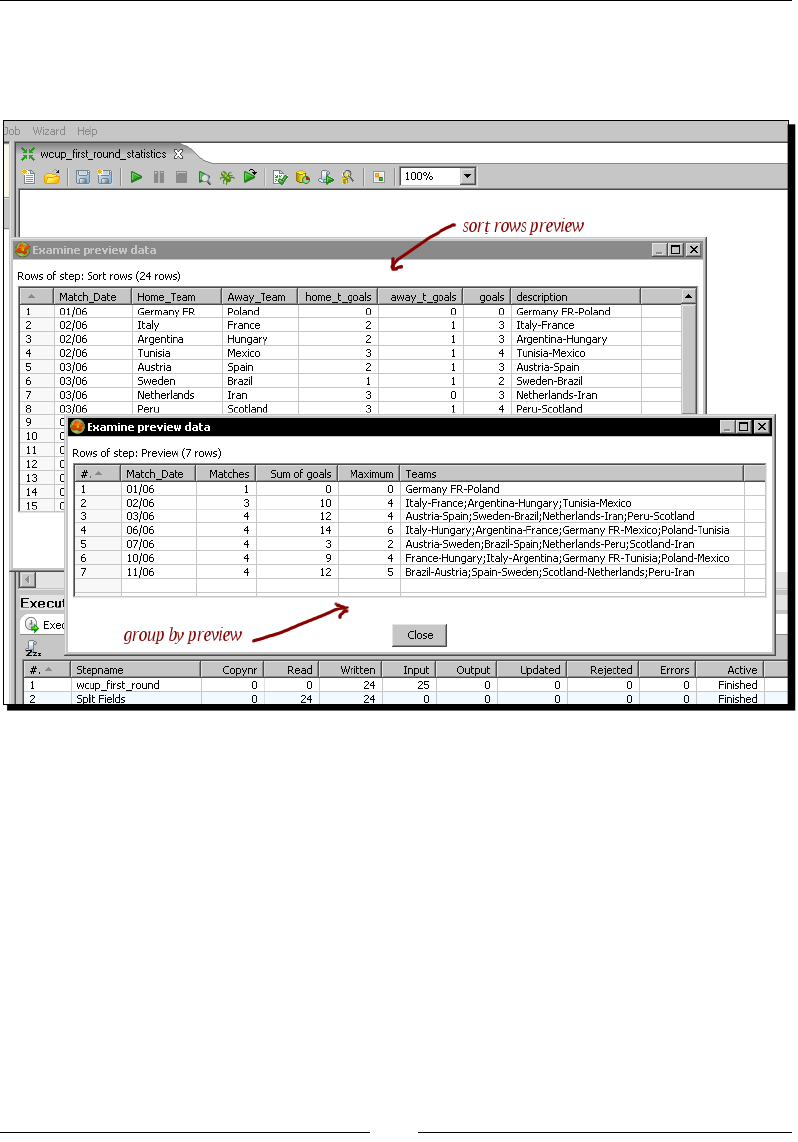

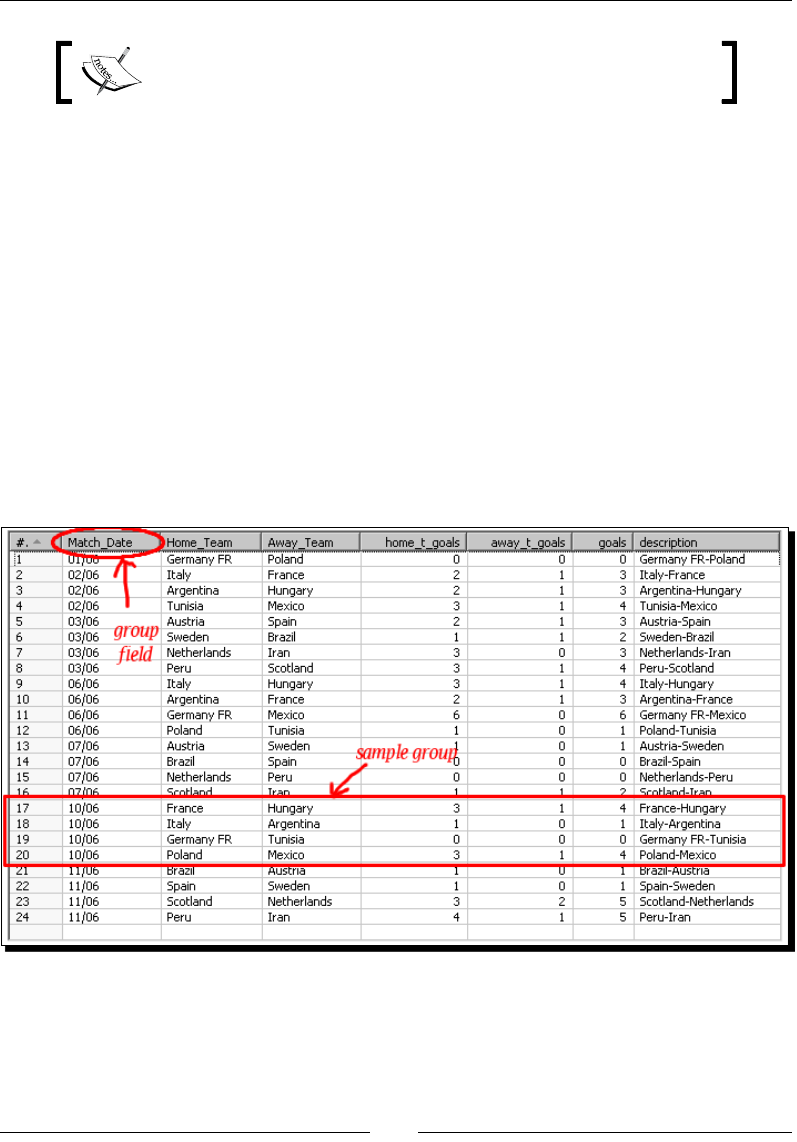

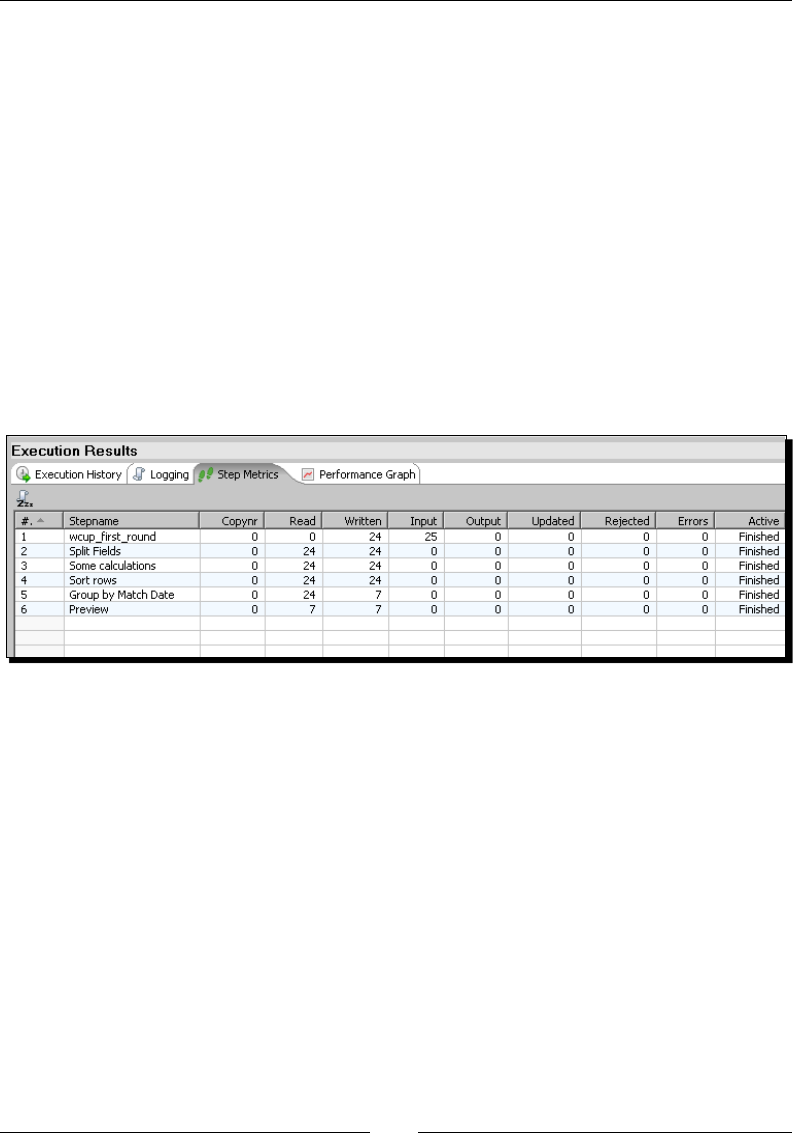

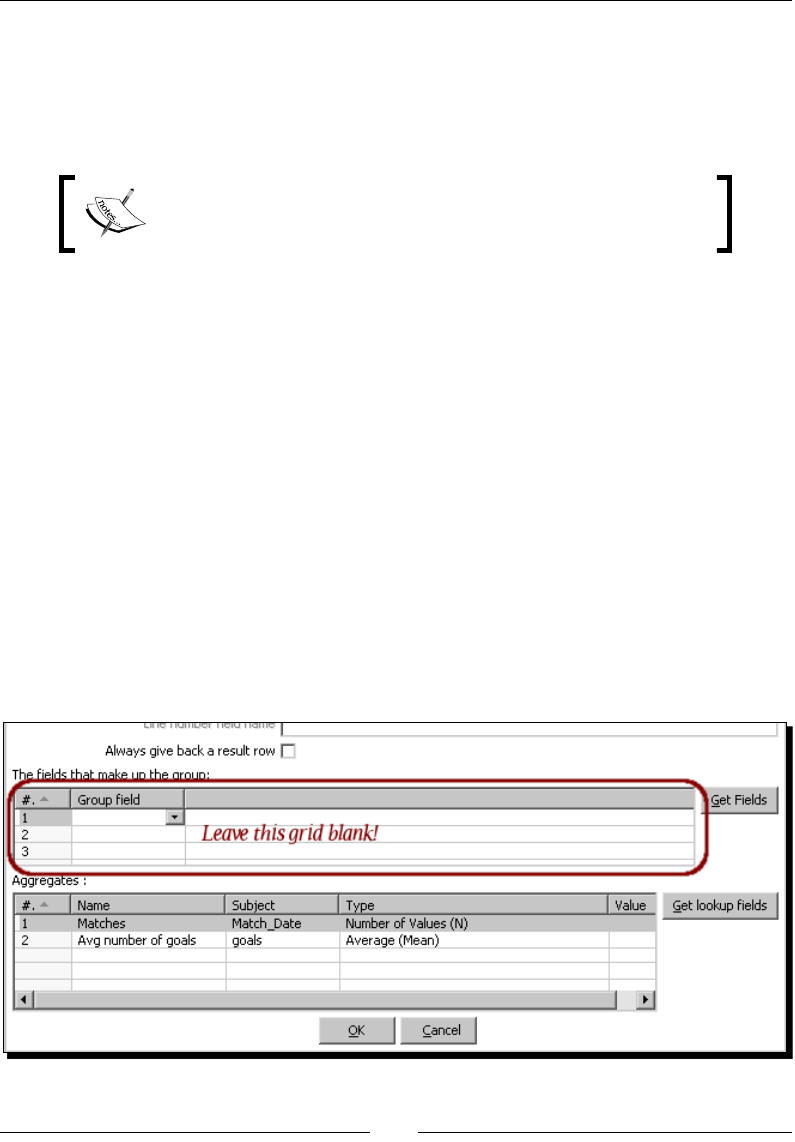

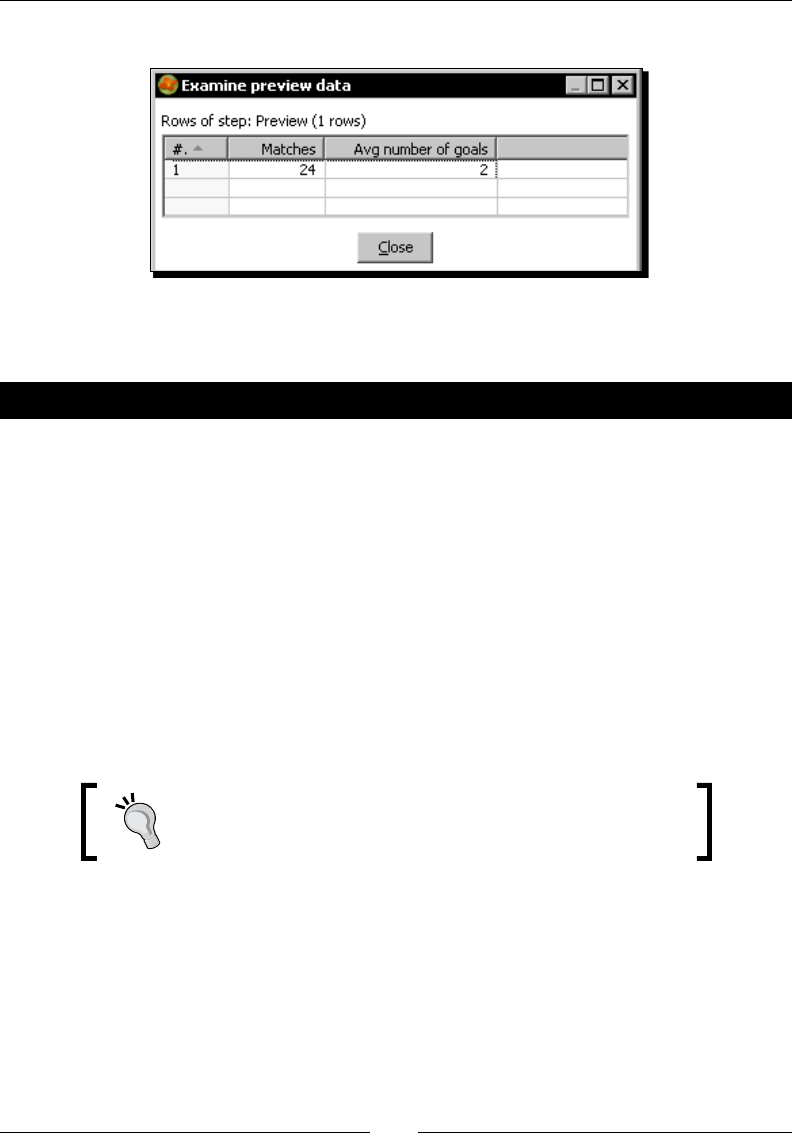

Time for acon – calculang World Cup stascs by grouping data 89

Group by step 94

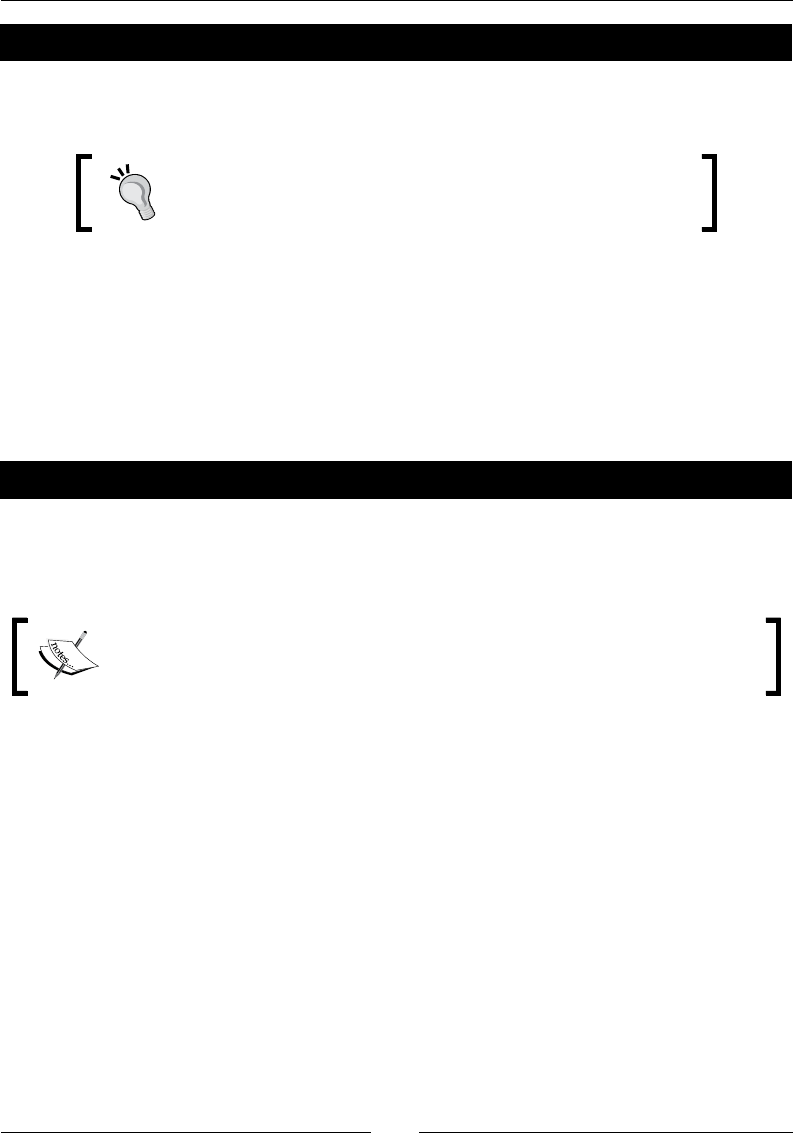

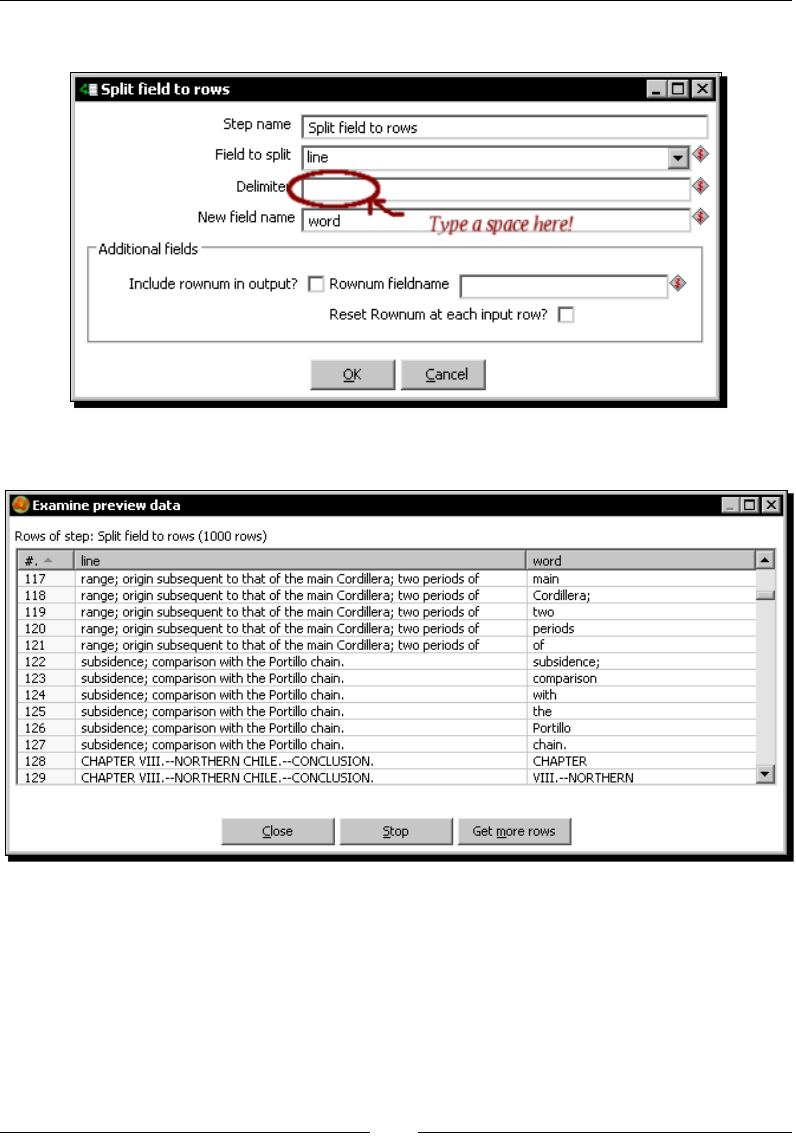

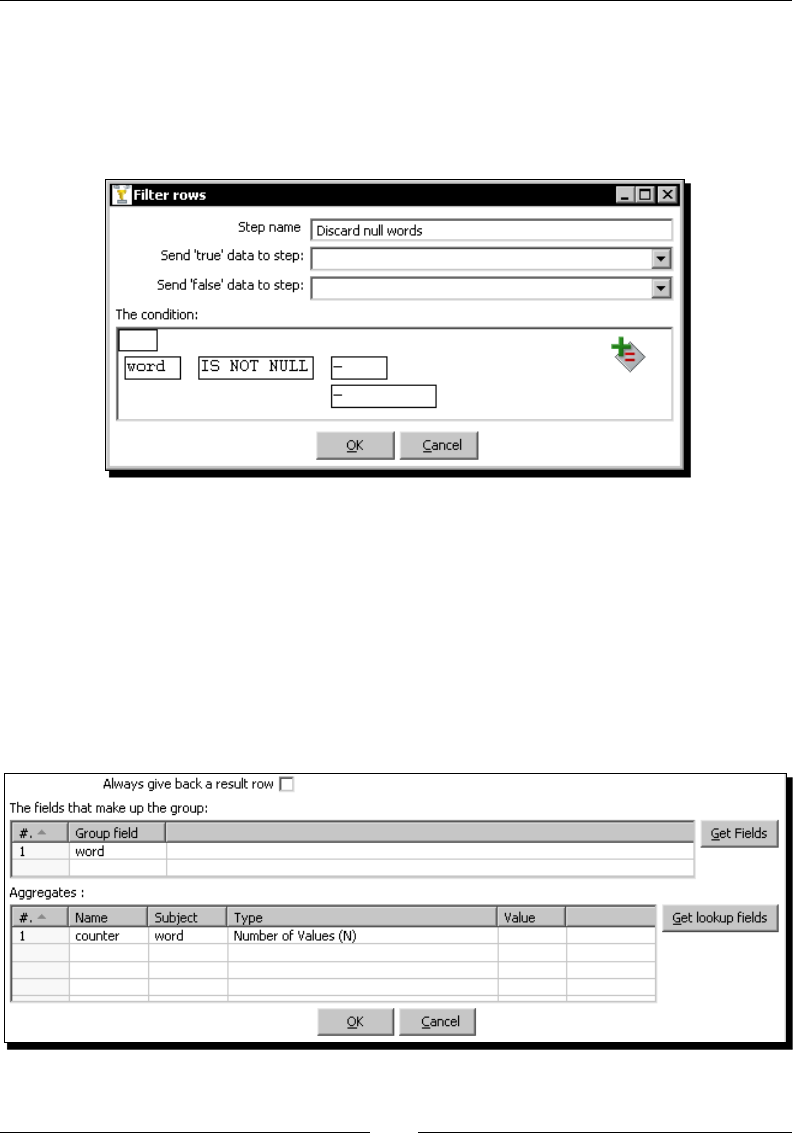

Filtering 97

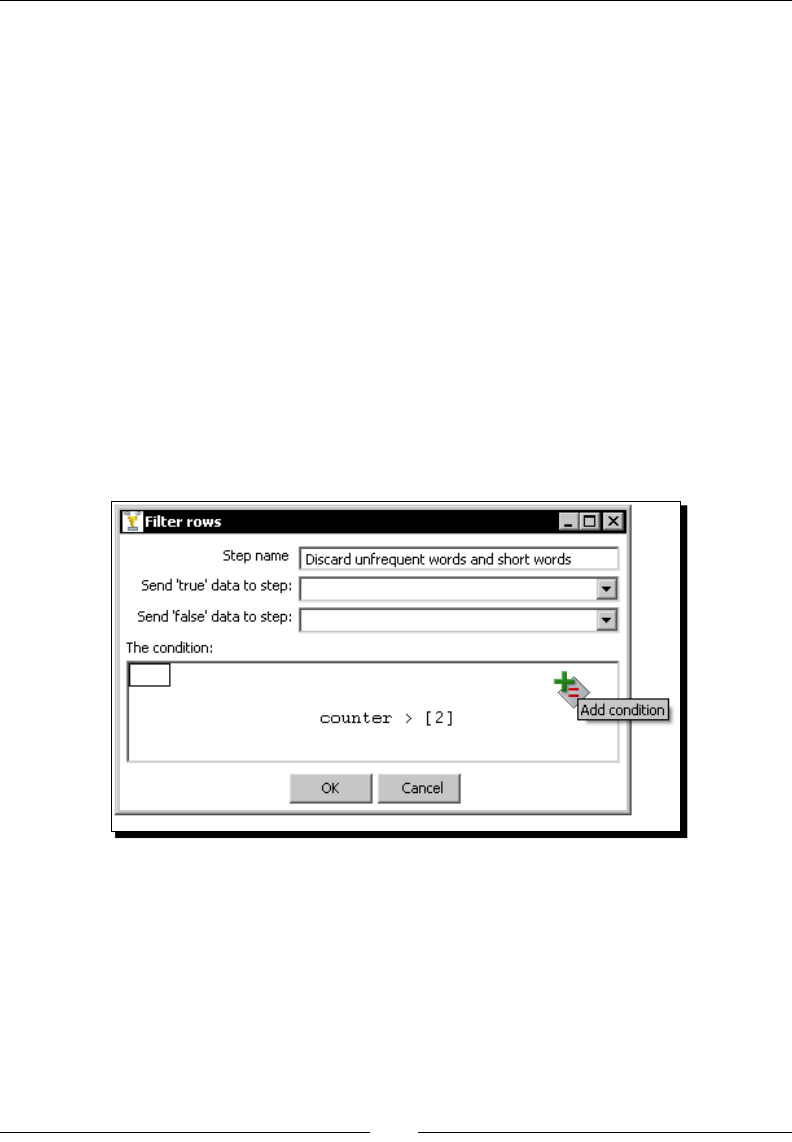

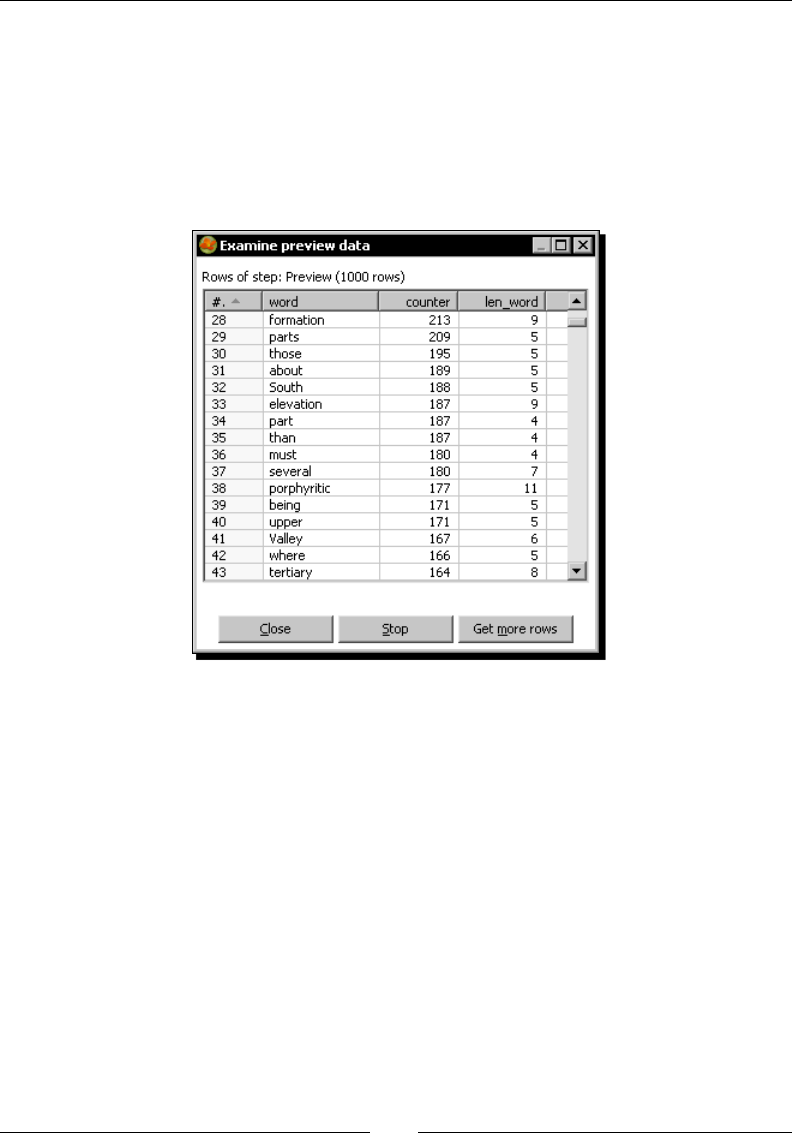

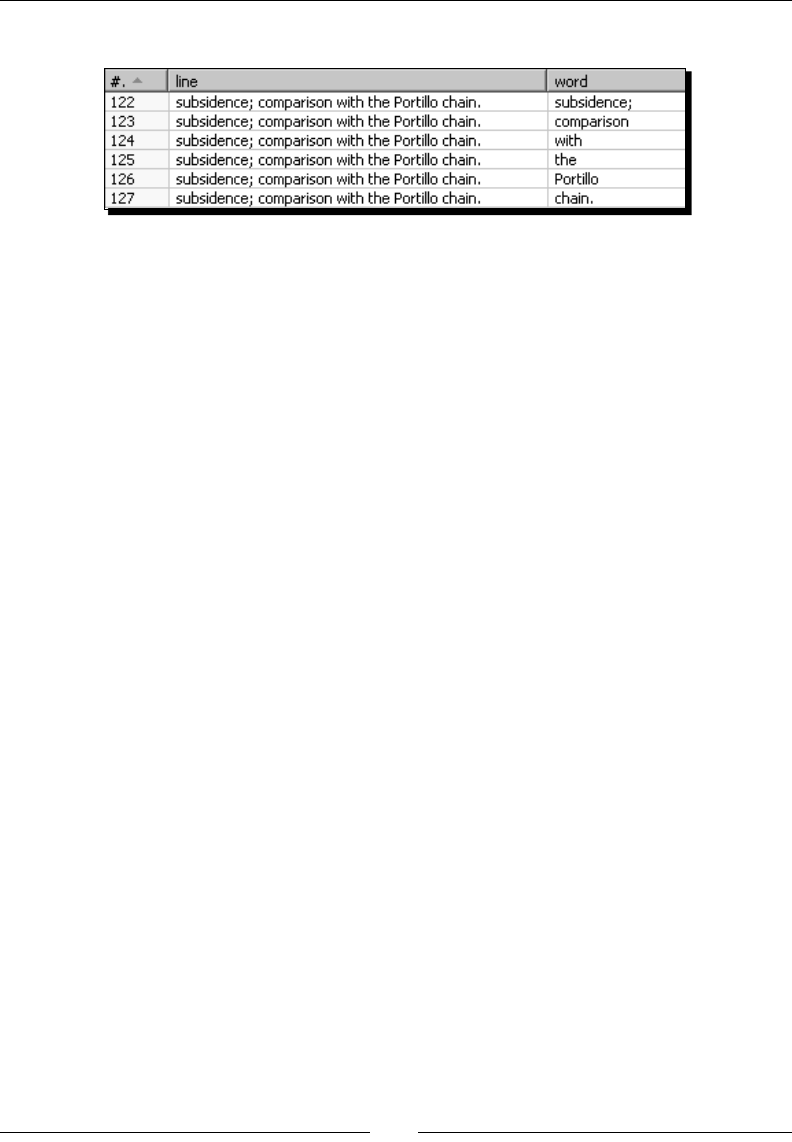

Time for acon – counng frequent words by ltering 97

Filtering rows using the Filter rows step 103

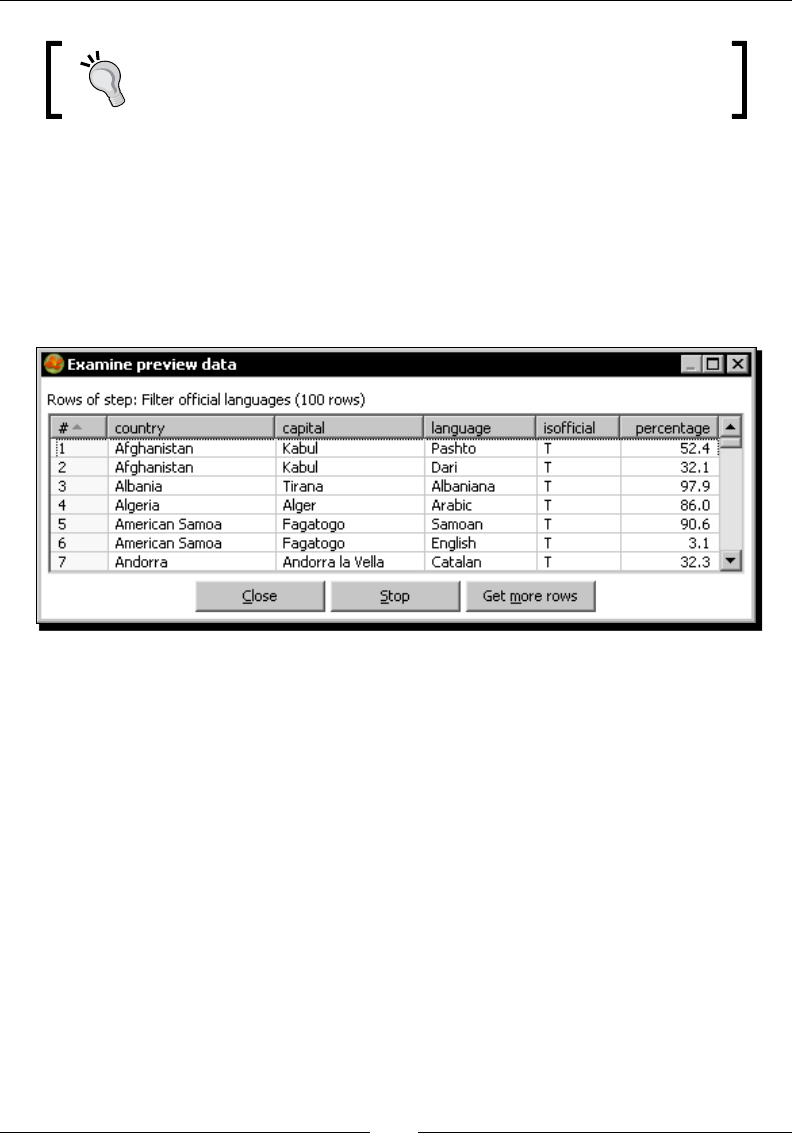

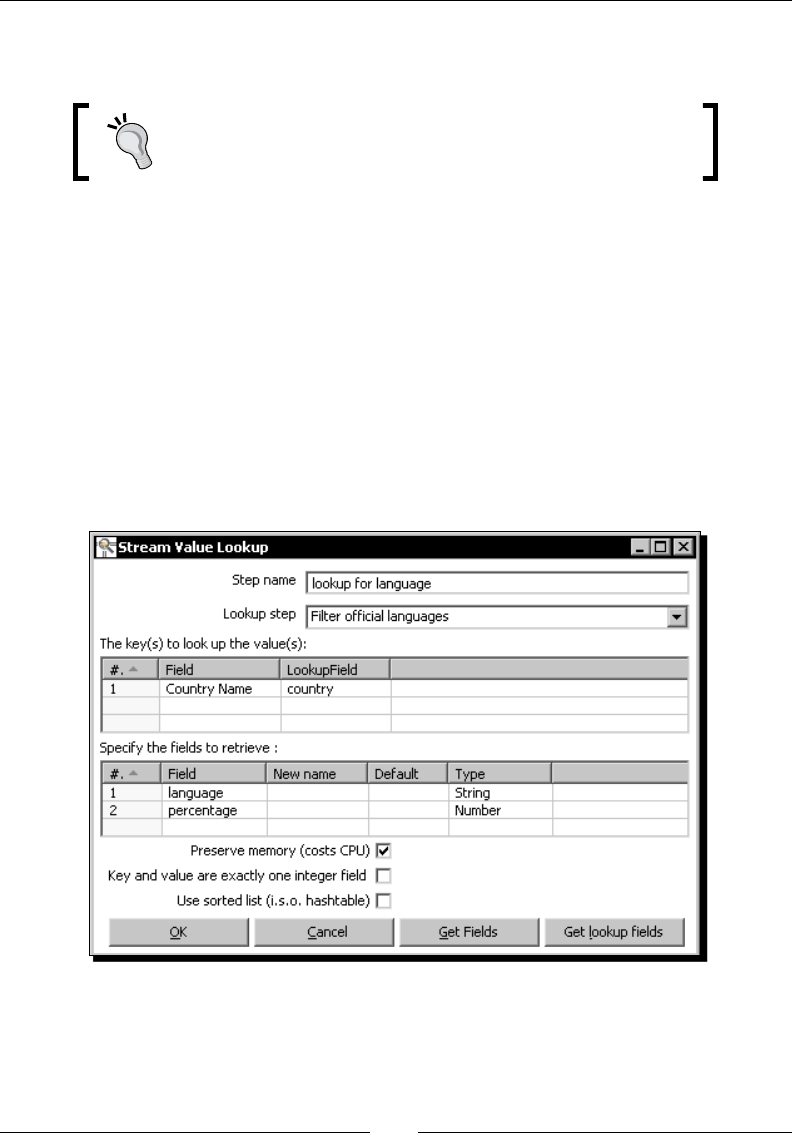

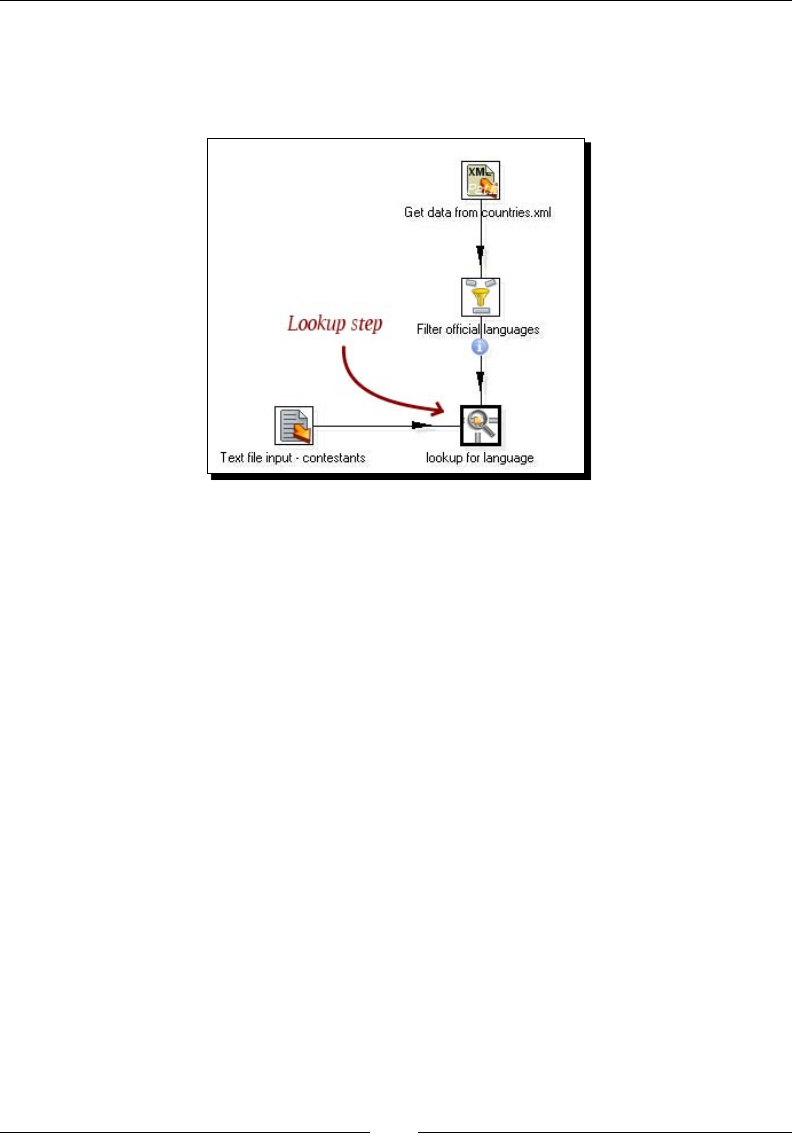

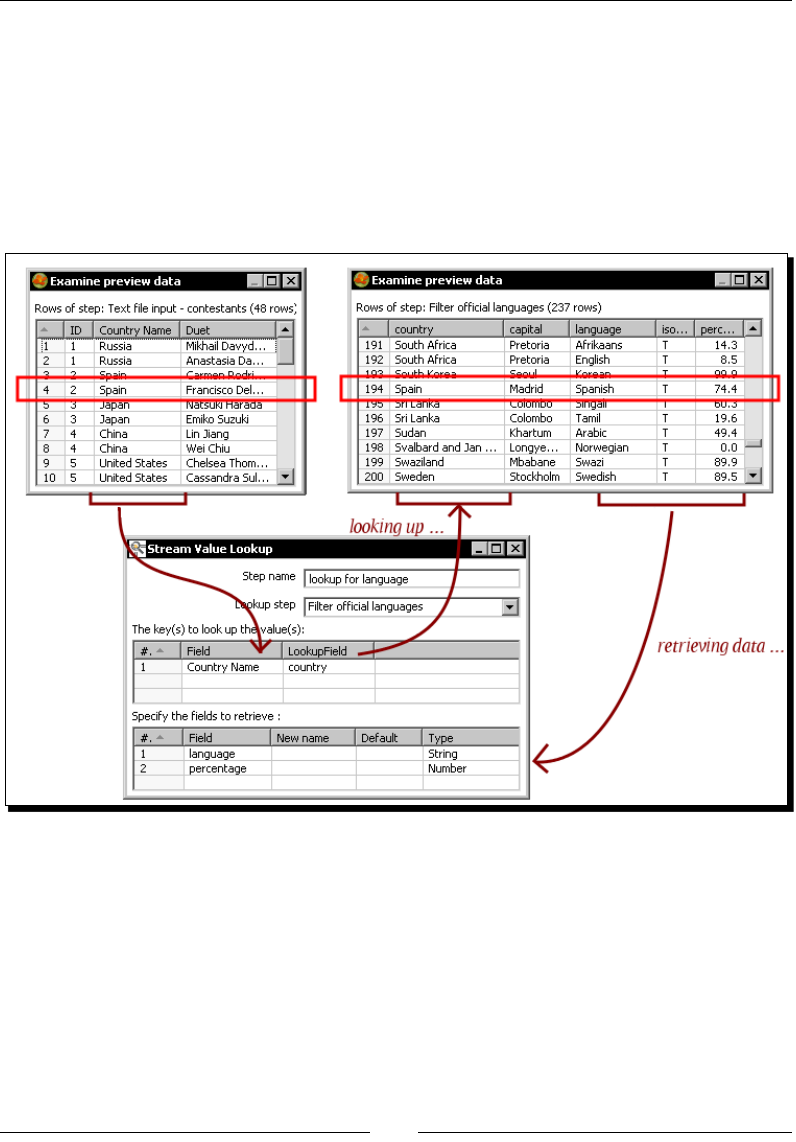

Looking up data 105

Time for acon – nding out which language people speak 105

The Stream lookup step 109

Summary 112

Chapter 4: Controlling the Flow of Data 113

Spling streams 113

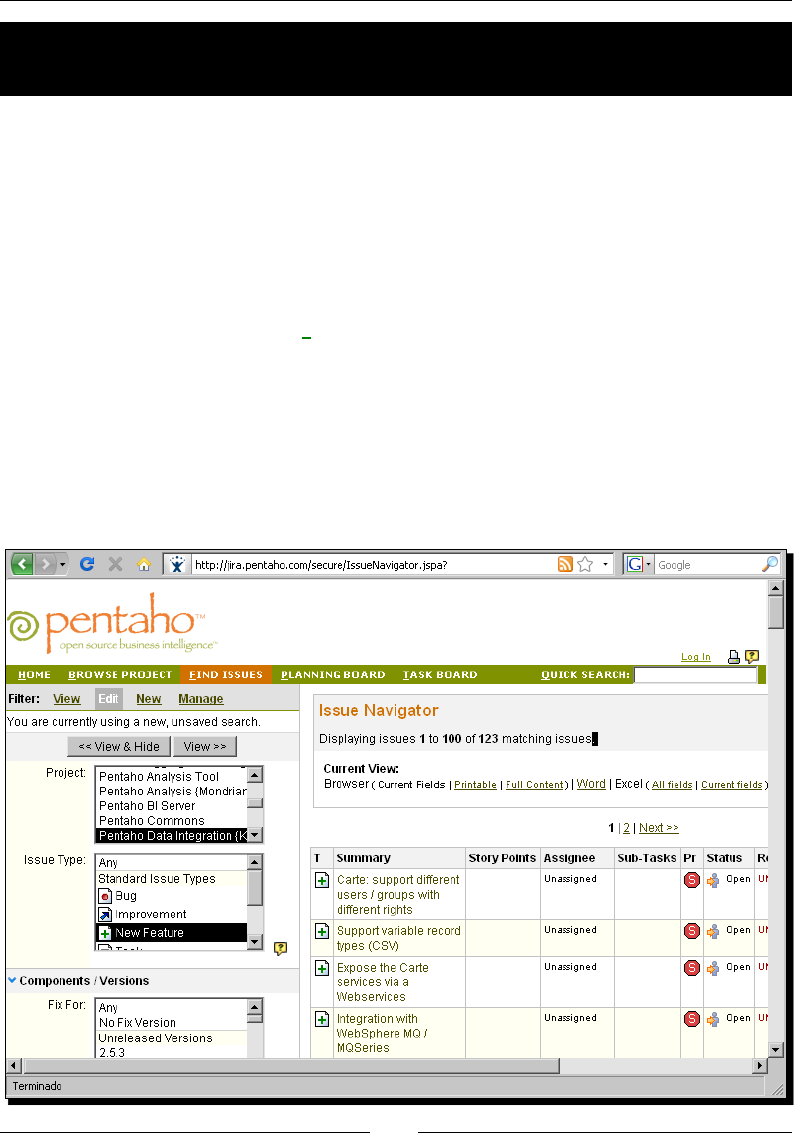

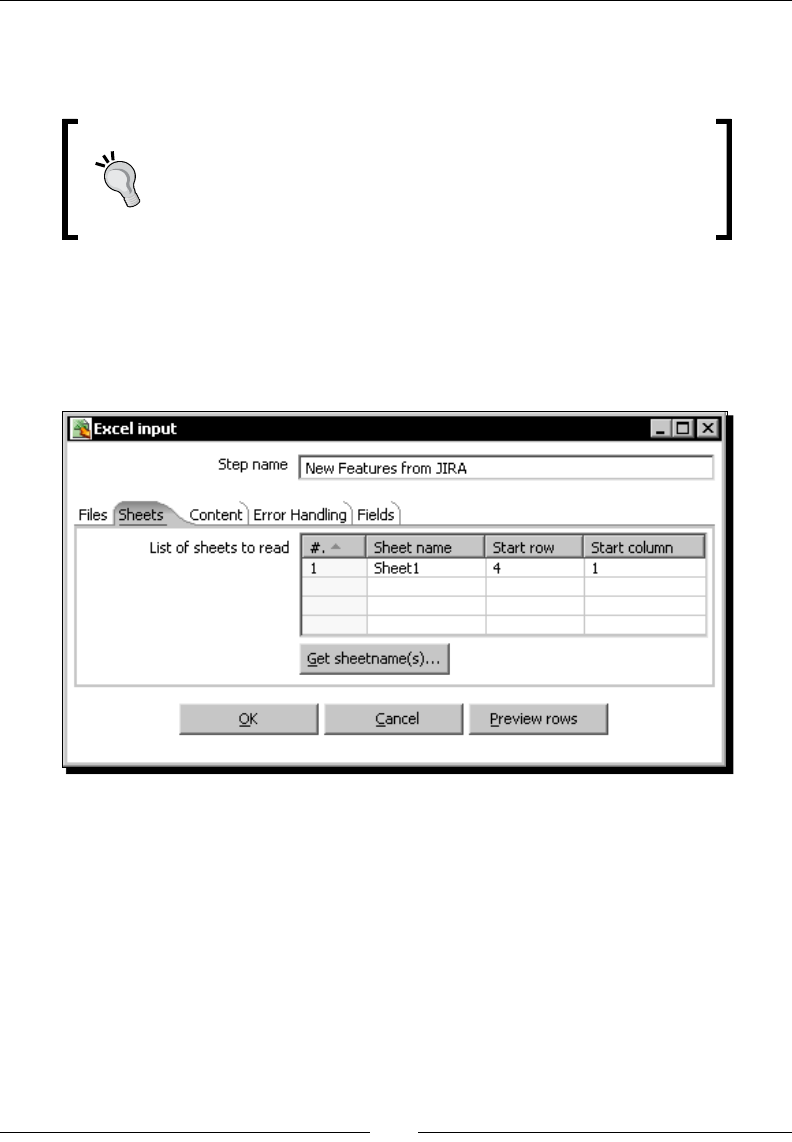

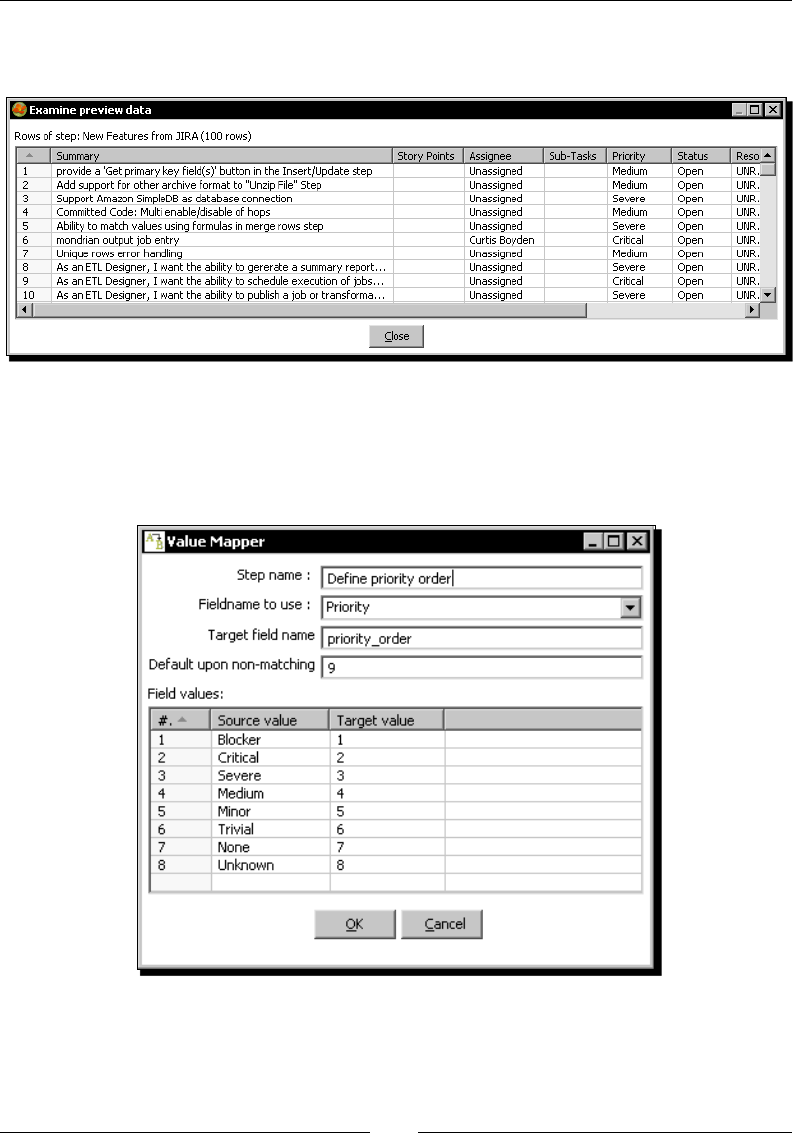

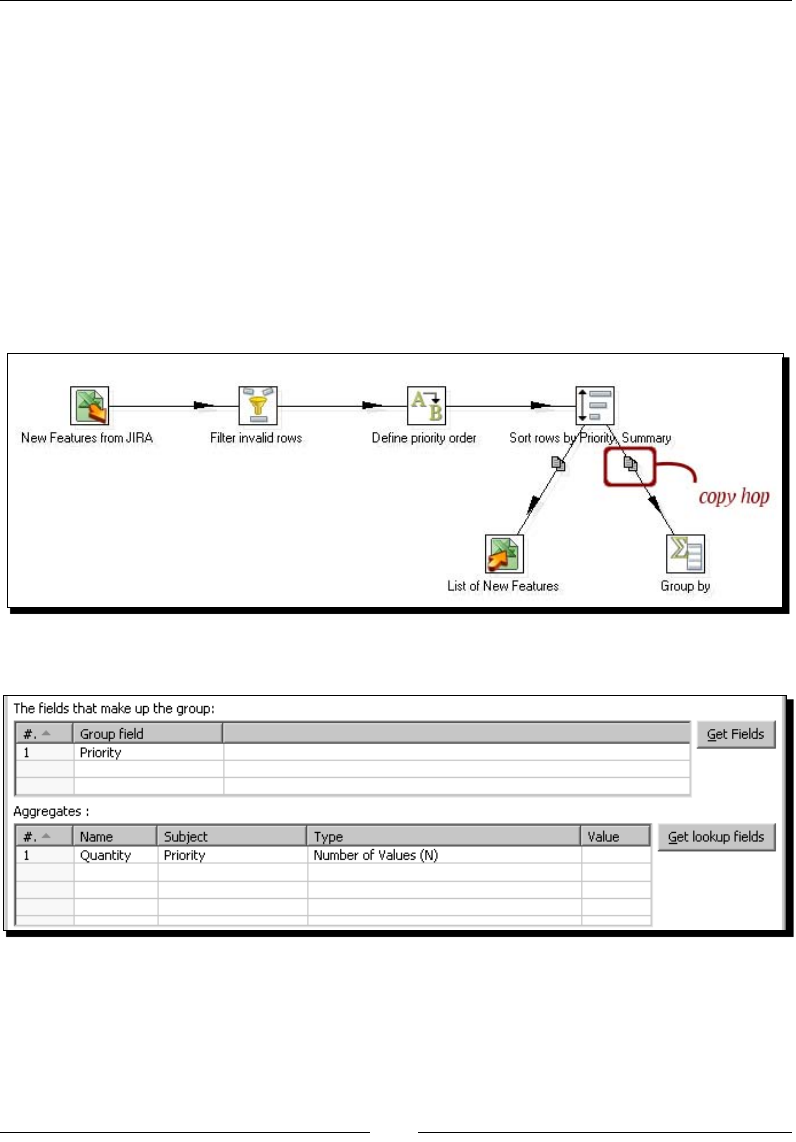

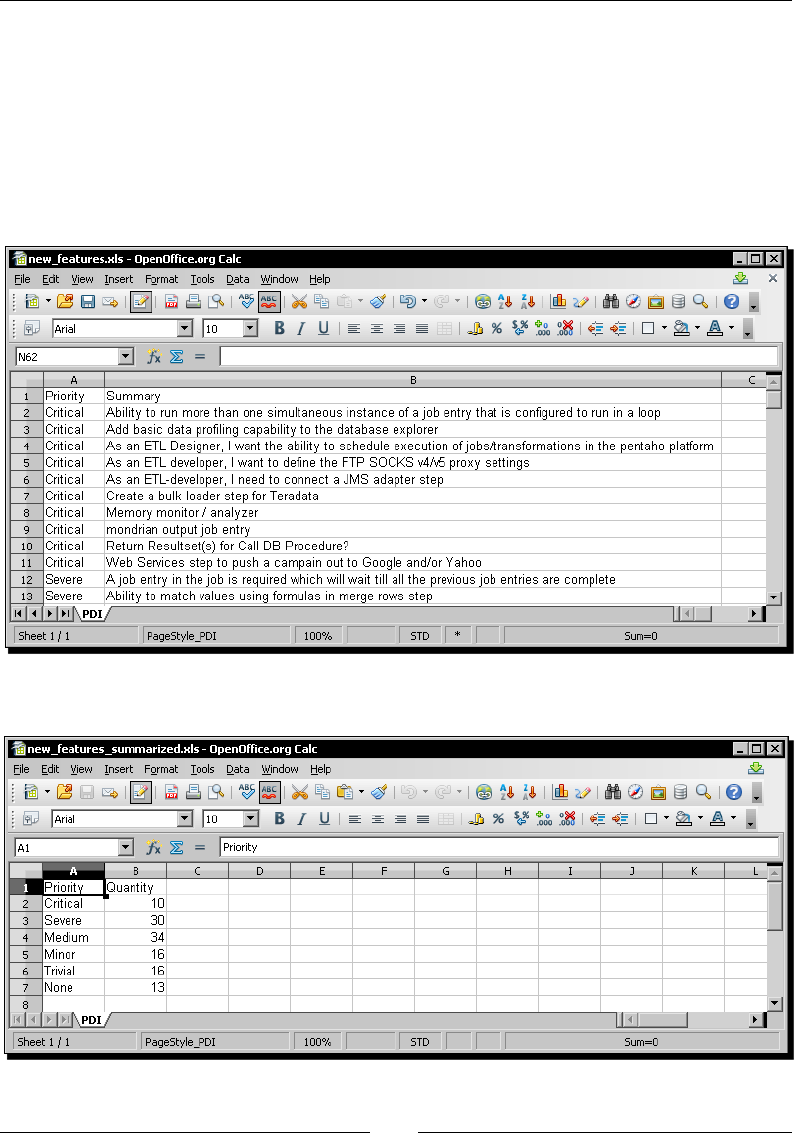

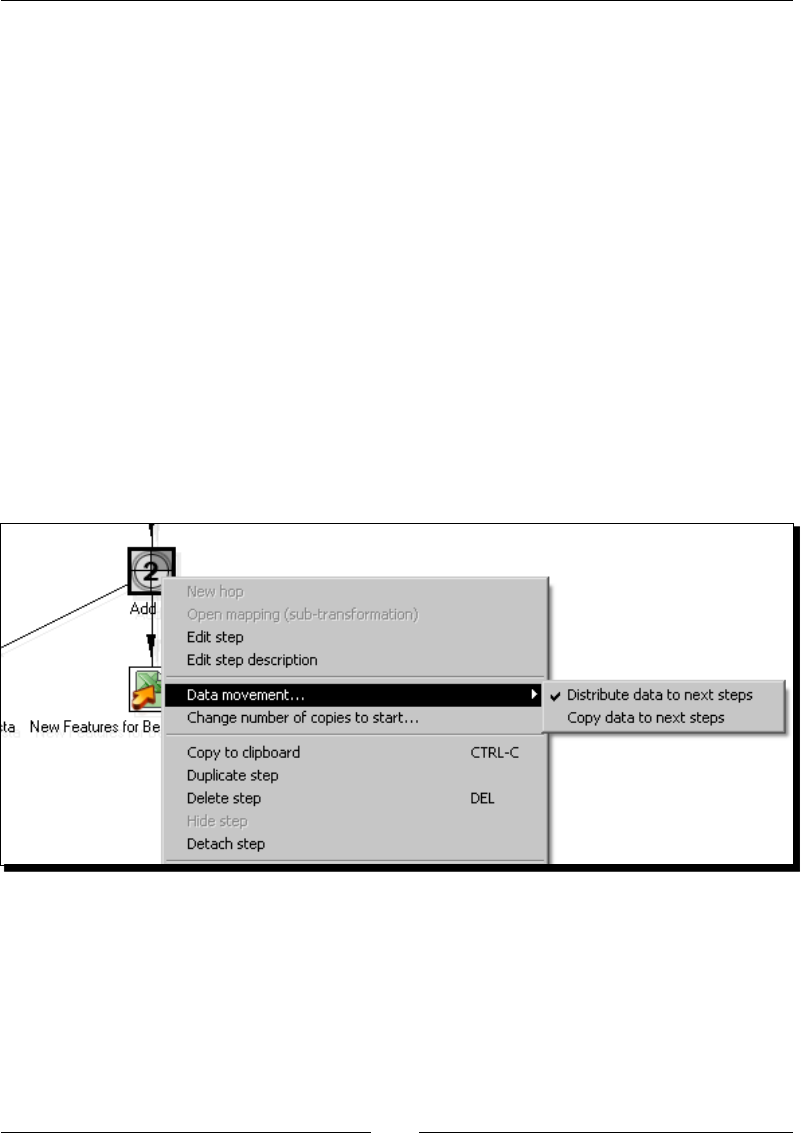

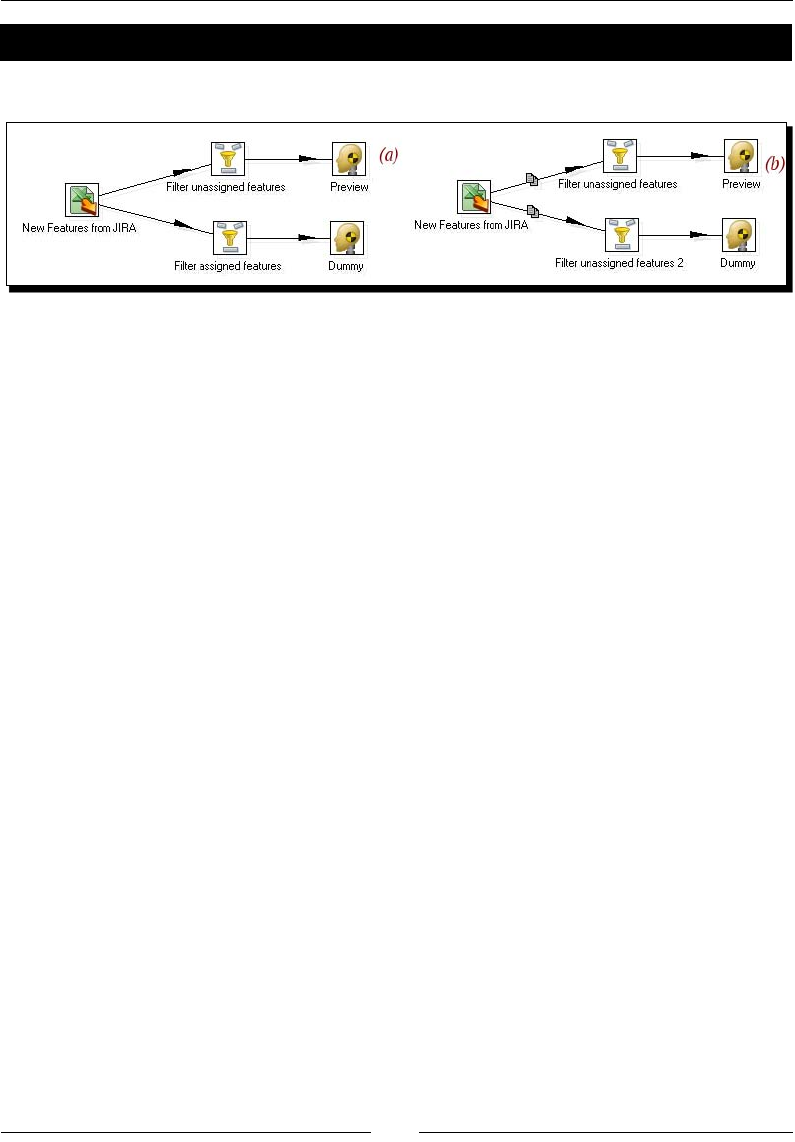

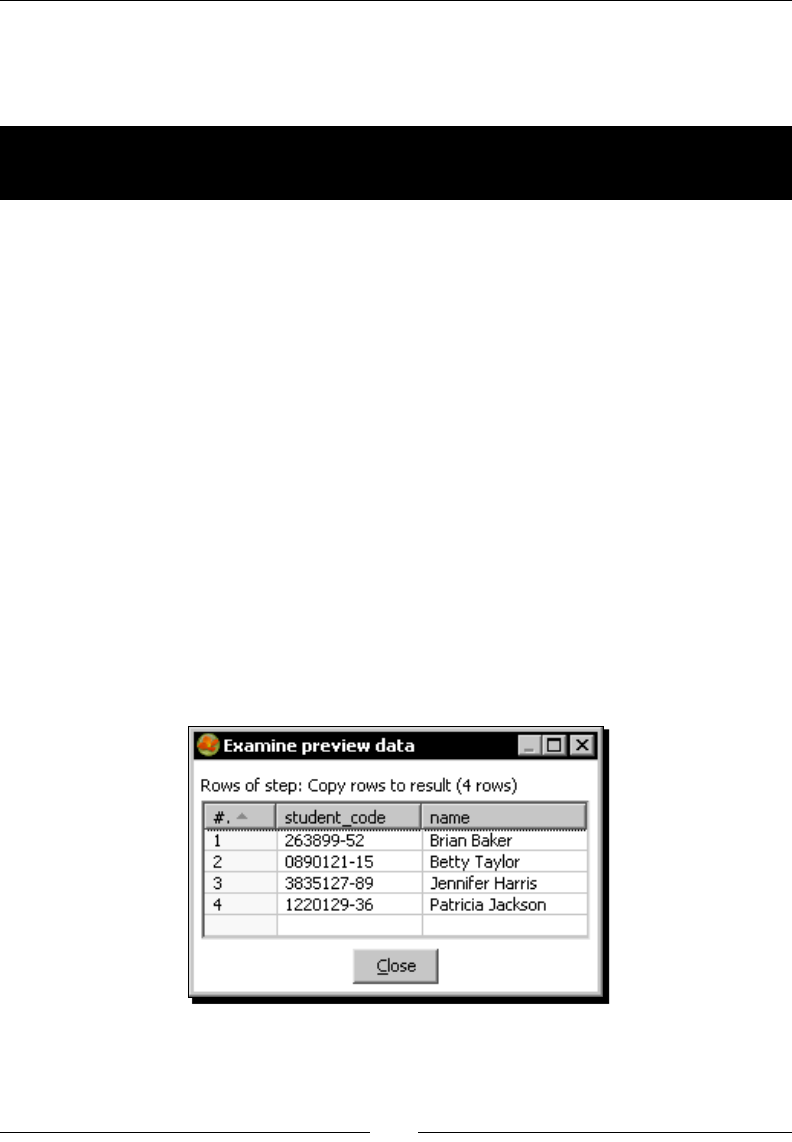

Time for acon – browsing new PDI features by copying a dataset 114

Copying rows 119

Distribung rows 120

Time for acon – assigning tasks by distribung 121

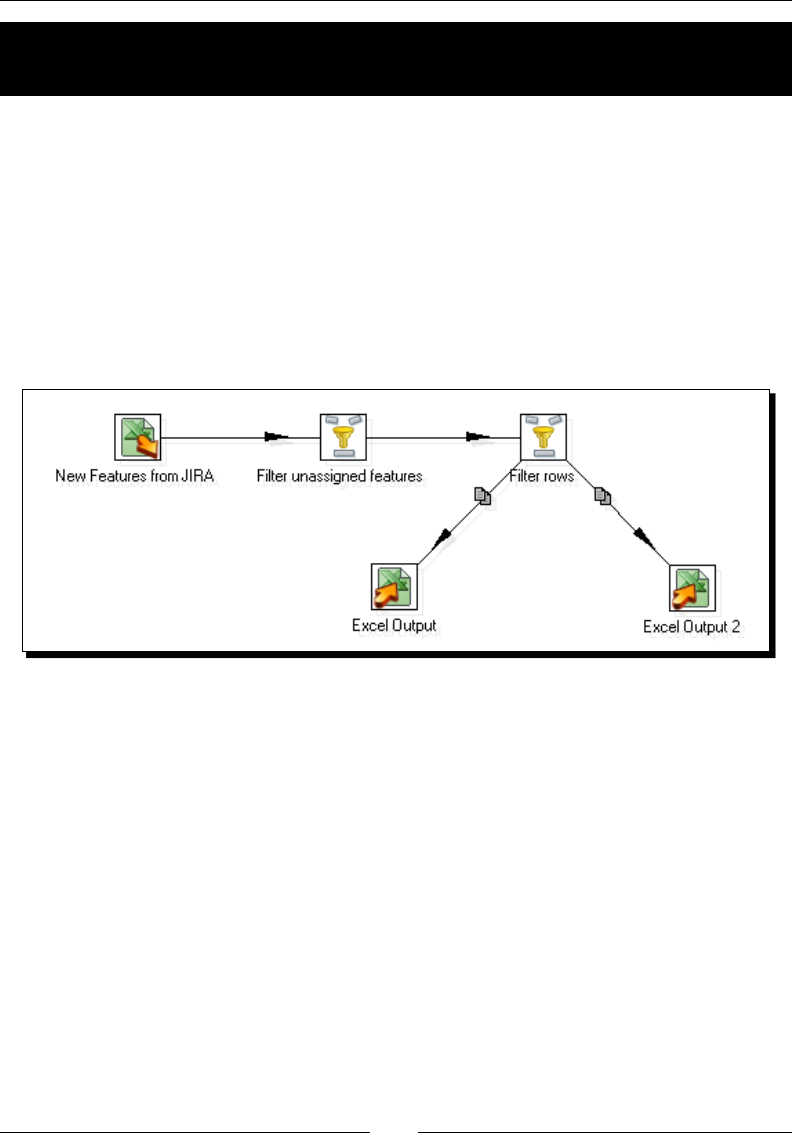

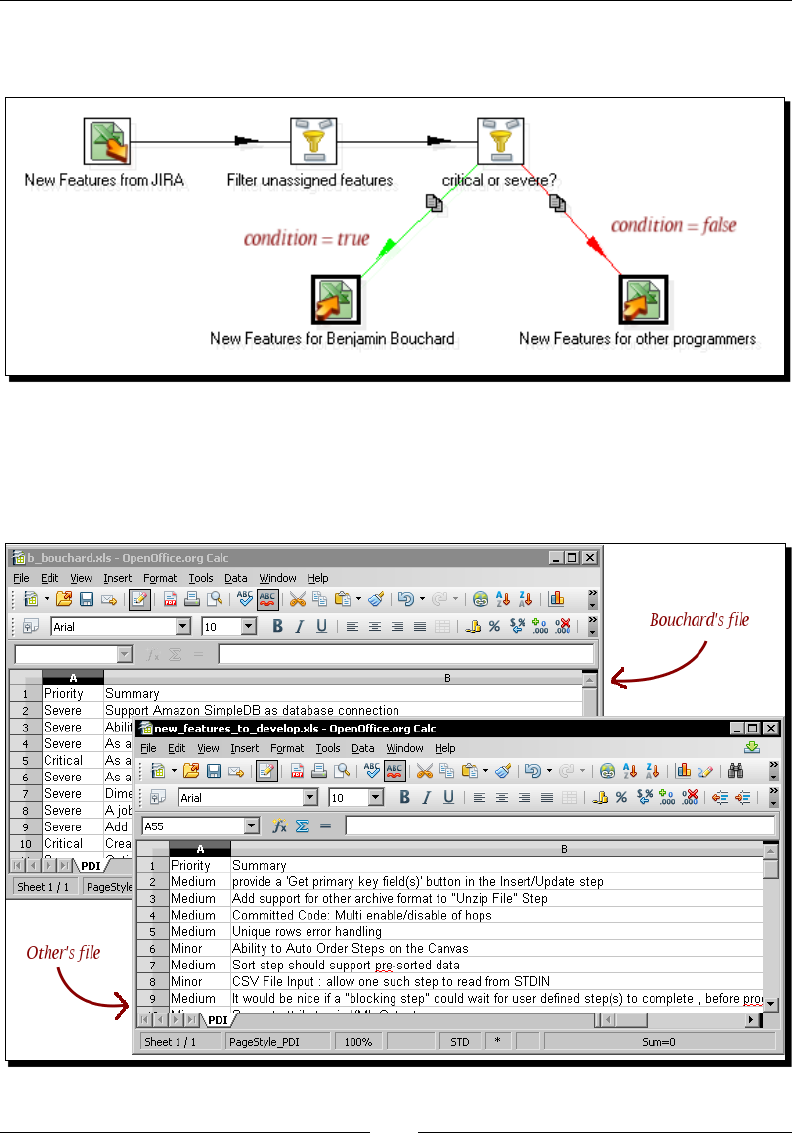

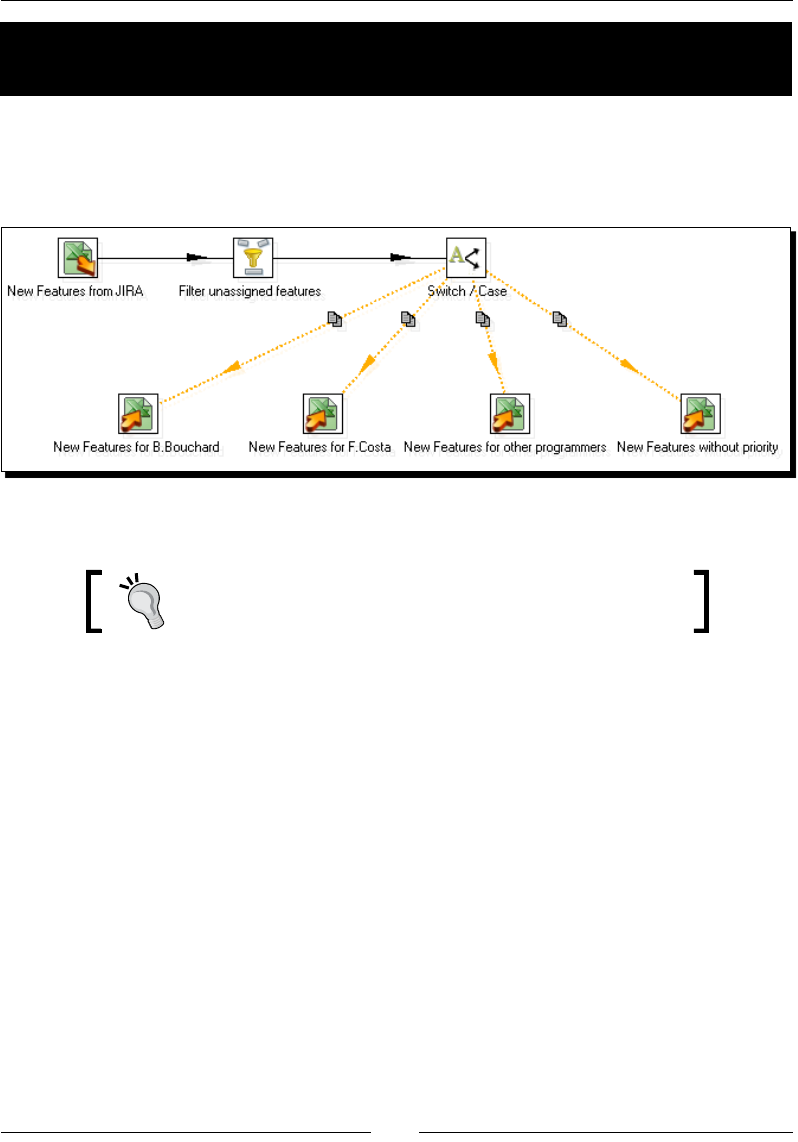

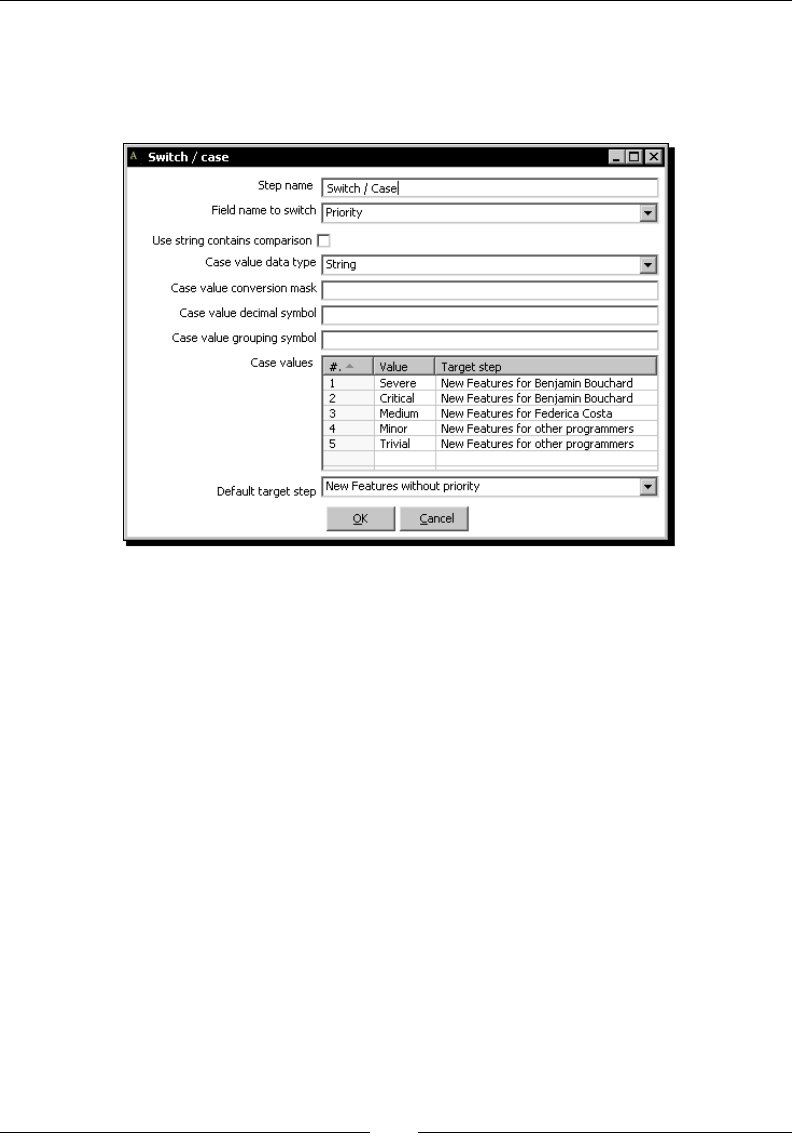

Spling the stream based on condions 125

Time for acon – assigning tasks by ltering priories with the Filter rows step 126

PDI steps for spling the stream based on condions 128

Time for acon – assigning tasks by ltering priories with the Switch/ Case step 129

Merging streams 131

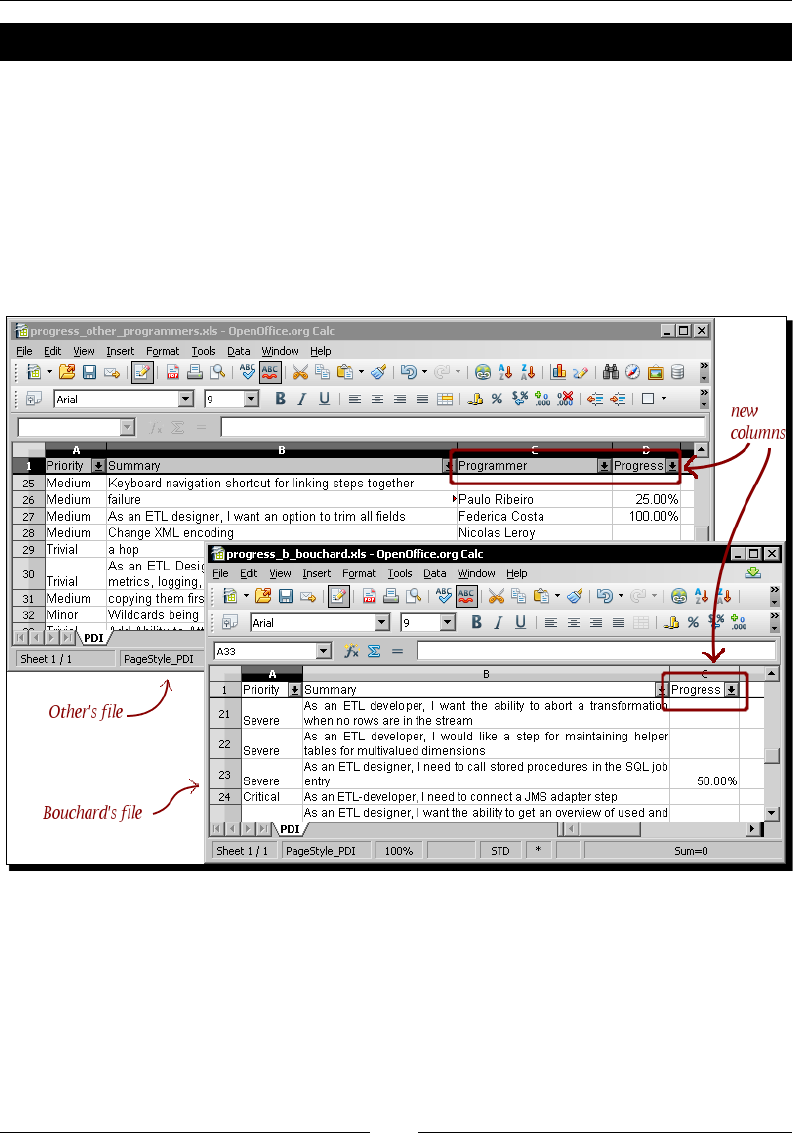

Time for acon – gathering progress and merging all together 132

PDI opons for merging streams 134

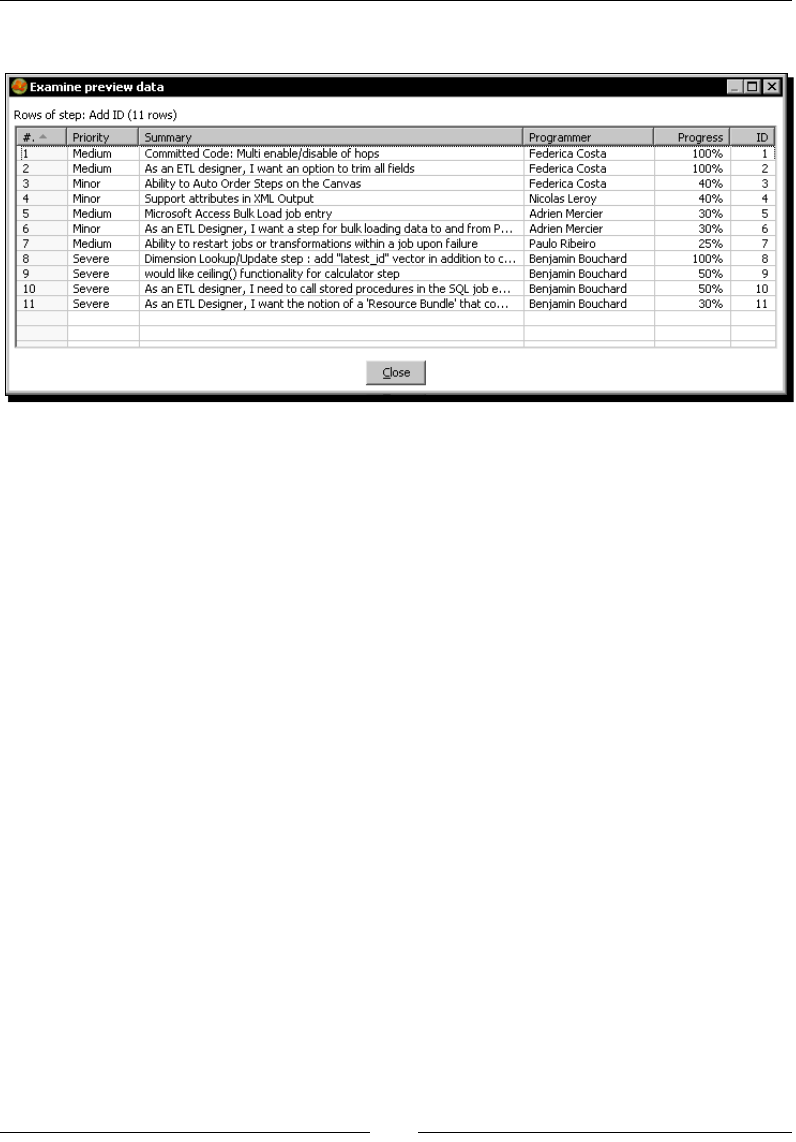

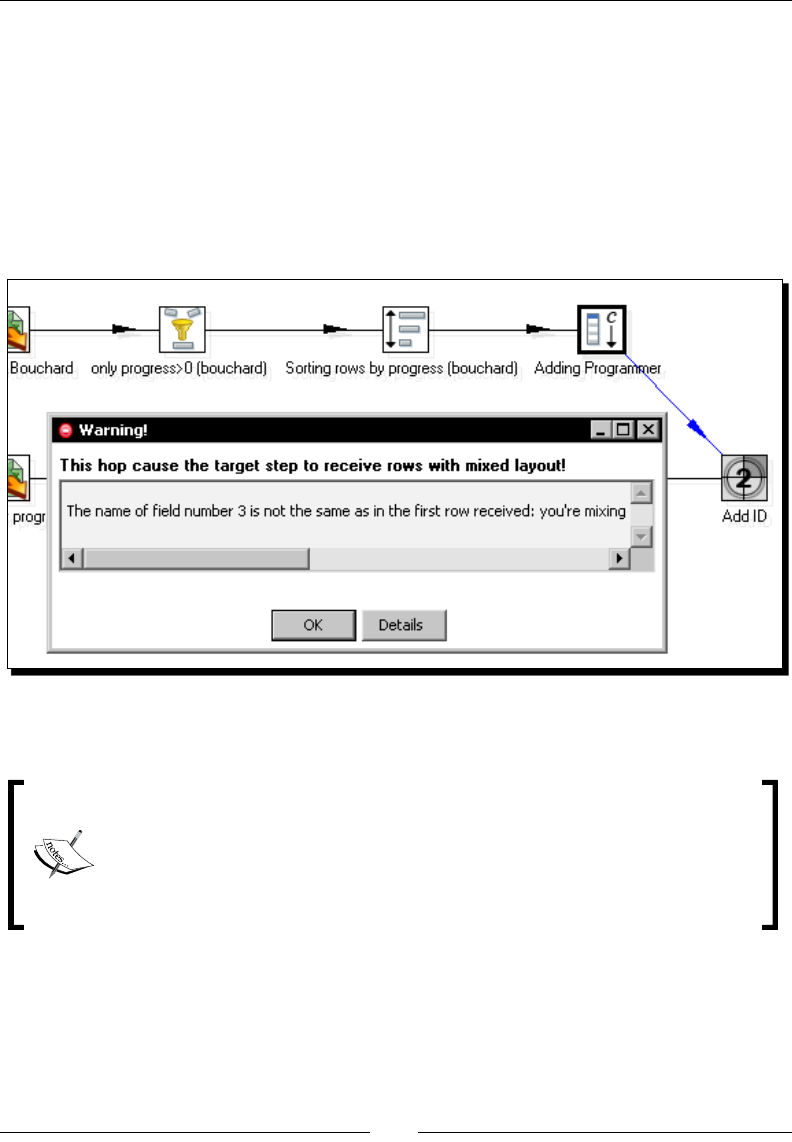

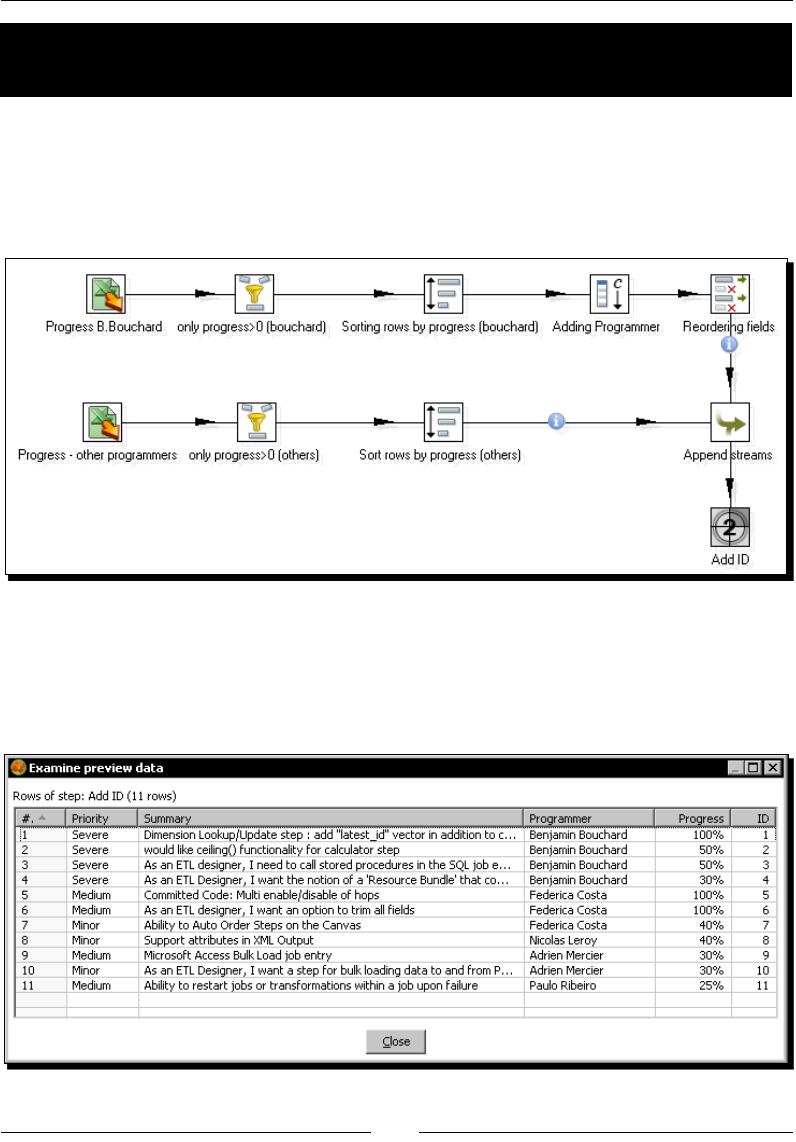

Time for acon – giving priority to Bouchard by using Append Stream 137

Summary 139

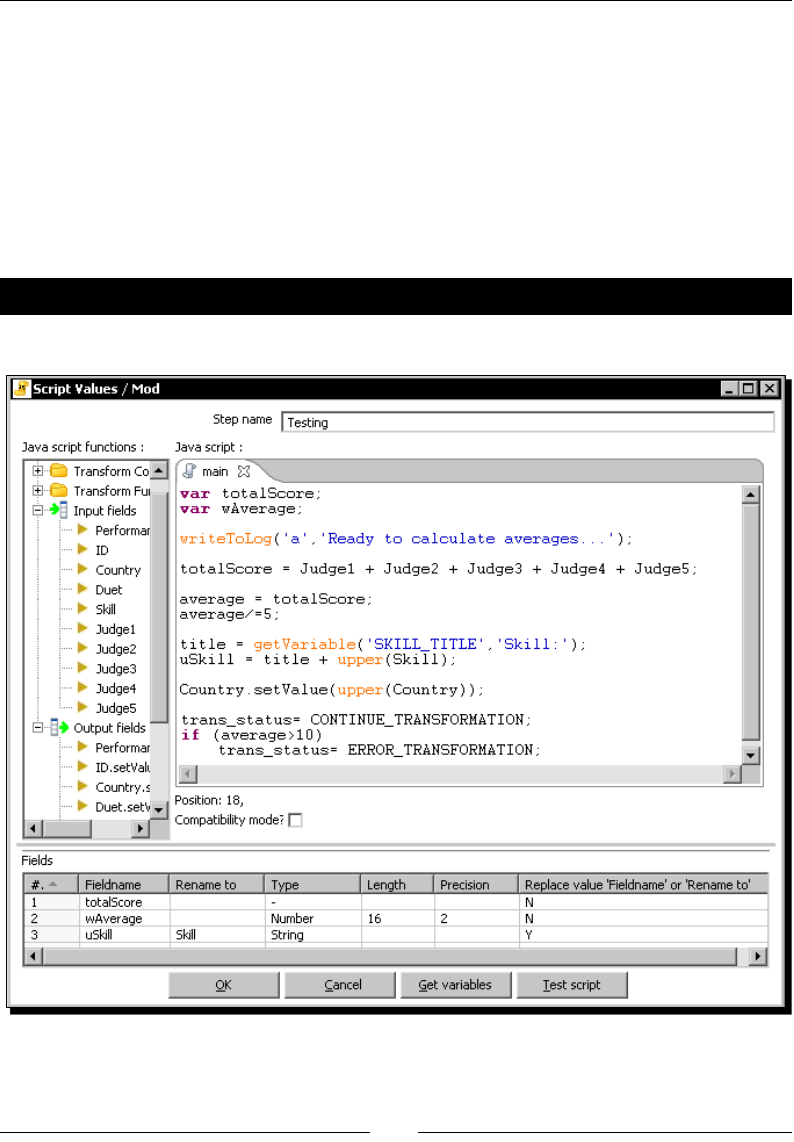

Chapter 5: Transforming Your Data with JavaScript Code and

the JavaScript Step 141

Doing simple tasks with the JavaScript step 141

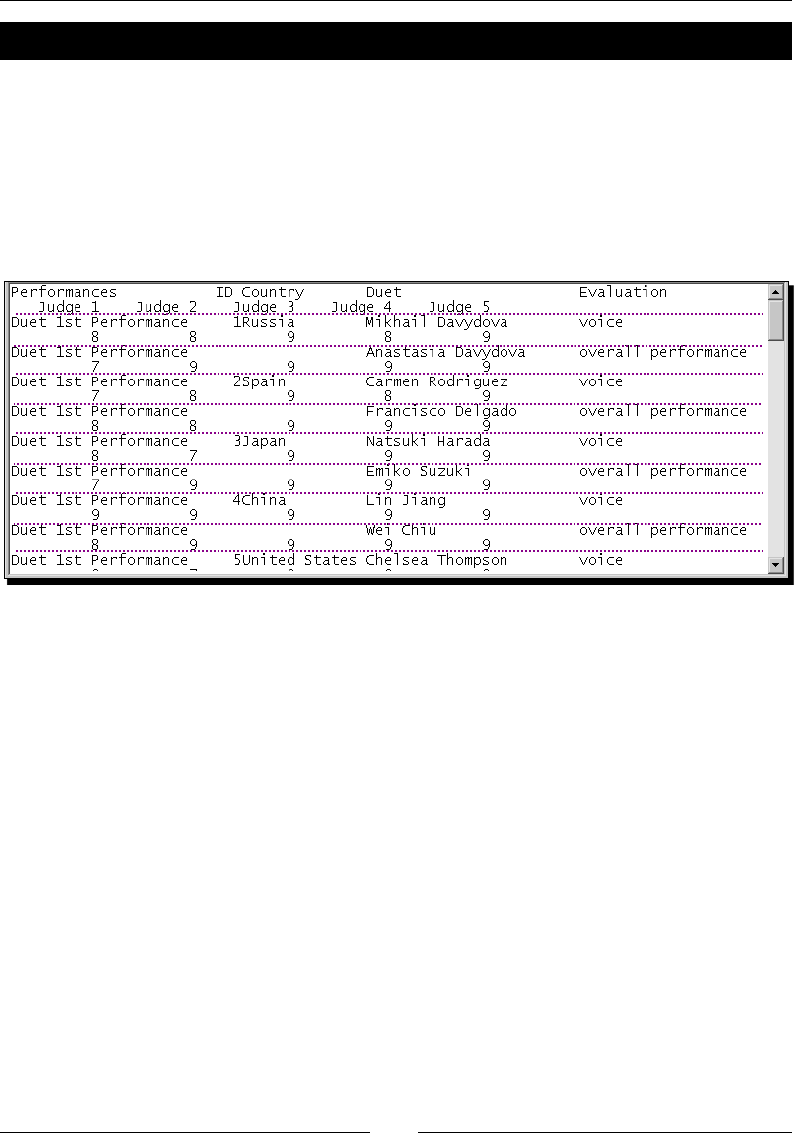

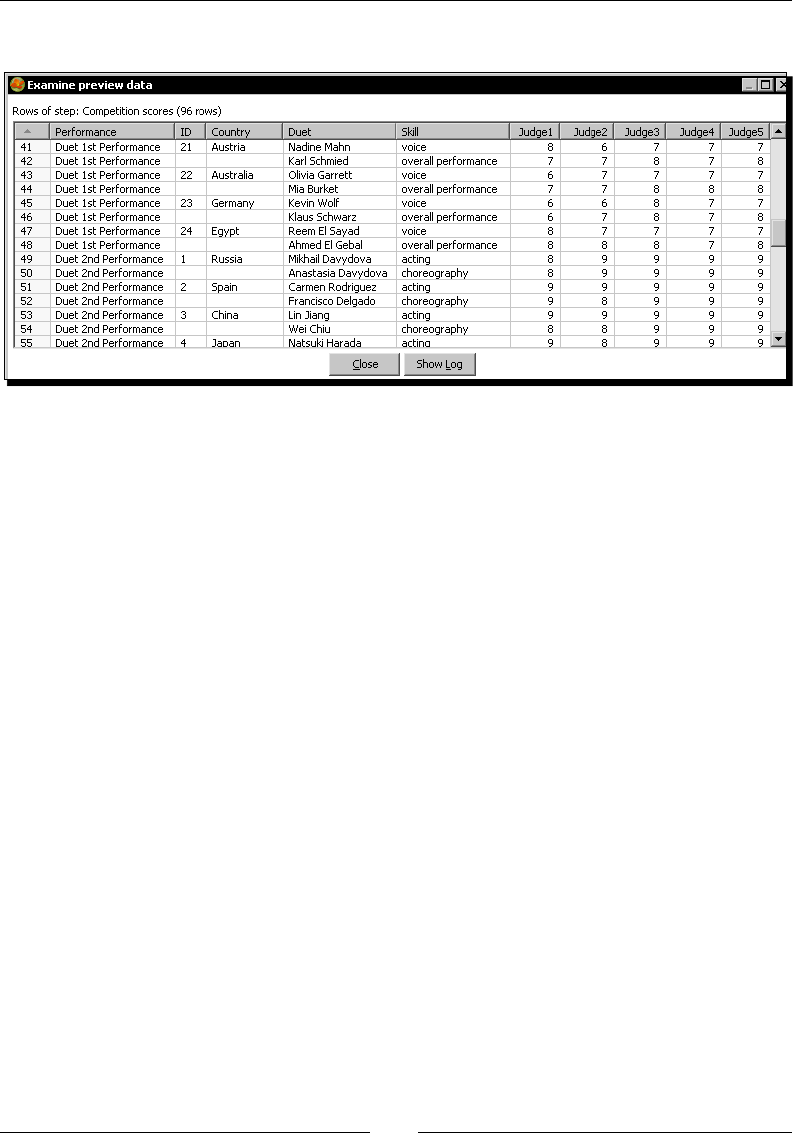

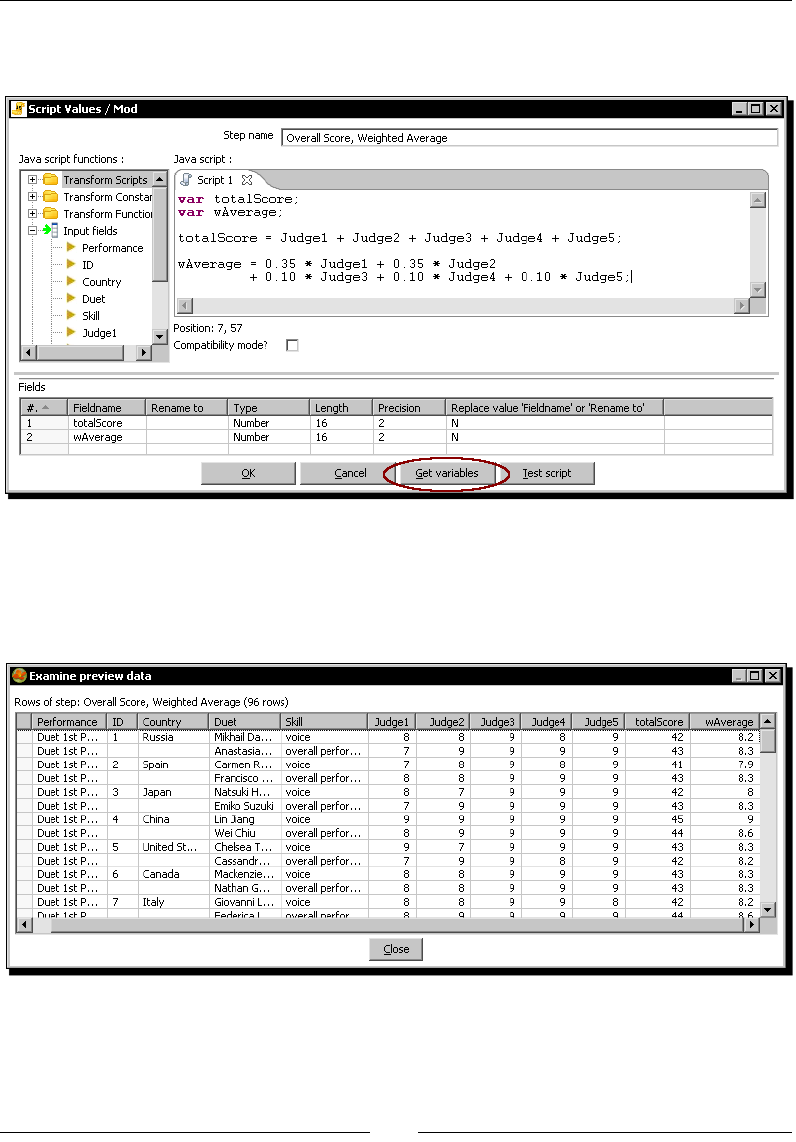

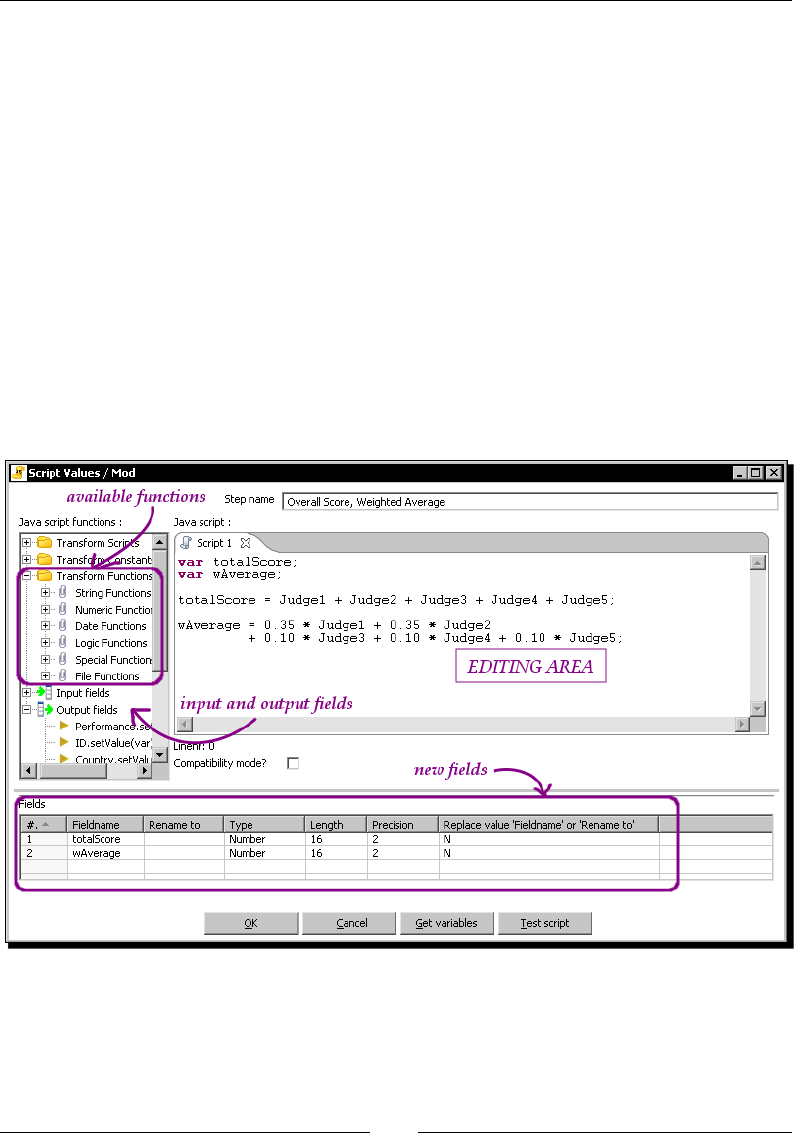

Time for acon – calculang scores with JavaScript 142

Using the JavaScript language in PDI 147

Inserng JavaScript code using the Modied Java Script Value step 148

Adding elds 150

Table of Contents

[ iv ]

Modifying elds 150

Turning on the compability switch 151

Tesng your code 151

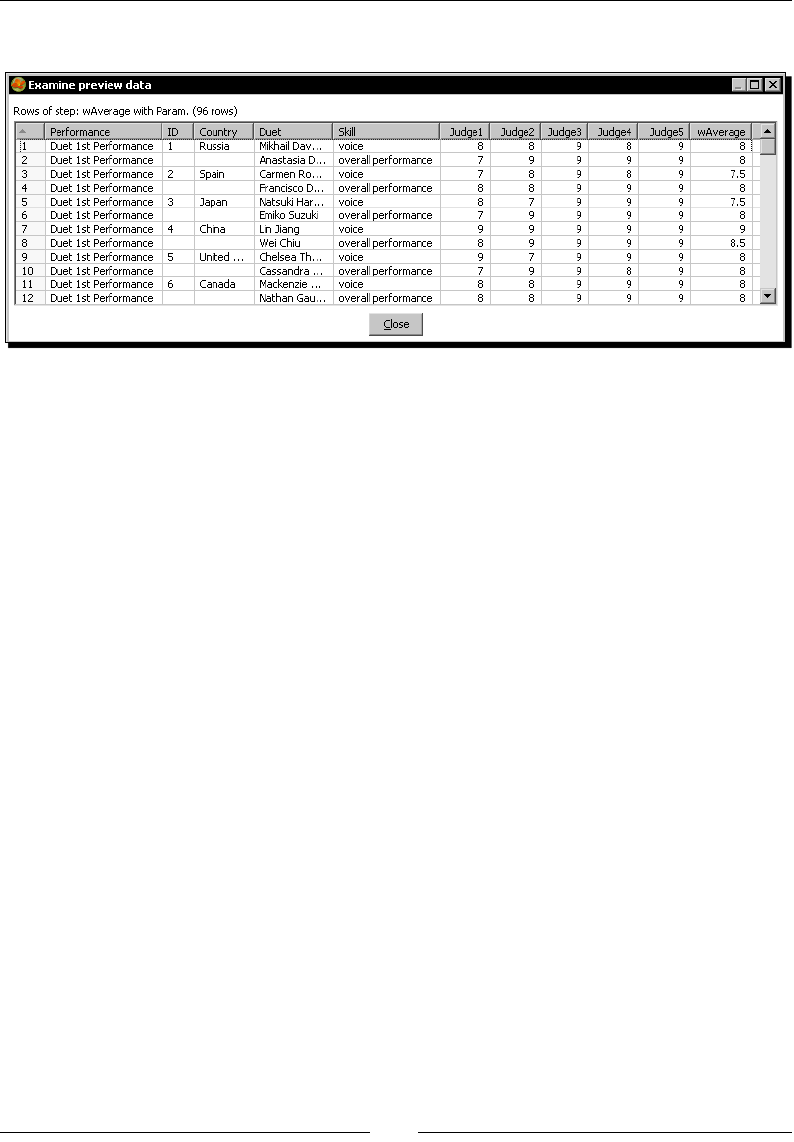

Time for acon – tesng the calculaon of averages 152

Tesng the script using the Test script buon 153

Enriching the code 154

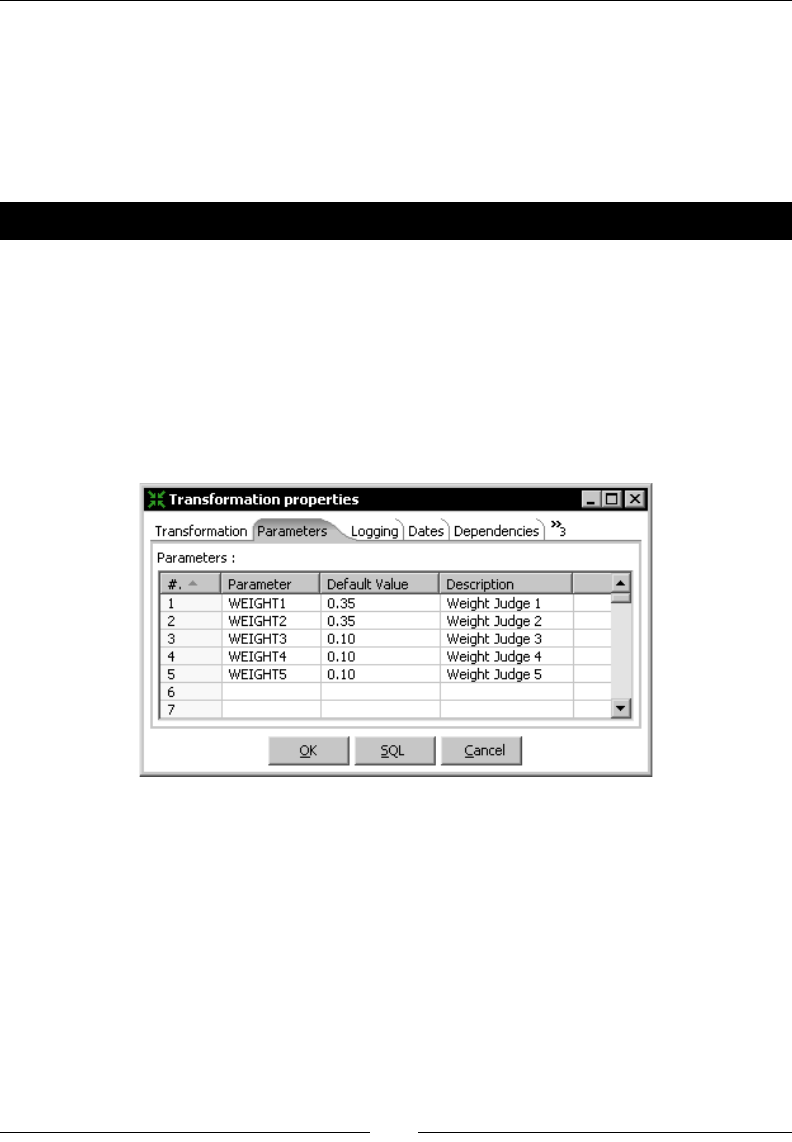

Time for acon – calculang exible scores by using variables 154

Using named parameters 158

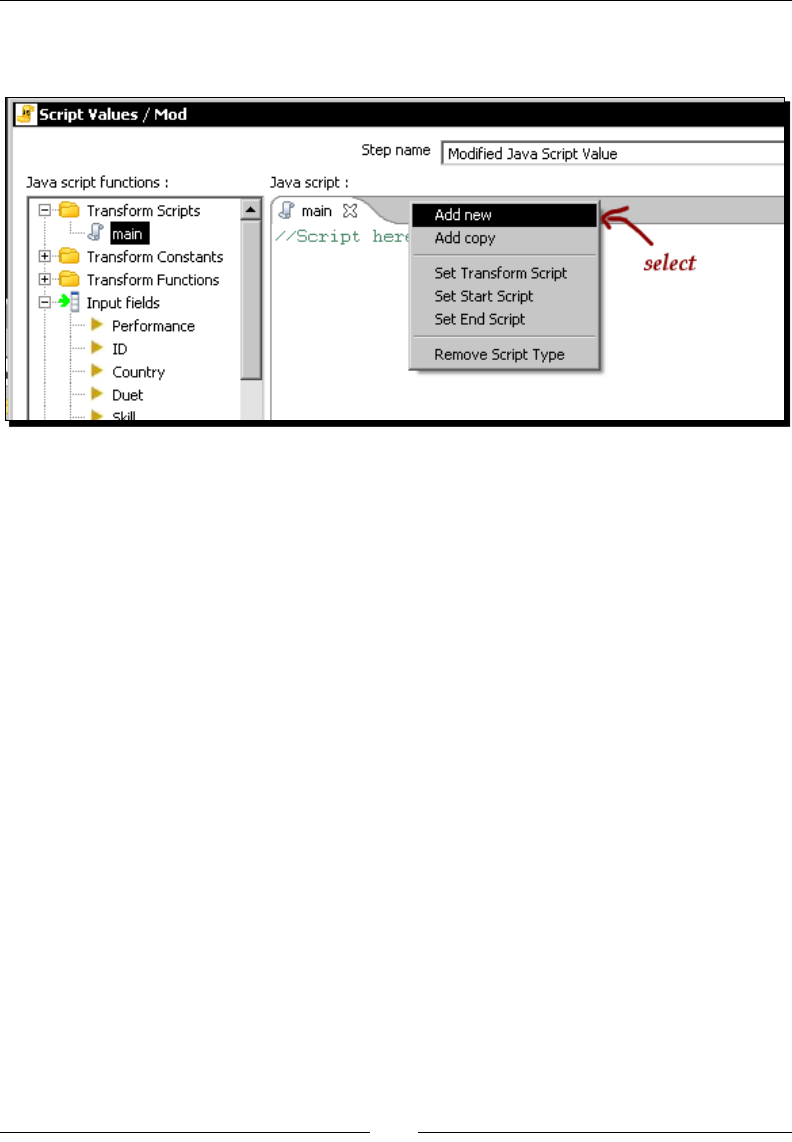

Using the special Start, Main, and End scripts 159

Using transformaon predened constants 159

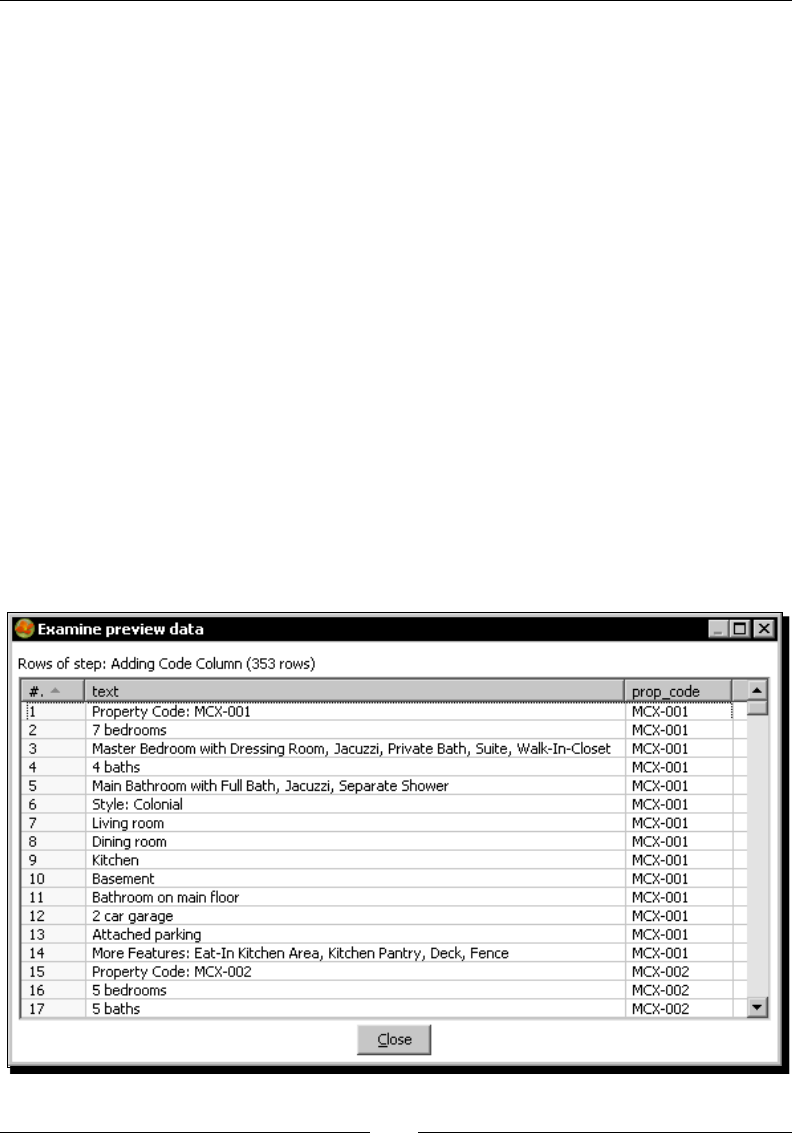

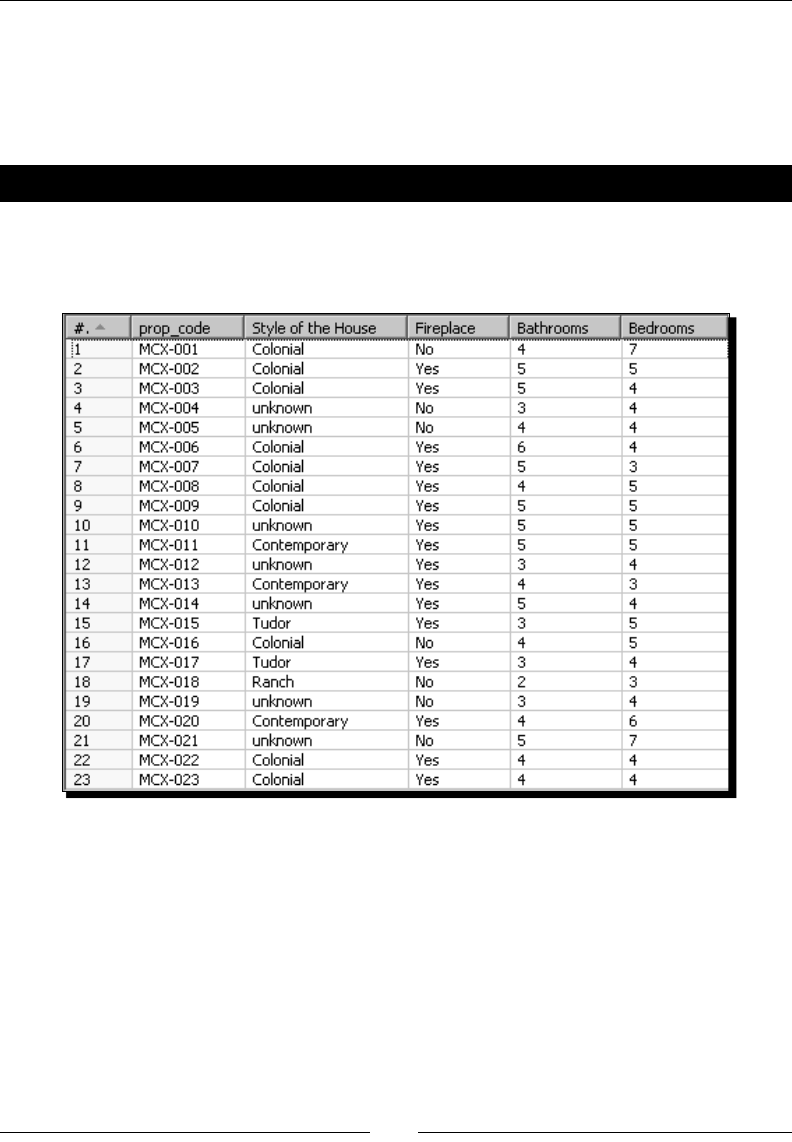

Reading and parsing unstructured les 162

Time for acon – changing a list of house descripons with JavaScript 162

Looking at previous rows 164

Avoiding coding by using purpose-built steps 165

Summary 167

Chapter 6: Transforming the Row Set 169

Converng rows to columns 169

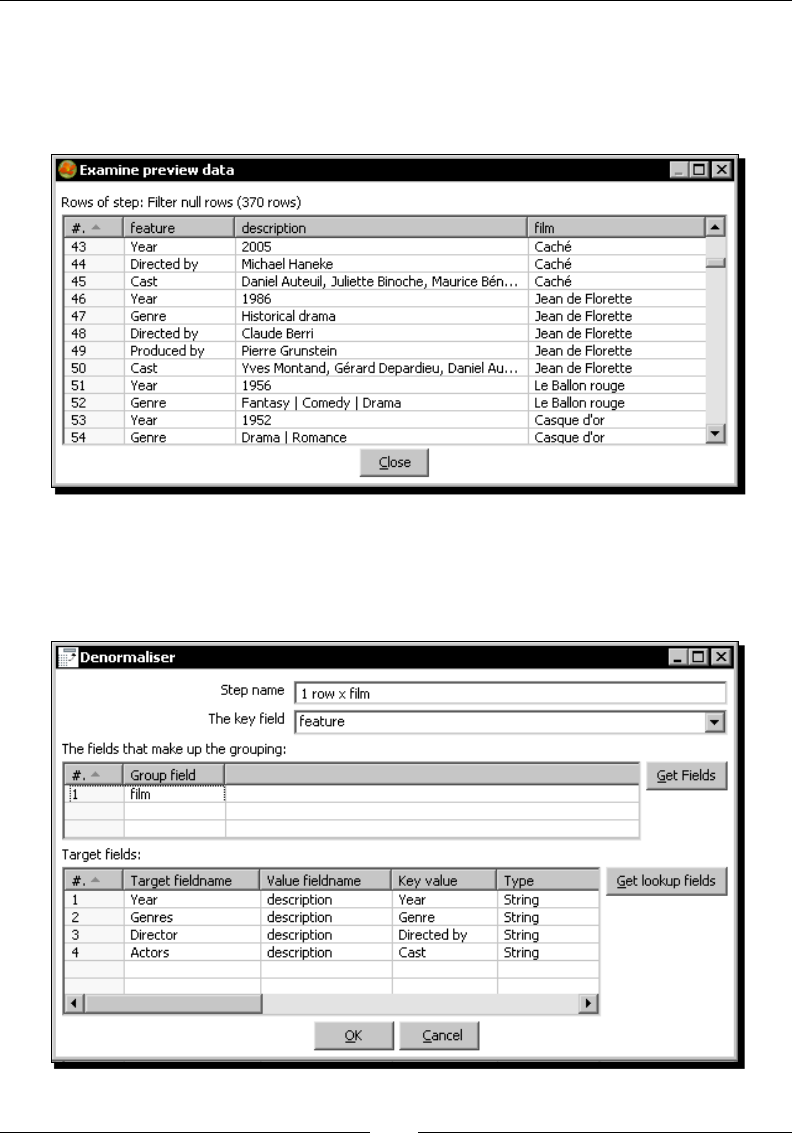

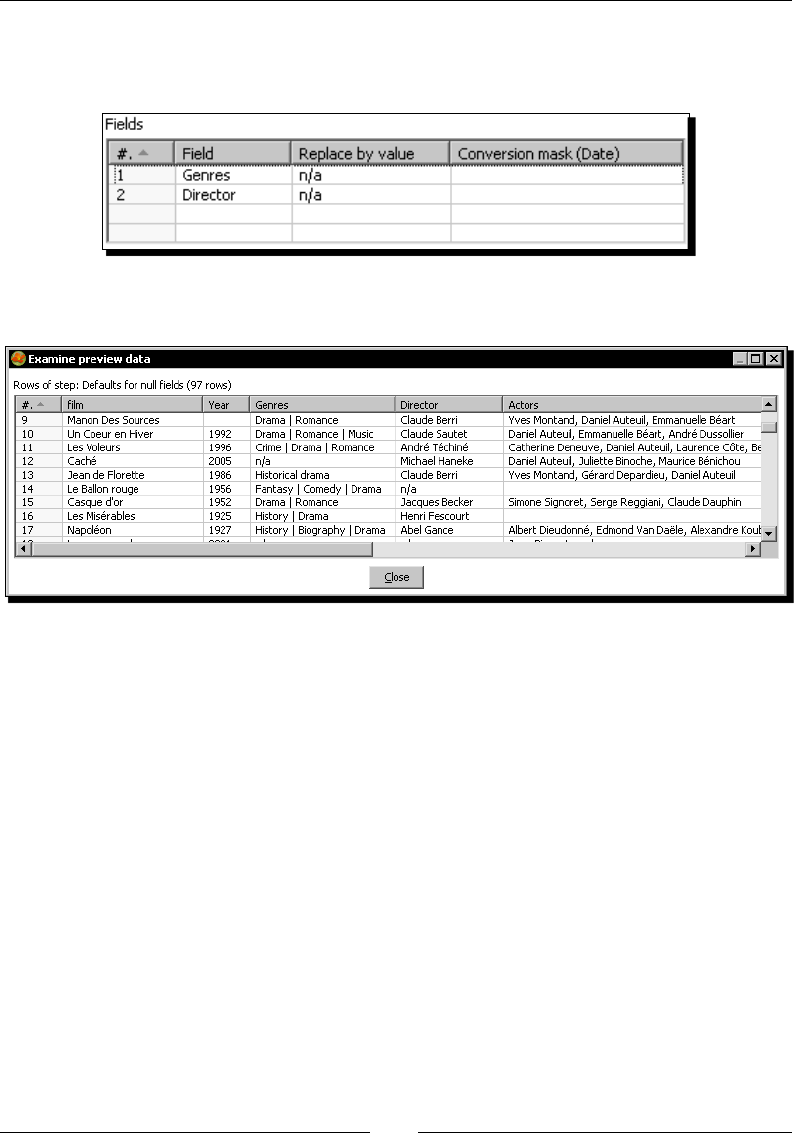

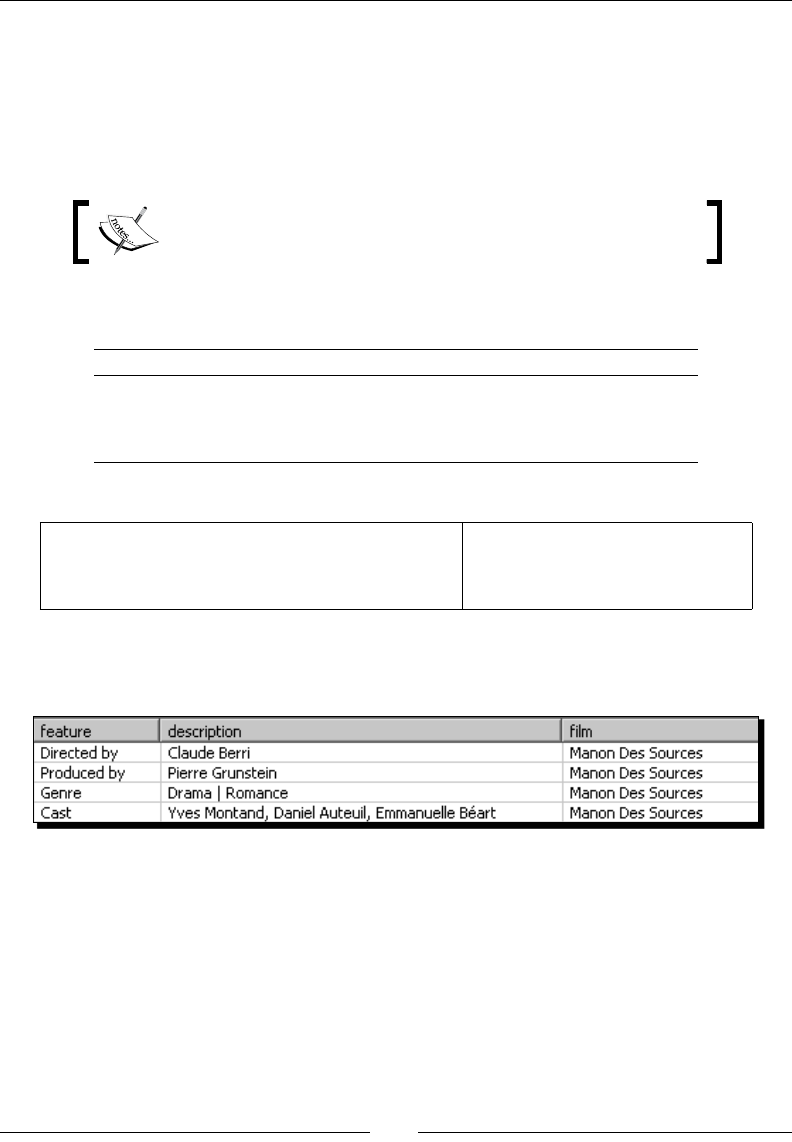

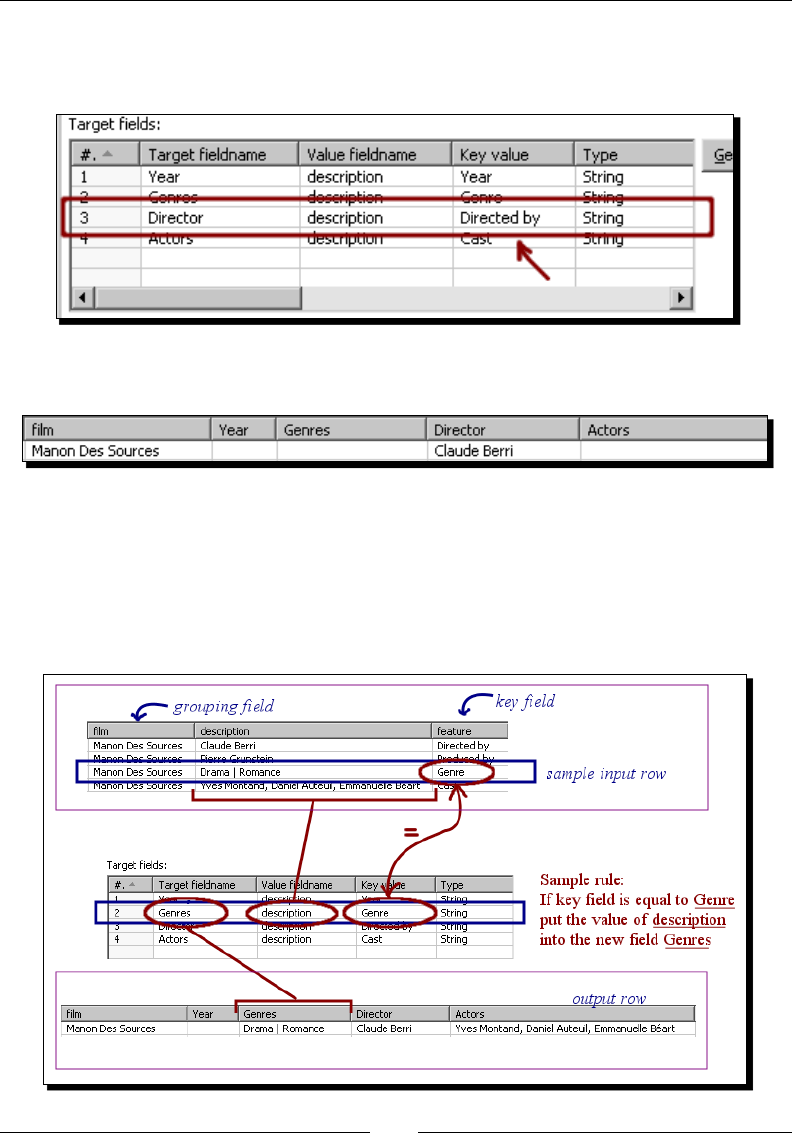

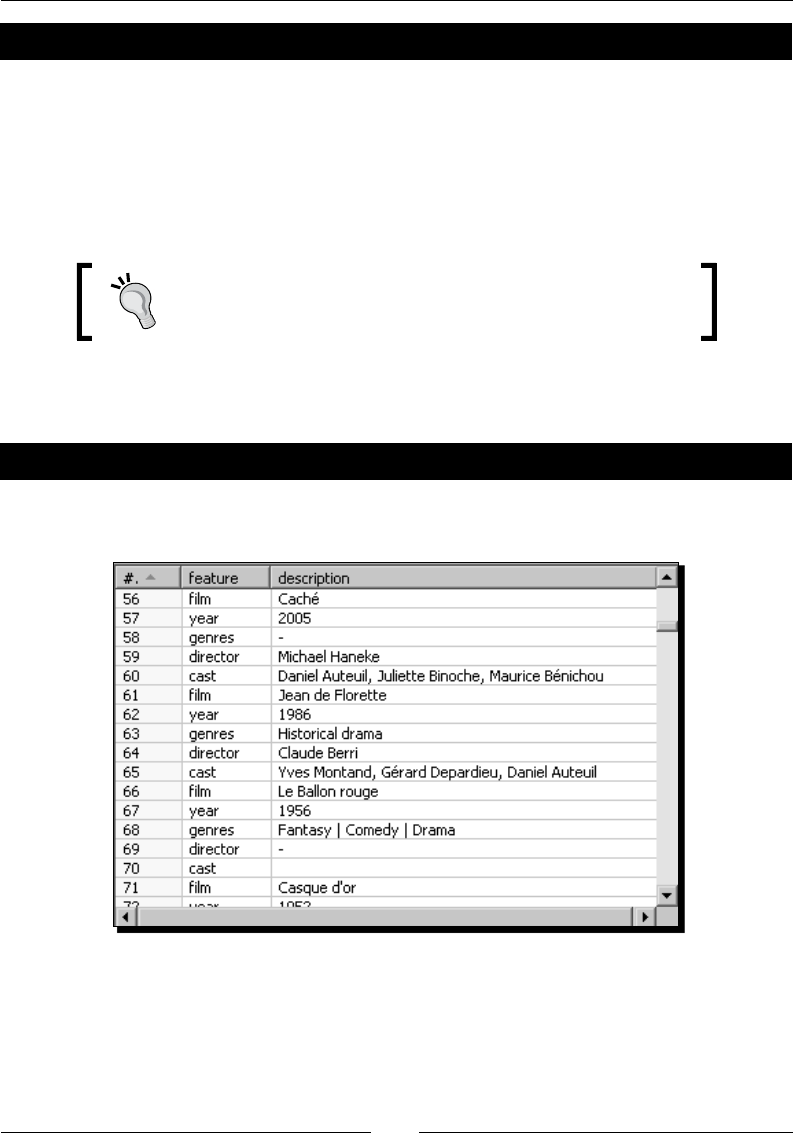

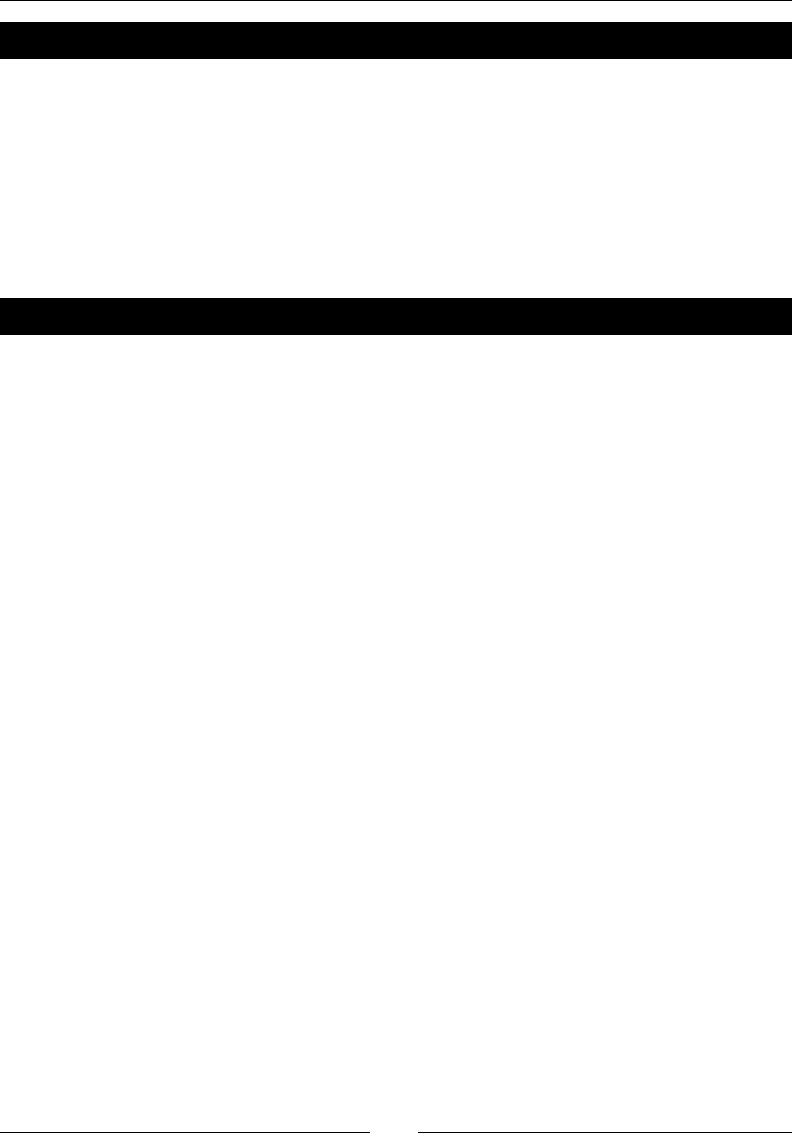

Time for acon – enhancing a lms le by converng rows to columns 170

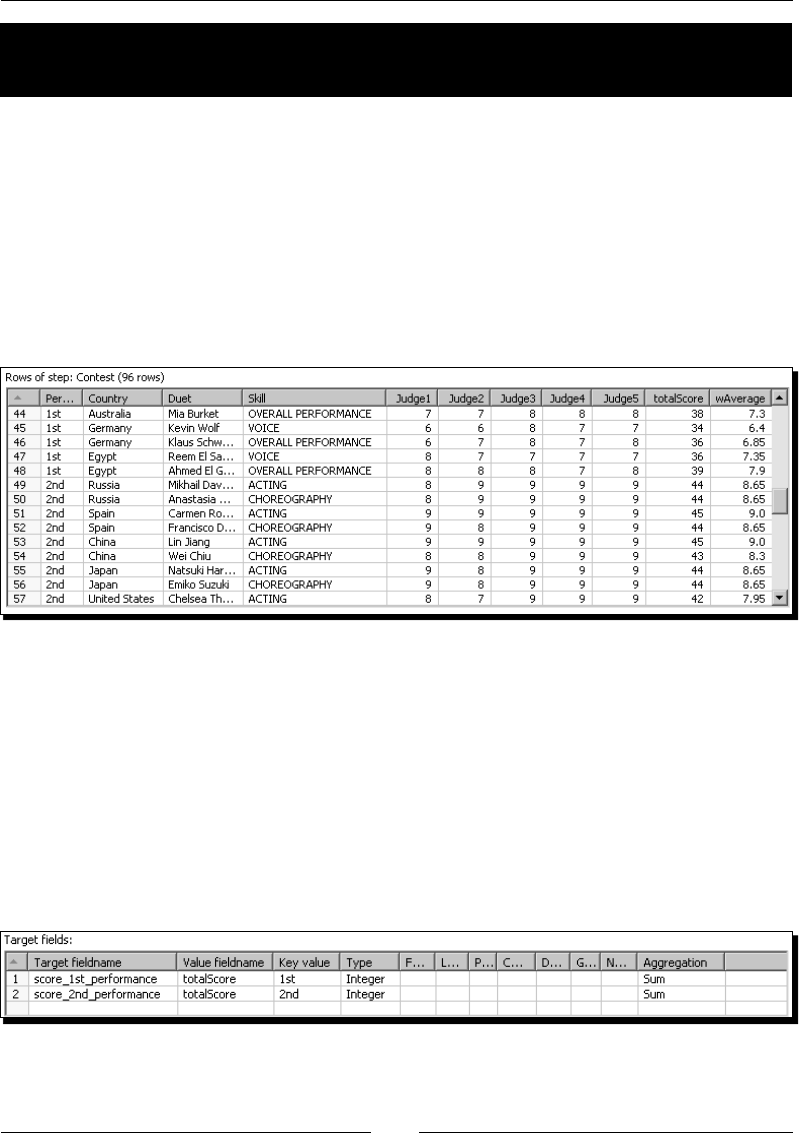

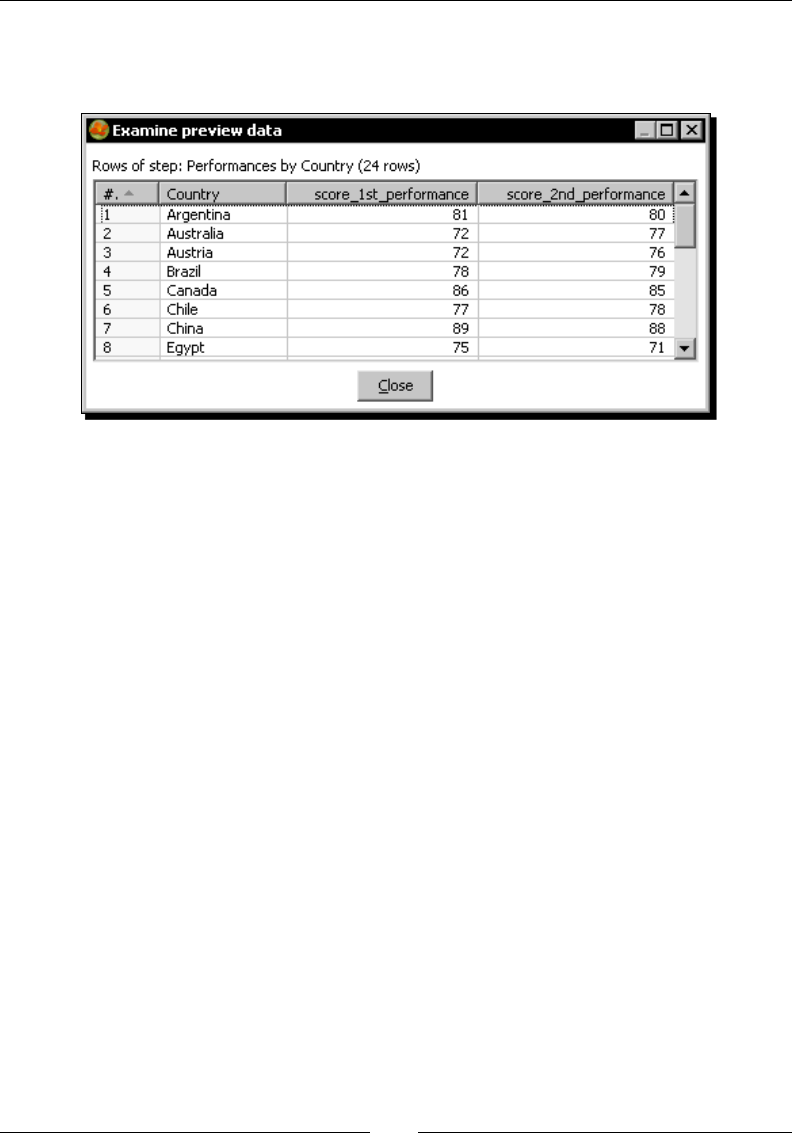

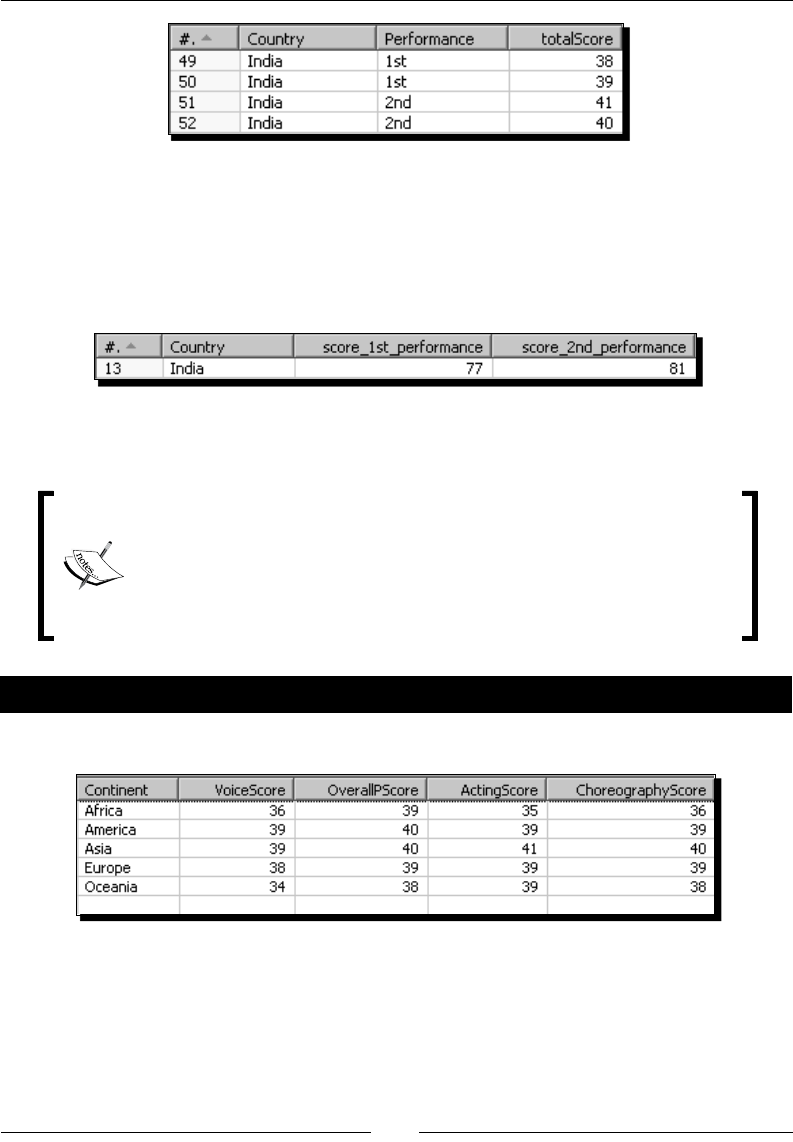

Converng row data to column data by using the Row denormalizer step 173

Aggregang data with a Row denormalizer step 176

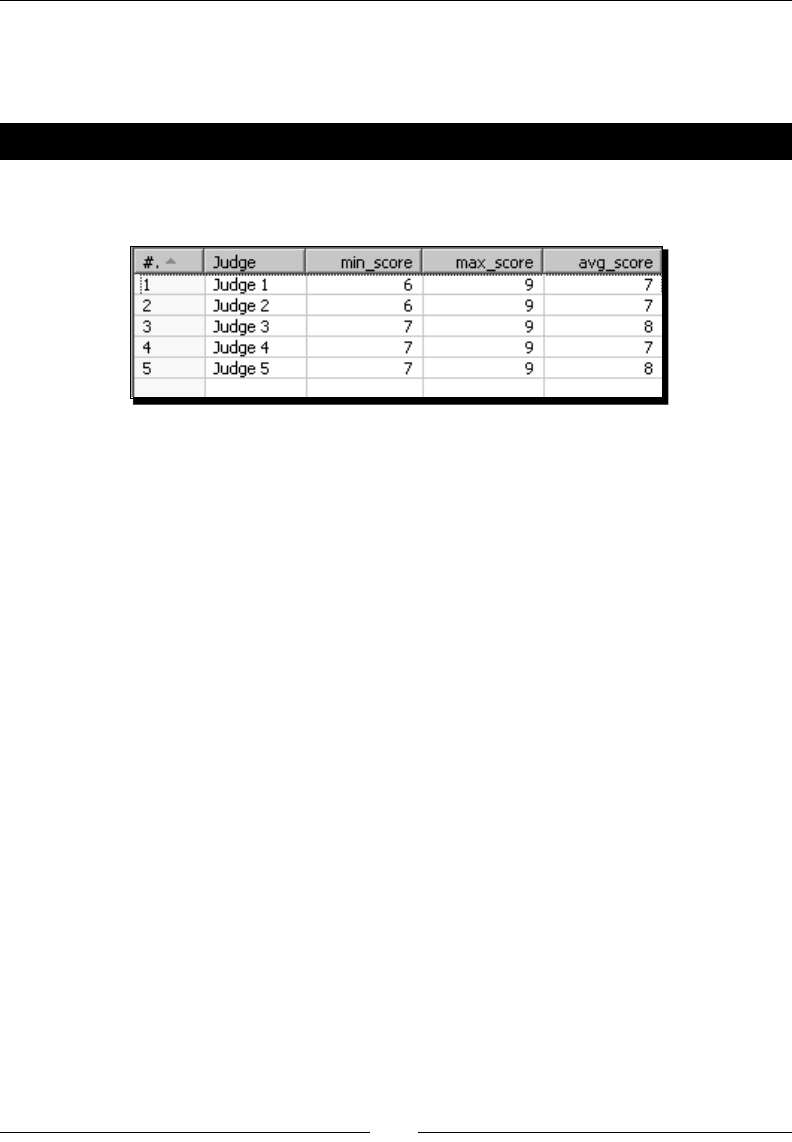

Time for acon – calculang total scores by performances by country 177

Using Row denormalizer for aggregang data 178

Normalizing data 180

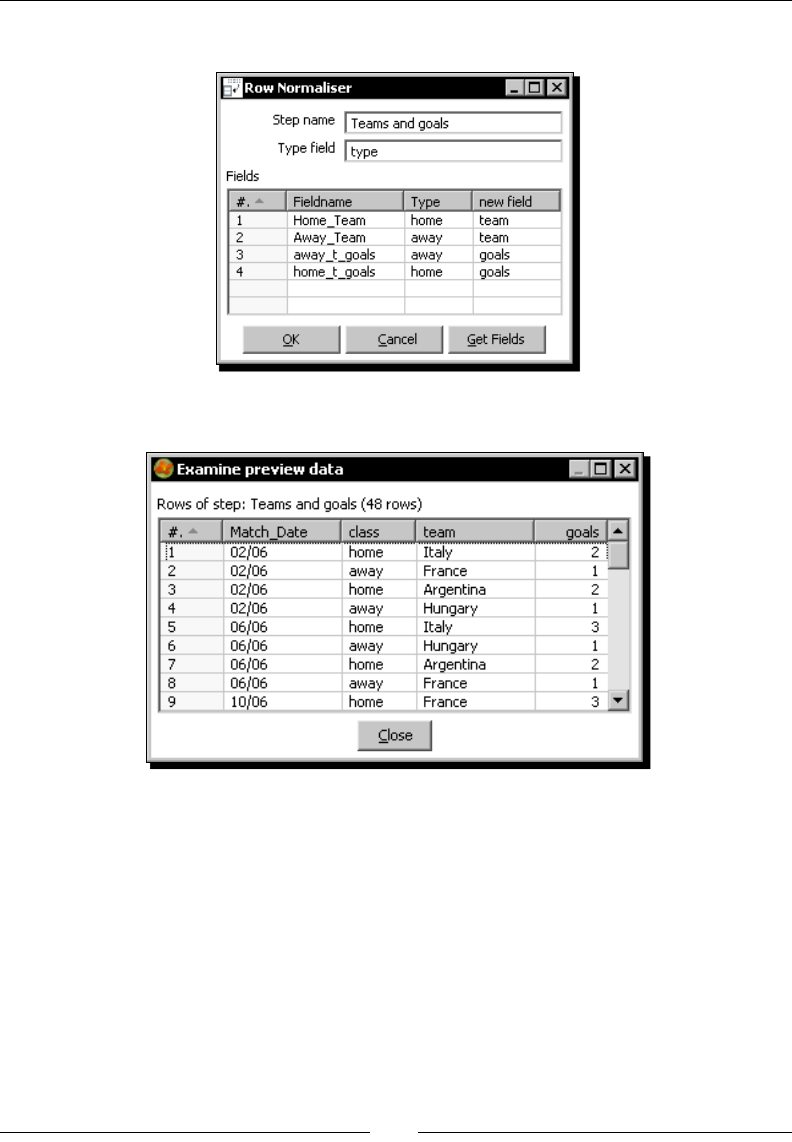

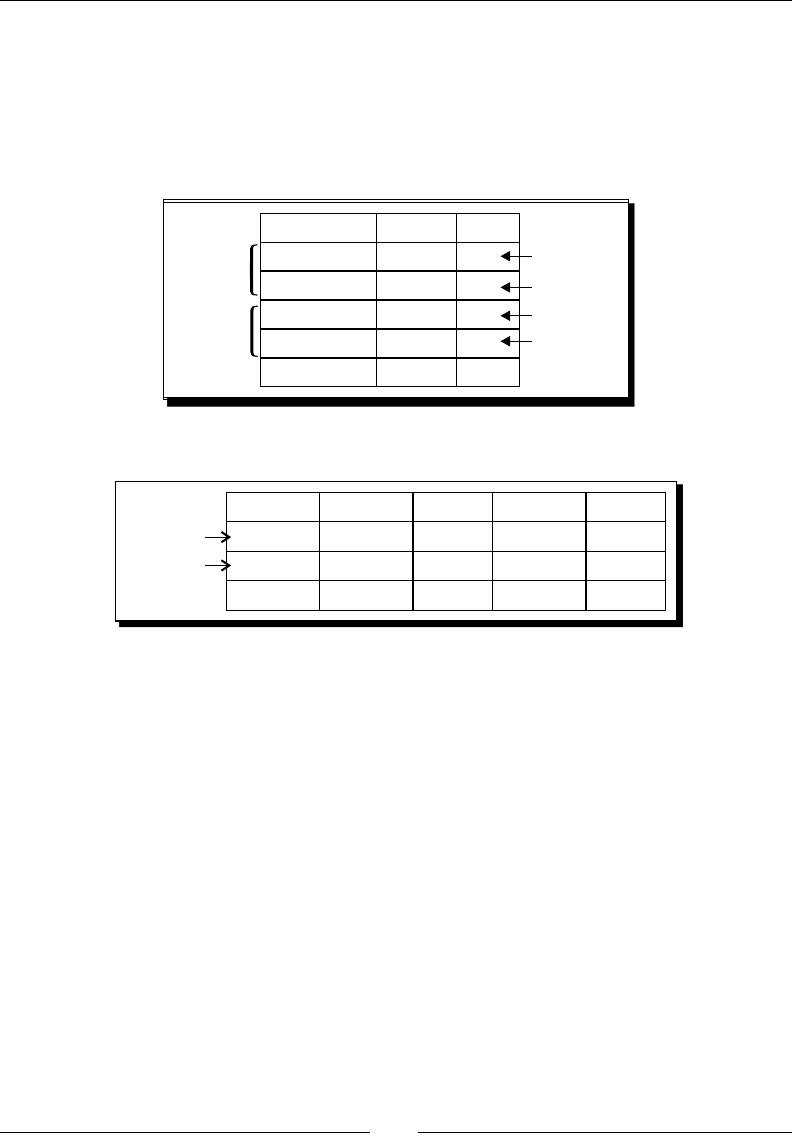

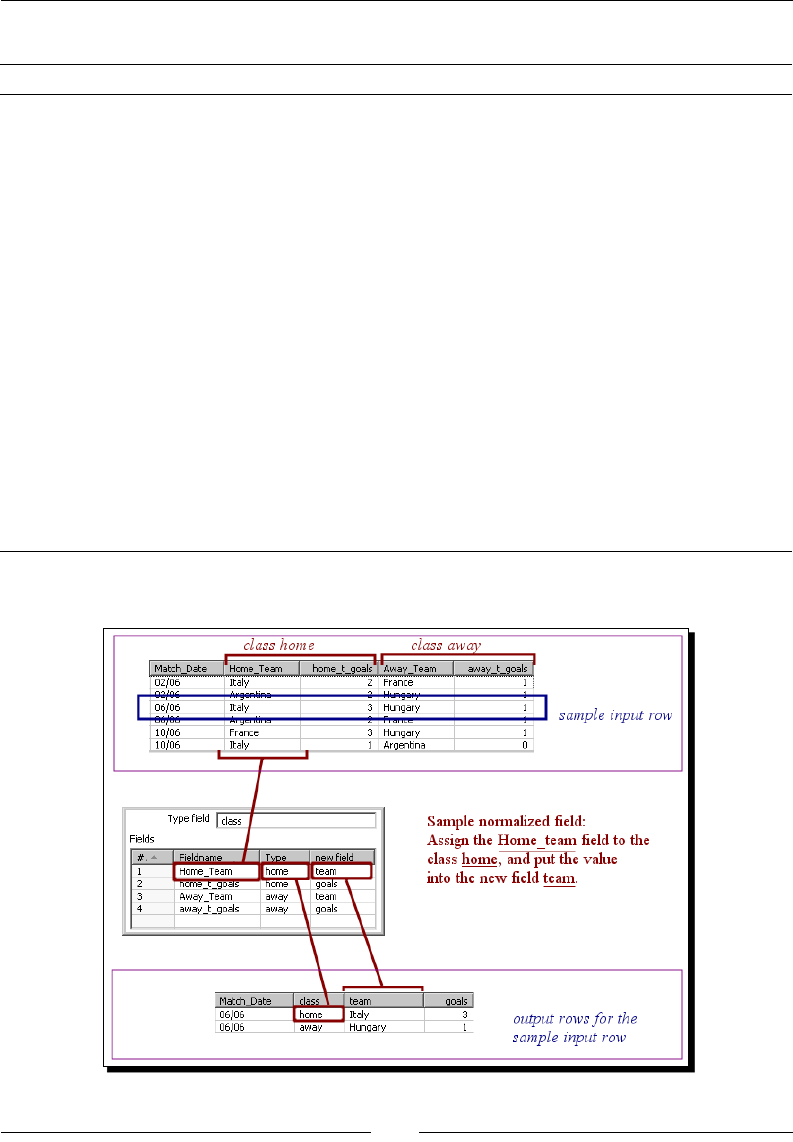

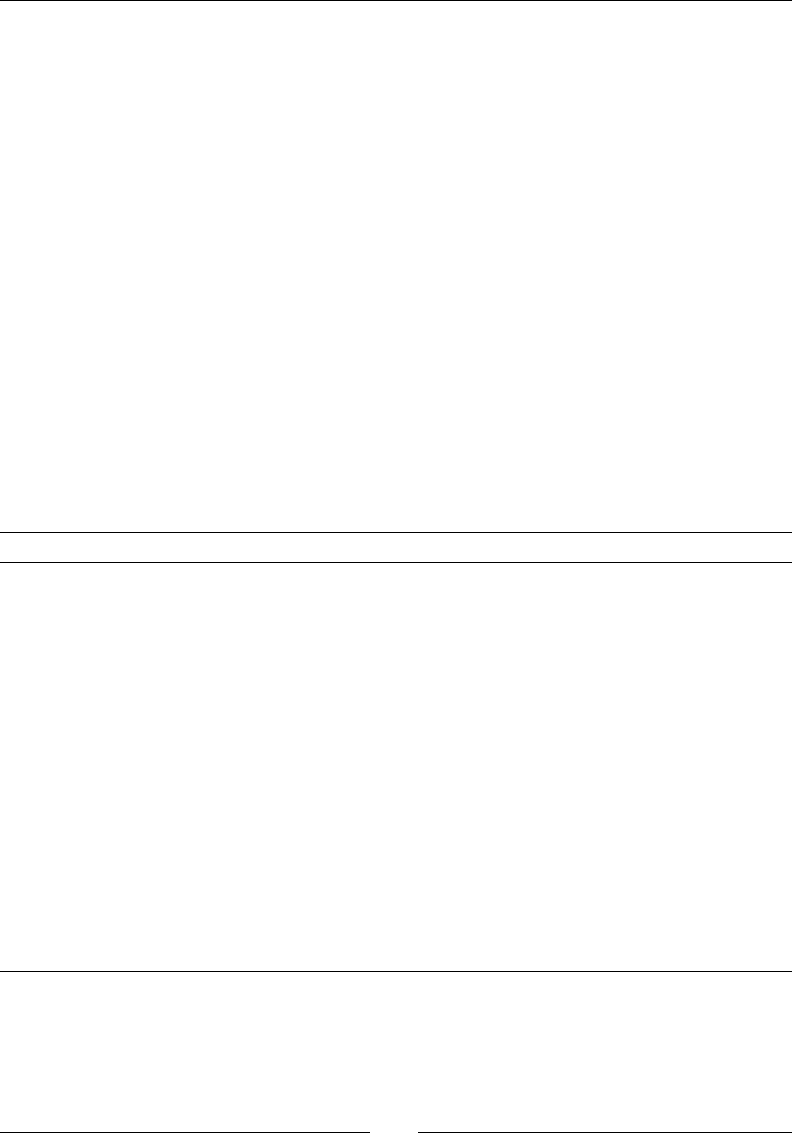

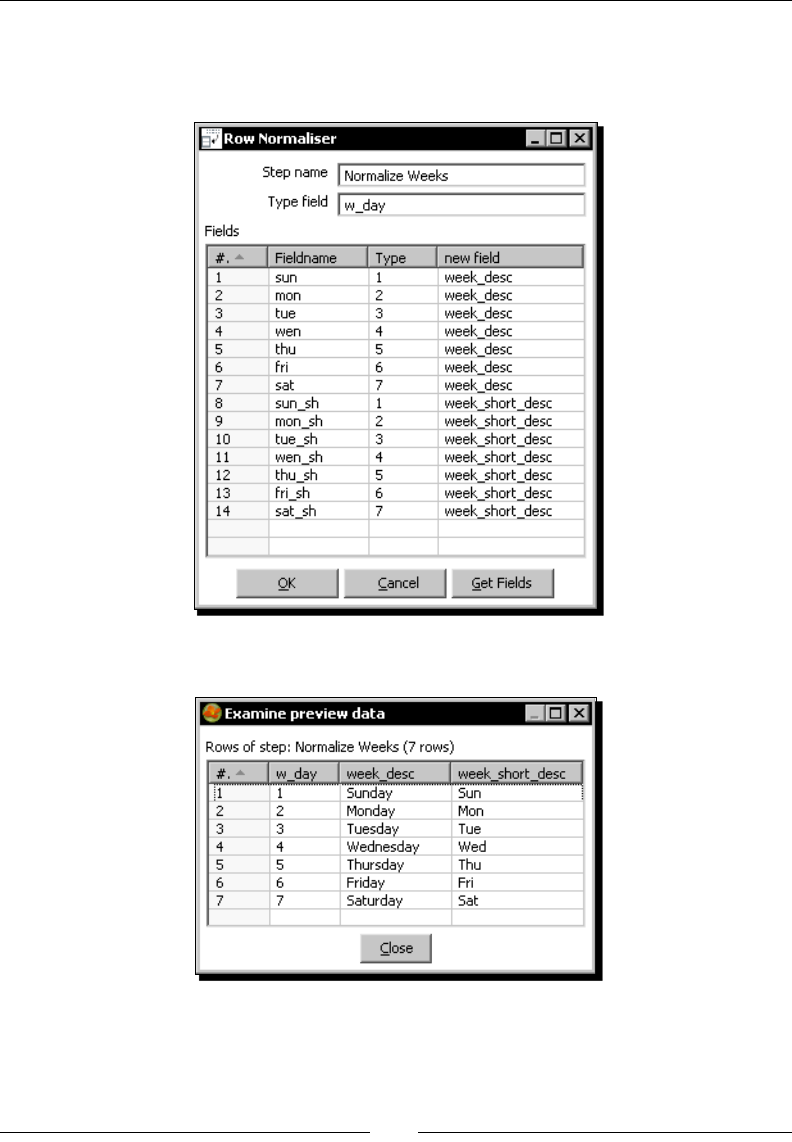

Time for acon – enhancing the matches le by normalizing the dataset 180

Modifying the dataset with a Row Normalizer step 182

Summarizing the PDI steps that operate on sets of rows 184

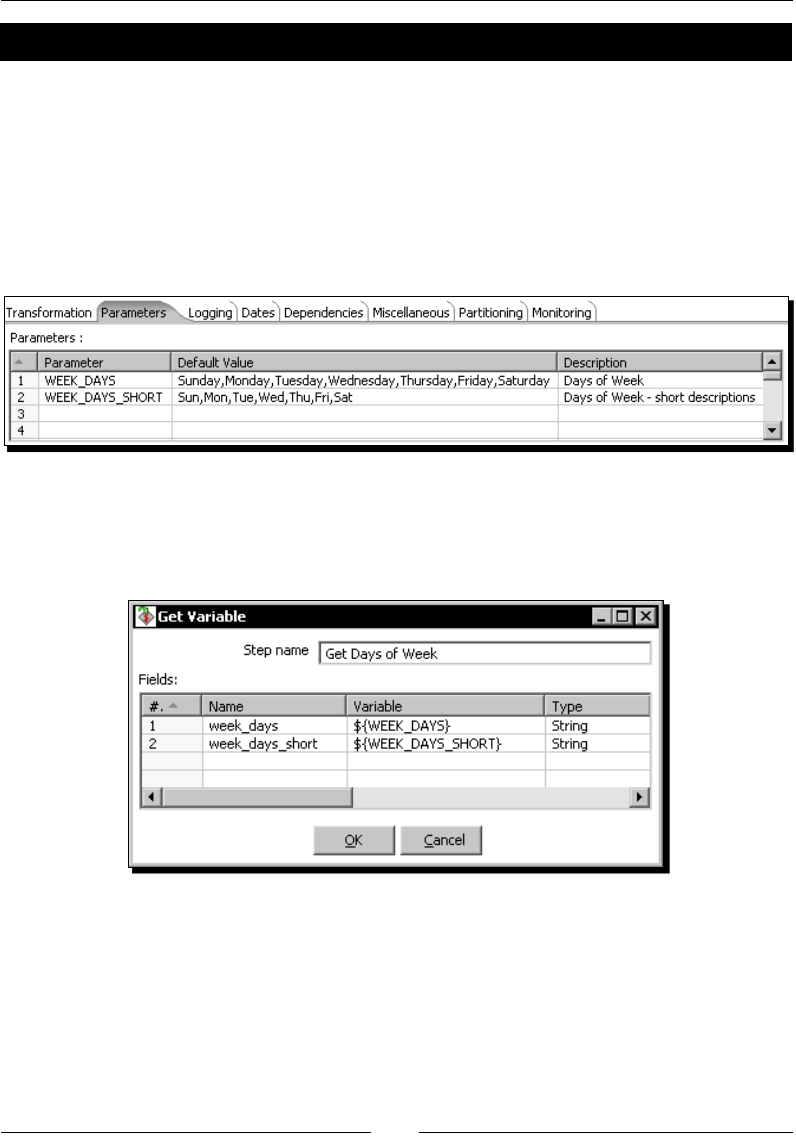

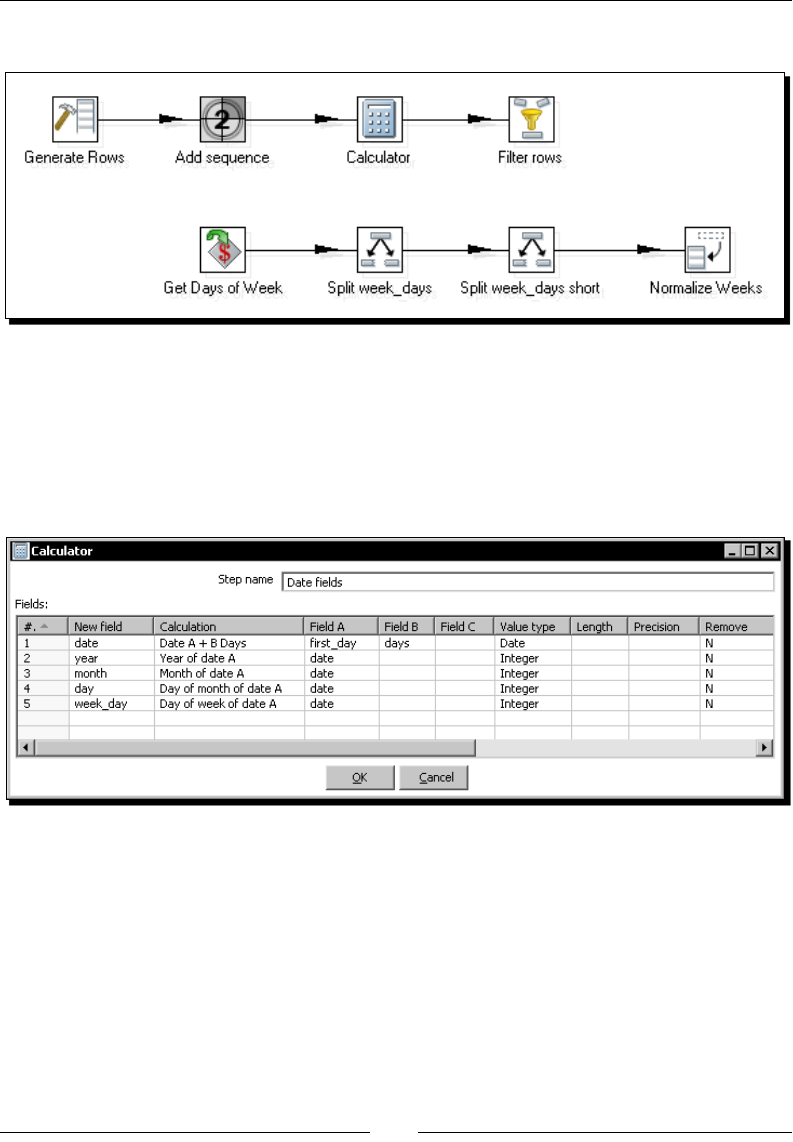

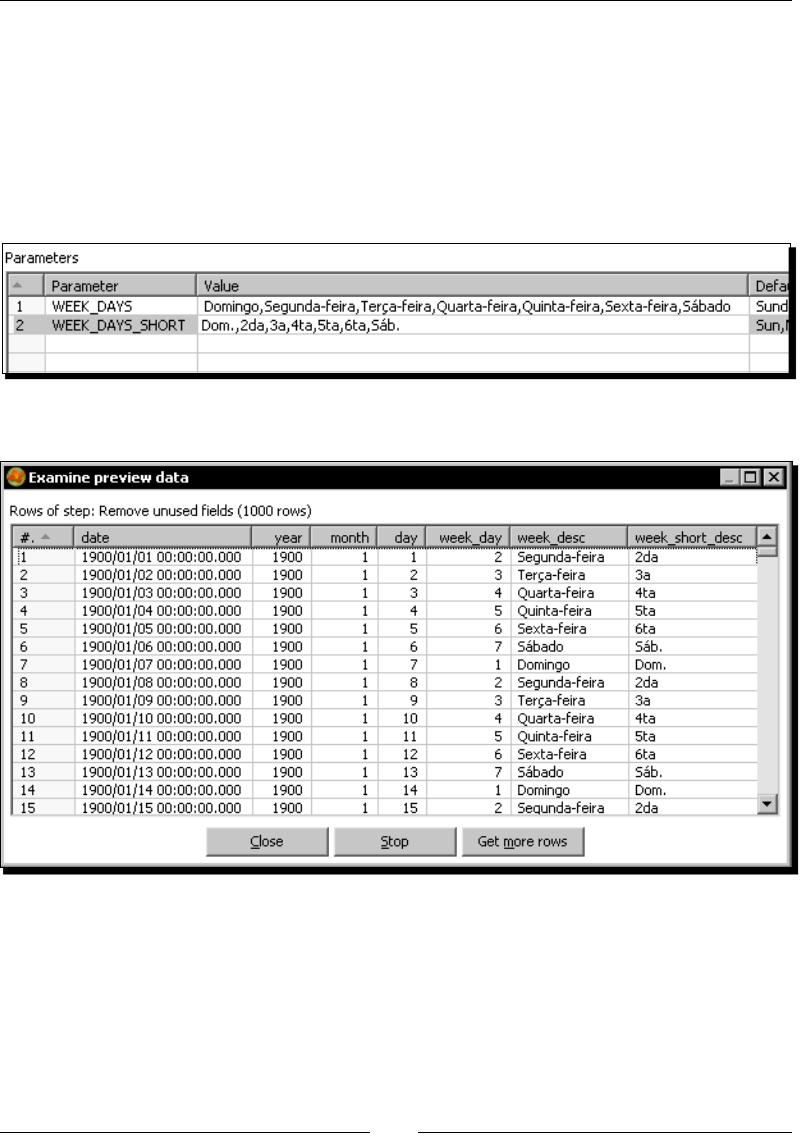

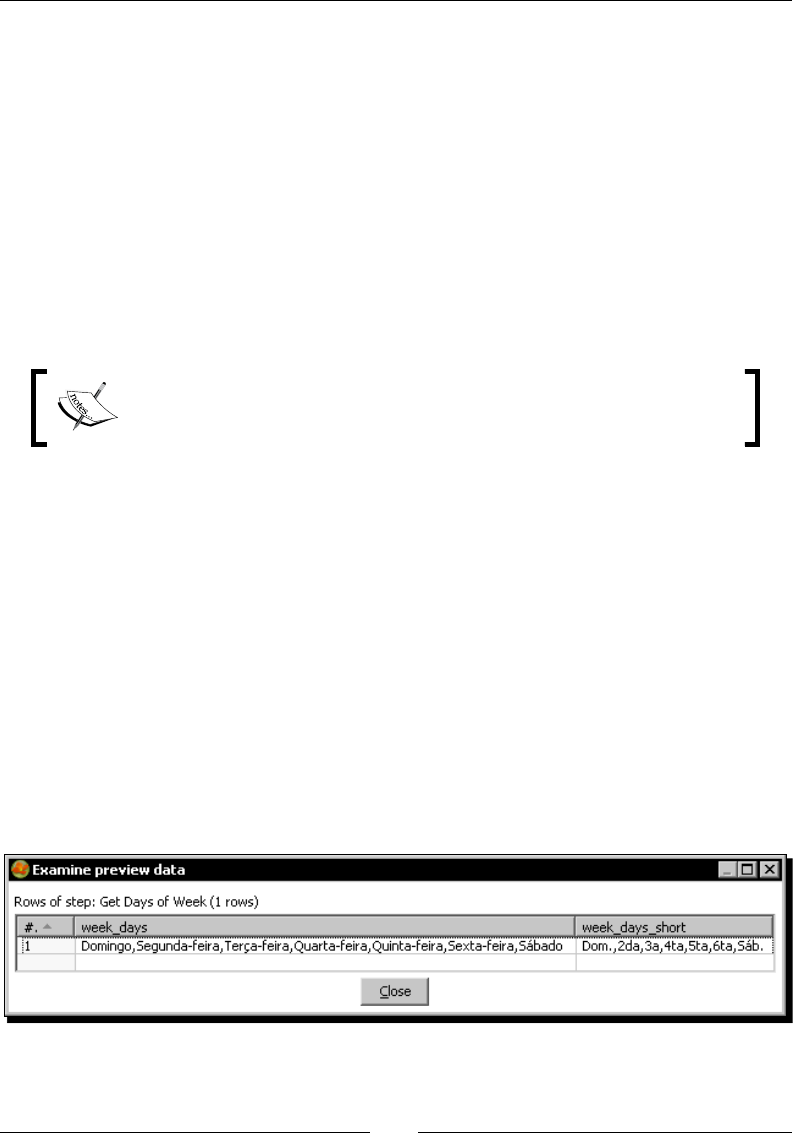

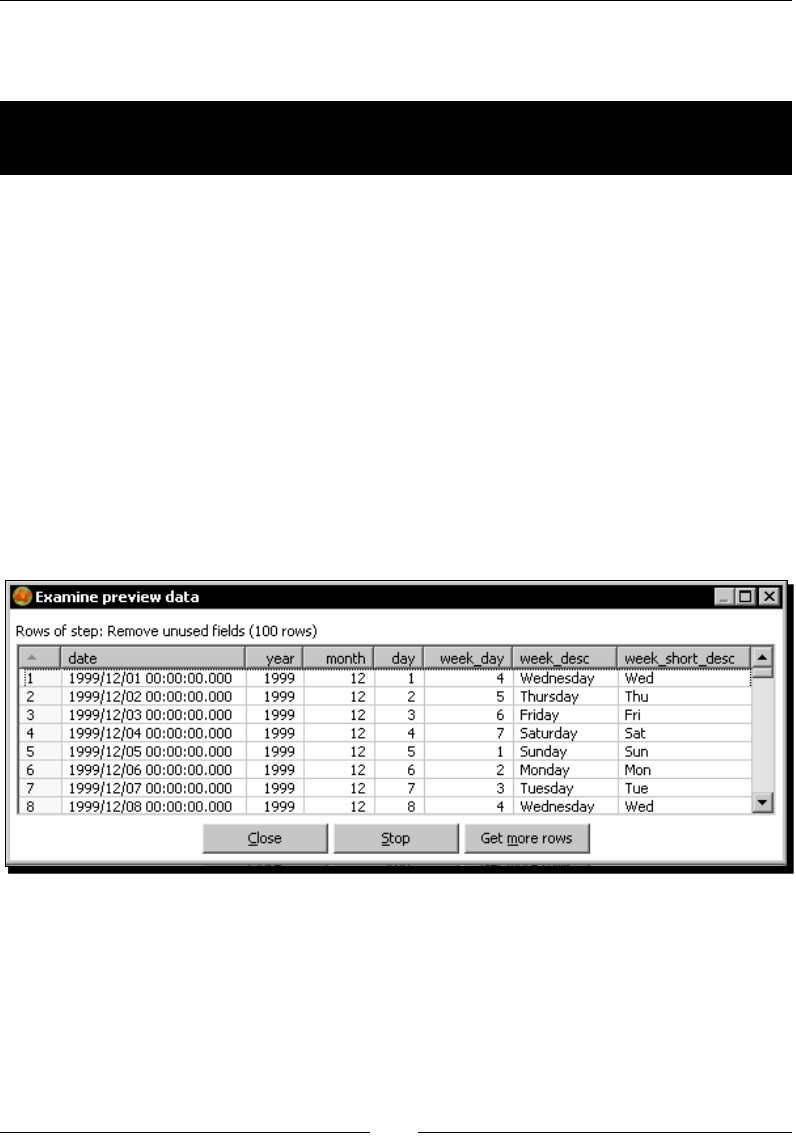

Generang a custom me dimension dataset by using Kele variables 186

Time for acon – creang the me dimension dataset 187

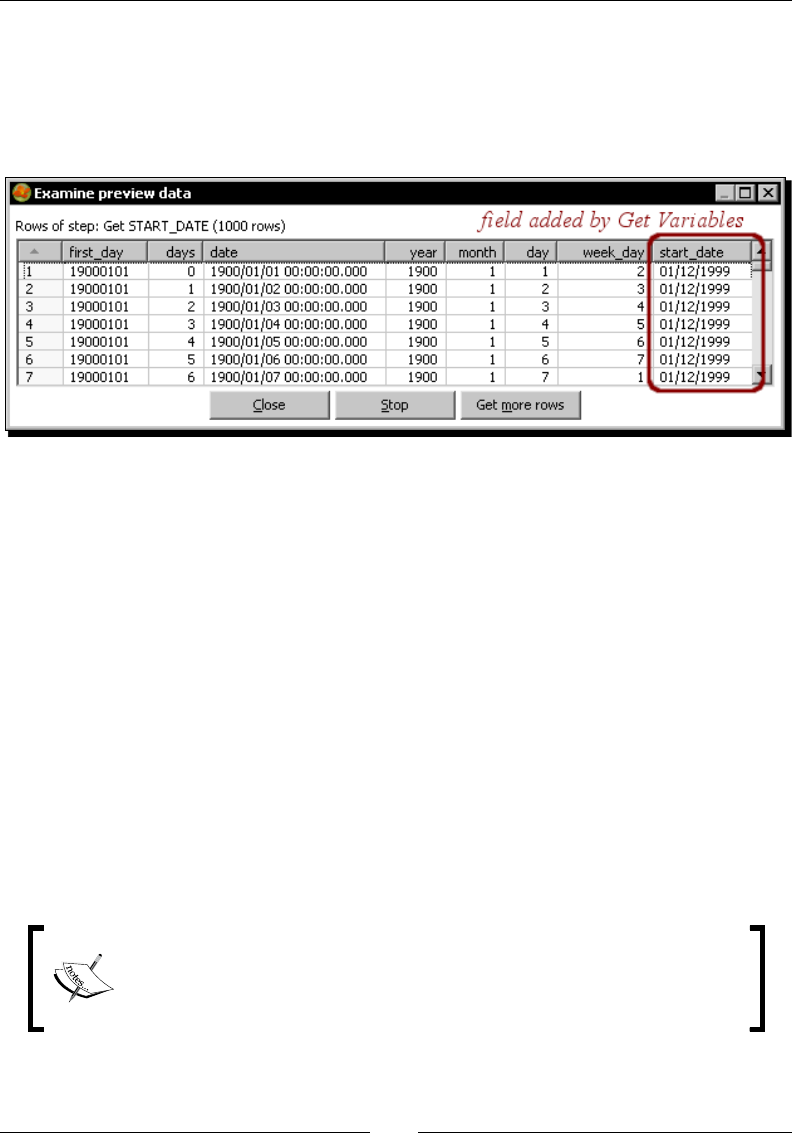

Geng variables 191

Time for acon – geng variables for seng the default starng date 192

Using the Get Variables step 193

Summary 194

Chapter 7: Validang Data and Handling Errors 195

Capturing errors 195

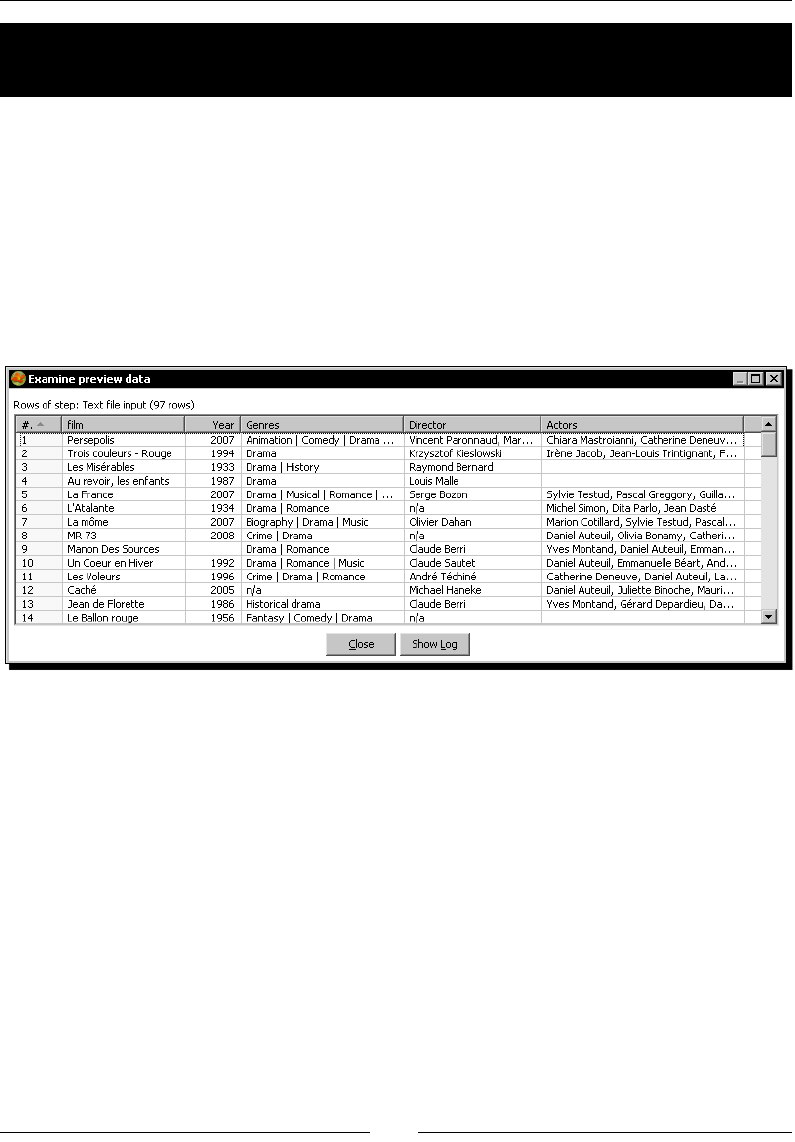

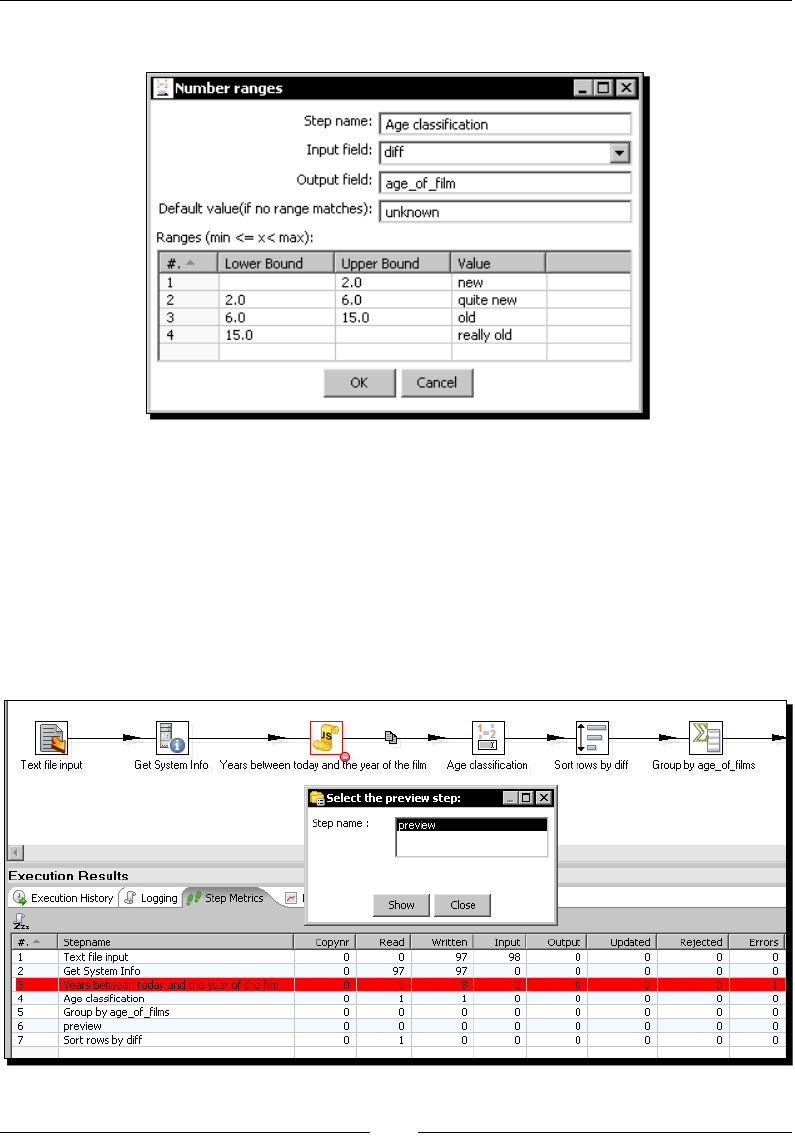

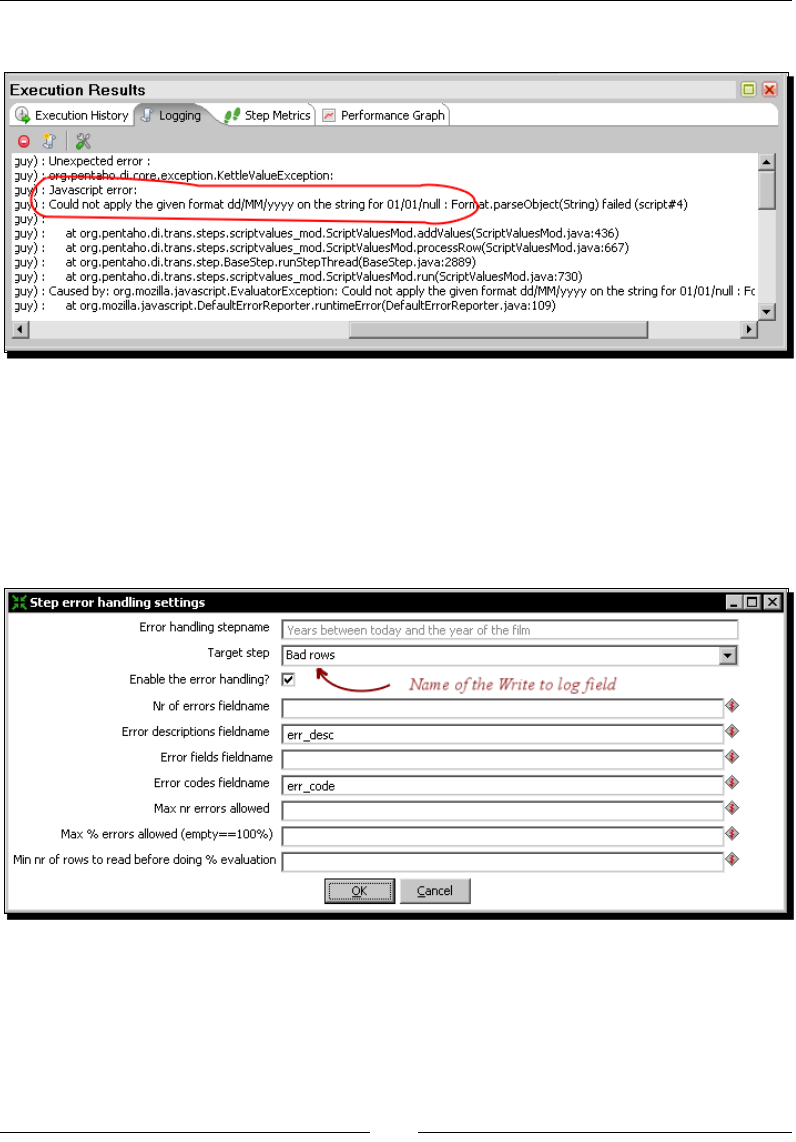

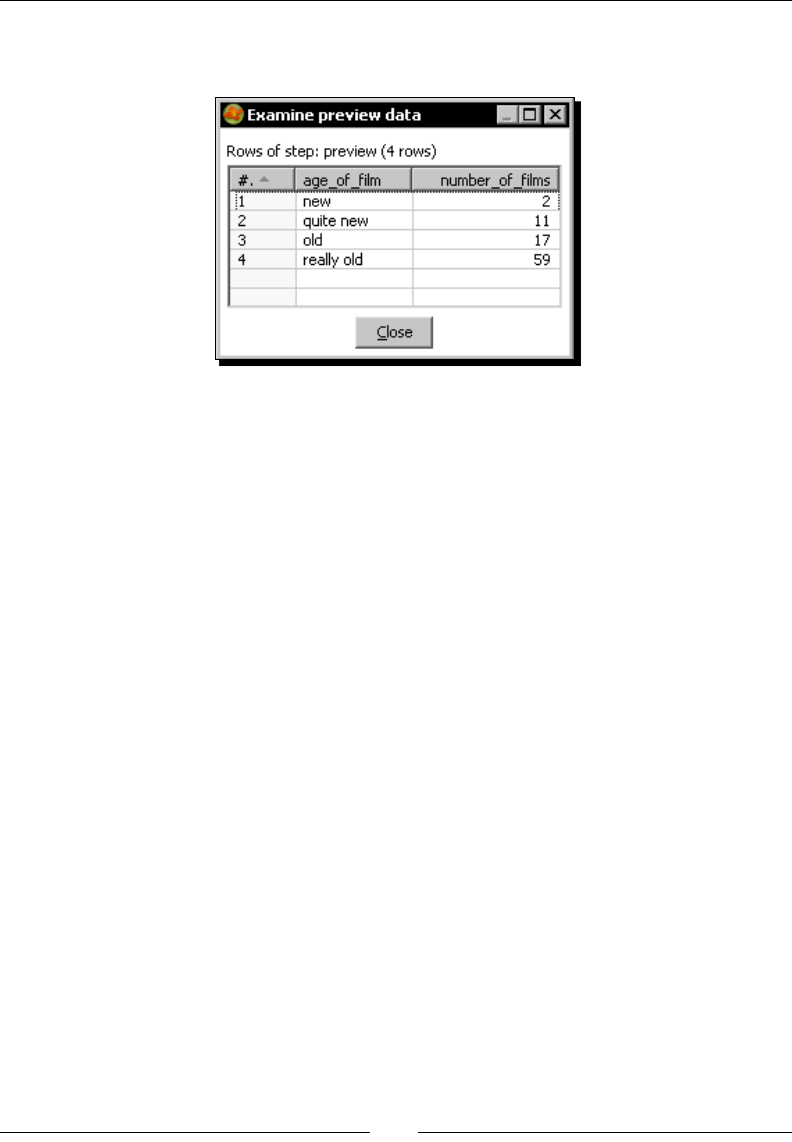

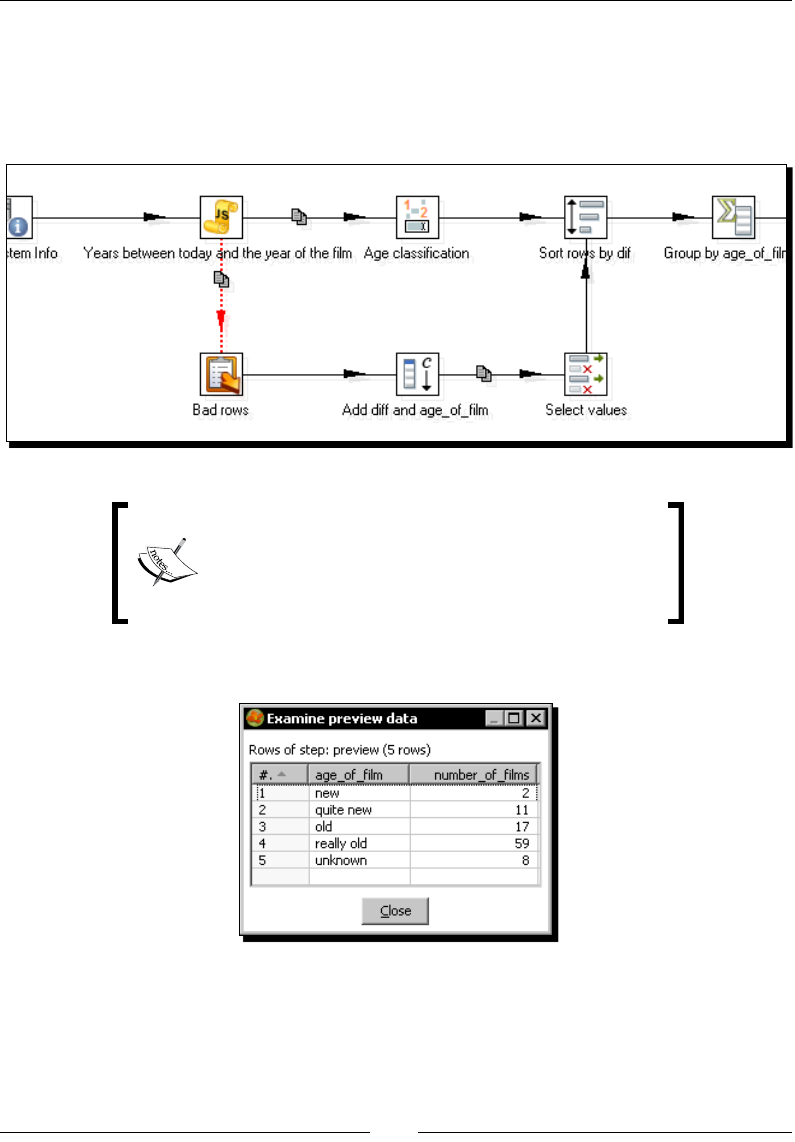

Time for acon – capturing errors while calculang the age of a lm 196

Using PDI error handling funconality 200

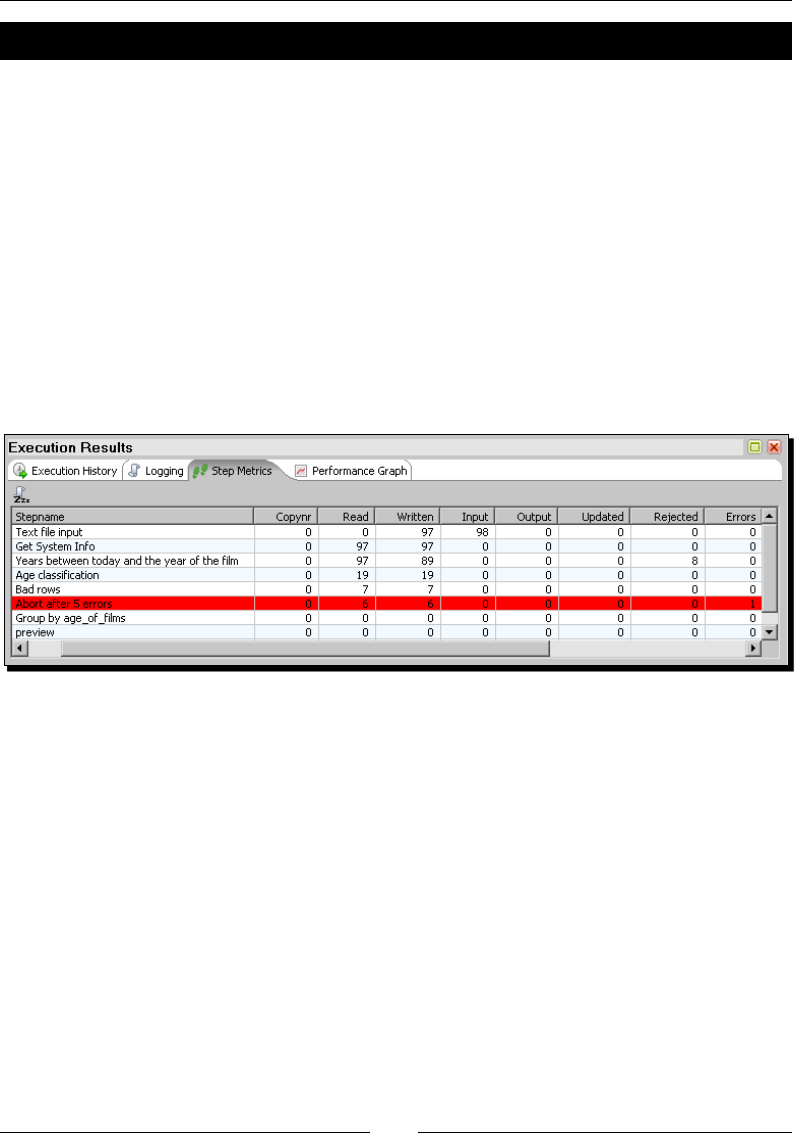

Aborng a transformaon 201

Time for acon – aborng when there are too many errors 202

Aborng a transformaon using the Abort step 203

Fixing captured errors 203

Table of Contents

[ v ]

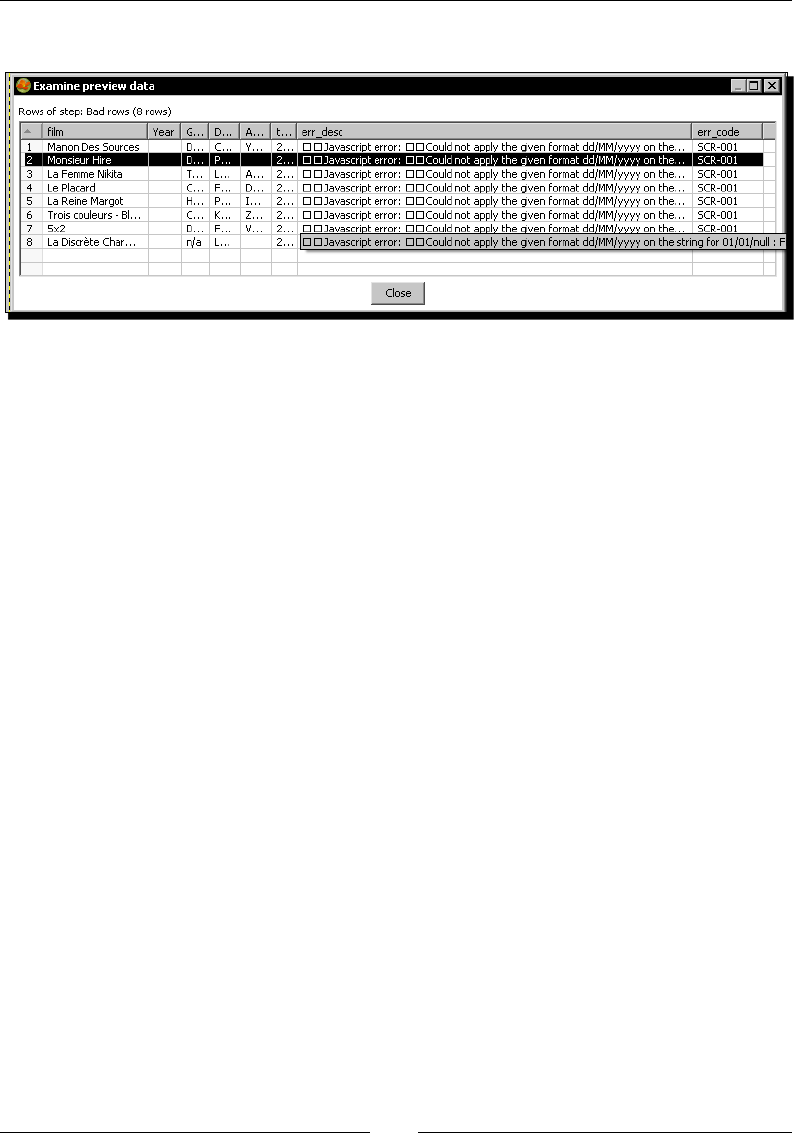

Time for acon – treang errors that may appear 203

Treang rows coming to the error stream 205

Avoiding unexpected errors by validang data 206

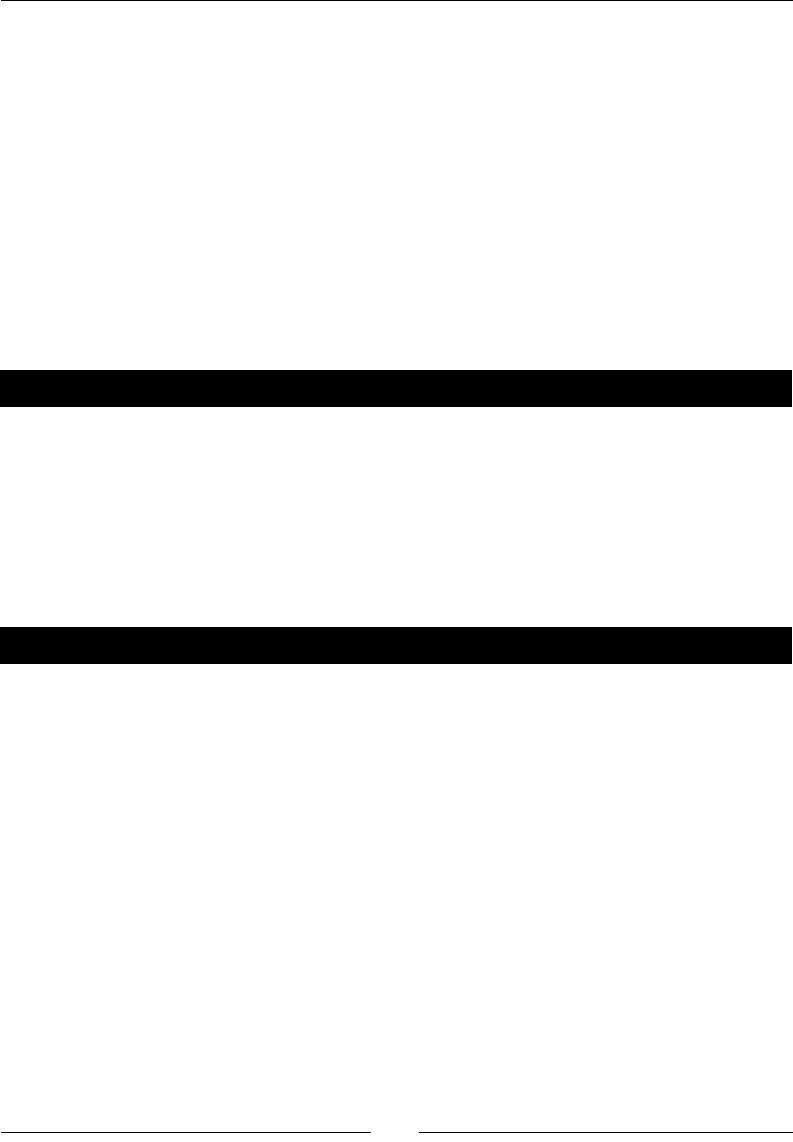

Time for acon – validang genres with a Regex Evaluaon step 206

Validang data 208

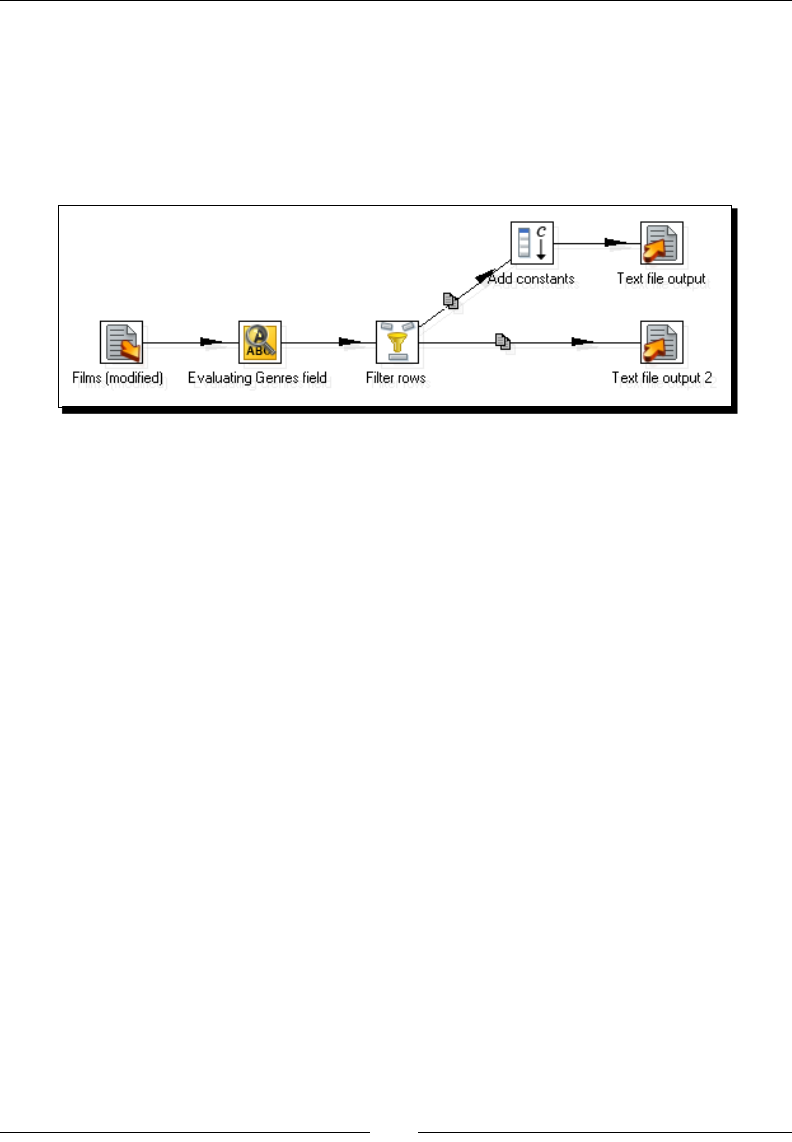

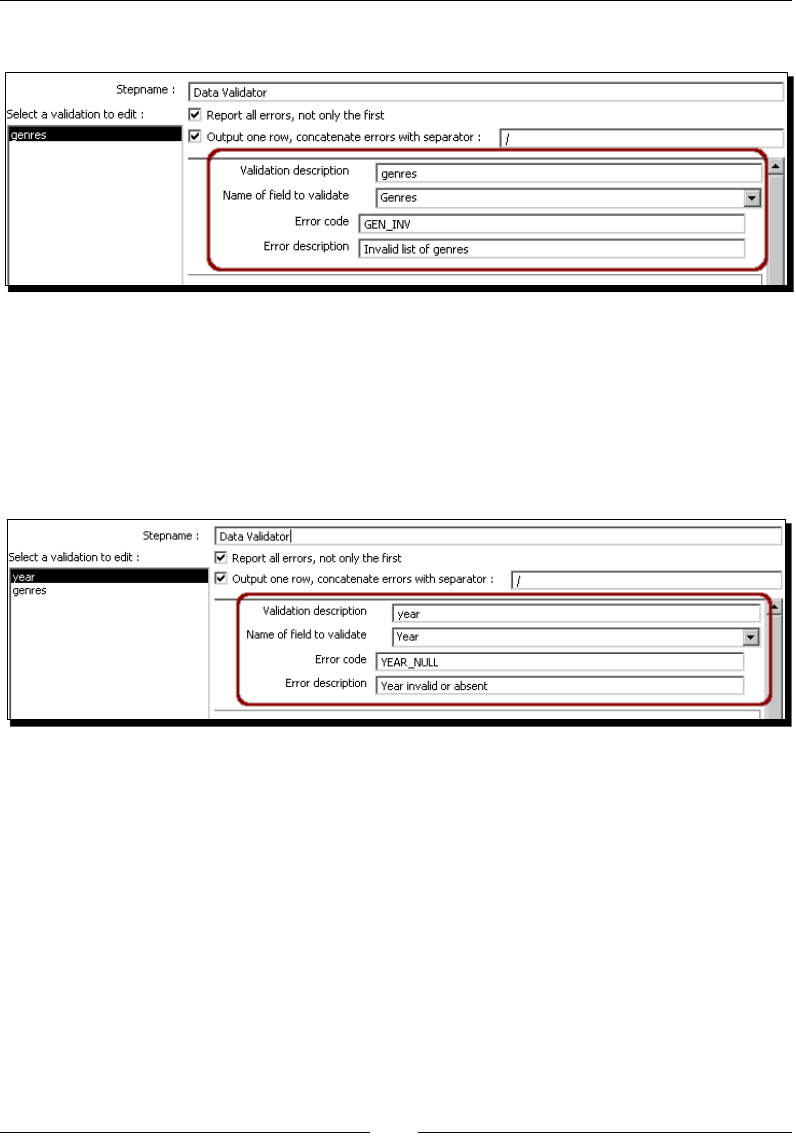

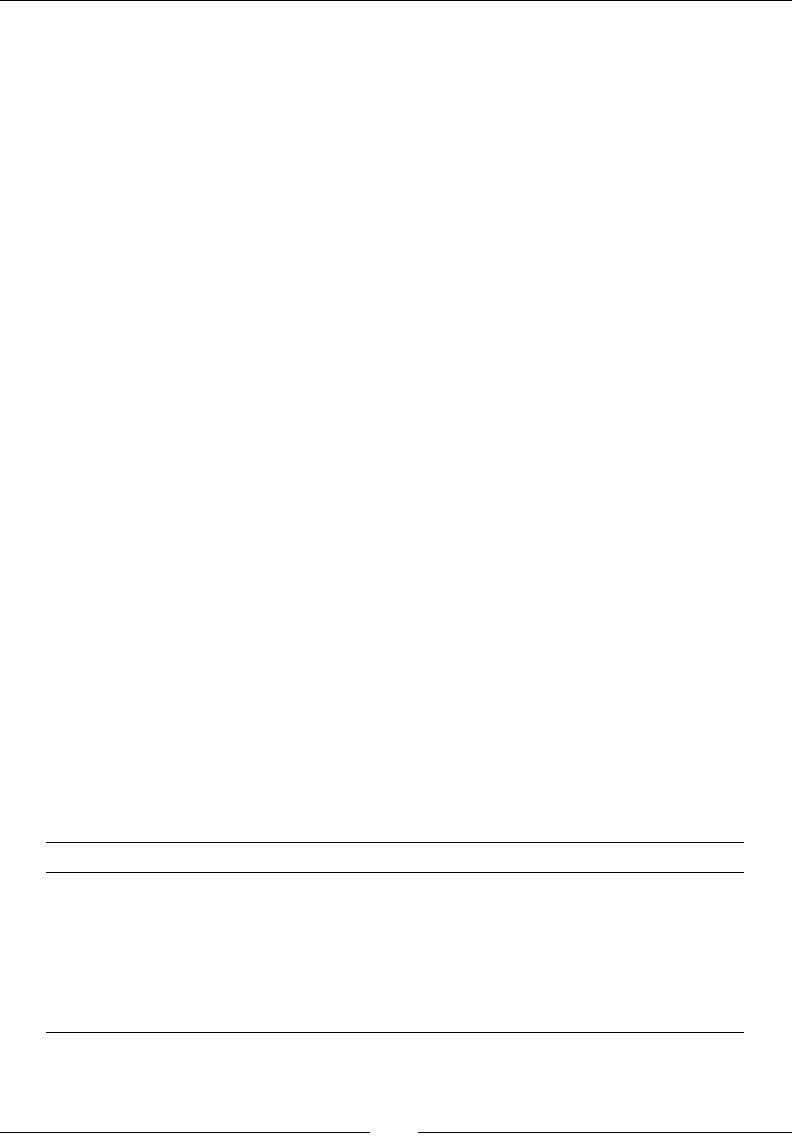

Time for acon – checking lms le with the Data Validator 209

Dening simple validaon rules using the Data Validator 211

Cleansing data 213

Summary 215

Chapter 8: Working with Databases 217

Introducing the Steel Wheels sample database 217

Connecng to the Steel Wheels database 219

Time for acon – creang a connecon with the Steel Wheels database 219

Connecng with Relaonal Database Management Systems 222

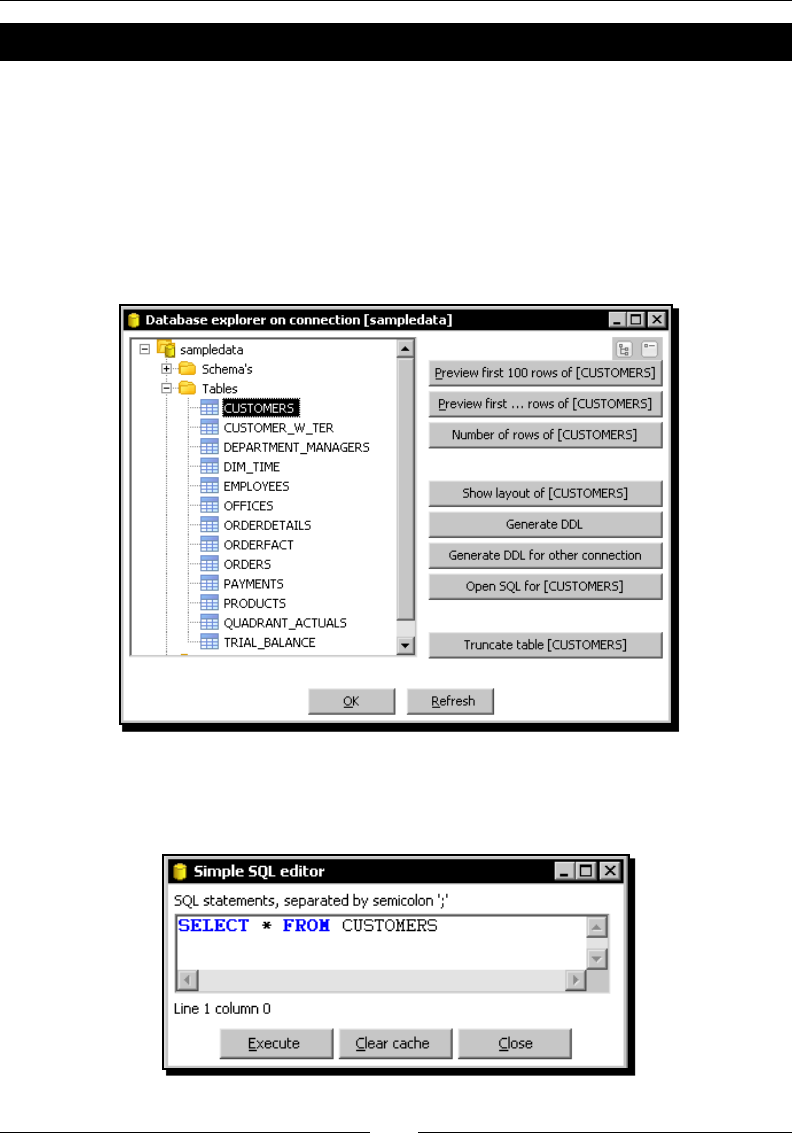

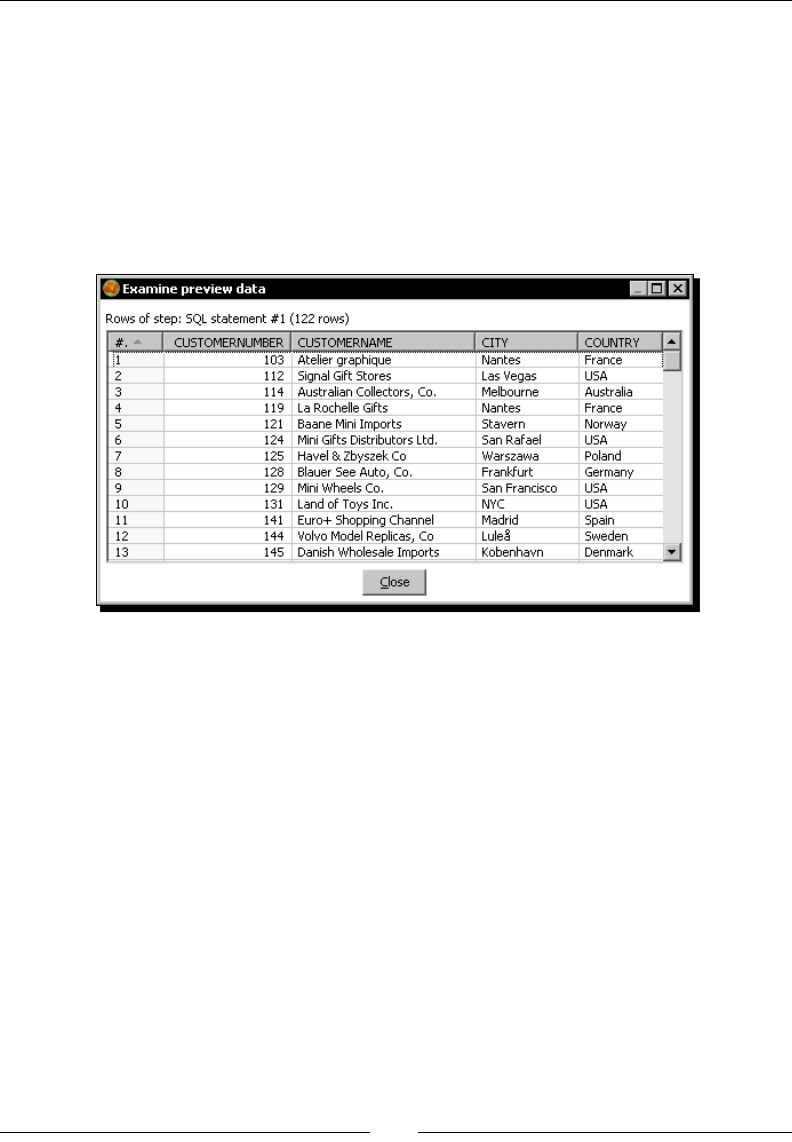

Exploring the Steel Wheels database 223

Time for acon – exploring the sample database 224

A brief word about SQL 225

Exploring any congured database with the PDI Database explorer 228

Querying a database 229

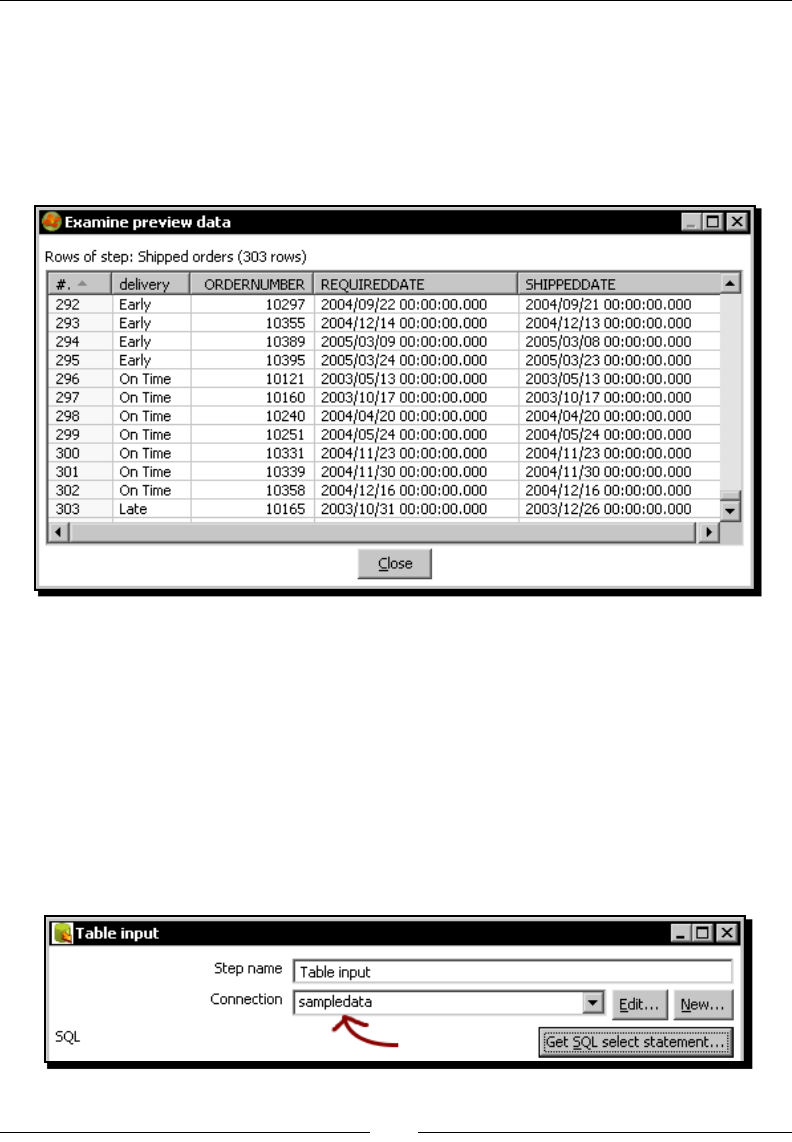

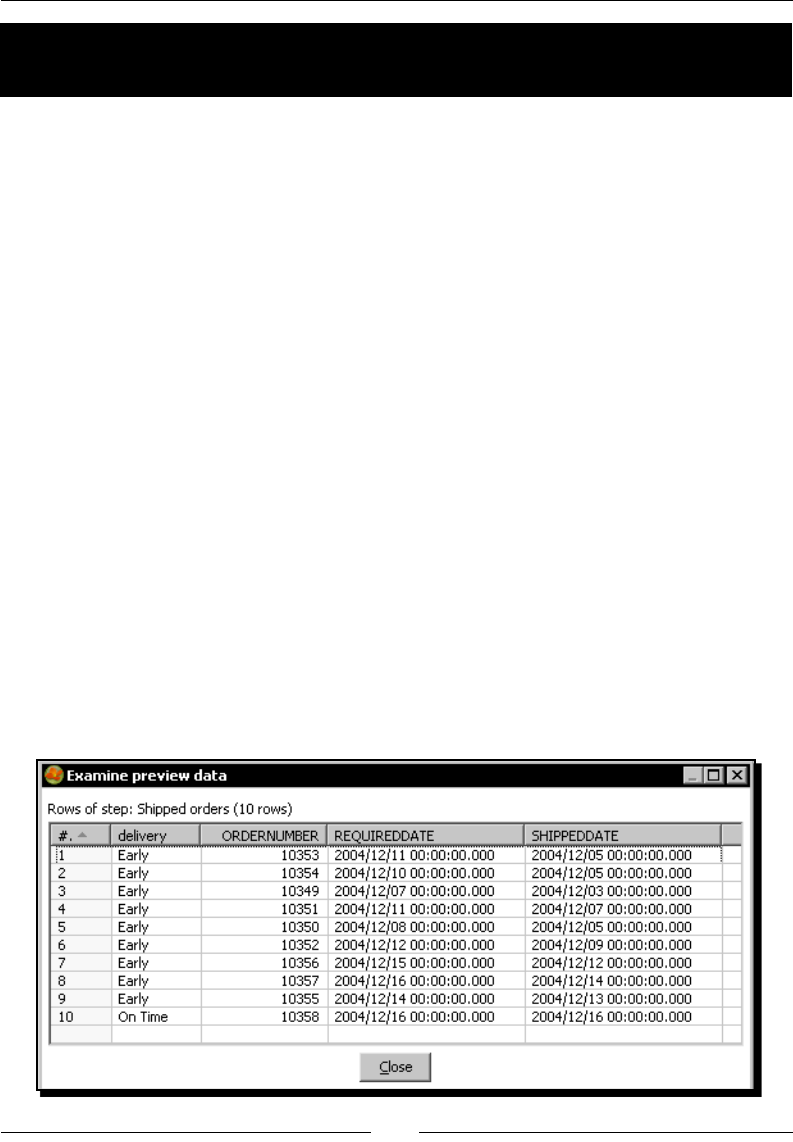

Time for acon – geng data about shipped orders 229

Geng data from the database with the Table input step 231

Using the SELECT statement for generang a new dataset 232

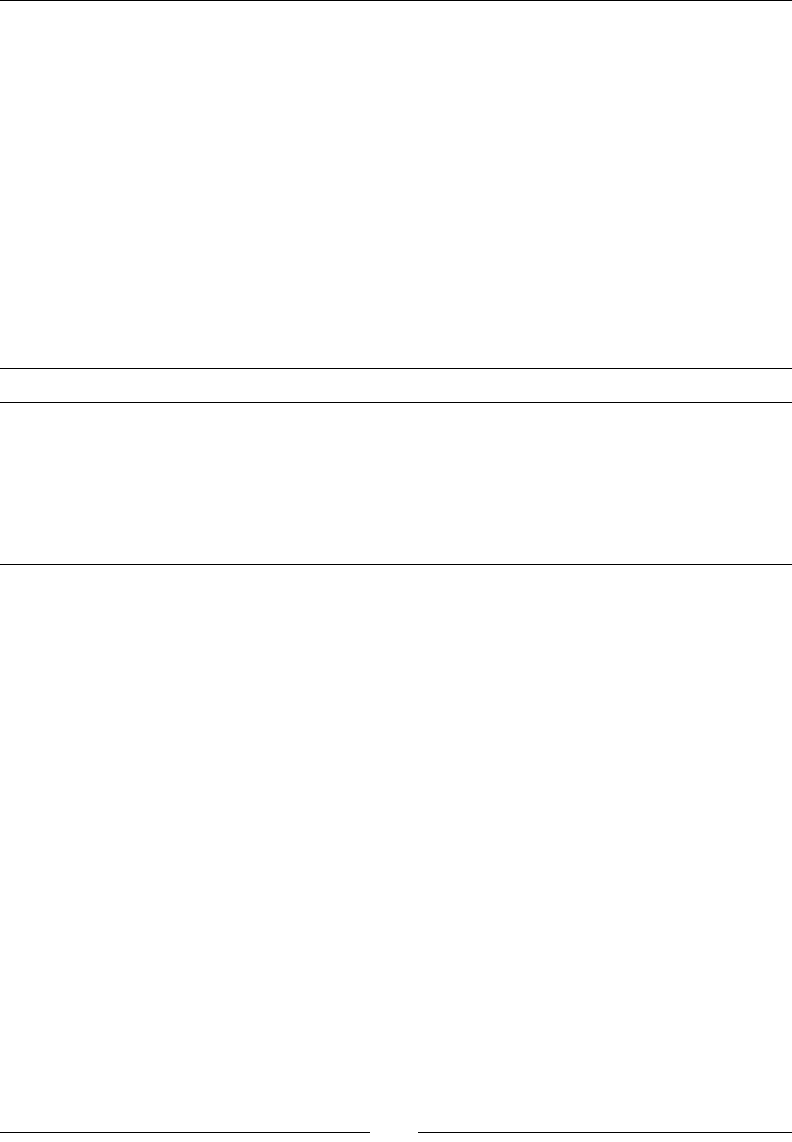

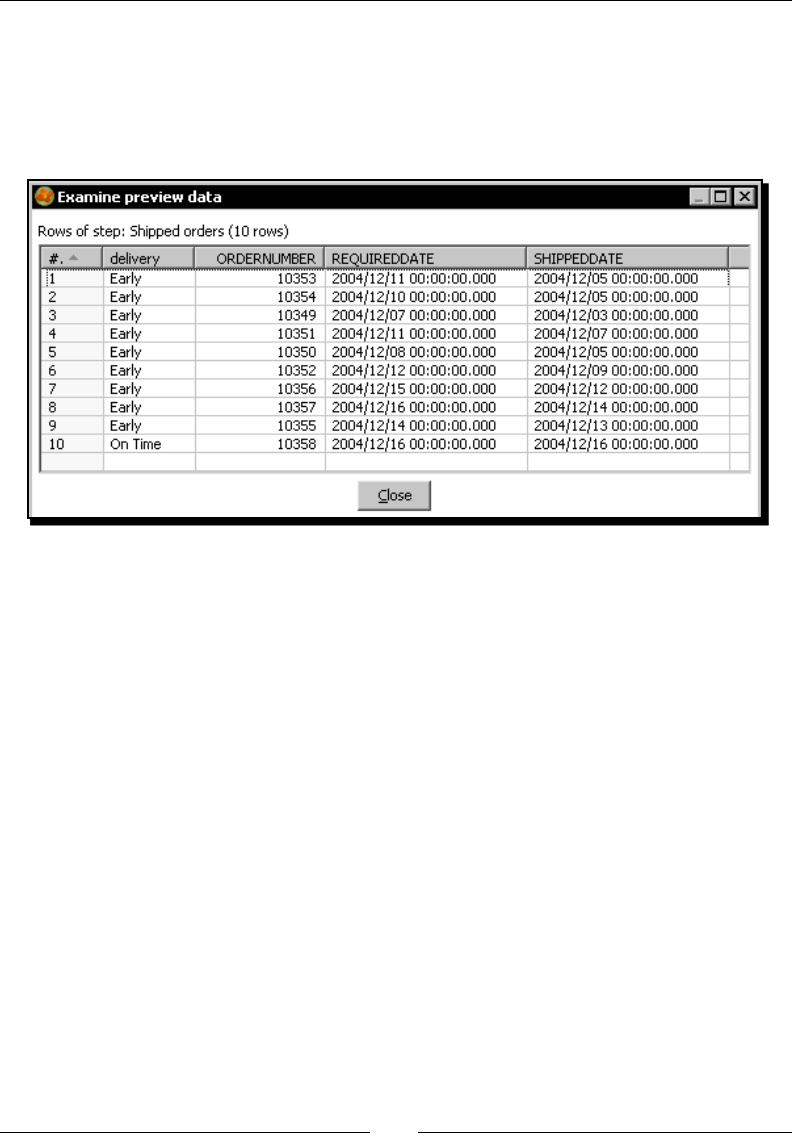

Making exible queries by using parameters 234

Time for acon – geng orders in a range of dates by using parameters 234

Making exible queries by using Kele variables 236

Time for acon – geng orders in a range of dates by using variables 237

Sending data to a database 239

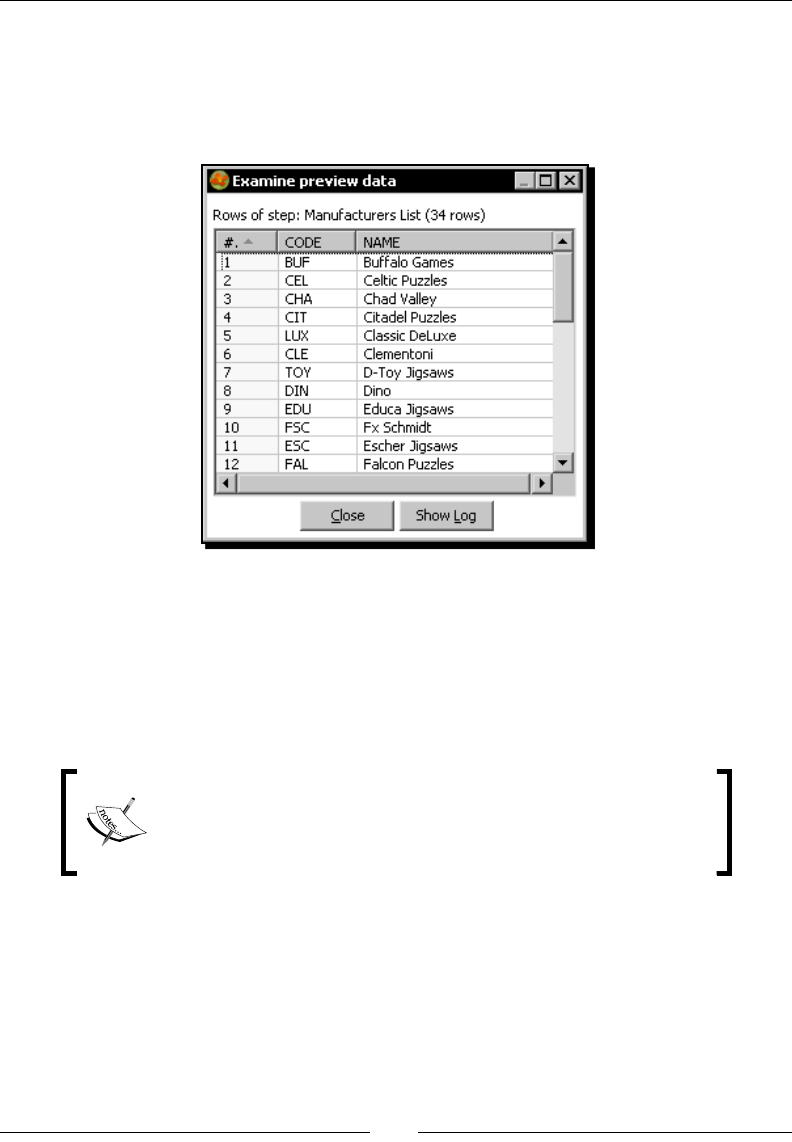

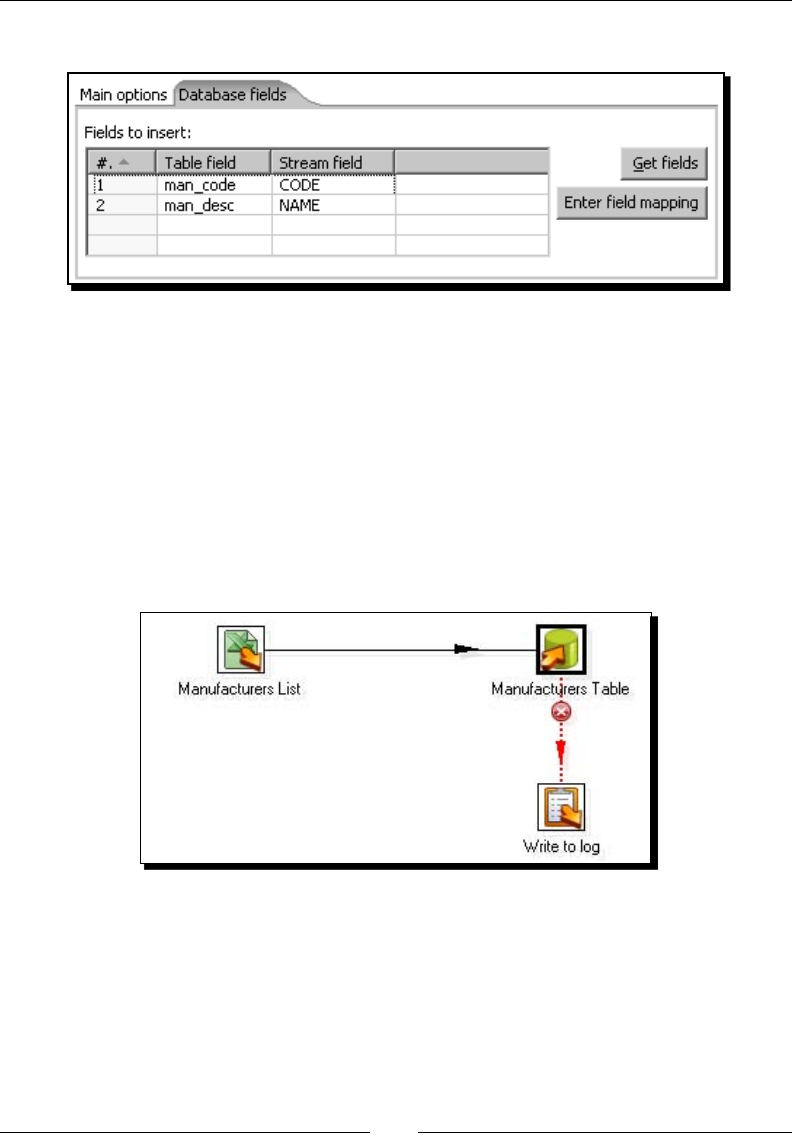

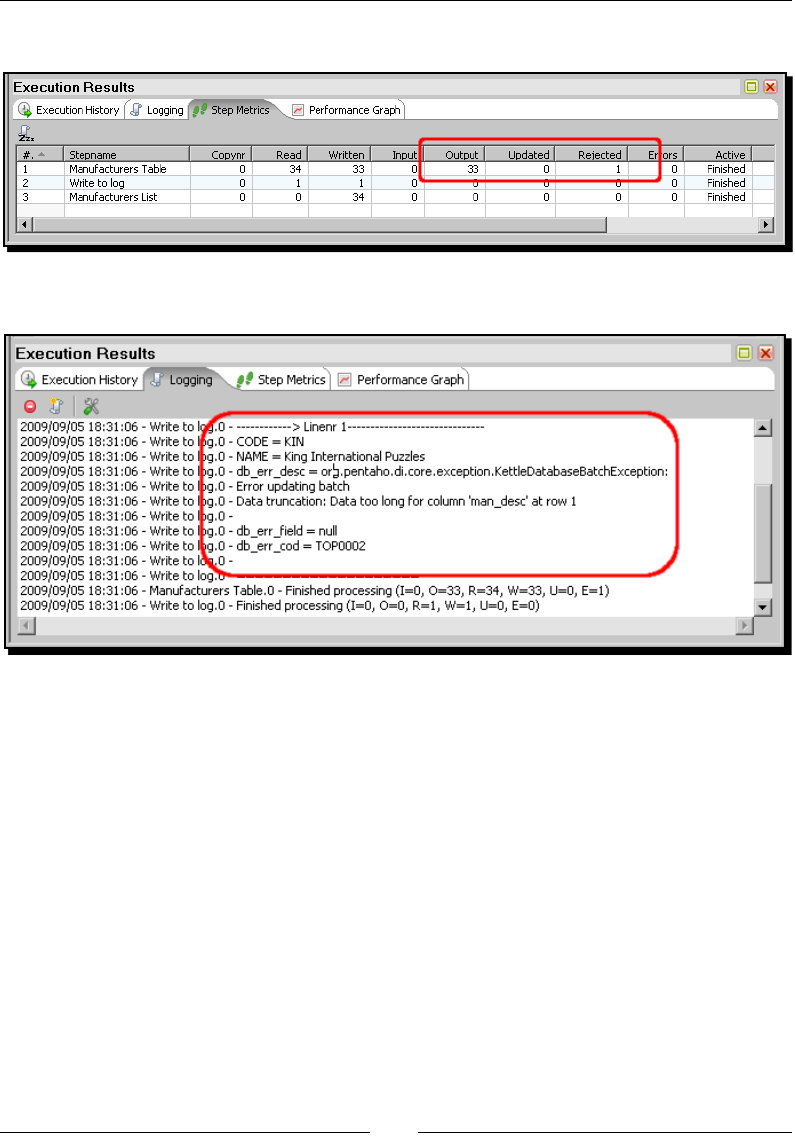

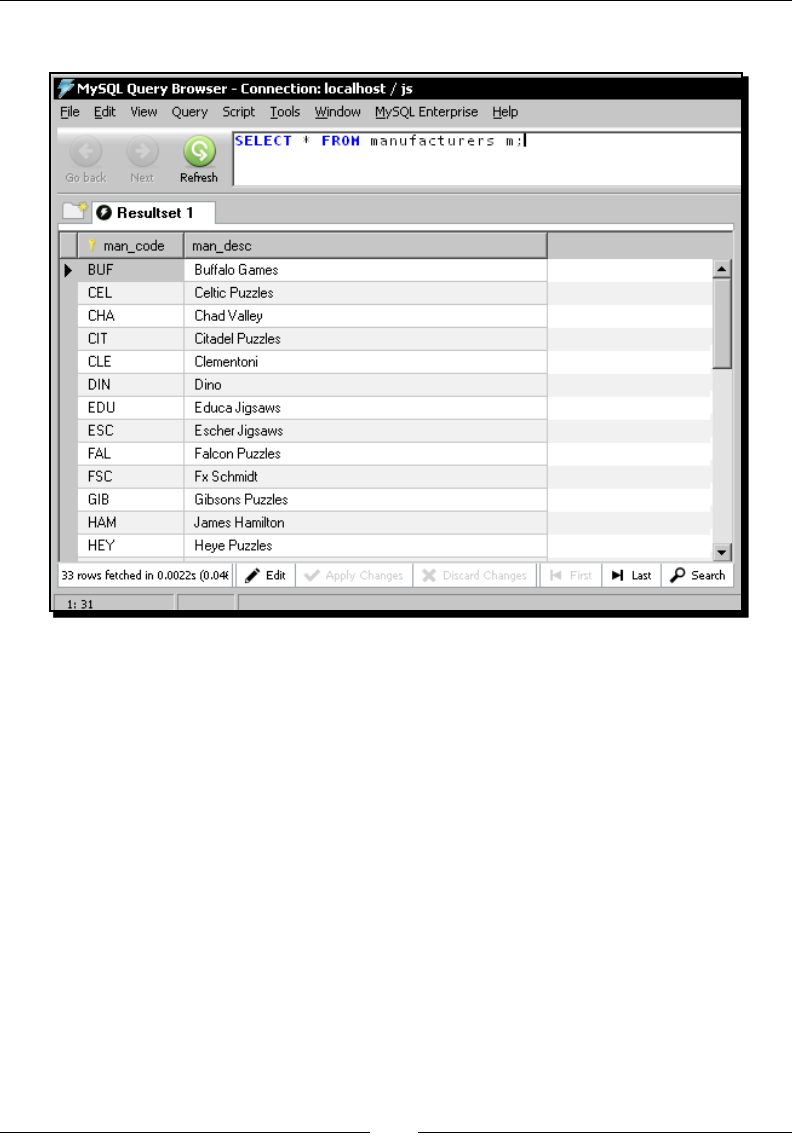

Time for acon – loading a table with a list of manufacturers 239

Inserng new data into a database table with the Table output step 245

Inserng or updang data by using other PDI steps 246

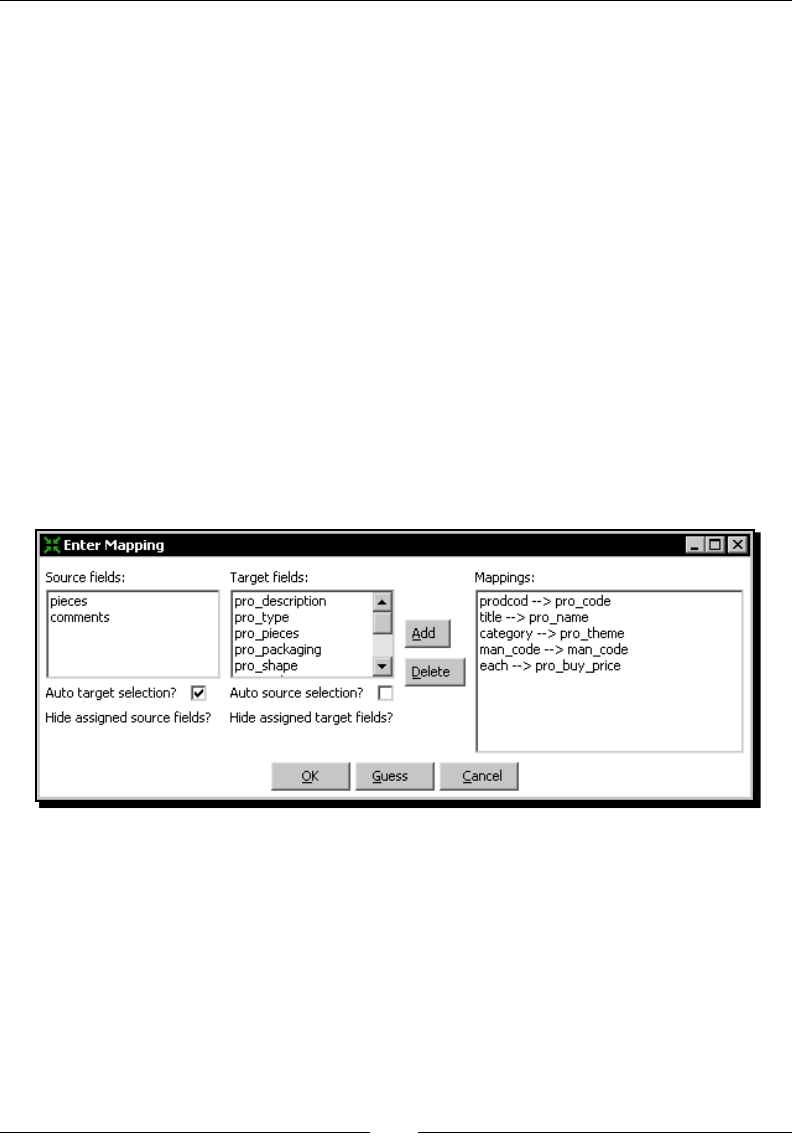

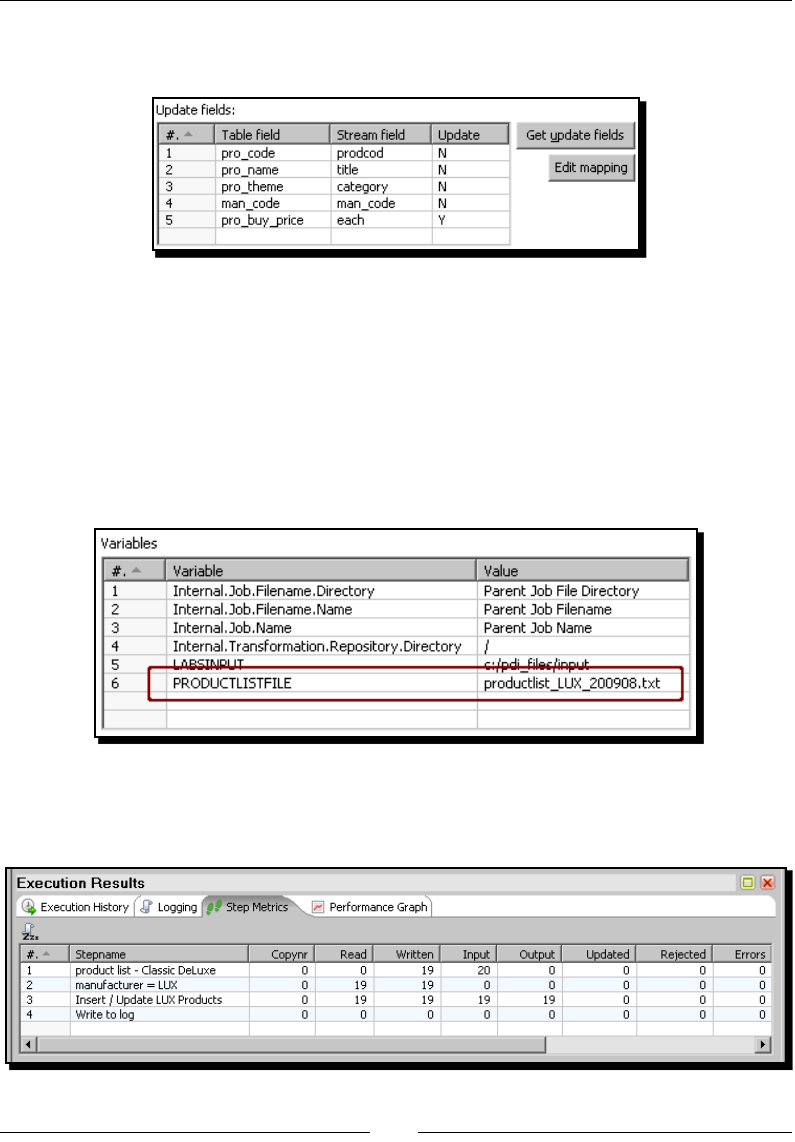

Time for acon – inserng new products or updang existent ones 246

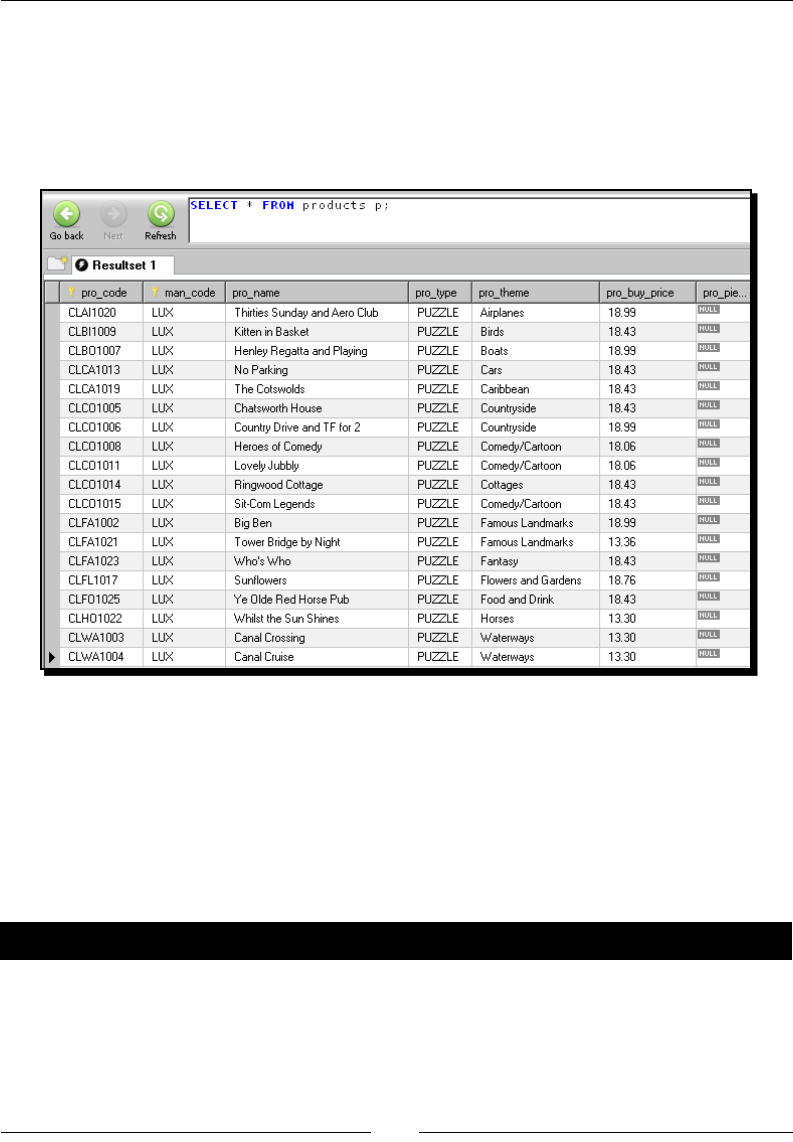

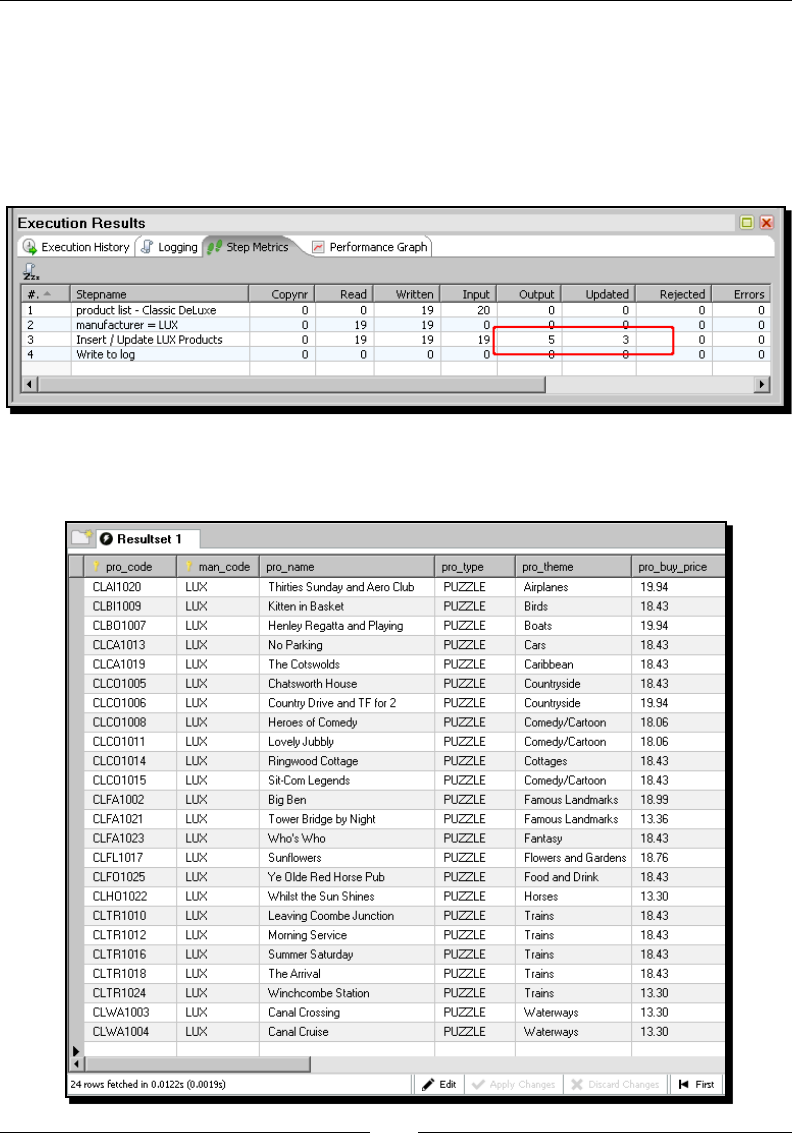

Time for acon – tesng the update of exisng products 249

Inserng or updang data with the Insert/Update step 251

Eliminang data from a database 256

Time for acon – deleng data about disconnued items 256

Deleng records of a database table with the Delete step 259

Summary 260

Chapter 9: Performing Advanced Operaons with Databases 261

Preparing the environment 261

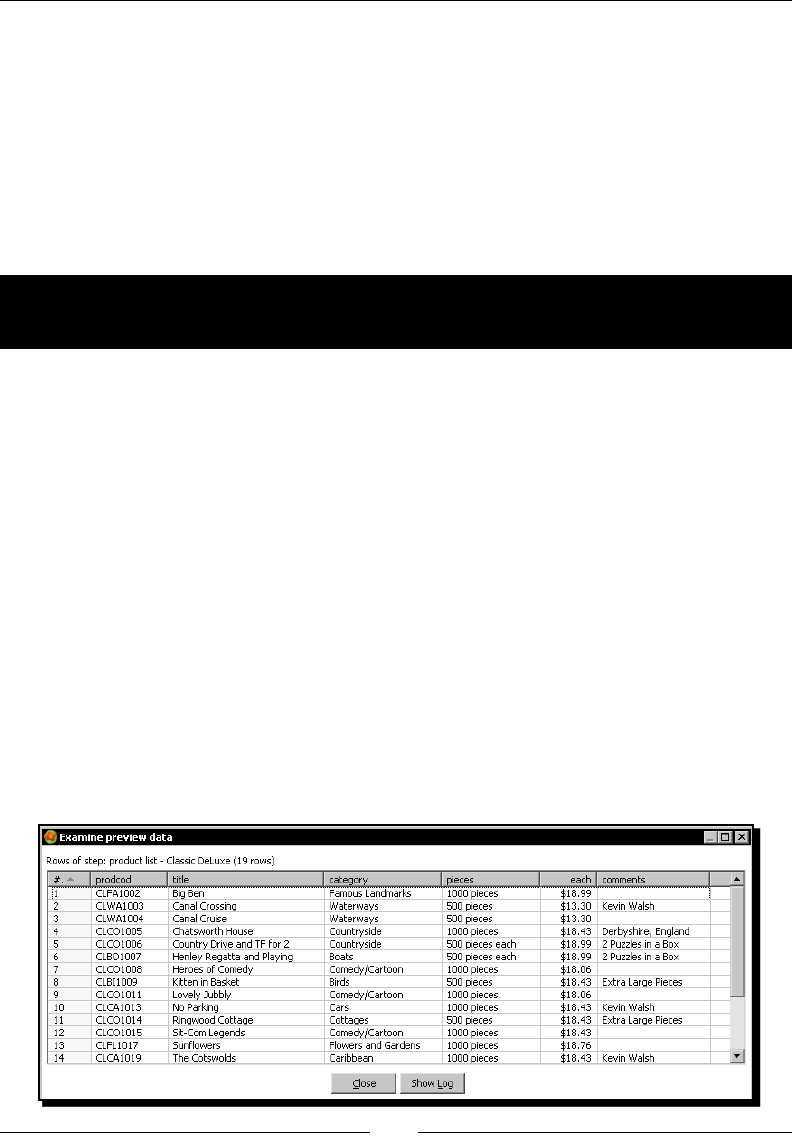

Time for acon – populang the Jigsaw database 261

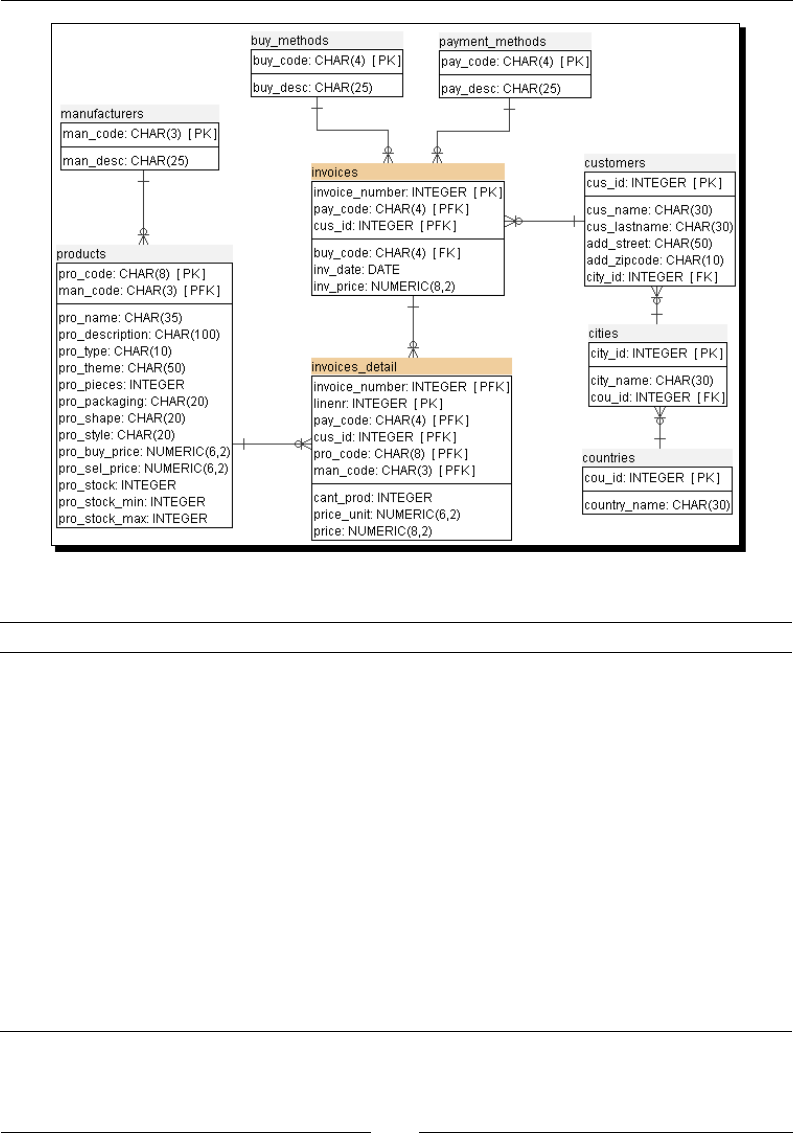

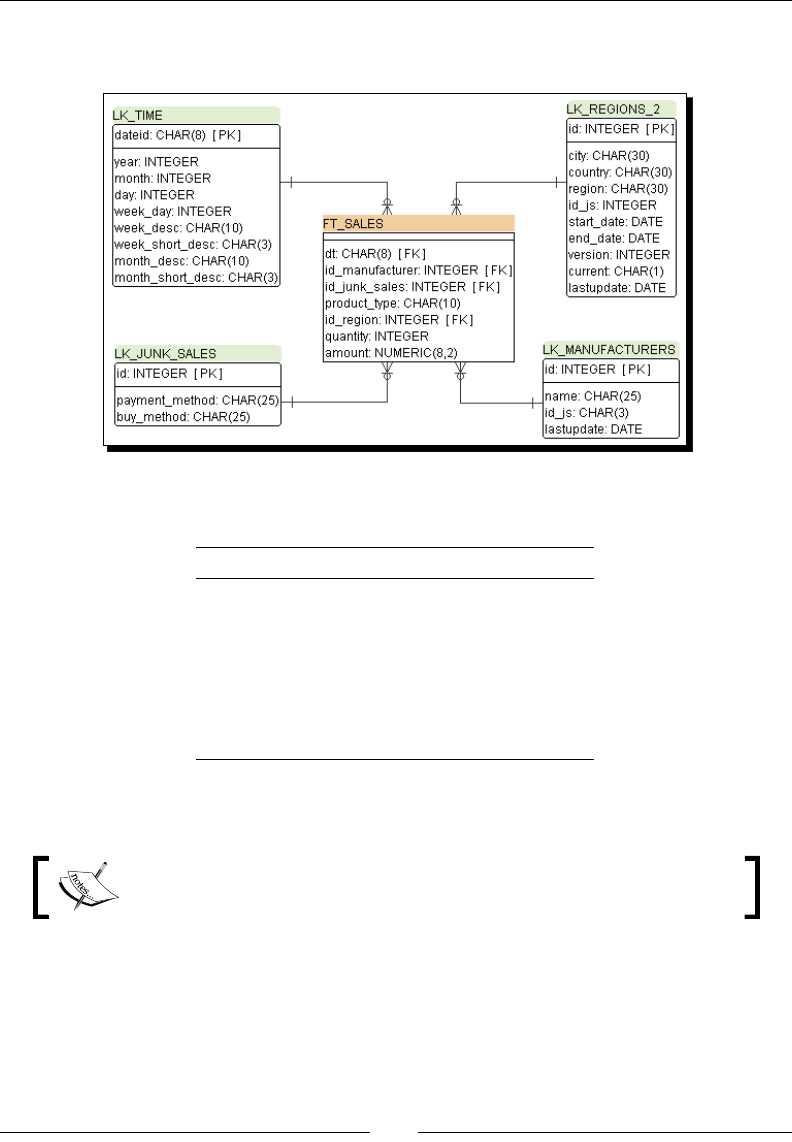

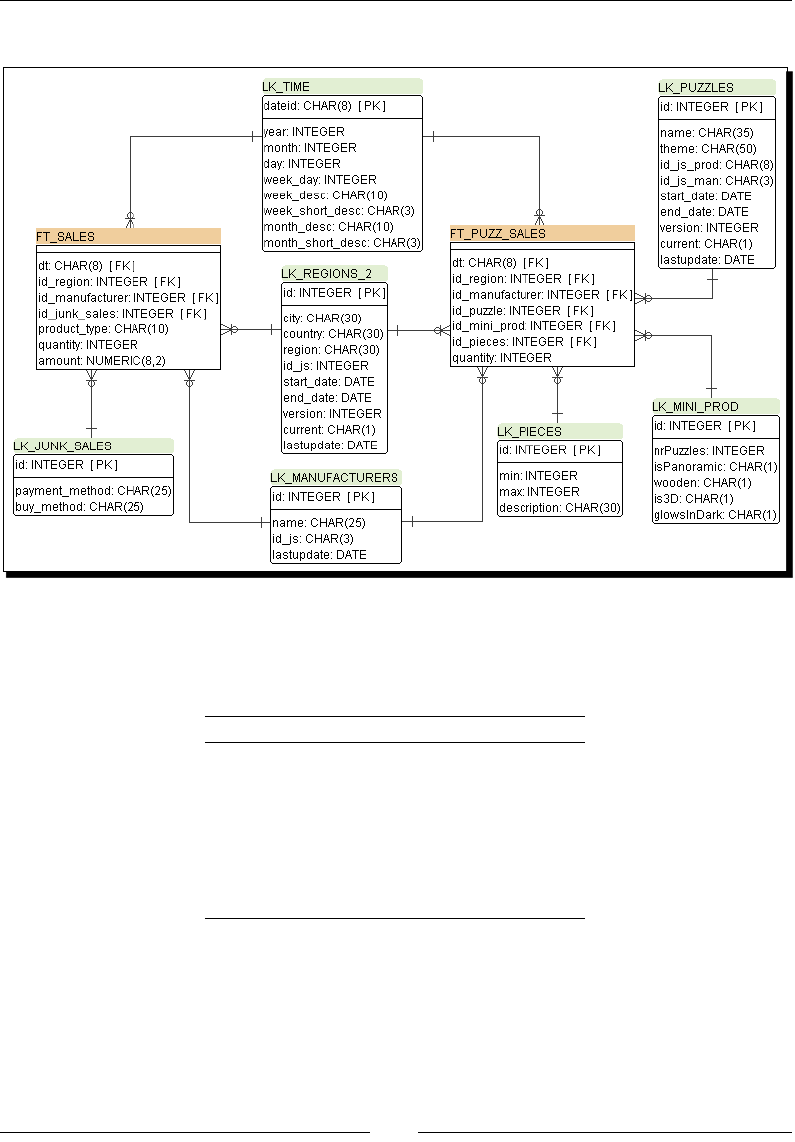

Exploring the Jigsaw database model 264

Table of Contents

[ vi ]

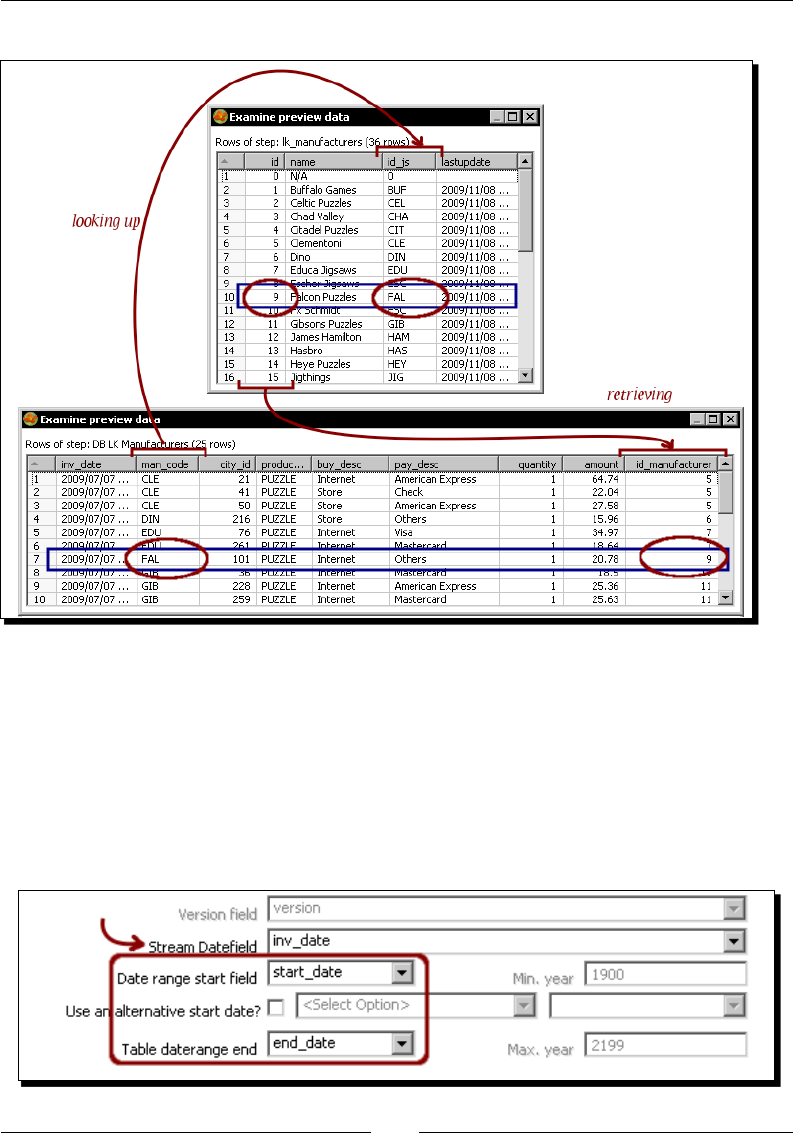

Looking up data in a database 266

Doing simple lookups 266

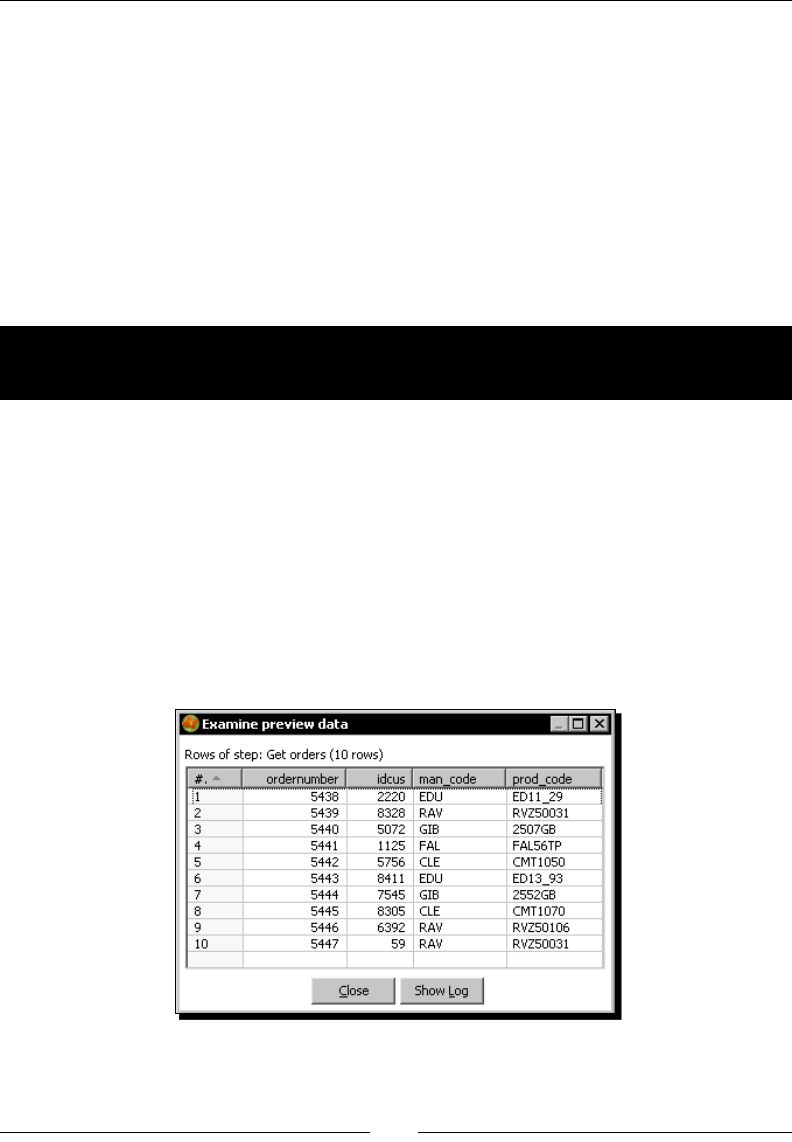

Time for acon – using a Database lookup step to create a list of products to buy 266

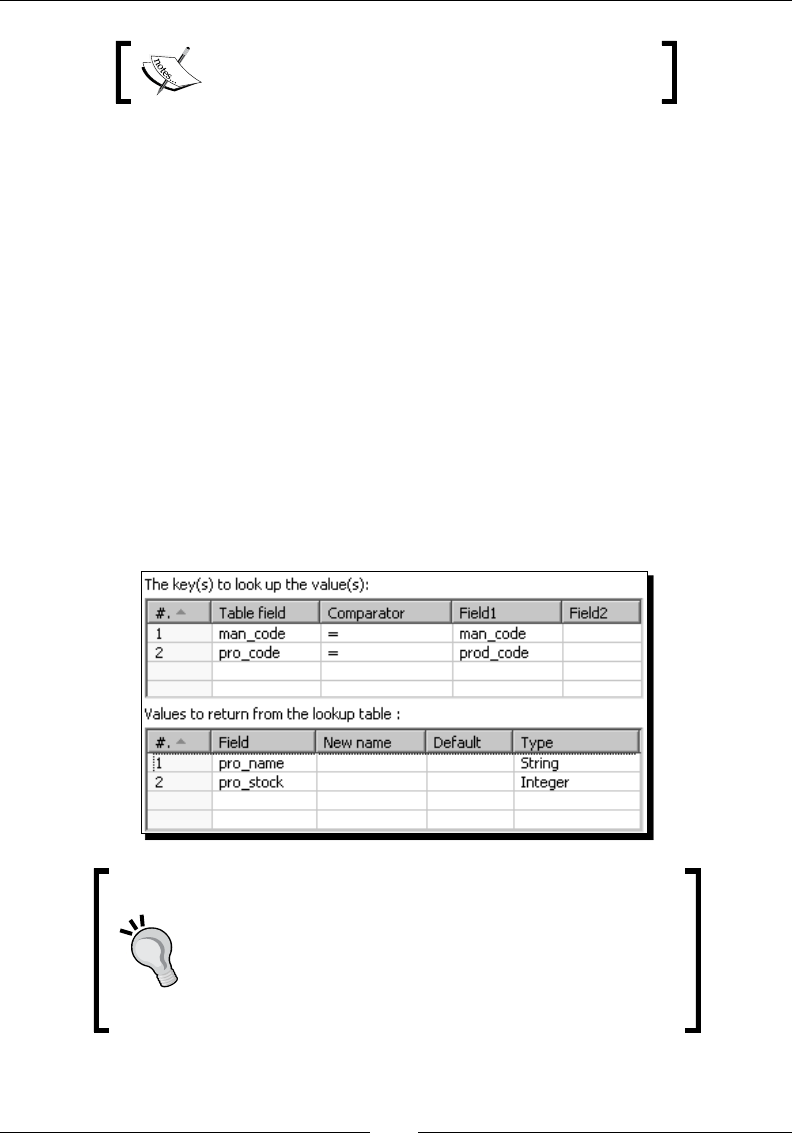

Looking up values in a database with the Database lookup step 268

Doing complex lookups 270

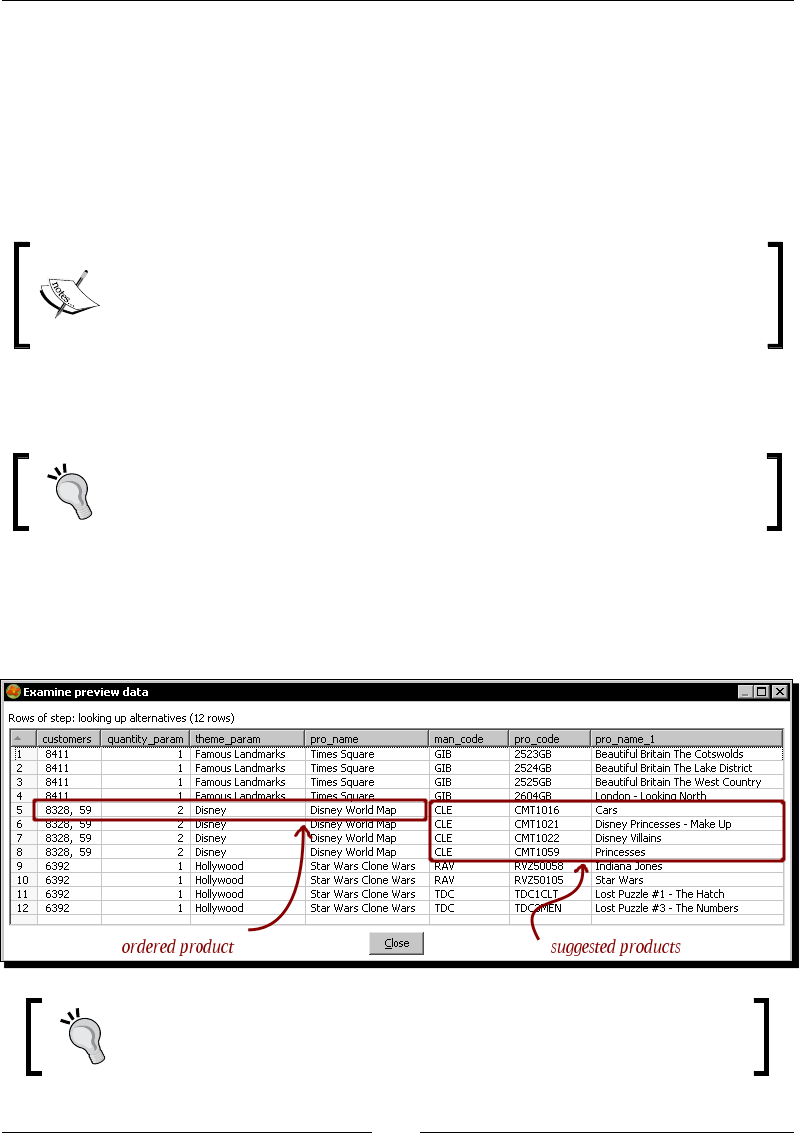

Time for acon – using a Database join step to create a list of

suggested products to buy 270

Joining data from the database to the stream data by using a Database join step 272

Introducing dimensional modeling 275

Loading dimensions with data 276

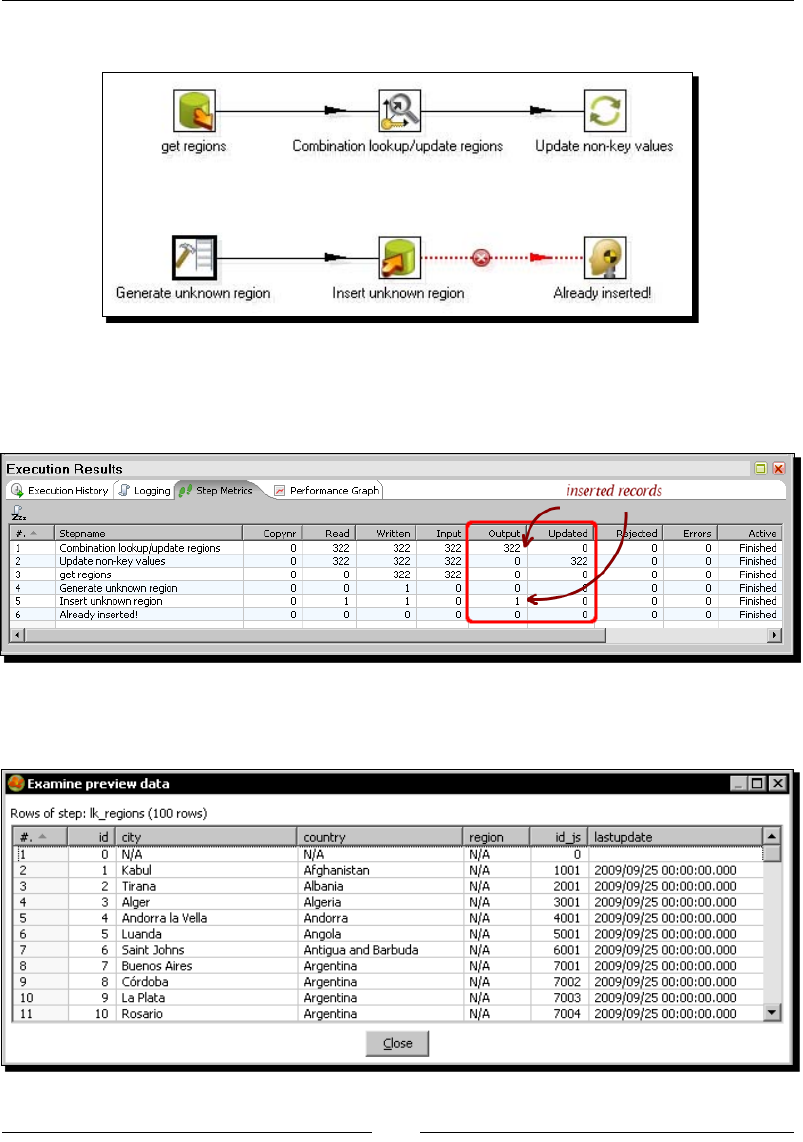

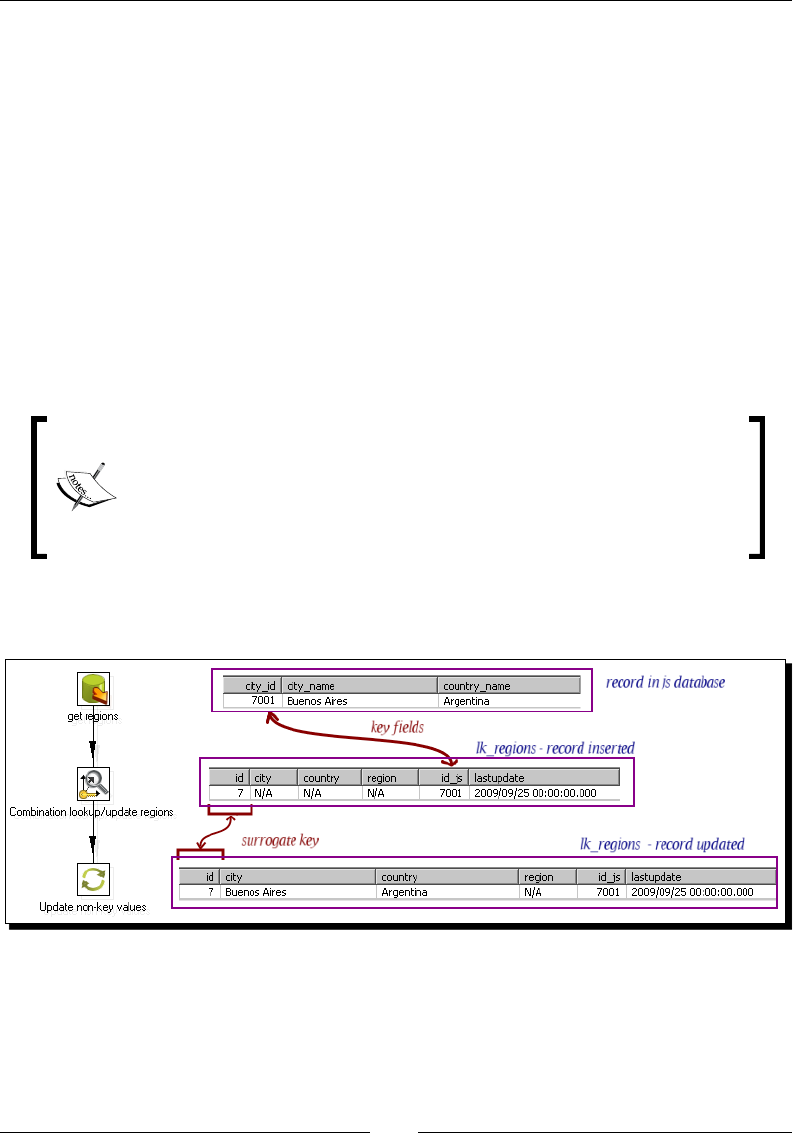

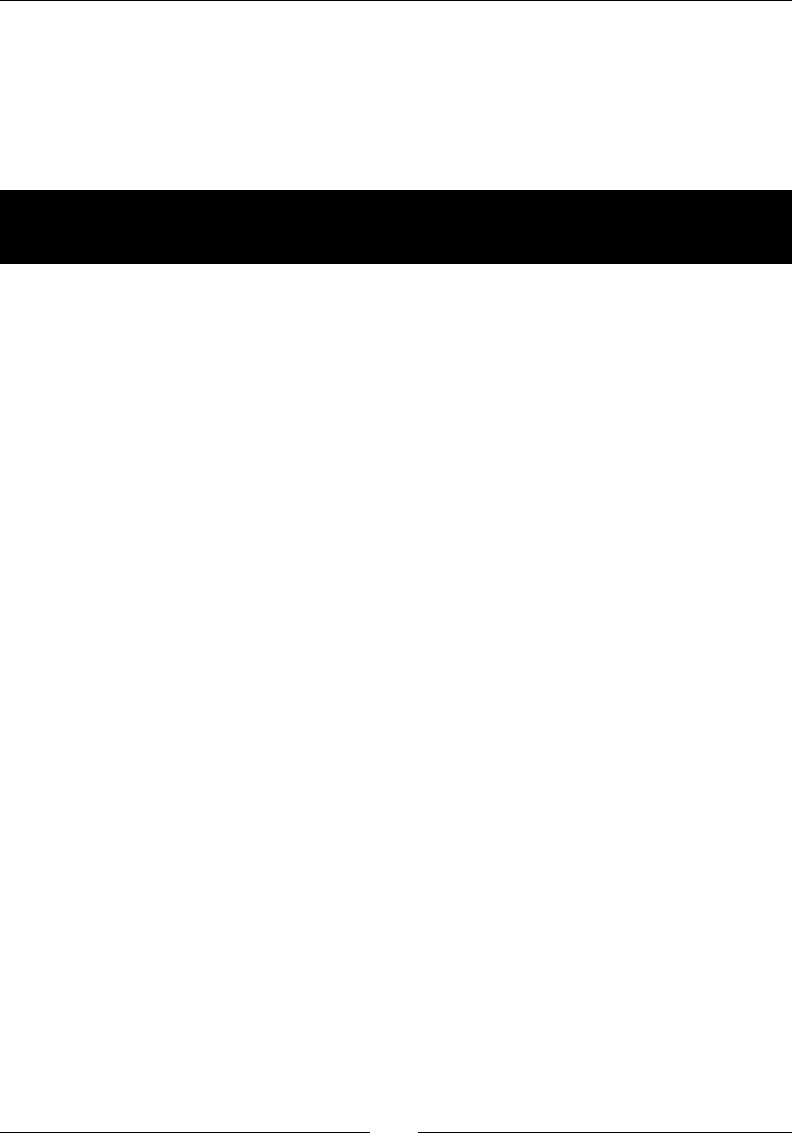

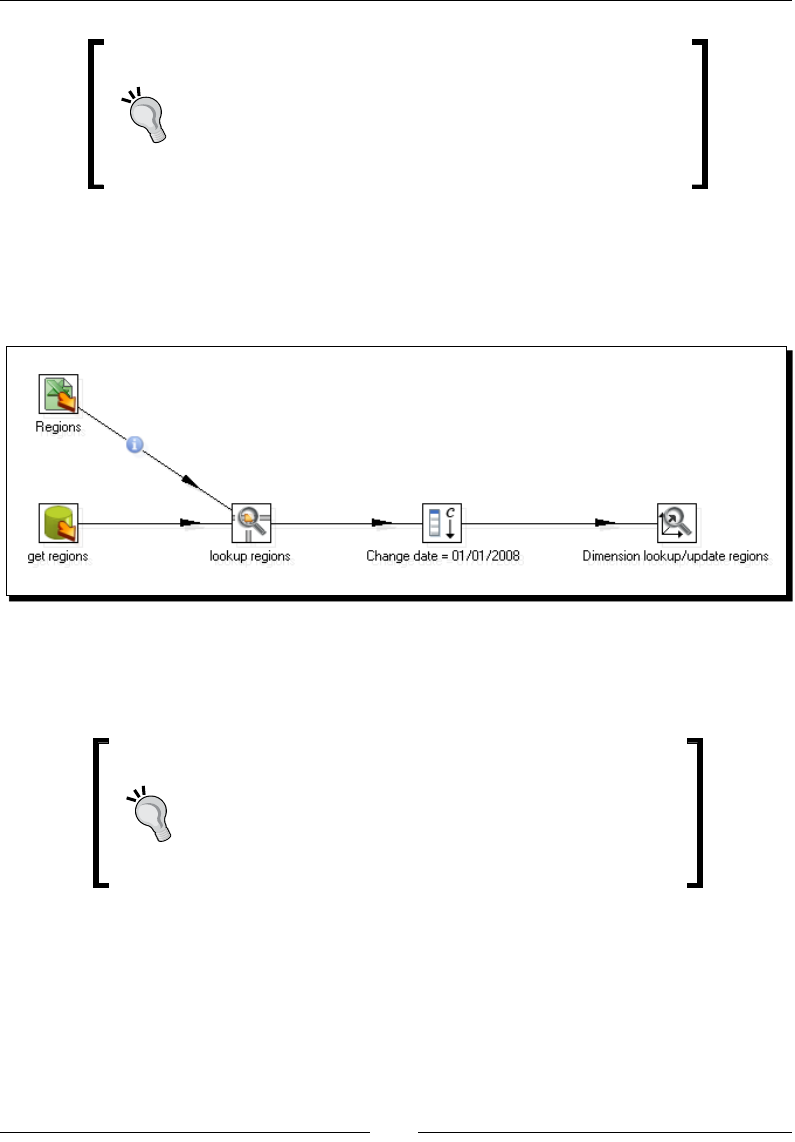

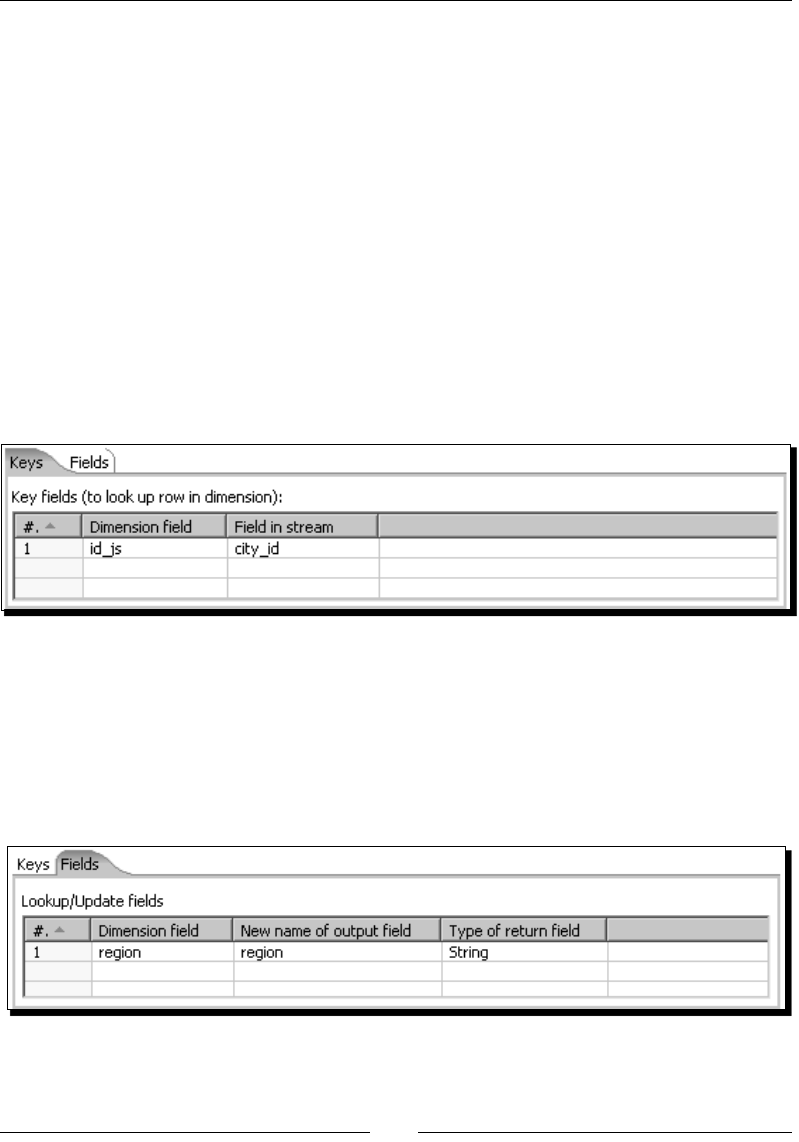

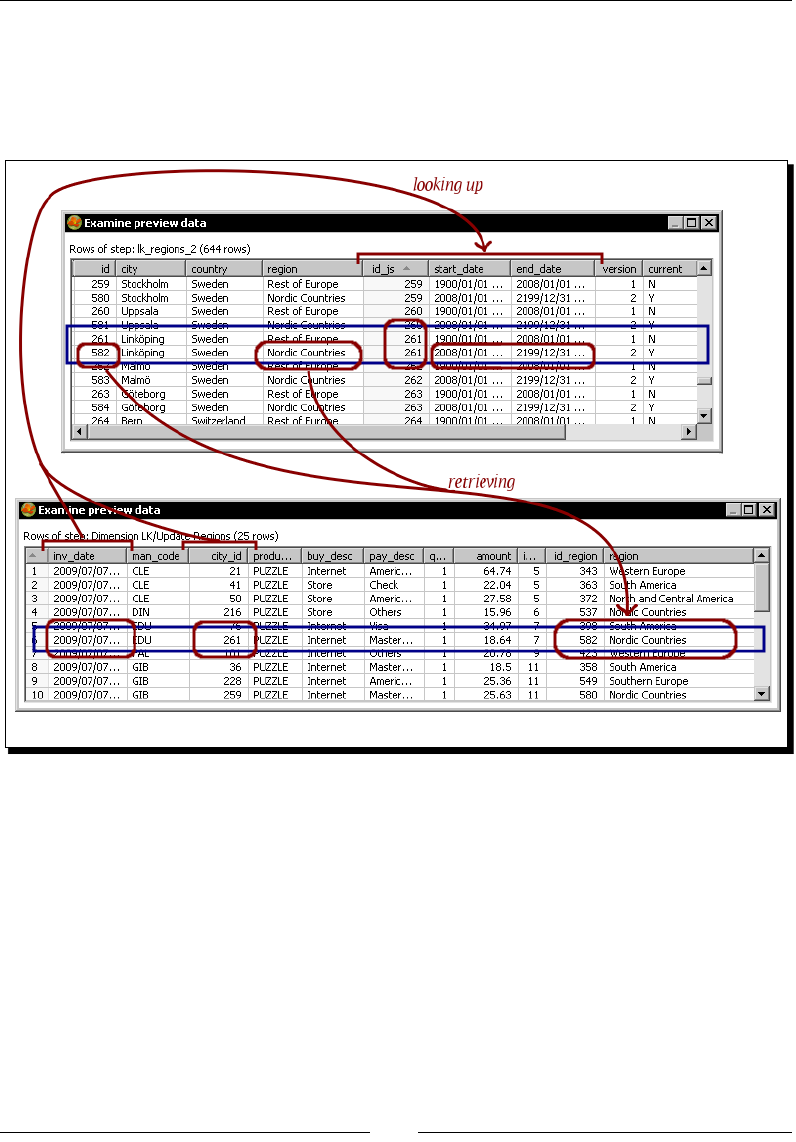

Time for acon – loading a region dimension with a

Combinaon lookup/update step 276

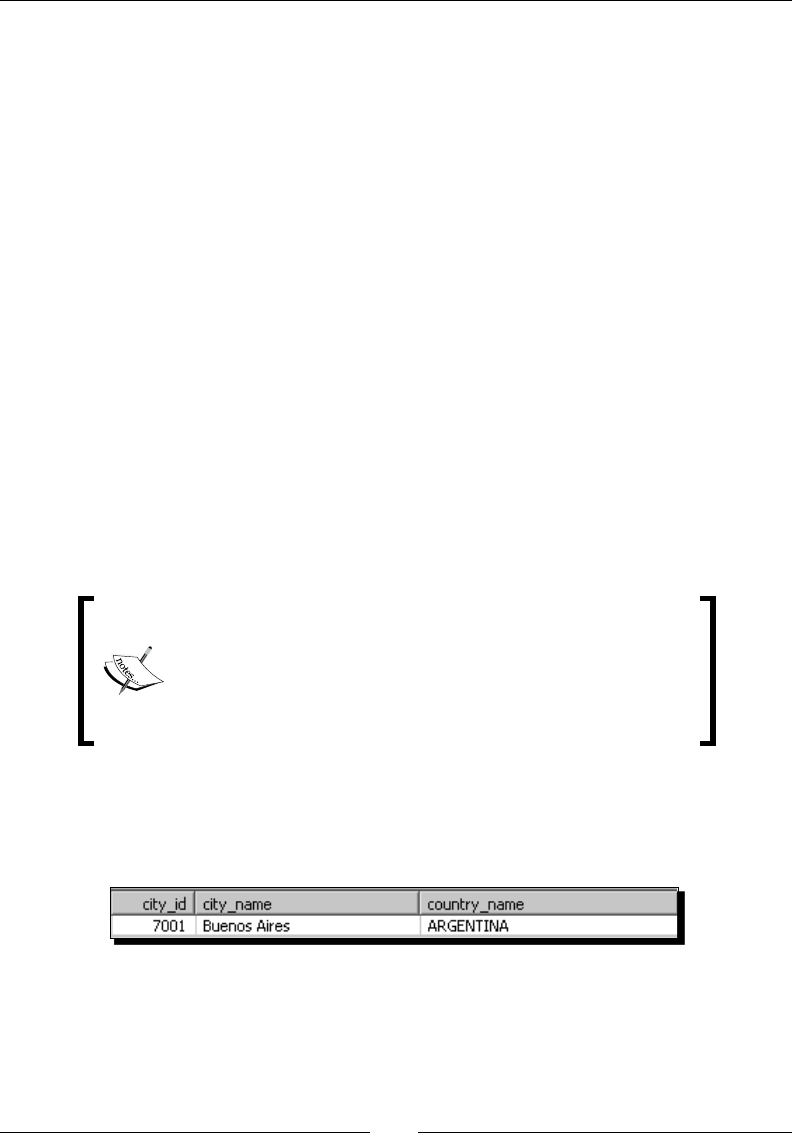

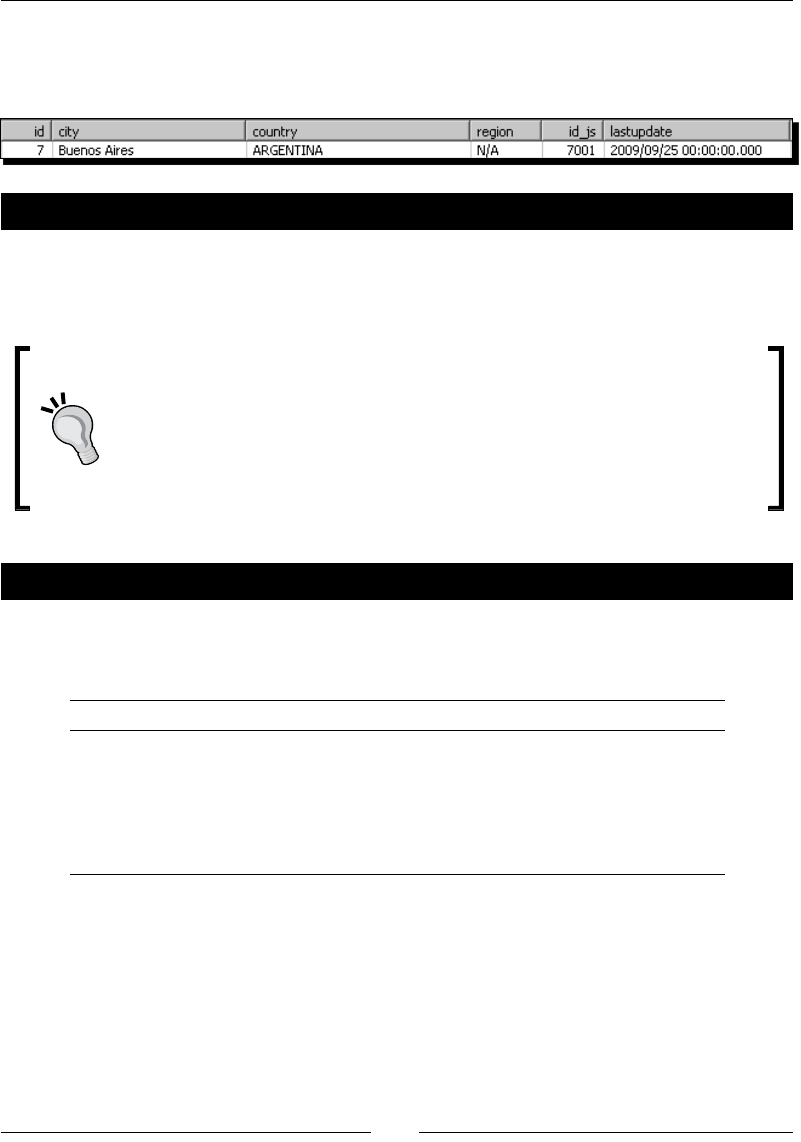

Time for acon – tesng the transformaon that loads the region dimension 279

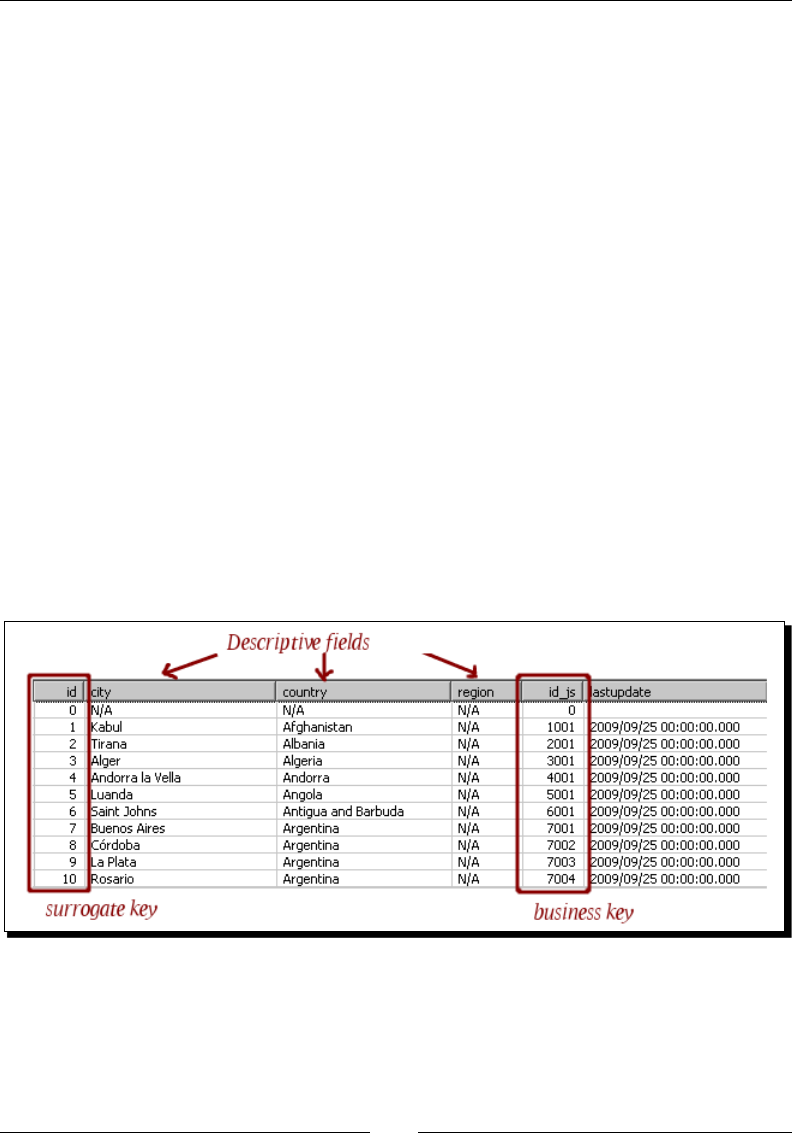

Describing data with dimensions 281

Loading Type I SCD with a Combinaon lookup/update step 282

Keeping a history of changes 286

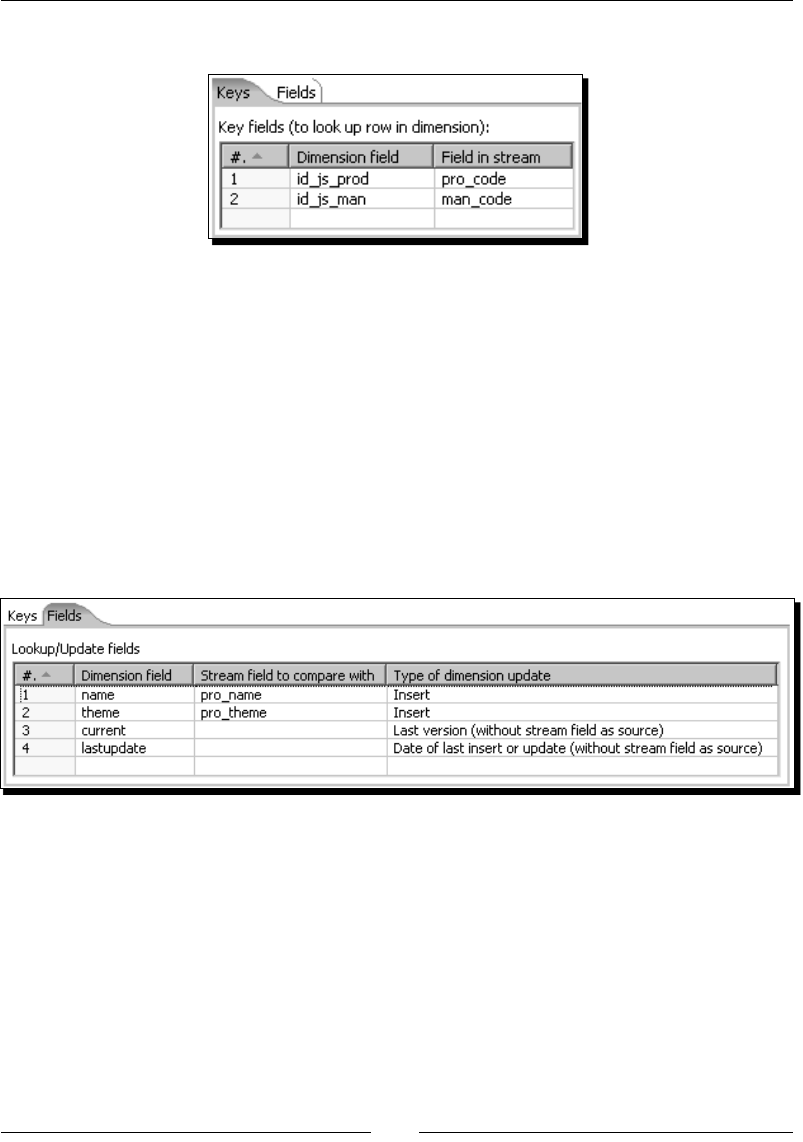

Time for acon – keeping a history of product changes with the

Dimension lookup/update step 286

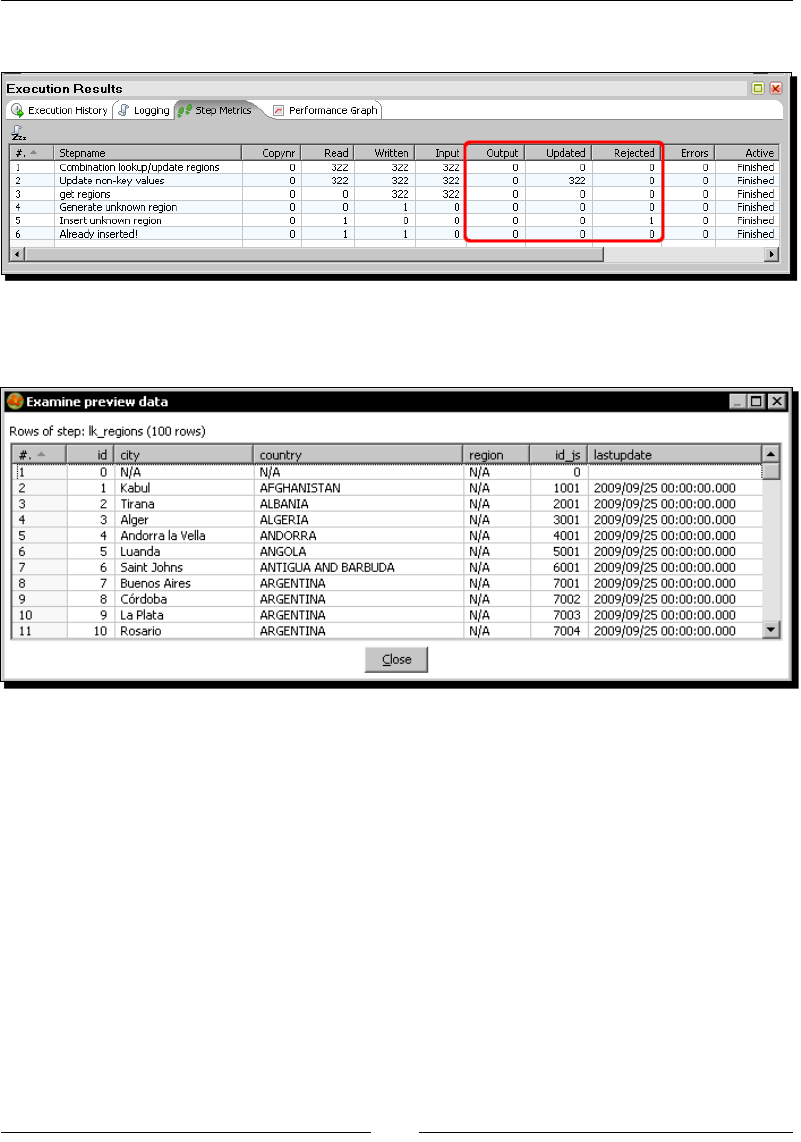

Time for acon – tesng the transformaon that keeps a history

of product changes 288

Keeping an enre history of data with a Type II slowly changing dimension 289

Loading Type II SCDs with the Dimension lookup/update step 291

Summary 296

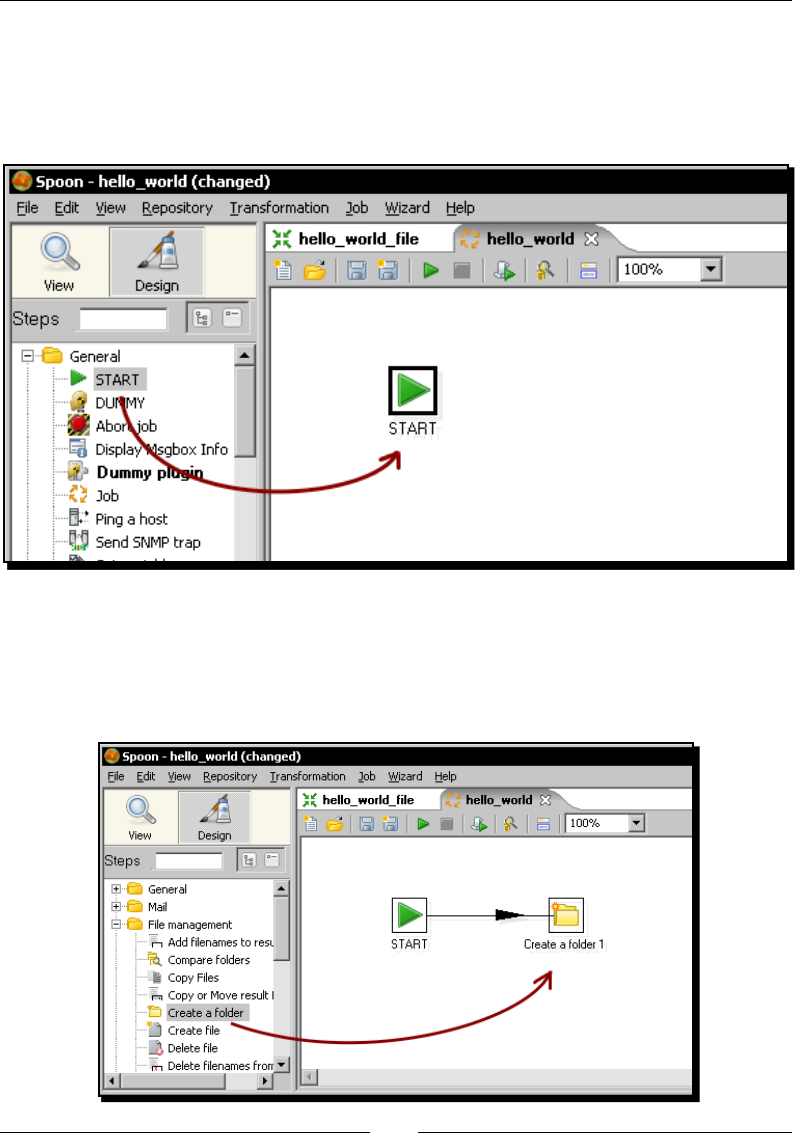

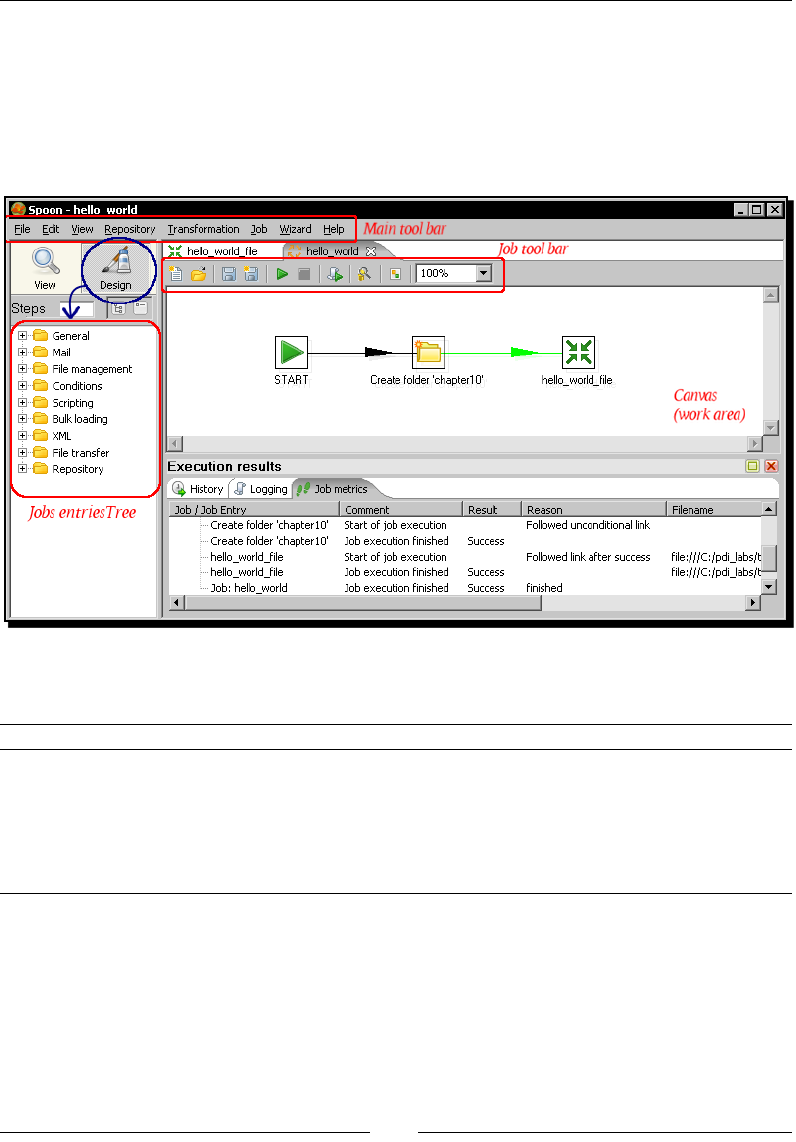

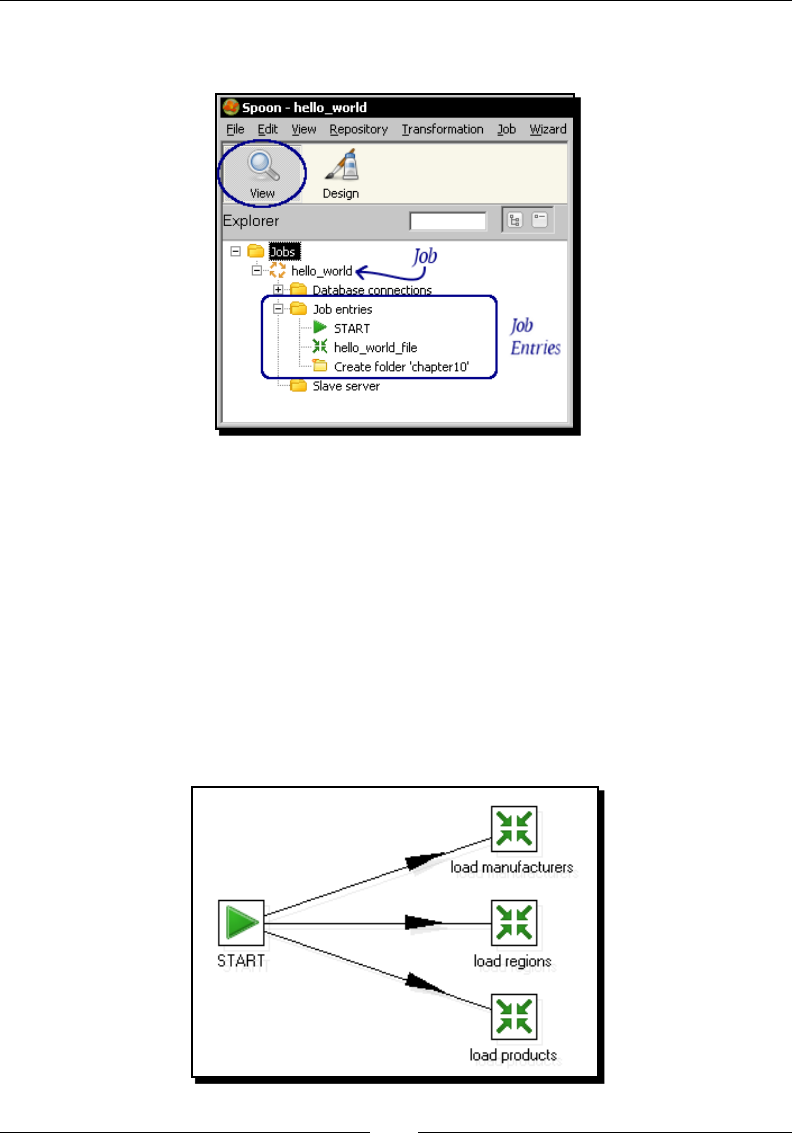

Chapter 10: Creang Basic Task Flows 297

Introducing PDI jobs 297

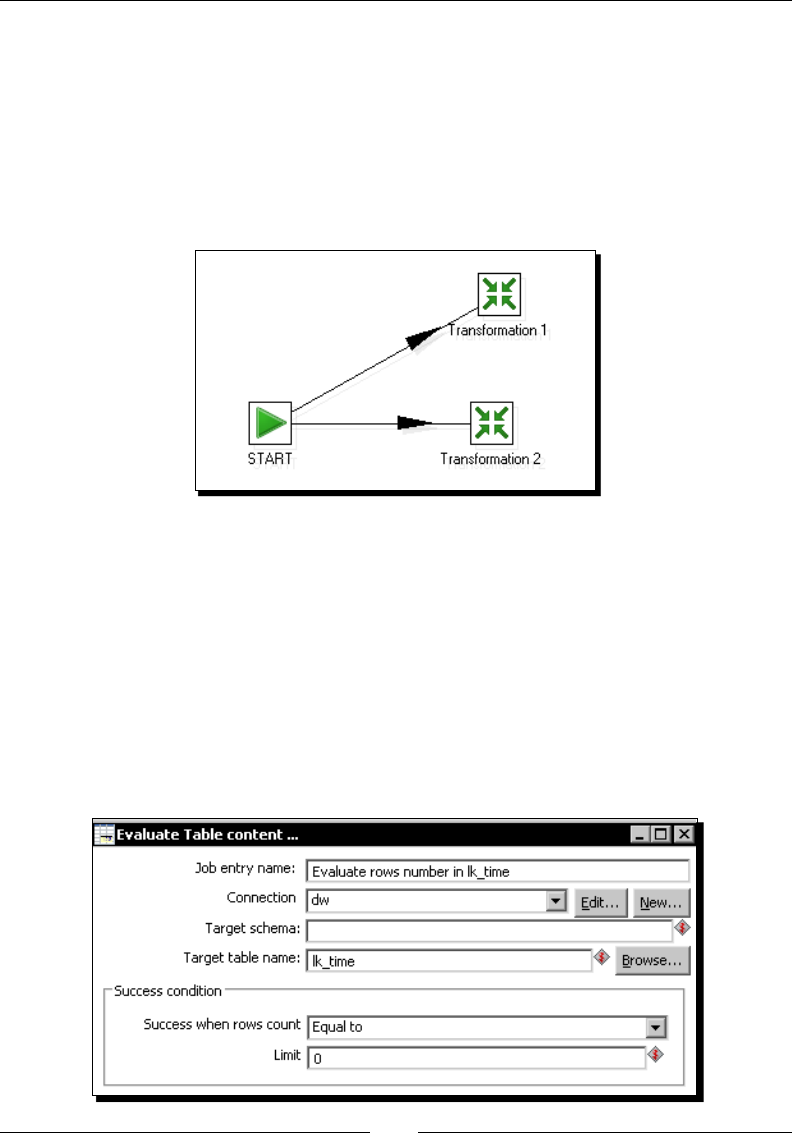

Time for acon – creang a simple hello world job 298

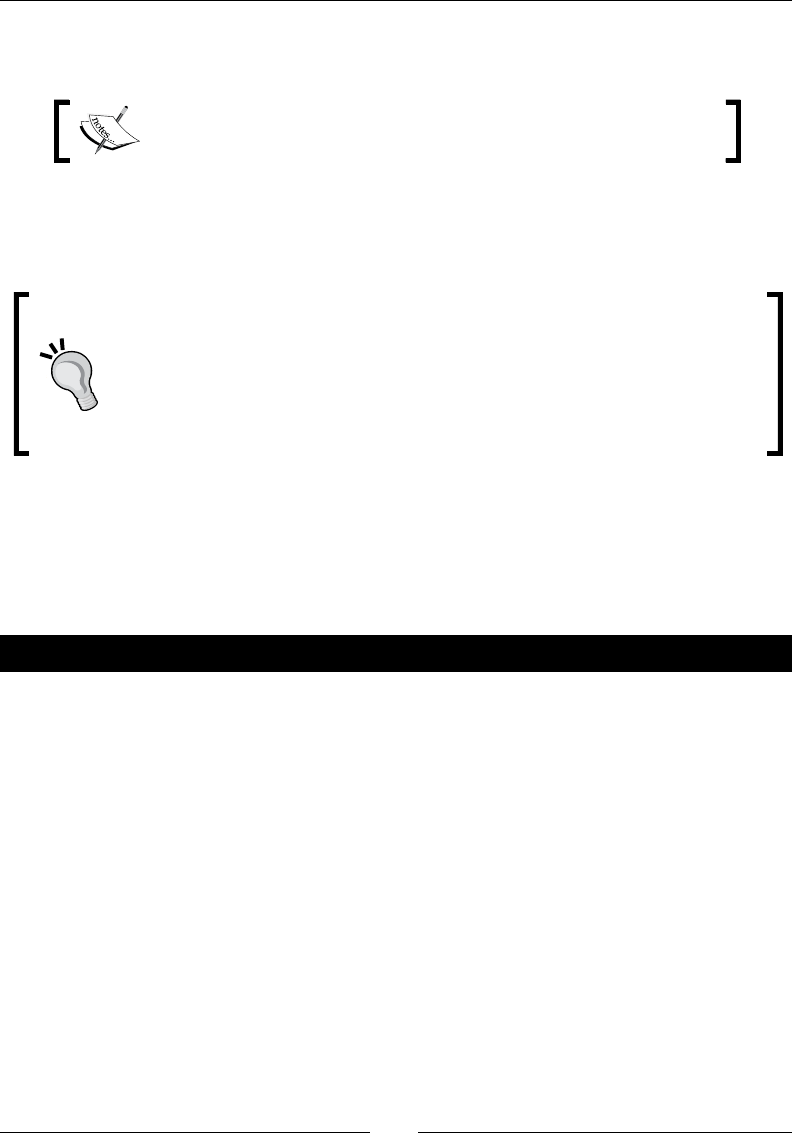

Execung processes with PDI jobs 305

Using Spoon to design and run jobs 306

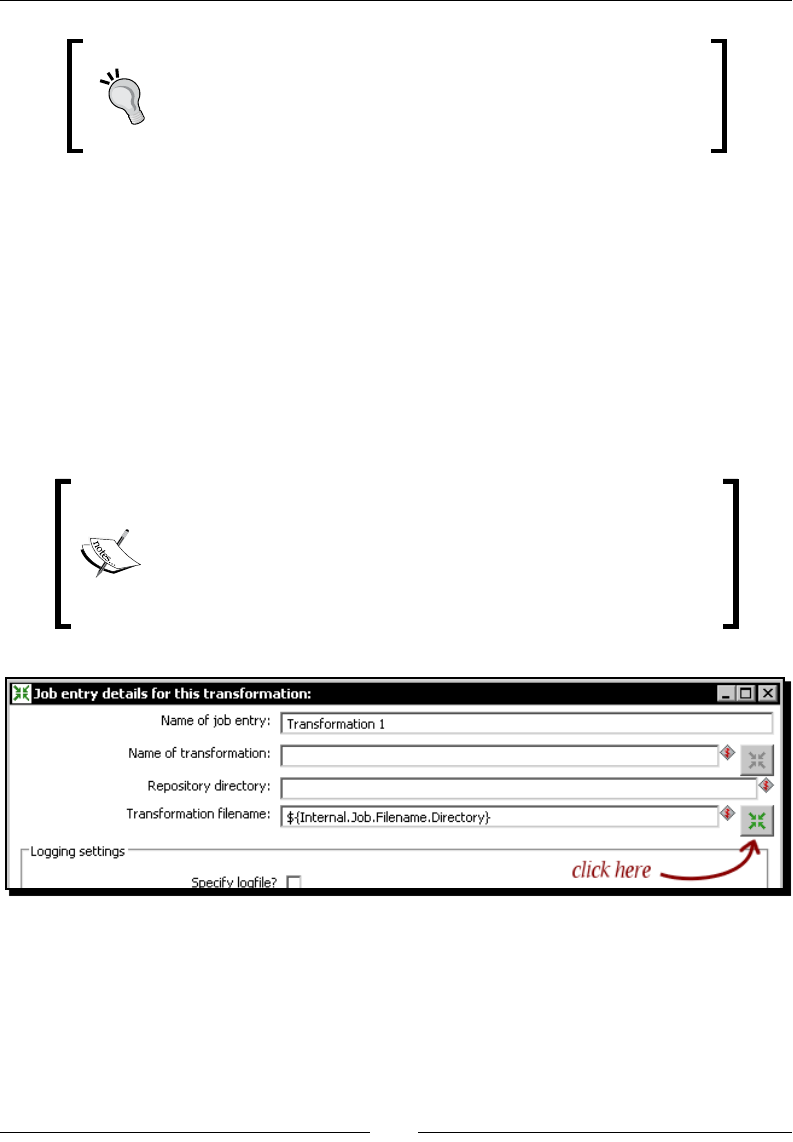

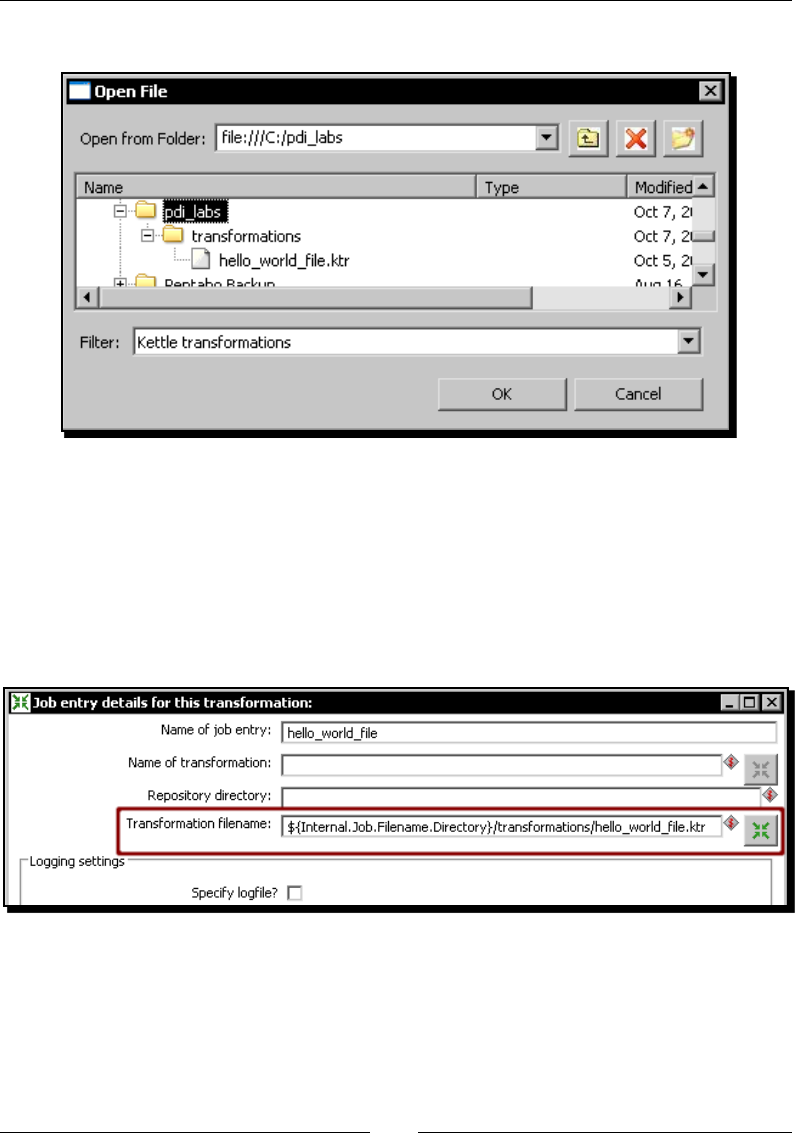

Using the transformaon job entry 307

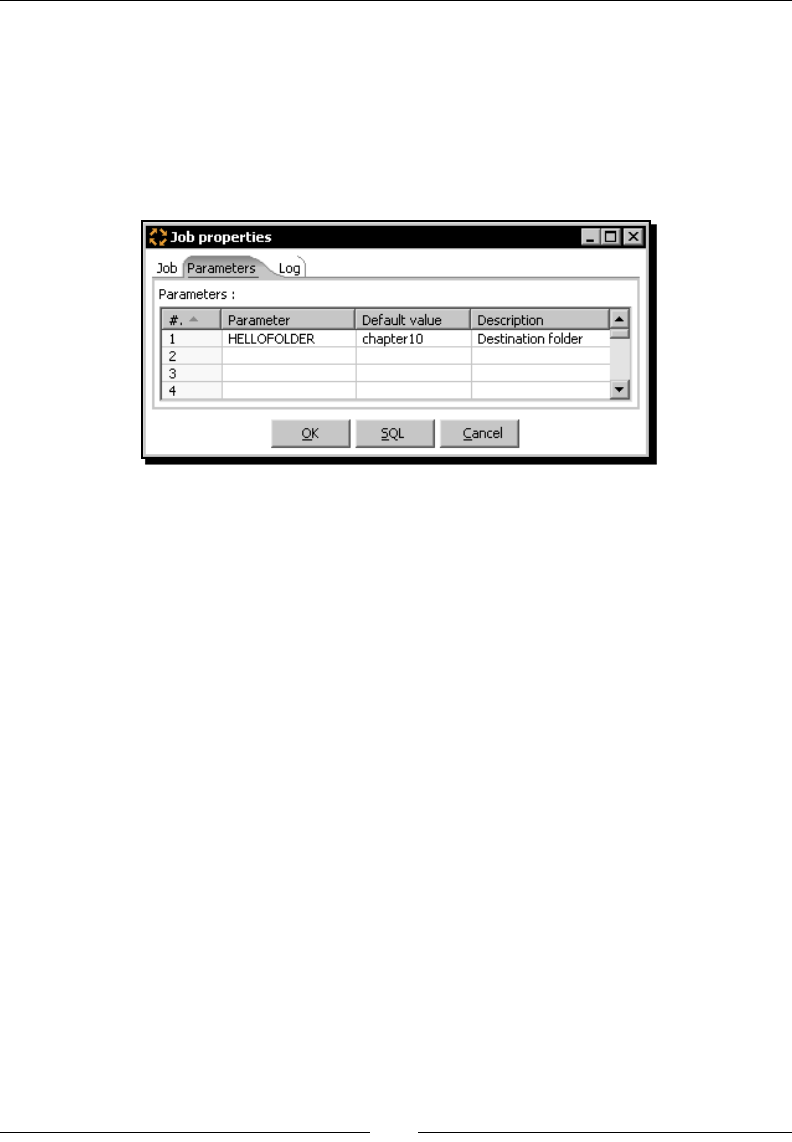

Receiving arguments and parameters in a job 309

Time for acon – customizing the hello world le with

arguments and parameters 309

Using named parameters in jobs 312

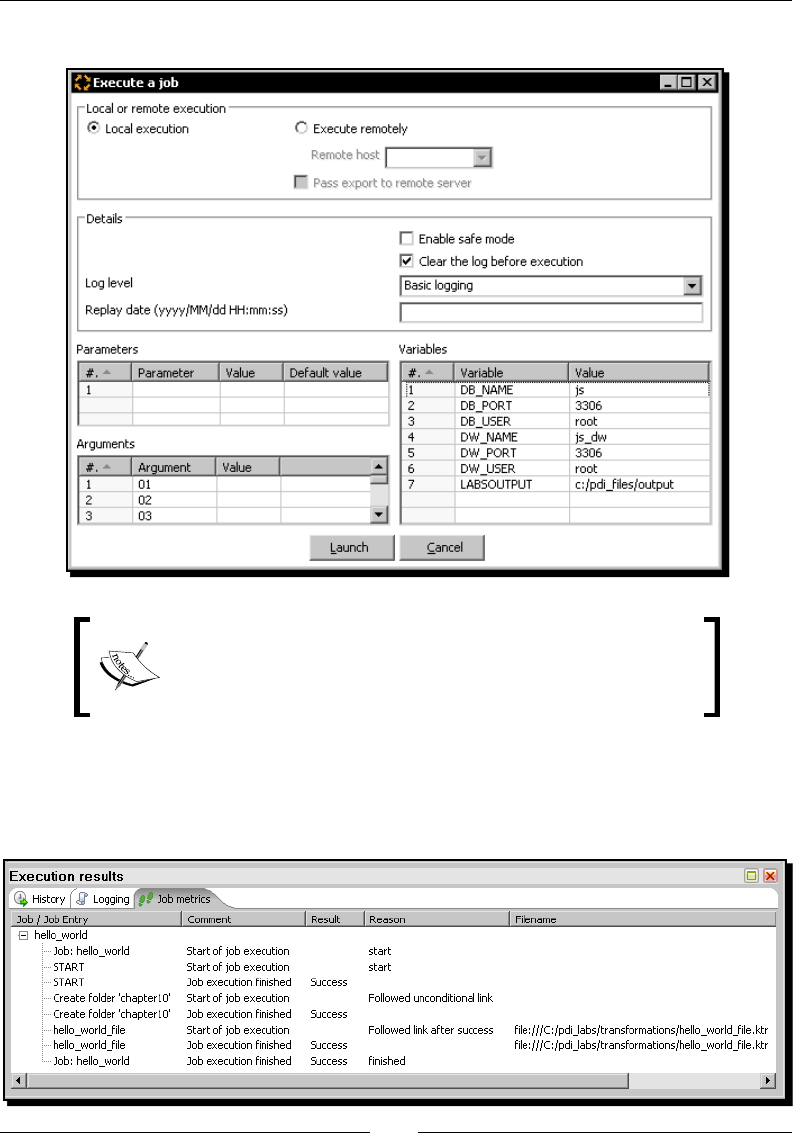

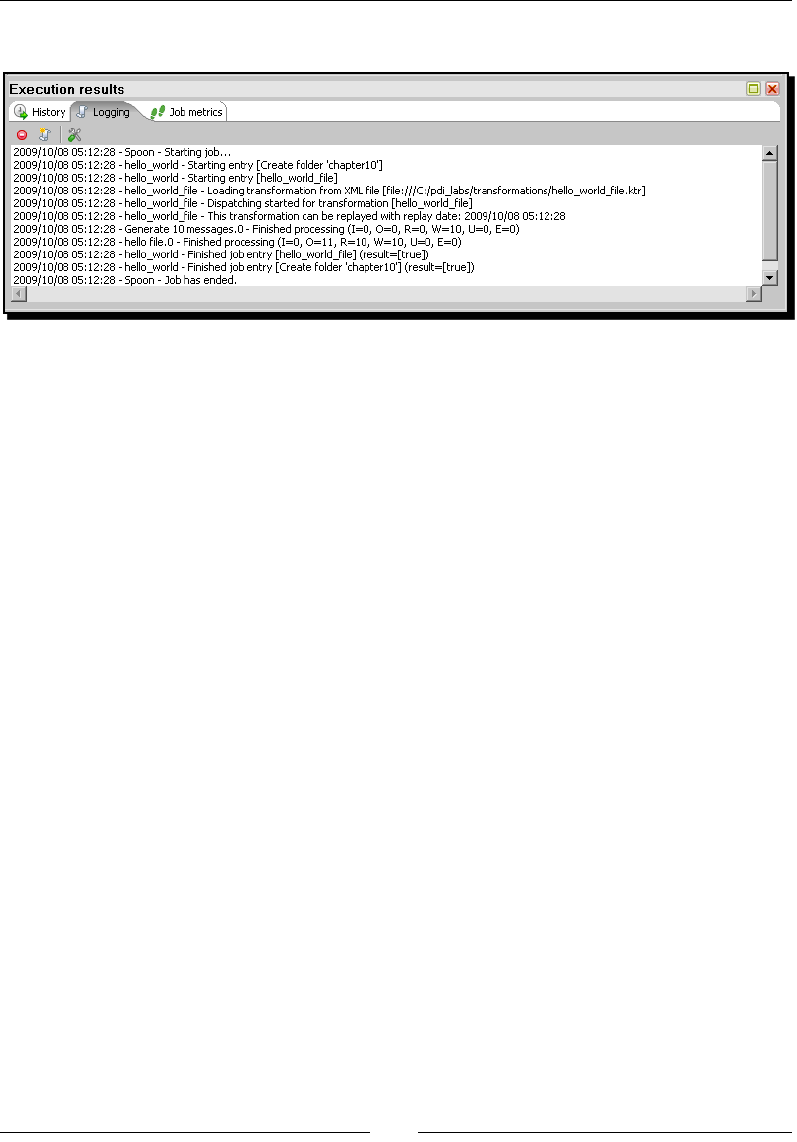

Running jobs from a terminal window 312

Time for acon – execung the hello world job from a terminal window 313

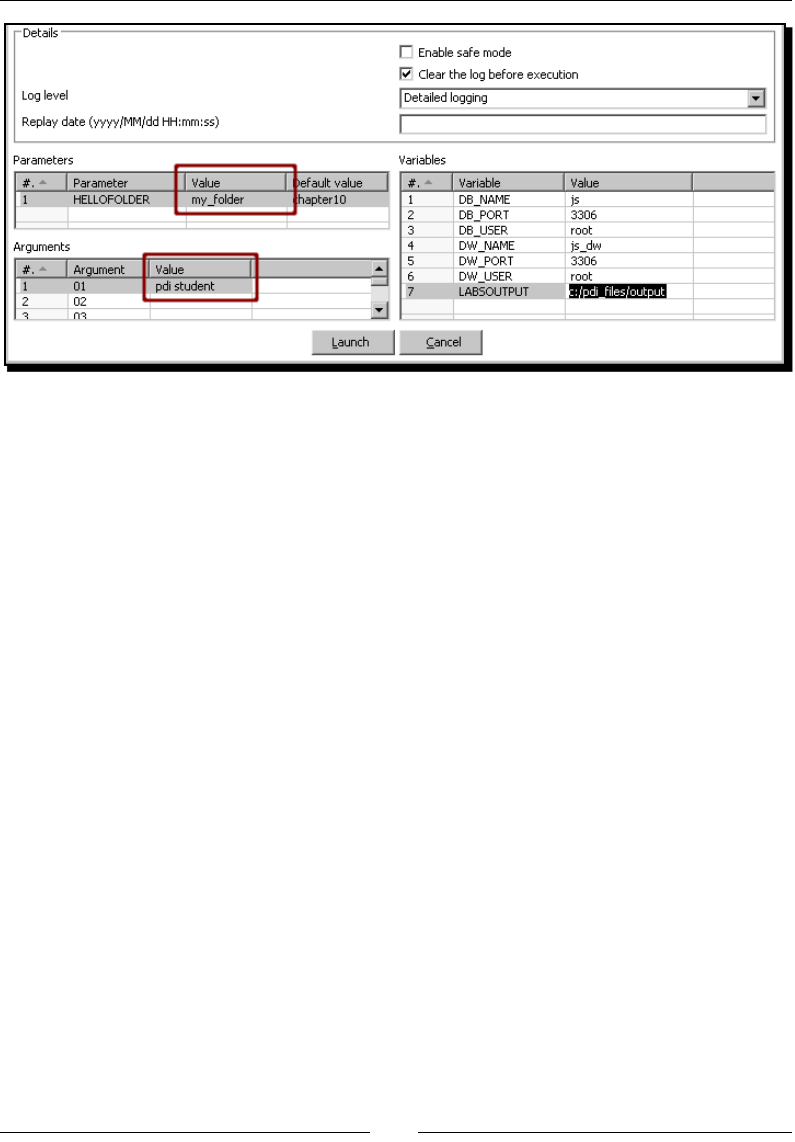

Using named parameters and command-line arguments in transformaons 314

Time for acon – calling the hello world transformaon with

xed arguments and parameters 315

Deciding between the use of a command-line argument and a named parameter 317

Running job entries under condions 318

Table of Contents

[ vii ]

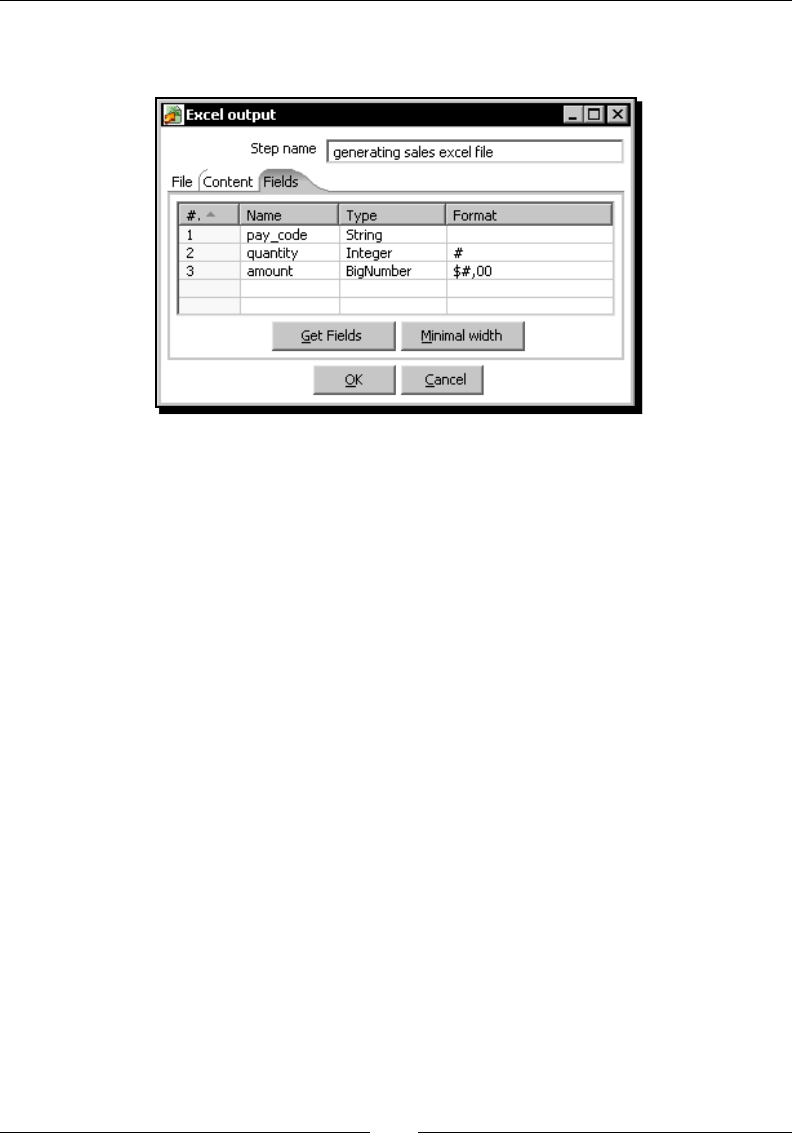

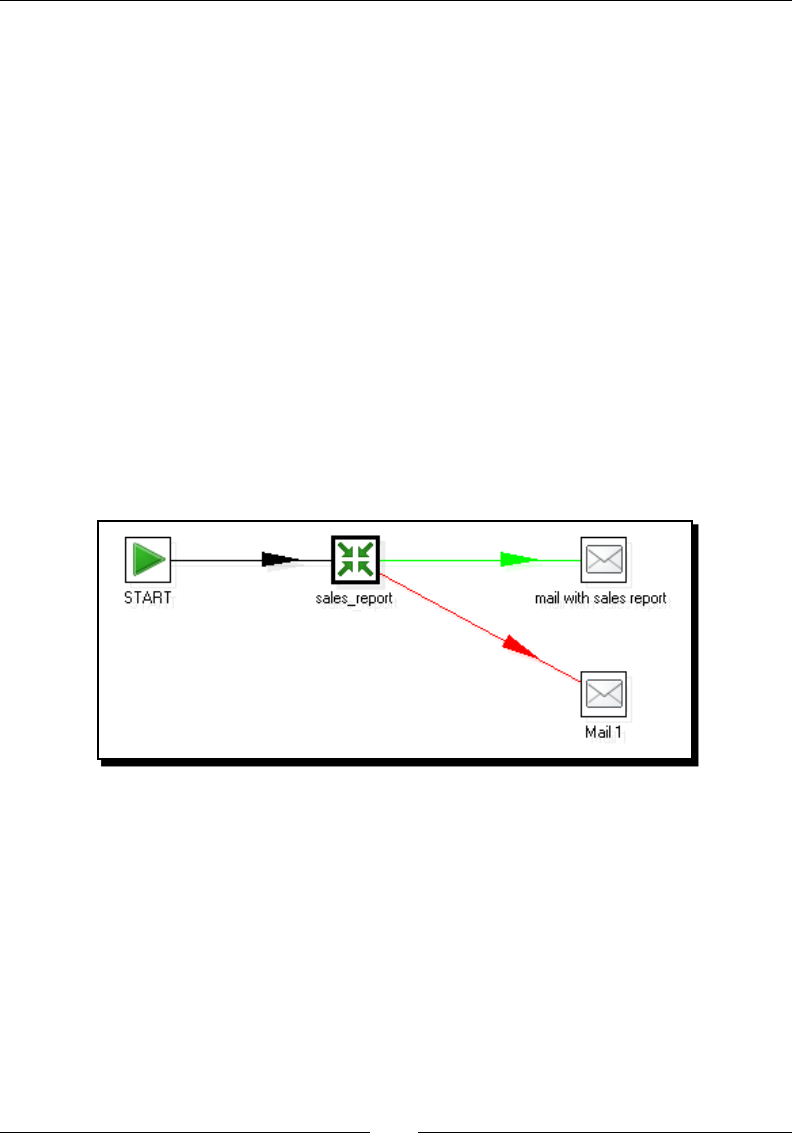

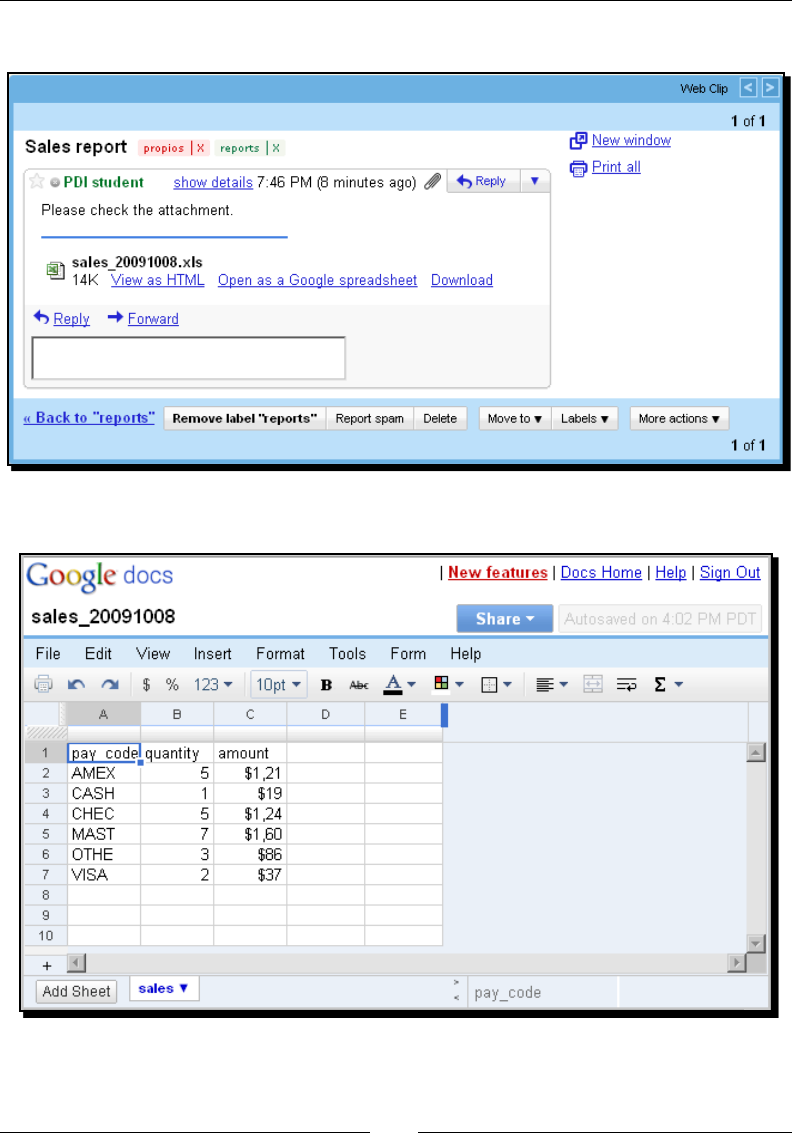

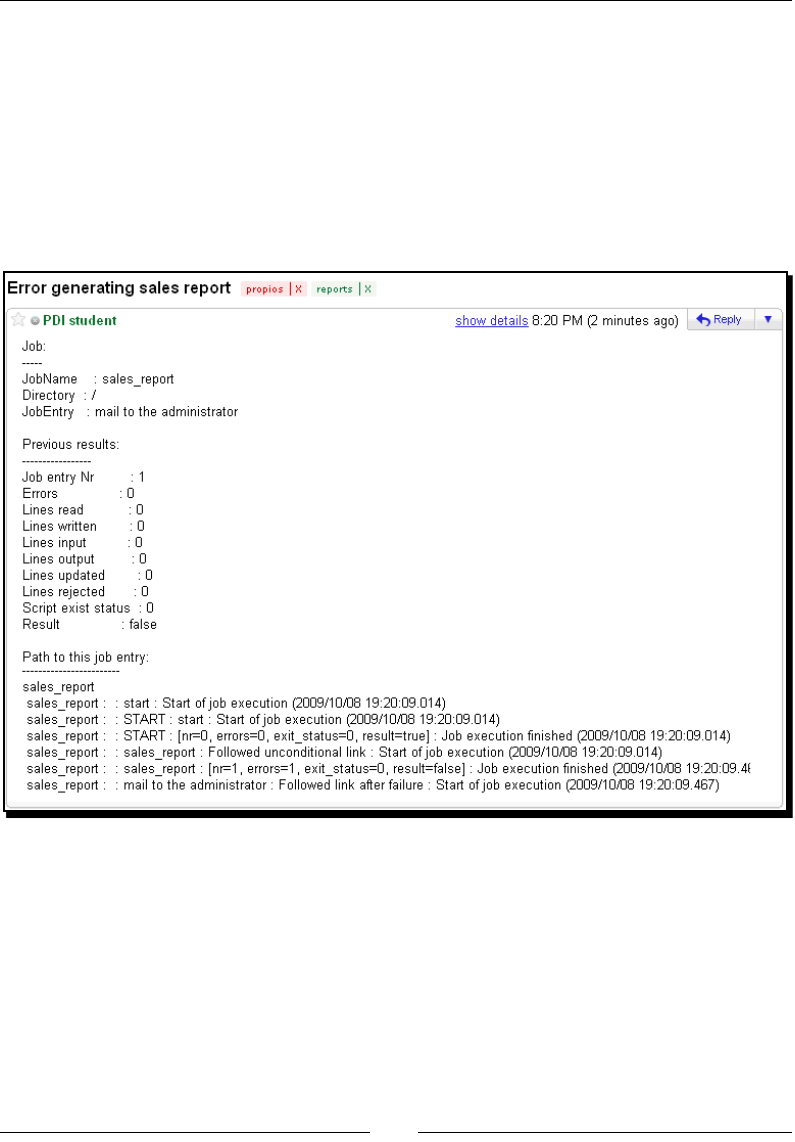

Time for acon – sending a sales report and warning the

administrator if something is wrong 318

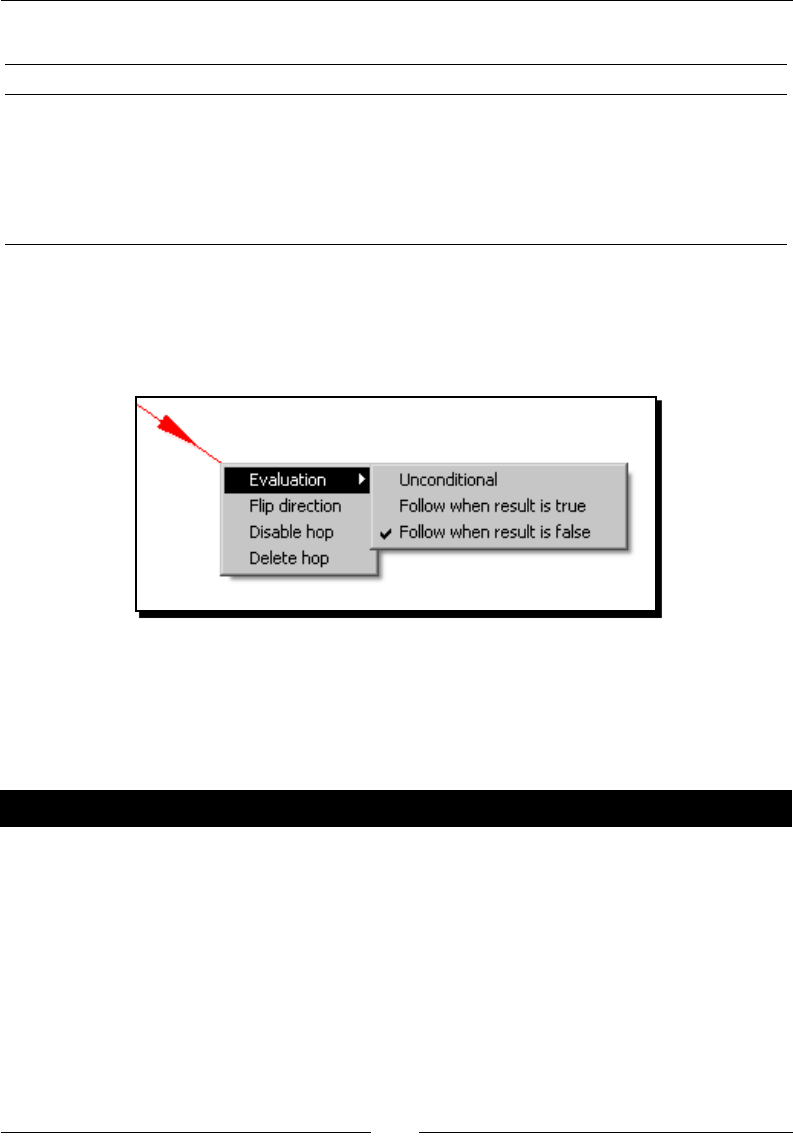

Changing the ow of execuon on the basis of condions 324

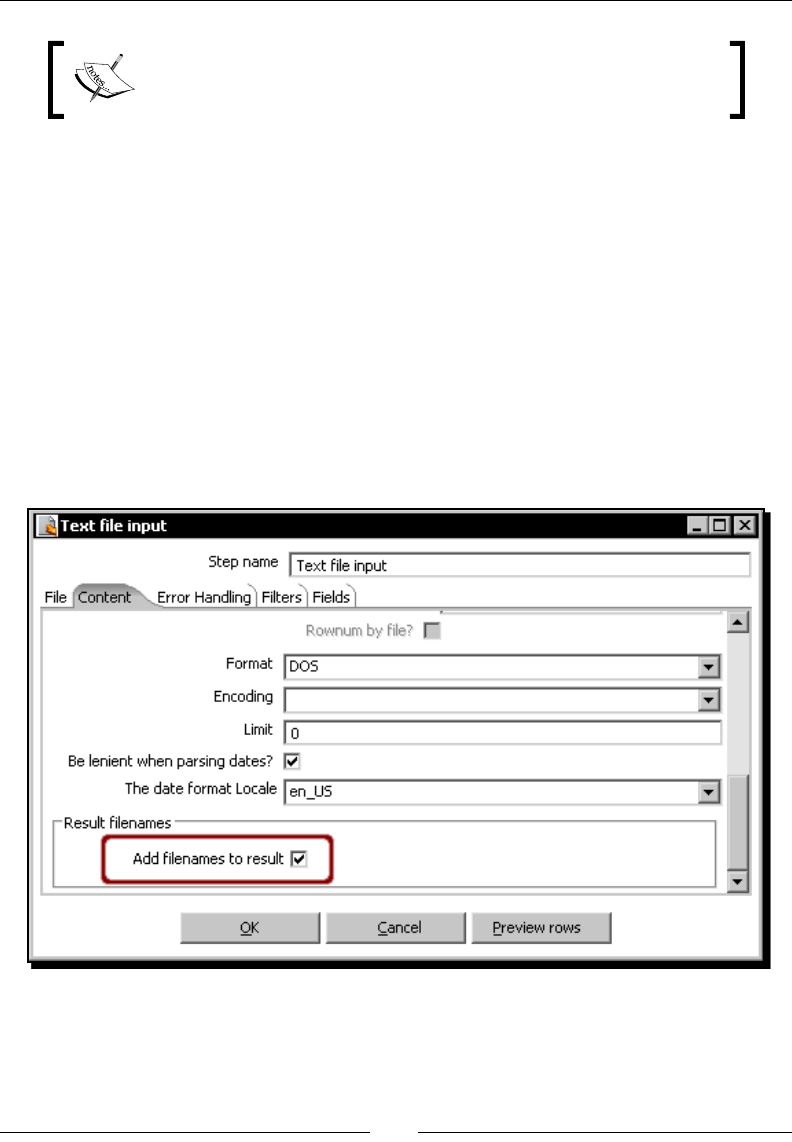

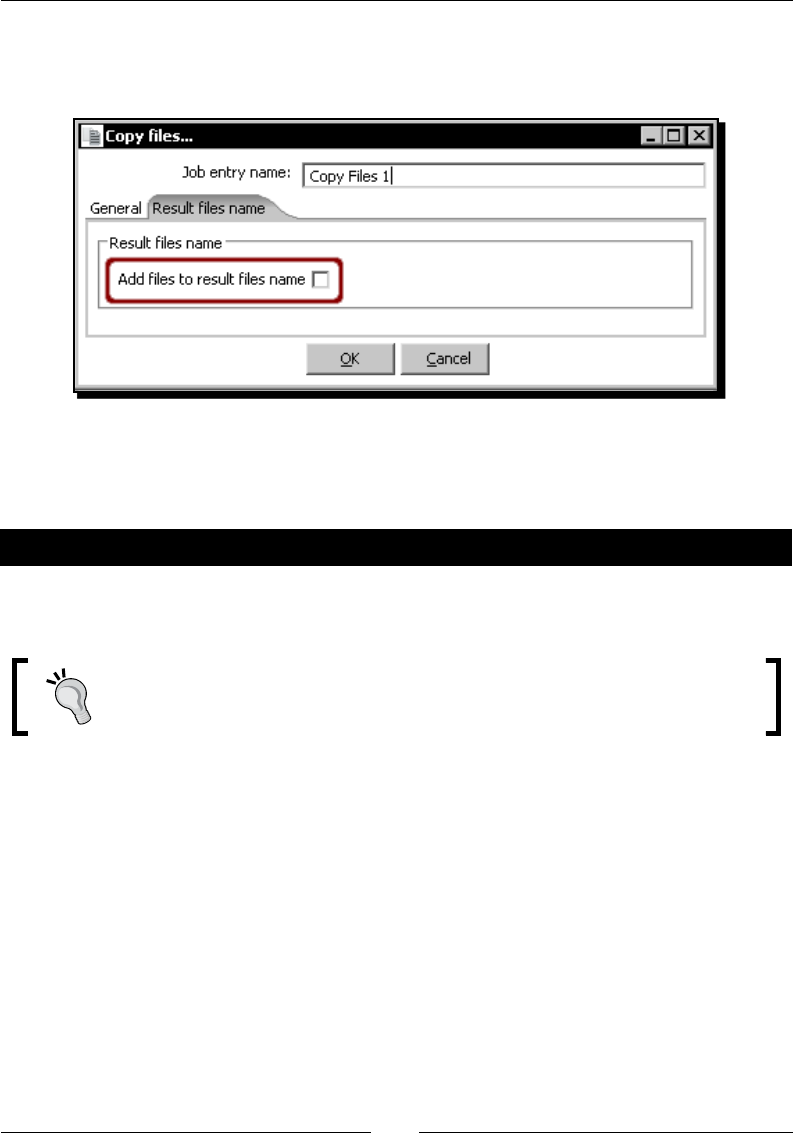

Creang and using a le results list 326

Summary 327

Chapter 11: Creang Advanced Transformaons and Jobs 329

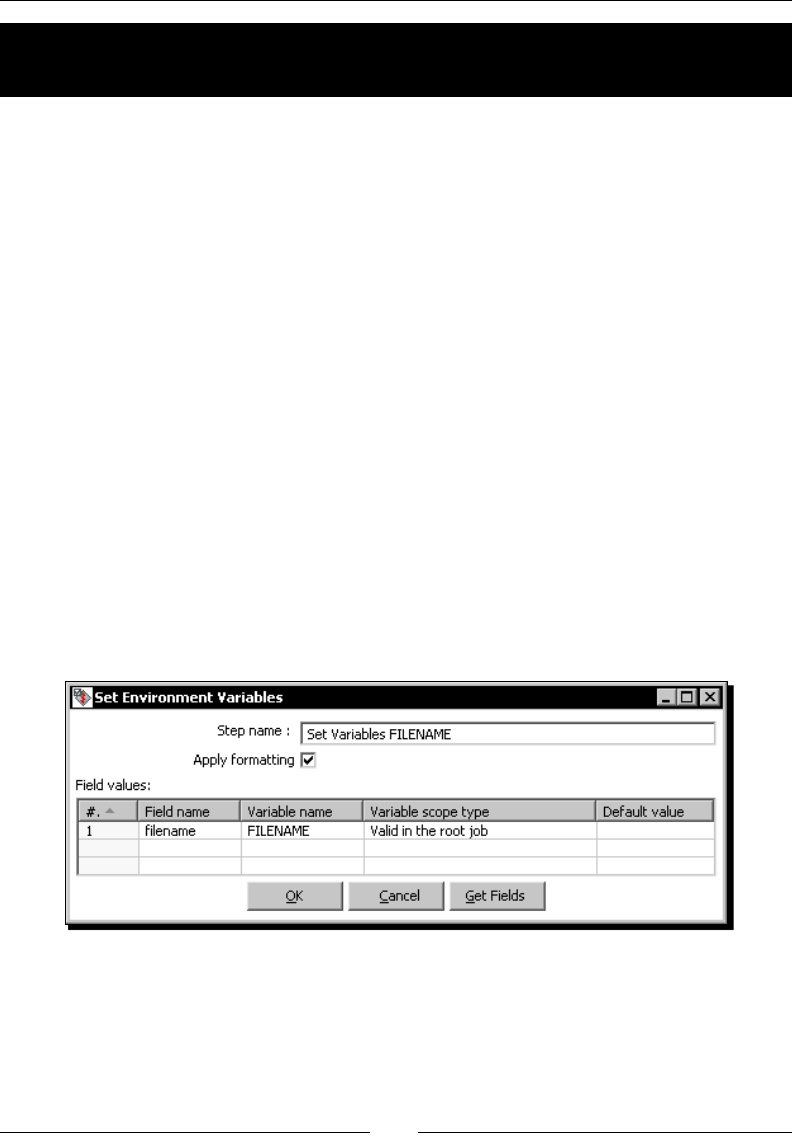

Enhancing your processes with the use of variables 329

Time for acon – updang a le with news about examinaons by seng

a variable with the name of the le 330

Seng variables inside a transformaon 335

Enhancing the design of your processes 337

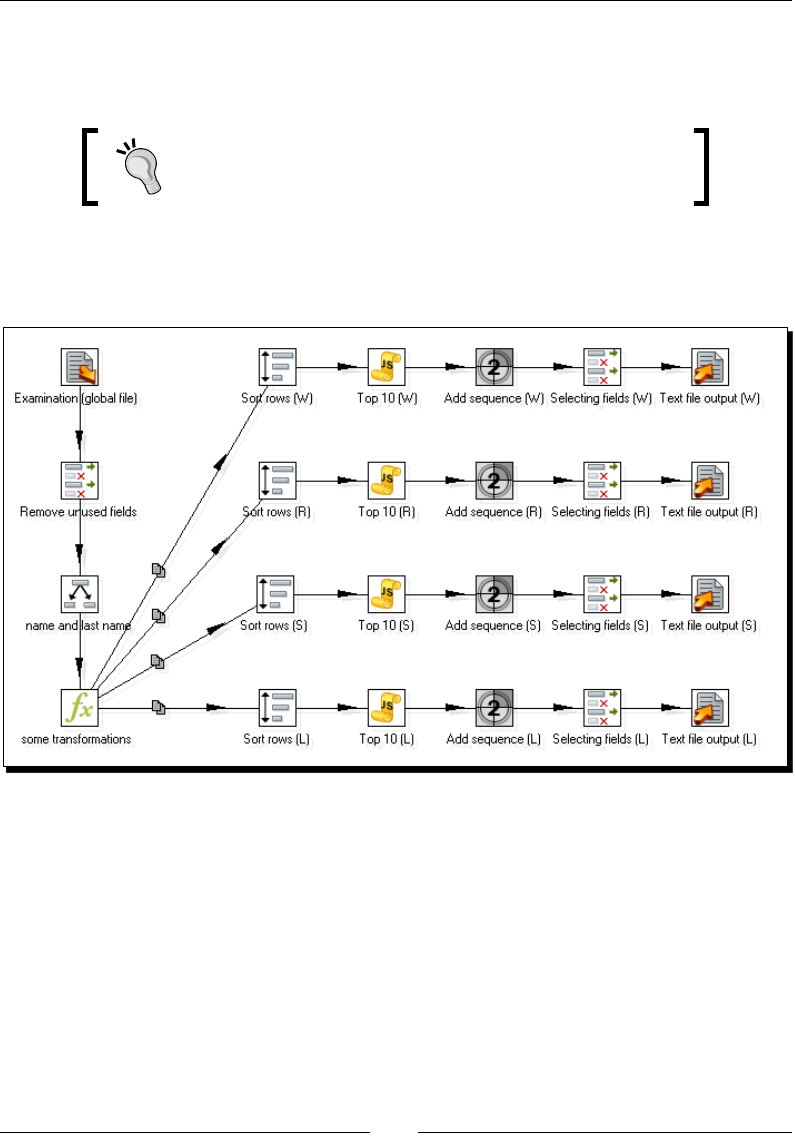

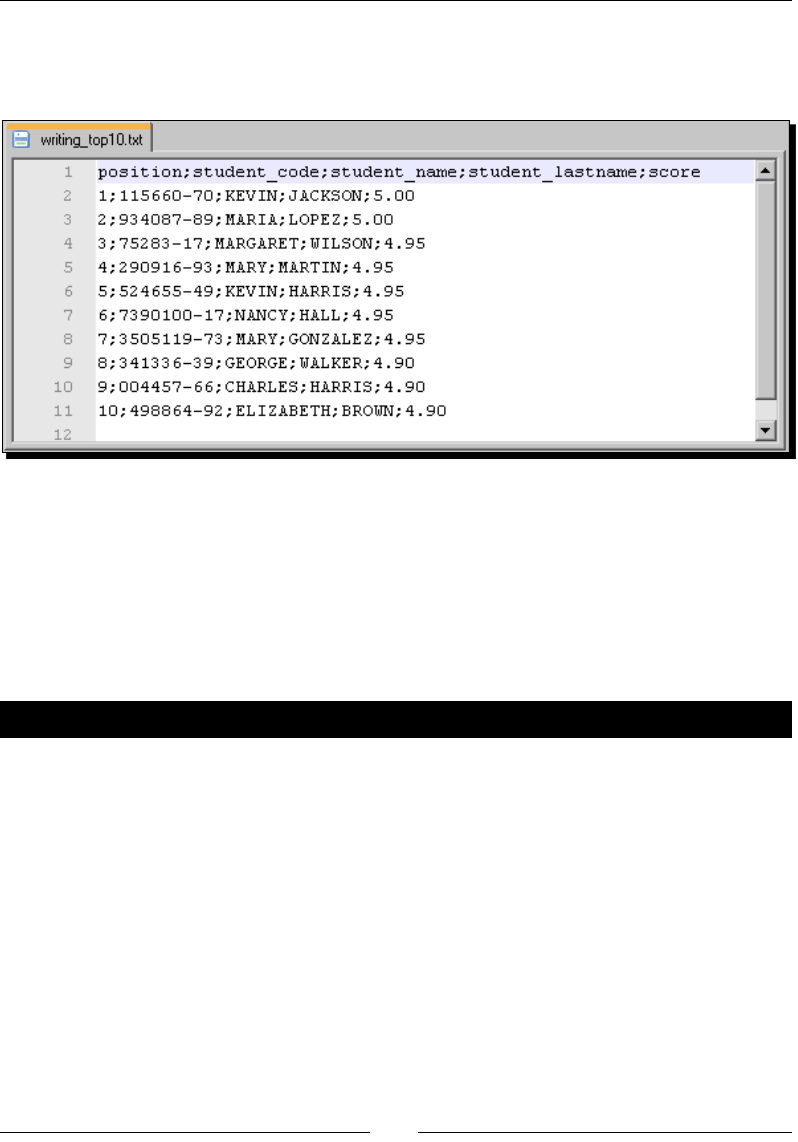

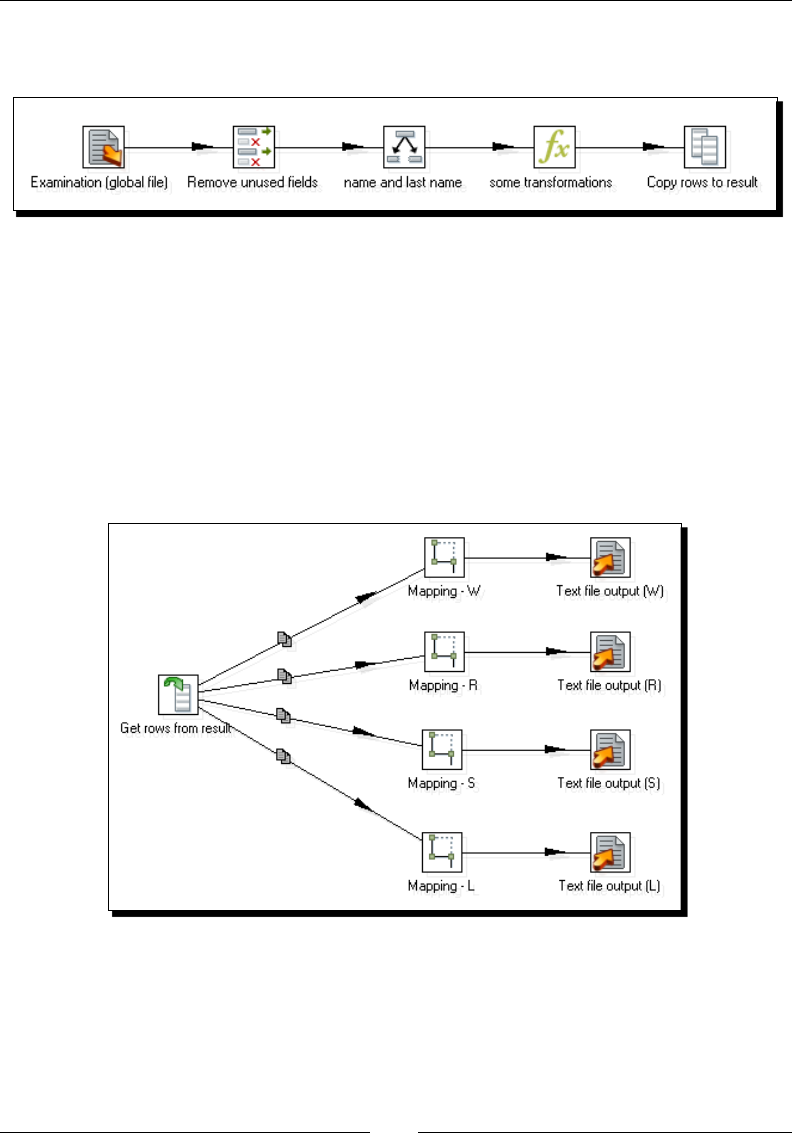

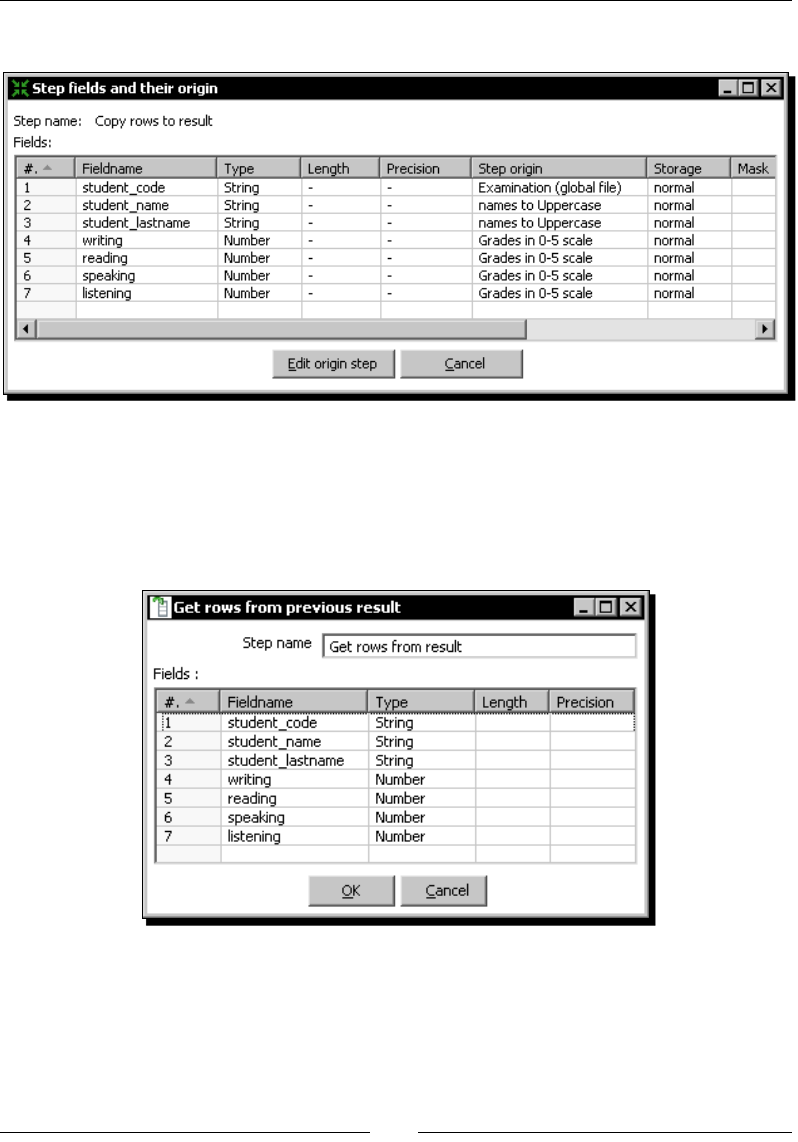

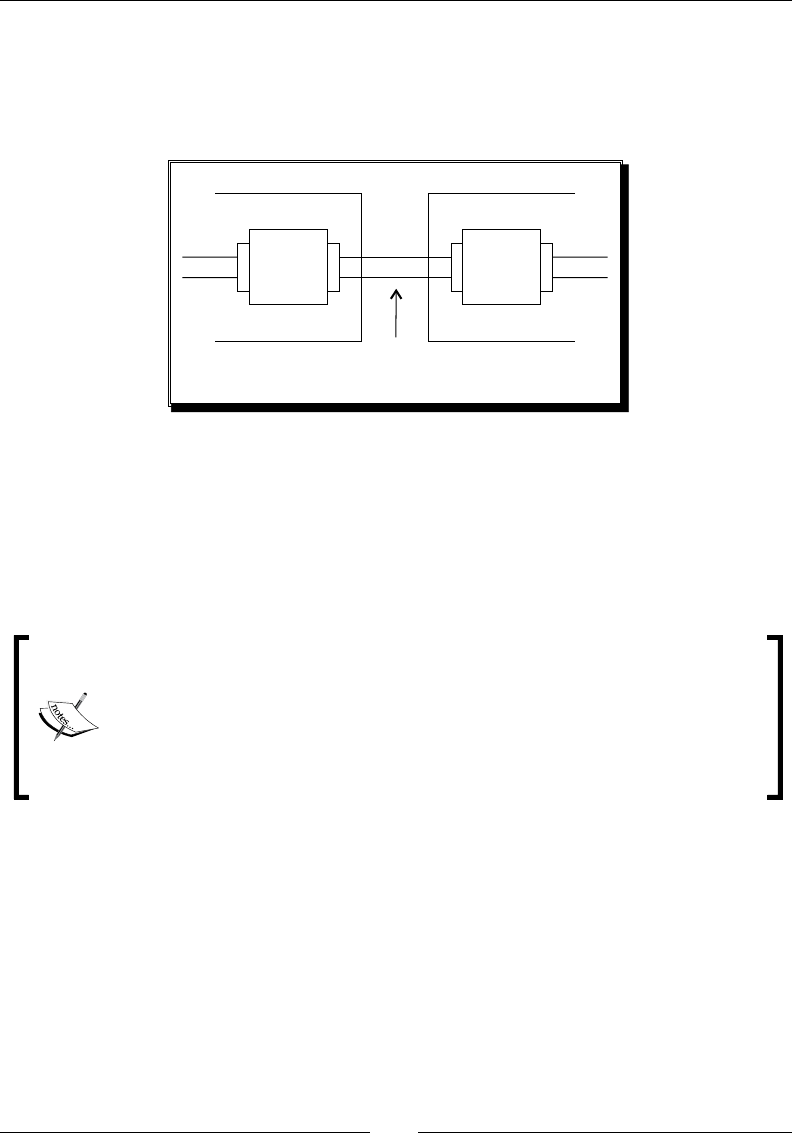

Time for acon – generang les with top scores 337

Reusing part of your transformaons 341

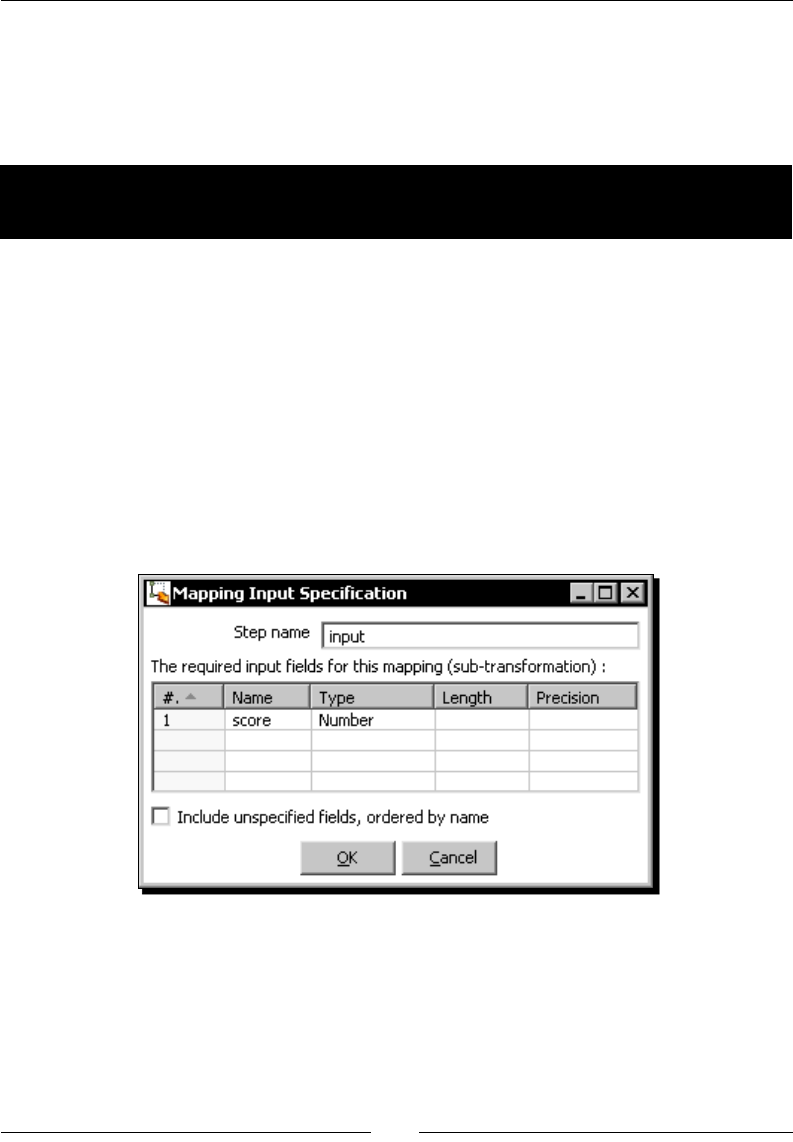

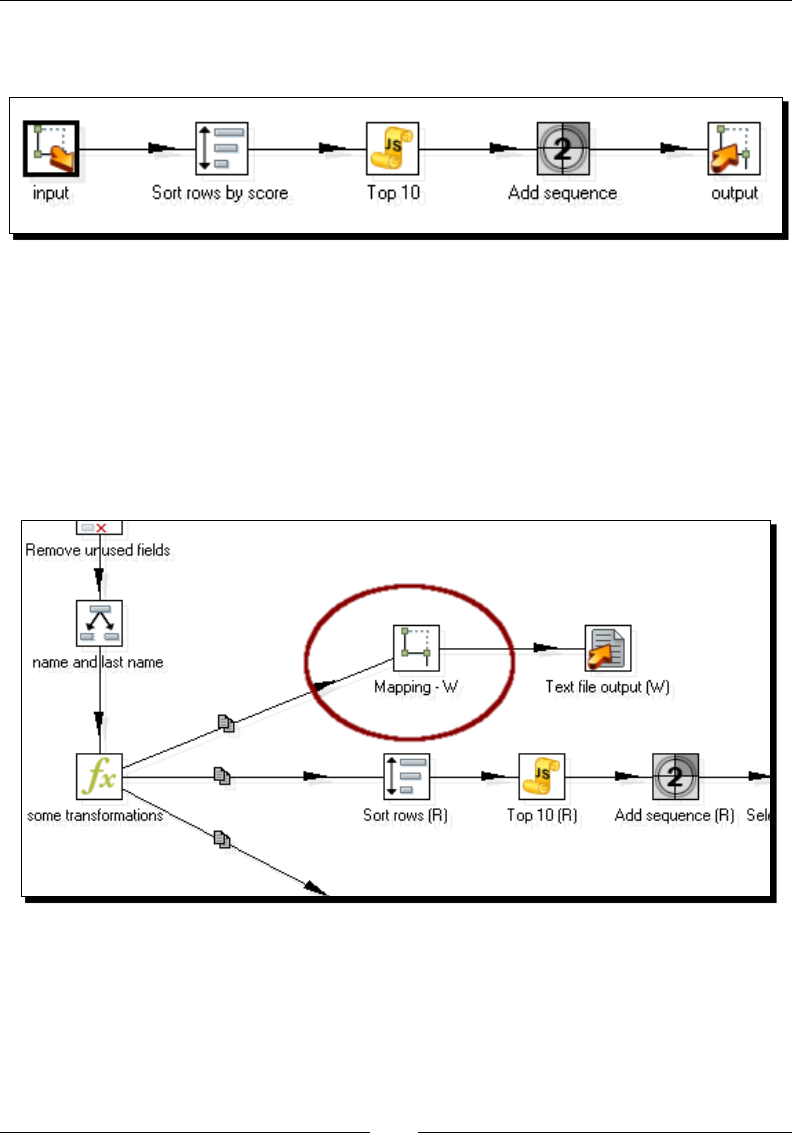

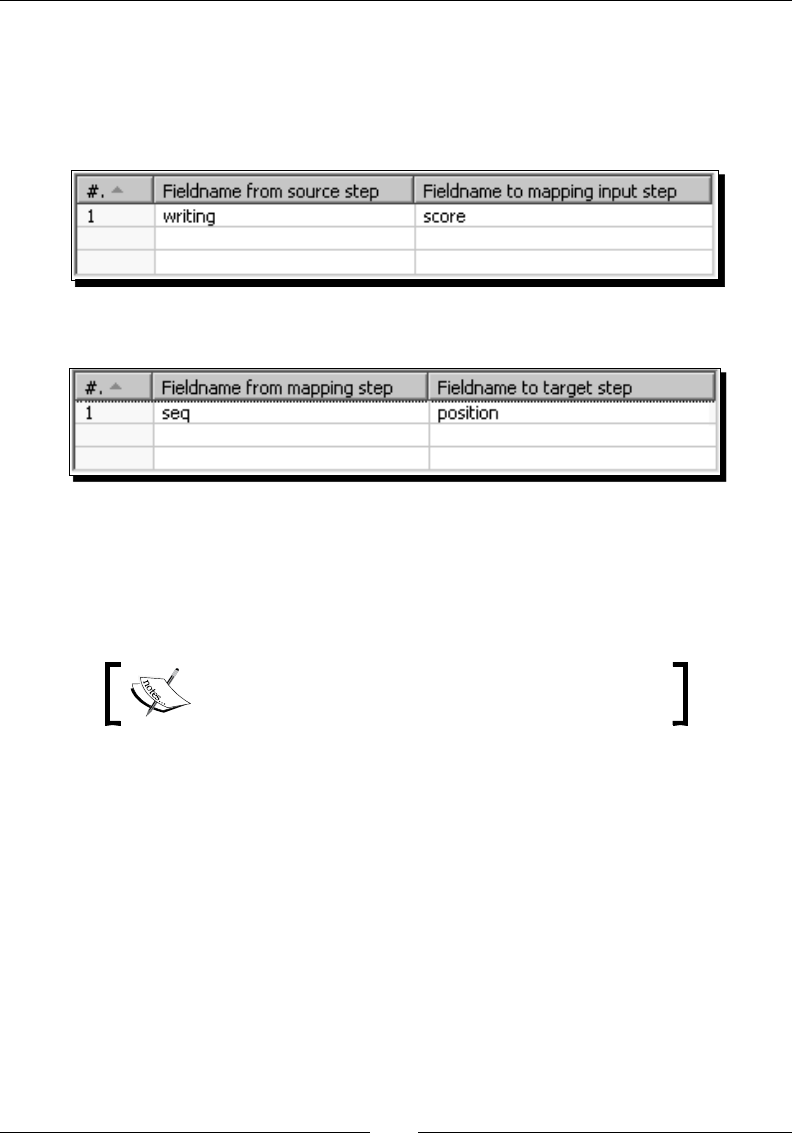

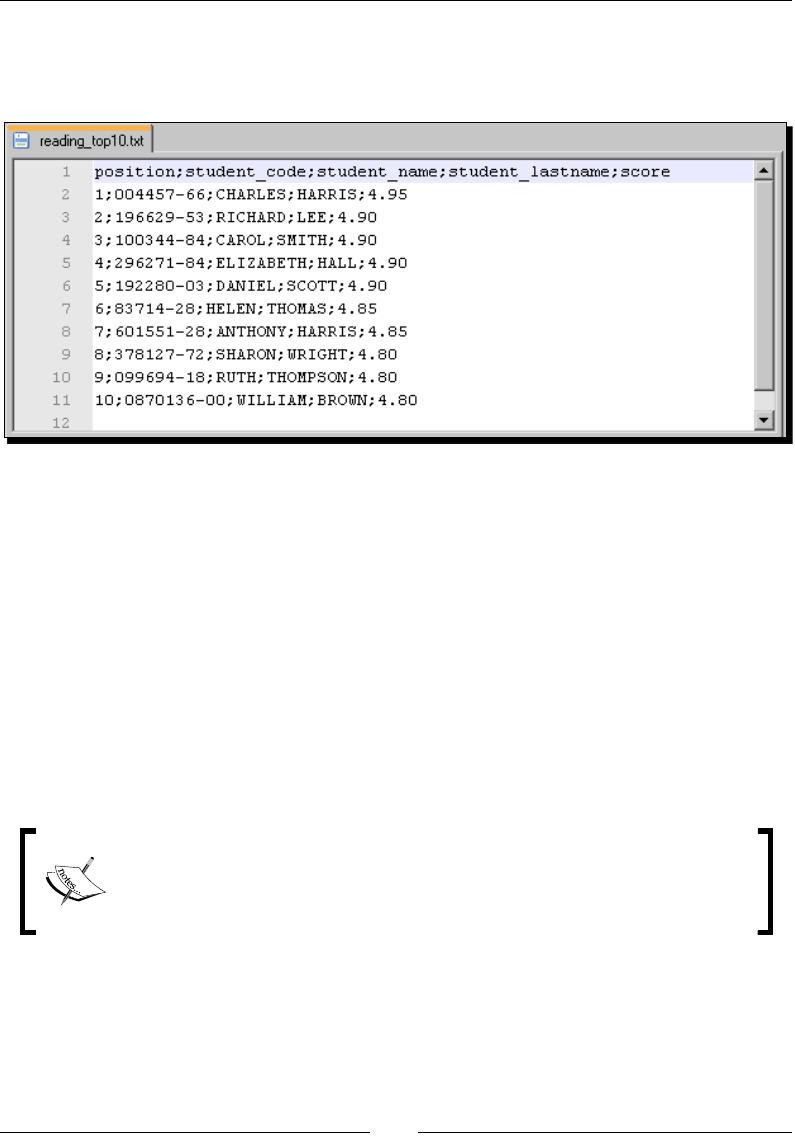

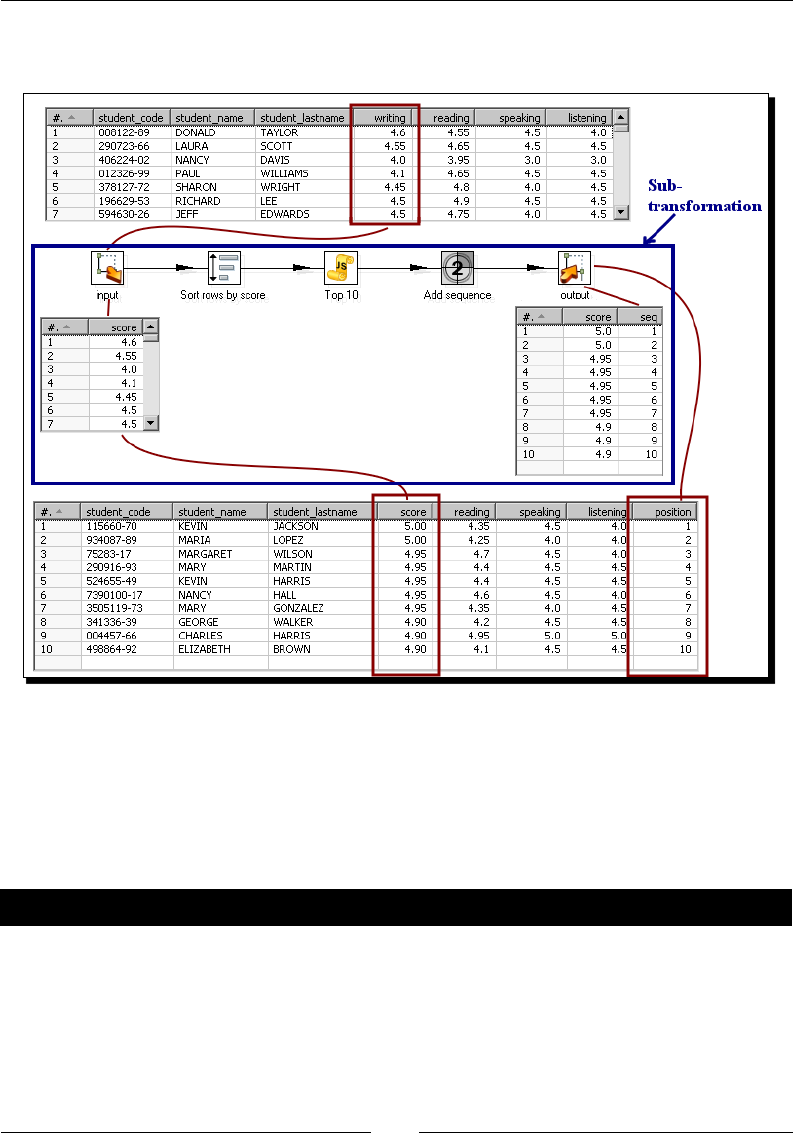

Time for acon – calculang the top scores with a subtransformaon 341

Creang and using subtransformaons 345

Creang a job as a process ow 348

Time for acon – spling the generaon of top scores by

copying and geng rows 348

Transferring data between transformaons by using the copy /get rows mechanism 352

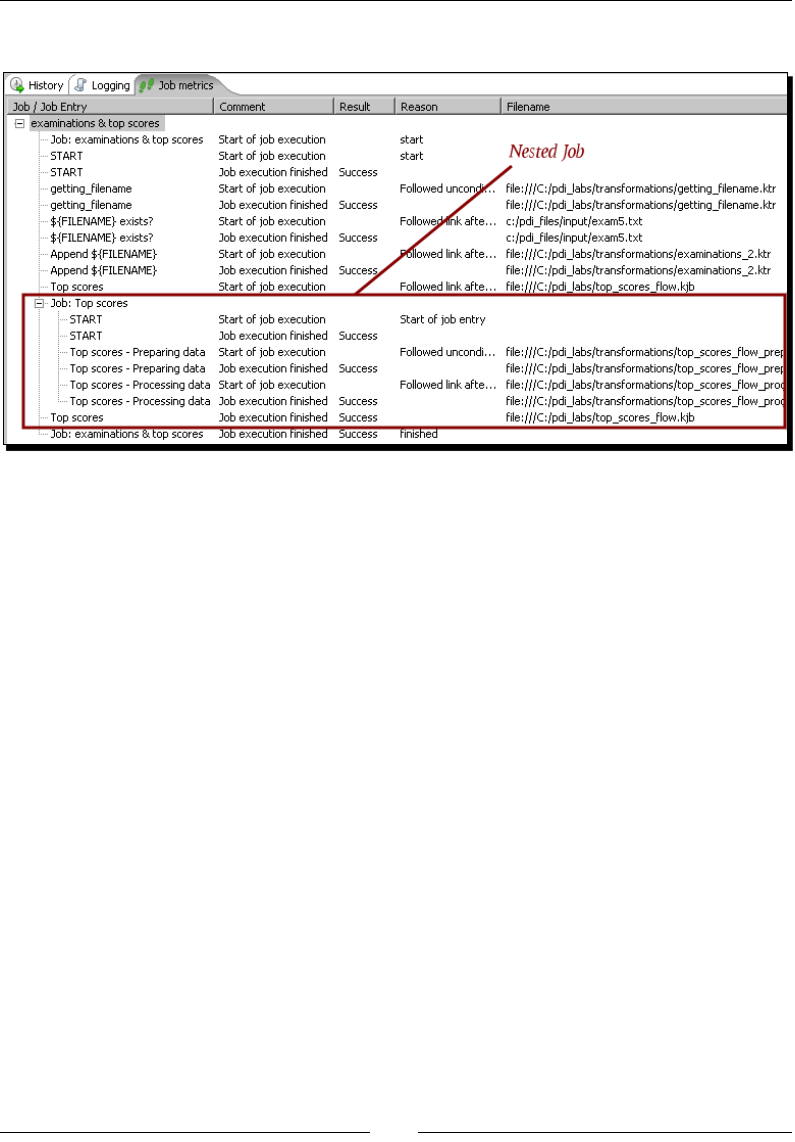

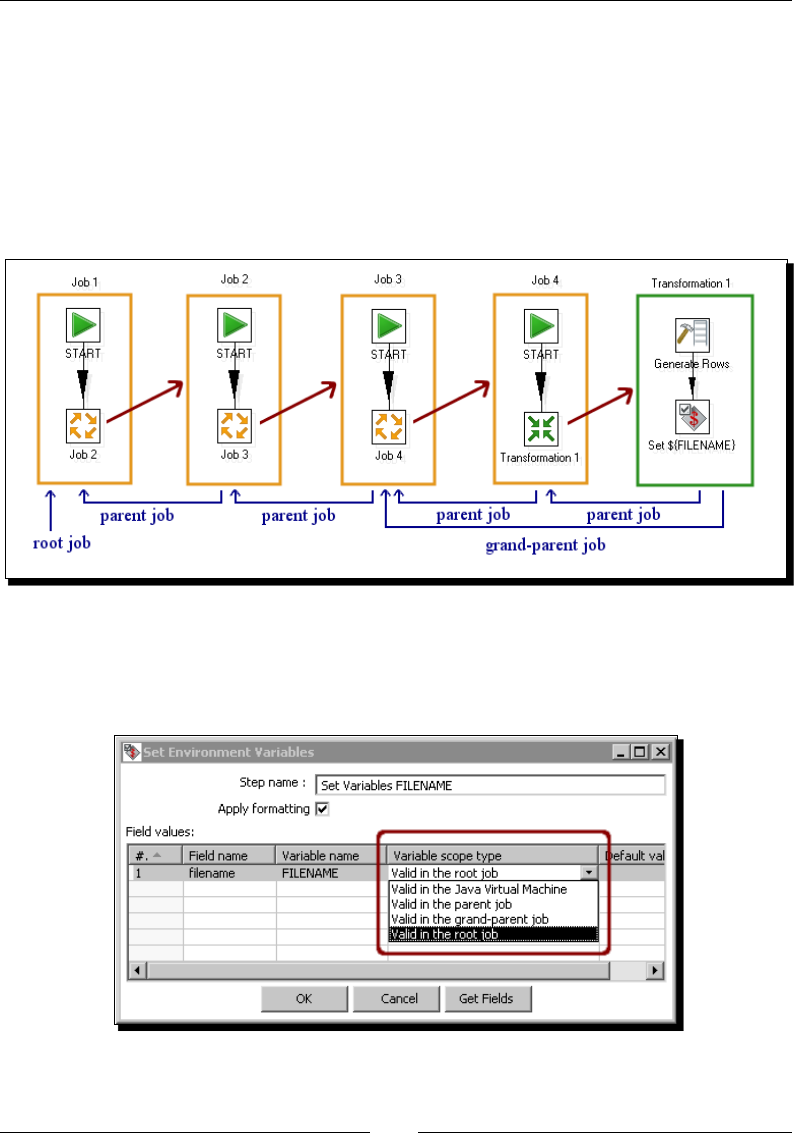

Nesng jobs 354

Time for acon – generang the les with top scores by nesng jobs 354

Running a job inside another job with a job entry 355

Understanding the scope of variables 356

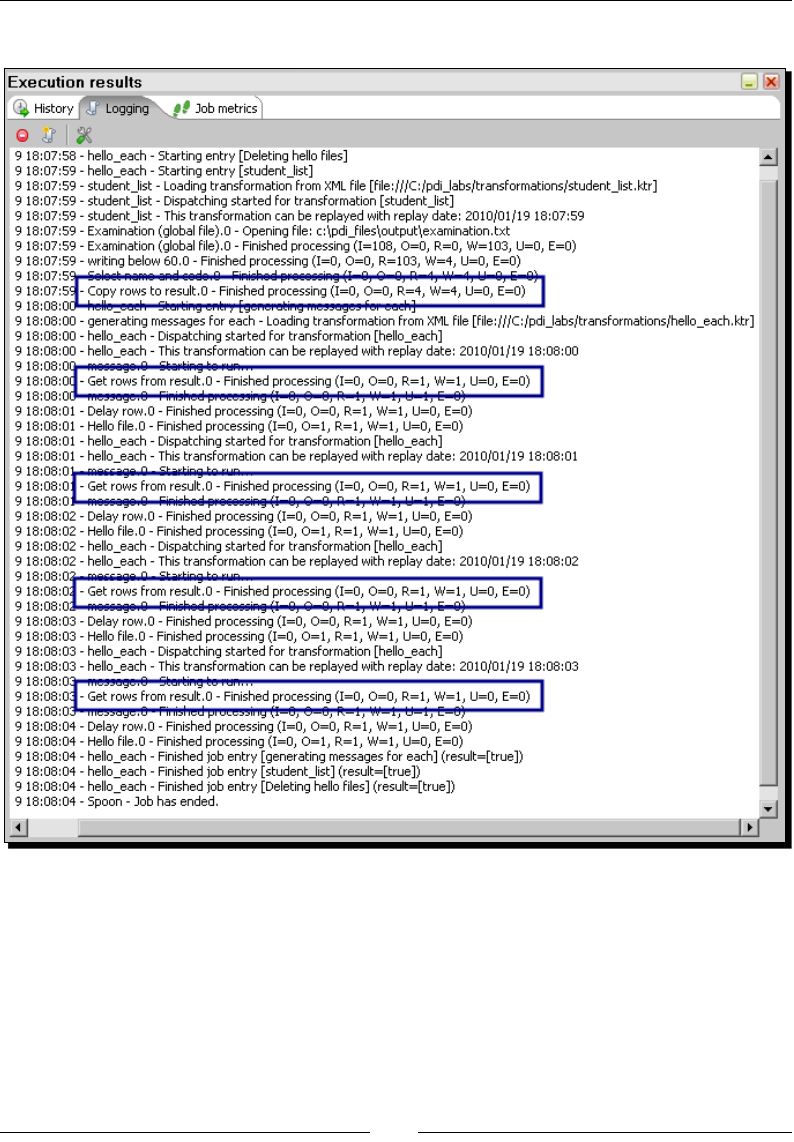

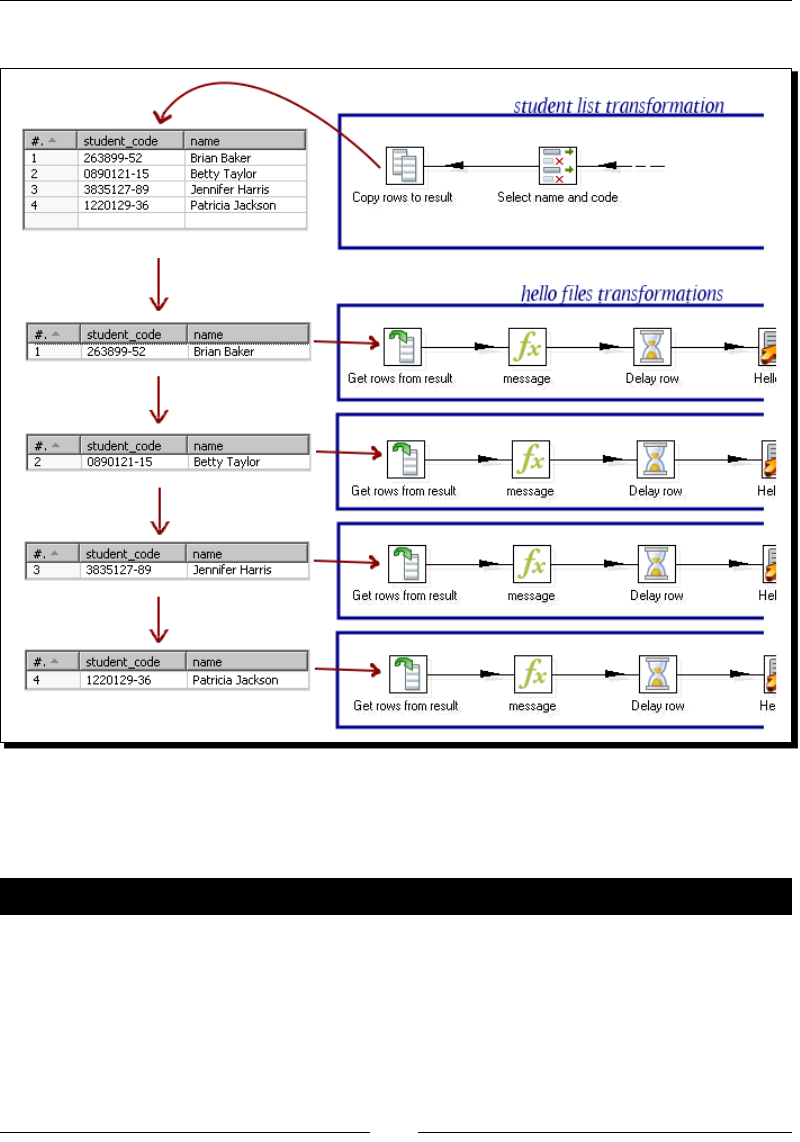

Iterang jobs and transformaons 357

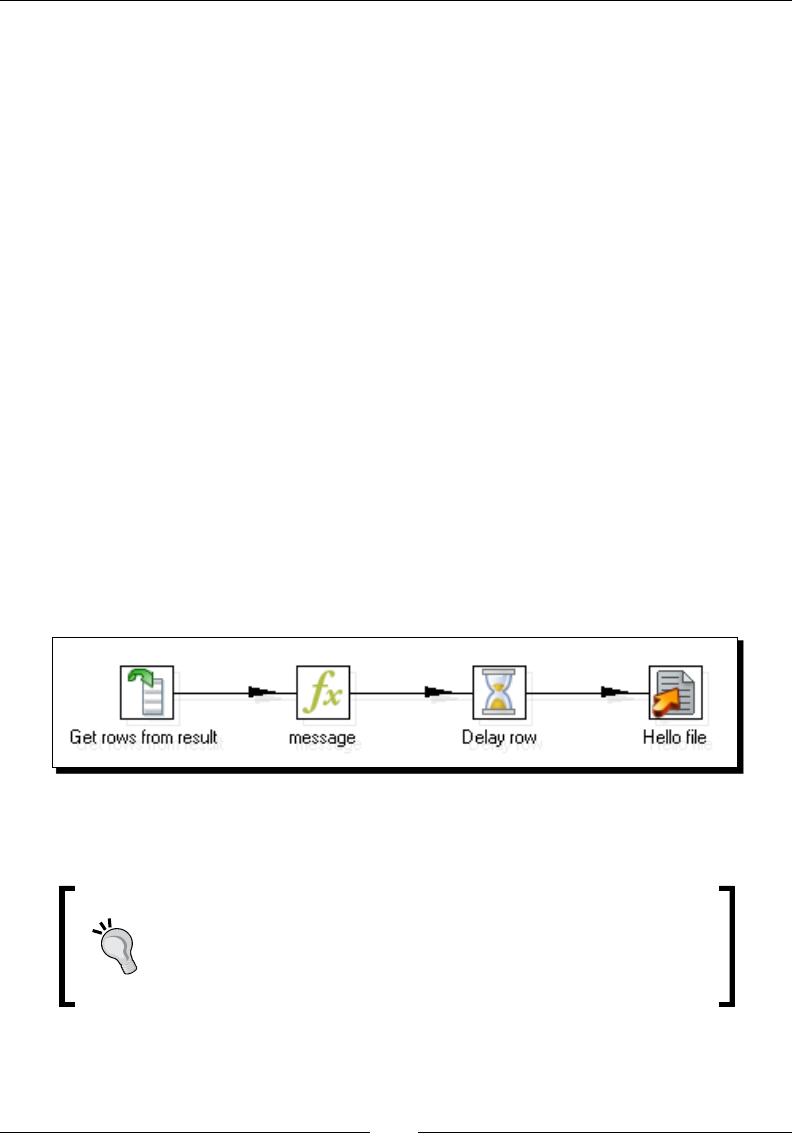

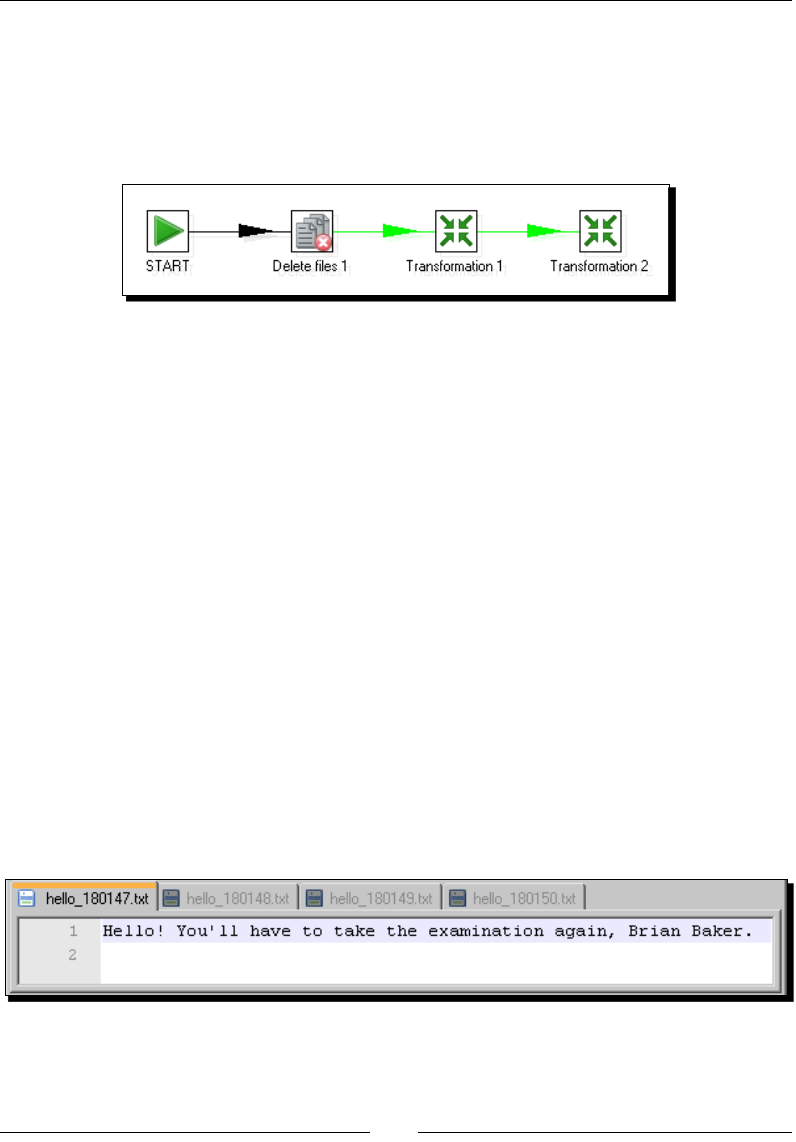

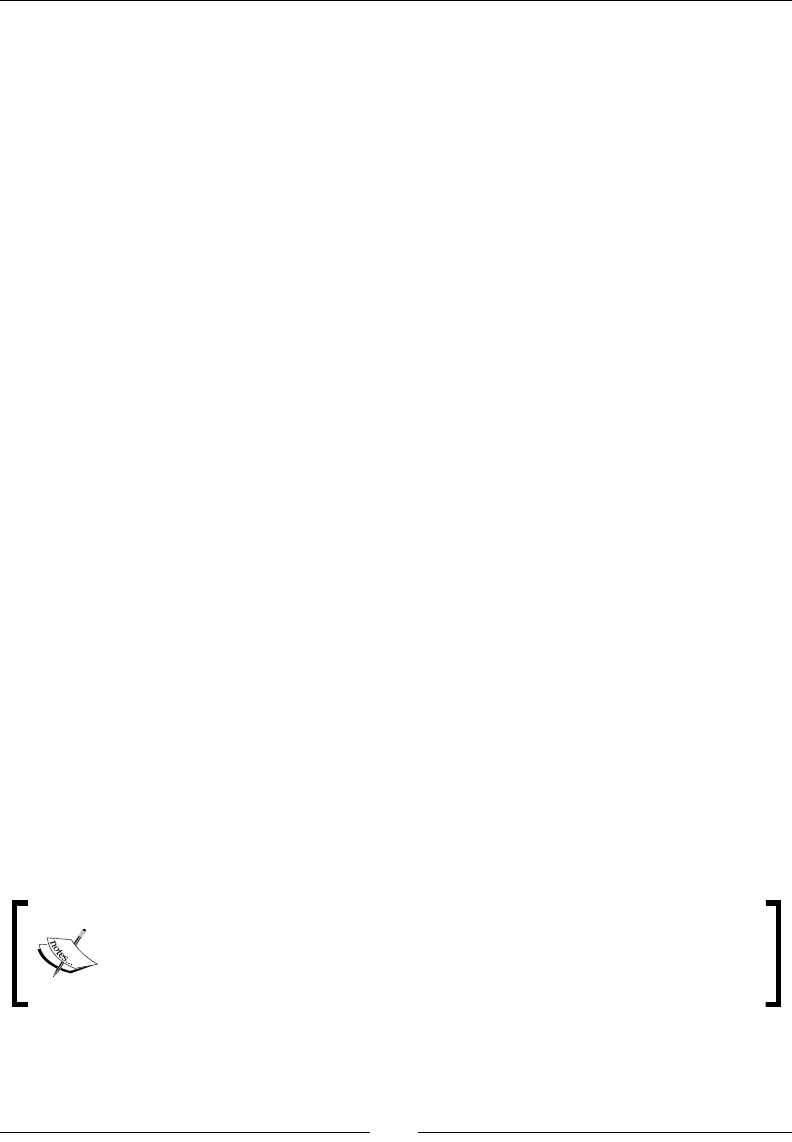

Time for acon – generang custom les by execung a transformaon

for every input row 358

Execung for each row 361

Summary 366

Chapter 12: Developing and Implemenng a Simple Datamart 367

Exploring the sales datamart 367

Deciding the level of granularity 370

Loading the dimensions 370

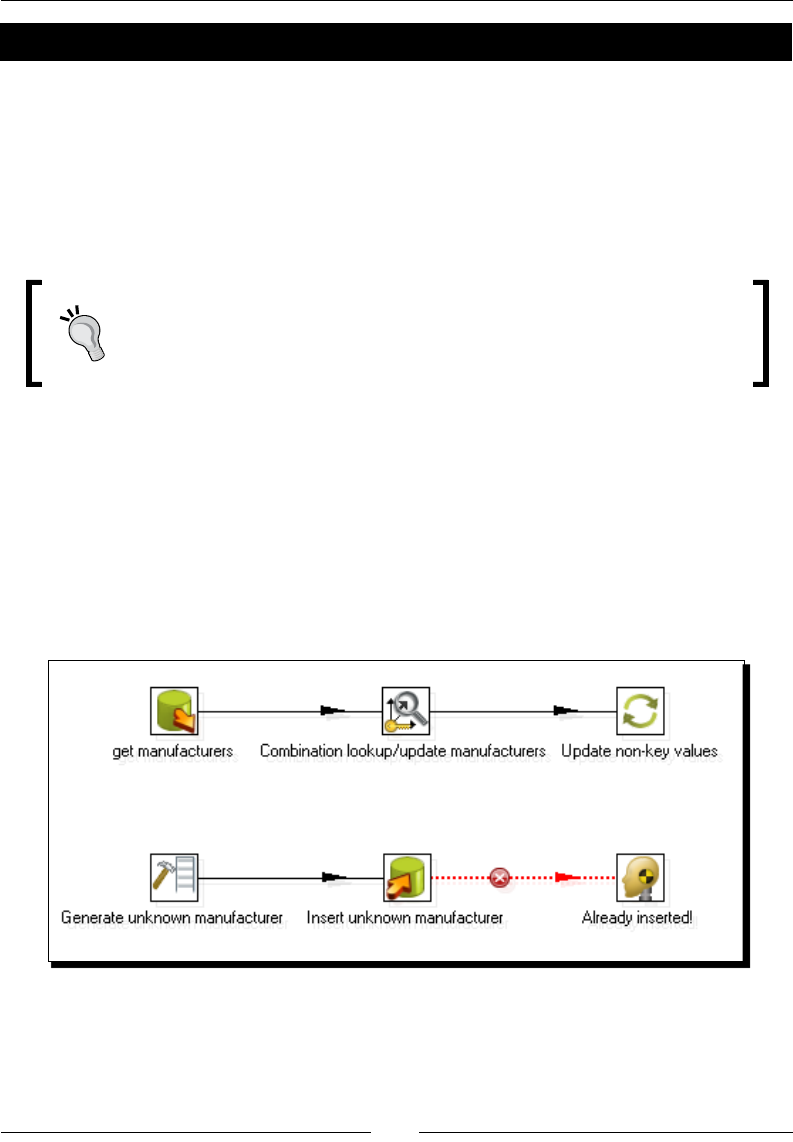

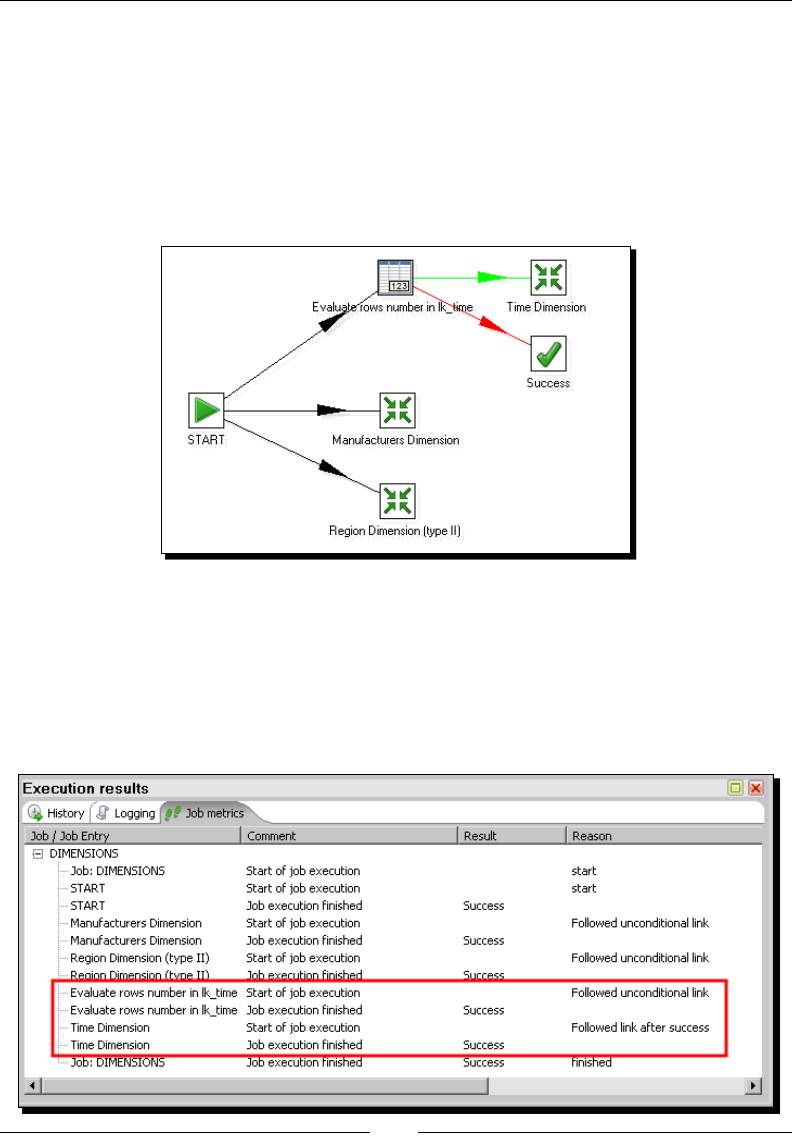

Time for acon – loading dimensions for the sales datamart 371

Extending the sales datamart model 376

Loading a fact table with aggregated data 378

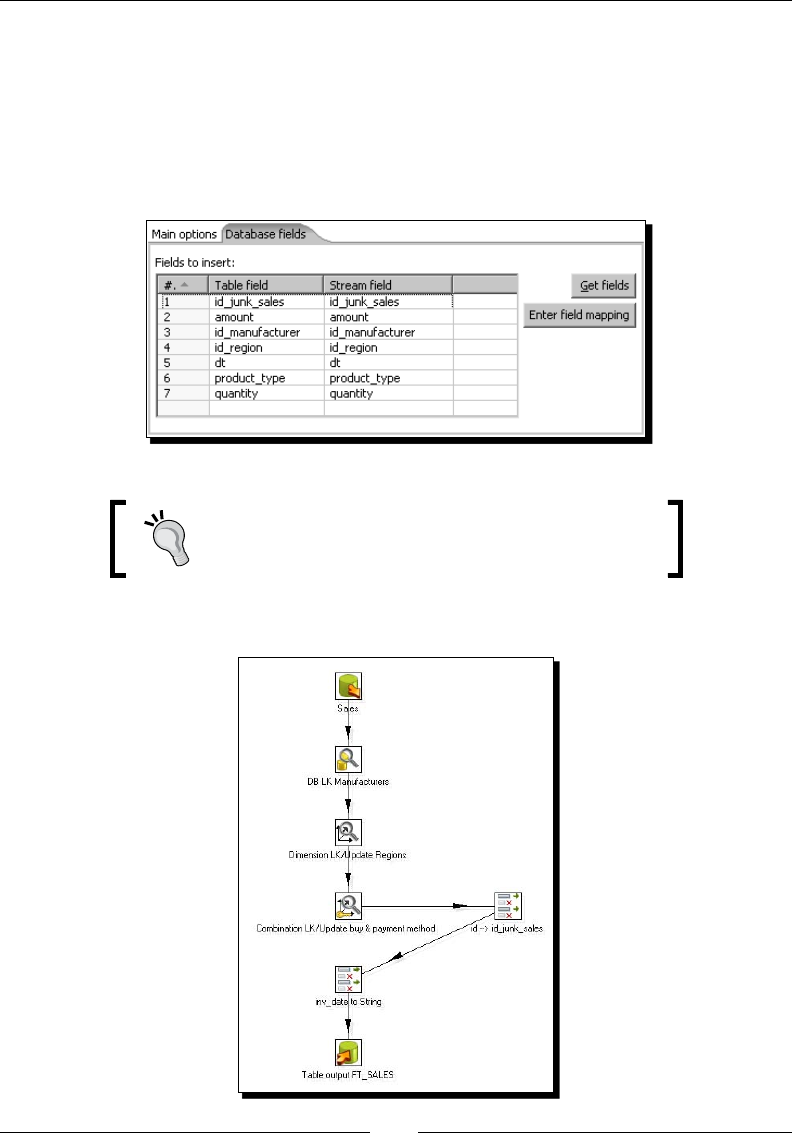

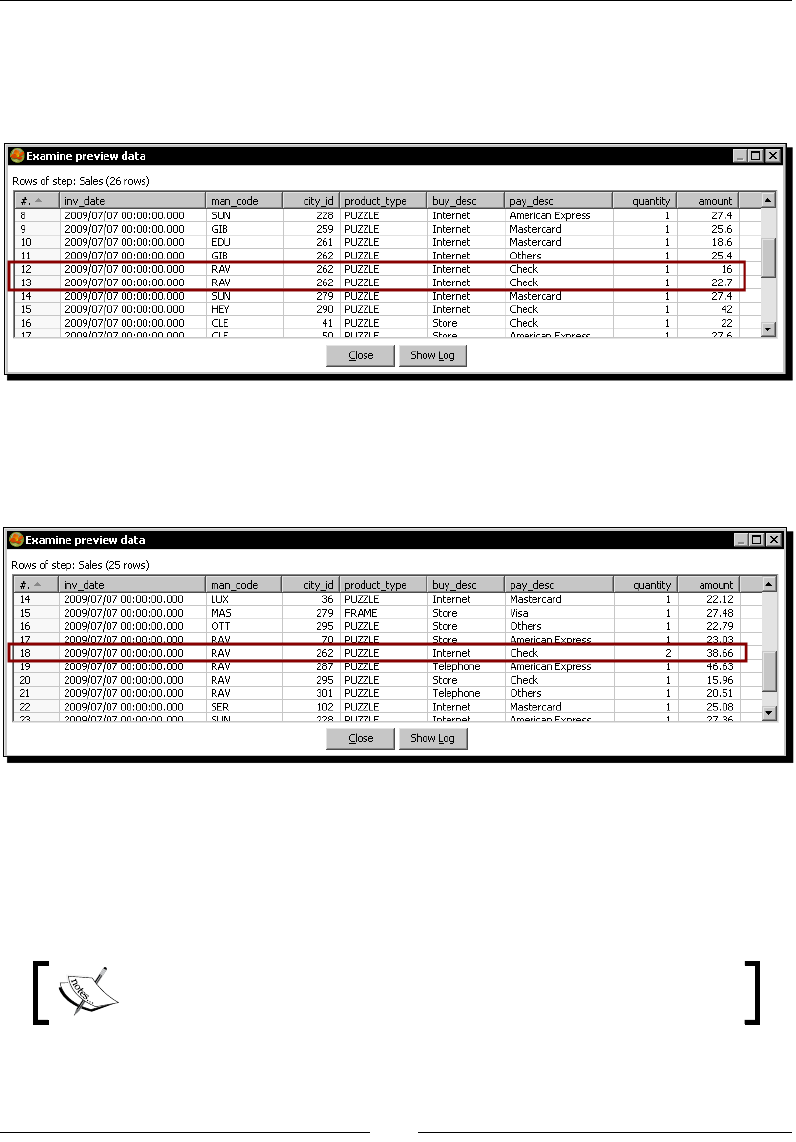

Time for acon – loading the sales fact table by looking up dimensions 378

Geng the informaon from the source with SQL queries 384

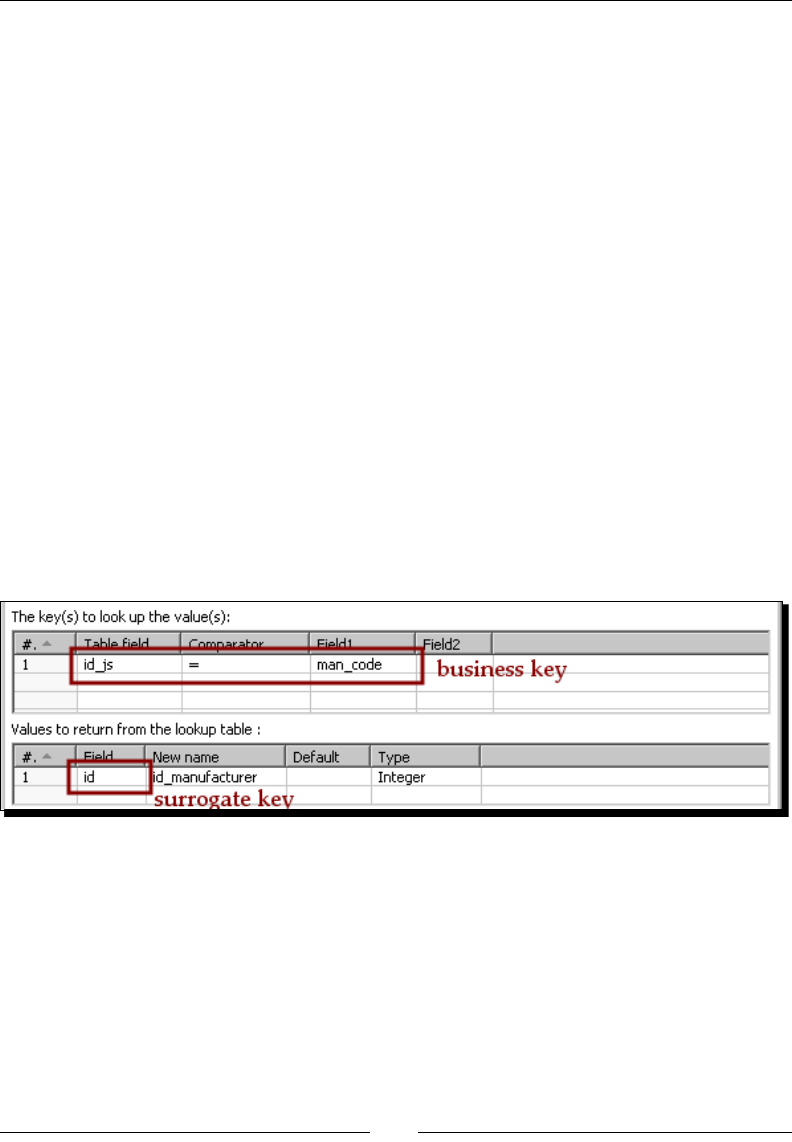

Translang the business keys into surrogate keys 388

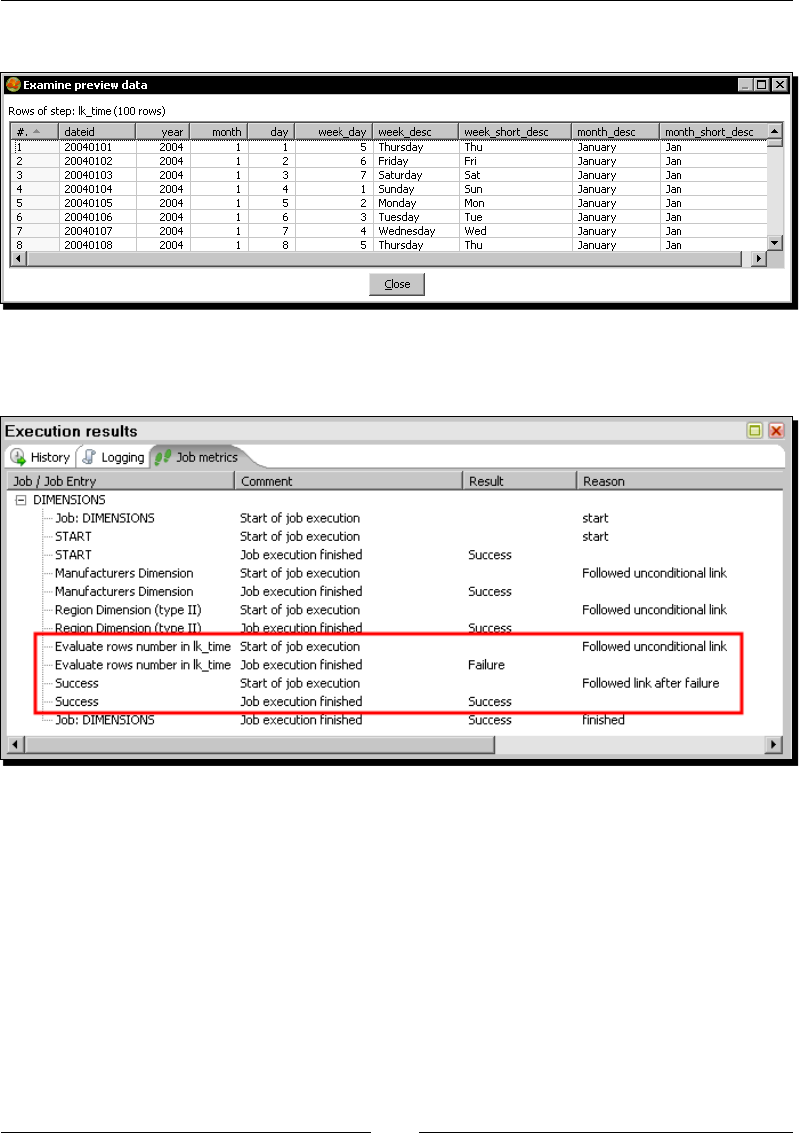

Obtaining the surrogate key for a Type I SCD 388

Obtaining the surrogate key for a Type II SCD 389

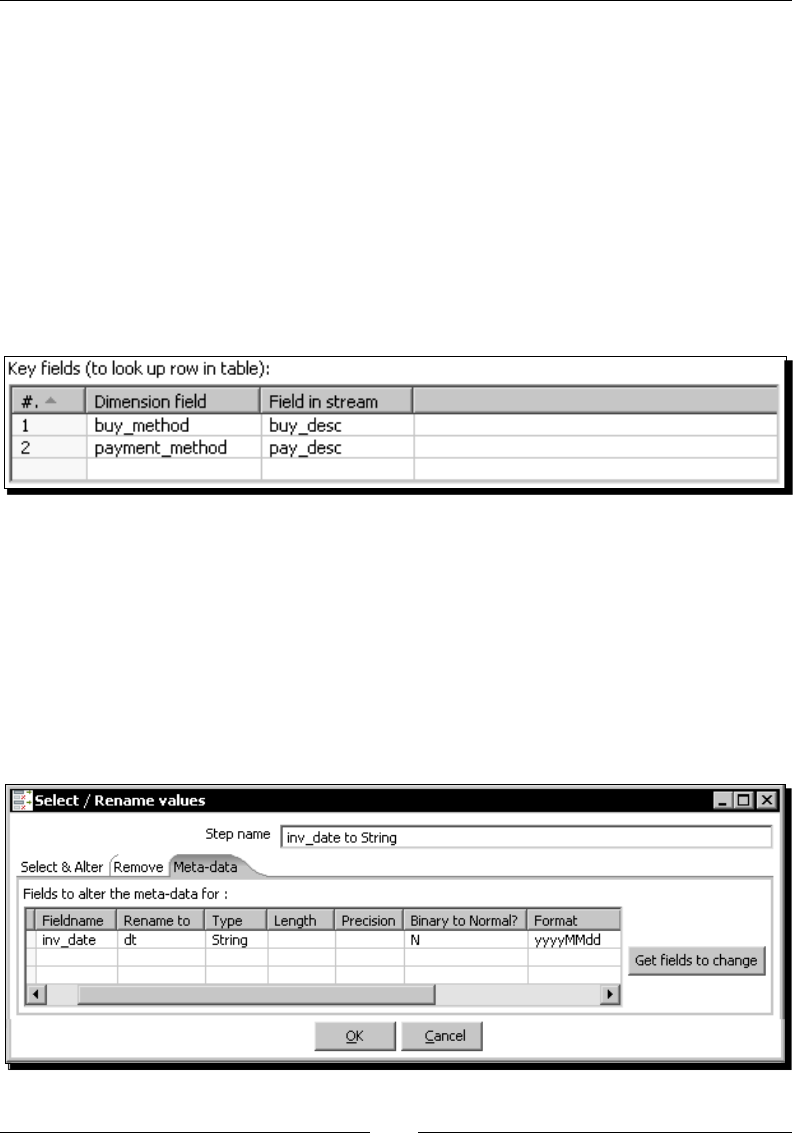

Obtaining the surrogate key for the Junk dimension 391

Obtaining the surrogate key for the Time dimension 391

Table of Contents

[ viii ]

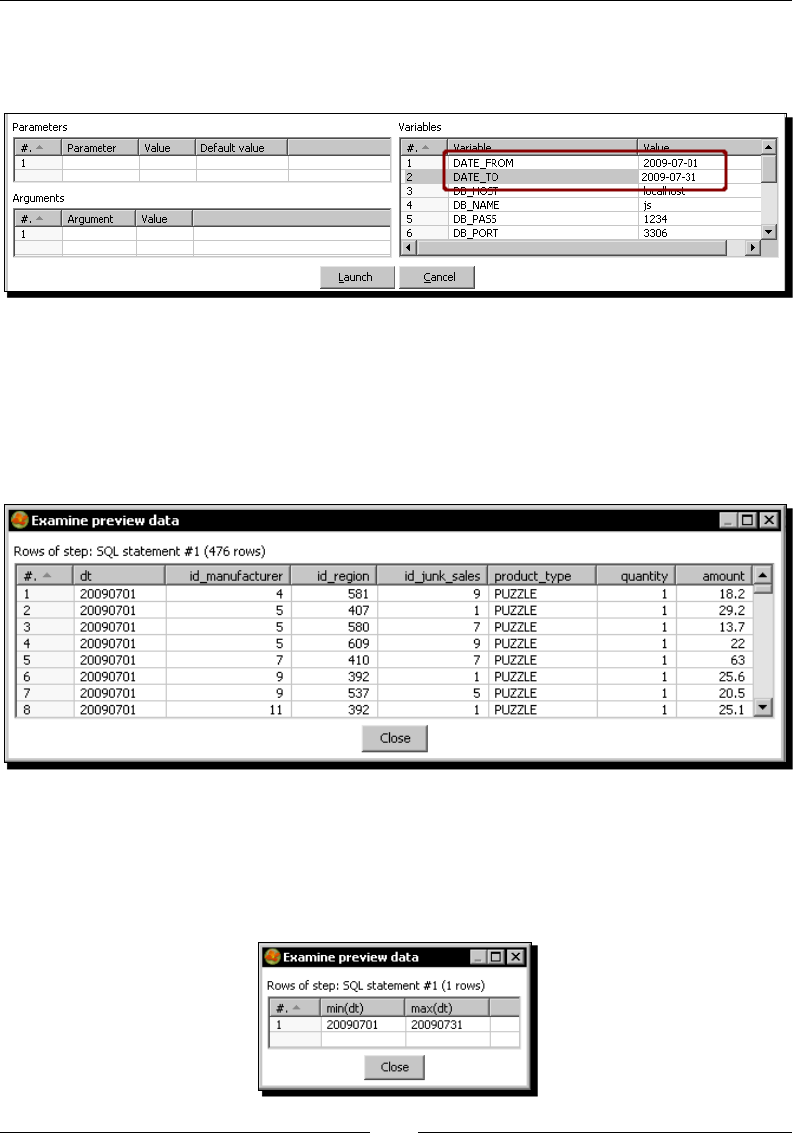

Geng facts and dimensions together 394

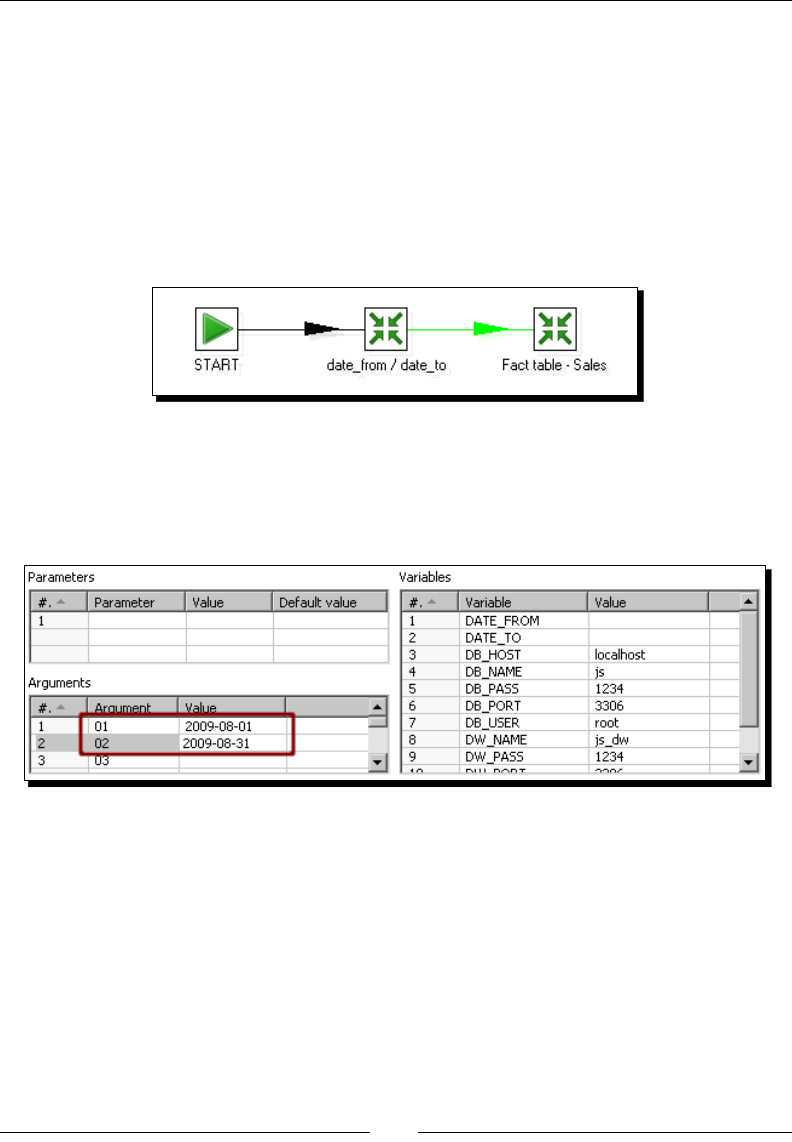

Time for acon – loading the fact table using a range of dates obtained

from the command line 394

Time for acon – loading the sales star 396

Geng rid of administrave tasks 399

Time for acon – automang the loading of the sales datamart 399

Summary 403

Chapter 13: Taking it Further 405

PDI best pracces 405

Geng the most out of PDI 408

Extending Kele with plugins 408

Overcoming real world risks with some remote execuon 410

Scaling out to overcome bigger risks 411

Integrang PDI and the Pentaho BI suite 412

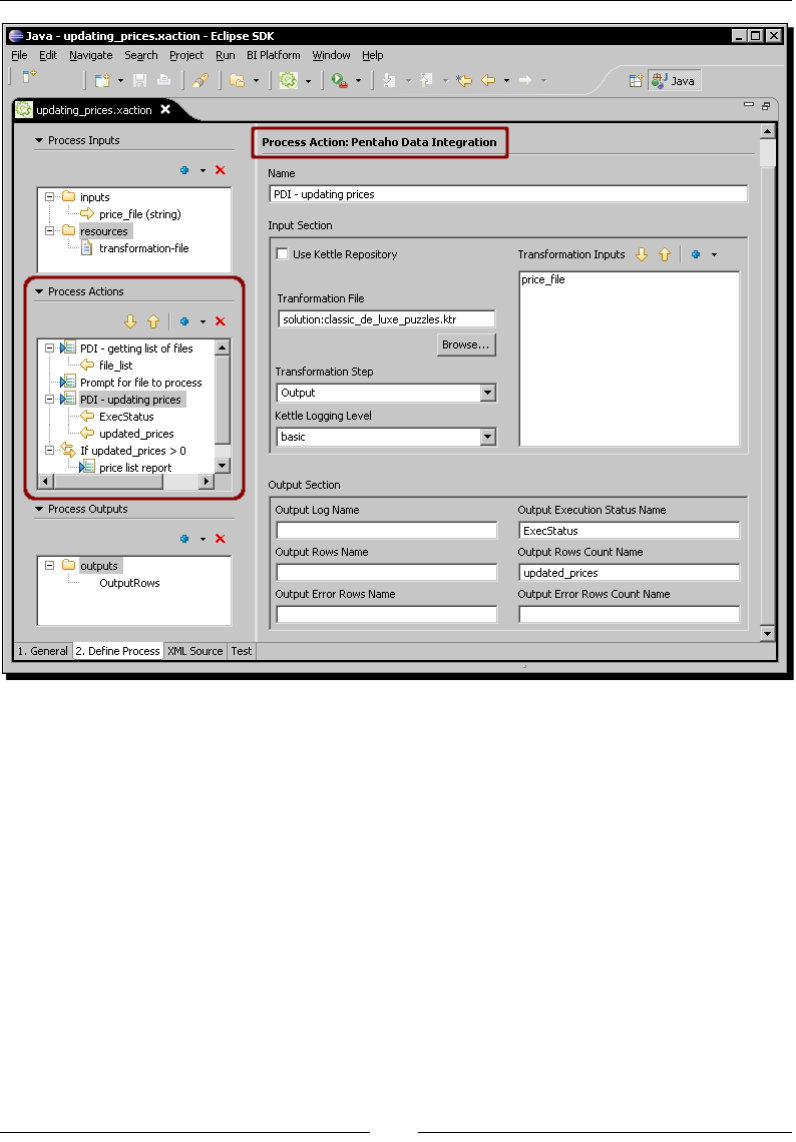

PDI as a process acon 412

PDI as a datasource 413

More about the Pentaho suite 414

PDI Enterprise Edion and Kele Developer Support 415

Summary 416

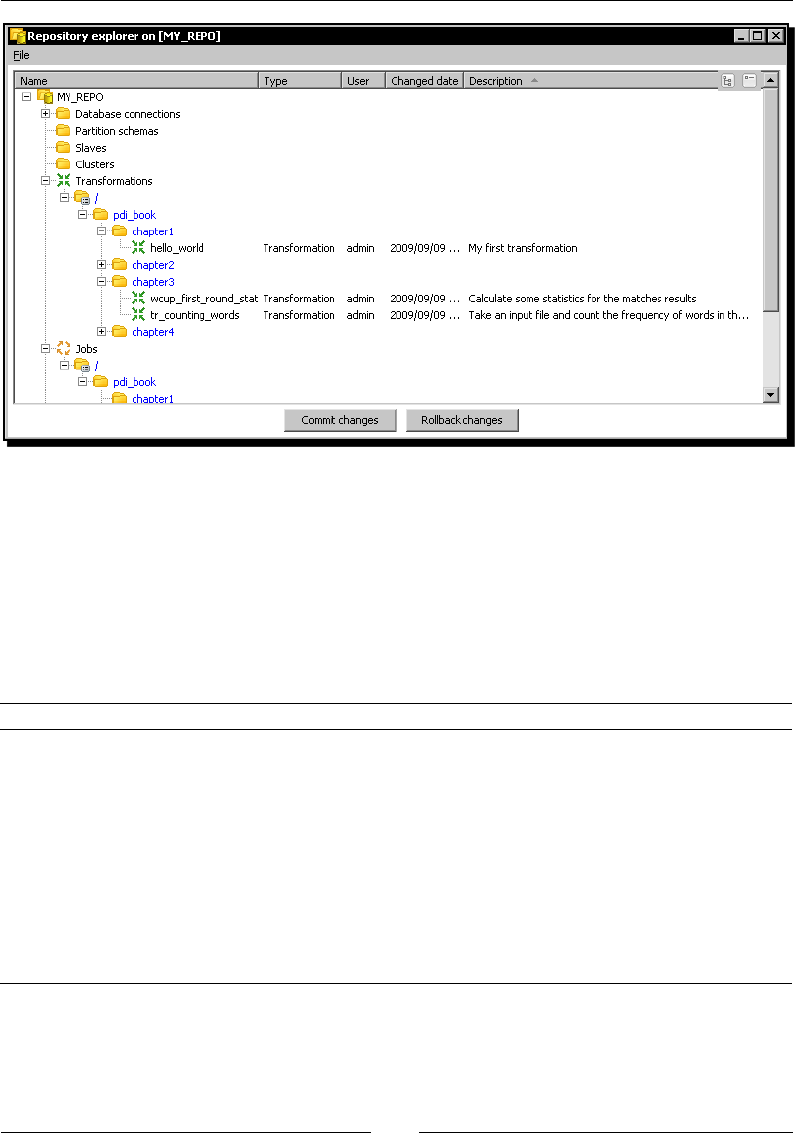

Appendix A: Working with Repositories 417

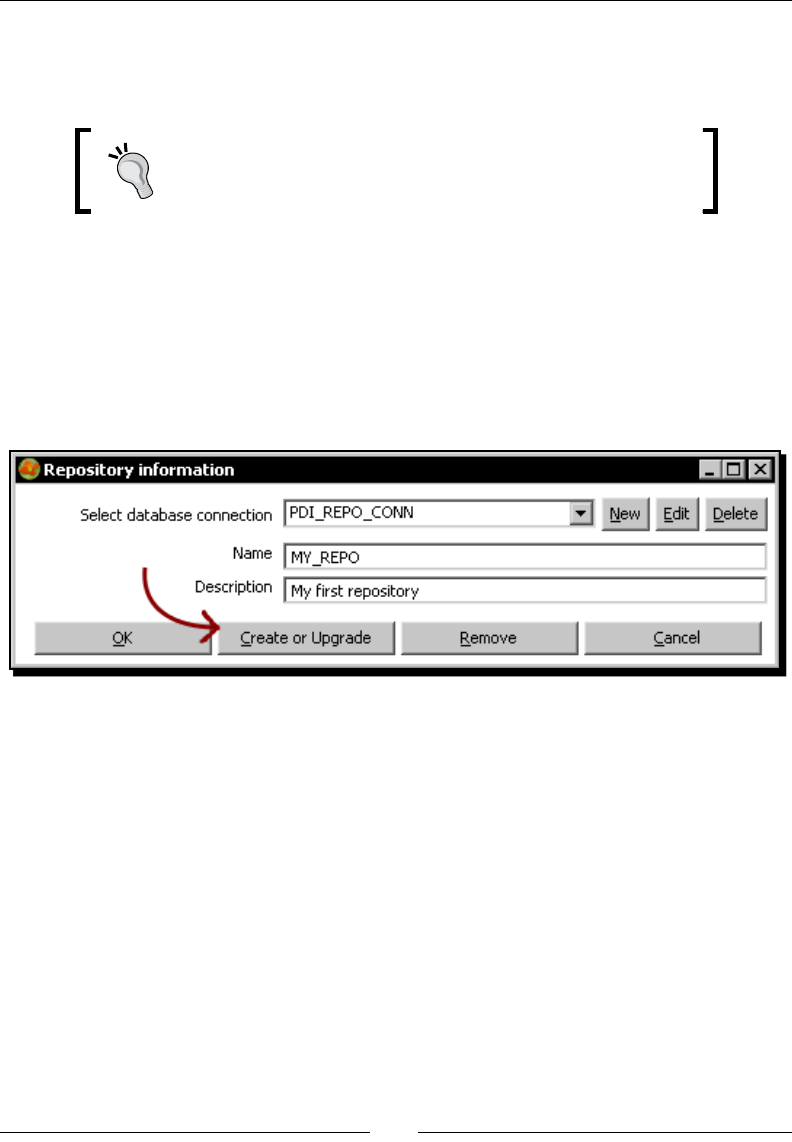

Creang a repository 418

Time for acon – creang a PDI repository 418

Creang repositories to store your transformaons and jobs 420

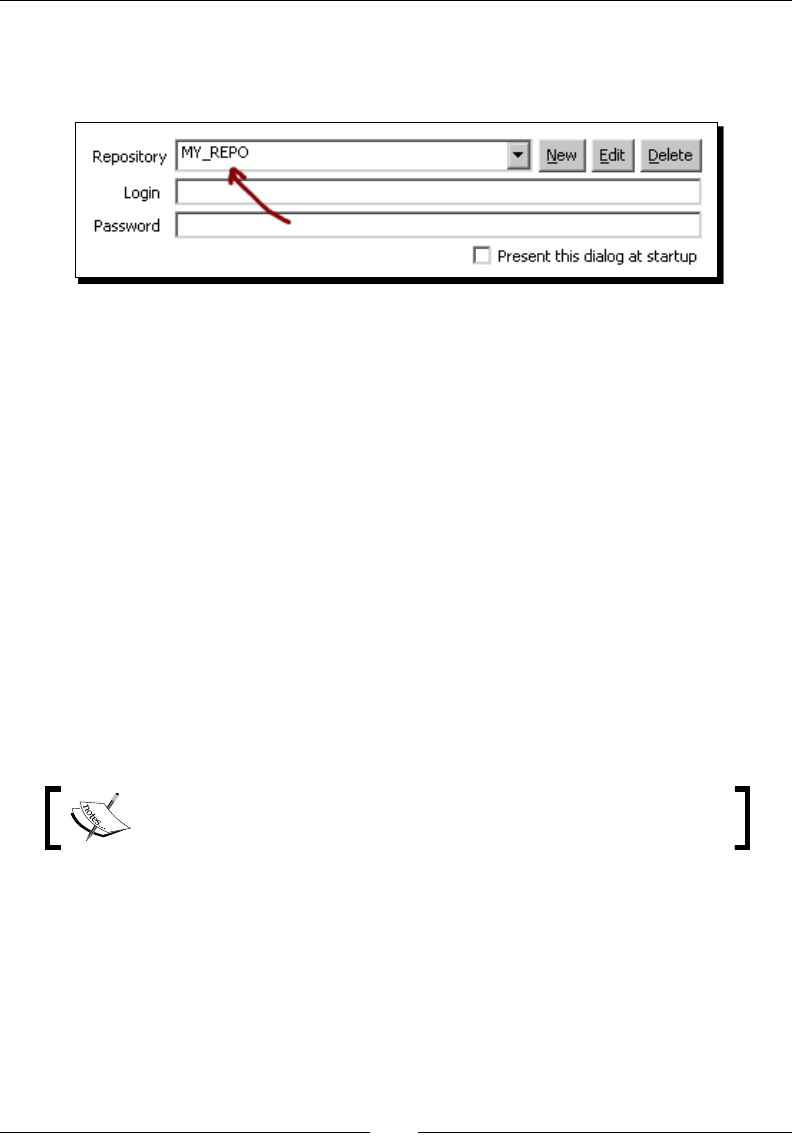

Working with the repository storage system 421

Time for acon – logging into a repository 421

Logging into a repository by using credenals 422

Dening repository user accounts 422

Creang transformaons and jobs in repository folders 423

Creang database connecons, parons, servers, and clusters 424

Backing up and restoring a repository 424

Examining and modifying the contents of a repository with

the Repository explorer 424

Migrang from a le-based system to a repository-based system and

vice-versa 426

Summary 427

Appendix B: Pan and Kitchen: Launching Transformaons and

Jobs from the Command Line 429

Running transformaons and jobs stored in les 429

Running transformaons and jobs from a repository 430

Specifying command line opons 431

Table of Contents

[ ix ]

Checking the exit code 432

Providing opons when running Pan and Kitchen 432

Log details 433

Named parameters 433

Arguments 433

Variables 433

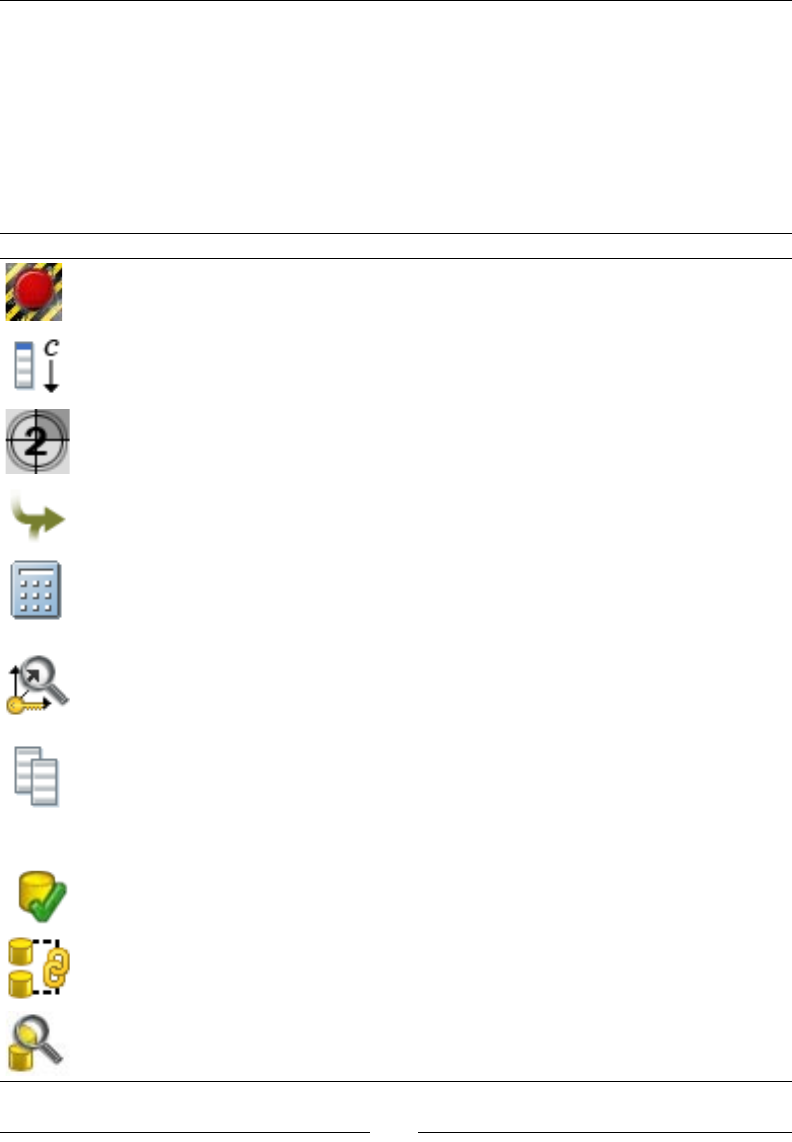

Appendix C: Quick Reference: Steps and Job Entries 435

Transformaon steps 436

Job entries 440

Appendix D: Spoon Shortcuts 443

General shortcuts 443

Designing transformaons and jobs 444

Grids 445

Repositories 445

Appendix E: Introducing PDI 4 Features 447

Agile BI 447

Visual improvements for designing transformaons and jobs 447

Experiencing the mouse-over assistance 447

Time for acon – creang a hop with the mouse-over assistance 448

Using the mouse-over assistance toolbar 448

Experiencing the sni-tesng feature 449

Experiencing the job drill-down feature 449

Experiencing even more visual changes 450

Enterprise features 450

Summary 450

Appendix F: Pop Quiz Answers 451

Chapter 1 451

PDI data sources 451

PDI prerequisites 451

PDI basics 451

Chapter 2 452

formang data 452

Chapter 3 452

concatenang strings 452

Chapter 4 452

data movement (copying and distribung) 452

spling a stream 452

Chapter 5 453

nding the seven errors 453

Table of Contents

[ x ]

Chapter 6 453

using Kele variables inside transformaons 453

Chapter 7 453

PDI error handling 453

Chapter 8 454

dening database connecons 454

database datatypes versus PDI datatypes 454

Insert/Update step versus Table Output/Update steps 454

ltering the rst 10 rows 454

Chapter 9 454

loading slowly changing dimensions 454

loading type III slowly changing dimensions 455

Chapter 10 455

dening PDI jobs 455

Chapter 11 455

using the Add sequence step 455

deciding the scope of variables 455

Chapter 12 456

modifying a star model and loading the star with PDI 456

Chapter 13 456

remote execuon and clustering 456

Index 457

Preface

Pentaho Data Integraon (aka Kele) is an engine along with a suite of tools responsible

for the processes of Extracng, Transforming, and Loading—beer known as the ETL

processes. PDI not only serves as an ETL tool, but it's also used for other purposes such as

migrang data between applicaons or databases, exporng data from databases to at

les, data cleansing, and much more. PDI has an intuive, graphical, drag-and-drop design

environment, and its ETL capabilies are powerful. However, geng started with PDI can be

dicult or confusing. This book provides the guidance needed to overcome that diculty,

covering the key features of PDI. Each chapter introduces new features, allowing you to

gradually get involved with the tool.

By the end of the book, you will have not only experimented with all kinds of examples, but

will also have built a basic but complete datamart with the help of PDI.

How to read this book

Although it is recommended that you read all the chapters, you don't need to. The book

allows you to tailor the PDI learning process according to your parcular needs.

The rst four chapters, along with Chapter 7 and Chapter 10, cover the core concepts. If

you don't know PDI and want to learn just the basics, reading those chapters would suce.

Besides, if you need to work with databases, you could include Chapter 8 in the roadmap.

If you already know the basics, you can improve your PDI knowledge by reading chapters 5,

6, and 11.

Finally, if you already know PDI and want to learn how to use it to load or maintain a

datawarehouse or datamart, you will nd all that you need in chapters 9 and 12.

While Chapter 13 is useful for anyone who is willing to take it further, all the appendices are

valuable resources for anyone who reads this book.

Preface

[ 2 ]

What this book covers

Chapter 1, Geng started with Pentaho Data Integraon serves as the most basic

introducon to PDI, presenng the tool. The chapter includes instrucons for installing PDI

and gives you the opportunity to play with the graphical designer (Spoon). The chapter also

includes instrucons for installing a MySQL server.

Chapter 2, Geng Started with Transformaons introduces one of the basic components

of PDI—transformaons. Then, it focuses on the explanaon of how to work with les. It

explains how to get data from simple input sources such as txt, csv, xml, and so on, do a

preview of the data, and send the data back to any of these common output formats. The

chapter also explains how to read command-line parameters and system informaon.

Chapter 3, Basic Data Manipulaon explains the simplest and most commonly used ways of

transforming data, including performing calculaons, adding constants, counng, ltering,

ordering, and looking for data.

Chapter 4—Controlling the Flow of Data explains dierent opons that PDI oers to combine

or split ows of data.

Chapter 5, Transforming Your Data with JavaScript Code and the JavaScript Step explains how

JavaScript coding can help in the treatment of data. It shows why you need to code inside

PDI, and explains in detail how to do it.

Chapter 6, Transforming the Row Set explains the ability of PDI to deal with some

sophiscated problems, such as normalizing data from pivoted tables, in a simple fashion.

Chapter 7, Validang Data and Handling Errors explains the dierent opons that PDI has to

validate data, and how to treat the errors that may appear.

Chapter 8, Working with Databases explains how to use PDI to work with databases. The

list of topics covered includes connecng to a database, previewing and geng data, and

inserng, updang, and deleng data. As database knowledge is not presumed, the chapter

also covers fundamental concepts of databases and the SQL language.

Chapter 9, Performing Advanced Operaons with Databases explains how to perform

advanced operaons with databases, including those specially designed to load

datawarehouses. A primer on datawarehouse concepts is also given in case you are not

familiar with the subject.

Chapter 10, Creang Basic Task Flow serves as an introducon to processes in PDI. Through

the creaon of simple jobs, you will learn what jobs are and what they are used for.

Chapter 11, Creang Advanced Transformaons and Jobs deals with advanced concepts that

will allow you to build complex PDI projects. The list of covered topics includes nesng jobs,

iterang on jobs and transformaons, and creang subtransformaons.

Preface

[ 3 ]

Chapter 12, Developing and implemenng a simple datamart presents a simple datamart

project, and guides you to build the datamart by using all the concepts learned throughout

the book.

Chapter 13, Taking it Further gives a list of best PDI pracces and recommendaons for

going beyond.

Appendix A, Working with repositories guides you step by step in the creaon of a PDI

database repository and then gives instrucons to work with it.

Appendix B, Pan and Kitchen: Launching Transformaons and Jobs from the Command Line is

a quick reference for running transformaons and jobs from the command line.

Appendix C, Quick Reference: Steps and Job Entries serves as a quick reference to steps and

job entries used throughout the book.

Appendix D, Spoon Shortcuts is an extensive list of Spoon shortcuts useful for saving me

when designing and running PDI jobs and transformaons.

Appendix E, Introducing PDI 4 features quickly introduces you to the architectural and

funconal features included in Kele 4—the version that was under development while

wring this book.

Appendix F, Pop Quiz Answers, contains answers to pop quiz quesons.

What you need for this book

PDI is a mulplaorm tool. This means no maer what your operang system is, you will

be able to work with the tool. The only prerequisite is to have JVM 1.5 or a higher version

installed. It is also useful to have Excel or Calc along with a nice text editor.

Having an Internet connecon while reading is extremely useful as well. Several links are

provided throughout the book that complement what is explained. Besides, there is the

PDI forum where you may search or post doubts if you are stuck with something.

Who this book is for

This book is for soware developers, database administrators, IT students, and everyone

involved or interested in developing ETL soluons or, more generally, doing any kind of data

manipulaon. If you have never used PDI before, this will be a perfect book to start with.

You will nd this book to be a good starng point if you are a database administrator, a data

warehouse designer, an architect, or any person who is responsible for data warehouse

projects and need to load data into them.

Preface

[ 4 ]

You don't need to have any prior data warehouse or database experience to read this book.

Fundamental database and data warehouse technical terms and concepts are explained in

an easy-to-understand language.

Conventions

In this book, you will nd a number of styles of text that disnguish between dierent

kinds of informaon. Here are some examples of these styles, and an explanaon of

their meaning.

Code words in text are shown as follows: "You read the examination.txt le, and did

some calculaons to see how the students did."

New terms and important words are shown in bold. Words that you see on the screen, in

menus or dialog boxes for example, appear in our text like this: "Edit the Sort rows step by

double-clicking it, click the Get Fields buon, and adjust the grid."

Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this book—

what you liked or may have disliked. Reader feedback is important for us to develop tles

that you really get the most out of.

To send us general feedback, simply drop an email to feedback@packtpub.com, and

menon the book tle in the subject of your message.

If there is a book that you need and would like to see us publish, please send us a note in the

SUGGEST A TITLE form on www.packtpub.com or email suggest@packtpub.com.

If there is a topic that you have experse in and you are interested in either wring or

contribung to a book, see our author guide on www.packtpub.com/authors.

Preface

[ 5 ]

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help you

to get the most from your purchase.

Downloading the example code for the book

Visit http://www.packtpub.com/files/code/9546_Code.zip to

directly download the example code.

The downloadable les contain instrucons on how to use them.

Errata

Although we have taken every care to ensure the accuracy of our contents, mistakes do

happen. If you nd a mistake in one of our books—maybe a mistake in text or code—we

would be grateful if you would report this to us. By doing so, you can save other readers

from frustraon, and help us to improve subsequent versions of this book. If you nd any

errata, please report them by vising http://www.packtpub.com/support, selecng

your book, clicking on the let us know link, and entering the details of your errata.

Once your errata are veried, your submission will be accepted and the errata added

to any list of exisng errata. Any exisng errata can be viewed by selecng your tle

from http://www.packtpub.com/support.

Piracy

Piracy of copyright material on the Internet is an ongoing problem across all media. At Packt,

we take the protecon of our copyright and licenses very seriously. If you come across any

illegal copies of our works in any form on the Internet, please provide us with the locaon

address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected pirated material.

We appreciate your help in protecng our authors, and our ability to bring you

valuable content.

Questions

You can contact us at questions@packtpub.com if you are having a problem with any

aspect of the book, and we will do our best to address it.

1

Getting Started with Pentaho

Data Integration

Pentaho Data Integraon is an engine along with a suite of tools responsible

for the processes of extracng, transforming, and loading—best known as the

ETL processes. This book is meant to teach you how to use PDI.

In this chapter you will:

Learn what Pentaho Data Integraon is

Install the soware and start working with the PDI graphical designer

Install MySQL, a database engine that you will use when you start working

with databases

Pentaho Data Integration and Pentaho BI Suite

Before introducing PDI, let's talk about Pentaho BI Suite. The Pentaho Business Intelligence

Suite is a collecon of soware applicaons intended to create and deliver soluons for

decision making. The main funconal areas covered by the suite are:

Analysis: The analysis engine serves muldimensional analysis. It's provided by the

Mondrian OLAP server and the JPivot library for navigaon and exploring.

Geng Started with Pentaho Data Integraon

[ 8 ]

Reporng: The reporng engine allows designing, creang, and distribung reports

in various known formats (HTML, PDF, and so on) from dierent kinds of sources.

The reports created in Pentaho are based mainly in the JFreeReport library, but it's

possible to integrate reports created with external reporng libraries such as Jasper

Reports or BIRT.

Data Mining: Data mining is running data through algorithms in order to understand

the business and do predicve analysis. Data mining is possible thanks to the

Weka Project.

Dashboards: Dashboards are used to monitor and analyze Key Performance

Indicators (KPIs). A set of tools incorporated to the BI Suite in the latest version

allows users to create interesng dashboards, including graphs, reports, analysis

views, and other Pentaho content, without much eort.

Data integraon: Data integraon is used to integrate scaered informaon

from dierent sources (applicaons, databases, les) and make the integrated

informaon available to the nal user. Pentaho Data Integraon—our main

concern—is the engine that provides this funconality.

All this funconality can be used standalone as well as integrated. In order to run analysis,

reports, and so on integrated as a suite, you have to use the Pentaho BI Plaorm. The

plaorm has a soluon engine, and oers crical services such as authencaon,

scheduling, security, and web services.

Chapter 1

[ 9 ]

This set of soware and services forms a complete BI Plaorm, which makes Pentaho Suite

the world's leading open source Business Intelligence Suite.

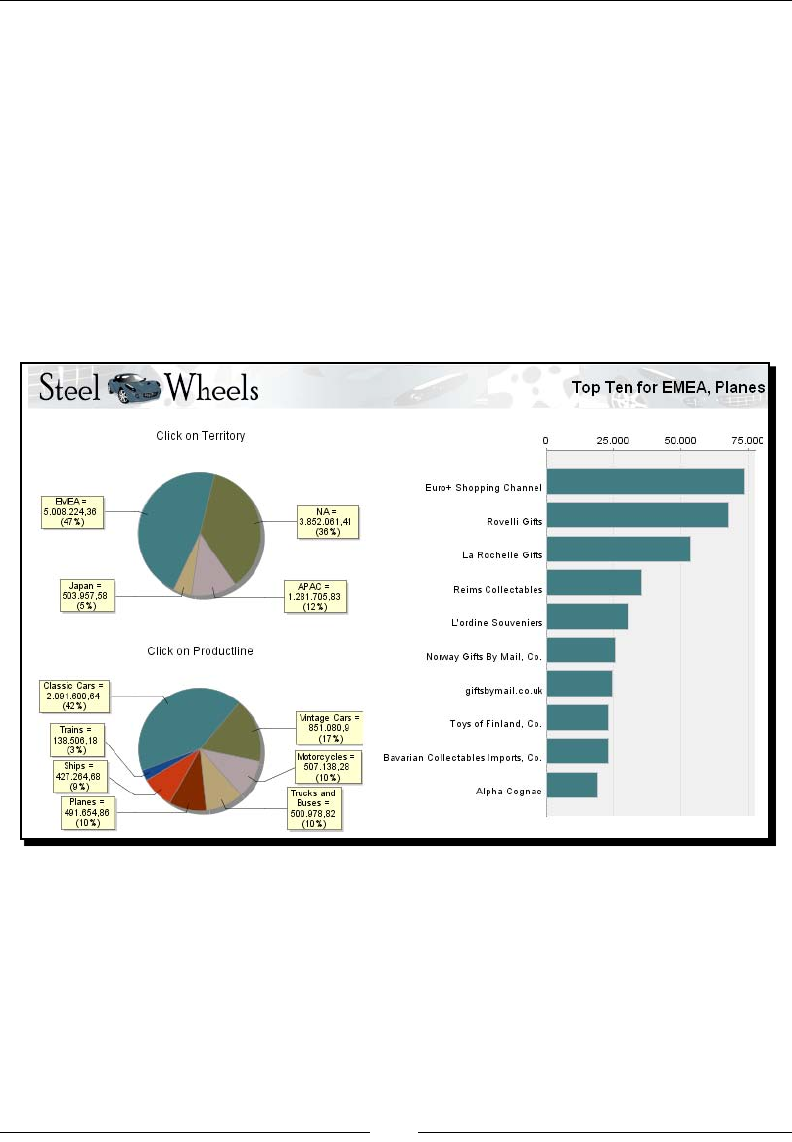

Exploring the Pentaho Demo

Despite being out of the scope of this book, it's worth to briey introduce the Pentaho

Demo. The Pentaho BI Plaorm Demo is a precongured installaon that lets you explore

several capabilies of the Pentaho plaorm. It includes sample reports, cubes, and

dashboards for Steel Wheels. Steel Wheels is a conal store that sells all kind of scale

replicas of vehicles.

The demo can be downloaded from http://sourceforge.net/projects/pentaho/

files/. Under the Business Intelligence Server folder, look for the latest stable

version. The le you have to download is named biserver-ce-3.5.2.stable.zip for

Windows and biserver-ce-3.5.2.stable.tar.gz for other systems.

In the same folder you will nd a le named biserver-getting_started-ce-

3.5.0.pdf. The le is a guide that introduces you the plaorm and gives you some

guidance on how to install and run it. The guide even includes a mini tutorial on building

a simple PDI input-output transformaon.

You can nd more about Pentaho BI Suite at www.pentaho.org.

Pentaho Data Integration

Most of the Pentaho engines, including the engines menoned earlier, were created as

community projects and later adopted by Pentaho. The PDI engine is no excepon—Pentaho

Data Integraon is the new denominaon for the business intelligence tool born as Kele.

The name Kele didn't come from the recursive acronym Kele Extracon,

Transportaon, Transformaon, and Loading Environment it has now, but from

KDE Extracon, Transportaon, Transformaon and Loading Environment,

as the tool was planned to be wrien on top of KDE, as menoned in the

introducon of the book.

In April 2006 the Kele project was acquired by the Pentaho Corporaon and Ma Casters,

Kele's founder, also joined the Pentaho team as a Data Integraon Architect.

Geng Started with Pentaho Data Integraon

[ 10 ]

When Pentaho announced the acquision, James Dixon, the Chief Technology Ocer, said:

We reviewed many alternaves for open source data integraon, and Kele clearly

had the best architecture, richest funconality, and most mature user interface.

The open architecture and superior technology of the Pentaho BI Plaorm

and Kele allowed us to deliver integraon in only a few days, and make that

integraon available to the community.

By joining forces with Pentaho, Kele beneted from a huge developer community, as well

as from a company that would support the future of the project.

From that moment the tool has grown constantly. Every few months a new release is

available, bringing to the users, improvements in performance and exisng funconality,

new funconality, ease of use, and great changes in look and feel. The following is a meline

of the major events related to PDI since its acquision by Pentaho:

June 2006: PDI 2.3 is released. Numerous developers had joined the project and

there were bug xes provided by people in various regions of the world. Among

other changes, the version included enhancements for large scale environments

and mullingual capabilies.

February 2007: Almost seven months aer the last major revision, PDI 2.4 is

released including remote execuon and clustering support (more on this in

Chapter 13), enhanced database support, and a single designer for the two

main elements you design in Kele—jobs and transformaons.

May 2007: PDI 2.5 is released including many new features, the main feature being

the advanced error handling.

November 2007: PDI 3.0 emerges totally redesigned. Its major library changed to

gain massive performance. The look and feel also changed completely.

October 2008: PDI 3.1 comes with an easier-to-use tool, along with a lot of new

funconalies as well.

April 2009: PDI 3.2 is released with a really large number of changes for a

minor version—new funconality, visualizaon improvements, performance

improvements, and a huge pile of bug xes. The main change in this version was the

incorporaon of dynamic clustering (see Chapter 13 for details).

In 2010 PDI 4.0 will be released, delivering mostly improvements with regard to

enterprise features such as version control.

Most users sll refer to PDI as Kele, its further name. Therefore, the names PDI,

Pentaho Data Integraon, and Kele will be used interchangeably throughout

the book.

Chapter 1

[ 11 ]

Using PDI in real world scenarios

Paying aenon to its name, Pentaho Data Integraon, you could think of PDI as a tool to

integrate data.

In you look at its original name, K.E.T.T.L.E., then you must conclude that it is a tool used

for ETL processes which, as you may know, are most frequently seen in data warehouse

environments.

In fact, PDI not only serves as a data integrator or an ETL tool, but is such a powerful tool

that it is common to see it used for those and for many other purposes. Here you have

some examples.

Loading datawarehouses or datamarts

The loading of a datawarehouse or a datamart involves many steps, and there are many

variants depending on business area or business rules. However, in every case, the process

involves the following steps:

Extracng informaon from one or dierent databases, text les, and other sources.

The extracon process may include the task of validang and discarding data that

doesn't match expected paerns or rules.

Transforming the obtained data to meet the business and technical needs required

on the target. Transformaon implies tasks such as converng data types, doing

some calculaons, ltering irrelevant data, and summarizing.

Loading the transformed data into the target database. Depending on the

requirements, the loading may overwrite the exisng informaon, or may

add new informaon each me it is executed.

Geng Started with Pentaho Data Integraon

[ 12 ]

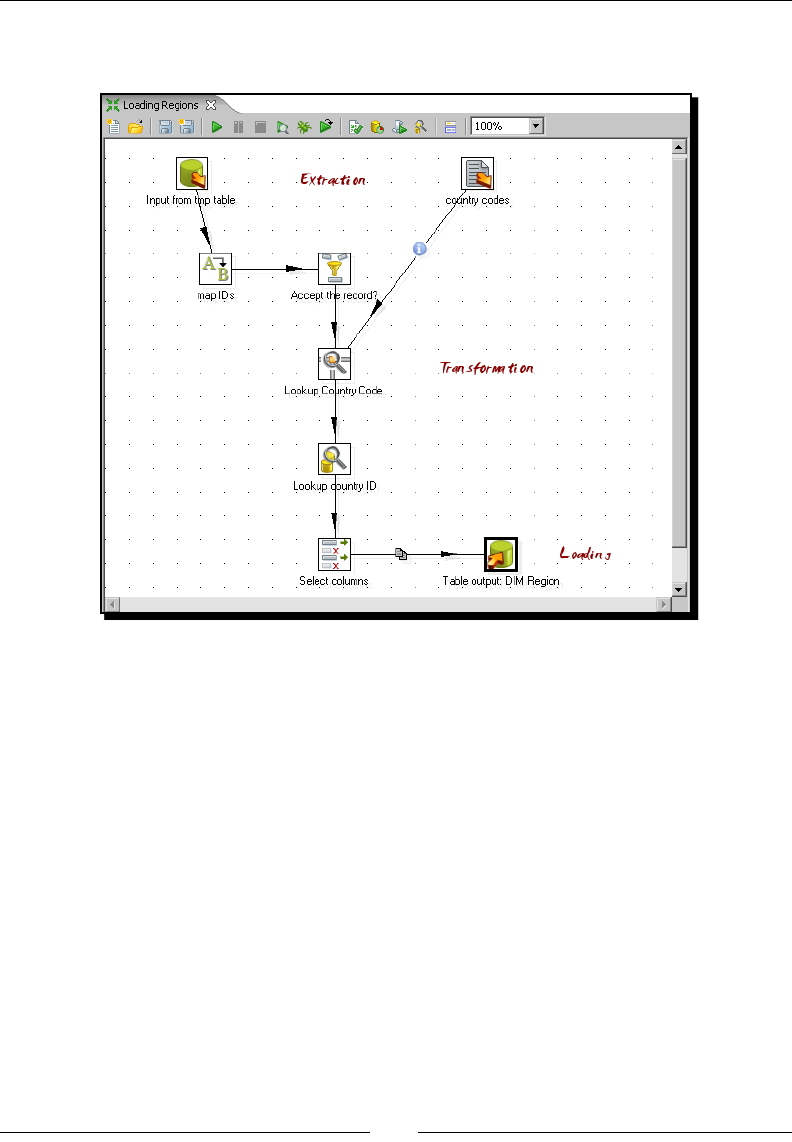

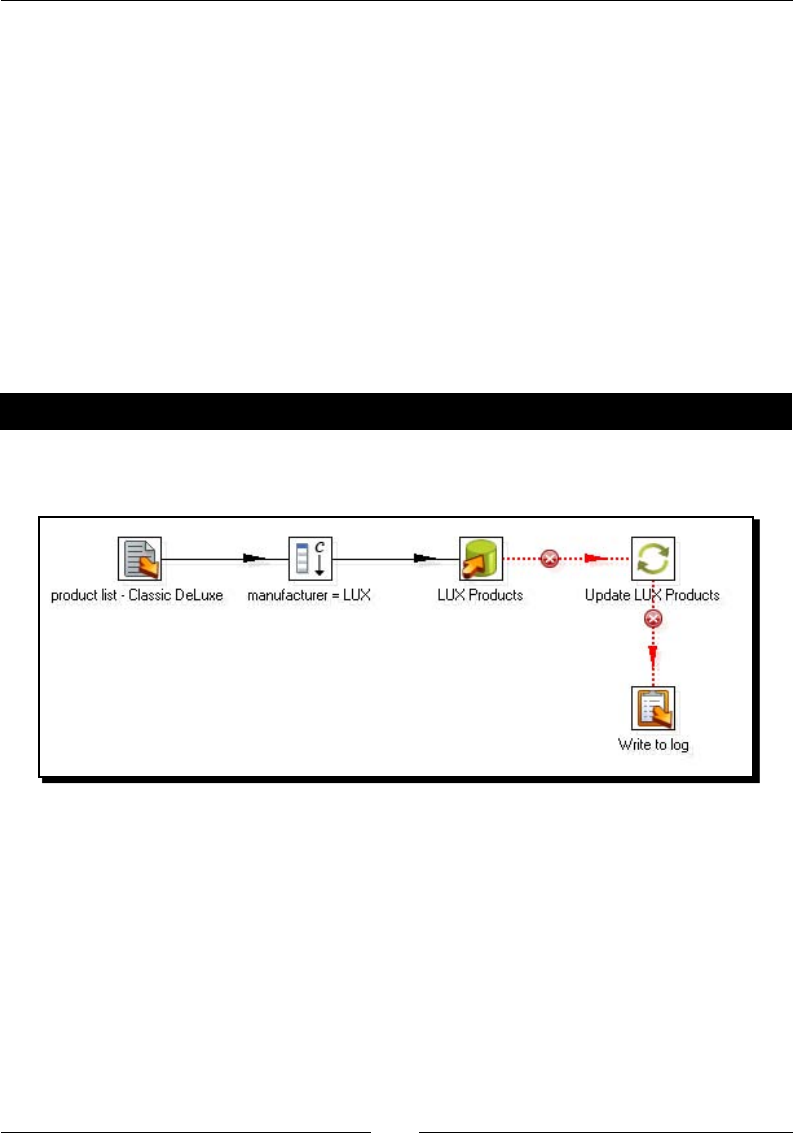

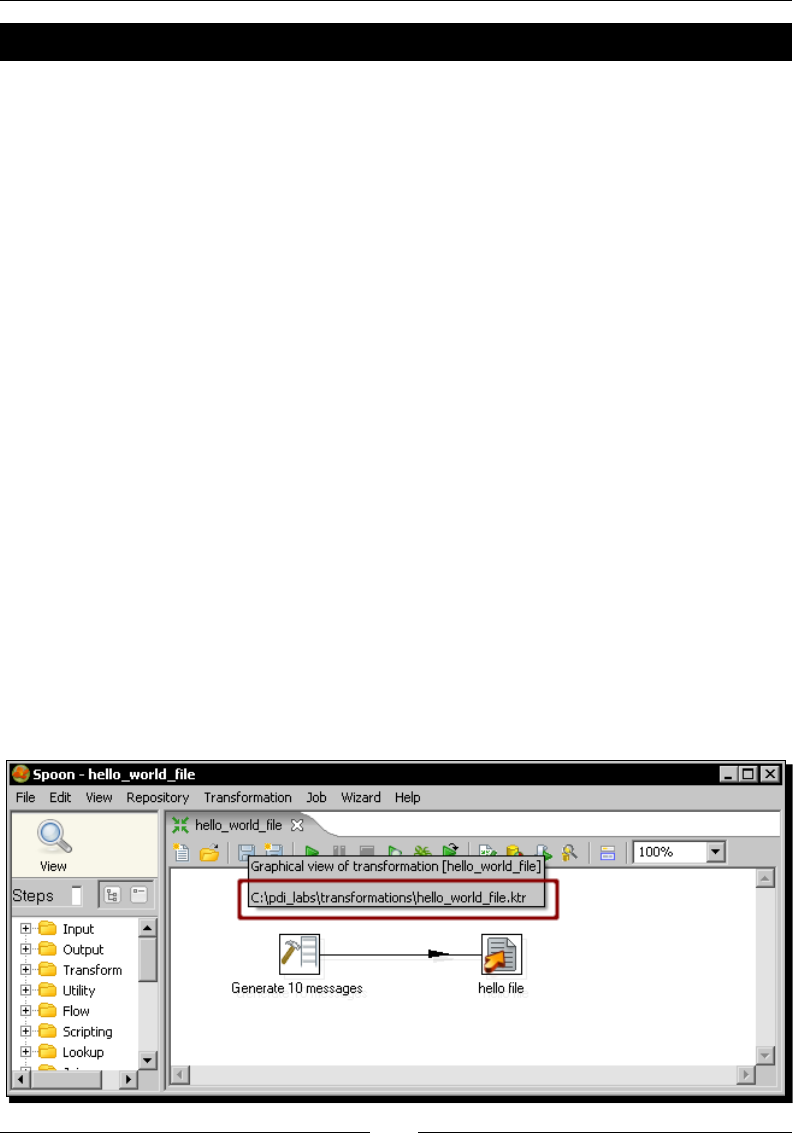

Kele comes ready to do every stage of this loading process. The following sample

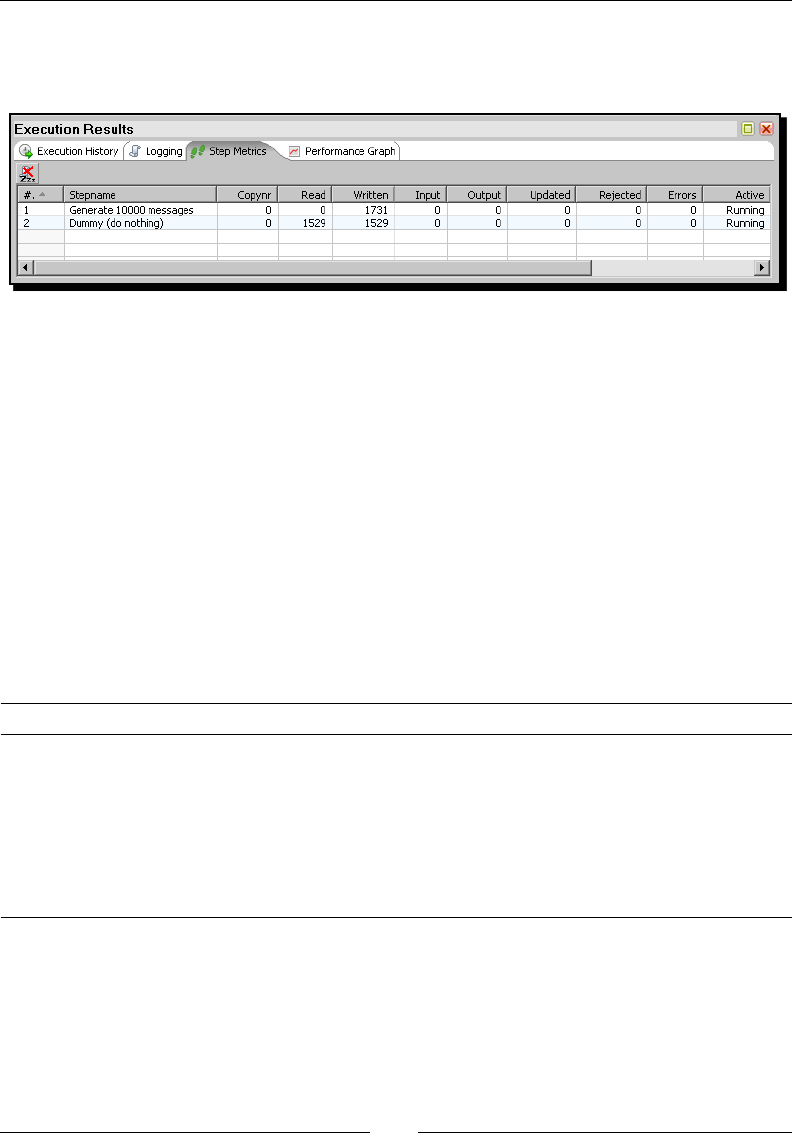

screenshot shows a simple ETL designed with Kele:

Integrating data

Imagine two similar companies that need to merge their databases in order to have a unied

view of the data, or a single company that has to combine informaon from a main ERP

applicaon and a CRM applicaon, though they're not connected. These are just two of

hundreds of examples where data integraon is needed. Integrang data is not just a maer

of gathering and mixing data; some conversions, validaon, and transport of data has to be

done. Kele is meant to do all those tasks.

Data cleansing

Why do we need that data be correct and accurate? There are many reasons—for the

eciency of business, to generate trusted conclusions in data mining or stascal studies,

to succeed when integrang data, and so on. Data cleansing is about ensuring that the

data is correct and precise. This can be ensured by verifying if the data meets certain rules,

discarding or correcng those that don't follow the expected paern, seng default values

for missing data, eliminang informaon that is duplicated, normalizing data to conform

minimum and maximum values, and so on—tasks that Kele makes possible, thanks to its

vast set of transformaon and validaon capabilies.

Chapter 1

[ 13 ]

Migrating information

Think of a company of any size that uses a commercial ERP applicaon. One day the owners

realize that the licences are consuming an important share of its budget and so they decide

to migrate to an open source ERP. The company will no longer have to pay licences, but if

they want to do the change, they will have to migrate the informaon. Obviously it is not an

opon to start from scratch, or type the informaon by hand. Kele makes the migraon

possible, thanks to its ability to interact with most kinds of sources and desnaons such as

plain les, and commercial and free databases and spreadsheets.

Exporting data

Somemes you are forced by government regulaons to export certain data to be processed

by legacy systems. You can't just print and deliver some reports containing the required data.

The data has to have a rigid format, with columns that have to obey some rules (size, format,

content), dierent records for heading and tail, just to name some common demands. Kele

has the power to take crude data from the source and generate these kinds of ad hoc reports.

Integrating PDI using Pentaho BI

The previous examples show typical uses of PDI as a standalone applicaon. However, Kele

may be used as part of a process inside the Pentaho BI Plaorm. There are many things

embedded in the Pentaho applicaon that Kele can do—preprocessing data for an on-line

report, sending mails in a schedule fashion, or generang spreadsheet reports.

You'll nd more on this in Chapter 13. However, the use of PDI integrated

with the BI Suite is beyond the scope of this book.

Pop quiz – PDI data sources

Which of the following aren't valid sources in Kele:

1. Spreadsheets

2. Free database engines

3. Commercial database engines

4. Flat les

5. None of the above

Geng Started with Pentaho Data Integraon

[ 14 ]

Installing PDI

In order to work with PDI you need to install the soware. It's a simple task; let's do it.

Time for action – installing PDI

These are the instrucons to install Kele, whatever your operang system.

The only prerequisite to install PDI is to have JRE 5.0 or higher installed. If you don't have it,

please download it from http://www.javasoft.com/ and install it before proceeding.

Once you have checked the prerequisite, follow these steps:

1. From http://community.pentaho.com/sourceforge/ follow the link to

Pentaho Data Integraon (Kele). Alternavely, go directly to the download page

http://sourceforge.net/projects/pentaho/files/Data Integration.

2. Choose the newest stable release. At this me, it is 3.2.0.

3. Download the le that matches your plaorm. The preceding screenshot should

help you.

4. Unzip the downloaded le in a folder of your choice

—C:/Kettle or /home/your_dir/kettle.

Chapter 1

[ 15 ]

5. If your system is Windows, you're done. Under UNIX-like environments, it's

recommended that you make the scripts executable. Assuming that you

chose Kele as the installaon folder, execute the following command:

cd Kettle

chmod +x *.sh

What just happened?

You have installed the tool in just a few minutes. Now you have all you need to start working.

Pop quiz – PDI prerequisites

Which of the following are mandatory to run PDI? You may choose more than one opon.

1. Kele

2. Pentaho BI plaorm

3. JRE

4. A database engine

Launching the PDI graphical designer: Spoon

Now that you've installed PDI, you must be eager to do some stu with data. That will be

possible only inside a graphical environment. PDI has a desktop designer tool named Spoon.

Let's see how it feels to work with it.

Time for action – starting and customizing Spoon

In this tutorial you're going to launch the PDI graphical designer and get familiarized with itsn this tutorial you're going to launch the PDI graphical designer and get familiarized with its

main features.

1. Start Spoon.

If your system is Windows, type the following command:

Spoon.bat

In other plaorms such as Unix, Linux, and so on, type:

Spoon.sh

If you didn't make spoon.sh executable, you may type:

sh Spoon.sh

Geng Started with Pentaho Data Integraon

[ 16 ]

2. As soon as Spoon starts, a dialog window appears asking for the repository

connecon data. Click the No Repository buon. The main window appears. You

will see a small window with the p of the day. Aer reading it, close that window.

3. A welcome! window appears with some useful links for you to see.

4. Close the welcome window. You can open that window later from the main menu.

5. Click Opons... from the Edit menu. A window appears where you can change

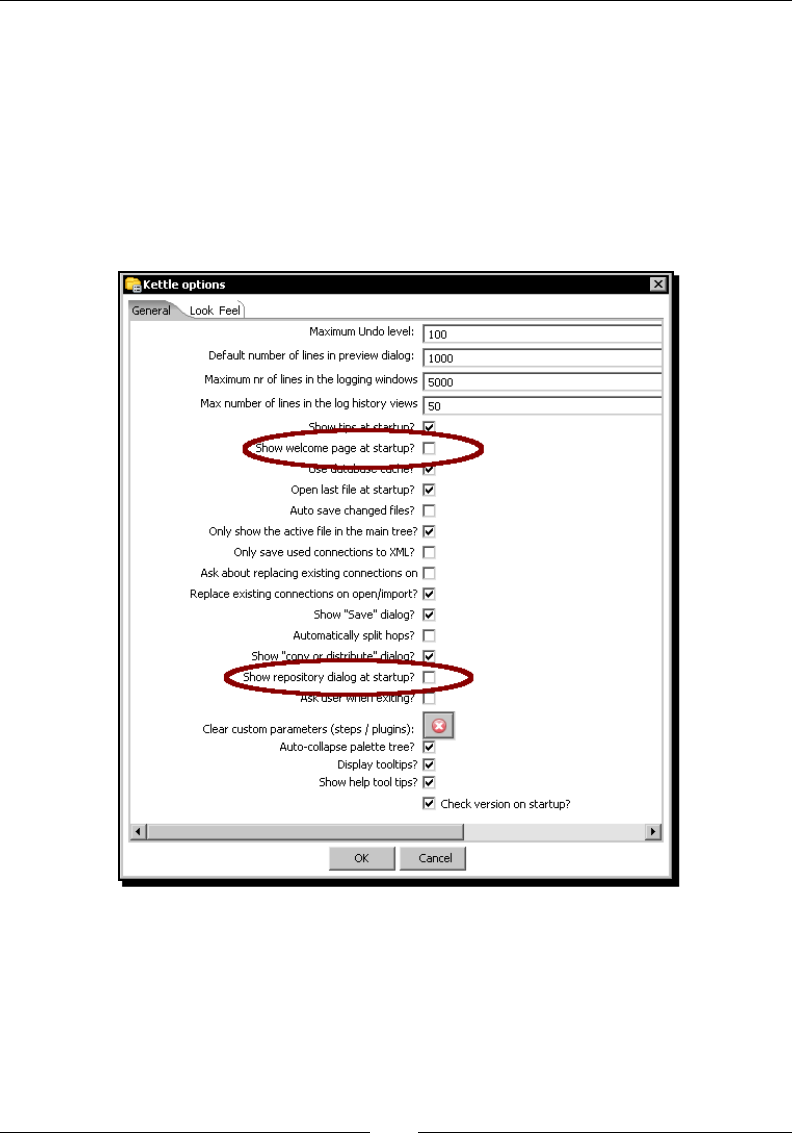

various general and visual characteriscs. Uncheck the circled checkboxes:

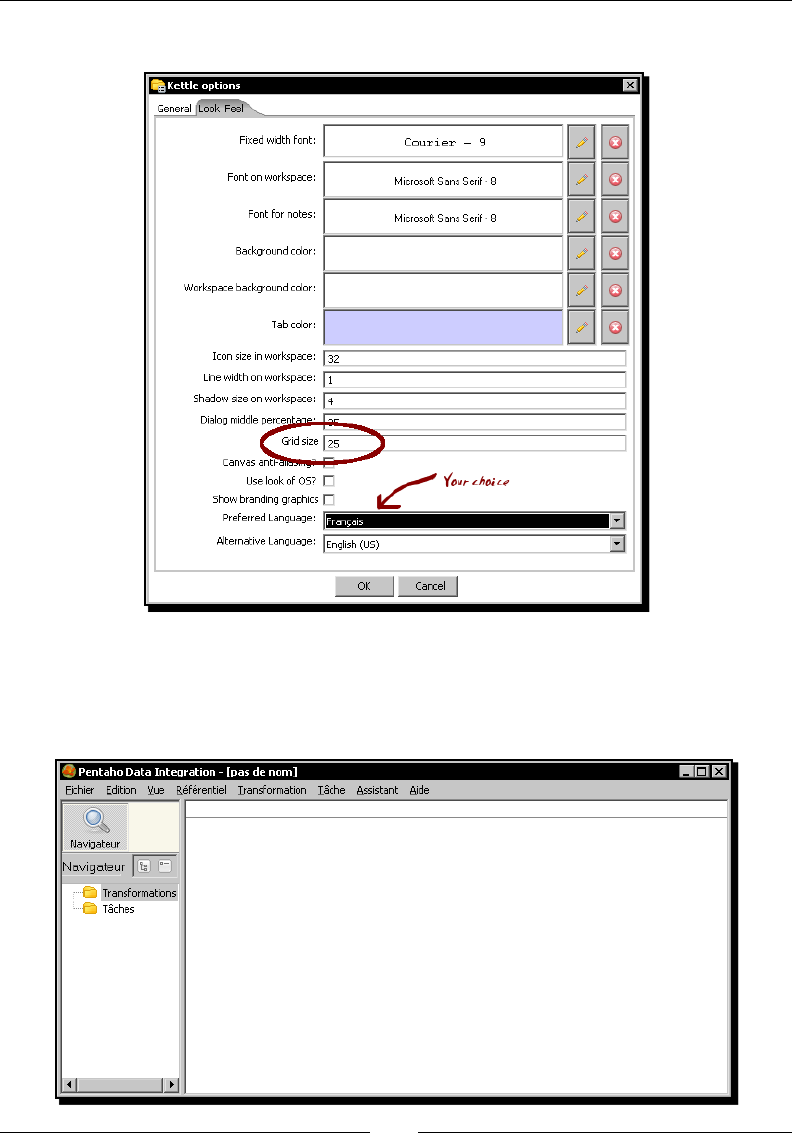

6. Select the tab window Look Feel.

Chapter 1

[ 17 ]

7. Change the Grid size and Preferred Language sengs as follows:

8. Click the OK buon.

9. Restart Spoon in order to apply the changes. You should neither see the repository

dialog, nor the welcome window. You should see the following screen instead:

Geng Started with Pentaho Data Integraon

[ 18 ]

What just happened?

You ran for the rst me the graphical designer of PDI Spoon, and applied some

custom conguraon.

From the Look Feel conguraon window, you changed the size of the doed grid that

appears in the canvas area while you are working. You also changed the preferred language.

In the Opon tab window, you chose not to show either the repository dialog or the

welcome window at startup. These changes were applied as you restarted the tool, not

before.

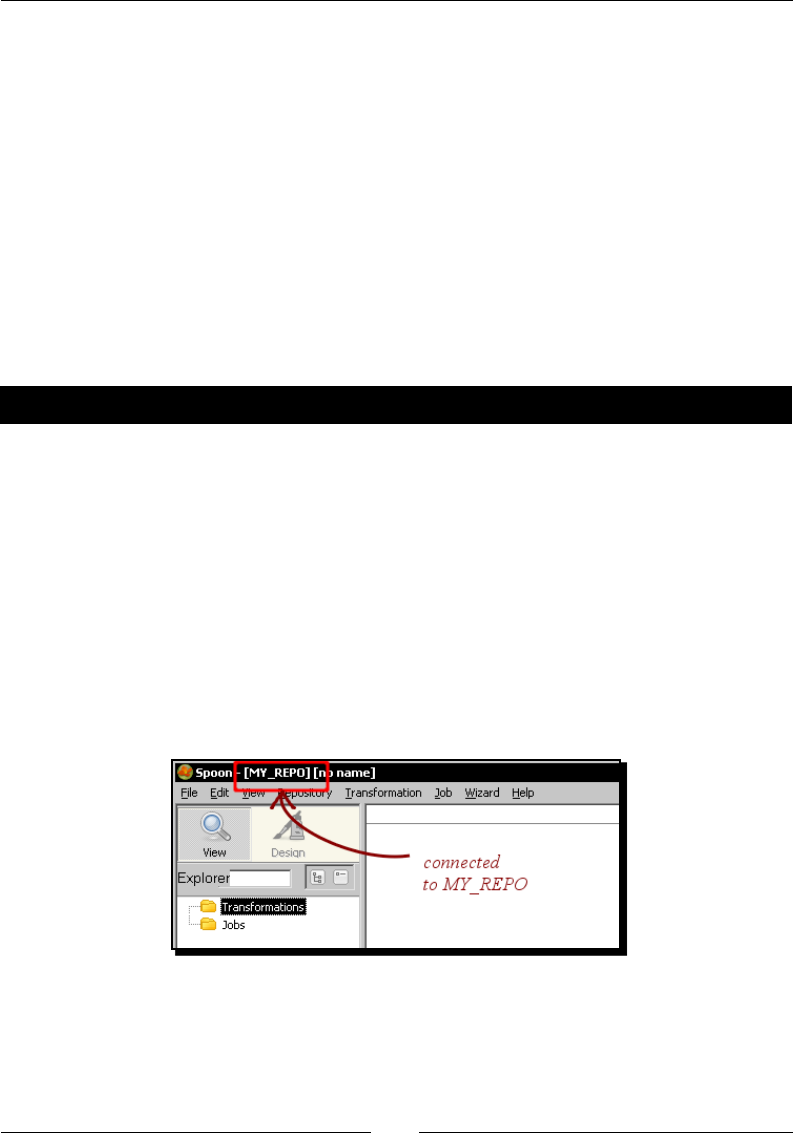

The second me you launched the tool, the repository dialog didn't show up. When the

main window appeared, all the visible texts were shown in French, which was the selected

language, and instead of the welcome window, there was a blank screen.

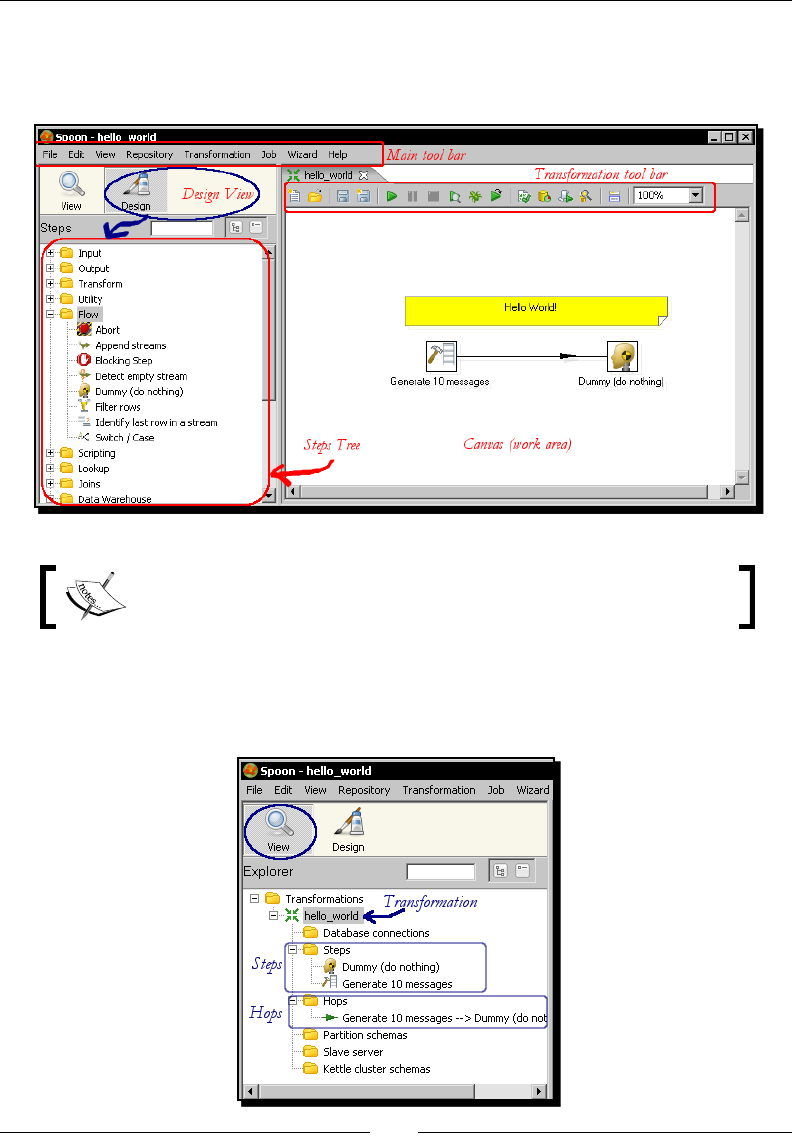

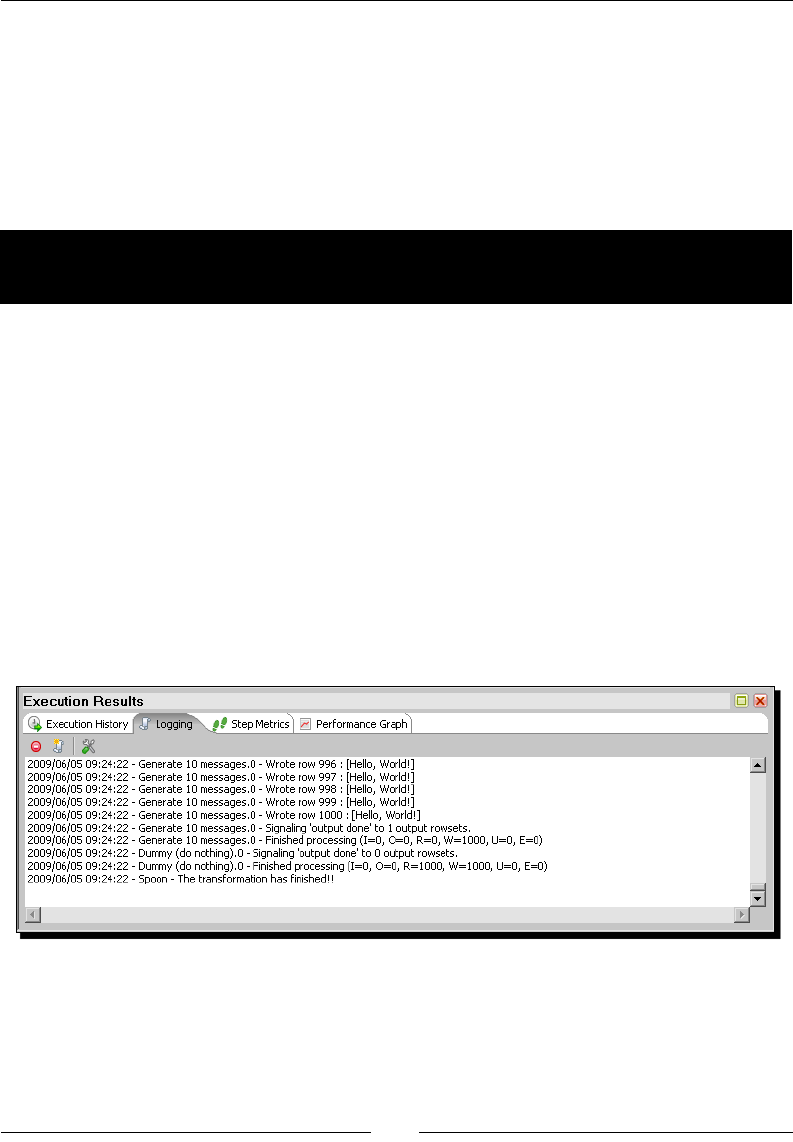

Spoon

This tool that you're exploring in this secon is the PDI's desktop design tool. With Spoon you

design, preview, and test all your work, that is, transformaons and jobs. When you see PDI

screenshots, what you are really seeing are Spoon screenshots. The other PDI components

that you will meet in the following chapters are executed from terminal windows.

Setting preferences in the Options window

In the tutorial you changed some preferences in the Opons window. There are several look

and feel characteriscs you can change beyond those you changed. Feel free to experiment

with this seng.

Remember to restart Spoon in order to see the changes applied.

If you choose any language as preferred language other than English, you

should select a dierent language as alternave. If you do so, every name or

descripon not translated to your preferred language will be shown in the

alternave language.

Just for the curious people: Italian and French are the overall winners of the list of languages

to which the tool has been translated from English. Below them follow Korean, Argennean

Spanish, Japanese, and Chinese.

Chapter 1

[ 19 ]

One of the sengs you changed was the appearance of the welcome window at start up.

The welcome window has many useful links, all related with the tool: wiki pages, news,

forum access, and more. It's worth exploring them.

You don't have to change the sengs again to see the welcome window.

You can open it from the menu Help | Show the Welcome Screen.

Storing transformations and jobs in a repository

The rst me you launched Spoon, you chose No Repository. Aer that, you congured

Spoon to stop asking you for the Repository opon. You must be curious about what the

repository is and why not to use it. Let's explain it.

As said, the results of working with PDI are Transformaons and Jobs. In order to save the

Transformaons and Jobs, PDI oers two methods:

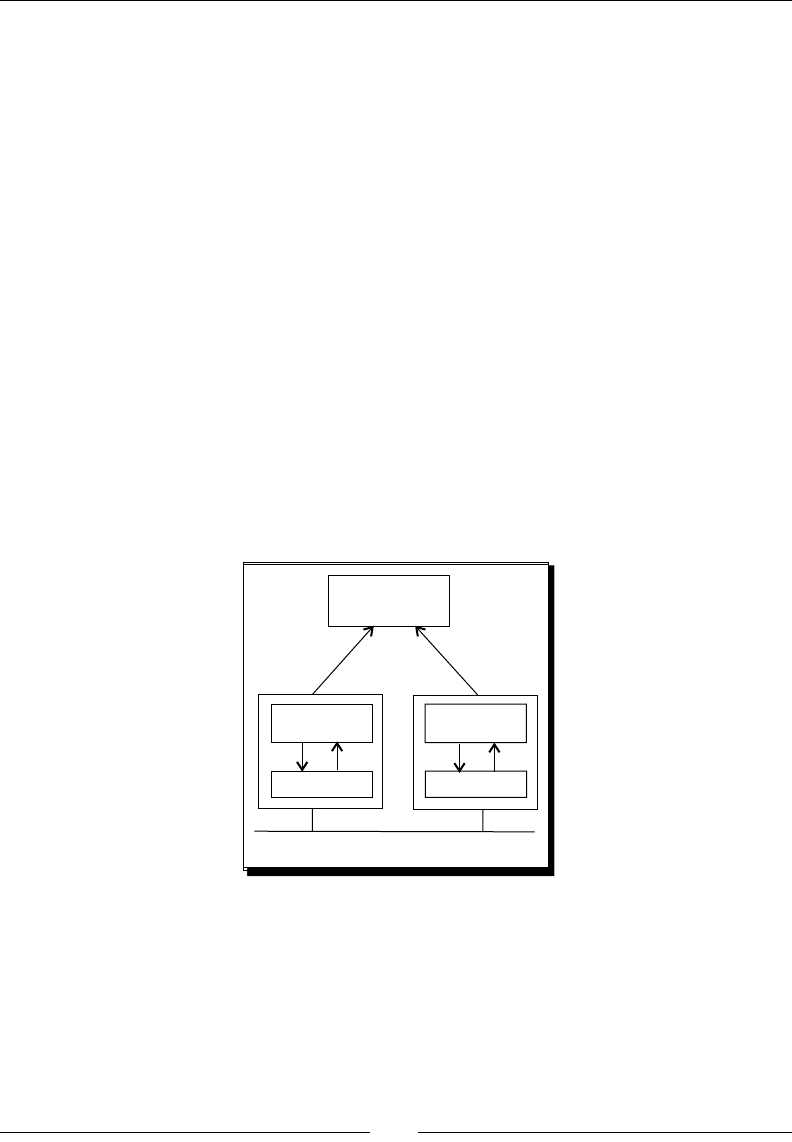

Repository: When you use the repository method you save jobs and

transformaons in a repository. A repository is a relaonal database specially

designed for this purpose.

Files: The les method consists of saving jobs and transformaons as regular XML

les in the lesystem, with extension kjb and ktr respecvely.

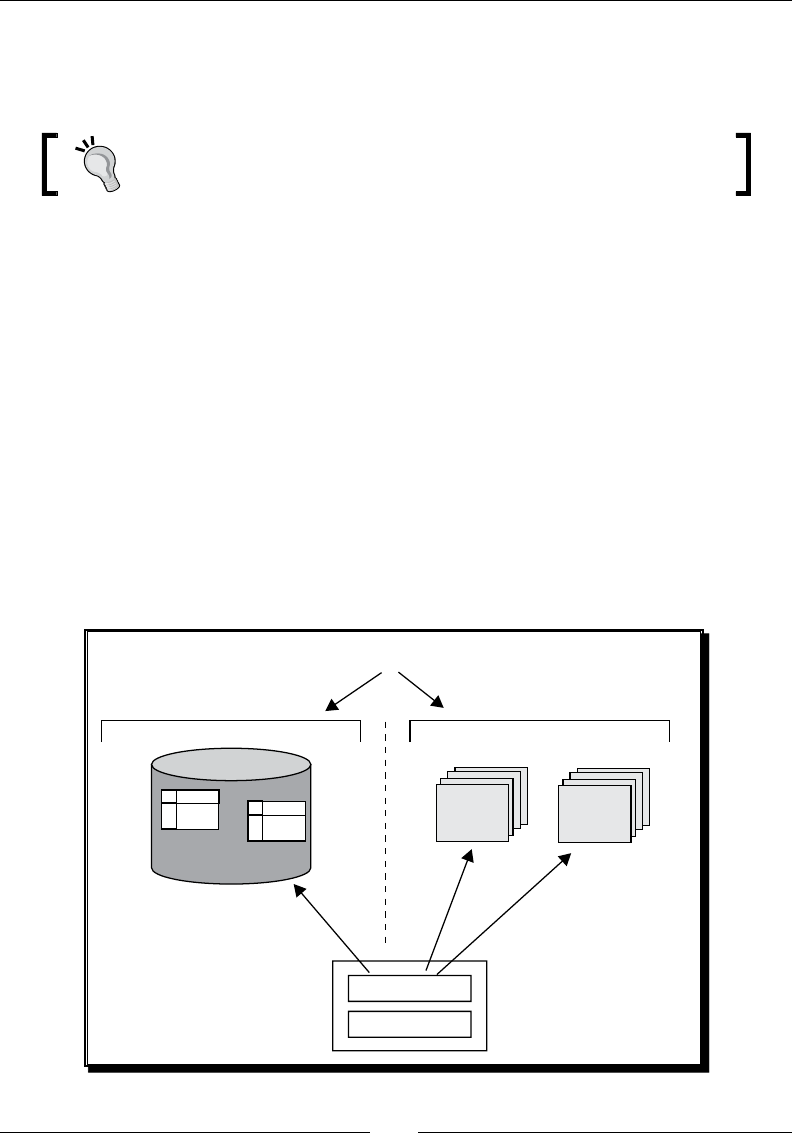

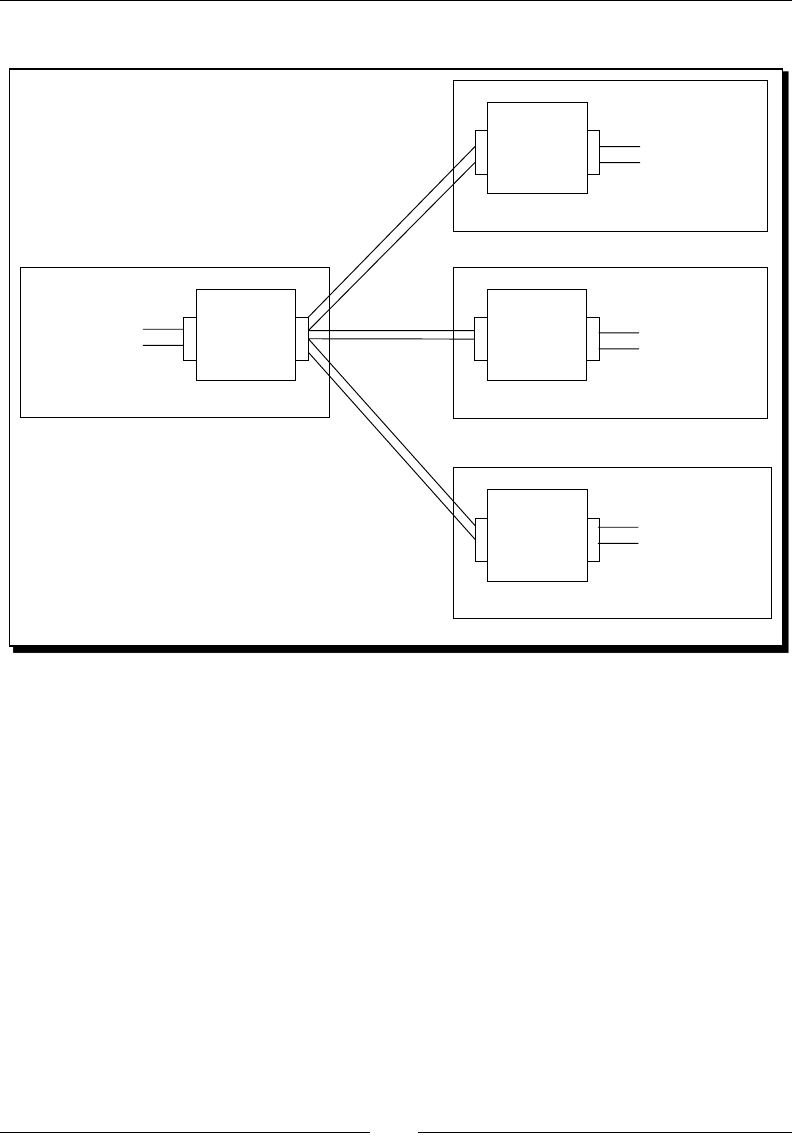

The following diagram summarizes this:

exclusive

REPOSITORY FILE SYSTEM

.ktr .kjb

Design, Preview, Run

SPOON

Kettle Engine KETTLE

Transfor

mations Jobs

Transformations Jobs

Design, Preview, Run

Geng Started with Pentaho Data Integraon

[ 20 ]

You cannot mix the two methods (les and repository) in the same project. Therefore, you

must choose the method when you start the tool.

Why did we choose not to work with repository, or in other words, to work with les? This is

mainly for the following two reasons:

Working with les is more natural and praccal for most users.

Working with repository requires minimum database knowledge and that you also

have access to a database engine from your computer. Having both precondions

would allow you to learn working with both methods. However, it's probable that

you haven't.

Throughout this book, we will use the le method. For details of working with repositories,

please refer to Appendix A.

Creating your rst transformation

Unl now, you've seen the very basic elements of Spoon. For sure, you must be waing to do

some interesng task beyond looking around. It's me to create your rst transformaon.

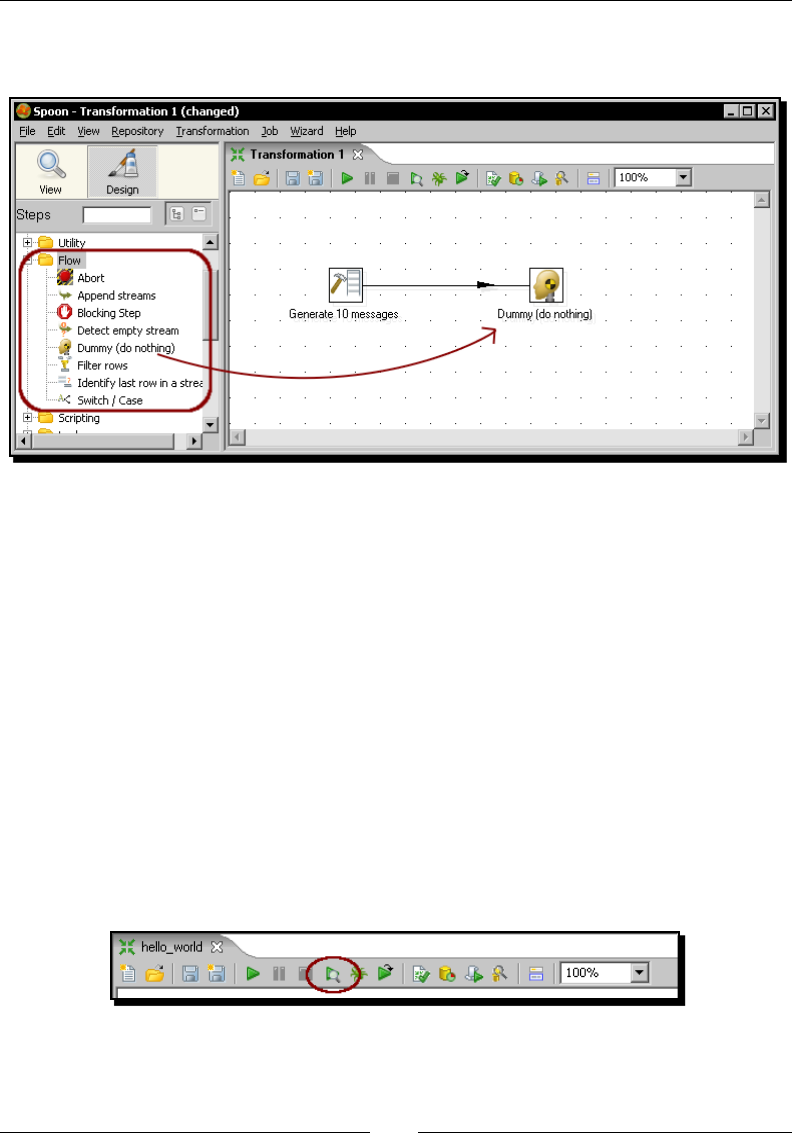

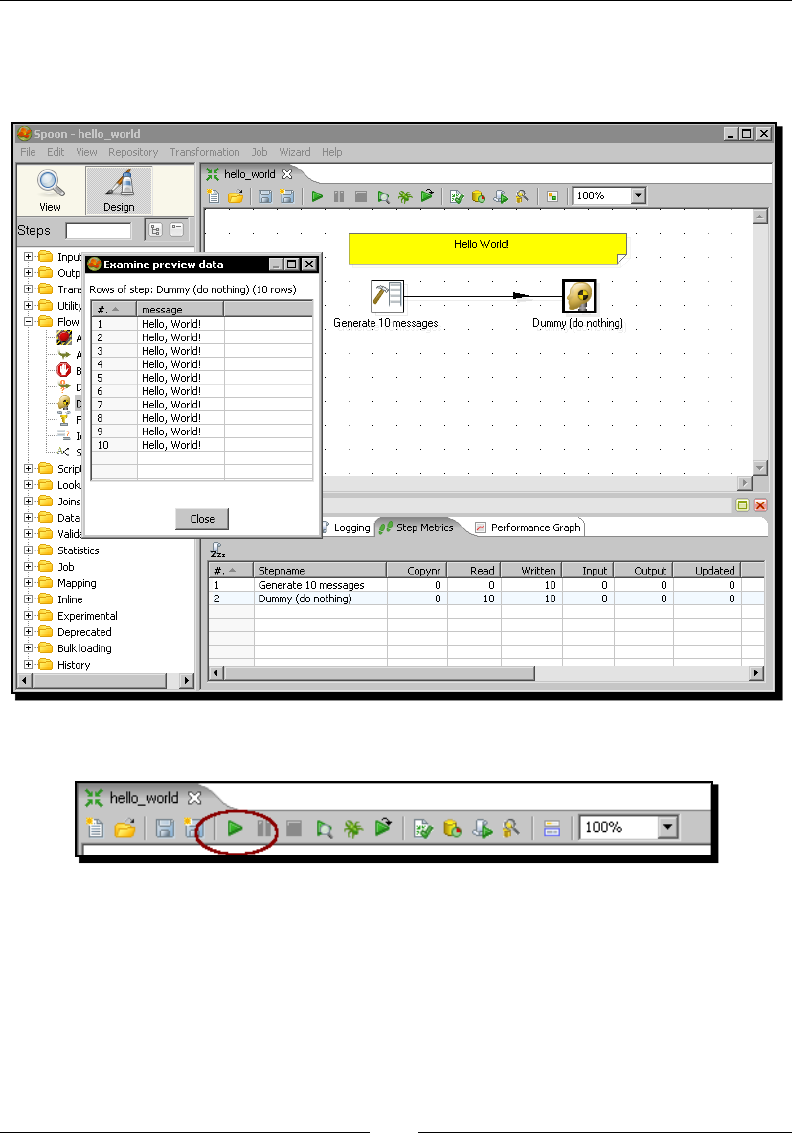

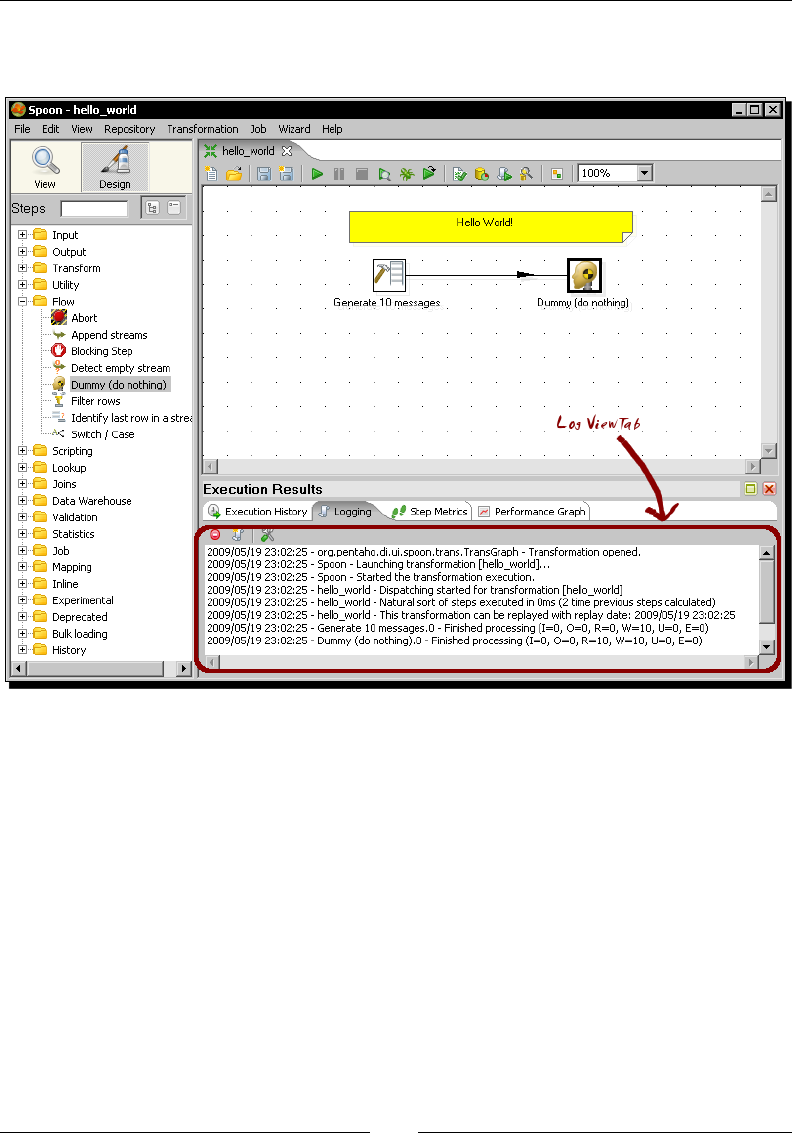

Time for action – creating a hello world transformation

How about starng by saying Hello to the World? Not original but enough for a very rst

praccal exercise. Here is how you do it:

1. Create a folder named pdi_labs under the folder of your choice.

2. Open Spoon.

3. From the main menu select File | New Transformaon.

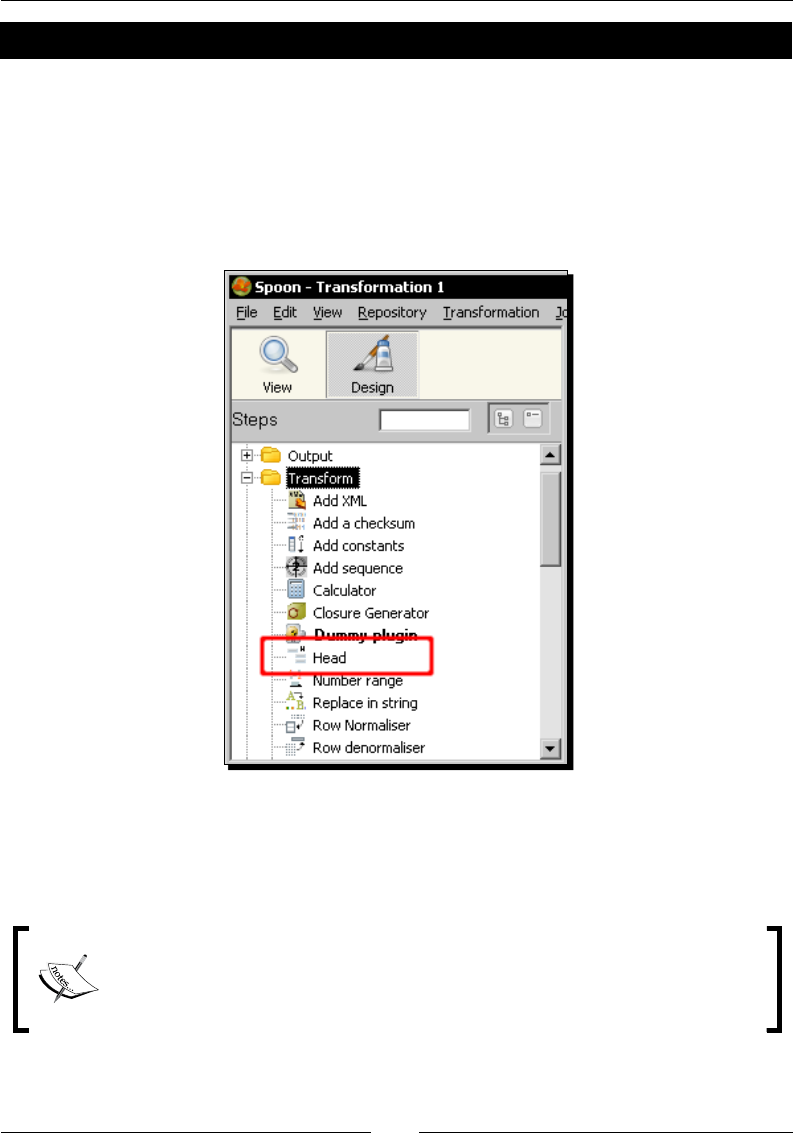

4. At the le-hand side of the screen, you'll see a tree of Steps. Expand the Input

branch by double-clicking it.

5. Le-click the Generate Rows icon.

Chapter 1

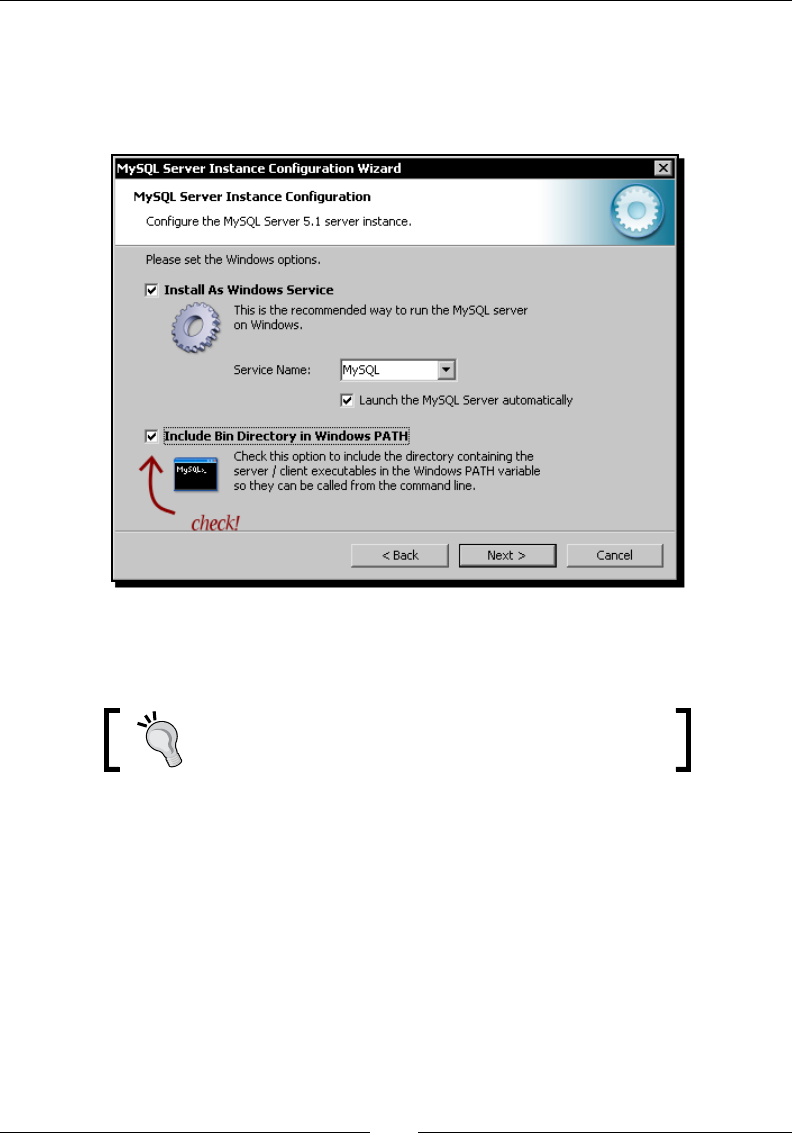

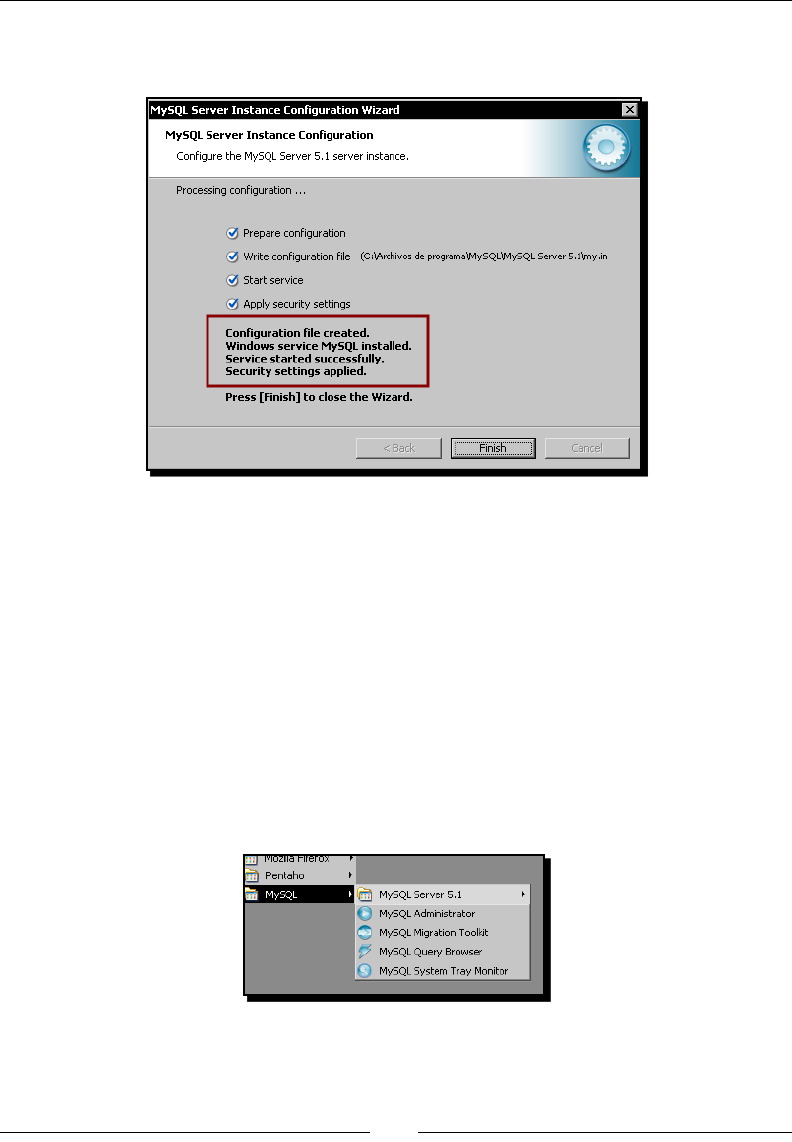

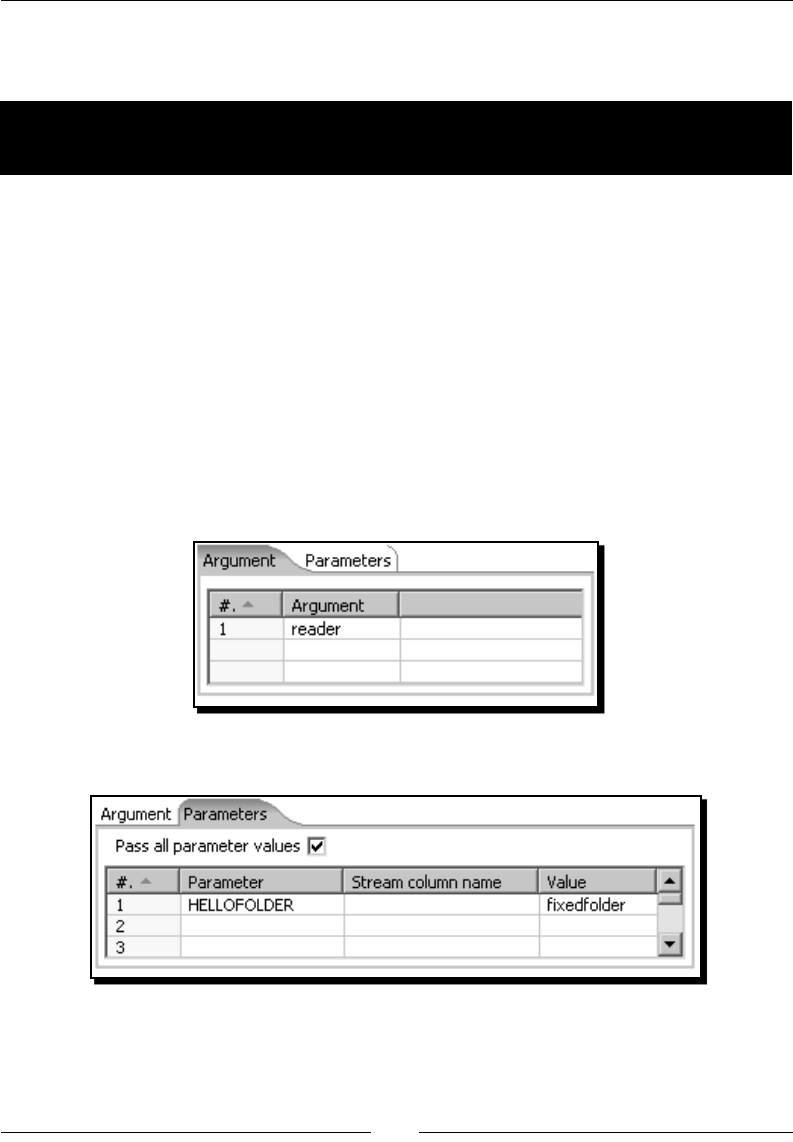

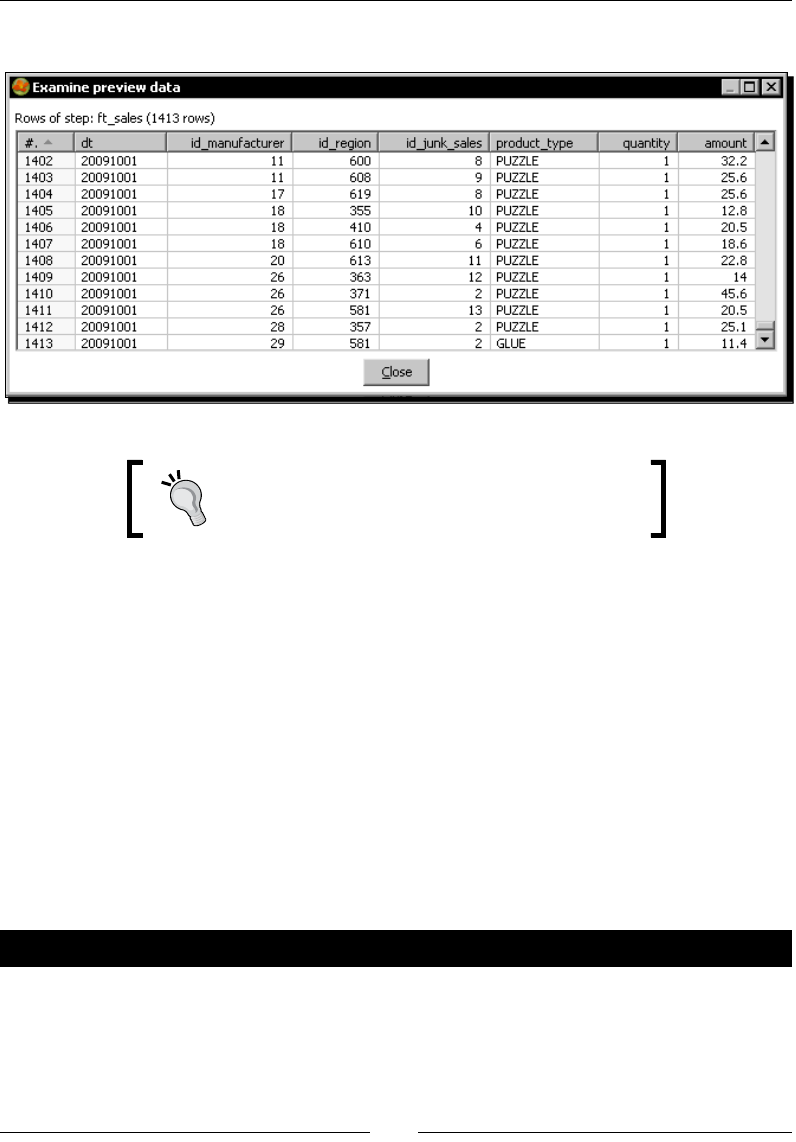

[ 21 ]