ARM Cortex A Series Programmer’s Guide For ARMv8 Programmer's V1.0 Min

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 296 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- ARM Cortex-A Series Programmer’s Guide for ARMv8-A

- Contents

- Preface

- 1: Introduction

- 2: ARMv8-A Architecture and Processors

- 3: Fundamentals of ARMv8

- 4: ARMv8 Registers

- 5: An Introduction to the ARMv8 Instruction Sets

- 6: The A64 instruction set

- 6.1 Instruction mnemonics

- 6.2 Data processing instructions

- 6.3 Memory access instructions

- 6.3.1 Load instruction format

- 6.3.2 Store instruction format

- 6.3.3 Floating-point and NEON scalar loads and stores

- 6.3.4 Specifying the address for a Load or Store instruction

- 6.3.5 Accessing multiple memory locations

- 6.3.6 Unprivileged access

- 6.3.7 Prefetching memory

- 6.3.8 Non-temporal load and store pair

- 6.3.9 Memory access atomicity

- 6.3.10 Memory barrier and fence instructions

- 6.3.11 Synchronization primitives

- 6.4 Flow control

- 6.5 System control and other instructions

- 7: AArch64 Floating-point and NEON

- 8: Porting to A64

- 9: The ABI for ARM 64-bit Architecture

- 10: AArch64 Exception Handling

- 11: Caches

- 12: The Memory Management Unit

- 12.1 The Translation Lookaside Buffer

- 12.2 Separation of kernel and application Virtual Address spaces

- 12.3 Translating a Virtual Address to a Physical Address

- 12.4 Translation tables in ARMv8-A

- 12.5 Translation table configuration

- 12.6 Translations at EL2 and EL3

- 12.7 Access permissions

- 12.8 Operating system use of translation table descriptors

- 12.9 Security and the MMU

- 12.10 Context switching

- 12.11 Kernel access with user permissions

- 13: Memory Ordering

- 14: Multi-core processors

- 15: Power Management

- 16: big.LITTLE Technology

- 17: Security

- 18: Debug

- 18.1 ARM debug hardware

- 18.2 ARM trace hardware

- 18.3 DS-5 debug and trace

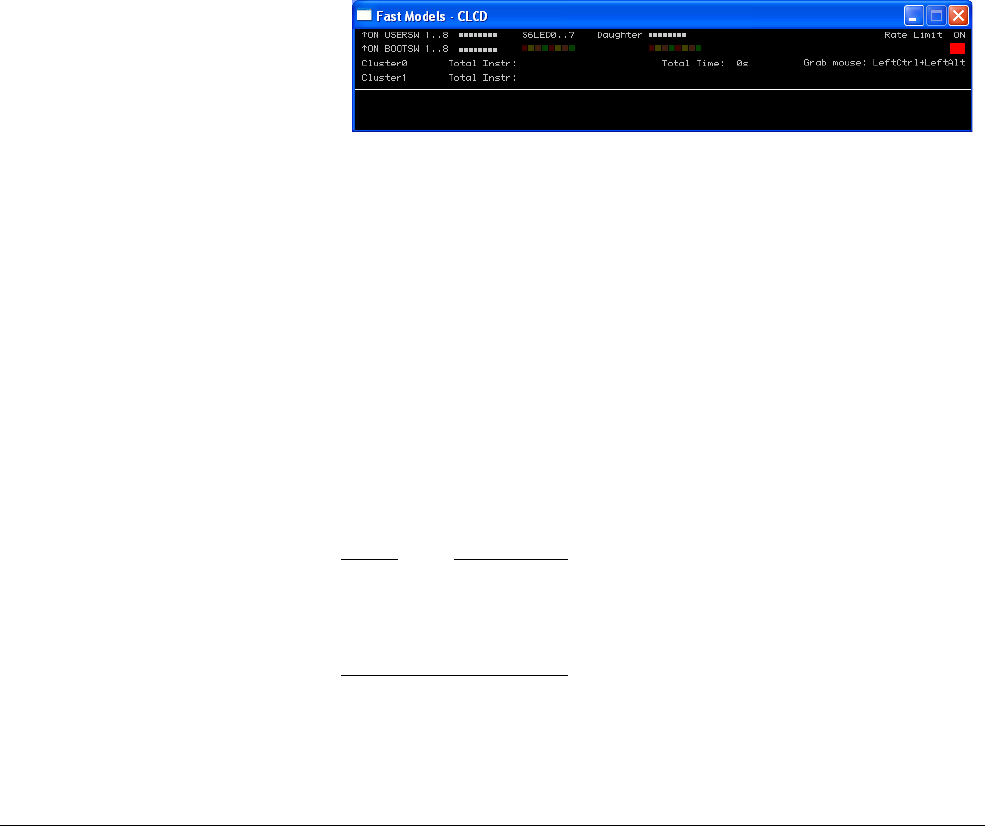

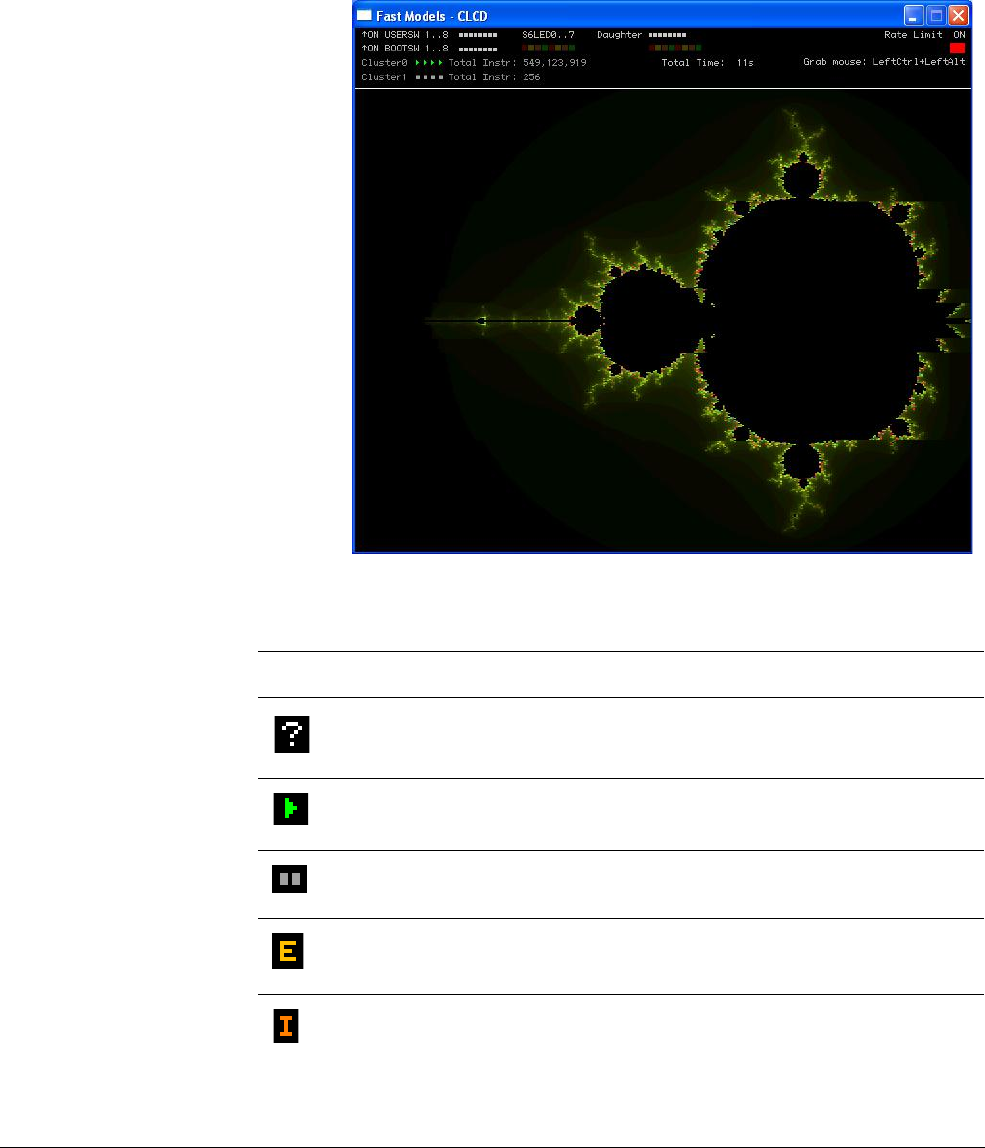

- 19: ARMv8 Models

- 19.1 ARM Fast Models

- 19.2 ARMv8-A Foundation Platform

- 19.2.1 Limitations of the Foundation Platform

- 19.2.2 Software requirements

- 19.2.3 Where to get the ARM Foundation Platform

- 19.2.4 Verifying the installation

- 19.2.5 Running the example program

- 19.2.6 Troubleshooting the example program

- 19.2.7 The kernel

- 19.2.8 Configuring the kernel command line

- 19.2.9 Choice of root filesystem

- 19.2.10 Setting up a block device image for a root file system

- 19.2.11 Starting the Foundation platform

- 19.2.12 Network connections

- 19.2.13 Setting up a network connection

- 19.2.14 Command-line overview

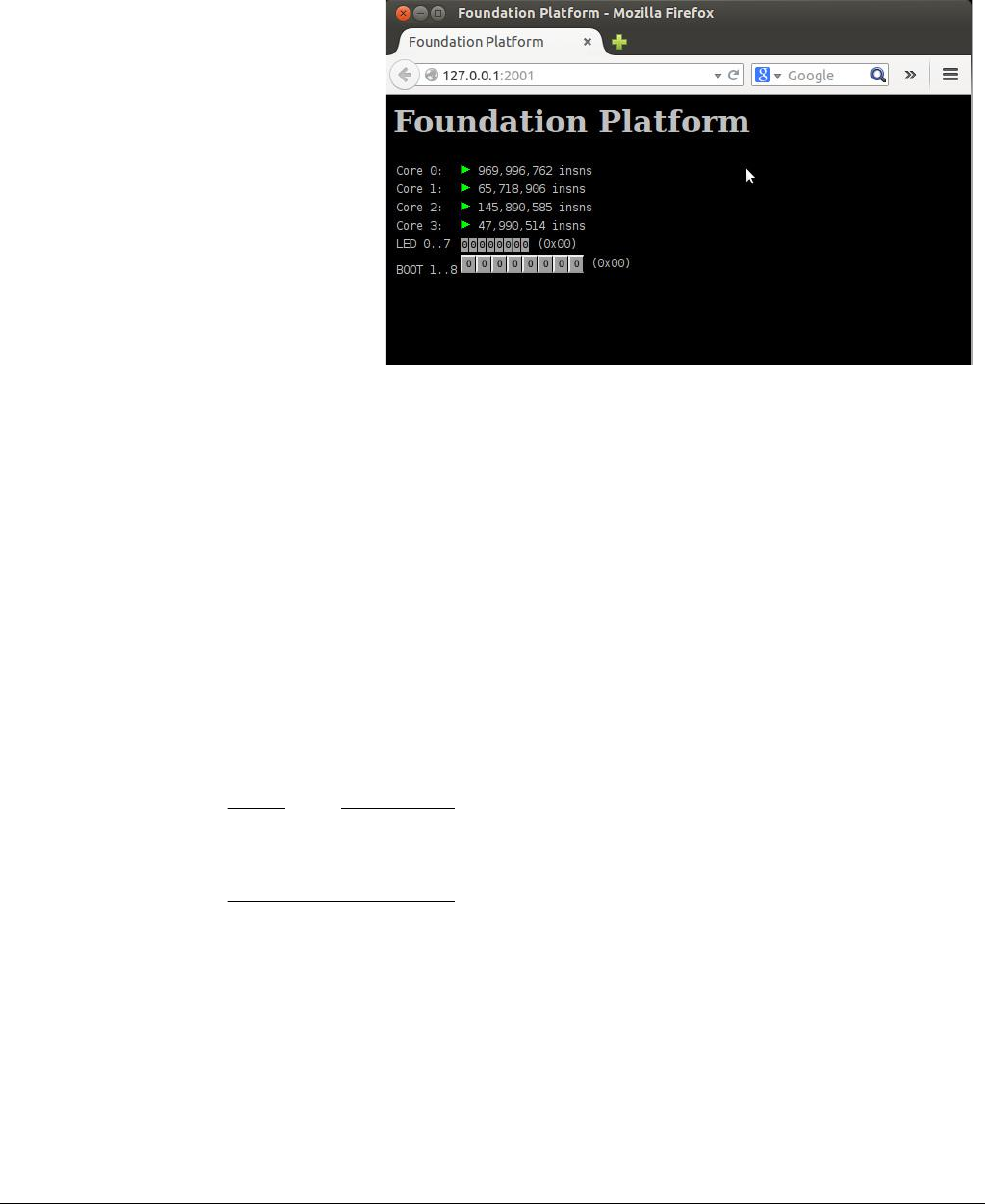

- 19.2.15 Web interface

- 19.2.16 UARTs

- 19.2.17 UART output

- 19.2.18 Multicore configuration

- 19.2.19 Semihosting

- 19.2.20 Semihosting configuration

- 19.3 The Base Platform FVP

- 19.3.1 Software requirements

- 19.3.2 Verifying the installation

- 19.3.3 Semihosting support

- 19.3.4 Using a configuration GUI in your debugger

- 19.3.5 Setting model configuration options from Model Shell

- 19.3.6 Loading and running an application on the AEMv8-A Base Platform FVP

- 19.3.7 Running the example program from the command line

- 19.3.8 Running the example program using Model Debugger

- 19.3.9 Using the CLCD window

- 19.3.10 Using Ethernet with the AEMv8-A Base Platform FVP

- 19.3.11 Compatibility with VE model and platform

- 19.3.12 Where to get the ARMv8-A Base Platform FVP

- Index

Copyright © 2015 ARM. All rights reserved.

ARM DEN0024A (ID050815)

ARM® Cortex®-A Series

Version: 1.0

Programmer’s Guide for ARMv8-A

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. ii

ID050815 Non-Confidential

ARM Cortex-A Series

Programmer’s Guide for ARMv8-A

Copyright © 2015 ARM. All rights reserved.

Release Information

The following changes have been made to this book.

Proprietary Notice

This document is protected by copyright and other related rights and the practice or implementation of the information

contained in this document may be protected by one or more patents or pending patent applications. No part of this

document may be reproduced in any form by any means without the express prior written permission of ARM. No

license, express or implied, by estoppel or otherwise to any intellectual property rights is granted by this document

unless specifically stated.

Your access to the information in this document is conditional upon your acceptance that you will not use or permit

others to use the information for the purposes of determining whether implementations infringe any third party patents.

THIS DOCUMENT IS PROVIDED “AS IS”. ARM PROVIDES NO REPRESENTATIONS AND NO

WARRANTIES, EXPRESS, IMPLIED OR STATUTORY, INCLUDING, WITHOUT LIMITATION, THE IMPLIED

WARRANTIES OF MERCHANTABILITY, SATISFACTORY QUALITY, NON-INFRINGEMENT OR FITNESS

FOR A PARTICULAR PURPOSE WITH RESPECT TO THE DOCUMENT. For the avoidance of doubt, ARM makes

no representation with respect to, and has undertaken no analysis to identify or understand the scope and content of,

third party patents, copyrights, trade secrets, or other rights.

This document may include technical inaccuracies or typographical errors.

TO THE EXTENT NOT PROHIBITED BY LAW, IN NO EVENT WILL ARM BE LIABLE FOR ANY DAMAGES,

INCLUDING WITHOUT LIMITATION ANY DIRECT, INDIRECT, SPECIAL, INCIDENTAL, PUNITIVE, OR

CONSEQUENTIAL DAMAGES, HOWEVER CAUSED AND REGARDLESS OF THE THEORY OF LIABILITY,

ARISING OUT OF ANY USE OF THIS DOCUMENT, EVEN IF ARM HAS BEEN ADVISED OF THE

POSSIBILITY OF SUCH DAMAGES.

This document consists solely of commercial items. You shall be responsible for ensuring that any use, duplication or

disclosure of this document complies fully with any relevant export laws and regulations to assure that this document

or any portion thereof is not exported, directly or indirectly, in violation of such export laws. Use of the word “partner”

in reference to ARM’s customers is not intended to create or refer to any partnership relationship with any other

company. ARM may make changes to this document at any time and without notice.

If any of the provisions contained in these terms conflict with any of the provisions of any signed written agreement

covering this document with ARM, then the signed written agreement prevails over and supersedes the conflicting

provisions of these terms. This document may be translated into other languages for convenience, and you agree that if

there is any conflict between the English version of this document and any translation, the terms of the English version

of the Agreement shall prevail.

Words and logos marked with ® or ™ are registered trademarks or trademarks of ARM Limited or its affiliates in the

EU and/or elsewhere. All rights reserved. Other brands and names mentioned in this document may be the trademarks

of their respective owners. Please follow ARM’s trademark usage guidelines at

http://www.arm.com/about/trademark-usage-guidelines.php

Copyright © 2015, ARM Limited or its affiliates. All rights reserved.

ARM Limited. Company 02557590 registered in England.

110 Fulbourn Road, Cambridge, England CB1 9NJ.

Confidentiality Status

This document is Non-Confidential. The right to use, copy and disclose this document may be subject to license

restrictions in accordance with the terms of the agreement entered into by ARM and the party that ARM delivered this

document to.

Product Status

The information in this document is final, that is for a developed product.

Change history

Date Issue Confidentiality Change

24 March 2015 A Non-Confidential First release

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. iii

ID050815 Non-Confidential

Web Address

http://www.arm.com

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. iv

ID050815 Non-Confidential

Contents

ARM Cortex-A Series Programmer’s Guide for

ARMv8-A

Preface

Glossary ...................................................................................................................... ix

References ............................................................................................................... xiii

Feedback on this book ............................................................................................... xv

Chapter 1 Introduction

1.1 How to use this book ............................................................................................... 1-3

Chapter 2 ARMv8-A Architecture and Processors

2.1 ARMv8-A ................................................................................................................. 2-3

2.2 ARMv8-A Processor properties ............................................................................... 2-5

Chapter 3 Fundamentals of ARMv8

3.1 Execution states ...................................................................................................... 3-4

3.2 Changing Exception levels ...................................................................................... 3-5

3.3 Changing execution state ........................................................................................ 3-8

Chapter 4 ARMv8 Registers

4.1 AArch64 special registers ........................................................................................ 4-3

4.2 Processor state ........................................................................................................ 4-6

4.3 System registers ...................................................................................................... 4-7

4.4 Endianness ............................................................................................................ 4-12

4.5 Changing execution state (again) .......................................................................... 4-13

4.6 NEON and floating-point registers ......................................................................... 4-17

Contents

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. v

ID050815 Non-Confidential

Chapter 5 An Introduction to the ARMv8 Instruction Sets

5.1 The ARMv8 instruction sets ..................................................................................... 5-2

5.2 C/C++ inline assembly ............................................................................................. 5-9

5.3 Switching between the instruction sets .................................................................. 5-10

Chapter 6 The A64 instruction set

6.1 Instruction mnemonics ............................................................................................. 6-2

6.2 Data processing instructions .................................................................................... 6-3

6.3 Memory access instructions .................................................................................. 6-12

6.4 Flow control ........................................................................................................... 6-19

6.5 System control and other instructions .................................................................... 6-21

Chapter 7 AArch64 Floating-point and NEON

7.1 New features for NEON and Floating-point in AArch64 ........................................... 7-2

7.2 NEON and Floating-Point architecture .................................................................... 7-4

7.3 AArch64 NEON instruction format ........................................................................... 7-9

7.4 NEON coding alternatives ..................................................................................... 7-14

Chapter 8 Porting to A64

8.1 Alignment ................................................................................................................. 8-3

8.2 Data types ................................................................................................................ 8-4

8.3 Issues when porting code from a 32-bit to 64-bit environment ................................ 8-8

8.4 Recommendations for new C code ........................................................................ 8-10

Chapter 9 The ABI for ARM 64-bit Architecture

9.1 Register use in the AArch64 Procedure Call Standard ............................................ 9-3

Chapter 10 AArch64 Exception Handling

10.1 Exception handling registers .................................................................................. 10-4

10.2 Synchronous and asynchronous exceptions ......................................................... 10-7

10.3 Changes to execution state and Exception level caused by exceptions ............. 10-10

10.4 AArch64 exception table ...................................................................................... 10-12

10.5 Interrupt handling ................................................................................................. 10-14

10.6 The Generic Interrupt Controller .......................................................................... 10-17

Chapter 11 Caches

11.1 Cache terminology ................................................................................................. 11-3

11.2 Cache controller ..................................................................................................... 11-8

11.3 Cache policies ....................................................................................................... 11-9

11.4 Point of coherency and unification ....................................................................... 11-11

11.5 Cache maintenance ............................................................................................. 11-13

11.6 Cache discovery .................................................................................................. 11-18

Chapter 12 The Memory Management Unit

12.1 The Translation Lookaside Buffer .......................................................................... 12-4

12.2 Separation of kernel and application Virtual Address spaces ................................ 12-7

12.3 Translating a Virtual Address to a Physical Address ............................................. 12-9

12.4 Translation tables in ARMv8-A ............................................................................ 12-14

12.5 Translation table configuration ............................................................................. 12-18

12.6 Translations at EL2 and EL3 ............................................................................... 12-20

12.7 Access permissions ............................................................................................. 12-23

12.8 Operating system use of translation table descriptors ........................................ 12-25

12.9 Security and the MMU ......................................................................................... 12-26

12.10 Context switching ................................................................................................. 12-27

12.11 Kernel access with user permissions ................................................................... 12-29

Chapter 13 Memory Ordering

13.1 Memory types ........................................................................................................ 13-3

Contents

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. vi

ID050815 Non-Confidential

13.2 Barriers .................................................................................................................. 13-6

13.3 Memory attributes ................................................................................................ 13-11

Chapter 14 Multi-core processors

14.1 Multi-processing systems ...................................................................................... 14-3

14.2 Cache coherency ................................................................................................. 14-10

14.3 Multi-core cache coherency within a cluster ........................................................ 14-13

14.4 Bus protocol and the Cache Coherent Interconnect ............................................ 14-17

Chapter 15 Power Management

15.1 Idle management ................................................................................................... 15-3

15.2 Dynamic voltage and frequency scaling ................................................................ 15-6

15.3 Assembly language power instructions ................................................................. 15-7

15.4 Power State Coordination Interface ....................................................................... 15-8

Chapter 16 big.LITTLE Technology

16.1 Structure of a big.LITTLE system .......................................................................... 16-2

16.2 Software execution models in big.LITTLE ............................................................. 16-4

16.3 big.LITTLE MP ....................................................................................................... 16-7

Chapter 17 Security

17.1 TrustZone hardware architecture ........................................................................... 17-3

17.2 Switching security worlds through interrupts ......................................................... 17-5

17.3 Security in multi-core systems ............................................................................... 17-6

17.4 Switching between Secure and Non-secure state ................................................. 17-8

Chapter 18 Debug

18.1 ARM debug hardware ............................................................................................ 18-3

18.2 ARM trace hardware .............................................................................................. 18-9

18.3 DS-5 debug and trace .......................................................................................... 18-12

Chapter 19 ARMv8 Models

19.1 ARM Fast Models .................................................................................................. 19-2

19.2 ARMv8-A Foundation Platform .............................................................................. 19-4

19.3 The Base Platform FVP ....................................................................................... 19-16

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. vii

ID050815 Non-Confidential

Preface

In 2013, ARM released its 64-bit ARMv8 architecture, the first major change to the ARM

architecture since ARMv7 in 2007, and the most fundamental and far reaching change since the

original ARM architecture was created.

Development of the architecture has continued for some years. Early versions were being used

before the Cortex-A Series Programmer’s Guide for ARMv7-A was first released. The first of

the Programmer’s Guide series from ARM, it post-dated the introduction of the 32-bit ARMv7

architecture by some years. Almost immediately there were requests for a version to cover the

ARMv8 architecture. It was intended from the outset that a guide to ARMv8 should be available

as soon as possible.

This book was started when the first versions of the ARMv8 architecture were being tested and

codified. As always, moving from a system that is known and understood to something new and

unknown can present a number of problems. The engineers who supplied information for the

present book are, by and large, the same engineers who supplied the information for the original

Cortex-A Series Programmer’s Guide. This book has been made richer by their observations and

insights as they use, and solve the problems presented by the new architecture.

The Programmer’s Guides are meant to complement, rather than replace, other ARM

documentation available, such as the Technical Reference Manuals (TRMs) for the processors

themselves, documentation for individual devices or boards or, most importantly, the ARM

Architecture Reference Manual (the ARM ARM). They are intended to provide a gentle

introduction to the ARM architecture, and cover all the main concepts that you need to know

about, in an easy to read format, with examples of actual code in both C and assembly language,

and with hints and tips for writing your own code.

It might be argued that if you are an application developer, you do not need to know what goes

on inside a processor. ARM Application processors can easily be regarded as black boxes which

simply run your code when you say go. Instead, this book provides a single guide, bringing

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. viii

ID050815 Non-Confidential

together information from a wide variety of sources, for those programmers who get the system

to the point where application developers can run applications, such as those involved in ASIC

verification, or those working on boot code and device drivers.

During bring-up of a new board or System-on-Chip (SoC), engineers may have to investigate

issues with the hardware. Memory system behavior is among the most common places for these

to manifest, for example, deadlocks where the processor cannot make forward progress because

of memory system lock. Debugging these problems requires an understanding of the operation

and effect of cache or MMU use. This is different from debugging a failing piece of code.

In a similar vein, system architects (usually hardware engineers) make choices early in the

design about the implementation of DMA, frame buffers and other parts of the memory system

where an understanding of data flow between agents in required. In this case it is difficult to

make sensible decisions about it if you do not understand when a cache will help you and when

it gets in the way, or how the OS will use the MMU. Similar considerations apply in many other

places.

This is not an introductory level book, nor is it a purely technical description of the architecture

and processors, which merely state the facts with little or no explanation of ‘how’ and ‘why’.

ARM and all who have collaborated on this book hope it successfully navigates between the two

extremes, while attempting to explain some of the more intricate aspects of the architecture.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. ix

ID050815 Non-Confidential

Glossary

Abbreviations and terms used in this document are defined here.

AAPCS ARM Architecture Procedure Call Standard.

AArch32 state The ARM 32-bit execution state that uses 32-bit general-purpose registers,

and a 32-bit Program Counter (PC), Stack Pointer (SP), and Link Register

(LR). AArch32 execution state provides a choice of two instruction sets,

A32 and T32, previously called the ARM and Thumb instruction sets.

AArch64 state The ARM 64-bit execution state that uses 64-bit general-purpose registers,

and a 64-bit Program Counter (PC), Stack Pointer (SP), and Exception

Link Registers (ELR). AArch64 execution state provides a single

instruction set, A64.

ABI Application Binary Interface.

ACE AXI Coherency Extensions.

AES Advanced Encryption Standard.

AMBA® Advanced Microcontroller Bus Architecture.

AMP Asymmetric Multi-Processing.

ARM ARM The ARM Architecture Reference Manual.

ASIC Application Specific Integrated Circuit.

ASID Address Space ID.

AXI Advanced eXtensible Interface.

BE8 Byte Invariant Big-Endian Mode.

BTAC Branch Target Address Cache.

BTB Branch Target Buffer.

CCI Cache Coherent Interface.

CHI Coherent Hub Interface.

CP15 Coprocessor 15 for AArch32 and ARMv7-A- System control coprocessor.

DAP Debug Access Port.

DMA Direct Memory Access.

DMB Data Memory Barrier.

DS-5™ The ARM Development Studio.

DSB Data Synchronization Barrier.

DSP Digital Signal Processing.

DSTREAM An ARM debug and trace unit.

DVFS Dynamic Voltage/Frequency Scaling.

EABI Embedded ABI.

ECC Error Correcting Code.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. x

ID050815 Non-Confidential

ECT Embedded Cross Trigger.

EL0 Exception level used to execute user applications.

EL1 Exception level normally used to run operating systems.

EL2 Hypervisor Exception level. In the Normal world, or Non-Secure state,

this is used to execute hypervisor code.

EL3 Secure Monitor exception level.This is used to execute the code that

guards transitions between the Secure and Normal worlds.

ETB Embedded Trace Buffer™.

ETM Embedded Trace Macrocell™.

Execution state The operational state of the processor, either 64-bit (AArch64) or 32-bit

(AArch32).

FIQ An interrupt type (formerly fast interrupt).

FPSCR Floating-Point Status and Control Register.

GCC GNU Compiler Collection.

GIC Generic Interrupt Controller.

Harvard architecture

Architecture with physically separate storage and signal pathways for

instructions and data.

HCR Hyp Configuration Register.

HMP Heterogenous Multi-Processing.

IMPLEMENTATION DEFINED

Some properties of the processor are defined by the manufacturer.

IPA Intermediate Physical Address.

IRQ Interrupt Request, normally for external interrupts.

ISA Instruction Set Architecture.

ISB Instruction Synchronization Barrier.

ISR Interrupt Service Routine.

Jazelle™ The ARM bytecode acceleration technology.

LLP64 Indicates the size in bits of basic C data types. Under LLP64

int

and

long

data types are 32 bit, pointers and

long long

are 64 bits.

LP64 Indicates the size in bits of basic C data types. Under LP64

int

types are

32 bits, all others are 64 bits.

LPAE Large Physical Address Extension.

LSB Least Significant Bit.

MESI A cache coherency protocol with four states that are Modified, Exclusive,

Shared and Invalid.

MMU Memory Management Unit.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. xi

ID050815 Non-Confidential

MOESI A cache coherency protocol with five states that are Modified, Owned,

Exclusive, Shared and Invalid.

Monitor mode When EL3 is using AArch32, the PE mode in which the Secure Monitor

must execute. This mode guards transitions between the Secure and

Normal worlds.

MPU Memory Protection Unit.

NEON™ The ARM Advanced SIMD Extensions.

NIC Network InterConnect.

Normal world The execution environment when the processor is in the Non-secure state.

PCS Procedure Call Standard.

PIPT Physically Indexed, Physically Tagged.

PoC Point of Coherency.

PoU Point of Unification.

PSR Program Status Register.

SCU Snoop Control Unit.

Secure world The execution environment when the processor is in the Secure State.

SIMD Single Instruction, Multiple Data.

SMC Secure Monitor Call. An ARM assembler instruction that causes an

exception that is taken synchronously to EL3.

SMC32 32-bit SMC calling convention

SMC64 64-bit SMC calling convention

SMC Function Identifier

A 32-bit integer which identifies which function is being invoked by this

SMC call. Passed in R0 or W0 to every SMC call

SMMU System MMU.

SMP Symmetric Multi-Processing.

SoC System on Chip.

SP Stack Pointer.

SPSR Saved Program Status Register.

Streamline A graphical performance analysis tool.

SVC Supervisor Call instruction.

SYS System Mode.

Thumb® An instruction set extension to ARM.

Thumb-2 A technology extending the Thumb instruction set to support both 16-bit

and 32-bit instructions.

TLB Translation Lookaside Buffer.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. xii

ID050815 Non-Confidential

TrustedOS This is the operating system running in the Secure World. It supports the

execution of trusted applications in Secure EL0. When EL3 is using

AArch64 it executes in Secure EL1. When EL3 is using AArch32 it

executes in Secure EL3 modes other than Monitor mode.

TrustZone® The ARM security extension.

TTB Translation Table Base.

TTBR Translation Table Base Register.

UART Universal Asynchronous Receiver/Transmitter.

UEFI Unified Extensible Firmware Interface.

U-Boot A Linux Bootloader.

UNK Unknown.

UNKNOWN Values in a register cannot be known before they are reset.

UNPREDICTABLE

The value taken cannot be predicted.

USR User mode, a non-privileged processor mode.

VFP The ARM floating-point instruction set. Before ARMv7, the VFP

extension was called the Vector Floating-Point architecture, and was used

for vector operations.

VIPT Virtually Indexed, Physically Tagged.

VMID Virtual Machine Identifier.

XN Execute Never.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. xiii

ID050815 Non-Confidential

References

ANSI/IEEE Std 754-1985, “IEEE Standard for Binary Floating-Point Arithmetic”.

ANSI/IEEE Std 754-2008, “IEEE Standard for Binary Floating-Point Arithmetic”.

ANSI/IEEE Std 1003.1-1990, “Standard for Information Technology - Portable Operating

System Interface (POSIX) Base Specifications, Issue 7”.

ANSI/IEEE Std 1149.1-2001, “IEEE Standard Test Access Port and Boundary-Scan

Architecture”.

The ARMv8 Architecture Reference Manual, known as the ARM ARM, fully describes the

ARMv8 instruction set architecture, programmer’s model, system registers, debug features and

memory model. It forms a detailed specification to which all implementations of ARM

processors must adhere.

References to the ARM Architecture Reference Manual in this document are to:

ARM® Architecture Reference Manual - ARMv8, for ARMv8-A architecture profile (ARM DDI

0487).

Note

In the event of a contradiction between this book and the ARM ARM, the ARM ARM is

definitive and must take precedence. In most instances, however, the ARM ARM and the

Cortex-A Series Programmer’s Guide for ARMv8-A cover two separate world views. The most

likely scenario is that this book describes something in a way that does not cover all

architecturally permitted behaviors, or simply rewords an abstract concept in more practical

terms.

ARM® Cortex®-A Series Programmer’s Guide for ARMv7-A (DEN 0013).

ARM® NEON™ Programmer’s Guide (DEN 0018).

ARM® Cortex®-A53 MPCore Processor Technical Reference Manual (DDI 0500).

ARM® Cortex®-A57 MPCore Processor Technical Reference Manual (DDI 0488).

ARM® Generic Interrupt Controller Architecture Specification (ARM IHI 0048).

ARM® Compiler armasm Reference Guide v6.01 (DUI 0802).

ARM® Compiler Software Development Guide v5.05 (DUI 0471).

ARM® C Language Extensions (IHI 0053).

ELF for the ARM® Architecture (ARM IHI 0044).

The individual processor Technical Reference Manuals provide a detailed description of the

processor behavior. They can be obtained from the ARM website documentation area

http://infocenter.arm.com

.

Connected community

The ARM Connected Community makes it easier to design using ARM processors and IP. It is

an interactive platform containing information, discussions and blogs which help you to develop

an ARM-based design efficiently, in collaboration with ARM engineers and our 1200+

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. xiv

ID050815 Non-Confidential

ecosystem Partners and enthusiasts. Visitors also use the community to find new companies to

work with from the many ARM Partners who first introduced their products and services in their

dedicated area. You can join the Connected Community on

http://community.arm.com

.

Preface

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. xv

ID050815 Non-Confidential

Feedback on this book

ARM hopes you find the Cortex-A Series Programmer’s Guide for ARMv8-A easy to read while

in enough depth to provide the comprehensive introduction to using the processors.

If you have any comments on this book, don’t understand our explanations, think something is

missing, or think that it is incorrect, send an e-mail to

errata@arm.com

. Give:

• The title.

• The number, ARM DEN0024A.

• The page number(s) to which your comments apply.

• What you think needs to be changed.

ARM also welcomes general suggestions for additions and improvements.

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 1-1

ID050815 Non-Confidential

Chapter 1

Introduction

ARMv8-A is the latest generation of the ARM architecture that is targeted at the Applications

Profile. In this book, the name ARMv8 is used to describe the overall architecture, which now

includes both 32-bit execution and 64-bit execution states. ARMv8 introduces the ability to

perform execution with 64-bit wide registers, but provides mechanisms for backwards

compatibility to enable existing ARMv7 software to be executed.

AArch64 is the name used to describe the 64-bit execution state of the ARMv8 architecture.

AArch32 describes the 32-bit execution state of the ARMv8 architecture, which is almost

identical to ARMv7. GNU and Linux documentation (except for Redhat and Fedora

distributions) sometimes refers to AArch64 as ARM64.

Because many of the concepts of the ARMv8-A architecture are shared with the ARMv7-A

architecture, the details of all those concepts are not covered here. As a general introduction to

the ARMv7-A architecture, refer to the ARM® Cortex®-A Series Programmer’s Guide. This

guide can also help you to familiarize yourself with some of the concepts discussed in this

volume. However, the ARMv8-A architecture profile is backwards compatible with earlier

iterations, like most versions of the ARM architecture. Therefore, there is a certain amount of

overlap between the way the ARMv8 architecture and previous architectures function. The

general principles of the ARMv7 architecture are only covered to explain the differences

between the ARMv8 and earlier ARMv7 architectures.

Cortex-A series processors now include both ARMv8-A and ARMv7-A implementations:

• The Cortex-A5, Cortex-A7, Cortex-A8, Cortex-A9, Cortex-A15, and Cortex-A17

processors all implement the ARMv7-A architecture.

• The Cortex-A53 and Cortex-A57 processors implement the ARMv8-A architecture.

Introduction

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 1-2

ID050815 Non-Confidential

ARMv8 processors still support software (with some exceptions) written for the ARMv7-A

processors. This means, for example, that 32-bit code written for the ARMv7 Cortex-A series

processors also runs on ARMv8 processors such as the Cortex-A57. However, the code will

only run when the ARMv8 processor is in the AArch32 execution state. The A64 64-bit

instruction set, however, does not run on ARMv7 processors, and only runs on the ARMv8

processors.

Some knowledge of the C programming language and microprocessors is assumed of the

readers of this book. There are pointers to further reading, referring to books and websites that

can give you a deeper level of background to the subject matter.

The change from 32-bit to 64-bit

There are several performance gains derived from moving to a 64-bit processor.

• The A64 instruction set provides some significant performance benefits, including a

larger register pool. The additional registers and the ARM Architecture Procedure Call

Standard (AAPCS) provide a performance boost when you must pass more than four

registers in a function call. On ARMv7, this would require using the stack, whereas in

AArch64 up to eight parameters can be passed in registers.

• Wider integer registers enable code that operates on 64-bit data to work more efficiently.

A 32-bit processor might require several operations to perform an arithmetic operation on

64-bit data. A 64-bit processor might be able to perform the same task in a single

operation, typically at the same speed required by the same processor to perform a 32-bit

operation. Therefore, code that performs many 64-bit sized operations is significantly

faster.

• 64-bit operation enables applications to use a larger virtual address space. While the Large

Physical Address Extension (LPAE) extends the physical address space of a 32-bit

processor to 40-bit, it does not extend the virtual address space. This means that even with

LPAE, a single application is limited to a 32-bit (4GB) address space. This is because

some of this address space is reserved for the operating system.

• Software running on a 32-bit architecture might need to map some data in or out of

memory while executing. Having a larger address space, with 64-bit pointers, avoids this

problem. However, using 64-bit pointers does incur some cost. The same piece of code

typically uses more memory when running with 64-pointers than with 32-bit pointers.

Each pointer is stored in memory and requires eight bytes instead of four. This might

sound trivial, but can add up to a significant penalty. Furthermore, the increased usage of

memory space associated with a move to 64-bits can cause a drop in the number of

accesses that hit in the cache. This in turn can reduce performance.

The larger virtual address space also enables memory-mapping larger files. This is the

mapping of the file contents into the memory map of a thread. This can occur even though

the physical RAM might not be large enough to contain the whole file.

Introduction

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 1-3

ID050815 Non-Confidential

1.1 How to use this book

This book provides a single guide for programmers who want to use the Cortex-A series

processors that implement the ARMv8 architecture. The guide brings together information from

a wide variety of sources that is useful to both ARM assembly language and C programmers. It

is meant to complement rather than replace other ARM documentation available for ARMv8

processors. The other documents for specific information includes the ARM Technical

Reference Manuals (TRMs) for the processors themselves, documentation for individual

devices or boards or, most importantly, the ARM Architecture Reference Manual - ARMv8, for

ARMv8-A architecture profile - the ARM ARM.

This book is not written at an introductory level. It assumes some knowledge of the C

programming language and microprocessors. Hardware concepts such as caches and Memory

Management Units are covered, but only where this knowledge is valuable to the application

writer. The book looks at the way operating systems utilize ARMv8 features, and how to take

full advantage of the capabilities of the ARMv8 processors. Some chapters contain pointers to

additional reading. We also refer to books and web sites that can give a deeper level of

background to the subject matter, but often the main focus is the ARM-specific detail. No

assumptions are made on the use of any particular toolchain, and both GNU and ARM tools are

mentioned throughout the book.

If you are new to the ARMv8 architecture, Chapter 2 ARMv8-A Architecture and Processors

describes the previous 32-bit ARM architectures, introduces ARMv8, and describes some of the

properties of the ARMv8 processors. Next, Chapter 3 Fundamentals of ARMv8 describes the

building blocks of the architecture in the form of Exception levels and Execution states.

Chapter 4 ARMv8 Registers then describes the registers available to you in the ARMv8

architecture.

One of the most significant changes introduced in the ARMv8 architecture is the addition of a

64-bit instruction set, which complements the existing 32-bit architecture. Chapter 5 An

Introduction to the ARMv8 Instruction Sets describes the differences between the Instruction Set

Architecture (ISA) of ARMv7 (A32), and that of the A64 instruction set. Chapter 6 The A64

instruction set looks at the Instruction Set and its use in more detail. In addition to a new

instruction set for general operation, ARMv8 also has a changed NEON and floating-point

instruction set. Chapter 7 AArch64 Floating-point and NEON describes the changes in ARMv8

to ARM Advanced SIMD (NEON) and floating-point instructions. For a more detailed guide to

NEON and its capabilities at ARMv7, refer to the ARM® NEON™ Programmer’s Guide.

Chapter 8 Porting to A64 of this book covers the problems you might encounter when porting

code from other architectures, or previous ARM architectures to ARMv8. Chapter 9 The ABI

for ARM 64-bit Architecture describes the Application Binary Interface (ABI) for the ARM

architecture specification. The ABI is a specification for all the programming behavior of an

ARM target, which governs the form your 64-bit code takes. Chapter 10 AArch64 Exception

Handling describes the exception handling behavior of ARMv8 in AArch64 state.

Following this, the focus moves to the internal architecture of the processor. Chapter 11 Caches

describes the design of caches and how the use of caches can improve performance.

An important motivating factor behind ARMv8 and moving to a 64-bit architecture is

potentially enabling access to larger address space than is possible using just 32 bits. Chapter 12

The Memory Management Unit describes how the MMU converts virtual memory addresses to

physical addresses.

Chapter 13 Memory Ordering describes the weakly-ordered model of memory in the ARMv8

architecture. Generally, this means that the order of memory accesses is not required to be the

same as the program order for load and store operations. Only some programmers must be aware

of memory ordering issues. If your code interacts directly with the hardware or with code

Introduction

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 1-4

ID050815 Non-Confidential

executing on other cores, directly loads or writes instructions to be executed, or modifies page

tables, then you might have to think about ordering and barriers. This also applies if you are

implementing your own synchronization functions or lock-free algorithms.

Chapter 14 Multi-core processors describes how the ARMv8-A architecture supports systems

with multiple cores. Systems that use the ARMv8 processors are almost always implemented in

such a way. Chapter 15 Power Management describes how ARM cores use their hardware that

can reduce power use. A further aspect of power management, applied to multi-core and

multi-cluster systems is covered in Chapter 16 big.LITTLE Technology. This chapter describes

how big.LITTLE technology from ARM couples together an energy efficient LITTLE core with

a high performance big core, to provide a system with high performance and power efficiency.

Chapter 17 Security describes how the ARMv8 processors can create a Secure, or trusted system

that protects assets such as passwords or credit card details from unauthorized copying or

damage. The main part of the book then concludes with Chapter 18 Debug describing the

standard debug and trace features available in the Cortex-A53 and Cortex-A57 processors.

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-1

ID050815 Non-Confidential

Chapter 2

ARMv8-A Architecture and Processors

The ARM architecture dates back to 1985, but it has not stayed static. On the contrary, it has

developed massively since the early ARM cores, adding features and capabilities at each step:

ARMv4 and earlier

These early processors used only the ARM 32-bit instruction set.

ARMv4T The ARMv4T architecture added the Thumb 16-bit instruction set to the ARM

32-bit instruction set. This was the first widely licensed architecture. It was

implemented by the ARM7TDMI® and ARM9TDMI® processors.

ARMv5TE The ARMv5TE architecture added improvements for DSP-type operations,

saturated arithmetic, and for ARM and Thumb interworking. The ARM926EJ-S®

implements this architecture.

ARMv6 ARMv6 made several enhancements, including support for unaligned memory

accesses, significant changes to the memory architecture and for multi-processor

support. Additionally, some support for SIMD operations operating on bytes or

halfwords within the 32-bit registers was included. The ARM1136JF-S®

implements this architecture. The ARMv6 architecture also provided some

optional extensions, notably Thumb-2 and Security Extensions (TrustZone®).

Thumb-2 extends Thumb to be a mixed length 16-bit and 32-bit instruction set.

ARMv7-A The ARMv7-A architecture makes the Thumb-2 extensions mandatory and adds

the Advanced SIMD extensions (NEON). Before ARMv7, all cores conformed to

essentially the same architecture or feature set. To help address an increasing

range of differing applications, ARM introduced a set of architecture profiles:

• ARMv7-A provides all the features necessary to support a platform

Operating System such as Linux.

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-2

ID050815 Non-Confidential

• ARMv7-R provides predictable real-time high-performance.

• ARMv7-M is targeted at deeply-embedded microcontrollers.

An M profile was also added to the ARMv6 architecture to enable features

for the older architecture. The ARMv6M profile is used by low-cost

microprocessors with low power consumption.

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-3

ID050815 Non-Confidential

2.1 ARMv8-A

The ARMv8-A architecture is the latest generation ARM architecture targeted at the

Applications Profile. The name ARMv8 is used to describe the overall architecture, which now

includes both 32-bit execution and 64-bit execution. It introduces the ability to perform

execution with 64-bit wide registers, while preserving backwards compatibility with existing

ARMv7 software.

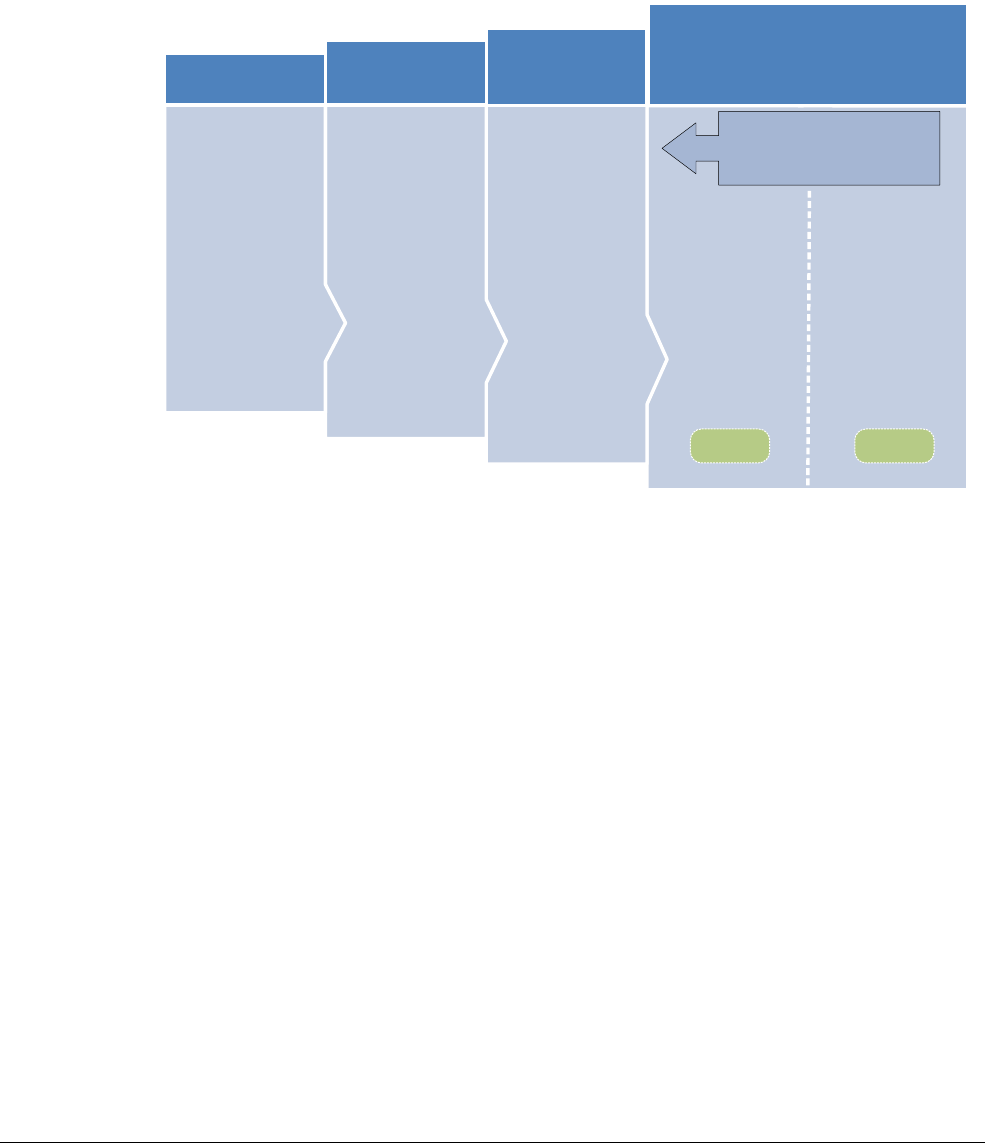

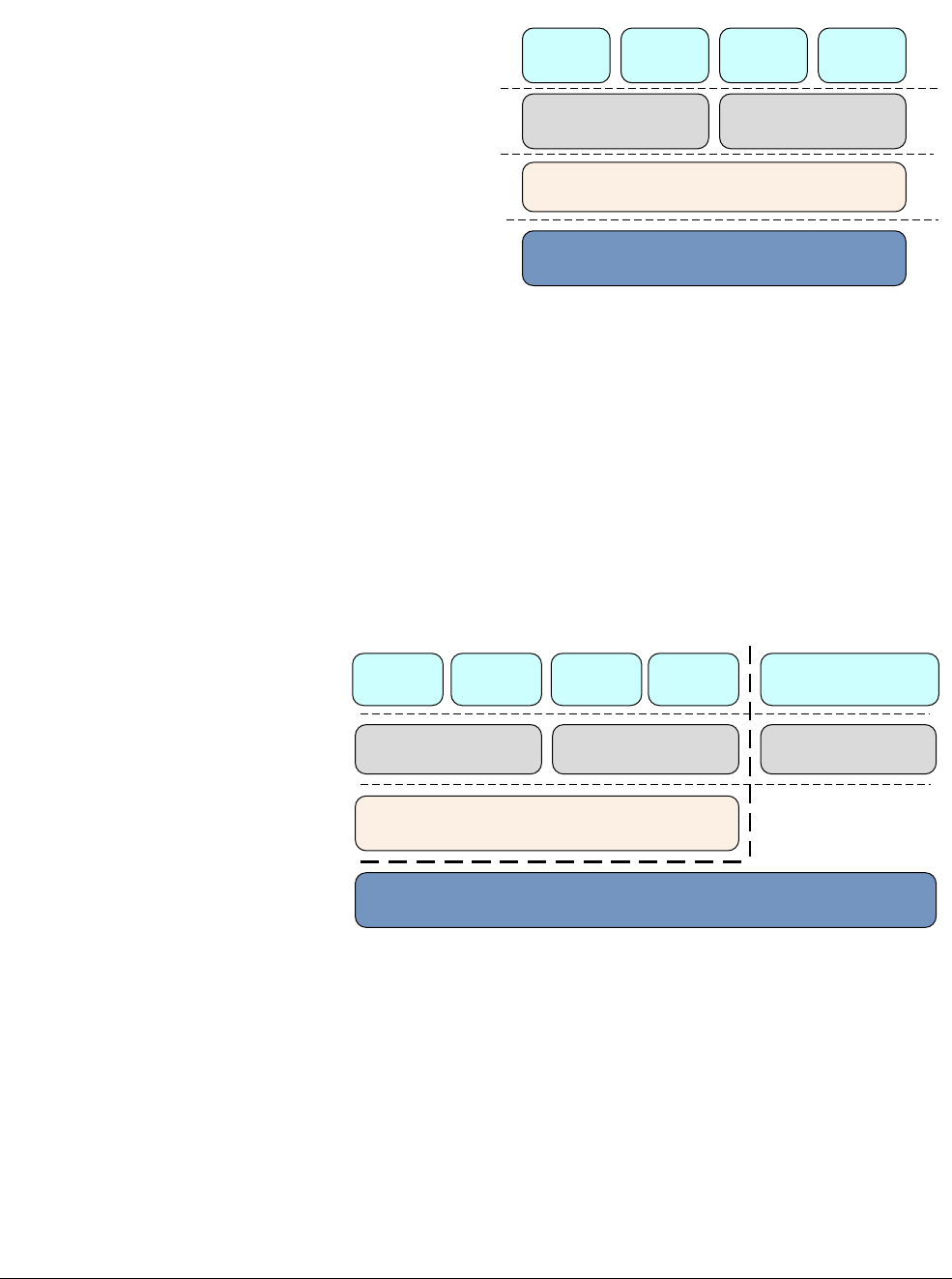

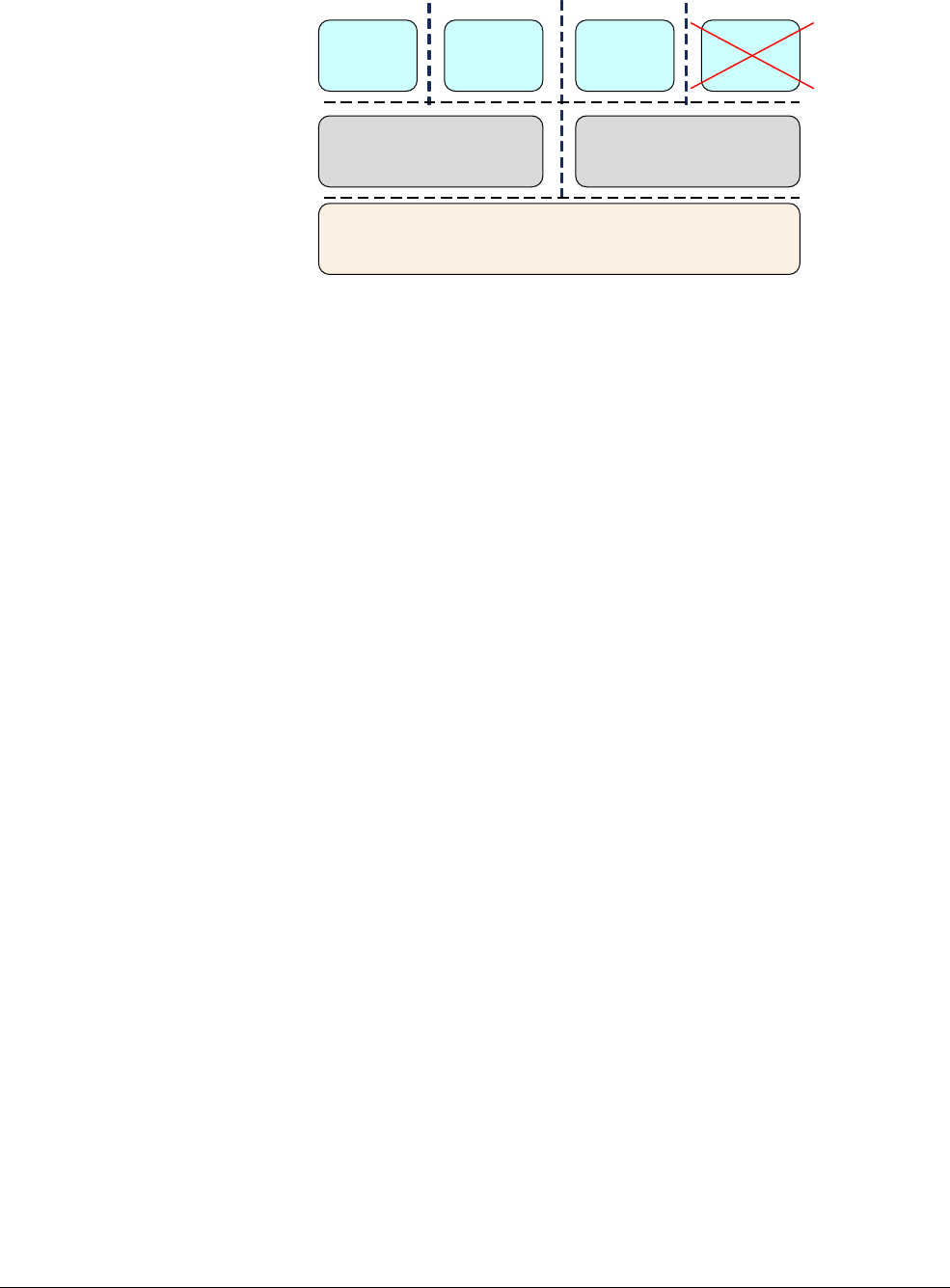

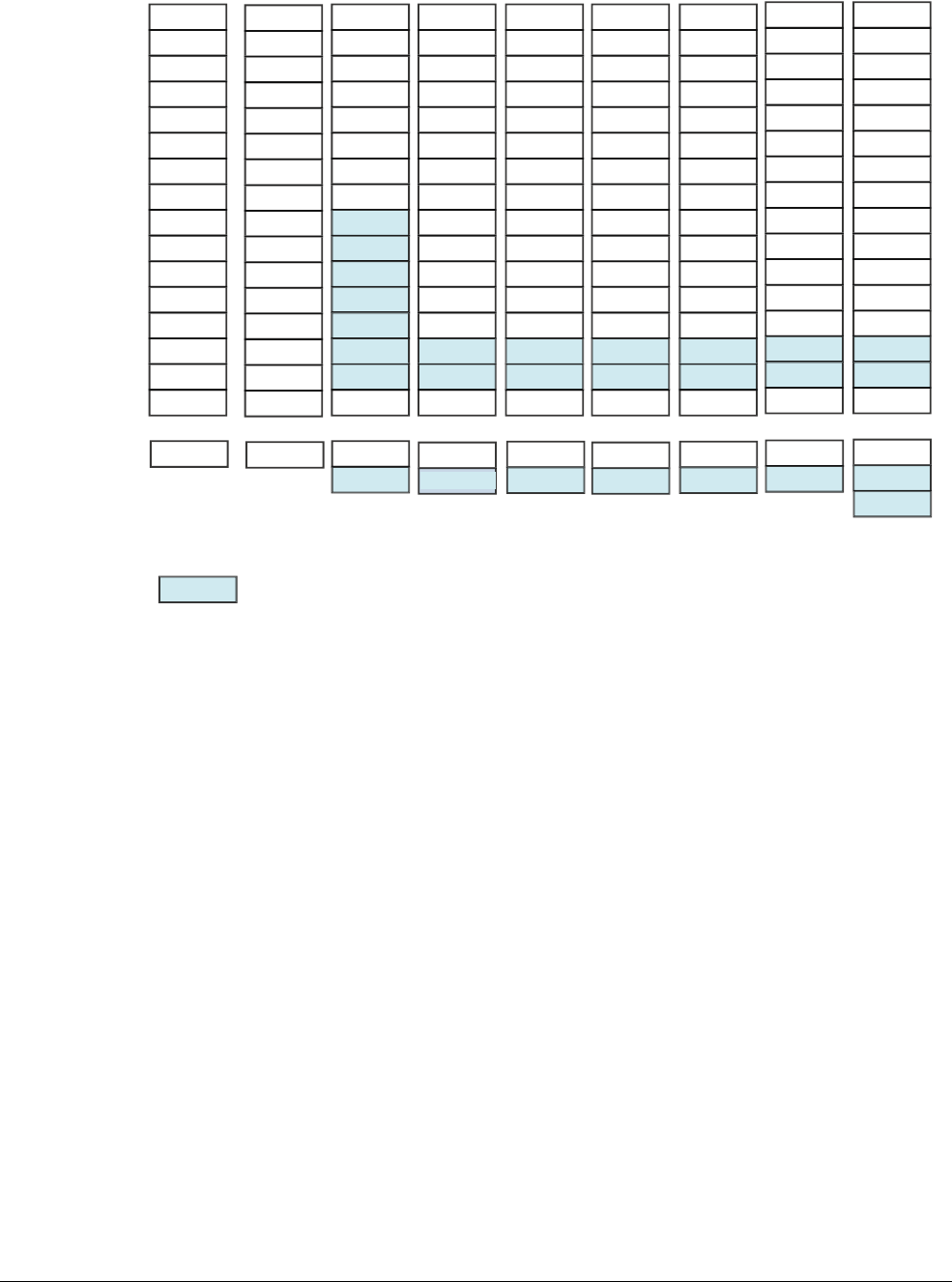

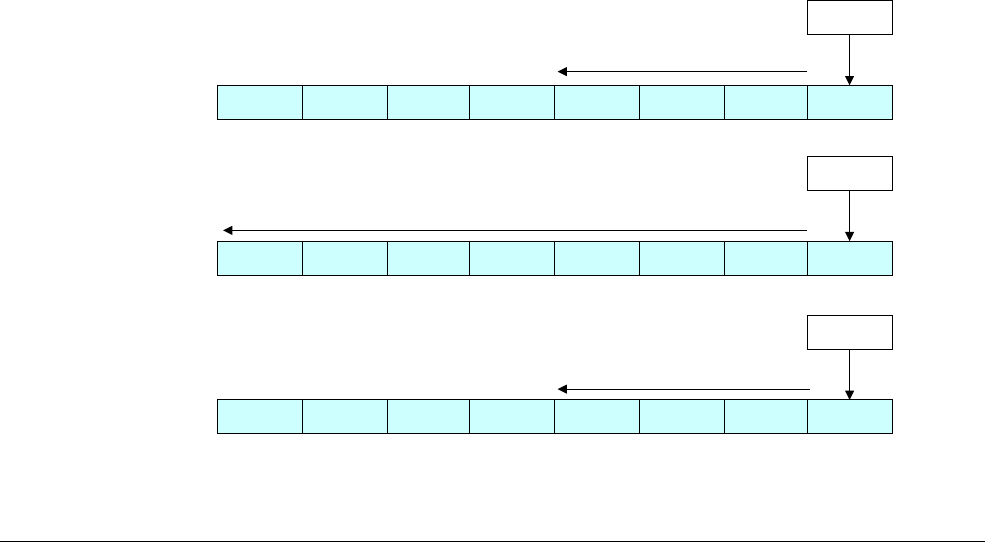

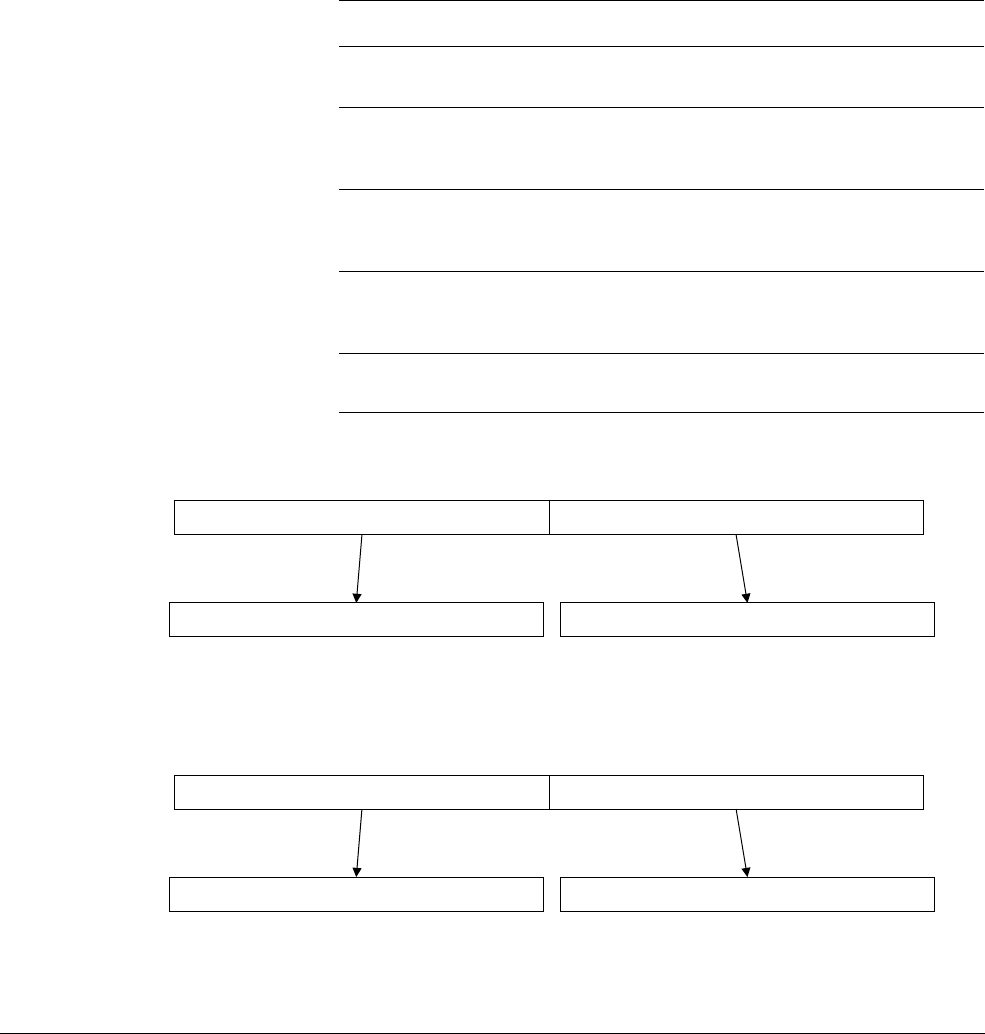

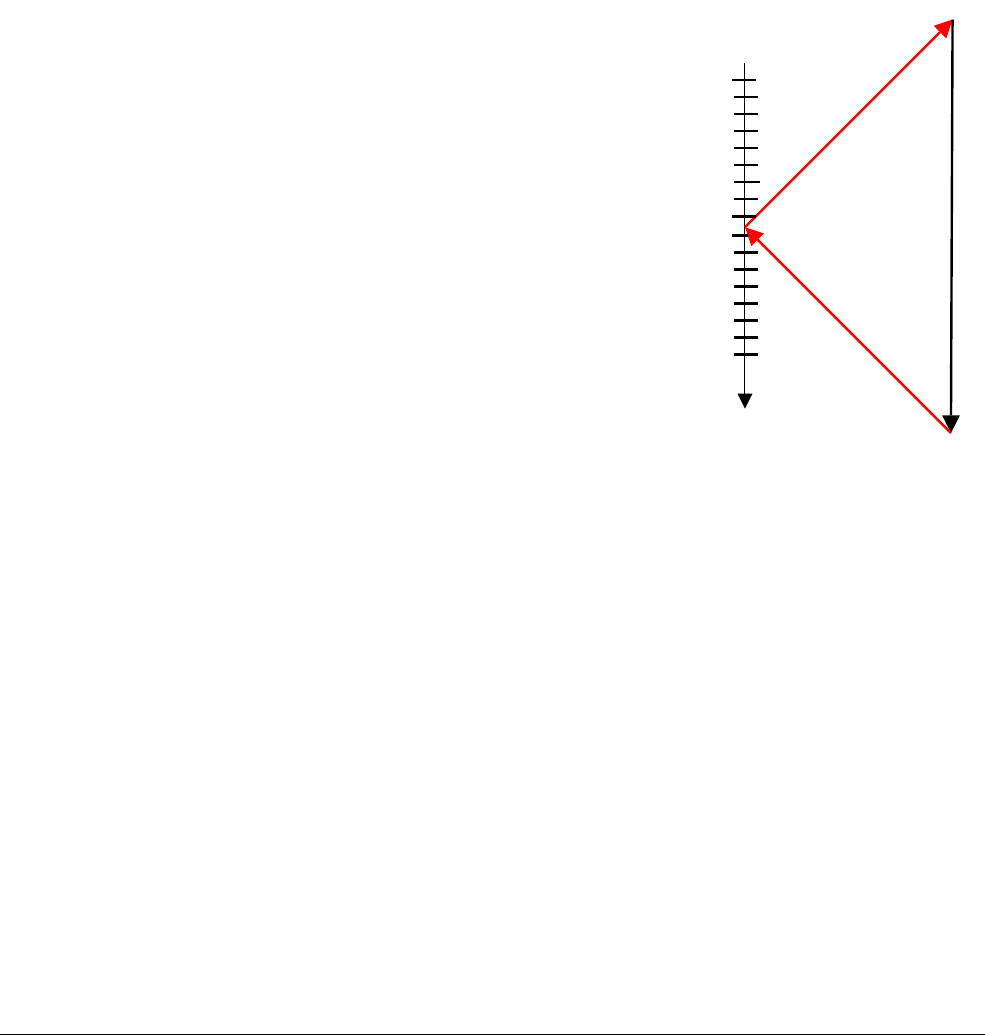

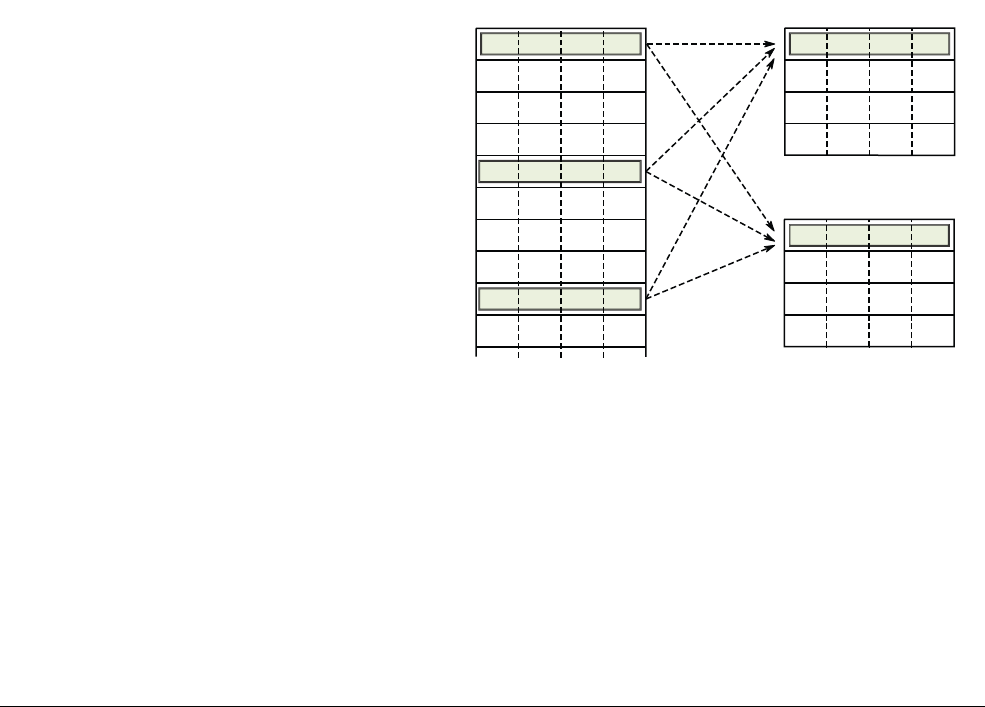

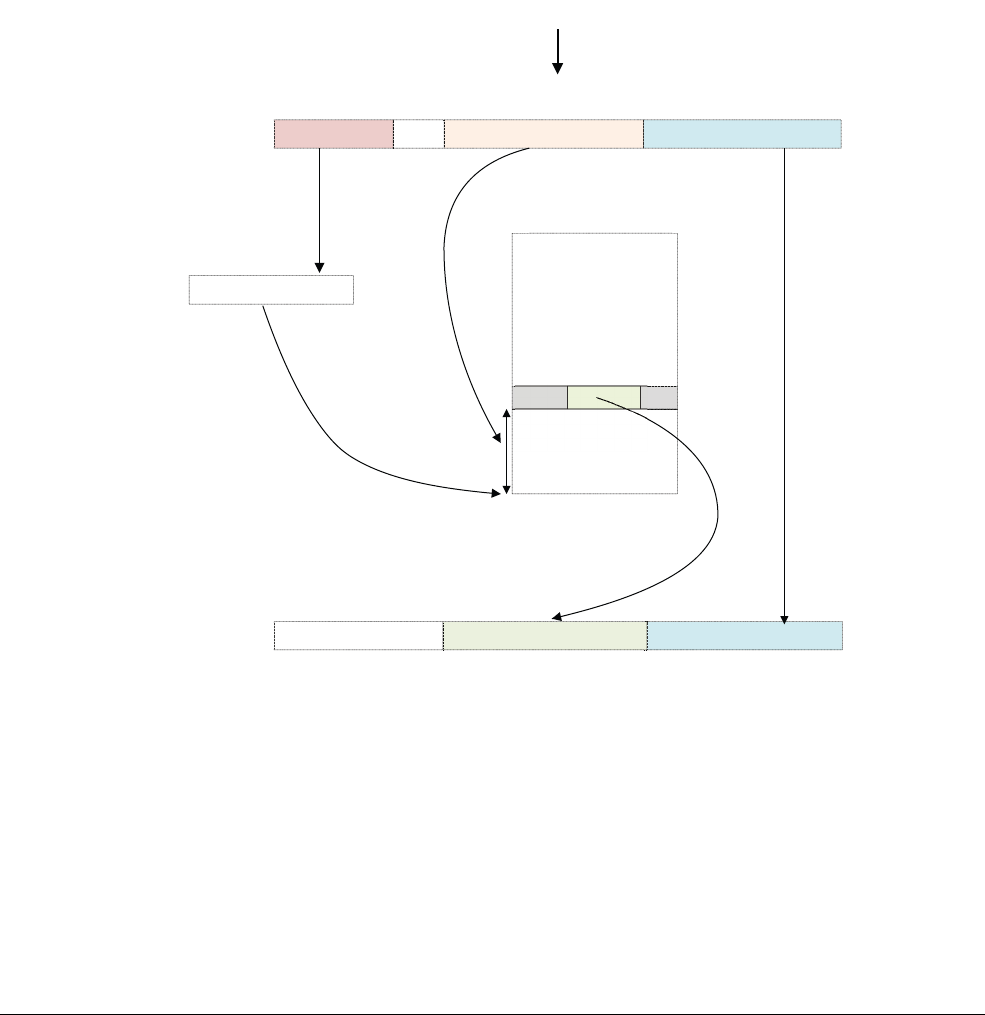

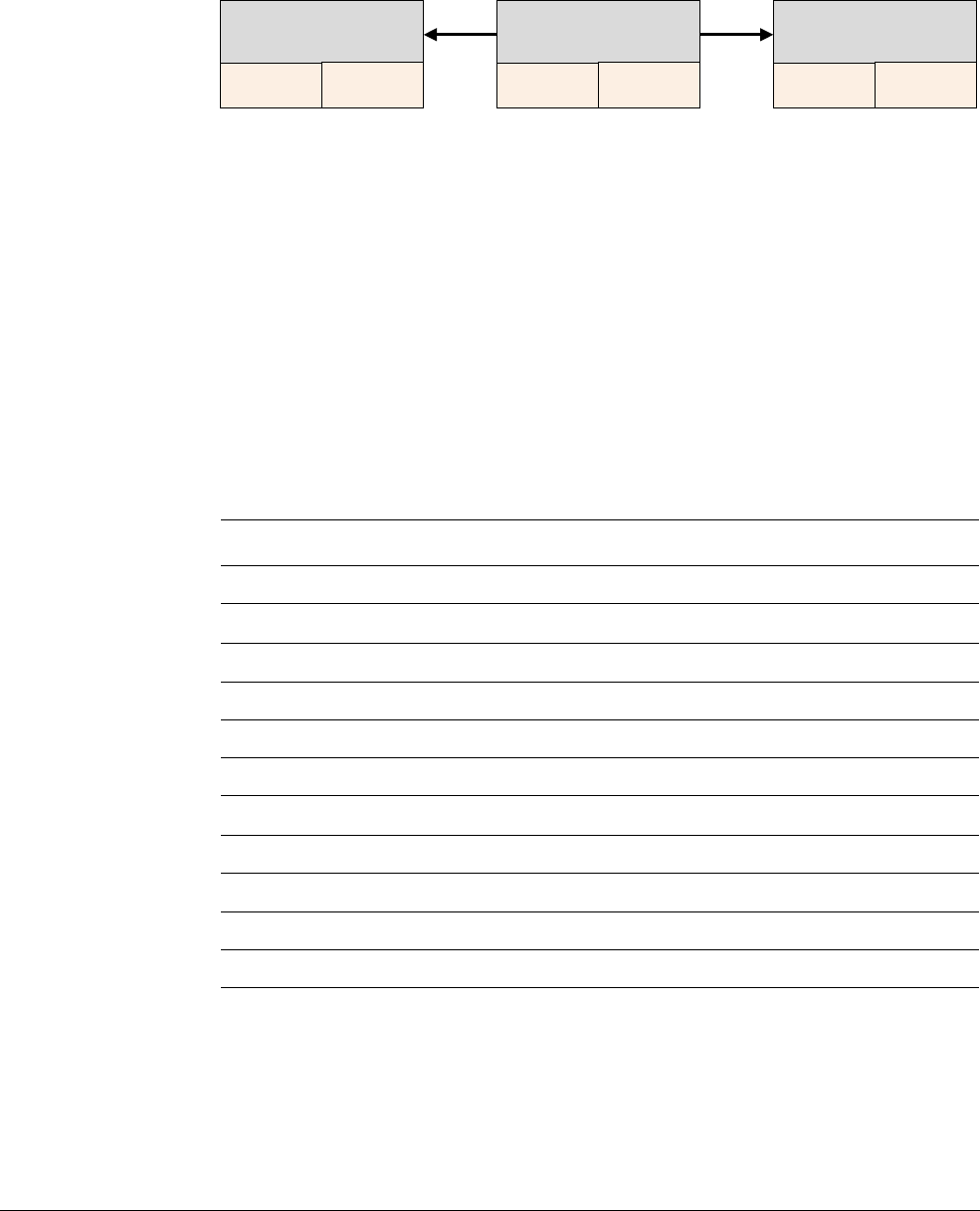

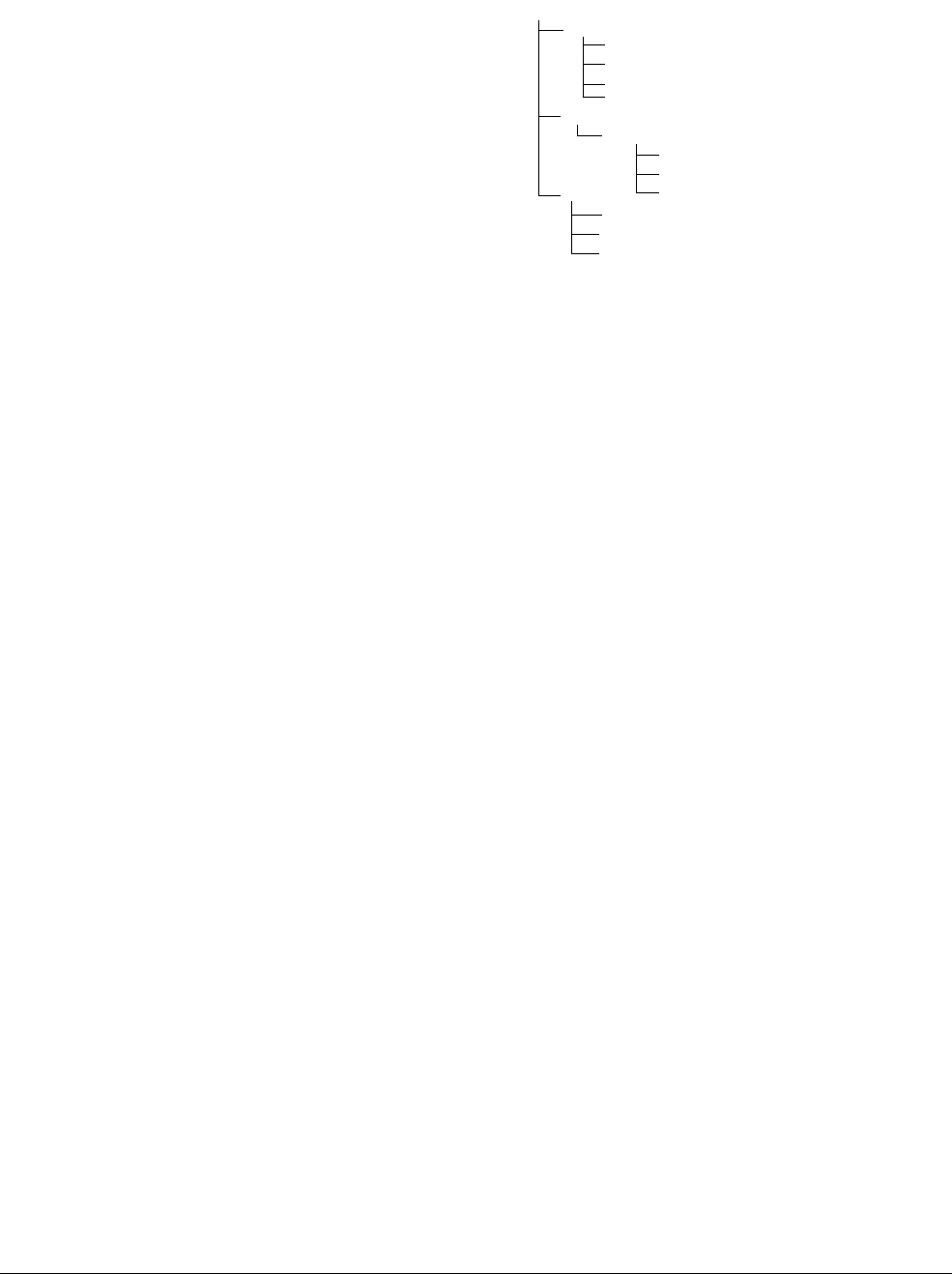

Figure 2-1 Development of the ARMv8 architecture

The ARMv8-A architecture introduces a number of changes, which enable significantly higher

performance processor implementations to be designed.

Large physical address

This enables the processor to access beyond 4GB of physical memory.

64-bit virtual addressing

This enables virtual memory beyond the 4GB limit. This is important for modern

desktop and server software using memory mapped file I/O or sparse addressing.

Automatic event signaling

This enables power-efficient, high-performance spinlocks.

Larger register files

Thirty-one 64-bit general-purpose registers increase performance and reduce

stack use.

Efficient 64-bit immediate generation

There is less need for literal pools.

Large PC-relative addressing range

A +/-4GB addressing range for efficient data addressing within shared libraries

and position-independent executables.

v8

v7

v6

v5

VFPv2 Thumb-2

TrustZone

SIMD

VFPv3/v4

NEON

A32+T32 ISAs

Scalar FP (SP

and DP)

Adv SIMD (SP

Float)

AArch32

Crypto Crypto

Key Feature ARMv7-A

Compatibility

A64 ISAs

Scalar FP (SP

and DP)

Adv SIMD (SP &

DP Float)

AArch64

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-4

ID050815 Non-Confidential

Additional 16KB and 64KB translation granules

This reduces Translation Lookaside Buffer (TLB) miss rates and depth of page

walks.

New exception model

This reduces OS and hypervisor software complexity.

Efficient cache management

User space cache operations improve dynamic code generation efficiency. Fast

Data cache clear using a Data Cache Zero instruction.

Hardware-accelerated cryptography

Provides 3× to 10× better software encryption performance. This is useful for

small granule decryption and encryption too small to offload to a hardware

accelerator efficiently, for example https.

Load-Acquire, Store-Release instructions

Designed for C++11, C11, Java memory models. They improve performance of

thread-safe code by eliminating explicit memory barrier instructions.

NEON double-precision floating-point advanced SIMD

This enables SIMD vectorization to be applied to a much wider set of algorithms,

for example, scientific computing, High Performance Computing (HPC) and

supercomputers.

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-5

ID050815 Non-Confidential

2.2 ARMv8-A Processor properties

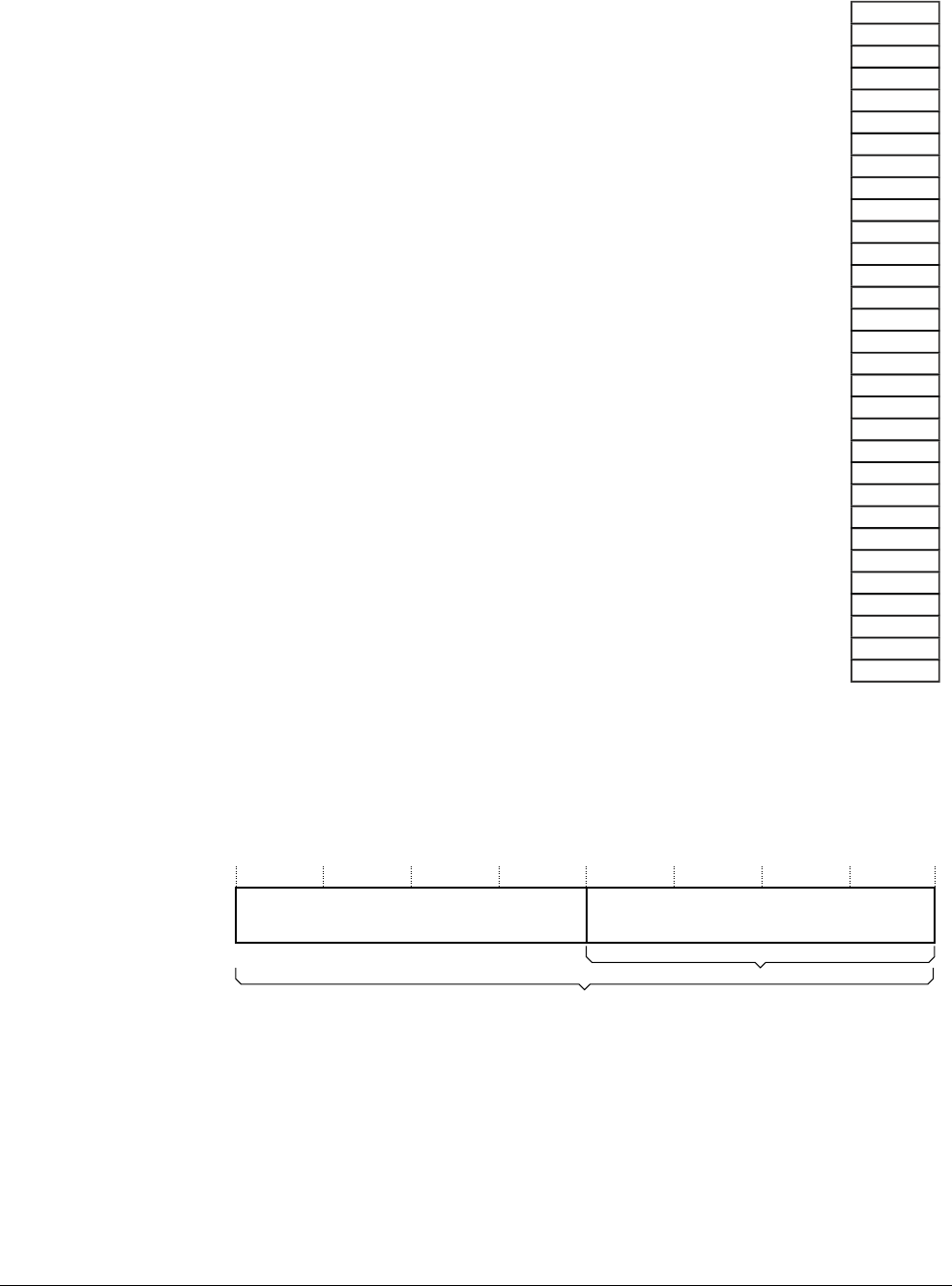

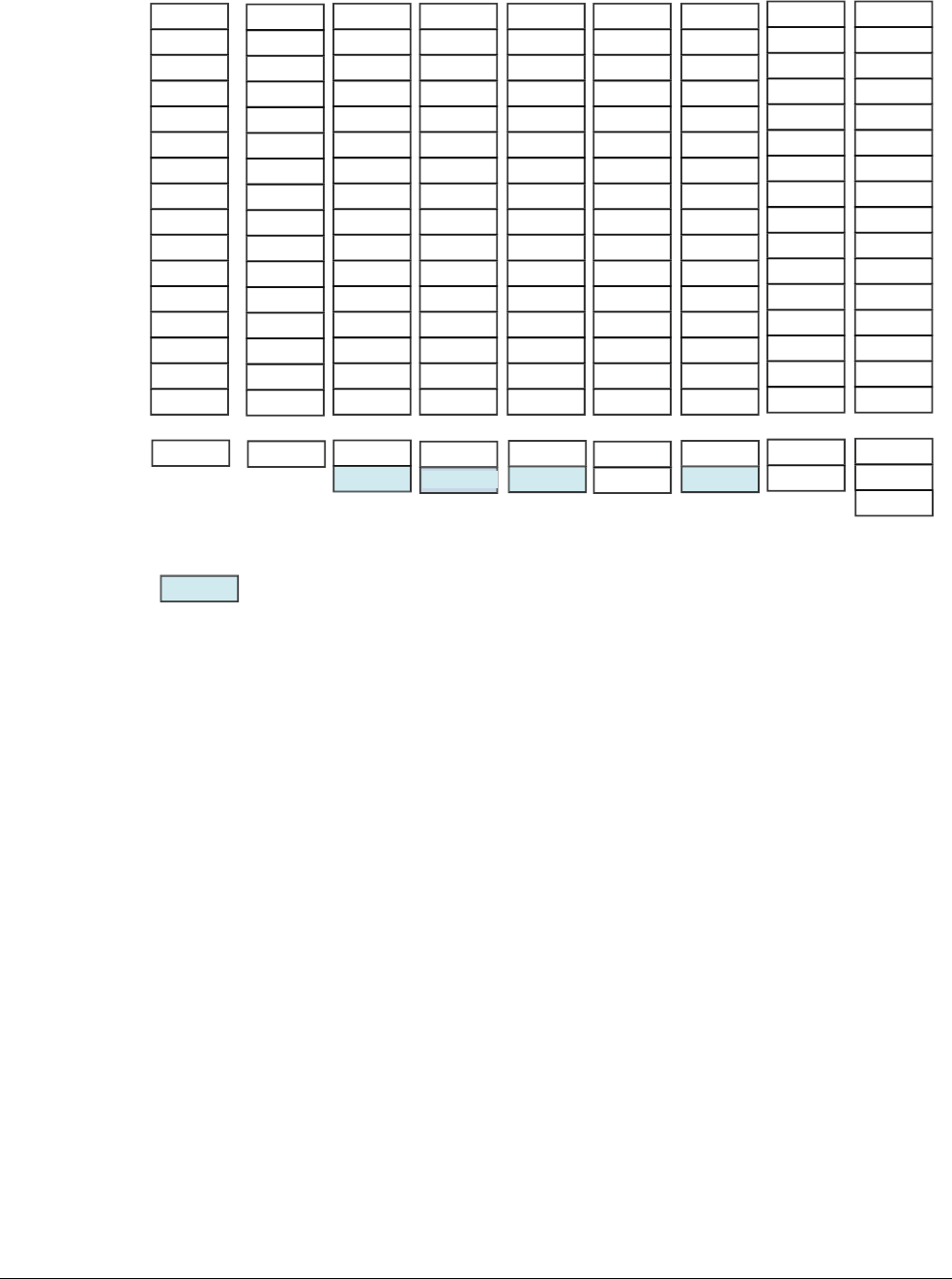

Table 2-1 compares the properties of the processor implementations from ARM that support the

ARMv8-A architecture.

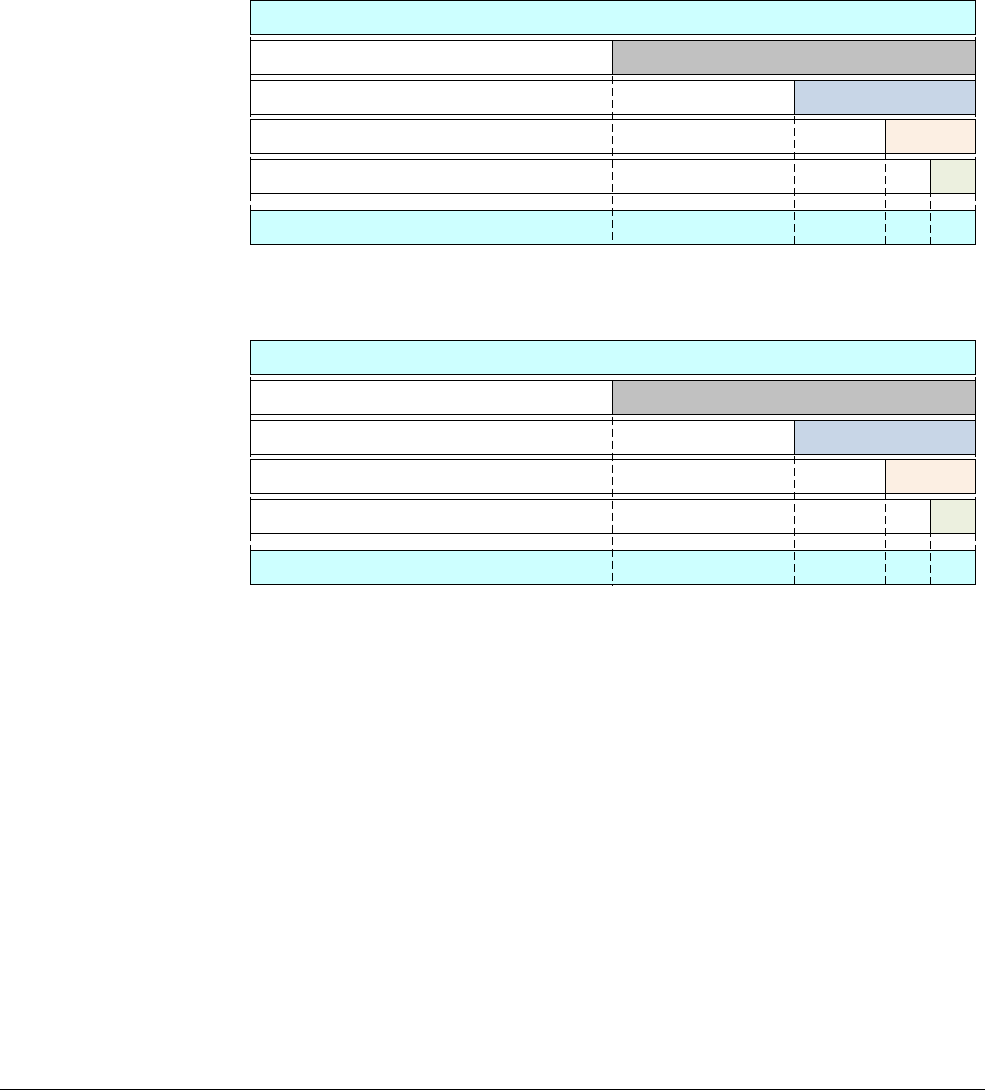

Table 2-1 Comparison of ARMv8-A processors

Processor

Cortex-A53 Cortex-A57

Release date July 2014 January 2015

Typical clock speed 2GHz on 28nm 1.5 to 2.5 GHz on 20nm

Execution order In-order Out of order, speculative

issue, superscalar

Cores 1 to 4 1 to 4

Integer Peak throughput 2.3MIPS/MHz 4.1 to 4.76MIPS/MHza

A.IMPLEMENTATION DEFINED

Floating-point Unit Yes Yes

Half-precision Yes Yes

Hardware Divide Yes Yes

Fused Multiply Accumulate Yes Yes

Pipeline stages 8 15+

Return stack entries 4 8

Generic Interrupt Controller External External

AMBA interface 64-bit I/F AMBA 4

(Supports AMBA 4

and AMBA 5)

128-bit I/F AMBA 4

(Supports AMBA 4 and

AMBA 5)

L1 Cache size (Instruction) 8KB to 64 KB 48KB

L1 Cache structure (Instruction) 2-way set associative 3-way set associative

L1 Cache size (Data) 8KB to 64KB 32KB

L1 Cache structure (Data) 4-way set associative 2-way set associative

L2 Cache Optional Integrated

L2 Cache size 128KB to 2MB 512KB to 2MB

L2 Cache structure 16-way set associative 16-way set associative

Main TLB entries 512 1024

uTLB entries 10 48 I-side

32 D-side

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-6

ID050815 Non-Confidential

2.2.1 ARMv8 processors

This section describes each of the processors that implement the ARMv8-A architecture. It only

gives a general description in each case. For more specific information on each processor, see

Table 2-1 on page 2-5.

The Cortex-A53 processor

The Cortex-A53 processor is a mid-range, low-power processor with between one and four

cores in a single cluster, each with an L1 cache subsystem, an optional integrated GICv3/4

interface, and an optional L2 cache controller.

The Cortex-A53 processor is an extremely power efficient processor capable of supporting

32-bit and 64-bit code. It delivers significantly higher performance than the highly successful

Cortex-A7 processor. It is capable of deployment as a standalone applications processor, or

paired with the Cortex-A57 processor in a big.LITTLE configuration for optimum performance,

scalability, and energy efficiency.

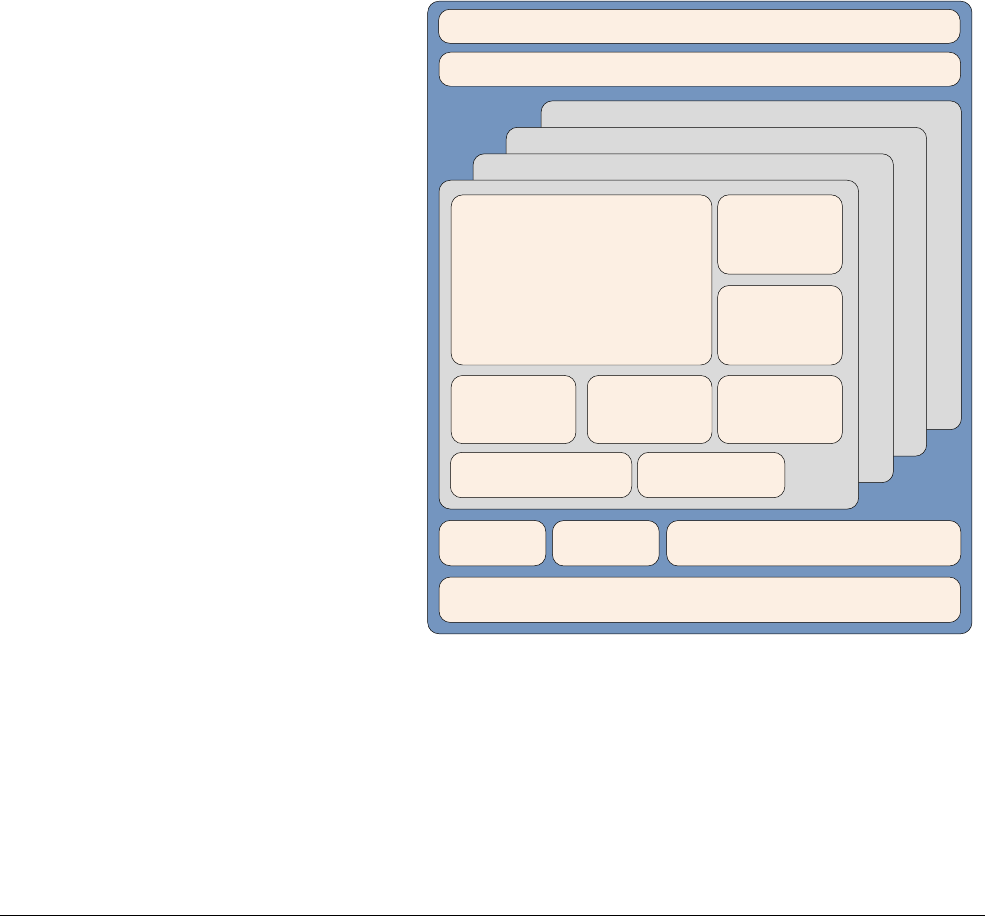

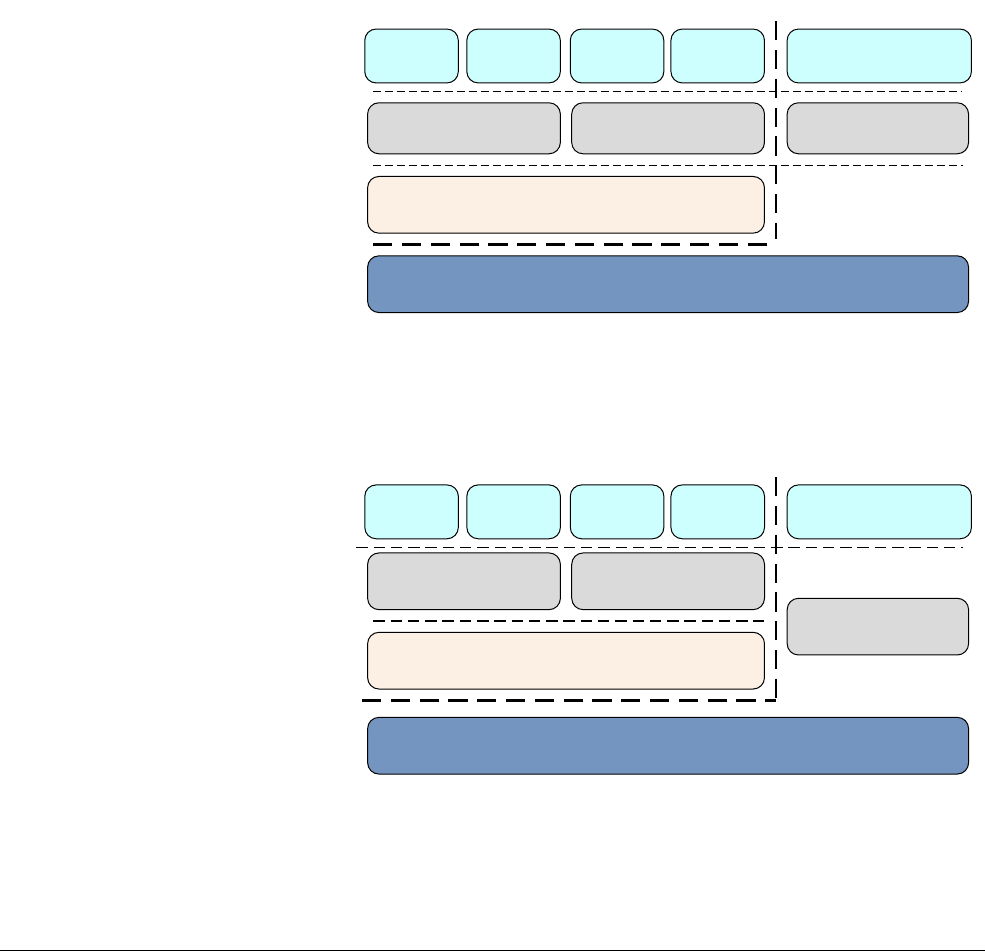

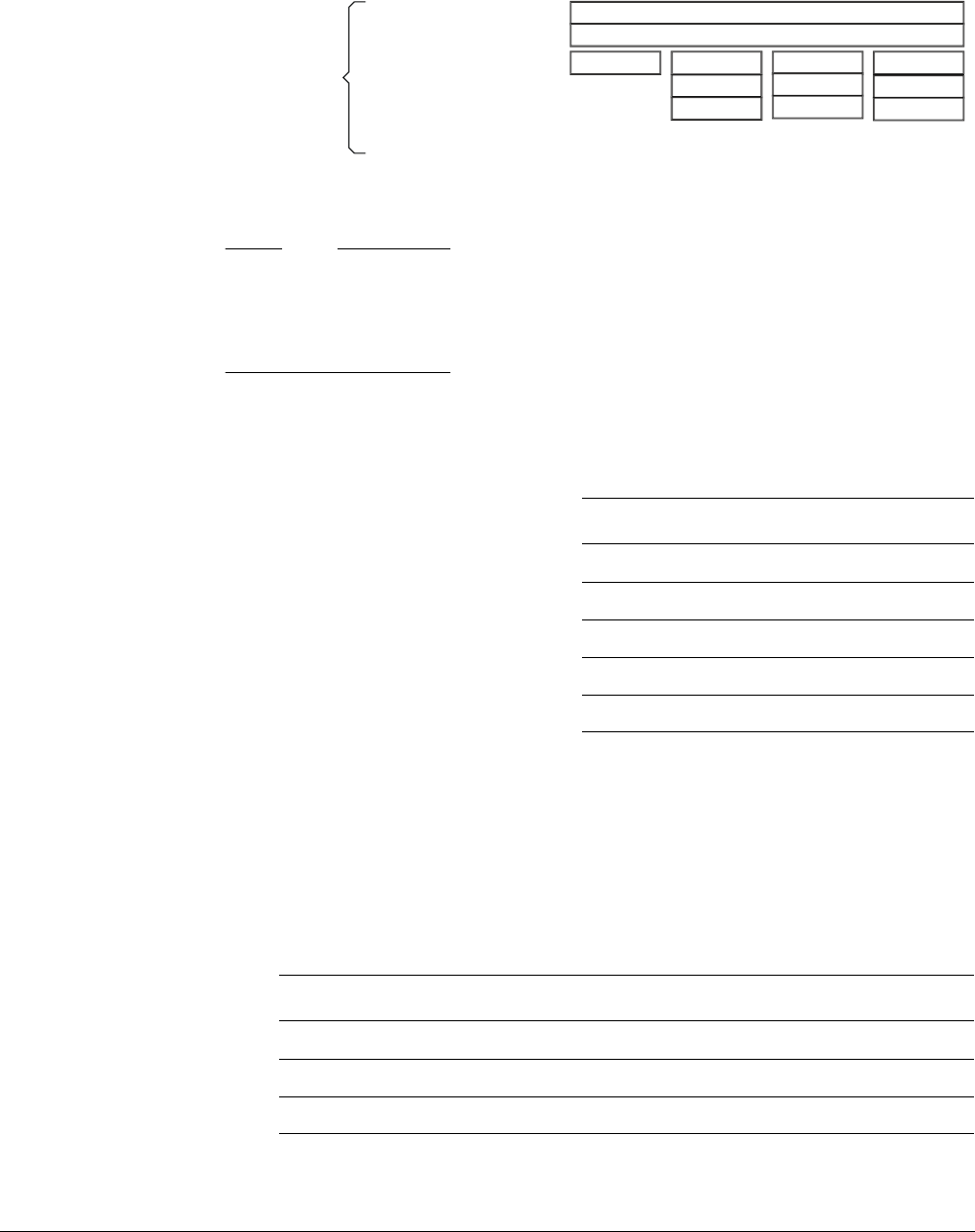

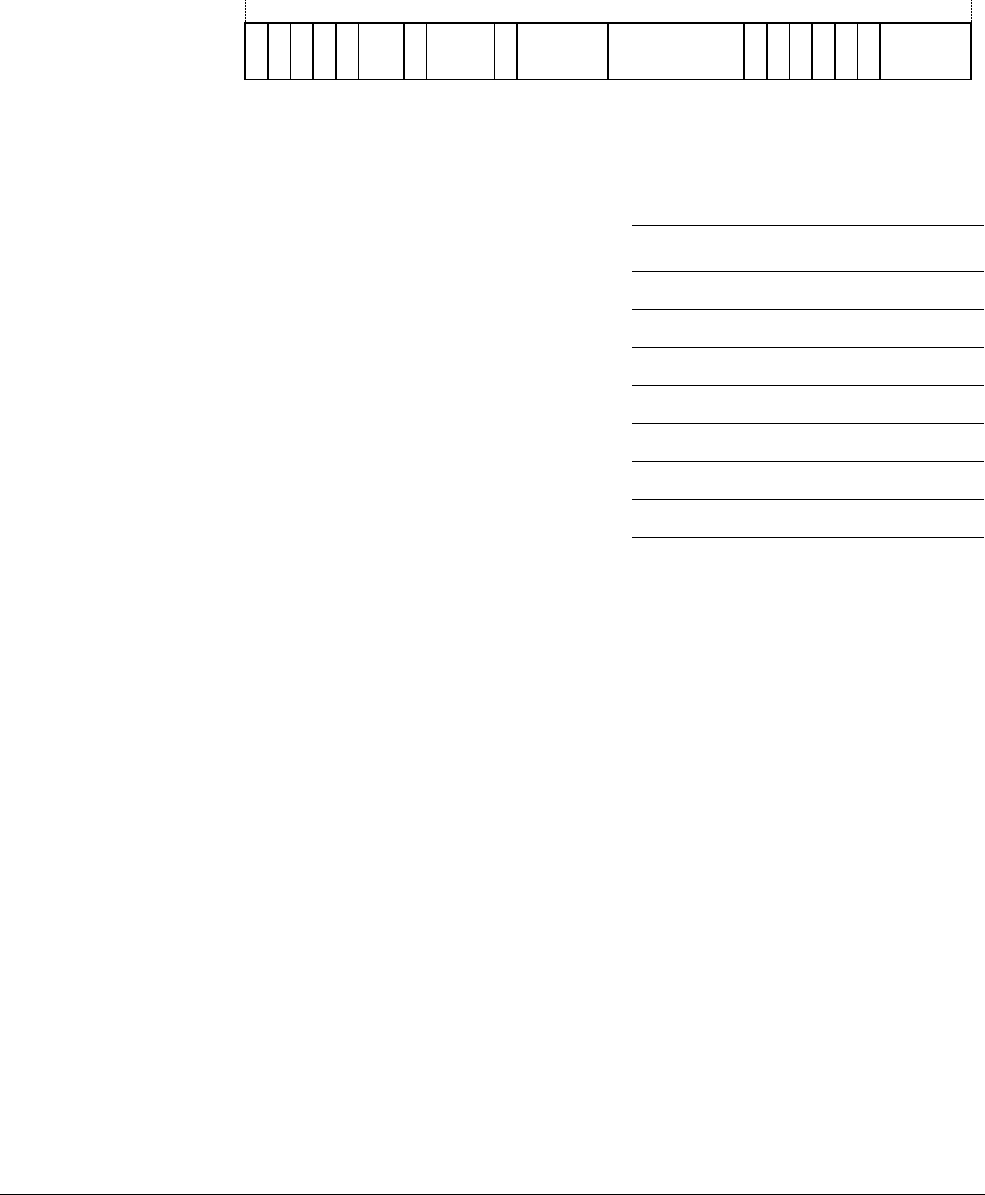

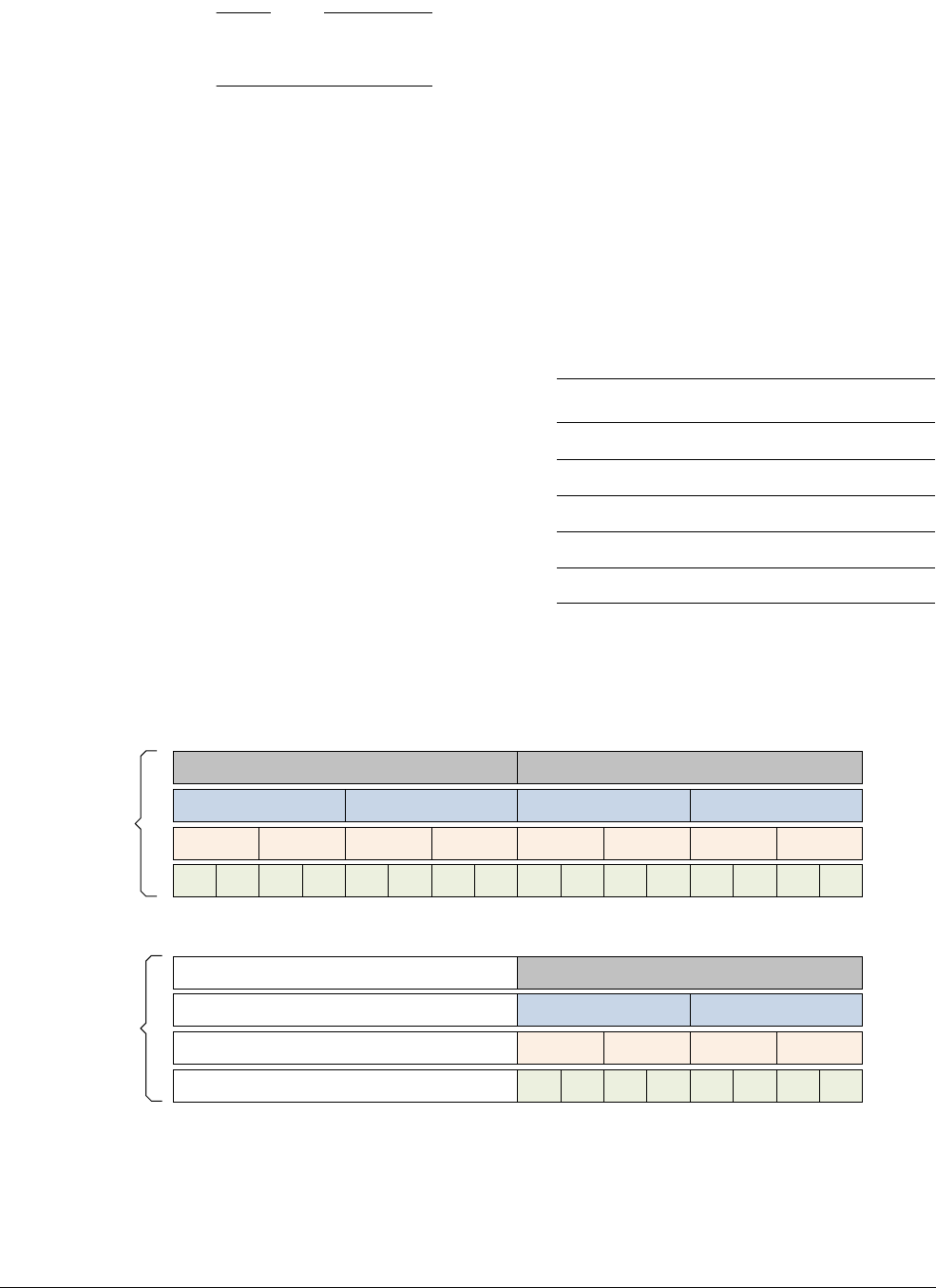

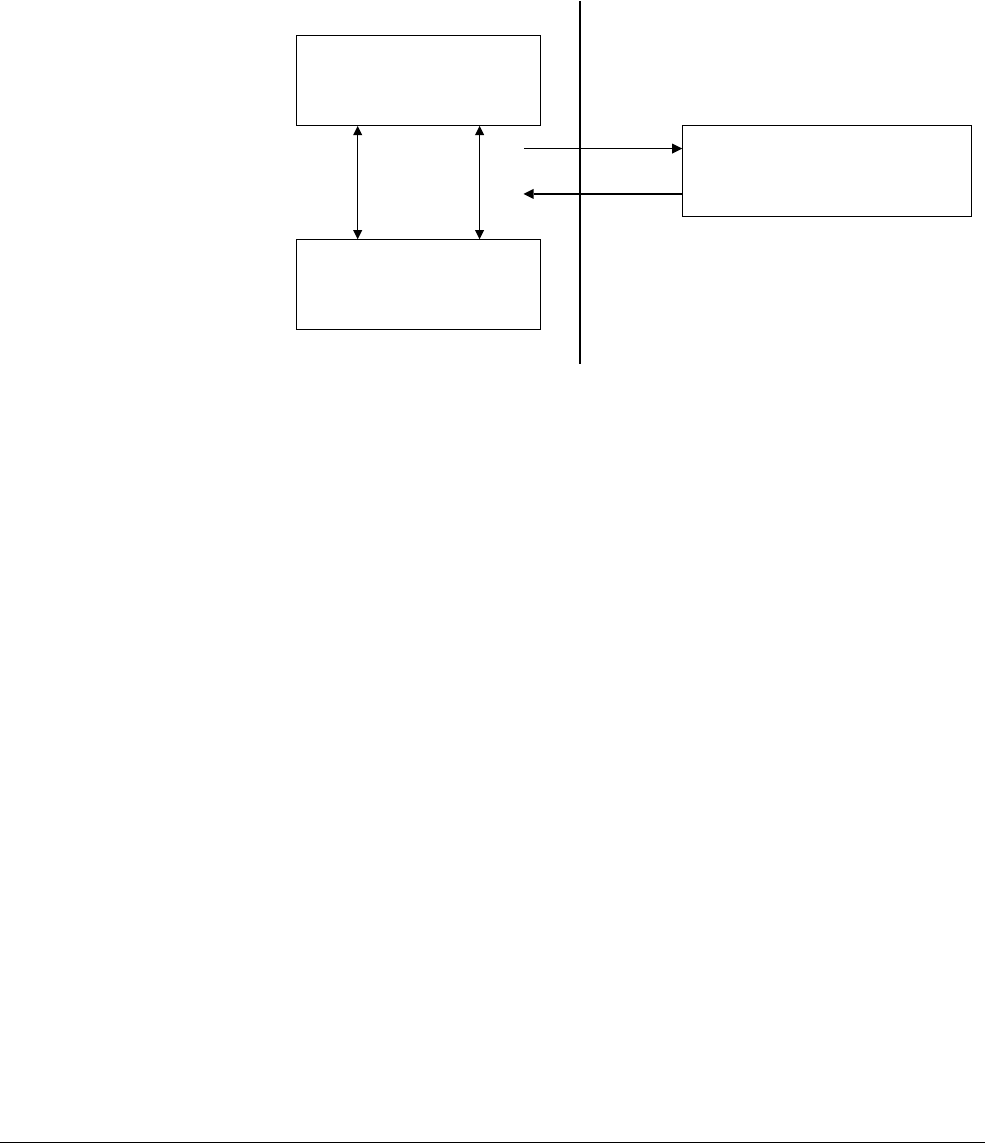

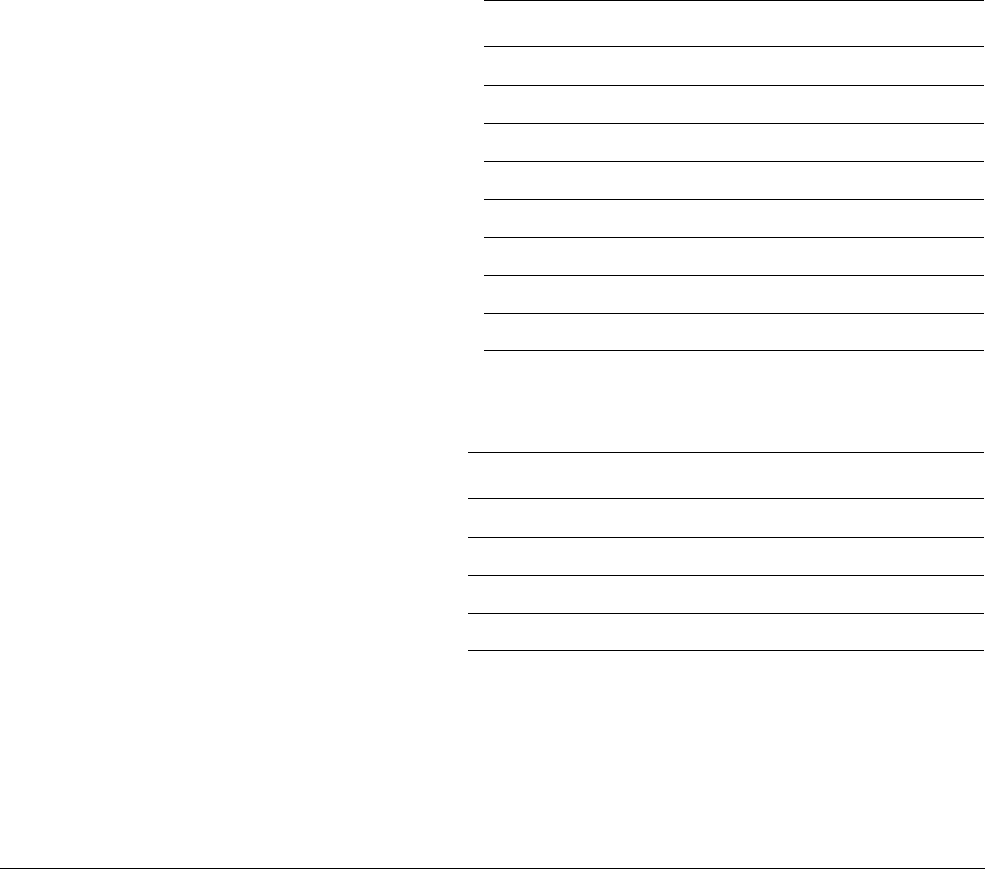

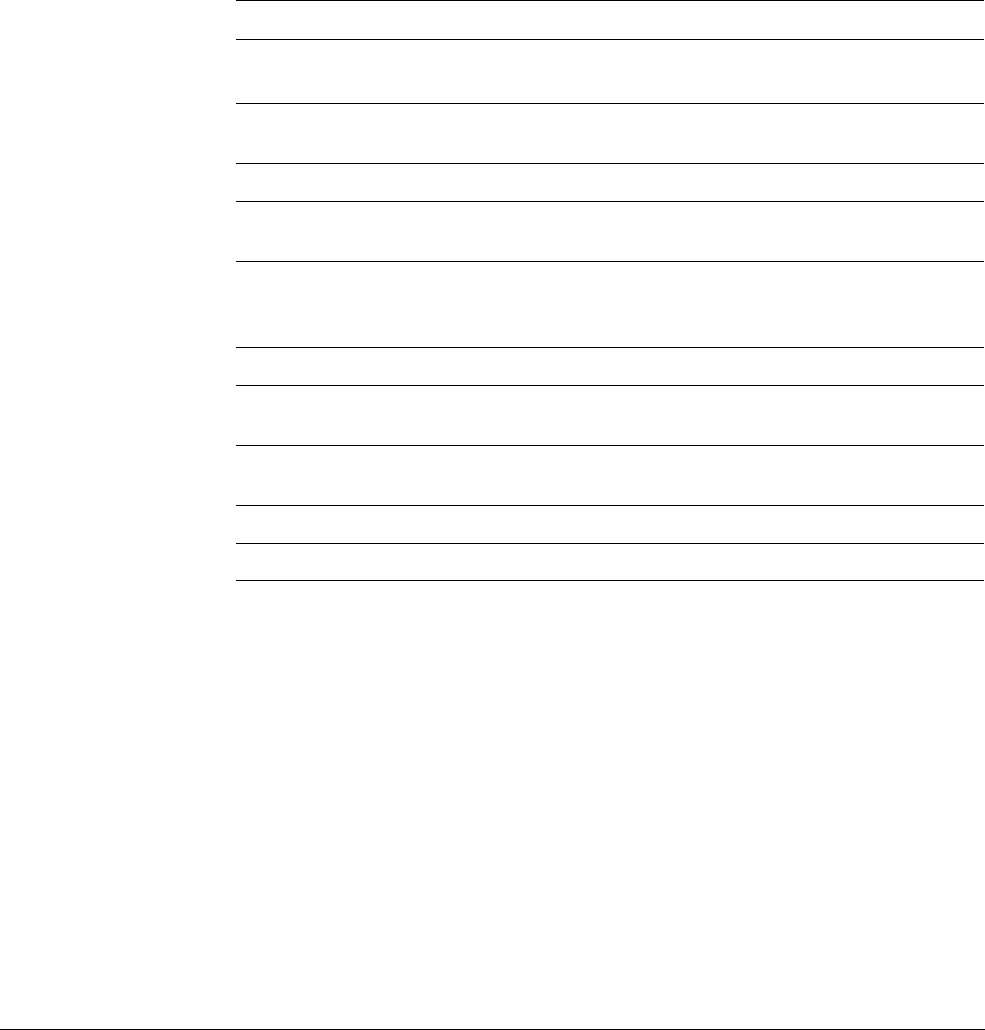

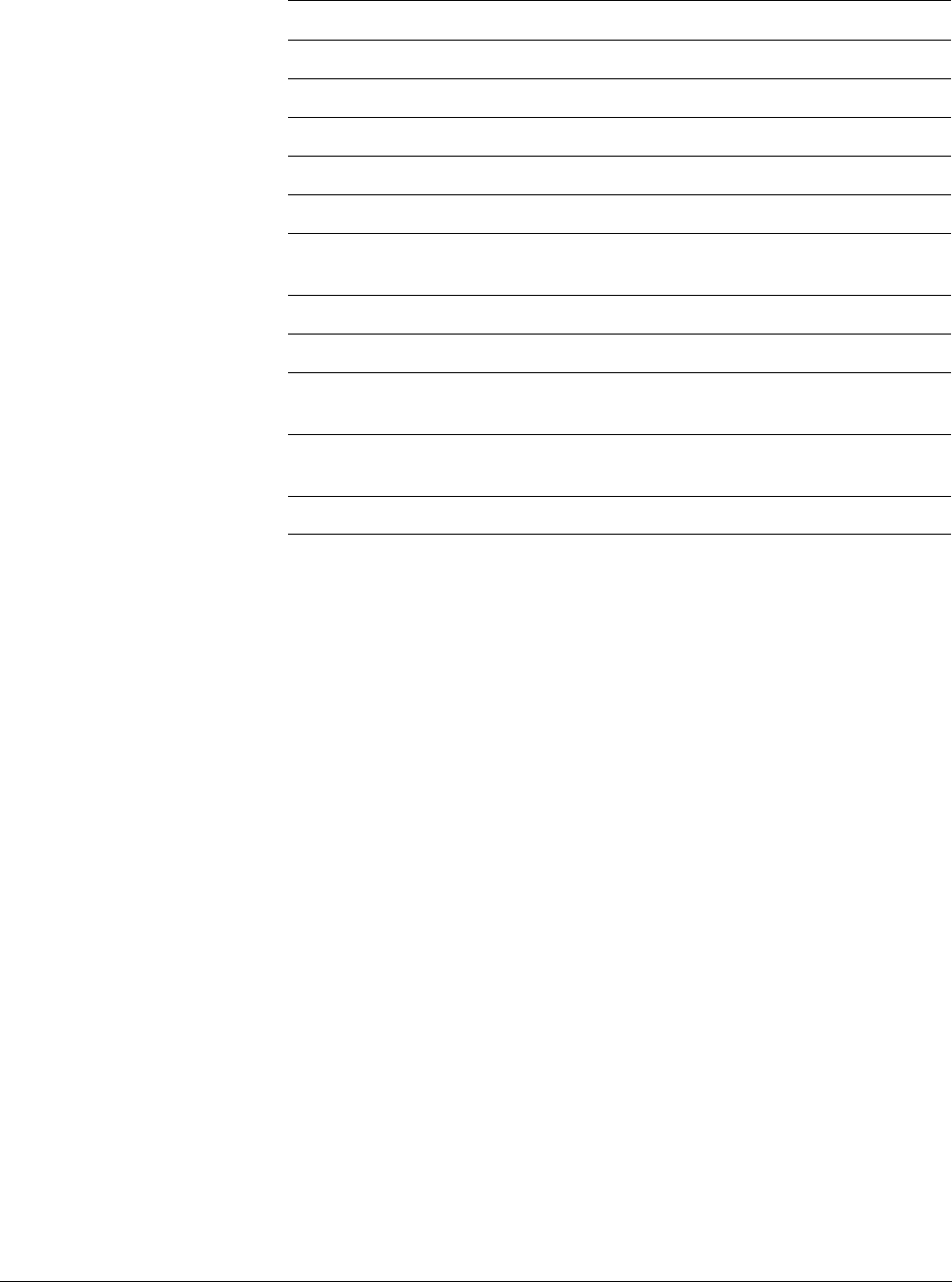

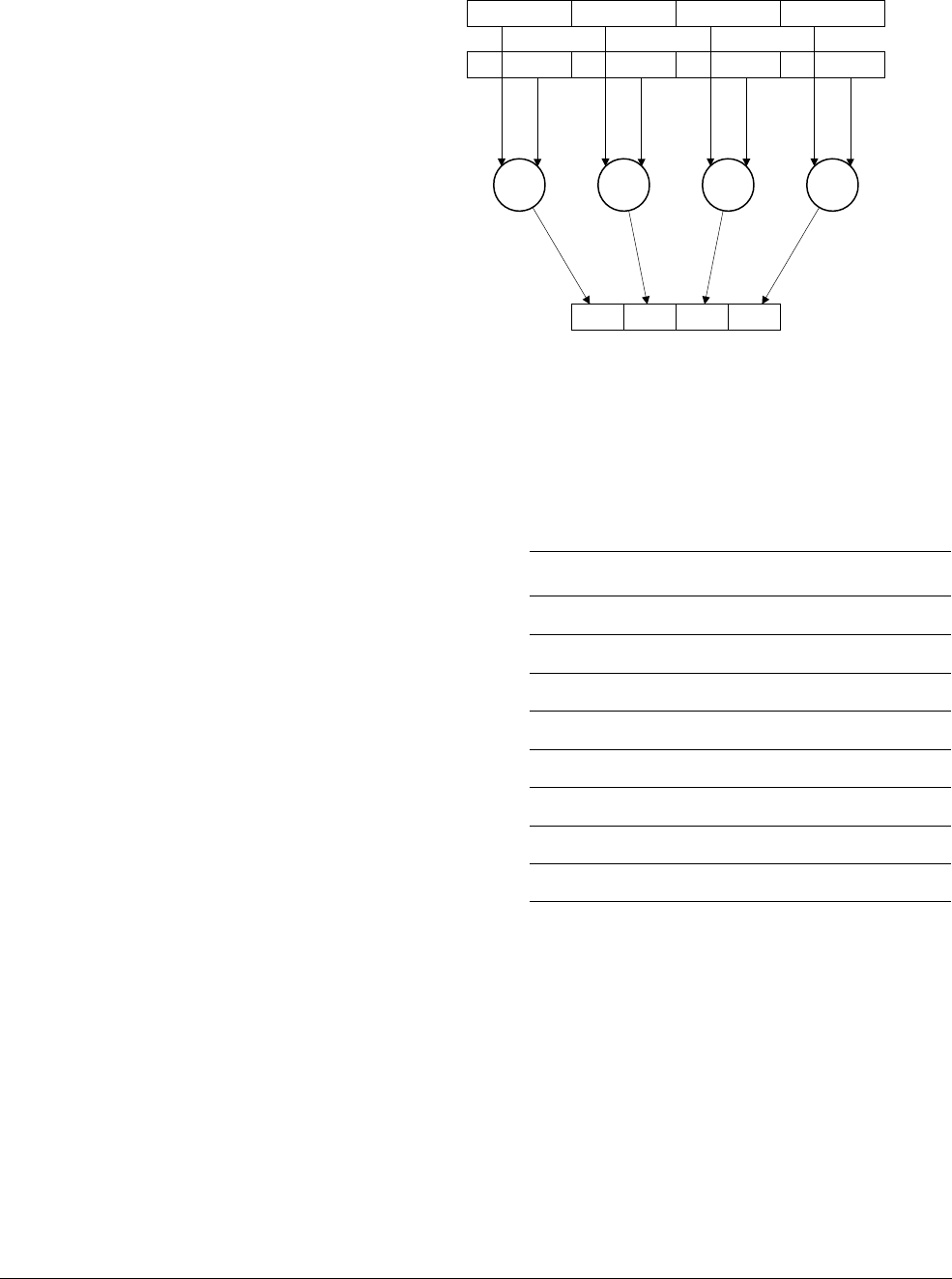

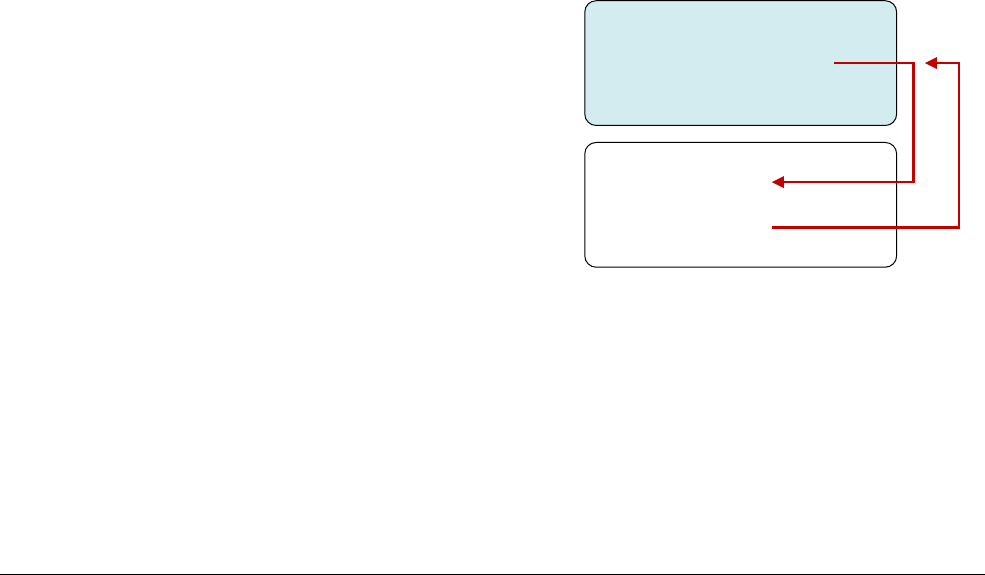

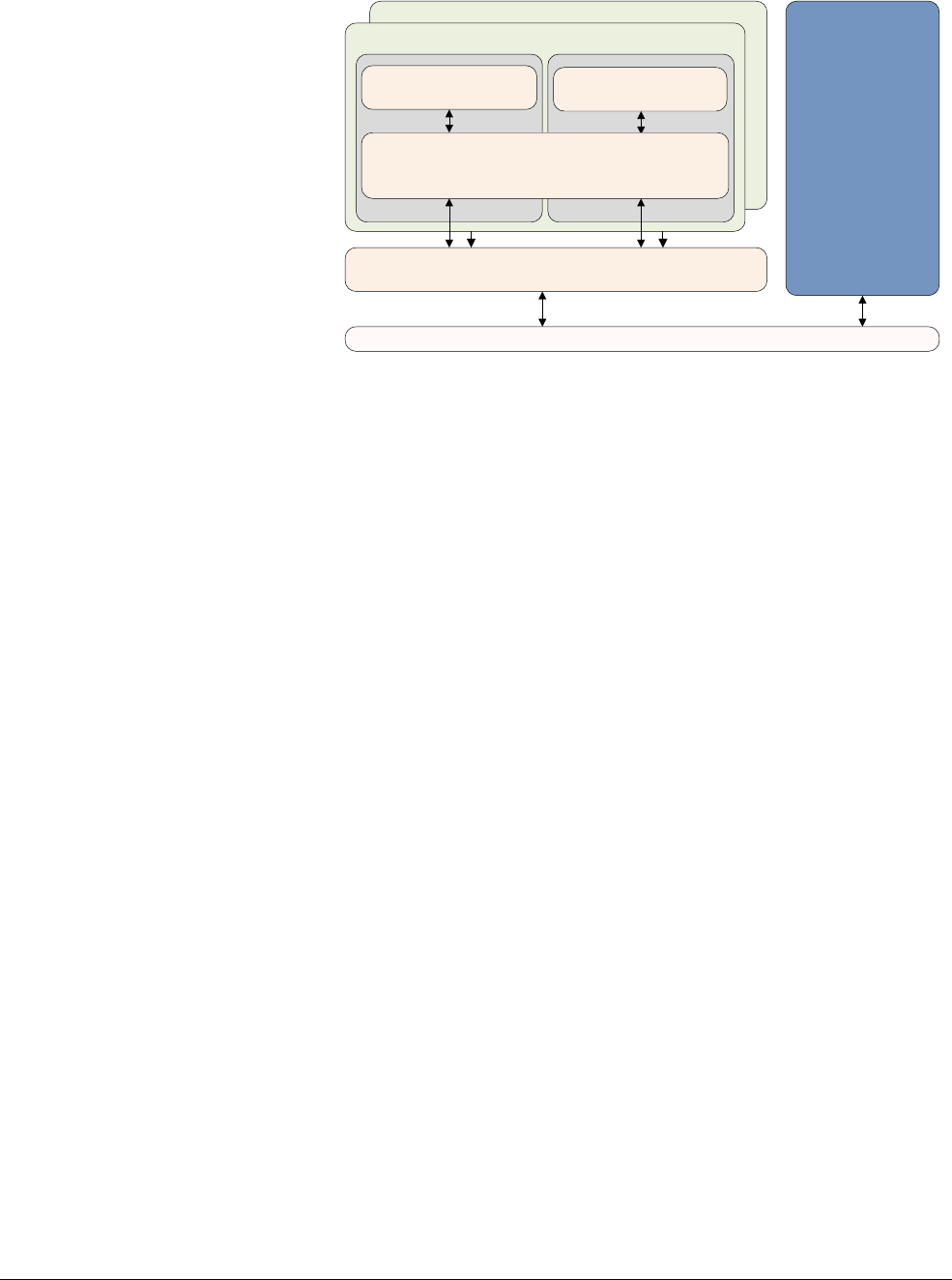

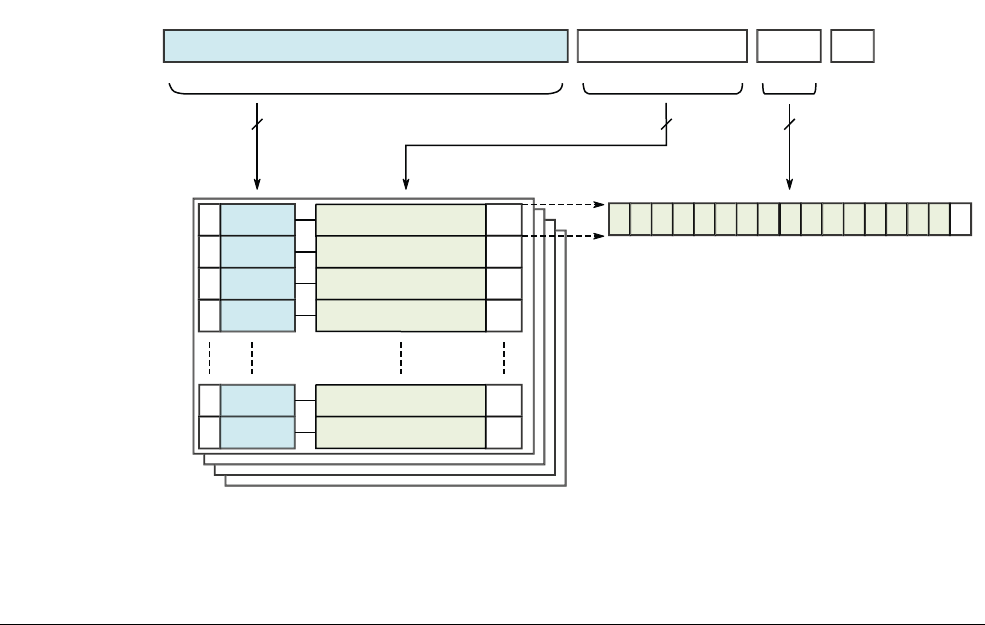

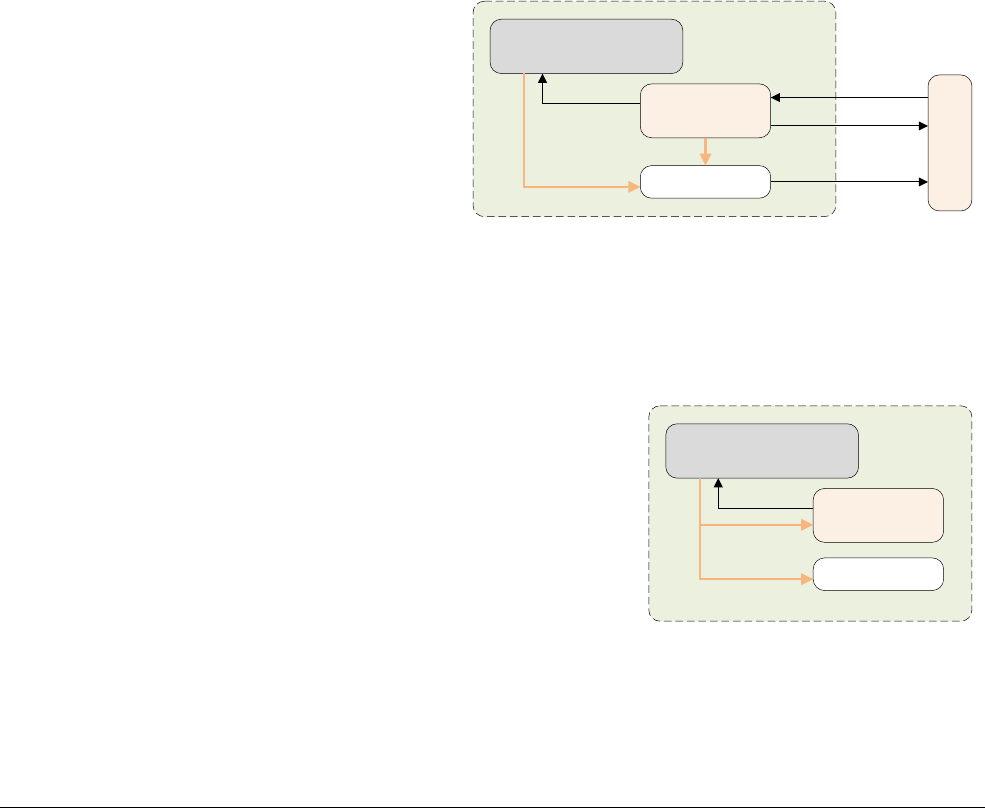

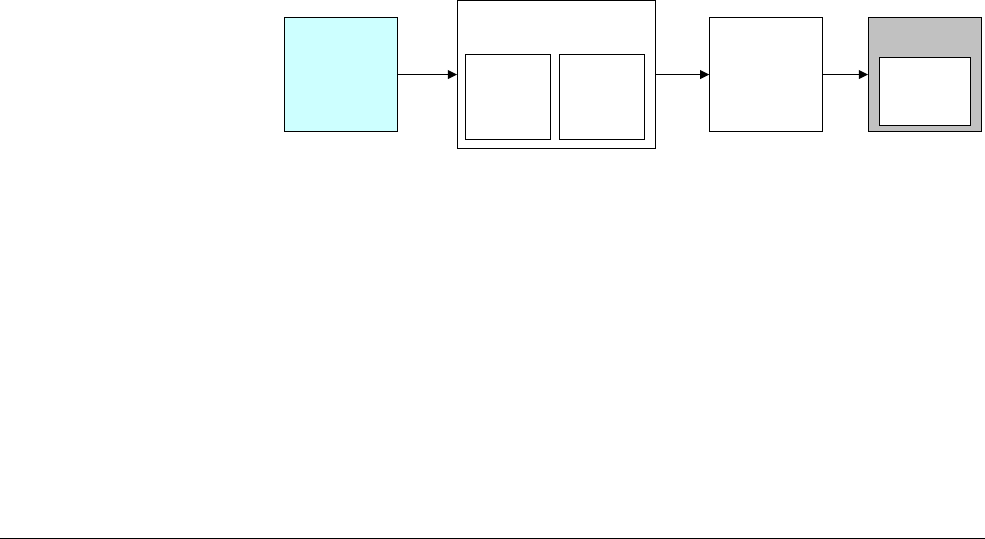

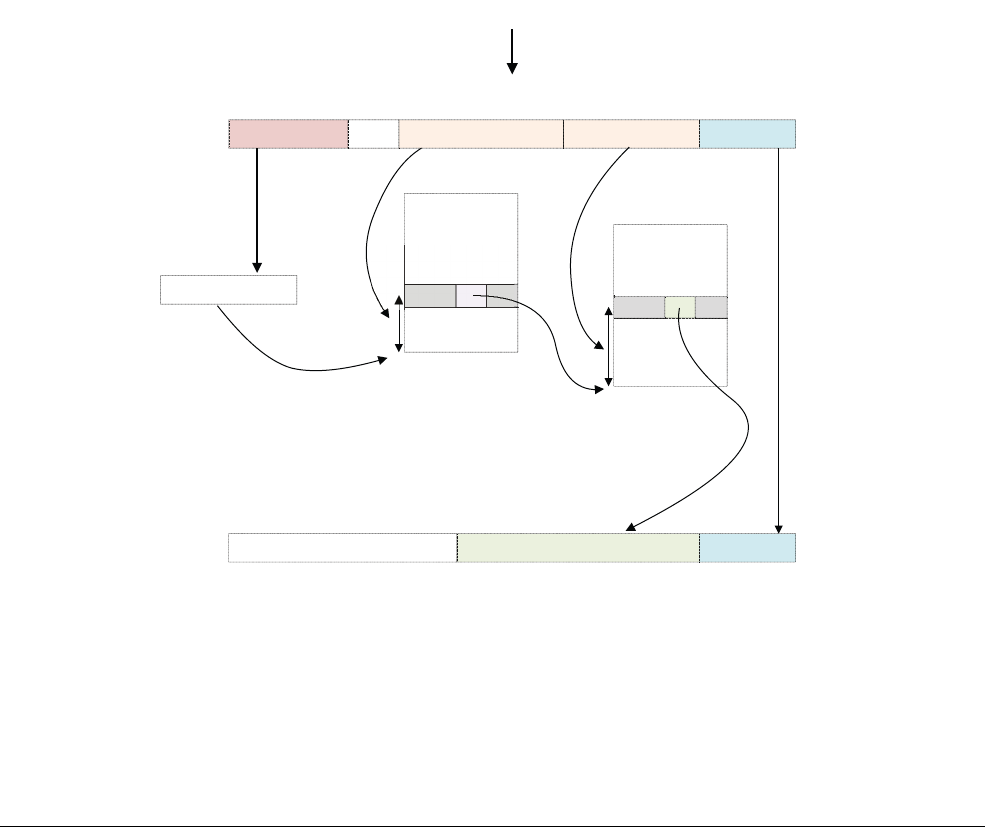

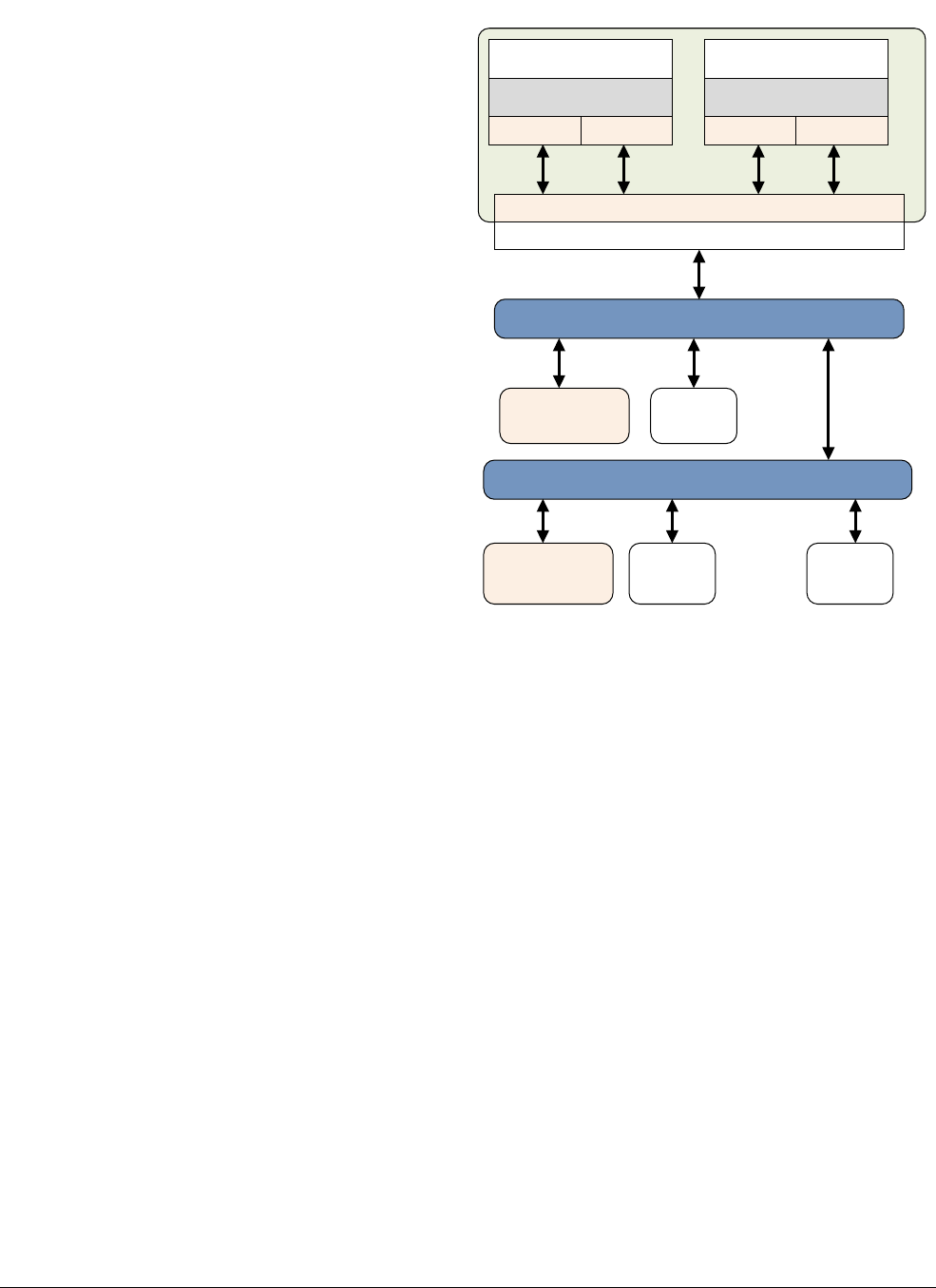

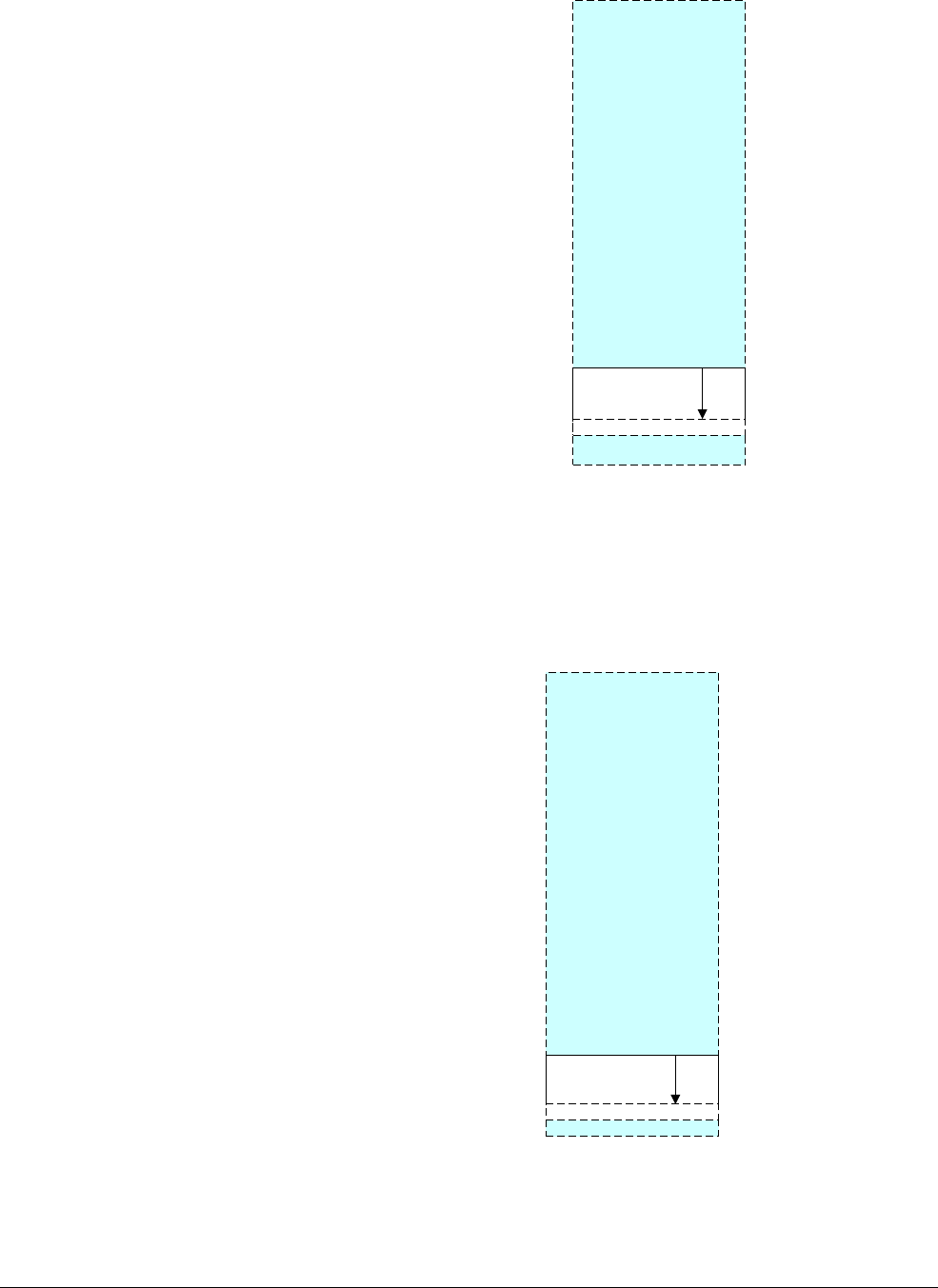

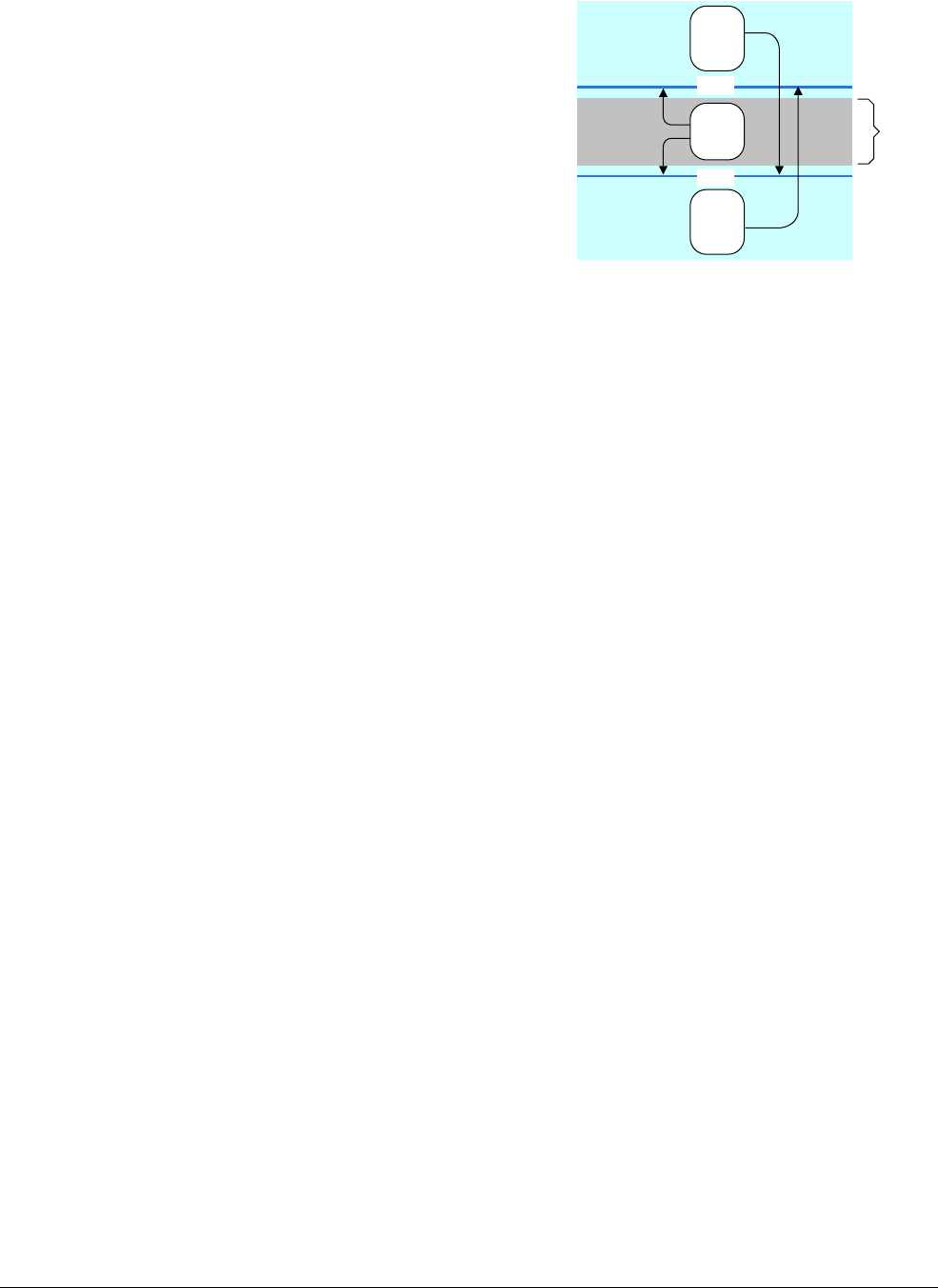

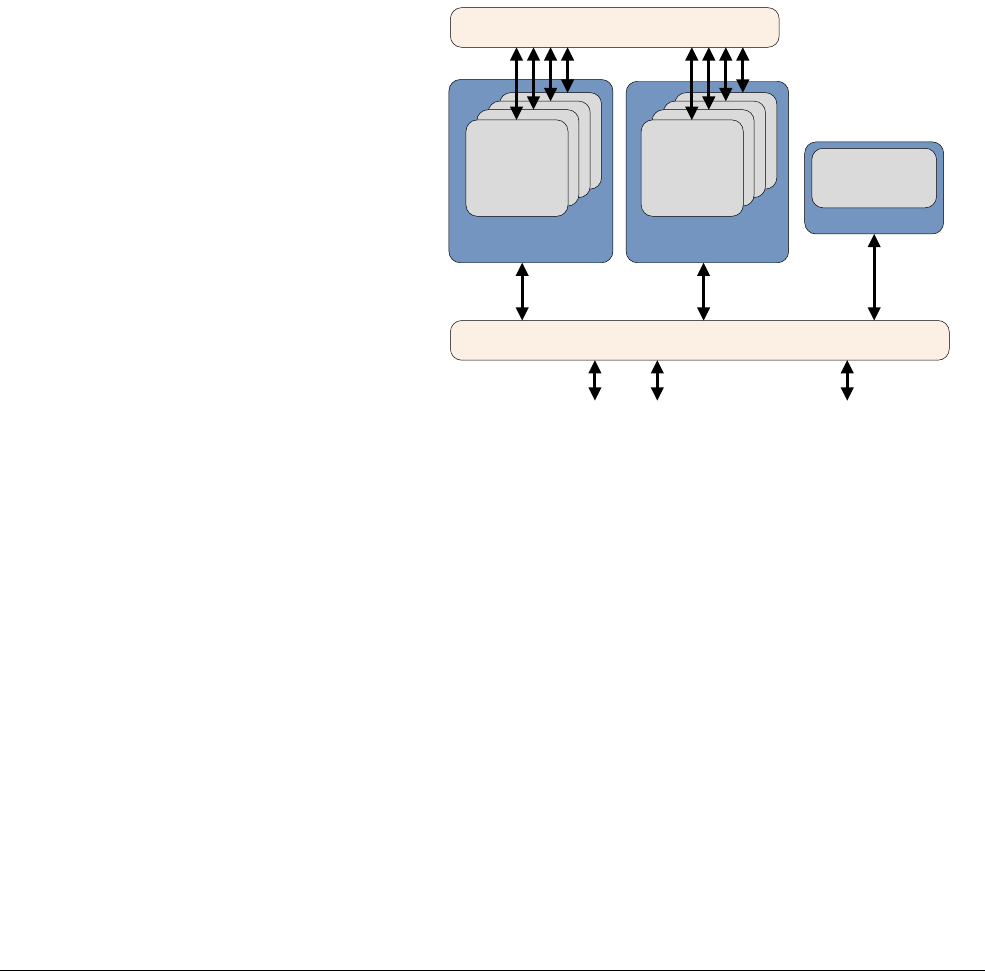

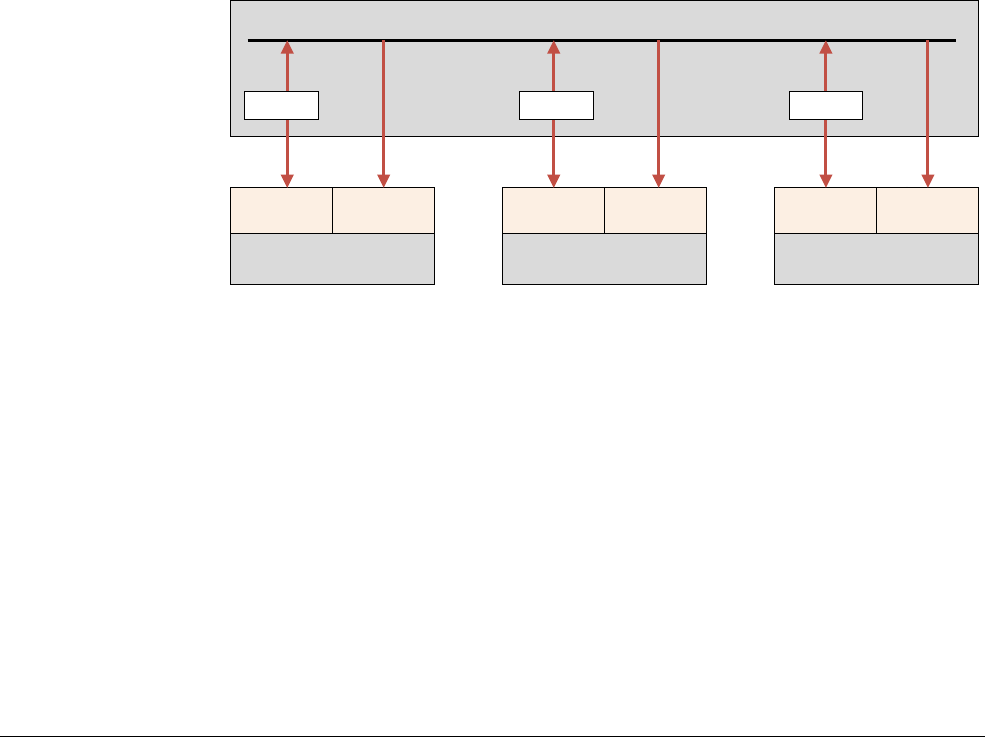

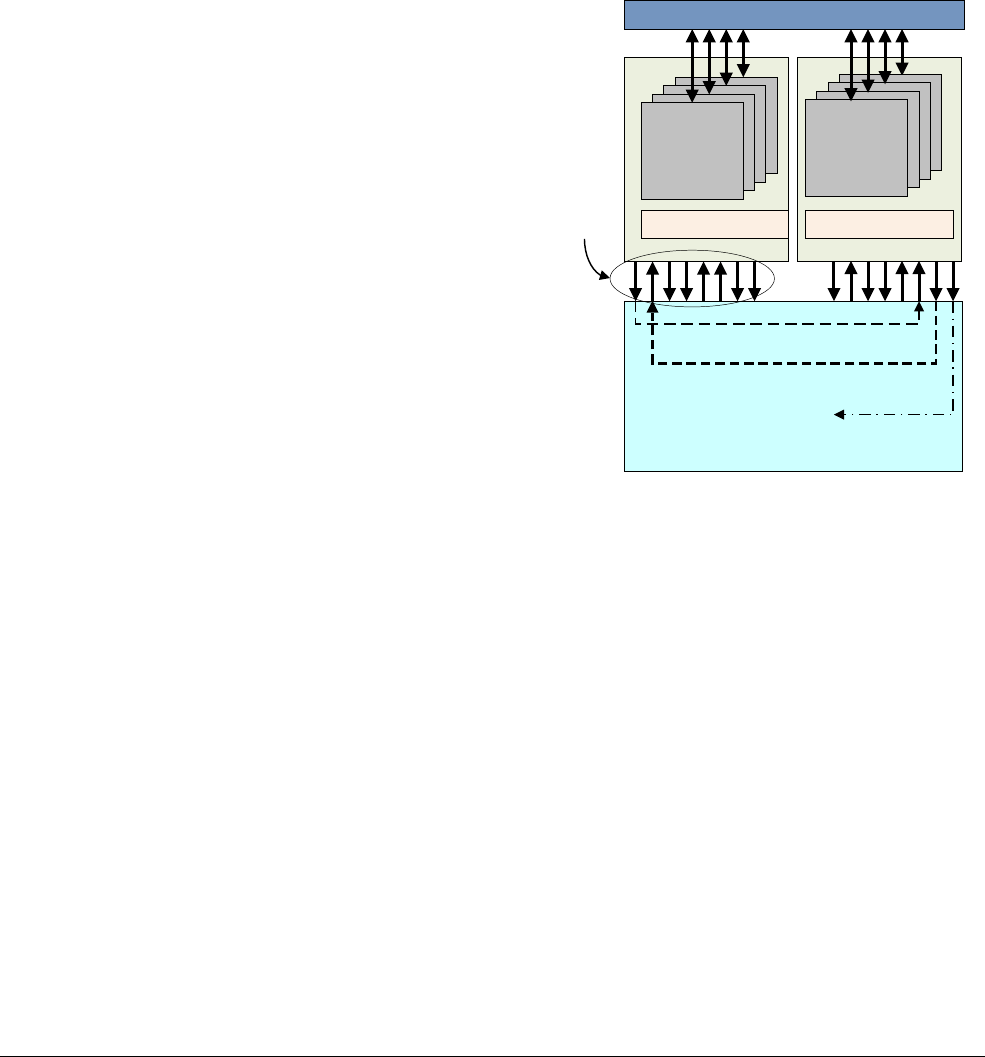

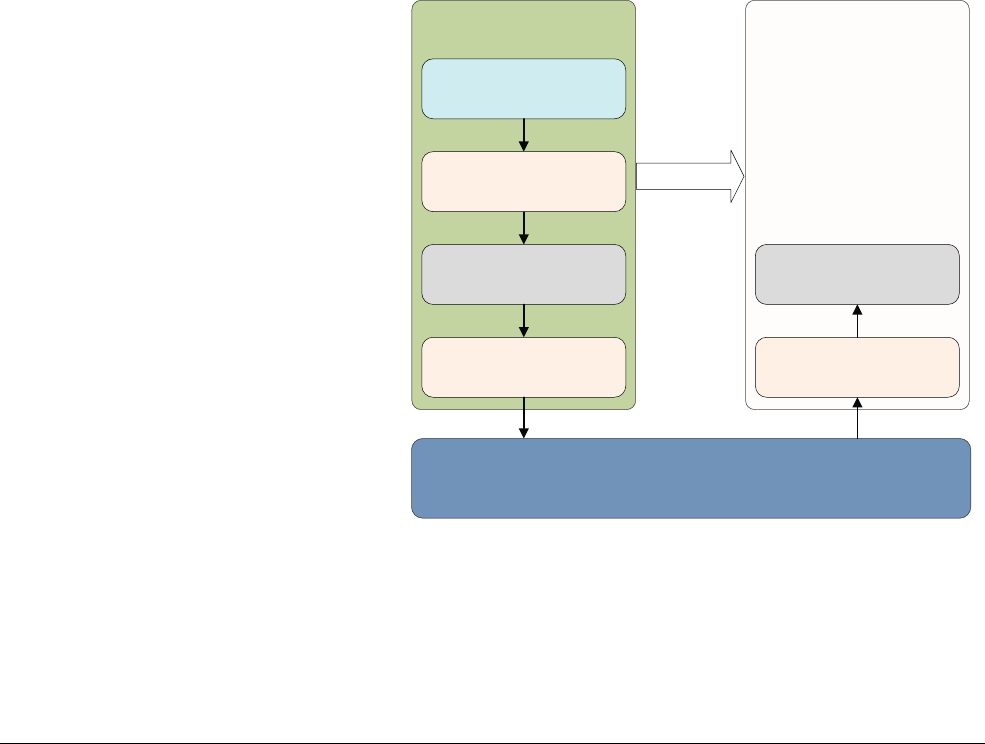

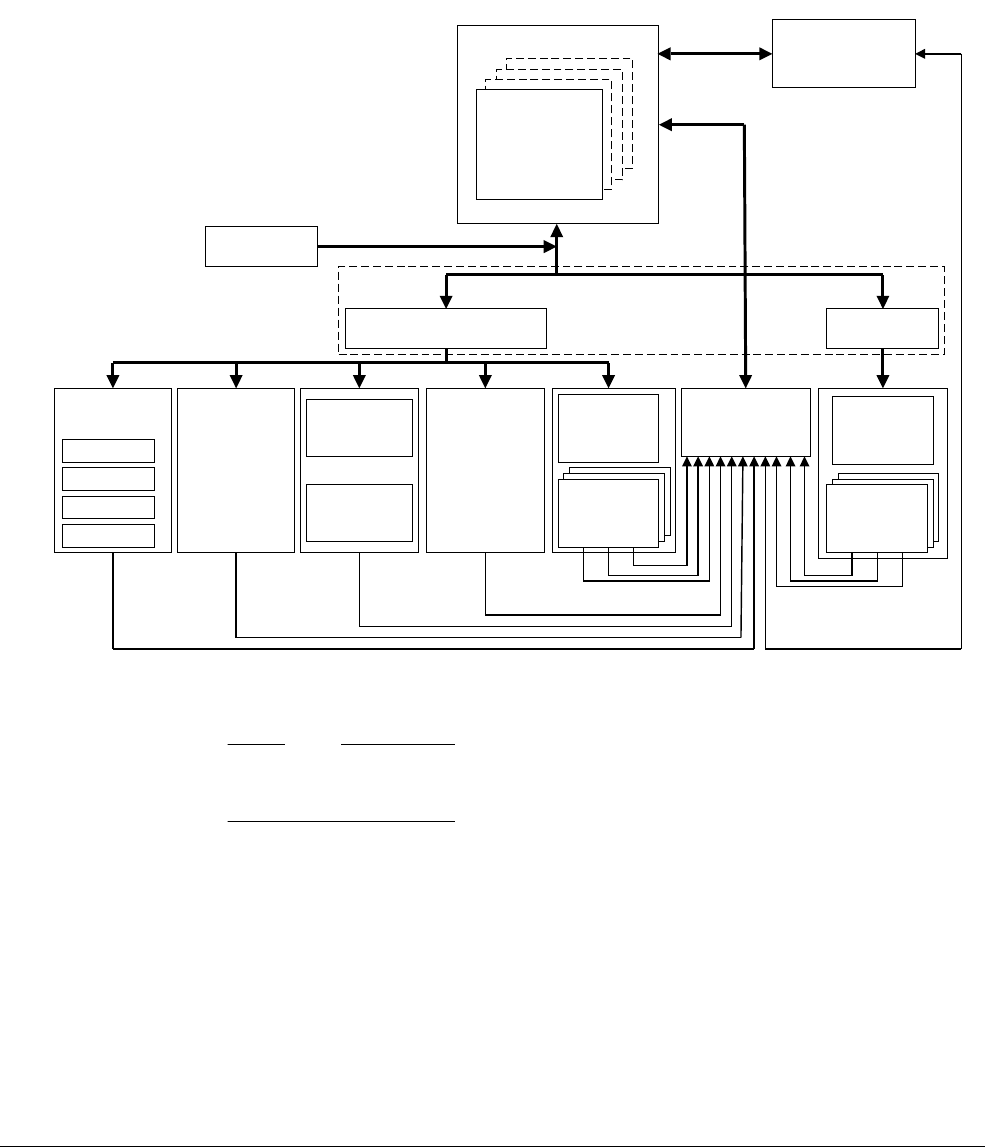

Figure 2-2 Cortex-A53 processor

The Cortex-A53 processor has the following features:

• In-order, eight stage pipeline.

• Lower power consumption from the use of hierarchical clock gating, power domains, and

advanced retention modes.

• Increased dual-issue capability from duplication of execution resources and dual

instruction decoders.

3

AMBA 4 ACE or AMBA 5 CHI Coherent Bus Interface

Generic Interrupt Controller

2

1

Core

0

Cortex-A53 processor

Level 1

Instruction

Cache

Level 1 Data

Cache w/ECC

NEON

Data Engine

with crypto ext

Floating-point

unit

SCU Integrated Level 2 Cache w/ECCACP

ARM CoreSight Multicore Debug and Trace

Performance Monitor

Unit

Memory

Management

Unit

Data Processing

Unit

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-7

ID050815 Non-Confidential

• Power-optimized L2 cache design delivers lower latency and balances performance with

efficiency.

The Cortex-A57 processor

The Cortex-A57 processor is targeted at mobile and enterprise computing applications

including compute intensive 64-bit applications such as high end computer, tablet, and server

products. It can be used with the Cortex-A53 processor into an ARM big.LITTLE configuration,

for scalable performance and more efficient energy use.

The Cortex-A57 processor features cache coherent interoperability with other processors,

including the ARM Mali™ family of Graphics Processing Units (GPUs) for GPU compute and

provides optional reliability and scalability features for high-performance enterprise

applications. It provides significantly more performance than the ARMv7 Cortex-A15

processor, at a higher level of power efficiency. The inclusion of cryptography extensions

improves performance on cryptography algorithms by 10 times over the previous generation of

processors.

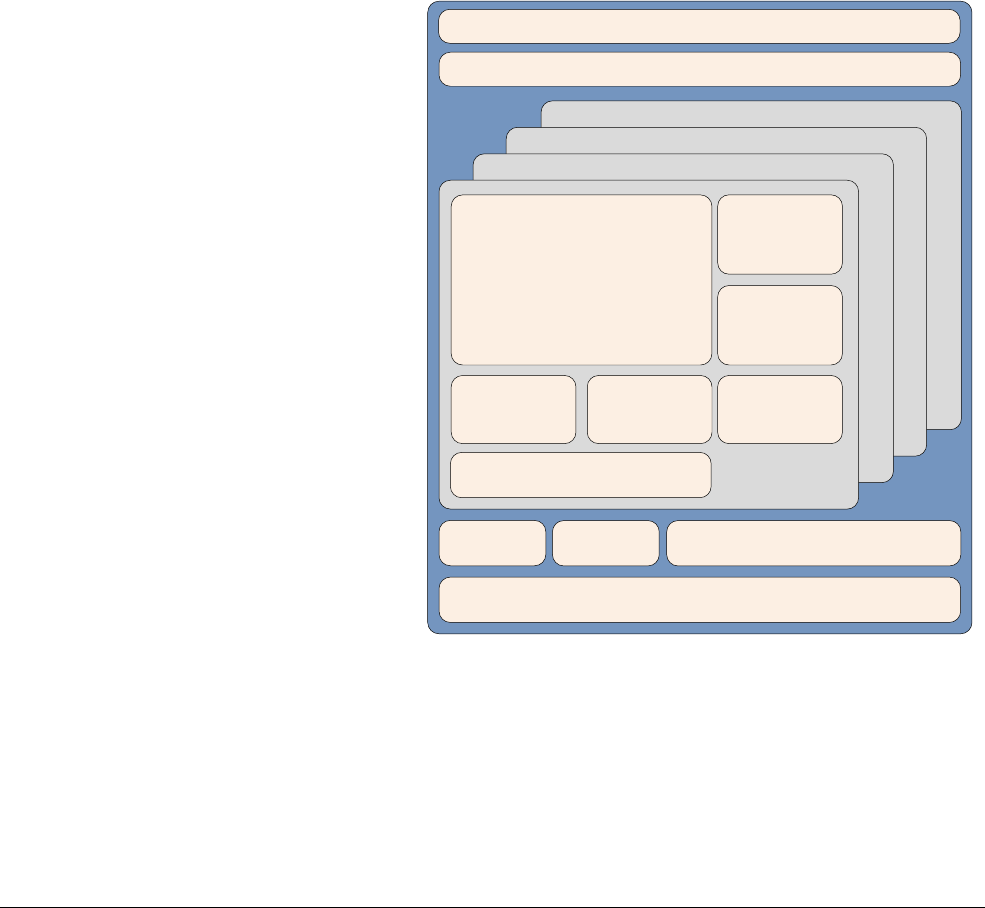

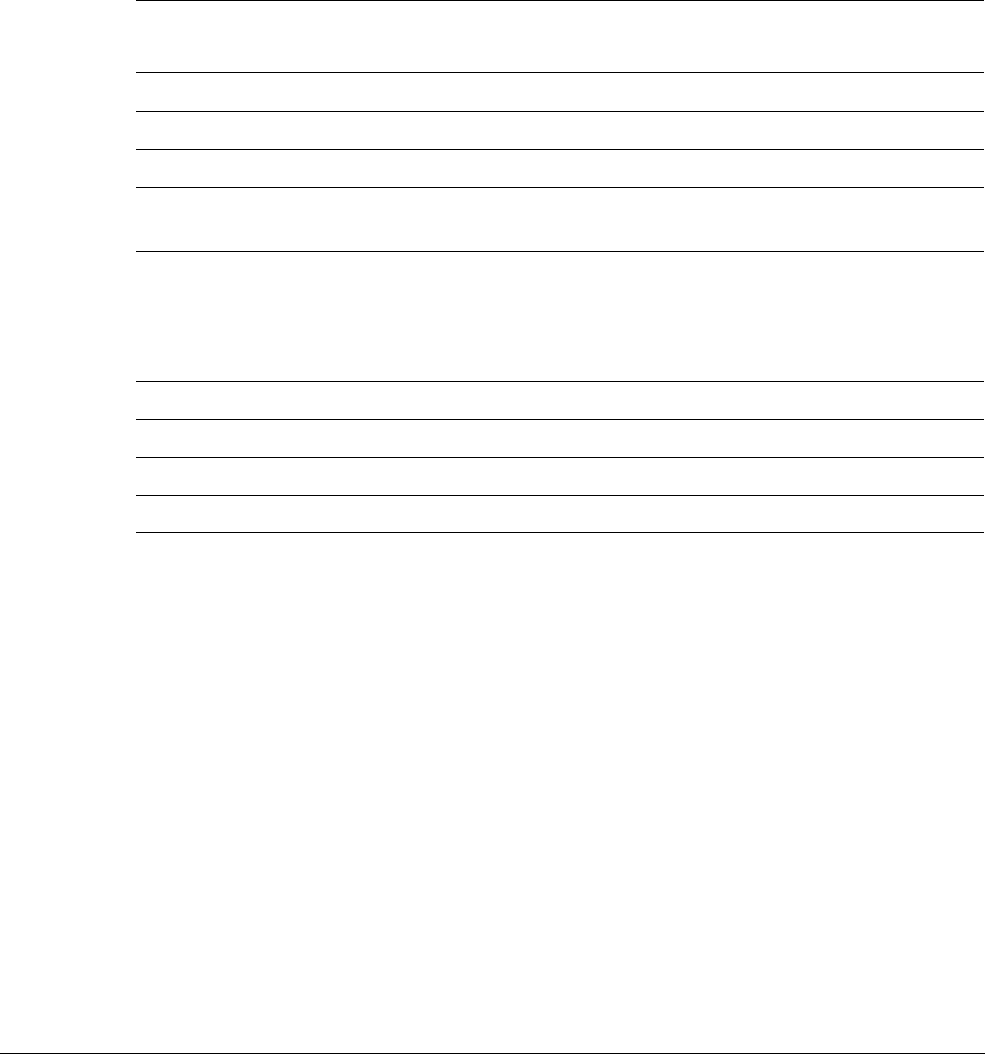

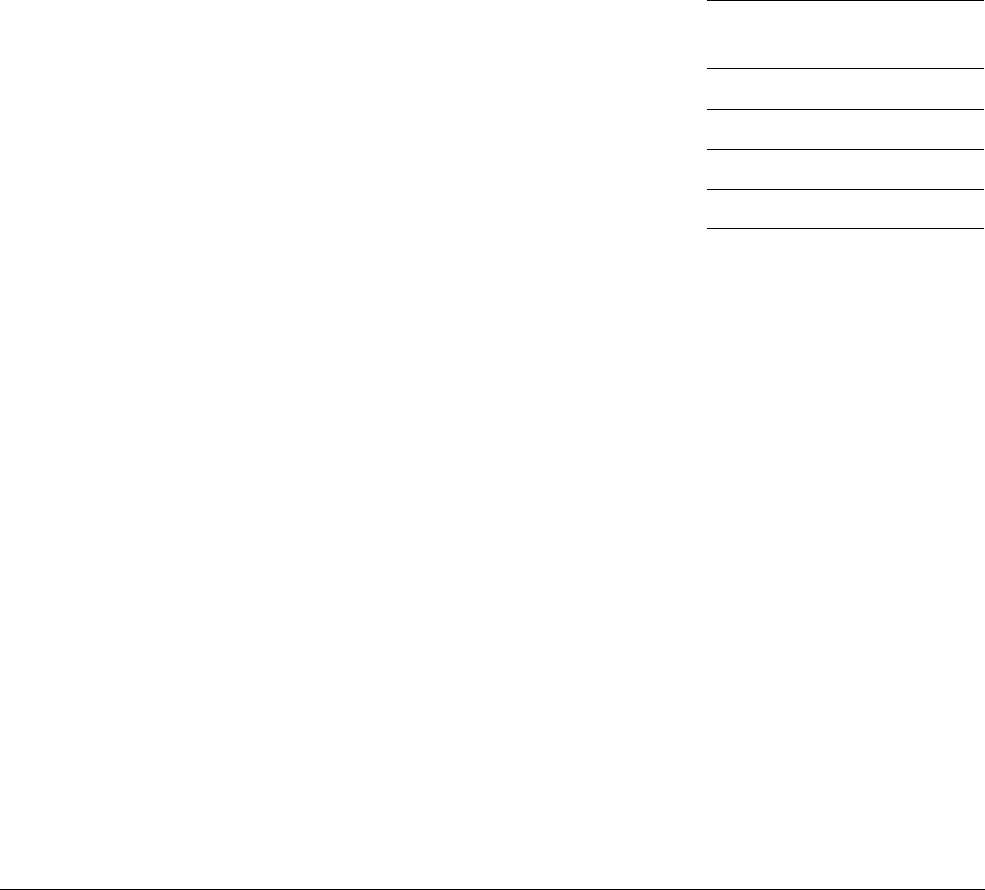

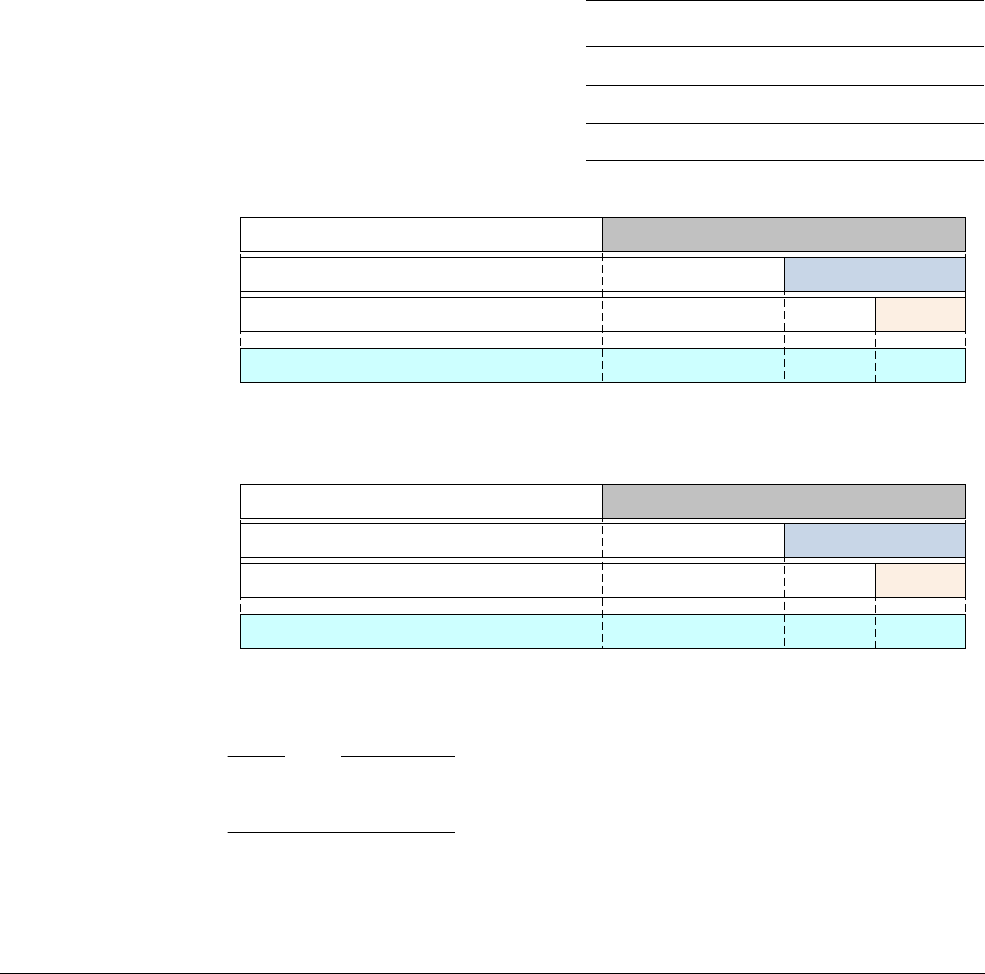

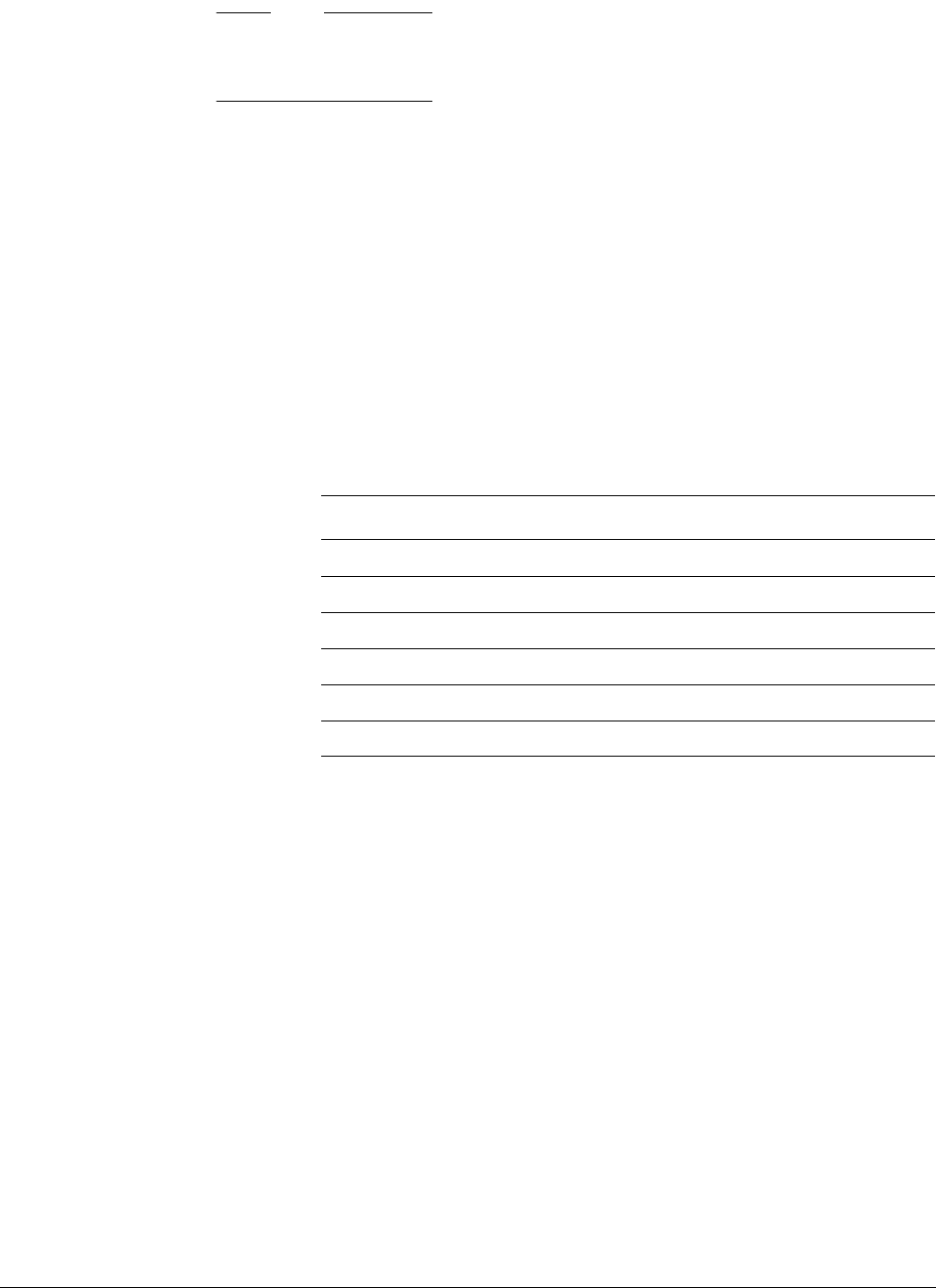

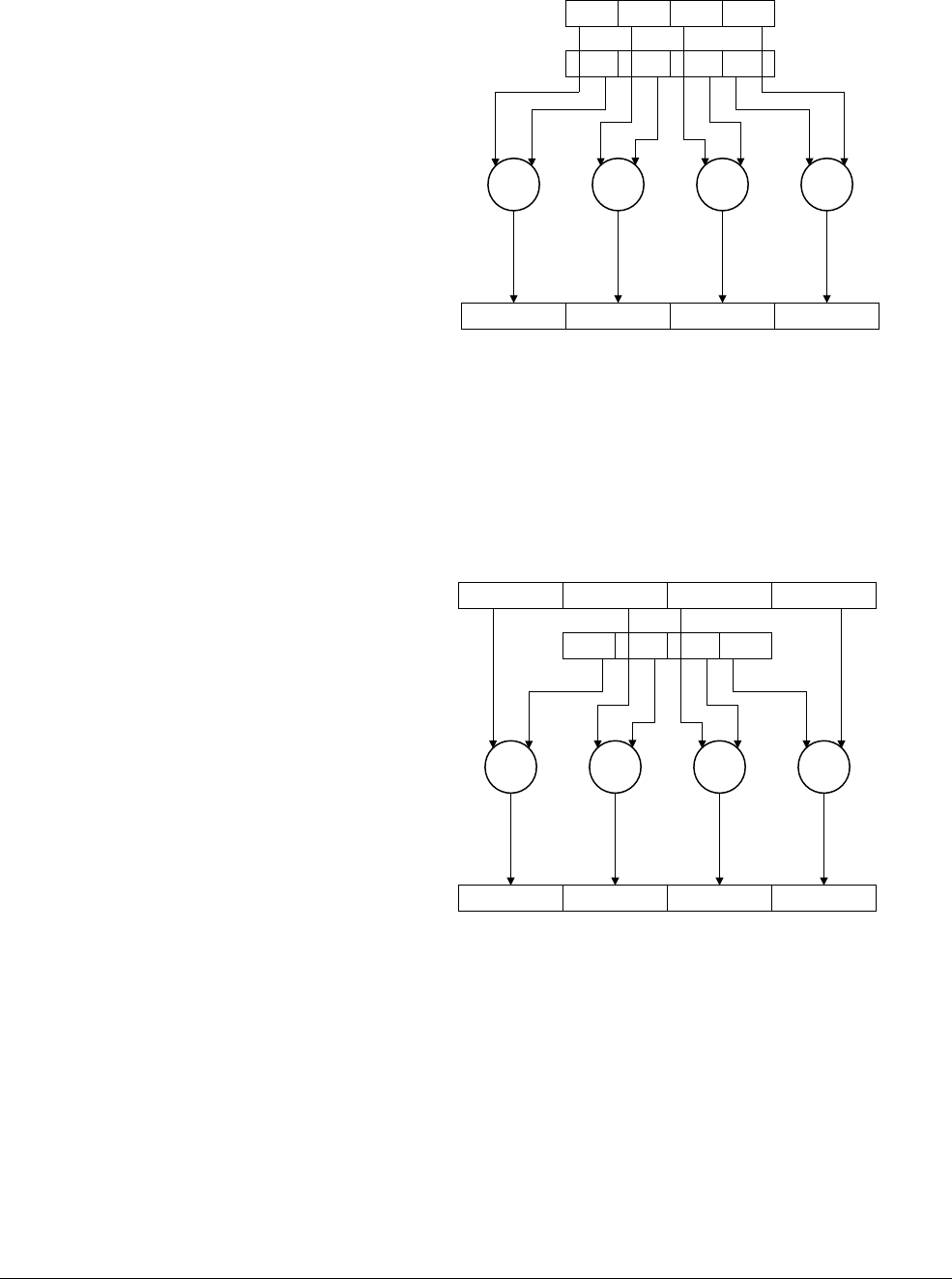

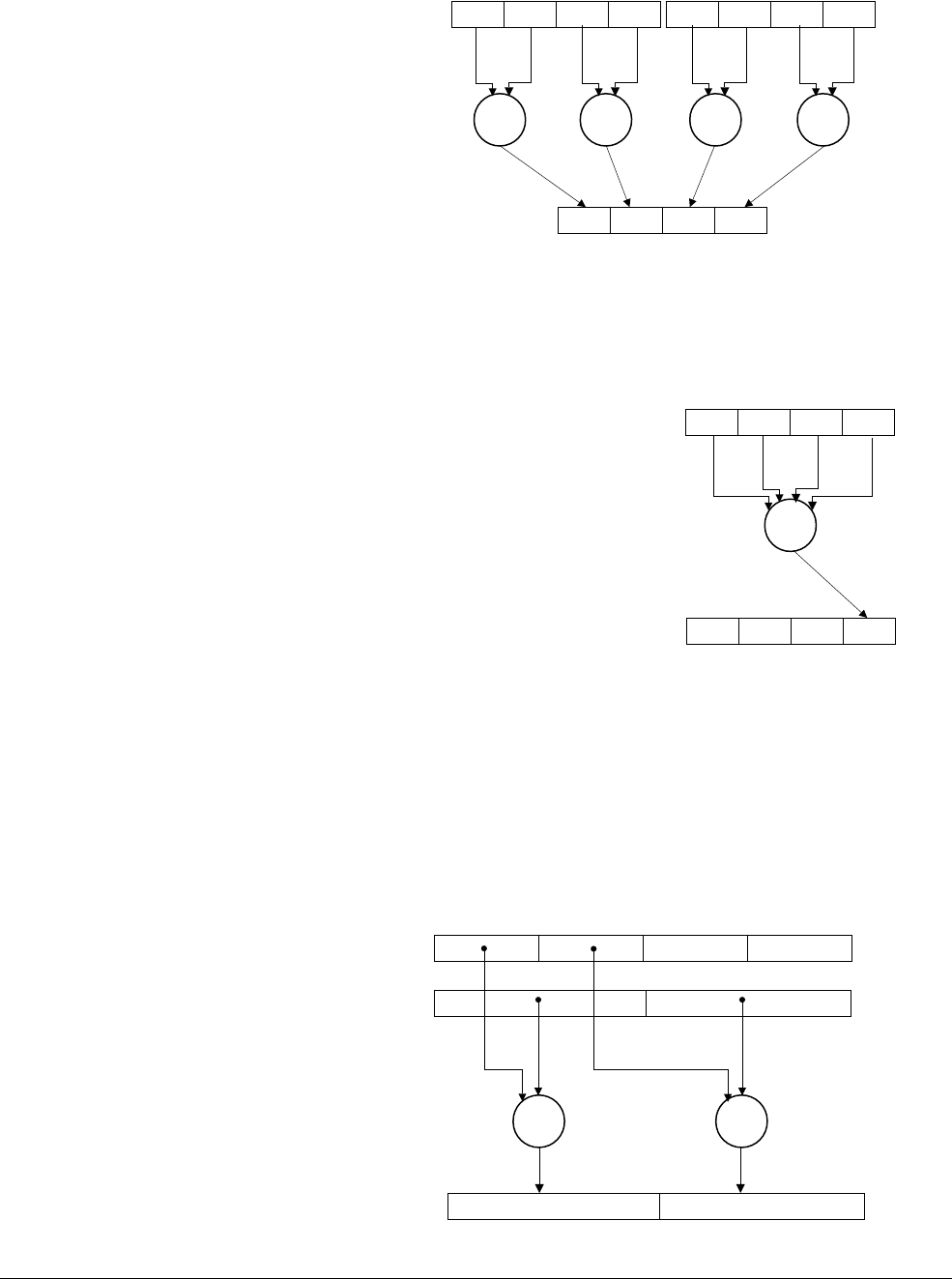

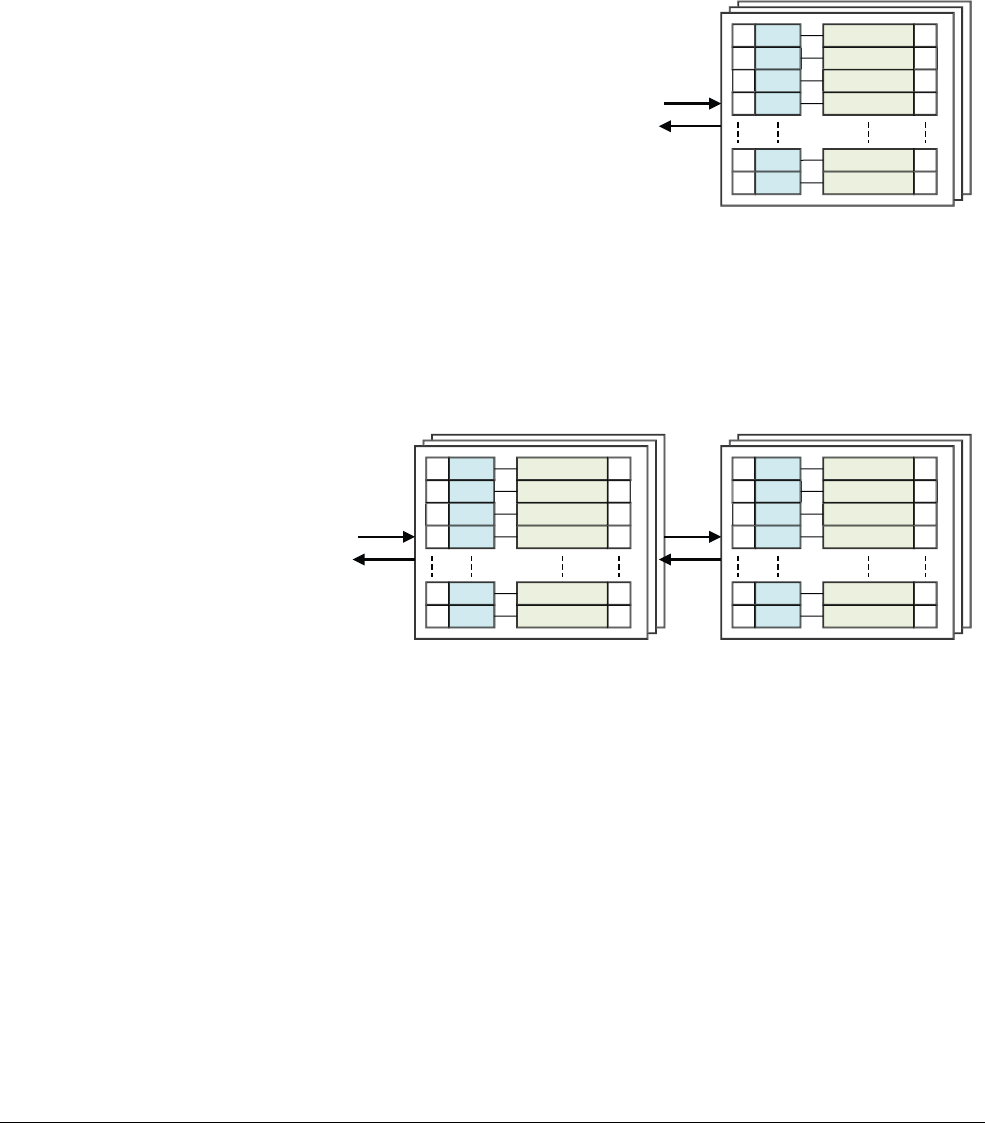

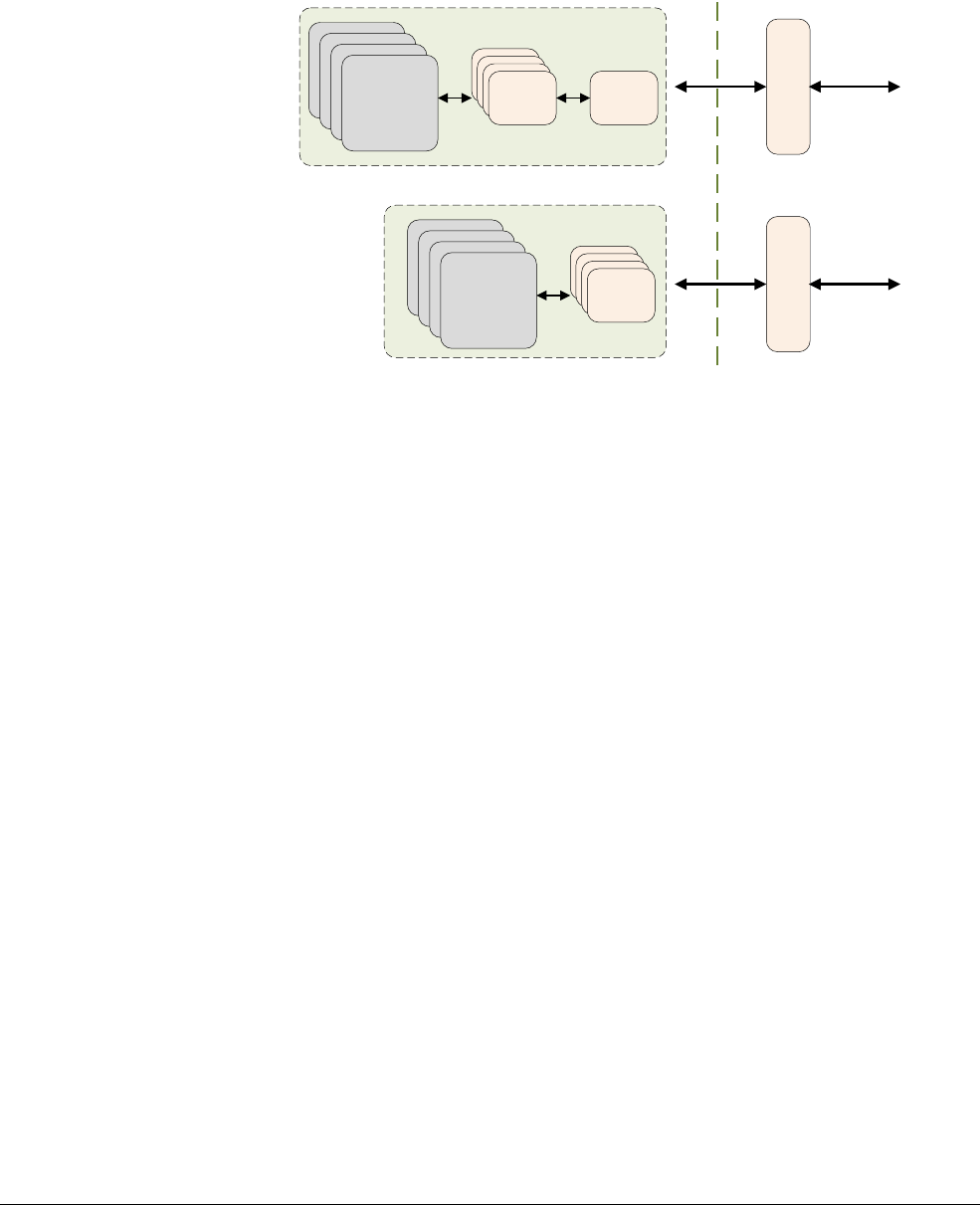

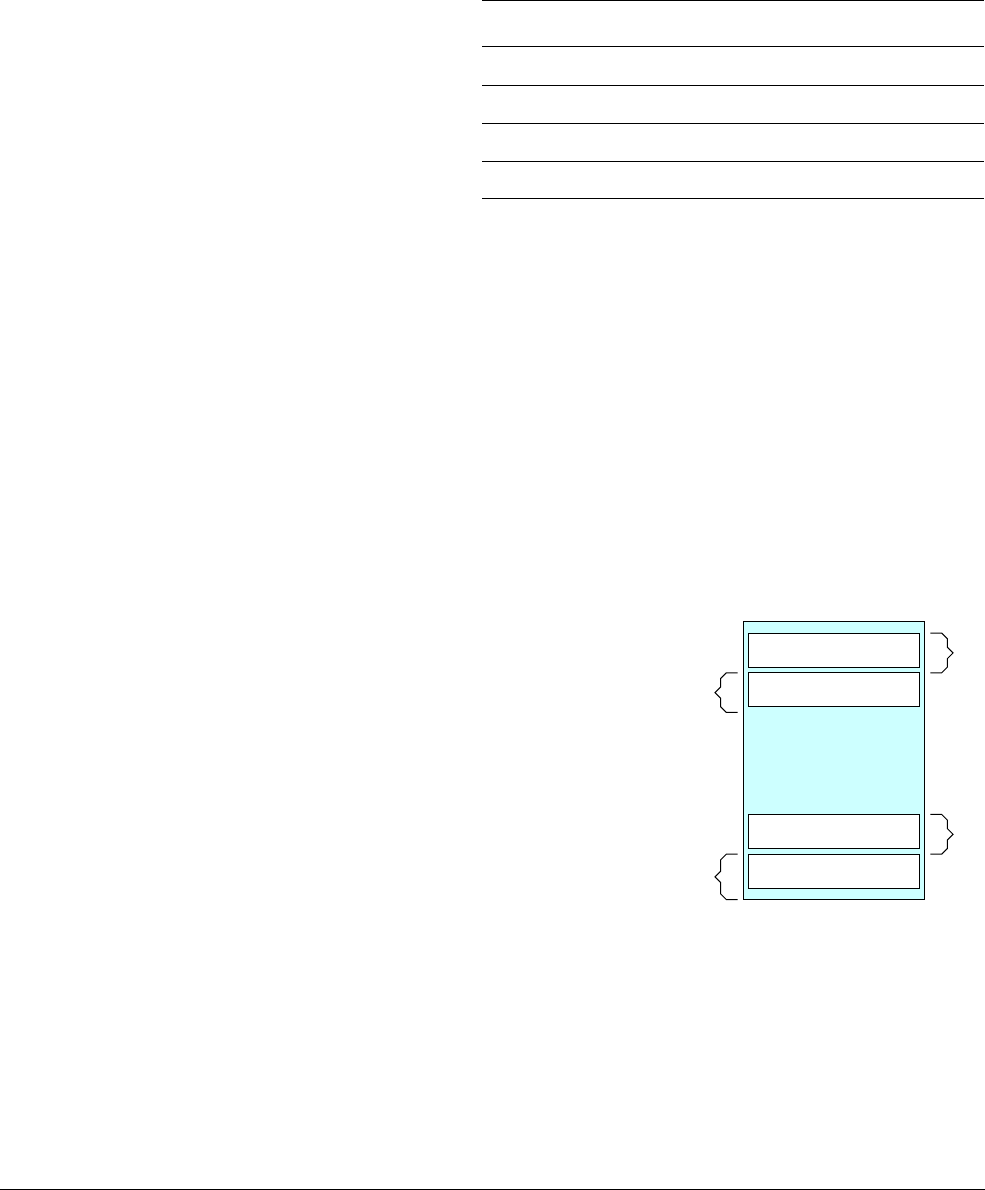

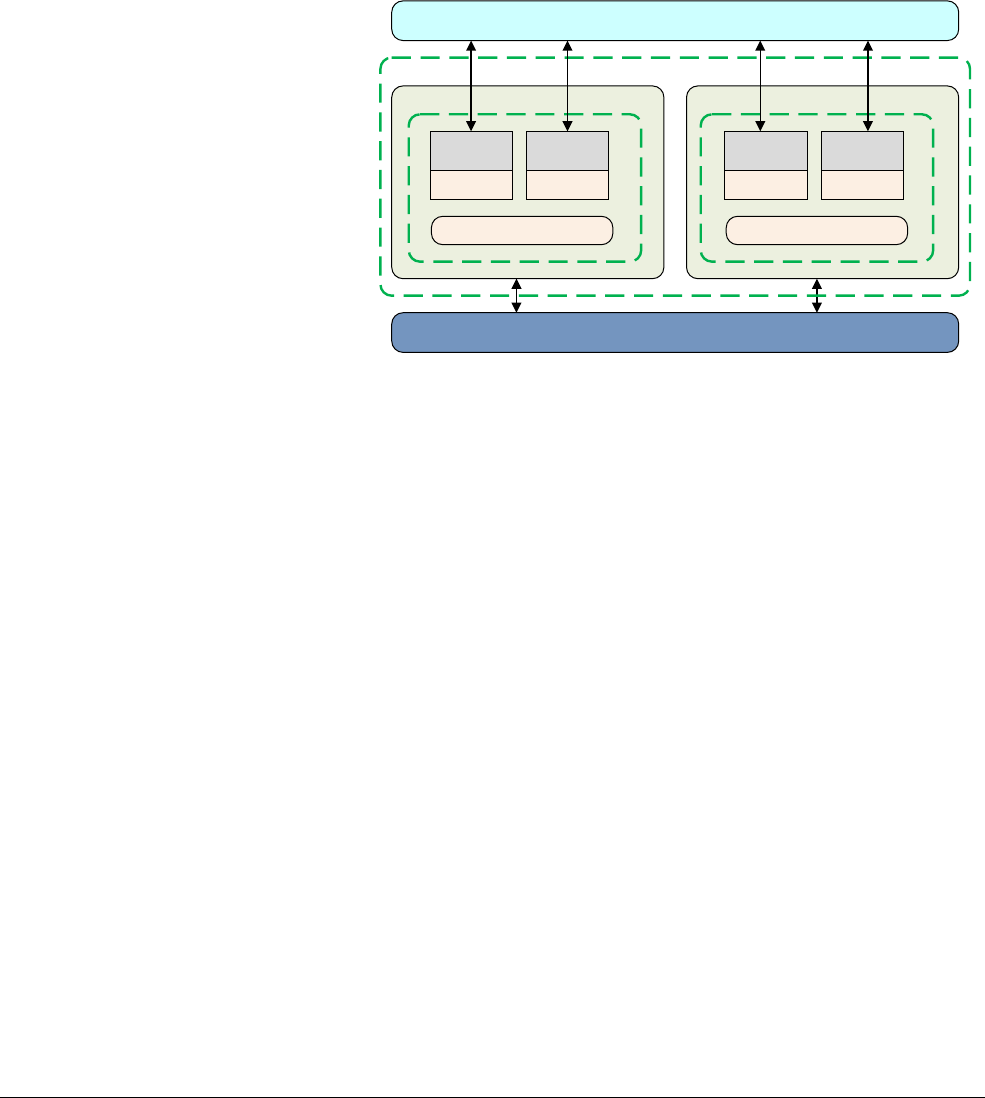

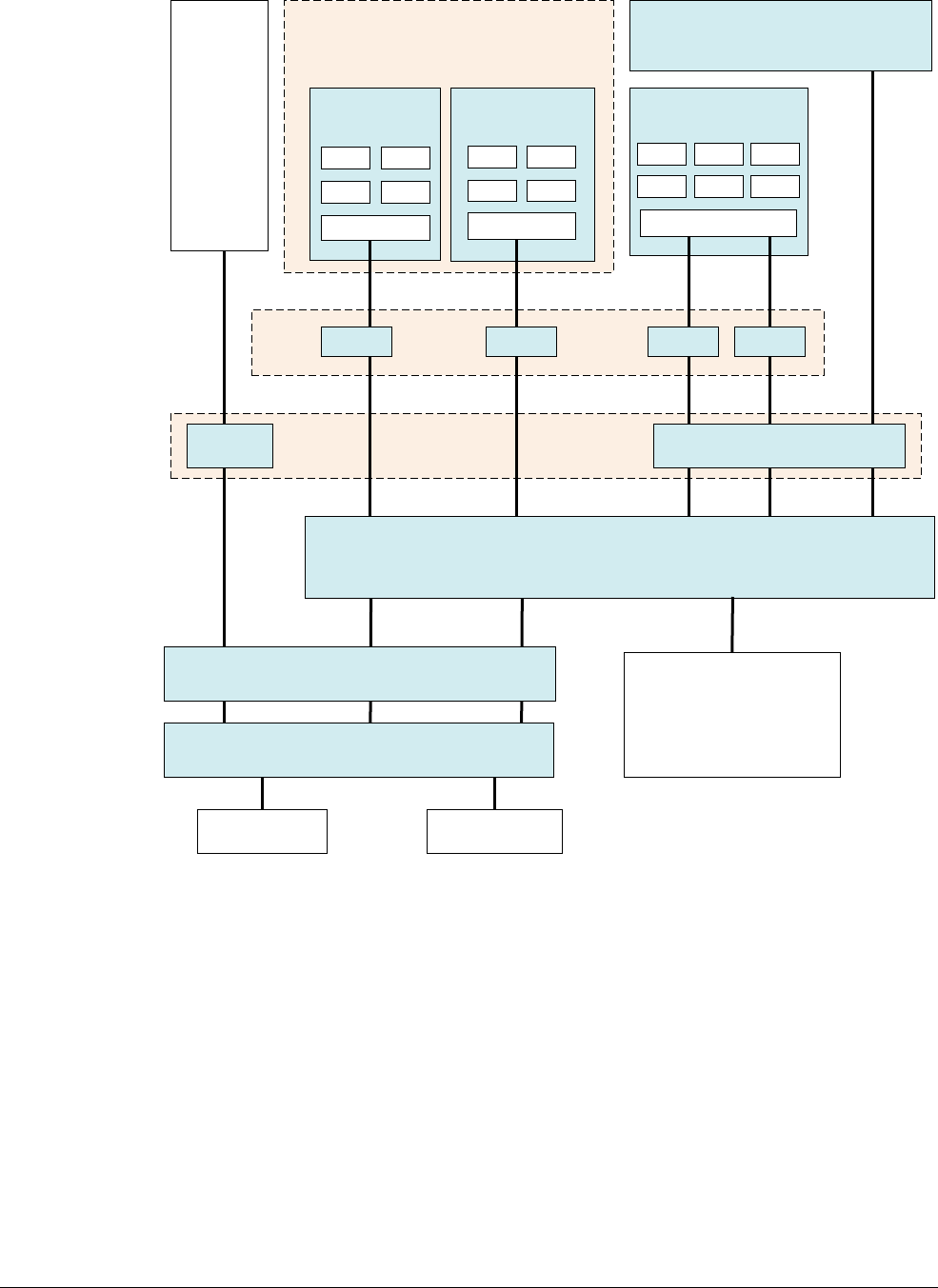

Figure 2-3 Cortex-A57 processor core

The Cortex-A57 processor fully implements the ARMv8-A architecture. It enables multi-core

operation with between one and four cores multi-processing within a single cluster. Multiple

coherent SMP clusters are possible, through AMBA5 CHI or AMBA 4 ACE technology. Debug

and trace are available through CoreSight technology.

The Cortex-A57 processor has the following features:

• Out-of-order, 15+ stage pipeline.

3

AMBA 4 ACE or AMBA5 CHI Coherent Bus Interface

Generic Interrupt Controller

2

1

Core

0

Cortex-A57 processor

Level 1

Instruction

Cache

Level 1 Data

Cache w/ECC

NEON

Data Engine

with crypto ext

Floating-point

unit

SCU Integrated Level 2 Cache w/ECCACP

ARM CoreSight Multicore Debug and Trace

Performance Monitor Unit

Memory

Protection Unit

ARMv8-A Architecture and Processors

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 2-8

ID050815 Non-Confidential

• Power-saving features include way-prediction, tag-reduction, and cache-lookup

suppression.

• Increased peak instruction throughput through duplication of execution resources.

Power-optimized instruction decode with localized decoding, 3-wide decode bandwidth.

• Performance optimized L2 cache design enables more than one core in the cluster to

access the L2 at the same time.

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-1

ID050815 Non-Confidential

Chapter 3

Fundamentals of ARMv8

In ARMv8, execution occurs at one of four Exception levels. In AArch64, the Exception level

determines the level of privilege, in a similar way to the privilege levels defined in ARMv7. The

Exception level determines the privilege level, so execution at ELn corresponds to privilege

PLn. Similarly, an Exception level with a larger value of n than another one is at a higher

Exception level. An Exception level with a smaller number than another is described as being

at a lower Exception level.

Exception levels provide a logical separation of software execution privilege that applies across

all operating states of the ARMv8 architecture. It is similar to, and supports the concept of,

hierarchical protection domains common in computer science.

The following is a typical example of what software runs at each Exception level:

EL0 Normal user applications.

EL1 Operating system kernel typically described as privileged.

EL2 Hypervisor.

EL3 Low-level firmware, including the Secure Monitor.

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-2

ID050815 Non-Confidential

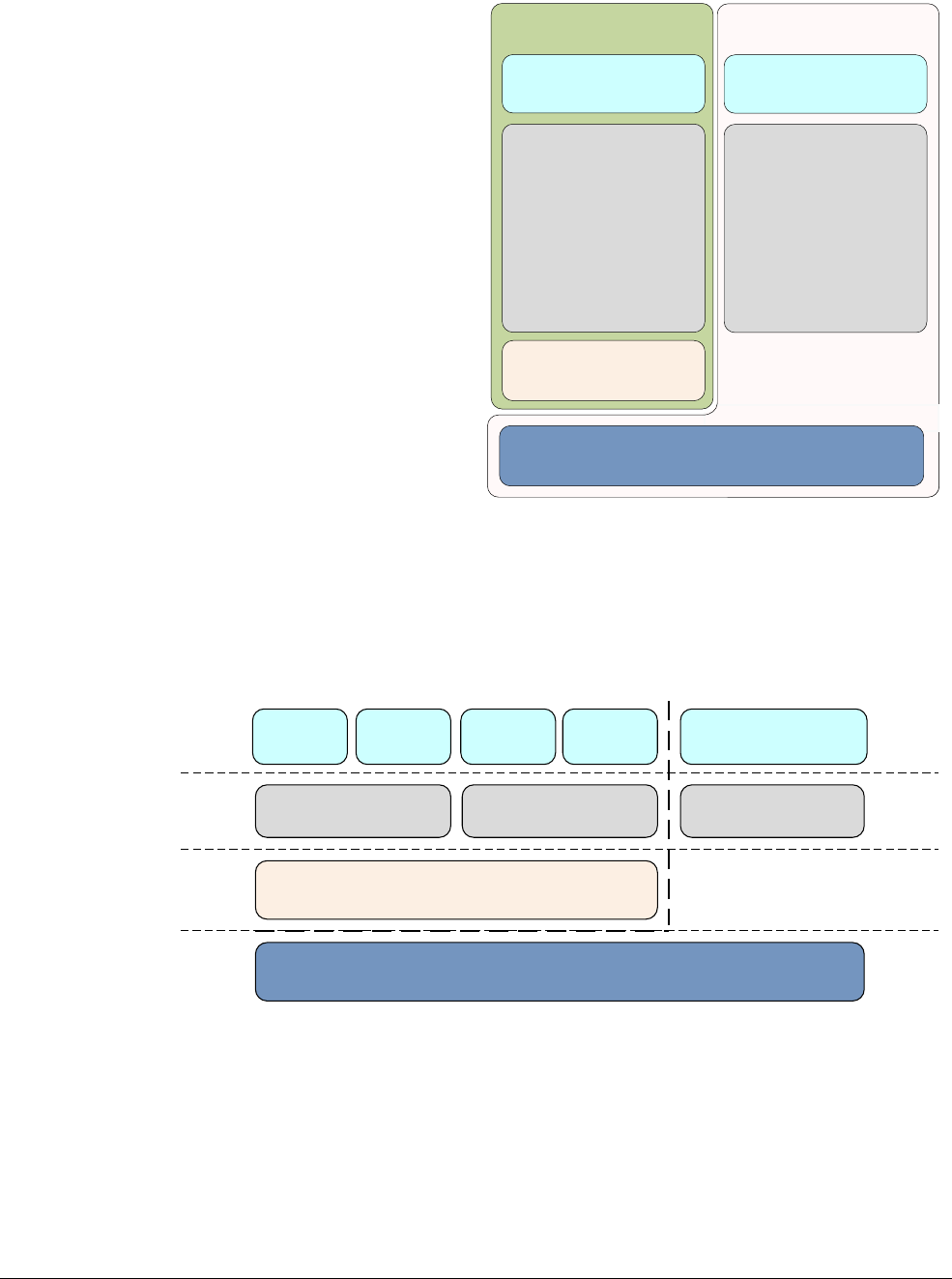

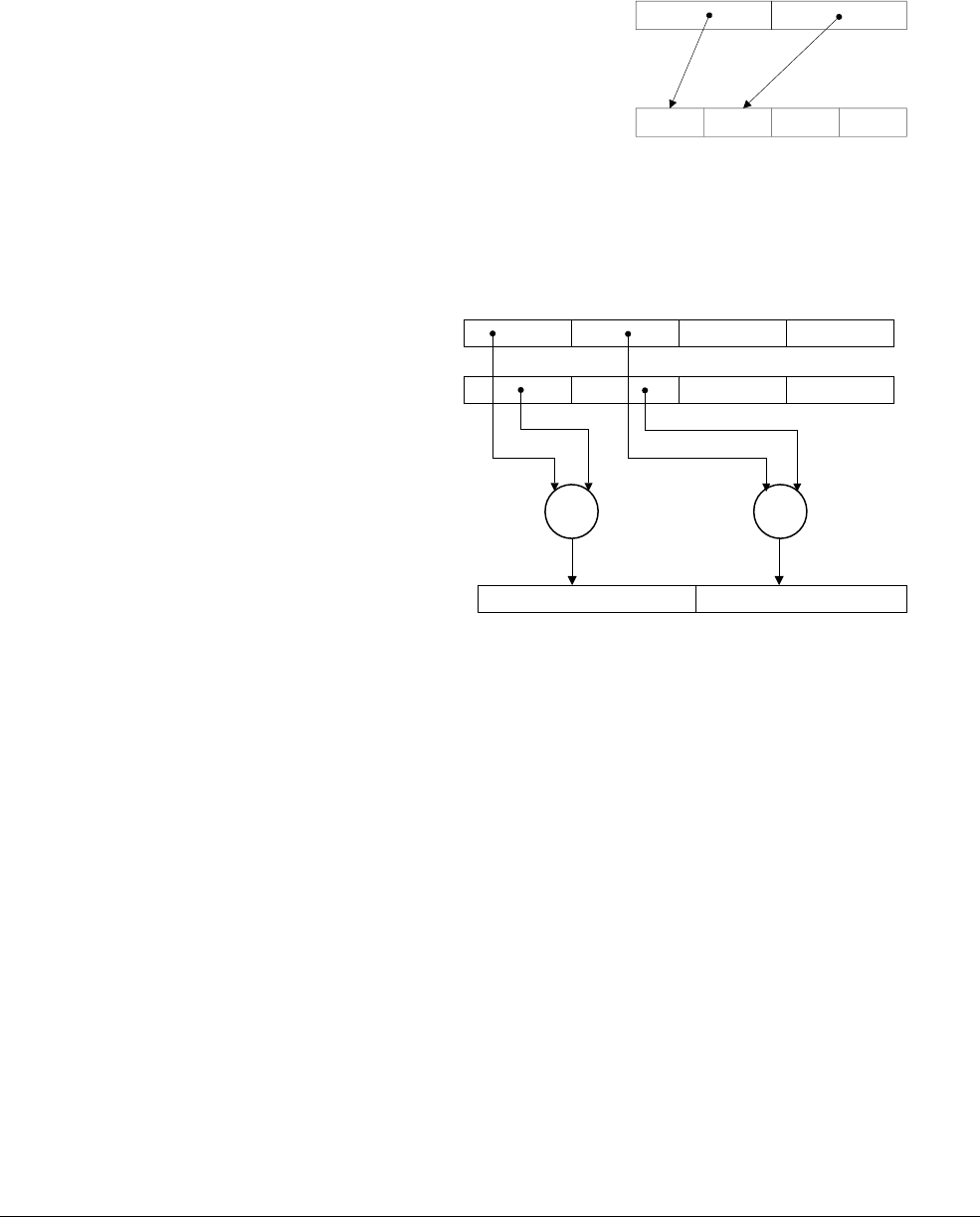

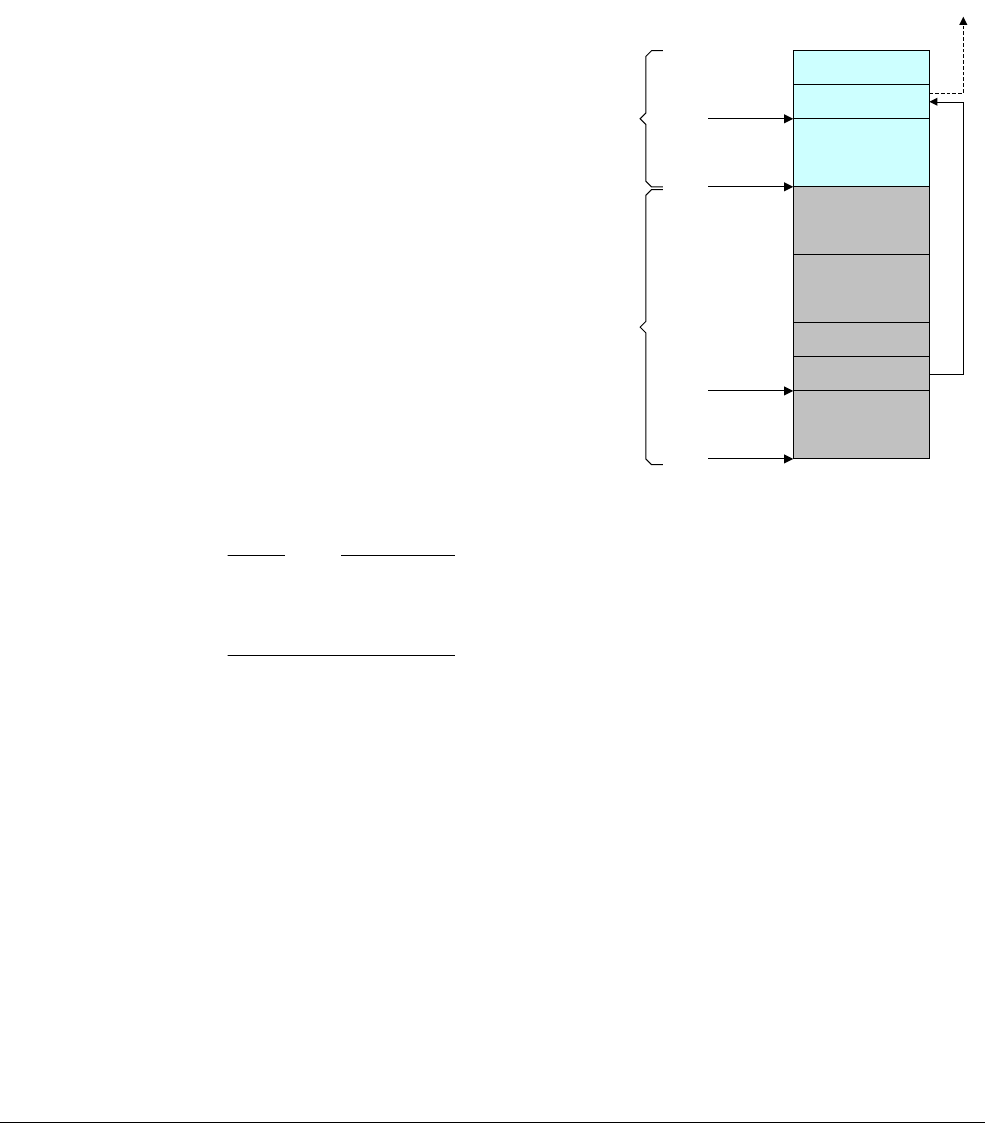

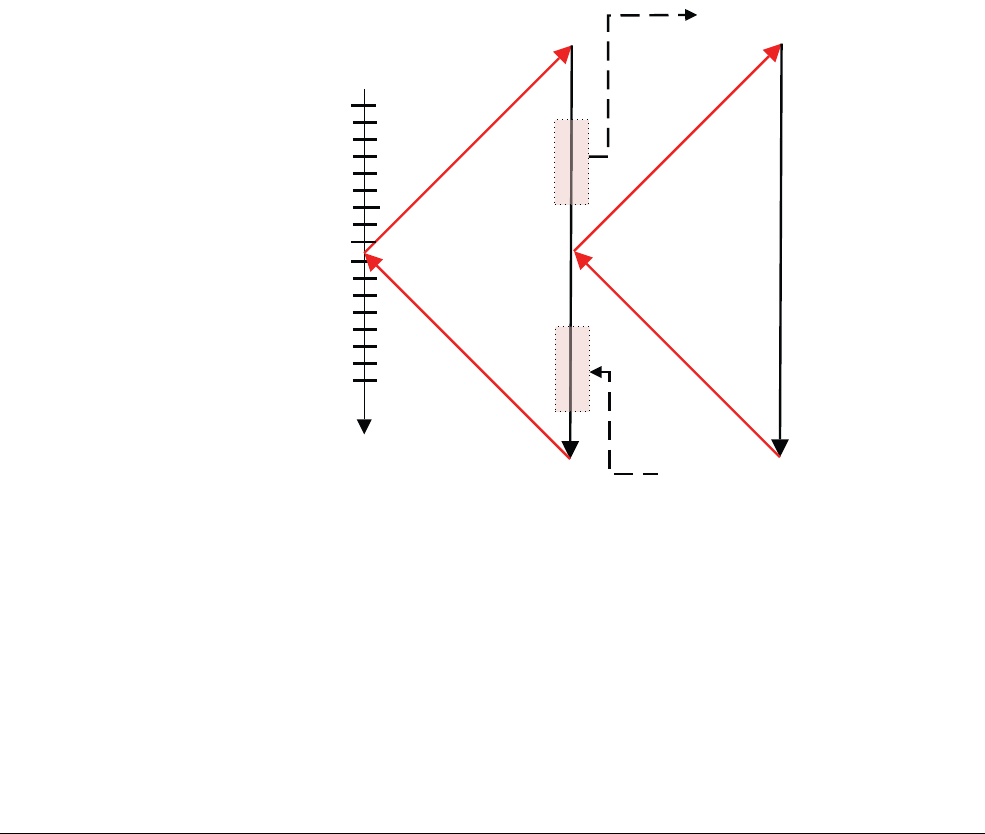

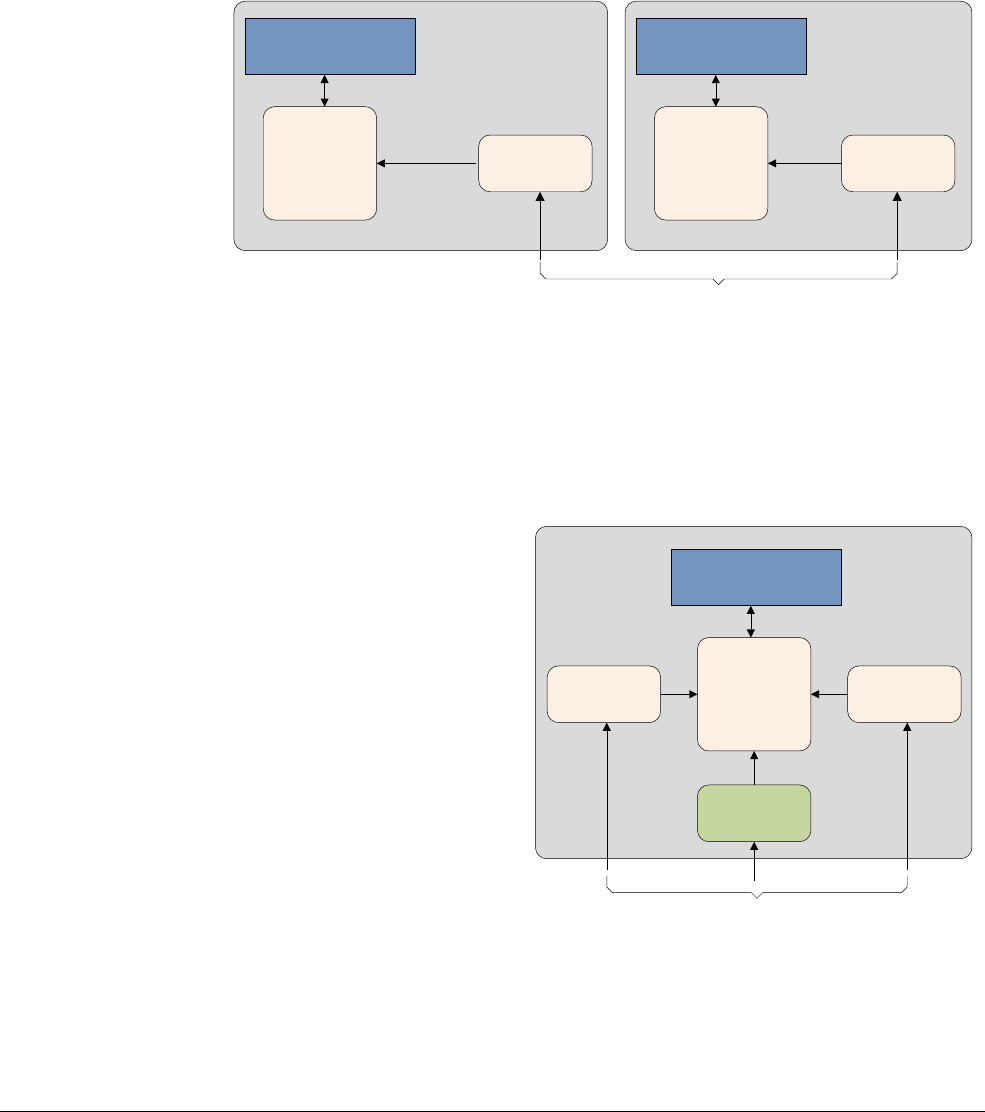

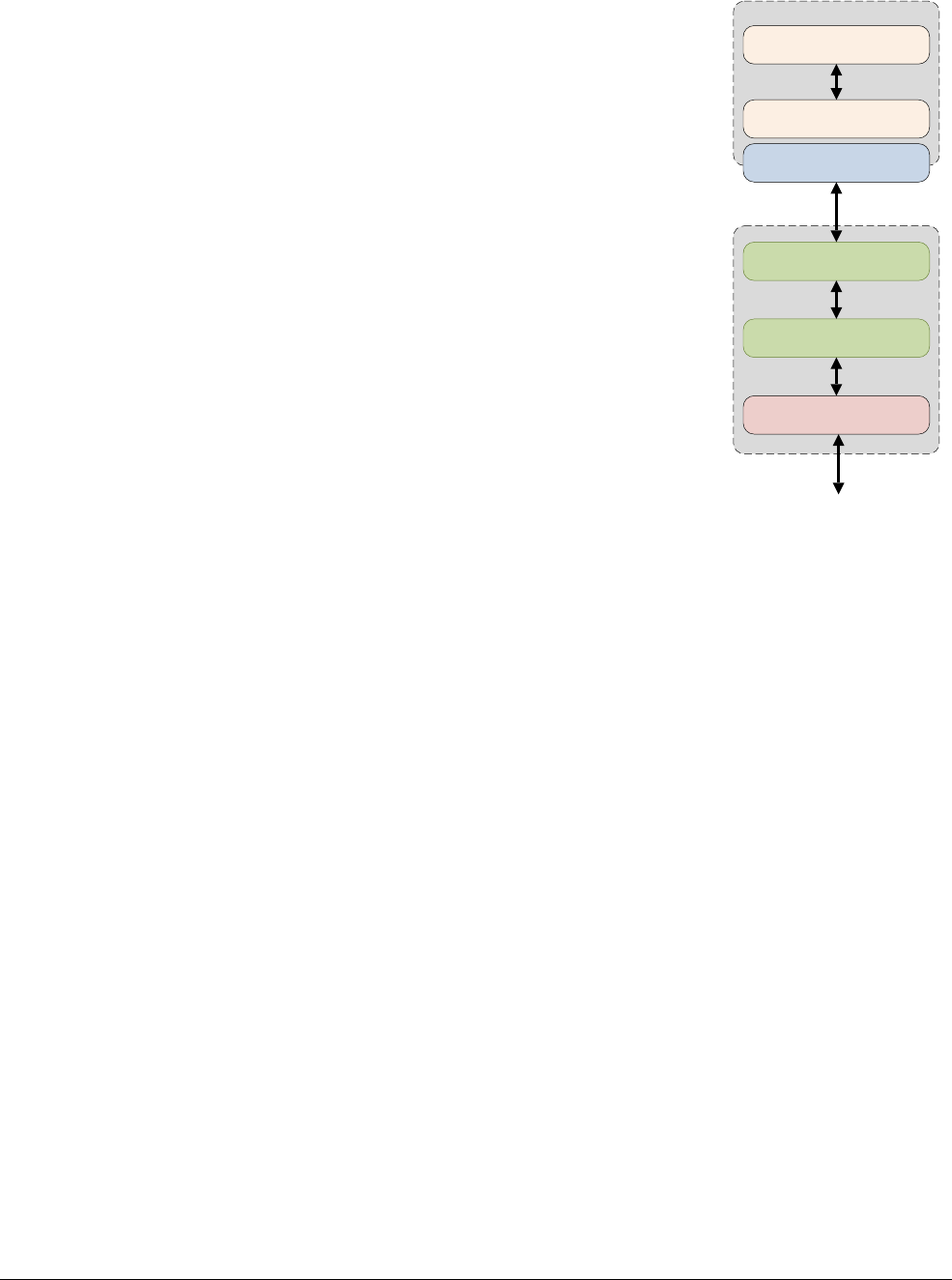

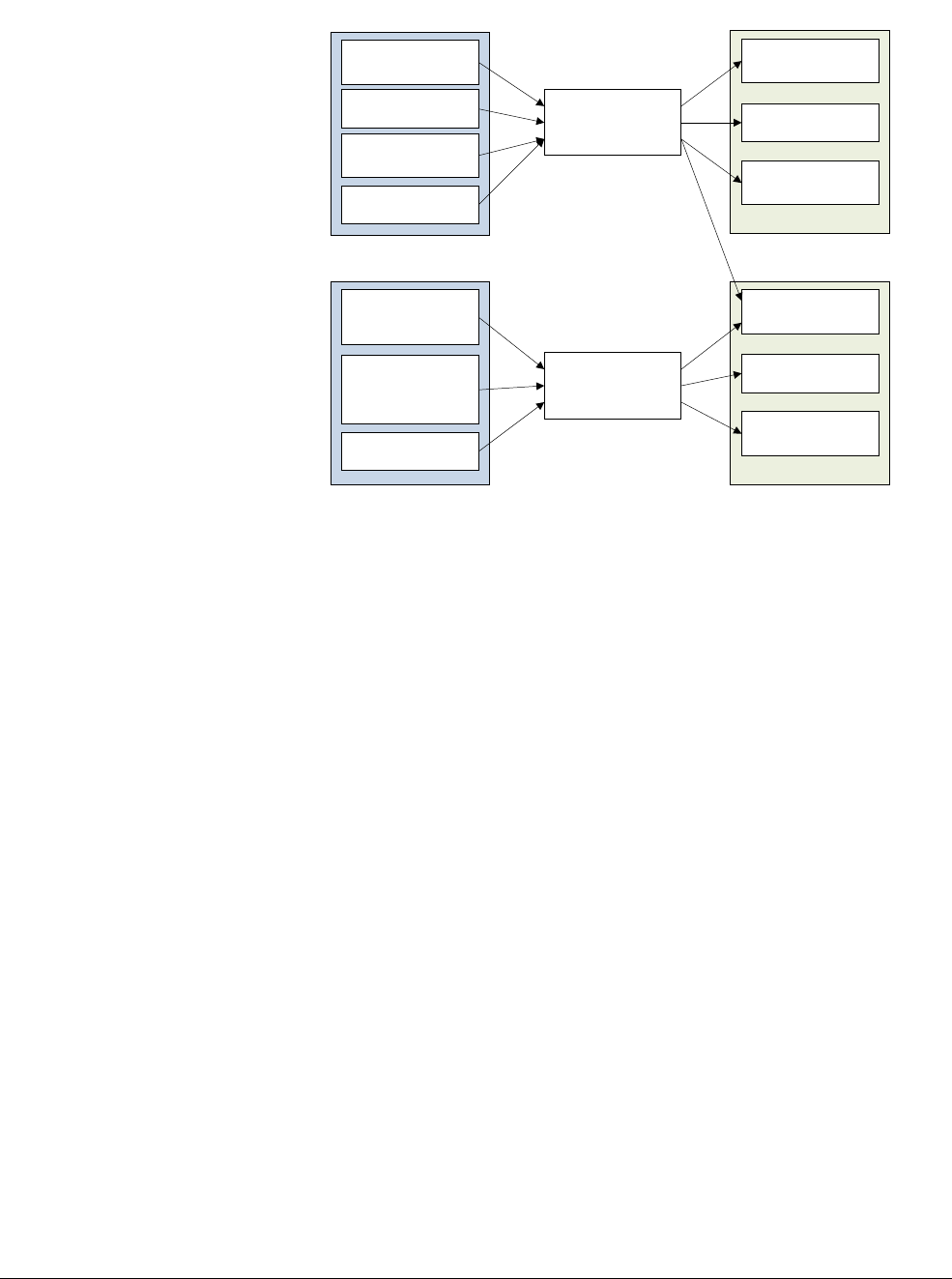

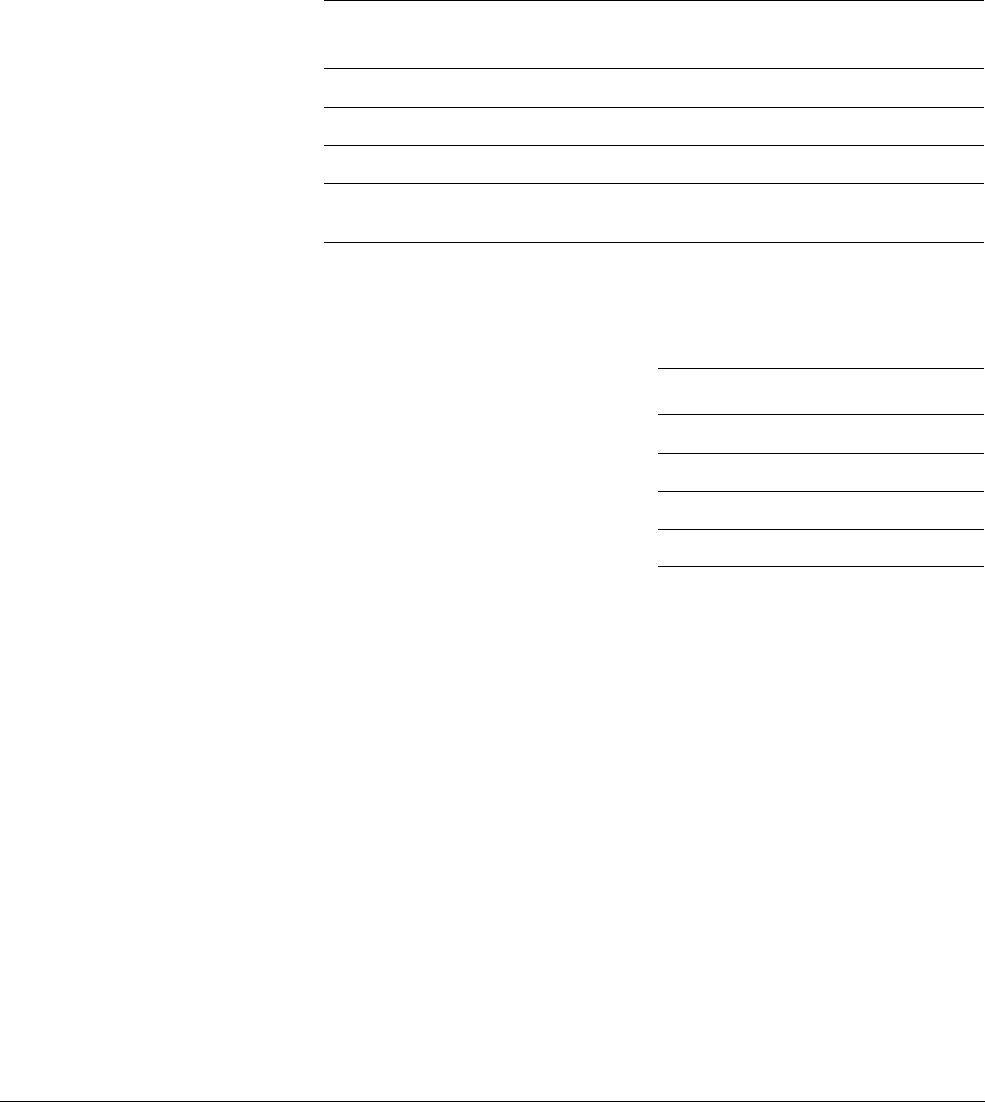

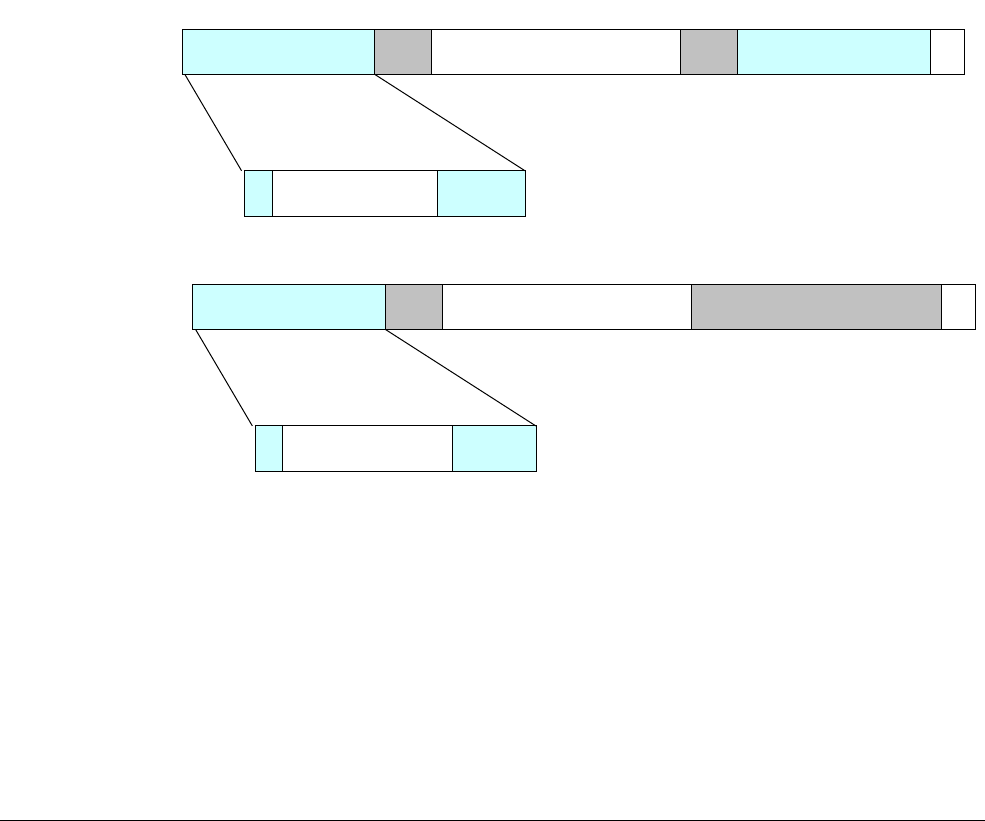

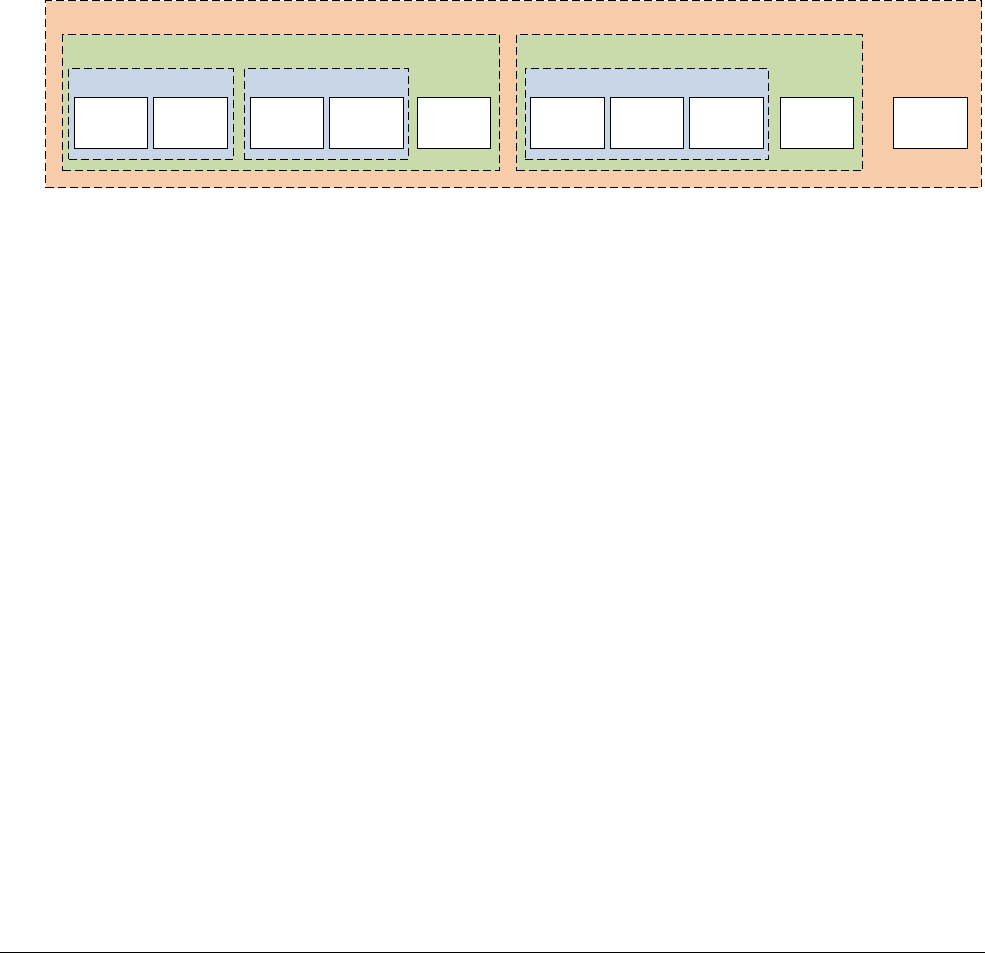

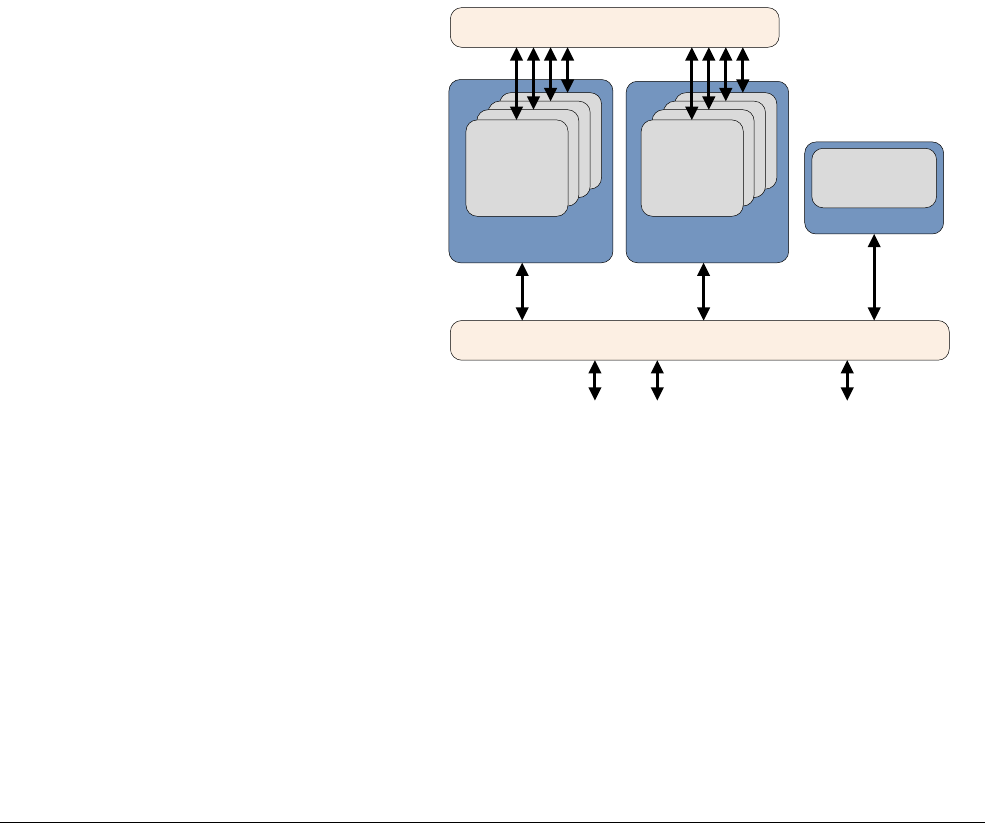

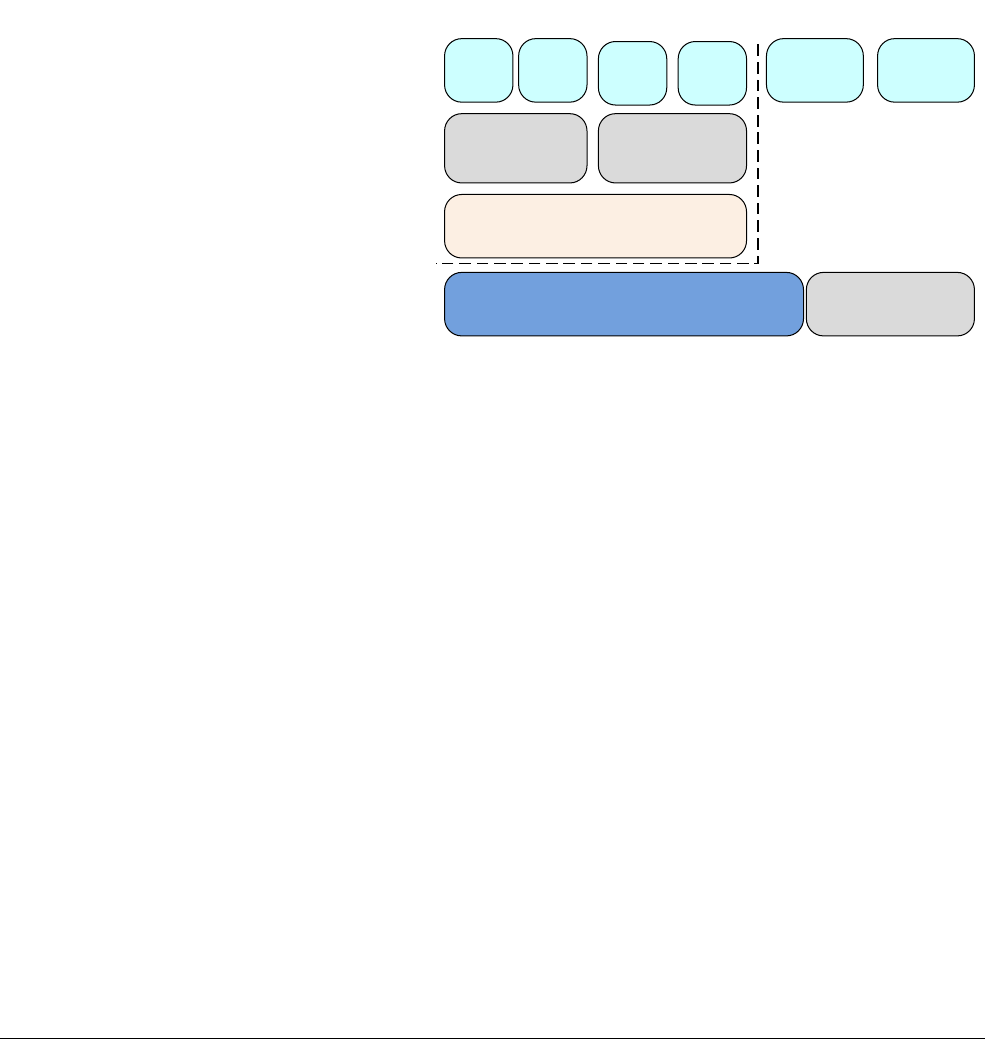

Figure 3-1 Exception levels

In general, a piece of software, such as an application, the kernel of an operating system, or a

hypervisor, occupies a single Exception level. An exception to this rule is in-kernel hypervisors

such as KVM, which operate across both EL2 and EL1.

ARMv8-A provides two security states, Secure and Non-secure. The Non-secure state is also

referred to as the Normal World. This enables an Operating System (OS) to run in parallel with

a trusted OS on the same hardware, and provides protection against certain software attacks and

hardware attacks. ARM TrustZone technology enables the system to be partitioned between the

Normal and Secure worlds. As with the ARMv7-A architecture, the Secure monitor acts as a

gateway for moving between the Normal and Secure worlds.

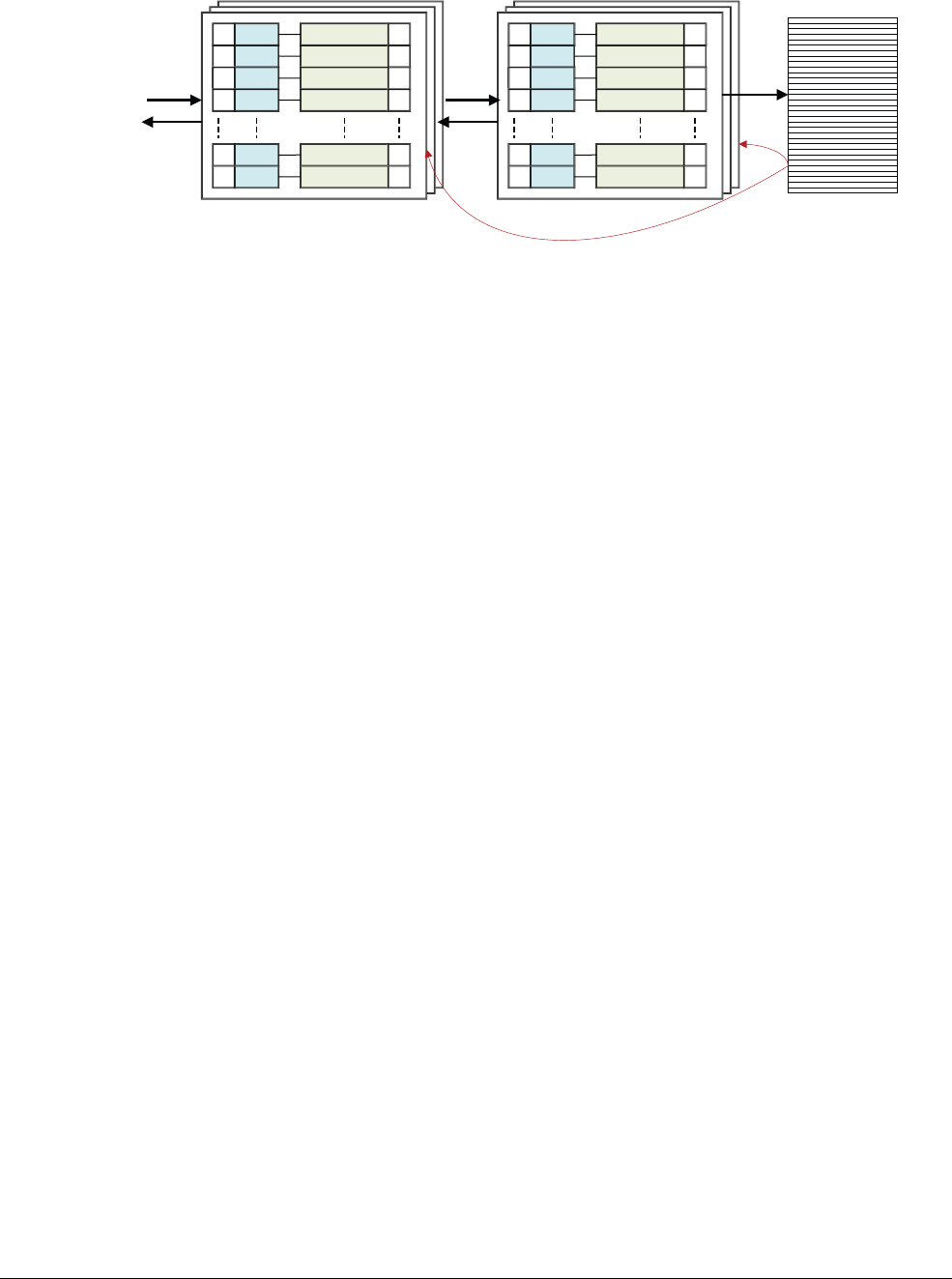

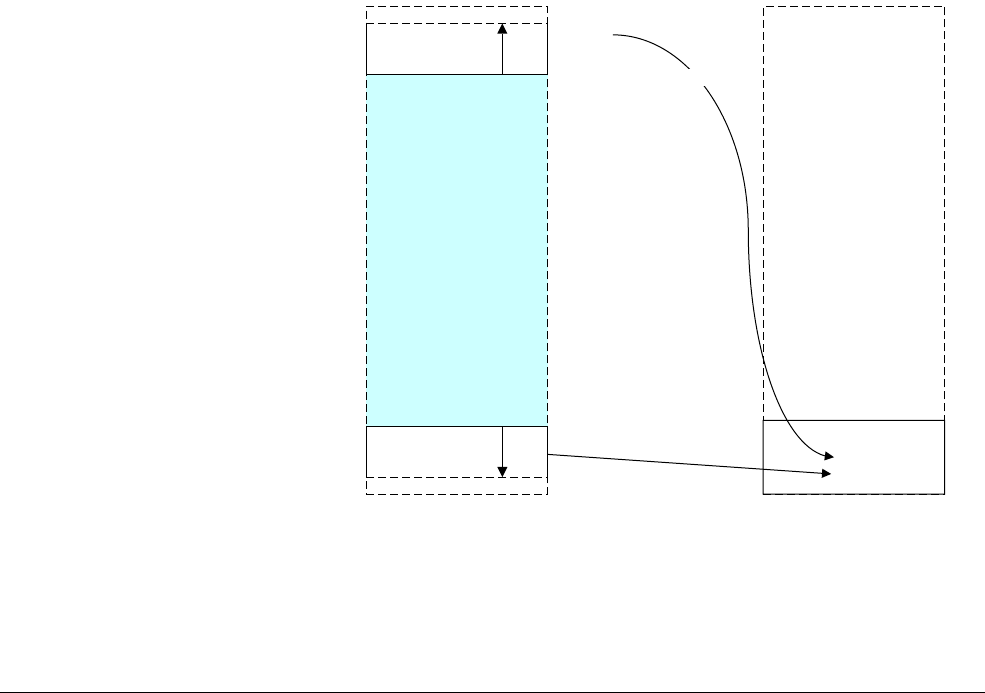

Figure 3-2 ARMv8 Exception levels in the Normal and Secure worlds

ARMv8-A also provides support for virtualization, though only in the Normal world. This

means that hypervisor, or Virtual Machine Manager (VMM) code can run on the system and

host multiple guest operating systems. Each of the guest operating systems is, essentially,

running on a virtual machine. Each OS is then unaware that it is sharing time on the system with

other guest operating systems.

Application

Normal world

EL0

EL1

EL3

EL2

Kernel Kernel

Secure monitor

Application Application Application

Hypervisor

Secure firmwareApplication

Normal world Secure world

Application Application Application

No Hypervisor in

Secure world

EL0

EL1

EL3

EL2

Guest OS Guest OS Trusted OS

Hypervisor

Secure monitor

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-3

ID050815 Non-Confidential

The Normal world (which corresponds to the Non-secure state) has the following privileged

components:

Guest OS kernels

Such kernels include Linux or Windows running in Non-secure EL1. When

running under a hypervisor, the rich OS kernels can be running as a guest or host

depending on the hypervisor model.

Hypervisor

This runs at EL2, which is always Non-secure. The hypervisor, when present and

enabled, provides virtualization services to rich OS kernels.

The Secure world has the following privileged components:

Secure firmware

On an application processor, this firmware must be the first thing that runs at boot

time. It provides several services, including platform initialization, the

installation of the trusted OS, and routing of Secure monitor calls.

Trusted OS

Trusted OS provides Secure services to the Normal world and provides a runtime

environment for executing Secure or trusted applications.

The Secure monitor in the ARMv8 architecture is at a higher Exception level and is more

privileged than all other levels. This provides a logical model of software privilege.

Figure 3-2 on page 3-2 shows that a Secure version of EL2 is not available.

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-4

ID050815 Non-Confidential

3.1 Execution states

The ARMv8 architecture defines two Execution States, AArch64 and AArch32. Each state is

used to describe execution using 64-bit wide general-purpose registers or 32-bit wide

general-purpose registers, respectively. While ARMv8 AArch32 retains the ARMv7 definitions

of privilege, in AArch64, privilege level is determined by the Exception level. Therefore,

execution at ELn corresponds to privilege PLn.

When in AArch64 state, the processor executes the A64 instruction set. When in AArch32 state,

the processor can execute either the A32 (called ARM in earlier versions of the architecture) or

the T32 (Thumb) instruction set.

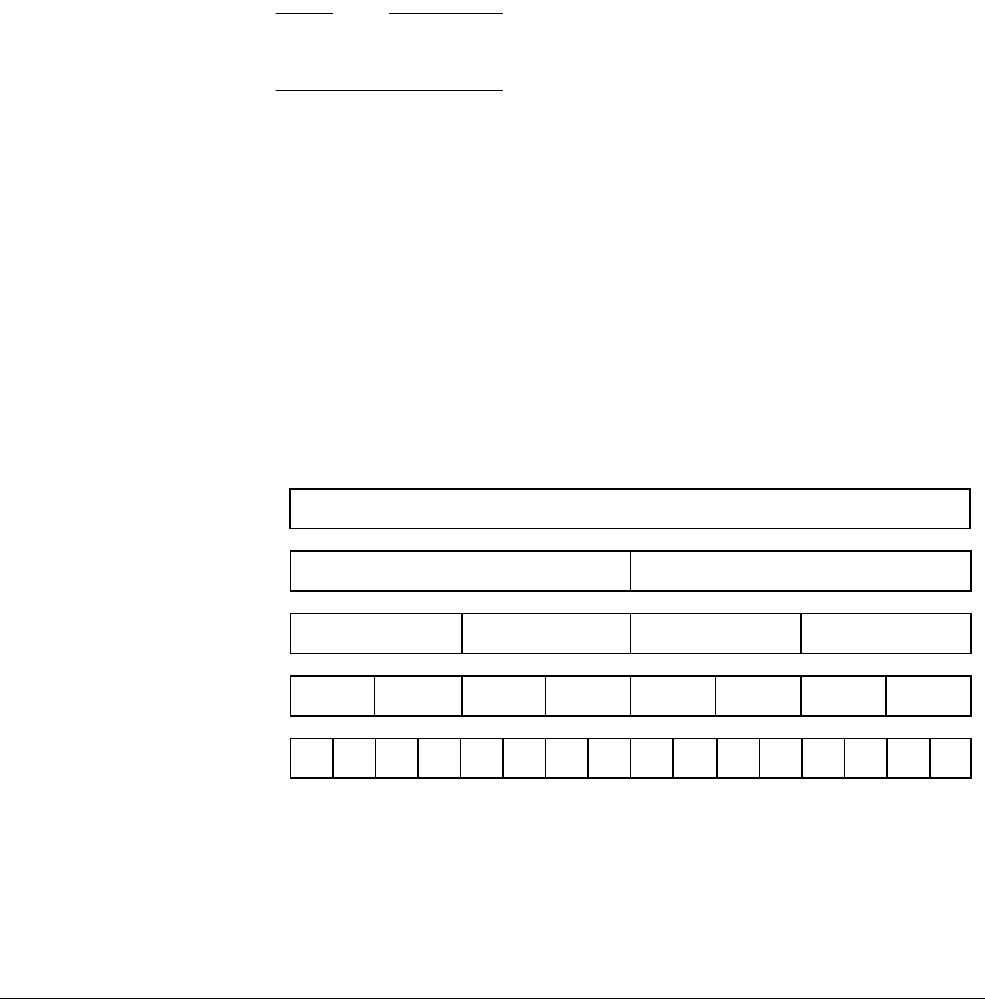

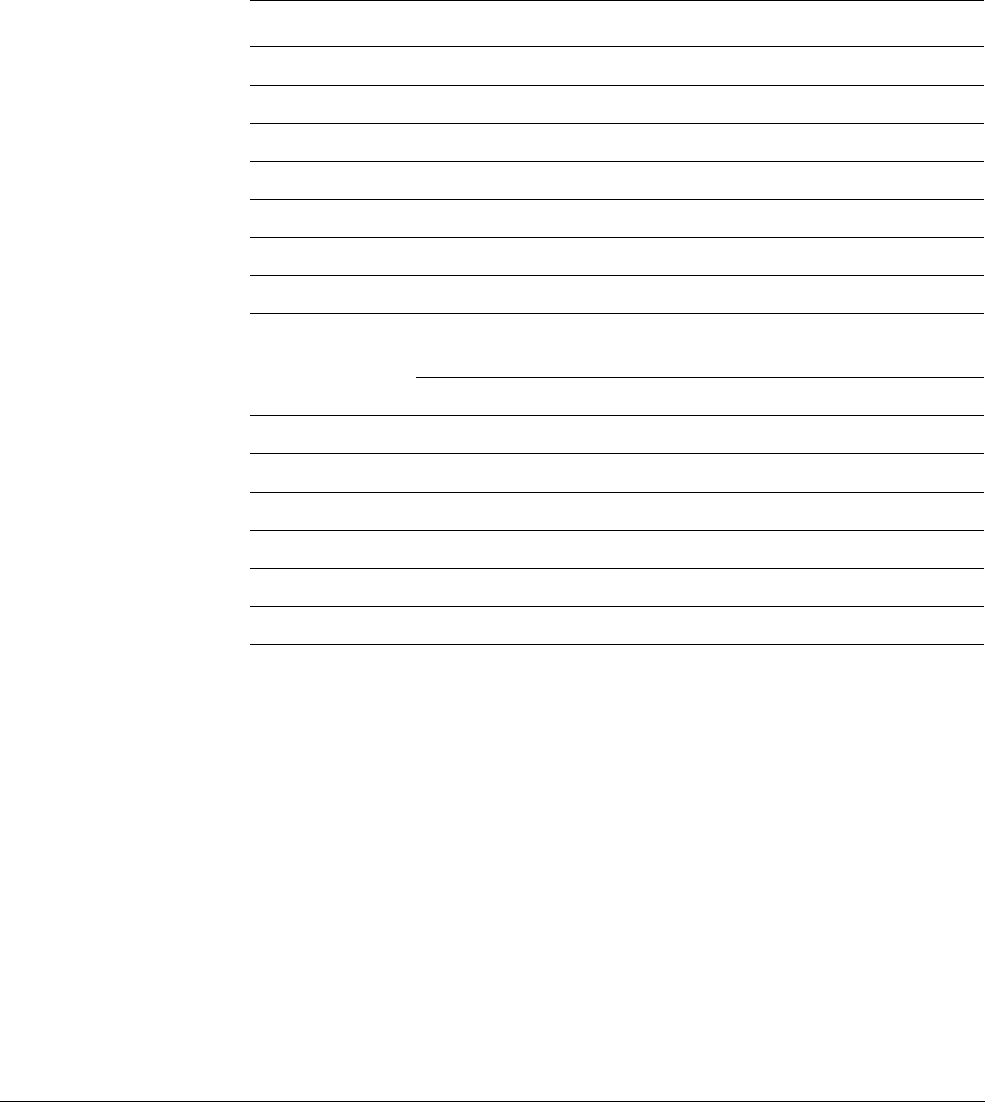

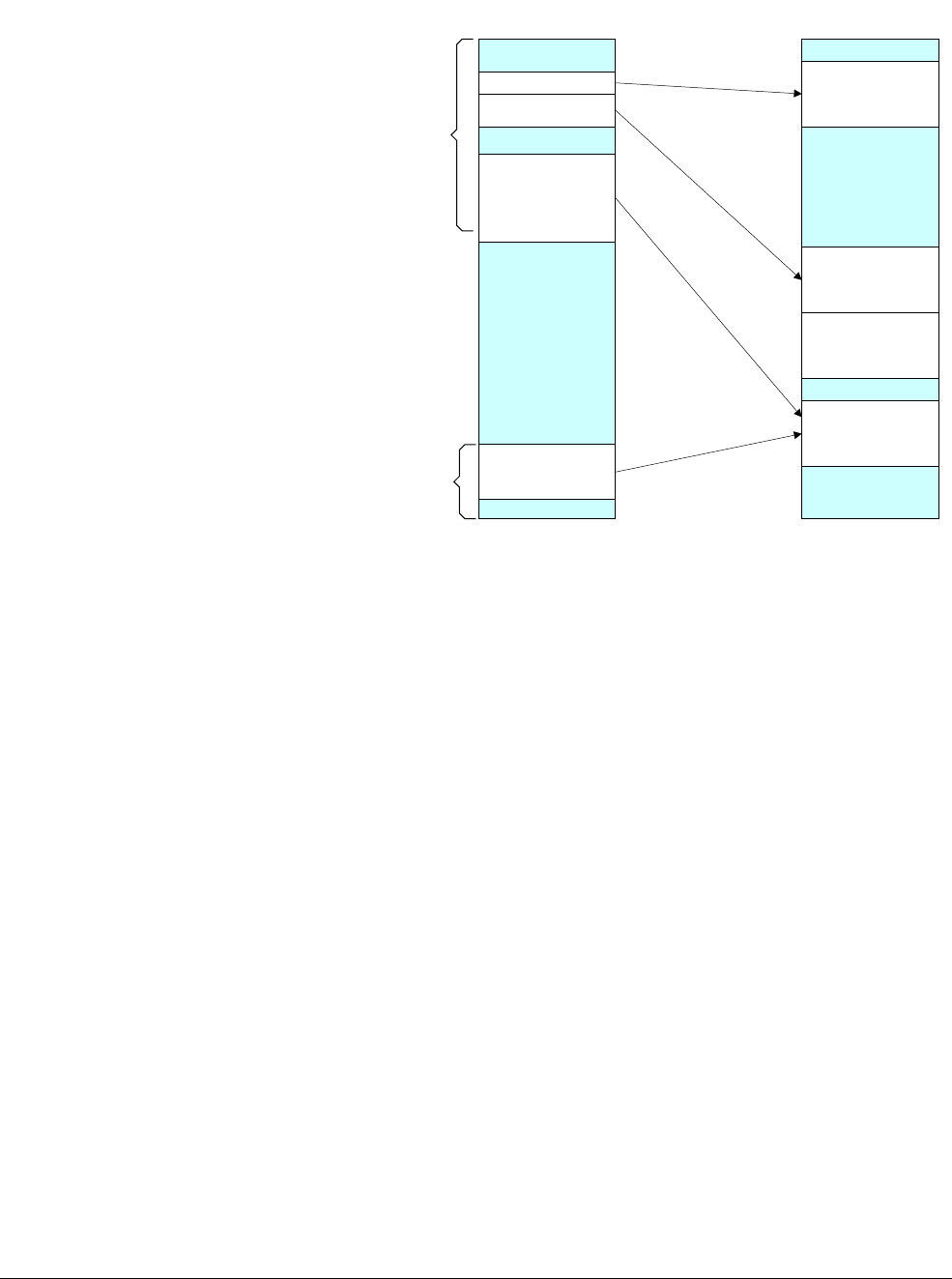

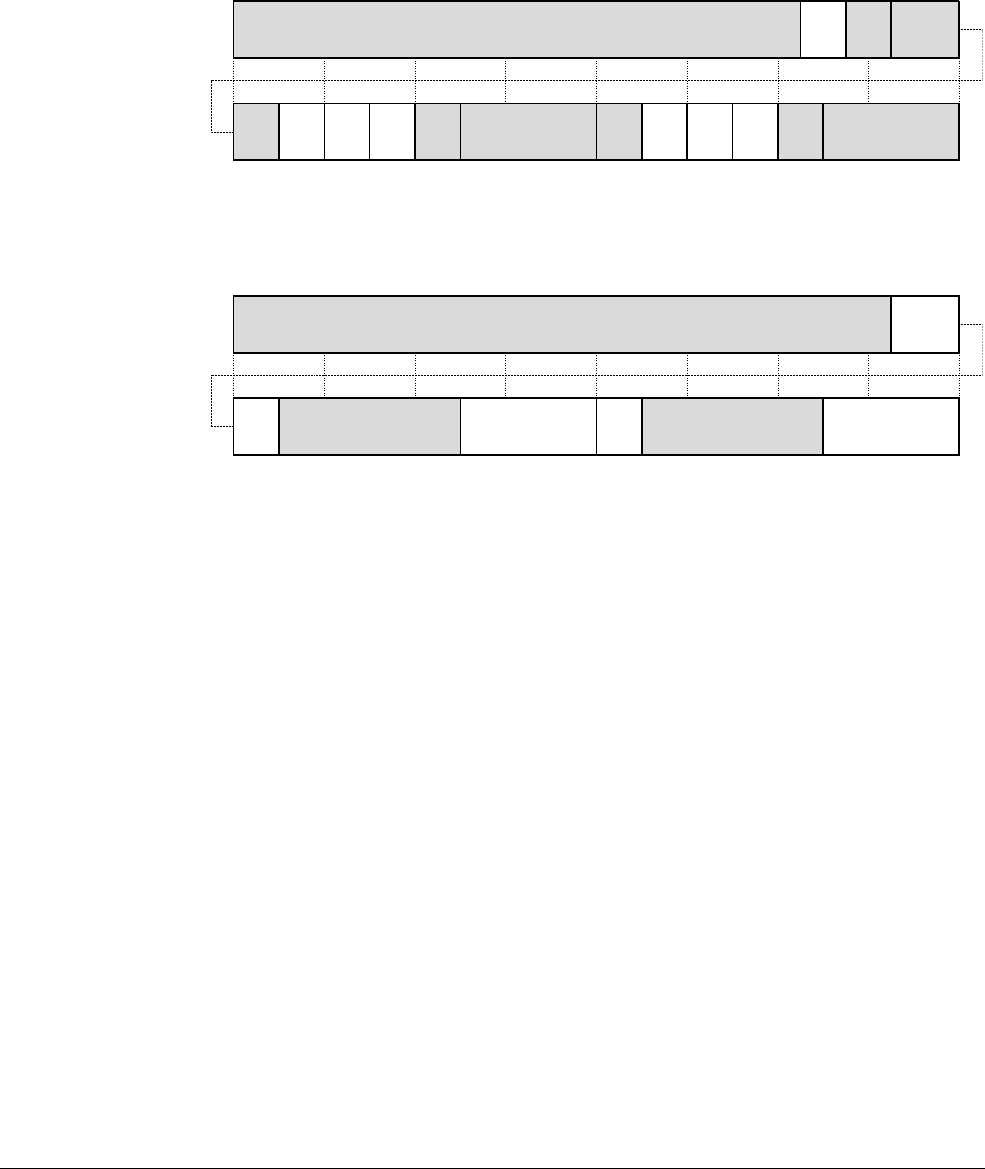

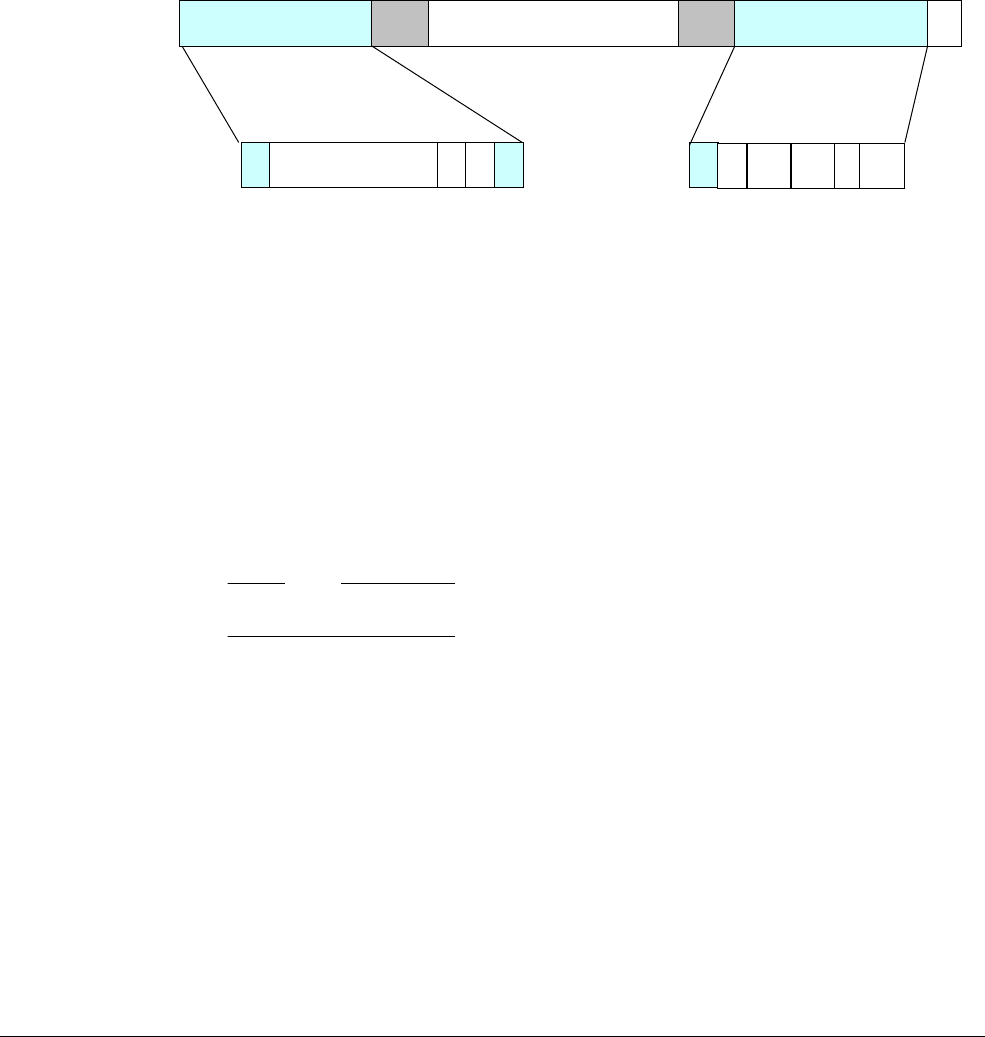

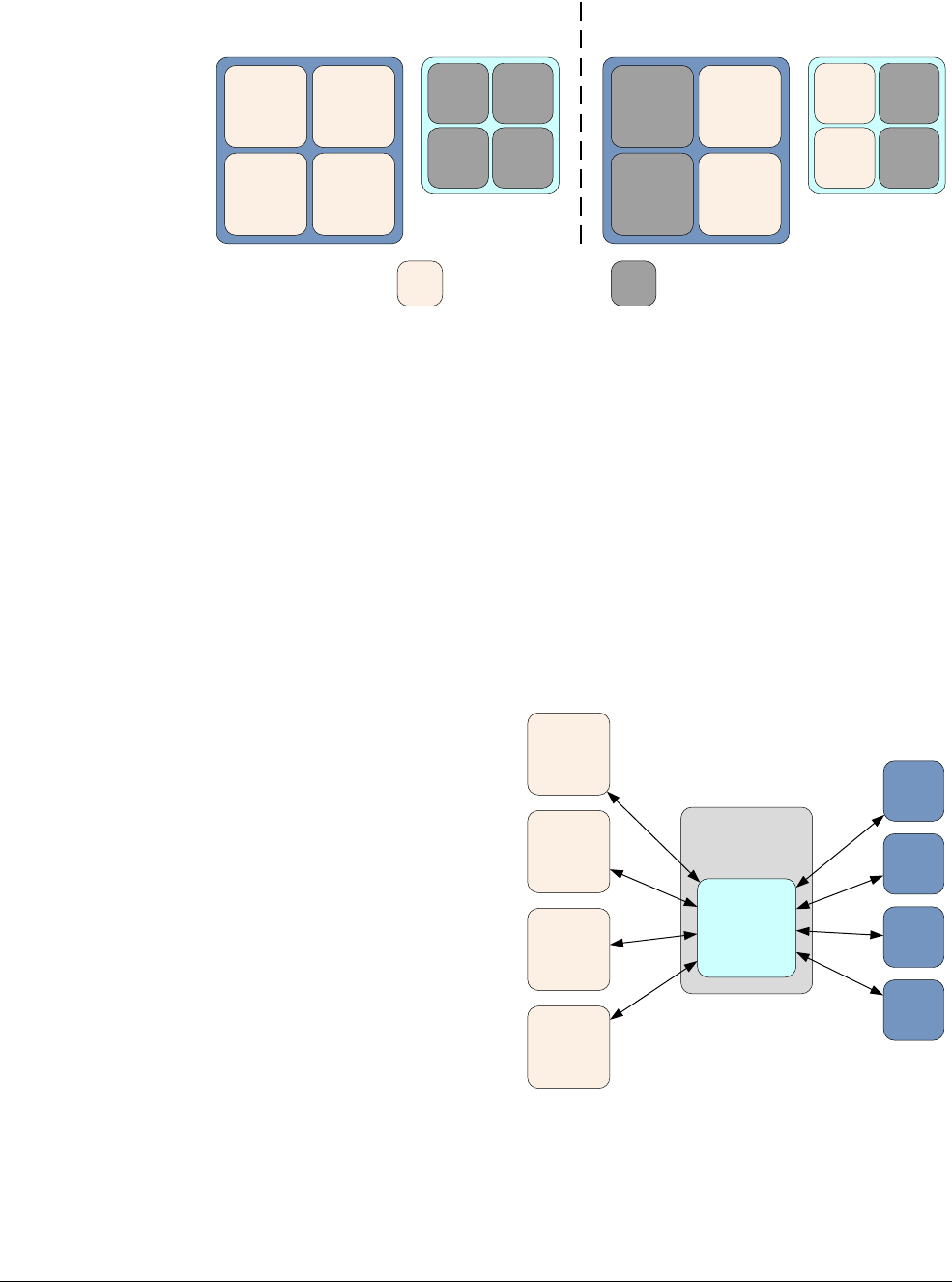

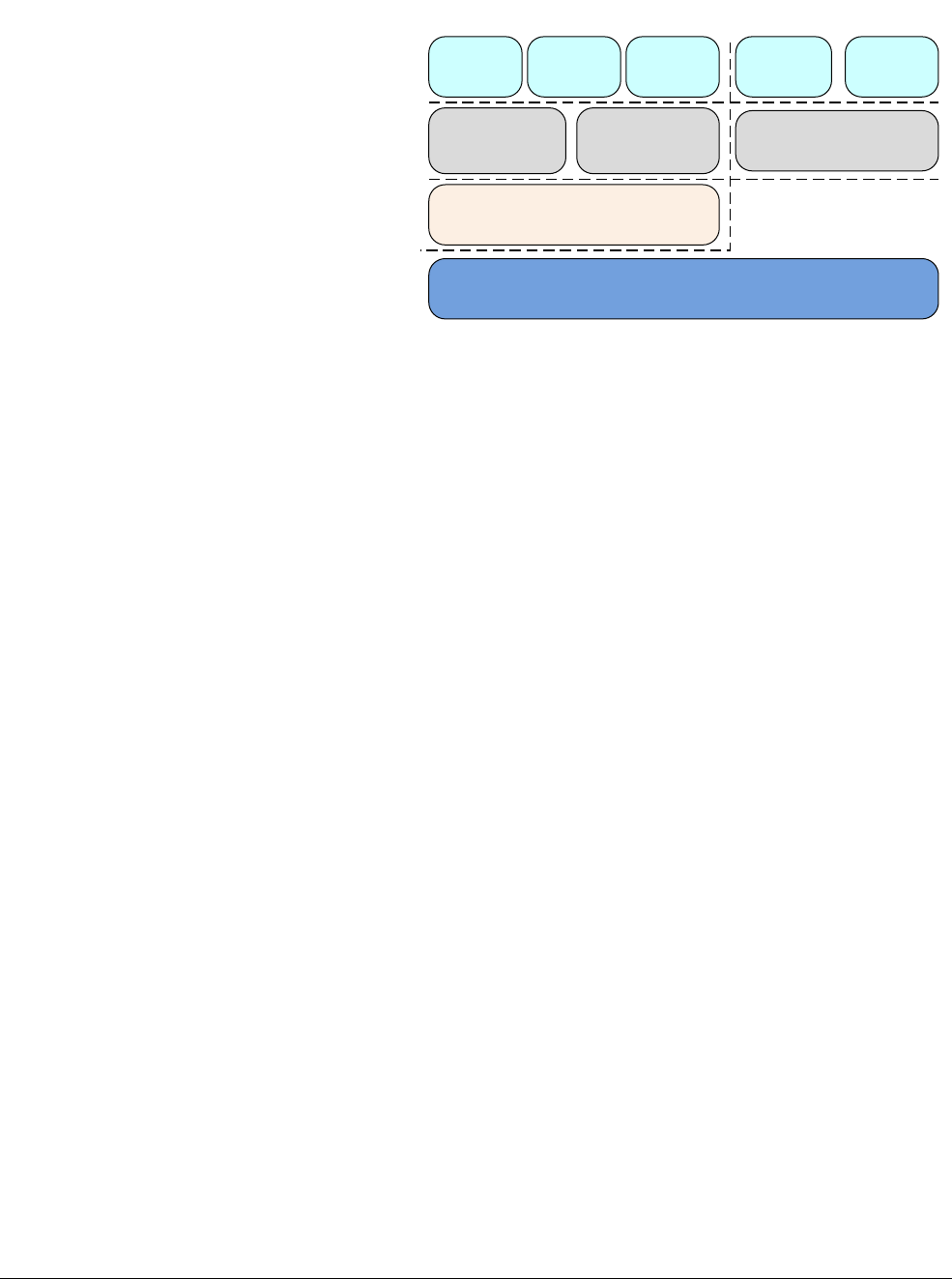

The following diagrams show the organization of the Exception levels in AArch64 and

AArch32.

In AArch64:

Figure 3-3 Exception levels in AArch64

In AArch32:

Figure 3-4 Exception levels in AArch32

In AArch32 state, Trusted OS software executes in Secure EL3, and in AArch64 state it

primarily executes in Secure EL1.

Secure firmwareApplication

Normal world Secure world

Application Application Application

No Hypervisor in

Secure world

EL0

EL1

EL3

EL2

Guest OS Guest OS Trusted OS

Hypervisor

Secure monitor

Secure firmwareApplication

Normal world Secure world

Application Application Application

No EL2 in Secure

world

EL0

EL1

EL3

EL2

Guest OS Guest OS

Trusted kernel

(operates at EL3)

Hypervisor

Secure monitor

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-5

ID050815 Non-Confidential

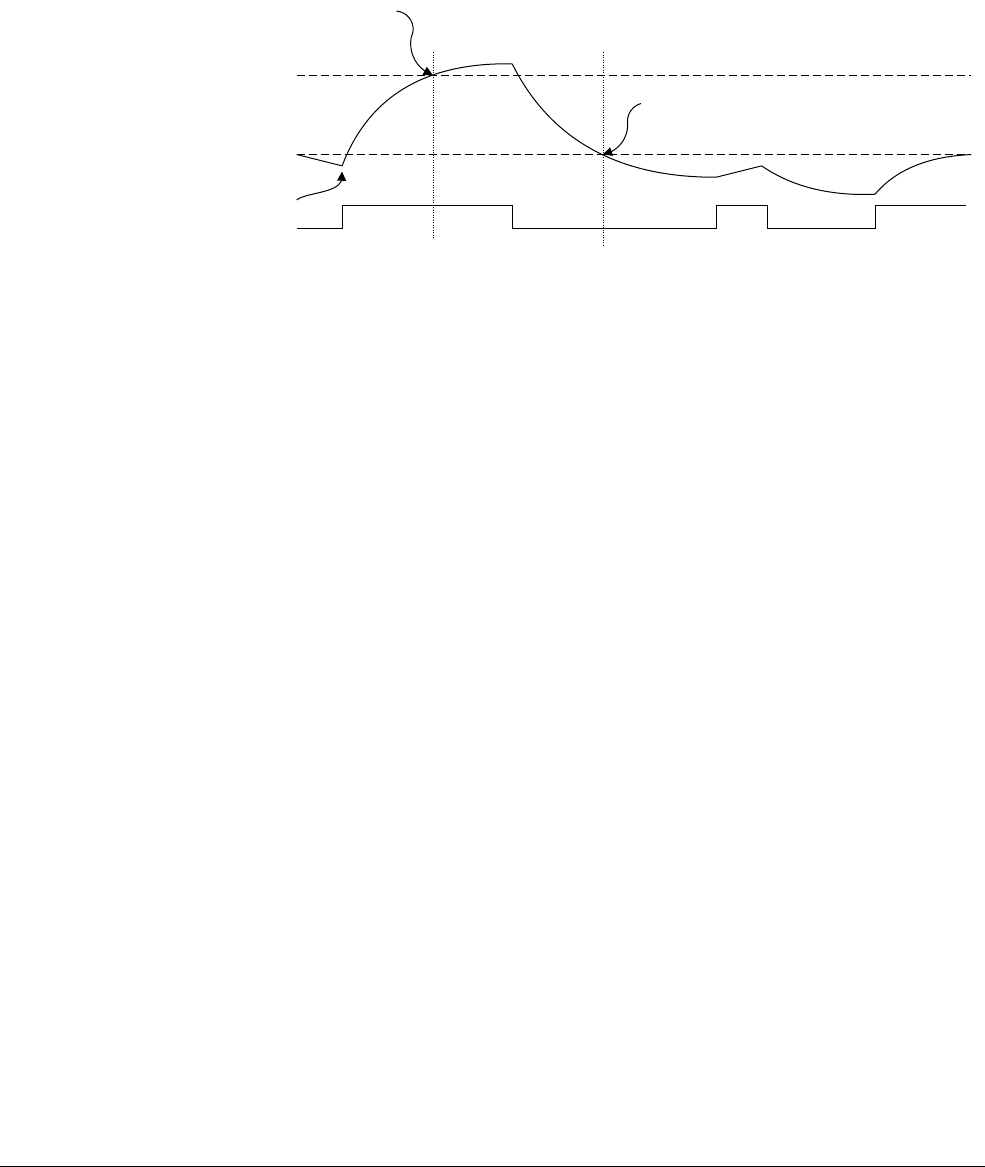

3.2 Changing Exception levels

In the ARMv7 architecture, the processor mode can change under privileged software control

or automatically when taking an exception. When an exception occurs, the core saves the

current execution state and the return address, enters the required mode, and possibly disables

hardware interrupts.

This is summarized in the following table. Applications operate at the lowest level of privilege,

PL0, previously unprivileged mode. Operating systems run at PL1, and the Hypervisor in a

system with the Virtualization extensions at PL2. The Secure monitor, which acts as a gateway

for moving between the Secure and Non-secure (Normal) worlds, also operates at PL1.

Table 3-1 ARMv7 processor modes

Mode Function Security

state

Privilege

level

User (USR) Unprivileged mode in which most applications run Both PL0

FIQ Entered on an FIQ interrupt exception Both PL1

IRQ Entered on an IRQ interrupt exception Both PL1

Supervisor

(SVC)

Entered on reset or when a Supervisor Call instruction (

SVC

)

is executed

Both PL1

Monitor (MON) Entered when the

SMC

instruction (Secure Monitor Call) is

executed or when the processor takes an exception which is

configured for secure handling.

Provided to support switching between Secure and

Non-secure states.

Secure only PL1

Abort (ABT) Entered on a memory access exception Both PL1

Undef (UND) Entered when an undefined instruction is executed Both PL1

System (SYS) Privileged mode, sharing the register view with User mode Both PL1

Hyp (HYP) Entered by the Hypervisor Call and Hyp Trap exceptions. Non-secure only PL2

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-6

ID050815 Non-Confidential

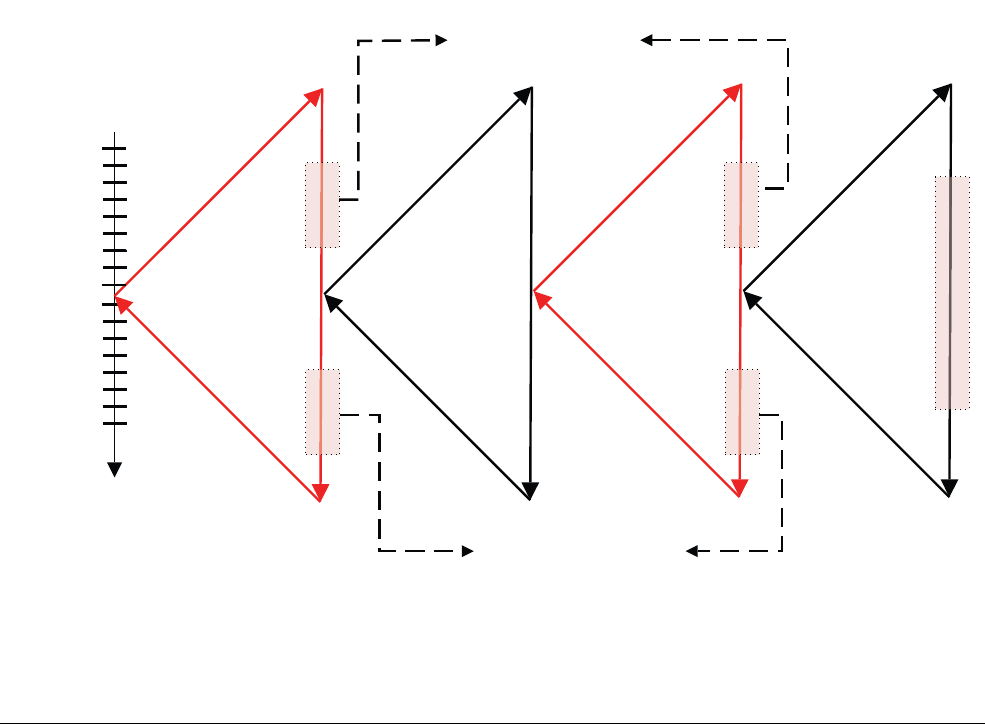

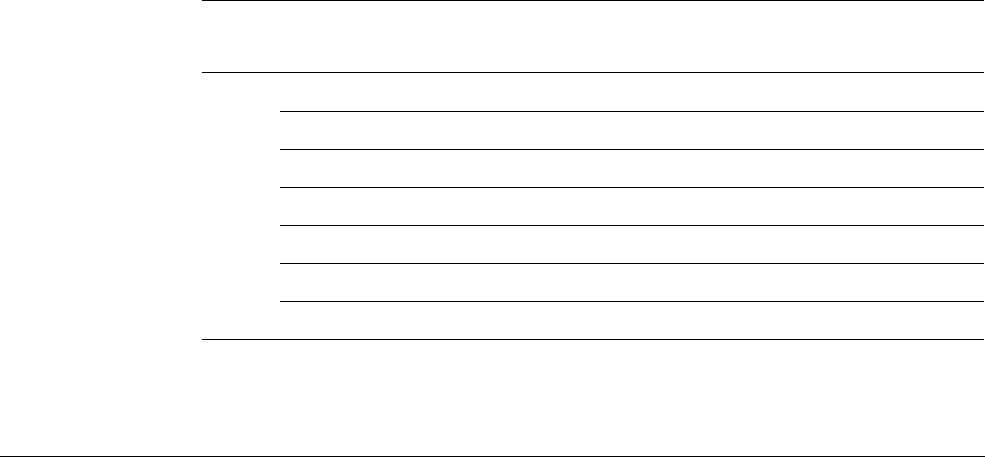

Figure 3-5 ARMv7 privilege levels

In AArch64, the processor modes are mapped onto the Exception levels as in Figure 3-6. As in

ARMv7 (AArch32) when an exception is taken, the processor changes to the Exception level

(mode) that supports the handling of the exception.

Figure 3-6 AArch32 processor modes

Movement between Exception levels follows these rules:

• Moves to a higher Exception level, such as from EL0 to EL1, indicate increased software

execution privilege.

• An exception cannot be taken to a lower Exception level.

• There is no exception handling at level EL0, exceptions must be handled at a higher

Exception level.

Secure PL0

USER mode

Secure state

Secure PL1

System mode (SYS)

Supervisor mode (SVC)

FIQ mode

IRQ mode

Undef (UND) mode

Abort (ABT) mode

Secure PL1

Monitor mode (MON)

Non-secure PL0

USER mode

Non-secure state

Non-secure PL1

System mode (SYS)

Supervisor mode (SVC)

FIQ mode

IRQ mode

Undef (UND) mode

Abort (ABT) mode

Non-secure PL2

Hyp mode

Secure firmwareApplication

Normal world Secure world

User

SVC, ABT, IRQ,

FIQ, UND, SYS

Hyp

Mon

Application Application Application

No Hypervisor in

Secure world

Guest OS Guest OS Trusted OS

Hypervisor

Secure monitor

EL1

EL2

EL0

EL3

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-7

ID050815 Non-Confidential

• An exception causes a change of program flow. Execution of an exception handler starts,

at an Exception level higher than EL0, from a defined vector that relates to the exception

taken. Exceptions include:

— Interrupts such as IRQ and FIQ.

— Memory system aborts.

— Undefined instructions.

— System calls. These permit unprivileged software to make a system call to an

operating system.

— Secure monitor or hypervisor traps.

• Ending exception handling and returning to the previous Exception level is performed by

executing the

ERET

instruction.

• Returning from an exception can stay at the same Exception level or enter a lower

Exception level. It cannot move to a higher Exception level.

• The security state does change with a change of Exception level, except when retuning

from EL3 to a Non-secure state. See Switching between Secure and Non-secure state on

page 17-8.

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-8

ID050815 Non-Confidential

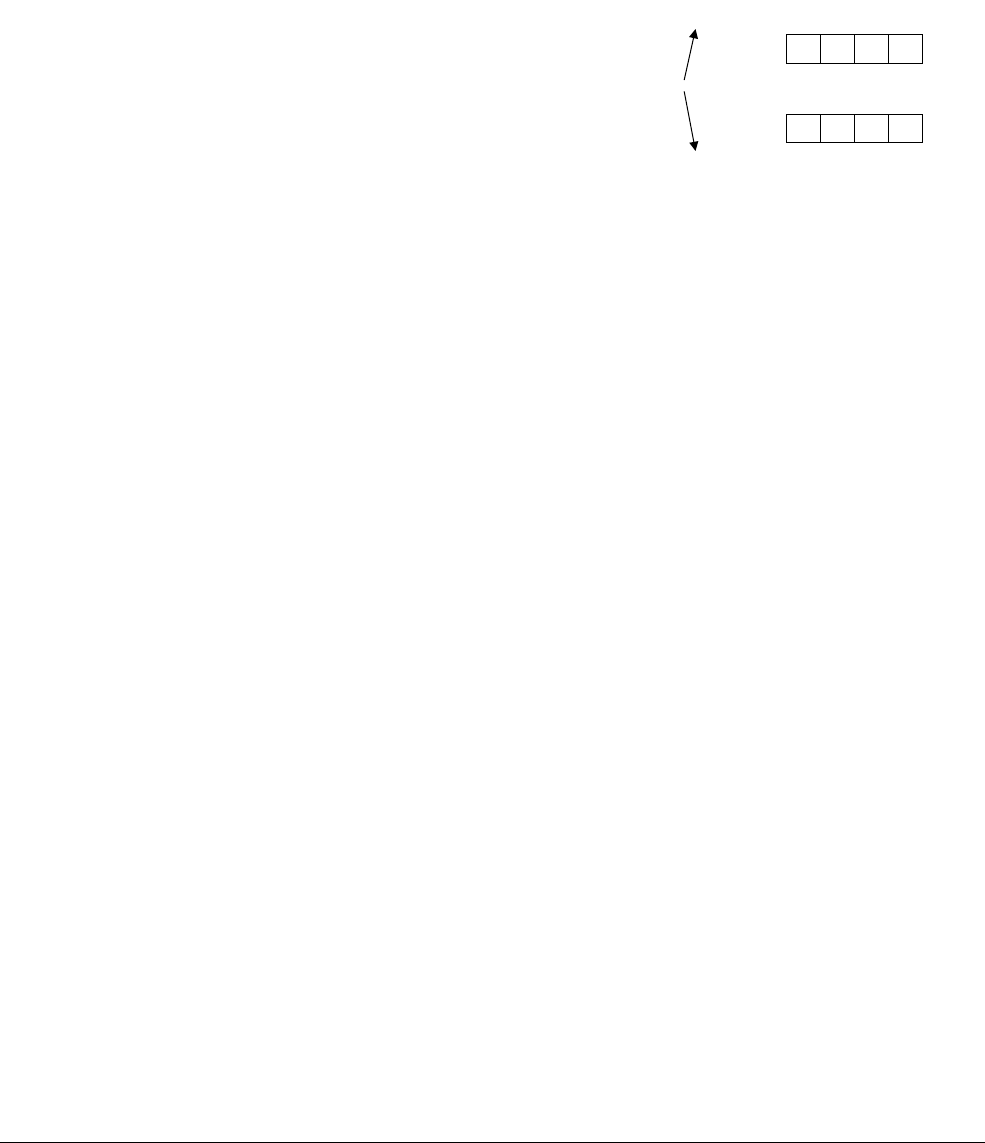

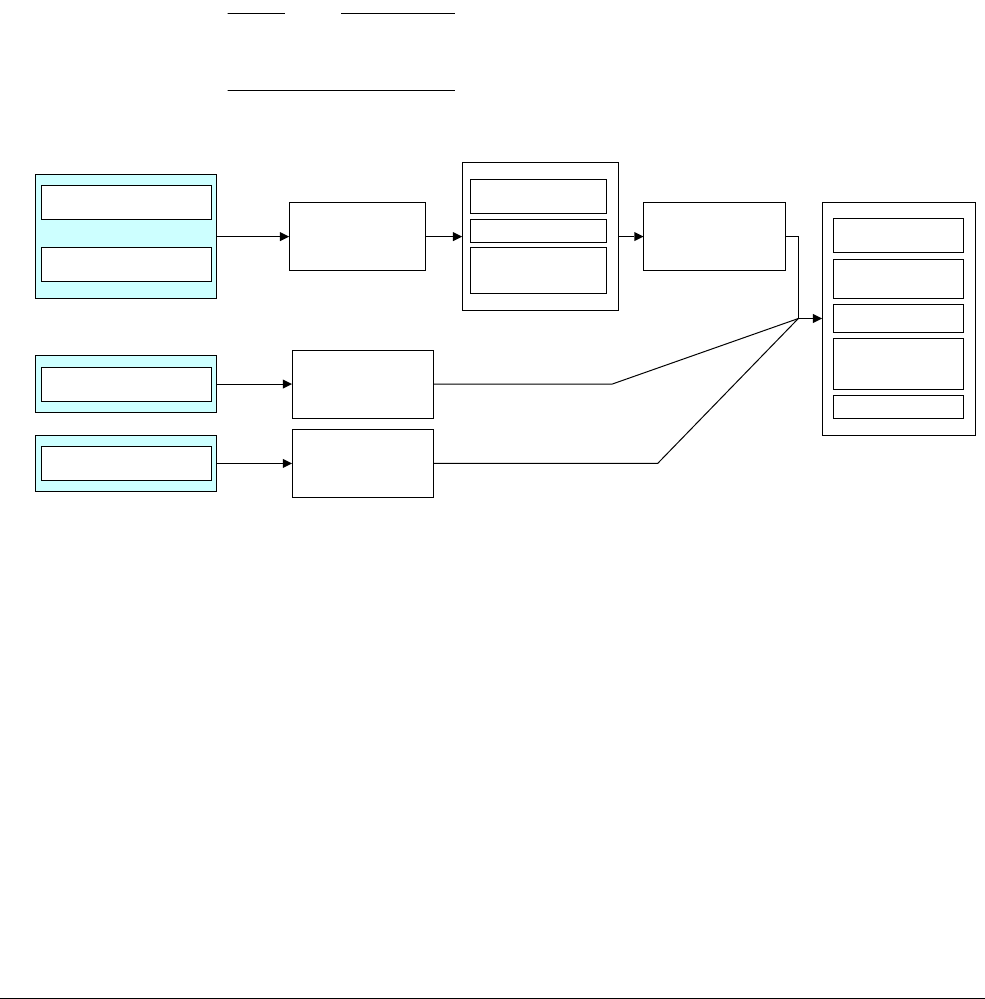

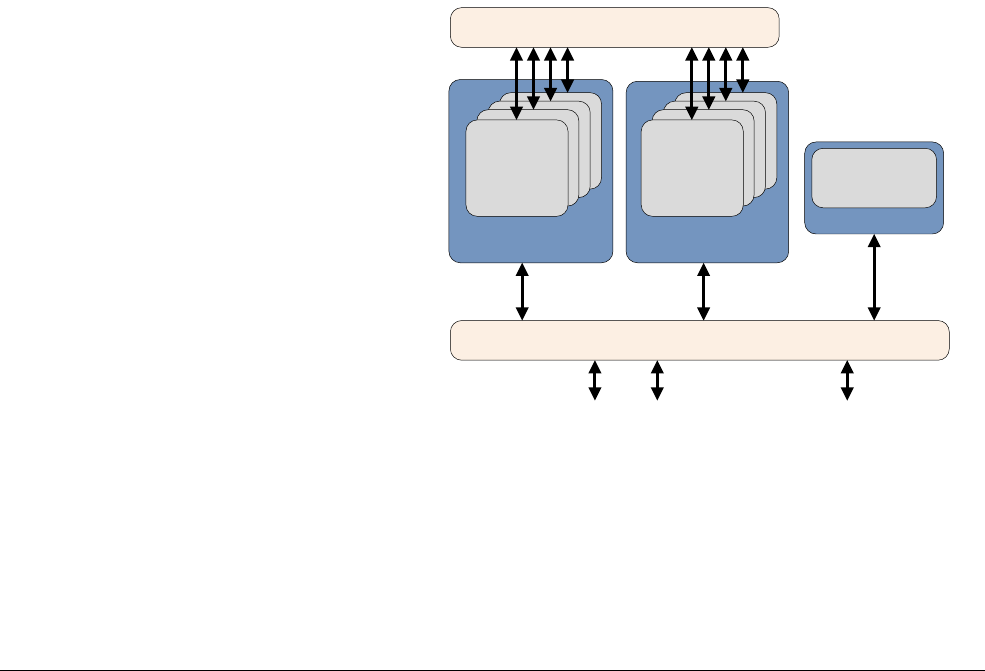

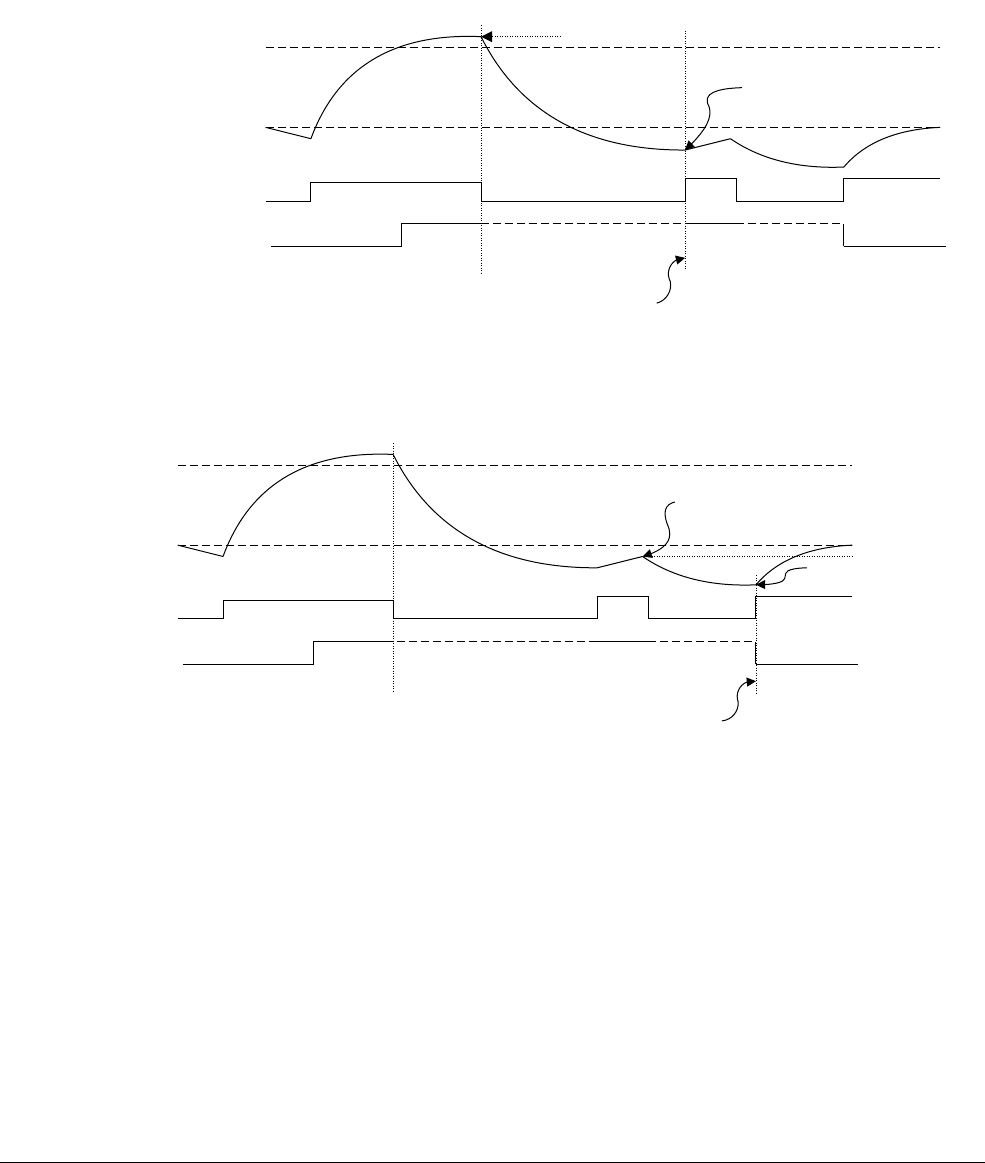

3.3 Changing execution state

There are times when you must change the execution state of your system. This could be, for

example, if you are running a 64-bit operating system, and want to run a 32-bit application at

EL0. To do this, the system must change to AArch32.

When the application has completed or execution returns to the OS, the system can switch back

to AArch64. Figure 3-7 on page 3-9 shows that you cannot do it the other way around. An

AArch32 operating system cannot host a 64-bit application.

To change between execution states at the same Exception level, you have to switch to a higher

Exception level then return to the original Exception level. For example, you might have 32-bit

and 64-bit applications running under a 64-bit OS. In this case, the 32-bit application can

execute and generate a Supervisor Call (

SVC

) instruction, or receive an interrupt, causing a

switch to EL1 and AArch64. (See Exception handling instructions on page 6-21.) The OS can

then do a task switch and return to EL0 in AArch64. Practically speaking, this means that you

cannot have a mixed 32-bit and 64-bit application, because there is no direct way of calling

between them.

You can only change execution state by changing Exception level. Taking an exception might

change from AArch32 to AArch64, and returning from an exception may change from AArch64

to AArch32.

Code at EL3 cannot take an exception to a higher exception level, so cannot change execution

state, except by going through a reset.

The following is a summary of some of the points when changing between AArch64 and

AArch32 execution states:

• Both AArch64 and AArch32 execution states have Exception levels that are generally

similar, but there are some differences between Secure and Non-secure operation. The

execution state the processor is in when the exception is generated can limit the Exception

levels available to the other execution state.

• Changing to AArch32 requires going from a higher to a lower Exception level. This is the

result of exiting an exception handler by executing the

ERET

instruction. See Exception

handling instructions on page 6-21.

• Changing to AArch64 requires going from a lower to a higher Exception level. The

exception can be the result of an instruction execution or an external signal.

• If, when taking an exception or returning from an exception, the Exception level remains

the same, the execution state cannot change.

• Where an ARMv8 processor operates in AArch32 execution state at a particular

Exception level, it uses the same exception model as in ARMv7 for exceptions taken to

that Exception level. In the AArch64 execution state, it uses the exception handling model

described in Chapter 10 AArch64 Exception Handling.

Interworking between the two states is therefore performed at the level of the Secure monitor,

hypervisor or operating system. A hypervisor or operating system executing in AArch64 state

can support AArch32 operation at lower privilege levels. This means that an OS running in

AArch64 can host both AArch32 and AArch64 applications. Similarly, an AArch64 hypervisor

can host both AArch32 and AArch64 guest operating systems. However, a 32-bit operating

system cannot host a 64-bit application and a 32-bit hypervisor cannot host a 64-bit guest

operating system.

Fundamentals of ARMv8

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 3-9

ID050815 Non-Confidential

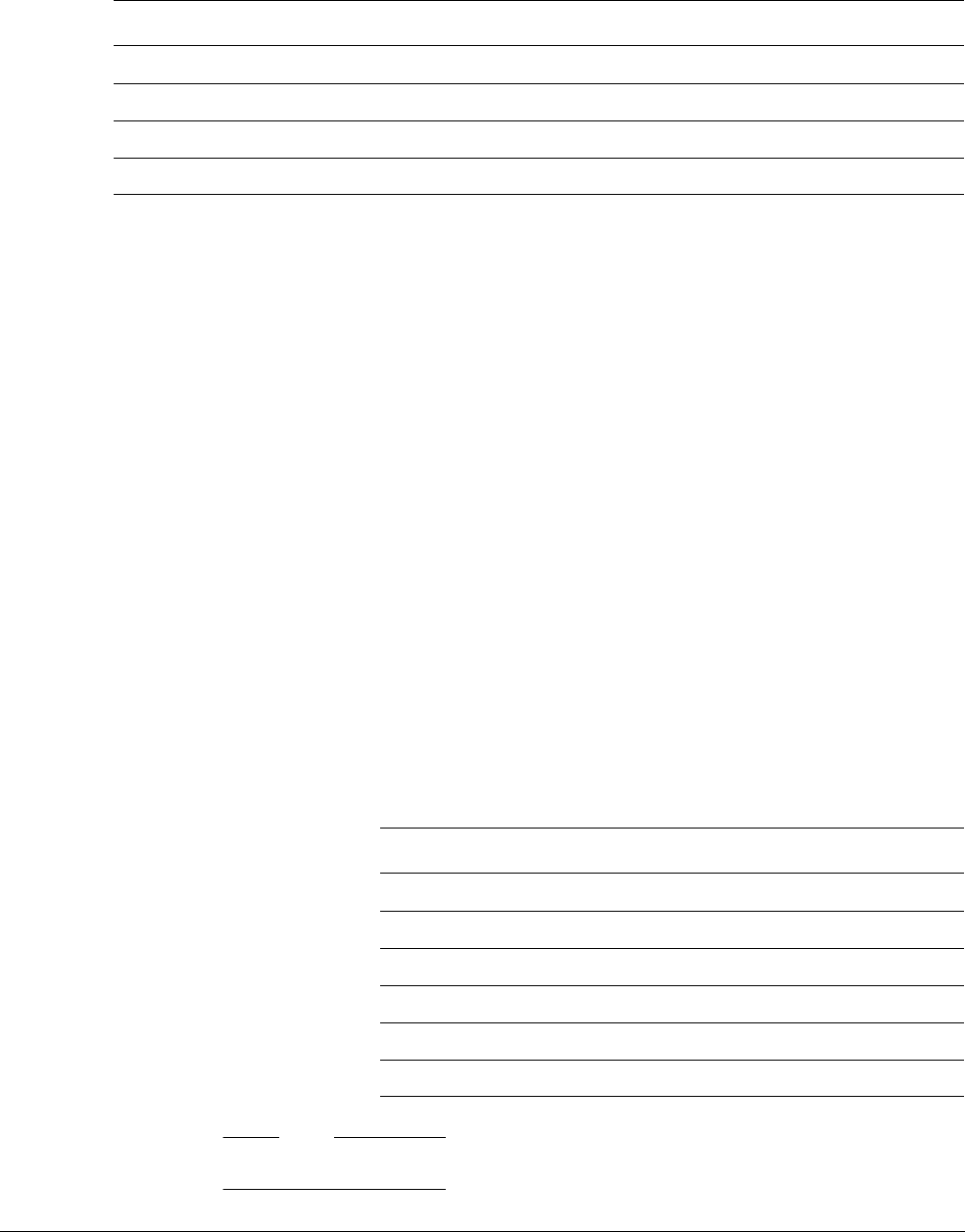

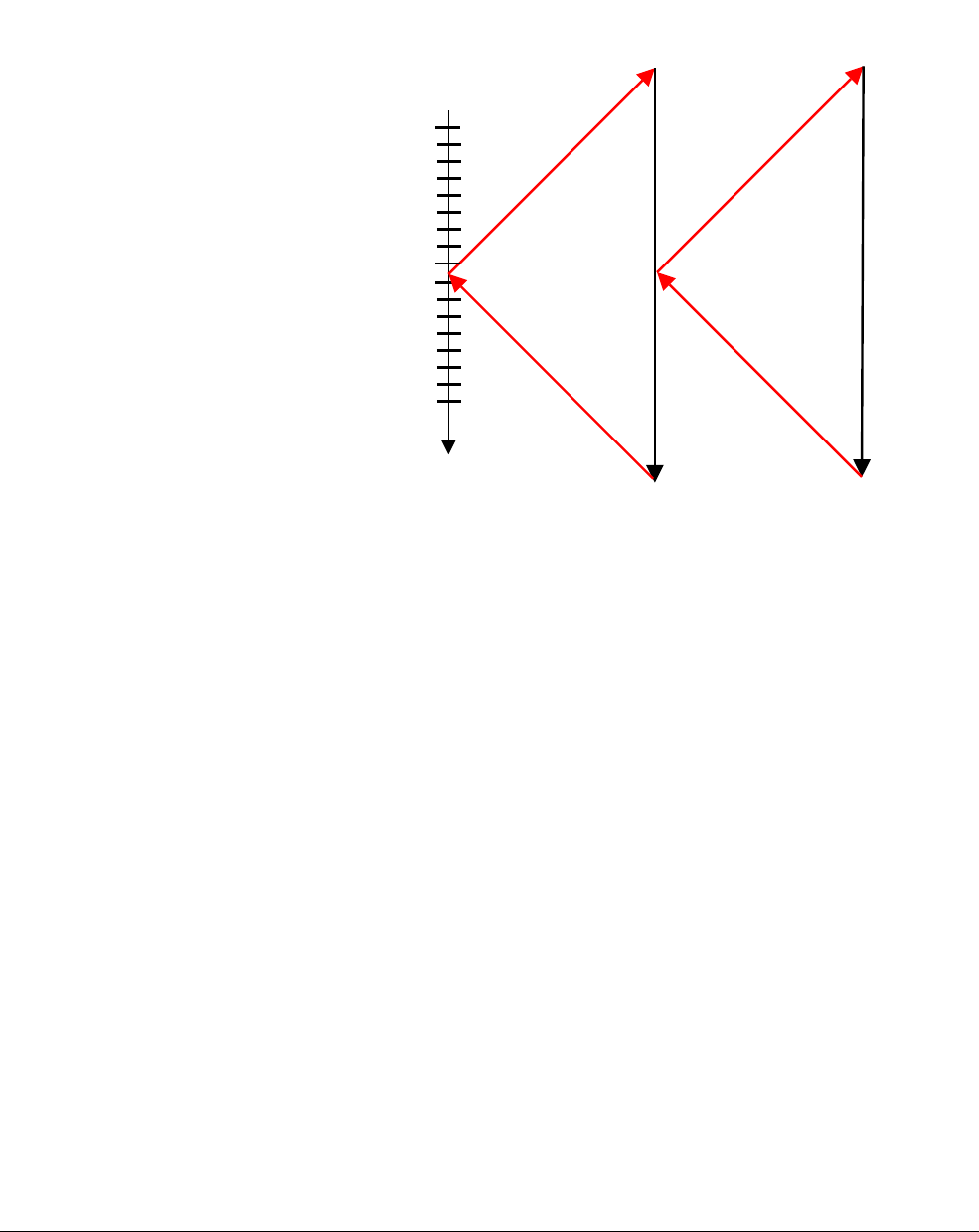

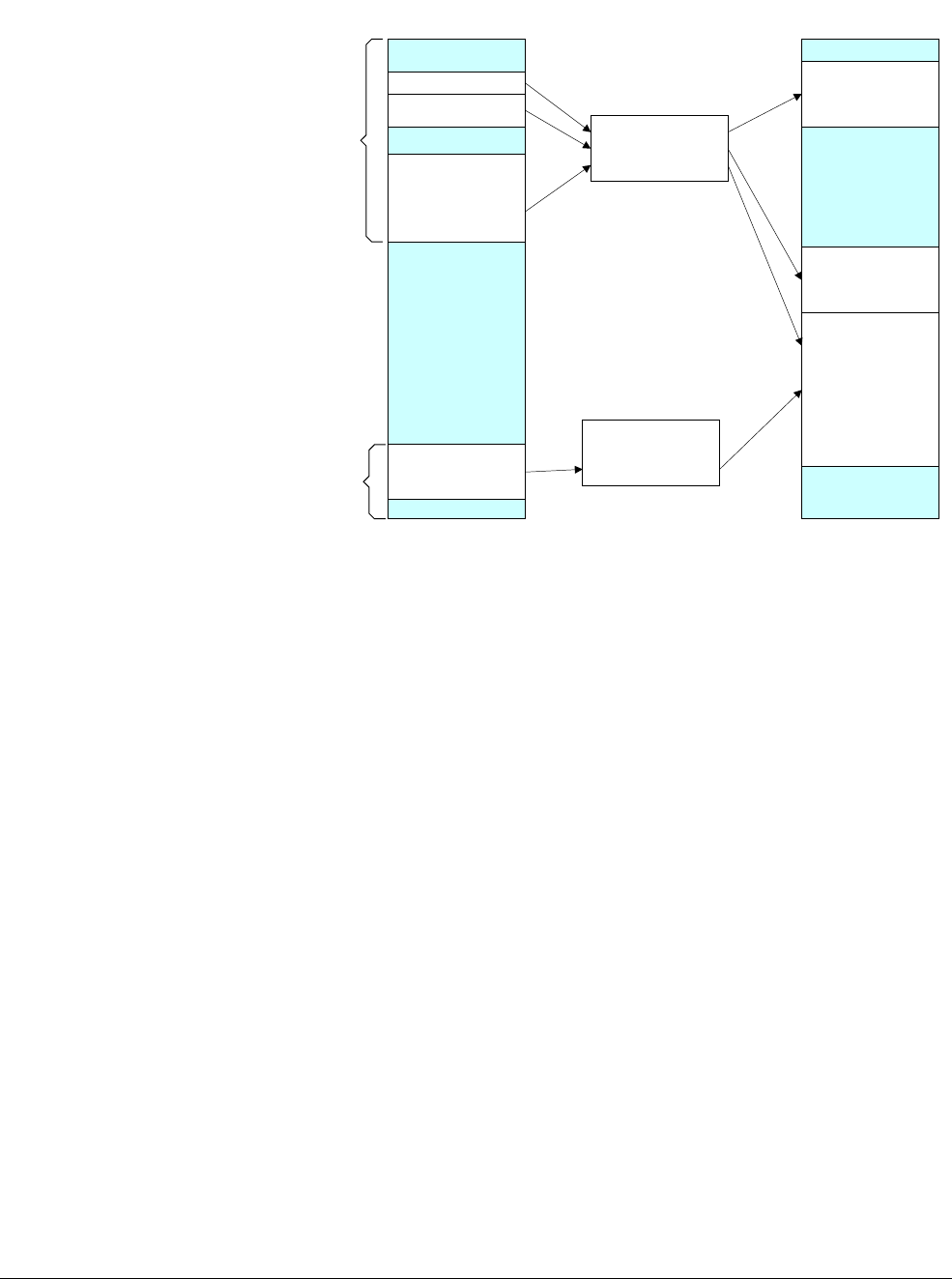

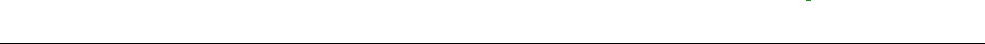

Figure 3-7 Moving between AArch32 and AArch64

For the highest implemented Exception level (EL3 on the Cortex-A53 and Cortex-A57

processors), which execution state to use for each Exception level when taking an exception is

fixed. The Exception level can only be changed by resetting the processor. For EL2 and EL1, it

is controlled by the System registers on page 4-7.

AArch64

App

EL0

EL1

EL2

An AArch64

OS can host

a mix of

AArch64

and AArch32

applications

An AArch32

OS cannot host

an AArch64

application

An AArch32

hypervisor

cannot host

an AArch64 OS

An AArch64

hypervisor

can host

an AArch64 and

AArch32 OS

AArch64 OS AArch32 OS

Hypervisor

AArch32

App

AArch32

App

AArch64

App

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 4-1

ID050815 Non-Confidential

Chapter 4

ARMv8 Registers

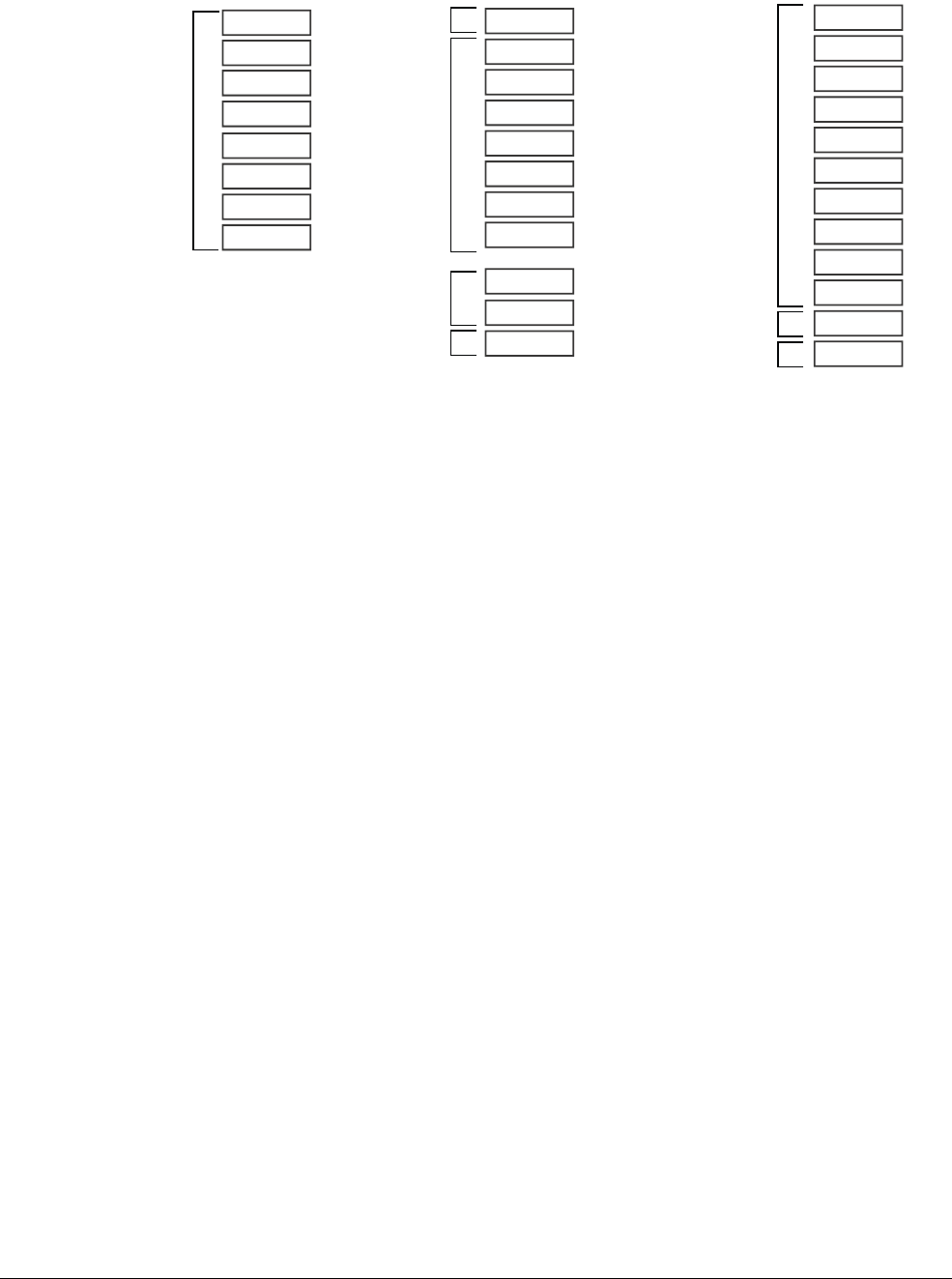

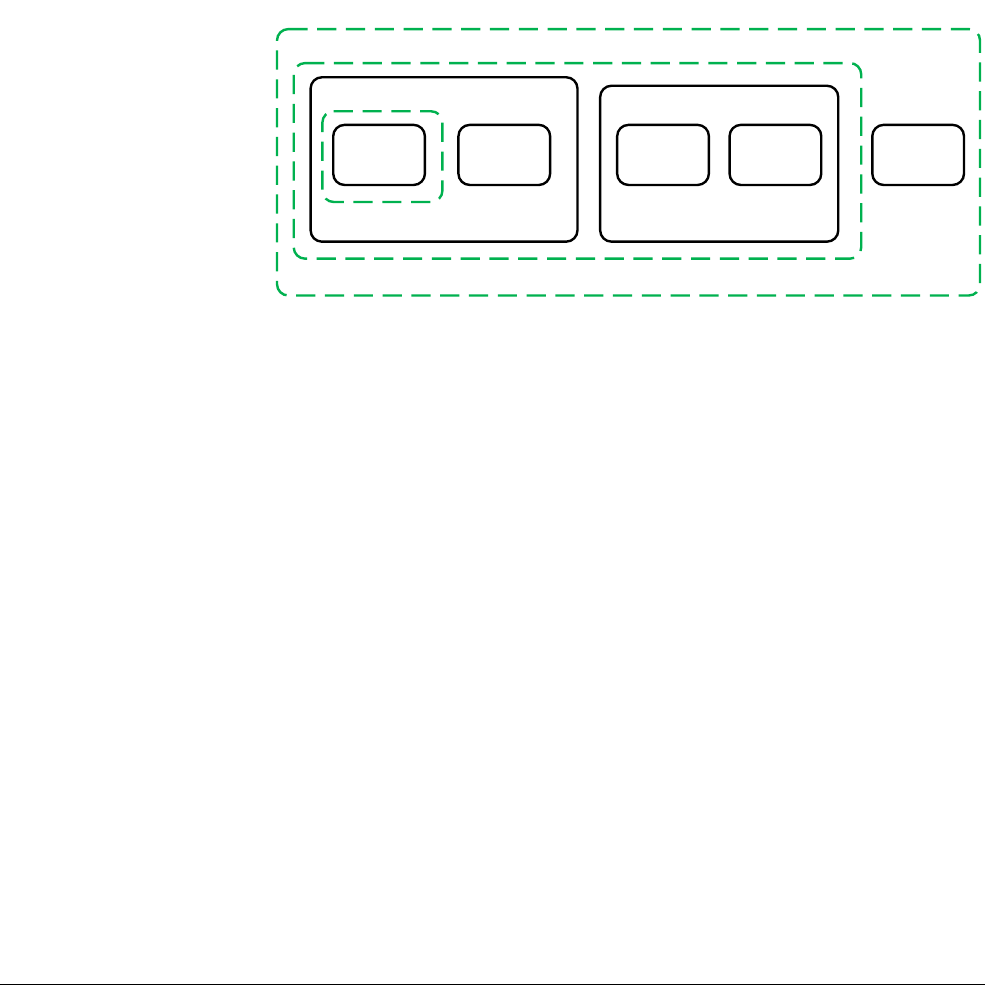

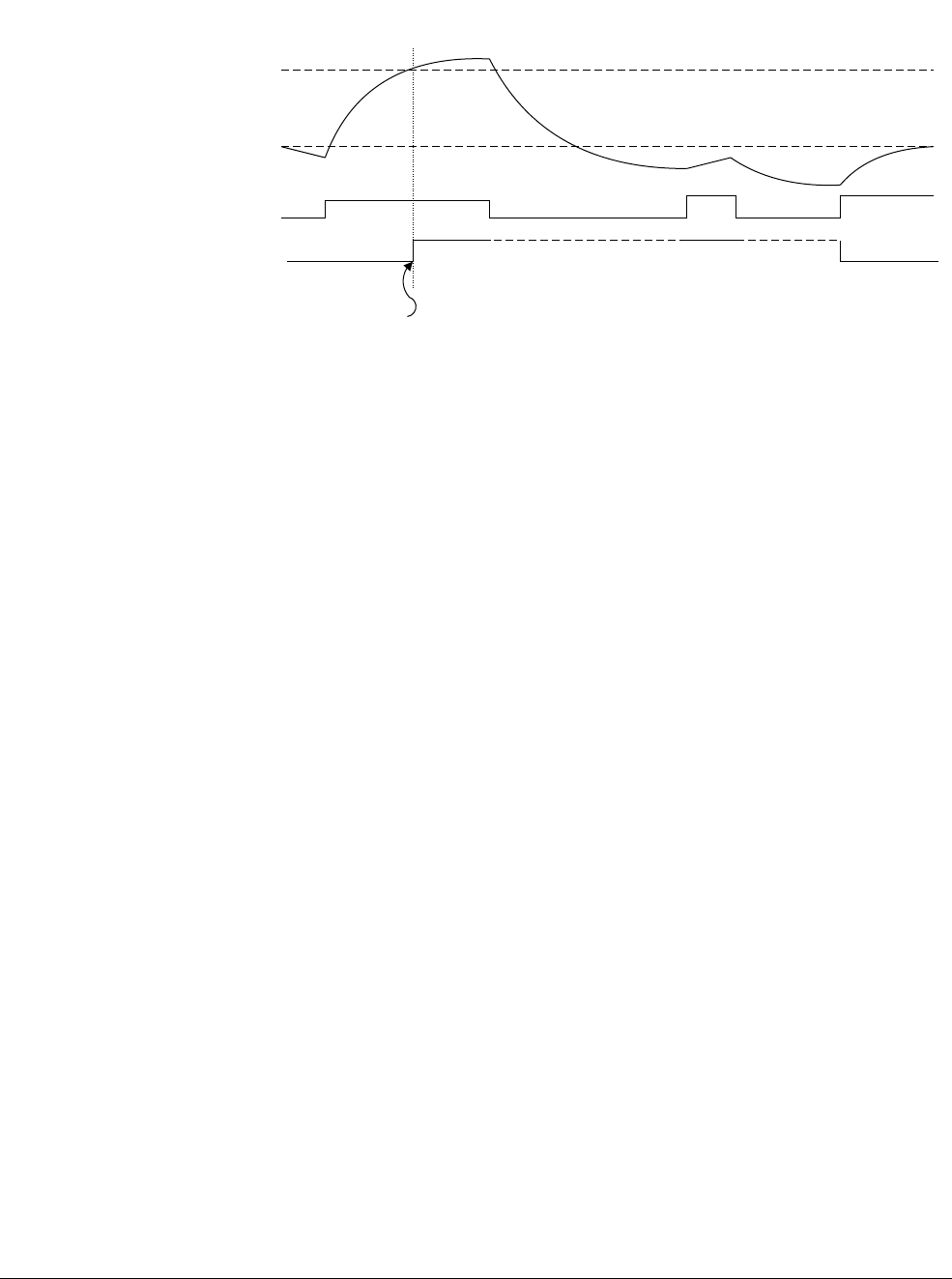

The AArch64 execution state provides 31 × 64-bit general-purpose registers accessible at all

times and in all Exception levels.

Each register is 64 bits wide and they are generally referred to as registers X0-X30.

ARMv8 Registers

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 4-2

ID050815 Non-Confidential

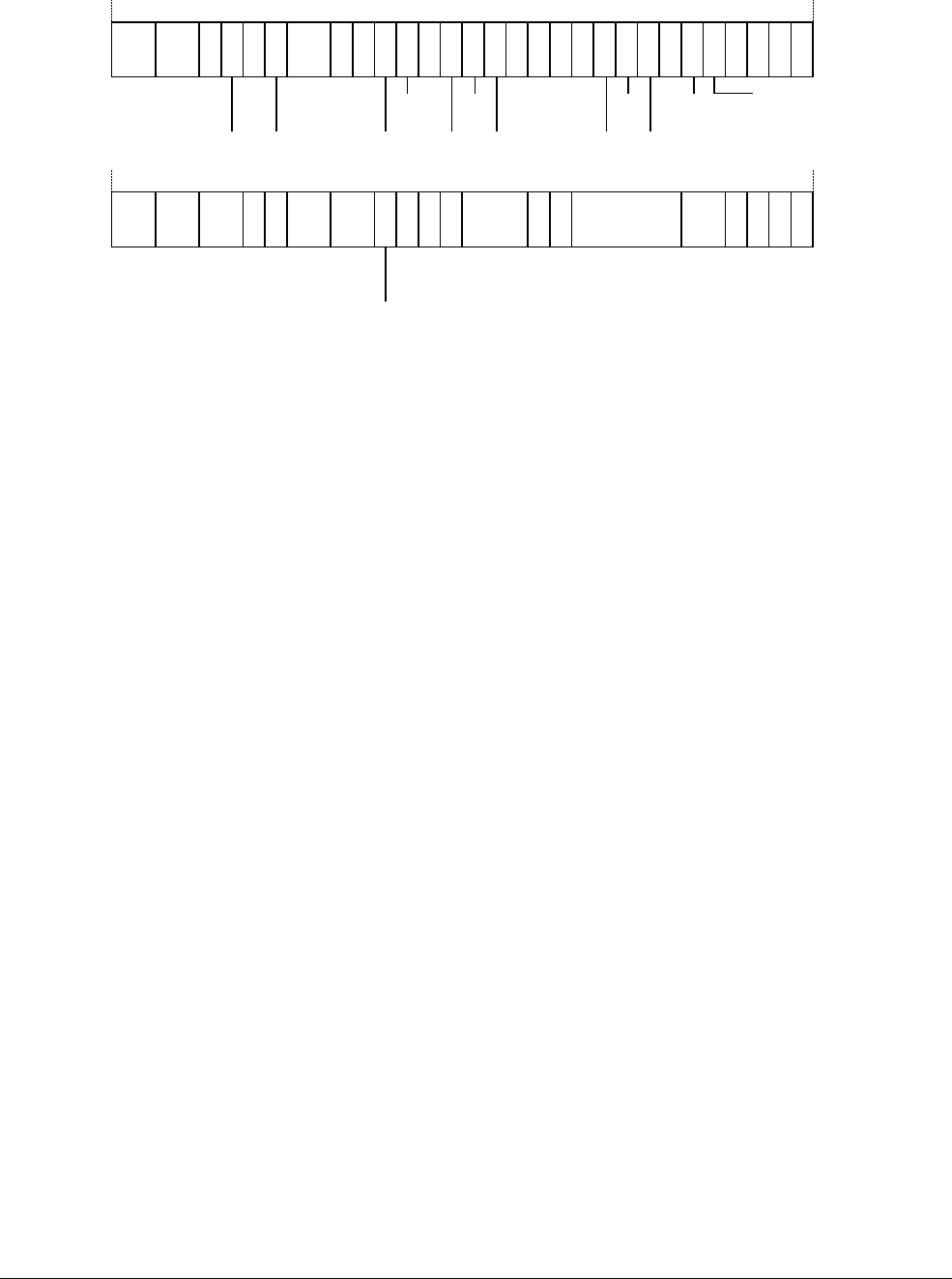

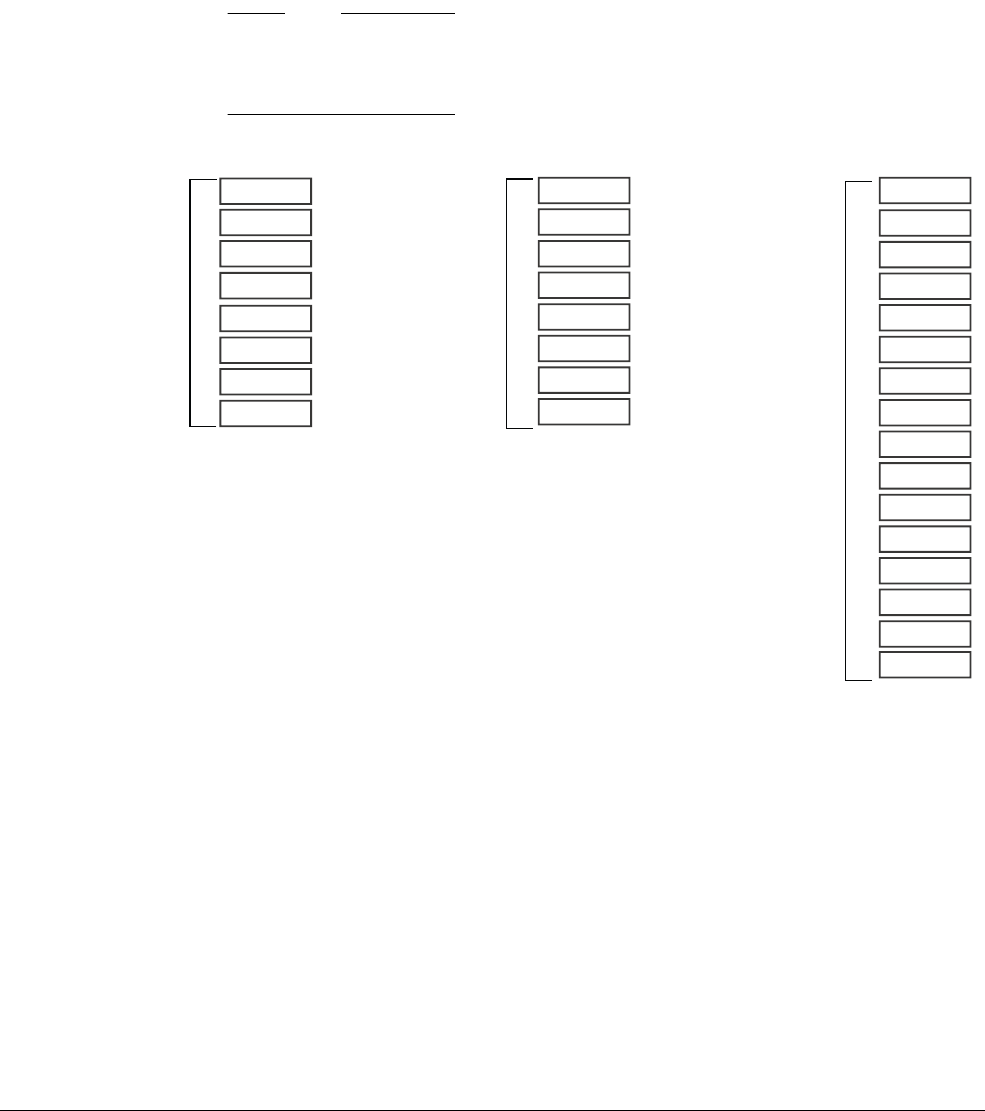

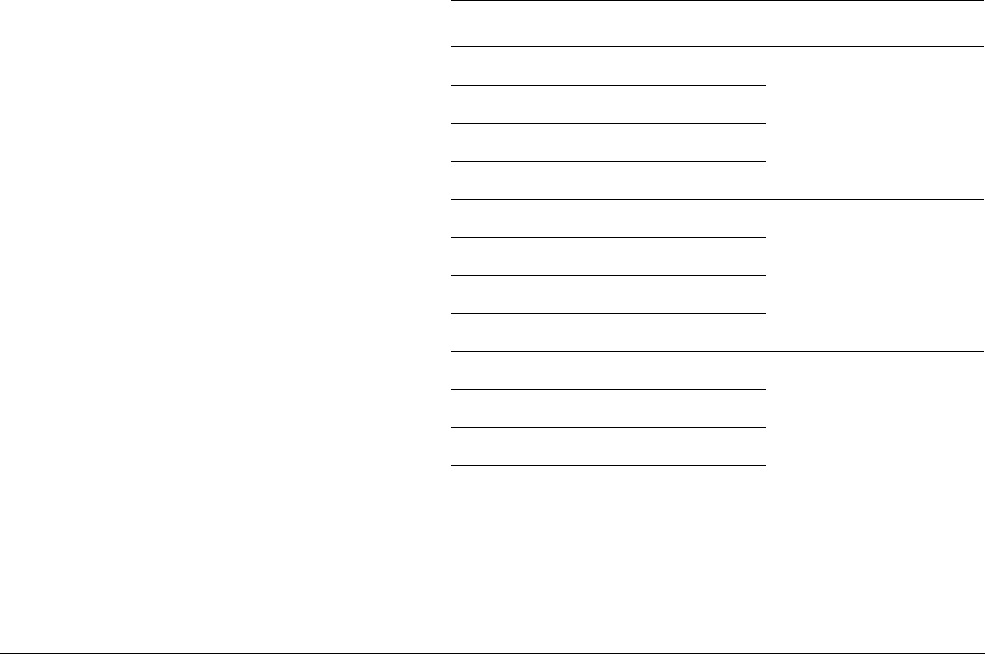

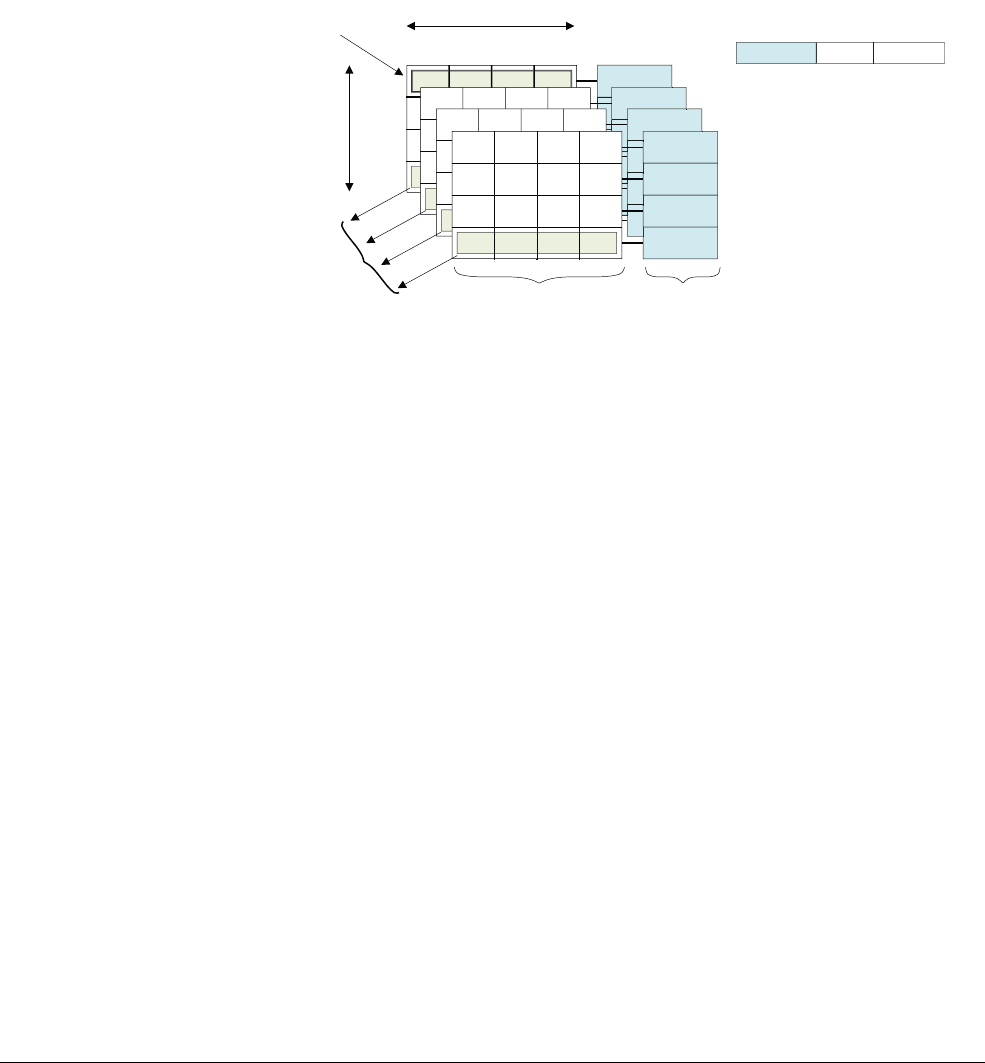

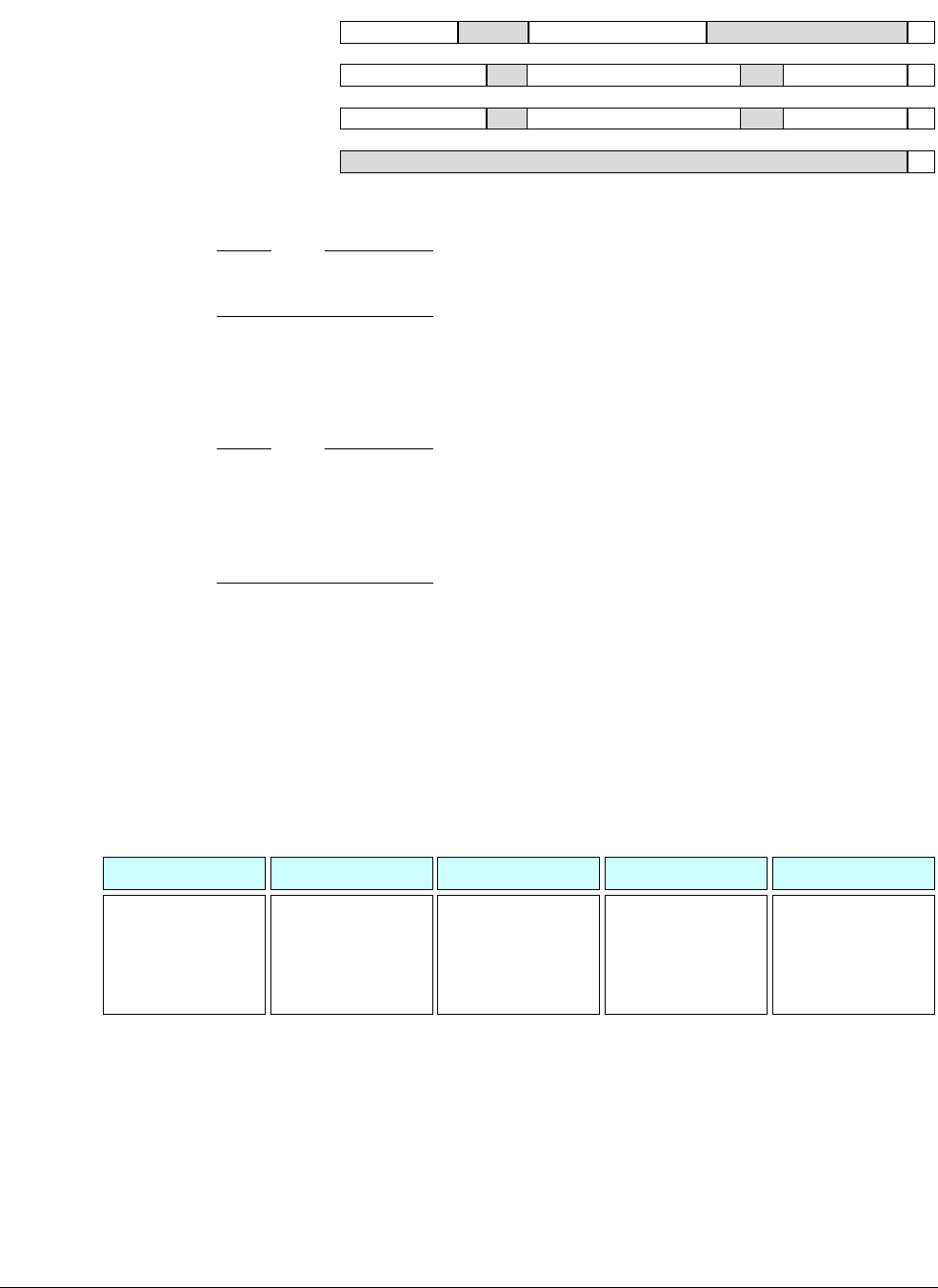

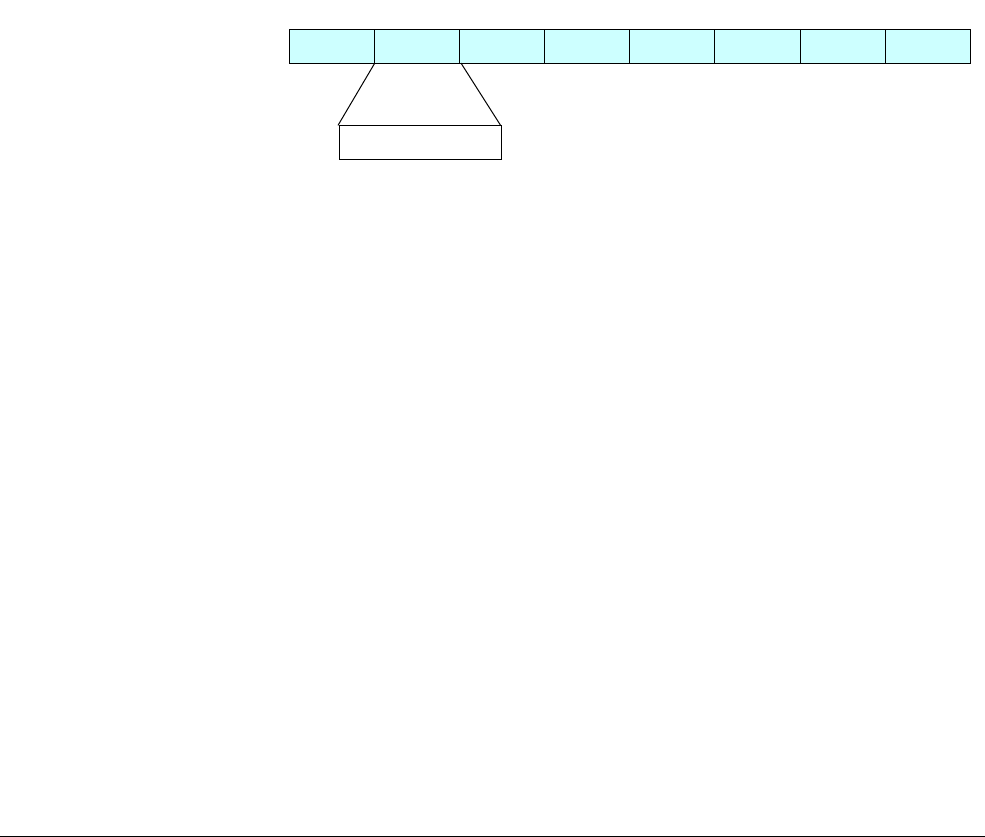

Figure 4-1 AArch64 general-purpose registers

Each AArch64 64-bit general-purpose register (X0-X30) also has a 32-bit (W0-W30) form.

Figure 4-2 64-bit register with W and X access.

The 32-bit W register forms the lower half of the corresponding 64-bit X register. That is, W0

maps onto the lower word of X0, and W1 maps onto the lower word of X1.

Reads from W registers disregard the higher 32 bits of the corresponding X register and leave

them unchanged. Writes to W registers set the higher 32 bits of the X register to zero. That is,

writing

0xFFFFFFFF

into W0 sets X0 to

0x00000000FFFFFFFF

.

X16/W16

X0/W0

X1/W1

X2/W2

X3/W3

X4/W4

X5/W5

X6/W6

X7/W7

X8/W8

X9/W9

X10/W10

X11/W11

X12/W12

X13/W13

X14/W14

X15/W15

X17/W17

X18/W18

X19/W19

X20/W20

X21/W21

X22/W22

X23/W23

X24/W24

X25/W25

X26/W26

X27/W27

X28/W28

X29/W29

X30/W30

Frame pointer

Procedure link register

EL0, EL1,

EL2, EL3

31 0

Wn

3263

Xn

ARMv8 Registers

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 4-3

ID050815 Non-Confidential

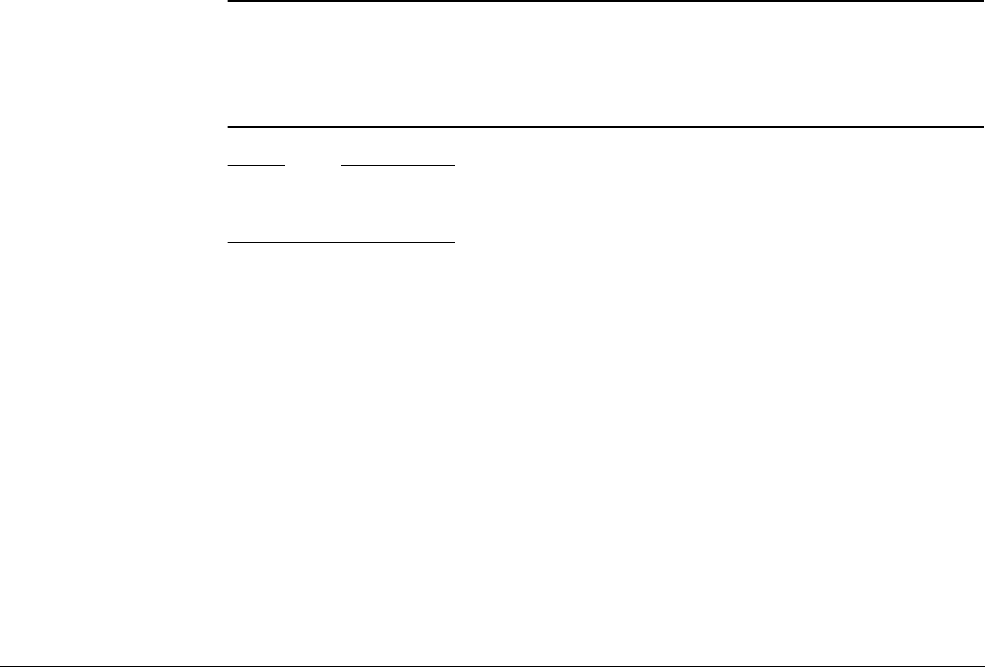

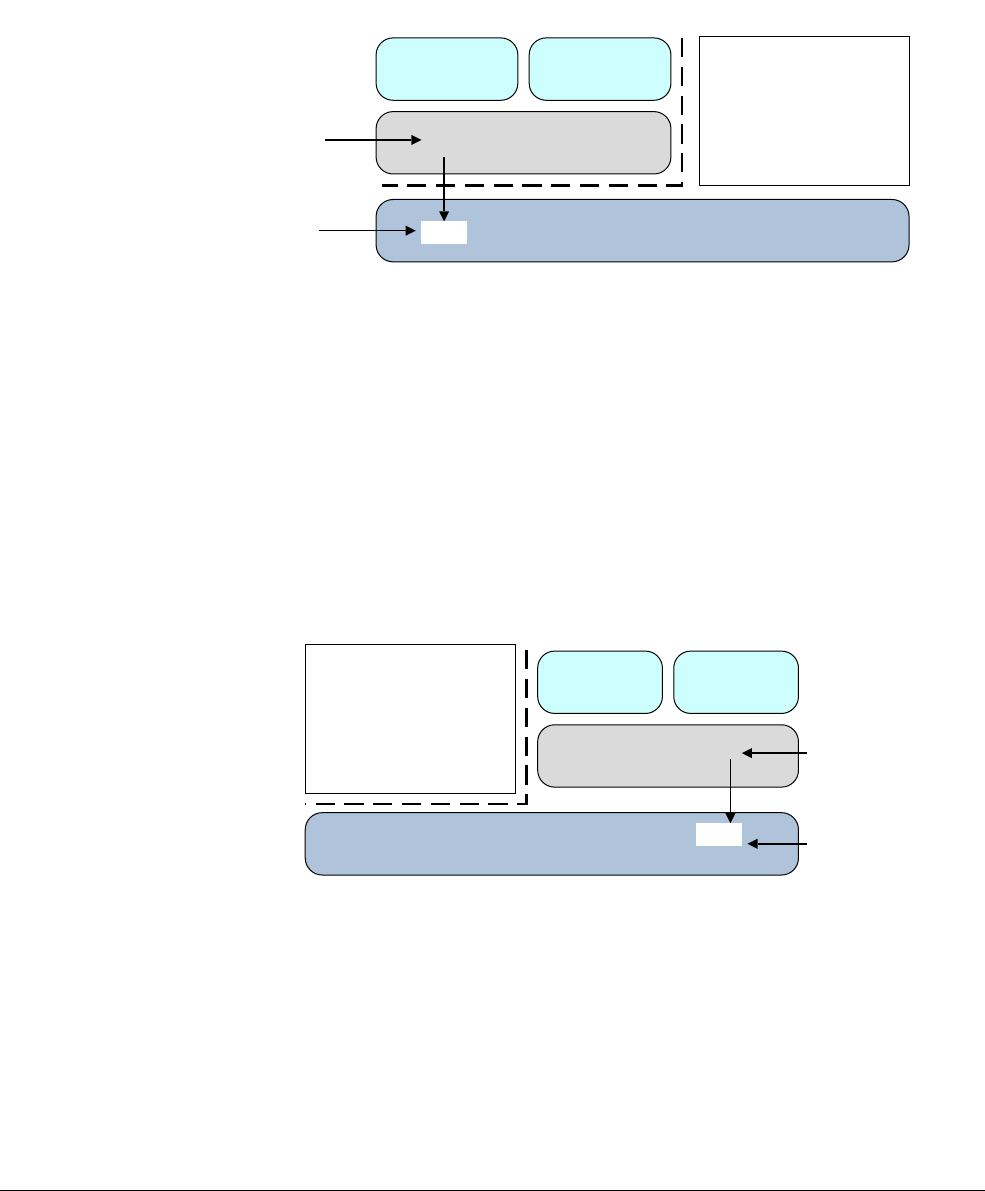

4.1 AArch64 special registers

In addition to the 31 core registers, there are also several special registers.

Figure 4-3 AArch64 special registers

Note

There is no register called X31 or W31. Many instructions are encoded such that the number 31

represents the zero register, ZR (WZR/XZR). There is also a restricted group of instructions

where one or more of the arguments are encoded such that number 31 represents the Stack

Pointer (SP).

When accessing the zero register, all writes are ignored and all reads return 0. Note that the

64-bit form of the SP register does not use an X prefix.

In the ARMv8 architecture, when executing in AArch64, the exception return state is held in the

following dedicated registers for each Exception level:

•Exception Link Register (ELR).

•Saved Processor State Register (SPSR).

There is a dedicated SP per Exception level, but it is not used to hold return state.

Special

registers Stack pointer

Zero register

Program counter

EL0 EL1 EL2 EL3

Program Status Register

Exception Link Register

XZR/WZR

SP_EL0

PC

SP_EL1

SPSR_EL1

ELR_EL1

SP_EL2

SPSR_EL2

ELR_EL2

SP_EL3

SPSR_EL3

ELR_EL3

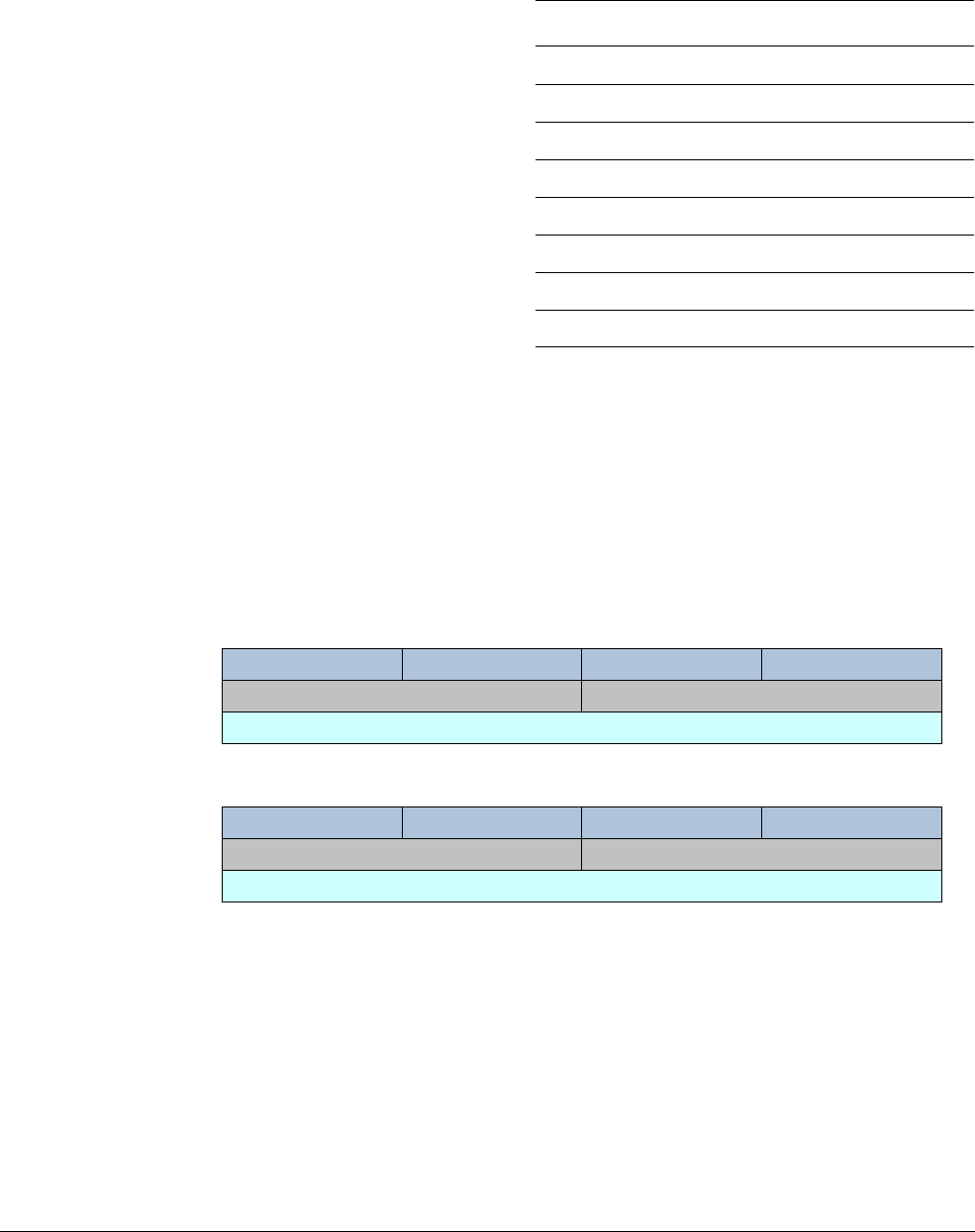

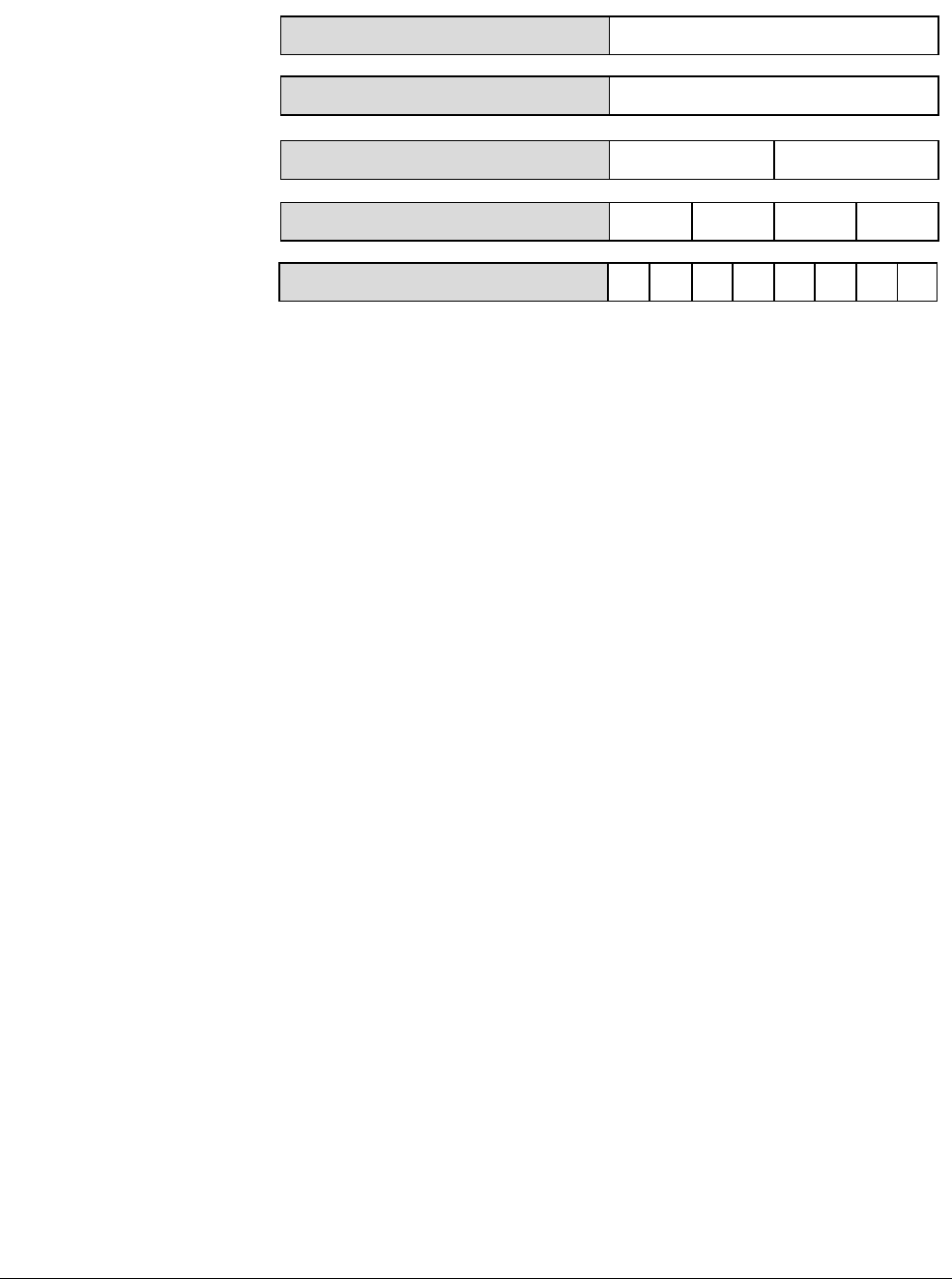

Table 4-1 Special registers in AArch64

Name Size Description

WZR 32 bits Zero register

XZR 64 bits Zero register

WSP 32 bits Current stack pointer

SP 64 bits Current stack pointer

PC 64 bits Program counter

Table 4-2 Special registers by Exception level

EL0 EL1 EL2 EL3

Stack Pointer (SP) SP_EL0 SP_EL1 SP_EL2 SP_EL3

Exception Link Register (ELR) ELR_EL1 ELR_EL2 ELR_EL3

Saved Process Status Register (SPSR) SPSR_EL1 SPSR_EL2 SPSR_EL3

ARMv8 Registers

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 4-4

ID050815 Non-Confidential

4.1.1 Zero register

The zero register reads as zero when used as a source register and discards the result when used

as a destination register. You can use the zero register in most, but not all, instructions.

4.1.2 Stack pointer

In the ARMv8 architecture, the choice of stack pointer to use is separated to some extent from

the Exception level. By default, taking an exception selects the stack pointer for the target

Exception level, SP_ELn. For example, taking an exception to EL1 selects SP_EL1. Each

Exception level has its own stack pointer, SP_EL0, SP_EL1, SP_EL2, and SP_EL3.

When in AArch64 at an Exception level other than EL0, the processor can use either:

• A dedicated 64-bit stack pointer associated with that Exception level (SP_ELn).

• The stack pointer associated with EL0 (SP_EL0).

EL0 can only ever access SP_EL0.

The t suffix indicates that the SP_EL0 stack pointer is selected. The h suffix indicates that the

SP_ELn stack pointer is selected.

The SP cannot be referenced by most instructions. However, some forms of arithmetic

instructions, for example, the

ADD

instruction, can read and write to the current stack pointer to

adjust the stack pointer in a function. For example:

ADD SP, SP, #0x10 // Adjust SP to be 0x10 bytes before its current value

4.1.3 Program Counter

One feature of the original ARMv7 instruction set was the use of

R15

, the Program Counter

(PC) as a general-purpose register. The PC enabled some clever programming tricks, but it

introduced complications for compilers and the design of complex pipelines. Removing direct

access to the PC in ARMv8 makes return prediction easier and simplifies the ABI specification.

The PC is never accessible as a named register. Its use is implicit in certain instructions such as

PC-relative load and address generation. The PC cannot be specified as the destination of a data

processing instruction or load instruction.

4.1.4 Exception Link Register (ELR)

The Exception Link Register holds the exception return address.

Table 4-3 AArch64 Stack pointer options

Exception

level Options

EL0 EL0t

EL1 EL1t, EL1h

EL2 EL2t, EL2h

EL3 EL3t, EL3h

ARMv8 Registers

ARM DEN0024A Copyright © 2015 ARM. All rights reserved. 4-5

ID050815 Non-Confidential

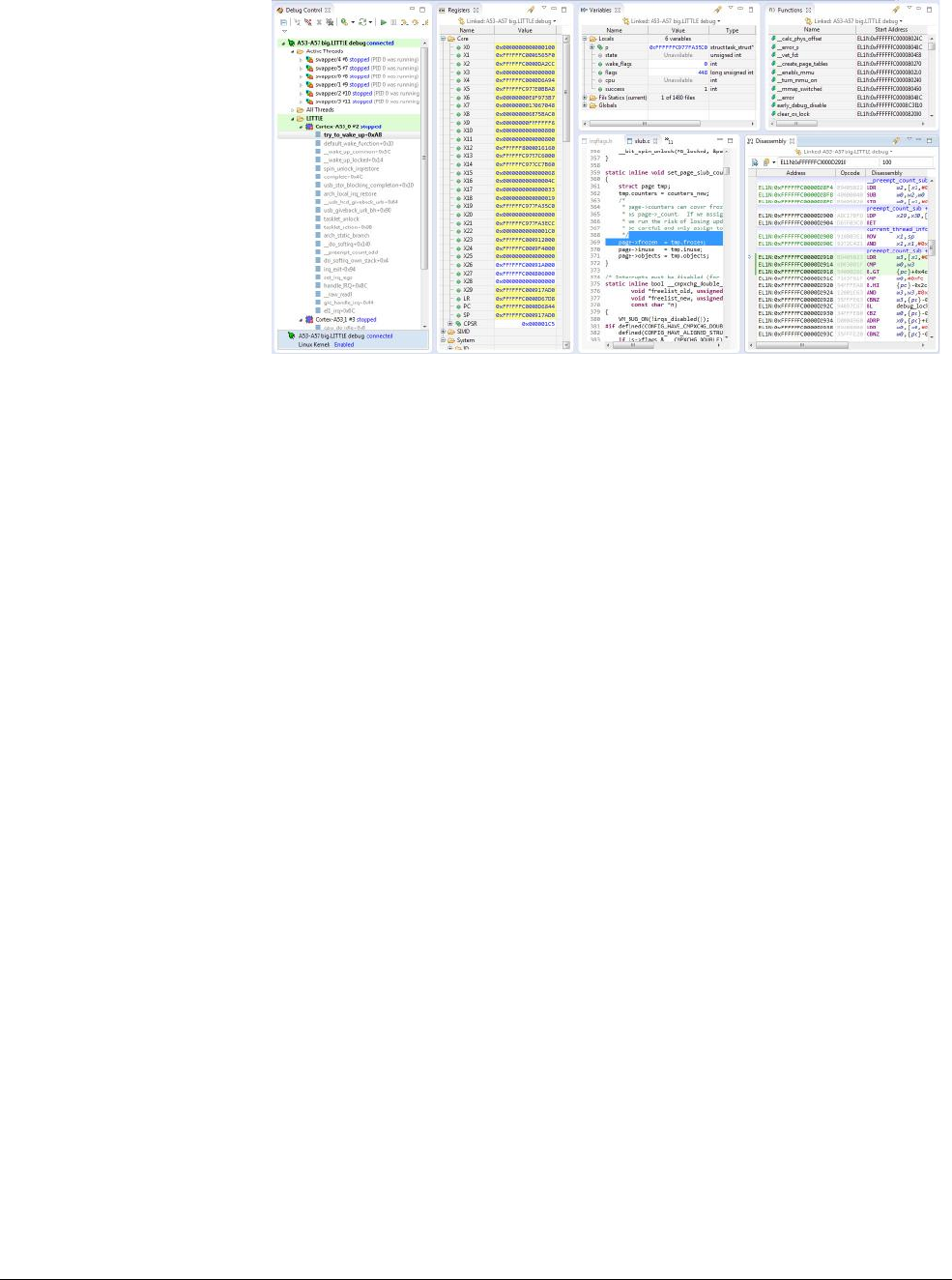

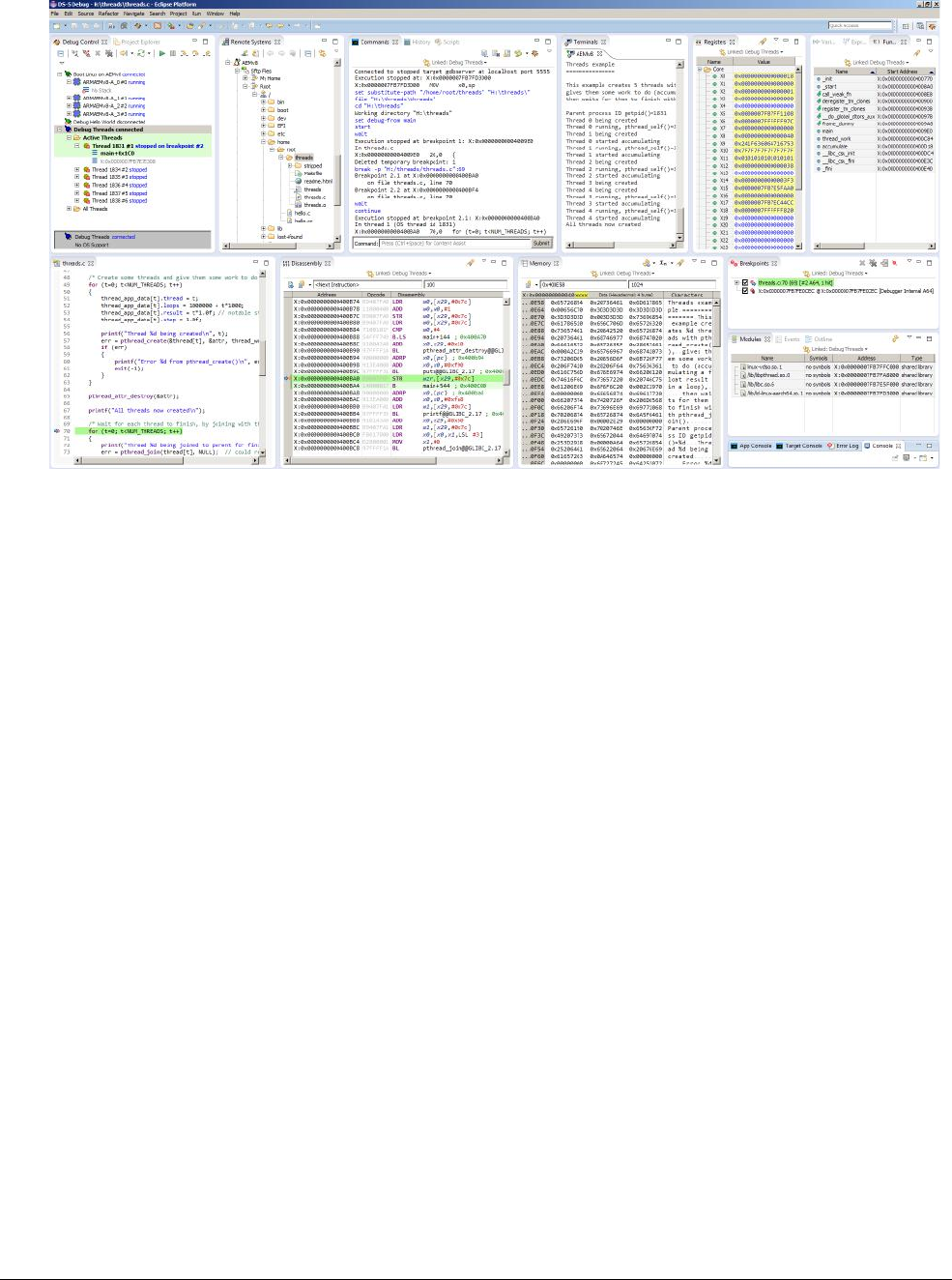

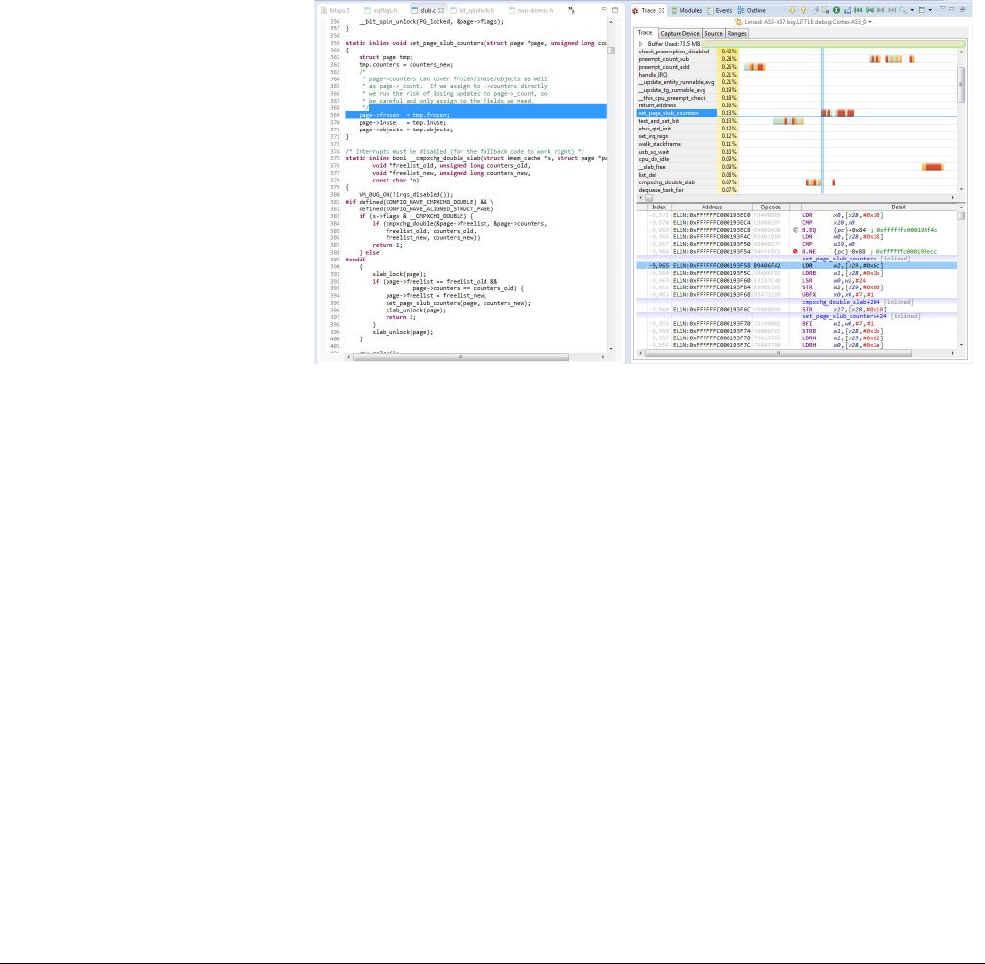

4.1.5 Saved Process Status Register