Programmers Guide

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 92

- Estimators

- Importing Data

- Introduction

- Tensors

- Graphs and Sessions

- Saving and Restoring

- Using GPUs

- Embeddings

- Debugging TensorFlow Programs

- TensorBoard: Visualizing Learning

- TensorBoard: Graph Visualization

Estimators

This document introduces Estimators--a high-level TensorFlow API that greatly simplifies machine learning programming.

Estimators encapsulate the following actions:

training

evaluation

prediction

export for serving

You may either use the pre-made Estimators we provide or write your own custom Estimators. All Estimators--whether pre-

made or custom--are classes based on the tf.estimator.Estimator class.

Note: TensorFlow also includes a deprecated Estimator class at tf.contrib.learn.Estimator, which you should not use.

Advantages of Estimators

Estimators provide the following benefits:

You can run Estimators-based models on a local host or on a distributed multi-server environment without changing your

model. Furthermore, you can run Estimators-based models on CPUs, GPUs, or TPUs without recoding your model.

Estimators simplify sharing implementations between model developers.

You can develop a state of the art model with high-level intuitive code, In short, it is generally much easier to create models

with Estimators than with the low-level TensorFlow APIs.

Estimators are themselves built on tf.layers, which simplifies customization.

Estimators build the graph for you. In other words, you don't have to build the graph.

Estimators provide a safe distributed training loop that controls how and when to:

build the graph

initialize variables

start queues

handle exceptions

create checkpoint files and recover from failures

save summaries for TensorBoard

When writing an application with Estimators, you must separate the data input pipeline from the model. This separation

simplifies experiments with different data sets.

Pre-made Estimators

Pre-made Estimators enable you to work at a much higher conceptual level than the base TensorFlow APIs. You no longer have

to worry about creating the computational graph or sessions since Estimators handle all the "plumbing" for you. That is, pre-

made Estimators create and manage Graph and Session objects for you. Furthermore, pre-made Estimators let you

experiment with different model architectures by making only minimal code changes. DNNClassifier, for example, is a pre-

made Estimator class that trains classification models through dense, feed-forward neural networks.

Structure of a pre-made Estimators program

A TensorFlow program relying on a pre-made Estimator typically consists of the following four steps:

1. Write one or more dataset importing functions. For example, you might create one function to import the training set and

another function to import the test set. Each dataset importing function must return two objects:

a dictionary in which the keys are feature names and the values are Tensors (or SparseTensors) containing the

corresponding feature data

a Tensor containing one or more labels

For example, the following code illustrates the basic skeleton for an input function:

(See Importing Data for full details.)

2. Define the feature columns. Each tf.feature_column identifies a feature name, its type, and any input pre-processing.

For example, the following snippet creates three feature columns that hold integer or floating-point data. The first two

feature columns simply identify the feature's name and type. The third feature column also specifies a lambda the program

will invoke to scale the raw data:

3. Instantiate the relevant pre-made Estimator. For example, here's a sample instantiation of a pre-made Estimator named

LinearClassifier:

4. Call a training, evaluation, or inference method. For example, all Estimators provide a train method, which trains a

model.

Benefits of pre-made Estimators

Pre-made Estimators encode best practices, providing the following benefits:

Best practices for determining where different parts of the computational graph should run, implementing strategies on a

single machine or on a cluster.

Best practices for event (summary) writing and universally useful summaries.

If you don't use pre-made Estimators, you must implement the preceding features yourself.

Custom Estimators

def input_fn(dataset):

... # manipulate dataset, extracting feature names and the label

return feature_dict, label

# Define three numeric feature columns.

population = tf.feature_column.numeric_column('population')

crime_rate = tf.feature_column.numeric_column('crime_rate')

median_education = tf.feature_column.numeric_column('median_education',

normalizer_fn='lambda x: x - global_education_mean')

# Instantiate an estimator, passing the feature columns.

estimator = tf.estimator.Estimator.LinearClassifier(

feature_columns=[population, crime_rate, median_education],

)

# my_training_set is the function created in Step 1

estimator.train(input_fn=my_training_set, steps=2000)

The heart of every Estimator--whether pre-made or custom--is its model function, which is a method that builds graphs for

training, evaluation, and prediction. When you are using a pre-made Estimator, someone else has already implemented the

model function. When relying on a custom Estimator, you must write the model function yourself. A companion document

explains how to write the model function.

Recommended workflow

We recommend the following workflow:

1. Assuming a suitable pre-made Estimator exists, use it to build your first model and use its results to establish a baseline.

2. Build and test your overall pipeline, including the integrity and reliability of your data with this pre-made Estimator.

3. If suitable alternative pre-made Estimators are available, run experiments to determine which pre-made Estimator produces

the best results.

4. Possibly, further improve your model by building your own custom Estimator.

Creating Estimators from Keras models

You can convert existing Keras models to Estimators. Doing so enables your Keras model to access Estimator's strengths, such

as distributed training. Call tf.keras.estimator.model_to_estimator as in the following sample:

Note that the names of feature columns and labels of a keras estimator come from the corresponding compiled keras model.

For example, the input key names for train_input_fn above can be obtained from keras_inception_v3.input_names, and

similarly, the predicted output names can be obtained fromkeras_inception_v3.output_names.

For more details, please refer to the documentation for tf.keras.estimator.model_to_estimator.

Importing Data

# Instantiate a Keras inception v3 model.

keras_inception_v3 = tf.keras.applications.inception_v3.InceptionV3(weights=None)

# Compile model with the optimizer, loss, and metrics you'd like to train with.

keras_inception_v3.compile(optimizer=tf.keras.optimizers.SGD(lr=0.0001, momentum=0.9),

loss='categorical_crossentropy',

metric='accuracy')

# Create an Estimator from the compiled Keras model. Note the initial model

# state of the keras model is preserved in the created Estimator.

est_inception_v3 = tf.keras.estimator.model_to_estimator(keras_model=keras_inception_v3)

# Treat the derived Estimator as you would with any other Estimator.

# First, recover the input name(s) of Keras model, so we can use them as the

# feature column name(s) of the Estimator input function:

keras_inception_v3.input_names # print out: ['input_1']

# Once we have the input name(s), we can create the input function, for example,

# for input(s) in the format of numpy ndarray:

train_input_fn = tf.estimator.inputs.numpy_input_fn(

x={"input_1": train_data},

y=train_labels,

num_epochs=1,

shuffle=False)

# To train, we call Estimator's train function:

est_inception_v3.train(input_fn=train_input_fn, steps=2000)

The tf.data API enables you to build complex input pipelines from simple, reusable pieces. For example, the pipeline for an

image model might aggregate data from files in a distributed file system, apply random perturbations to each image, and

merge randomly selected images into a batch for training. The pipeline for a text model might involve extracting symbols from

raw text data, converting them to embedding identifiers with a lookup table, and batching together sequences of different

lengths. The tf.data API makes it easy to deal with large amounts of data, different data formats, and complicated

transformations.

The tf.data API introduces two new abstractions to TensorFlow:

A tf.data.Dataset represents a sequence of elements, in which each element contains one or more Tensor objects. For

example, in an image pipeline, an element might be a single training example, with a pair of tensors representing the image

data and a label. There are two distinct ways to create a dataset:

Creating a source (e.g. Dataset.from_tensor_slices()) constructs a dataset from one or more tf.Tensor objects.

Applying a transformation (e.g. Dataset.batch()) constructs a dataset from one or more tf.data.Dataset objects.

A tf.data.Iterator provides the main way to extract elements from a dataset. The operation returned by

Iterator.get_next() yields the next element of a Dataset when executed, and typically acts as the interface between

input pipeline code and your model. The simplest iterator is a "one-shot iterator", which is associated with a particular

Dataset and iterates through it once. For more sophisticated uses, theIterator.initializer operation enables you to

reinitialize and parameterize an iterator with different datasets, so that you can, for example, iterate over training and

validation data multiple times in the same program.

Basic mechanics

This section of the guide describes the fundamentals of creating different kinds of Dataset and Iteratorobjects, and how to

extract data from them.

To start an input pipeline, you must define a source. For example, to construct a Dataset from some tensors in memory, you

can use tf.data.Dataset.from_tensors() or tf.data.Dataset.from_tensor_slices(). Alternatively, if your input data

are on disk in the recommended TFRecord format, you can construct atf.data.TFRecordDataset.

Once you have a Dataset object, you can transform it into a new Dataset by chaining method calls on the tf.data.Dataset

object. For example, you can apply per-element transformations such as Dataset.map()(to apply a function to each element),

and multi-element transformations such as Dataset.batch(). See the documentation for tf.data.Dataset for a complete

list of transformations.

The most common way to consume values from a Dataset is to make an iterator object that provides access to one element of

the dataset at a time (for example, by calling Dataset.make_one_shot_iterator()). Atf.data.Iterator provides two

operations: Iterator.initializer, which enables you to (re)initialize the iterator's state; and Iterator.get_next(), which

returns tf.Tensor objects that correspond to the symbolic next element. Depending on your use case, you might choose a

different type of iterator, and the options are outlined below.

Dataset structure

A dataset comprises elements that each have the same structure. An element contains one or more tf.Tensor objects, called

components. Each component has a tf.DType representing the type of elements in the tensor, and a tf.TensorShape

representing the (possibly partially specified) static shape of each element. The Dataset.output_types and

Dataset.output_shapes properties allow you to inspect the inferred types and shapes of each component of a dataset

element. The nested structure of these properties map to the structure of an element, which may be a single tensor, a tuple of

tensors, or a nested tuple of tensors. For example:

It is often convenient to give names to each component of an element, for example if they represent different features of a

training example. In addition to tuples, you can use collections.namedtuple or a dictionary mapping strings to tensors to

represent a single element of a Dataset.

The Dataset transformations support datasets of any structure. When using the Dataset.map(), Dataset.flat_map(), and

Dataset.filter() transformations, which apply a function to each element, the element structure determines the arguments

of the function:

Creating an iterator

Once you have built a Dataset to represent your input data, the next step is to create an Iterator to access elements from that

dataset. The tf.data API currently supports the following iterators, in increasing level of sophistication:

one-shot,

initializable,

reinitializable, and

feedable.

A one-shot iterator is the simplest form of iterator, which only supports iterating once through a dataset, with no need for

explicit initialization. One-shot iterators handle almost all of the cases that the existing queue-based input pipelines support,

but they do not support parameterization. Using the example of Dataset.range():

dataset1 = tf.data.Dataset.from_tensor_slices(tf.random_uniform([4, 10]))

print(dataset1.output_types) # ==> "tf.float32"

print(dataset1.output_shapes) # ==> "(10,)"

dataset2 = tf.data.Dataset.from_tensor_slices(

(tf.random_uniform([4]),

tf.random_uniform([4, 100], maxval=100, dtype=tf.int32)))

print(dataset2.output_types) # ==> "(tf.float32, tf.int32)"

print(dataset2.output_shapes) # ==> "((), (100,))"

dataset3 = tf.data.Dataset.zip((dataset1, dataset2))

print(dataset3.output_types) # ==> (tf.float32, (tf.float32, tf.int32))

print(dataset3.output_shapes) # ==> "(10, ((), (100,)))"

dataset = tf.data.Dataset.from_tensor_slices(

{"a": tf.random_uniform([4]),

"b": tf.random_uniform([4, 100], maxval=100, dtype=tf.int32)})

print(dataset.output_types) # ==> "{'a': tf.float32, 'b': tf.int32}"

print(dataset.output_shapes) # ==> "{'a': (), 'b': (100,)}"

dataset1 = dataset1.map(lambda x: ...)

dataset2 = dataset2.flat_map(lambda x, y: ...)

# Note: Argument destructuring is not available in Python 3.

dataset3 = dataset3.filter(lambda x, (y, z): ...)

Note: Currently, one-shot iterators are the only type that is easily usable with an Estimator.

An initializable iterator requires you to run an explicit iterator.initializer operation before using it. In exchange for this

inconvenience, it enables you to parameterize the definition of the dataset, using one or more tf.placeholder() tensors that

can be fed when you initialize the iterator. Continuing the Dataset.range()example:

A reinitializable iterator can be initialized from multiple different Dataset objects. For example, you might have a training

input pipeline that uses random perturbations to the input images to improve generalization, and a validation input pipeline

that evaluates predictions on unmodified data. These pipelines will typically use different Dataset objects that have the same

structure (i.e. the same types and compatible shapes for each component).

dataset = tf.data.Dataset.range(100)

iterator = dataset.make_one_shot_iterator()

next_element = iterator.get_next()

for i in range(100):

value = sess.run(next_element)

assert i == value

max_value = tf.placeholder(tf.int64, shape=[])

dataset = tf.data.Dataset.range(max_value)

iterator = dataset.make_initializable_iterator()

next_element = iterator.get_next()

# Initialize an iterator over a dataset with 10 elements.

sess.run(iterator.initializer, feed_dict={max_value: 10})

for i in range(10):

value = sess.run(next_element)

assert i == value

# Initialize the same iterator over a dataset with 100 elements.

sess.run(iterator.initializer, feed_dict={max_value: 100})

for i in range(100):

value = sess.run(next_element)

assert i == value

A feedable iterator can be used together with tf.placeholder to select what Iterator to use in each call to

tf.Session.run, via the familiar feed_dict mechanism. It offers the same functionality as a reinitializable iterator, but it

does not require you to initialize the iterator from the start of a dataset when you switch between iterators. For example, using

the same training and validation example from above, you can usetf.data.Iterator.from_string_handle to define a

feedable iterator that allows you to switch between the two datasets:

# Define training and validation datasets with the same structure.

training_dataset = tf.data.Dataset.range(100).map(

lambda x: x + tf.random_uniform([], -10, 10, tf.int64))

validation_dataset = tf.data.Dataset.range(50)

# A reinitializable iterator is defined by its structure. We could use the

# `output_types` and `output_shapes` properties of either `training_dataset`

# or `validation_dataset` here, because they are compatible.

iterator = tf.data.Iterator.from_structure(training_dataset.output_types,

training_dataset.output_shapes)

next_element = iterator.get_next()

training_init_op = iterator.make_initializer(training_dataset)

validation_init_op = iterator.make_initializer(validation_dataset)

# Run 20 epochs in which the training dataset is traversed, followed by the

# validation dataset.

for _ in range(20):

# Initialize an iterator over the training dataset.

sess.run(training_init_op)

for _ in range(100):

sess.run(next_element)

# Initialize an iterator over the validation dataset.

sess.run(validation_init_op)

for _ in range(50):

sess.run(next_element)

Consuming values from an iterator

The Iterator.get_next() method returns one or more tf.Tensor objects that correspond to the symbolic next element of

an iterator. Each time these tensors are evaluated, they take the value of the next element in the underlying dataset. (Note that,

like other stateful objects in TensorFlow, calling Iterator.get_next() does not immediately advance the iterator. Instead

you must use the returned tf.Tensor objects in a TensorFlow expression, and pass the result of that expression to

tf.Session.run() to get the next elements and advance the iterator.)

If the iterator reaches the end of the dataset, executing the Iterator.get_next() operation will raise a

tf.errors.OutOfRangeError. After this point the iterator will be in an unusable state, and you must initialize it again if you

want to use it further.

# Define training and validation datasets with the same structure.

training_dataset = tf.data.Dataset.range(100).map(

lambda x: x + tf.random_uniform([], -10, 10, tf.int64)).repeat()

validation_dataset = tf.data.Dataset.range(50)

# A feedable iterator is defined by a handle placeholder and its structure. We

# could use the `output_types` and `output_shapes` properties of either

# `training_dataset` or `validation_dataset` here, because they have

# identical structure.

handle = tf.placeholder(tf.string, shape=[])

iterator = tf.data.Iterator.from_string_handle(

handle, training_dataset.output_types, training_dataset.output_shapes)

next_element = iterator.get_next()

# You can use feedable iterators with a variety of different kinds of iterator

# (such as one-shot and initializable iterators).

training_iterator = training_dataset.make_one_shot_iterator()

validation_iterator = validation_dataset.make_initializable_iterator()

# The `Iterator.string_handle()` method returns a tensor that can be evaluated

# and used to feed the `handle` placeholder.

training_handle = sess.run(training_iterator.string_handle())

validation_handle = sess.run(validation_iterator.string_handle())

# Loop forever, alternating between training and validation.

while True:

# Run 200 steps using the training dataset. Note that the training dataset is

# infinite, and we resume from where we left off in the previous `while` loop

# iteration.

for _ in range(200):

sess.run(next_element, feed_dict={handle: training_handle})

# Run one pass over the validation dataset.

sess.run(validation_iterator.initializer)

for _ in range(50):

sess.run(next_element, feed_dict={handle: validation_handle})

A common pattern is to wrap the "training loop" in a try-except block:

If each element of the dataset has a nested structure, the return value of Iterator.get_next() will be one or more

tf.Tensor objects in the same nested structure:

Note that evaluating any of next1, next2, or next3 will advance the iterator for all components. A typical consumer of an

iterator will include all components in a single expression.

Reading input data

Consuming NumPy arrays

If all of your input data fit in memory, the simplest way to create a Dataset from them is to convert them to tf.Tensor objects

and use Dataset.from_tensor_slices().

dataset = tf.data.Dataset.range(5)

iterator = dataset.make_initializable_iterator()

next_element = iterator.get_next()

# Typically `result` will be the output of a model, or an optimizer's

# training operation.

result = tf.add(next_element, next_element)

sess.run(iterator.initializer)

print(sess.run(result)) # ==> "0"

print(sess.run(result)) # ==> "2"

print(sess.run(result)) # ==> "4"

print(sess.run(result)) # ==> "6"

print(sess.run(result)) # ==> "8"

try:

sess.run(result)

except tf.errors.OutOfRangeError:

print("End of dataset") # ==> "End of dataset"

sess.run(iterator.initializer)

while True:

try:

sess.run(result)

except tf.errors.OutOfRangeError:

break

dataset1 = tf.data.Dataset.from_tensor_slices(tf.random_uniform([4, 10]))

dataset2 = tf.data.Dataset.from_tensor_slices((tf.random_uniform([4]), tf.random_uniform([4,

100])))

dataset3 = tf.data.Dataset.zip((dataset1, dataset2))

iterator = dataset3.make_initializable_iterator()

sess.run(iterator.initializer)

next1, (next2, next3) = iterator.get_next()

Note that the above code snippet will embed the features and labels arrays in your TensorFlow graph as tf.constant()

operations. This works well for a small dataset, but wastes memory---because the contents of the array will be copied multiple

times---and can run into the 2GB limit for the tf.GraphDef protocol buffer.

As an alternative, you can define the Dataset in terms of tf.placeholder() tensors, and feed the NumPy arrays when you

initialize an Iterator over the dataset.

Consuming TFRecord data

The tf.data API supports a variety of file formats so that you can process large datasets that do not fit in memory. For

example, the TFRecord file format is a simple record-oriented binary format that many TensorFlow applications use for

training data. The tf.data.TFRecordDataset class enables you to stream over the contents of one or more TFRecord files as

part of an input pipeline.

The filenames argument to the TFRecordDataset initializer can either be a string, a list of strings, or a tf.Tensor of strings.

Therefore if you have two sets of files for training and validation purposes, you can use atf.placeholder(tf.string) to

represent the filenames, and initialize an iterator from the appropriate filenames:

# Load the training data into two NumPy arrays, for example using `np.load()`.

with np.load("/var/data/training_data.npy") as data:

features = data["features"]

labels = data["labels"]

# Assume that each row of `features` corresponds to the same row as `labels`.

assert features.shape[0] == labels.shape[0]

dataset = tf.data.Dataset.from_tensor_slices((features, labels))

# Load the training data into two NumPy arrays, for example using `np.load()`.

with np.load("/var/data/training_data.npy") as data:

features = data["features"]

labels = data["labels"]

# Assume that each row of `features` corresponds to the same row as `labels`.

assert features.shape[0] == labels.shape[0]

features_placeholder = tf.placeholder(features.dtype, features.shape)

labels_placeholder = tf.placeholder(labels.dtype, labels.shape)

dataset = tf.data.Dataset.from_tensor_slices((features_placeholder, labels_placeholder))

# [Other transformations on `dataset`...]

dataset = ...

iterator = dataset.make_initializable_iterator()

sess.run(iterator.initializer, feed_dict={features_placeholder: features,

labels_placeholder: labels})

# Creates a dataset that reads all of the examples from two files.

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

Consuming text data

Many datasets are distributed as one or more text files. The tf.data.TextLineDataset provides an easy way to extract lines

from one or more text files. Given one or more filenames, a TextLineDataset will produce one string-valued element per line

of those files. Like a TFRecordDataset, TextLineDataset accepts filenamesas a tf.Tensor, so you can parameterize it by

passing a tf.placeholder(tf.string).

By default, a TextLineDataset yields every line of each file, which may not be desirable, for example if the file starts with a

header line, or contains comments. These lines can be removed using the Dataset.skip() andDataset.filter()

transformations. To apply these transformations to each file separately, we use Dataset.flat_map() to create a nested

Dataset for each file.

Preprocessing data with Dataset.map()

filenames = tf.placeholder(tf.string, shape=[None])

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(...) # Parse the record into tensors.

dataset = dataset.repeat() # Repeat the input indefinitely.

dataset = dataset.batch(32)

iterator = dataset.make_initializable_iterator()

# You can feed the initializer with the appropriate filenames for the current

# phase of execution, e.g. training vs. validation.

# Initialize `iterator` with training data.

training_filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

sess.run(iterator.initializer, feed_dict={filenames: training_filenames})

# Initialize `iterator` with validation data.

validation_filenames = ["/var/data/validation1.tfrecord", ...]

sess.run(iterator.initializer, feed_dict={filenames: validation_filenames})

filenames = ["/var/data/file1.txt", "/var/data/file2.txt"]

dataset = tf.data.TextLineDataset(filenames)

filenames = ["/var/data/file1.txt", "/var/data/file2.txt"]

dataset = tf.data.Dataset.from_tensor_slices(filenames)

# Use `Dataset.flat_map()` to transform each file as a separate nested dataset,

# and then concatenate their contents sequentially into a single "flat" dataset.

# * Skip the first line (header row).

# * Filter out lines beginning with "#" (comments).

dataset = dataset.flat_map(

lambda filename: (

tf.data.TextLineDataset(filename)

.skip(1)

.filter(lambda line: tf.not_equal(tf.substr(line, 0, 1), "#"))))

The Dataset.map(f) transformation produces a new dataset by applying a given function f to each element of the input

dataset. It is based on the map() function that is commonly applied to lists (and other structures) in functional programming

languages. The function f takes the tf.Tensor objects that represent a single element in the input, and returns the tf.Tensor

objects that will represent a single element in the new dataset. Its implementation uses standard TensorFlow operations to

transform one element into another.

This section covers common examples of how to use Dataset.map().

Parsing tf.Example protocol buffer messages

Many input pipelines extract tf.train.Example protocol buffer messages from a TFRecord-format file (written, for example,

using tf.python_io.TFRecordWriter). Each tf.train.Example record contains one or more "features", and the input

pipeline typically converts these features into tensors.

Decoding image data and resizing it

When training a neural network on real-world image data, it is often necessary to convert images of different sizes to a

common size, so that they may be batched into a fixed size.

# Transforms a scalar string `example_proto` into a pair of a scalar string and

# a scalar integer, representing an image and its label, respectively.

def _parse_function(example_proto):

features = {"image": tf.FixedLenFeature((), tf.string, default_value=""),

"label": tf.FixedLenFeature((), tf.int32, default_value=0)}

parsed_features = tf.parse_single_example(example_proto, features)

return parsed_features["image"], parsed_features["label"]

# Creates a dataset that reads all of the examples from two files, and extracts

# the image and label features.

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(_parse_function)

# Reads an image from a file, decodes it into a dense tensor, and resizes it

# to a fixed shape.

def _parse_function(filename, label):

image_string = tf.read_file(filename)

image_decoded = tf.image.decode_image(image_string)

image_resized = tf.image.resize_images(image_decoded, [28, 28])

return image_resized, label

# A vector of filenames.

filenames = tf.constant(["/var/data/image1.jpg", "/var/data/image2.jpg", ...])

# `labels[i]` is the label for the image in `filenames[i].

labels = tf.constant([0, 37, ...])

dataset = tf.data.Dataset.from_tensor_slices((filenames, labels))

dataset = dataset.map(_parse_function)

Applying arbitrary Python logic with tf.py_func()

For performance reasons, we encourage you to use TensorFlow operations for preprocessing your data whenever possible.

However, it is sometimes useful to call upon external Python libraries when parsing your input data. To do so, invoke, the

tf.py_func() operation in a Dataset.map() transformation.

Batching dataset elements

Simple batching

The simplest form of batching stacks n consecutive elements of a dataset into a single element. The Dataset.batch()

transformation does exactly this, with the same constraints as the tf.stack() operator, applied to each component of the

elements: i.e. for each component i, all elements must have a tensor of the exact same shape.

Batching tensors with padding

import cv2

# Use a custom OpenCV function to read the image, instead of the standard

# TensorFlow `tf.read_file()` operation.

def _read_py_function(filename, label):

image_decoded = cv2.imread(image_string, cv2.IMREAD_GRAYSCALE)

return image_decoded, label

# Use standard TensorFlow operations to resize the image to a fixed shape.

def _resize_function(image_decoded, label):

image_decoded.set_shape([None, None, None])

image_resized = tf.image.resize_images(image_decoded, [28, 28])

return image_resized, label

filenames = ["/var/data/image1.jpg", "/var/data/image2.jpg", ...]

labels = [0, 37, 29, 1, ...]

dataset = tf.data.Dataset.from_tensor_slices((filenames, labels))

dataset = dataset.map(

lambda filename, label: tuple(tf.py_func(

_read_py_function, [filename, label], [tf.uint8, label.dtype])))

dataset = dataset.map(_resize_function)

inc_dataset = tf.data.Dataset.range(100)

dec_dataset = tf.data.Dataset.range(0, -100, -1)

dataset = tf.data.Dataset.zip((inc_dataset, dec_dataset))

batched_dataset = dataset.batch(4)

iterator = batched_dataset.make_one_shot_iterator()

next_element = iterator.get_next()

print(sess.run(next_element)) # ==> ([0, 1, 2, 3], [ 0, -1, -2, -3])

print(sess.run(next_element)) # ==> ([4, 5, 6, 7], [-4, -5, -6, -7])

print(sess.run(next_element)) # ==> ([8, 9, 10, 11], [-8, -9, -10, -11])

The above recipe works for tensors that all have the same size. However, many models (e.g. sequence models) work with input

data that can have varying size (e.g. sequences of different lengths). To handle this case, theDataset.padded_batch()

transformation enables you to batch tensors of different shape by specifying one or more dimensions in which they may be

padded.

The Dataset.padded_batch() transformation allows you to set different padding for each dimension of each component,

and it may be variable-length (signified by None in the example above) or constant-length. It is also possible to override the

padding value, which defaults to 0.

Training workflows

Processing multiple epochs

The tf.data API offers two main ways to process multiple epochs of the same data.

The simplest way to iterate over a dataset in multiple epochs is to use the Dataset.repeat() transformation. For example, to

create a dataset that repeats its input for 10 epochs:

Applying the Dataset.repeat() transformation with no arguments will repeat the input indefinitely. The

Dataset.repeat() transformation concatenates its arguments without signaling the end of one epoch and the beginning of

the next epoch.

If you want to receive a signal at the end of each epoch, you can write a training loop that catches the

tf.errors.OutOfRangeError at the end of a dataset. At that point you might collect some statistics (e.g. the validation error)

for the epoch.

dataset = tf.data.Dataset.range(100)

dataset = dataset.map(lambda x: tf.fill([tf.cast(x, tf.int32)], x))

dataset = dataset.padded_batch(4, padded_shapes=[None])

iterator = dataset.make_one_shot_iterator()

next_element = iterator.get_next()

print(sess.run(next_element)) # ==> [[0, 0, 0], [1, 0, 0], [2, 2, 0], [3, 3, 3]]

print(sess.run(next_element)) # ==> [[4, 4, 4, 4, 0, 0, 0],

# [5, 5, 5, 5, 5, 0, 0],

# [6, 6, 6, 6, 6, 6, 0],

# [7, 7, 7, 7, 7, 7, 7]]

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(...)

dataset = dataset.repeat(10)

dataset = dataset.batch(32)

Randomly shuffling input data

The Dataset.shuffle() transformation randomly shuffles the input dataset using a similar algorithm to

tf.RandomShuffleQueue: it maintains a fixed-size buffer and chooses the next element uniformly at random from that buffer.

Using high-level APIs

The tf.train.MonitoredTrainingSession API simplifies many aspects of running TensorFlow in a distributed setting.

MonitoredTrainingSession uses the tf.errors.OutOfRangeError to signal that training has completed, so to use it with

the tf.data API, we recommend usingDataset.make_one_shot_iterator(). For example:

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(...)

dataset = dataset.batch(32)

iterator = dataset.make_initializable_iterator()

next_element = iterator.get_next()

# Compute for 100 epochs.

for _ in range(100):

sess.run(iterator.initializer)

while True:

try:

sess.run(next_element)

except tf.errors.OutOfRangeError:

break

# [Perform end-of-epoch calculations here.]

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(...)

dataset = dataset.shuffle(buffer_size=10000)

dataset = dataset.batch(32)

dataset = dataset.repeat()

To use a Dataset in the input_fn of a tf.estimator.Estimator, we also recommend using

Dataset.make_one_shot_iterator(). For example:

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

dataset = dataset.map(...)

dataset = dataset.shuffle(buffer_size=10000)

dataset = dataset.batch(32)

dataset = dataset.repeat(num_epochs)

iterator = dataset.make_one_shot_iterator()

next_example, next_label = iterator.get_next()

loss = model_function(next_example, next_label)

training_op = tf.train.AdagradOptimizer(...).minimize(loss)

with tf.train.MonitoredTrainingSession(...) as sess:

while not sess.should_stop():

sess.run(training_op)

def dataset_input_fn():

filenames = ["/var/data/file1.tfrecord", "/var/data/file2.tfrecord"]

dataset = tf.data.TFRecordDataset(filenames)

# Use `tf.parse_single_example()` to extract data from a `tf.Example`

# protocol buffer, and perform any additional per-record preprocessing.

def parser(record):

keys_to_features = {

"image_data": tf.FixedLenFeature((), tf.string, default_value=""),

"date_time": tf.FixedLenFeature((), tf.int64, default_value=""),

"label": tf.FixedLenFeature((), tf.int64,

default_value=tf.zeros([], dtype=tf.int64)),

}

parsed = tf.parse_single_example(record, keys_to_features)

# Perform additional preprocessing on the parsed data.

image = tf.image.decode_jpeg(parsed["image_data"])

image = tf.reshape(image, [299, 299, 1])

label = tf.cast(parsed["label"], tf.int32)

return {"image_data": image, "date_time": parsed["date_time"]}, label

# Use `Dataset.map()` to build a pair of a feature dictionary and a label

# tensor for each example.

dataset = dataset.map(parser)

dataset = dataset.shuffle(buffer_size=10000)

dataset = dataset.batch(32)

dataset = dataset.repeat(num_epochs)

iterator = dataset.make_one_shot_iterator()

# `features` is a dictionary in which each value is a batch of values for

# that feature; `labels` is a batch of labels.

features, labels = iterator.get_next()

return features, labels

Introduction

This guide gets you started programming in the low-level TensorFlow APIs (TensorFlow Core), showing you how to:

Manage your own TensorFlow program (a tf.Graph) and TensorFlow runtime (a tf.Session), instead of relying on

Estimators to manage them.

Run TensorFlow operations, using a tf.Session.

Use high level components (datasets, layers, and feature_columns) in this low level environment.

Build your own training loop, instead of using the one provided by Estimators.

We recommend using the higher level APIs to build models when possible. Knowing TensorFlow Core is valuable for the

following reasons:

Experimentation and debugging are both more straight forward when you can use low level TensorFlow operations directly.

It gives you a mental model of how things work internally when using the higher level APIs.

Setup

Before using this guide, install TensorFlow.

To get the most out of this guide, you should know the following:

How to program in Python.

At least a little bit about arrays.

Ideally, something about machine learning.

Feel free to launch python and follow along with this walkthrough. Run the following lines to set up your Python

environment:

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import numpy as np

import tensorflow as tf

Tensor Values

The central unit of data in TensorFlow is the tensor. A tensor consists of a set of primitive values shaped into an array of any

number of dimensions. A tensor's rank is its number of dimensions, while its shape is a tuple of integers specifying the array's

length along each dimension. Here are some examples of tensor values:

3. # a rank 0 tensor; a scalar with shape [],

[1., 2., 3.] # a rank 1 tensor; a vector with shape [3]

[[1., 2., 3.], [4., 5., 6.]] # a rank 2 tensor; a matrix with shape [2, 3]

[[[1., 2., 3.]], [[7., 8., 9.]]] # a rank 3 tensor with shape [2, 1, 3]

TensorFlow uses numpy arrays to represent tensor values.

TensorFlow Core Walkthrough

You might think of TensorFlow Core programs as consisting of two discrete sections:

1. Building the computational graph (a tf.Graph).

2. Running the computational graph (using a tf.Session).

Graph

A computational graph is a series of TensorFlow operations arranged into a graph. The graph is composed of two types of

objects.

Operations (or "ops"): The nodes of the graph. Operations describe calculations that consume and produce tensors.

Tensors: The edges in the graph. These represent the values that will flow through the graph. Most TensorFlow functions

return tf.Tensors.

Important: tf.Tensors do not have values, they are just handles to elements in the computation graph.

Let's build a simple computational graph. The most basic operation is a constant. The Python function that builds the operation

takes a tensor value as input. The resulting operation takes no inputs. When run, it outputs the value that was passed to the

constructor. We can create two floating point constants a and b as follows:

a = tf.constant(3.0, dtype=tf.float32)

b = tf.constant(4.0) # also tf.float32 implicitly

total = a + b

print(a)

print(b)

print(total)

The print statements produce:

Tensor("Const:0", shape=(), dtype=float32)

Tensor("Const_1:0", shape=(), dtype=float32)

Tensor("add:0", shape=(), dtype=float32)

Notice that printing the tensors does not output the values 3.0, 4.0, and 7.0 as you might expect. The above statements only

build the computation graph. These tf.Tensor objects just represent the results of the operations that will be run.

Each operation in a graph is given a unique name. This name is independent of the names the objects are assigned to in

Python. Tensors are named after the operation that produces them followed by an output index, as in "add:0" above.

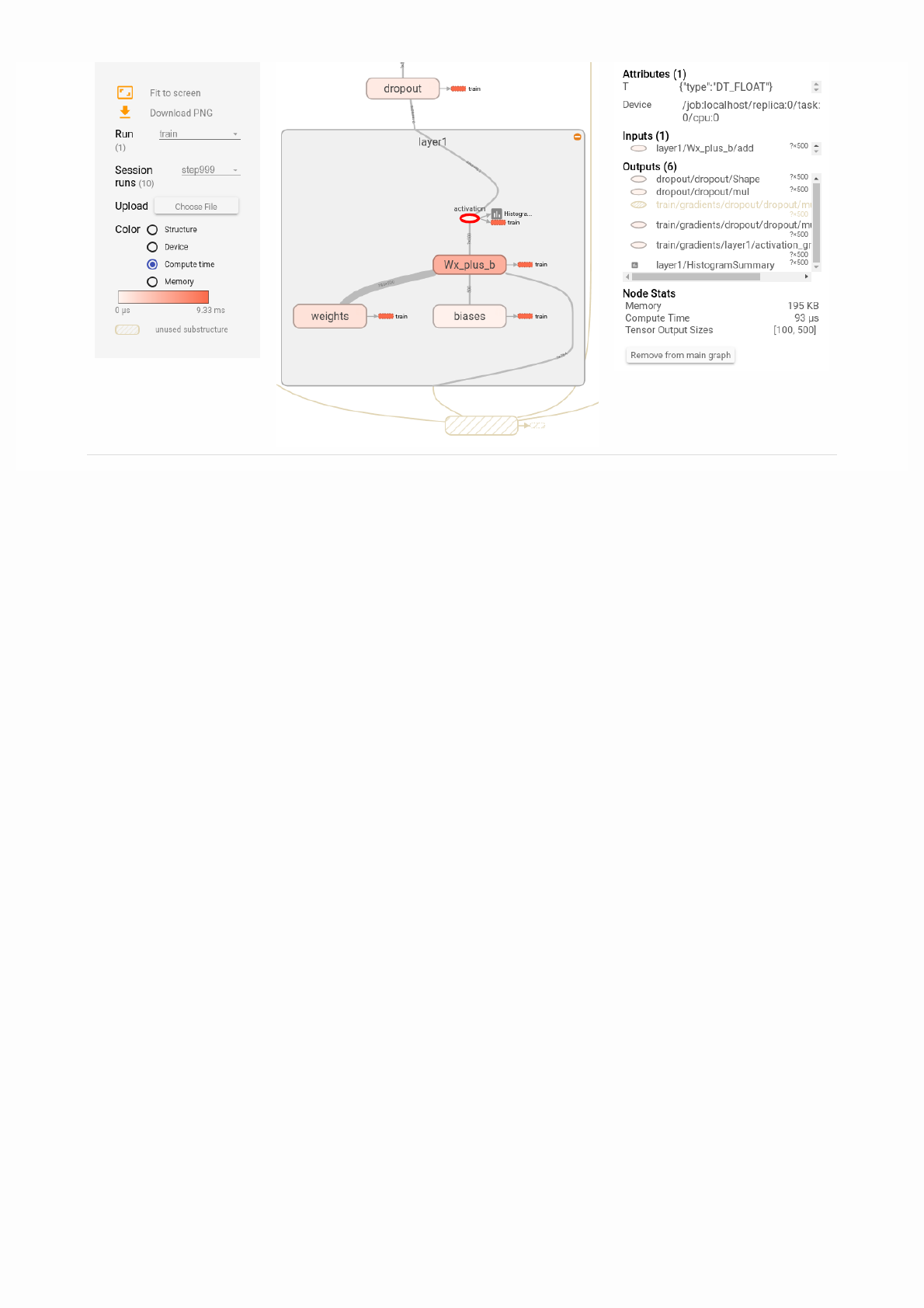

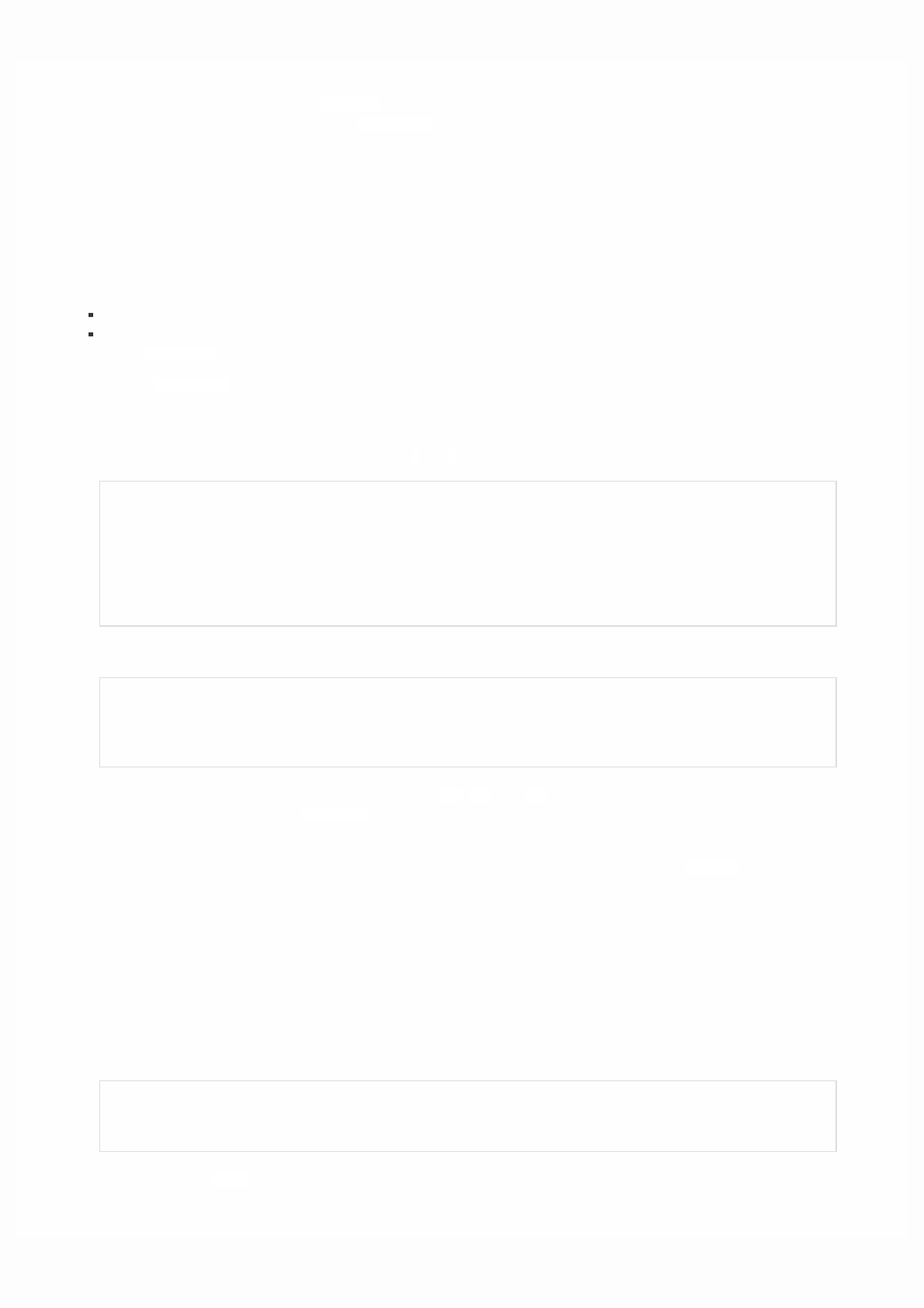

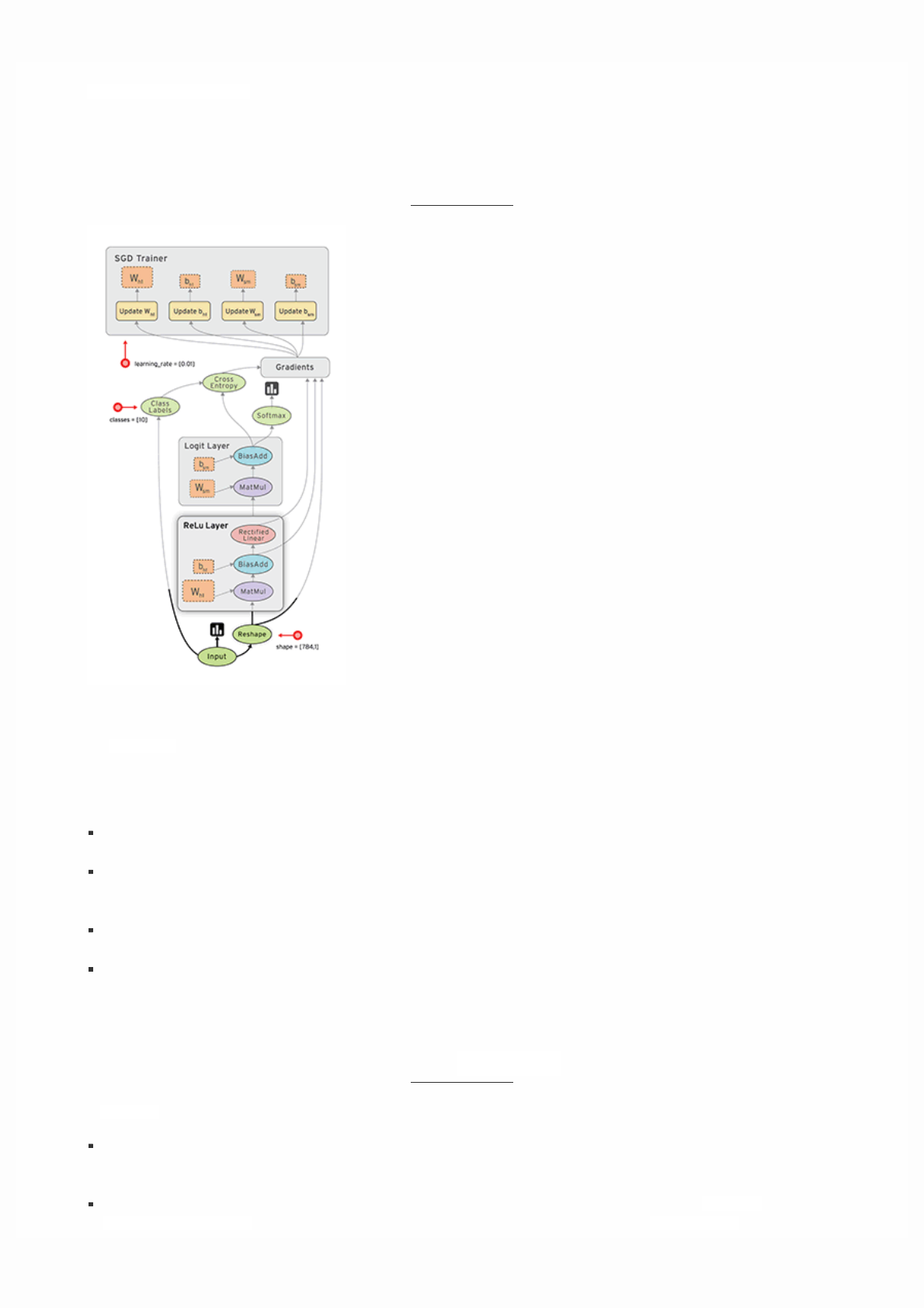

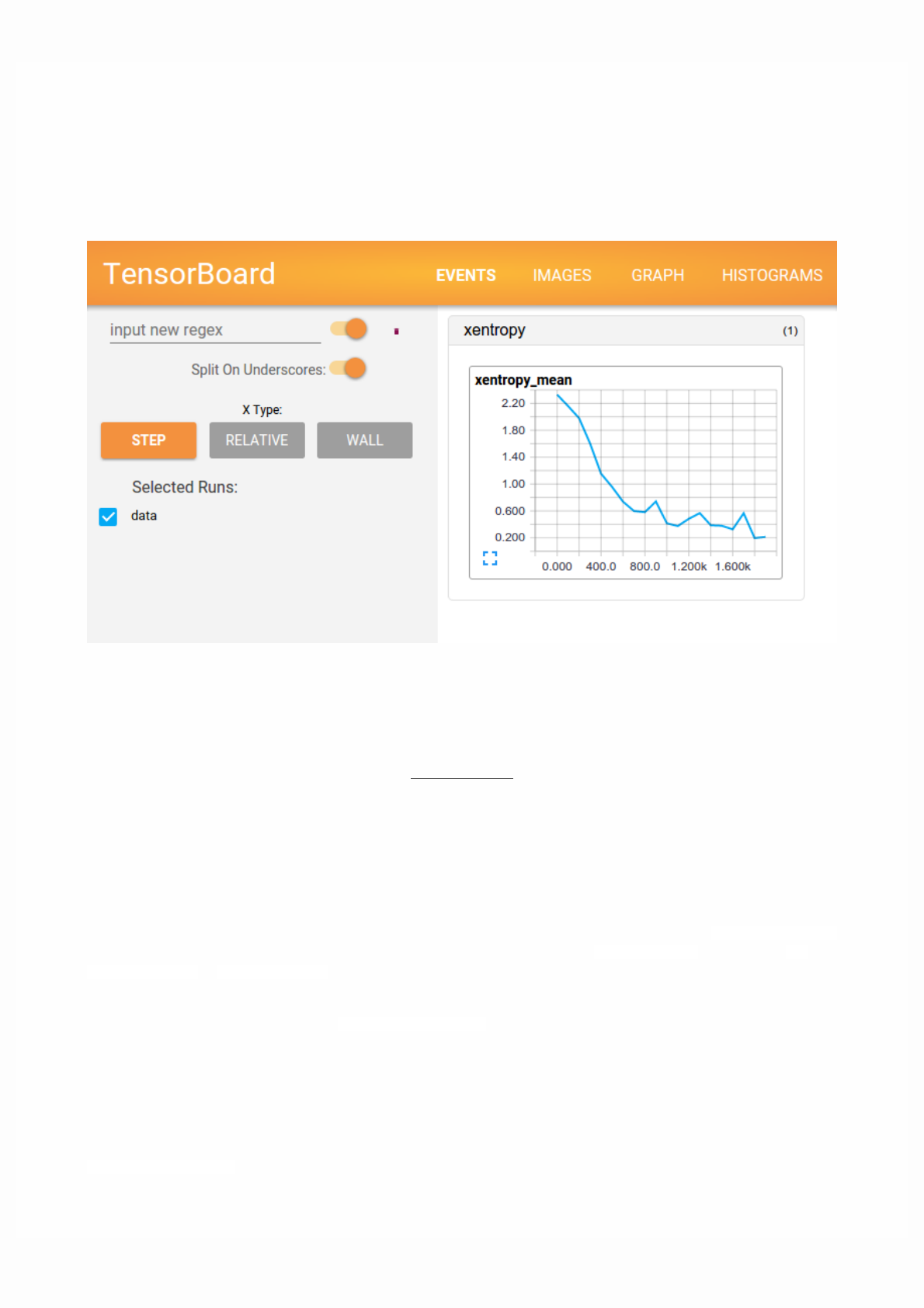

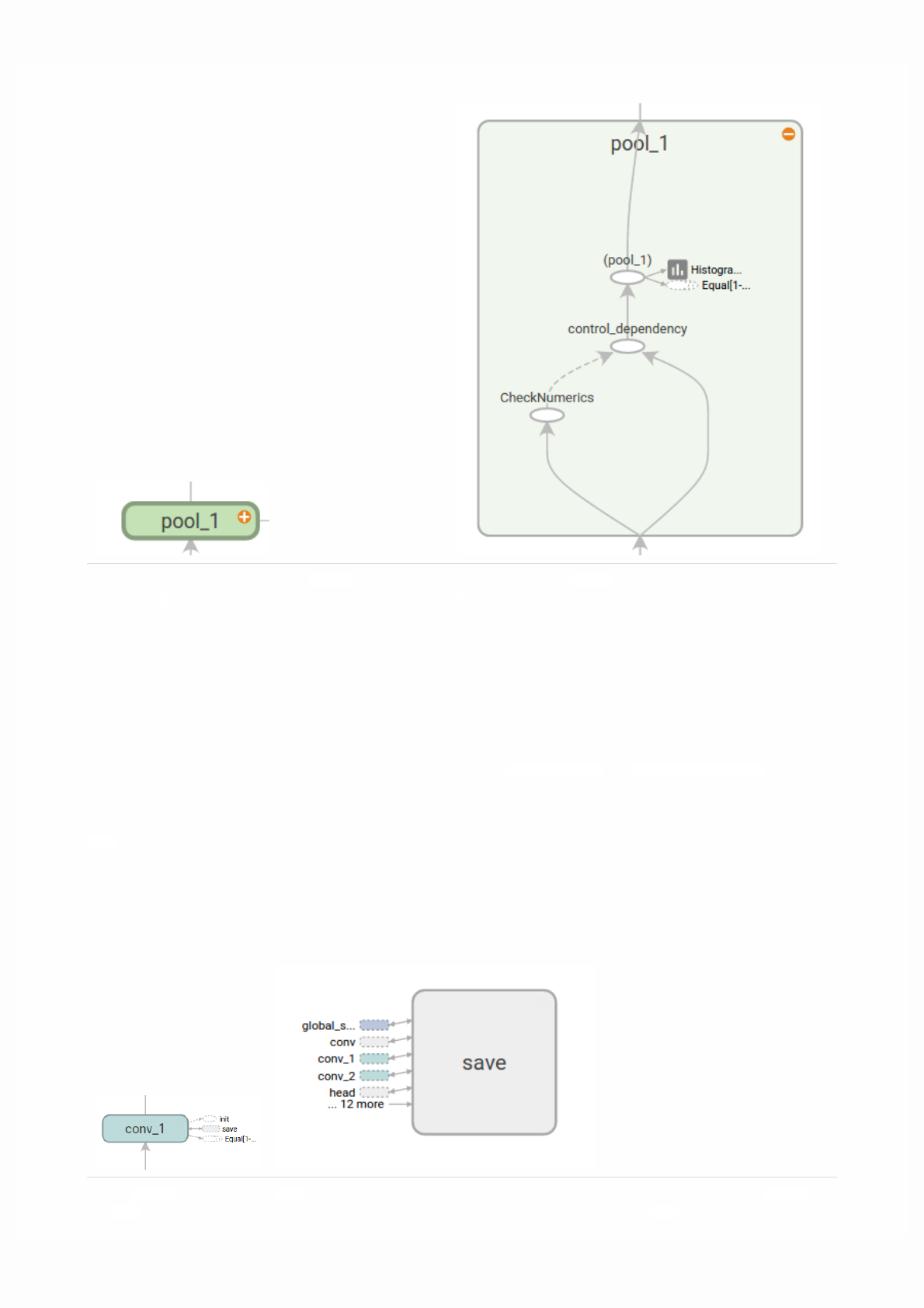

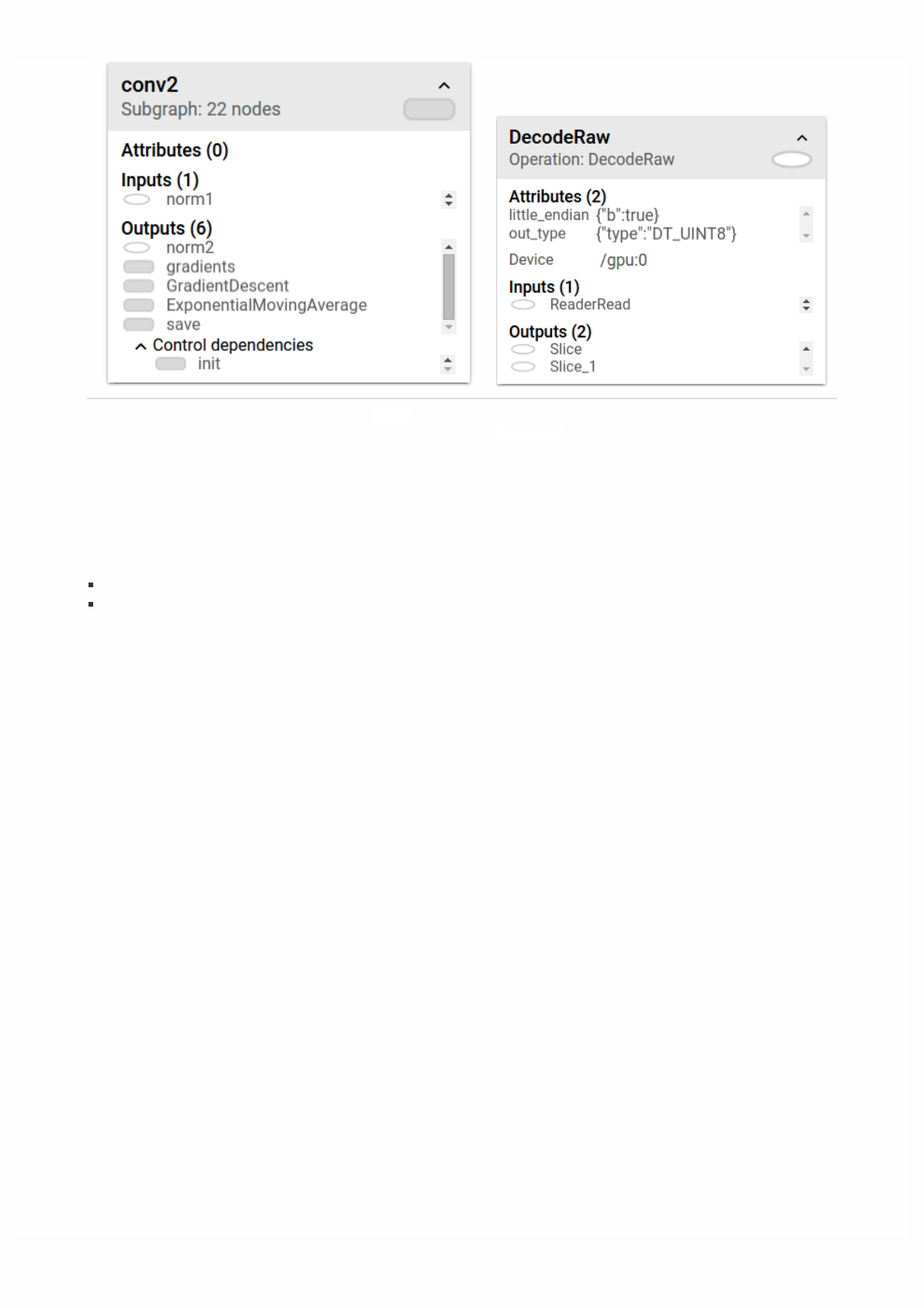

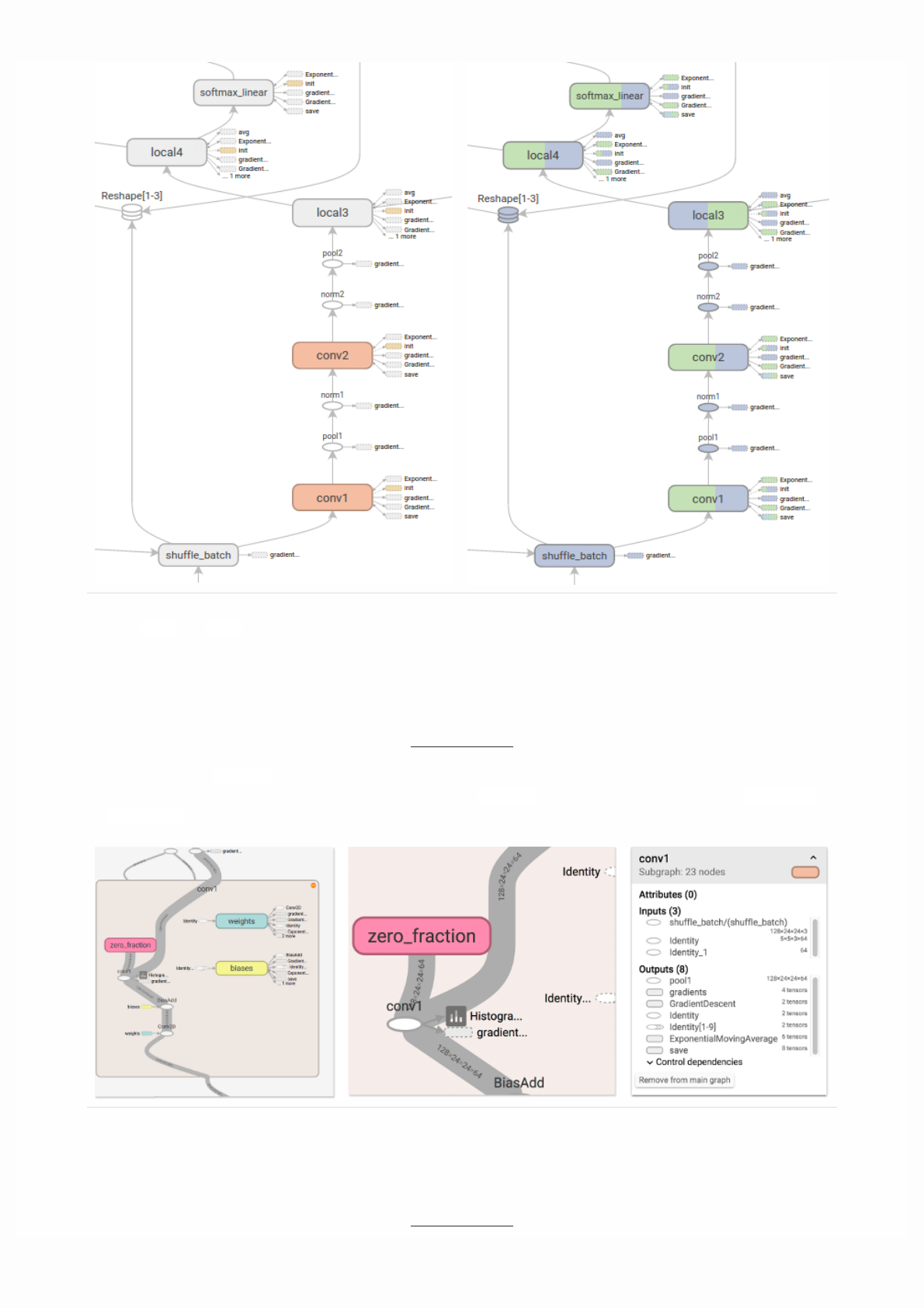

TensorBoard

TensorFlow provides a utility called TensorBoard. One of TensorBoard's many capabilities is visualizing a computation graph.

You can easily do this with a few simple commands.

First you save the computation graph to a TensorBoard summary file as follows:

writer = tf.summary.FileWriter('.')

writer.add_graph(tf.get_default_graph())

This will produce an event file in the current directory with a name in the following format:

events.out.tfevents.{timestamp}.{hostname}

Now, in a new terminal, launch TensorBoard with the following shell command:

tensorboard --logdir .

Then open TensorBoard's graphs page in your browser, and you should see a graph similar to the following:

For more about TensorBoard's graph visualization tools see TensorBoard: Graph Visualization.

Session

To evaluate tensors, instantiate a tf.Session object, informally known as a session. A session encapsulates the state of the

TensorFlow runtime, and runs TensorFlow operations. If a tf.Graph is like a .py file, a tf.Session is like the python

executable.

The following code creates a tf.Session object and then invokes its run method to evaluate the totaltensor we created

above:

sess = tf.Session()

print(sess.run(total))

When you request the output of a node with Session.run TensorFlow backtracks through the graph and runs all the nodes

that provide input to the requested output node. So this prints the expected value of 7.0:

7.0

You can pass multiple tensors to tf.Session.run. The run method transparently handles any combination of tuples or

dictionaries, as in the following example:

print(sess.run({'ab':(a, b), 'total':total}))

which returns the results in a structure of the same layout:

{'total': 7.0, 'ab': (3.0, 4.0)}

During a call to tf.Session.run any tf.Tensor only has a single value. For example, the following code calls

tf.random_uniform to produce a tf.Tensor that generates a random 3-element vector (with values in [0,1)):

vec = tf.random_uniform(shape=(3,))

out1 = vec + 1

out2 = vec + 2

print(sess.run(vec))

print(sess.run(vec))

print(sess.run((out1, out2)))

The result shows a different random value on each call to run, but a consistent value during a single run(out1 and out2

receive the same random input):

[ 0.52917576 0.64076328 0.68353939]

[ 0.66192627 0.89126778 0.06254101]

(

array([ 1.88408756, 1.87149239, 1.84057522], dtype=float32),

array([ 2.88408756, 2.87149239, 2.84057522], dtype=float32)

)

Some TensorFlow functions return tf.Operations instead of tf.Tensors. The result of calling run on an Operation is None.

You run an operation to cause a side-effect, not to retrieve a value. Examples of this include the initialization, and training ops

demonstrated later.

Feeding

As it stands, this graph is not especially interesting because it always produces a constant result. A graph can be parameterized

to accept external inputs, known as placeholders. A placeholder is a promise to provide a value later, like a function argument.

x = tf.placeholder(tf.float32)

y = tf.placeholder(tf.float32)

z = x + y

The preceding three lines are a bit like a function in which we define two input parameters (x and y) and then an operation on

them. We can evaluate this graph with multiple inputs by using the feed_dict argument of the run method to feed concrete

values to the placeholders:

print(sess.run(z, feed_dict={x: 3, y: 4.5}))

print(sess.run(z, feed_dict={x: [1, 3], y: [2, 4]}))

This results in the following output:

7.5

[ 3. 7.]

Also note that the feed_dict argument can be used to overwrite any tensor in the graph. The only difference between

placeholders and other tf.Tensors is that placeholders throw an error if no value is fed to them.

Datasets

Placeholders work for simple experiments, but Datasets are the preferred method of streaming data into a model.

To get a runnable tf.Tensor from a Dataset you must first convert it to a tf.data.Iterator, and then call the Iterator's

get_next method.

The simplest way to create an Iterator is with the make_one_shot_iterator method. For example, in the following code the

next_item tensor will return a row from the my_data array on each run call:

my_data = [

[0, 1,],

[2, 3,],

[4, 5,],

[6, 7,],

]

slices = tf.data.Dataset.from_tensor_slices(my_data)

next_item = slices.make_one_shot_iterator().get_next()

Reaching the end of the data stream causes Dataset to throw an OutOfRangeError. For example, the following code reads the

next_item until there is no more data to read:

while True:

try:

print(sess.run(next_item))

except tf.errors.OutOfRangeError:

break

For more details on Datasets and Iterators see: Importing Data.

Layers

A trainable model must modify the values in the graph to get new outputs with the same input. Layers are the preferred way

to add trainable parameters to a graph.

Layers package together both the variables and the operations that act on them, . For example a densely-connected layer

performs a weighted sum across all inputs for each output and applies an optional activation function. The connection weights

and biases are managed by the layer object.

Creating Layers

The following code creates a Dense layer that takes a batch of input vectors, and produces a single output value for each. To

apply a layer to an input, call the layer as if it were a function. For example:

x = tf.placeholder(tf.float32, shape=[None, 3])

linear_model = tf.layers.Dense(units=1)

y = linear_model(x)

The layer inspects its input to determine sizes for its internal variables. So here we must set the shape of the xplaceholder so

that the layer can build a weight matrix of the correct size.

Now that we have defined the calculation of the output, y, there is one more detail we need to take care of before we run the

calculation.

Initializing Layers

The layer contains variables that must be initialized before they can be used. While it is possible to initialize variables

individually, you can easily initialize all the variables in a TensorFlow graph as follows:

init = tf.global_variables_initializer()

sess.run(init)

Important: Calling tf.global_variables_initializer only creates and returns a handle to a TensorFlow operation. That

op will initialize all the global variables when we run it with tf.Session.run.

Also note that this global_variables_initializer only initializes variables that existed in the graph when the initializer

was created. So the initializer should be one of the last things added during graph construction.

Executing Layers

Now that the layer is initialized, we can evaluate the linear_model's output tensor as we would any other tensor. For

example, the following code:

print(sess.run(y, {x: [[1, 2, 3],[4, 5, 6]]}))

will generate a two-element output vector such as the following:

[[-3.41378999]

[-9.14999008]]

Layer Function shortcuts

For each layer class (like tf.layers.Dense) TensorFlow also supplies a shortcut function (like tf.layers.dense). The only

difference is that the shortcut function versions create and run the layer in a single call. For example, the following code is

equivalent to the earlier version:

x = tf.placeholder(tf.float32, shape=[None, 3])

y = tf.layers.dense(x, units=1)

init = tf.global_variables_initializer()

sess.run(init)

print(sess.run(y, {x: [[1, 2, 3], [4, 5, 6]]}))

While convenient, this approach allows no access to the tf.layers.Layer object. This makes introspection and debugging

more difficult, and layer reuse impossible.

Feature columns

The easiest way to experiment with feature columns is using the tf.feature_column.input_layer function. This function

only accepts dense columns as inputs, so to view the result of a categorical column you must wrap it in an

tf.feature_column.indicator_column. For example:

features = {

'sales' : [[5], [10], [8], [9]],

'department': ['sports', 'sports', 'gardening', 'gardening']}

department_column = tf.feature_column.categorical_column_with_vocabulary_list(

'department', ['sports', 'gardening'])

department_column = tf.feature_column.indicator_column(department_column)

columns = [

tf.feature_column.numeric_column('sales'),

department_column

]

inputs = tf.feature_column.input_layer(features, columns)

Running the inputs tensor will parse the features into a batch of vectors.

Feature columns can have internal state, like layers, so they often need to be initialized. Categorical columns use lookup tables

internally and these require a separate initialization op, tf.tables_initializer.

var_init = tf.global_variables_initializer()

table_init = tf.tables_initializer()

sess = tf.Session()

sess.run((var_init, table_init))

Once the internal state has been initialized you can run inputs like any other tf.Tensor:

print(sess.run(inputs))

This shows how the feature columns have packed the input vectors, with the one-hot "department" as the first two indices and

"sales" as the third.

[[ 1. 0. 5.]

[ 1. 0. 10.]

[ 0. 1. 8.]

[ 0. 1. 9.]]

Training

Now that you're familiar with the basics of core TensorFlow, let's train a small regression model manually.

Define the data

First let's define some inputs, x, and the expected output for each input, y_true:

x = tf.constant([[1], [2], [3], [4]], dtype=tf.float32)

y_true = tf.constant([[0], [-1], [-2], [-3]], dtype=tf.float32)

Define the model

Next, build a simple linear model, with 1 output:

linear_model = tf.layers.Dense(units=1)

y_pred = linear_model(x)

You can evaluate the predictions as follows:

sess = tf.Session()

init = tf.global_variables_initializer()

sess.run(init)

print(sess.run(y_pred))

The model hasn't yet been trained, so the four "predicted" values aren't very good. Here's what we got; your own output will

almost certainly differ:

[[ 0.02631879]

[ 0.05263758]

[ 0.07895637]

[ 0.10527515]]

loss

To optimize a model, you first need to define the loss. We'll use the mean square error, a standard loss for regression problems.

While you could do this manually with lower level math operations, the tf.losses module provides a set of common loss

functions. You can use it to calculate the mean square error as follows:

loss = tf.losses.mean_squared_error(labels=y_true, predictions=y_pred)

print(sess.run(loss))

This will produce a loss value, something like:

2.23962

Training

TensorFlow provides optimizers implementing standard optimization algorithms. These are implemented as sub-classes of

tf.train.Optimizer. They incrementally change each variable in order to minimizethe loss. The simplest optimization

algorithm is gradient descent, implemented by tf.train.GradientDescentOptimizer. It modifies each variable according

to the magnitude of the derivative of loss with respect to that variable. For example:

optimizer = tf.train.GradientDescentOptimizer(0.01)

train = optimizer.minimize(loss)

This code builds all the graph components necessary for the optimization, and returns a training operation. When run, the

training op will update variables in the graph. You might run it as follows:

for i in range(100):

_, loss_value = sess.run((train, loss))

print(loss_value)

Since train is an op, not a tensor, it doesn't return a value when run. To see the progression of the loss during training, we run

the loss tensor at the same time, producing output like the following:

1.35659

1.00412

0.759167

0.588829

0.470264

0.387626

0.329918

0.289511

0.261112

0.241046

...

Complete program

x = tf.constant([[1], [2], [3], [4]], dtype=tf.float32)

y_true = tf.constant([[0], [-1], [-2], [-3]], dtype=tf.float32)

linear_model = tf.layers.Dense(units=1)

y_pred = linear_model(x)

loss = tf.losses.mean_squared_error(labels=y_true, predictions=y_pred)

optimizer = tf.train.GradientDescentOptimizer(0.01)

train = optimizer.minimize(loss)

init = tf.global_variables_initializer()

sess = tf.Session()

sess.run(init)

for i in range(100):

_, loss_value = sess.run((train, loss))

print(loss_value)

print(sess.run(y_pred))

Rank Math entity

0 Scalar (magnitude only)

Next steps

To learn more about building models with TensorFlow consider the following:

Custom Estimators, to learn how to build customized models with TensorFlow. Your knowledge of TensorFlow Core will

help you understand and debug your own models.

If you want to learn more about the inner workings of TensorFlow consider the following documents, which go into more

depth on many of the topics discussed here:

Graphs and Sessions

Tensors

Variables

Tensors

TensorFlow, as the name indicates, is a framework to define and run computations involving tensors. A tensoris a

generalization of vectors and matrices to potentially higher dimensions. Internally, TensorFlow represents tensors as n-

dimensional arrays of base datatypes.

When writing a TensorFlow program, the main object you manipulate and pass around is the tf.Tensor. A tf.Tensor object

represents a partially defined computation that will eventually produce a value. TensorFlow programs work by first building a

graph of tf.Tensor objects, detailing how each tensor is computed based on the other available tensors and then by running

parts of this graph to achieve the desired results.

A tf.Tensor has the following properties:

a data type (float32, int32, or string, for example)

a shape

Each element in the Tensor has the same data type, and the data type is always known. The shape (that is, the number of

dimensions it has and the size of each dimension) might be only partially known. Most operations produce tensors of fully-

known shapes if the shapes of their inputs are also fully known, but in some cases it's only possible to find the shape of a

tensor at graph execution time.

Some types of tensors are special, and these will be covered in other units of the Programmer's guide. The main ones are:

tf.Variable

tf.constant

tf.placeholder

tf.SparseTensor

With the exception of tf.Variable, the value of a tensor is immutable, which means that in the context of a single execution

tensors only have a single value. However, evaluating the same tensor twice can return different values; for example that

tensor can be the result of reading data from disk, or generating a random number.

Rank

The rank of a tf.Tensor object is its number of dimensions. Synonyms for rank include order or degree or n-dimension. Note

that rank in TensorFlow is not the same as matrix rank in mathematics. As the following table shows, each rank in TensorFlow

corresponds to a different mathematical entity:

1 Vector (magnitude and direction)

2 Matrix (table of numbers)

3 3-Tensor (cube of numbers)

n n-Tensor (you get the idea)

Rank 0

The following snippet demonstrates creating a few rank 0 variables:

mammal = tf.Variable("Elephant", tf.string)

ignition = tf.Variable(451, tf.int16)

floating = tf.Variable(3.14159265359, tf.float64)

its_complicated = tf.Variable(12.3 - 4.85j, tf.complex64)

Note: A string is treated as a single item in TensorFlow, not as a sequence of characters. It is possible to have scalar strings,

vectors of strings, etc.

Rank 1

To create a rank 1 tf.Tensor object, you can pass a list of items as the initial value. For example:

mystr = tf.Variable(["Hello"], tf.string)

cool_numbers = tf.Variable([3.14159, 2.71828], tf.float32)

first_primes = tf.Variable([2, 3, 5, 7, 11], tf.int32)

its_very_complicated = tf.Variable([12.3 - 4.85j, 7.5 - 6.23j], tf.complex64)

Higher ranks

A rank 2 tf.Tensor object consists of at least one row and at least one column:

mymat = tf.Variable([[7],[11]], tf.int16)

myxor = tf.Variable([[False, True],[True, False]], tf.bool)

linear_squares = tf.Variable([[4], [9], [16], [25]], tf.int32)

squarish_squares = tf.Variable([ [4, 9], [16, 25] ], tf.int32)

rank_of_squares = tf.rank(squarish_squares)

mymatC = tf.Variable([[7],[11]], tf.int32)

Higher-rank Tensors, similarly, consist of an n-dimensional array. For example, during image processing, many tensors of rank

4 are used, with dimensions corresponding to example-in-batch, image width, image height, and color channel.

my_image = tf.zeros([10, 299, 299, 3]) # batch x height x width x color

Rank Shape Dimension number Example

0 [] 0-D A 0-D tensor. A scalar.

1 [D0] 1-D A 1-D tensor with shape [5].

2 [D0, D1] 2-D A 2-D tensor with shape [3, 4].

Getting a tf.Tensor object's rank

To determine the rank of a tf.Tensor object, call the tf.rank method. For example, the following method programmatically

determines the rank of the tf.Tensor defined in the previous section:

r = tf.rank(my_image)

# After the graph runs, r will hold the value 4.

Referring to tf.Tensor slices

Since a tf.Tensor is an n-dimensional array of cells, to access a single cell in a tf.Tensor you need to specify n indices.

For a rank 0 tensor (a scalar), no indices are necessary, since it is already a single number.

For a rank 1 tensor (a vector), passing a single index allows you to access a number:

my_scalar = my_vector[2]

Note that the index passed inside the [] can itself be a scalar tf.Tensor, if you want to dynamically choose an element from

the vector.

For tensors of rank 2 or higher, the situation is more interesting. For a tf.Tensor of rank 2, passing two numbers returns a

scalar, as expected:

my_scalar = my_matrix[1, 2]

Passing a single number, however, returns a subvector of a matrix, as follows:

my_row_vector = my_matrix[2]

my_column_vector = my_matrix[:, 3]

The : notation is python slicing syntax for "leave this dimension alone". This is useful in higher-rank Tensors, as it allows you

to access its subvectors, submatrices, and even other subtensors.

Shape

The shape of a tensor is the number of elements in each dimension. TensorFlow automatically infers shapes during graph

construction. These inferred shapes might have known or unknown rank. If the rank is known, the sizes of each dimension

might be known or unknown.

The TensorFlow documentation uses three notational conventions to describe tensor dimensionality: rank, shape, and

dimension number. The following table shows how these relate to one another:

3 [D0, D1, D2] 3-D A 3-D tensor with shape [1, 4, 3].

n [D0, D1, ... Dn-1] n-D A tensor with shape [D0, D1, ... Dn-1].

Shapes can be represented via Python lists / tuples of ints, or with the tf.TensorShape.

Getting a tf.Tensor object's shape

There are two ways of accessing the shape of a tf.Tensor. While building the graph, it is often useful to ask what is already

known about a tensor's shape. This can be done by reading the shape property of a tf.Tensor object. This method returns a

TensorShape object, which is a convenient way of representing partially-specified shapes (since, when building the graph, not

all shapes will be fully known).

It is also possible to get a tf.Tensor that will represent the fully-defined shape of another tf.Tensor at runtime. This is done

by calling the tf.shape operation. This way, you can build a graph that manipulates the shapes of tensors by building other

tensors that depend on the dynamic shape of the input tf.Tensor.

For example, here is how to make a vector of zeros with the same size as the number of columns in a given matrix:

zeros = tf.zeros(my_matrix.shape[1])

Changing the shape of a tf.Tensor

The number of elements of a tensor is the product of the sizes of all its shapes. The number of elements of a scalar is always 1.

Since there are often many different shapes that have the same number of elements, it's often convenient to be able to change

the shape of a tf.Tensor, keeping its elements fixed. This can be done with tf.reshape.

The following examples demonstrate how to reshape tensors:

rank_three_tensor = tf.ones([3, 4, 5])

matrix = tf.reshape(rank_three_tensor, [6, 10]) # Reshape existing content into

# a 6x10 matrix

matrixB = tf.reshape(matrix, [3, -1]) # Reshape existing content into a 3x20

# matrix. -1 tells reshape to calculate

# the size of this dimension.

matrixAlt = tf.reshape(matrixB, [4, 3, -1]) # Reshape existing content into a

#4x3x5 tensor

# Note that the number of elements of the reshaped Tensors has to match the

# original number of elements. Therefore, the following example generates an

# error because no possible value for the last dimension will match the number

# of elements.

yet_another = tf.reshape(matrixAlt, [13, 2, -1]) # ERROR!

Data types

In addition to dimensionality, Tensors have a data type. Refer to the tf.DataType page in the programmer's guide for a full

list of the data types.

It is not possible to have a tf.Tensor with more than one data type. It is possible, however, to serialize arbitrary data

structures as strings and store those in tf.Tensors.

It is possible to cast tf.Tensors from one datatype to another using tf.cast:

# Cast a constant integer tensor into floating point.

float_tensor = tf.cast(tf.constant([1, 2, 3]), dtype=tf.float32)

To inspect a tf.Tensor's data type use the Tensor.dtype property.

When creating a tf.Tensor from a python object you may optionally specify the datatype. If you don't, TensorFlow chooses a

datatype that can represent your data. TensorFlow converts Python integers to tf.int32 and python floating point numbers to

tf.float32. Otherwise TensorFlow uses the same rules numpy uses when converting to arrays.

Evaluating Tensors

Once the computation graph has been built, you can run the computation that produces a particular tf.Tensorand fetch the

value assigned to it. This is often useful for debugging as well as being required for much of TensorFlow to work.

The simplest way to evaluate a Tensor is using the Tensor.eval method. For example:

constant = tf.constant([1, 2, 3])

tensor = constant * constant

print tensor.eval()

The eval method only works when a default tf.Session is active (see Graphs and Sessions for more information).

Tensor.eval returns a numpy array with the same contents as the tensor.

Sometimes it is not possible to evaluate a tf.Tensor with no context because its value might depend on dynamic information

that is not available. For example, tensors that depend on placeholders can't be evaluated without providing a value for the

placeholder.

p = tf.placeholder(tf.float32)

t = p + 1.0

t.eval() # This will fail, since the placeholder did not get a value.

t.eval(feed_dict={p:2.0}) # This will succeed because we're feeding a value

# to the placeholder.

Note that it is possible to feed any tf.Tensor, not just placeholders.

Other model constructs might make evaluating a tf.Tensor complicated. TensorFlow can't directly evaluate tf.Tensors

defined inside functions or inside control flow constructs. If a tf.Tensor depends on a value from a queue, evaluating the

tf.Tensor will only work once something has been enqueued; otherwise, evaluating it will hang. When working with queues,

remember to call tf.train.start_queue_runners before evaluating any tf.Tensors.

Printing Tensors

For debugging purposes you might want to print the value of a tf.Tensor. While tfdbg provides advanced debugging

support, TensorFlow also has an operation to directly print the value of a tf.Tensor.

Note that you rarely want to use the following pattern when printing a tf.Tensor:

t = <<some tensorflow operation>>

print t # This will print the symbolic tensor when the graph is being built.

# This tensor does not have a value in this context.

This code prints the tf.Tensor object (which represents deferred computation) and not its value. Instead, TensorFlow

provides the tf.Print operation, which returns its first tensor argument unchanged while printing the set of tf.Tensors it is

passed as the second argument.

To correctly use tf.Print its return value must be used. See the example below

t = <<some tensorflow operation>>

tf.Print(t, [t]) # This does nothing

t = tf.Print(t, [t]) # Here we are using the value returned by tf.Print

result = t + 1 # Now when result is evaluated the value of `t` will be printed.

When you evaluate result you will evaluate everything result depends upon. Since result depends upon t, and

evaluating t has the side effect of printing its input (the old value of t), t gets printed.Variables

A TensorFlow variable is the best way to represent shared, persistent state manipulated by your program.

Variables are manipulated via the tf.Variable class. A tf.Variable represents a tensor whose value can be changed by

running ops on it. Unlike tf.Tensor objects, a tf.Variable exists outside the context of a single session.run call.

Internally, a tf.Variable stores a persistent tensor. Specific ops allow you to read and modify the values of this tensor. These

modifications are visible across multiple tf.Sessions, so multiple workers can see the same values for a tf.Variable.

Creating a Variable

The best way to create a variable is to call the tf.get_variable function. This function requires you to specify the Variable's

name. This name will be used by other replicas to access the same variable, as well as to name this variable's value when

checkpointing and exporting models. tf.get_variable also allows you to reuse a previously created variable of the same

name, making it easy to define models which reuse layers.

To create a variable with tf.get_variable, simply provide the name and shape

my_variable = tf.get_variable("my_variable", [1, 2, 3])

This creates a variable named "my_variable" which is a three-dimensional tensor with shape [1, 2, 3]. This variable will, by

default, have the dtype tf.float32 and its initial value will be randomized viatf.glorot_uniform_initializer.

You may optionally specify the dtype and initializer to tf.get_variable. For example:

my_int_variable = tf.get_variable("my_int_variable", [1, 2, 3], dtype=tf.int32,

initializer=tf.zeros_initializer)

TensorFlow provides many convenient initializers. Alternatively, you may initialize a tf.Variable to have the value of a

tf.Tensor. For example:

other_variable = tf.get_variable("other_variable", dtype=tf.int32,

initializer=tf.constant([23, 42]))

Note that when the initializer is a tf.Tensor you should not specify the variable's shape, as the shape of the initializer tensor

will be used.

Variable collections

Because disconnected parts of a TensorFlow program might want to create variables, it is sometimes useful to have a single

way to access all of them. For this reason TensorFlow provides collections, which are named lists of tensors or other objects,

such as tf.Variable instances.

By default every tf.Variable gets placed in the following two collections: * tf.GraphKeys.GLOBAL_VARIABLES --- variables

that can be shared across multiple devices, * tf.GraphKeys.TRAINABLE_VARIABLES--- variables for which TensorFlow will

calculate gradients.

If you don't want a variable to be trainable, add it to the tf.GraphKeys.LOCAL_VARIABLES collection instead. For example,

the following snippet demonstrates how to add a variable named my_local to this collection:

my_local = tf.get_variable("my_local", shape=(),

collections=[tf.GraphKeys.LOCAL_VARIABLES])

Alternatively, you can specify trainable=False as an argument to tf.get_variable:

my_non_trainable = tf.get_variable("my_non_trainable",

shape=(),

trainable=False)

You can also use your own collections. Any string is a valid collection name, and there is no need to explicitly create a

collection. To add a variable (or any other object) to a collection after creating the variable, calltf.add_to_collection. For

example, the following code adds an existing variable named my_local to a collection named my_collection_name:

tf.add_to_collection("my_collection_name", my_local)

And to retrieve a list of all the variables (or other objects) you've placed in a collection you can use:

tf.get_collection("my_collection_name")

Device placement

Just like any other TensorFlow operation, you can place variables on particular devices. For example, the following snippet

creates a variable named v and places it on the second GPU device:

with tf.device("/device:GPU:1"):

v = tf.get_variable("v", [1])

It is particularly important for variables to be in the correct device in distributed settings. Accidentally putting variables on

workers instead of parameter servers, for example, can severely slow down training or, in the worst case, let each worker

blithely forge ahead with its own independent copy of each variable. For this reason we provide

tf.train.replica_device_setter, which can automatically place variables in parameter servers. For example:

cluster_spec = {

"ps": ["ps0:2222", "ps1:2222"],

"worker": ["worker0:2222", "worker1:2222", "worker2:2222"]}

with tf.device(tf.train.replica_device_setter(cluster=cluster_spec)):

v = tf.get_variable("v", shape=[20, 20]) # this variable is placed

# in the parameter server

# by the replica_device_setter

Initializing variables

Before you can use a variable, it must be initialized. If you are programming in the low-level TensorFlow API (that is, you are

explicitly creating your own graphs and sessions), you must explicitly initialize the variables. Most high-level frameworks such

as tf.contrib.slim, tf.estimator.Estimator and Keras automatically initialize variables for you before training a model.

Explicit initialization is otherwise useful because it allows you not to rerun potentially expensive initializers when reloading a

model from a checkpoint as well as allowing determinism when randomly-initialized variables are shared in a distributed

setting.

To initialize all trainable variables in one go, before training starts, calltf.global_variables_initializer(). This function

returns a single operation responsible for initializing all variables in the tf.GraphKeys.GLOBAL_VARIABLES collection.

Running this operation initializes all variables. For example:

session.run(tf.global_variables_initializer())

# Now all variables are initialized.

If you do need to initialize variables yourself, you can run the variable's initializer operation. For example:

session.run(my_variable.initializer)

You can also ask which variables have still not been initialized. For example, the following code prints the names of all

variables which have not yet been initialized:

print(session.run(tf.report_uninitialized_variables()))

Note that by default tf.global_variables_initializer does not specify the order in which variables are initialized.

Therefore, if the initial value of a variable depends on another variable's value, it's likely that you'll get an error. Any time you

use the value of a variable in a context in which not all variables are initialized (say, if you use a variable's value while

initializing another variable), it is best to use variable.initialized_value()instead of variable:

v = tf.get_variable("v", shape=(), initializer=tf.zeros_initializer())

w = tf.get_variable("w", initializer=v.initialized_value() + 1)

Using variables

To use the value of a tf.Variable in a TensorFlow graph, simply treat it like a normal tf.Tensor:

v = tf.get_variable("v", shape=(), initializer=tf.zeros_initializer())

w = v + 1 # w is a tf.Tensor which is computed based on the value of v.

# Any time a variable is used in an expression it gets automatically

# converted to a tf.Tensor representing its value.

To assign a value to a variable, use the methods assign, assign_add, and friends in the tf.Variable class. For example, here

is how you can call these methods:

v = tf.get_variable("v", shape=(), initializer=tf.zeros_initializer())

assignment = v.assign_add(1)

tf.global_variables_initializer().run()

sess.run(assignment) # or assignment.op.run()

Most TensorFlow optimizers have specialized ops that efficiently update the values of variables according to some gradient

descent-like algorithm. See tf.train.Optimizer for an explanation of how to use optimizers.

Because variables are mutable it's sometimes useful to know what version of a variable's value is being used at any point in

time. To force a re-read of the value of a variable after something has happened, you can usetf.Variable.read_value. For

example:

v = tf.get_variable("v", shape=(), initializer=tf.zeros_initializer())

assignment = v.assign_add(1)

with tf.control_dependencies([assignment]):

w = v.read_value() # w is guaranteed to reflect v's value after the

# assign_add operation.

Sharing variables

TensorFlow supports two ways of sharing variables:

Explicitly passing tf.Variable objects around.

Implicitly wrapping tf.Variable objects within tf.variable_scope objects.

While code which explicitly passes variables around is very clear, it is sometimes convenient to write TensorFlow functions

that implicitly use variables in their implementations. Most of the functional layers fromtf.layer use this approach, as well as

all tf.metrics, and a few other library utilities.

Variable scopes allow you to control variable reuse when calling functions which implicitly create and use variables. They also

allow you to name your variables in a hierarchical and understandable way.

For example, let's say we write a function to create a convolutional / relu layer:

def conv_relu(input, kernel_shape, bias_shape):

# Create variable named "weights".

weights = tf.get_variable("weights", kernel_shape,

initializer=tf.random_normal_initializer())

# Create variable named "biases".