Python 2.6 Text Processing Beginner's Guide (2010)

Python%202.6%20Text%20Processing%20-%20Beginner's%20Guide%20(2010)

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 380 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Cover

- Copyright

- Credits

- About the Author

- About the Reviewer

- Table of Contents

- Preface

- Chapter 1:

Getting Started

- Categorizing types of text data

- Ensuring you have Python installed

- Implementing a simple cipher

- Time for action – implementing a ROT13 encoder

- Time for action – processing as a filter

- Time for action – skipping over markup tags

- Supporting third-party modules

- Time for action – installing SetupTools

- Running a virtual environment

- Time for action – configuring a virtual environment

- Where to get help?

- Summary

- Chapter 2:

Working with the IO System

- Parsing web server logs

- Time for action – generating transfer statistics

- Using objects interchangeably

- Time for action – introducing a new log format

- Accessing files directly

- Time for action – accessing files directly

- Time for action – handling compressed files

- Accessing multiple files

- Time for action – spell-checking HTML content

- Accessing remote files

- Time for action – spell-checking live HTML pages

- Time for action – handling urllib 2 errors

- Handling string IO instances

- Understanding IO in Python 3

- Summary

- Chapter 3:

Python String Services

- Understanding the basics of string object

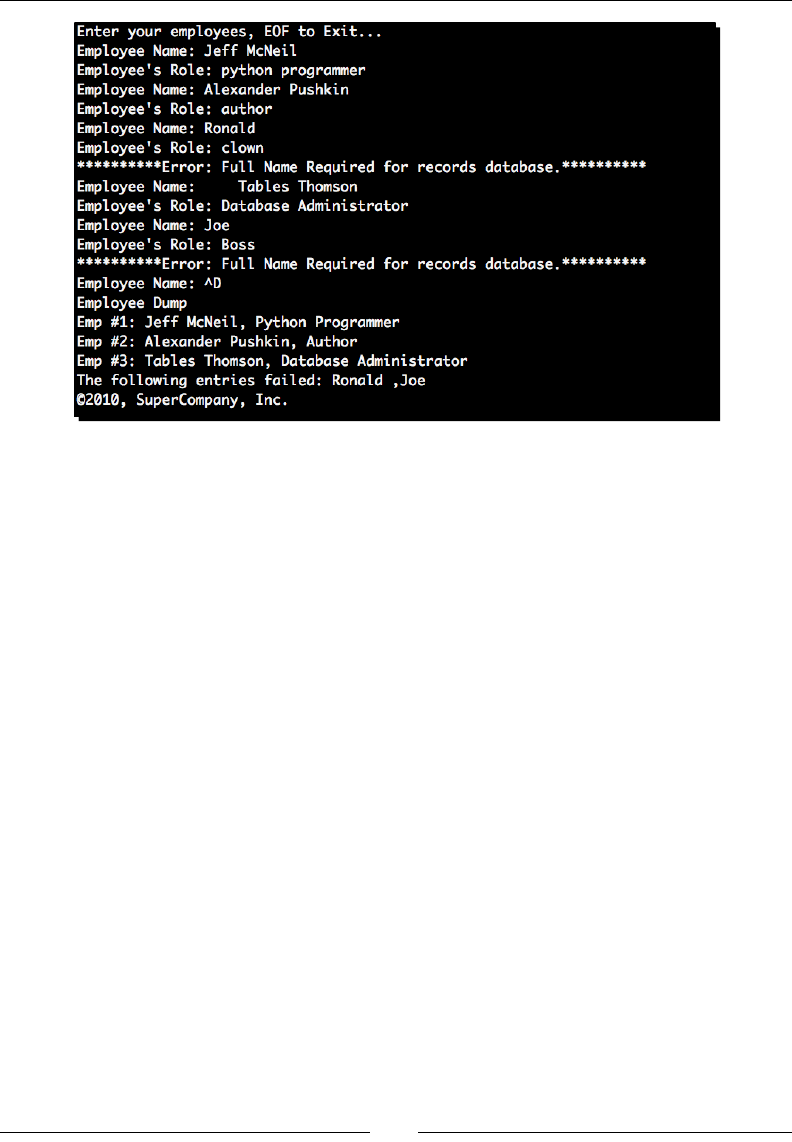

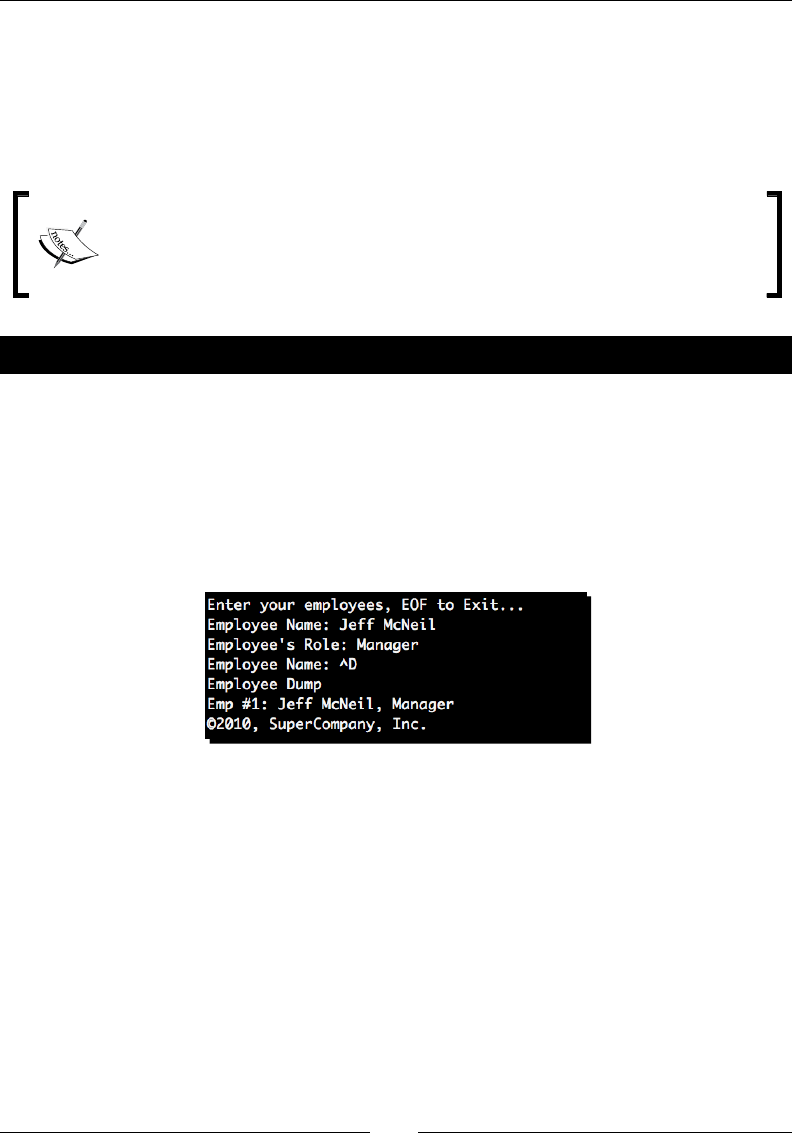

- Time for action – employee management

- String formatting

- Time for action – customizing log processor output

- Time for action – adding status code data

- Creating templates

- Time for action – displaying warnings on malformed lines

- Calling string object methods

- Time for action – simple manipulation with string methods

- Summary

- Chapter 4:

Text Processing Using the Standard Library

- Reading CSV data

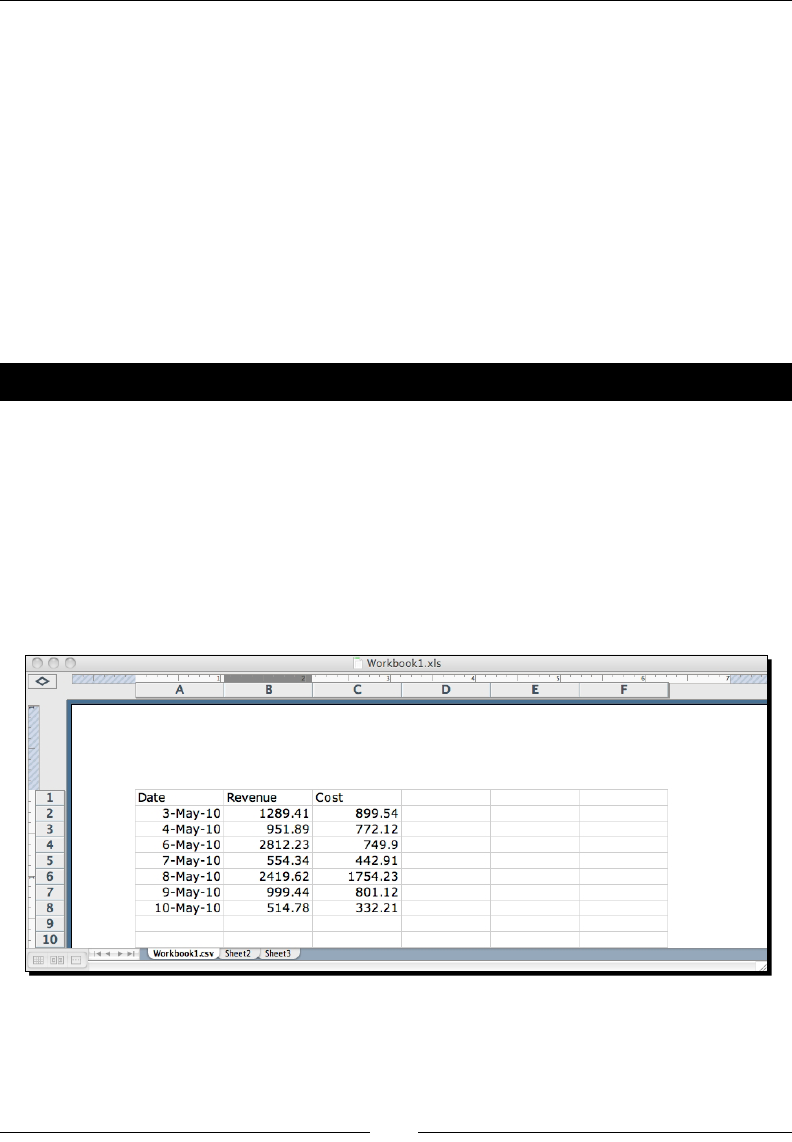

- Time for action – processing Excel formats

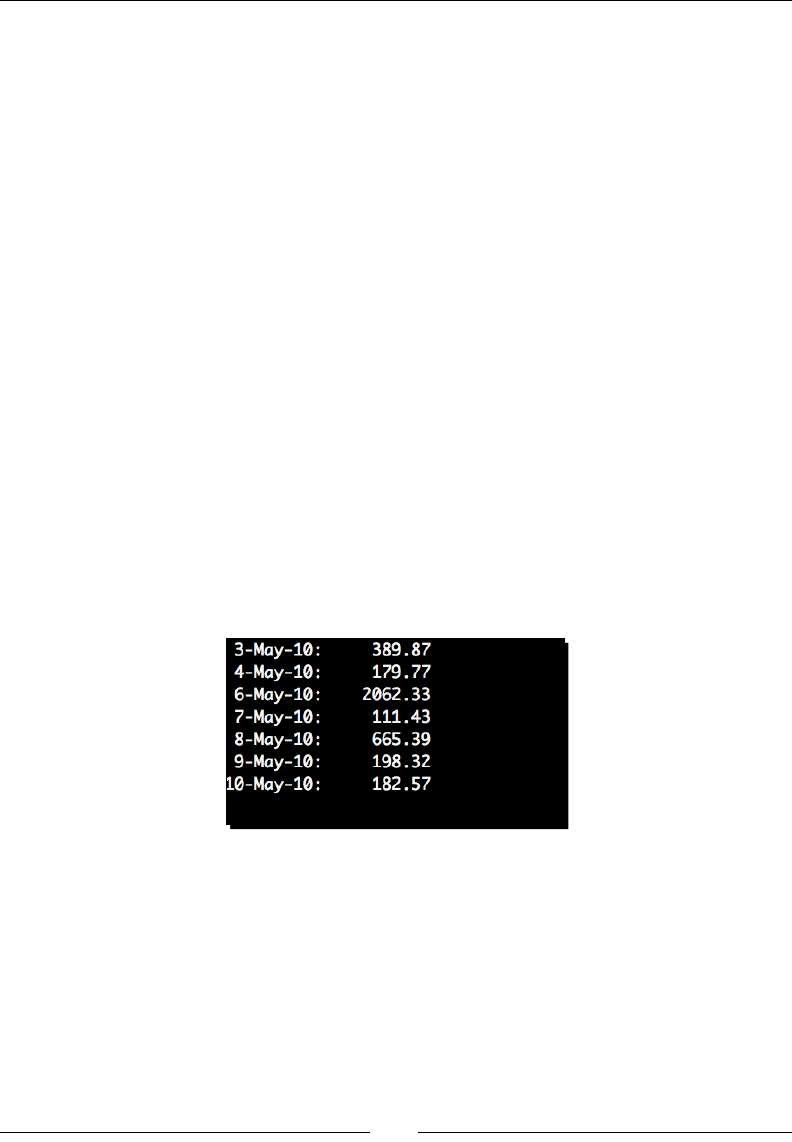

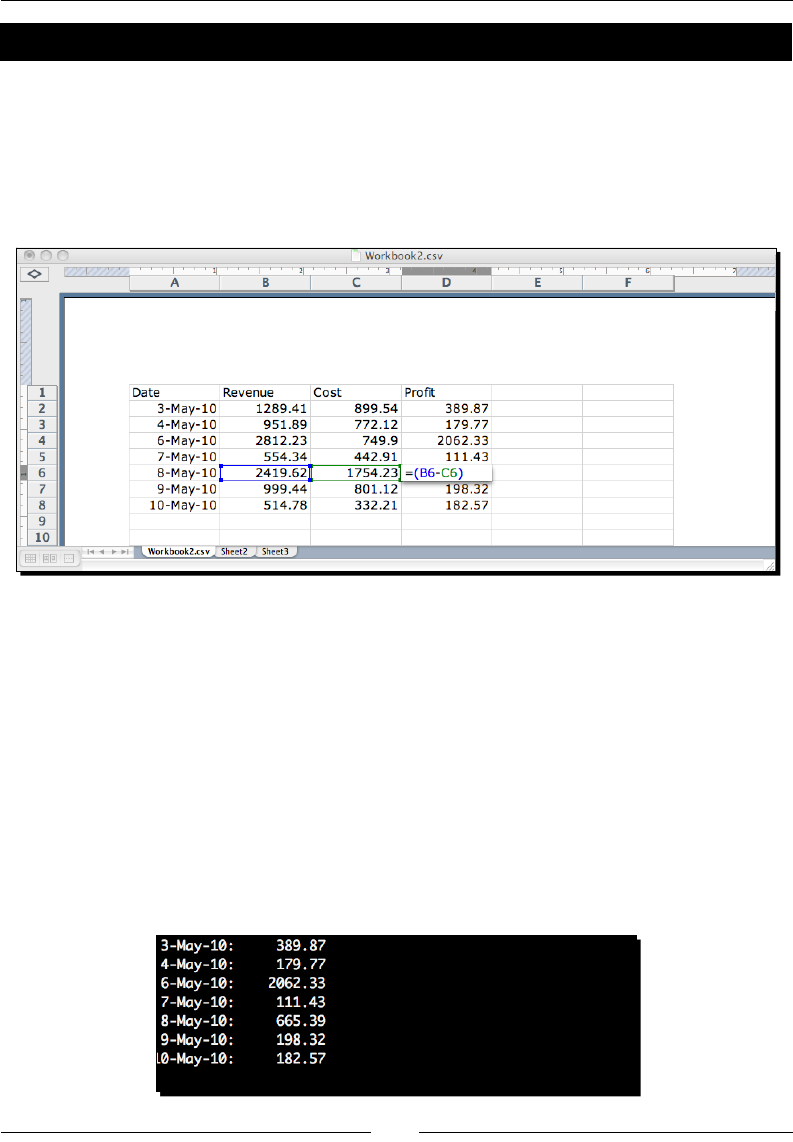

- Time for action – CSV and formulas

- Time for action – processing custom CSV formats

- Writing CSV data

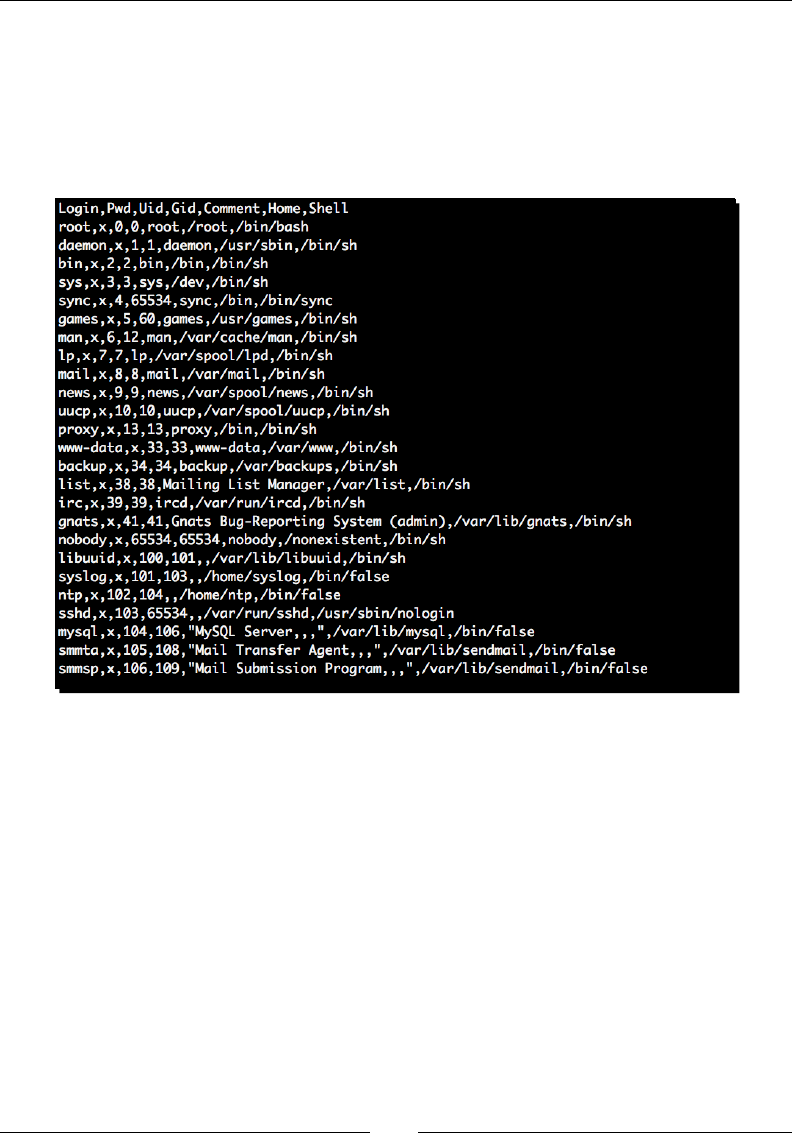

- Time for action – creating a spreadsheet of UNIX users

- Modifying application configuration files

- Time for action – adding basic configuration read support

- Time for action – relying on configuration value interpolation

- Time for action – configuration defaults

- Writing configuration data

- Time for action – generating a configuration file

- Reconfiguring our source

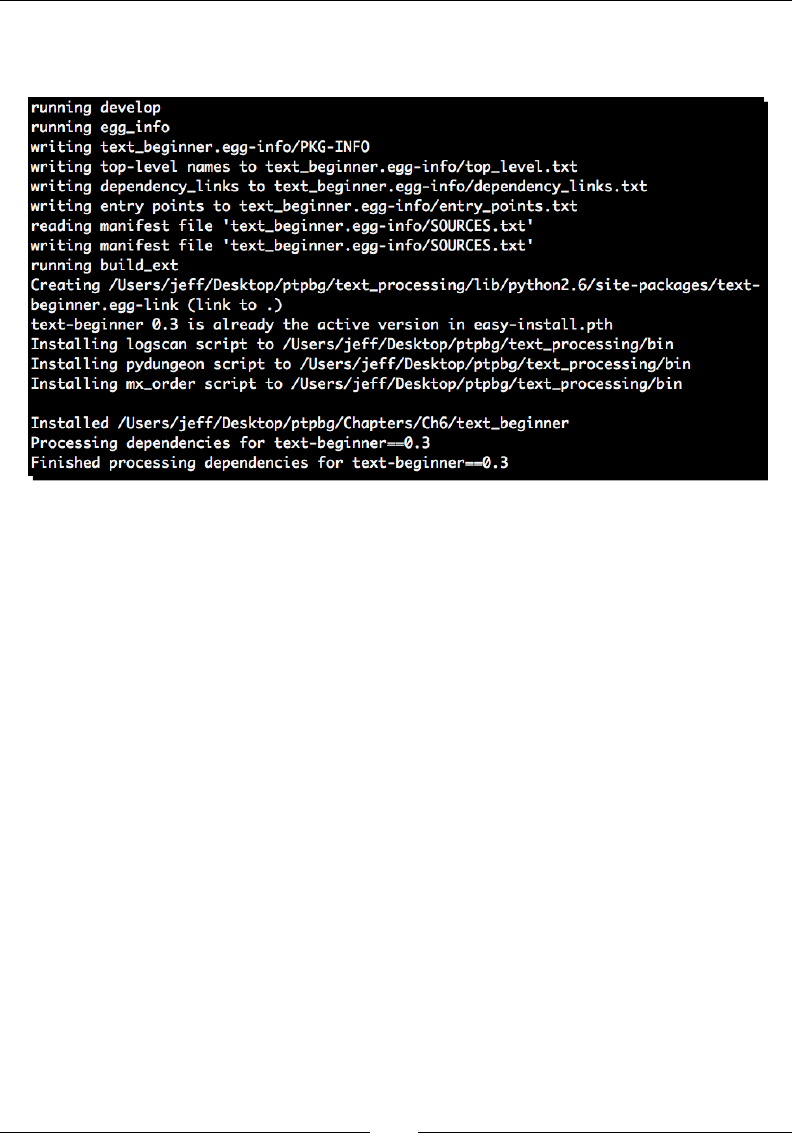

- Time for action – creating an egg-based package

- Working with JSON

- Time for action – writing JSON data

- Summary

- Chapter 5: Regular Expressions

- Chapter 6:

Structured Markup

- XML data

- SAX processing

- Time for action – event-driven processing

- Time for action – driving incremental processing

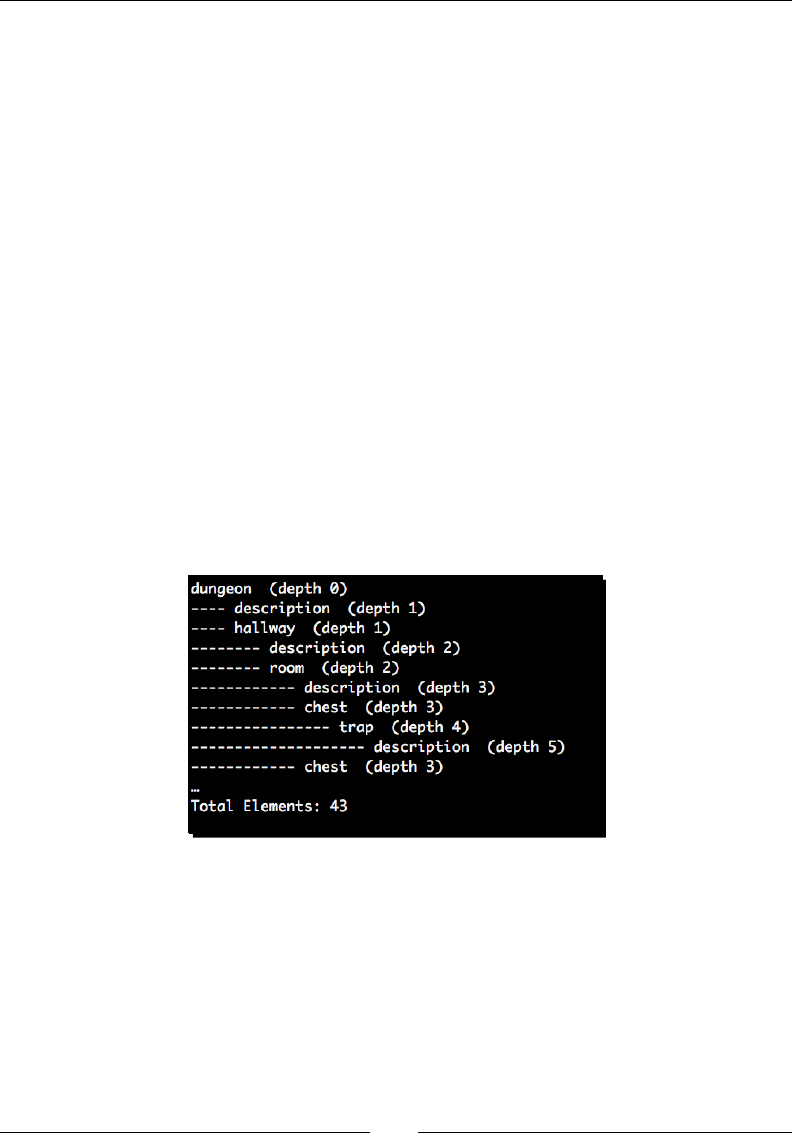

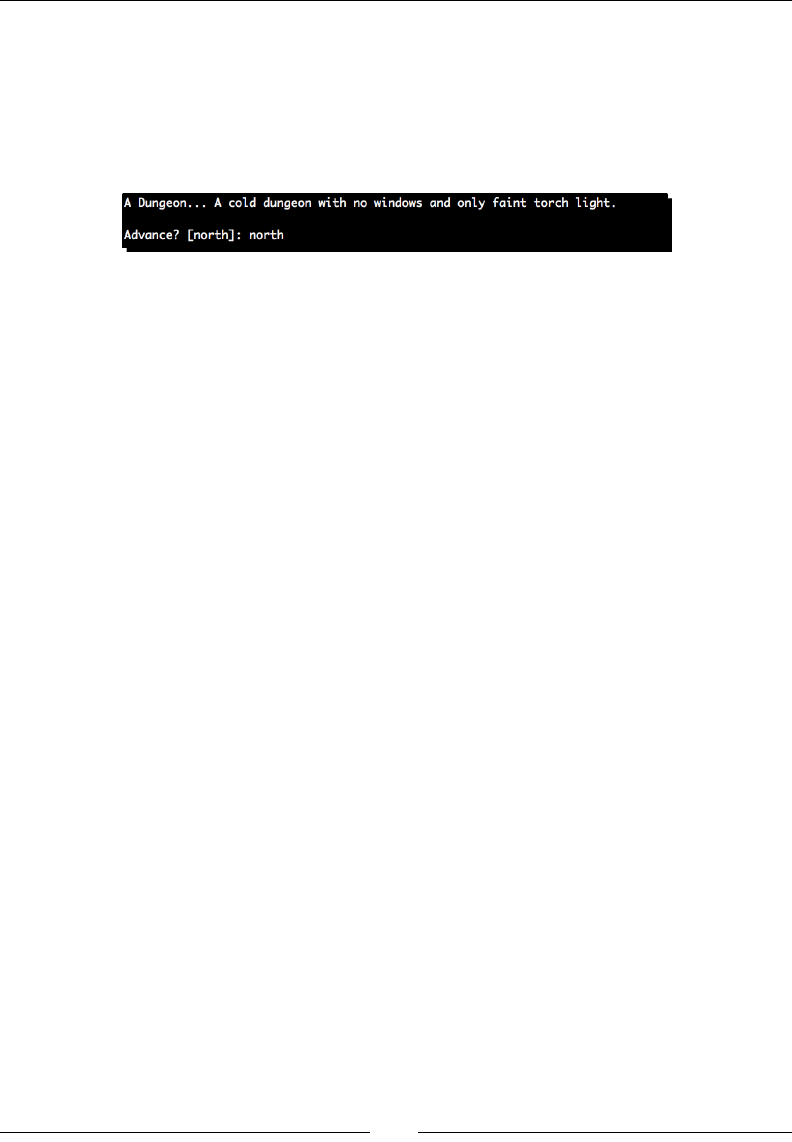

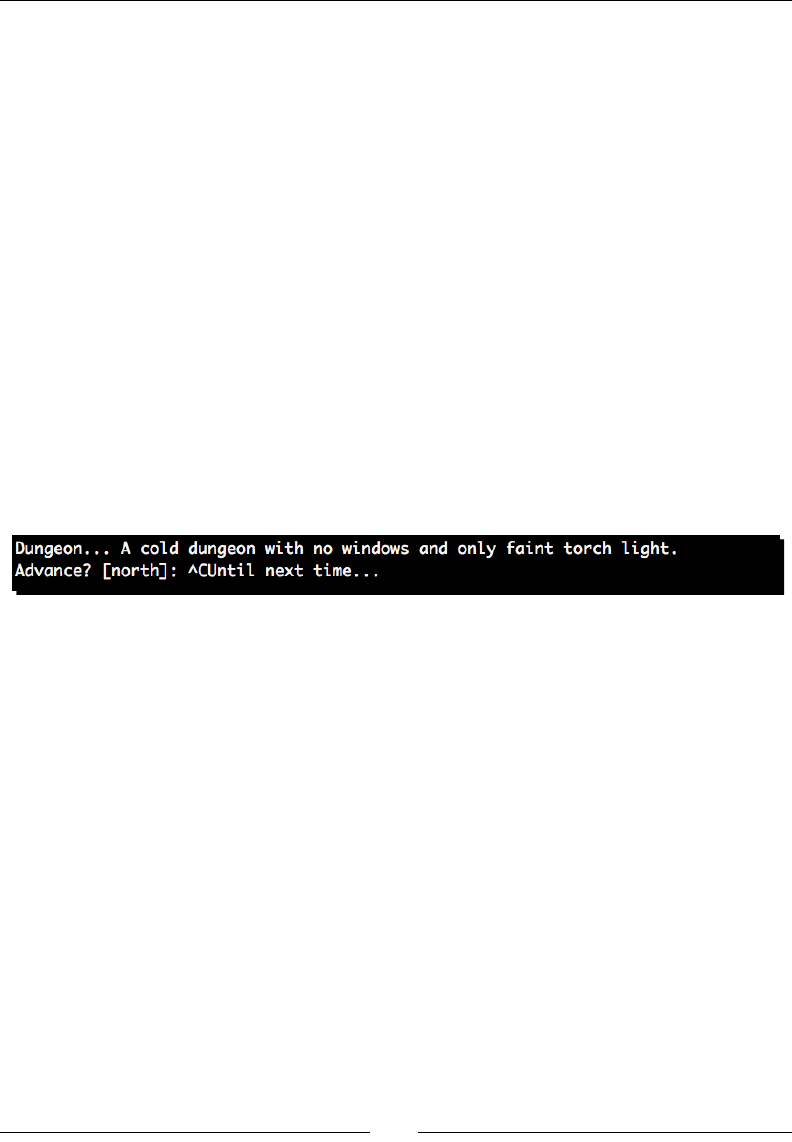

- Time for action – creating a dungeon adventure game

- The Document Object Model

- Time for action – updating our game to use DOM processing

- XPath

- Time for action – using XPath in our adventure

- Reading HTML

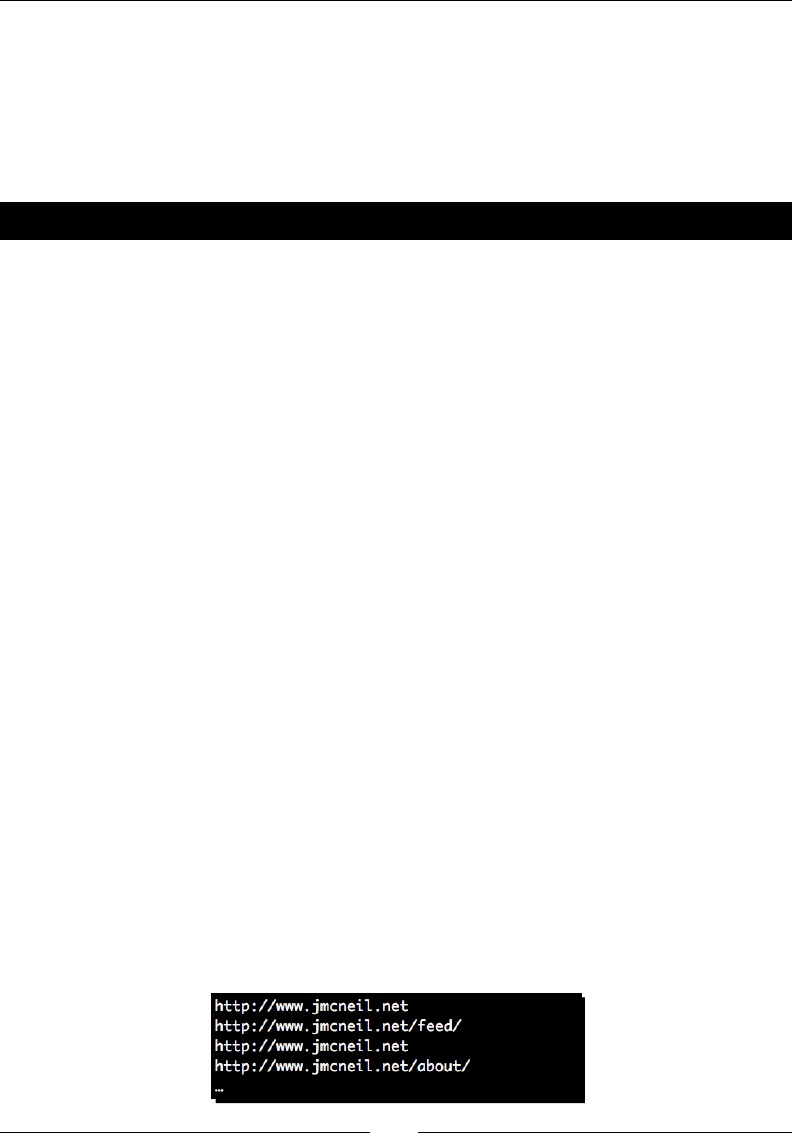

- Time for action – displaying links in an HTML page

- Summary

- Chapter 7:

Creating Templates

- Time for action – installing Mako

- Basic Mako usage

- Time for action – loading a simple Mako template

- Time for action – reformatting the date with Python code

- Time for action – defining Mako def tags

- Time for action – converting mail message to use namespaces

- Inheriting from base templates

- Time for action – updating base template

- Time for action – adding another inheritance layer

- Customizing

- Time for action – creating custom Mako tags

- Overviewing alternative approaches

- Summary

- Chapter 8:

Understanding Encodings and i18n

- Understanding basic character encodings

- Unicode

- Encodings in Python

- Time for action – manually decoding

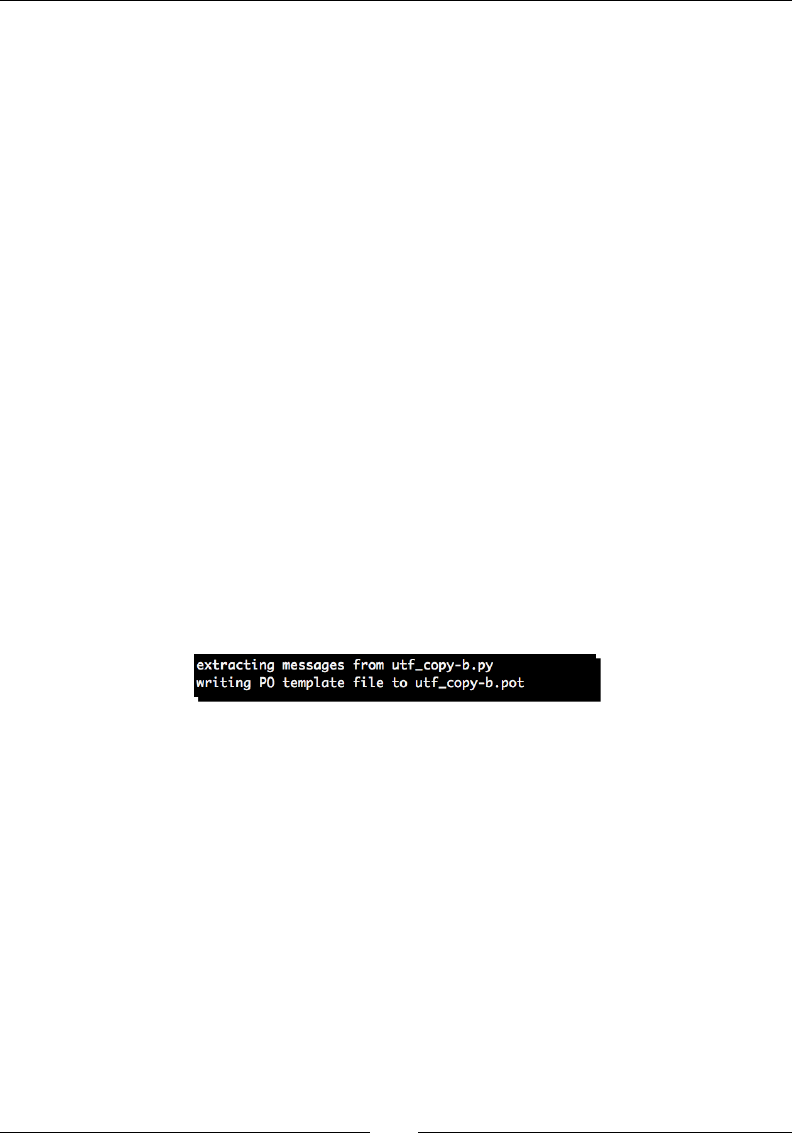

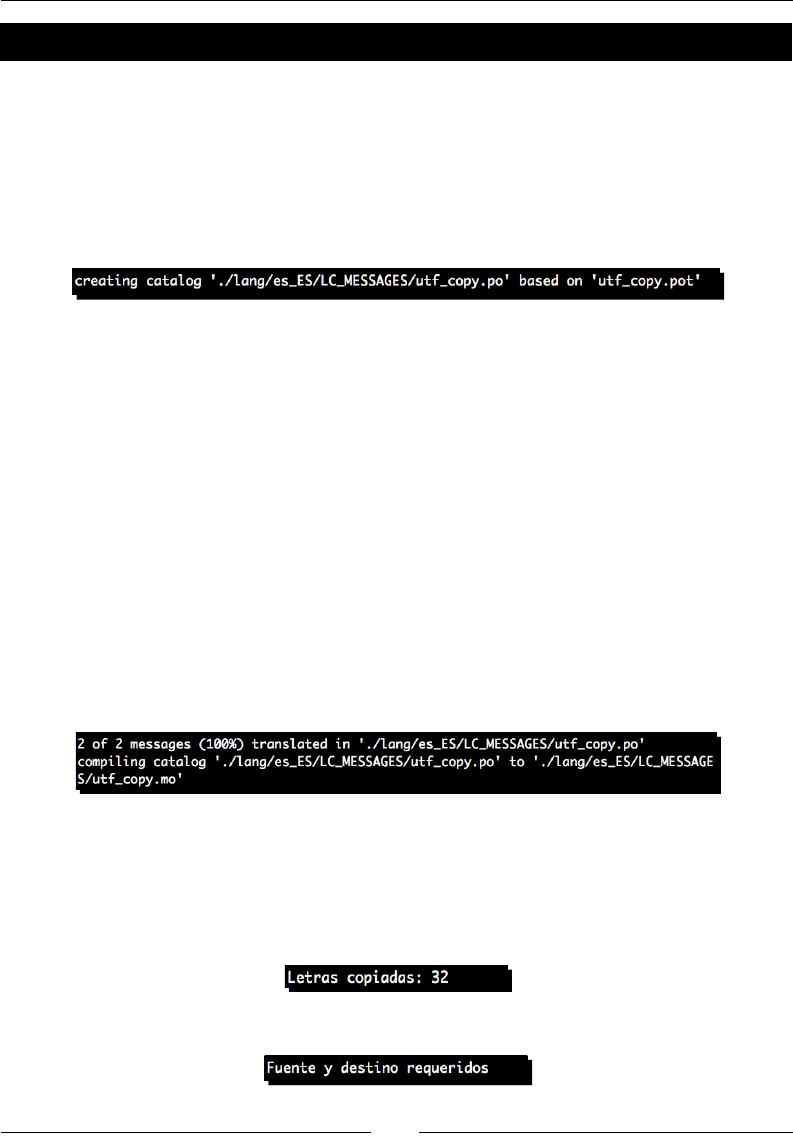

- Time for action – copying Unicode data

- Time for action – fixing our copy application

- The codecs module

- Time for action – changing encodings

- Adopting good practices

- Internationalization and Localization

- Time for action – preparing for multiple languages

- Time for action – providing translations

- Summary

- Chapter 9:

Advanced Output Formats

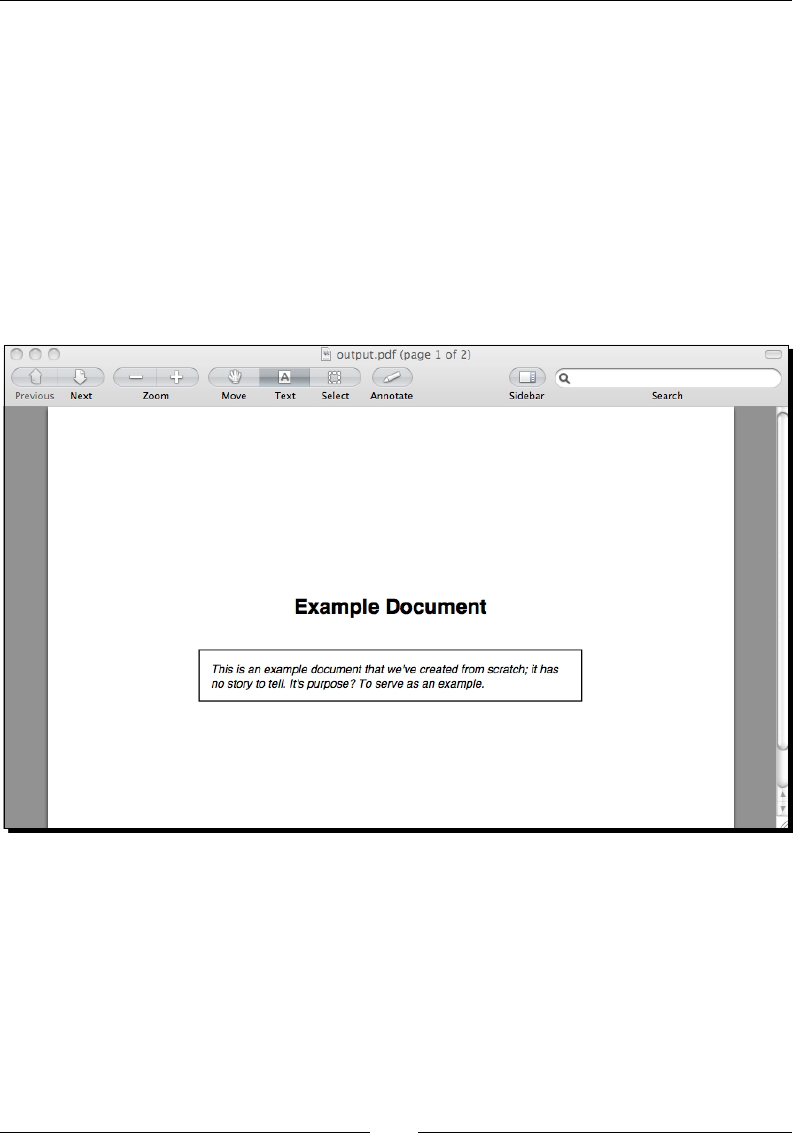

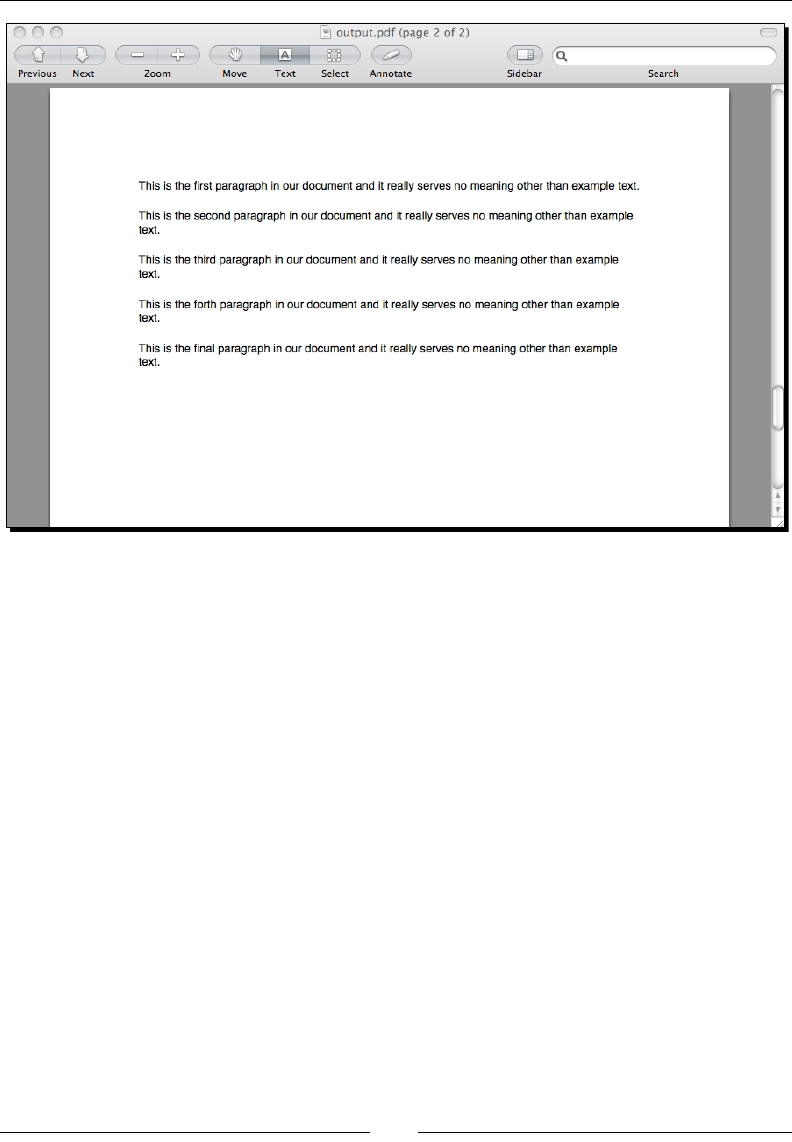

- Dealing with PDF files using PLATYPUS

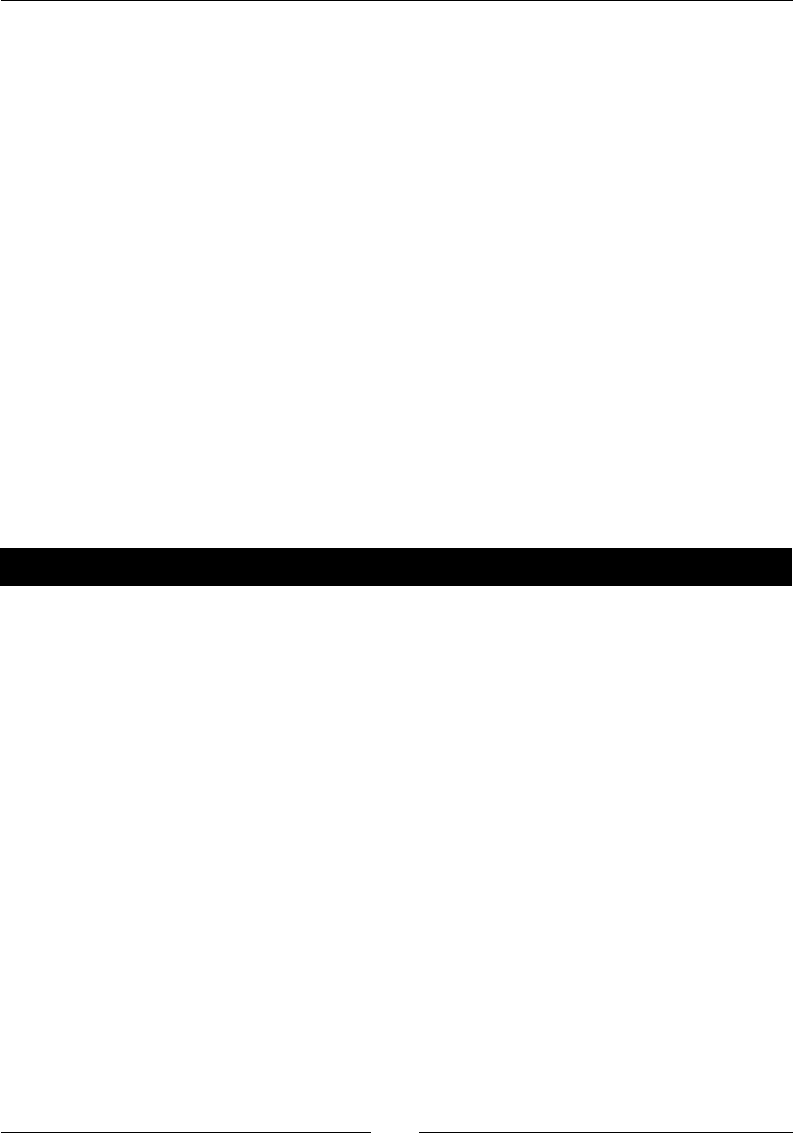

- Time for action – installing ReportLab

- Time for action – writing PDF with basic layout and style

- Writing native Excel data

- Time for action – installing xlwt

- Time for action – generating XLS data

- Working with OpenDocument files

- Time for action – installing ODFPy

- Time for action – generating ODT data

- Summary

- Chapter 10: Advanced Parsing and Grammars

- Chapter 11:

Searching and Indexing

- Understanding search complexity

- Time for action – implementing a linear search

- Text indexing

- Time for action – installing Nucular

- Time for action – full text indexing

- Time for action – measuring index benefit

- Time for action – field-qualified indexes

- Time for action – performing advanced Nucular queries

- Indexing and searching other data

- Time for action – indexing Open Office documents

- Other index systems

- Summary

- Appendix A: Looking for Additional Resources

- Appendix B:

Pop Quiz Answers

- Chapter 1: Getting Started

- Chapter 2: Working with the IO System

- Chapter 3: Python String Services

- Chapter 4: Text Processing Using the Standard Library

- Chapter 5: Regular Expressions

- Chapter 6: Structured Markup

- Chapter 7: Creating Templates

- Chapter 8: Understanding Encoding and i18n

- Chapter 9: Advanced Output Formats

- Chapter 11: Searching and Indexing

- Index

Python 2.6 Text Processing

Beginner's Guide

The easiest way to learn how to manipulate text with Python

Je McNeil

BIRMINGHAM - MUMBAI

Python 2.6 Text Processing

Beginner's Guide

Copyright © 2010 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system,

or transmied in any form or by any means, without the prior wrien permission of the

publisher, except in the case of brief quotaons embedded in crical arcles or reviews.

Every eort has been made in the preparaon of this book to ensure the accuracy of the

informaon presented. However, the informaon contained in this book is sold without

warranty, either express or implied. Neither the author, nor Packt Publishing, and its dealers

and distributors will be held liable for any damages caused or alleged to be caused directly or

indirectly by this book.

Packt Publishing has endeavored to provide trademark informaon about all of the

companies and products menoned in this book by the appropriate use of capitals.

However, Packt Publishing cannot guarantee the accuracy of this informaon.

First published: December 2010

Producon Reference: 1081210

Published by Packt Publishing Ltd.

32 Lincoln Road

Olton

Birmingham, B27 6PA, UK.

ISBN 978-1-849512-12-1

www.packtpub.com

Cover Image by John Quick (john@johnmquick.com)

Credits

Author

Je McNeil

Reviewer

Maurice HT Ling

Acquision Editor

Steven Wilding

Development Editor

Reshma Sundaresan

Technical Editor

Gauri Iyer

Indexer

Tejal Daruwale

Editorial Team Leader

Mithun Sehgal

Project Team Leader

Priya Mukherji

Project Coordinator

Shubhanjan Chaerjee

Proofreader

Jonathan Todd

Graphics

Nilesh R. Mohite

Producon Coordinator

Kruthika Bangera

Cover Work

Kruthika Bangera

About the Author

Je McNeil has been working in the Internet Services industry for over 10 years. He cut

his teeth during the late 90's Internet boom and has been developing soware for Unix and

Unix-avored systems ever since. Je has been a full-me Python developer for the beer

half of that me and has professional experience with a collecon of other languages,

including C, Java, and Perl. He takes an interest in systems administraon and server

automaon problems. Je recently joined Google and has had the pleasure of working with

some very talented individuals.

I'd like to above all thank Julie, Savannah, Phoebe, Maya, and Trixie for

allowing me to lock myself in the oce every night for months. The

Web.com gang and those in the Python community willing to share their

authoring experiences. Finally, Steven Wilding, Reshma Sundaresan,

Shubhanjan Chaerjee, and the rest of the Packt Publishing team for all of

the hard work and guidance.

About the Reviewer

Maurice HT Ling completed his Ph.D. in Bioinformacs and B.Sc(Hons) in Molecular and

Cell Biology from the University of Melbourne where he worked on microarray analysis

and text mining for protein-protein interacons. He is currently an honorary fellow in the

University of Melbourne, Australia. Maurice holds several Chief Editorships, including the

Python papers, Computaonal, and Mathemacal Biology, and Methods and Cases in

Computaonal, Mathemacal and Stascal Biology. In Singapore, he co-founded the Python

User Group (Singapore) and is the co-chair of PyCon Asia-Pacic 2010. In his free me,

Maurice likes to train in the gym, read, and enjoy a good cup of coee. He is also a senior

fellow of the Internaonal Fitness Associaon, USA.

www.PacktPub.com

Support les, eBooks, discount offers and more

You might want to visit www.PacktPub.com for support les and downloads related

to your book.

Did you know that Packt oers eBook versions of every book published, with PDF and ePub

les available? You can upgrade to the eBook version at www.PacktPub.com, and as a print

book customer, you are entled to a discount on the eBook copy. Get in touch with us at

service@packtpub.com for more details.

At www.PacktPub.com, you can also read a collecon of free technical arcles, sign up for a

range of free newsleers, and receive exclusive discounts and oers on Packt books and eBooks.

http://PacktLib.PacktPub.com

Do you need instant soluons to your IT quesons? PacktLib is Packt's online digital book

library. Here, you can access, read, and search across Packt's enre library of books.

Why Subscribe?

Fully searchable across every book published by Packt

Copy and paste, print, and bookmark content

On demand and accessible via web browser

Free Access for Packt account holders

If you have an account with Packt at www.PacktPub.com, you can use this to access

PacktLib today and view nine enrely free books. Simply use your login credenals for

immediate access.

Table of Contents

Preface 1

Chapter 1: Geng Started 7

Categorizing types of text data 8

Providing informaon through markup 8

Meaning through structured formats 9

Understanding freeform content 9

Ensuring you have Python installed 9

Providing support for Python 3 10

Implemenng a simple cipher 10

Time for acon – implemenng a ROT13 encoder 11

Processing structured markup with a lter 15

Time for acon – processing as a lter 15

Time for acon – skipping over markup tags 18

State machines 22

Supporng third-party modules 23

Packaging in a nutshell 23

Time for acon – installing SetupTools 23

Running a virtual environment 25

Conguring virtualenv 25

Time for acon – conguring a virtual environment 25

Where to get help? 28

Summary 28

Chapter 2: Working with the IO System 29

Parsing web server logs 30

Time for acon – generang transfer stascs 31

Using objects interchangeably 35

Time for acon – introducing a new log format 35

Accessing les directly 37

Table of Contents

[ ii ]

Time for acon – accessing les directly 37

Context managers 39

Handling other le types 41

Time for acon – handling compressed les 41

Implemenng le-like objects 42

File object methods 43

Enabling universal newlines 45

Accessing mulple les 45

Time for acon – spell-checking HTML content 46

Simplifying mulple le access 50

Inplace ltering 51

Accessing remote les 52

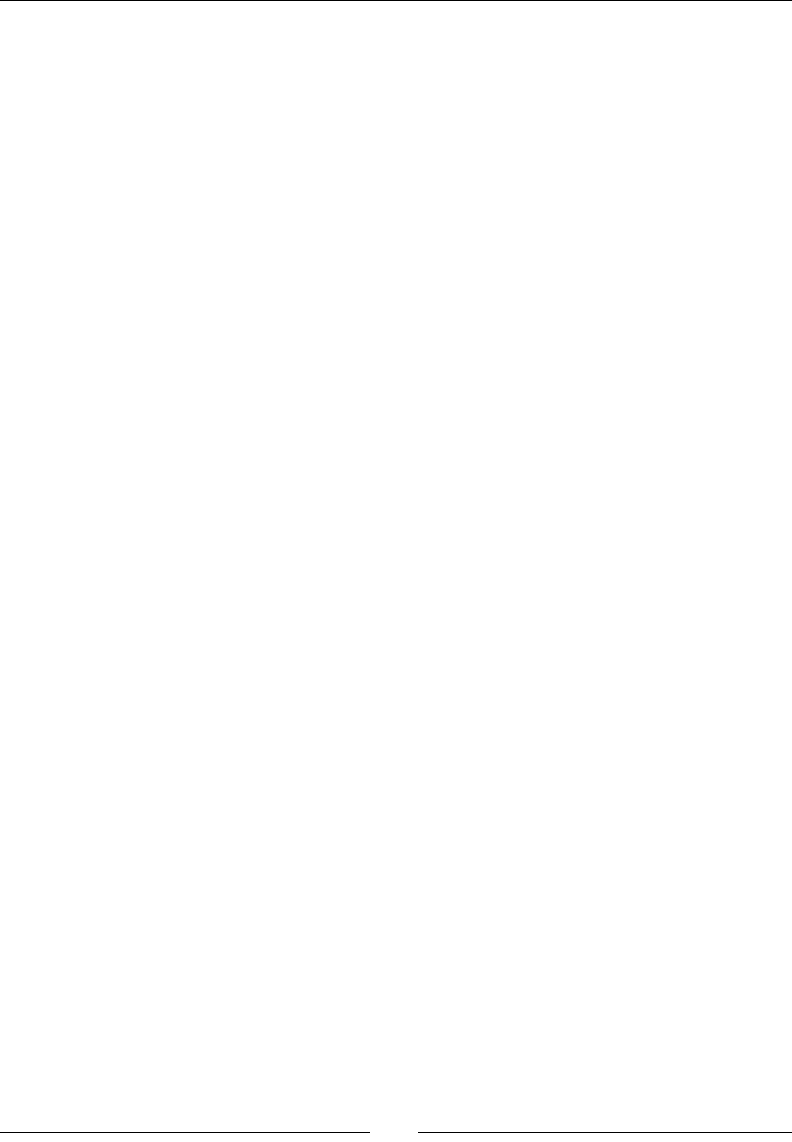

Time for acon – spell-checking live HTML pages 52

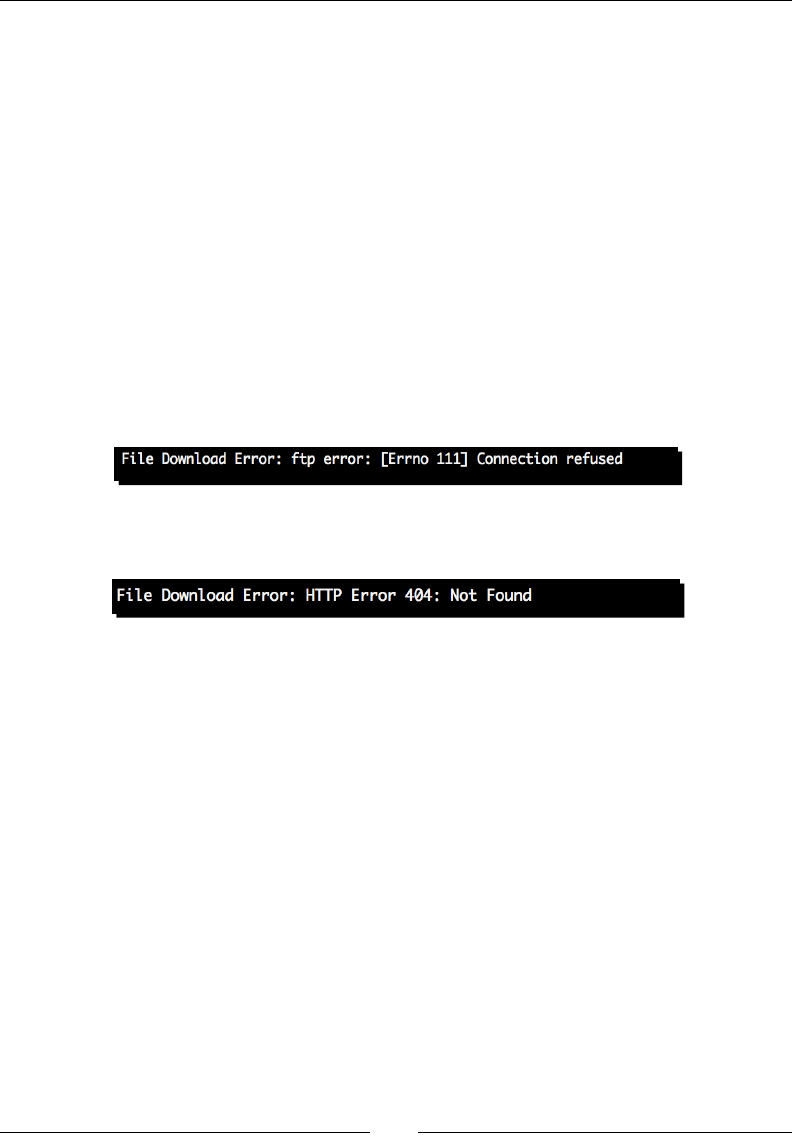

Error handling 55

Time for acon – handling urllib 2 errors 55

Handling string IO instances 57

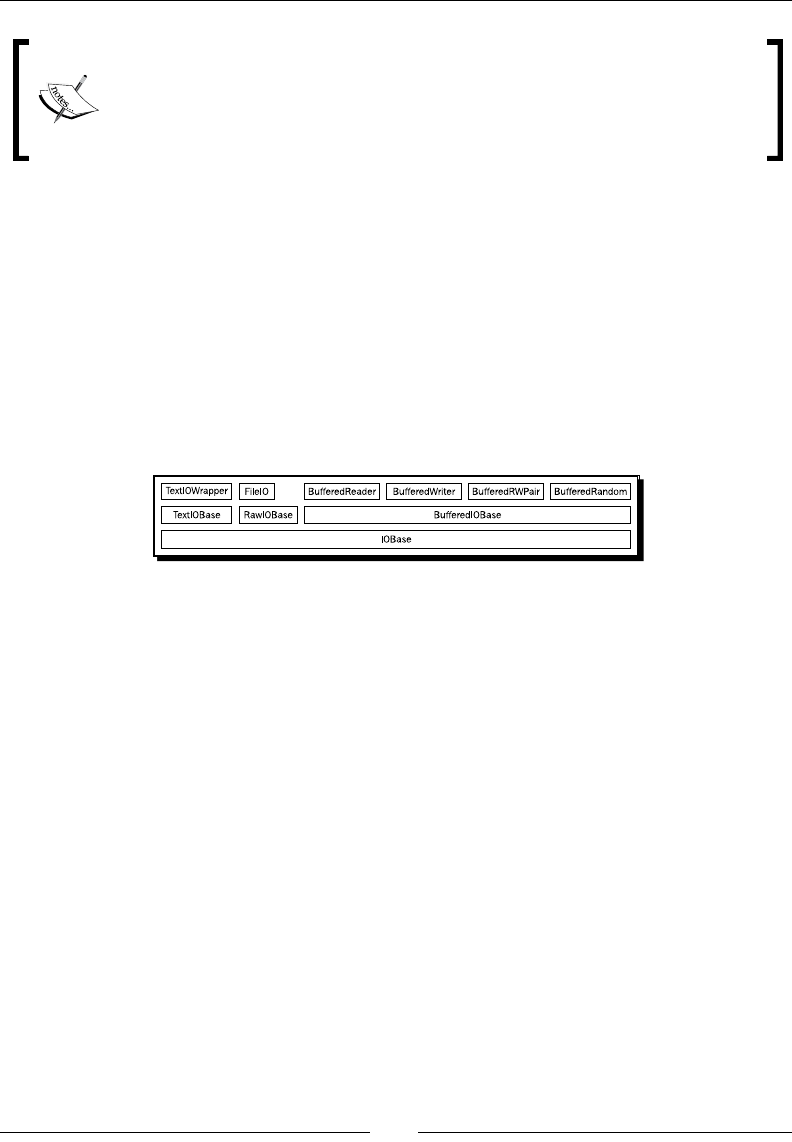

Understanding IO in Python 3 58

Summary 59

Chapter 3: Python String Services 61

Understanding the basics of string object 61

Dening strings 62

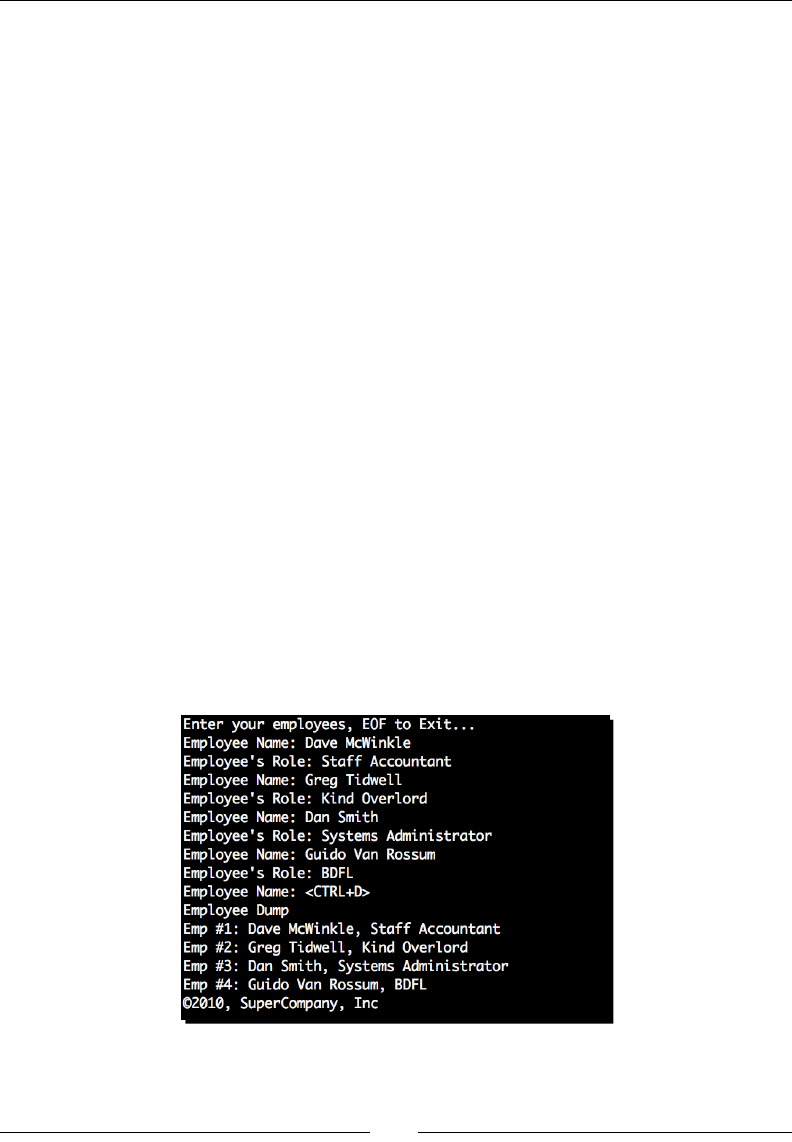

Time for acon – employee management 62

Building non-literal strings 68

String formang 68

Time for acon – customizing log processor output 68

Percent (modulo) formang 74

Mapping key 75

Conversion ags 76

Minimum width 76

Precision 76

Width 77

Conversion type 77

Using the format method approach 78

Time for acon – adding status code data 79

Making use of conversion speciers 83

Creang templates 86

Time for acon – displaying warnings on malformed lines 86

Template syntax 88

Rendering a template 88

Calling string object methods 89

Time for acon – simple manipulaon with string methods 89

Aligning text 92

Table of Contents

[ iii ]

Detecng character classes 92

Casing 93

Searching strings 93

Dealing with lists of strings 94

Treang strings as sequences 95

Summary 96

Chapter 4: Text Processing Using the Standard Library 97

Reading CSV data 98

Time for acon – processing Excel formats 98

Time for acon – CSV and formulas 101

Reading non-Excel data 103

Time for acon – processing custom CSV formats 103

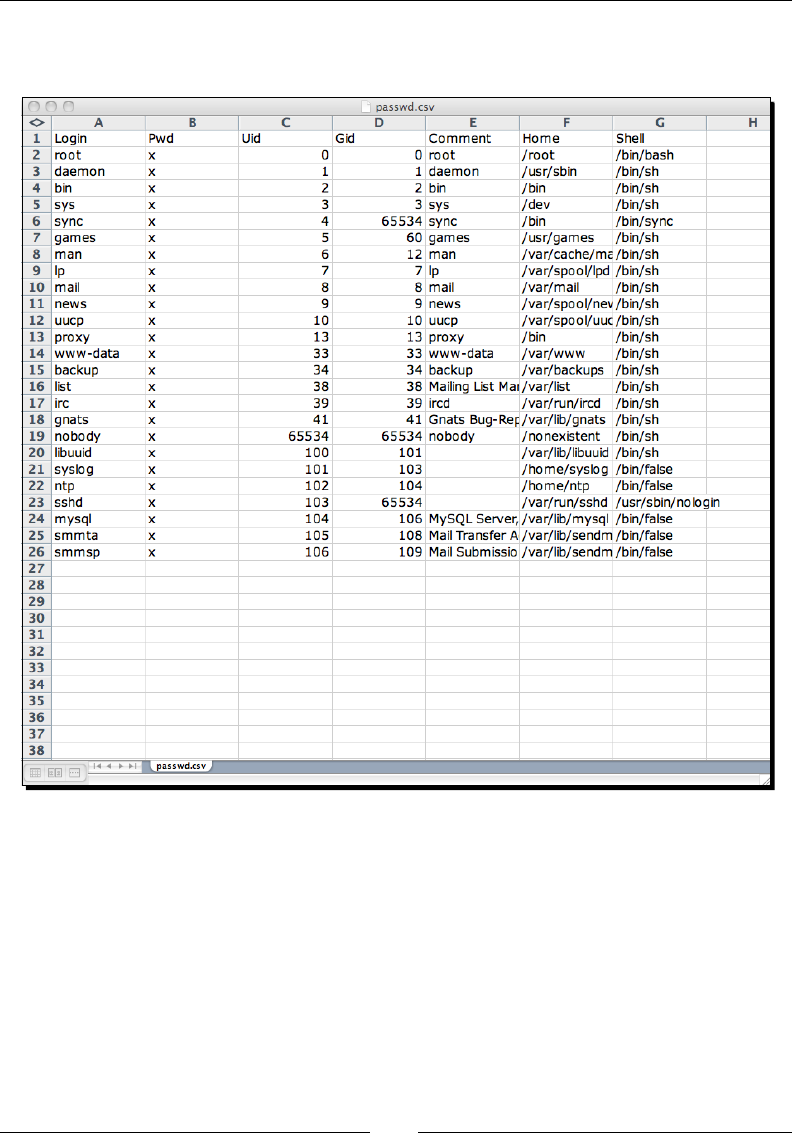

Wring CSV data 106

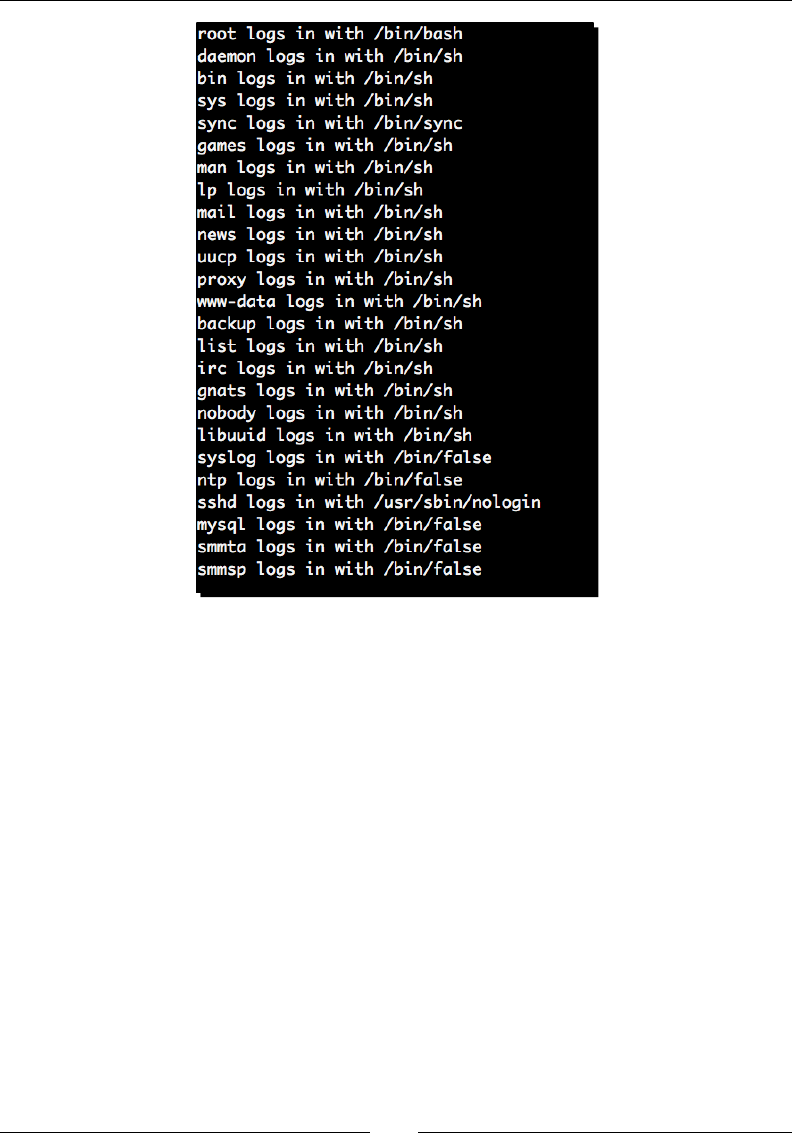

Time for acon – creang a spreadsheet of UNIX users 106

Modifying applicaon conguraon les 110

Time for acon – adding basic conguraon read support 110

Using value interpolaon 114

Time for acon – relying on conguraon value interpolaon 114

Handling default opons 116

Time for acon – conguraon defaults 116

Wring conguraon data 118

Time for acon – generang a conguraon le 119

Reconguring our source 122

A note on Python 3 122

Time for acon – creang an egg-based package 122

Understanding the setup.py le 131

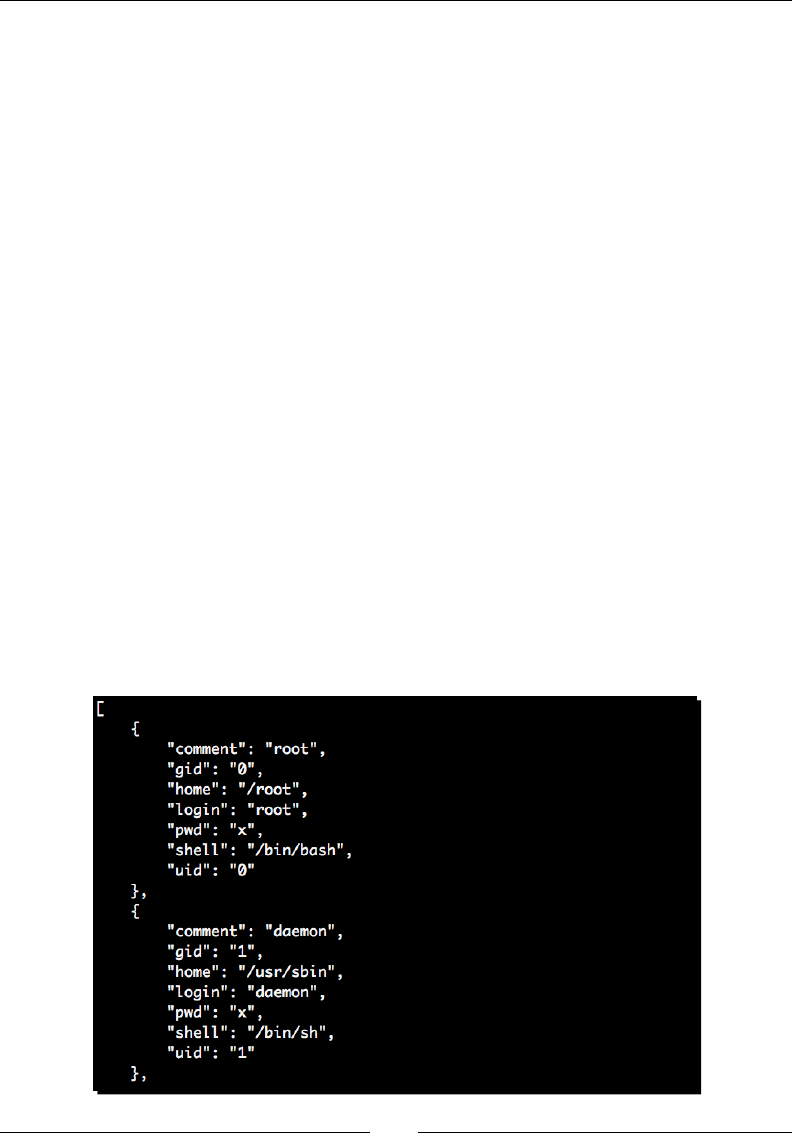

Working with JSON 132

Time for acon – wring JSON data 132

Encoding data 134

Decoding data 135

Summary 136

Chapter 5: Regular Expressions 137

Simple string matching 138

Time for acon – tesng an HTTP URL 138

Understanding the match funcon 140

Learning basic syntax 140

Detecng repeon 140

Specifying character sets and classes 141

Applying anchors to restrict matches 143

Wrapping it up 144

Table of Contents

[ iv ]

Advanced paern matching 145

Grouping 145

Time for acon – regular expression grouping 146

Using greedy versus non-greedy operators 149

Asserons 150

Performing an 'or' operaon 152

Implemenng Python-specic elements 153

Other search funcons 153

search 153

ndall and nditer 153

split 154

sub 154

Compiled expression objects 155

Dealing with performance issues 156

Parser ags 156

Unicode regular expressions 157

The match object 158

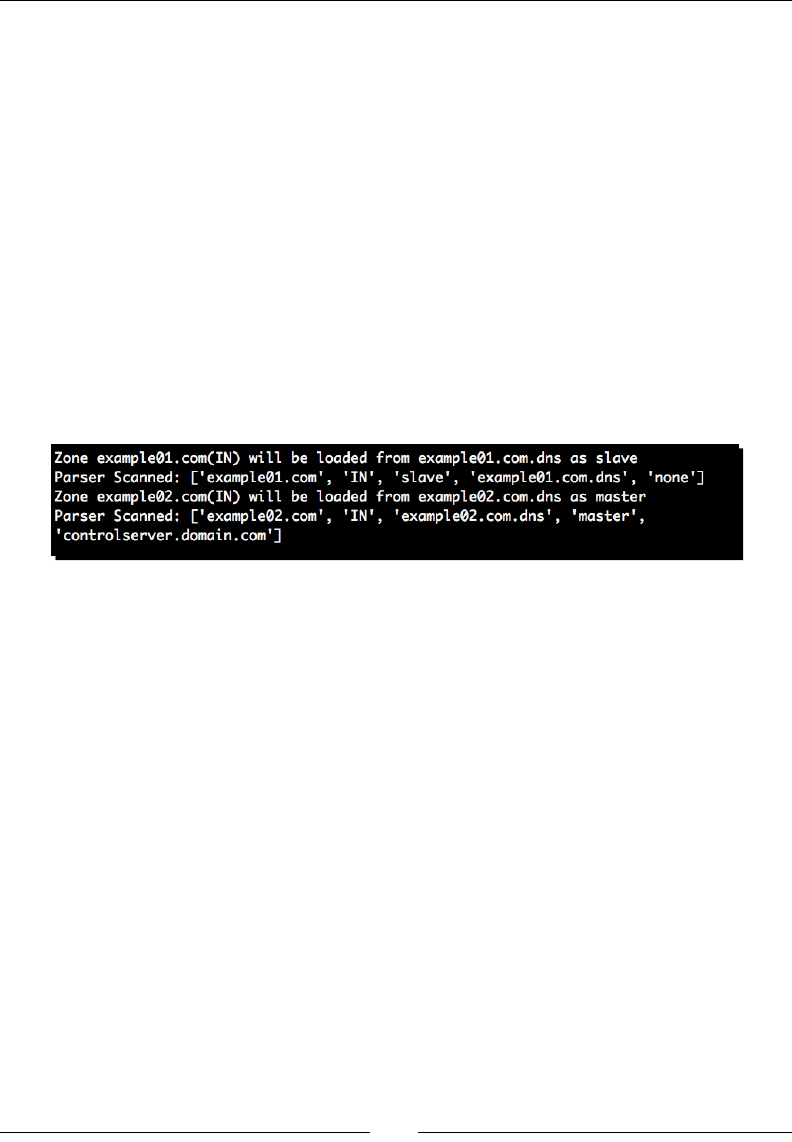

Processing bind zone les 158

Time for acon – reading DNS records 159

Summary 164

Chapter 6: Structured Markup 165

XML data 166

SAX processing 168

Time for acon – event-driven processing 168

Incremental processing 171

Time for acon – driving incremental processing 171

Building an applicaon 172

Time for acon – creang a dungeon adventure game 172

The Document Object Model 176

xml.dom.minidom 176

Time for acon – updang our game to use DOM processing 176

Creang and modifying documents programmacally 183

XPath 185

Accessing XML data using ElementTree 186

Time for acon – using XPath in our adventure 187

Reading HTML 194

Time for acon – displaying links in an HTML page 194

BeaufulSoup 195

Summary 196

Table of Contents

[ v ]

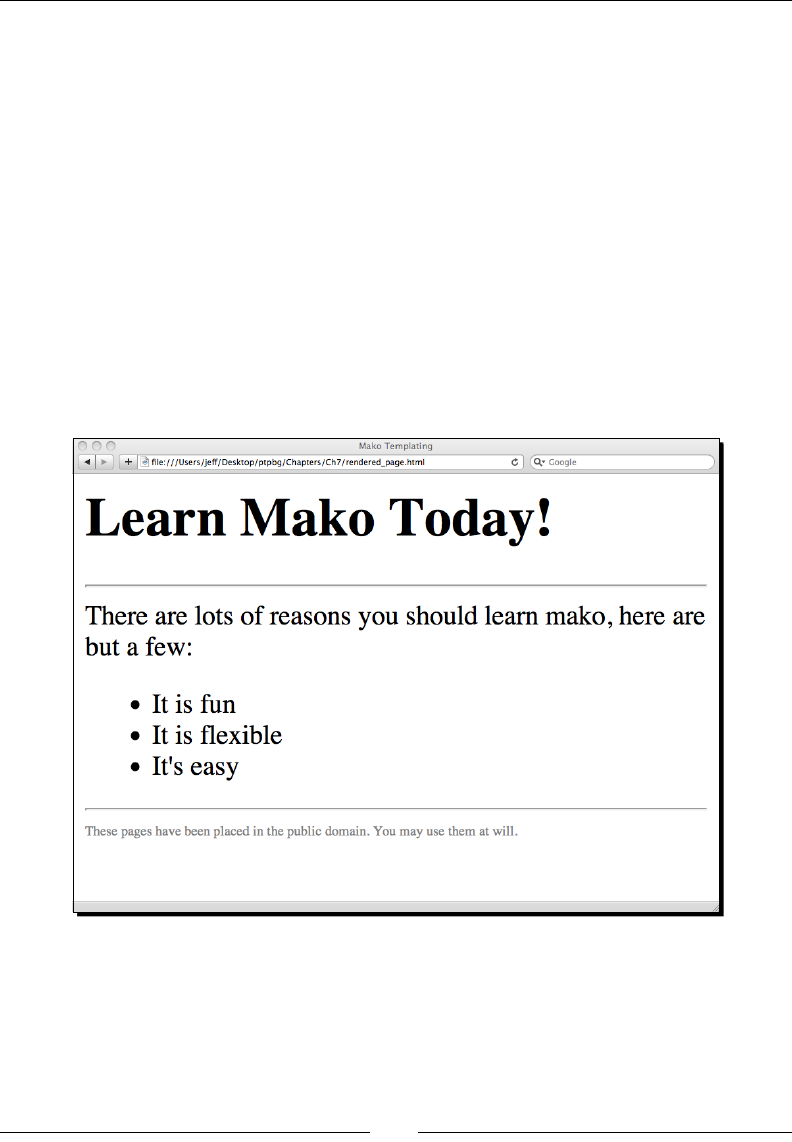

Chapter 7: Creang Templates 197

Time for acon – installing Mako 198

Basic Mako usage 199

Time for acon – loading a simple Mako template 199

Generang a template context 203

Managing execuon with control structures 204

Including Python code 205

Time for acon – reformang the date with Python code 205

Adding funconality with tags 206

Rendering les with %include 206

Generang mulline comments with %doc 207

Documenng Mako with %text 207

Dening funcons with %def 208

Time for acon – dening Mako def tags 208

Imporng %def secons using %namespace 210

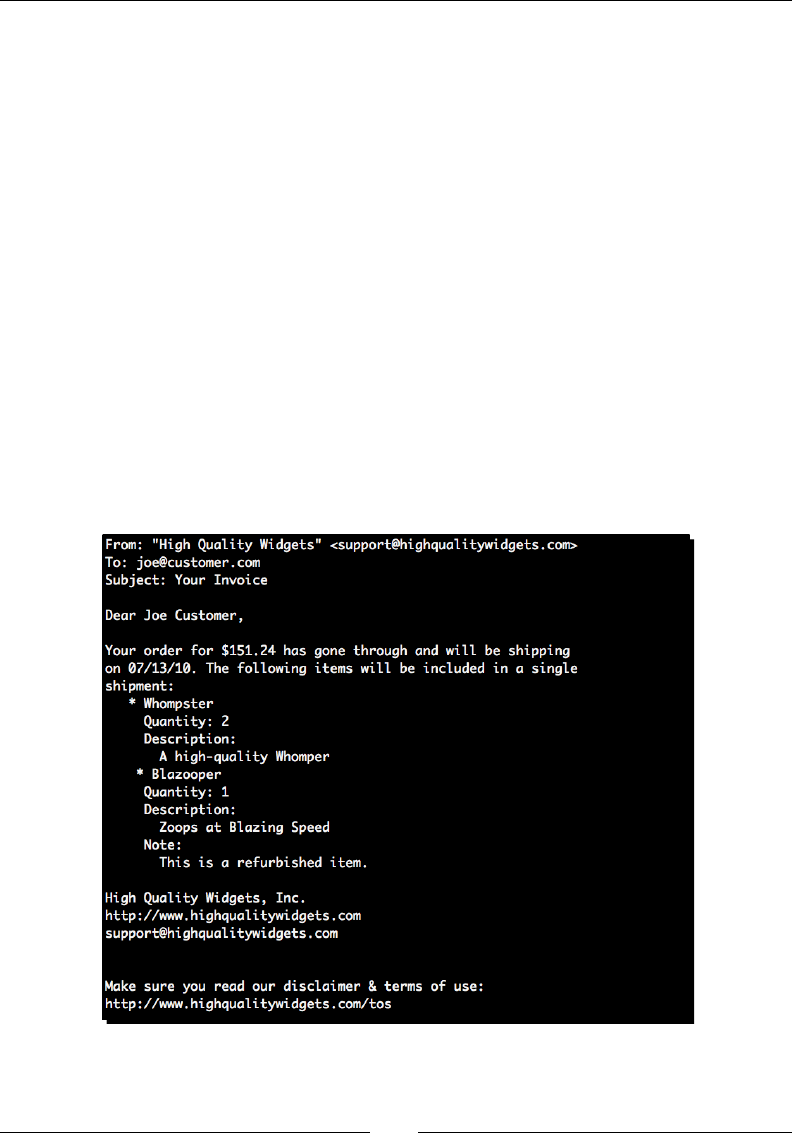

Time for acon – converng mail message to use namespaces 210

Filtering output 213

Expression lters 214

Filtering the output of %def blocks 214

Seng default lters 215

Inhering from base templates 215

Time for acon – updang base template 215

Growing the inheritance chain 218

Time for acon – adding another inheritance layer 219

Inhering aributes 221

Customizing 222

Custom tags 222

Time for acon – creang custom Mako tags 223

Customizing lters 226

Overviewing alternave approaches 226

Summary 227

Chapter 8: Understanding Encodings and i18n 229

Understanding basic character encodings 230

ASCII 230

Limitaons of ASCII 231

KOI8-R 232

Unicode 232

Using Unicode with Python 3 233

Understanding Unicode 234

Design goals 234

Organizaonal structure 236

Backwards compability 236

Table of Contents

[ vi ]

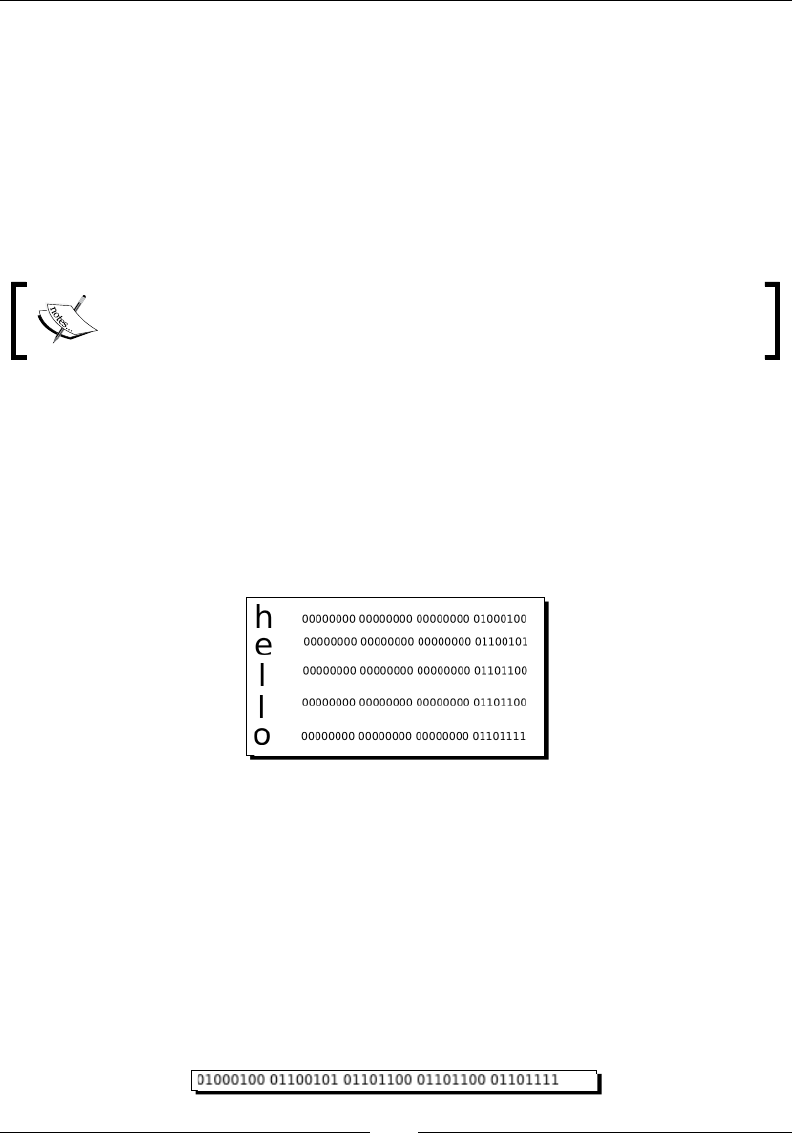

Encoding 237

UTF-32 237

UTF-8 237

Encodings in Python 238

Time for acon – manually decoding 239

Reading Unicode 240

Wring Unicode strings 241

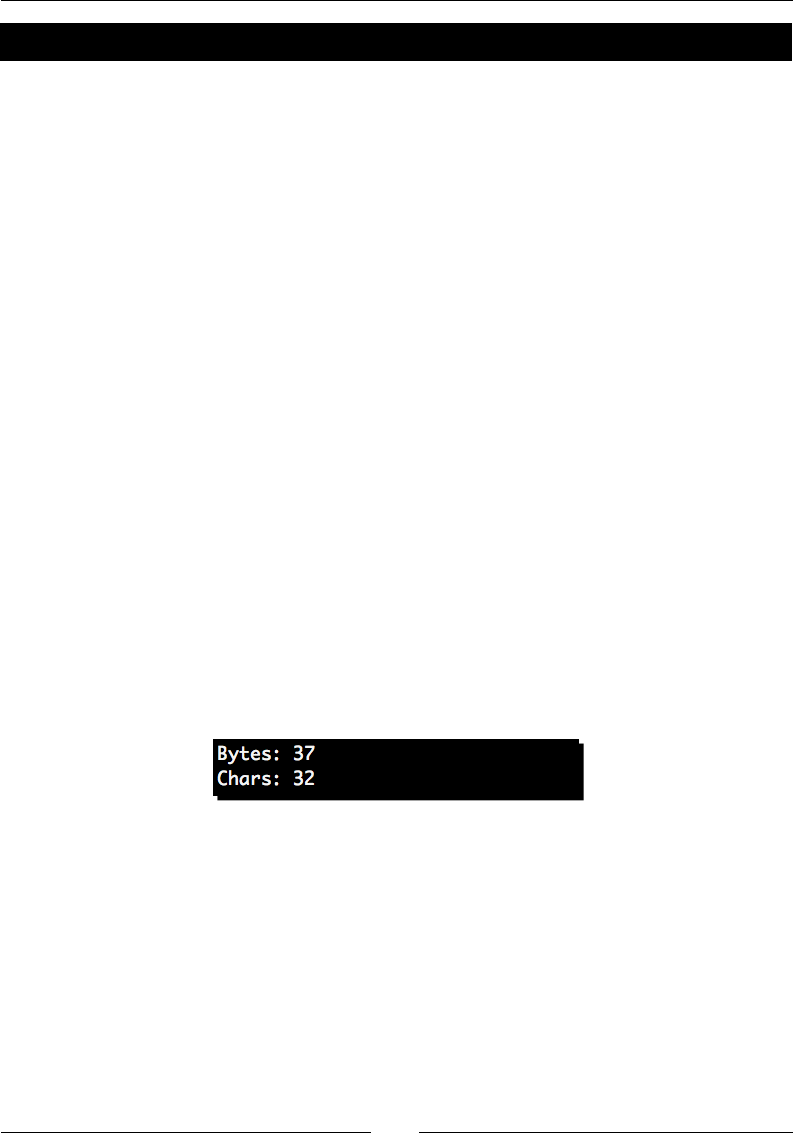

Time for acon – copying Unicode data 242

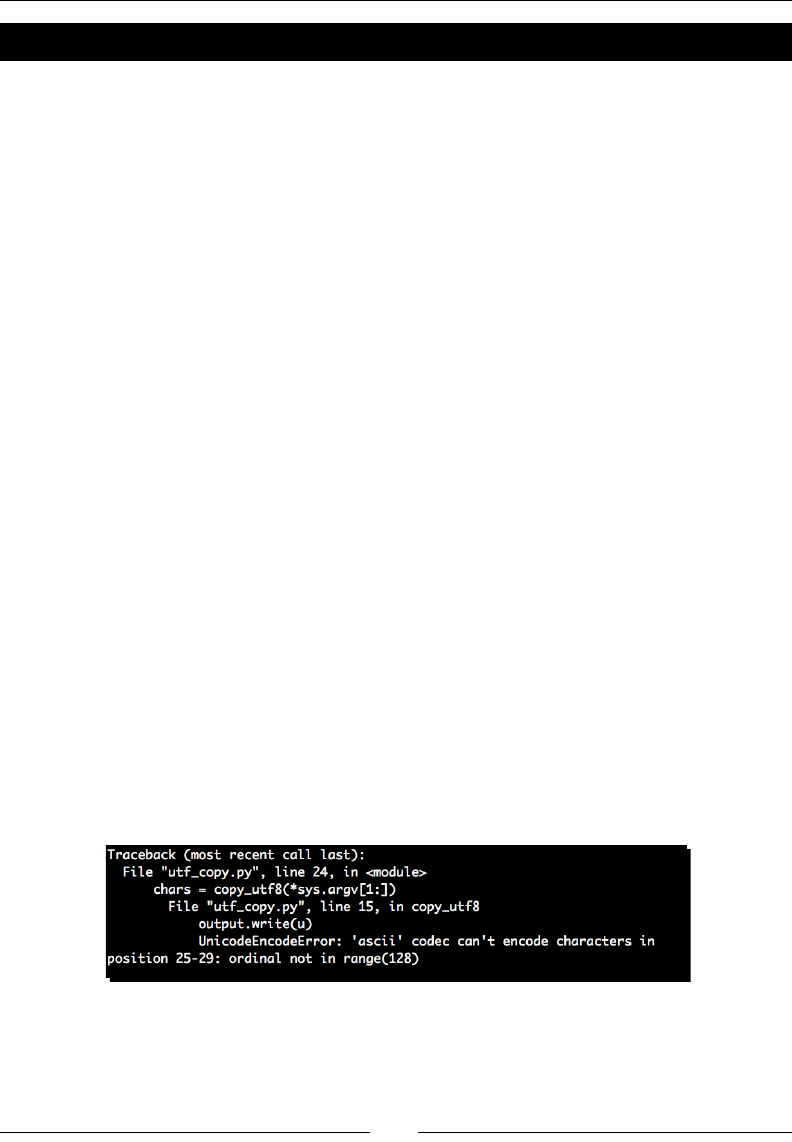

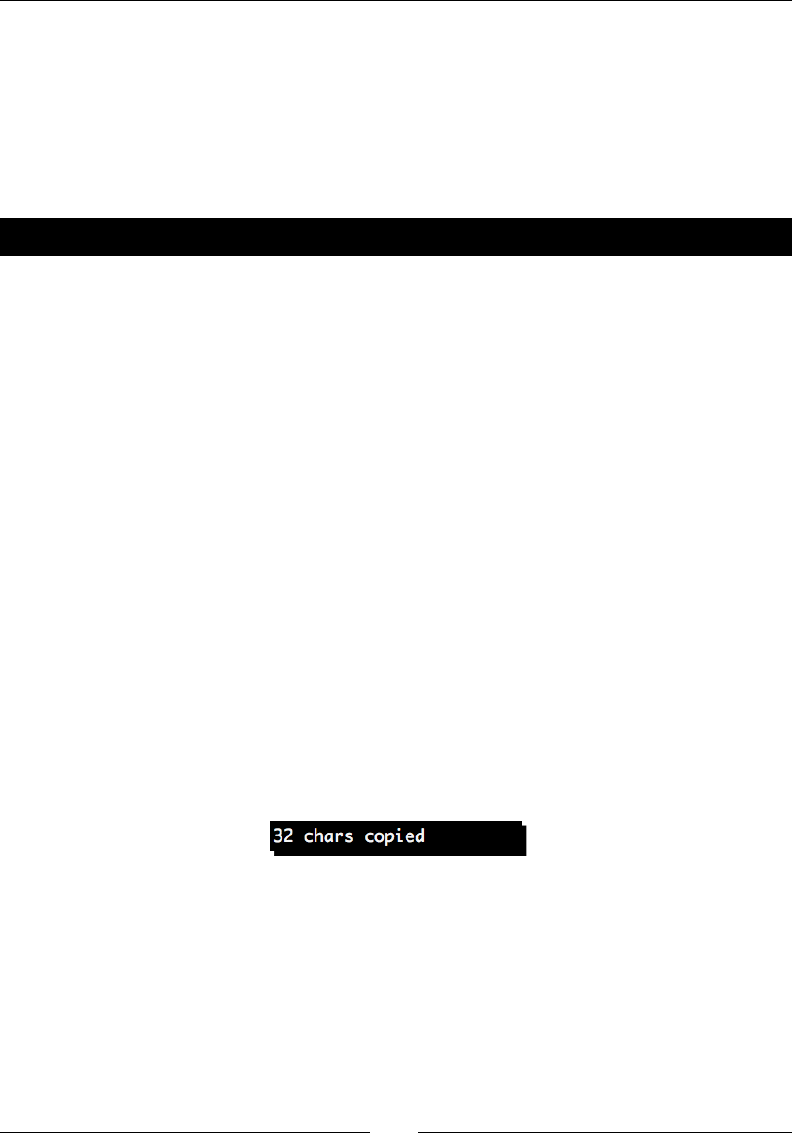

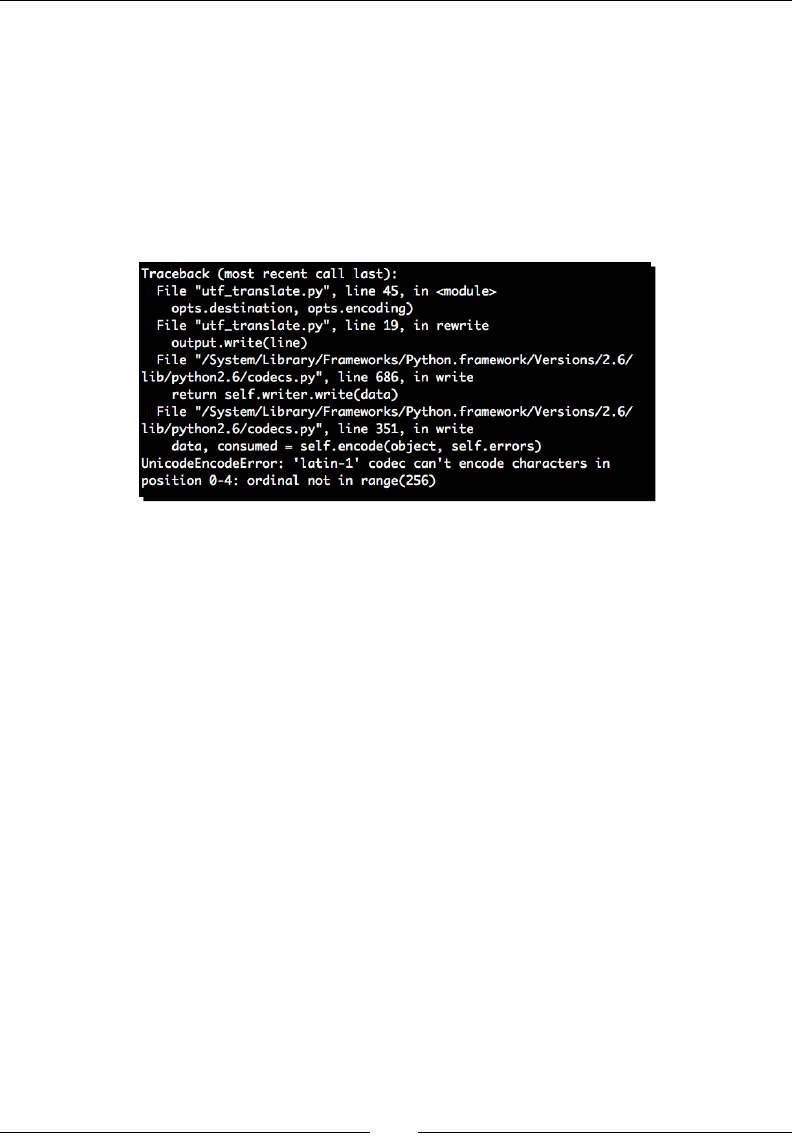

Time for acon – xing our copy applicaon 244

The codecs module 245

Time for acon – changing encodings 245

Adopng good pracces 248

Internaonalizaon and Localizaon 249

Preparing an applicaon for translaon 250

Time for acon – preparing for mulple languages 250

Time for acon – providing translaons 253

Looking for more informaon on internaonalizaon 254

Summary 255

Chapter 9: Advanced Output Formats 257

Dealing with PDF les using PLATYPUS 258

Time for acon – installing ReportLab 258

Generang PDF documents 259

Time for acon – wring PDF with basic layout and style 259

Wring nave Excel data 266

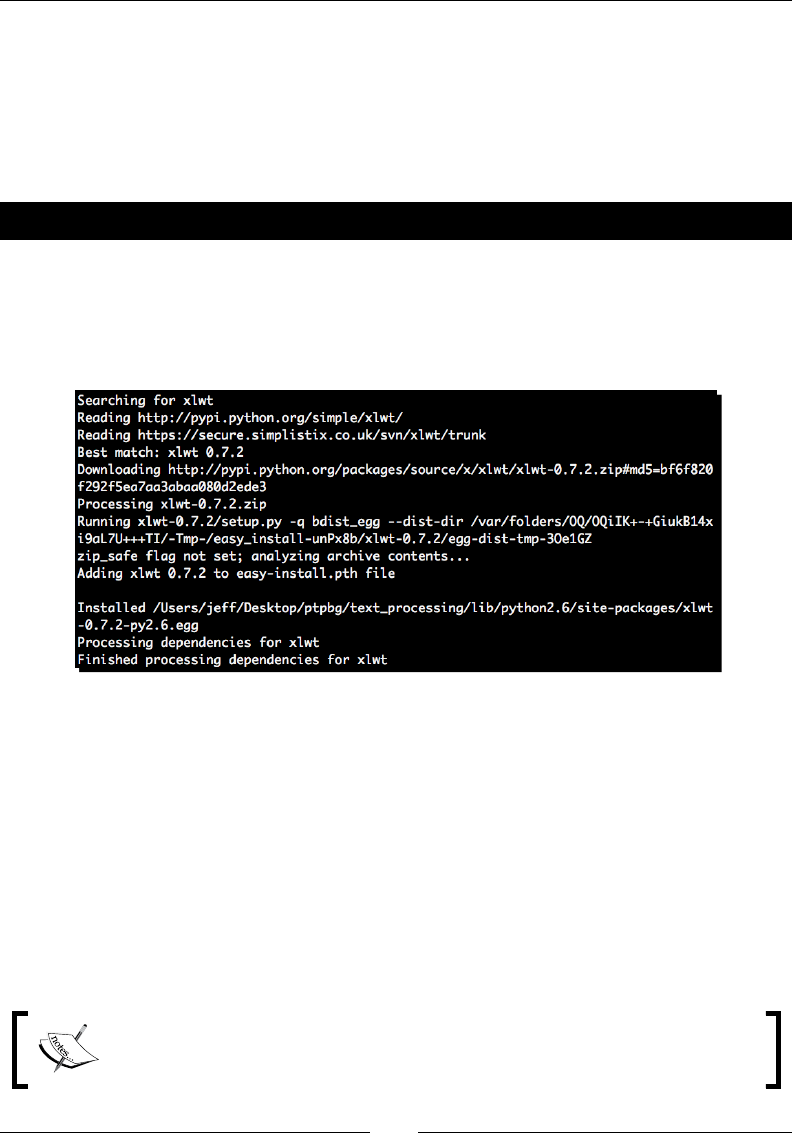

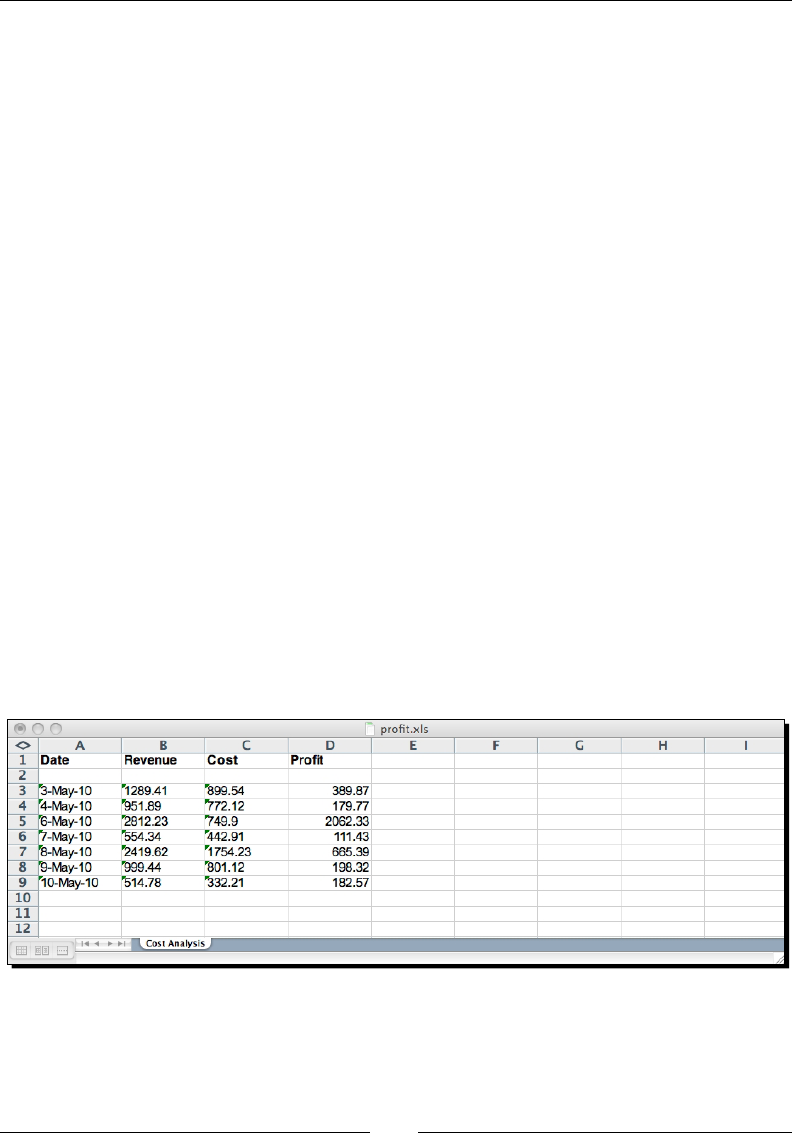

Time for acon – installing xlwt 266

Building XLS documents 267

Time for acon – generang XLS data 267

Working with OpenDocument les 271

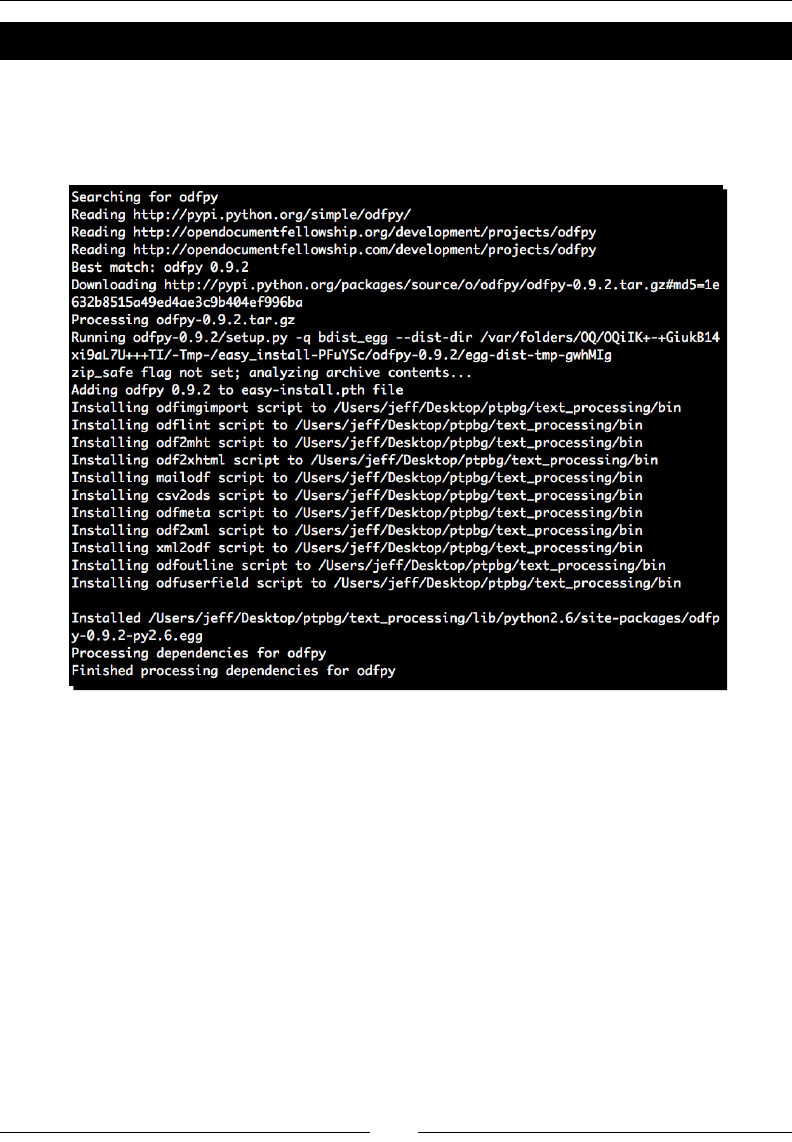

Time for acon – installing ODFPy 272

Building an ODT generator 273

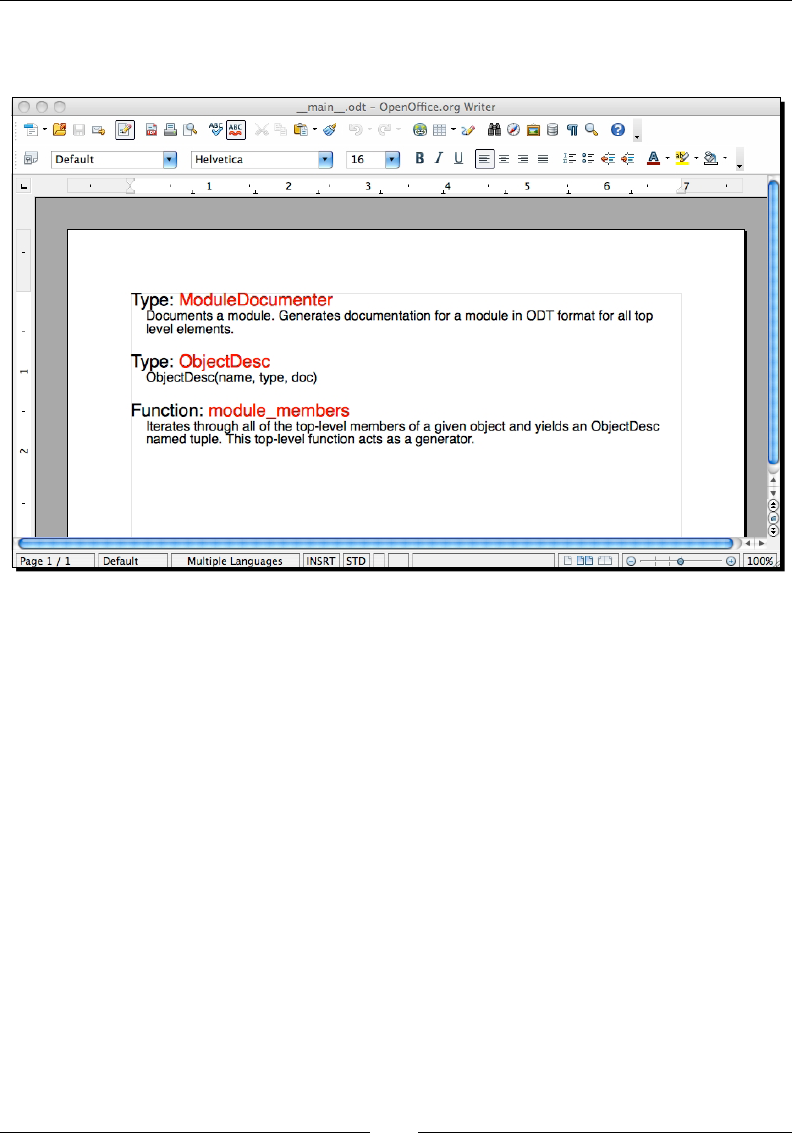

Time for acon – generang ODT data 273

Summary 277

Chapter 10: Advanced Parsing and Grammars 279

Dening a language syntax 280

Specifying grammar with Backus-Naur Form 281

Grammar-driven parsing 282

PyParsing 283

Time for acon – installing PyParsing 283

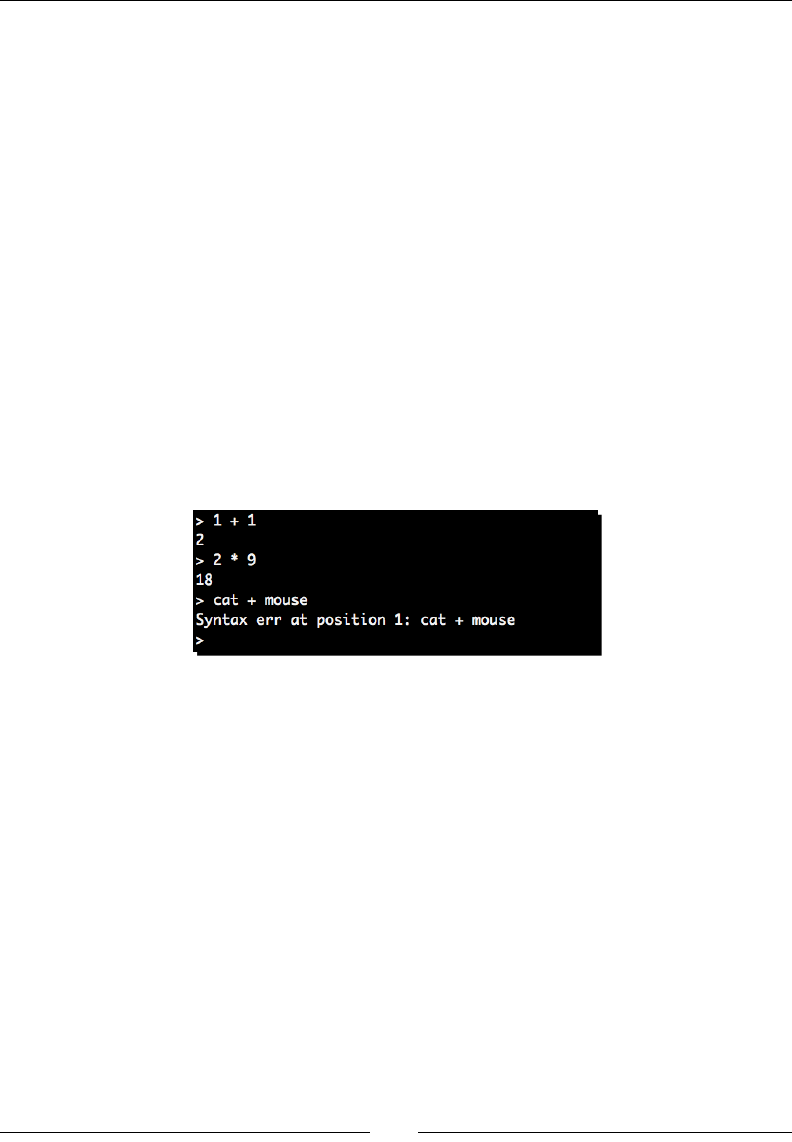

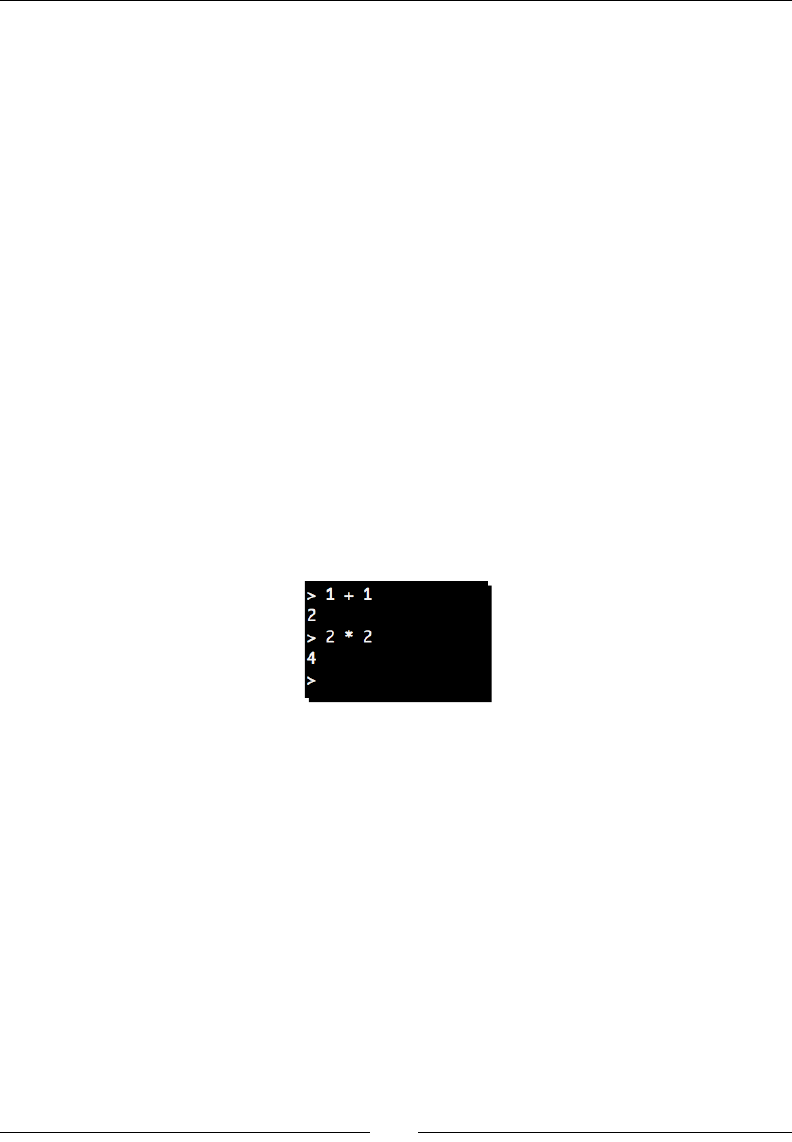

Time for acon – implemenng a calculator 284

Parse acons 287

Time for acon – handling type translaons 287

Table of Contents

[ vii ]

Suppressing parts of a match 289

Time for acon – suppressing porons of a match 289

Processing data using the Natural Language Toolkit 297

Time for acon – installing NLTK 298

NLTK processing examples 298

Removing stems 298

Discovering collocaons 299

Summary 300

Chapter 11: Searching and Indexing 301

Understanding search complexity 302

Time for acon – implemenng a linear search 302

Text indexing 304

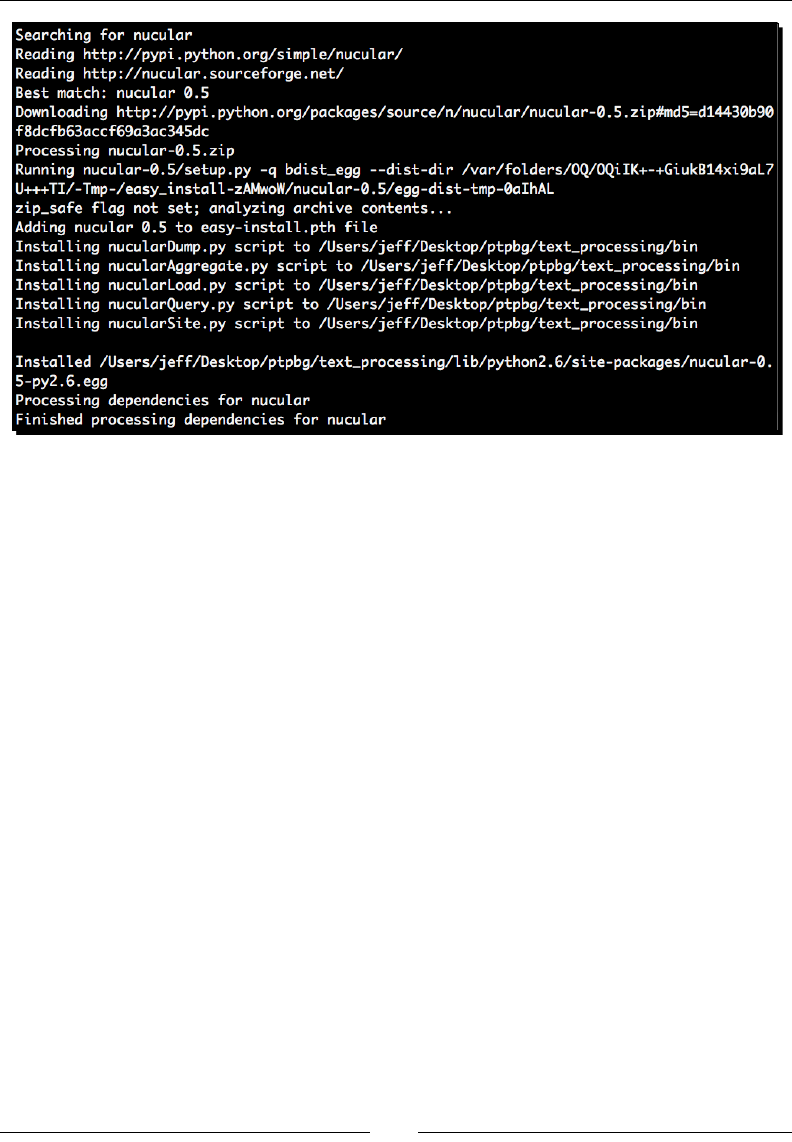

Time for acon – installing Nucular 304

An introducon to Nucular 305

Time for acon – full text indexing 307

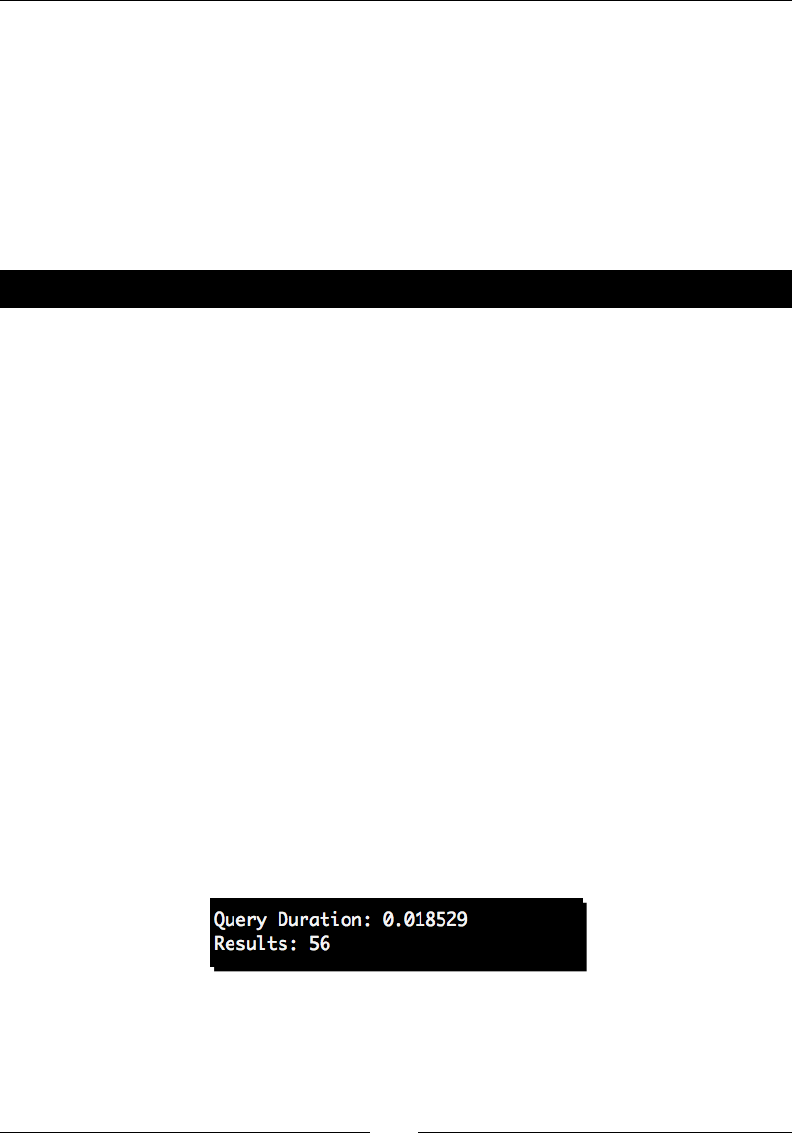

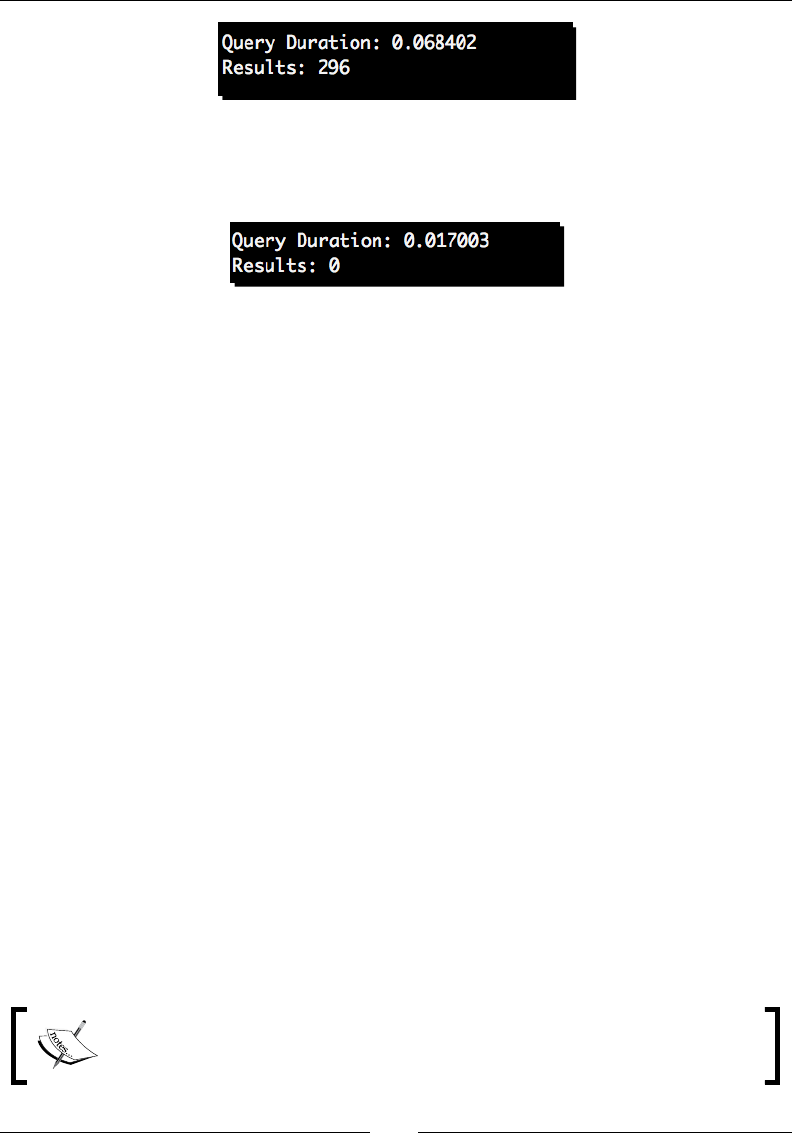

Time for acon – measuring index benet 310

Scripts provided by Nucular 312

Using XML les 312

Advanced Nucular features 313

Time for acon – eld-qualied indexes 314

Performing an enhanced search 317

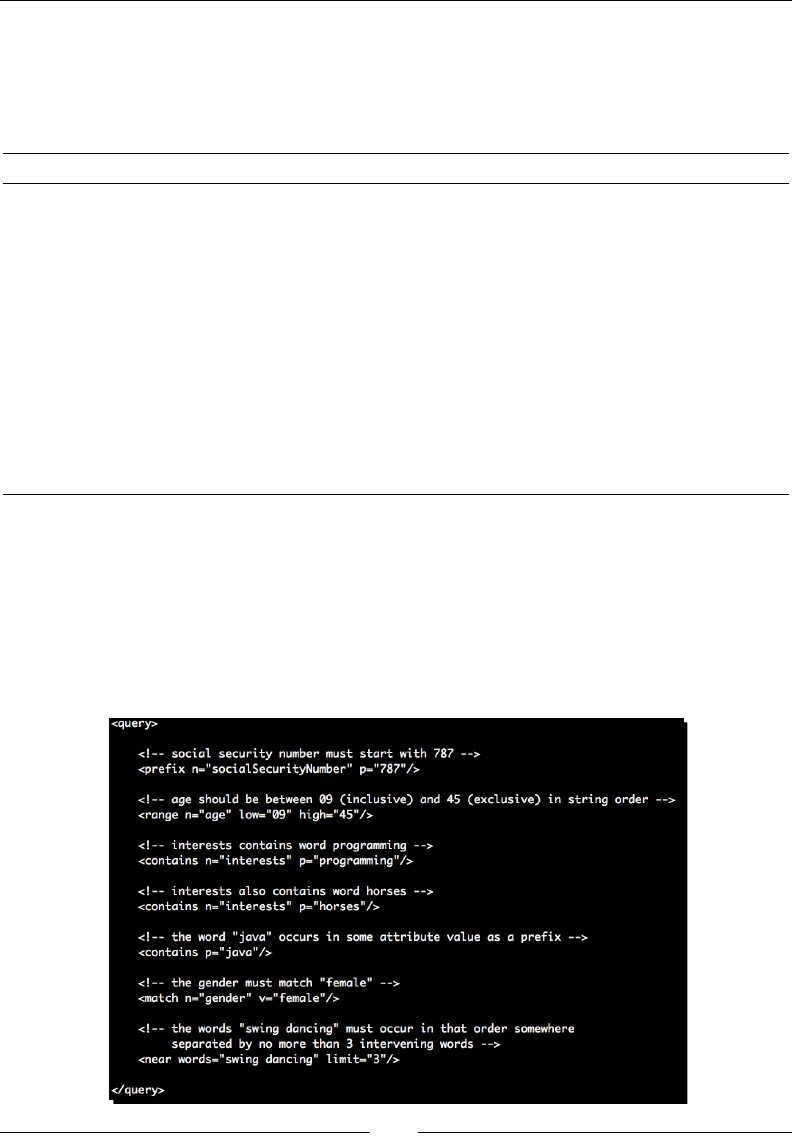

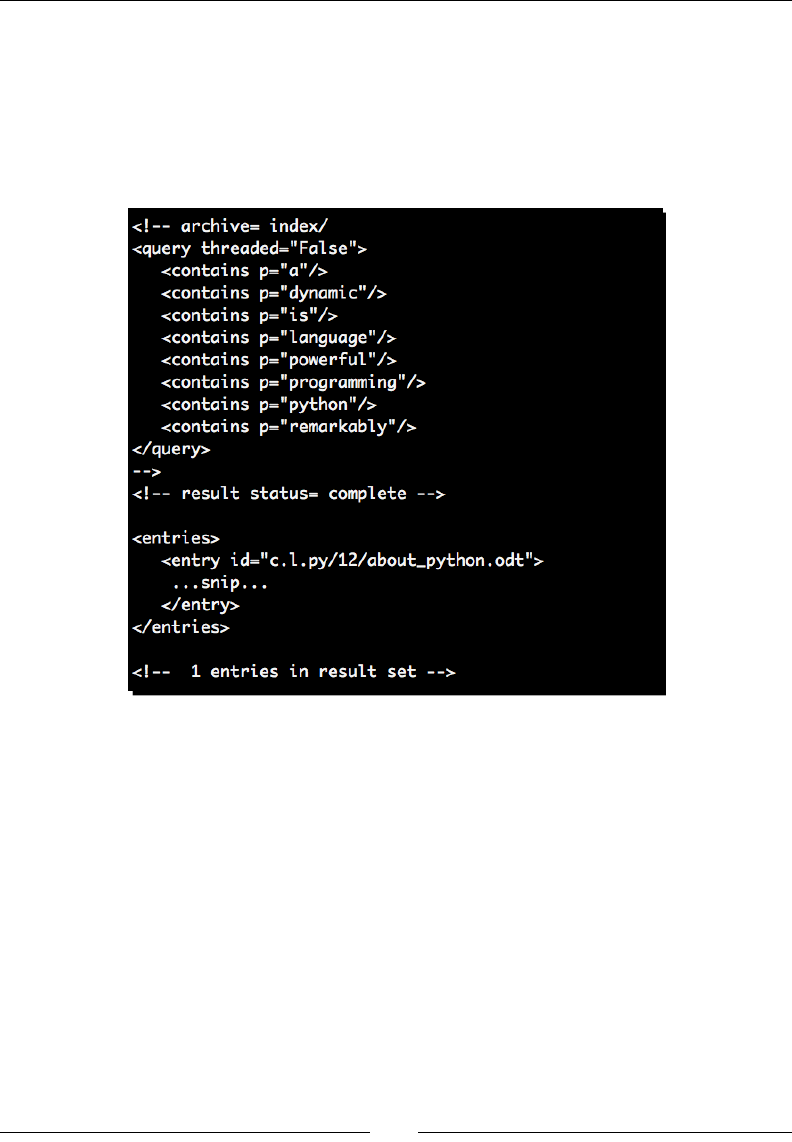

Time for acon – performing advanced Nucular queries 317

Indexing and searching other data 320

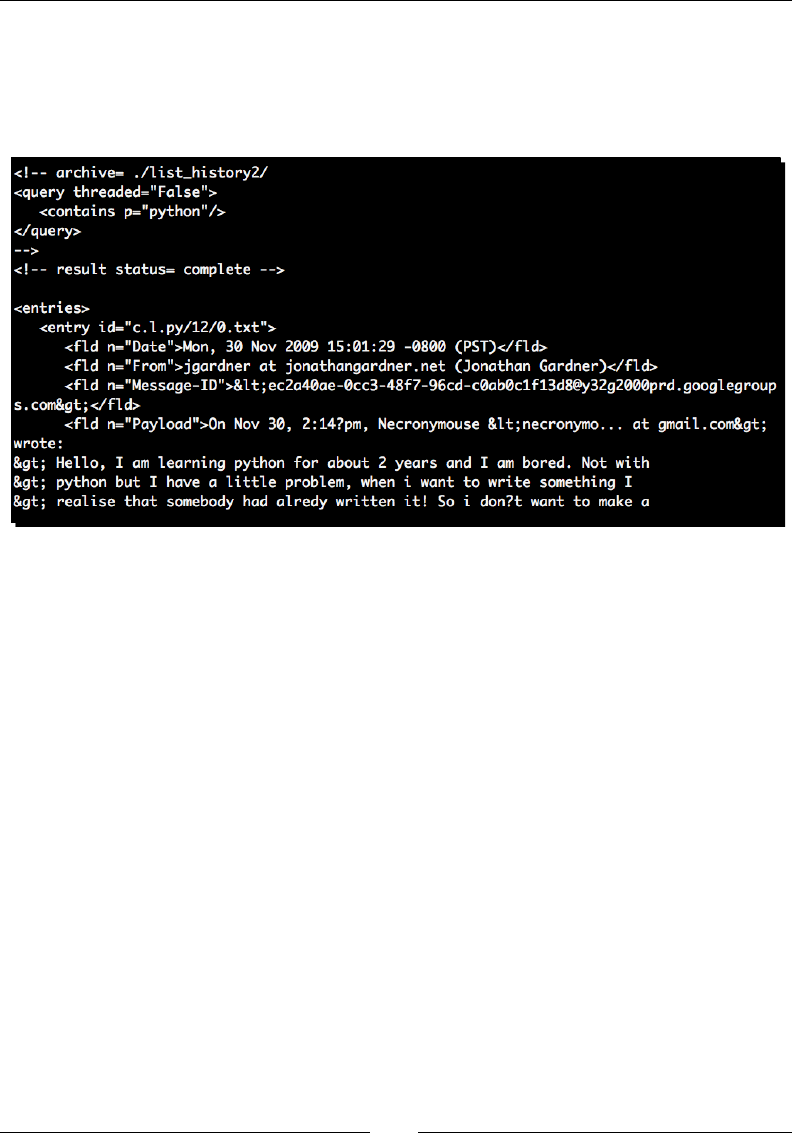

Time for acon – indexing Open Oce documents 320

Other index systems 325

Apache Lucene 325

ZODB and zc.catalog 325

SQL text indexing 325

Summary 326

Appendix A: Looking for Addional Resources 327

Python resources 328

Unocial documentaon 328

Python enhancement proposals 328

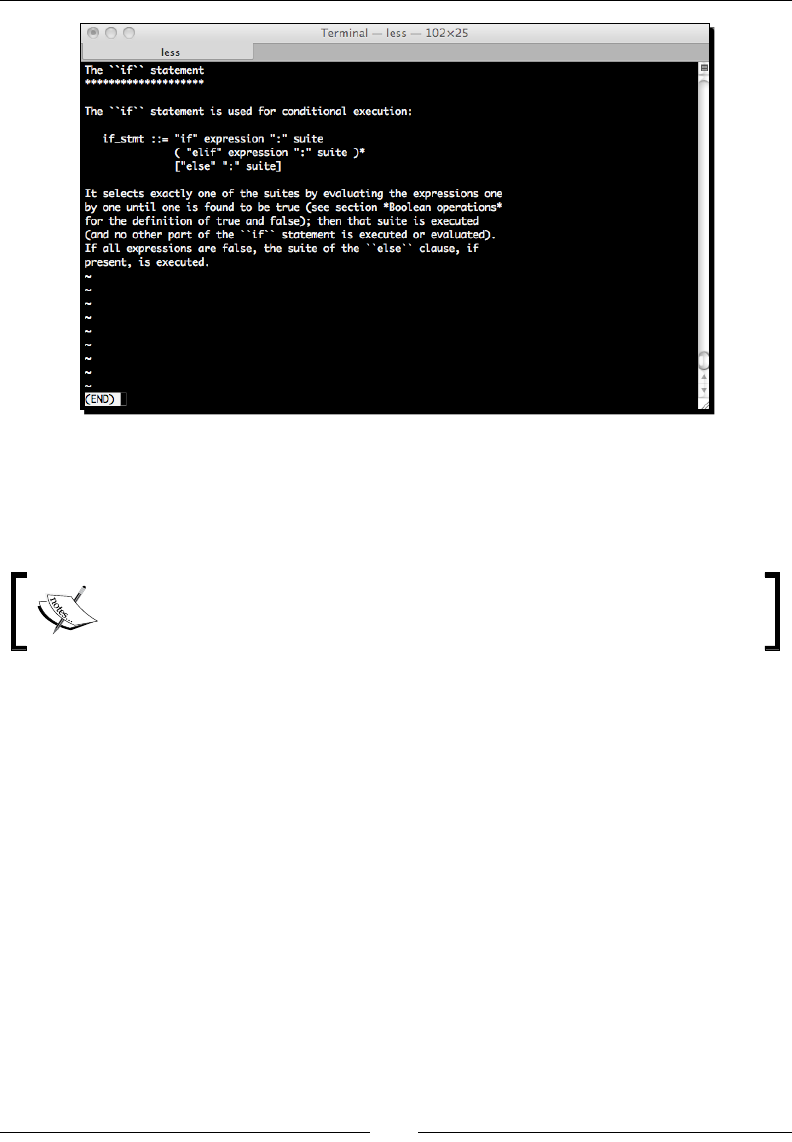

Self-documenng 329

Using other documentaon tools 331

Community resources 332

Following groups and mailing lists 332

Finding a users' group 333

Aending a local Python conference 333

Honorable menon 333

Lucene and Solr 333

Table of Contents

[ viii ]

Generang C-based parsers with GNU Bison 334

Apache Tika 335

Geng started with Python 3 335

Major language changes 336

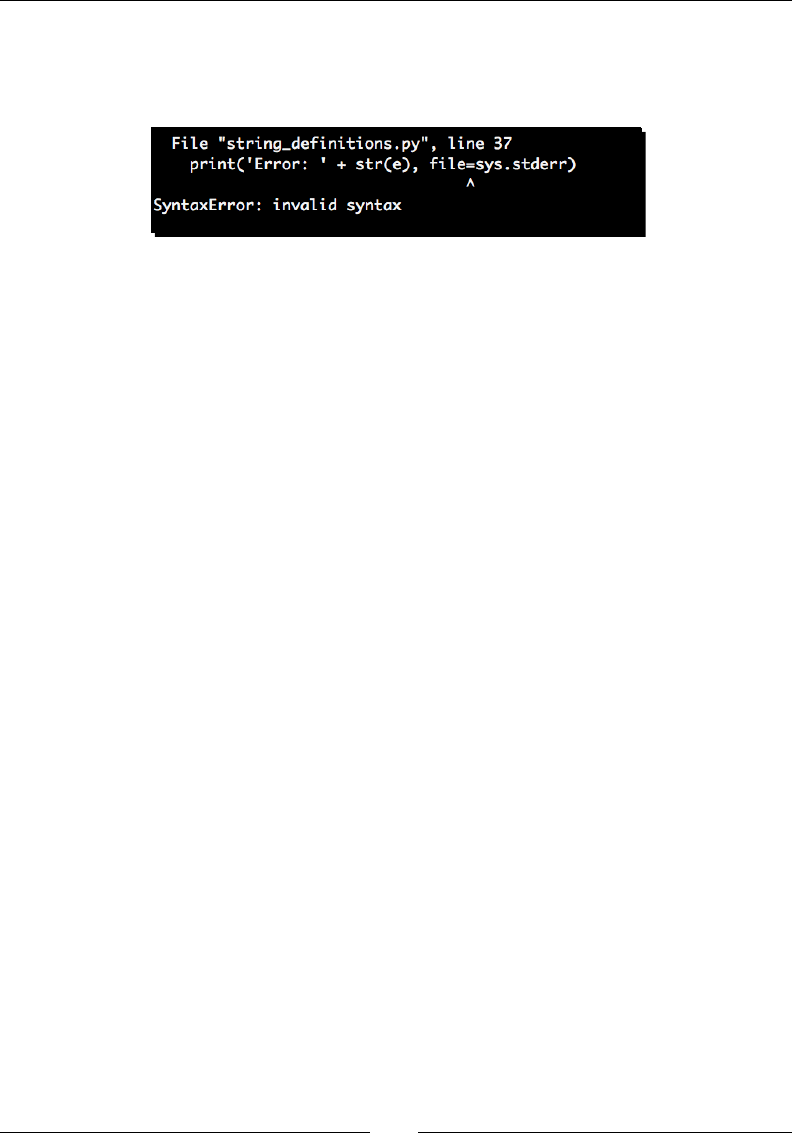

Print is now a funcon 336

Catching excepons 337

Using metaclasses 338

New reserved words 338

Major library changes 339

Changes to list comprehensions 339

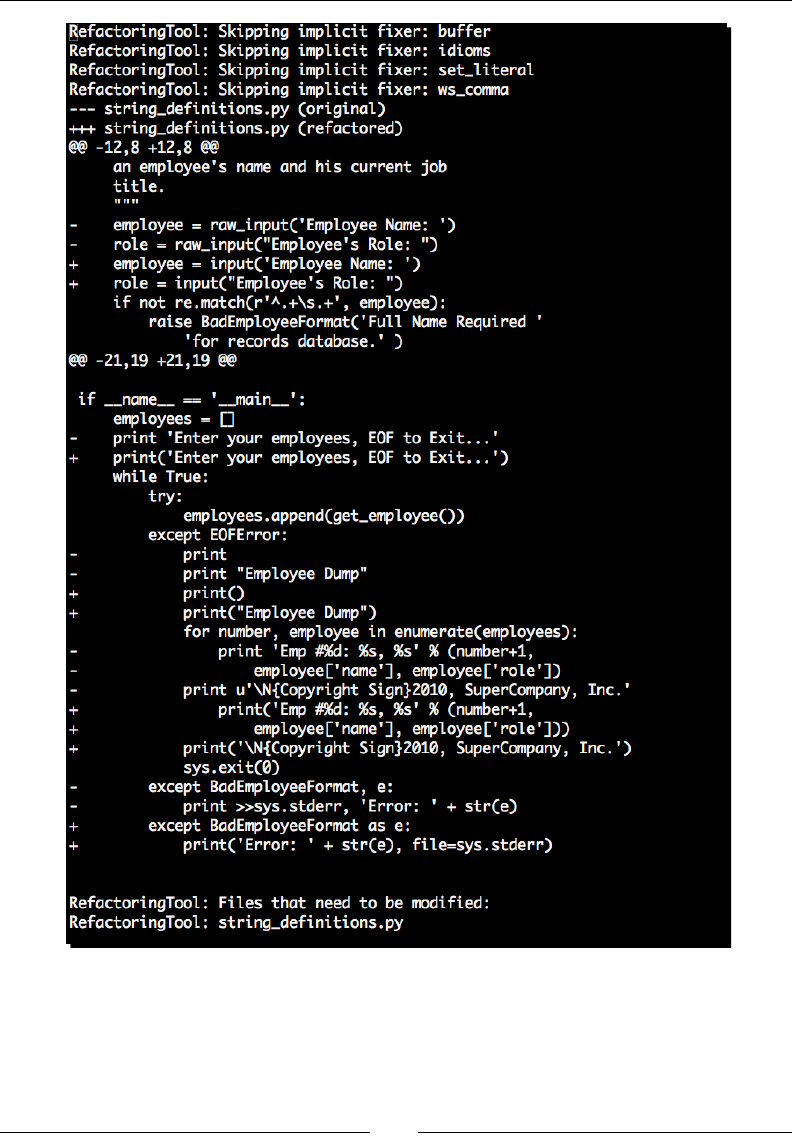

Migrang to Python 3 339

Time for acon – using 2to3 to move to Python 3 340

Summary 342

Appendix B: Pop Quiz Answers 343

Chapter 1: Geng Started 343

ROT 13 Processing Answers 343

Chapter 2: Working with the IO System 344

File-like objects 344

Chapter 3: Python String Services 344

String literals 344

String formang 345

Chapter 4: Text Processing Using the Standard Library 345

CSV handling 345

JSON formang 346

Chapter 5: Regular Expressions 346

Regular expressions 346

Understanding the Pythonisms 346

Chapter 6: Structured Markup 347

SAX processing 347

Chapter 7: Creang Templates 347

Template inheritance 347

Chapter 8: Understanding Encoding and i18n 347

Character encodings 347

Python encodings 348

Internaonalizaon 348

Chapter 9: Advanced Output Formats 348

Creang XLS documents 348

Chapter 11: Searching and Indexing 349

Introducon to Nucular 349

Index 351

Preface

The Python Text Processing Beginner's Guide is intended to provide a gentle, hands-on

introducon to processing, understanding, and generang textual data using the Python

programming language. Care is taken to ensure the content is example-driven, while sll

providing enough background informaon to allow for a solid understanding of the topics

covered.

Throughout the book, we use real world examples such as logle processing and PDF

creaon to help you further understand dierent aspects of text handling. By the me you've

nished, you'll have a solid working knowledge of both structured and unstructured text

data management. We'll also look at praccal indexing and character encodings.

A good deal of supporng informaon is included. We'll touch on packaging, Python IO,

third-party ulies, and some details on working with the Python 3 series releases. We'll

even spend a bit of me porng a small example applicaon to the latest version.

Finally, we do our best to provide a number of high quality external references. While this

book will cover a broad range of topics, we also want to help you dig deeper when necessary.

What this book covers

Chapter 1, Geng Started: This chapter provides an introducon into character and string

data types and how strings are represented using underlying integers. We'll implement a

simple encoding script to illustrate how text can be manipulated at the character level. We

also set up our systems to allow safe third-party library installaon.

Chapter 2, Working with the IO System: Here, you'll learn how to access your data. We cover

Python's IO capabilies in this chapter. We'll learn how to access les locally and remotely.

Finally, we cover how Python's IO layers change in Python 3.

Chapter 3, Python String Services: Covers Python's core string funconality. We look at the

methods of string objects, the core template classes, and Python's various string formang

methods. We introduce the dierences between Unicode and string objects here.

Preface

[ 2 ]

Chapter 4, Test Processing Using the Standard Library: The standard Python distribuon

includes a powerful set of built-in libraries designed to manage textual content. We look

at conguraon le reading and manipulaon, CSV les, and JSON data. We take a bit of a

detour at the end of this chapter to learn how to create your own redistributable Python egg

les.

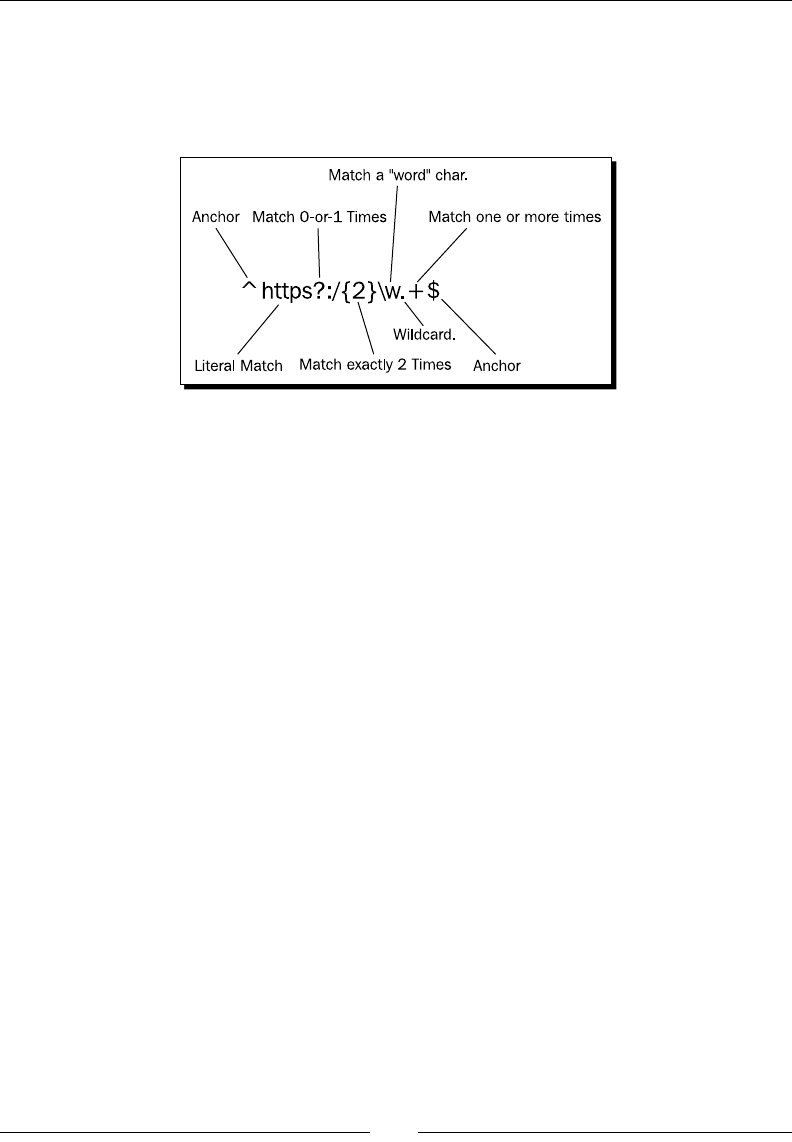

Chapter 5, Regular Expressions: Looks at Python's regular expression implementaon and

teaches you how to implement them. We look at standardized concepts as well as Python's

extensions. We'll break down a few graphically so that the component parts are easy to piece

together. You'll also learn how to safely use regular expressions with internaonal alphabets.

Chapter 6, Structured Markup: Introduces you to XML and HTML processing. We create an

adventure game using both SAX and DOM approaches. We also look briey at lxml and

ElementTree. HTML parsing is also covered.

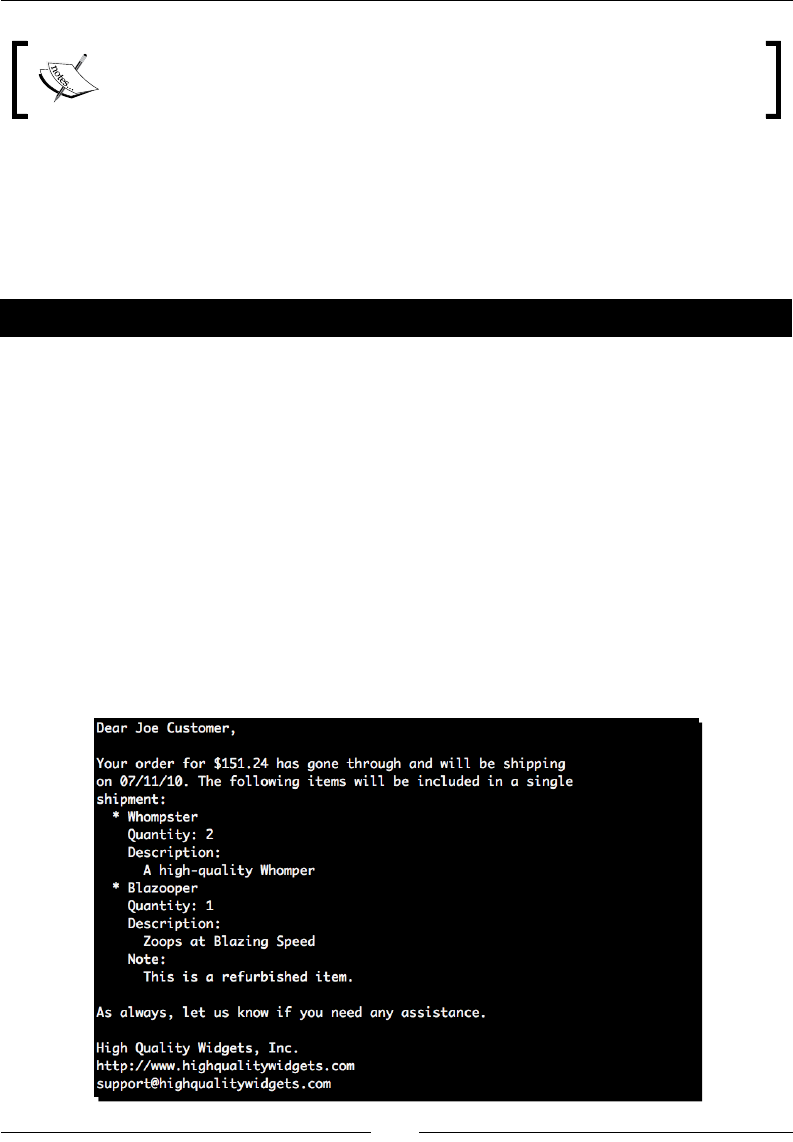

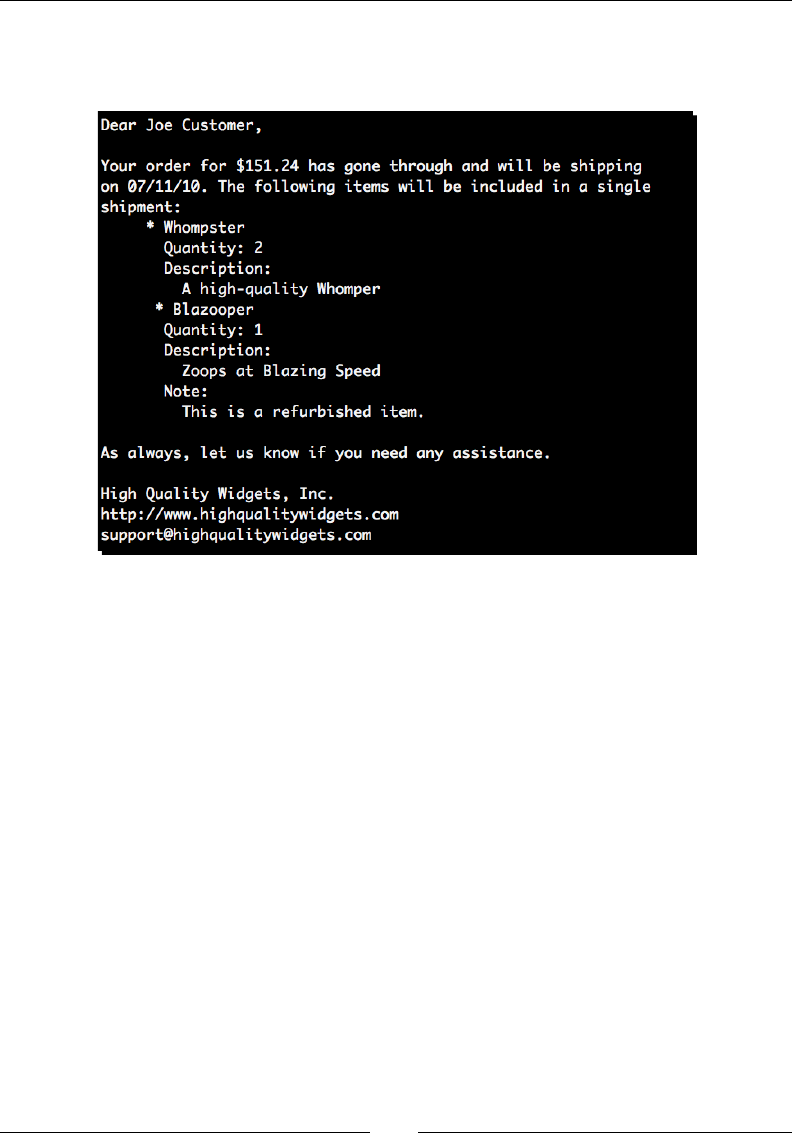

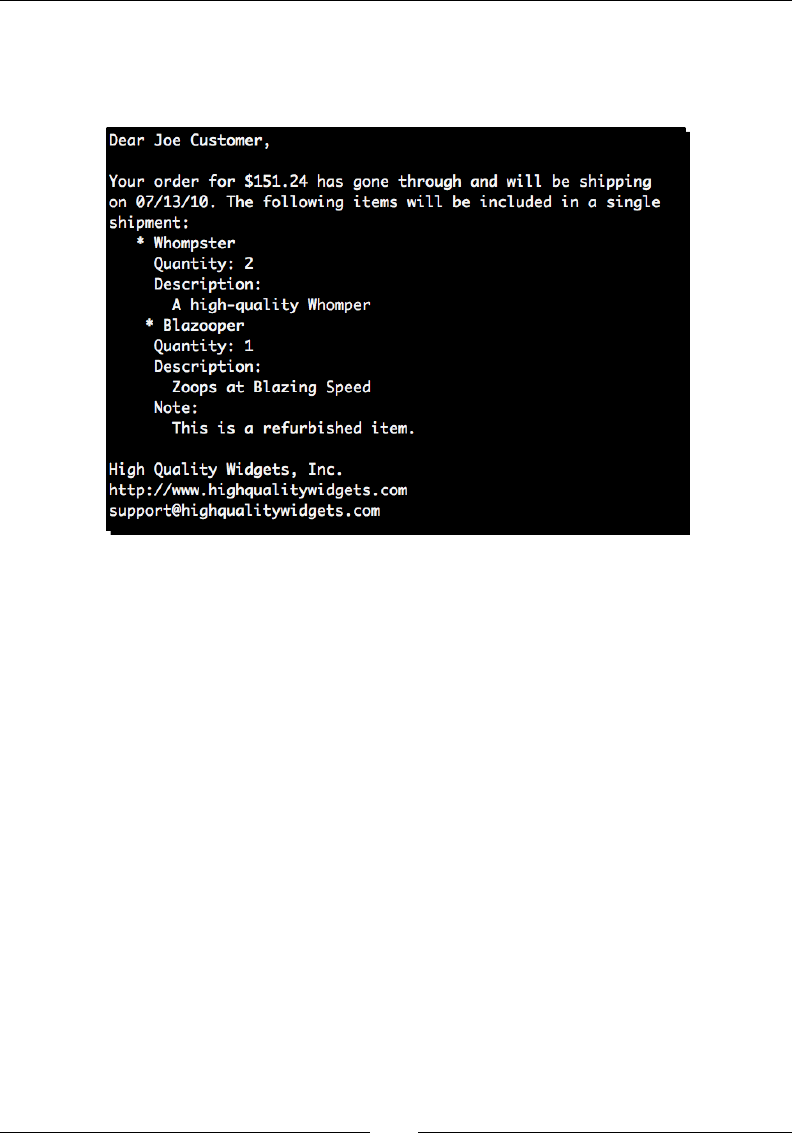

Chapter 7, Creang Templates: Using the Mako template language, we'll generate e-mail

and HTML text templates much like the ones that you'll encounter within common web

frameworks. We visit template creaon, inheritance, lters, and custom tag creaon.

Chapter 8, Understanding Encodings and i18n: We provide a look into character encoding

schemes and how they work. For reference, we'll examine ASCII as well as KOI8-R. We also

look into Unicode and its various encoding mechanisms. Finally, we nish up with a quick

look at applicaon internaonalizaon.

Chapter 9, Advanced Output Formats: Provides informaon on how to generate PDF, Excel,

and OpenDocument data. We'll build these document types from scratch using direct Python

API calls relying on third-party libraries.

Chapter 10, Advanced Parsing and Grammars: A look at more advanced text manipulaon

techniques such as those used by programming language designers. We'll use the PyParsing

library to handle some conguraon le management and look into the Python Natural

Language Toolkit.

Chapter 11, Searching and Indexing: A praccal look at full text searching and the benet an

index can provide. We'll use the Nucular system to index a collecon of small text les and

make them quickly searchable.

Appendix A, Looking for Addional Resources: It introduces you to places of interest on the

Internet and some community resources. In this appendix, you will learn to create your own

documentaon and to use Java Lucene based engines. You will also learn about dierences

between Python 2 & Python 3 and to port code to Python 3.

Preface

[ 3 ]

What you need for this book

This book assumes you've an elementary knowledge of the Python programming language,

so we don't provide a tutorial introducon. From a soware angle, you'll simply need a

version of Python (2.6 or later) installed. Each me we require a third-party library, we'll

detail the installaon in text.

Who this book is for

If you are a novice Python developer who is interested in processing text then this book is for

you. You need no experience with text processing, though basic knowledge of Python would

help you to beer understand some of the topics covered by this book. As the content of this

book develops gradually, you will be able to pick up Python while reading.

Conventions

In this book, you will nd several headings appearing frequently.

To give clear instrucons of how to complete a procedure or task, we use:

Time for action – heading

1. Acon 1

2. Acon 2

3. Acon 3

Instrucons oen need some extra explanaon so that they make sense, so they are

followed with:

What just happened?

This heading explains the working of tasks or instrucons that you have just completed.

You will also nd some other learning aids in the book, including:

Pop Quiz – heading

These are short mulple choice quesons intended to help you test your own understanding.

Preface

[ 4 ]

Have a go hero – heading

These set praccal challenges and give you ideas for experimenng with what you have

learned.

You will also nd a number of styles of text that disnguish between dierent kinds of

informaon. Here are some examples of these styles, and explanaons of their meanings.

Code words in text are shown as follows: "First of all, we imported the re module"

A block of code is set as follows:

parser = OptionParser()

parser.add_option('-f', '--file', help="CSV Data File")

opts, args = parser.parse_args()

if not opts.file:

When we wish to draw your aenon to a parcular part of a code block, the relevant lines

or items are set in bold:

def init_game(self):

"""

Process World XML.

"""

self.location = parse(open(self.world)).documentElement

Any command-line input or output is wrien as follows:

(text_processing)$ python render_mail.py thank_you-e.txt

New terms and important words are shown in bold. Words that you see on the screen, in

menus or dialog boxes for example, appear in the text like this: "Any X found in the source

data would simply become an A in the output data.".

Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Preface

[ 5 ]

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this

book—what you liked or may have disliked. Reader feedback is important for us to

develop tles that you really get the most out of.

To send us general feedback, simply send an e-mail to feedback@packtpub.com, and

menon the book tle via the subject of your message.

If there is a book that you need and would like to see us publish, please send us a note in the

SUGGEST A TITLE form on www.packtpub.com or e-mail suggest@packtpub.com.

If there is a topic that you have experse in and you are interested in either wring or

contribung to a book, see our author guide on www.packtpub.com/authors.

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help you

to get the most from your purchase.

Downloading the example code for this book

You can download the example code les for all Packt books you have purchased

from your account at http://www.PacktPub.com. If you purchased this

book elsewhere, you can visit http://www.PacktPub.com/support and

register to have the les e-mailed directly to you.

Errata

Although we have taken every care to ensure the accuracy of our content, mistakes do

happen. If you nd a mistake in one of our books—maybe a mistake in the text or the

code—we would be grateful if you would report this to us. By doing so, you can save other

readers from frustraon and help us improve subsequent versions of this book. If you

nd any errata, please report them by vising http://www.packtpub.com/support,

selecng your book, clicking on the errata submission form link, and entering the details

of your errata. Once your errata are veried, your submission will be accepted and the

errata will be uploaded on our website, or added to any list of exisng errata, under the

Errata secon of that tle. Any exisng errata can be viewed by selecng your tle from

http://www.packtpub.com/support.

Preface

[ 6 ]

Piracy

Piracy of copyright material on the Internet is an ongoing problem across all media. At Packt,

we take the protecon of our copyright and licenses very seriously. If you come across any

illegal copies of our works, in any form, on the Internet, please provide us with the locaon

address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected pirated material.

We appreciate your help in protecng our authors, and our ability to bring you valuable

content.

Questions

You can contact us at questions@packtpub.com if you are having a problem with any

aspect of the book, and we will do our best to address it.

1

Getting Started

As computer professionals, we deal with text data every day. Developers and

programmers interact with XML and source code. System administrators

have to process and understand logles. Managers need to understand and

format nancial data and reports. Web designers put in me, hand tuning and

polishing up HTML content. Managing this broad range of formats can seem

like a daunng task, but it's really not that dicult.

This book aims to introduce you, the programmer, to a variety of methods used

to process these data formats. We'll look at approaches ranging from standard

language funcons through more complex third-party modules. Somewhere in

there, we'll cover a ulity that's just the right tool for your specic job. In the

process, we hope to also cover some Python development best pracces.

Where appropriate, we'll look into implementaon details enough to help you

understand the techniques used. Most of the me, though, we'll work as hard

as we can to get you up on your feet and crunching those text les.

You'll nd that Python makes tasks like this quite painless through its clean and

easy-to-understand syntax, vast community, and the available collecon of

addional ulies and modules.

In this chapter, we shall:

Briey introduce the data formats handled in this book

Implement a simple ROT13 translator

Introduce you to basic processing via lter programs

Learn state machine basics

Geng Started

[ 8 ]

Learn how to install supporng libraries and components safely and without

administrave access

Look at where to nd more informaon on introductory topics

Categorizing types of text data

Textual data comes in a variety of formats. For our purposes, we'll categorize text into three

very broad groups. Isolang down into segments helps us to understand the problem a bit

beer, and subsequently choose a parsing approach. Each one of these sweeping groups can

be further broken down into more detailed chunks.

One thing to remember when working your way through the book is that text content isn't

limited to the Lan alphabet. This is especially true when dealing with data acquired via the

Internet. We'll cover some of the techniques and tricks to handling internaonalized data in

Chapter 8, Understanding Encoding and i18n.

Providing information through markup

Structured text includes formats such as XML and HTML. These formats generally consist of

text content surrounded by special symbols or markers that give extra meaning to a le's

contents. These addional tags are usually meant to convey informaon to the processing

applicaon and to arrange informaon in a tree-like structure. Markup allows a developer to

dene his or her own data structure, yet rely on standardized parsers to extract elements.

For example, consider the following contrived HTML document.

<html>

<head>

<title>Hello, World!</title>

</head>

<body>

<p>

Hi there, all of you earthlings.

</p>

<p>

Take us to your leader.

</p>

</body>

</html>

In this example, our document's tle is clearly idened because it is surrounded by opening

and closing <title> and </title> elements.

Chapter 1

[ 9 ]

Note that although the document's tags give each element

a meaning, it's sll up to the applicaon developer to

understand what to do with a title object or a p element.

Noce that while it sll has meaning to us humans, it is also laid out in such a way as to make

it computer friendly. We'll take a deeper look into these formats in Chapter 6, Structured

Markup. Python provides some rich libraries for dealing with these popular formats.

One interesng aspect to these formats is that it's possible to embed references to validaon

rules as well as the actual document structure. This is a nice benet in that we're able to rely

on the parser to perform markup validaon for us. This makes our job much easier as it's

possible to trust that the input structure is valid.

Meaning through structured formats

Text data that falls into this category includes things such as conguraon les, marker

delimited data, e-mail message text, and JavaScript Object Notaon web data. Content

within this second category does not contain explicit markup much like XML and HTML does,

but the structure and formang is required as it conveys meaning and informaon about

the text to the parsing applicaon. For example, consider the format of a Windows INI le

or a Linux system's /etc/hosts le. There are no tags, but the column on the le clearly

means something other than the column on the right.

Python provides a collecon of modules and libraries intended to help us handle popular

formats from this category. We'll look at Python's built-in text services in detail when we get

to Chapter 4, The Standard Library to the Rescue.

Understanding freeform content

This category contains data that does not fall into the previous two groupings. This describes

e-mail message content, leers, book copy, and other unstructured character-based content.

However, this is where we'll largely have to look at building our own processing components.

There are external packages available to us if we wish to perform common funcons. Some

examples include full text searching and more advanced natural language processing.

Ensuring you have Python installed

Our rst order of business is to ensure that you have Python installed. You'll need it in order

to complete most of the examples in this book. We'll be working with Python 2.6 and we

assume that you're using that same version. If there are any drasc dierences in earlier

releases, we'll make a note of them as we go along. All of the examples should sll funcon

properly with Python 2.4 and later versions.

Geng Started

[ 10 ]

If you don't have Python installed, you can download the latest 2.X version from http://

www.python.org. Most Linux distribuons, as well as Mac OS, usually have a version of

Python preinstalled.

At the me of this wring, Python 2.6 was the latest version available, while 2.7 was in an

alpha state.

Providing support for Python 3

The examples in this book are wrien for Python 2. However, wherever possible, we will

provide code that has already been ported to Python 3. You can nd the Python 3 code in

the Python3 directories in the code bundle available on the Packt Publishing FTP site.

Unfortunately, we can't promise that all of the third-party libraries that we'll use will support

Python 3. The Python community is working hard to port popular modules to version 3.0.

However, as the versions are incompable, there is a lot of work remaining. In situaons

where we cannot provide example code, we'll note this.

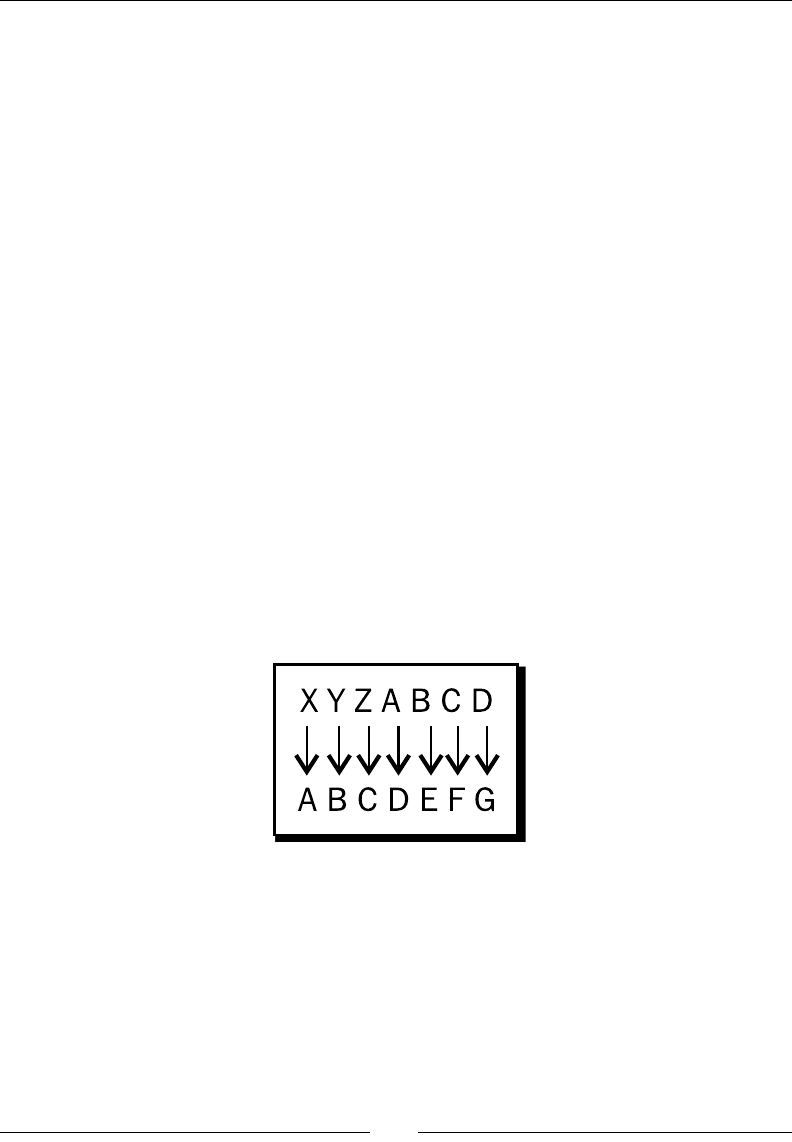

Implementing a simple cipher

Let's get going early here and implement our rst script to get a feel for what's in store.

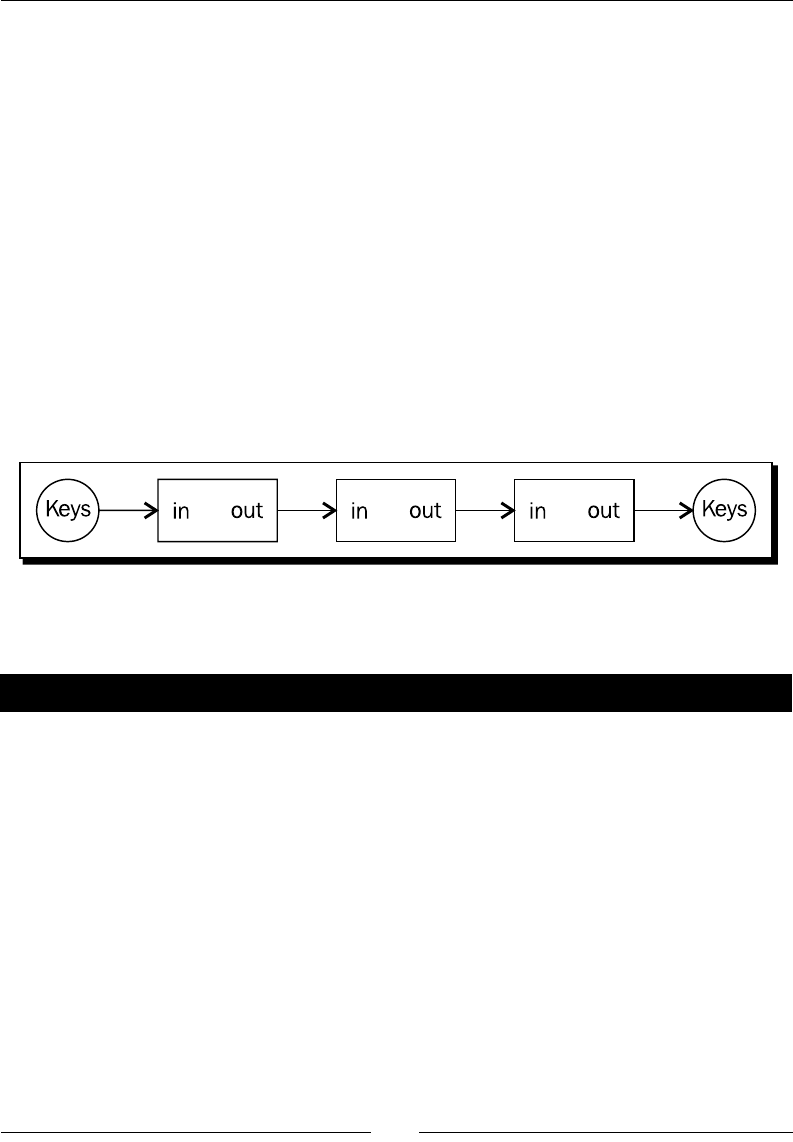

A Caesar Cipher is a simple form of cryptography in which each leer of the alphabet is shied

down by a number of leers. They're generally of no cryptographic use when applied alone,

but they do have some valid applicaons when paired with more advanced techniques.

This preceding diagram depicts a cipher with an oset of three. Any X found in the source

data would simply become an A in the output data. Likewise, any A found in the input data

would become a D.

Chapter 1

[ 11 ]

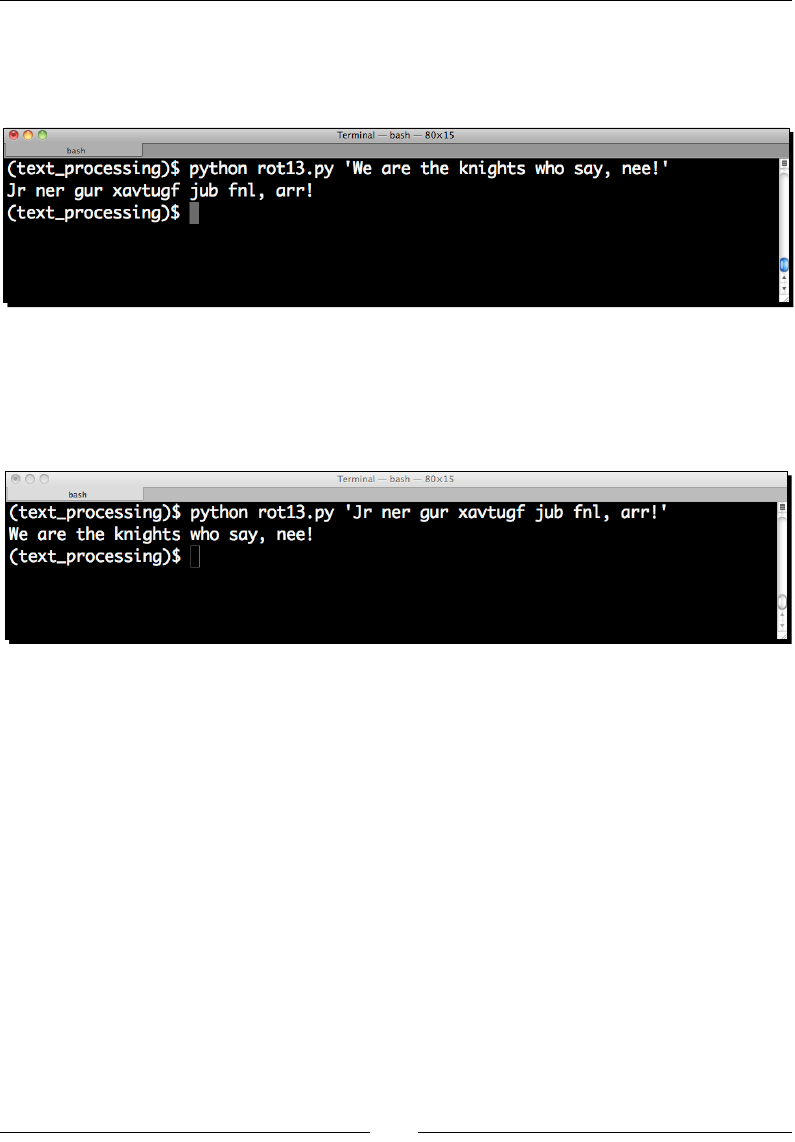

Time for action – implementing a ROT13 encoder

The most popular implementaon of this system is ROT13. As its name suggests, ROT13

shis – or rotates – each leer by 13 spaces to produce an encrypted result. As the English

alphabet has 26 leers, we simply run it a second me on the encrypted text in order to get

back to our original result.

Let's implement a simple version of that algorithm.

1. Start your favorite text editor and create a new Python source le. Save it

as rot13.py.

2. Enter the following code exactly as you see it below and save the le.

import sys

import string

CHAR_MAP = dict(zip(

string.ascii_lowercase,

string.ascii_lowercase[13:26] + string.ascii_lowercase[0:13]

)

)

def rotate13_letter(letter):

"""

Return the 13-char rotation of a letter.

"""

do_upper = False

if letter.isupper():

do_upper = True

letter = letter.lower()

if letter not in CHAR_MAP:

return letter

else:

letter = CHAR_MAP[letter]

if do_upper:

letter = letter.upper()

return letter

if __name__ == '__main__':

for char in sys.argv[1]:

sys.stdout.write(rotate13_letter(char))

sys.stdout.write('\n')

Geng Started

[ 12 ]

3. Now, from a command line, execute the script as follows. If you've entered all of the

code correctly, you should see the same output.

$ python rot13.py 'We are the knights who say, nee!'

4. Run the script a second me, using the output of the rst run as the new input

string. If everything was entered correctly, the original text should be printed to

the console.

$ python rot13.py 'Dv ziv gsv pmrtsgh dsl hzb, mvv!'

What just happened?

We implemented a simple text-oriented cipher using a collecon of Python's string handling

features. We were able to see it put to use for both encoding and decoding source text.

We saw a lot of stu in this lile example, so you should have a good feel for what can be

accomplished using the standard Python string object.

Following our inial module imports, we dened a diconary named CHAR_MAP, which

gives us a nice and simple way to shi our leers by the required 13 places. The value of a

diconary key is the target leer! We also took advantage of string slicing here. We'll look at

slicing a bit more in later chapters, but it's a convenient way for us to extract a substring from

an exisng string object.

Chapter 1

[ 13 ]

In our translaon funcon rotate13_letter, we checked whether our input character

was uppercase or lowercase and then saved that as a Boolean aribute. We then forced our

input to lowercase for the translaon work. As ROT13 operates on leers alone, we only

performed a rotaon if our input character was a leer of the Lan alphabet. We allowed

other values to simply pass through. We could have just as easily forced our string to a pure

uppercased value.

The last thing we do in our funcon is restore the leer to its proper case, if necessary. This

should familiarize you with upper- and lowercasing of Python ASCII strings.

We're able to change the case of an enre string using this same method; it's not limited to

single characters.

>>> name = 'Ryan Miller'

>>> name.upper()

'RYAN MILLER'

>>> "PLEASE DO NOT SHOUT".lower()

'please do not shout'

>>>

It's worth poinng out here that a single character string is sll a string.

There is not a char type, which you may be familiar with if you're coming

from a dierent language such as C or C++. However, it is possible to

translate between character ASCII codes and back using the ord and chr

built-in methods and a string with a length of one.

Noce how we were able to loop through a string directly using the Python for syntax.

A string object is a standard Python iterable, and we can walk through them detailed as

follows. In pracce, however, this isn't something you'll normally do. In most cases, it makes

sense to rely on exisng libraries.

$ python

Python 2.6.1 (r261:67515, Jul 7 2009, 23:51:51)

[GCC 4.2.1 (Apple Inc. build 5646)] on darwin

Type "help", "copyright", "credits" or "license" for more information.

>>> for char in "Foo":

... print char

...

F

o

o

>>>

Geng Started

[ 14 ]

Finally, you should note that we ended our script with an if statement such as the following:

>>> if__name__ == '__main__'

Python modules all contain an internal __name__ variable that corresponds to the name of

the module. If a module is executed directly from the command line, as is this script, whose

name value is set to __main__, this code only runs if we've executed this script directly. It

will not run if we import this code from a dierent script. You can import the code directly

from the command line and see for yourself.

$ python

Python 2.6.1 (r261:67515, Jul 7 2009, 23:51:51)

[GCC 4.2.1 (Apple Inc. build 5646)] on darwin

Type "help", "copyright", "credits" or "license" for more information.

>>> import rot13

>>> dir(rot13)

['CHAR_MAP', '__builtins__', '__doc__', '__file__', '__name__', '__

package__', 'rotate13_letter', 'string', 'sys']

>>>

Noce how we were able to import our module and see all of the methods and aributes

inside of it, but the driver code did not execute. This is a convenon we'll use throughout the

book in order to help achieve maximum reusability.

Have a go hero – more translation work

Each Python string instance contains a collecon of methods that operate on one or more

characters. You can easily display all of the available methods and aributes by using the dir

method. For example, enter the following command into a Python window. Python responds

by prinng a list of all methods on a string object.

>>> dir("content")

['__add__', '__class__', '__contains__', '__delattr__', '__doc__',

'__eq__', '__format__', '__ge__', '__getattribute__', '__getitem__',

'__getnewargs__', '__getslice__', '__gt__', '__hash__', '__init__', '__

le__', '__len__', '__lt__', '__mod__', '__mul__', '__ne__', '__new__',

'__reduce__', '__reduce_ex__', '__repr__', '__rmod__', '__rmul__', '__

setattr__', '__sizeof__', '__str__', '__subclasshook__', '_formatter_

field_name_split', '_formatter_parser', 'capitalize', 'center', 'count',

'decode', 'encode', 'endswith', 'expandtabs', 'find', 'format', 'index',

'isalnum', 'isalpha', 'isdigit', 'islower', 'isspace', 'istitle',

'isupper', 'join', 'ljust', 'lower', 'lstrip', 'partition', 'replace',

'rfind', 'rindex', 'rjust', 'rpartition', 'rsplit', 'rstrip', 'split',

'splitlines', 'startswith', 'strip', 'swapcase', 'title', 'translate',

'upper', 'zfill']

>>>

Chapter 1

[ 15 ]

Much like the isupper and islower methods discussed previously, we also have an

isspace method. Using this method, in combinaon with your newfound knowledge of

Python strings, update the method we dened previously to translate spaces to underscores

and underscores to spaces.

Processing structured markup with a lter

Our ROT13 applicaon works great for simple one-line strings that we can t on the

command line. However, it wouldn't work very well if we wanted to encode an enre

le, such as the HTML document we took a look at earlier. In order to support larger text

documents, we'll need to change the way we accept input. We'll redesign our applicaon to

work as a lter.

A lter is an applicaon that reads data from its standard input le descriptor and writes to

its standard output le descriptor. This allows users to create command pipelines that allow

mulple ulies to be strung together. If you've ever typed a command such as cat /etc/

hosts | grep mydomain.com, you've set up a pipeline

In many circumstances, data is fed into the pipeline via the keyboard and completes its

journey when a processed result is displayed on the screen.

Time for action – processing as a lter

Let's make the changes required to allow our simple ROT13 processor to work as a

command-line lter. This will allow us to process larger les.

1. Create a new source le and enter the following code. When complete, save the le

as rot13-b.py.

import sys

import string

CHAR_MAP = dict(zip(

string.ascii_lowercase,

string.ascii_lowercase[13:26] + string.ascii_lowercase[0:13]

)

)

def rotate13_letter(letter):

"""

Geng Started

[ 16 ]

Return the 13-char rotation of a letter.

"""

do_upper = False

if letter.isupper():

do_upper = True

letter = letter.lower()

if letter not in CHAR_MAP:

return letter

else:

letter = CHAR_MAP[letter]

if do_upper:

letter = letter.upper()

return letter

if __name__ == '__main__':

for line in sys.stdin:

for char in line:

sys.stdout.write(rotate13_letter(char))

2. Enter the following HTML data into a new text le and save it as sample_page.

html. We'll use this as example input to our updated rot13.py.

<html>

<head>

<title>Hello, World!</title>

</head>

<body>

<p>

Hi there, all of you earthlings.

</p>

<p>

Take us to your leader.

</p>

</body>

</html>

3. Now, run our rot13.py example and provide our HTML document as standard

input data. The exact method used will vary with your operang system. If you've

entered the code successfully, you should simply see a new prompt.

$ cat sample_page.html | python rot13-b.py > rot13.html

$

Chapter 1

[ 17 ]

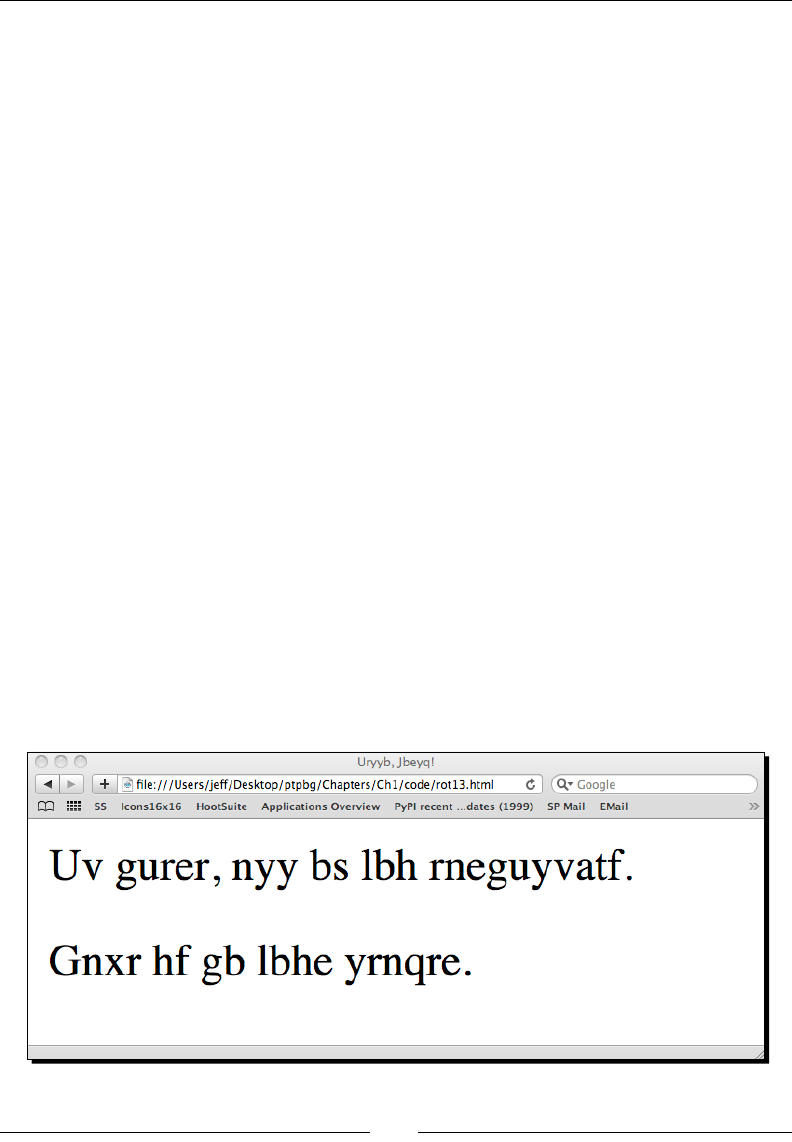

4. The contents of rot13.html should be as follows. If that's not the case, double

back and make sure everything is correct.

<ugzy>

<urnq>

<gvgyr>Uryyb, Jbeyq!</gvgyr>

</urnq>

<obql>

<c>

Uv gurer, nyy bs lbh rneguyvatf.

</c>

<c>

Gnxr hf gb lbhe yrnqre.

</c>

</obql>

</ugzy>

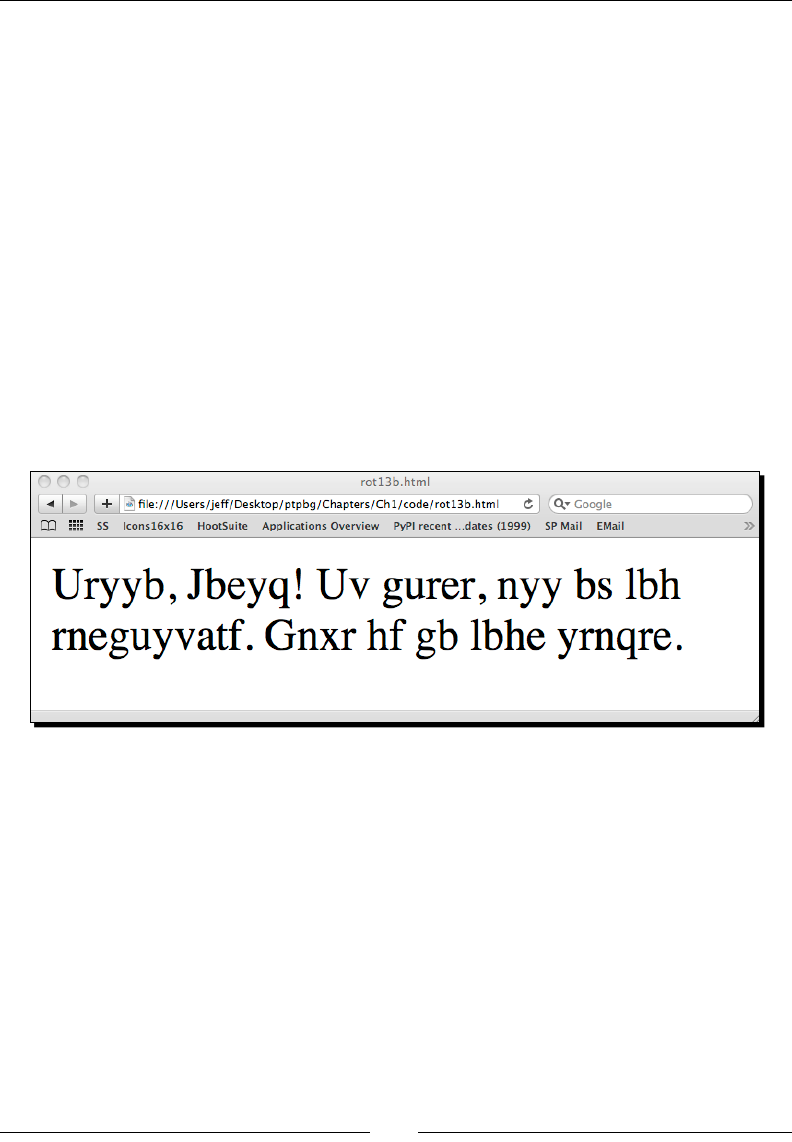

5. Open the translated HTML le using your web browser.

What just happened?

We updated our rot13.py script to read standard input data rather than rely on a

command-line opon. Doing this provides opmal congurability going forward and lets us

feed input of varying length from a collecon of dierent sources. We did this by looping on

each line available on the sys.stdin le stream and calling our translaon funcon. We

wrote each character returned by that funcon to the sys.stdout stream.

Next, we ran our updated script via the command line, using sample_page.html as input.

As expected, the encoded version was printed on our terminal.

As you can see, there is a major problem with our output. We should have a proper page

tle and our content should be broken down into dierent paragraphs.

Geng Started

[ 18 ]

Remember, structured markup text is sprinkled with

tag elements that dene its structure and organizaon.

In this example, we not only translated the text content, we also translated the markup

tags, rendering them meaningless. A web browser would not be able to display this data

properly. We'll need to update our processor code to ignore the tags. We'll do just that

in the next secon.

Time for action – skipping over markup tags

In order to preserve the proper, structured HTML that tags provide, we need to ensure we

don't include them in our rotaon. To do this, we'll keep track of whether or not our input

stream is currently within a tag. If it is, we won't translate our leers.

1. Once again, create a new Python source le and enter the following code. When

you're nished, save the le as rot13-c.py.

import sys

from optparse import OptionParser

import string

CHAR_MAP = dict(zip(

string.ascii_lowercase,

string.ascii_lowercase[13:26] + string.ascii_lowercase[0:13]

)

)

class RotateStream(object):

"""

General purpose ROT13 Translator

A ROT13 translator smart enough to skip

Markup tags if that's what we want.

"""

MARKUP_START = '<'

MARKUP_END = '>'

def __init__(self, skip_tags):

self.skip_tags = skip_tags

def rotate13_letter(self, letter):

"""

Return the 13-char rotation of a letter.

"""

do_upper = False

if letter.isupper():

Chapter 1

[ 19 ]

do_upper = True

letter = letter.lower()

if letter not in CHAR_MAP:

return letter

else:

letter = CHAR_MAP[letter]

if do_upper:

letter = letter.upper()

return letter

def rotate_from_file(self, handle):

"""

Rotate from a file handle.

Takes a file-like object and translates

text from it into ROT13 text.

"""

state_markup = False

for line in handle:

for char in line:

if self.skip_tags:

if state_markup:

# here we're looking for a closing

# '>'

if char == self.MARKUP_END:

state_markup = False

else:

# Not in a markup state, rotate

# unless we're starting a new

# tag

if char == self.MARKUP_START:

state_markup = True

else:

char = self.rotate13_letter(char)

else:

char = self.rotate13_letter(char)

# Make this a generator

yield char

if __name__ == '__main__':

parser = OptionParser()

parser.add_option('-t', '--tags', dest="tags",

help="Ignore Markup Tags", default=False,

Geng Started

[ 20 ]

action="store_true")

options, args = parser.parse_args()

rotator = RotateStream(options.tags)

for letter in rotator.rotate_from_file(sys.stdin):

sys.stdout.write(letter)

2. Run the same example.html le that we created for the last example through the

new processor. This me, be sure to pass a -t command-line opon.

$ cat sample_page.html | python rot13-c.py -t > rot13.html

$

3. If everything was entered correctly, the contents of rot13.html should be exactly

as follows.

<html>

<head>

<title>Uryyb, Jbeyq!</title>

</head>

<body>

<p>

Uv gurer, nyy bs lbh rneguyvatf.

</p>

<p>

Gnxr hf gb lbhe yrnqre.

</p>

</body>

</html>

4. Open the translated le in your web browser.

Chapter 1

[ 21 ]

What just happened?

That was a prey complex example, so let's step through it. We did quite a bit. First, we

moved away from a simple rotate13_letter funcon and wrapped almost all of our

funconality in a Python class named RotateStream. Doing this helps us ensure that our

code will be reusable down the road.

We dene a __init__ method within the class that accepts a single parameter named

skip_tags. The value of this parameter is assigned to the self parameter so we can access

it later from within other methods. If this is a True value, then our parser class will know

that it's not supposed to translate markup tags.

Next, you'll see our familiar rotate13_letter method (it's a method now as it's dened

within a class). The only real dierence here is that in addion to the letter parameter,

we're also requiring the standard self parameter.

Finally, we have our rotate_from_file method. This is where the bulk of our new

funconality was added. Like before, we're iterang through all of the characters available

on a le stream. This me, however, the le stream is passed in as a handle parameter.

This means that we could have just as easily passed in an open le handle rather than the

standard in le handle.

Inside the method, we implement a simple state machine, with two possible states. Our

current state is saved in the state_markup Boolean aribute. We only rely on it if the value

of self.skip_tags set in the __init__ method is True.

1. If state_markup is True, then we're currently within the context of a markup tag

and we're looking for the > character. When it's found, we'll change state_markup

to False. As we're inside a tag, we'll never ask our class to perform a ROT13

operaon.

2. If state_markup is False, then we're parsing standard text. If we come across

the < character, then we're entering a new markup tag. We set the value of state_

markup to True. Finally, if we're not in tag, we'll call rotate13_letter to perform

our ROT13 operaon.

You should also noce some unfamiliar code at the end of the source lisng. We've taken

advantage of the OptionParser class, which is part of the standard library. We've added

a single opon that will allow us to selecvely enable our markup bypass funconality. The

value of this opon is passed into RotateStream's __init__ method.

The nal two lines of the lisng show how we pass the sys.stdin le handle to rotate_

from_file and iterate over the results. The rotate_from_file method has been dened

as a generator funcon. A generator funcon returns values as it processes rather than

waing unl compleon. This method avoids storing all of the result in memory and lowers

overall applicaon memory consumpon.

Geng Started

[ 22 ]

State machines

A state machine is an algorithm that keeps track of an applicaon's internal state. Each

state has a set of available transions and funconality associated with it. In this example,

we were either inside or outside of a tag. Applicaon behavior changed depending on

our current state. For example, if we were inside then we could transion to outside. The

opposite also holds true.

The state machine concept is advanced and won't be covered in detail. However, it is a

major method used when implemenng text-processing machinery. For example, regular

expression engines are generally built on variaons of this model. For more informaon

on state machine implementaon, see the Wikipedia arcle available at http://

en.wikipedia.org/wiki/Finite-state_machine.

Pop Quiz – ROT 13 processing

1. We dene MARKUP_START and MARKUP_END class constants within our

RotateStream class. How might our state machine be aected if these

values were swapped?

2. Is it possible to use ROT13 on a string containing characters found outside of the

English alphabet?

3. What would happen if we embedded > or < signs within our text content or tag

values?

4. In our example, we read our input a line at a me. Can you think of a way to make

this more ecient?

Have a go hero – support multiple input channels

We've briey covered reading data via standard in as well as processing simple

command-line opons. Your job is to integrate the two so that your applicaon will

simply translate a command-line value if one is present before defaulng to standard input.

If you're able to implement this, try extending the opon handling code so that your input

string can be passed in to the rotaon applicaon using a command-line opon.

$python rot13-c.py –s 'myinputstring'

zlvachgfgevat

$

Chapter 1

[ 23 ]

Supporting third-party modules

Now that we've got our rst example out of the way, we're going to take a lile bit of a

detour and learn how to obtain and install third-party modules. This is important, as we'll

install a few throughout the remainder of the book.

The Python community maintains a centralized package repository, termed the Python

Package Index (or PyPI). It is available on the web at http://pypi.python.org. From

there, it is possible to download packages as compressed source distribuons, or in some

cases, pre-packaged Python components. PyPI is also a rich source of informaon. It's a

great place to learn about available third-party applicaons. Links are provided to individual

package documentaon if it's not included directly into the package's PyPI page.

Packaging in a nutshell

There are at least two dierent popular methods of packaging and deploying Python

packages. The distutils package is part of the standard distribuon and provides a

mechanism for building and installing Python soware. Packages that take advantage of the

distutils system are downloaded as a source distribuon and built and installed by a local

user. They are installed by simply creang an addional directory structure within the system

Python directory that matches the package name.

In an eort to make packages more accessible and self-contained, the concept of the

Python Egg was introduced. An egg le is simply a ZIP archive of a package. When an egg is

installed, the ZIP le itself is placed on the Python path, rather than a subdirectory.

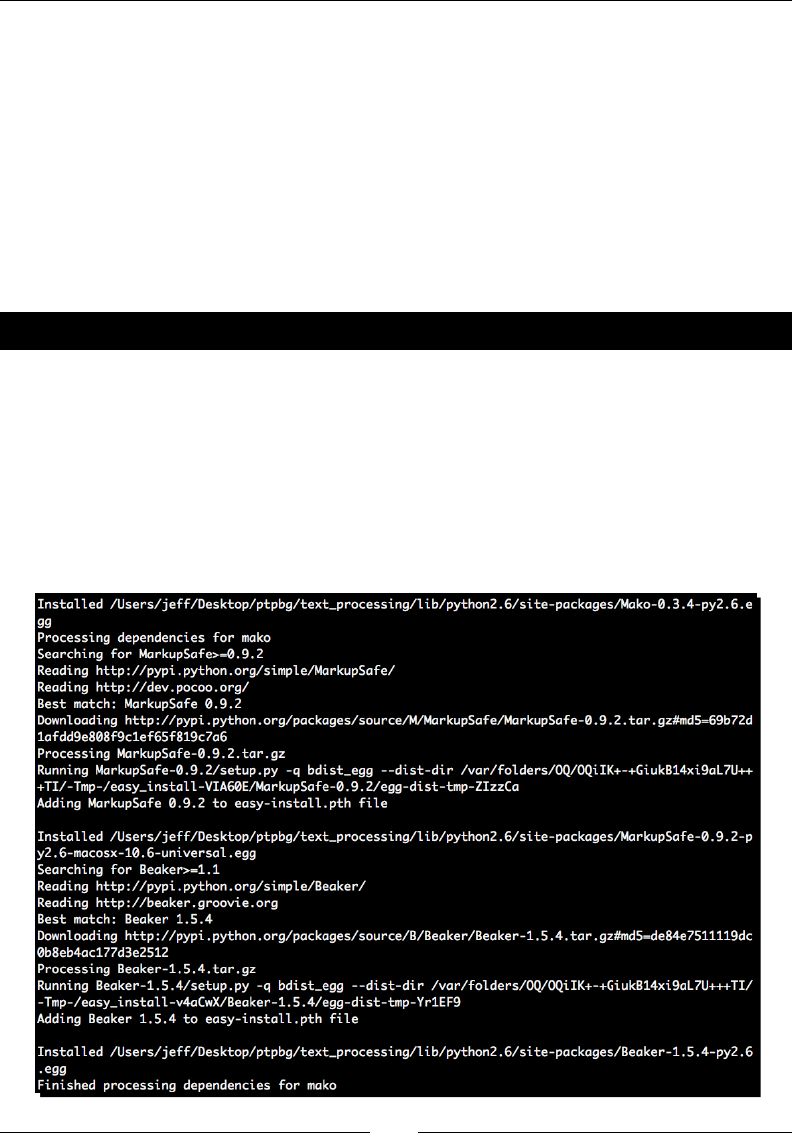

Time for action – installing SetupTools

Egg les have largely become the de facto standard in Python packaging. In order to install,

develop, and build egg les, it is necessary to install a third-party tool kit. The most popular

is SetupTools, and this is what we'll be working with throughout this book. The installaon

process is fairly easy to complete and is rather self-contained. Installing SetupTools gives us

access to the easy_install command, which automates the download and installaon of

packages that have been registered with PyPI.

1. Download the installaon script, which is available at http://peak.

telecommunity.com/dist/ez_setup.py. This same script will be

used for all versions of Python.

Geng Started

[ 24 ]

2. As an administrave user, run the ez_setup.py script from the command line. The

SetupTools installaon process will complete. If you've executed the script with the

proper rights, you should see output similar as follows:

# python ez_setup.py

Downloading http://pypi.python.org/packages/2.6/s/setuptools/

setuptools-0.6c11-py2.6.egg

Processing setuptools-0.6c11-py2.6.egg

creating /usr/lib/python2.6/site-packages/setuptools-0.6c11-

py2.6.egg

Extracting setuptools-0.6c11-py2.6.egg to /usr/lib/python2.6/site-

packages

Adding setuptools 0.6c11 to easy-install.pth file

Installing easy_install script to /usr/bin

Installing easy_install-2.6 script to /usr/bin

Installed /usr/lib/python2.6/site-packages/setuptools-0.6c11-

py2.6.egg

Processing dependencies for setuptools==0.6c11

Finished processing dependencies for setuptools==0.6c11

#

What just happened?

We downloaded the SetupTools installaon script and executed it as an administrave

user. By doing so, our system Python environment was congured so that we can install egg

les in the future via the SetupTools easy_install system.

SetupTools does not currently work with Python 3.0. There is, however, an

alternave available via the Distribute project. Distribute is intended to be a

drop-in replacement for SetupTools and will work with either major Python

version. For more informaon, or to download the installer, visit http://

pypi.python.org/pypi/distribute.

Chapter 1

[ 25 ]

Running a virtual environment

Now that we have SetupTools installed, we can install third-party packages by simply

running the easy_install command. This is nice because package dependencies will

automacally be downloaded and installed so we no longer have to do this manually.

However, there's sll one piece missing. Even though we can install these packages easily,

we sll need to retain administrave privileges to do so. Addionally, all of the packages

that we chose to install will be placed in the system's Python library directory, which has

the potenal to cause inconsistencies and problems down the road.. As you've probably

guessed, there's a ulity to address that.

Python 2.6 introduces the concept of a local user package directory. This is

simply an addional locaon found within your user home directory that Python

searches for installed packages. It is possible to install eggs into this locaon via

easy_install with a –user command-line switch. For more informaon,

see http://www.python.org/dev/peps/pep-0370/.

Conguring virtualenv

The virtualenv package, distributed as a Python egg, allows us to create an isolated

Python environment anywhere we wish. The environment comes complete with a bin

directory containing a Python binary, its own installaon of SetupTools, and an instance-

specic library directory. In short, it creates a locaon for us to install and congure Python

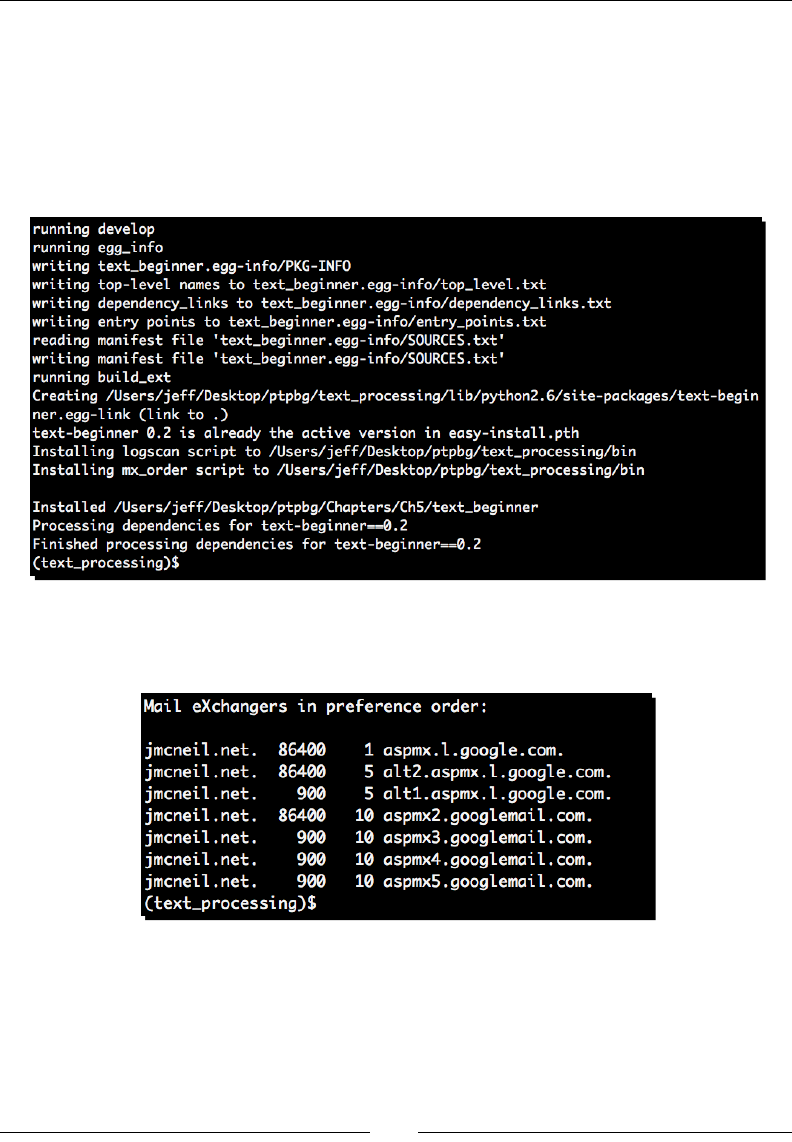

without interfering with the system installaon.

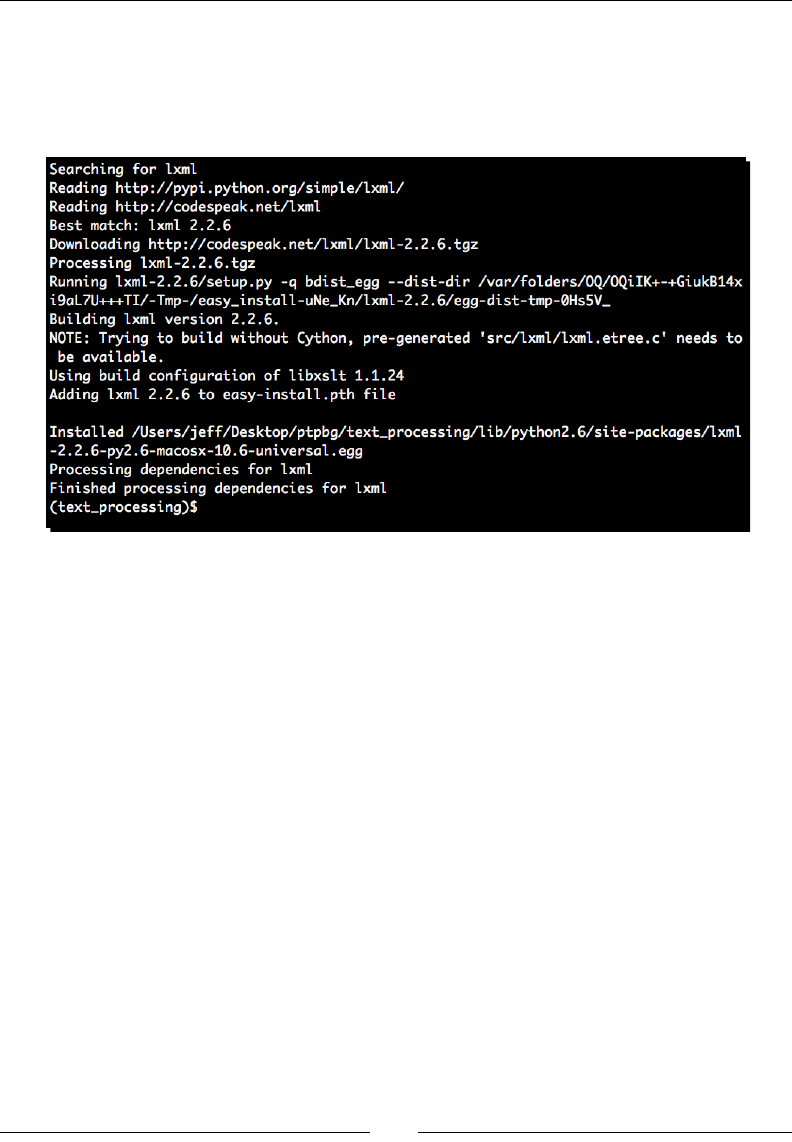

Time for action – conguring a virtual environment

Here, we'll enable the virtualenv package, which will illustrate how to install packages

from the PyPI site. We'll also congure our rst environment, which we'll use throughout the

book for the rest of our examples and code illustraons.

1. As a user with administrave privileges, install virtualenv from the system

command line by running easy_install virtualenv. If you have the correct

permissions, your output should be similar to the following.

Searching for virtualenv

Reading http://pypi.python.org/simple/virtualenv/

Reading http://virtualenv.openplans.org

Best match: virtualenv 1.4.5

Downloading http://pypi.python.org/packages/source/v/virtualenv/

virtualenv-1.4.5.tar.gz#md5=d3c621dd9797789fef78442e336df63e

Processing virtualenv-1.4.5.tar.gz

Geng Started

[ 26 ]

Running virtualenv-1.4.5/setup.py -q bdist_egg --dist-dir /tmp/

easy_install-rJXhVC/virtualenv-1.4.5/egg-dist-tmp-AvWcd1

warning: no previously-included files matching '*.*' found under

directory 'docs/_templates'

Adding virtualenv 1.4.5 to easy-install.pth file

Installing virtualenv script to /usr/bin

Installed /usr/lib/python2.6/site-packages/virtualenv-1.4.5-

py2.6.egg

Processing dependencies for virtualenv

Finished processing dependencies for virtualenv

2. Drop administrave privileges as we won't need them any longer. Ensure that you're

within your home directory and create a new virtual instance by running:

$ virtualenv --no-site-packages text_processing

3. Step into the newly created text_processing directory and acvate the

virtual environment. Windows users will do this by simply running the Scripts\

activate applicaon, while Linux users must instead source the script using the

shell's dot operator.

$ . bin/activate

4. If you've done this correctly, you should now see your command-line prompt change

to include the string (text_processing). This serves as a visual cue to remind you

that you're operang within a specic virtual environment.

(text_processing)$ pwd

/home/jmcneil/text_processing

(text_processing)$ which python

/home/jmcneil/text_processing/bin/python

(text_processing)$

5. Finally, deacvate the environment by running the deacvate command. This will

return your shell environment to default. Note that once you've done this, you're

once again working with the system's Python install.

(text_processing)$ deactivate

$ which python

/usr/bin/python

$

Chapter 1

[ 27 ]

If you're running Windows, by default python.exe and easy_install.

exe are not placed on your system %PATH%. You'll need to manually congure

your %PATH% variable to include C:\Python2.6\ and C:\Python2.6\

Scripts. Addional scripts added by easy_install will also be placed in

this directory, so it's worth seng up your %PATH% variable.

What just happened?

We installed the virtualenv package using the easy_install command directly o of

the Python Package index. This is the method we'll use for installing any third-party packages

going forward. You should now be familiar with the easy_install process. Also, note that

for the remainder of the book, we'll operate from within this text_processing virtual

environment. Addional packages are installed using this same technique from within the

connes of our environment.

Aer the install process was completed, we congured and acvated our rst virtual

environment. You saw how to create a new instance via the virtualenv command and

you also learned how to subsequently acvate it using the bin/activate script. Finally, we

showed you how to deacvate your environment and return to your system's default state.

Have a go hero – install your own environment

Now that you know how to set up your own isolated Python environment, you're encouraged

to create a second one and install a collecon of third-party ulies in order to get the hang of

the installaon process.

1. Create a new environment and name it as of your own choice.

2. Point your browser to http://pypi.python.org and select one or more

packages that you nd interesng. Install them via the easy_install command

within your new virtual environment.

Note that you should not require administrave privileges to do this. If you receive an error

about permissions, make certain you've remembered to acvate your new environment.

Deacvate when complete. Some of the packages available for install may require a correctly

congured C-language compiler.

Geng Started

[ 28 ]

Where to get help?

The Python community is a friendly bunch of people. There is a wide range of online

resources you can take advantage of if you nd yourself stuck. Let's take a quick look at

what's out there.

Home site: The Python website, available at http://www.python.org.

Specically, the documentaon secon. The standard library reference is a

wonderful asset and should be something you keep at your ngerps. This site also

contains a wonderful tutorial as well as a complete language specicaon.

Member groups: The comp.lang.python newsgroup. Available via Google

groups as well as an e-mail gateway, this provides a general-purpose locaon to

ask Python-related quesons. A very smart bunch of developers patrol this group;

you're certain to get a quality answer.

Forums: Stack Overow, available at http://www.stackoverflow.com.

Stack overow is a website dedicated to developers. You're welcome to ask your

quesons, as well as answer others' inquires, if you're up to it!

Mailing list: If you have a beginner-level queson, there is a Python tutor mailing

list available o of the Python.org site. This is a great place to ask your beginner

quesons no maer how basic they might be!

Centralized package repository: The Python Package Index at http://pypi.

python.org. Chances are someone has already had to do exactly what it is

you're doing.

If all else fails, you're more than welcome to contact the author via e-mail to questions@

packtpub.com. Every eort will be made to answer your queson, or point you to a freely

available resource where you can nd your resoluon.

Summary

This chapter introduced you to the dierent categories of text that we'll cover in greater

detail throughout the book and provided you with a lile bit of informaon as to how we'll

manage our packaging going forward.

We performed a few low-level text translaons by implemenng a ROT13 encoder and

highlighted the dierences between freeform and structured markup. We'll examine these

categories in much greater detail as we move on. The goal of that exercise was to learn some

byte-level transformaon techniques.

Finally, we touched on a couple of dierent ways to read data into our applicaons. In our

next chapter, we'll spend a great deal of me geng to know the IO system and learning

how you can extract text from a collecon of sources.

2

Working with the IO System

Now that we've covered some basic text-processing methods and introduced

you to some core Python best pracces, it's me we take a look at how to

actually get to your data. Reading some example text from the command line is

an easy process, but geng to real world data can be more dicult. However,

it's important to understand how to do so.

Python provides all of the standard le IO mechanisms you would expect from

any full-featured programming language. Addionally, there is a wide range of

standard library modules included that enable you to access data via various

network services such as HTTP, HTTPS, and FTP.

In this chapter, we'll focus on those methods and systems. We'll look at standard le

funconality, the extended abilies within the standard library, and how these components

can be used interchangeably in many situaons.

As part of our introducon to le input and output, we'll also cover some common

excepon-handling techniques that are especially helpful when dealing with external data.

In this chapter, we shall:

Look at Python's le IO and examine the objects created by the open factory funcon

Understand text-based and raw IO, and how they dier

Examine the urllib and urllib2 modules and detail le access via HTTP and FTP

streams

Handle le IO using Context Managers

Learn about le-like objects and methods to use objects interchangeably for

maximum reuse

Working with the IO System

[ 30 ]

Introduce excepons with a specic focus on idioms specic to le IO and how to

deal with certain error condions

Introduce a web server logle processor, which we'll expand upon throughout

future chapters

Examine ways to deal with mulple les

We'll also spend some me looking at changes to the IO subsystem in future

versions of Python

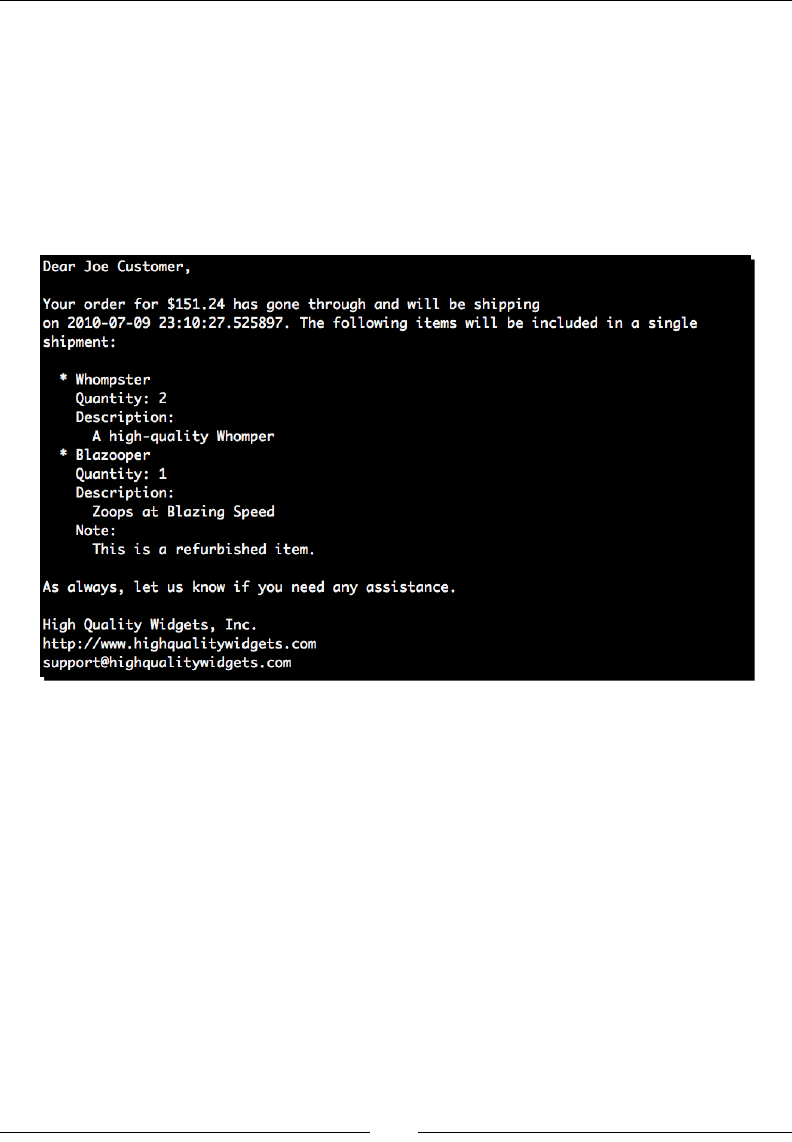

Parsing web server logs

We're going to introduce a web server log parser in this secon that we'll build upon

throughout the remainder of the book. We're going to start by assuming the logle is in the

standard Apache combined format.

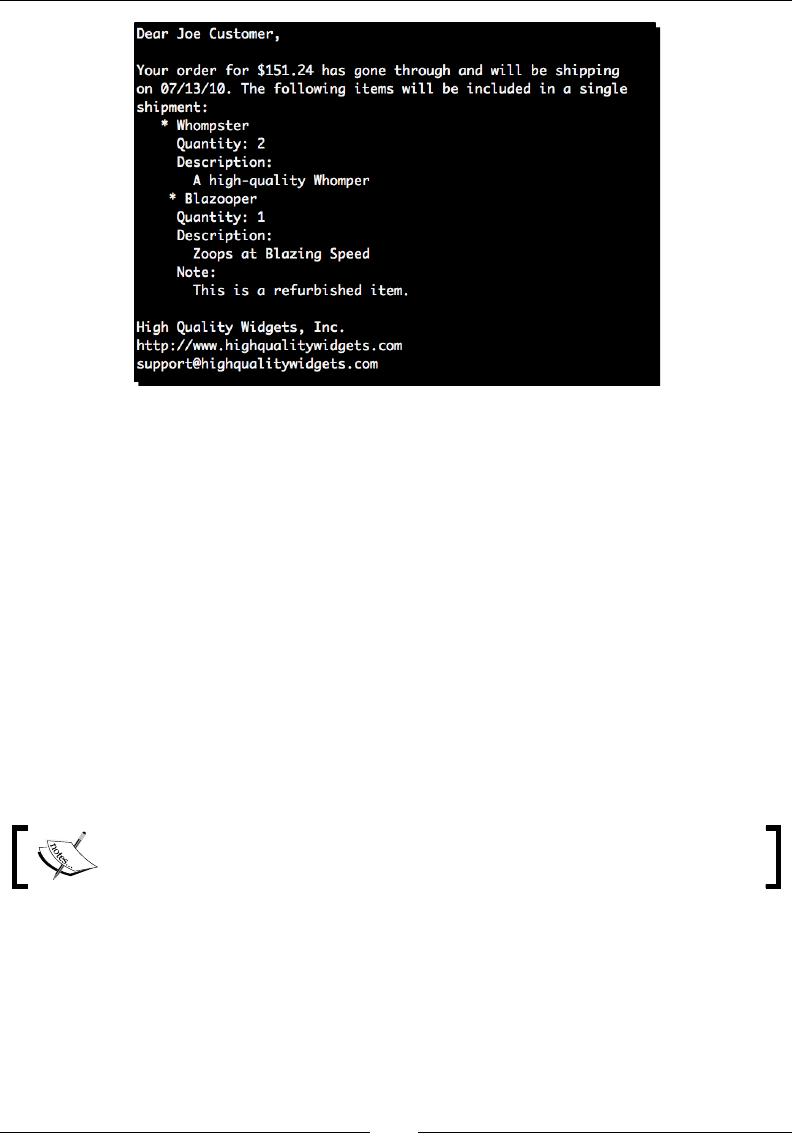

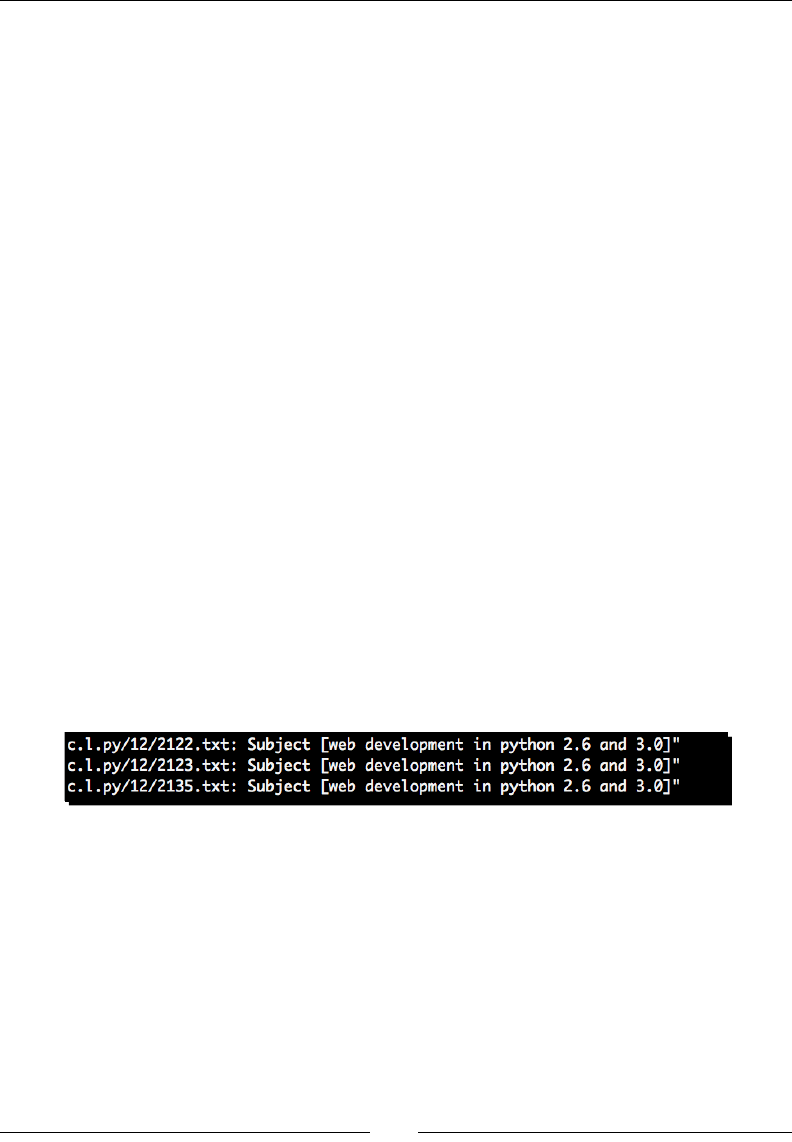

For example, the following line represents an HTTP request for the root directory of a

website. The request is successful, as indicated by the 200 series response code.

In order, the above line contains the remote IP address of the client, the remote identd

name, the authencated username, the server's mestamp, the rst line of the request, the

HTTP response code, the size of the le as returned by the server, the referring page, and

nally the User Agent, or the browser soware running on the end user's computer.

The dashes in the previous screenshot indicate a missing value. This doesn't necessarily

correspond to an error condion. For example, if the page is not password-protected then

there will be no remote user. The dash is a common condion we'll need to handle.

Chapter 2

[ 31 ]

For more informaon on web server log formats and available data points,

please see your web server documentaon. Apache logs were used to write

this book; documentaon for the Apache web server is available at http://

httpd.apache.org/docs/2.2/mod/mod_log_config.html

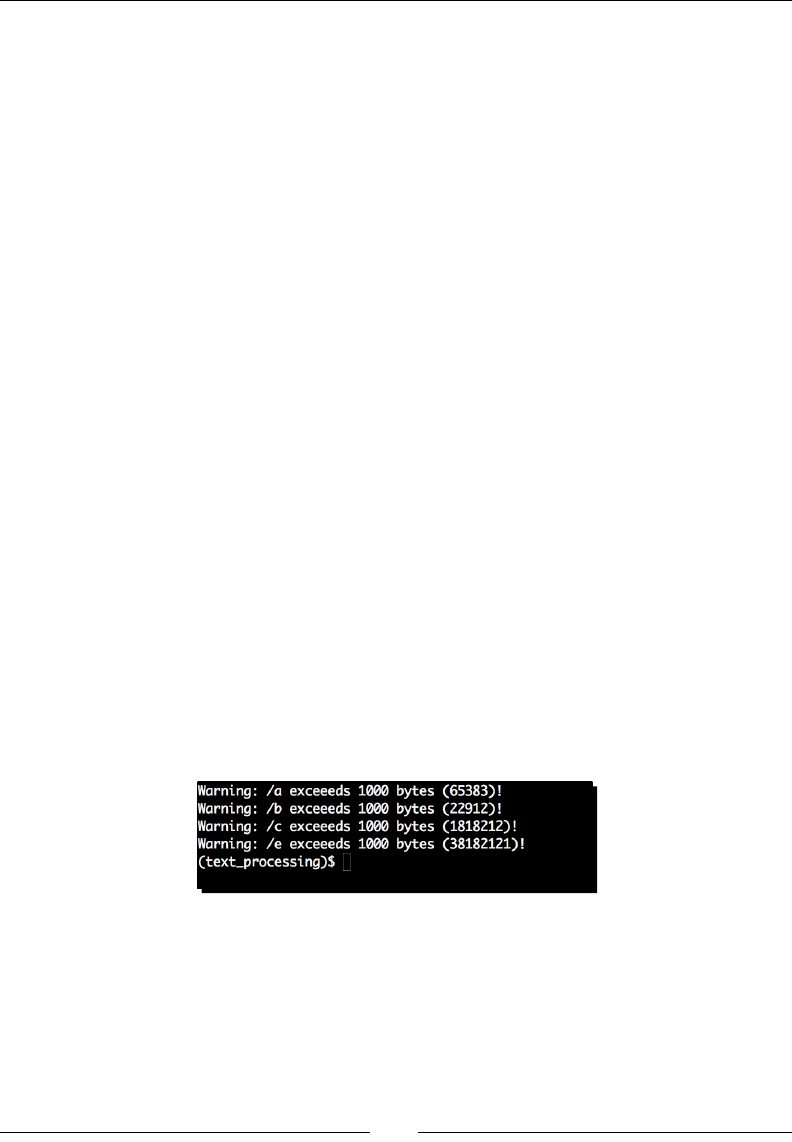

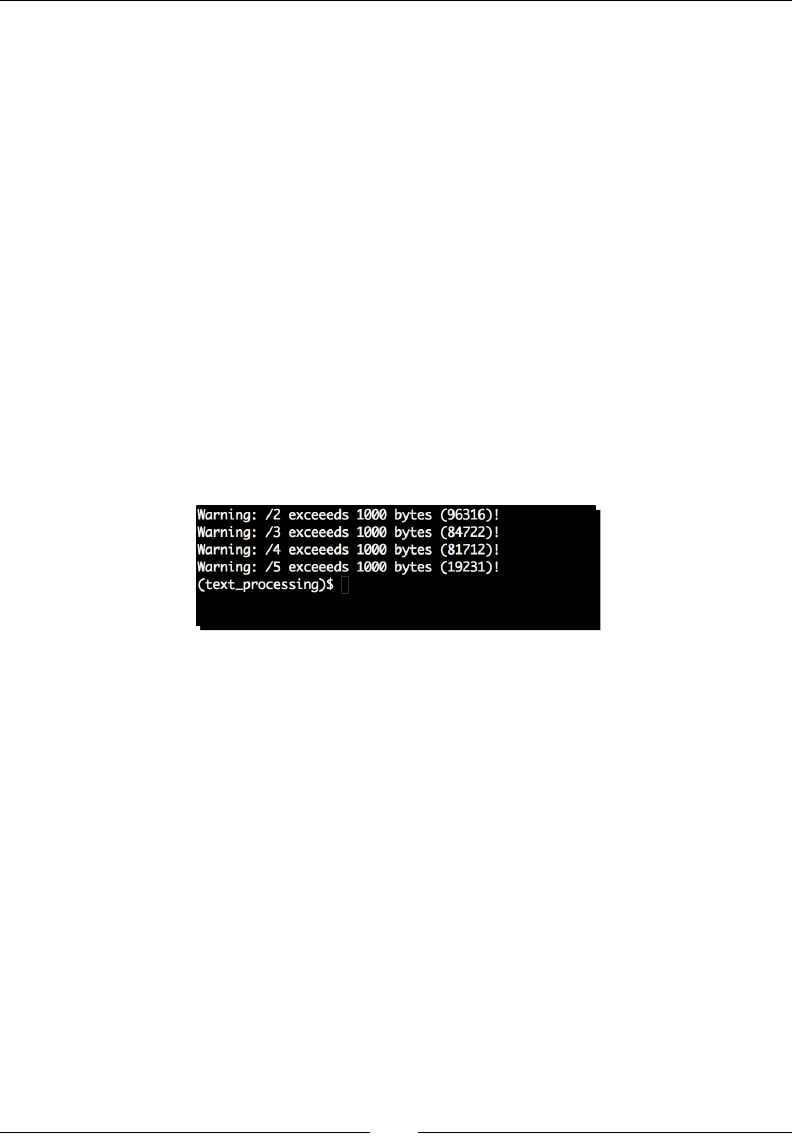

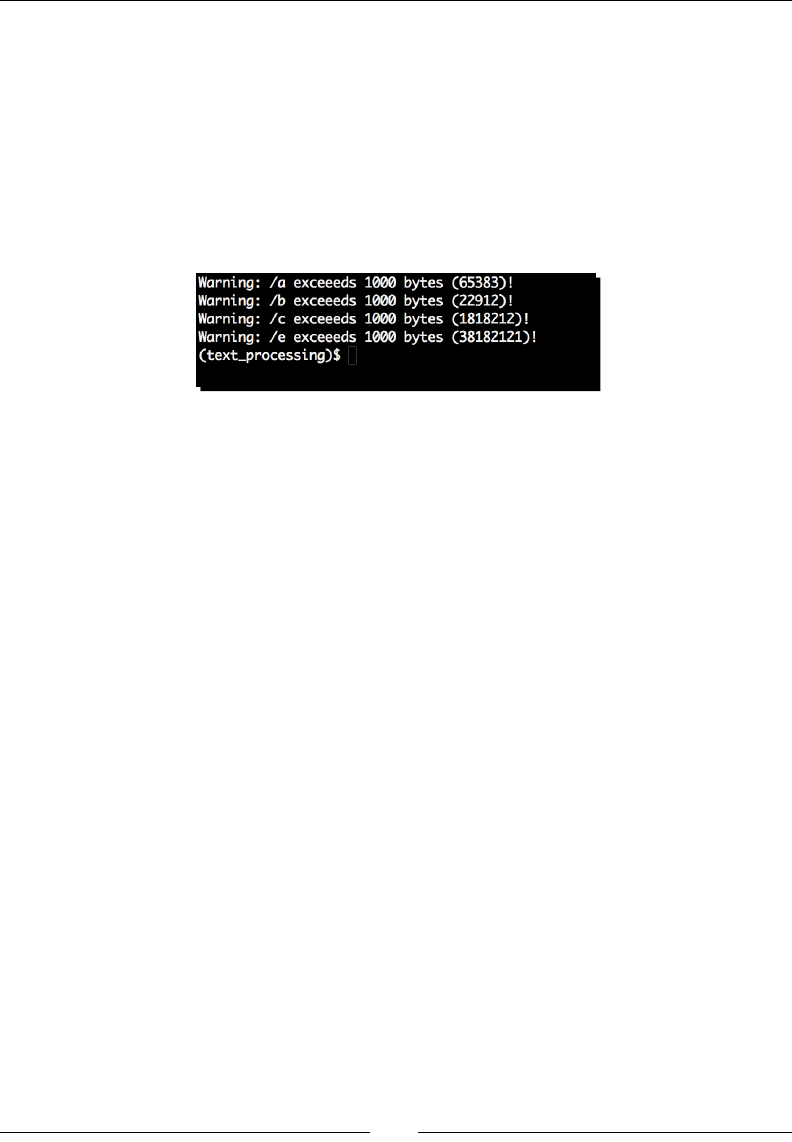

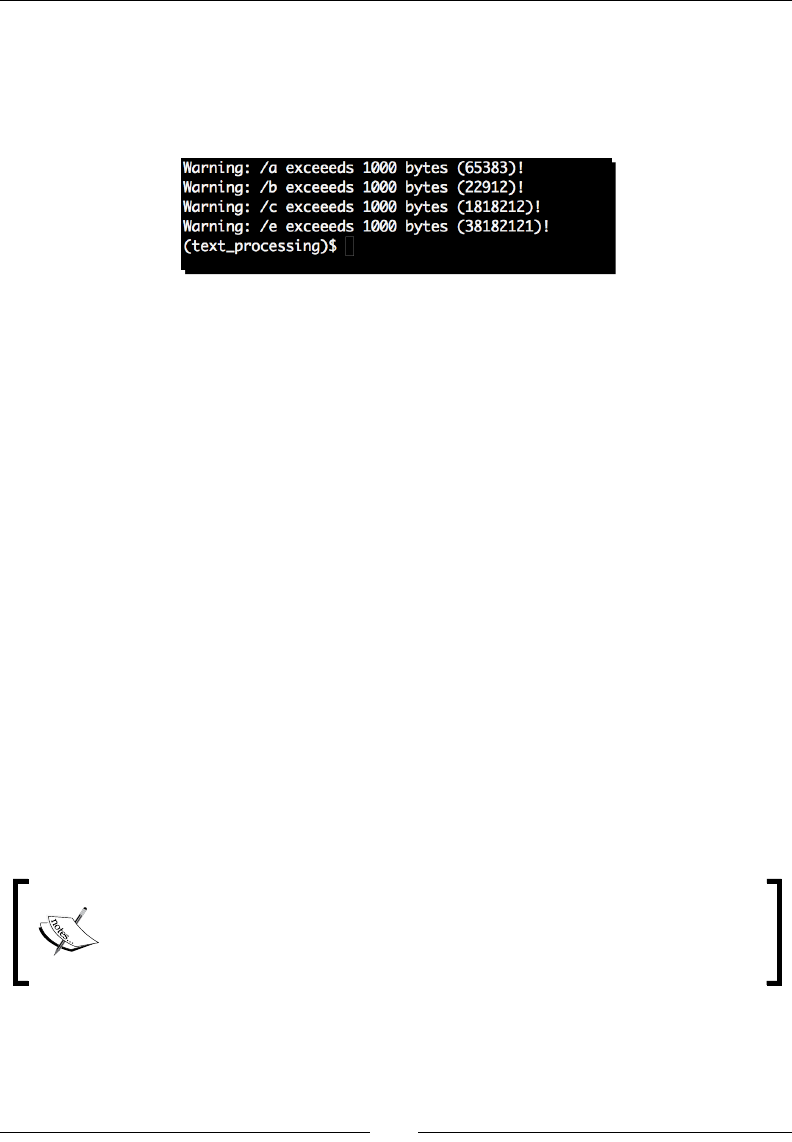

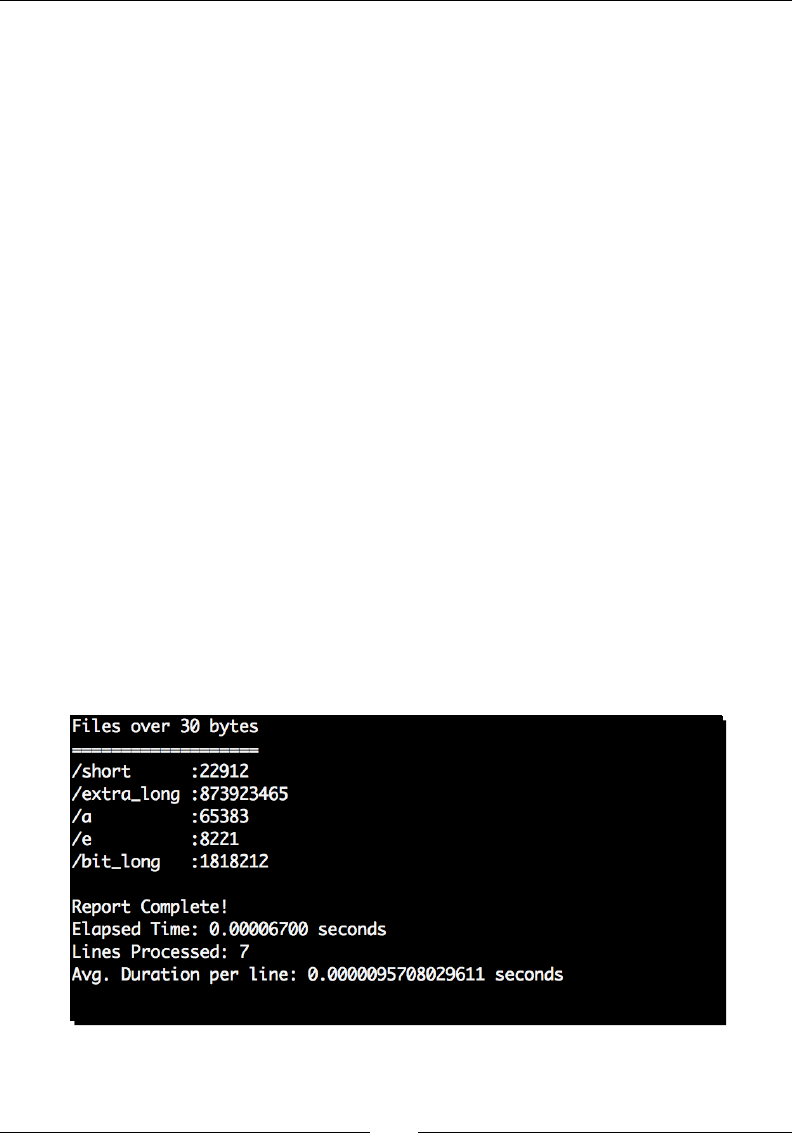

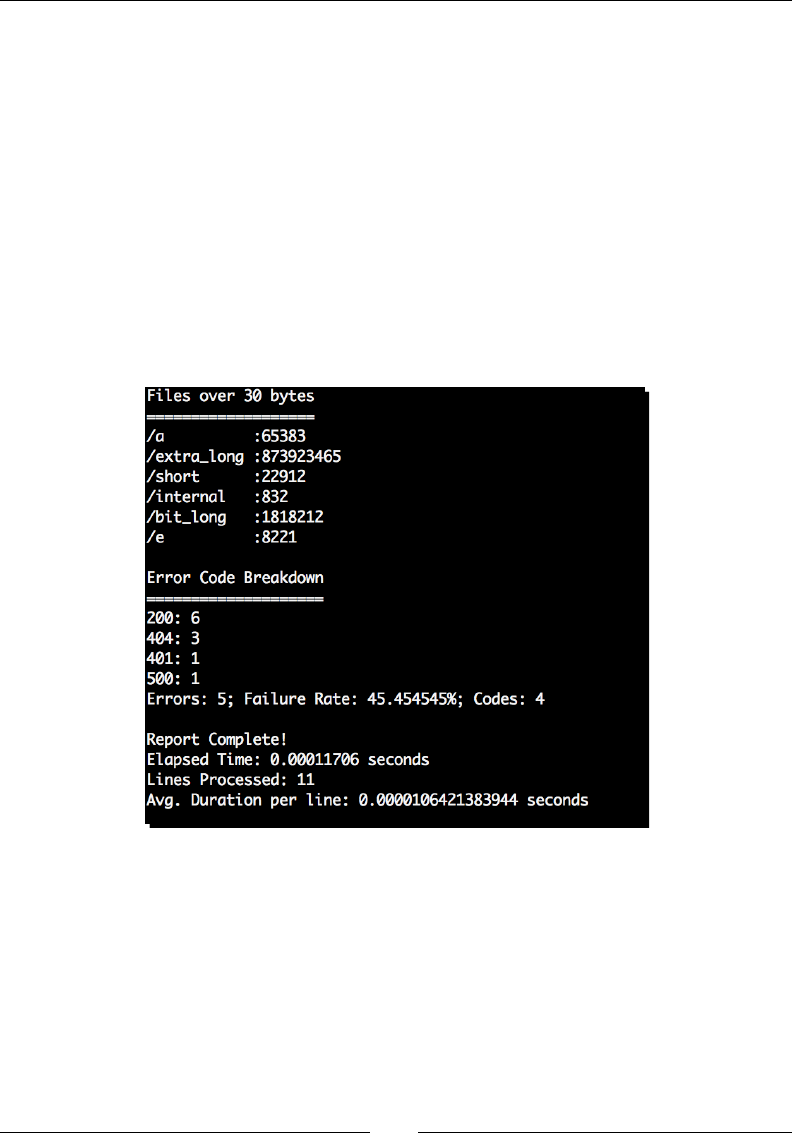

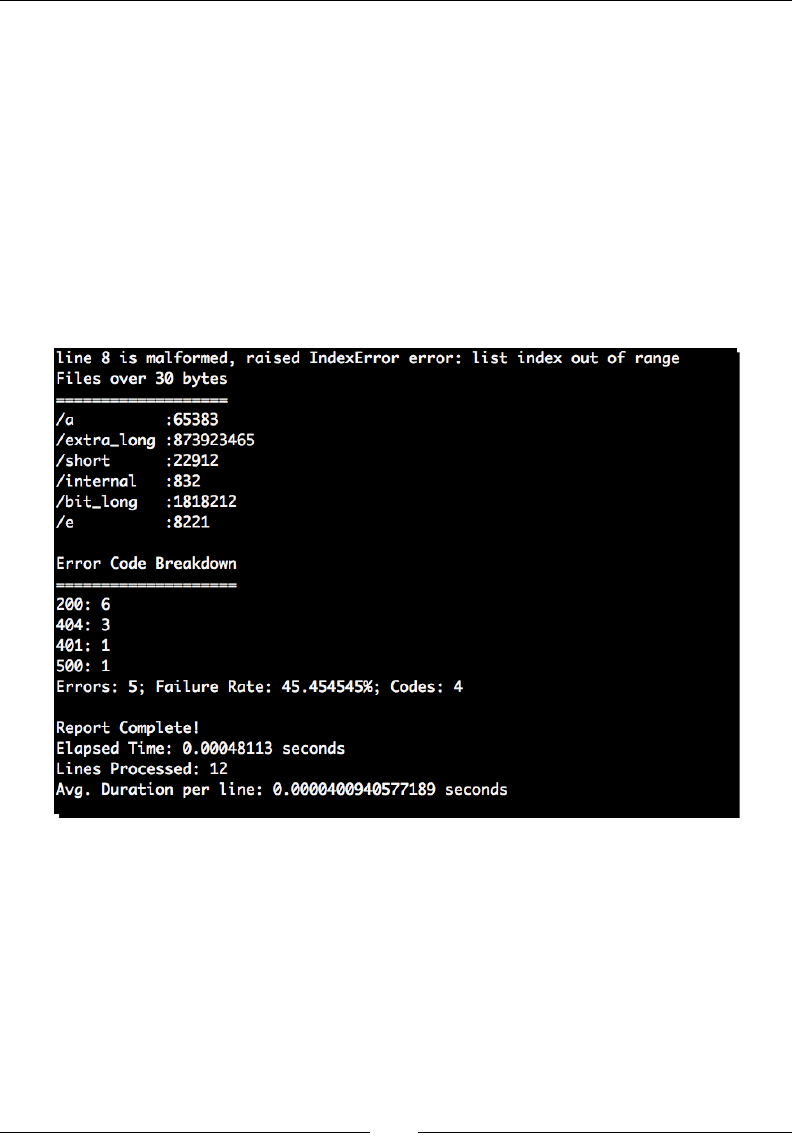

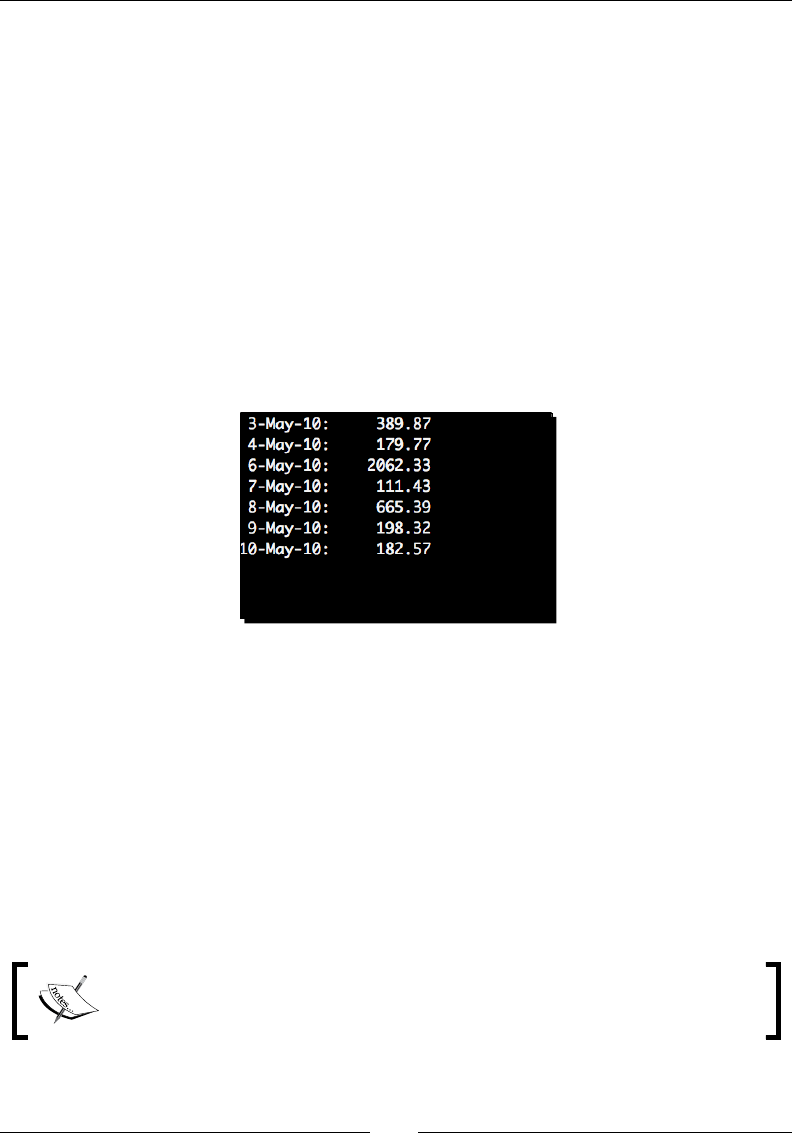

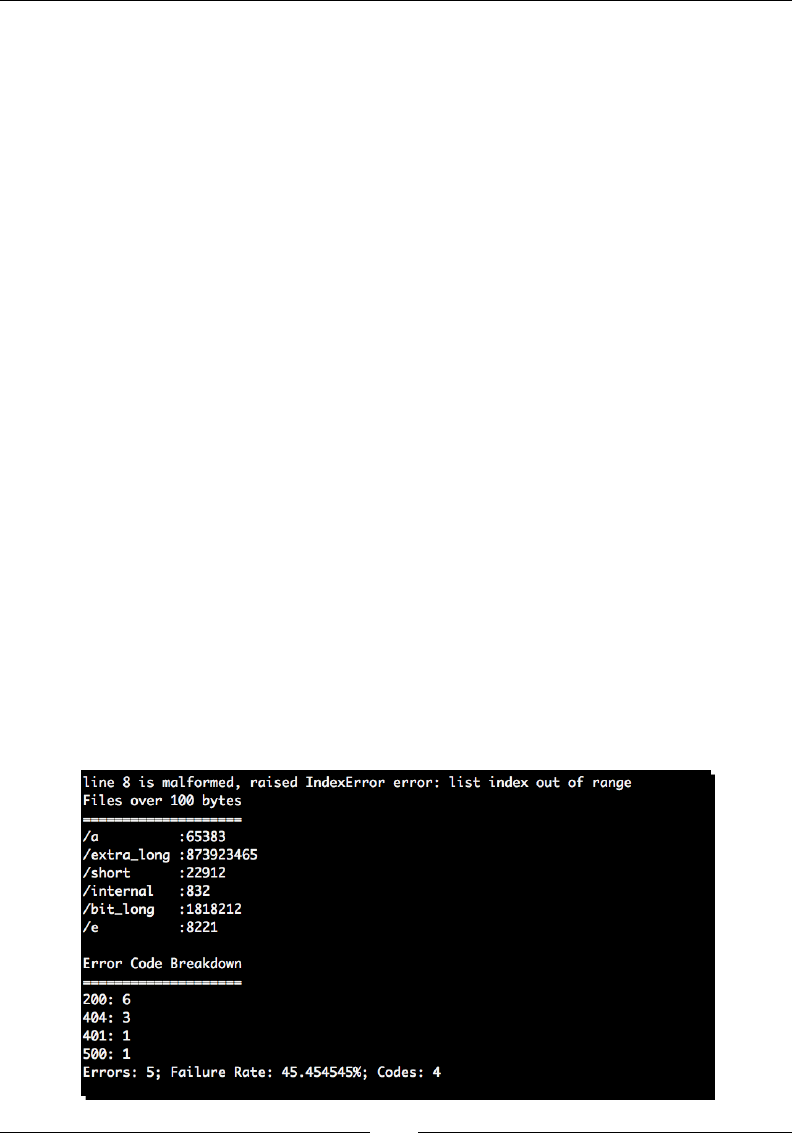

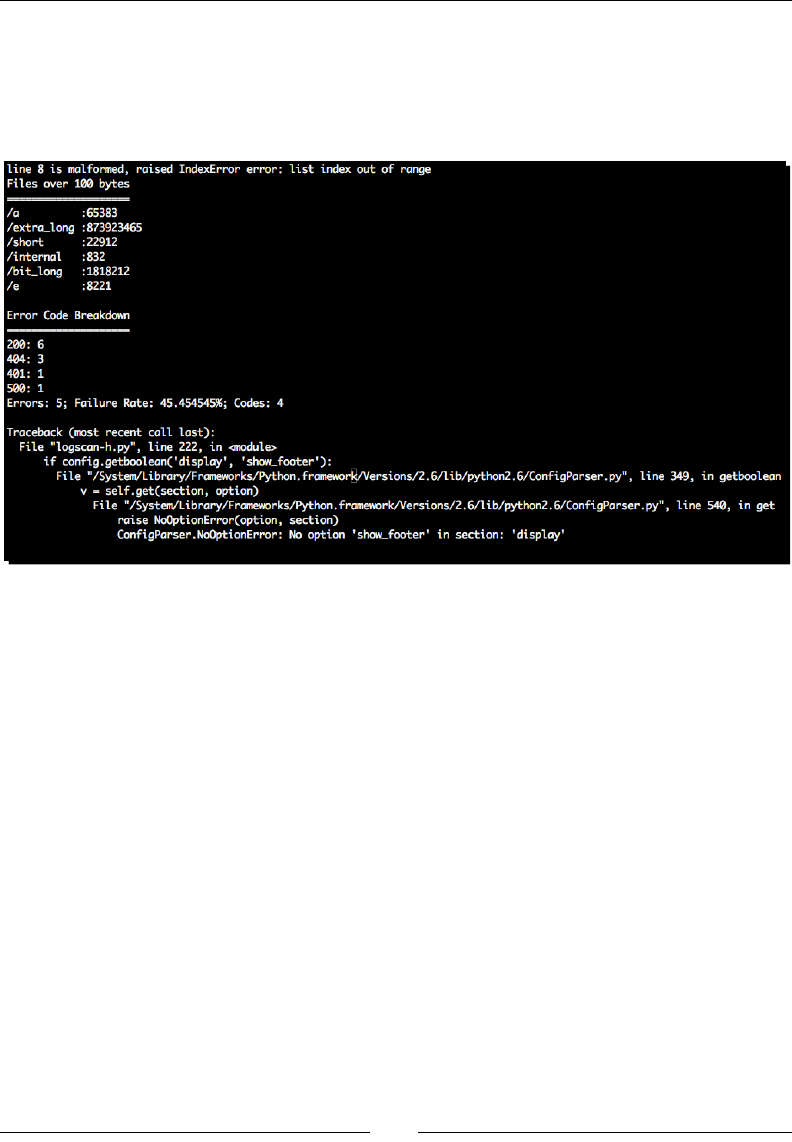

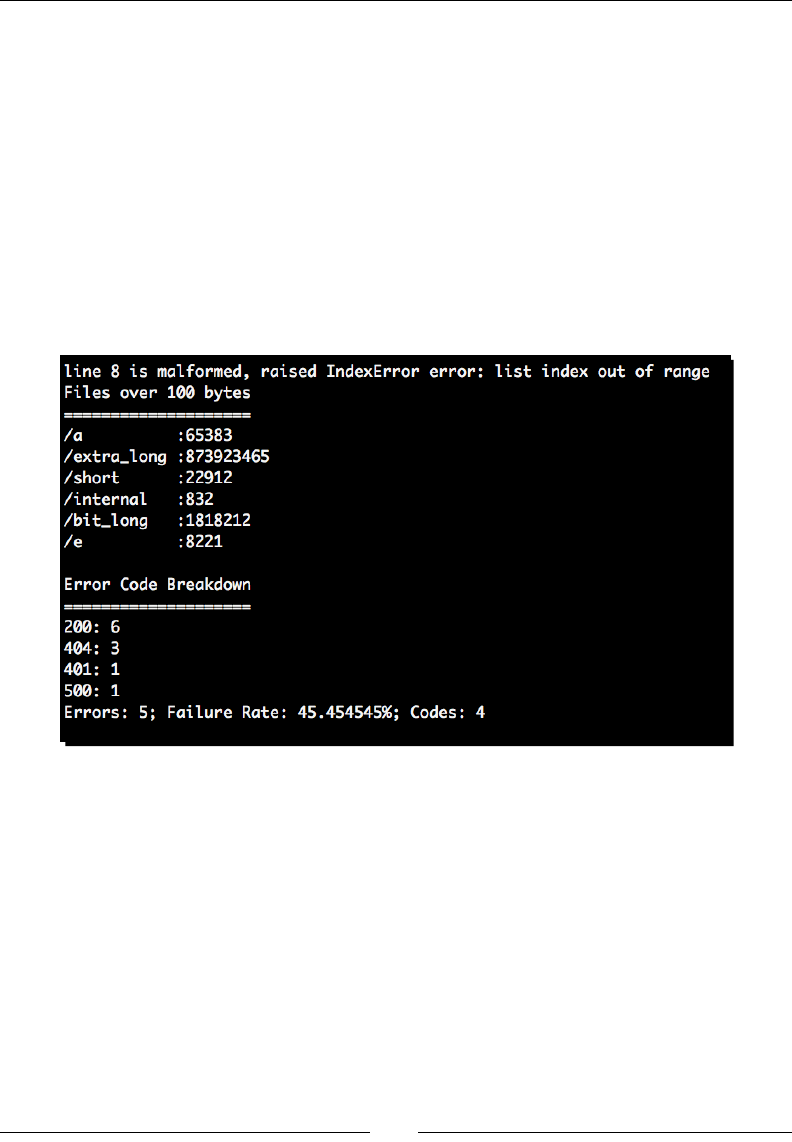

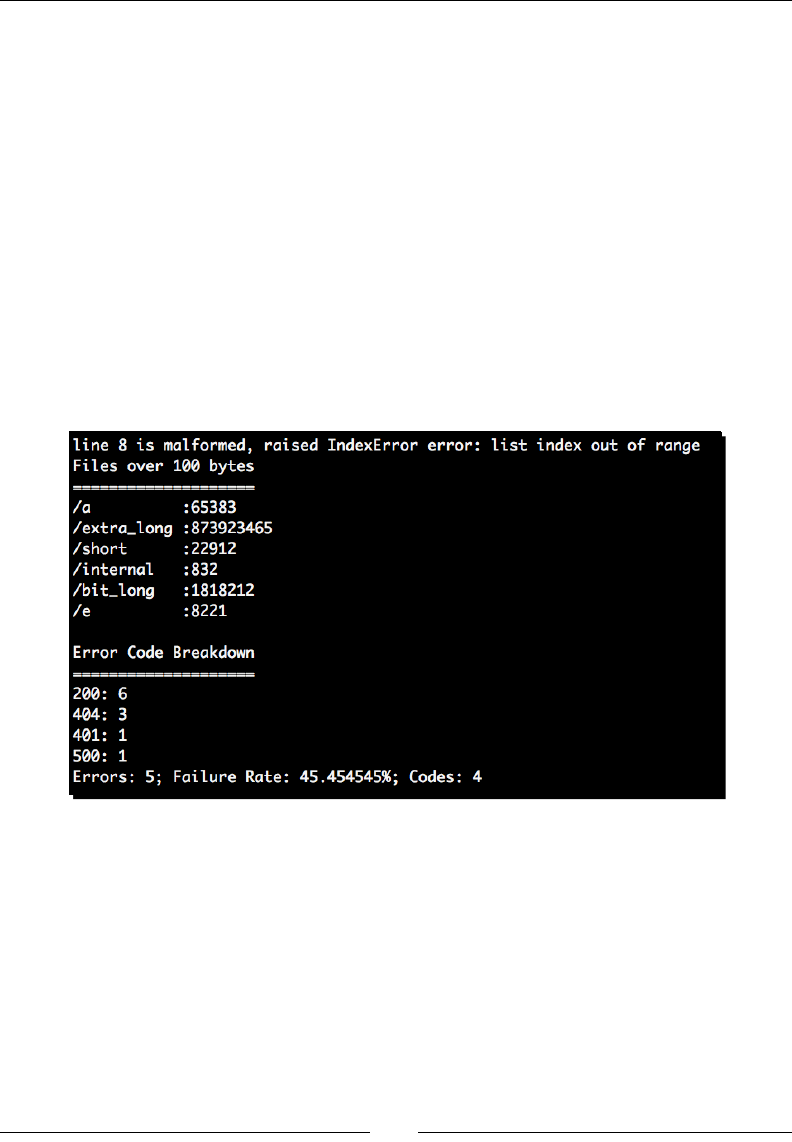

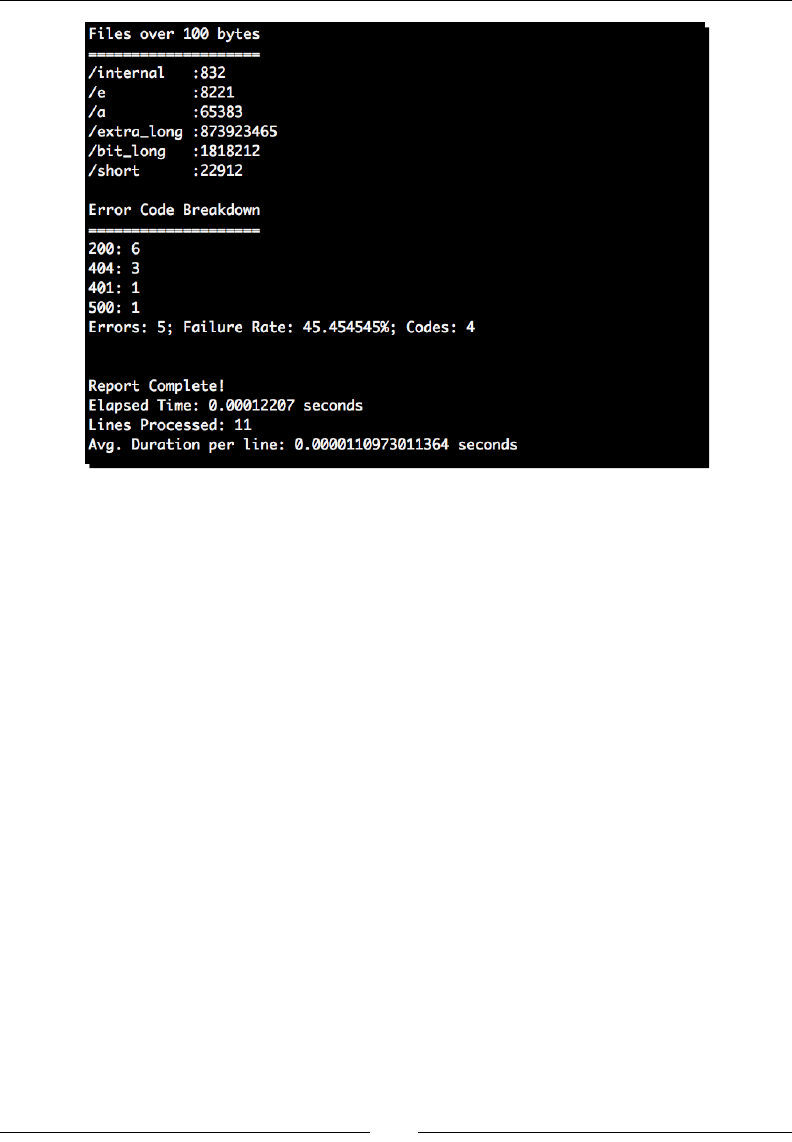

Time for action – generating transfer statistics

Now, let's start our processor. Inially, we'll build enough funconality to scan our logle

as read via standard input and report les served over a given size. System administrators

may nd ulies such as this useful when aempng to track down abusive users. It's also

generally a good idea to iteravely add funconality to an applicaon in development.

1. First, step into the virtual environment created in Chapter 1, Geng Started and

acvate it so that all of our work is isolated locally. Only the UNIX method is shown

here.

$ cd text_processing/

$ . bin/activate

2. Create an empty Python le and name it logscan.py. Enter the following code:

#!/usr/bin/python

import sys

from optparse import OptionParser

class LogProcessor(object):

"""

Process a combined log format.

This processor handles logfiles in a combined format,

objects that act on the results are passed in to

the init method as a series of methods.

"""

def __init__(self, call_chain=None):

"""

Setup parser.

Save the call chain. Each time we process a log,

we'll run the list of callbacks with the processed

log results.

"""

if call_chain is None: