Python Multimedia: Beginner's Guide (2010)


Page Count: 292

- Cover

- Copyright

- Credits

- About the Author

- About the Reviewers

- Table of Contents

- Preface

- Chapter 1: Python and Multimedia

- Chapter 2: Working with Images

- Installation prerequisites

- Reading and writing images

- Time for action – image file converter

- Time for action – creating a new image containing some text

- Time for action – reading images from archives

- Basic image manipulations

- Time for action – resizing

- Time for action – rotating

- Time for action – flipping

- Time for action – capture screenshots at intervals

- Time for action – cropping an image

- Time for action – pasting: mirror the smiley face!

- Project: Thumbnail Maker

- Time for action – play with Thumbnail Maker application

- Time for action – generating the UI code

- Time for action – connecting the widgets

- Time for action – developing image processing code

- Summary

- Chapter 3: Enhancing Images

- Installation and download prerequisites

- Adjusting brightness and contrast

- Time for action – adjusting brightness and contrast

- Tweaking colors

- Time for action – swap colors within an image!

- Time for action – change the color of a flower

- Blending

- Time for action – blending two images

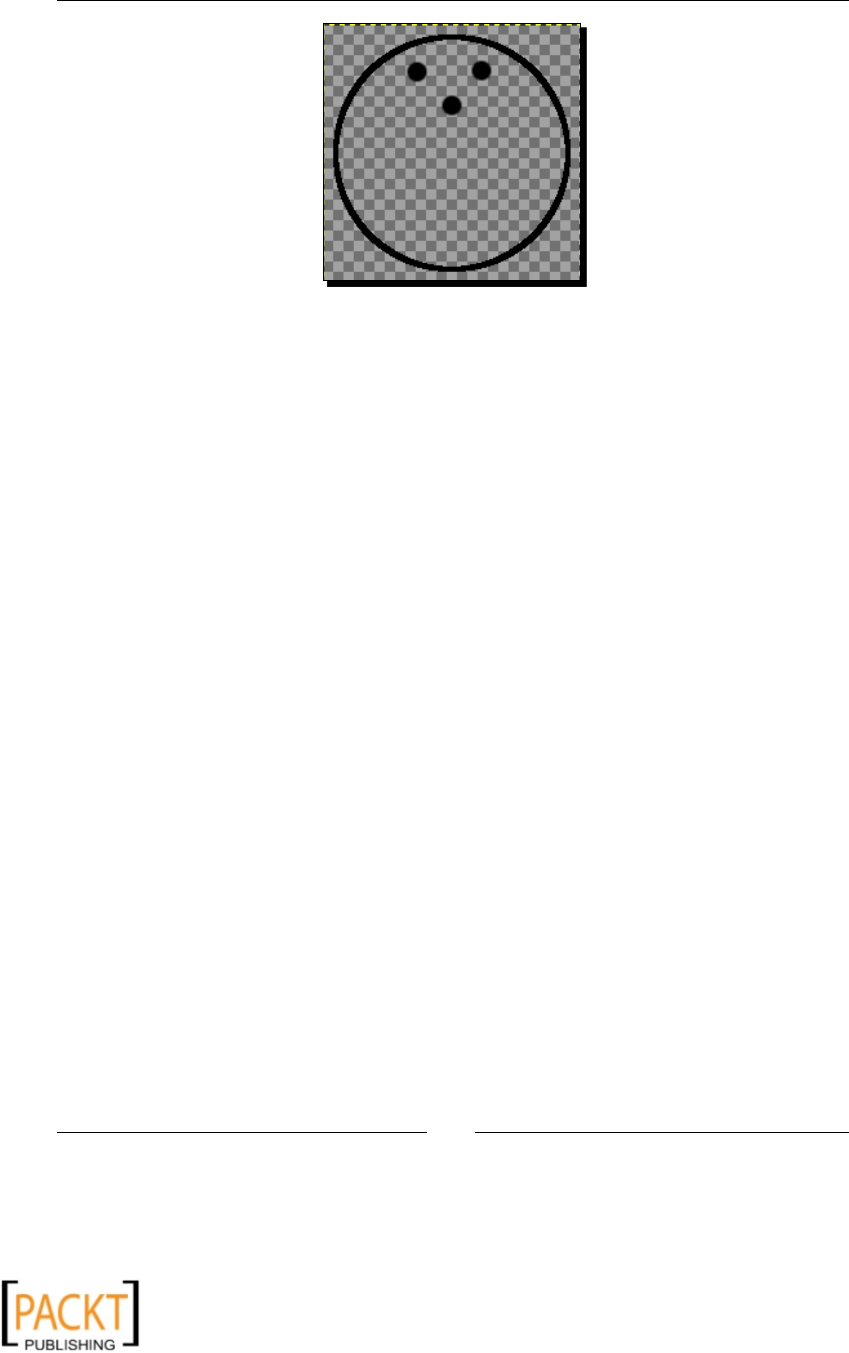

- Creating transparent images

- Time for action – create transparency

- Making composites with image mask

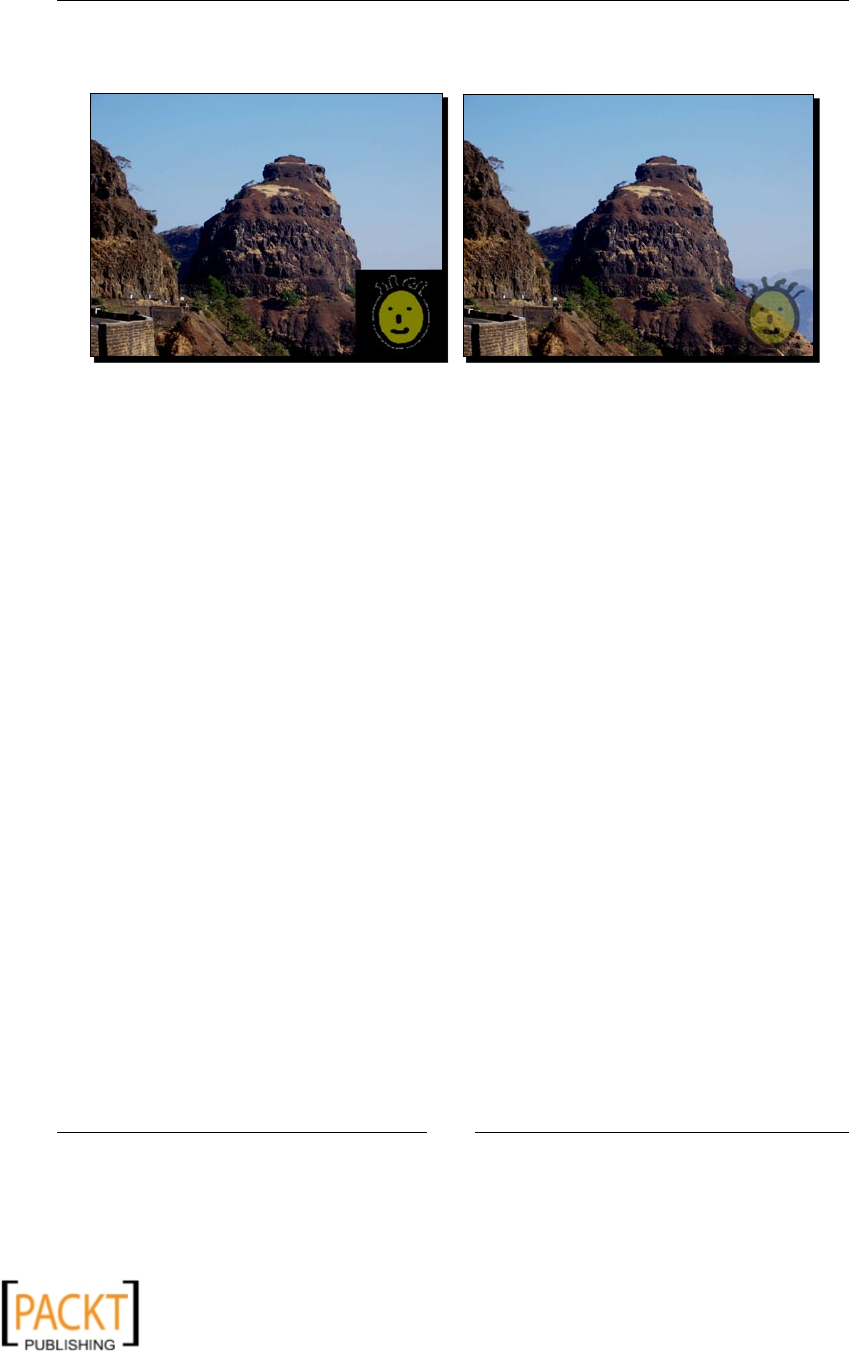

- Time for action – making composites with image mask

- Project: Watermark Maker Tool

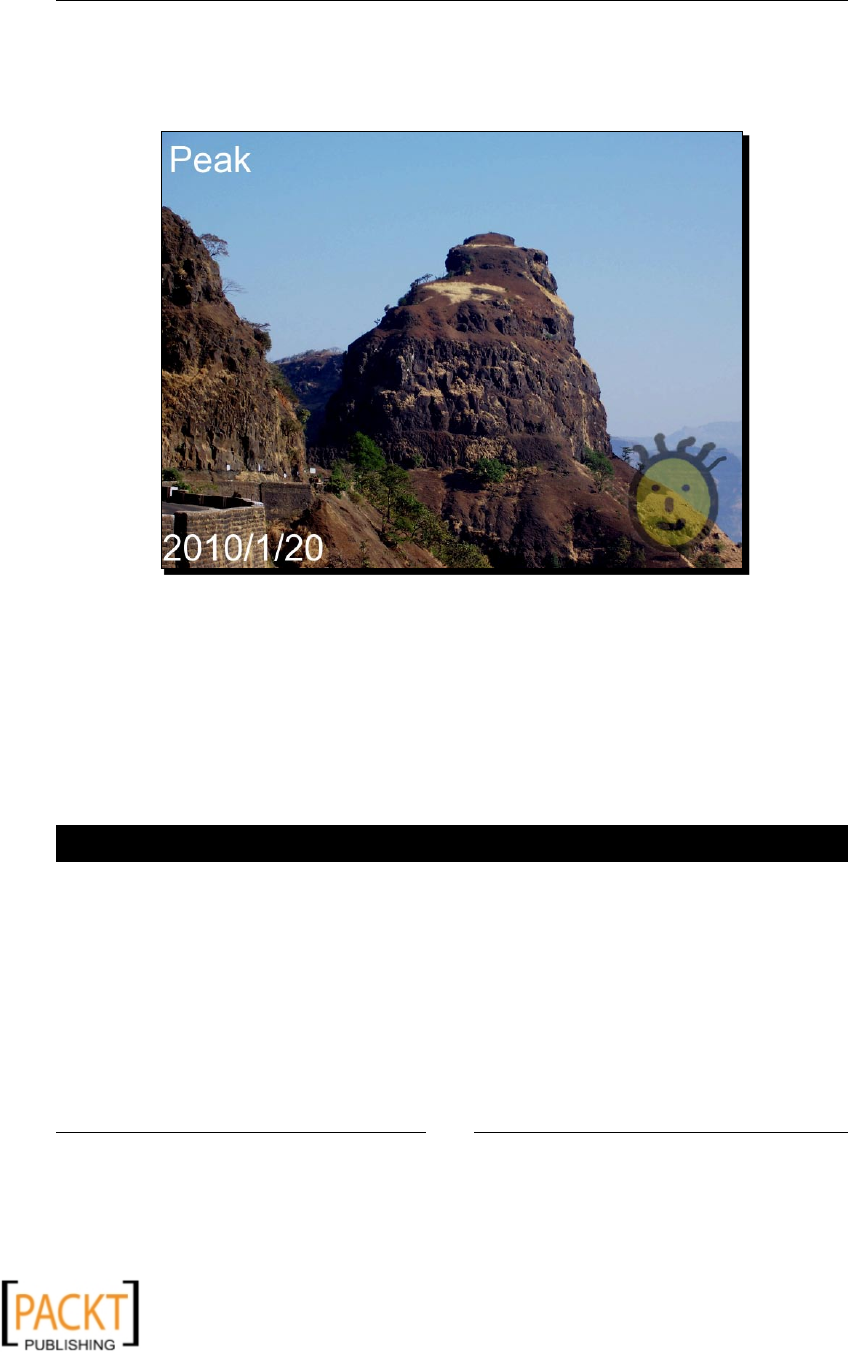

- Time for action – Watermark Maker Tool

- Applying image filters

- Time for action – smoothing an image

- Time for action – detecting and enhancing edges

- Time for action – embossing

- Adding a border

- Time for action – enclosing a picture in a photoframe

- Summary

- Chapter 4: Fun with Animations

- Installation prerequisites

- A primer on Pyglet

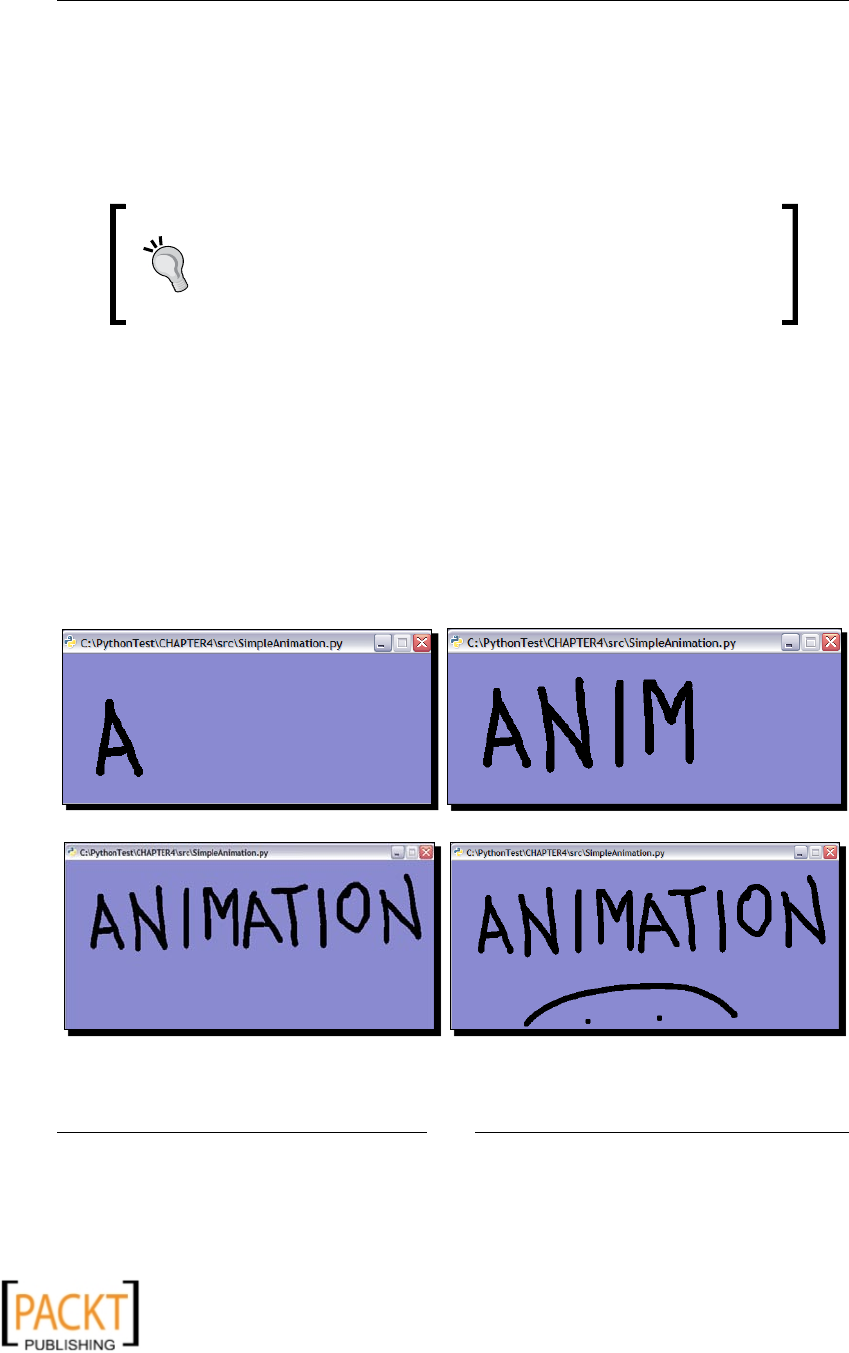

- Animations with Pyglet

- Time for action – viewing an existing animation

- Time for action – animation using a sequence of images

- Time for action – bouncing ball animation

- Time for action – a simple bowling animation

- Time for action – raindrops animation

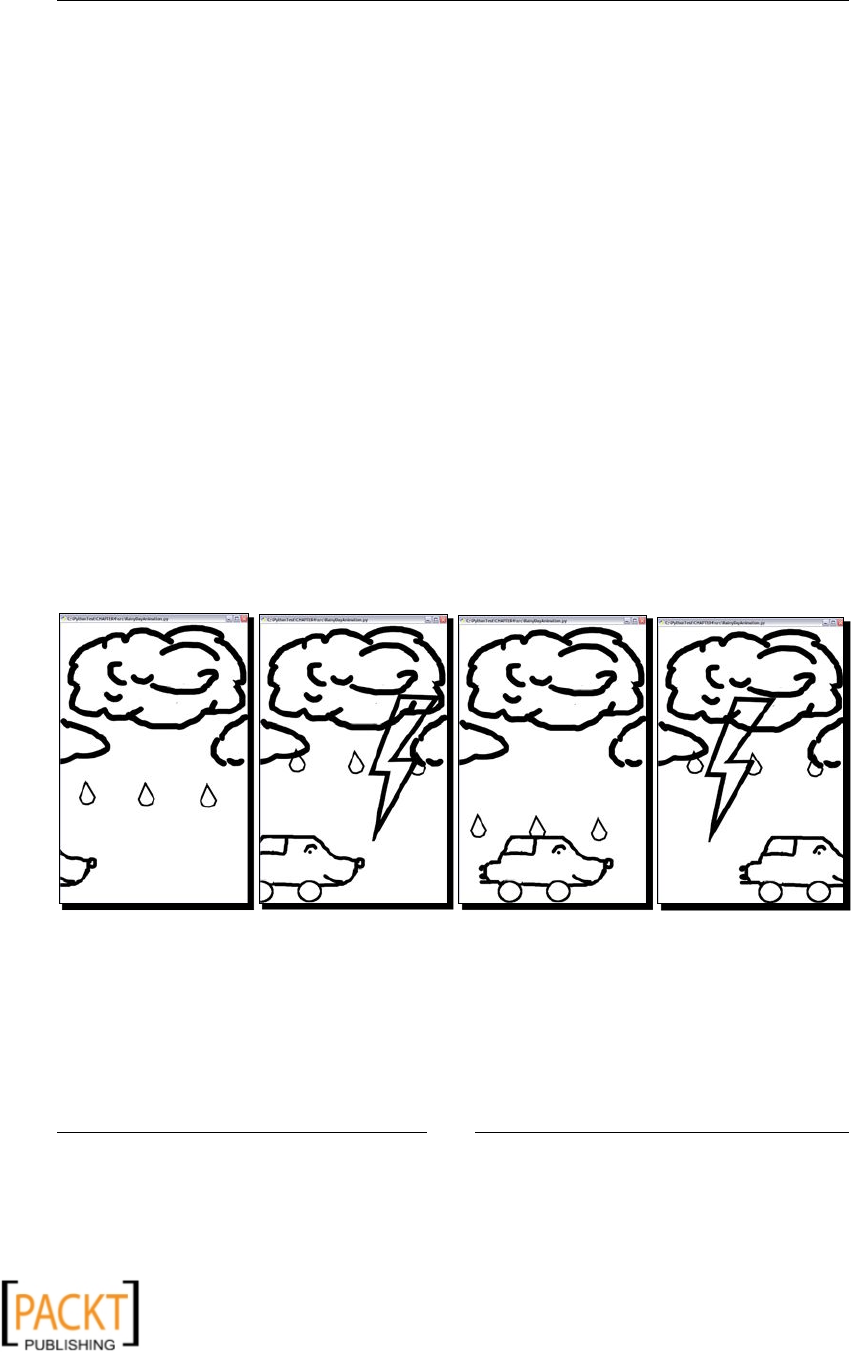

- Project: drive on a rainy day!

- Time for action – drive on a rainy day!

- Summary

- Chapter 5: Working with Audios

- Installation prerequisites

- A primer on GStreamer

- Playing music

- Time for action – playing an audio: method 1

- Time for action – playing an audio: method 2

- Converting audio file format

- Time for action – audio file format converter

- Extracting part of an audio

- Time for action – MP3 cutter!

- Recording

- Time for action – recording

- Summary

- Chapter 6: Audio Controls and Effects

- Controlling playback

- Time for action – pause and resume a playing audio stream

- Time for action – MP3 cutter from basic principles

- Adjusting volume

- Time for action – adjusting volume

- Audio effects

- Time for action – fading effects

- Time for action – adding echo effect

- Project: combining audio clips

- Time for action – creating custom audio by combining clips

- Audio mixing

- Time for action – mixing audio tracks

- Visualizing an audio track

- Time for action – audio visualizer

- Summary

- Chapter 7: Working with Videos

- Installation prerequisites

- Playing a video

- Time for action – video player!

- Video format conversion

- Time for action – video format converter

- Video manipulations and effects

- Time for action – resize a video

- Time for action – crop a video

- Adding text and time on a video stream

- Time for action – overlay text on a video track

- Separating audio and video tracks

- Time for action – audio and video tracks

- Mixing audio and video tracks

- Time for action – audio/video track mixer

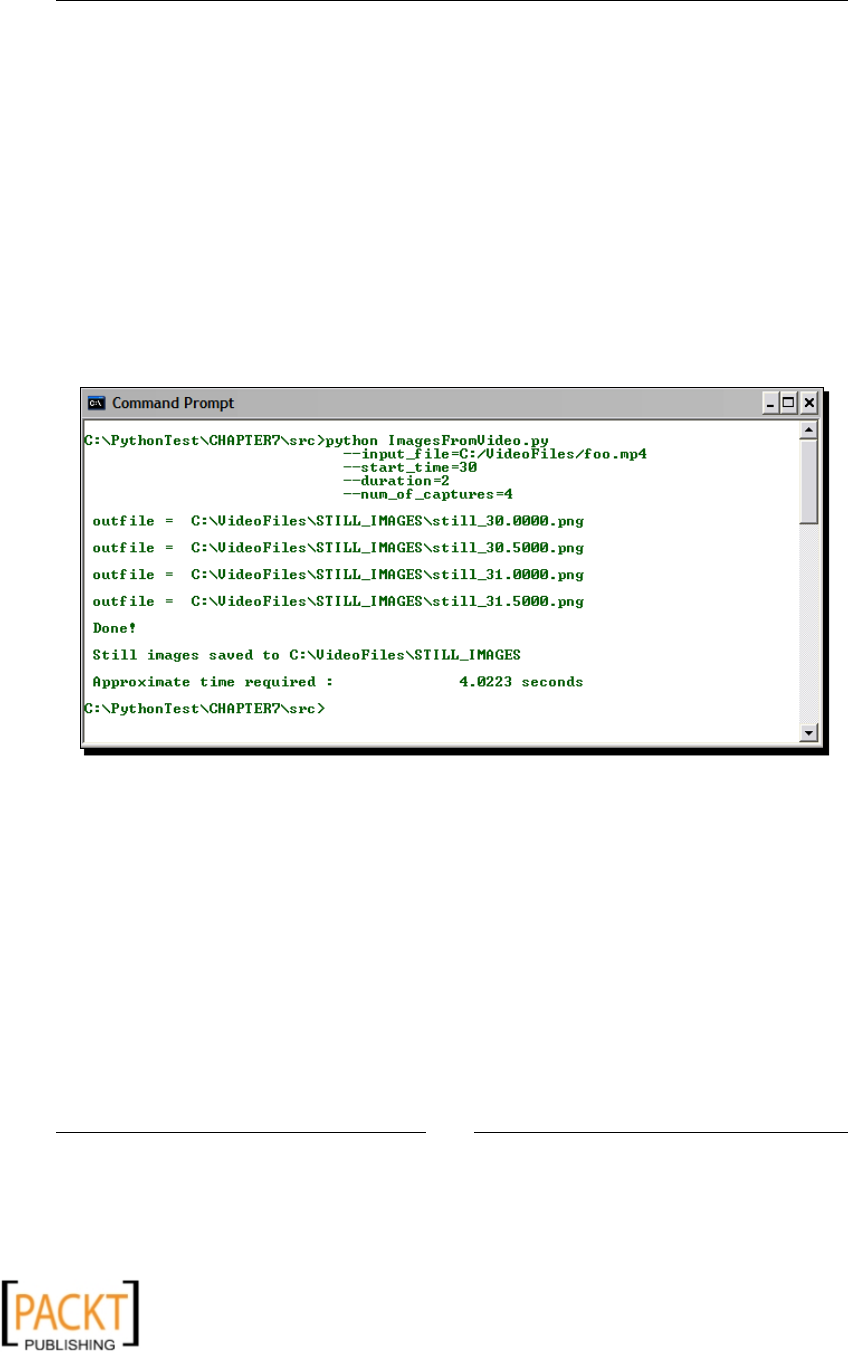

- Saving video frames as images

- Time for action – saving video frames as images

- Summary

- Chapter 8: GUI-based Media Players Using QT Phonon

- Installation prerequisites

- Introduction to QT Phonon

- Project: GUI-based music player

- Time for action – generating the UI code

- Time for action – connecting the widgets

- Time for action – developing the audio player code

- Project: GUI-based video player

- Time for action – generating the UI code

- Time for action – developing the video player code

- Summary

- Index

Python Multimedia

Beginner's Guide

Learn how to develop multimedia applications using Python with this practical step-by-step guide

Ninad Sathaye

BIRMINGHAM - MUMBAI


Python Multimedia

Beginner's Guide

Copyright © 2010 Packt Publishing

All rights reserved. No part of this book may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, without the prior written permission of the publisher, except in the case of brief quotations embedded in critical articles or reviews.

Every effort has been made in the preparation of this book to ensure the accuracy of the information presented. However, the information contained in this book is sold without warranty, either express or implied. Neither the author, nor Packt Publishing, and its dealers and distributors will be held liable for any damages caused or alleged to be caused directly or indirectly by this book.

Packt Publishing has endeavored to provide trademark information about all of the companies and products mentioned in this book by the appropriate use of capitals. However, Packt Publishing cannot guarantee the accuracy of this information.

First published: August 2010

Production Reference: 1060810

Published by Packt Publishing Ltd.

32 Lincoln Road

Olton

Birmingham, B27 6PA, UK.

ISBN 978-1-849510-16-5

www.packtpub.com

Cover Image by Ed Maclean (edmaclean@gmail.com)


Credits

Author

Ninad Sathaye

Reviewers

Maurice HT Ling

Daniel Waterworth

Sivan Greenberg

Acquisition Editor

Steven Wilding

Development Editor

Eleanor Duffy

Technical Editor

Charumathi Sankaran

Indexers

Hemangini Bari

Tejal Daruwale

Editorial Team Leader

Aanchal Kumar

Project Team Leader

Priya Mukherji

Project Coordinator

Prasad Rai

Proofreader

Lynda Sliwoski

Graphics

Geetanjali Sawant

Production Coordinators

Shantanu Zagade

Aparna Bhagat

Cover Work

Aparna Bhagat


About the Author

Ninad Sathaye (ninad.consult@gmail.com) has more than six years of experience in software design and development. He is currently working at IBM, India. Prior to working for IBM, he was a Systems Programmer at Nanorex Inc. based in Michigan, U.S.A. At Nanorex, he was involved in the development of an open source, interactive 3D CAD software, written in Python and C. This is where he developed a passion for the Python programming language. Besides programming, his favorite hobbies are reading and traveling.

Ninad holds a Master of Science degree in Mechanical Engineering from Kansas State University, U.S.A.

I would like to thank everyone at Packt Publishing, especially Eleanor Duffy, Steven Wilding, Charu Sankaran, and Prasad Rai for their co-operation. This book wouldn't have been possible without your help. I also want to thank all the technical reviewers of the book for their valuable suggestions.

I wish to express my sincere thanks and appreciation to Rahul Nayak, my colleague, who provided many professional quality photographs for this book. I owe a special thanks to Mark Sims and Bruce Smith, my former colleagues, for introducing me to the amusing world of Python. Finally, this book wouldn't have been possible without the encouragement and support of my whole family. I owe my loving thanks to my wife, Arati, for providing valuable feedback. She also happens to be the photographer of several of the pictures used throughout this book.


About the Reviewers

Maurice HT Ling completed his Ph.D. in Bioinformatics and B.Sc (Hons) in Molecular and Cell Biology, where he worked on microarray analysis and text mining for protein-protein interactions. He is currently an Honorary Fellow at The University of Melbourne and a Lecturer at Singapore Polytechnic where he lectures on microbiology and computational biology.

Maurice holds several Chief Editorships including The Python Papers, iConcept Journal of Computational and Mathematical Biology, and Methods and Cases in Computational, Mathematical, and Statistical Biology. In his free time, Maurice likes to train in the gym, read, and enjoy a good cup of coffee. He is also a Senior Fellow of the International Fitness Association, U.S.A.

Daniel Waterworth is a Python fanatic who can often be found behind his keyboard. He is always beavering away on a new project having learned to program from a young age. He is a keen blogger and his ideas can be found at http://active-thought.com.

Sivan Greenberg is a Forum Nokia Champion, with almost ten years of multi-disciplinary IT experience and a sharp eye for quality. He started with open source technologies and the Debian project back in 2002. Joining Ubuntu development two years later, Sivan also contributed to various other open source projects, such as Plone and Nokia's Maemo. He has experience with quality assurance, application and web development, UNIX system administration (including some rather exotic IBM platforms), and GUI programming and documentation. He's been using Python for all of his development needs for the last five years. He is currently involved with Nokia's MeeGo project and works with CouchDB and Python in his day job for a living.

I thank my unique and amazing family, specifically my Dad Eric for igniting the spark of curiosity from day zero.


To my daughter, Anvita


Table of Contents

Preface

Chapter 1: Python and Multimedia

Multimedia

Multimedia processing

Image processing

Audio and video processing

Compression

Mixing

Editing

Animations

Built-in multimedia support

winsound

audioop

wave

External multimedia libraries and frameworks

Python Imaging Library

PyMedia

GStreamer

Pyglet

PyGame

Sprite

Display

Surface

Draw

Event

Image

Music

Time for action – a simple application using PyGame

QT Phonon

Other multimedia libraries

x

x

Table of Contents

[ ii ]

Snack Sound Toolkit 19

PyAudiere 20

Summary 20

Chapter 2: Working with Images 21

Installaon prerequisites 21

Python 21

Windows plaorm 22

Other plaorms 22

Python Imaging Library (PIL) 22

Windows plaorm 22

Other plaorms 22

PyQt4 23

Windows plaorm 23

Other plaorms 24

Summary of installaon prerequisites 24

Reading and wring images 25

Time for acon – image le converter 25

Creang an image from scratch 28

Time for acon – creang a new image containing some text 28

Reading images from archive 29

Time for acon – reading images from archives 29

Basic image manipulaons 30

Resizing 30

Time for acon – resizing 30

Rotang 33

Time for acon – rotang 34

Flipping 35

Time for acon – ipping 35

Capturing screenshots 36

Time for acon – capture screenshots at intervals 36

Cropping 39

Time for acon – cropping an image 39

Pasng 40

Time for acon – pasng: mirror the smiley face! 40

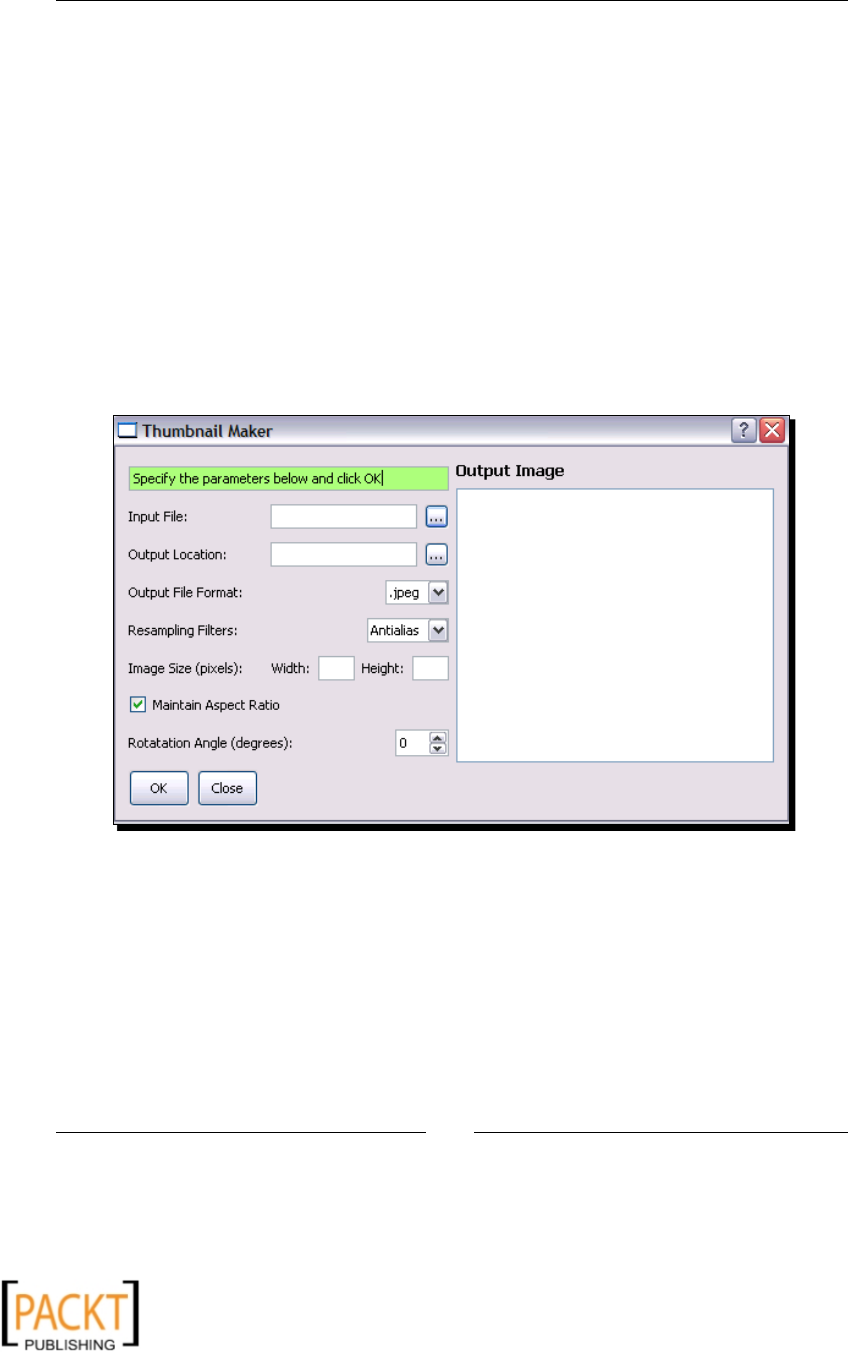

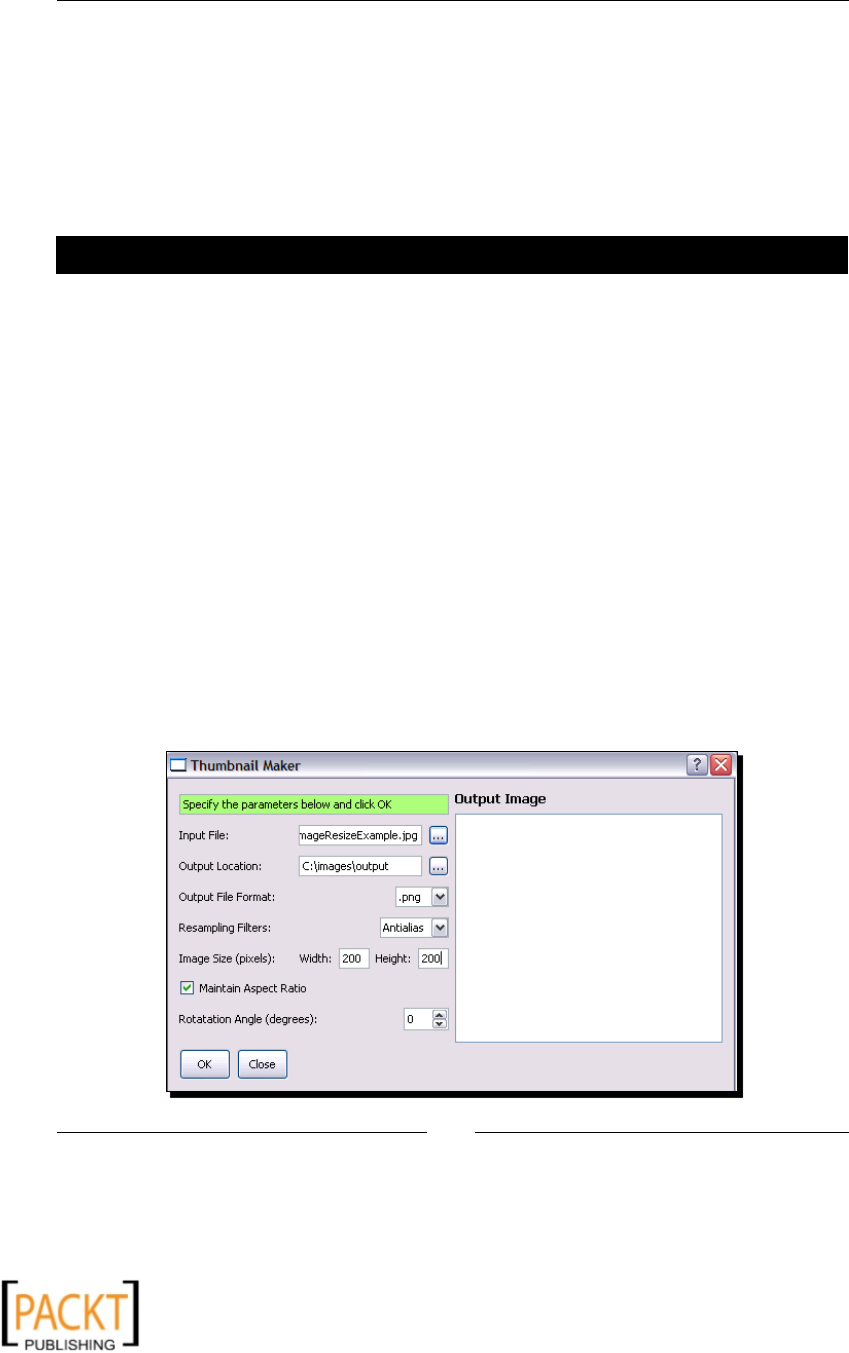

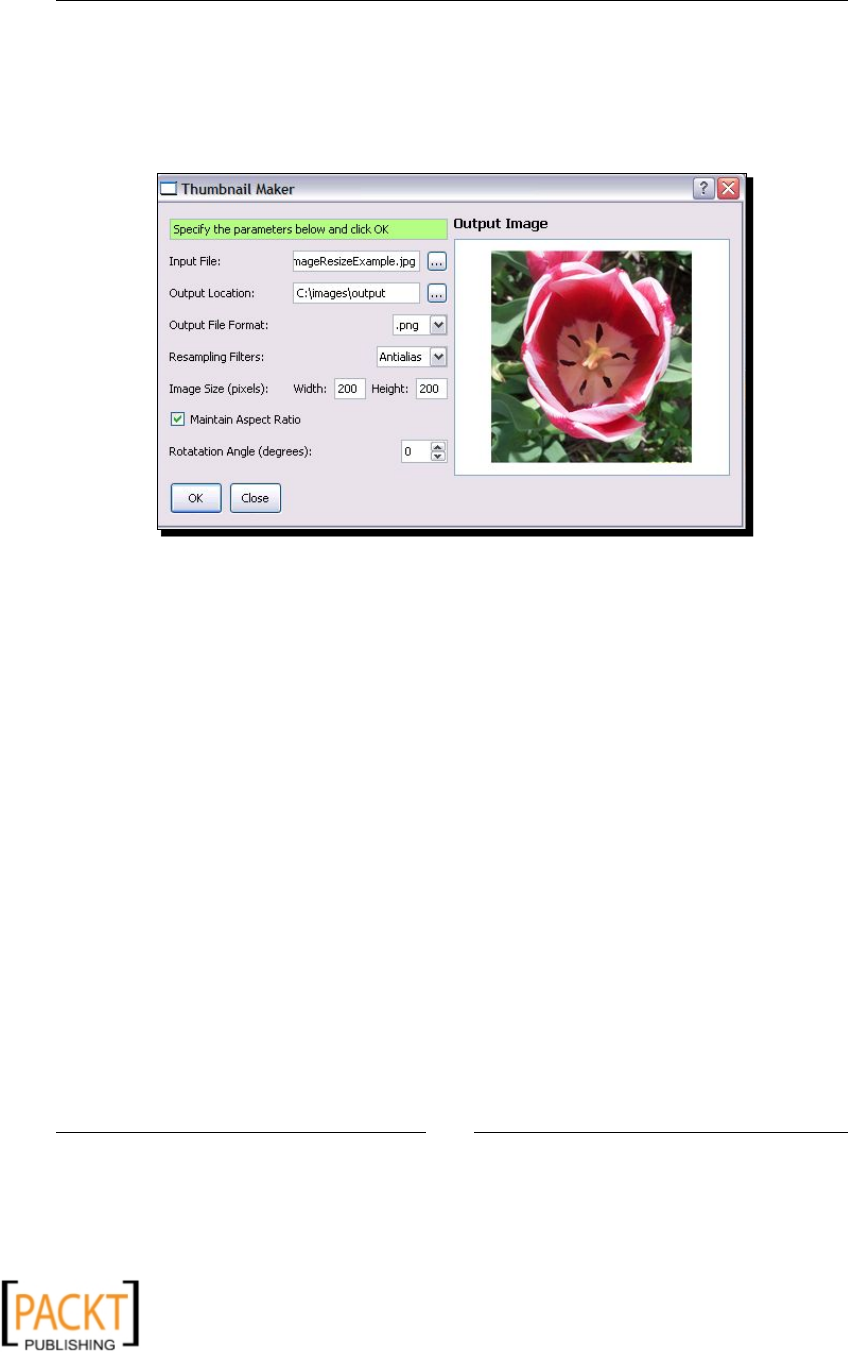

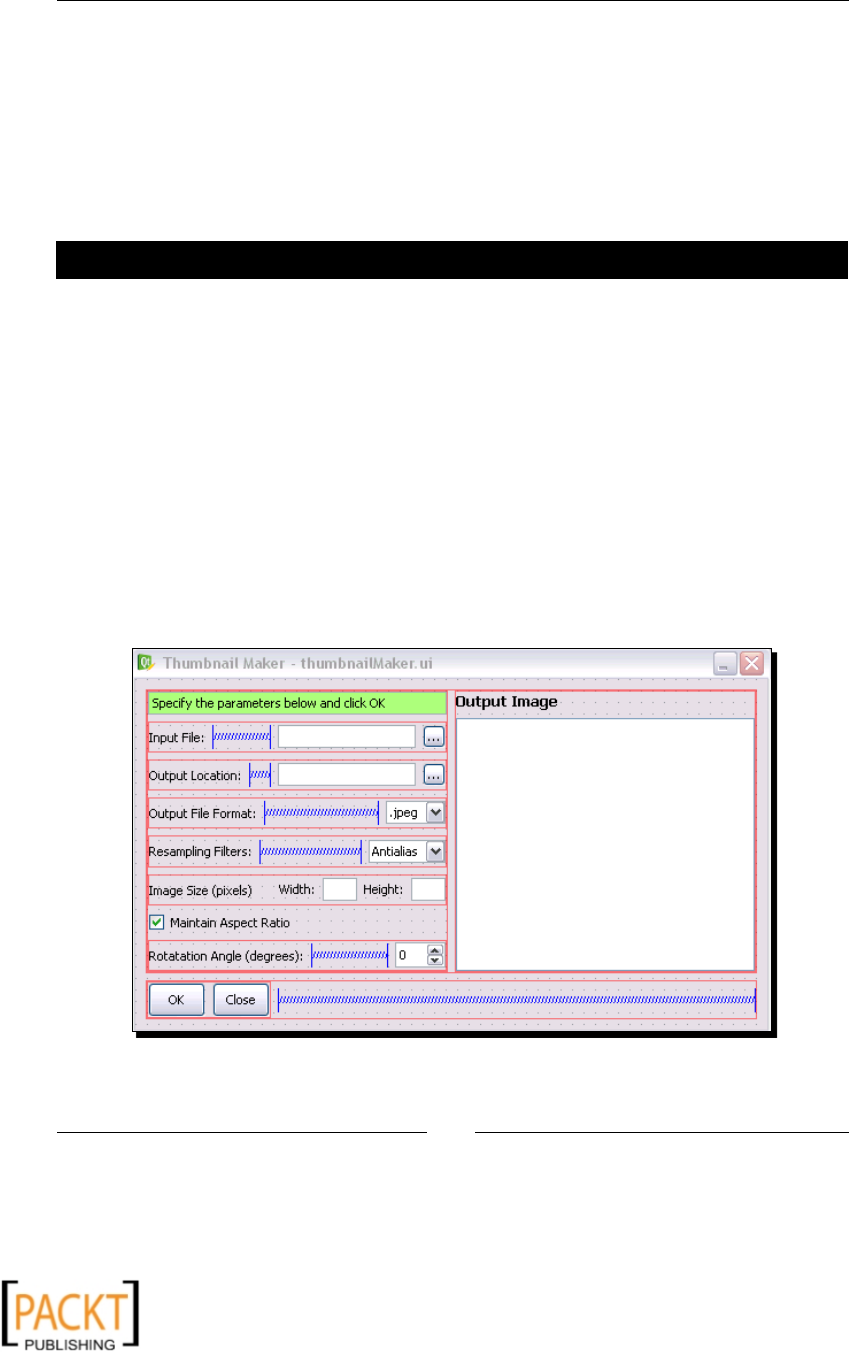

Project: Thumbnail Maker 42

Time for acon – play with Thumbnail Maker applicaon 43

Generang the UI code 45

Time for acon – generang the UI code 45

Connecng the widgets 47

Time for acon – connecng the widgets 48

Developing the image processing code 49

x

x

Table of Contents

[ iii ]

Time for acon – developing image processing code 49

Summary 53

Chapter 3: Enhancing Images 55

Installaon and download prerequisites 56

Adjusng brightness and contrast 56

Time for acon – adjusng brightness and contrast 56

Tweaking colors 59

Time for acon – swap colors within an image! 59

Changing individual image band 61

Time for acon – change the color of a ower 61

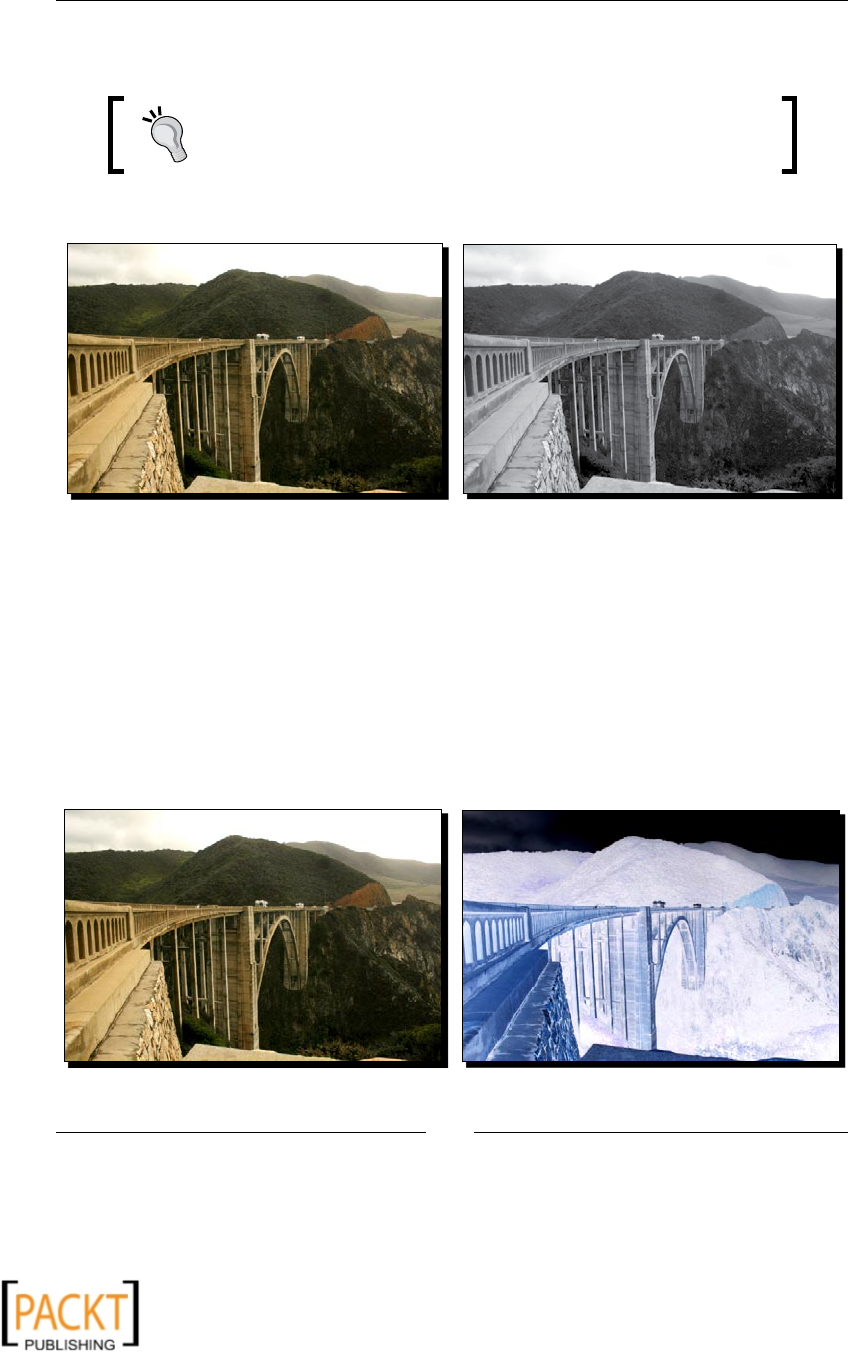

Gray scale images 63

Cook up negaves 64

Blending 65

Time for acon – blending two images 65

Creang transparent images 68

Time for acon – create transparency 68

Making composites with image mask 70

Time for acon – making composites with image mask 71

Project: Watermark Maker Tool 72

Time for acon – Watermark Maker Tool 73

Applying image lters 81

Smoothing 82

Time for acon – smoothing an image 82

Sharpening 84

Blurring 84

Edge detecon and enhancements 85

Time for acon – detecng and enhancing edges 85

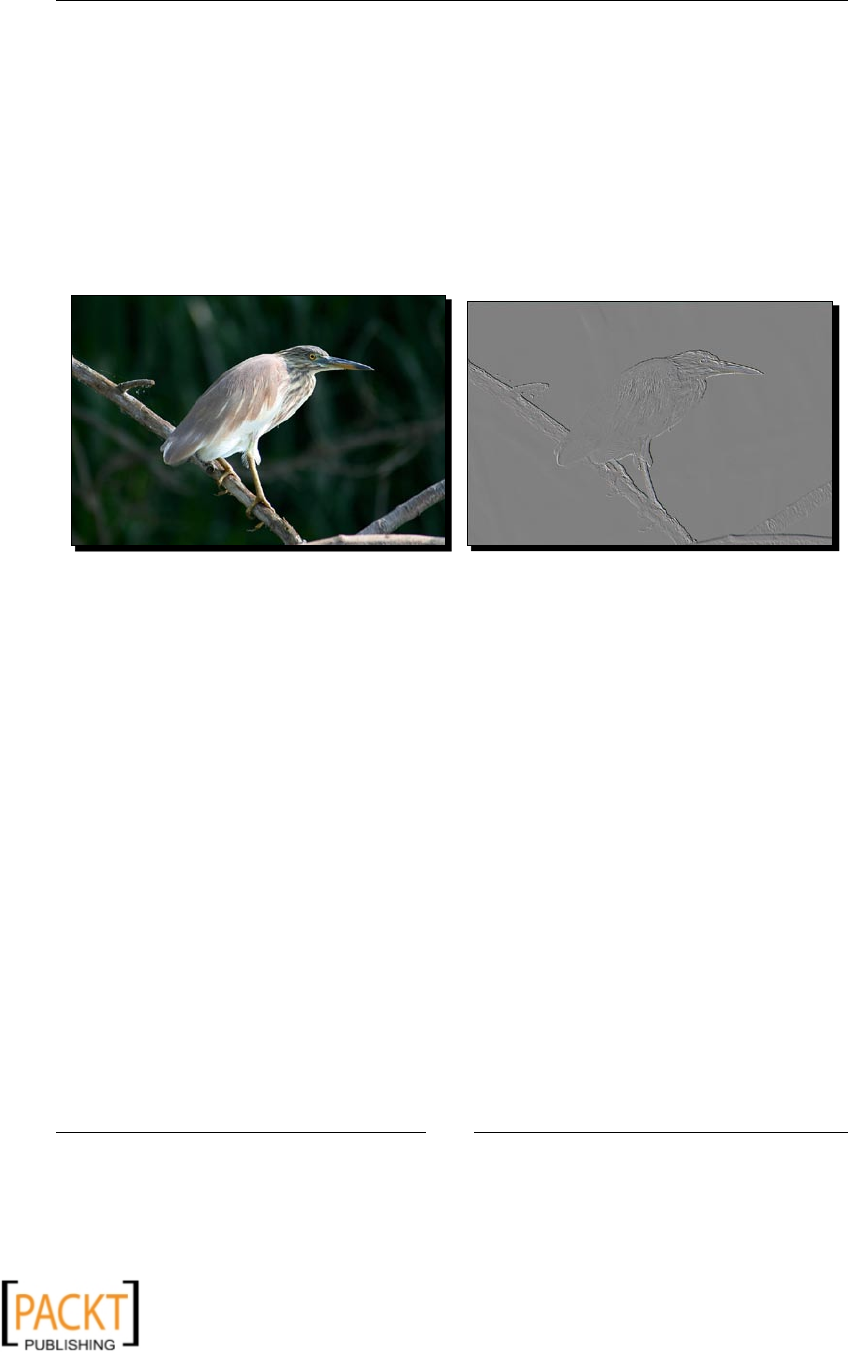

Embossing 87

Time for acon – embossing 87

Adding a border 88

Time for acon – enclosing a picture in a photoframe 89

Summary 90

Chapter 4: Fun with Animaons 91

Installaon prerequisites 92

Pyglet 92

Windows plaorm 92

Other plaorms 92

Summary of installaon prerequisites 93

Tesng the installaon 93

A primer on Pyglet 94

x

x

Table of Contents

[ iv ]

Important components 94

Window 94

Image 95

Sprite 95

Animaon 95

AnimaonFrame 95

Clock 95

Displaying an image 96

Mouse and keyboard controls 97

Adding sound eects 97

Animaons with Pyglet 97

Viewing an exisng animaon 97

Time for acon – viewing an exisng animaon 98

Animaon using a sequence of images 100

Time for acon – animaon using a sequence of images 100

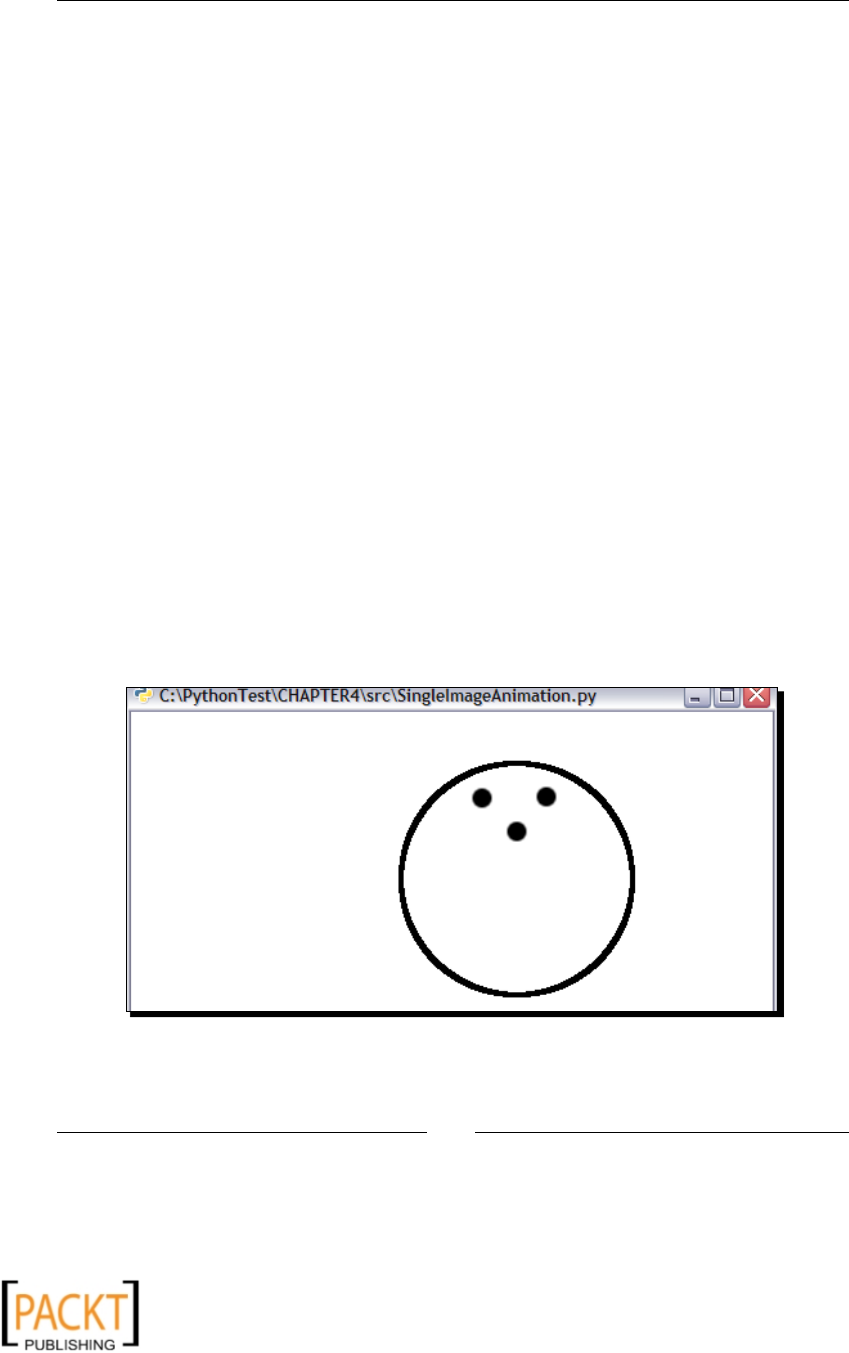

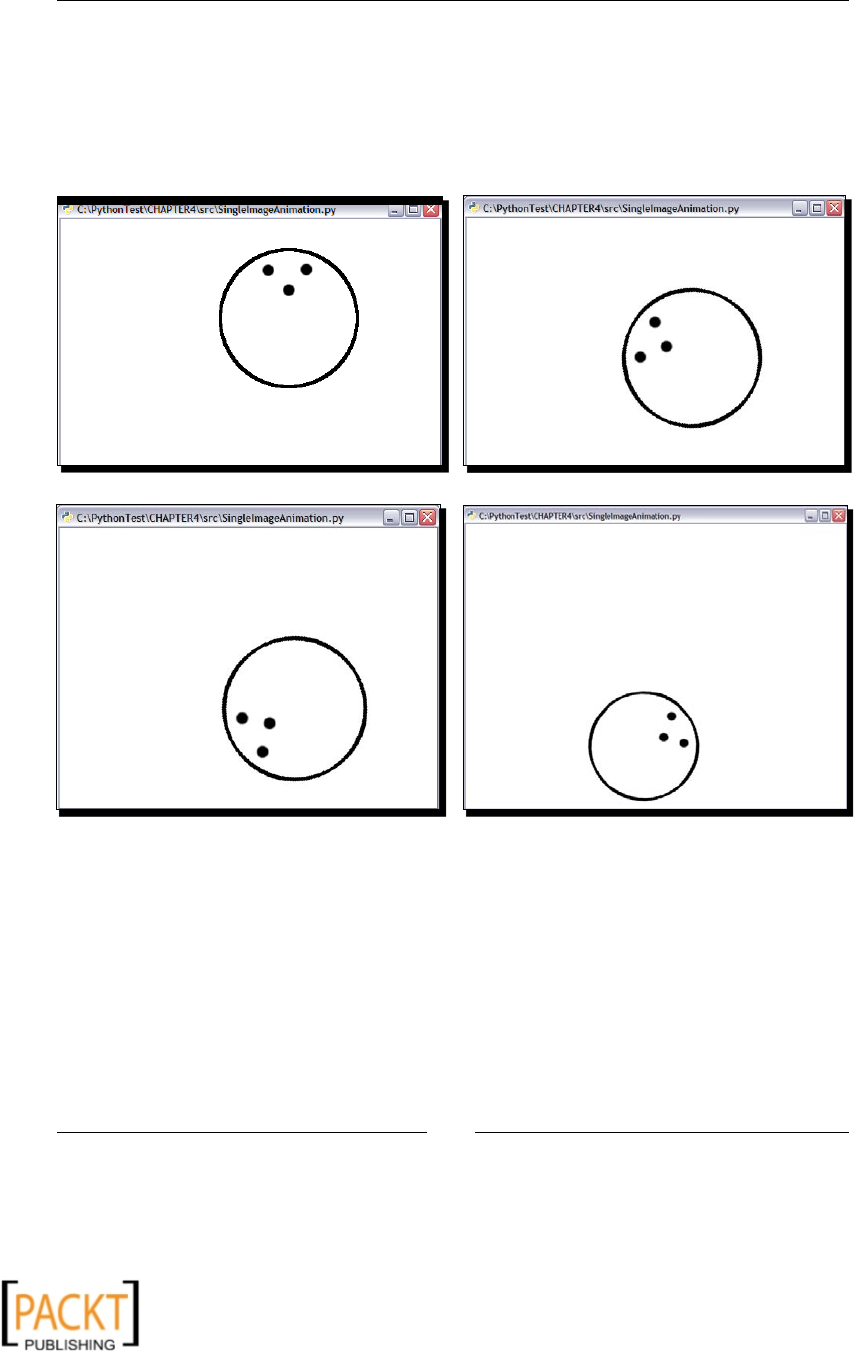

Single image animaon 102

Time for acon – bouncing ball animaon 102

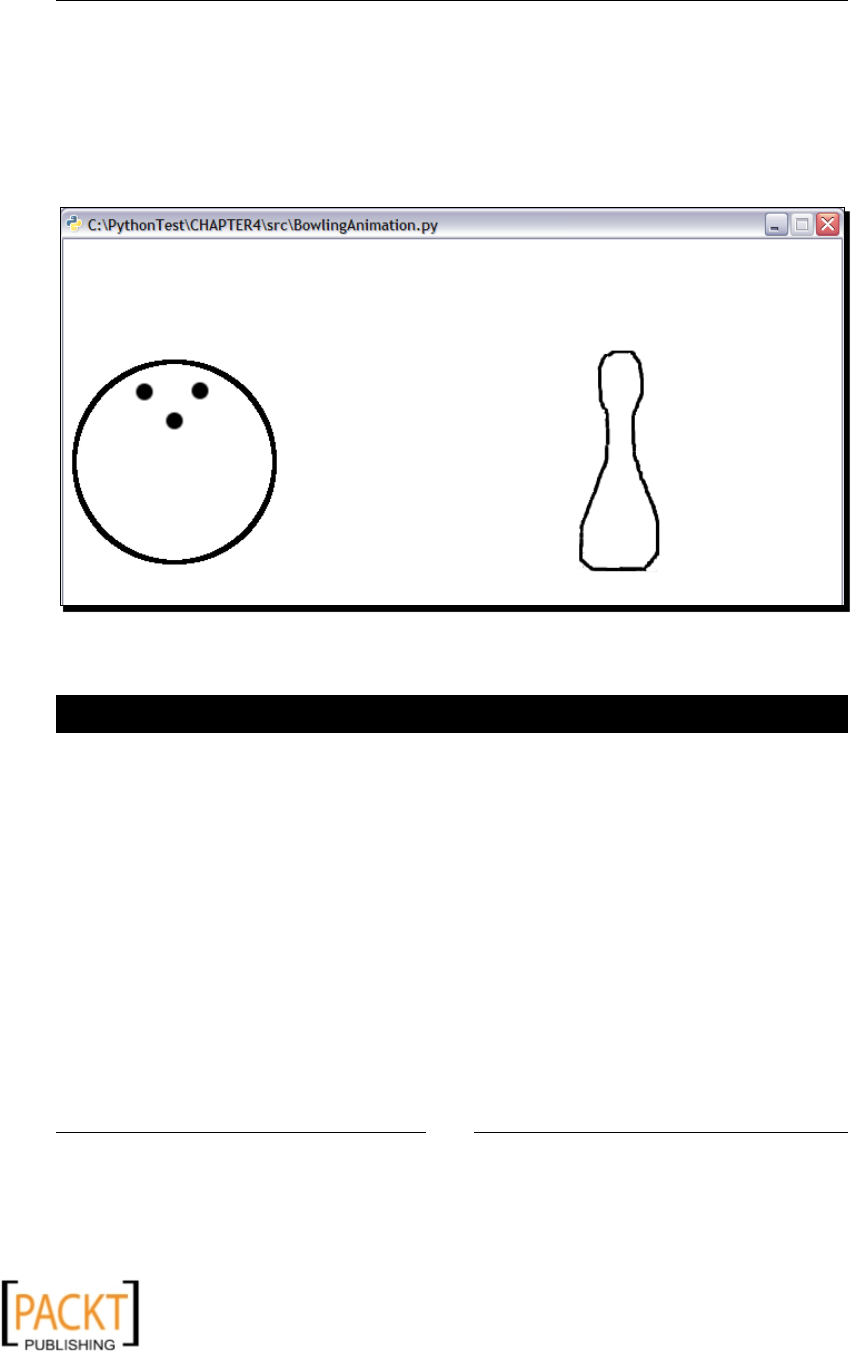

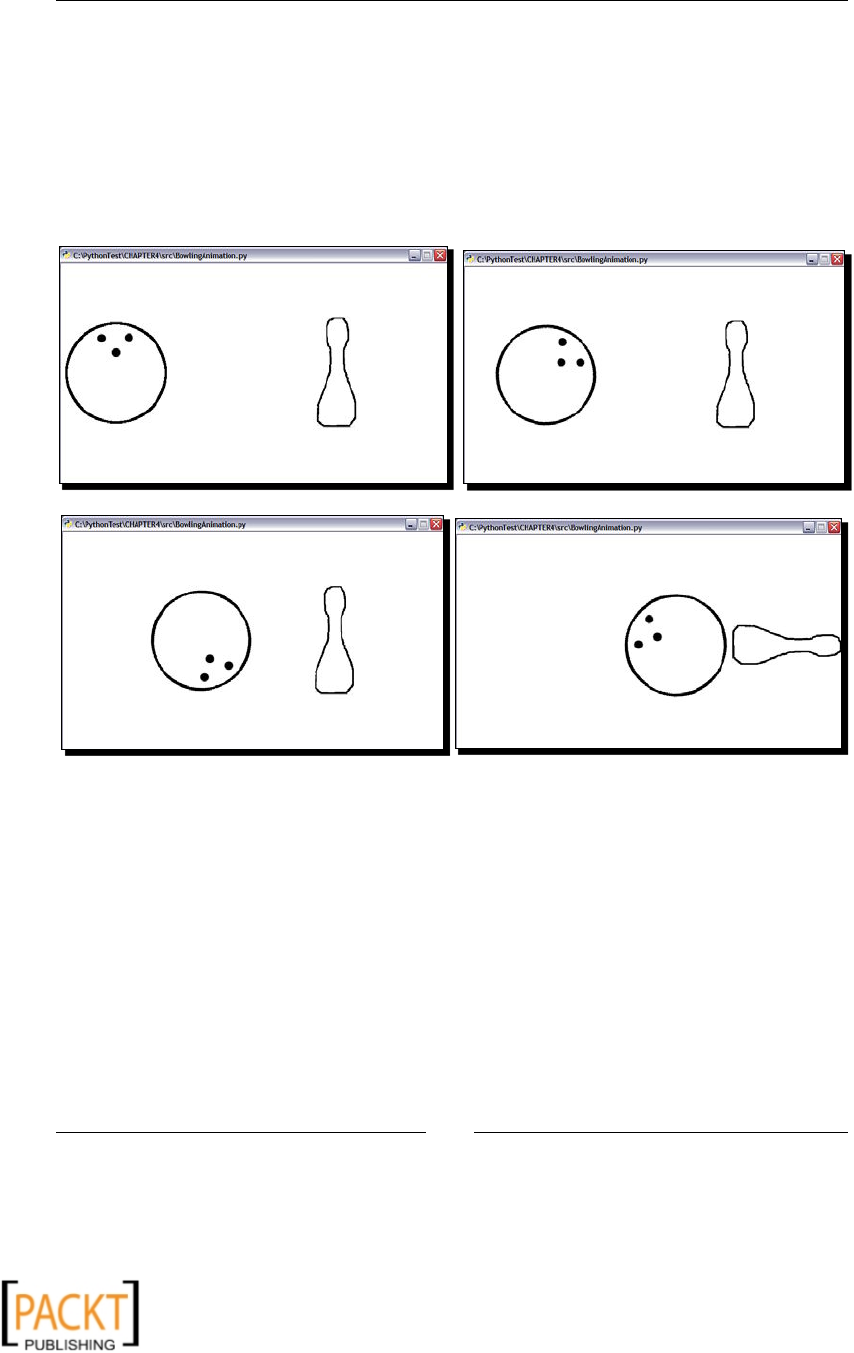

Project: a simple bowling animaon 108

Time for acon – a simple bowling animaon 108

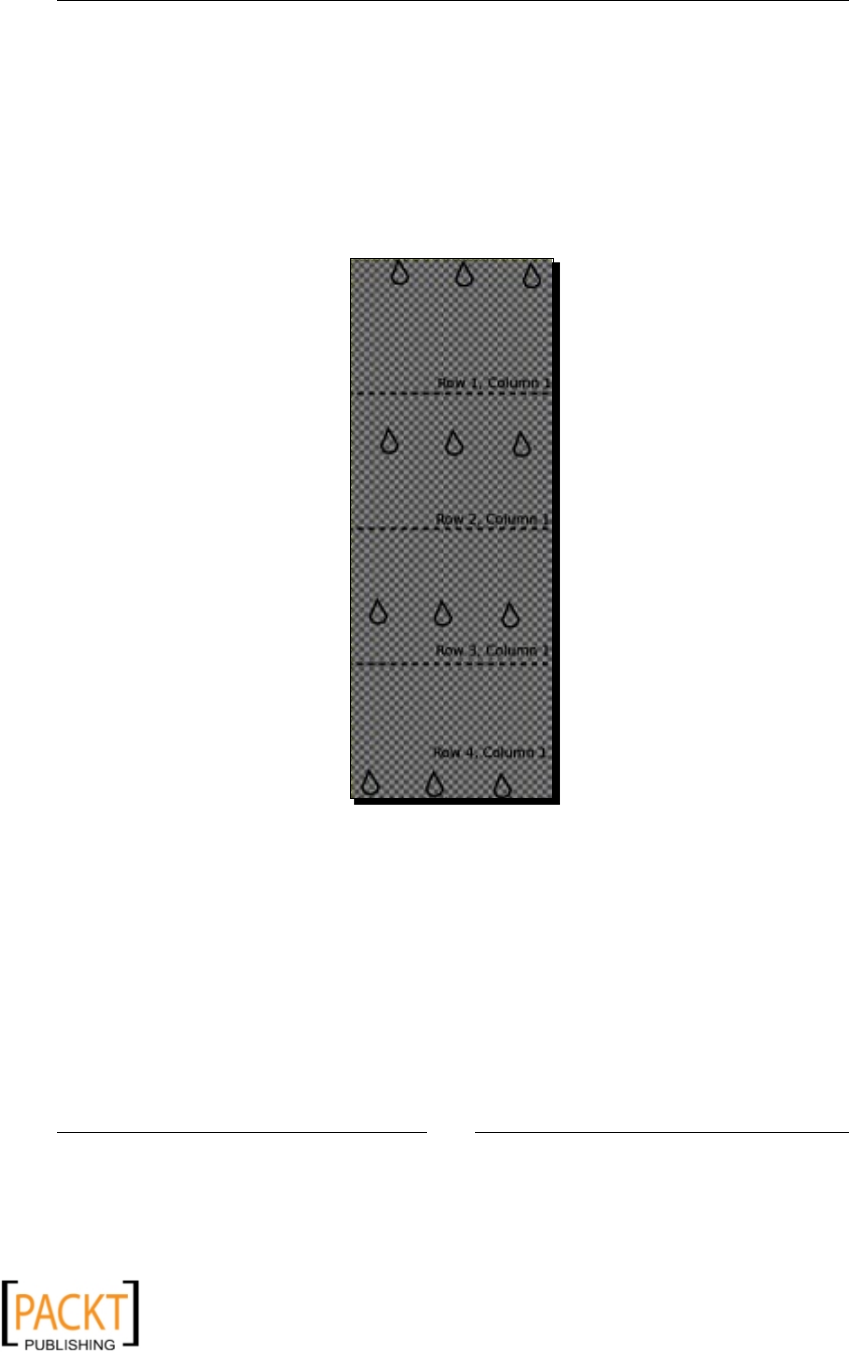

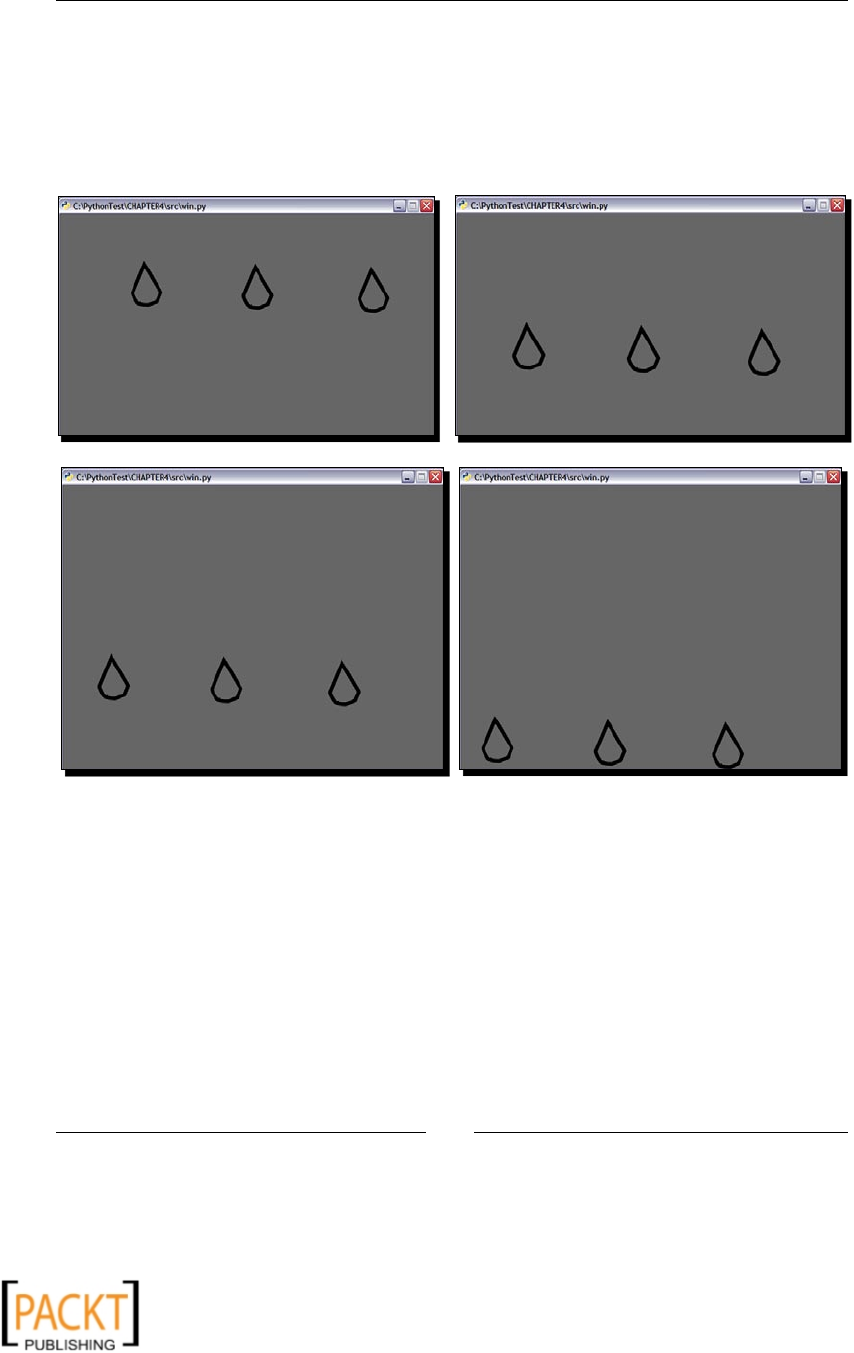

Animaons using dierent image regions 113

Time for acon – raindrops animaon 114

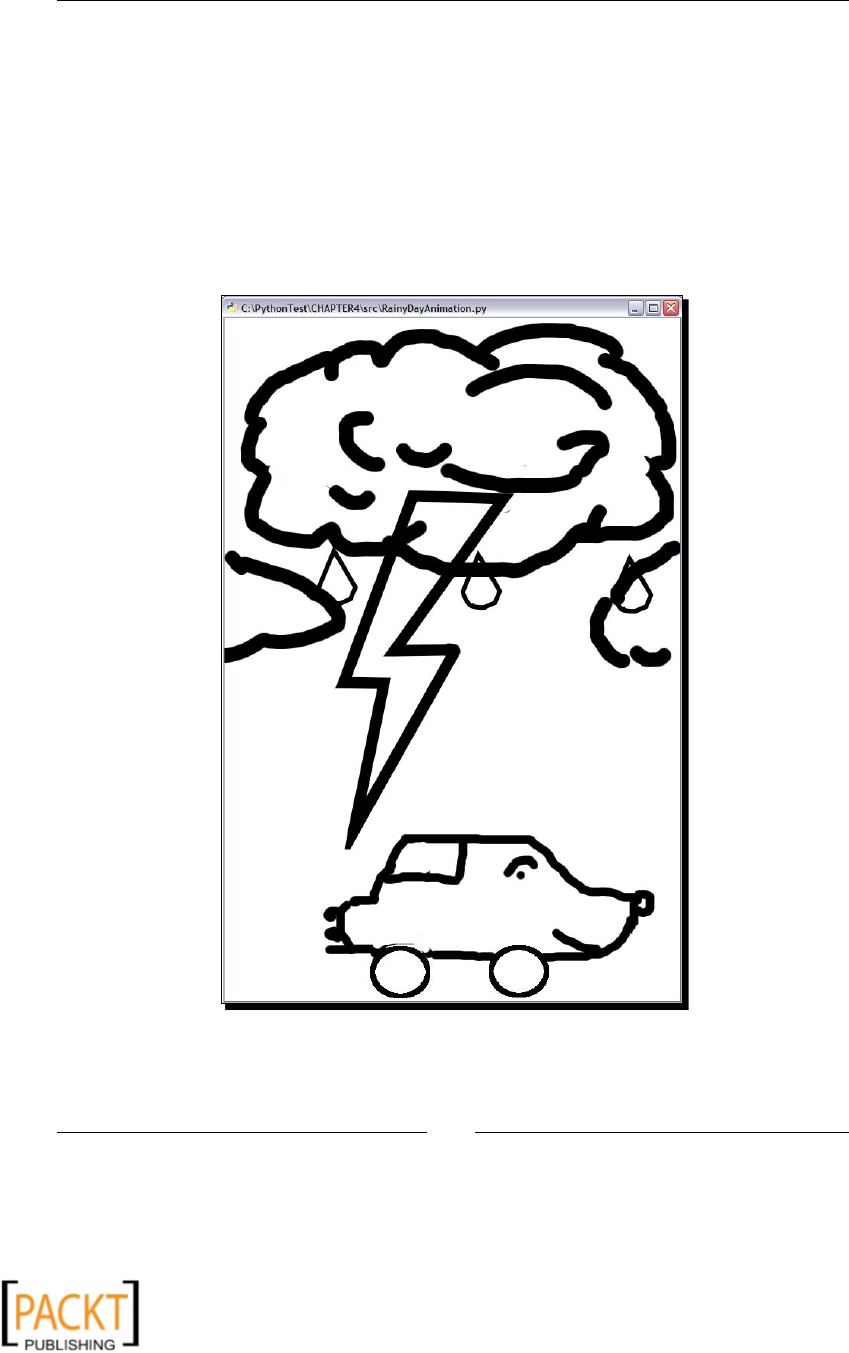

Project: drive on a rainy day! 117

Time for acon – drive on a rainy day! 118

Summary 122

Chapter 5: Working with Audios 123

Installaon prerequisites 123

GStreamer 124

Windows plaorm 124

Other plaorms 125

PyGobject 125

Windows plaorm 125

Other plaorms 125

Summary of installaon prerequisites 126

Tesng the installaon 127

A primer on GStreamer 127

gst-inspect and gst-launch 128

Elements and pipeline 128

Plugins 129

Bins 129

Pads 130

Dynamic pads 130

Ghost pads 131

Caps 131

x

x

Table of Contents

[ v ]

Bus 131

Playbin/Playbin2 131

Playing music 132

Time for acon – playing an audio: method 1 133

Building a pipeline from elements 137

Time for acon – playing an audio: method 2 138

Playing an audio from a website 141

Converng audio le format 142

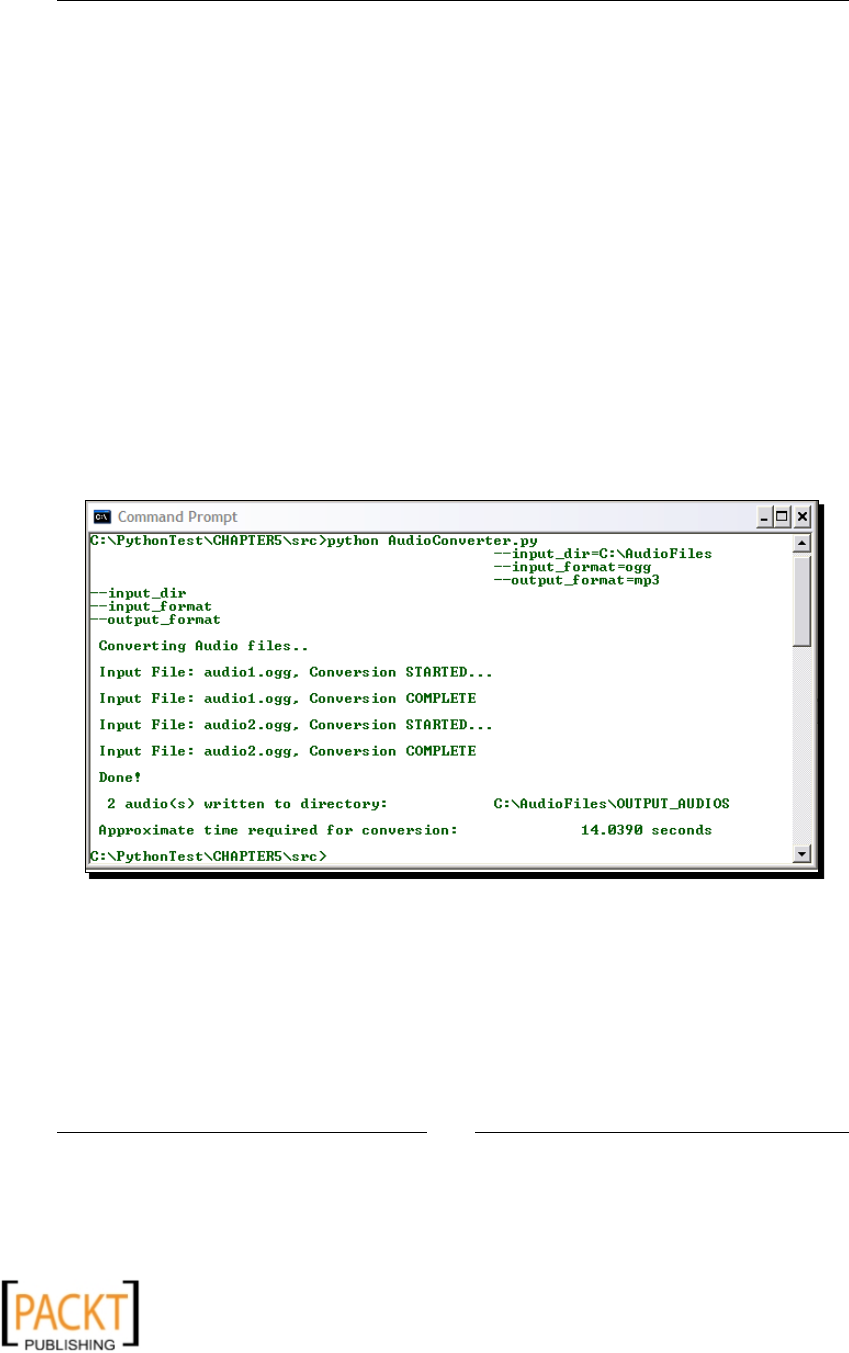

Time for acon – audio le format converter 142

Extracng part of an audio 150

The Gnonlin plugin 151

Time for acon – MP3 cuer! 152

Recording 156

Time for acon – recording 157

Summary 160

Chapter 6: Audio Controls and Eects 161

Controlling playback 161

Play 162

Pause/resume 162

Time for acon – pause and resume a playing audio stream 162

Stop 165

Fast-forward/rewind 166

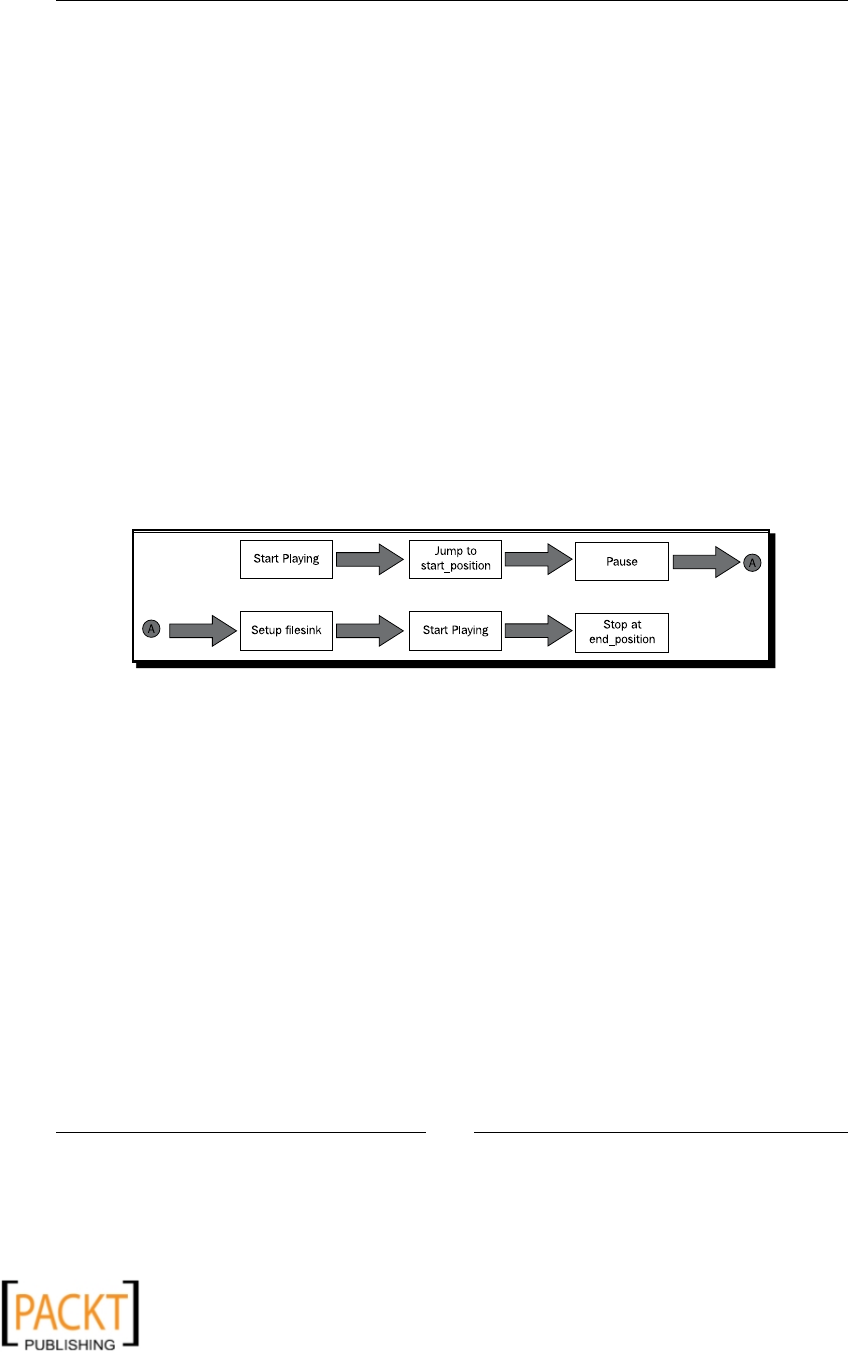

Project: extract audio using playback controls 166

Time for acon – MP3 cuer from basic principles 167

Adjusng volume 173

Time for acon – adjusng volume 173

Audio eects 175

Fading eects 175

Time for acon – fading eects 176

Echo echo echo... 179

Time for acon – adding echo eect 179

Panning/panorama 182

Project: combining audio clips 183

Media 'meline' explained 184

Time for acon – creang custom audio by combining clips 185

Audio mixing 194

Time for acon – mixing audio tracks 194

Visualizing an audio track 196

Time for acon – audio visualizer 196

Summary 199

x

x

Table of Contents

[ vi ]

Chapter 7: Working with Videos 201

Installaon prerequisites 202

Playing a video 203

Time for acon – video player! 203

Playing video using 'playbin' 208

Video format conversion 209

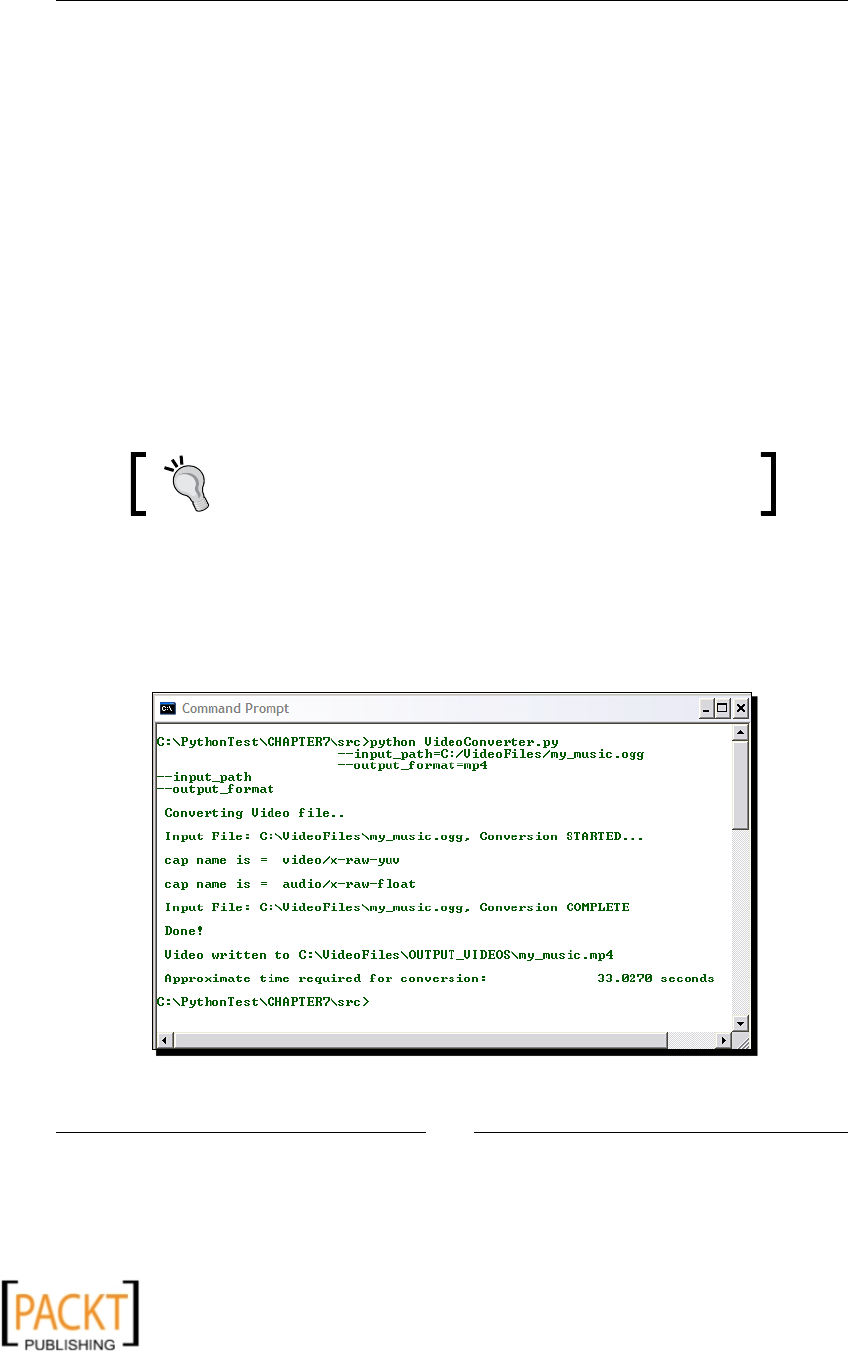

Time for acon – video format converter 209

Video manipulaons and eects 215

Resizing 215

Time for acon – resize a video 216

Cropping 217

Time for acon – crop a video 218

Adjusng brightness and contrast 219

Creang a gray scale video 220

Adding text and me on a video stream 220

Time for acon – overlay text on a video track 220

Separang audio and video tracks 223

Time for acon – audio and video tracks 223

Mixing audio and video tracks 226

Time for acon – audio/video track mixer 226

Saving video frames as images 230

Time for acon – saving video frames as images 230

Summary 235

Chapter 8: GUI-based Media Players Using QT Phonon 237

Installaon prerequisites 238

PyQt4 238

Summary of installaon prerequisites 238

Introducon to QT Phonon 238

Main components 239

Media graph 239

Media object 239

Sink 239

Path 239

Eects 239

Backends 239

Modules 240

MediaNode 240

MediaSource 240

MediaObject 240

Path 240

AudioOutput 241

Eect 241

VideoPlayer 241

x

x

Table of Contents

[ vii ]

SeekSlider 241

volumeSlider 241

Project: GUI-based music player 241

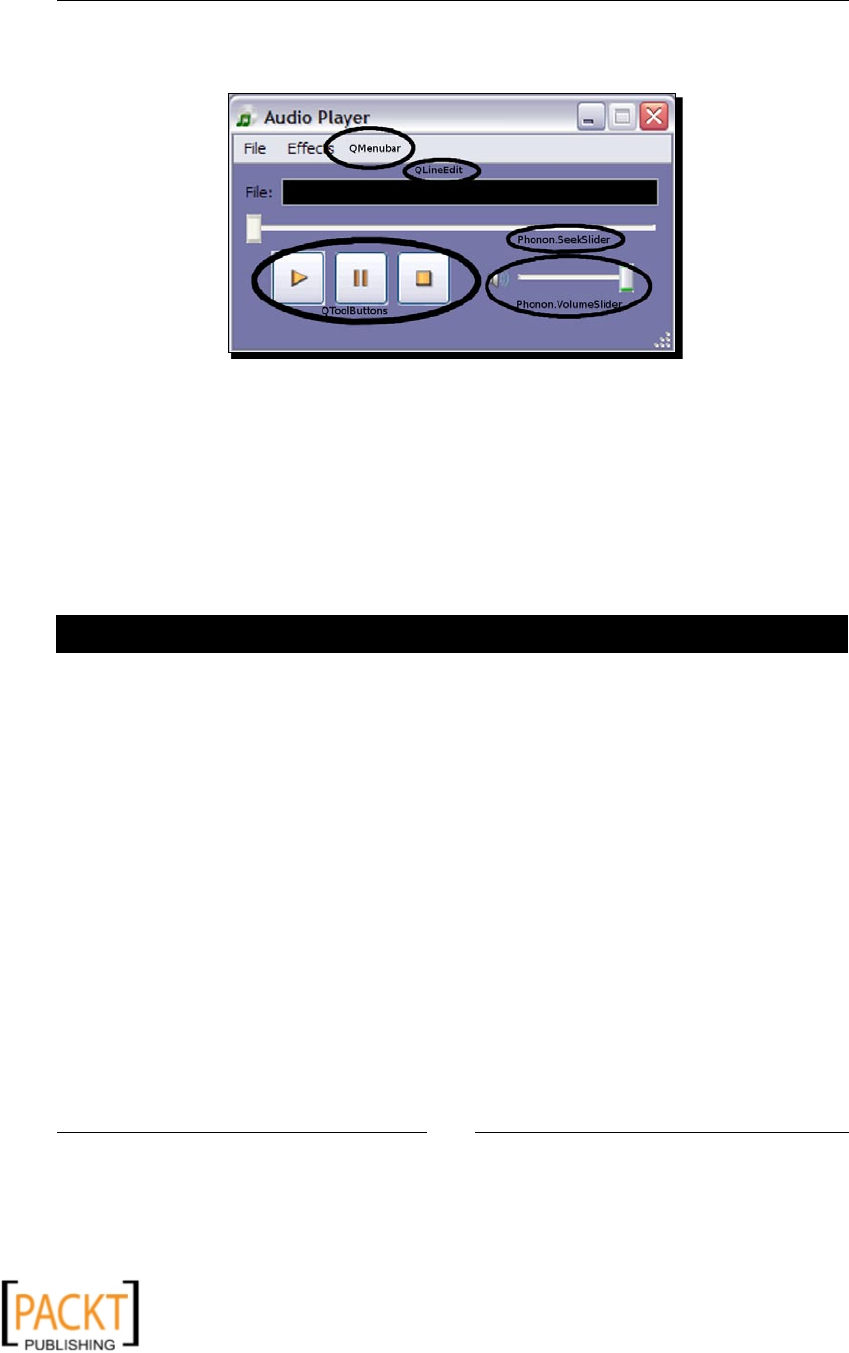

GUI elements in the music player 242

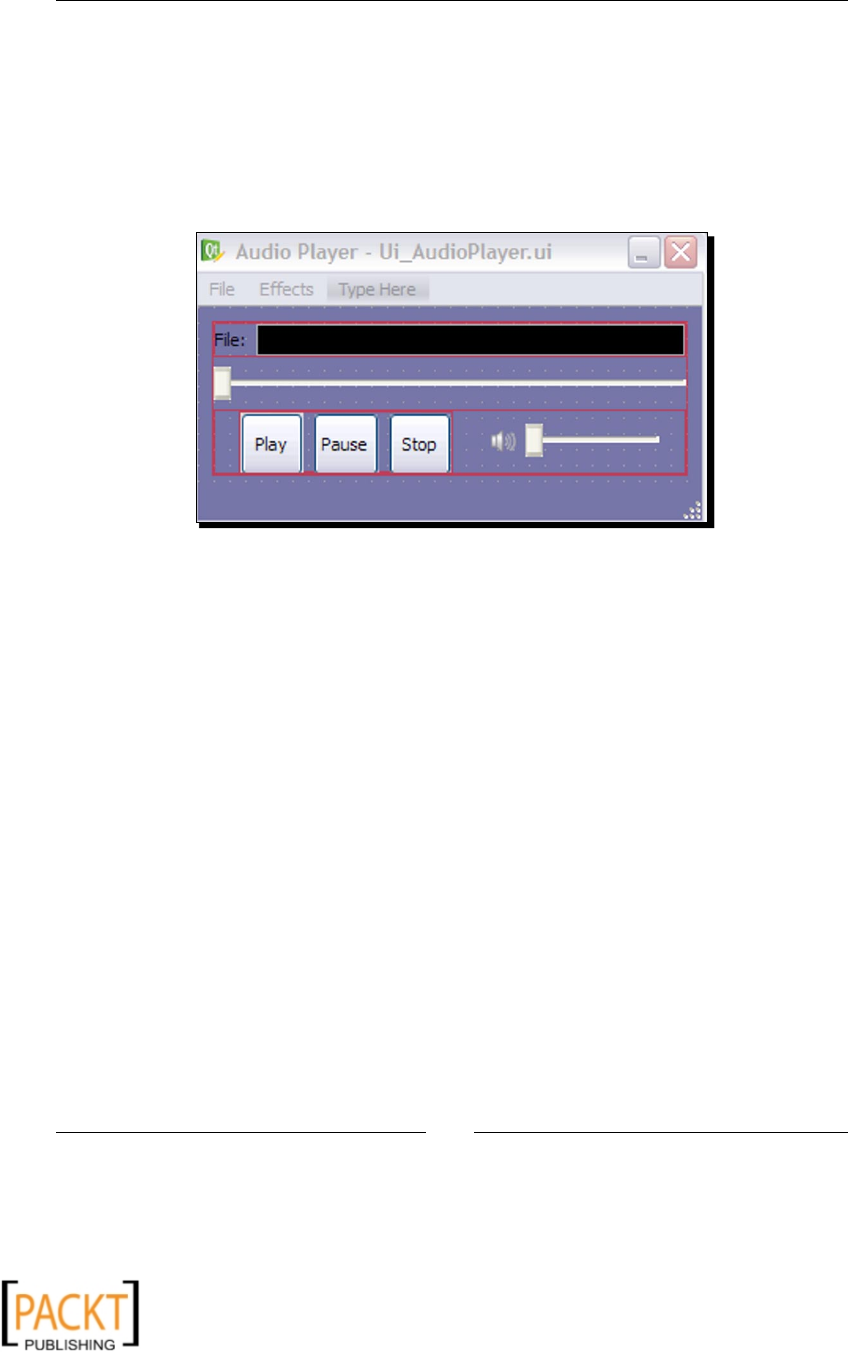

Generang the UI code 243

Time for acon – generang the UI code 243

Connecng the widgets 247

Time for acon – connecng the widgets 247

Developing the audio player code 249

Time for acon – developing the audio player code 250

Project: GUI-based video player 257

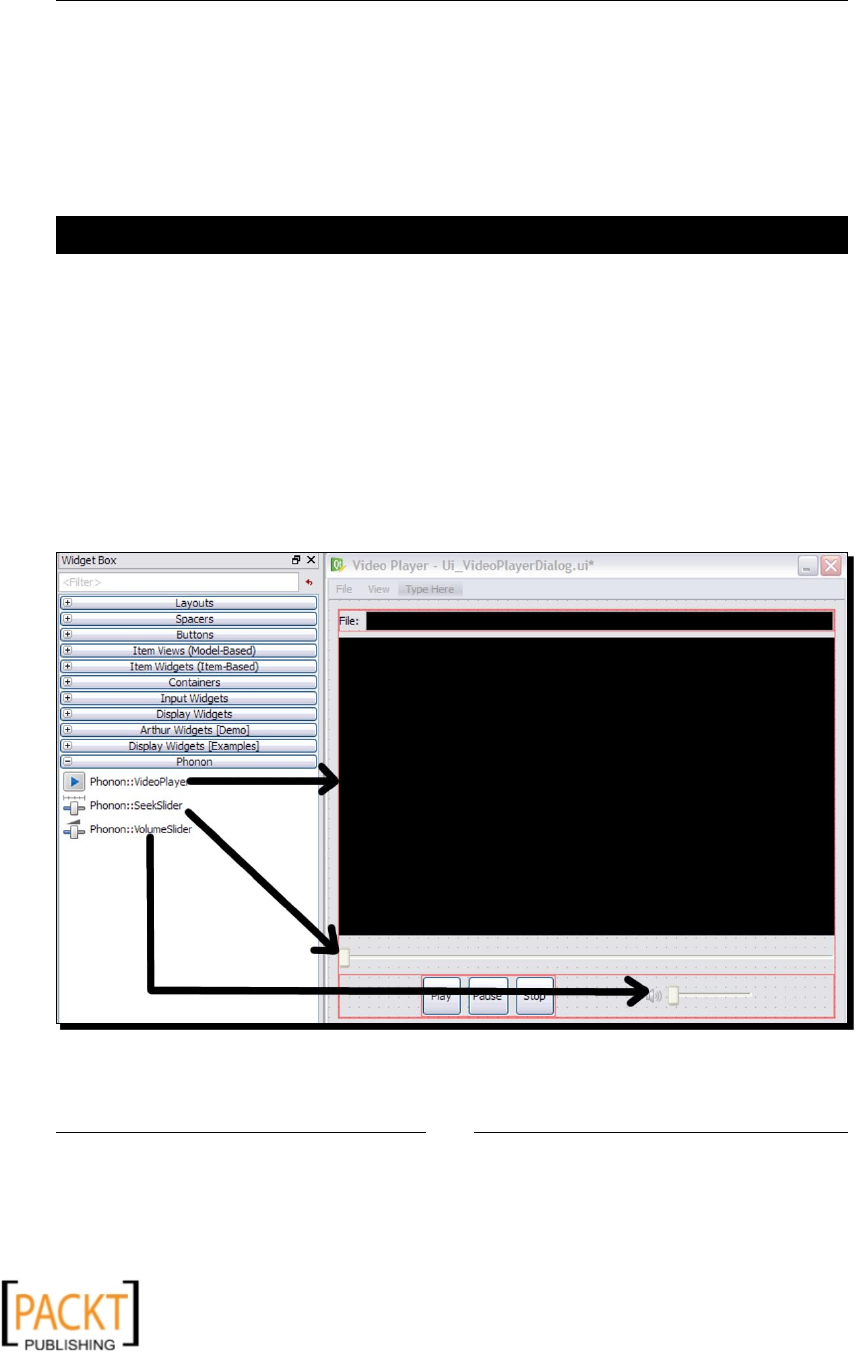

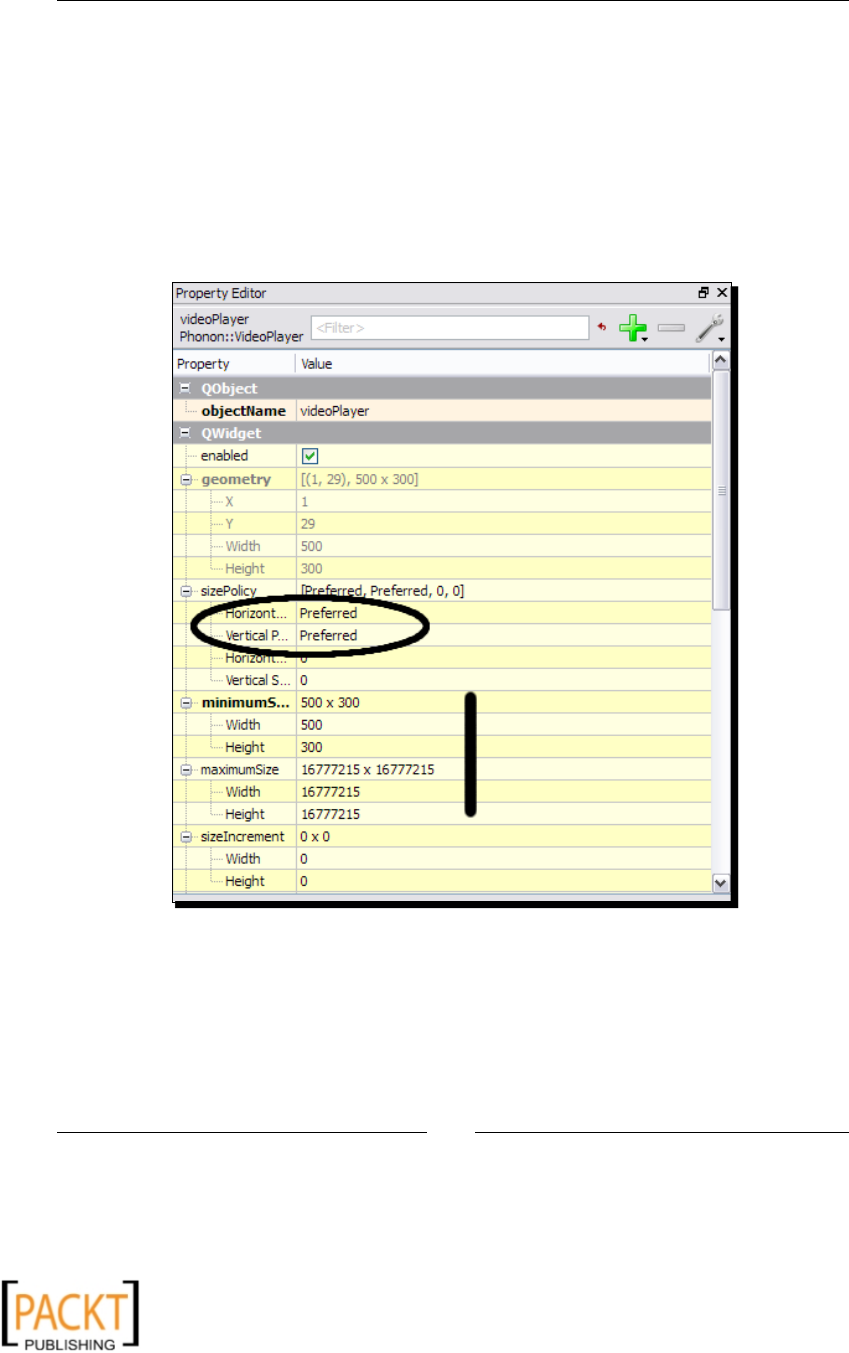

Generang the UI code 258

Time for acon – generang the UI code 258

Connecng the widgets 260

Developing the video player code 261

Time for acon – developing the video player code 261

Summary 264

Index 265

x

x

x

x

Preface

Multimedia applications are used in a broad spectrum of fields. Writing applications that work with images, videos, and other sensory effects is great. Not every application gets to make full use of audio/visual effects, but a certain amount of multimedia makes any application very appealing.

This book is all about multimedia processing using Python. This step by step guide gives you a hands-on experience with developing exciting multimedia applications. You will build applications for processing images, creating 2D animations, and processing audio and video.

There are numerous multimedia libraries for which Python bindings are available. These libraries enable working with different kinds of media, such as images, audio, video, games, and so on. This book introduces the reader to some of these (open source) libraries through several implausibly exciting projects. Popular multimedia frameworks and libraries, such as GStreamer, Pyglet, QT Phonon, and Python Imaging Library are used to develop various multimedia applications.

What this book covers

Chapter 1, Python and Multimedia teaches you a few things about popular multimedia frameworks for multimedia processing using Python and shows you how to develop a simple interactive application using PyGame.

Chapter 2, Working with Images explains basic image conversion and manipulation techniques using the Python Imaging Library. With the help of several examples and code snippets, we will perform some basic manipulations on the image, such as pasting an image on to another, resizing, rotating/flipping, cropping, and so on. We will write tools to capture a screenshot and convert image files between different formats. The chapter ends with an exciting project where we develop an image processing application with a graphical user interface.

Chapter 3, Enhancing Images describes how to add special effects to an image using Python Imaging Library. You will learn techniques to enhance digital images using image filters, for example, reducing 'noise' from a picture, smoothing and sharpening images, embossing, and so on. The chapter will cover topics such as selectively changing the colors within an image. We will develop some exciting utilities for blending images together, adding transparency effects, and creating watermarks.

Chapter 4, Fun with Animations introduces you to the fundamentals of developing animations using Python and Pyglet multimedia application development frameworks. We will work on some exciting projects such as animating a fun car out for a ride in a thunderstorm, a 'bowling animation' with keyboard controls, and so on.

Chapter 5, Working with Audios teaches you how to get to grips with the primer on GStreamer multimedia framework and use this API for audio and video processing. In this chapter, we will develop some simple audio processing tools for 'everyday use'. We will develop tools such as a command-line audio player, a file format converter, an MP3 cutter, and an audio recorder.

Chapter 6, Audio Controls and Effects describes how to develop tools for adding audio effects, mixing audio tracks, creating custom music tracks, visualizing an audio track, and so on.

Chapter 7, Working with Videos explains the fundamentals of video processing. This chapter will cover topics such as converting video between different video formats, mixing or separating audio and video tracks, saving one or more video frames as still images, performing basic video manipulations such as cropping, resizing, adjusting brightness, and so on.

Chapter 8, GUI-based Media Players using QT Phonon takes you through the fundamental components of the QT Phonon framework. We will use QT Phonon to develop audio and video players using a graphical user interface.

Who this book is for

Python developers who want to dip their toes into working with images, animations, and audio and video processing using Python.

Conventions

In this book, you will find several headings appearing frequently.

To give clear instructions of how to complete a procedure or task, we use:

Time for action – heading

1. Action 1

2. Action 2

3. Action 3

Instructions often need some extra explanation so that they make sense, so they are followed with:

What just happened?

This heading explains the working of tasks or instructions that you have just completed.

You will also find some other learning aids in the book, including:

Pop quiz – heading

These are short multiple choice questions intended to help you test your own understanding.

Have a go hero – heading

These set practical challenges and give you ideas for experimenting with what you have learned.

You will also find a number of styles of text that distinguish between different kinds of information. Here are some examples of these styles, and an explanation of their meaning.

Code words in text are shown as follows: "The dictionary self.addedEffects keeps track of all the audio."

A block of code is set as follows:

    def __init__(self):
        self.constructPipeline()
        self.is_playing = False
        self.connectSignals()

When we wish to draw your attention to a particular part of a code block, the relevant lines or items are set in bold:

    def constructPipeline(self):
        self.pipeline = gst.Pipeline()
        self.filesrc = gst.element_factory_make(
            "gnlfilesource")

Any command-line input or output is written as follows:

    >>> import pygst

New terms and important words are shown in bold. Words that you see on the screen, in menus or dialog boxes for example, appear in the text like this: "You will need to tweak the Effects menu UI and make some other changes in the code to keep track of the added effects."

Warnings or important notes appear in a box like this.

Tips and tricks appear like this.

Reader feedback

Feedback from our readers is always welcome. Let us know what you think about this book: what you liked or may have disliked. Reader feedback is important for us to develop titles that you really get the most out of.

To send us general feedback, simply send an e-mail to feedback@packtpub.com, and mention the book title via the subject of your message.

If there is a book that you need and would like to see us publish, please send us a note in the SUGGEST A TITLE form on www.packtpub.com or e-mail suggest@packtpub.com.

If there is a topic that you have expertise in and you are interested in either writing or contributing to a book, see our author guide on www.packtpub.com/authors.

Customer support

Now that you are the proud owner of a Packt book, we have a number of things to help you to get the most from your purchase.

Downloading the example code for this book

You can download the example code files for all Packt books you have purchased from your account at http://www.PacktPub.com. If you purchased this book elsewhere, you can visit http://www.PacktPub.com/support and register to have the files e-mailed directly to you.

Errata

Although we have taken every care to ensure the accuracy of our content, mistakes do happen. If you find a mistake in one of our books (maybe a mistake in the text or the code), we would be grateful if you would report this to us. By doing so, you can save other readers from frustration and help us improve subsequent versions of this book. If you find any errata, please report them by visiting http://www.packtpub.com/support, selecting your book, clicking on the errata submission form link, and entering the details of your errata. Once your errata are verified, your submission will be accepted and the errata will be uploaded on our website, or added to any list of existing errata, under the Errata section of that title. Any existing errata can be viewed by selecting your title from http://www.packtpub.com/support.

Piracy

Piracy of copyright material on the Internet is an ongoing problem across all media. At Packt, we take the protection of our copyright and licenses very seriously. If you come across any illegal copies of our works, in any form, on the Internet, please provide us with the location address or website name immediately so that we can pursue a remedy.

Please contact us at copyright@packtpub.com with a link to the suspected pirated material.

We appreciate your help in protecting our authors, and our ability to bring you valuable content.

Questions

You can contact us at questions@packtpub.com if you are having a problem with any aspect of the book, and we will do our best to address it.

1

Python and Multimedia

Since its conception in 1989, Python has gained increasing popularity as a general purpose programming language. It is a high-level, object-oriented language with a comprehensive standard library. Language features such as automatic memory management and easy readability have attracted the attention of a wide range of developer communities. Typically, one can develop complex applications in Python very quickly compared to some other languages. It is used in several open source as well as commercial scientific modeling and visualization software packages. It has already gained popularity in industries such as animation and game development studios, where the focus is on multimedia application development. This book is all about multimedia processing using Python.

In this introductory chapter, we shall:

- Learn about multimedia and multimedia processing

- Discuss a few popular multimedia frameworks for multimedia processing using Python

- Develop a simple interactive application using PyGame

So let's get on with it.

Multimedia

We use multimedia applications in our everyday lives. It is multimedia that we deal with while watching a movie or listening to a song or playing a video game. Multimedia applications are used in a broad spectrum of fields. Multimedia has a crucial role to play in the advertising and entertainment industry. One of the most common usages is to add audio and video effects to a movie. Educational software packages such as a flight or a drive simulator use multimedia to teach various topics in an interactive way.

So what really is multimedia? In general, any application that makes use of different sources of digital media is termed digital multimedia. A video, for instance, is a combination of different sources or contents. The contents can be an audio track, a video track, and a subtitle track. When such a video is played, all these media sources are presented together to accomplish the desired effect.

A multichannel audio can have a background music track and a lyrics track. It may even include various audio effects. An animation can be created by using a bunch of digital images that are displayed quickly one after the other. These are different examples of multimedia.

In the case of computer or video games, another dimension is added to the application: user interaction. This is often termed an interactive type of multimedia. Here, the users determine the way the multimedia contents are presented. With the help of devices such as a keyboard, mouse, trackball, joystick, and so on, the users can interactively control the game.

Multimedia processing

We discussed some of the application domains where multimedia is extensively used. The focus of this book will be on multimedia processing, using which various multimedia applications will be developed.

Image processing

After taking a snap with a digital camera, we often tweak the original digital image for various reasons. One of the most common reasons is to remove blemishes from the image, such as removing 'red-eye' or increasing the brightness level if the picture was taken in insufficient light, and so on. Another reason for doing so is to add special effects that give a pleasing appearance to the image. For example, making a family picture black and white and digitally adding a frame around the picture gives it a nostalgic effect. The next illustration shows an image before and after the enhancement. Sometimes, the original image is modified just to make you understand important information presented by the image. Suppose the picture represents a complicated assembly of components. One can add special effects to the image so that only edges in the picture are shown as highlighted. This information can then be used to detect, for instance, interference between the components. Thus, we digitally process the image further until we get the desired output image.

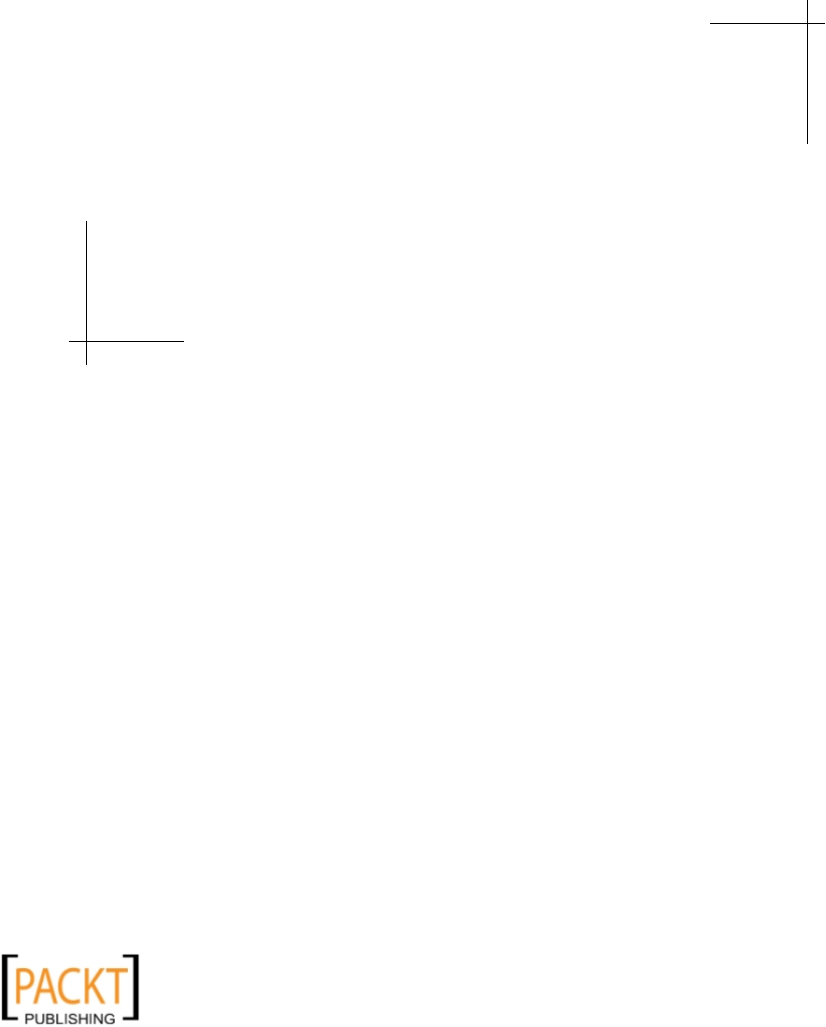

An example where a border is added around an image to change its appearance is as follows:
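The black-and-white conversion and the added frame mentioned above can be sketched in plain Python. This is only an illustration under stated assumptions: an image is modelled as a list of rows of (R, G, B) tuples, and the function names `to_grayscale` and `add_border` are hypothetical helpers, not part of any library used in this book.

```python
# Illustrative sketch only: an image is a list of rows, each row a list
# of (R, G, B) tuples. The helper names below are hypothetical.

def to_grayscale(image):
    """Replace every pixel by its average intensity on all three channels."""
    result = []
    for row in image:
        new_row = []
        for r, g, b in row:
            level = (r + g + b) // 3
            new_row.append((level, level, level))
        result.append(new_row)
    return result


def add_border(image, width, color):
    """Surround the image with a solid frame that is `width` pixels thick."""
    full_width = len(image[0]) + 2 * width
    top = [[color] * full_width for _ in range(width)]
    bottom = [[color] * full_width for _ in range(width)]
    middle = [[color] * width + list(row) + [color] * width for row in image]
    return top + middle + bottom


if __name__ == "__main__":
    picture = [[(30, 60, 90), (0, 0, 0)]]
    framed = add_border(to_grayscale(picture), 1, (255, 255, 255))
    for row in framed:
        print(row)
```

A real application would normally delegate both steps to an imaging library; the sketch only shows that each enhancement is a simple transformation of the pixel data.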

Digital image processing can be viewed as an application of various algorithms/filters on the image data. One example is an image smoothing filter. Image smoothing means reducing the noise in the image. The random changes in brightness and color levels within the image data are typically referred to as image noise. The smoothing algorithms modify the input image data so that this noise is reduced in the resultant image.
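To make the idea of a smoothing filter concrete, here is a minimal sketch of one possible approach, a 3x3 mean filter. It assumes a grayscale image stored as a list of rows of integer brightness values; the function name `smooth` is hypothetical, and real libraries may use different kernels.

```python
# Minimal sketch of a 3x3 mean (box) filter on a grayscale image stored
# as a list of rows of integers. `smooth` is a hypothetical helper name.

def smooth(image):
    """Replace each pixel by the average of itself and its neighbours."""
    height, width = len(image), len(image[0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Collect the pixel and its neighbours, clipped at the borders.
            neighbours = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            row.append(sum(neighbours) // len(neighbours))
        result.append(row)
    return result


if __name__ == "__main__":
    # A flat gray patch with one noisy, overly bright pixel in the middle.
    noisy = [
        [10, 10, 10],
        [10, 100, 10],
        [10, 10, 10],
    ]
    for row in smooth(noisy):
        print(row)
```

In this example the isolated bright pixel is pulled towards the level of its surroundings, which is the noise reduction the paragraph describes, at the cost of slightly blurring neighbouring pixels.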

Another commonly performed image processing operaon is blending. As the name

suggests, blending means mixing two compable images to create a new image. Typically,

the data of the two input images is interpolated using a constant value of alpha to produce

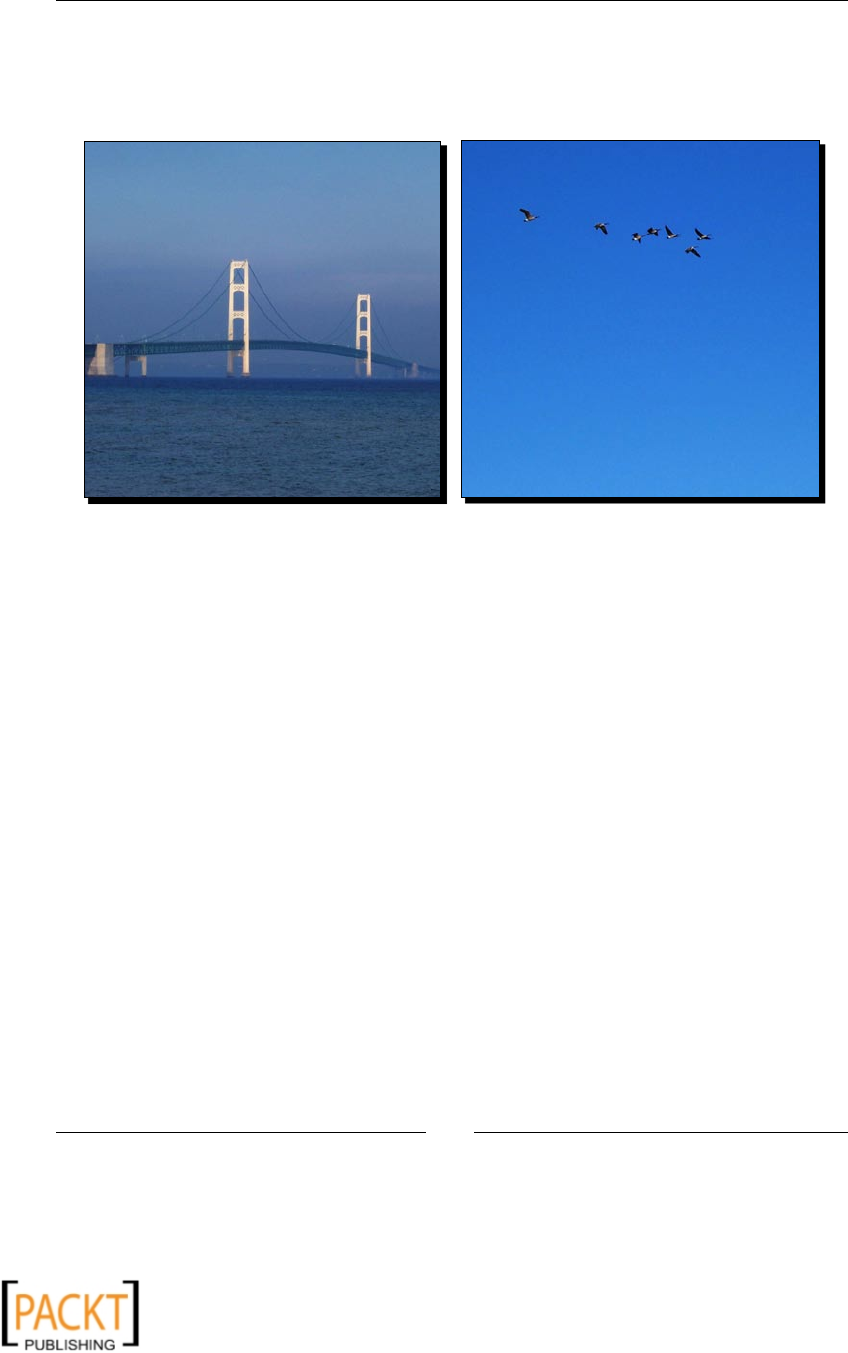

a nal image. The next illustraon shows the two input images and the resultant image

aer blending. In the coming chapters we will learn several of such digital image

processing techniques.

The pictures of the bridge and the ying birds are taken at dierent locaons. Using image

processing techniques these two images can be blended together so that they appear as a

single picture:

x

x

Python and Mulmedia

[ 10 ]

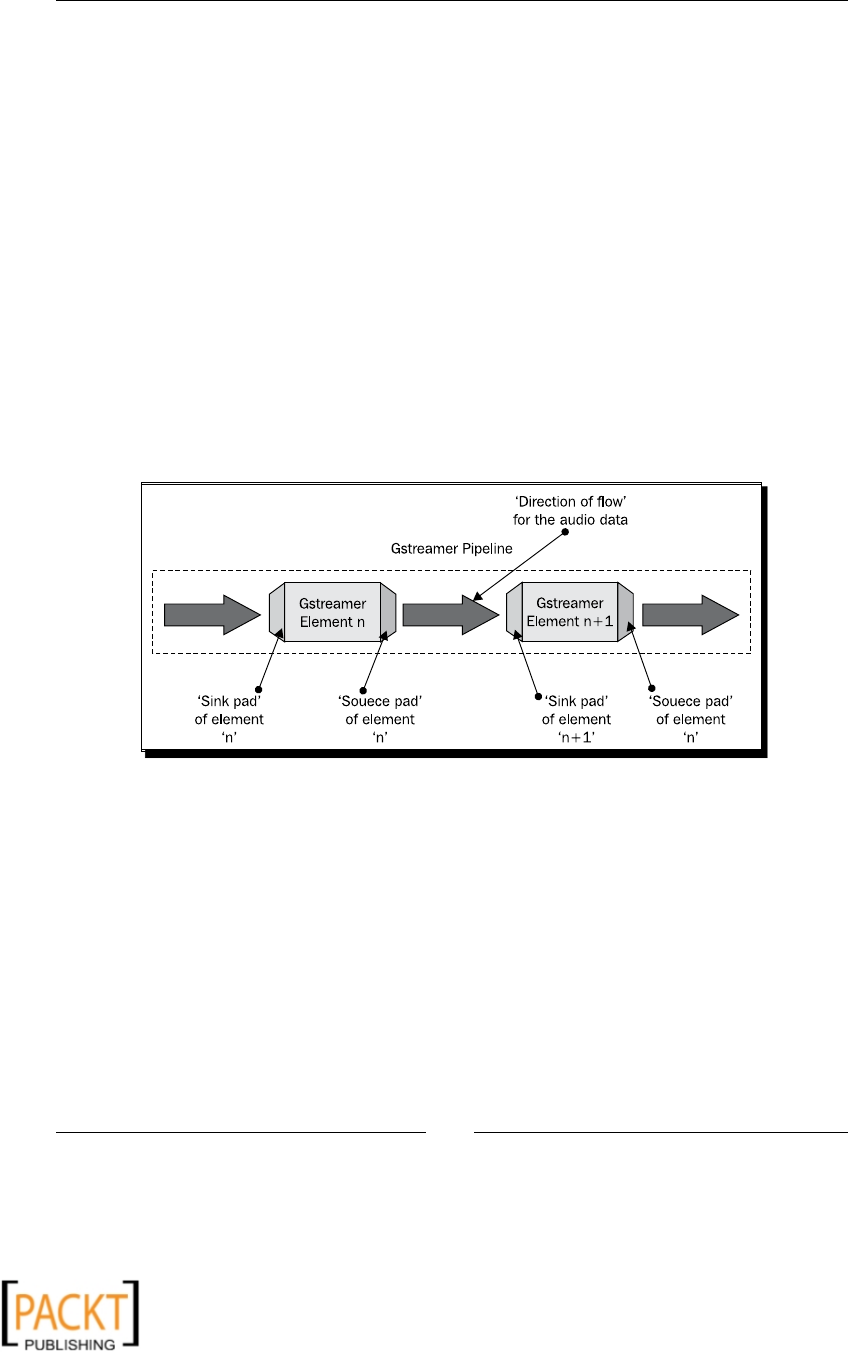
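The interpolation with a constant alpha can be written as `out = image1 * (1 - alpha) + image2 * alpha`, applied to every channel of every pixel. The following is a small sketch of that formula, assuming images are lists of rows of (R, G, B) tuples and that "compatible" means the two images have the same size; the function name `blend` here is a hypothetical pure-Python helper.

```python
# Sketch of alpha blending on images stored as lists of rows of (R, G, B)
# tuples. `blend` is a hypothetical helper written for illustration.

def blend(image1, image2, alpha):
    """Interpolate two same-sized images: image1*(1-alpha) + image2*alpha."""
    if len(image1) != len(image2) or len(image1[0]) != len(image2[0]):
        raise ValueError("images must have the same size")
    return [
        [
            tuple(round(c1 * (1.0 - alpha) + c2 * alpha)
                  for c1, c2 in zip(p1, p2))
            for p1, p2 in zip(row1, row2)
        ]
        for row1, row2 in zip(image1, image2)
    ]


if __name__ == "__main__":
    bridge = [[(200, 100, 0)]]
    birds = [[(0, 100, 200)]]
    print(blend(bridge, birds, 0.25))
```

With alpha set to 0 the result equals the first image, with alpha set to 1 it equals the second, and values in between give a weighted mix of the two.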

Audio and video processing

When you are listening to music on your computer, your music player is doing several things

in the background. It processes the digital media data so that it can be transformed into a

playable format that an output media device, such as an audio speaker, requires. The media

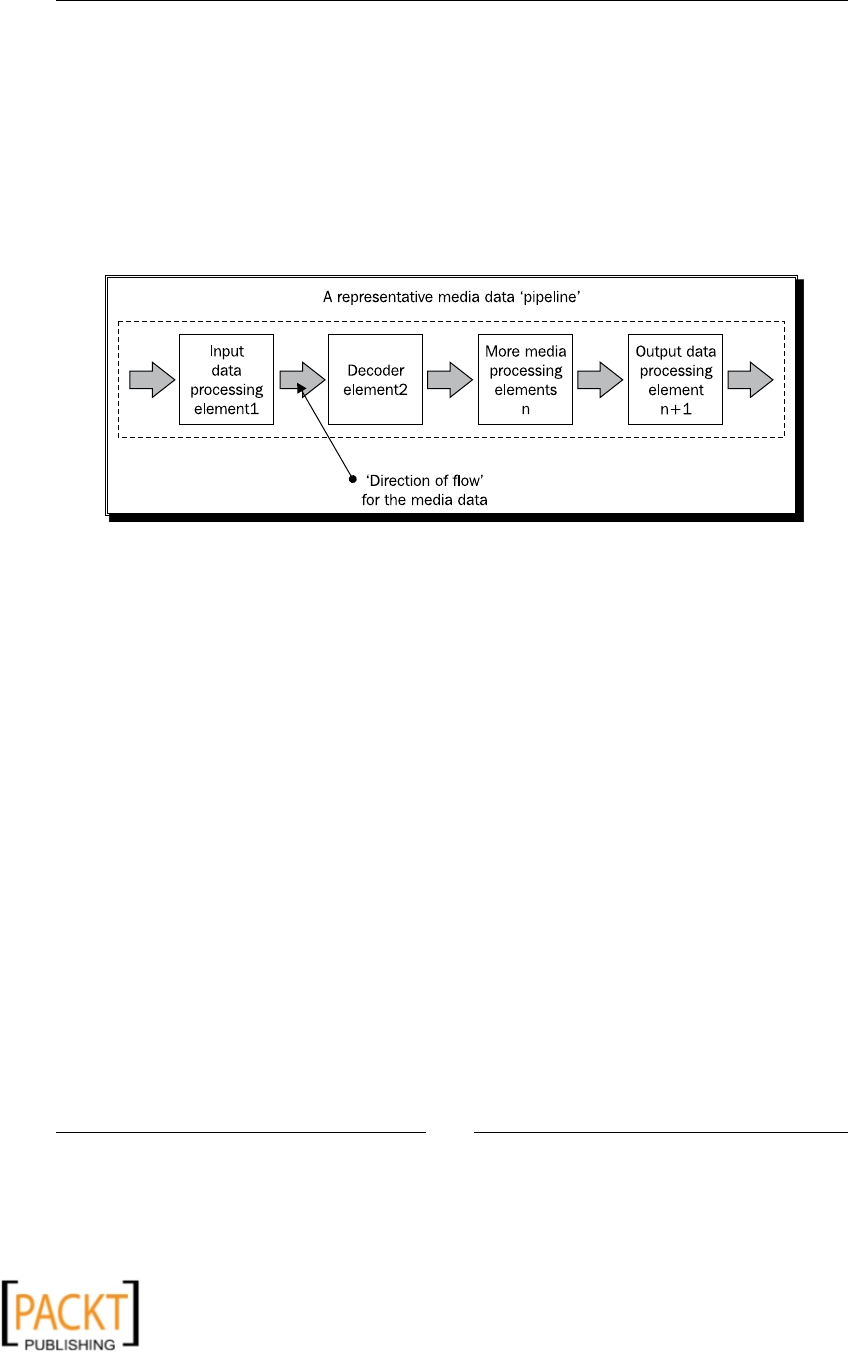

data ows through a number of interconnected media handling components, before it

reaches a media output device or a media le to which it is wrien. This is shown in the

next illustraon.

The following image shows a media data processing pipeline:

Audio and video processing encompasses a number of things. Some of them are briefly discussed in this section. In this book, we will learn various audio-video processing techniques using Python bindings of the GStreamer multimedia framework.
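The notion of media data flowing through interconnected components can be illustrated with plain Python generators. This sketch deliberately does not use GStreamer; the stage names (`file_source`, `decoder`, `volume`, `sink`) and the toy "decoding" step are assumptions made purely for illustration.

```python
# Toy media pipeline built from generators. This is NOT the GStreamer
# API; every stage name below is a hypothetical stand-in.

def file_source(chunks):
    """Source stage: hand out raw data one chunk at a time."""
    for chunk in chunks:
        yield chunk


def decoder(stream):
    """Pretend decoding: turn each bytes chunk into a list of samples."""
    for chunk in stream:
        yield list(chunk)


def volume(stream, gain):
    """Effect stage: scale every sample, clipping at the 8-bit maximum."""
    for samples in stream:
        yield [min(255, int(sample * gain)) for sample in samples]


def sink(stream):
    """Output stage: collect everything that reaches the end of the chain."""
    output = []
    for samples in stream:
        output.extend(samples)
    return output


def run_pipeline(chunks, gain=1.0):
    """Connect source -> decoder -> volume -> sink and run the data through."""
    return sink(volume(decoder(file_source(chunks)), gain))


if __name__ == "__main__":
    print(run_pipeline([b"\x01\x02", b"\x64"], gain=2.0))
```

Each stage only knows about the stream it receives, so stages can be added, removed, or reordered without touching the others, which is the main appeal of a pipeline design.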

Compression

If you record footage on your camcorder and then transfer it to your computer, it will take up a lot of space. In order to save those moments on a VCD or a DVD, you almost always have to compress the audio-video data so that it occupies less space. There are two types of audio and video compression: lossy and lossless. Lossy compression is very common. Here, some data is assumed unnecessary and is not retained in the compressed media. For example, in lossy video compression, some of the original data is lost, but the loss has little impact on the overall quality of the video. On the other hand, in lossless compression, the data of a compressed audio or video perfectly matches the original data. The compression ratio, however, is very low. As we go along, we will write audio-video data conversion utilities to compress the media data.
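The defining property of lossless compression, that the restored data matches the original exactly, can be checked with Python's zlib module. Note that zlib is a general-purpose compressor rather than an audio or video codec, and the highly repetitive sample bytes used here compress far better than a real recording would.

```python
import zlib

# Stand-in for raw media data; real recordings are much less repetitive.
original = b"la " * 1000

compressed = zlib.compress(original)
restored = zlib.decompress(compressed)

print(len(original), len(compressed))
print(restored == original)
```

A lossy scheme would fail the final comparison by design, in exchange for a smaller output.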


Mixing

Mixing is a way to create composite media using more than one media source. In case of audio mixing, the audio data from different sources is combined into one or more audio channels. For example, it can be used to add an audio effect or to synchronize separate music and lyrics tracks. In the coming chapters, we will learn more about the media mixing techniques used with Python.
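At the level of raw samples, the simplest form of audio mixing is adding the tracks together. The sketch below assumes signed 16-bit samples held in plain Python lists; the function name mix and the sample values are hypothetical, and a real mixer would do considerably more.

```python
def mix(track_a, track_b):
    """Add two tracks sample by sample, clamping to the 16-bit range."""
    length = max(len(track_a), len(track_b))
    mixed = []
    for i in range(length):
        a = track_a[i] if i < len(track_a) else 0   # pad the shorter track
        b = track_b[i] if i < len(track_b) else 0
        mixed.append(max(-32768, min(32767, a + b)))
    return mixed

music = [1000, 2000, -500]
lyrics = [500, -100]
print(mix(music, lyrics))
```

Clamping keeps the sum inside the valid sample range when both tracks are loud at the same moment.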

Editing

Media mixing can be viewed as a type of media editing. Media editing can be broadly divided into linear editing and non-linear editing. In linear editing, the programmer doesn't control the way media is presented, whereas in non-linear editing, editing is done interactively. This book will cover the basics of media editing. For example, we will learn how to create a new audio track by combining portions of different audio files.

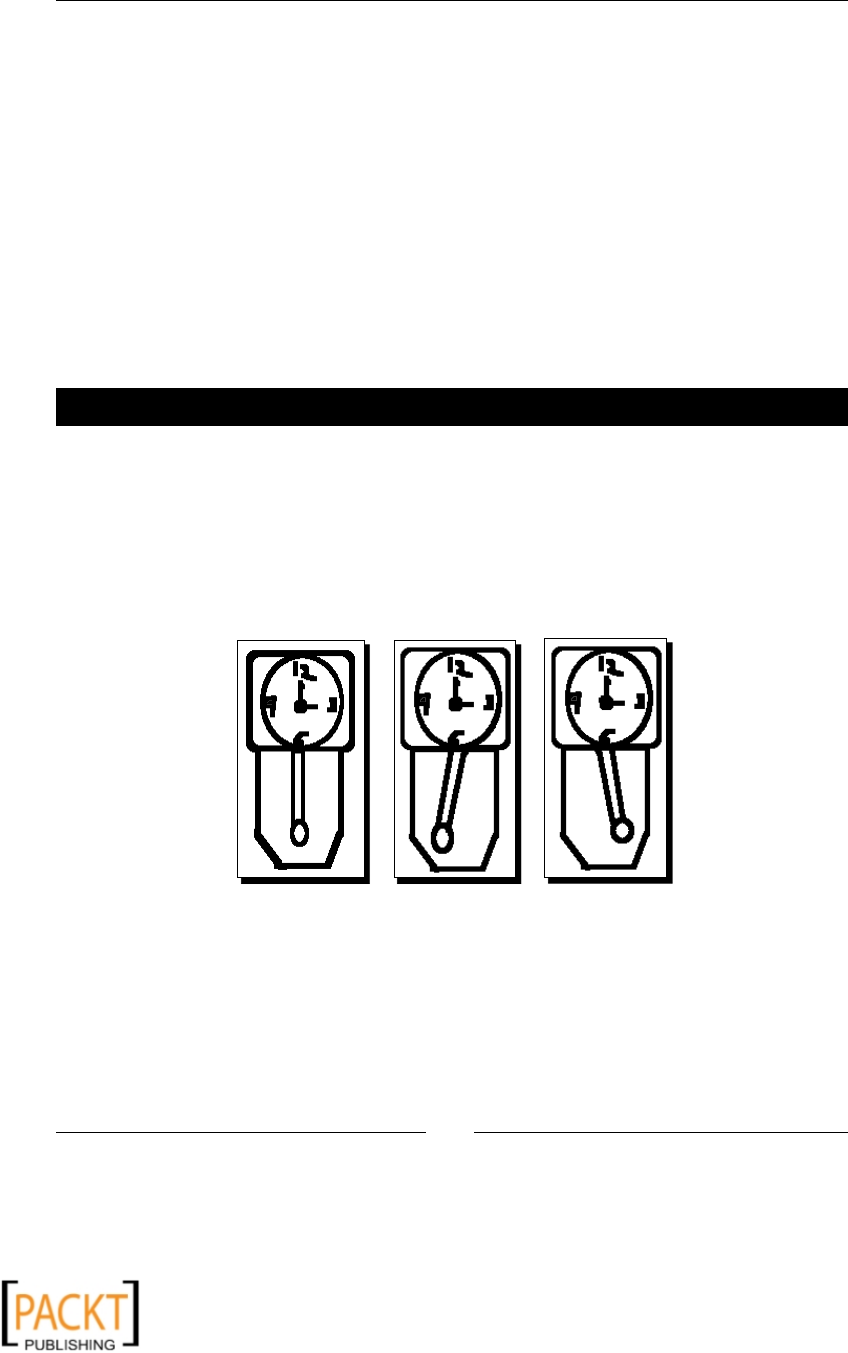

Animations

An animaon can be viewed as an opcal illusion of moon created by displaying a

sequence of image frames one aer the other. Each of these image frames is slightly

dierent from the previously displayed one. The next illustraon shows animaon

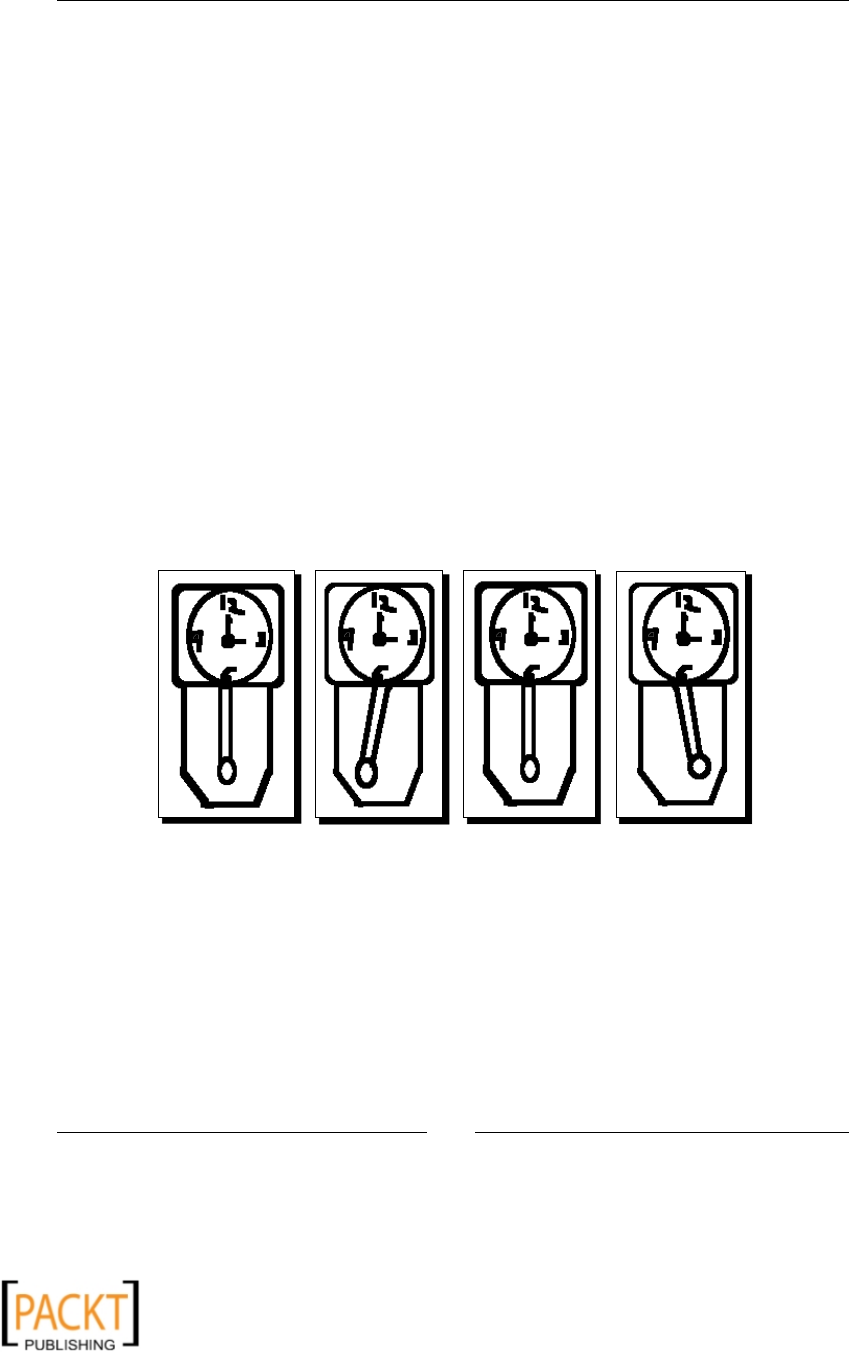

frames of a 'grandfather's clock':

As you can see, there are four image frames in a clock animaon. These frames are

quickly displayed one aer the other to achieve the desired animaon eect. Each of

these images will be shown for 0.25 seconds. Therefore, it simulates the pendulum

oscillaon of one second.
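The timing of the clock animation can be worked out with a little arithmetic: four frames at 0.25 seconds each give a one-second cycle. The following sketch picks the frame to show at a given time; the names FRAME_COUNT, FRAME_DURATION, and frame_at are hypothetical.

```python
FRAME_COUNT = 4         # frames in the clock animation
FRAME_DURATION = 0.25   # seconds each frame stays on screen

def frame_at(t):
    """Return the index of the frame visible t seconds after the start."""
    return int(t / FRAME_DURATION) % FRAME_COUNT

cycle = FRAME_COUNT * FRAME_DURATION
print(cycle)
print([frame_at(t) for t in (0.0, 0.3, 0.6, 0.99, 1.0)])
```

The modulo operation makes the sequence wrap around, so the pendulum keeps swinging for as long as the animation runs.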


Cartoon animation is a classic example of animation. Since its debut in the early twentieth century, animation has become a prominent entertainment industry. Our focus in this book will be on 2D cartoon animations built using Python. In Chapter 4, we will learn some techniques to build such animations. Creating a cartoon character and bringing it to 'life' is a laborious job. Until the late 70s, most of the animations and effects were created without the use of computers. In today's age, much of the image creation work is produced digitally. The state-of-the-art technology makes this process much faster. For example, one can apply image transformations to display or move a portion of an image, thereby avoiding the need to create the whole cartoon image for the next frame.

Built-in multimedia support

Python has a few built-in multimedia modules for application development. We will skim through some of these modules.

winsound

The winsound module is available on the Windows platform. It provides an interface which can be used to implement fundamental audio-playing elements in the application. A sound can be played by calling PlaySound(sound, flags). Here, the argument sound is used to specify the path of an audio file. If this parameter is specified as None, the presently streaming audio (if any) is stopped. The second argument specifies whether the file to be played is a sound file or a system sound. The following code snippet shows how to play a wave formatted audio file using the winsound module.

from winsound import PlaySound, SND_FILENAME

PlaySound("C:/AudioFiles/my_music.wav", SND_FILENAME )

This plays the sound file specified by the first argument to the function PlaySound. The second argument, SND_FILENAME, says that the first argument is an audio file. If the flag is set as SND_ALIAS, it means the value for the first argument is a system sound from the registry.

audioop

This module is used for manipulating the raw audio data. One can perform several useful operations on sound fragments. For example, it can find the minimum and maximum values of all the samples within a sound fragment.
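The module's own function for this task is audioop.minmax(fragment, width). To show what such a computation involves without depending on the module, the sketch below does the same job with struct, assuming a fragment of signed 16-bit little-endian samples. The function name minmax_16bit is hypothetical.

```python
import struct

def minmax_16bit(fragment):
    """Return (min, max) over the 16-bit samples in a raw byte fragment."""
    count = len(fragment) // 2
    samples = struct.unpack("<%dh" % count, fragment[:count * 2])
    return min(samples), max(samples)

# Build a tiny fragment of four samples to try it out.
fragment = struct.pack("<4h", -1200, 300, 0, 2500)
print(minmax_16bit(fragment))
```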


wave

The wave module provides an interface to read and write audio files with WAV file format. The following line of code opens a wav file.

import wave

fil = wave.open('horn.wav', 'r')

The first argument of the open method is the path to the wave file. The second argument is the mode in which the audio file is opened: 'r' or 'rb' for read-only mode and 'w' or 'wb' for write-only mode. When the file is opened in read-only mode, open returns a Wave_read object.
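Both modes can be tried together in a short round trip. The sketch below writes a four-sample mono file into a temporary directory and reads its properties back; the file name tone.wav and the sample values are hypothetical.

```python
import os
import struct
import tempfile
import wave

path = os.path.join(tempfile.mkdtemp(), "tone.wav")

# Write-only mode: describe the audio format, then write the raw frames.
out = wave.open(path, "wb")
out.setnchannels(1)      # mono
out.setsampwidth(2)      # 2 bytes, that is, 16-bit samples
out.setframerate(8000)   # frames per second
out.writeframes(struct.pack("<4h", 0, 1000, 0, -1000))
out.close()

# Read-only mode: query the same properties back.
fil = wave.open(path, "rb")
info = (fil.getnchannels(), fil.getsampwidth(),
        fil.getframerate(), fil.getnframes())
fil.close()
print(info)
```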

External multimedia libraries and frameworks

There are several open source multimedia frameworks available for multimedia application development. The Python bindings for most of these are readily available. We will discuss a few of the most popular multimedia frameworks here. In the chapters that follow, we will make use of many of these libraries to create some useful multimedia applications.

Python Imaging Library

Python Imaging Library provides image processing functionality in Python. It supports several image formats. Later in this book, a number of image processing techniques using PIL will be discussed thoroughly. We will learn things such as image format conversion and various image manipulation and enhancement techniques using the Python Imaging Library.

PyMedia

PyMedia is a popular open source media library that supports audio/video manipulation of a wide range of multimedia formats.

GStreamer

This framework enables multimedia manipulation. It is a framework on top of which one can develop multimedia applications. The rich set of libraries it provides makes it easier to develop applications with complex audio/video processing capabilities. GStreamer is written in the C programming language and provides bindings for some other programming languages including Python. Several open source projects use the GStreamer framework to develop their own multimedia applications. Comprehensive documentation is available on the GStreamer project website. The GStreamer Application Development Manual is a very good starting point. This framework will be extensively used later in this book to develop audio and video applications.


Pyglet

Interested in animations and gaming applications? Pyglet is here to help. Pyglet provides an API for developing multimedia applications using Python. It is an OpenGL-based library that works on multiple platforms. It is one of the popular multimedia frameworks for development of games and other graphically intense applications. It supports multiple monitor configuration typically needed for gaming application development. Later in this book, we will be extensively using this Pyglet framework for creating animations.

PyGame

PyGame (www.pygame.org) is another very popular open source framework that provides an API for gaming application development needs. It provides a rich set of graphics and sound libraries. We won't be using PyGame in this book. But since it is a prominent multimedia framework, we will briefly discuss some of its most important modules and work out a simple example. The PyGame website provides ample resources on use of this framework for animation and game programming.

Sprite

The Sprite module contains several classes; out of these, Sprite and Group are the

most important. Sprite is the super class of all the visible game objects. A Group object

is a container for several instances of Sprite.

Display

As the name suggests, the Display module has functionality dealing with the display. It is used to create a Surface instance for displaying the Pygame window. Some of the important methods of this module include flip and update. The former is called to make sure that everything drawn is properly displayed on the screen, whereas the latter is used if you just want to update a portion of the screen.

Surface

This module is used to display an image. The instance of Surface represents an image. The

following line of code creates such an instance.

surf = pygame.display.set_mode((800,600))

The API method, display.set_mode, is used to create this instance. The width and height of the window are specified as arguments to this method.

Draw

With the Draw module, one can render several basic shapes within the Surface. Examples

include circles, rectangles, lines, and so on.


Event

This is another important module of PyGame. An event is said to occur when, for instance, the user clicks a mouse button or presses a key and so on. The event information is used to instruct the program to execute in a certain way.

Image

The Image module is used to process images with different file formats. The loaded image is represented by a surface.

Music

pygame.mixer.music provides convenient methods for controlling playback such as play, rewind, stop, and so on.

The following is a simple program that highlights some of the fundamental concepts of animation and game programming. It shows how to display objects in an application window and then interactively modify their positions. We will use PyGame to accomplish this task. Later in this book, we will use a different multimedia framework, Pyglet, for creating animations.

Time for action – a simple application using PyGame

This example will make use of the modules we just discussed. For this application to work, you will need to install PyGame. The binary and source distribution of PyGame is available on Pygame's website.

1. Create a new Python source file and write the following code in it.

1 import pygame
2 import sys
3
4 pygame.init()
5 bgcolor = (200, 200, 100)
6 surf = pygame.display.set_mode((400,400))
7
8 circle_color = (0, 255, 255)
9 x, y = 200, 300
10 circle_rad = 50
11
12 pygame.display.set_caption("My Pygame Window")
13
14 while True:
15     for event in pygame.event.get():
16         if event.type == pygame.QUIT:
17             sys.exit()
18         elif event.type == pygame.KEYDOWN:
19             if event.key == pygame.K_UP:
20                 y -= 10
21             elif event.key == pygame.K_DOWN:
22                 y += 10
23             elif event.key == pygame.K_RIGHT:
24                 x += 10
25             elif event.key == pygame.K_LEFT:
26                 x -= 10
27
28     circle_pos = (x, y)
29
30     surf.fill(bgcolor)
31     pygame.draw.circle(surf, circle_color,
32                        circle_pos, circle_rad)
33     pygame.display.flip()

2. The first line imports the pygame package. On line 4, the modules within this pygame package are initialized. An instance of class Surface is created using the display.set_mode method. This is the main PyGame window inside which the images will be drawn. To ensure that this window is constantly displayed on the screen, we need to add a while loop that will run forever, until the window is closed by the user. In this simple application everything we need is placed inside the while loop. The background color of the PyGame window represented by object surf is set on line 30.

3. A circular shape is drawn in the PyGame surface by the code on line 31. The arguments to draw.circle are (Surface, color, position, radius). This creates a circle at the position specified by the argument circle_pos. The instance of class Surface is sent as the first argument to this method.

4. The code block 16-26 captures certain events. An event occurs when, for instance, a mouse button or a key is pressed. In this example, we instruct the program to do certain things when the arrow keys are pressed. When the RIGHT arrow key is pressed, the circle is drawn with the x coordinate offset by 10 pixels from the previous position. As a result, the circle appears to be moving towards the right whenever you press the RIGHT arrow key. When the PyGame window is closed, the pygame.QUIT event occurs. Here, we simply exit the application by calling sys.exit() as done on line 17.


5. Finally, we need to ensure that everything drawn on the Surface is visible. This is

accomplished by the code on line 31. If you disable this line, incompletely drawn

images may appear on the screen.

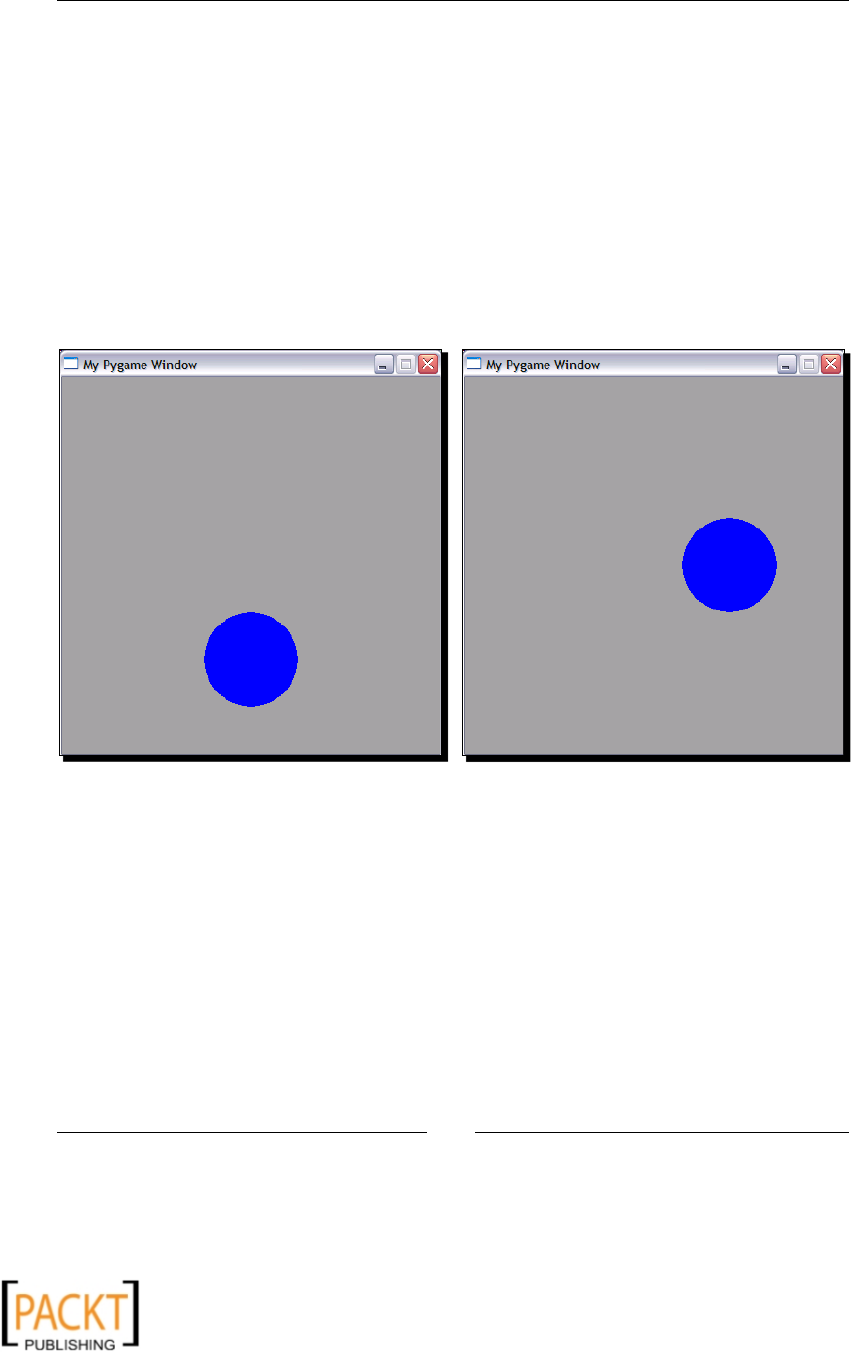

6. Execute the program from a terminal window. It will show a new graphics window

containing a circular shape. If you press the arrow keys on the keyboard, the circle

will move in the direcon indicated by the arrow key. The next illustraon shows the

screenshot of the original circle posion (le) and when it is moved using the UP

and RIGHT arrow keys.

A simple PyGame applicaon with a circle drawn within the Surface (window).

The image on the right side is a screenshot taken aer maneuvering the posion

of the circle with the help of arrow keys:

What just happened?

We used PyGame to create a simple user interactive application. The purpose of this example was to introduce some of the basic concepts behind animation and game programming. It was just a preview of what is coming next! Later in this book we will use the Pyglet framework to create some interesting 2D animations.
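The arrow-key handling in the program above boils down to mapping each key to an offset. Here is a sketch of that logic on its own, without PyGame, so that it can be tried in any interpreter. The string keys stand in for constants such as pygame.K_UP, and the names STEP, MOVES, and move are hypothetical.

```python
STEP = 10   # pixels moved per key press, as in the program above

# Screen coordinates grow to the right and downwards.
MOVES = {
    "UP": (0, -STEP),
    "DOWN": (0, STEP),
    "LEFT": (-STEP, 0),
    "RIGHT": (STEP, 0),
}

def move(position, key):
    """Return the new (x, y) position after one key press."""
    dx, dy = MOVES.get(key, (0, 0))   # unknown keys leave the circle alone
    return (position[0] + dx, position[1] + dy)

pos = (200, 300)
for key in ["UP", "RIGHT"]:
    pos = move(pos, key)
print(pos)
```

A lookup table like this replaces the chain of elif branches and makes it easy to add further keys.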


QT Phonon

When one thinks of a media player, it is almost always associated with a graphical user

interface. Of course one can work with command-line mulmedia players. But a media

player with a GUI is a clear winner as it provides an easy to use, intuive user interface to

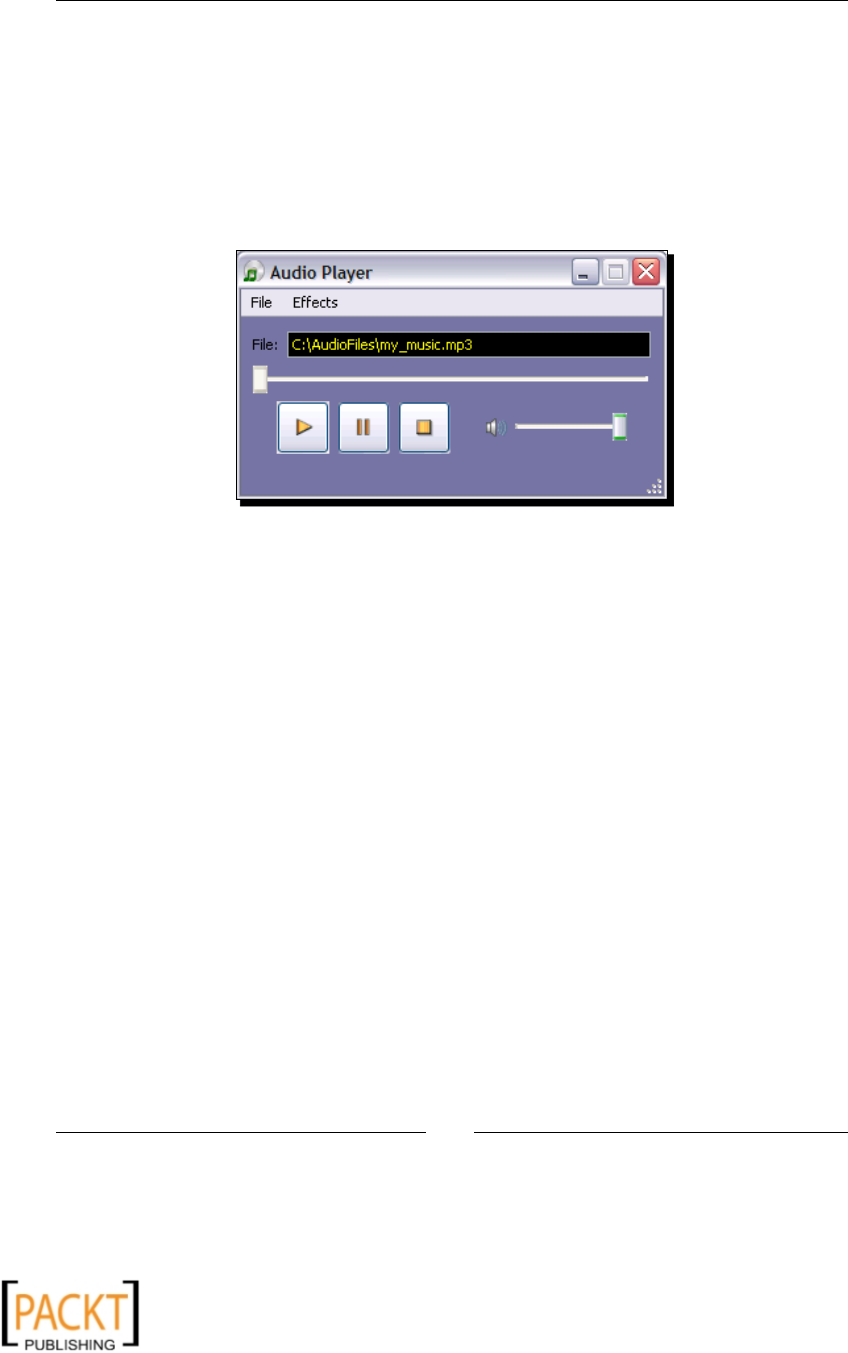

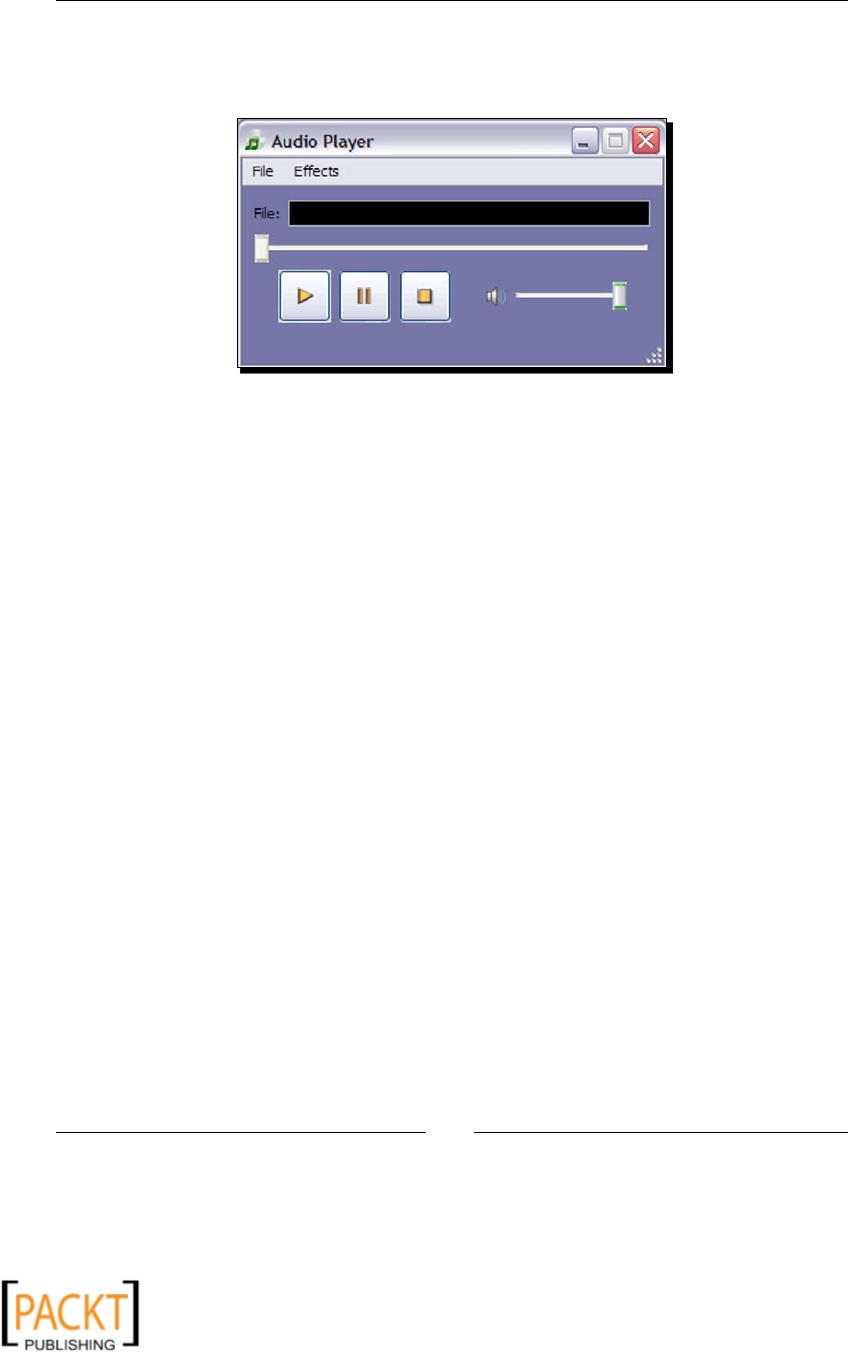

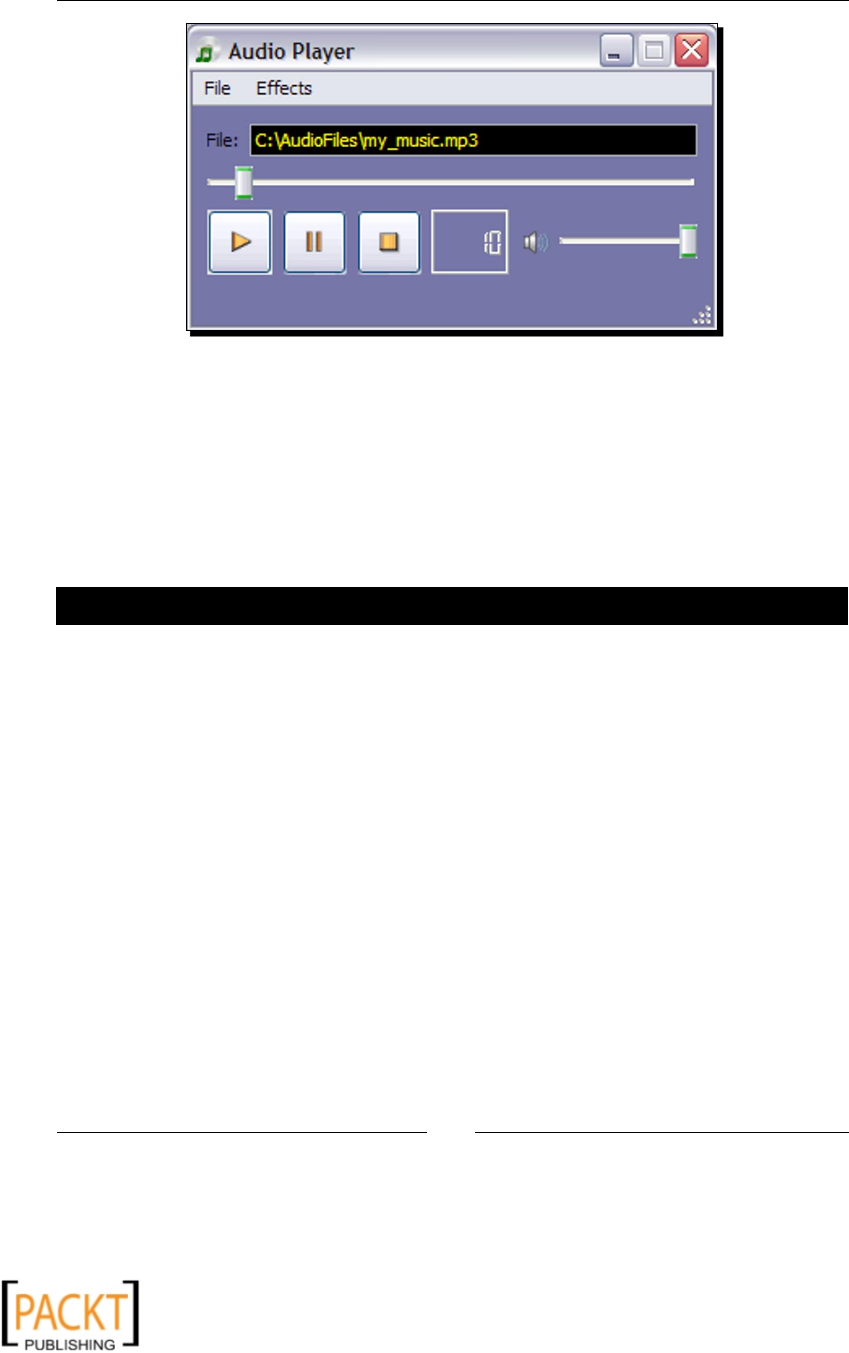

stream a media and control its playback. The next screenshot shows the user interface of an

audio player developed using QT Phonon.

An Audio Player applicaon developed with QT Phonon:

QT is an open source GUI framework. 'Phonon' is a mulmedia package within QT that

supports audio and video playback. Note that, Phonon is meant for simple media player

funconality. For complex audio/video player funconality, you should use mulmedia

frameworks like GStreamer. Phonon depends on a plaorm-specic backend for media

processing. For example, on Windows plaorm the backend framework is DirectShow.

The supported funconality may vary depending on the plaorm.

To develop a media processing applicaon, a media graph is created in Phonon. This media

graph contains various interlinked media nodes. Each media node does a poron of media

processing. For example, an eects node will add an audio eect, such as echo to the media.

Another node will be responsible for outpung the media from an audio or video device

and so on. In chapter 8, we will develop audio and video player applicaons using Phonon

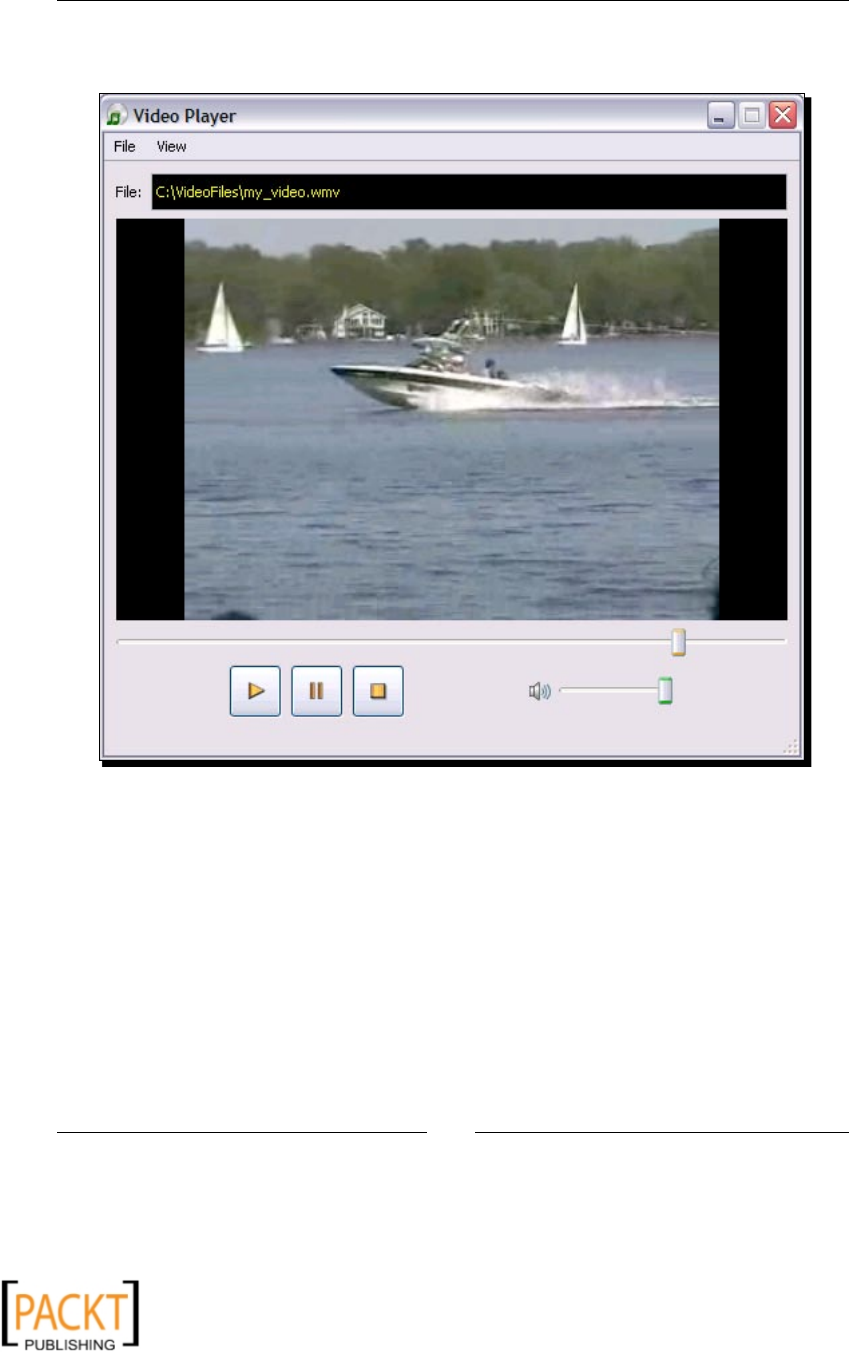

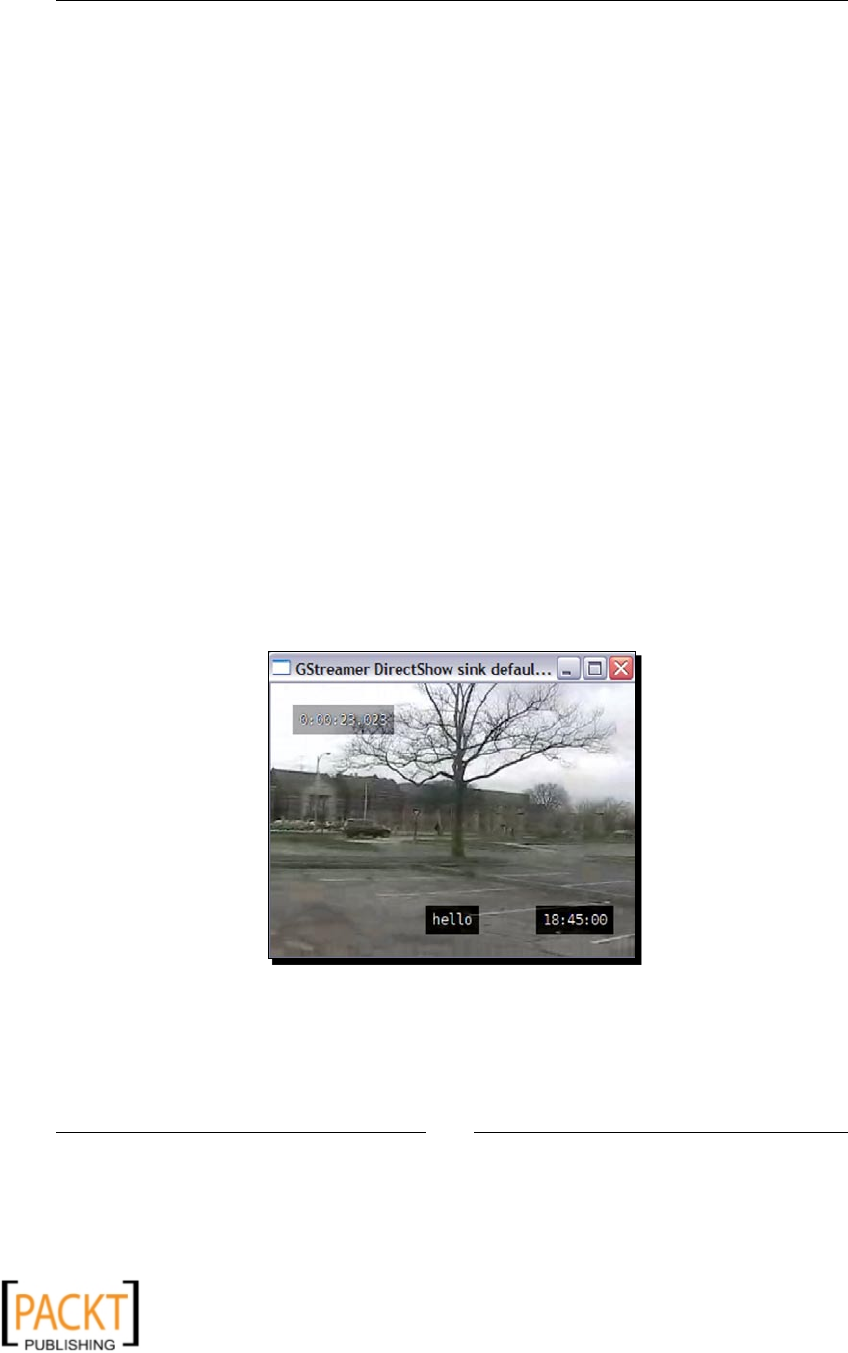

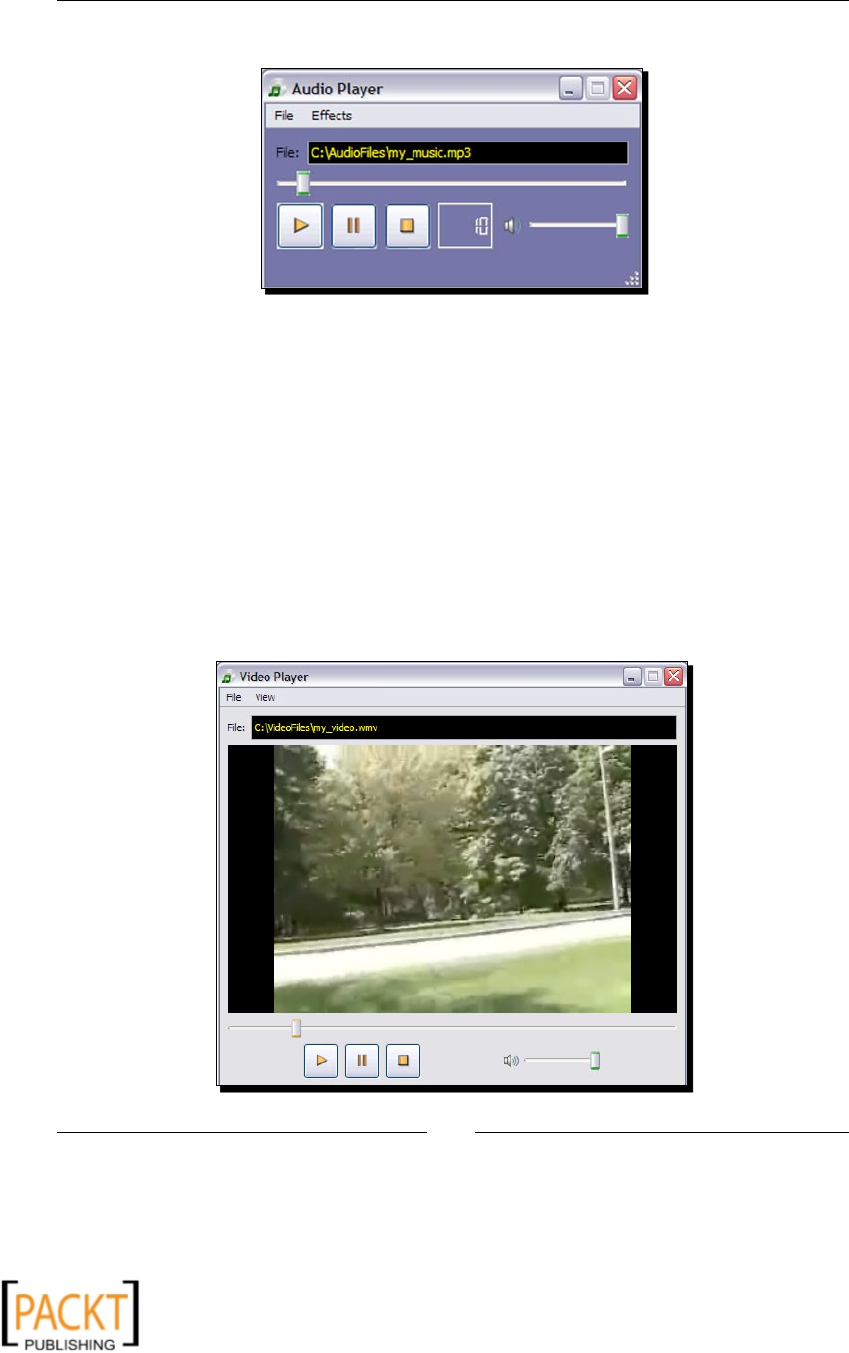

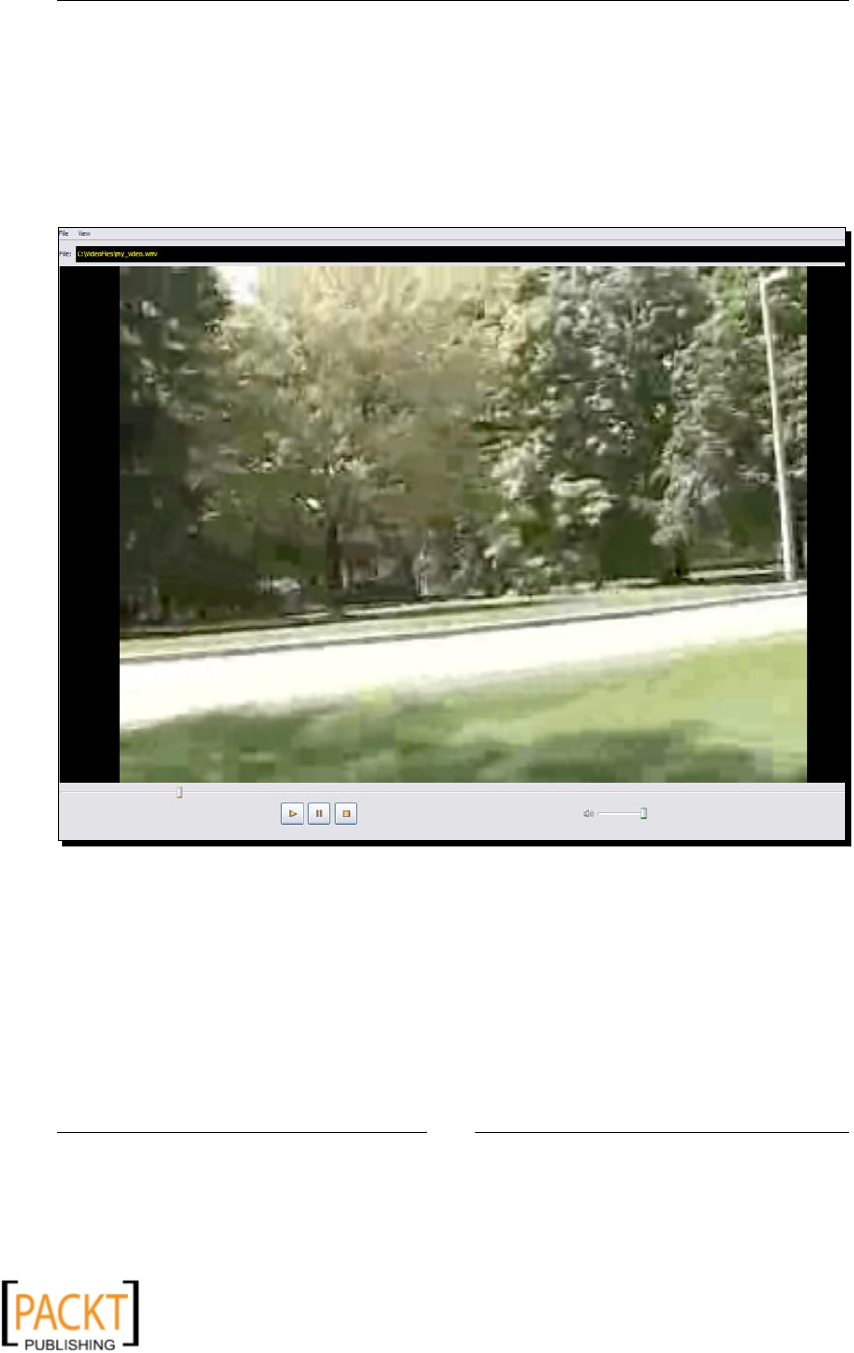

framework. The next illustraon shows a video player streaming a video. It is developed

using QT Phonon. We will be developing this applicaon in Chapter 8.

x

x

Chapter 1

[ 19 ]

Using various built-in modules of QT Phonon, it is very easy to create GUI-based audio

and video players. This example shows a video player in acon:

Other multimedia libraries

Python bindings for several other multimedia libraries are available on various platforms. Some of the popular libraries are mentioned below.

Snack Sound Toolkit

Snack is an audio toolkit that is used to create cross-platform audio applications. It includes audio analysis and input-output functionality and it has support for audio visualization as well. The official website for Snack Sound Toolkit is http://www.speech.kth.se/snack/.


PyAudiere

PyAudiere (http://pyaudiere.org/) is an open source audio library. It provides an API to easily implement the audio functionality in various applications. It is based on Audiere Sound Library.

Summary

This chapter served as an introduction to multimedia processing using Python.

Specifically, in this chapter we covered:

- An overview of multimedia processing. It introduced us to digital image, audio, and video processing.
- We learned about a number of freely available multimedia frameworks that can be used for multimedia processing.

Now that we know what multimedia libraries and frameworks are out there, we're ready to explore these to develop exciting multimedia applications!


2

Working with Images

In this chapter, we will learn basic image conversion and manipulation techniques using the Python Imaging Library. The chapter ends with an exciting project where we create an image processing application.

In this chapter, we shall:

- Learn various image I/O operations for reading and writing images using the Python Imaging Library (PIL)
- With the help of several examples and code snippets, perform some basic manipulations on the image, such as resizing, rotating/flipping, cropping, pasting, and so on
- Write an image-processing application by making use of PIL
- Use the QT library as a frontend (GUI) for this application

So let's get on with it!

Installation prerequisites

Before we jump in to the main chapter, it is necessary to install the following packages.

Python

In this book we will use Python Version 2.6, or to be more specific, Version 2.6.4. It can be downloaded from the following location:

http://python.org/download/releases/


Windows platform

For Windows, just download and install the platform-specific binary distribution of Python 2.6.4.

Other platforms

For other platforms, such as Linux, Python is probably already installed on your machine. If the installed version is not 2.6, build and install it from the source distribution. If you are using a package manager on a Linux system, search for Python 2.6. It is likely that you will find the Python distribution there. Then, for instance, Ubuntu users can install Python from the command prompt as:

$ sudo apt-get install python2.6

Note that for this, you must have administrative permission on the machine on which you are installing Python.

Python Imaging Library (PIL)

We will learn image-processing techniques by making extensive use of the Python Imaging Library (PIL) throughout this chapter. As mentioned in Chapter 1, PIL is an open source library. You can download it from http://www.pythonware.com/products/pil/. Install PIL Version 1.1.6 or later.

Windows platform

For Windows users, installation is straightforward: use the binary distribution PIL 1.1.6 for Python 2.6.

Other platforms

For other platforms, install PIL 1.1.6 from the source. Carefully review the README file in the source distribution for the platform-specific instructions. Libraries listed in the following table are required to be installed before installing PIL from the source. For some platforms like Linux, the libraries provided in the OS should work fine. However, if those do not work, install a pre-built "libraryName-devel" version of the library. For example, for JPEG support, the name will contain "jpeg-devel-", and something similar for the others. This is generally applicable to rpm-based distributions. For Linux flavors like Ubuntu, you can use the following command in a shell window.

$ sudo apt-get install python-imaging


However, you should make sure that this installs Version 1.1.6 or later. Check PIL documentation for further platform-specific instructions. For Mac OSX, see if you can use fink to install these libraries. See http://www.finkproject.org/ for more details. You can also check the website http://pythonmac.org or Darwin ports website http://darwinports.com/ to see if a binary package installer is available. If such a pre-built version is not available for any library, install it from the source.

The PIL prerequisites for installing PIL from source are listed in the following table:

| Library | URL | Version | Installation options (a) or (b) |
|---|---|---|---|
| libjpeg (JPEG support) | http://www.ijg.org/files | 7 or 6a or 6b | (a) Pre-built version. For example: jpeg-devel-7. Check if you can do: sudo apt-get install libjpeg (works on some flavors of Linux). (b) Source tarball. For example: jpegsrc.v7.tar.gz |
| zlib (PNG support) | http://www.gzip.org/zlib/ | 1.2.3 or later | (a) Pre-built version. For example: zlib-devel-1.2.3.. (b) Install from the source. |
| freetype2 (OpenType/TrueType support) | http://www.freetype.org | 2.1.3 or later | (a) Pre-built version. For example: freetype2-devel-2.1.3.. (b) Install from the source. |

PyQt4

This package provides Python bindings for Qt libraries. We will use PyQt4 to generate the GUI for the image-processing application that we will develop later in this chapter. The GPL version is available at: http://www.riverbankcomputing.co.uk/software/pyqt/download.

Windows platform

Download and install the binary distribution pertaining to Python 2.6. For example, the executable file's name could be 'PyQt-Py2.6-gpl-4.6.2-2.exe'. Other than Python, it includes everything needed for GUI development using PyQt.


Other platforms

Before building PyQt, you must install the SIP Python binding generator. For further details, refer to the SIP homepage: http://www.riverbankcomputing.com/software/sip/.

After installing SIP, download and install PyQt 4.6.2 or later, from the source tarball. For Linux/Unix source, the filename will start with PyQt-x11-gpl-.. and for Mac OS X, PyQt-mac-gpl-... Linux users should also check if the PyQt4 distribution is already available through the package manager.

Summary of installation prerequisites

| Package | Download location | Version | Windows platform | Linux/Unix/OS X platforms |
|---|---|---|---|---|
| Python | http://python.org/download/releases/ | 2.6.4 (or any 2.6.x) | Install using binary distribution | (a) Install from binary; also install additional developer packages (for example, with python-devel in the package name in the rpm systems) OR (b) Build and install from the source tarball. (c) MAC users can also check websites such as http://darwinports.com/ or http://pythonmac.org/. |
| PIL | www.pythonware.com/products/pil/ | 1.1.6 or later | Install PIL 1.1.6 (binary) for Python 2.6 | (a) Install prerequisites if needed. Refer to Table #1 and the README file in PIL source distribution. (b) Install PIL from source. (c) MAC users can also check websites like http://darwinports.com/ or http://pythonmac.org/. |
| PyQt4 | http://www.riverbankcomputing.co.uk/software/pyqt/download | 4.6.2 or later | Install using binary pertaining to Python 2.6 | (a) First install SIP 4.9 or later. (b) Then install PyQt4. |


Reading and writing images

To manipulate an existing image, we must open it first for editing and we also require the ability to save the image in a suitable file format after making changes. The Image module in PIL provides methods to read and write images in the specified image file format. It supports a wide range of file formats.

To open an image, use Image.open method. Start the Python interpreter and write the

following code. You should specify an appropriate path on your system as an argument to

the Image.open method.

>>>import Image

>>>inputImage = Image.open("C:\\PythonTest\\image1.jpg")

This will open an image file by the name image1.jpg. If the file can't be opened, an IOError will be raised; otherwise, it returns an instance of class Image.

For saving an image, use the save method of the Image class. Make sure you replace the following string with an appropriate /path/to/your/image/file.

>>>inputImage.save("C:\\PythonTest\\outputImage.jpg")

You can view the image just saved, using the show method of Image class.

>>>outputImage = Image.open("C:\\PythonTest\\outputImage.jpg")

>>>outputImage.show()

Here, it is essentially the same image as the input image, because we did not make any changes to the output image.

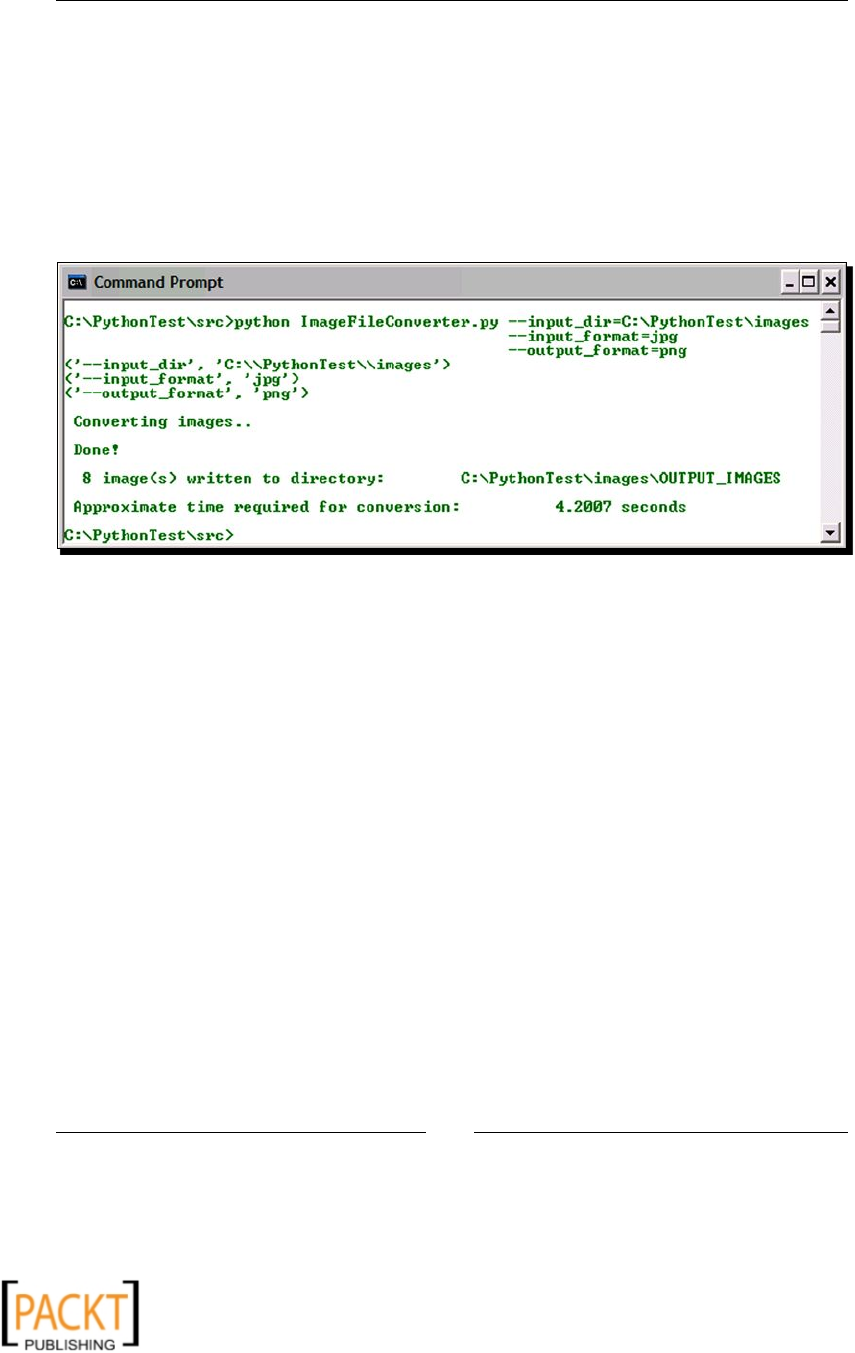

Time for action – image file converter

With this basic information, let's build a simple image file converter. This utility will batch-process image files and save them in a user-specified file format.

To get started, download the file ImageConverter.py from the Packt website, www.packtpub.com. This file can be run from the command line as:

python ImageConverter.py [arguments]

Here, [arguments] are:

- --input_dir: The directory path where the image files are located.
- --input_format: The format of the image files to be converted. For example, jpg.
- --output_dir: The location where you want to save the converted images.
- --output_format: The output image format. For example, jpg, png, bmp, and so on.

The following screenshot shows the image conversion utility in action on Windows XP, that is, running the image converter from the command line.

Here, it will batch-process all the .jpg images within C:\PythonTest\images and save them in png format in the directory C:\PythonTest\images\OUTPUT_IMAGES.

The file defines class ImageConverter. We will discuss the most important methods in this class.

- def processArgs: This method processes all the command-line arguments listed earlier. It makes use of Python's built-in module getopt to process these arguments. Readers are advised to review the code in the file ImageConverter.py in the code bundle of this book for further details on how these arguments are processed.
- def convertImage: This is the workhorse method of the image-conversion utility.

1 def convertImage(self):
2     pattern = "*." + self.inputFormat
3     filetype = os.path.join(self.inputDir, pattern)
4     fileList = glob.glob(filetype)
5     inputFileList = filter(imageFileExists, fileList)
6
7     if not len(inputFileList):
8         print "\n No image files with extension %s located \
9         in dir %s"%(self.inputFormat, self.inputDir)
10         return
11     else:
12         # Record time before beginning image conversion
13         starttime = time.clock()
14         print "\n Converting images.."
15
16     # Save image into specified file format.
17     for imagePath in inputFileList:
18         inputImage = Image.open(imagePath)
19         dir, fil = os.path.split(imagePath)
20         fil, ext = os.path.splitext(fil)
21         outPath = os.path.join(self.outputDir,
22                                fil + "." + self.outputFormat)
23         inputImage.save(outPath)
24
25     endtime = time.clock()
26     print "\n Done!"
27     print "\n %d image(s) written to directory:\
28     %s" %(len(inputFileList), self.outputDir)
29     print "\n Approximate time required for conversion: \
30     %.4f seconds" % (endtime - starttime)

Now let's review the preceding code.

1. Our first task is to get a list of all the image files to be saved in a different format. This is achieved by using the glob module in Python. Line 4 in the code snippet finds all the file path names that match the pattern specified by the local variable filetype. On line 5, we check whether the image file in fileList exists. This operation can be efficiently performed over the whole list using the built-in filter functionality in Python.

2. The code block between lines 7 to 14 ensures that one or more images exist. If so, it will record the time before beginning the image conversion.

3. The next code block (lines 17-23) carries out the image file conversion. On line 18, we use Image.open to open the image file. Line 18 creates an Image object. Then the appropriate output path is derived and finally the output image is saved using the save method of the Image class.
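The output path derivation on lines 19-22 can be tried in isolation, since it only needs the os.path functions. The helper name output_path and the directory names below are hypothetical.

```python
import os

def output_path(image_path, output_dir, output_format):
    """Build the path of the converted file inside output_dir."""
    file_name = os.path.split(image_path)[1]        # drop the directory part
    base_name = os.path.splitext(file_name)[0]      # drop the old extension
    return os.path.join(output_dir, base_name + "." + output_format)

source = os.path.join("images", "image1.jpg")
print(output_path(source, "OUTPUT_IMAGES", "png"))
```

Only the extension and the directory change; the base name of each image is carried over to the converted file.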

What just happened?

In this simple example, we learned how to open and save image files in a specified image format. We accomplished this by writing an image file converter that batch-processes the image files in a specified directory. We used PIL's Image.open and Image.save functionality along with Python's glob module and the built-in filter function.
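The argument handling is not listed in this chapter, so the following is only a sketch of how the four options could be read with getopt. It is not the code from ImageConverter.py; the function name process_args and the returned dictionary are assumptions made for illustration.

```python
import getopt

def process_args(argv):
    """Parse the converter's long options into a dictionary."""
    # A trailing "=" tells getopt that the option takes a value.
    long_options = ["input_dir=", "input_format=",
                    "output_dir=", "output_format="]
    opts, extra = getopt.getopt(argv, "", long_options)
    settings = {}
    for name, value in opts:
        settings[name.lstrip("-")] = value
    return settings

args = ["--input_dir", "images", "--input_format", "jpg",
        "--output_dir", "out", "--output_format", "png"]
parsed = process_args(args)
print(parsed["input_format"], parsed["output_format"])
```

In a script, the list passed in would normally be sys.argv[1:].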

Now we will discuss other key aspects related to image reading and writing.


Creating an image from scratch

So far we have seen how to open an existing image. What if we want to create our own image? As an example, if you want to create fancy text as an image, the functionality that we are going to discuss now comes in handy. Later in this book, we will learn how to use such an image containing some text to embed into another image. The basic syntax for creating a new image is:

foo = Image.new(mode, size, color)

Here, new is the built-in method of class Image. Image.new takes three arguments, namely, mode, size, and color. The mode argument is a string that gives information about the number and names of image bands. The most common values for the mode argument are L (gray scale) and RGB (true color). The size is a tuple specifying dimensions of the image in pixels, whereas color is an optional argument. It can be assigned an RGB value (a 3-tuple) if it's a multi-band image. If it is not specified, the image is filled with black color.

Time for action – creating a new image containing some text

As already stated, it is often useful to generate an image containing only some text or a common shape. Such an image can then be pasted onto another image at a desired angle and location. We will now create an image with text that reads, "Not really a fancy text!"

1. Write the following code in a Python source file:

1 import Image

2 import ImageDraw

3 txt = "Not really a fancy text!"

4 size = (150, 50)

5 color = (0, 100, 0)

6 img = Image.new('RGB', size, color)

7 imgDrawer = ImageDraw.Draw(img)

8 imgDrawer.text((5, 20), txt)

9 img.show()

2. Let's analyze the code line by line. The first two lines import the necessary modules from PIL. The variable txt is the text we want to include in the image. On line 6, the new image is created using Image.new. Here we specify the mode and size arguments. The optional color argument is specified as a tuple with RGB values pertaining to the "dark green" color.


3. The ImageDraw module in PIL provides graphics support for an Image object. The function ImageDraw.Draw takes an image object as an argument to create a Draw instance. In our code, it is called imgDrawer, as used on line 7. This Draw instance enables drawing various things in the given image.

4. On line 8, we call the text method of the Draw instance and supply the position (a tuple) and the text (stored in the string txt) as arguments.

5. Finally, the image can be viewed using the img.show() call. You can optionally save the image using the Image.save method. The following screenshot shows the resultant image.

What just happened?

We just learned how to create an image from scratch. An empty image was created using the

Image.new method. Then, we used the ImageDraw module in PIL to add text to this image.

Reading images from archive

If the image is part of an archived container, for example, a TAR archive, we can use the

TarIO module in PIL to open it and then call Image.open to pass this TarIO instance

as an argument.

Time for action – reading images from archives

Suppose there is an archive file images.tar containing the image file image1.jpg. The following code snippet shows how to read image1.jpg from the tarball.

>>>import TarIO

>>>import Image

>>>fil = TarIO.TarIO("images.tar", "images/image1.jpg")

>>>img = Image.open(fil)

>>>img.show()

What just happened?

We learned how to read an image located in an archived container.
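The same idea, pulling one member out of a tarball as a file-like object, can also be sketched with Python's own tarfile module. This is a different technique from PIL's TarIO, shown here because it runs without any image on disk: the archive is built in memory and the payload bytes are a hypothetical stand-in for real image data.

```python
import io
import tarfile

payload = b"stand-in for the bytes of image1.jpg"

# Build a small tar archive in memory with a single member.
buffer = io.BytesIO()
archive = tarfile.open(fileobj=buffer, mode="w")
info = tarfile.TarInfo(name="images/image1.jpg")
info.size = len(payload)
archive.addfile(info, io.BytesIO(payload))
archive.close()

# Reopen the archive and read the member back by its name.
buffer.seek(0)
archive = tarfile.open(fileobj=buffer, mode="r")
member = archive.extractfile("images/image1.jpg")
data = member.read()
archive.close()
print(data == payload)
```

With a real archive, the object returned by extractfile is file-like, so it should be possible to hand it to Image.open in the same way as the TarIO instance above.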


Have a go hero – add new features to the image file converter

Modify the image conversion code so that it supports the following new functionality, which:

1. Takes a ZIP file containing images as input

2. Creates a TAR archive of the converted images

Basic image manipulations

Now that we know how to open and save images, let's learn some basic techniques to manipulate images. PIL supports a variety of geometric manipulation operations, such as resizing an image, rotating it by an angle, flipping it top to bottom or left to right, and so on. It also facilitates operations such as cropping, cutting and pasting pieces of images, and so on.

Resizing

Changing the dimensions of an image is one of the most frequently used image manipulation operations. Image resizing is accomplished using Image.resize in PIL. The following line of code explains how it is achieved.

foo = img.resize(size, filter)

Here, img is an image (an instance of class Image) and the result of the resizing operation is stored in foo (another instance of class Image). The size argument is a tuple (width, height). Note that the size is specified in pixels. Thus, resizing the image means modifying the number of pixels in the image. This is also known as image re-sampling. The Image.resize method also takes filter as an optional argument. A filter is an interpolation algorithm used while re-sampling the given image. It handles the deletion or addition of pixels during re-sampling, when the resize operation is intended to make the image smaller or larger in size respectively. There are four filters available. The resize filters in increasing order of quality are NEAREST, BILINEAR, BICUBIC, and ANTIALIAS. The default filter option is NEAREST.
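To make the idea of re-sampling concrete, here is a minimal pure-Python sketch of nearest-neighbor interpolation on a small grid of pixel values. It is not PIL's implementation. The function name resize_nearest and the list-of-rows image representation are assumptions made purely for illustration.

```python
def resize_nearest(pixels, size):
    """Resample a list-of-rows image to size = (width, height).

    Illustrative only: each output pixel is copied from the single
    closest source pixel, which is the idea behind a NEAREST filter.
    """
    new_w, new_h = size
    old_h, old_w = len(pixels), len(pixels[0])
    result = []
    for y in range(new_h):
        # Map the center of each output pixel back to a source row.
        src_y = min(int((y + 0.5) * old_h / new_h), old_h - 1)
        row = []
        for x in range(new_w):
            src_x = min(int((x + 0.5) * old_w / new_w), old_w - 1)
            row.append(pixels[src_y][src_x])
        result.append(row)
    return result


small = [[1, 2],
         [3, 4]]
enlarged = resize_nearest(small, (4, 4))     # pixels are added by duplication
reduced = resize_nearest(enlarged, (2, 2))   # pixels are deleted
```

The smoother filters differ in that each output pixel is computed from several neighboring source pixels rather than copied from just one, which costs more processing time but avoids the blocky look.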

Time for action – resizing

Let's now resize images by modifying their pixel dimensions and applying various filters for re-sampling.

1. Download the file ImageResizeExample.bmp from the Packt website. We will use this as the reference file to create scaled images. The original dimensions of ImageResizeExample.bmp are 200 x 212 pixels.

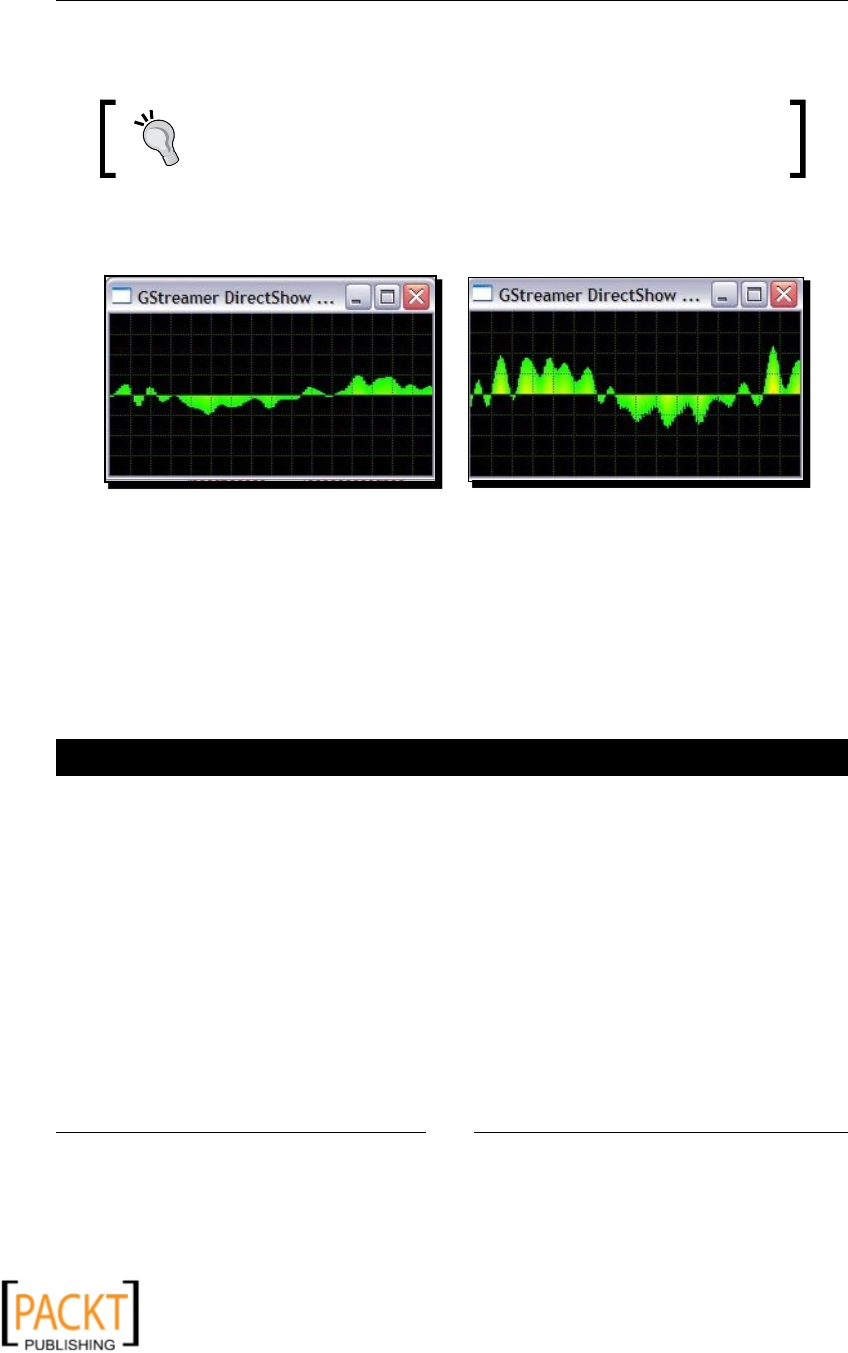

2. Write the following code in a file or in the Python interpreter. Replace the inPath and outPath strings with the appropriate image path on your machine.

1 import Image

2 inPath = "C:\\images\\ImageResizeExample.jpg"

3 img = Image.open(inPath)

4 width , height = (160, 160)

5 size = (width, height)

6 foo = img.resize(size)

7 foo.show()

8 outPath = "C:\\images\\foo.jpg"

9 foo.save(outPath)

3. The image specified by inPath will be resized and saved as the image specified by outPath. Line 6 in the code snippet does the resizing job and finally we save the new image on line 9. You can see how the resized image looks by calling foo.show().

4. Let's now specify the filter argument. In the following code, on line 14, the filterOpt argument is specified in the resize method. The valid filter options are specified as values in the dictionary filterDict. The keys of filterDict are used as the filenames of the output images. The four images thus obtained are compared in the next illustration. You can clearly notice the difference between the ANTIALIAS image and the others (particularly, look at the flower petals in these images). When the processing time is not an issue, choose the ANTIALIAS filter option as it gives the best quality image.

1 import Image
2 inPath = "C:\\images\\ImageResizeExample.jpg"
3 img = Image.open(inPath)
4 width, height = (160, 160)
5 size = (width, height)
6 filterDict = {'NEAREST': Image.NEAREST,
7               'BILINEAR': Image.BILINEAR,
8               'BICUBIC': Image.BICUBIC,
9               'ANTIALIAS': Image.ANTIALIAS}
10
11 for k in filterDict.keys():
12     outPath = "C:\\images\\" + k + ".jpg"
13     filterOpt = filterDict[k]
14     foo = img.resize(size, filterOpt)
15     foo.save(outPath)

The resized images with different filter options appear as follows. Clockwise from left, Image.NEAREST, Image.BILINEAR, Image.BICUBIC, and Image.ANTIALIAS:

5. The resize functionality illustrated here, however, doesn't preserve the aspect ratio of the resulting image. The image will appear distorted if one dimension is stretched more or stretched less in comparison with the other dimension. PIL's Image module provides another built-in method, Image.thumbnail, to fix this. It will override the larger of the two dimensions, such that the aspect ratio of the image is maintained.

import Image
inPath = "C:\\images\\ResizeImageExample.jpg"
img = Image.open(inPath)
width, height = (100, 50)
size = (width, height)
outPath = "C:\\images\\foo.jpg"
# thumbnail() resizes img in place, keeping its aspect ratio
img.thumbnail(size, Image.ANTIALIAS)
img.save(outPath)

6. This code will override the maximum pixel dimension value (width in this case) specified by the programmer and replace it with a value that maintains the aspect ratio of the image. In this case, we have an image with pixel dimensions (47, 50). The resultant images are compared in the following illustration.

It shows the comparison of output images for methods Image.thumbnail and Image.resize.
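The (47, 50) result can be reproduced with a little integer arithmetic, assuming the source is the 200 x 212 pixel image from step 1. The sketch below is one way such a size could be computed and is not a copy of PIL's code. The function name fit_within is hypothetical, and a given library version may round slightly differently.

```python
def fit_within(original, box):
    """Shrink original = (width, height) to fit inside box, keeping the ratio.

    Hypothetical helper for illustration. It never enlarges the image.
    """
    width, height = original
    max_w, max_h = box
    if width > max_w:
        # Scale the height by the same factor that brings width to max_w.
        height = max(height * max_w // width, 1)
        width = max_w
    if height > max_h:
        # Still too tall, so scale the width down to match max_h.
        width = max(width * max_h // height, 1)
        height = max_h
    return width, height


# 200 x 212 source image, requested size (100, 50)
print(fit_within((200, 212), (100, 50)))   # (47, 50)
```

Fitting the width first gives (100, 106), which is still taller than 50 pixels, so the width is reduced again to 100 * 50 // 106 = 47. That is why the requested width of 100 is the value that gets overridden.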

What just happened?

We just learned how image resizing is done using PIL's Image module, by writing a few lines of code. We also learned different types of filters used in image resizing (re-sampling). And finally, we also saw how to resize an image while still keeping the aspect ratio intact (that is, without distortion), using the Image.thumbnail method.

Rotating

Like image resizing, rotating an image about its center is another commonly performed transformation. For example, in a composite image, one may need to rotate the text by certain degrees before embedding it in another image. For such needs, there are methods such as rotate and transpose available in PIL's Image module. The basic syntax to rotate an image using Image.rotate is as follows:

foo = img.rotate(angle, filter)

Where, the angle is provided in degrees and filter, the optional argument, is the image re-sampling filter. The valid filter value can be NEAREST, BILINEAR, or BICUBIC. You can rotate the image using Image.transpose only for 90-, 180-, and 270-degree rotation angles.

Time for action – rotating

1. Download the file Rotate.png from the Packt website. Alternatively, you can use any supported image file of your choice.

2. Write the following code in the Python interpreter or in a Python file. As always, specify the appropriate path strings for the inPath and outPath variables.

import Image
inPath = "C:\\images\\Rotate.png"
img = Image.open(inPath)
deg = 45                      # rotation angle in degrees
filterOpt = Image.BICUBIC     # re-sampling filter
outPath = "C:\\images\\Rotate_out.png"
foo = img.rotate(deg, filterOpt)
foo.save(outPath)

3. Upon running this code, the output image, rotated by 45 degrees, is saved to outPath. The filter option Image.BICUBIC gives the highest quality among the filters accepted by rotate. The next illustration shows the original image and the images rotated by 45 and 180 degrees respectively.

4. There is another way to accomplish rotation for certain angles by using the Image.transpose functionality. The following code achieves a 270-degree rotation. Other valid options for rotation are Image.ROTATE_90 and Image.ROTATE_180.

import Image

inPath = "C:\\images\\Rotate.png"

img = Image.open(inPath)

outPath = "C:\\images\\Rotate_out.png"

foo = img.transpose(Image.ROTATE_270)

foo.save(outPath)
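Rotations by multiples of 90 degrees need no re-sampling filter, because every pixel lands exactly on another pixel position. A minimal pure-Python sketch of that idea on a list-of-rows grid follows. The helper names are hypothetical and this is not how PIL implements transpose. The rotation direction is counterclockwise, which is the direction PIL uses for positive angles.

```python
def rotate_90(grid):
    """Rotate a list-of-rows grid 90 degrees counterclockwise."""
    # zip(*grid) turns columns into rows, and reversing the row order
    # moves the rightmost column to the top.
    return [list(column) for column in zip(*grid)][::-1]


def rotate_180(grid):
    """Two quarter turns."""
    return rotate_90(rotate_90(grid))


def rotate_270(grid):
    """Three quarter turns (equivalently, 90 degrees clockwise)."""
    return rotate_90(rotate_180(grid))


grid = [[1, 2, 3],
        [4, 5, 6]]
print(rotate_90(grid))    # [[3, 6], [2, 5], [1, 4]]
```

Note that a quarter turn swaps the width and height of the grid, while arbitrary angles such as 45 degrees place pixels between grid positions and therefore need an interpolation filter.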

What just happened?

In the previous section, we used Image.rotate to accomplish rotating an image by the desired angle. The image filter Image.BICUBIC was used to obtain a better quality output image after rotation. We also saw how Image.transpose can be used for rotating the image by certain angles.

Flipping

There are multiple ways in PIL to flip an image horizontally or vertically. One way to achieve this is using the Image.transpose method. Another option is to use the functionality from the ImageOps module. This module makes the image-processing job even easier with some ready-made methods. However, note that the PIL documentation for Version 1.1.6 states that ImageOps is still an experimental module.

Time for action – flipping

Imagine that you are building a symmetric image using a bunch of basic shapes. To create such an image, an operation that can flip (or mirror) the image would come in handy. So let's see how image flipping can be accomplished.

1. Write the following code in a Python source file.

1 import Image

2 inPath = "C:\\images\\Flip.png"

3 img = Image.open(inPath)

4 outPath = "C:\\images\\Flip_out.png"

5 foo = img.transpose(Image.FLIP_LEFT_RIGHT)

6 foo.save(outPath)

2. In this code, the image is flipped horizontally by calling the transpose method. To flip the image vertically, replace line 5 in the code with the following:

foo = img.transpose(Image.FLIP_TOP_BOTTOM)

3. The following illustration shows the output of the preceding code when the image is flipped horizontally and vertically.

4. The same effect can be achieved using the ImageOps module. To flip the image horizontally, use ImageOps.mirror, and to flip the image vertically, use ImageOps.flip.

import ImageOps

# Flip image horizontally

foo1 = ImageOps.mirror(img)

# Flip image vertically

foo2 = ImageOps.flip(img)
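What the two flips do to the pixel data can be sketched on a small list-of-rows grid. The functions below are plain Python stand-ins that borrow the names mirror and flip for readability. They are assumptions for illustration, not the ImageOps implementations.

```python
def mirror(grid):
    """Flip left to right: reverse the pixels within each row."""
    return [row[::-1] for row in grid]


def flip(grid):
    """Flip top to bottom: reverse the order of the rows."""
    return [list(row) for row in grid[::-1]]


grid = [[1, 2],
        [3, 4]]
print(mirror(grid))   # [[2, 1], [4, 3]]
print(flip(grid))     # [[3, 4], [1, 2]]
```

Applying either operation twice returns the original grid, and applying both in sequence is the same as a 180-degree rotation.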

What just happened?

With the help of example, we learned how to ip an image horizontally or vercally using

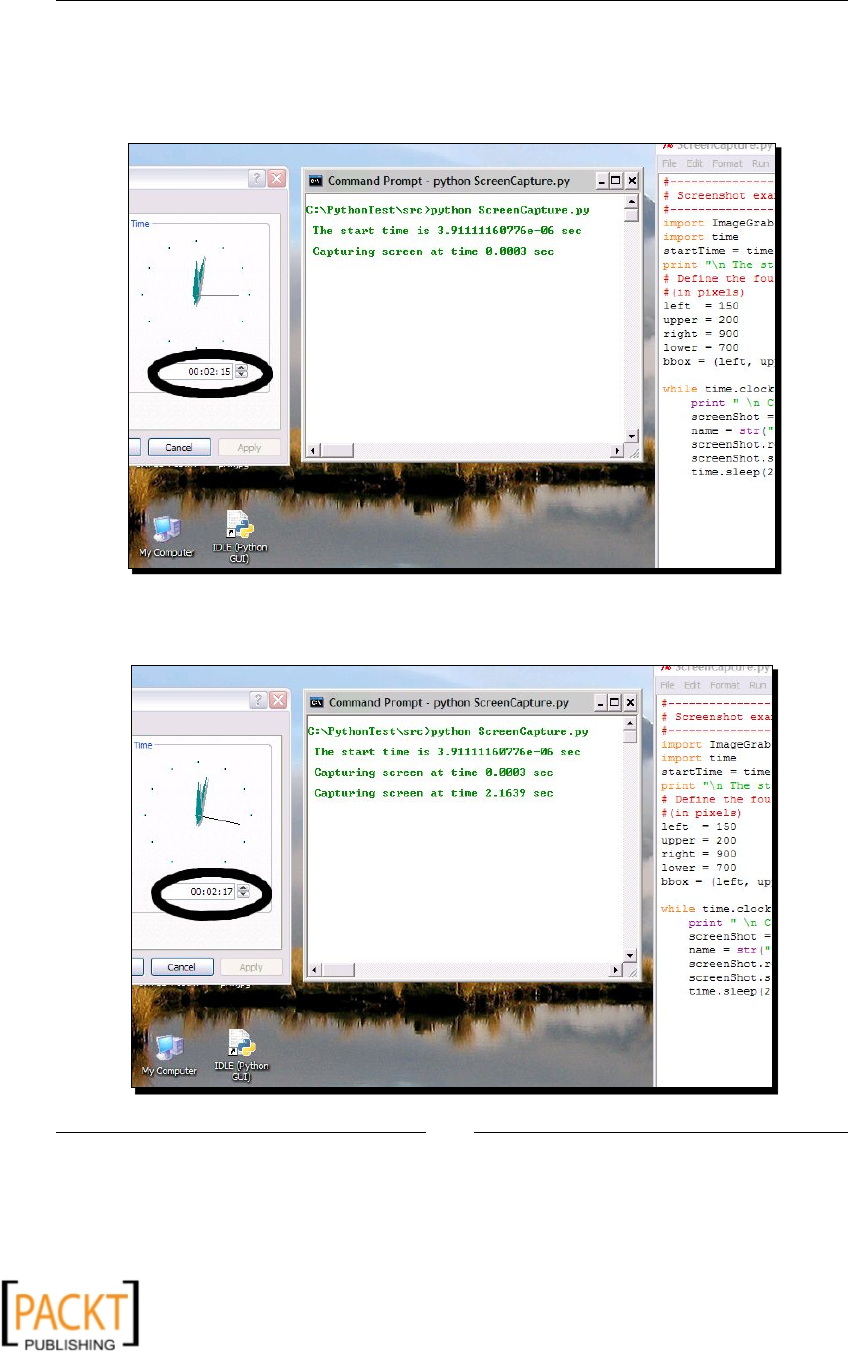

Image.transpose and also by using methods in class ImageOps. This operaon will be