Quick Fatigue Tool User Guide

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 266 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- User Guide

- Version Information

- Acknowledgements

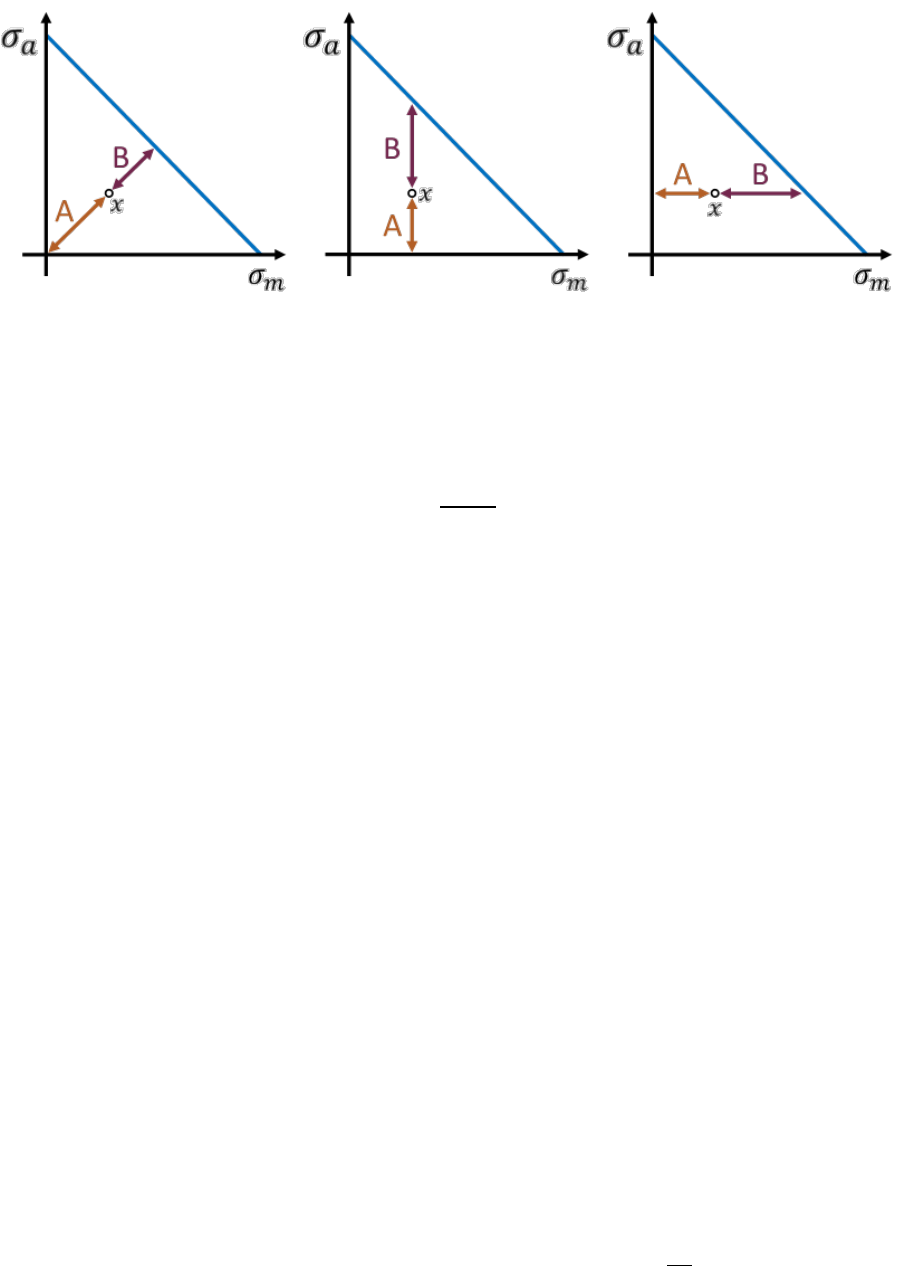

- 1. Introduction

- 2. Getting started

- 3. Defining fatigue loadings

- 4. Analysis techniques

- 5. Materials

- 6. Analysis algorithms

- 6.1 Background

- 6.2 Stress-based Brown-Miller

- 6.3 Normal Stress

- 6.4 Findley’s Method

- 6.5 Stress Invariant Parameter

- 6.6 BS 7608 Fatigue of Welded Steel Joints

- 6.6.1 Overview

- 6.6.2 Derivation of the ,𝑺-𝒓.−𝑵 curve

- 6.6.3 Analysis of axially loaded bolts

- 6.6.4 Effect of the characteristic length

- 6.6.5 Effect of stress relief

- 6.6.6 Effect of small cycles

- 6.6.7 Effect of large cycles

- 6.6.8 Effect of exposure to sea water

- 6.6.9 Failure mode

- 6.6.10 Specifying the ,𝑺-𝒓.−𝑵 curve as a BS 7608 weld class

- 6.6.11 Specifying the ,𝑺-𝒓.−𝑵 curve as user data

- 6.6.12 Compatibility with other features

- 6.6.13 Configuring the analysis parameters

- 6.7 NASALIFE

- 6.8 Uniaxial Stress-Life

- 6.9 Uniaxial Strain-Life

- 6.10 User-defined algorithms

- 7. Mean stress corrections

- 8. Safety factor analysis

- 9. Job and environment files

- 10. Output

- 10.1 Background

- 10.2 Output variables

- 10.3 Viewing output

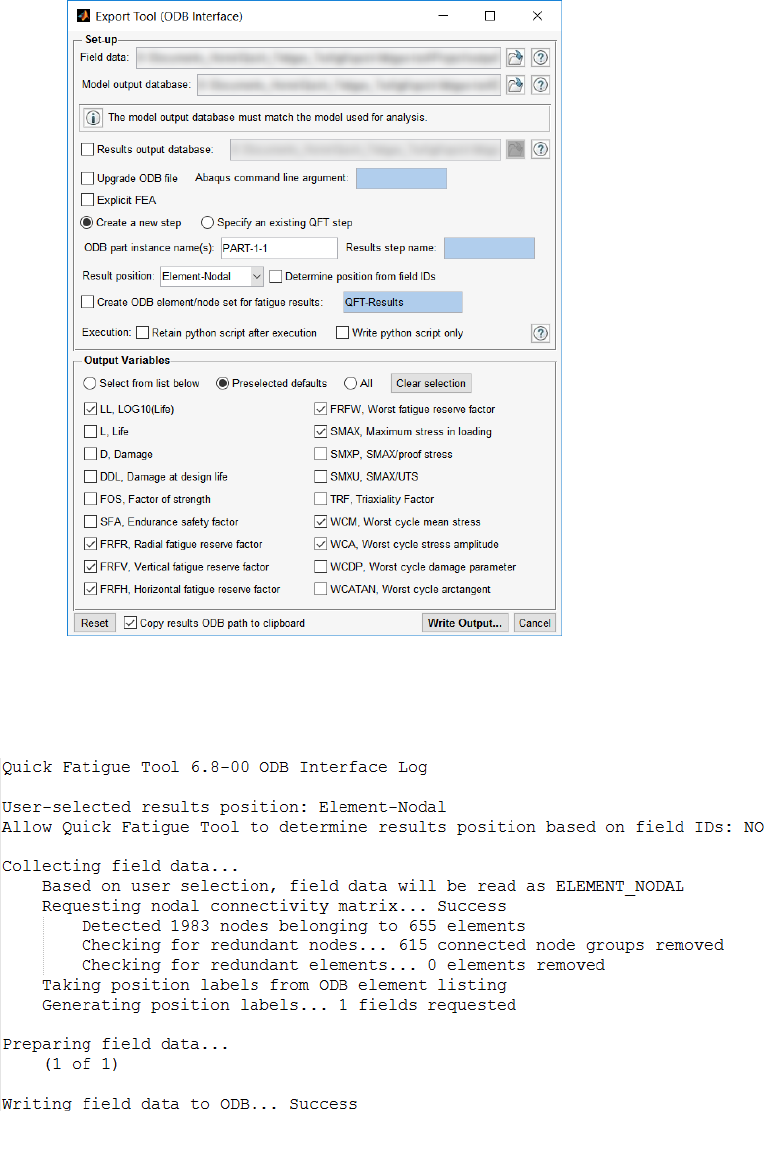

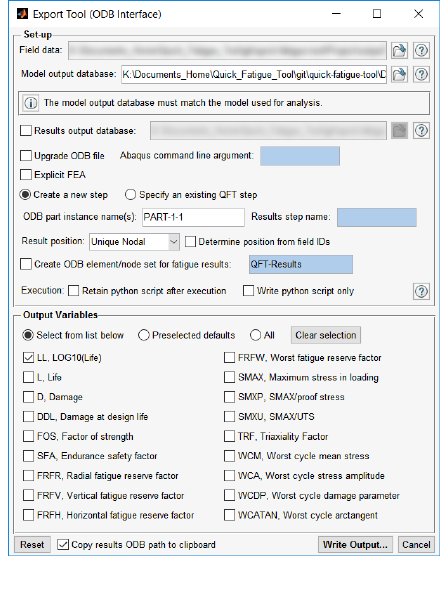

- 10.4 The ODB Interface

- 10.4.1 Overview

- 10.4.2 Accessing the ODB interface via the Export Tool

- 10.4.3 Enabling the ODB interface via the environment file

- 10.4.4 Configuring the ODB interface

- 10.4.5 Mismatching ODB files

- 10.4.6 Large ODB files

- 10.4.7 Exporting field data to multiple Abaqus ODB part instances

- 10.4.8 Minimum requirement for output

- 11. FEA Modelling techniques

- 12. Supplementary analysis procedures

- 12.1 Background

- 12.2 Yield criteria

- 12.3 Composite failure criteria

- 12.3.1 Overview

- 12.3.2 Conventions for fibre-reinforced composites

- 12.3.3 Conventions for closed cell PVC foam

- 12.3.4 Material properties

- 12.3.5 Model definition

- 12.3.6 Loading definition

- 12.3.7 Maximum stress theory

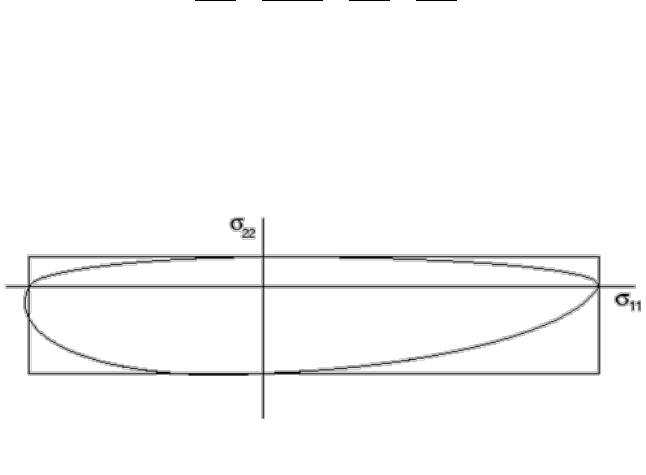

- 12.3.8 Tsai-Hill theory

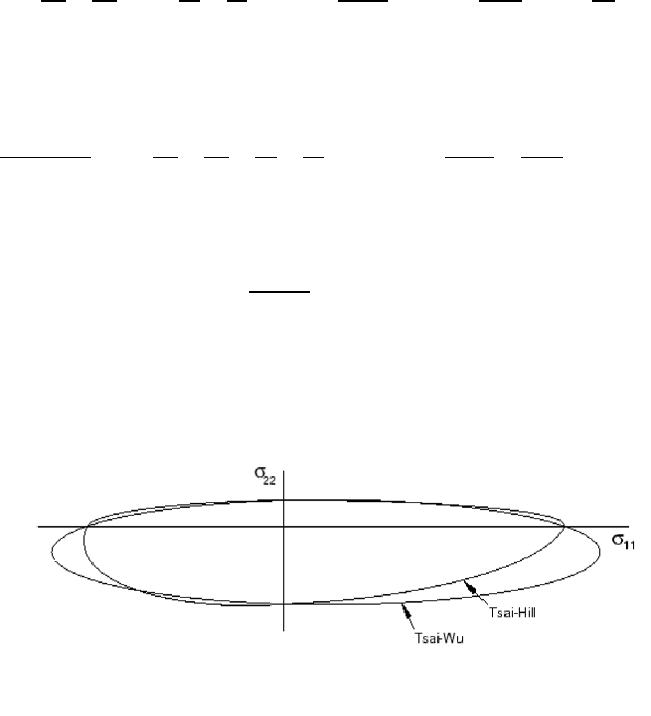

- 12.3.9 Tsai-Wu theory for fibre-reinforced composites

- 12.3.10 Tsai-Wu theory for closed cell PVC foam

- 12.3.11 Azzi-Tsai-Hill theory

- 12.3.12 Maximum strain failure theory

- 12.3.13 Hashin’s damage initiation criteria

- 12.3.14 LaRC05 damage initiation criteria

- 12.3.15 Output

- 12.3.16 Example Usage

- 13. Tutorial A: Analysis of a welded plate with Abaqus

- 14. Tutorial B: Complex loading of an exhaust manifold

- Appendix I. Fatigue analysis techniques

- Appendix II. Materials data generation

- Appendix III. Gauge fatigue toolbox

- References

1

QUICK FATIGUE TOOL FOR MATLAB®

Multiaxial Fatigue Analysis Code for Finite Element Models

User Guide

© Louis Vallance 2018

2

3

Version Information

Documentation revision: 60 [17/01/2018]

Concurrent code release: 6.11-10

Acknowledgements

Quick Fatigue Tool is a free, independent multiaxial fatigue analysis project. The author would like to

acknowledge the following people for making this work possible:

Dr.-Ing. Anders Winkler, SPE

Senior Technical Specialist

SIMULIA Nordics

Sweden

Technical Advice and collaboration

Fatigue materials data

Giovanni Morais Teixeira

Durability Technology Senior Manager

SIMULIA UK

United Kingdom

Technical advice and collaboration

Eli Billauer

Freelance Electrical Engineer

Isreal

Providing the code for the peak-valley

detection algorithm

Adam Nieslony

Professor of Mechanical Engineering

Opole University of Technology

Poland

Providing the code for the alternative

peak-picking method

Joni Keski-Rahkonen

Senior R&D Engineer

Rolls-Royce Oy Ab

Finland

Providing assistance with the critical

plane code

Bruno Luong

Providing the code for Cardan’s formula

which computes Eigenvalues for

multidimensional tensor arrays

4

Contents

1. Introduction .................................................................................................................................... 7

1.1 Overview ................................................................................................................................. 7

1.2 The stress-life methodology ................................................................................................... 8

1.3 The strain-life methodology .................................................................................................... 8

1.4 Why fatigue from FEA? ........................................................................................................... 9

1.5 Overview of syntax ................................................................................................................ 12

1.6 Required toolboxes ............................................................................................................... 13

1.7 Limitations............................................................................................................................. 14

1.8 Additional notes .................................................................................................................... 16

2. Getting started .............................................................................................................................. 17

2.1 Preparing the application ...................................................................................................... 17

2.2 How the application handles variables ................................................................................. 17

2.3 File structure ......................................................................................................................... 18

2.4 Configuring and running an analysis ..................................................................................... 19

2.5 The analysis method ............................................................................................................. 28

3. Defining fatigue loadings .............................................................................................................. 29

3.1 Loading methods ................................................................................................................... 29

3.2 Creating a stress dataset file ................................................................................................. 35

3.3 Creating a load history .......................................................................................................... 39

3.4 Load modulation ................................................................................................................... 42

3.5 High frequency loadings ....................................................................................................... 43

3.6 The dataset processor ........................................................................................................... 47

4. Analysis techniques ....................................................................................................................... 50

4.1 Background ........................................................................................................................... 50

4.2 Treatment of nonlinearity ..................................................................................................... 50

4.3 Surface finish and notch sensitivity ...................................................................................... 52

4.4 In-plane residual stress ......................................................................................................... 65

4.5 Analysis speed control .......................................................................................................... 66

4.6 Analysis groups ..................................................................................................................... 78

4.7 S-N knock-down factors ........................................................................................................ 87

4.8 Analysis continuation techniques ......................................................................................... 92

4.9 Virtual strain gauges ............................................................................................................. 97

5

5. Materials ..................................................................................................................................... 101

5.1 Background ......................................................................................................................... 101

5.2 Material databases ............................................................................................................. 102

5.3 Using material data for analysis .......................................................................................... 103

5.4 Creating materials using the Material Manager ................................................................. 104

5.5 Creating materials from a text file ...................................................................................... 105

5.6 General material properties ............................................................................................... 109

5.7 Fatigue material properties ................................................................................................ 110

5.8 Composite material properties ........................................................................................... 114

5.9 Estimation techniques ........................................................................................................ 118

6. Analysis algorithms ..................................................................................................................... 120

6.1 Background ......................................................................................................................... 120

6.2 Stress-based Brown-Miller .................................................................................................. 121

6.3 Normal Stress ...................................................................................................................... 125

6.4 Findley’s Method ................................................................................................................ 127

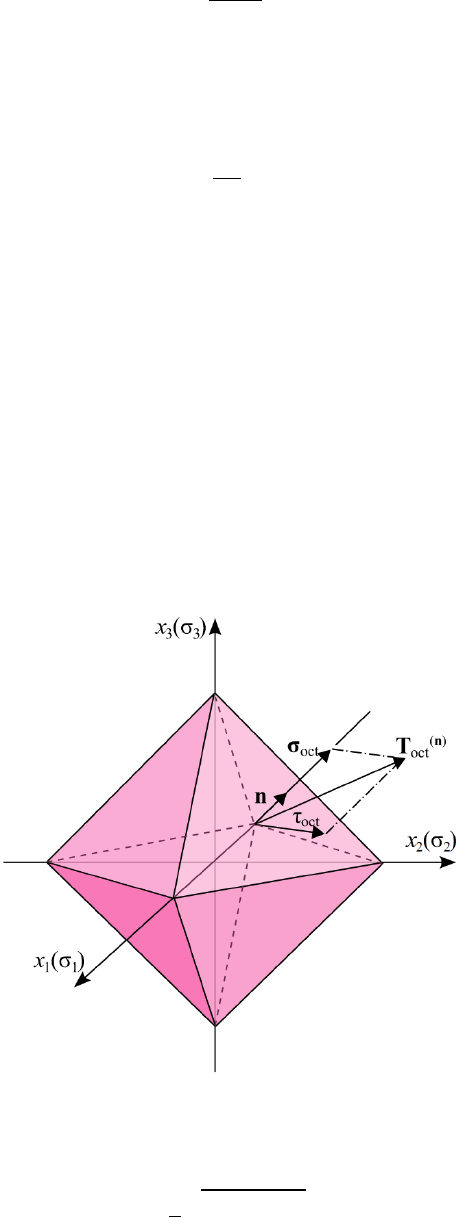

6.5 Stress Invariant Parameter ................................................................................................. 134

6.6 BS 7608 Fatigue of Welded Steel Joints .............................................................................. 138

6.7 NASALIFE ............................................................................................................................. 145

6.8 Uniaxial Stress-Life .............................................................................................................. 151

6.9 Uniaxial Strain-Life .............................................................................................................. 152

6.10 User-defined algorithms ..................................................................................................... 153

7. Mean stress corrections .............................................................................................................. 157

7.1 Background ......................................................................................................................... 157

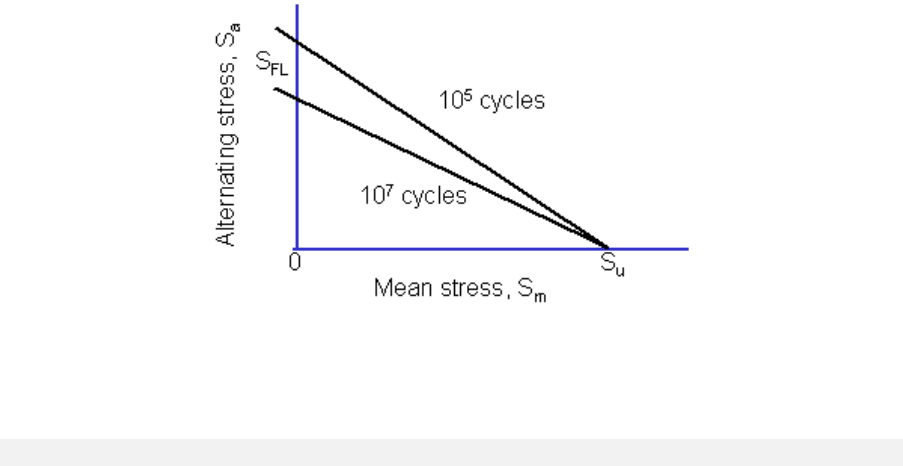

7.2 Goodman ............................................................................................................................ 159

7.3 Soderberg ............................................................................................................................ 162

7.4 Gerber ................................................................................................................................. 163

7.5 Morrow ............................................................................................................................... 164

7.6 Smith-Watson-Topper......................................................................................................... 165

7.7 Walker ................................................................................................................................. 166

7.8 R-ratio S-N curves................................................................................................................ 169

7.9 User-defined mean stress corrections ................................................................................ 171

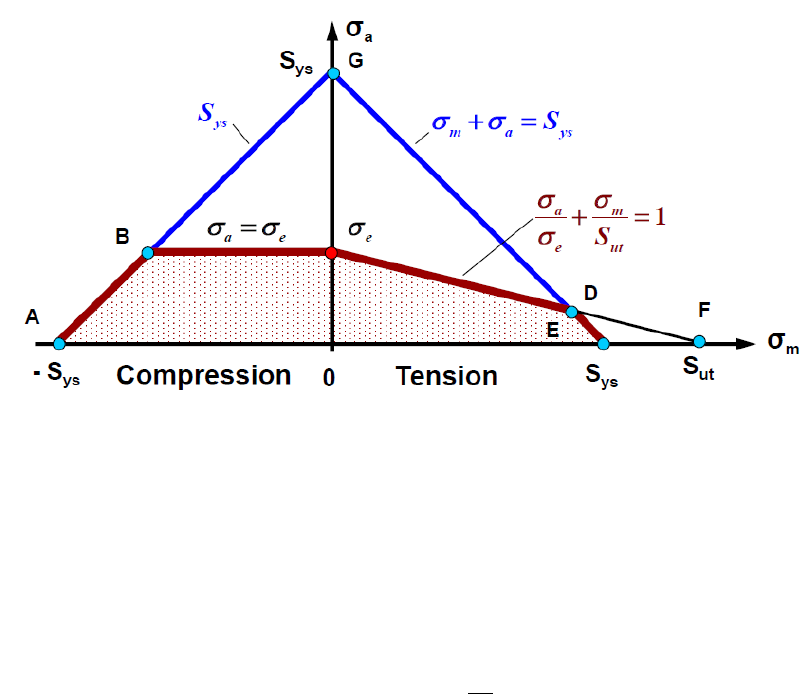

8. Safety factor analysis .................................................................................................................. 174

8.1 Background ......................................................................................................................... 174

8.2 Fatigue Reserve Factor ........................................................................................................ 174

6

8.3 Factor of Strength ............................................................................................................... 184

9. Job and environment files ........................................................................................................... 193

10. Output ..................................................................................................................................... 194

10.1 Background ......................................................................................................................... 194

10.2 Output variables.................................................................................................................. 196

10.3 Viewing output .................................................................................................................... 204

10.4 The ODB Interface ............................................................................................................... 206

11. FEA Modelling techniques ...................................................................................................... 220

11.1 Background ......................................................................................................................... 220

11.2 Preparing an FE model for fatigue analysis ......................................................................... 220

12. Supplementary analysis procedures ....................................................................................... 225

12.1 Background ......................................................................................................................... 225

12.2 Yield criteria ........................................................................................................................ 225

12.3 Composite failure criteria ................................................................................................... 227

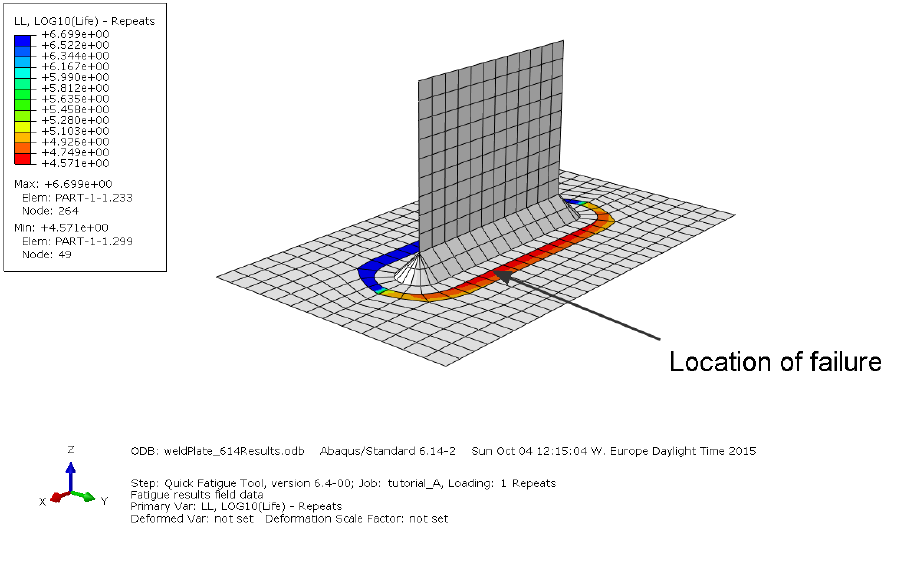

13. Tutorial A: Analysis of a welded plate with Abaqus ................................................................ 242

13.1 Background ......................................................................................................................... 242

13.2 Preparing the RPT file ......................................................................................................... 243

13.3 Running the analysis ........................................................................................................... 243

13.4 Post processing the results ................................................................................................. 244

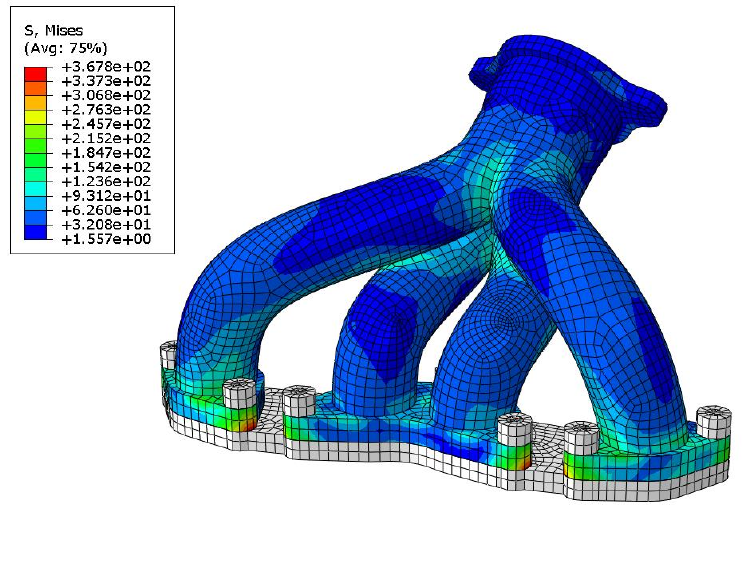

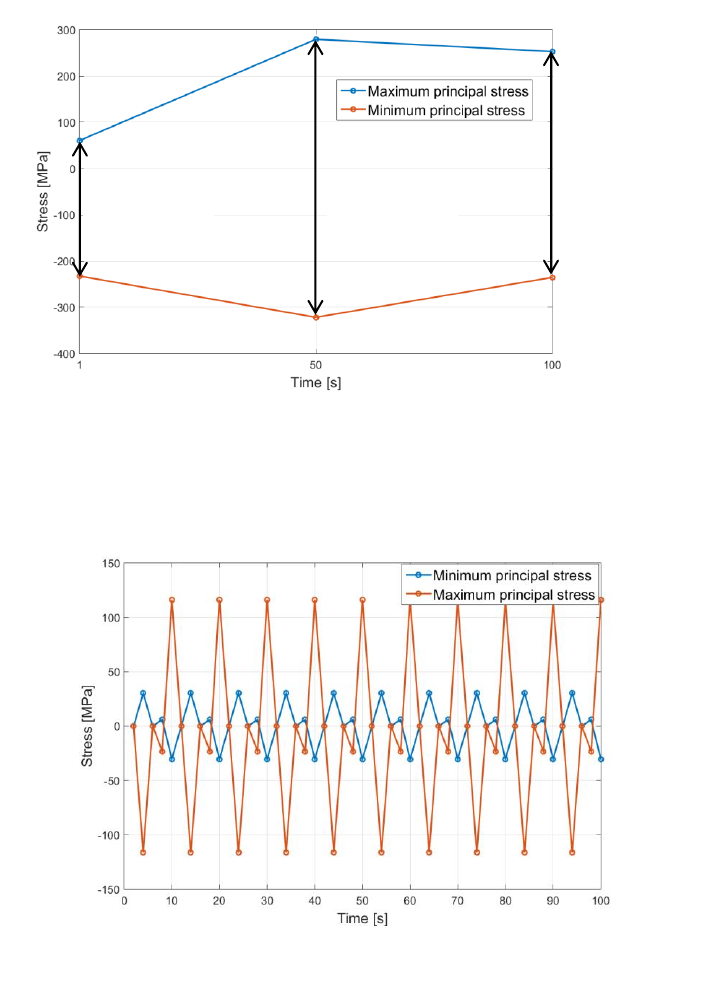

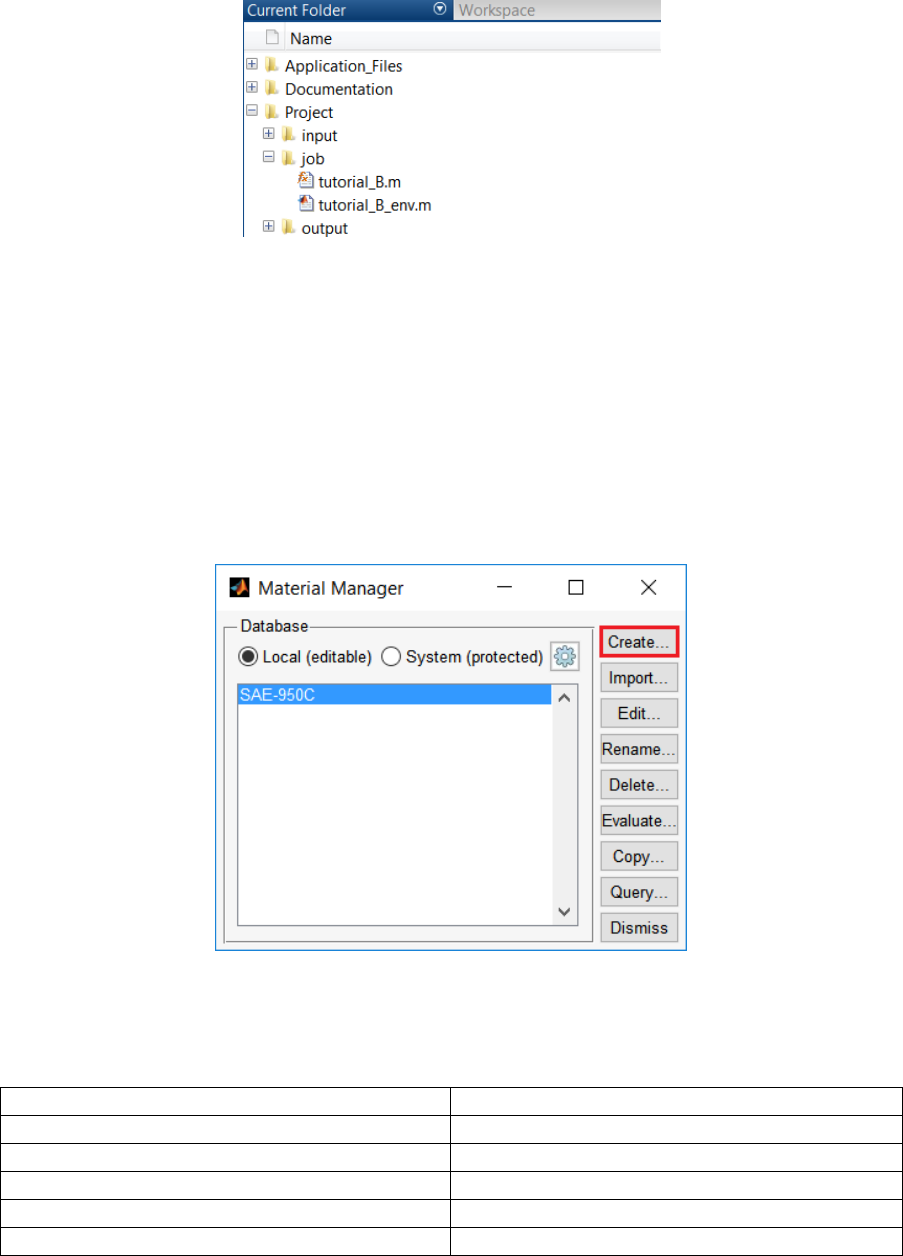

14. Tutorial B: Complex loading of an exhaust manifold .............................................................. 247

14.1 Background ......................................................................................................................... 247

14.2 Preparation ......................................................................................................................... 249

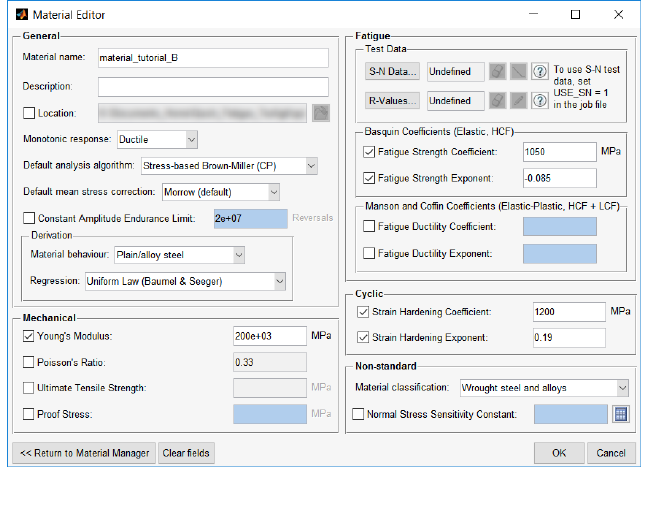

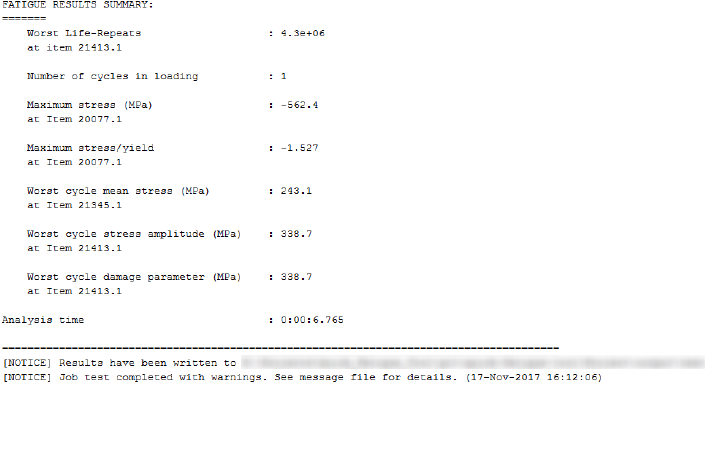

14.3 Defining the material .......................................................................................................... 250

14.4 Running the first analysis .................................................................................................... 251

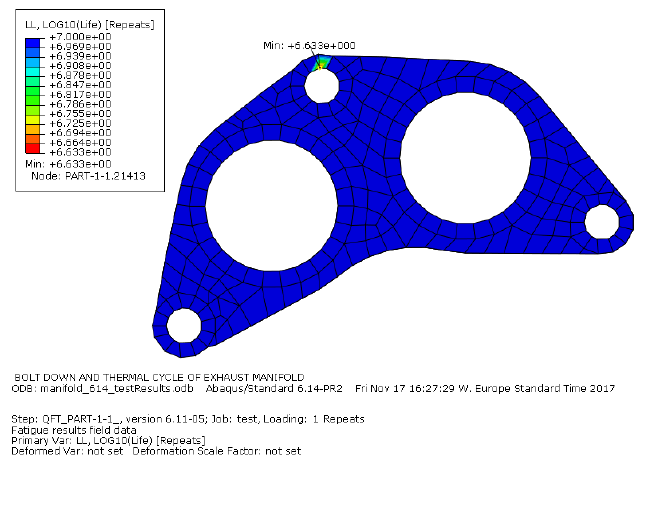

14.5 Viewing the results with Abaqus/Viewer ............................................................................ 253

14.6 Running the second analysis ............................................................................................... 254

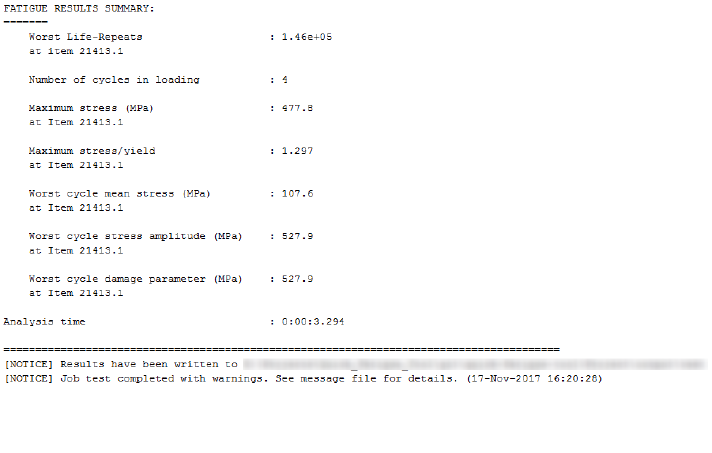

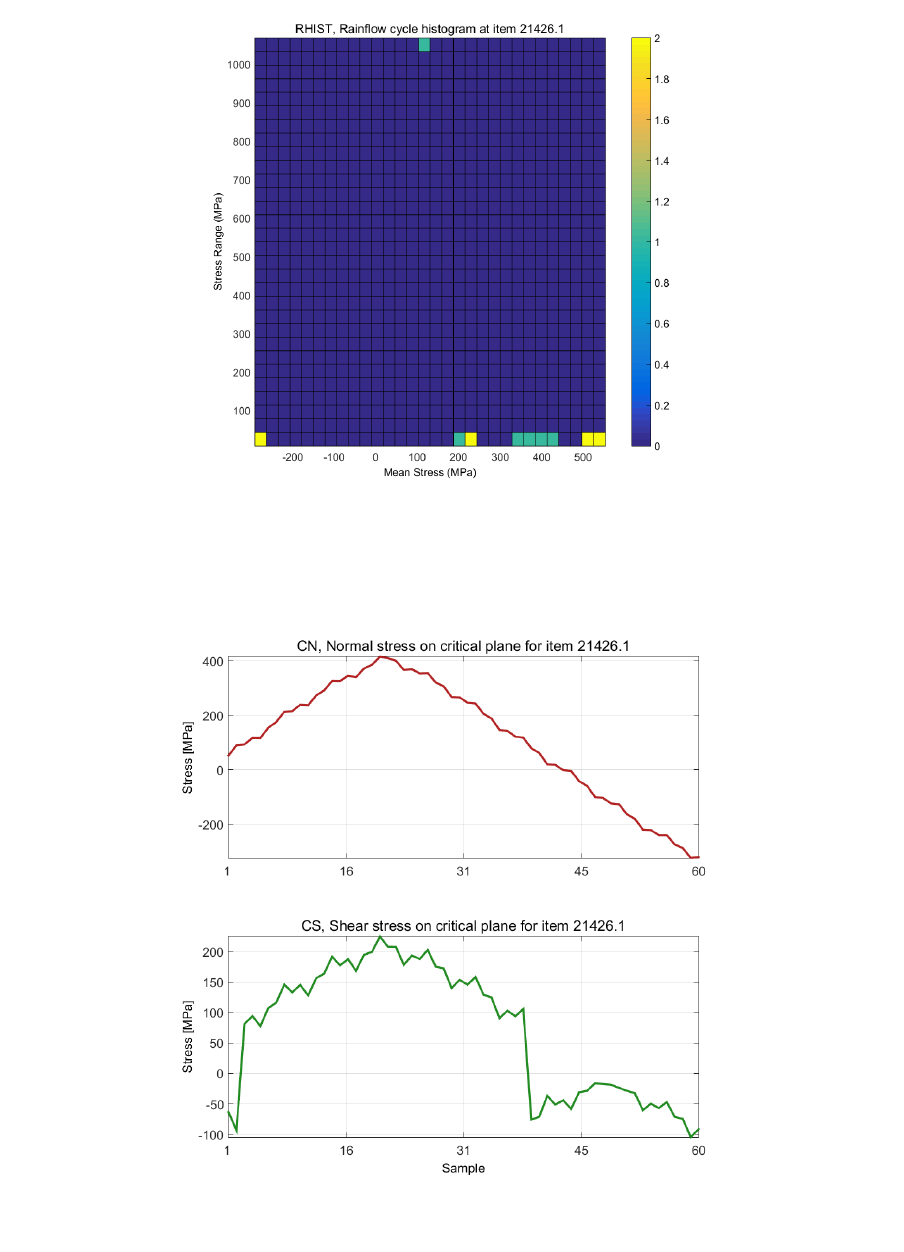

14.7 Post processing the results ................................................................................................. 256

Appendix I. Fatigue analysis techniques ........................................................................................ 260

Appendix II. Materials data generation .......................................................................................... 261

Appendix III. Gauge fatigue toolbox ............................................................................................. 262

References .......................................................................................................................................... 263

7

1. Introduction

1.1 Overview

Quick Fatigue Tool for MATLAB is an experimental fatigue analysis code. The application includes:

A general stress-life and strain-life fatigue analysis framework, configured via a text-based

interface;

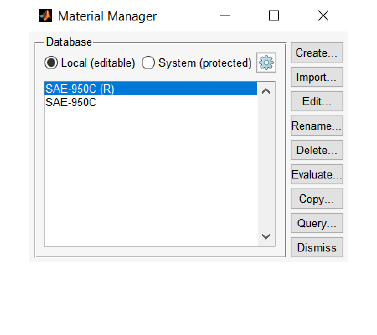

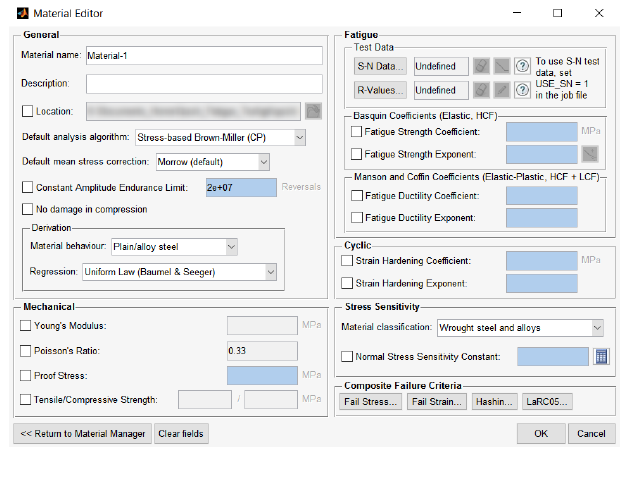

Material Manager, a material database and MATLAB application which allows the user to

create and store materials for fatigue analysis (Section 5);

Multiaxial Gauge Fatigue, a strain-life code and MATLAB application which allows the user to

perform fatigue analysis from measured strain gauge histories

(document Quick Fatigue Tool Appendices: A3);

Export Tool, an ODB interface which allows the user to export fatigue results to an

Output Database (.odb) file for visualization in SIMULIA Abaqus/Viewer (Section 10.4); and

Supplementary analysis tools for static failure assessment (Section 12).

Quick Fatigue Tool runs entirely within the MATLAB environment, making it a highly customizable

code which is free from external dependencies.

The general analysis framework allows the user to analyse elastic stresses from Finite Element Analysis

(FEA) results. One of the main advantages of calculating fatigue lives from FEA is that it eliminates the

requirement to manually compute stress concentration and notch sensitivity factors. The program is

optimised for reading field output from Abaqus field report (.rpt) files. However, field output can be

specified in any ASCII format provided the data structure in Section 3 is observed.

A Quick Fatigue Tool analysis requires the following inputs from the user:

1. A material definition

2. A loading definition consisting of:

a. Stress datasets

b. Load histories

The above input is specified by means of a job file. This is an .m script or text file containing a list of

options which completely define the analysis. Analyses are performed by running the job file. Basic

fatigue result output is written to the command window, and extensive output is written to a set of

individual data files.

8

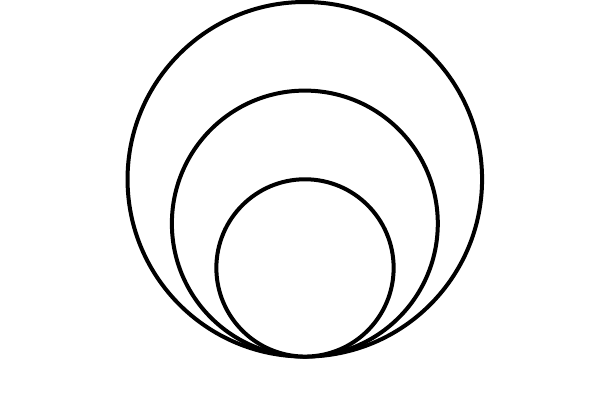

1.2 The stress-life methodology

The stress-life methodology is used for calculating fatigue damage where the expected lives are long

and the stresses are elastic. The method is also well-suited to infinite life design where a pass/fail

criterion based on the fatigue limit is sufficient. The stress-life approach ignores local plasticity and

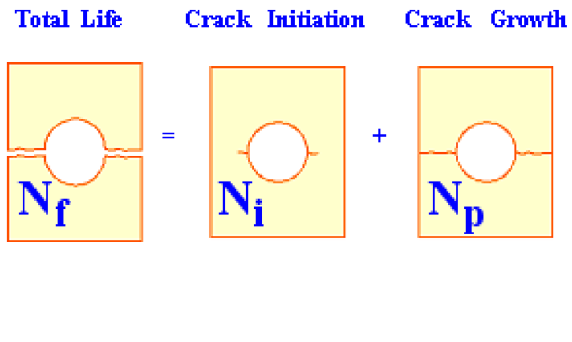

provides a “total life” estimate of fatigue life [1] [2] [3]. This is illustrated by Figure 1.1. If the analyst

wishes to gain insights into the life up to crack initiation (), or wishes to find the number of cycles

required to cause crack growth (), strain and fracture mechanics-based methods should be

explored instead [4].

1.3 The strain-life methodology

The strain-life methodology is used for calculating fatigue damage where the cycles are dominated by

local plasticity. Although the majority of engineering structures are designed such that the operational

stresses do not exceed the elastic limit, unavoidable design features such as notches can result in local

plastic strains.

The strain-life methodology correlates the local plastic deformation in the vicinity of a stress

concentration to the far-field elastic stresses and strains using the constitutive response determined

from displacement-controlled fatigue tests on simple (smooth) laboratory specimens.

Fatigue analysis using the strain-life methodology is capable of accurate predictions of crack initiation

down to a few hundred cycles. Depending on the strain-life data, failure is usually assumed as a surface

crack with a length of approximately .

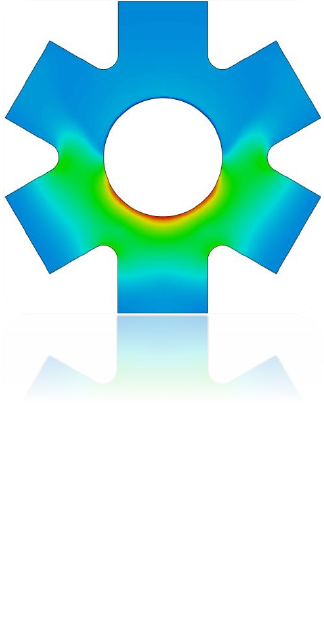

Figure 1.1: Illustration of the stress-life method where the total life, Nf, is

the sum of the life to crack initiation, Ni, and life to final crack propagation,

Np.

9

1.4 Why fatigue from FEA?

Modern design workflows demand a complex and multidisciplinary mind set from the analyst [5]. The

combination of complex geometry and service loading can make the determination of the most

important stresses an insurmountable task in the absence of powerful computer software.

The finite element method is a popular tool which allows the analyst to determine the stresses acting

on a component with a high degree of accuracy. However, selection of the correct stress is often still

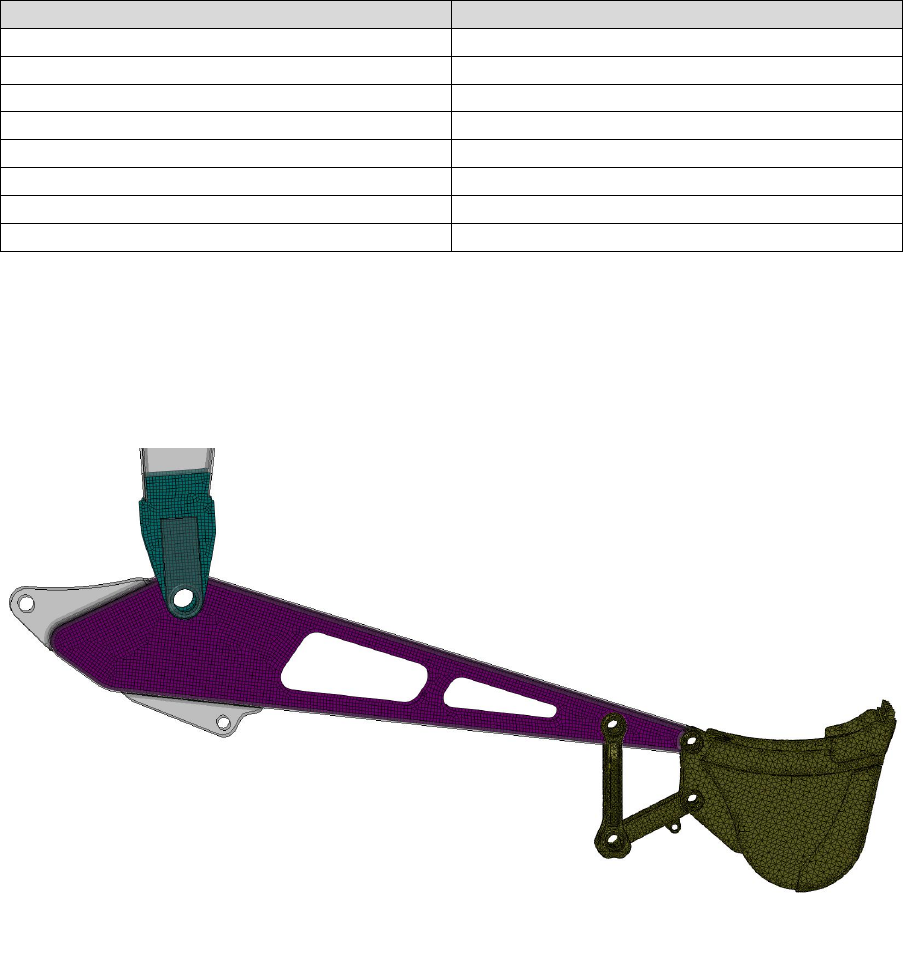

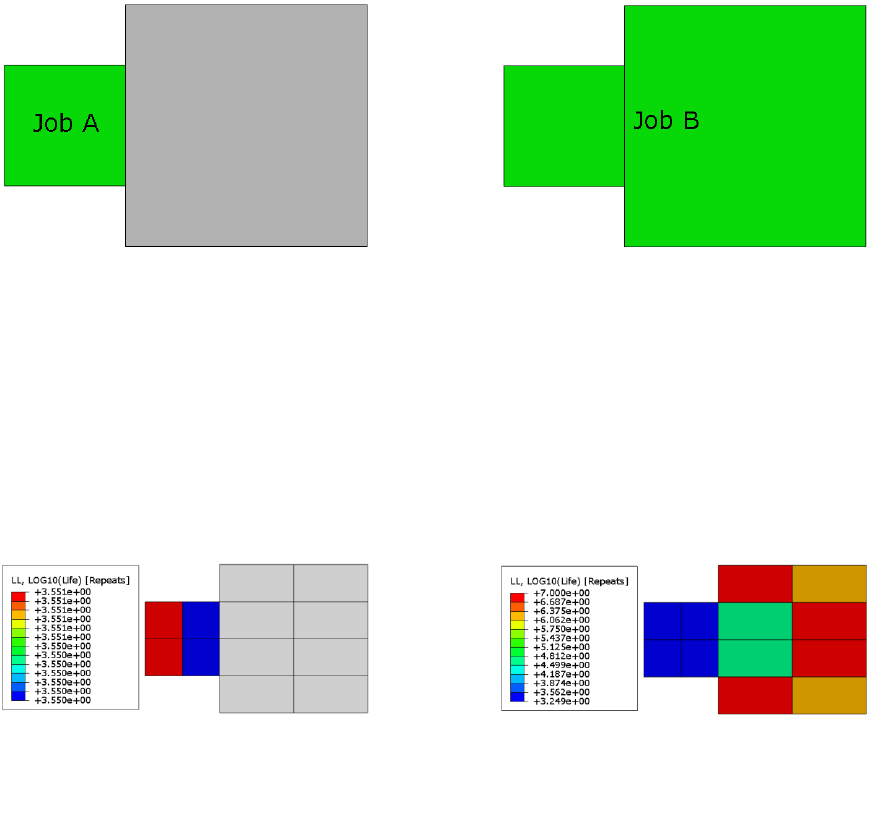

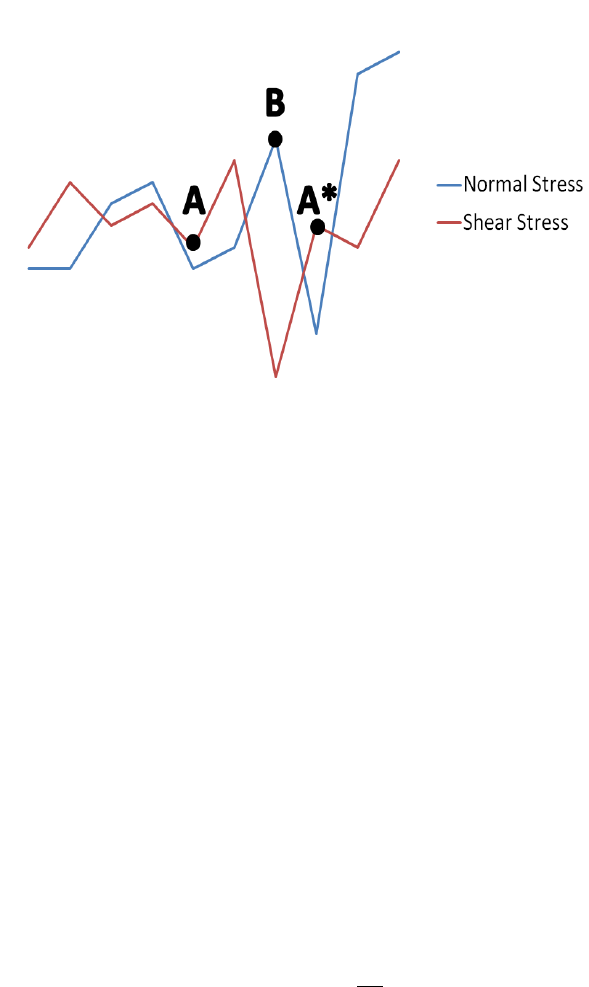

not obvious. Take Figure 1.2 as an example.

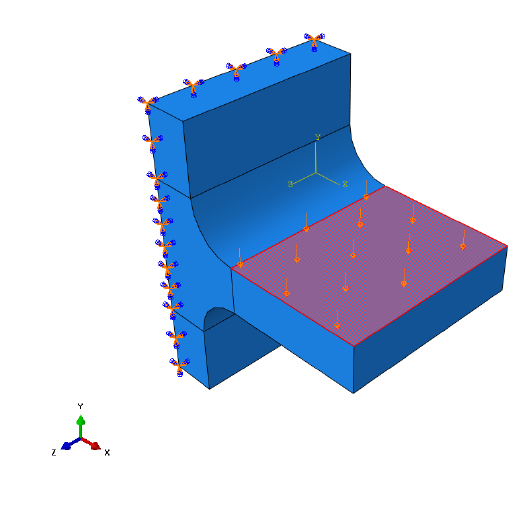

A simple fillet joint is loaded in bending by a unidirectional pressure force. The load is applied to

and then removed, resulting in a single pulsating loading event. Figure 1.3 shows the result of

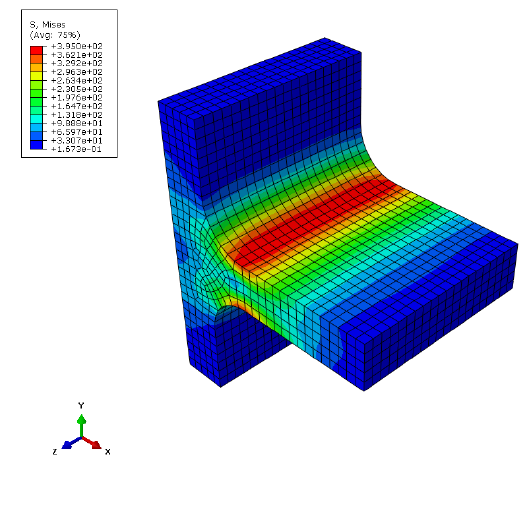

the finite element analysis. The simplest way to relate the stress to fatigue life is by the Wöhler stress-

life curve [6]:

[1.1]

The damage parameter, , is related to the fatigue life in repeats, by the material constants

and . Considering the results from Figure 1.3, it is not obvious which stress should be chosen to take

the place of the parameter . There are several approaches for the evaluation of the fatigue life.

Figure 1.2: Uniaxial load applied to a fillet joint

10

A common approach is to take the node with the maximum principal stress and substitute this value

into the stress-life equation. Alternatively, the model can be viewed in terms of effective stress (for

example, von Mises), and using this parameter for the fatigue calculation. Both of these approaches

have serious drawbacks in that they do not correctly account for the presence of multiaxiality and

non-proportionality which commonly arises in fatigue loadings. Fatigue results obtained using these

techniques can be in significant error and even miss the location of fatigue crack initiation.

The best practise is to employ multiaxial algorithms which correctly identify the stresses on the most

damaging planes. Even unidirectional loads, such as those in the above example, can result in

multiaxial stress fields. Therefore, multiaxial analysis algorithms are always recommended over

uniaxial and effective stress methods.

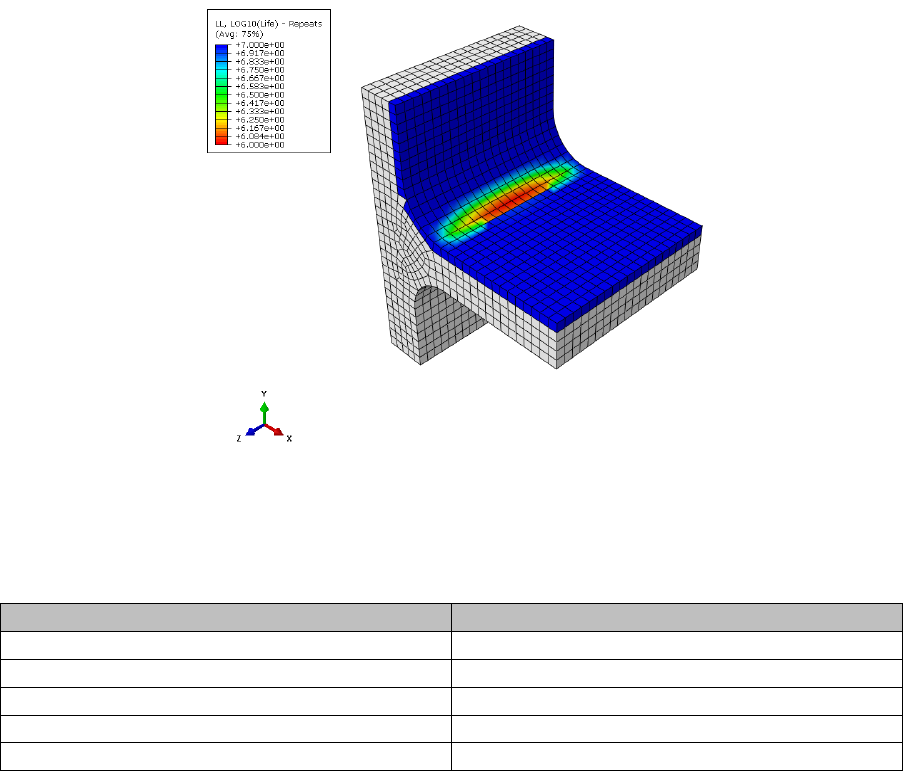

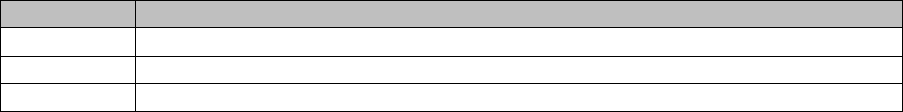

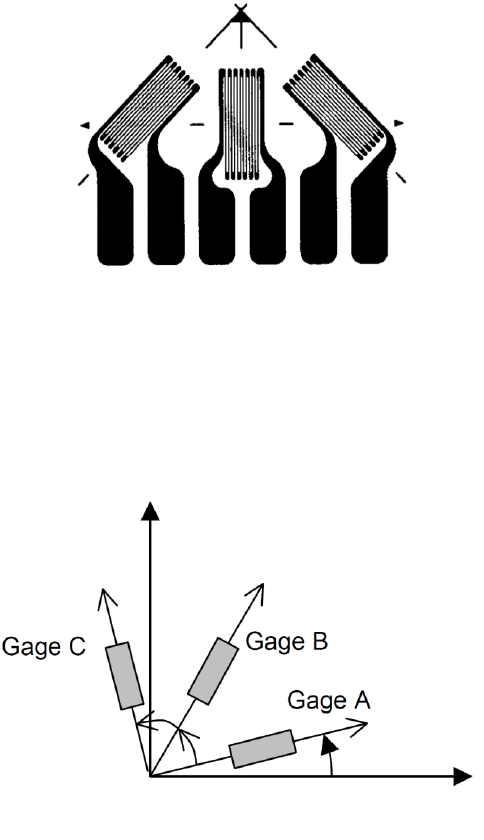

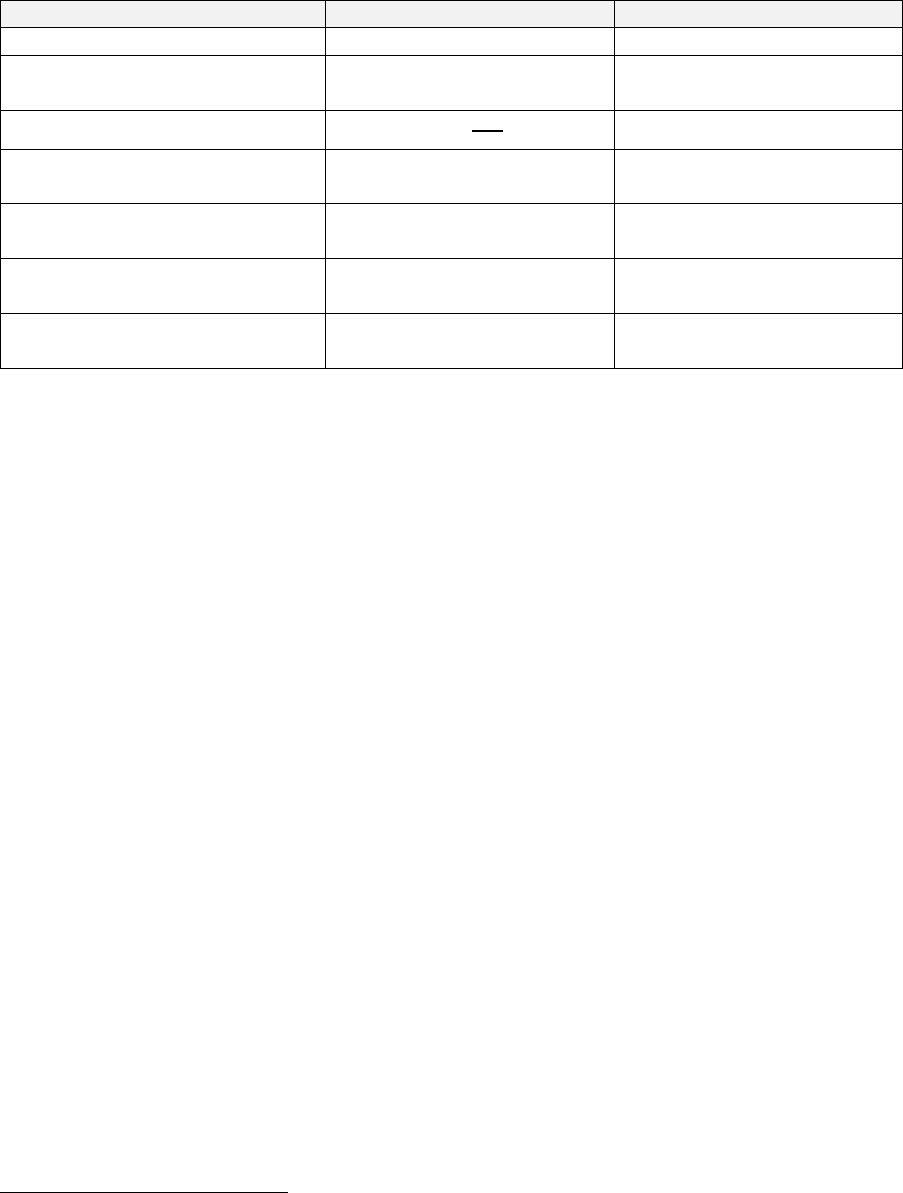

The fillet joint is analysed using Quick Fatigue Tool with several fatigue criteria, the results of which

are summarised in Figure 1.4 and the tabulated data. Algorithms with “(CP)” indicate that they are

multiaxial (critical plane) methods.

The Uniaxial Stress-Life method underestimates the fatigue life, whereas the von Mises stress

overestimates. By considering the maximum principal stress, the uniaxial method assumes that fatigue

failure will occur on a plane perpendicular to the material surface where the shear stress is zero. In

reality, most metals experience crack initiation on shear planes where there is no normal stress. For

this reason, both the uniaxial and normal stress methods produce highly conservative life predictions.

The Stress-based Brown-Miller and Findley’s Method produce the most accurate results, since they

consider the action of both the normal and shear stress acting on several planes.

Figure 1.3: FEA stresses on the fillet joint due to bending load

11

Analysis algorithm

Fatigue life (repeats)

Uniaxial Stress-Life

462,000

Stress Invariant Parameter (von Mises)

1,570,000

Normal Stress (CP)

263,000

Stress-based Brown-Miller (CP)

800,000

Findley’s Method (CP)

1,040,000

By combining results from FEA with a multiaxial analysis technique, the most accurate life prediction

can be obtained. Due to the fact that multiple planes have to be searched in order to take into account

multiaxial stress states, the multiaxial algorithms are very time-consuming compared to the uniaxial

and effective stress methods. Confining the analysis to the location of maximum stress is not

guaranteed to be successful since the location of crack initiation is not guaranteed to coincide with

the location of maximum stress.

Figure 1.4: Fatigue analysis results showing (logarithmic) life using the

Stress-based Brown-Miller multiaxial algorithm

12

1.5 Overview of syntax

1.5.1 Overview

The Quick Fatigue Tool analysis job is created by a combination of job file options and environment

variables. Job file options represent the fundamental aspects of the fatigue analysis, such as material

definition, loading and fatigue analysis algorithm. Job file options are analysis-specific. Environment

variables are used to control the general behavior of Quick Fatigue Tool, such as load gating, critical

plane search precision and results format. Environment variables apply globally to all analyses, but

may be configured for specific analyses.

Detailed information on job file options and environment variables can be found in the document

Quick Fatigue Tool User Settings Reference Guide.

1.5.2 Job file options

All of the available job file options can be found in Project\job\template_job.m. These are standard

MATLAB variables which are passed into the main analysis function when the job file is run.

Job file options can be defined as character arrays or cells, as numeric arrays, or simply left empty,

depending on how the variable is defined.

1.5.3 Environment variables

All of the available environment variables can be found in Application_Files\default\environment.m.

These are MATLAB %APPDATA% variables which are read into the program application data at the

beginning of the analysis.

Environment variables can be defined as character arrays or cells, as numeric arrays, or simply left

empty, depending on how the variable is defined.

Environment variables are set using the setappdata() method. The first argument is the name of the

environment variable and the second argument is the value of the variable.

1.5.4 Units

Material data must be defined in the SI (mm) system of units (, , , ). Stress datasets

can use any system of units, and are automatically converted into before the analysis.

1.5.5 Documentation conventions

The following conventions are used to signify job file options and environment variables throughout

the Quick Fatigue Tool documentation:

job file options defined in MATLAB are presented in BOLDFACE;

job file options defined in text files are preceded by an asterisk ( * );

environment variables are presented in magenta;

file names and string parameters are presented in 'magenta' or 'magenta';

command line parameters are presented in magenta;

default parameters are underlined ( );

Items enclosed in bold square brackets ([ ]) are optional;

items appearing in a list separated by bars ( | ) are mutually exclusive; and

one value must be selected from a list of values enclosed by bold curly brackets ({ }).

13

1.6 Required toolboxes

Quick Fatigue Tool does not require any MATLAB toolboxes to function properly. However, certain

toolboxes are used to enhance the functionality of the code if Quick Fatigue Tool detects that they are

installed. Below is a summary of these toolboxes and the additional functionality they provide.

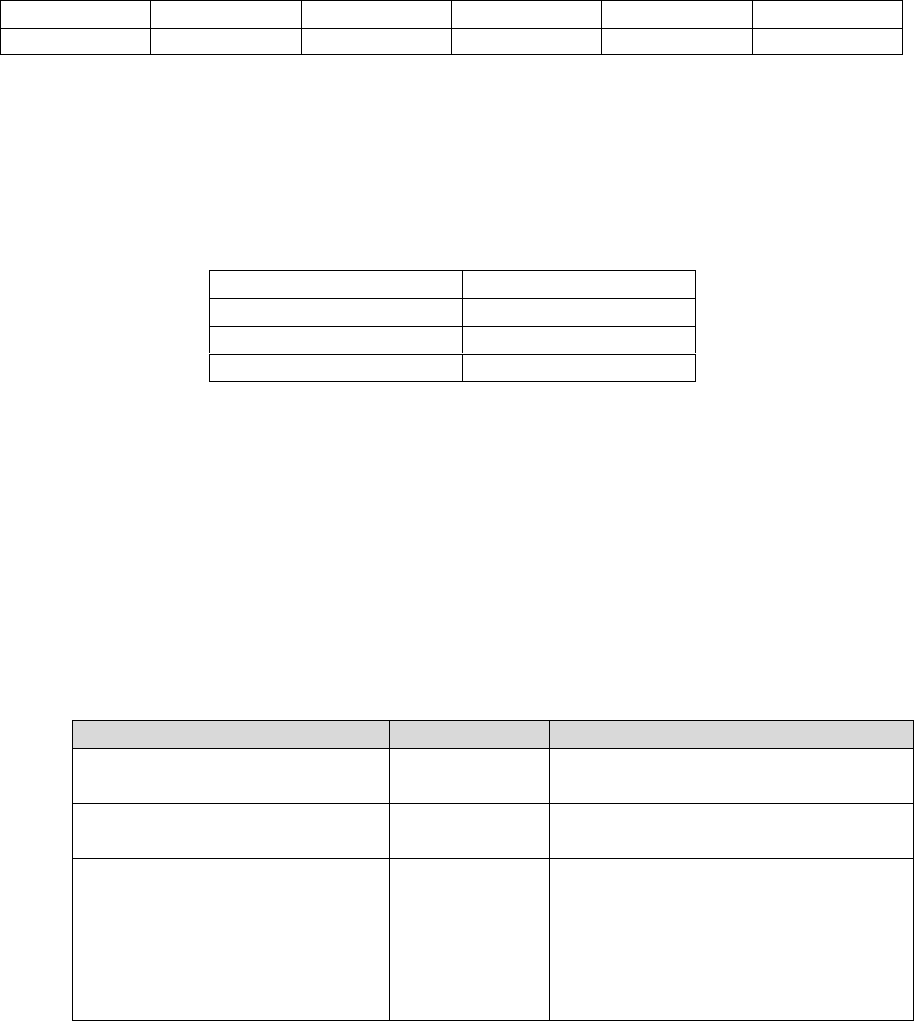

Toolbox

Functionality

Image Processing Toolbox

Strain gauge preview dialogue box for

Gauge Fatigue Toolbox apps. See Appendix III of

Quick Fatigue Tool Appendices for more

information

Symbolic Math Toolbox

Iterative solver for the following features:

Derivation of the normal stress

sensitivity constant () using the

General Formula option in the

Material Manager app

Derivation of the reference strains from

user-defined strain gauge orientations

using the Multiaxial Gauge Fatigue and

Rosette Analysis apps

Derivation of the initial fibre

misalignment angle () for the LaRC05

composite damage initiation criteria

Statistics Toolbox

RHIST and RC output variables

Signal Processing Toolbox

High frequency loadings with HF_DATASET and

HF_HISTORY

14

1.7 Limitations

1.7.1 Fatigue from FEA

FEA tools

Quick Fatigue Tool has been optimised to use stress data from an Abaqus output database (.odb) file

using the built-in field output report tool in Abaqus/CAE. Stress dataset files generated from other

third party FEA processors must adhere to the rules and conventions detailed in Section 3.2.

Datasets

Quick Fatigue Tool assumes that the FEA stress datasets are elastic for both stress and strain-based

fatigue analyses. In the latter case, the stresses are automatically corrected for the effect of plasticity.

Elements

Special-purpose elements and connector elements are not supported. Structural elements such as

beams, pipes and wires may be used, although they have not been thoroughly tested. Using any of

these elements may cause the analysis to produce error messages or crash.

Stress tensors read from FE models must use a Cartesian coordinate system.

If the model contains plane stress elements from an Abaqus .odb file, only the valid tensor

components are printed to the dataset (.rpt) file. In such cases, the user must set the option

PLANE_STRESS=1.0 in the job file. This ensures that Quick Fatigue Tool is able to correctly identify

plane stress elements.

When exporting stress datasets from Abaqus, Quick Fatigue Tool will fail to process the data if the

element stresses are written to more than two locations on the element. For example, Abaqus usually

writes stresses to two section points for shell elements (top and bottom faces). If the user requested

field output at more than two section points, or the element is defined from a composite section,

Quick Fatigue Tool will not be able to interpret the stress dataset file.

If the user wishes to analyse the surface of a composite structure, the best practise is to define a skin

on the surface of the structure and export the stresses from the skin elements only.

Part instances

Quick Fatigue Tool supports stress datasets which span multiple Abaqus part instances provided that

the element-node numbers are unique between instances. If the stress dataset contains duplicate

element-node numbers, Quick Fatigue Tool is unable to distinguish between individual part instances

and results may be reported at incorrect locations.

If the model is defined as assemblies of part instances and the fatigue analysis spans more than one

instance, the user can ensure unique element-node numbering as follows:

1. Enter the Mesh Module in Abaqus/CAE and select Part as the object

2. From the main menu, select Mesh → Global Numbering Control…

3. Specify a start label for the elements and nodes of the current part instance, such that they

do not clash with other part instances which will be included for fatigue analysis

15

It is strongly recommended that the user works with flat input files. This generates an output database

containing a single part instance, which guarantees unique element-node numbering:

1. (any module in Abaqus/CAE): From the main menu select Model → Edit Attributes → Model

name

2. Select Do not use parts and assemblies in input files

1.7.2 Loading

Quick Fatigue Tool does not directly support multiple block loading. A workaround involves splitting

the load spectrum into several jobs using the CONTINUE_FROM option, which allows

Quick Fatigue Tool to automatically overlay the fatigue damage onto the previous results to give the

total damage due to all blocks. Analysis continuation is discussed in Section 4.8.

1.7.3 Materials

It is assumed that stress relaxation does not occur during the loading and that the material is cyclically

stable. This expedites the fatigue calculation by allowing analysis of each node without considering

the effects of neighbouring nodes, but precludes the effect of global plasticity being accurately taken

into account. However, for the majority of cases this should not be an issue since the stress-life

method is elastic, and the strain-life method is intended for components experiencing relatively small

amounts of local plasticity.

Quick Fatigue Tool is applicable to metals and some engineering plastics where the stresses and

temperatures are sufficiently low that viscoelastic effects are negligible.

Treatment of local notch plasticity requires the use of strain-based fatigue methods. Treatment of

crack propagation requires the use of crack growth methods such as VCCT, CTOD and Paris Law LCF.

Analysis of viscoelastic, hyperelastic, anisotropic and quasi-brittle materials is not supported.

Materials whose fatigue behavior cannot reasonably be modelled by linear elastic stresses and stress-

life curves are not supported.

1.7.4 Performance

The MATLAB programming language is very convenient in terms of the ease and speed of development

it offers. However, in runtime the code is slow in comparison to other languages. Therefore, stress

datasets from even a modest finite element model can result in cumbersome analyses. The user is

advised to consult Section 10: Modelling considerations for assistance on how to minimise analysis

time without compromising on the accuracy of the solution.

16

1.7.5 GUI appearance

It is recommended that you set your monitor DPI scaling to and the resolution to x.

On Windows 7, the DPI settings are found at Control Panel\Appearance and Personalization\Display.

On Windows 10 the settings are at the same location, but you must select set a custom scaling level

under the “Change size of items” section.

If the above settings are not used, Material Manager, Export Tool and the Gauge Fatigue Toolbox apps

may display incorrectly.

An alternative to using the Material Manager app is to import material data from a text file. For

instructions on creating material text files, consult Section 5.6 “Creating a material from a text file”.

1.7.6 Validation

Quick Fatigue Tool has not been validated against any official standard. The author does not take any

responsibility for the accuracy or reliability of the code. Fatigue analysis results should be treated as

supplementary and further investigation is strongly recommended.

1.8 Additional notes

a) It is recommended that you consult the file README.txt in the Documentation directory

before proceeding to the next section of the guide

b) Modifying the file structure (e.g. renaming folders) may prevent the program from working.

c) The change log for the latest version can be found here

d) Quick Fatigue Tool is free for distribution without license, provided that the author

information is retained in each source file

To report a bug or to request an enhancement, please contact the author:

Louis VALLANCE

louisvallance@hotmail.co.uk

17

2. Getting started

2.1 Preparing the application

Preparing Quick Fatigue Tool requires minimal intervention from the user, although it is important to

follow a few simple steps before running an analysis:

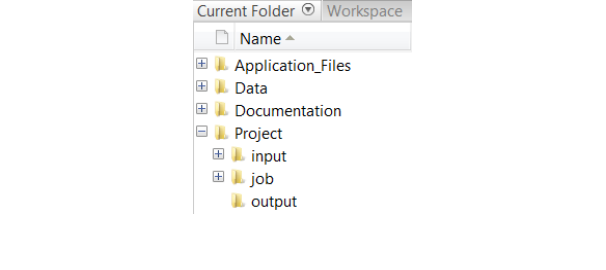

Make sure the working directory is set to the root of the Quick Fatigue Tool directory, e.g.

\..\Quick Fatigue Tool\6.x-yy. The directory structure is shown in Figure 2.1. All folders and sub folders

should be added to the MATLAB search path using the function addpath(), or by selecting the folders

Application_Files and Project, right-clicking and selecting Add to Path → Selected Folders and

Subfolders.

If the MATLAB working directory is not configured exactly as described above (e.g. the user enters the

job directory before running a job file), the application will not run.

Before running a fatigue analysis, it is recommended that you first run the job tutorial_intro. This is

because the initial run of a MATLAB function requires some additional computational overhead which

slows down the first analysis.

2.2 How the application handles variables

Quick Fatigue Tool does not store variables in the base workspace, nor does it modify or delete existing

workspace variables. During analysis, variables are stored either in the function workspaces or as

application-defined data using the setappdata() and getappdata() methods.

The application data is utilised for convenience, since variables can easily be accessed throughout the

code without having to pass variables between many functions. In order to prevent unwarranted data

loss, Quick Fatigue Tool does not modify existing application data by default. However, this means

that variables from previous analyses will remain in the application data and could cause unexpected

behavior in subsequent analyses, such as incorrect fatigue results and spurious crashes.

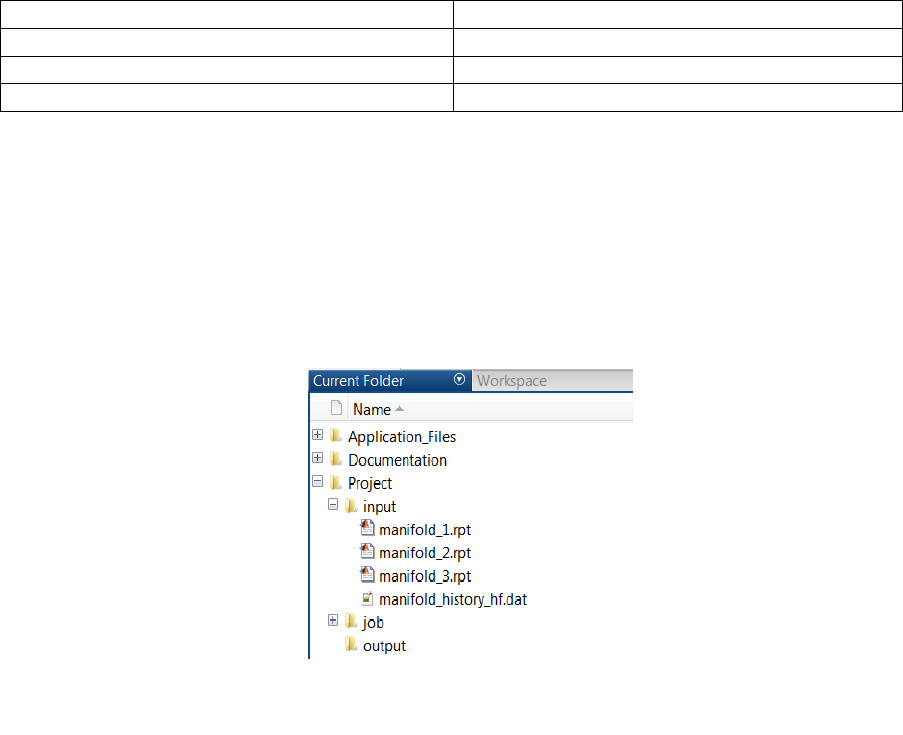

Figure 2.1: Quick Fatigue Tool file structure

18

In order to eliminate the possibility of such conflicts, the user is strongly advised to restart MATLAB

between each analysis so that the application data is cleared. If the user does not wish to restart

MATLAB for each analysis and is not concerned about Quick Fatigue Tool modifying the application

data, the following environment variable may be set with a value of 3.0:

Environment file usage:

Variable

Value

cleanAppData

{1.0 | 2.0 | 3.0 | 4.0}

This ensures that the application data is completely cleared before and after each analysis. This has

the same effect of restarting MATLAB.

The environment file and all of the available user settings are discussed in the document

Quick Fatigue Tool User Settings Reference Guide.

2.3 File structure

Quick Fatigue Tool separates various components of the code into folders. Below is a brief description

of what each folder contains:

Application_Files

Source code and application-specific settings.

There is usually no need to modify the contents

of this directory

Data

User-specific data (models, surface finish curves,

material data, etc.)

Documentation

README file and User Guide

input

Required location for stress datasets and load

histories

job

Job files defining each analysis

output

Fatigue results directory. If this folder does not

exist, it will automatically be created during the

analysis

19

2.4 Configuring and running an analysis

2.4.1 Configuring a standard analysis

Standard analyses are configured and submitted from an .m file.

In this example, a simple fatigue analysis is configured by combining a stress dataset with a load

history. The files can be found in the Data\tutorials\intro_tutorial folder.

1. Define a stress dataset: Open the file stress_uni.dat. A simple stress definition consists of six

components defining the Cauchy stress tensor. The components are defined in the following

order:

Column 1

Column 2

Column 3

Column 4

Column 5

Column 6

The file stress_uni.dat contains a stress tensor at a single material point in a state of uniaxial tension

().

2. Define a load history: Open the file history_fully_reversed.dat. A simple load history consists of

two loading points. Below is a list of common load definitions:

Load type

Definition

Fully-reversed (push-pull)

[1, -1]

Pure tension

[0, 1]

Pure compression

[0, -1]

The file history_fully_reversed.dat defines the fully-reversed loading event:

().

3. Study the job file: Open the job file tutorial_intro.m. The file contains a set of options specifying

all the information necessary for fatigue analysis. Options can be strings or numeric depending on

the meaning of the option. Not all options require a user setting. Below is a summary of each

option in the job file. The user need not worry about the number of definitions; all of the job file

options are explained in the document Quick Fatigue Tool User Settings Reference Guide and in

the tutorials later in this guide.

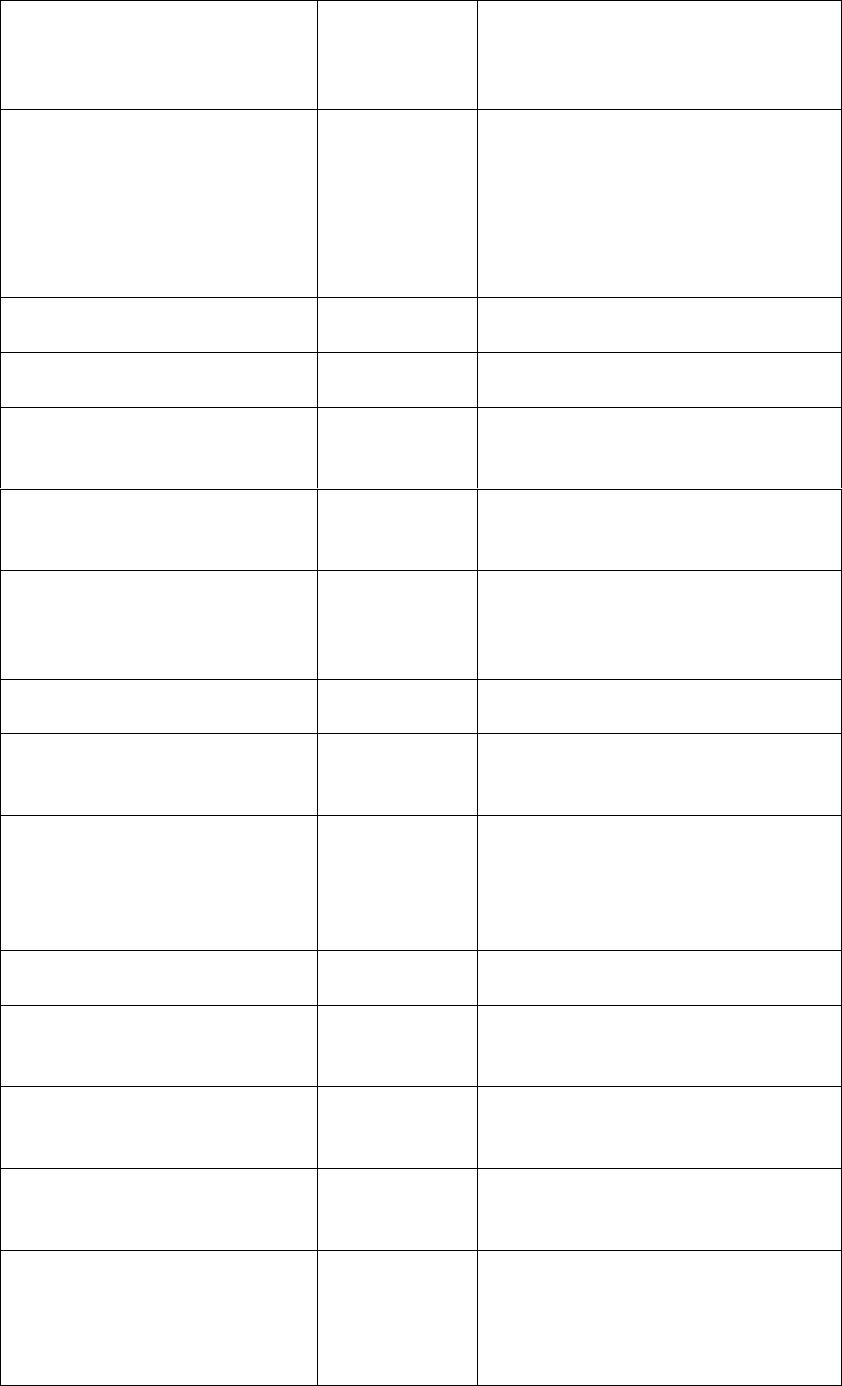

Option

Meaning

Additional notes

JOB_NAME

The name of

the job

JOB_DESCRIPTION

A description

of the job

The job name and description are

printed to the log file for reference

CONTINUE_FROM

Superimpose

results onto a

previous job

This feature is useful for block loading,

or specifying the analysis algorithm

based on model regions

20

DATA_CHECK

Runs the job

up to the

beginning of

the analysis

Useful for checking the message file

for initial notes and warnings, without

having to run the full fatigue analysis

MATERIAL

Material used

for analysis

'SAE-950C.mat' references a file

containing the material properties.

Materials are stored in

Data\material\local. Materials are

defined using the Material Manager

app. Usage of the app is discussed in

Section 5

USE_SN

Stress-life data

1.0; A flag indicating that S-N data

should be used if available

SN_SCALE

Stress-life data

scale factor

1.0; A linear scale factor applied to

each S-N data point

SN_KNOCK_DOWN

S-N knock-

down factors

Knock-down factors are not used in

this analysis. Knock-down factors are

discussed in Section 4.8

DATASET

Stress data

'stress_uni.dat' references the stress

dataset file. Stress datasets should be

saved in Project\input

HISTORY

Load history

'history_fully_reversed.dat'

references the load history file. Load

histories should be saved in

Project\input

UNITS

Stress units

3.0; A flag with the definition of MPa

CONV

Conversion

factor for

stress units

LOAD_EQ

Load

equivalency

The default loading equivalence is 1

repeat. If the loading represents

another dimension, the fatigue results

can be expressed in a more

appropriate unit (e.g. 1000 hours)

SCALE

Stress scale

0.8285; A linear scale factor applied

to the entire loading

OFFSET

Offset value

for stress

history

Loading offsets are discussed in

Section 3.4

REPEATS

Number of

repetitions of

the loading

HF_DATASET

Dataset(s) for

high frequency

loads

HF_HISTORY

Load history

for high

frequency

loads

21

HF_TIME

Time

compression

for high

frequency

loads

HF_SCALE

Scale factor for

high frequency

loads

High frequency loads are discussed in

Section 3.5

PLANE_STRESS

Element type

(3D stress or

planar)

0.0; A flag indicating that a 3D

element type should be assumed. The

distinction that Quick Fatigue Tool

makes about element types is

discussed in Sections 3.2.4 and 3.6.1

OUTPUT_DATABASE

Model output

database

(.odb) file from

an Abaqus FE

analysis

EXPLICIT_FEA

FEA procedure

type

PART_INSTANCE

FEA part

instance name

STEP_NAME

FEA step name

RESULT_POSITION

FEA result

position

Associating a job with an Abaqus .odb

file is discussed in Sections 4.6 and

9.5. This job is not associated with an

.odb file

ALGORITHM

Analysis

algorithm

0.0; A flag indicating that the default

analysis algorithm should be used

(Stress-based Brown-Miller). Analysis

algorithms are discussed in Section 6

MS_CORRECTION

Mean stress

correction

2.0; A flag indicating that the

Goodman mean stress correction will

be used. Mean stress corrections are

discussed in Section 7

ITEMS

List of items

for analysis

'ALL' indicates that all items in the

model (1) should be analysed.

Selecting analysis items is discussed in

Section 4.5.3

DESIGN_LIFE

The target life

of the system

'CAEL' indicates that the target life

should be set to the material’s

constant amplitude endurance limit

KT_DEF

Surface finish

definition

KT_CURVE

Surface finish

type

This analysis assumes a surface finish

factor of 1. Surface finish definition is

discussed in Section 4.3

22

NOTCH_CONSTANT

Notch

sensitivity

constant

NOTCH_RADIUS

Notch root

radius

GAUGE_LOCATION

Virtual strain

gauge

definition

GAUGE_ORIENTATION

Virtual strain

gauge

orientation

RESIDUAL

Residual stress

0.0; A residual stress value which is

added to the fatigue cycle during the

damage calculation. Residual stress is

discussed in Section 4.4

FACTOR_OF_STRENGTH

Factor of

strength

calculation

0.0; A flag indicating that a factor of

strength calculation will not be

performed. Factor of strength is

discussed in Section 8.3

FATIGUE_RESERVE_FACTOR

2.0; A flag indicating that the

Goodman B envelope will be used for

Fatigue Reserve Factor calculations.

The Fatigue Reserve Factor is

discussed in Section 8.2

HOTSPOT

Hotspot

calculation

0.0; A flag indicating that a hotspot

calculation will not be performed.

Factor of strength is discussed in

Section 4.5.3

OUTPUT_FIELD

Request for

field output

1.0; A flag indicating that field output

will not be written

OUTPUT_HISTORY

Request for

history output

0.0; A flag indicating that history

output will not be written

OUTPUT_FIGURE

Request for

MATLAB

figures

0.0; A flag indicating that MATLAB

figures will not be written. Analysis

output is discussed in Section 10

WELD_CLASS

Weld

classification

for BS 7608

analysis

YIELD_STRENGTH

Yield strength

for BS 7608

analyses

UTS

Ultimate

tensile

strength for BS

7608 analyses

DEVIATIONS_BELOW_MEAN

Degree of

uncertainty for

BS7608

analyses

23

FAILURE_MODE

Failure mode

for BS 7608

analyses

CHARACTERISTIC_LENGTH

Characteristic

dimension for

BS 7608

analyses

SEA_WATER

Environmental

effects factor

for BS 7608

analyses

This analysis does not require a weld

definition. The BS 7608 algorithm is

discussed in Section 6.6

4. Select the material and analysis type: This analysis uses SAE-950C Manten steel as the material.

The materials available for analysis are located in the Data\material folder. Stress units are in MPa.

The analysis algorithm is the default algorithm (Stress-based Brown-Miller), the Goodman mean

stress correction is used, as well as user-defined stress-life data points.

5. Run the job: To execute the analysis, right-click on tutorial_intro.m and click run.

6. A summary of the analysis progress is written to the command window. When the analysis is

complete, the command window should look like that of Figure 2.1.2.

7. The fatigue results summary reports a life of 732 thousand cycles to failure at location 1.1. This is

the default location when a stress dataset is provided without position labels.

8. In the Project\output directory, a folder with the name of the job is created which contains all of

the requested output. In this analysis, extensive output was not requested, so only the following

three basic files are written:

Figure 2.1.2: Fatigue results summary

24

File

Contents

<job_name>.log

Input summary

Analysis groups

Critical plane summary

Factor of Strength diagnostics

Fatigue results summary

<job_name >.msg

Pre and post analysis messages

o Analysis-specific notes offering

useful information to the user

o Analysis-specific warnings

explaining potential issues

with the analysis

<job_name >.sta

An item-by-item summary of the

analysis progress

9. Open the log and status files and examine their contents. According to the command window

summary, the analysis completed with warnings. Examine the contents of the message file:

a. Extensive output was not requested by the user

b. The damage at design life (10 million cycles by default) is over unity, corresponding to

failure

c. There is a warning that Quick Fatigue Tool encountered an ambiguity whilst determining

the element (stress tensor) type for analysis. A 3D stress tensor was assumed as the input

stress dataset. Since this assumption is correct, the warning can be ignored

10. To run another analysis, it is recommended that you first restart MATLAB to ensure that all the

application data from the previous analysis is cleared.

25

2.4.2 Configuring a data check analysis

A data check runs the job file through the analysis pre-processor, without performing the fatigue

analysis.

Job file usage:

Option

Value

DATA_CHECK

{0.0 | 1.0}

Data checks are useful for ensuring that the analysis definitions are valid, allowing the user to correct

errors which may otherwise only become apparent after a long analysis run. The data check feature

checks the following for consistency:

ODB interface settings

Results directory initialization

Analysis continuation settings

Material definitions and S-N interpolation

Algorithm and mean stress correction settings

Dataset and history definitions

Principal stress histories

Custom mean stress, FRF and surface finish data

Surface detection

Yield criteria analysis

Composite criteria analysis

Nodal elimination

Load proportionality

Virtual strain gauge definition

Duplicate analysis item IDs

Pertinent information regarding the data check run can be found in the message file in

Project\output\<jobName>.

If the user requested field output from the job file, the worst tensor and principal stress per node for

the whole model are written to the files datacheck_tensor.dat and datacheck_principal.dat,

respectively.

26

2.4.3 Configuring an analysis from a text file

Quick Fatigue Tool includes a text file processor, which allows the user to submit a job from an ASCII

text file containing only the options which are required to define the analysis. This results in job files

which are less cumbersome than the standard .m file which must contain every option regardless of

whether or not it is required.

To define a job from a text file, options are specified as keywords.

Job file usage:

Option

Value

*<keyword> =

<value>

Keywords are exactly the same as job file options, but they always begin with an asterisk (). For

example, the job file option DATASET is declared in the text file as *DATASET. Entries which do not

begin with an asterisk, or are not proceeded with an equal sign () followed by a value, are ignored

by the input file processor.

Job file options containing underscores are specified in the text file with spaces. For example, the

option JOB_NAME is specified in the text file as *JOB NAME.

The following should be noted when defining jobs from a text file:

it is not necessary to end the definition with a semi-colon;

it is not necessary to enclose strings with apostrophes;

white spaces are ignored; and

mathematical expressions are not supported.

The user must adhere to the following syntax when defining cells in the text file.

Cell type

Text input

Strings

{<>, <>,…, <>}

Numeric arrays

{[, ,…, ], [, ,…, ],…, [, ,…,

]}

Mixture of strings and numeric arrays

{<>, [, ,…, ]}

Any combination of strings and numerical inputs are supported, provided each element is separated

by a comma.

27

Jobs defined as text files are submitted from the command line.

Command line usage:

>> job <jobFile>

>> job <jobFile> 'option'

The parameter option has two mutually-inclusive values:

interactive – prints an echo of the message (.msg) file to the MATLAB command window.

datacheck – submits the analysis job as a data check analysis.

Any file extension is accepted provided the contents is ASCII text. Job files with the extension .inp can

be specified without appending .inp on the command line. For all other file types, the extension must

be specified. Apostrophes are not required when specifying the input file name.

Example usage

An example of a text-based job file is given by the file tutorial_intro.inp in the

Data\tutorials\intro_tutorial folder. Open the file and study its contents.

There is a text header at the beginning of the file, which is distinguished from the rest of the contents

by double asterisks (**) at the beginning of each line. These lines are ignored by Quick Fatigue Tool.

The first keyword is *USER MATERIAL, which is used to define material data. Guidance on creating

material data in a text file is found in Section 5.5.4 “Specifying material properties in a job file”.

Subsequent keywords specify analysis definitions for a uniaxial stress-life analysis. To submit the file

for analysis, execute the following command:

Command line usage:

>> job tutorial_intro

The material data is first read into the material database. If the material already exists in the

Data\material\local directory, the user is prompted to overwrite the existing material data. The

analysis keywords are then processed and the job is submitted for analysis.

Results of the fatigue analysis are written to Project\output\tutorial_intro.

28

2.5 The analysis method

1. A loading definition is created by combining elastic stress datasets with load histories to produce

a scaled history of stresses for each item in the model

2. If a high frequency loading is provided, it is interpolated and superimposed onto the original load

history

3. If requested, the load histories are pre-gated before the analysis which aims to remove small

cycles from the loading

4. The principal stress history is calculated for each analysis item

5. The load history at each point in the model is assessed for proportionality. The critical plane step

size may automatically be increased if the load is considered to be proportional

6. If requested, nodal elimination is performed which removes analysis items whose maximum stress

range is less than the fatigue limit of the material

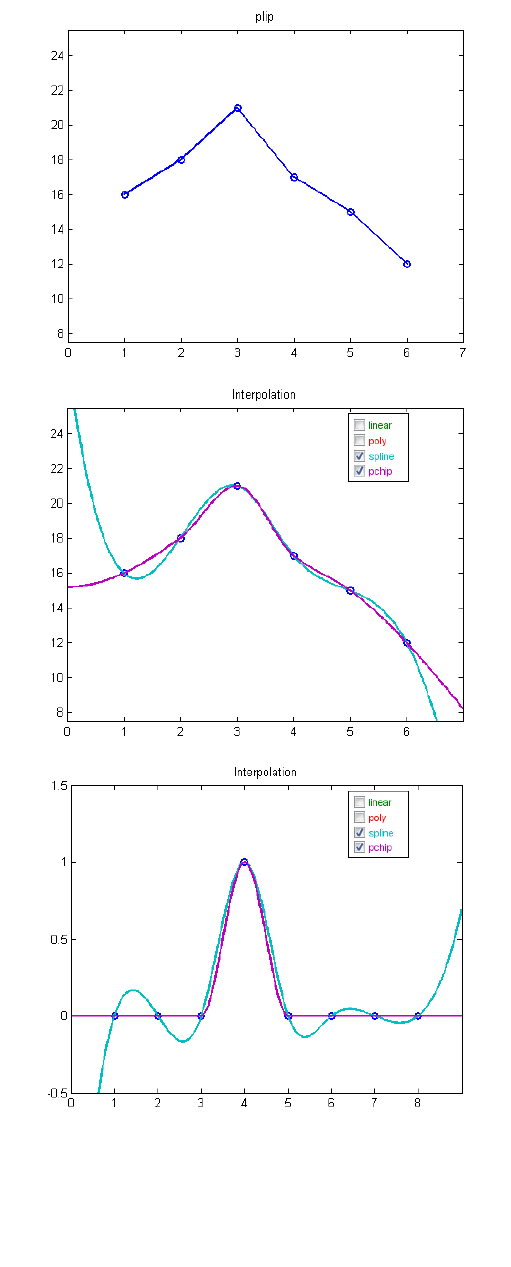

7. User stress-life data is interpolated to find the endurance curve for a fully-reversed cycle

8. Stresses are resolved onto planes in a spherical coordinate space to find the plane on which the

most damaging stresses occur

1

9. Stresses on this plane are counted using the rainflow cycle counting method

2

. If requested, the

stress tensors on this plane are gated prior to cycle counting

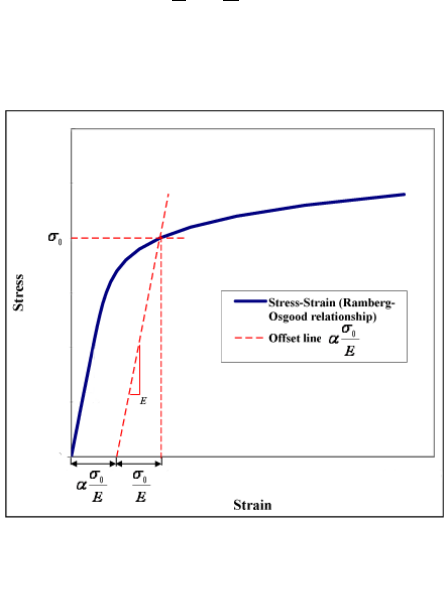

10. If requested, the stress cycles are corrected for material non-linearity

11. The stress cycles are corrected for the effect of mean stress

12. A damage calculation is performed for each cycle using Miner’s Rule of linear damage

accumulation [7]. The endurance limit may be reduced to 25% of its original value if the cycle

stress amplitudes are damaging

13. Steps 8-12 are repeated for each analysis item

14. If requested, the item with the worst life is analysed once more to calculate extensive output

15. If requested, Factor of Strength (FOS) iterations are performed. The damage is recalculated for

each analysis item to obtain the linear loading scale factor which, if applied to the original loading,

would result in the user-defined design life

1

Only if the fatigue analysis algorithm is multiaxial

2

Only if the load history contains more than two data points

29

3. Defining fatigue loadings

3.1 Loading methods

3.1.1 Overview

The loading definition forms the basis of the analysis, and describes the stress history at each point in

the model. Loadings usually consist of stress datasets (user-defined or from FEA) and load histories.

The stress datasets contain the static stress state at each location in the model. This can be at a node,

integration point, centroid or otherwise. The load history defines the variation of the stresses through

time. However, Quick Fatigue Tool does not distinguish elapsed time between loading points and

hence the load history is treated as being rate-independent.

Quick Fatigue Tool offers five methods for creating loading definitions:

1. Uniaxial history

2. Simple loading

3. Multiple load history (scale and combine) loading

4. Dataset sequences

5. Complex (block sequence) loading

3.1.2 Syntax

Stress datasets are specified as ASCII text files:

'dataset-file-name.*' | {'dataset-file-name-1.*', 'dataset-file-name-2.*',…, 'dataset-file-name-.*'}

Load histories are specified as ASCII text files, or directly as one or more vectors:

'load-history-file-name.*' | {'history-file-name-1.*', 'history-file-name-2.*',…, 'history-file-name-.*'}

[, ,…, ] | {, ,…, }

30

3.1.3 Uniaxial history

A single load history is supplied without a stress dataset.

Job file usage:

Option

Value

DATASET

' '

HISTORY

{'history-file-name.*' | [, ,…, ] }

The load history is analysed without respect to a particular model, and is only valid for uniaxial states

of stress. Uniaxial histories can only be used with the Uniaxial Stress-Life and Uniaxial Strain-Life

algorithms.

Job file usage:

Option

Value

ALGORITHM

{3.0 | 'UNIAXIAL STRAIN'}

ALGORITHM

{10.0 | 'UNIAXIAL STRESS'}

3.1.4 Simple Loading

A simple loading consists of a single stress dataset multiplied by a load history.

Job file usage:

Option

Value

DATASET

'dataset-file-name.*'

HISTORY

{'history-file-name.*' | [, ,…, ] }

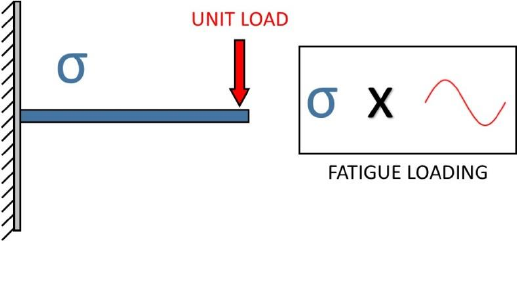

An example of a simple fatigue loading is given by Figure 3.1.

Figure 3.1: Demonstration of a simple loading. The stresses, , due

to a unit load are multiplied by a load history

31

Constant amplitude loading

The load history is defined as a minimum and maximum value, an example of which is given below.

Job file usage:

Option

Value

HISTORY

[, ]

The definition can be a single cycle, or repetitions of the same cycle.

Job file usage:

Option

Value

REPEATS

Single load history

The load history is defined as a time history of the loading, scaled with the stress dataset. The dataset

can be defined in two ways:

1. FEA stress from unit load, scaled by a time history of load values

2. FEA stress from maximum load, scaled by a time history of load scale factors

Both methods are valid provided that the FEA is linear and elastic.

32

3.1.5 Multiple load history (scale and combine) loading

A multiple load history consists of several stress datasets multiplied by the same number of histories.

At each analysis item, the stress tensor from each dataset is scaled with its respective load history and

combined into a single stress history. The number of stress datasets and load histories must be the

same, although the number of history points in each load history need not be the same.

Job file usage:

Option

Value

DATASET

{'dataset-file-name-1.*', 'dataset-file-name-2.*',…,

'dataset-file-name-.*'}

HISTORY

{'history-file-name-1.*', 'history-file-name-2.*',…,

'history-file-name-.*'}

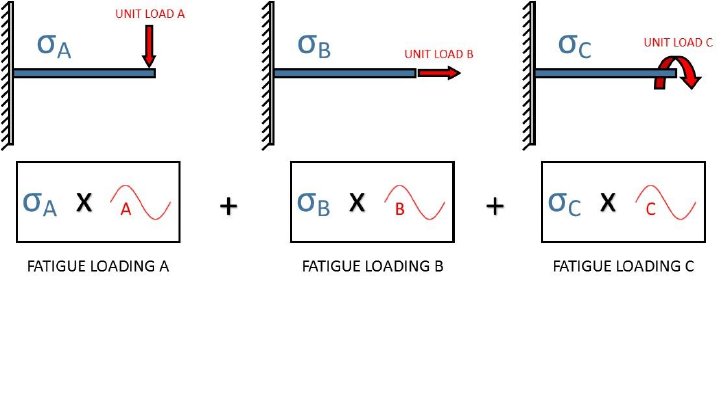

An example of a scale and combine loading is given by Figure 3.2.

Scale and combine loading is only physically meaningful for elastic stresses. Each channel can be scaled

by its respective load history since the load is directly proportional to the elastic FEA stress. The scale

and combine method assumes that each loading channel is occurring simultaneously.

The load history may be defined by any combination of load history files and vectors.

Job file usage:

Option

Value

HISTORY

{'history-file-name.*', [, ,…, ]}

Figure 3.2: Demonstration of a multiple load history (scale and combine). The stresses,

,

and due to unit loads , and are multiplied by their respective load histories and

summed to produce the resultant fatigue loading

33

3.1.6 Dataset sequences

A dataset sequence loading consists of several stress datasets.

Job file usage:

Option

Value

DATASET

{'dataset-file-name-1.*', 'dataset-file-name-2.*',…,

'dataset-file-name-.*'}

HISTORY

[ ]

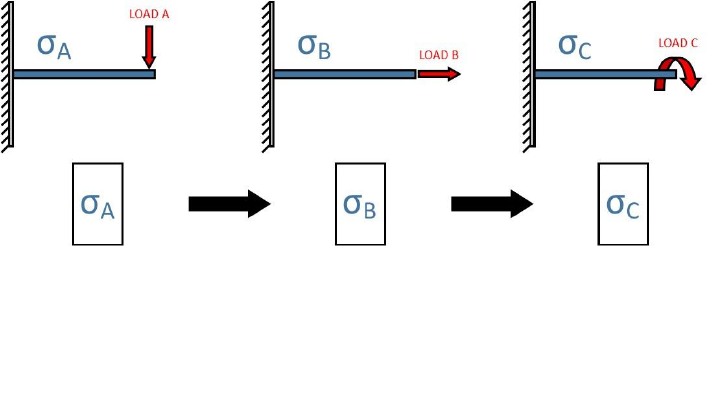

An example of a dataset sequence loading is given by Figure 3.3.

Since the fatigue loading is completely described by the variation of stresses between each dataset,

specification of load histories is not required.

Figure 3.3: Demonstration of a stress dataset sequence loading. The fatigue loading is formed

by the sequence of stress solutions , and , due to the applied loads , and ,

respectively

34

3.1.7 Complex (block sequence) loading

Complex loadings consist of multiple loading blocks, each representing a stage of the component’s

duty. Block sequences are useful in proving ground tests where the component is subjected to a

sequence of distinct loading events, and it is desirable to compute the individual damage contributions

from each event.

Currently, sequences of loading blocks cannot be defined in a single job. However, Quick Fatigue Tool

allows the user to run multiple jobs in series, wherein each job is treated as a separate loading block.

Job file usage:

Option

Value

JOB_NAME

'current-job-name'

CONTINUE_FROM

'previous-job-name'

When a job is run as a continuation of a previous job, Quick Fatigue Tool calculates the individual

damage contribution of the current job, then superimposes the result onto the previous job to give

the cumulative damage of the block sequence.

Analysis continuation is discussed further in Section 4.8.

35

3.2 Creating a stress dataset file

3.2.1 Dataset structure

Stress datasets are text files containing a list of stress tensors. The simplest way to create a stress

dataset file is to specify the tensor components as follows:

Line 1:

, , , , ,

Line 2:

, , , , ,

.

.

.

.

.

.

Line

, , , , ,

Each line defines the stress tensor at each location in the model.

3.2.2 Creating a dataset from Abaqus/Viewer

Stress datasets may be generated from finite element analysis (FEA). To create a stress dataset file

from Abaqus/Viewer, complete the following steps:

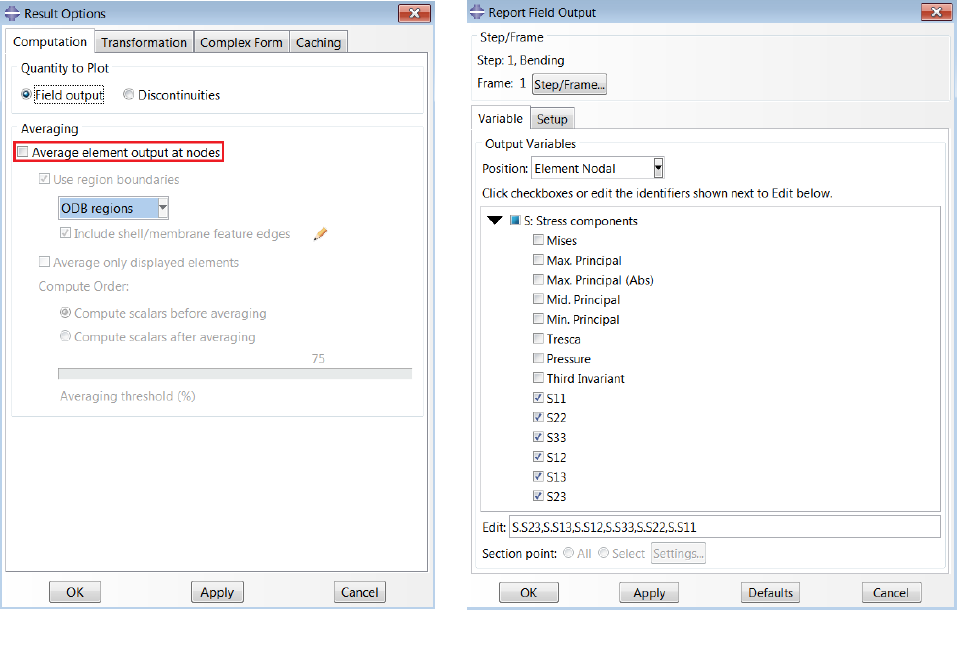

1. In the Visualization module, from the main menu, select Result → Options…

2. Under “Averaging”, uncheck “Average element output at nodes”.

3. From the main menu, select Report → Field Output…

4. Under “Step/Frame”, select the step and the frame in the analysis from which the stresses will

be written.

5. In the “Variable” tab, under “Output Variables”, select the position of the output (Integration

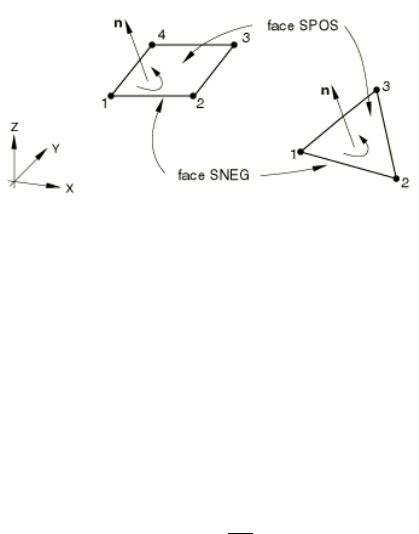

Point, Centroid, Element Nodal or Unique Nodal).

6. Expand the variable “S: Stress components” and select all the available Cauchy tensor

variables (S11, S22, S33, S12, S13 and S23); if plane stress elements are used, select (S11, S22,

S33 and S12) and set PLANE_STRESS=1.0 in the job file; if one-dimensional elements are used,

select (S11)

7. If the model contains results at multiple section points or plies, select Settings… from the

section point method and specify the section point or ply of interest

8. In the “Setup” tab, set the file path (e.g. \6.x-xx\Project\input\<filename>.rpt)

9. Under “Data”, uncheck “Column totals” and “Column min/max”. Make sure “Field output” is

checked.

10. Click OK

36

3.2.3 Creating datasets from other FEA packages

If the user wishes to create a stress dataset from an FEA package other than Abaqus, the following

standard data format must be observed for 3D stress elements:

MAIN POSITION ID

(OPTIONAL)

SUB POSITION ID

(OPTIONAL)

S11

S22

S33

S12

S13

S23

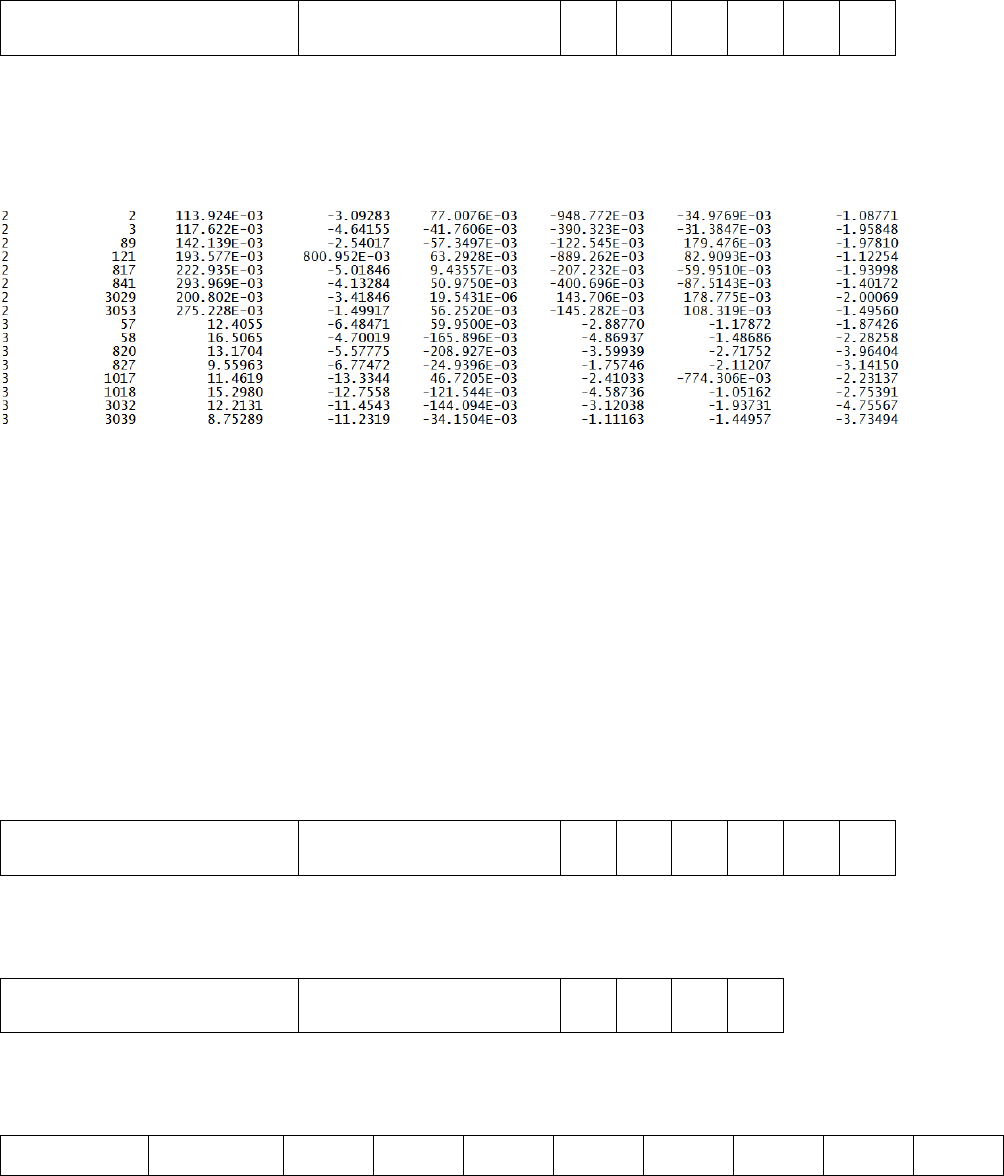

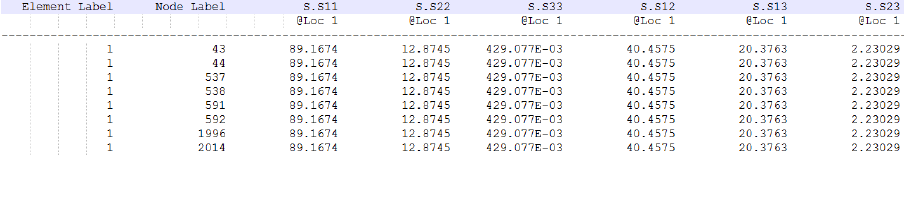

For example, a particular stress dataset may look like the following:

The position labels can be arbitrary, but usually represent the location on the finite element model

(e.g. element.node). Quick Fatigue Tool will quote the position of the shortest fatigue life. Position

labels are not compulsory: The stress dataset can be specified with tensor information only.

Furthermore, it is not compulsory to include both main and sub IDs. For example, if the stress data is

unique nodal (nodal averaged), there is only one position ID which is the node number.

3.2.4 Creating datasets with different element types

Quick Fatigue Tool automatically recognises stress datasets from Abaqus containing multiple element

types. Such files are split into regions, each of which defines the stress tensors for a specific element

type. If the stress dataset file is user-defined, the following conventions must be observed:

3D stress elements:

MAIN POSITION ID

(OPTIONAL)

SUB POSITION ID

(OPTIONAL)

S11

S22

S33

S12

S13

S23

Plane stress elements without shell face information:

MAIN POSITION ID

(OPTIONAL)

SUB POSITION ID

(OPTIONAL)

S11

S22

S33

S12

Plane stress elements with shell face information:

MAIN POSITION ID

(OPTIONAL)

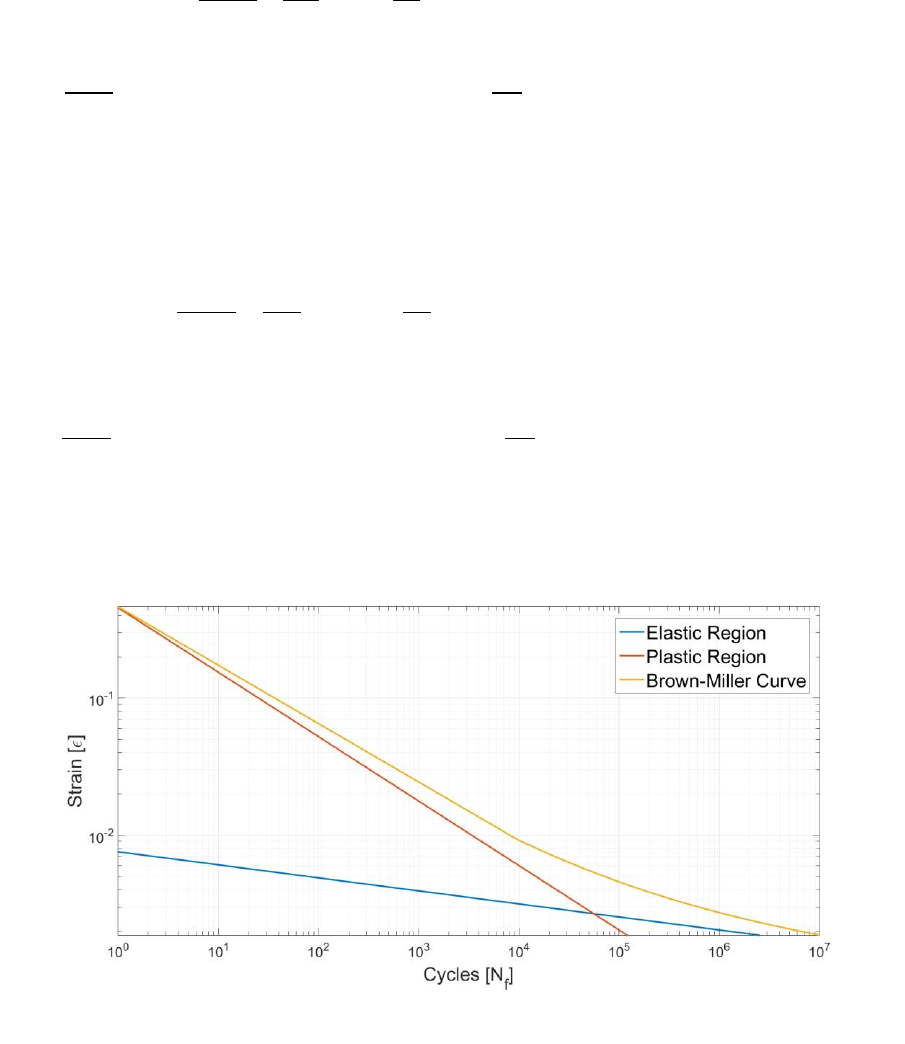

SUB POSITION ID

(OPTIONAL)

S11

(+ve face)

S11

(-ve face)

S22

(+ve face)

S22

(-ve face)

S33

(+ve face)

S33

(-ve face)

S12

(+ve face)

S12

(-ve face)

37

One-dimensional elements:

MAIN POSITION ID

(OPTIONAL)

SUB POSITION ID

(OPTIONAL)

S11

Below is an example of a user-defined stress dataset file containing two elements.

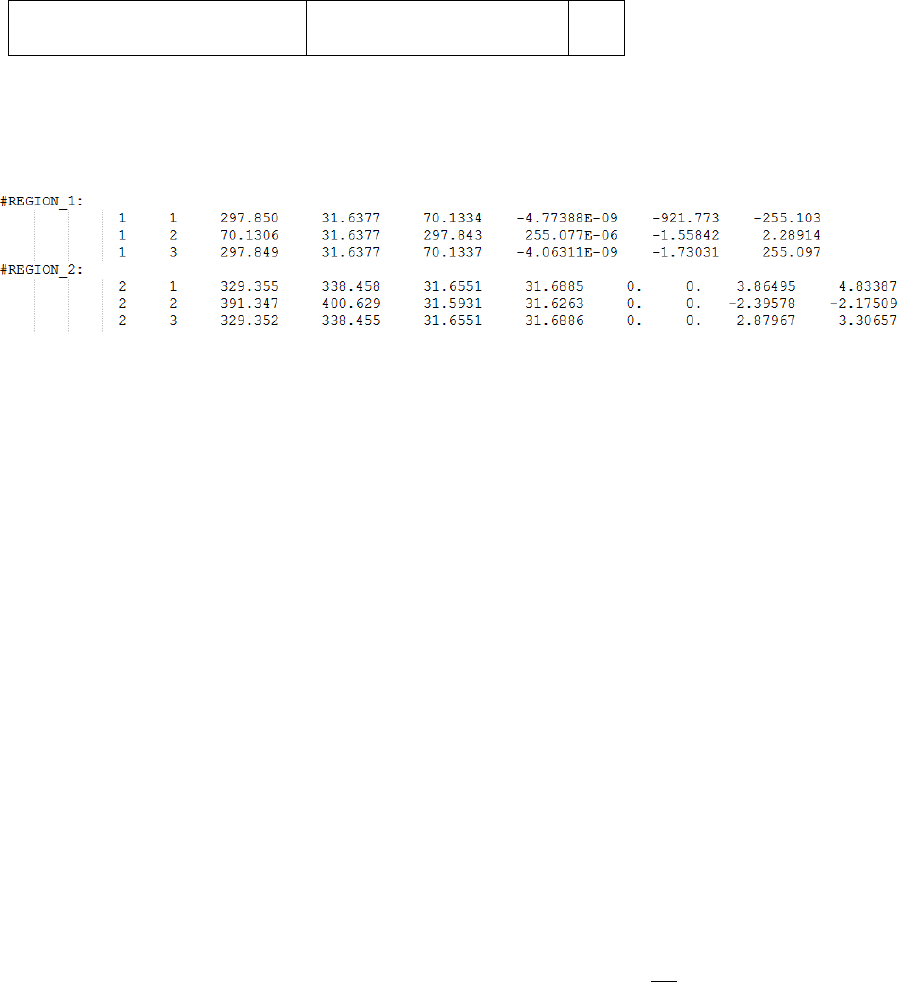

Each element region must be declared by a text header in order to be recognised, and the header

must start with a non-numeric character. In the above example, REGION_1 defines a 3D element and

REGION_2 defines a 2D element with results at both shell faces. Both elements are defined with

element-nodal (nodal un-averaged) position labels. All the datasets in the loading must be defined

with the same position labels otherwise the analysis will not run. To check whether or not the dataset

definition was processed correctly, Quick Fatigue Tool prints the number of detected regions to the

message file. This can be found in Project\output\<jobName>\<jobName>.msg.

Quick Fatigue Tool will automatically detect the element type based on the number of columns in the

dataset file. For example, if there are five columns, this will be interpreted as plane stress (four

columns define the tensor) with one column defining the element position (either unique nodal or

centroidal). If the dataset file contains six columns, this could either be interpreted as 3D stress (all six

columns define the stress tensor) with no position labels, or plane stress (four columns define the

tensor) with two columns defining the element position (either element-nodal or integration point).

This ambiguity is resolved with the use of the following job file option:

Job file usage:

Option

Value

PLANE_STRESS

{0.0 | 1.0}

If the value of PLANE_STRESS is set equal to 1.0, Quick Fatigue Tool will assume that the element

definition is plane stress if it encounters a data region with six data columns.

A complete description of how dataset files are interpreted is provided in Section 3.6.

38

3.2.5 Specifying stress dataset units

Since fatigue analysis uses the SI (mm) system of units, stress datasets are assumed to have units of

Megapascals () by default. If the datasets use a different system of units, these must be specified

in the job file so that Quick Fatigue Tool can convert them into the SI (mm) system.

Job file usage:

Option

Value

UNITS

{'Pa' | 'kPa' | 'MPa' | 'psi' | 'ksi' | 'Msi'}

If the stress dataset units are not listed, they can be user-defined.

Job file usage:

Option

Value

UNITS

0.0

CONV

The parameter is a constant which converts the stress dataset units into Pascals ().

[3.1]

The stress data is converted into the SI (mm) system using Equation 3.2.

[3.2]

39

3.3 Creating a load history

Load histories can be defined in three ways:

1. From a text file

2. As a direct definition

3. As a workspace variable

Create a load history from a text file

If the load history is defined from a text file, it must contain a single or vector of loading

points, as follows:

← First loading point

.

.

← Last loading point

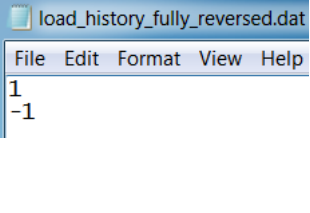

For example, a fully-reversed load history would look like that of Figure 3.4.

Job file usage:

Option

Value

HISTORY

'history-file-name.*'

Load history files must be stored in the Project\input folder in order for Quick Fatigue Tool to locate

the data.

Figure 3.4: Fully-reversed load history

40

Create a load history as a direct definition

Load histories can be specified in the job file as a vector of scale factors.

Job file usage:

Option

Value

HISTORY

[, ,…, ]

Alternatively, the load history can be defined as a function.

Job file usage:

Option

Value

HISTORY

where is a stress amplitude scale factor.

Create a load history as a workspace variable

If the load history is defined as a workspace variable, it must be a or numerical array.

Job file usage:

Option

Value

HISTORY

{, ,…, }

In addition, the variables declared in HISTORY must also be specified as inputs to the function

declaration and the function call.

Job file usage:

function [ ] = <jobName>(, ,…, )

The job is then submitted by executing the job file from the command line.

Command line usage:

>> <jobName>(, ,…, )

41

For scale and combine loadings, it is possible to define a load history using a combination of text files,

direct definitions and workspace variables.

Job file usage:

Option

Value

HISTORY

{'history-file-name.*', [, ], }

Treatment of multiple load histories

The load histories do not have to be the same length. Before the analysis, all the load histories will be

modified to have the same length by appending zeroes to the shorter histories. However, in order to

maximise the reliability and performance of the cycle counting algorithm, it is strongly recommended

that the loadings have a similar length.

Note that multiple load histories are not supported for uniaxial analysis.

42

3.4 Load modulation

The fatigue loading can be scaled and offset using the SCALE and OFFSET job file options, respectively.

The scaled and offset fatigue load, , is given by Equation 3.3.

[3.3]

Defining load scale factors

Load scale factors are defined as follows:

Job file usage:

Option

Value

SCALE

[, ,…, ]

If the analysis is a scale and combine loading, is the number of dataset-history pairs; each load scale

factor is multiplied by its respective dataset-history pair. If the analysis is a dataset sequence, is the

number of datasets; each load scale factor is multiplied by its respective dataset in the sequence.

If the user specifies the Uniaxial Stress-Life algorithm, a single scale factor may be specified. Load

history scales can be used with any loading methods.

Defining load offset values

Load offset values are defined as follows:

Job file usage:

Option

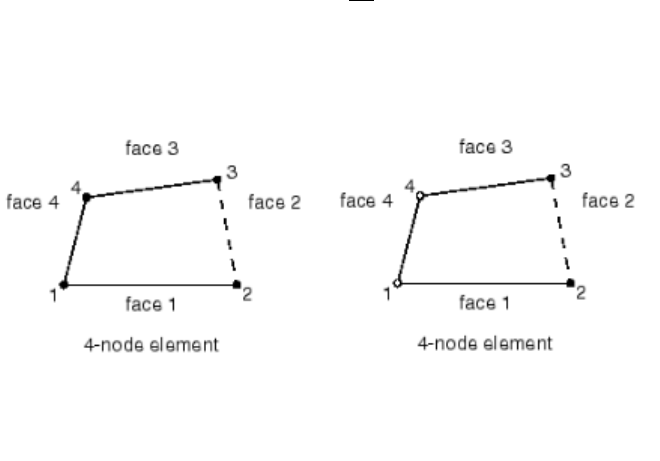

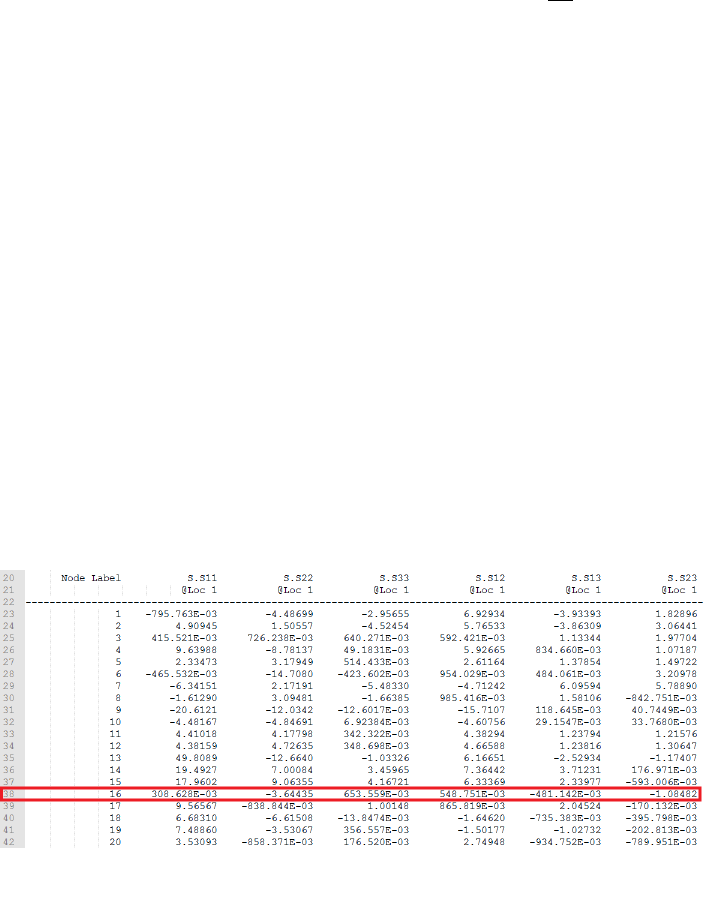

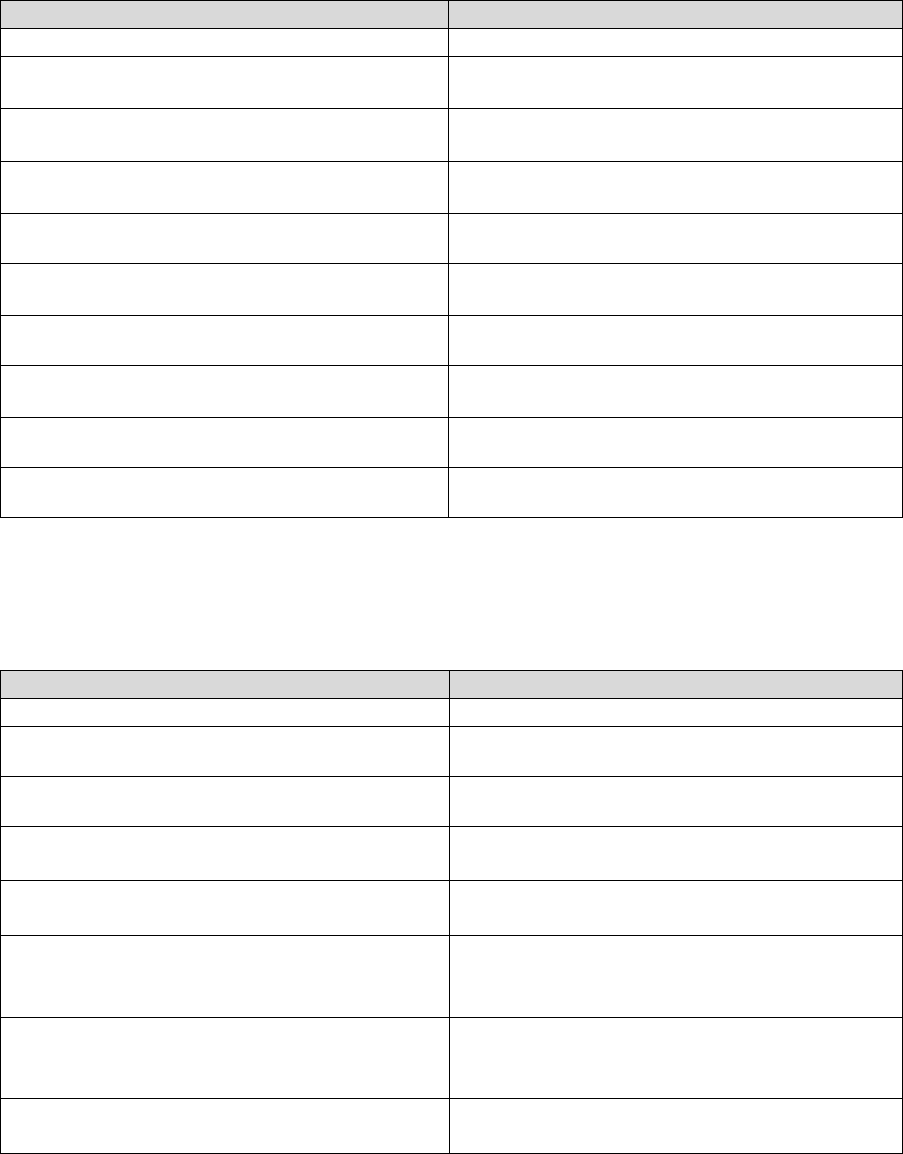

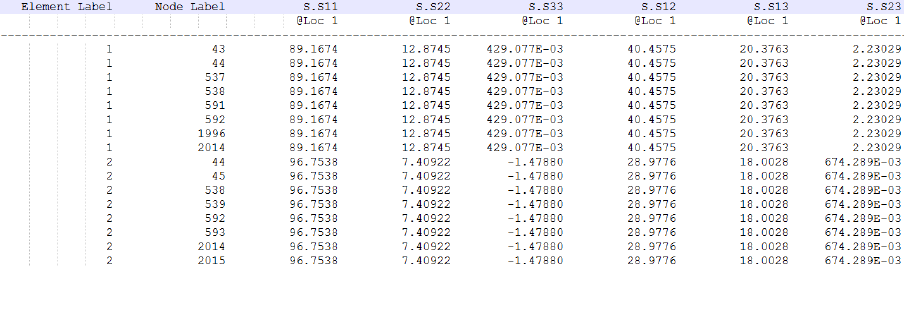

Value

OFFSET

[, ,…, ]

where is the number of dataset-history pairs; each load offset value is summed with its respective

dataset-history pair.

Since load offset values are applied to the load history points only, they may not be used with dataset

sequence loadings. Load offsets may be used with all other loading methods.

43

3.5 High frequency loadings

3.5.1 Overview

The scale and combine method outlined in Section 3.1 does not distinguish between elapsed time and

can produce physically incorrect load histories if two load signals with very different period are

analysed together. The solution is to superimpose the higher frequency load data onto the lower

frequency data.

3.5.2 Defining high frequency loadings

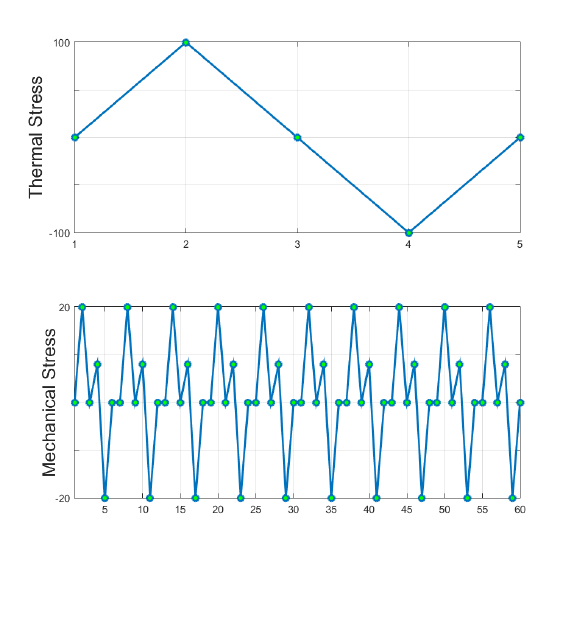

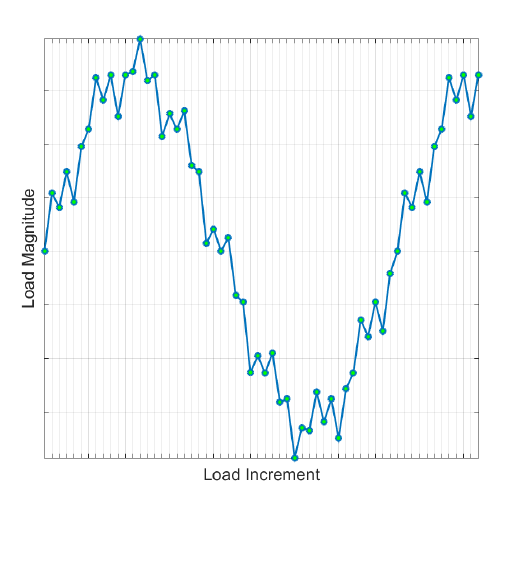

Take the example of a piston which experiences combined thermal and mechanical stresses shown

in Figure 3.5.

For a scale and combine loading, the two signals are defined by the load histories in Figure 3.5.

Normalized thermal load

[0, 1, 0, -1, 0]

Normalized mechanical load

[0,1,0,0.4,-1,0,0,1,0,0.4,-1,0,0,1,0,0.4,-

1,0,0,1,0,0.4,-1,0,0,1,0,0.4,-1,0,0,1,0,0.4,-

1,0,0,1,0,0.4,-1,0,0,1,0,0.4,-1,0,0,1,0,0.4,-

1,0,0,1,0,0.4,-1,0]

Figure 3.5: Thermal and mechanical load signals occurring over the same

period

44

A problem arises if the two loads occur over the same time interval. Since the mechanical load has

many more time points than the thermal load, Quick Fatigue Tool will append most of the mechanical

load onto the end of the load history. Using a standard scale and combine, the resulting load history

would be that of Figure 3.6.

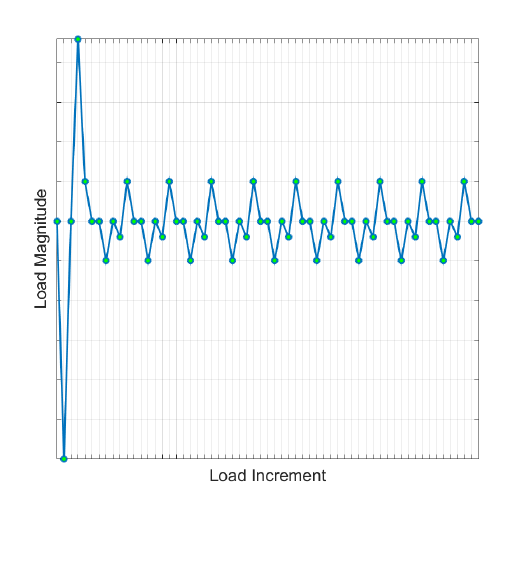

This loading definition is physically incorrect because it does not allow for the fact that the two loads

occur simultaneously over the same period. The solution is to define the mechanical load as a high

frequency dataset. The modified load histories are as follows:

Normalized thermal load

[0, 1, 0, -1, 0]

Normalized mechanical load

[0, 1, 0, 0.4, -1, 0]

In this case, the high frequency data is specified as a single repeat of the mechanical load.

Job file usage:

Option

Value

HF_DATASET

'mechanical-dataset-file-name.*'

HF_HISTORY

'mechanical-history-file-name.*'

HF_TIME

{100.0, 10.0}

Figure 3.6: Result of using the scale and combine technique for the

thermal-mechanical load

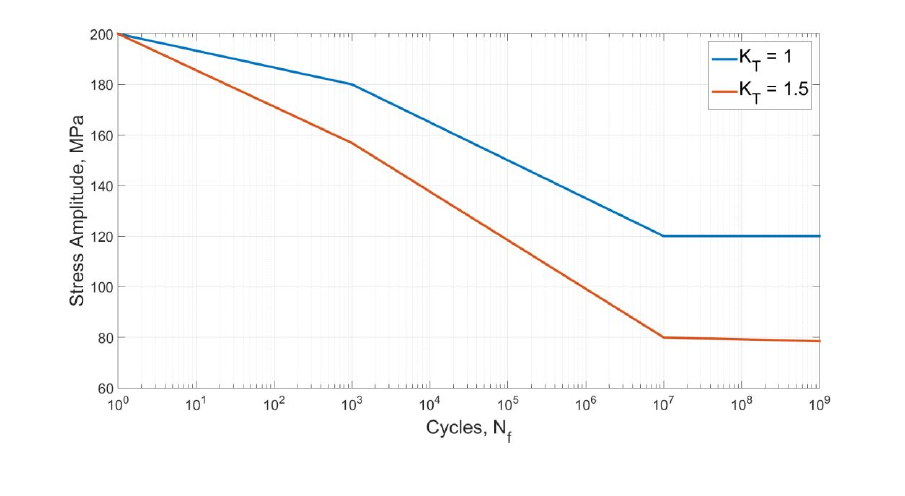

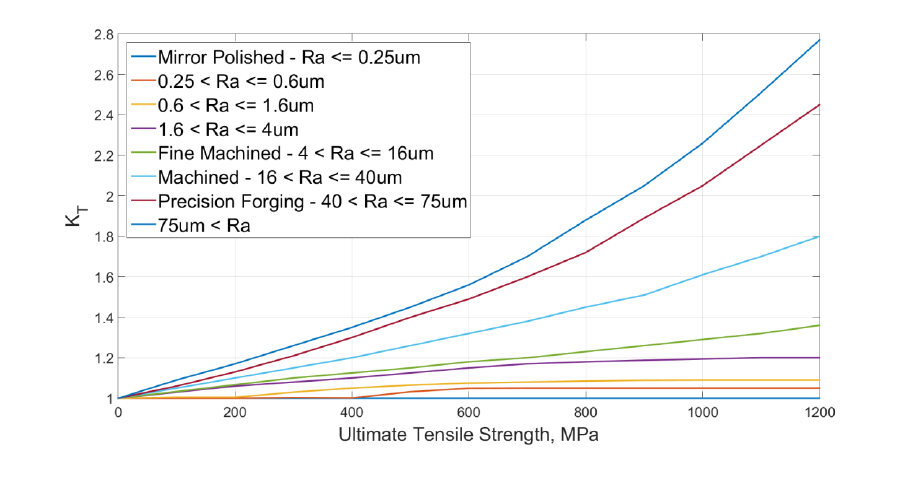

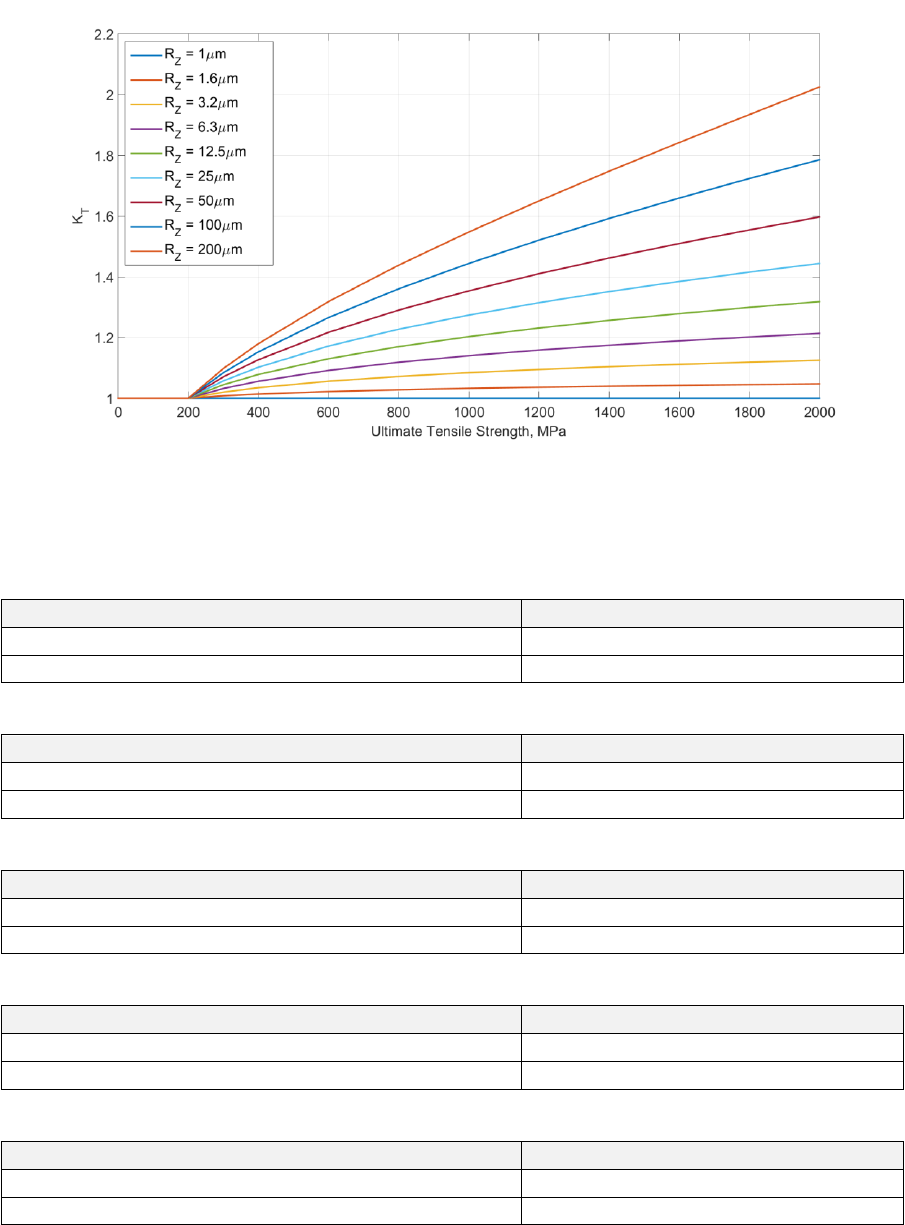

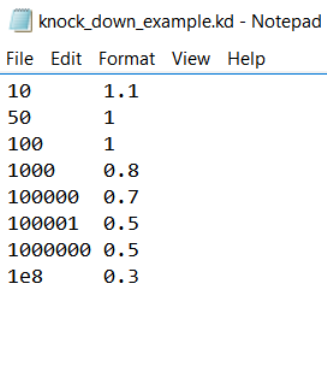

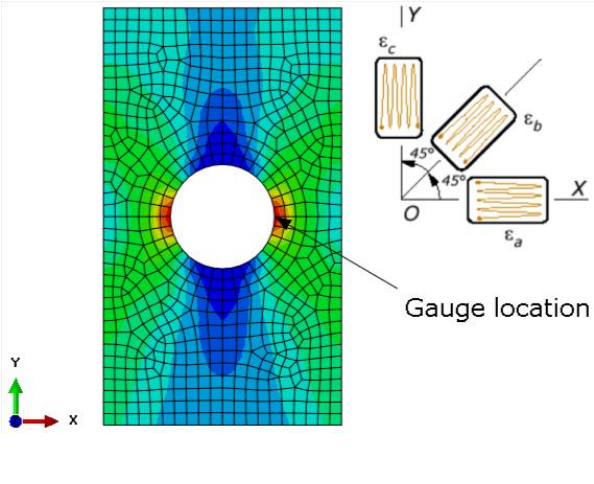

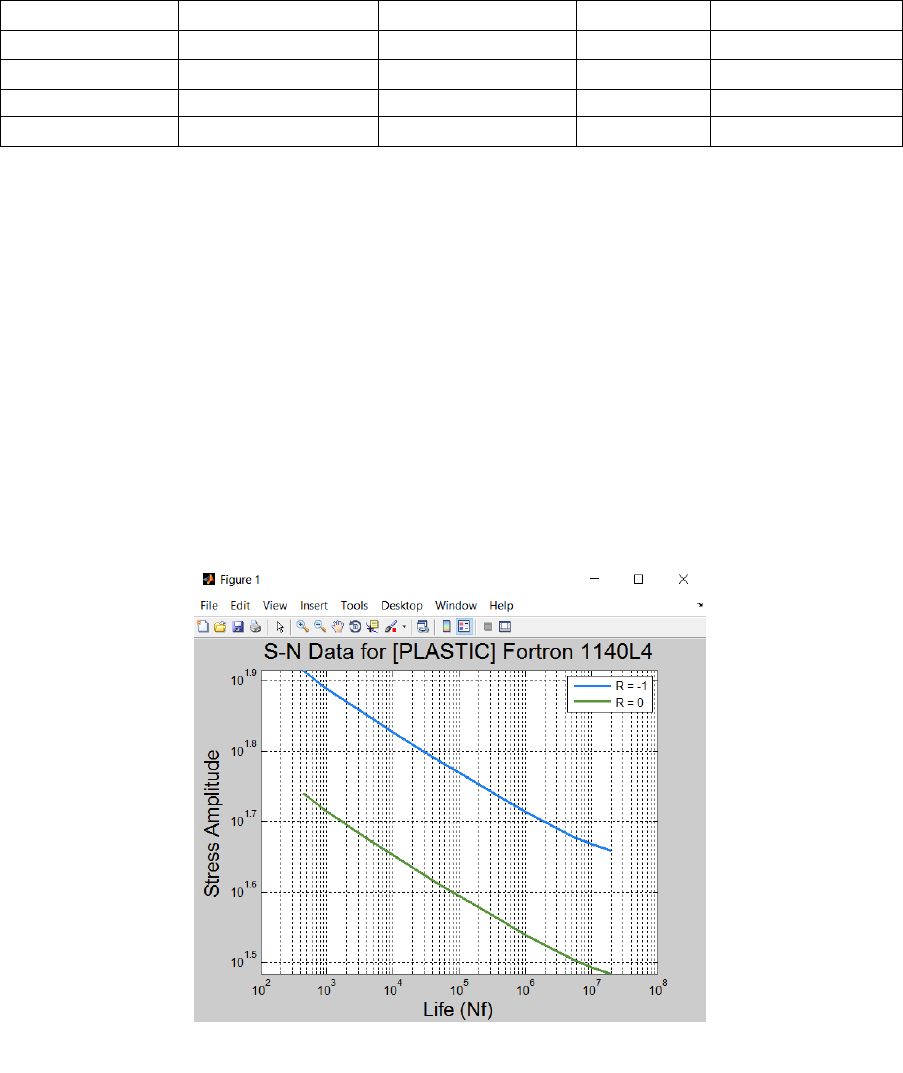

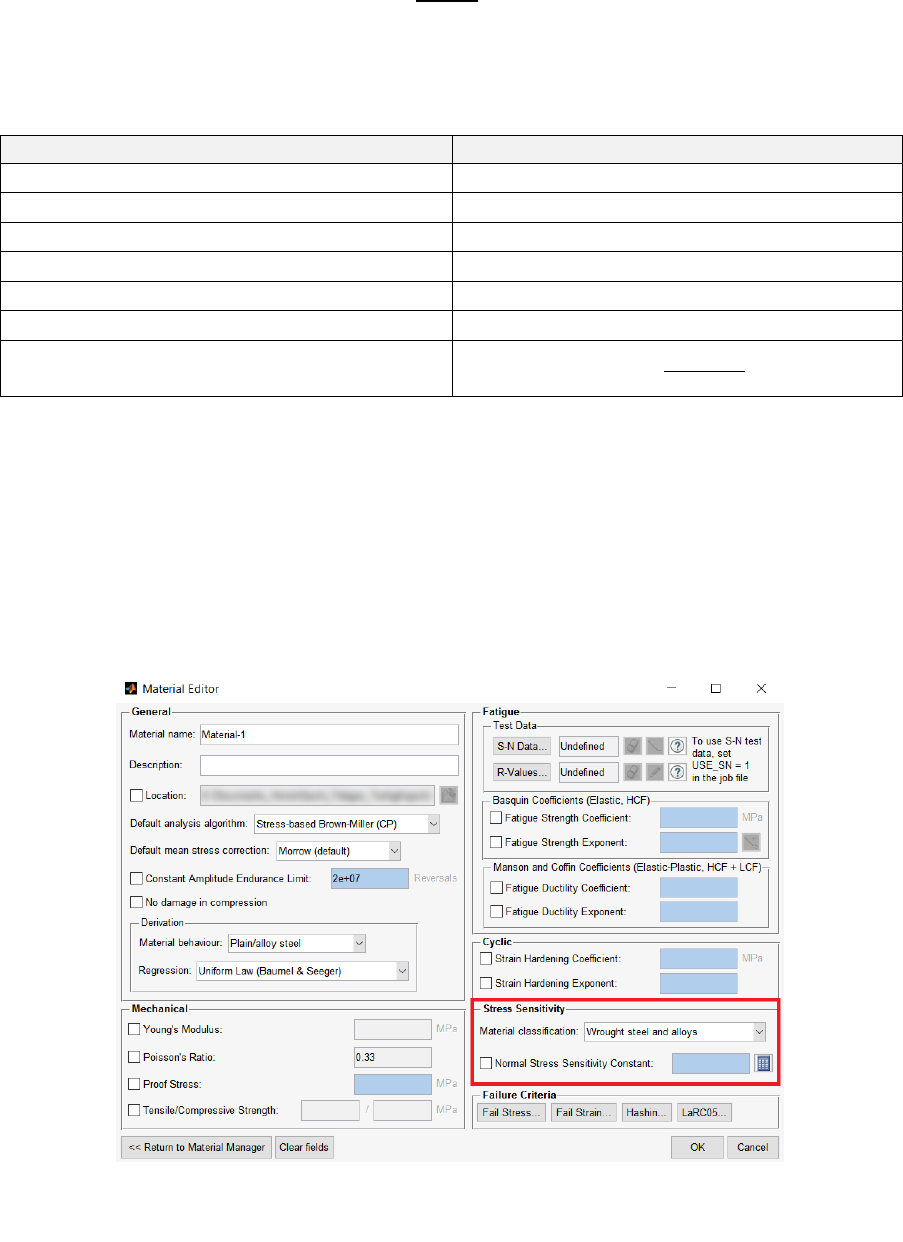

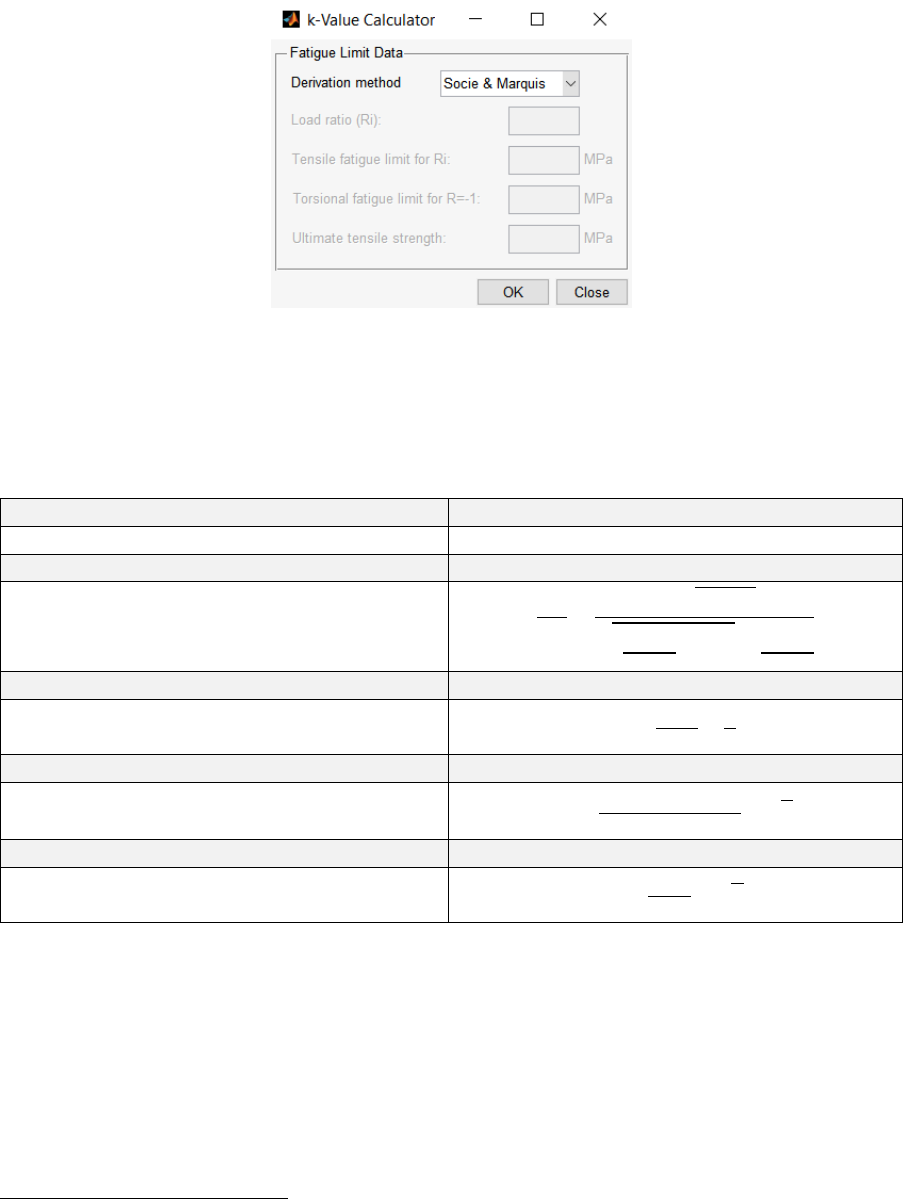

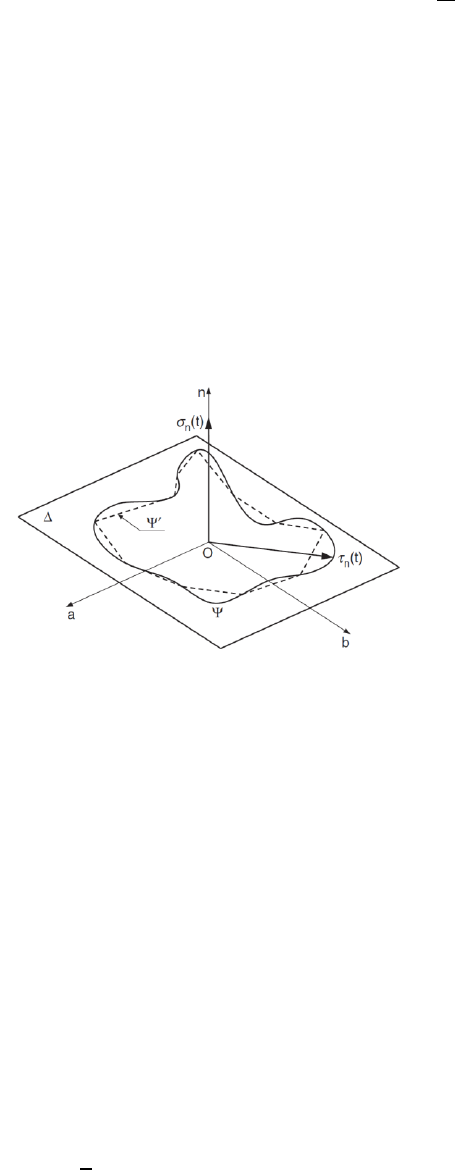

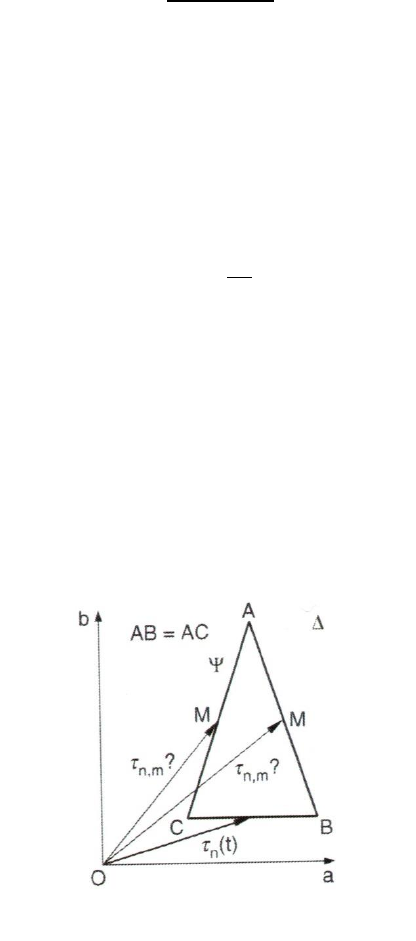

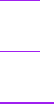

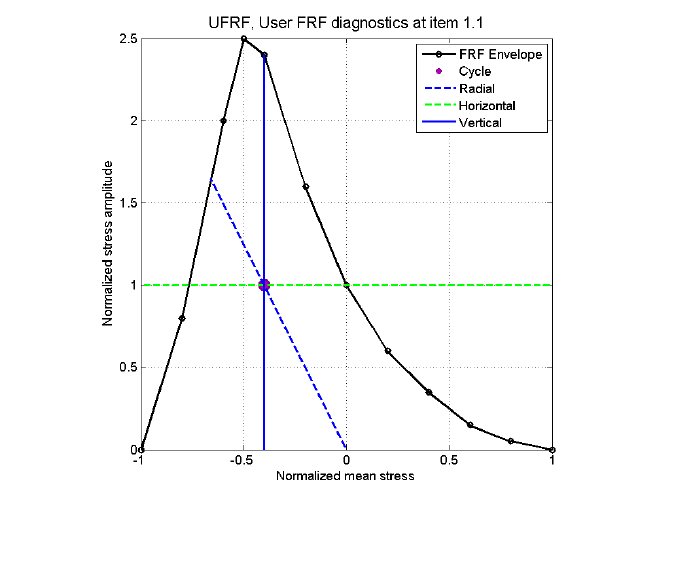

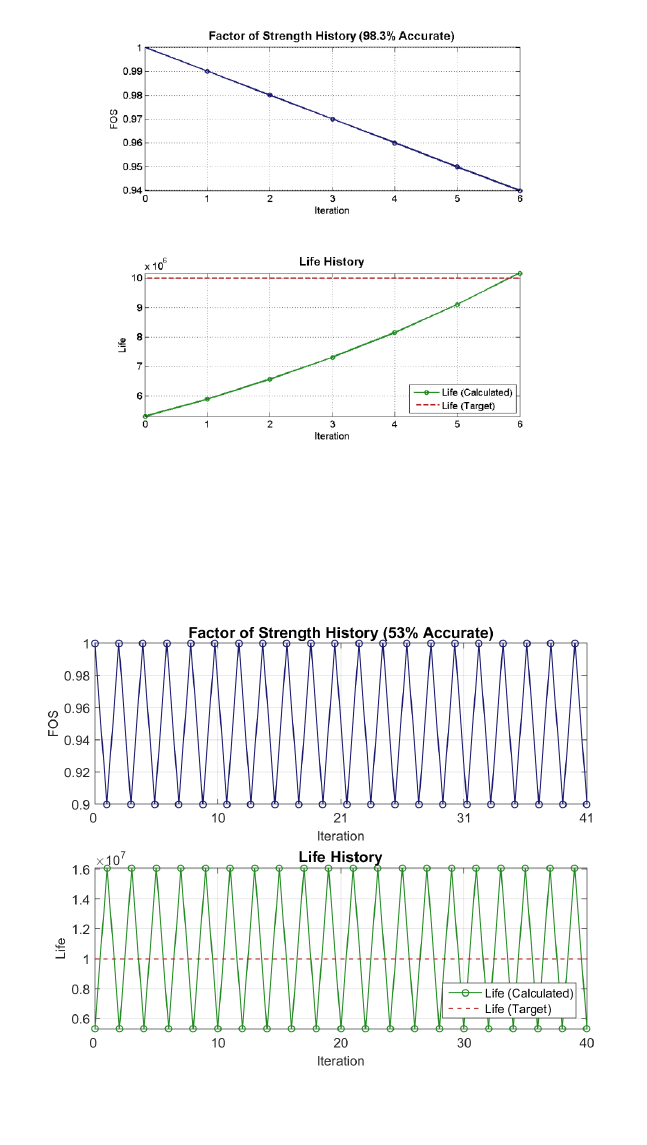

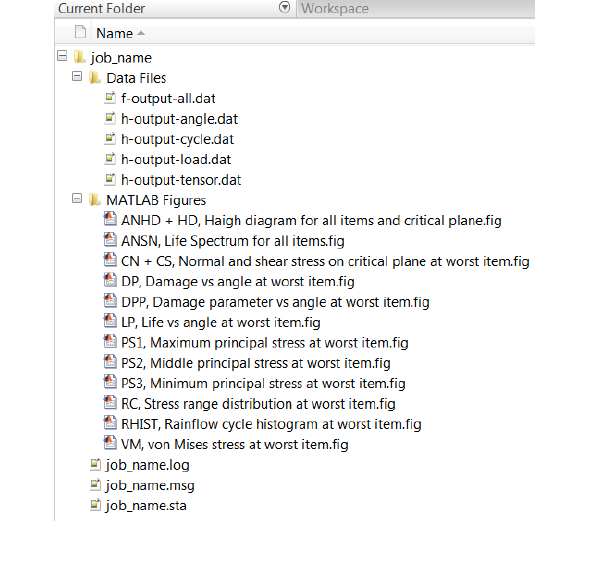

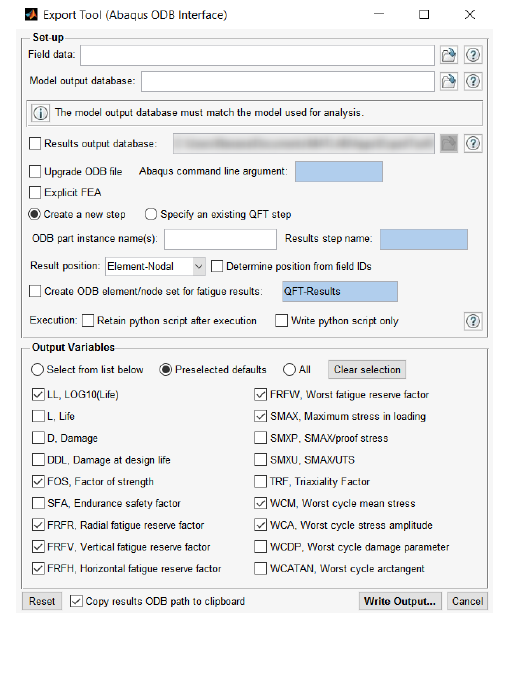

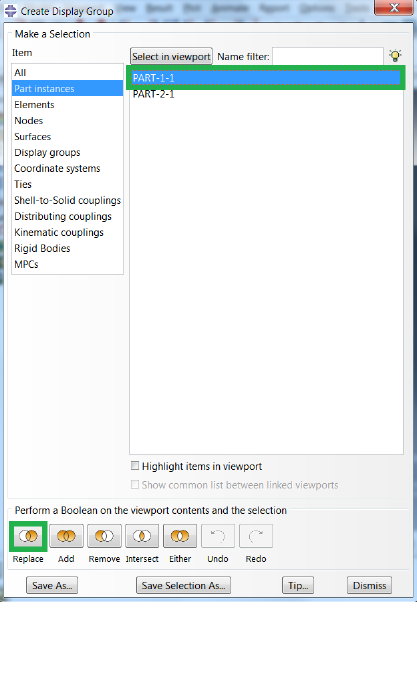

45