Climada Manual

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 72

- Instead of an Introduction: Preamble

- A visual primer

- A brief introduction to the concepts behind CLIMADA

- Getting started

- From tropical cyclone hazard generation to the adaptation cost curve

- Function reference

- CLIMADA modules

- Writing your own code

- Appendices

1

CLIMADA MANUAL 08 Feb 2018

Obtain the full package from https://github.com/davidnbresch/climada

David N. Bresch, david.bresch@gmail.com and dbresch@ethz.ch

Lea Mueller, muellele@gmail.com

CLIMADA is an open-source and -access global probabilistic risk modelling and

adaptation economics platform.

CLIMADA allows to assess risk, i.e. to estimate the expected economic impact of

weather and climate as a measure of risk today, the incremental increase from

economic growth and the further incremental increase due to climate change.

CLIMADA supports the appraisal of risk management options and adaptation

measures with the aim to strengthen weather and climate-resilient development.

The economics of climate adaptation methodology (ECA)

1

,

2

as implemented in

CLIMADA provides decision makers with a fact base to understand the impact of

weather and climate on their economies, including cost/benefit perspectives on

specific risk reduction measures.

Cite as

3

: Bresch, D. N., & Mueller, L., 2016: CLIMADA – the open-source and -access global

probabilistic risk modelling platform. https://github.com/davidnbresch/climada

The current version of climada does support the following hazards/perils:

Peril Coverage Resolution

4

Tropical cyclones and associated storm surge

5

global 10x10 and 1x1 km

European winter storms

6

all Europe 10x10 km

Wildfire and droughts

7

global 10x10 and 1x1 km

Earthquake and Volcano

8

global 10x10 and 1x1 km

Meteorites

9

global 10x10 and 1x1 km

River flood

10

(interface to existing hazard models) to be developed 1x1 km

Asset base

11

(kind of market portfolio) global 10x10 and 1x1 km

Population

12

global 10x10 and 1x1 km

Note on climate change: implemented by altering probabilistic hazard event sets (based on SREX

13

etc.), see pertinent sections in this manual.

Note on interoperability: CLIMADA hazard, exposure and vulnerability databases can be exported and

integrated into OASIS LMF ktools

14

. CLIMADA does support ktools as an alternative kernel.

1

See lecture course ETH Zurich: http://www.ied.ethz.ch/education/courses-offered/climrisk.html

2

For a list of case studies, see http://www.wcr.ethz.ch/research/casestudies.html

3

While climada is all lower-case in MATLAB, please use CLIMADA when referring to it in texts.

4

CLIMADA operates on a variable grid, i.e. any resolution is possible. The listed resolution is just

indicative of the resolution that is available by default.

5

Tropical cyclones are part of core climada, but see the section in additional modules, too.

6

See also climada module https://github.com/davidnbresch/climada_module_storm_europe

7

See also climada module https://github.com/davidnbresch/climada_module_drought_fire. Wildfire is

operational, drought is in early development stage.

8

See also climada module https://github.com/davidnbresch/climada_module_earthquake_volcano

9

See also climada module https://github.com/davidnbresch/climada_module_meteorite

10

See also climada module https://github.com/davidnbresch/climada_module_flood - still in early

development.

11

CLIMADA provides a default asset base for every country and default damage curves for every peril.

12

Based on satellite night-light intensity (open access). Interface to other sources (e.g. ssp2).

13

IPCC Special Report on Extremes, http://www.ipcc.ch/report/srex/

14

OASIS LMF and ktools, see http://www.oasislmf.org/the-toolkit

2

Instead of an Introduction: Preamble

Climate adaptation is an urgent priority for the custodians of national and local

economies, such as finance ministers and mayors. Such decision-makers ask:

• What is the potential climate-related damage to our economies and societies

over the coming decades?

• How much of that damage can we avert, with what measures?

• What investment will be required to fund those measures – and will the benefits

of that investment outweigh the costs?

The economics of climate adaptation methodology as implemented in climada

provides decision-makers with a fact base to answering these questions in a

systematic way. It enables them to understand the impact of climate on their

economies – and identify actions to minimize that impact at the lowest cost to

society. Hence it allows decision-makers to integrate adaptation with economic

development and sustainable growth. In essence, we provide a methodology to pro-

actively manage total climate risk. Using state-of-the-art probabilistic modelling, we

estimate the expected economic damage as a measure of risk today, the incremental

increase from economic growth and the further incremental increase due to climate

change. We then build a portfolio of adaptation measures, assessing the damage

aversion potential and cost-benefit ratio for each measure. The adaptation cost curve

illustrates that a balanced portfolio of prevention, intervention and insurance

measures allows to pro-actively managing total climate risk.

CLIMADA consists of the core module, providing the user with the key functionality to

perform an economics of climate adaptation assessment. Additional modules

implement global coverage (automatic asset generation), a series of hazards (tropical

cyclone, surge, rain, European winter storms, … and even earthquake, volcano and

meteorites) and further functionality, such as Google Earth access, animations…

CLIMADA runs on both MATLAB (version 7

15

and higher, all tested with version 9)

and GNU Octave (version 3.8.0

16

and higher). Some modules might not have been

thoroughly tested using Octave, but core CLIMADA works without limitations (in order

to read Excel, Octave’s IO package has to be installed, see section “Notes on

Octave” below). All CLIMADA is available on GitHub

17

.

15

see e.g. ch.mathworks.com/products/matlab and http://www.cse.cuhk.edu.hk/~cslui/CSCI1050/book.pdf

16

https://www.gnu.org/software/octave

17

Either use Clone to desktop in Git or install git first, then clone any repository by first creating an

empty folder (e.g. mkdir climada), then cd climada, then (note that the last . is part of the

command)

git clone https://github.com/davidnbresch/climada . See also climada_git_pull

3

Contents

Instead of an Introduction: Preamble ................................................................. 2

A visual primer..................................................................................................... 5

A brief introduction to the concepts behind CLIMADA ...................................... 6

Probabilistic damage model ............................................................................ 6

Adaptation cost curve ...................................................................................... 9

A note on decision-making ............................................................................ 11

Getting started ................................................................................................... 12

Local installation ............................................................................................ 12

Process on one page .................................................................................... 14

Excel interface to CLIMADA ......................................................................... 15

A note on Excel and Open Office file formats and their tolerance in

MATLAB and Octave ................................................................................. 18

Constructing your own entity ..................................................................... 18

From tropical cyclone hazard generation to the adaptation cost curve ........... 19

Hazard set ..................................................................................................... 19

Assets and damage functions ....................................................................... 26

Damage calculation ....................................................................................... 29

Dealing with uncertainty ................................................................................ 30

Adaptation cost curve .................................................................................... 31

A climada application example – tropical cyclone ensemble damage

forecasts ........................................................................................................ 35

Function reference ............................................................................................ 36

Basic entity functions..................................................................................... 36

Core calculations ........................................................................................... 36

Basic hazard functions .................................................................................. 37

Further display functions ............................................................................... 37

Tropical cyclone (TC) specific functions ....................................................... 38

Basic functions .............................................................................................. 38

Admin functions ............................................................................................. 38

Special functions (there are more) ................................................................ 39

CLIMADA modules............................................................................................ 39

advanced ....................................................................................................... 39

tropical_cyclone ............................................................................................. 40

storm_europe ................................................................................................ 40

country_risk ................................................................................................... 40

isimip .............................................................................................................. 40

4

earthquake_volcano ...................................................................................... 40

elevation_models .......................................................................................... 40

meteorite ........................................................................................................ 41

flood ............................................................................................................... 41

barisal_demo and salvador_demo ................................................................ 41

kml_toolbox.................................................................................................... 41

octave_io_fix .................................................................................................. 41

Some hints to useful data sources ................................................................ 41

Writing your own code ...................................................................................... 42

climada_init_vars ........................................................................................... 43

climada startup .............................................................................................. 45

Description of key climada structures ........................................................... 45

Notes on Octave (and OpenOffice) .............................................................. 50

Appendices ........................................................................................................ 51

climada, the inner workings .......................................................................... 51

Implementation .......................................................................................... 52

Insurance remarks ......................................................................................... 53

Insurability & forms of insurance ............................................................... 53

Insurance conditions .................................................................................. 56

climada implementation of insurance conditions ...................................... 57

Note on scenarios ......................................................................................... 58

climate impact scenarios – remarks on climada implementation ............. 59

Climate impact scenarios – sources ......................................................... 60

Tropical cyclones – technical remarks .......................................................... 61

Windfield calculation .................................................................................. 61

Single cyclone track evolution animation .................................................. 64

Economics of Climate Adaptation (ECA) – key routines .............................. 65

A remark on loss, damage and vulnerability ................................................ 69

Further sources of DRM/climate adaptation information/tools ..................... 70

MATLAB/Python – some possibly useful tools ............................................. 71

5

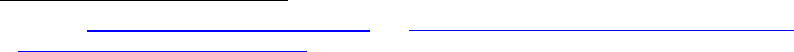

A visual primer

Figure: The demonstration code climada_demo

18

implements the concept of total

climate risk and cost-effective adaptation in an interactive way: The user can

experiment with key relevant factors (sliders, top) and instantly observe the effect –

both on risk (measured by expected damage, graph on the left) and the basket of

adaptation measures (shown as adaptation cost curve, graph on the right). The user

can also edit the underlying input data

19

and hence experiment further.

The simple call climada runs the core automatically and prompts for user input.

18

This GUI runs properly under MATLAB, see climada_demo_step_by_step for Octave for he time

being, as Octave does not yet support all GUI features.

19

Just edit the file ../climada/data/entities/demo_today.xls, then select Re-init from the GUI’s file menu.

Climate change

scenario

Implementation of adaptation

measures

The total climate risk and its key drivers,

e.g. for given parameters (set in sliders

above) economic growth contributes

second most to the total climate risk.

The adaptation measures, their

damage aversion potential and costs,

e.g. for 0.45 dollar invested in building

code, 1 dollar of loss can be averted.

Economic development

6

A brief introduction to the concepts behind CLIMADA

Instead of studying this now, the user might also jump to the step-by-step

introduction below and later come back.

Risk is the combination of the probability [or likelihood] of a consequence and its

magnitude, i.e. risk = probability x severity. Or, to be more specific:

risk = hazard x exposure x vulnerability

= (probability x intensity) x exposure x vulnerability

\…………………severity……………………../

where both the probability of occurrence and the (physical) intensity are part of the

hazard (sometimes named peril) and the ‘product’ of intensity, exposure and

vulnerability constitutes the severity. The product symbol ‘x’ does not stand for a

simple multiplication, but in fact a convolution of the respective distributions. Instead

of providing the general framework here, one can easily think of severity thus being

of the following form

severity = F(intensity,value,vulnerability)

where F is often of the form F = value * f(intensity),

where f(intensity) is the damage function which parametrizes vulnerability

Note that value is the asset value of the exposure, intensity the hazard intensity at

the exposure location and the * a simple multiplication. Note that assets do not

necessarily need to be monetary assets and value hence not necessarily a monetary

value, think about exposed people. In this simple form, vulnerability is given as a

function f of intensity (and asset class/type). See Appendix “climada, the inner

workings” below for details.

Any risk model hence attempts to quantify these elements in a way most appropriate

for the specific purpose. Depending on purpose, the level of detail in quantification of

any element will thus vary. For the geographical representation, think e.g. of a local

flood model at very high resolution of a few decameters compared to a global

earthquake model at e.g. 10 km resolution. For the vulnerability resolution, think e.g.

of a general description of building damage to an earthquake as a simple function of

modified Mercally intensity

20

compared to a detailed damage curve depending on

flood height in meters, building construction, number of floors, basements… and

usage (also called occupancy).

Probabilistic damage model

A model is nothing more than a simplified representation of reality. Natural hazard

models use the virtual world of computers in an attempt to simulate natural

catastrophe damage expected in reality. The quantification of natural catastrophe risk

depends on three basic elements (or sets of data), upon which the damage model

operates. They are:

• Hazard (sometimes also called peril): Where, how often and with what

intensity do events occur? A hazard (event) set is usually generated once and

stored for subsequent calls (resulting in massive speedup). A hazard event

20

Please note that a sophisticated earthquake model can indeed be built on MMI... see e.g.

https://github.com/davidnbresch/climada_module_earthquake_volcano

7

set comprises just many single events, i.e. one ‘footprint’ for each event.

Think of a single event footprint as for example a georeferenced distribution

(or simply a map) of windspeed.

• Assets (also referred to as value distribution or portfolio of exposed assets or

simply exposure): Where are the various types of potentially affected objects

located and what is their value? Think of the assets as for example a

georeferenced distribution (or simply a map) of houses, represented by their

replacement values – or of people, represented by their number at any given

location.

• Damage function (sometimes referred to as vulnerability or vulnerability

curve): What is the extent of damage at a given event intensity? A damage

function is just a simple function of the hazard intensity, but there can be

many damage functions for all kinds of assets (and obviously for different

hazards).

These three building blocks are combined in the process of estimating event damage

as follows:

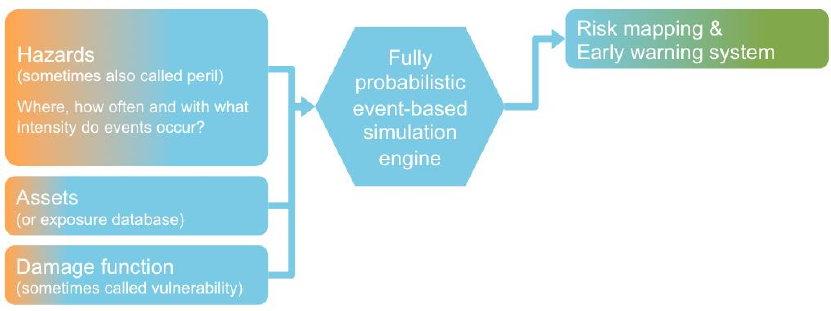

Fig: The basic three building blocks (hazard, assets and damage function). Main result is risk

quantification (here subsumed as risk mapping), but the same system can also be (operationally) used

to provide early warnings in terms of impact quantities, if fed with a particular (forecasted) single hazard

event (such as a wind field of a storm or an shake map of an earthquake).

This approach may generally be applied to all forms of natural hazard, whether

storm, flood, earthquake … or any other type of peril.

The simplest way to assess the damage is to simulate an individual natural

catastrophe scenario. This is known as “deterministic” or “scenario-based” modeling.

Such models often refer back to major historical damage events, applying these to

the assets that exist now (“as-if analysis”). The disadvantage of this method is that,

whilst it allows a single, extreme, individual event damage to be assessed, it fails to

take account of all the other events that might occur. It is not possible to calculate an

expected annual damage for a portfolio of assets on the basis of single event

damage, and any prediction as to the occurrence frequency of the model scenario

will remain very uncertain.

Today, in an attempt to avoid these problems, so-called “probabilistic” models (i.e. a

fully probabilistic simulation engine) are being used to assess hazards such as

storms and floods. Rather than simply analyzing one event, the computer is

programmed to function as a sort of time-lapse film camera, simulating all the

possible events that could unfold within a sufficiently long period of time (thousands

or tens of thousands of years). This type of model produces a “representative” list of

event damages (i.e. a list that accurately reflects the risk). From this list it is possible

8

to understand the relationship between damage potential and occurrence frequency,

and hence the cost of average and extreme damage burdens.

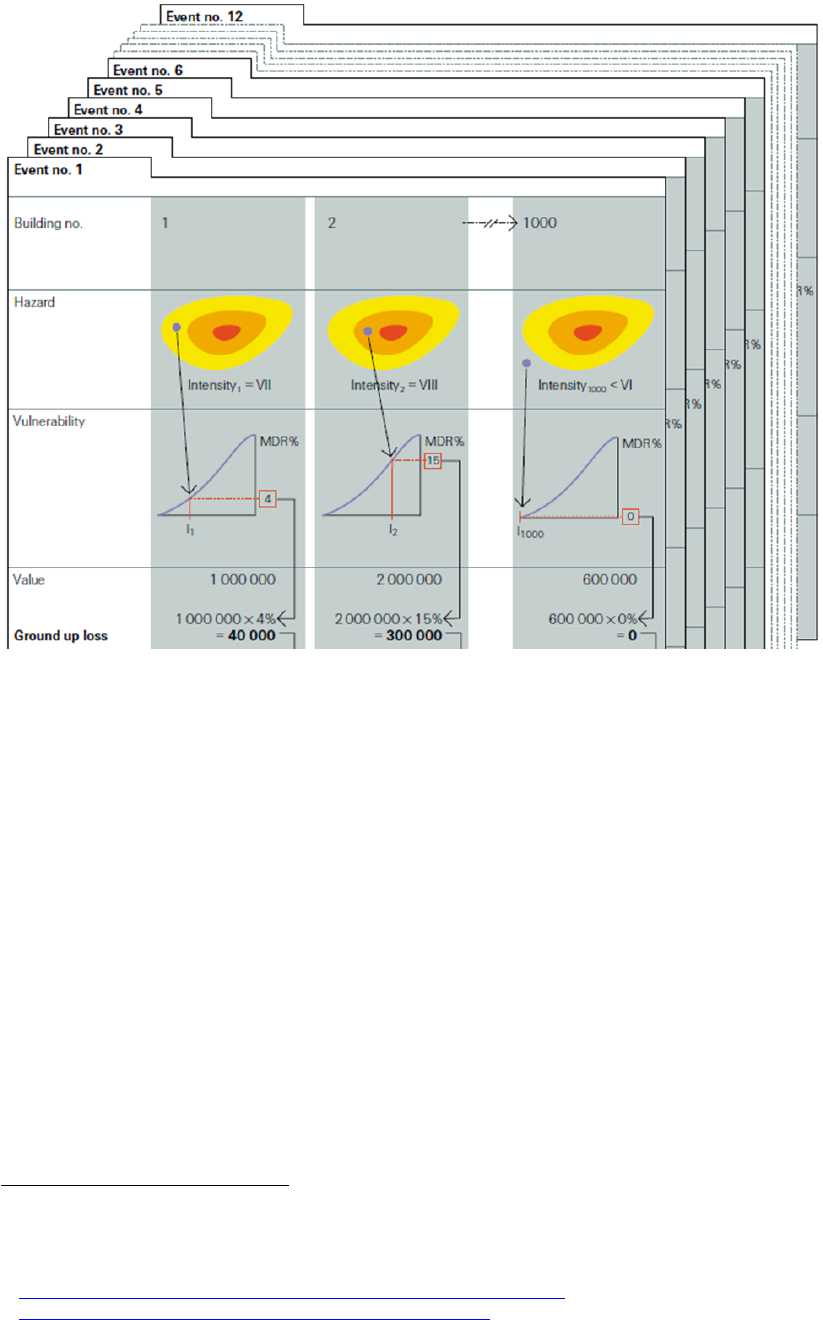

Figure (from Swiss Re publication "Natural catastrophes and reinsurance"): Using risk assessment

tools to calculate event damage. Let's assume a hypothetical portfolio containing 1000 assets

(buildings). For the sake of simplicity, let us assume that the risk assessment tool only contains 12

potential events over a projected period of 200 years. The following calculations would be performed:

• The hazard module generates the expected intensity (VII) for event no.1 at asset (building)

location no.1.

• The damage function (called vulnerability in the figure) corresponding to the asset provides us

with the mean damage ratio (MDR

21

) for given hazard intensity (4 stands for 4% of the asset's

value)

• The damage is calculated by multiplying the MDR and the value of the asset (1'000'000),

resulting in a (ground up) damage (called loss in the figure) of 40'000.

• Above steps are performed on all 1'000 assets in the portfolio. The sum of all damages

produces the total damage from event no.1, i.e. event damage no1.

• All above steps are then repeated for the other (11) events in the event set.

• Upon completion of all these stages in the modeling process, a list of all event damage is

produced, upon which damage statistics can be derived (average damage, max damage…).

See mentioned lecture course

22

or e.g. the Swiss Re publication

23

"Natural

catastrophes and reinsurance", which covers the methodology in detail.

21

Please note that climada uses MDR=MDD*PAA, where Mean damage degree (MDD) and percentage

of affected assets (PAA) allow to deal with local deductibles in a more appropriate form than a simple

Mean damage ration (MDR) model could do, since one does, due to the PAA, know how many assets

are affected, hence deductible application is more specific.

22

http://www.iac.ethz.ch/edu/courses/master/modules/climate-risk.html

23

http://media.swissre.com/documents/Nat_Cat_reins_en.pdf

9

Adaptation cost curve

While the assessment of cost and damage aversion potential of any adaptation

measure can be quite demanding, climada provides a consistent approach to do so.

The tool provides a common yet flexible framework to appraise a basket of

adaptation options (sometimes also referred to as resilience measures). Each option

can be specified to act on any component of the model (hazard, assets and damage

function) – or even a combination thereof.

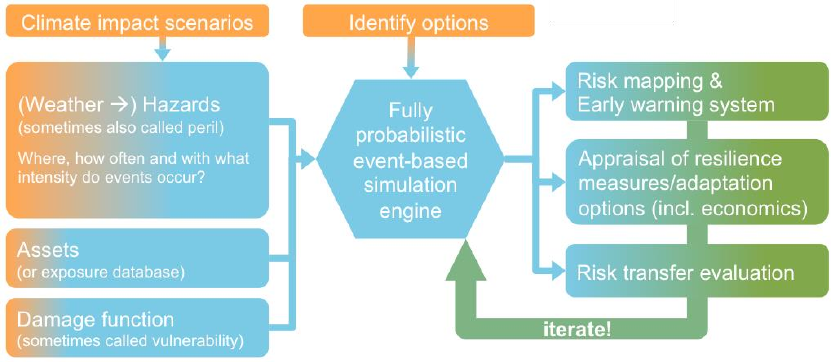

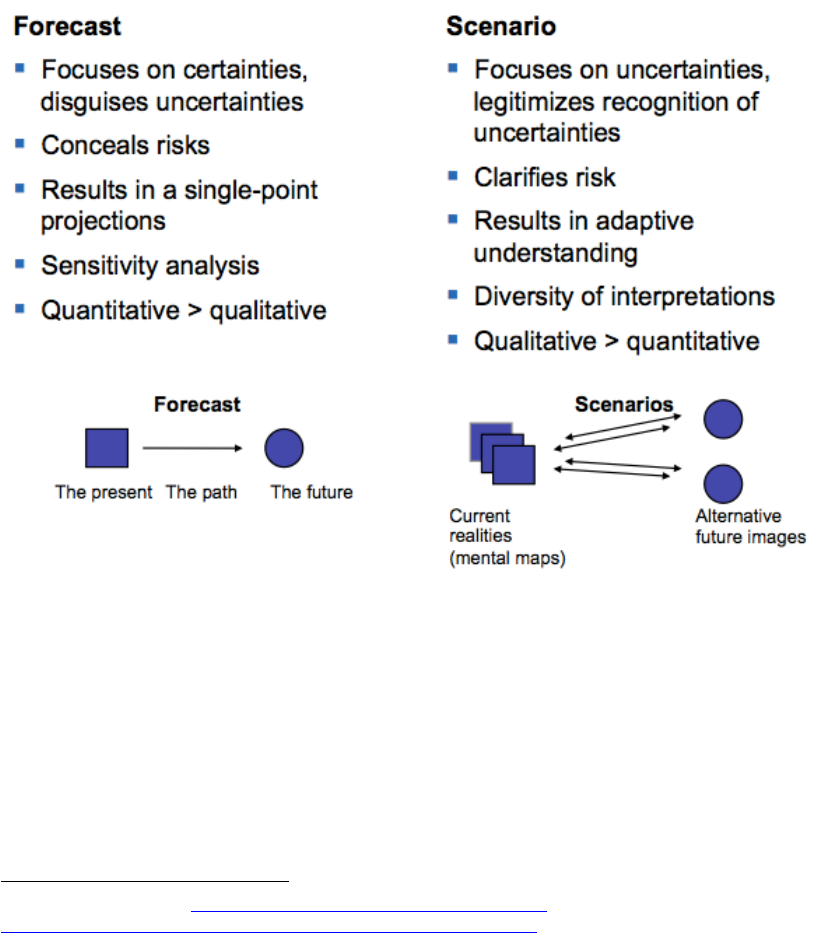

Fig: The climada model, including inputs such as different climate scenarios (which change the hazard

component, which itself can be understood as being built on weather events) as well as adaptation

options, plus additional outputs such as appraisal of resilience measures or adaptation options. Note the

emphasis on iterative approach in options appraisal (see main text, too).

The specific potential damage aversion comes with a certain degree of uncertainty,

even for measures for which extensive research exists – for example, for building

codes to fix roofs against hurricane winds. Hence, we strongly propose an iterative

approach, i.e. to re-run climada with a range of parameters in order to converge to a

consistent evaluation.

The assembled cost curve shows – from left to right – the range of measures from

most cost-efficient to least cost-efficient. The results should thus be used to start

discussions on the different measures and the opportunity to avert expected damage,

rather than be read as recommendations to implement certain measures.

10

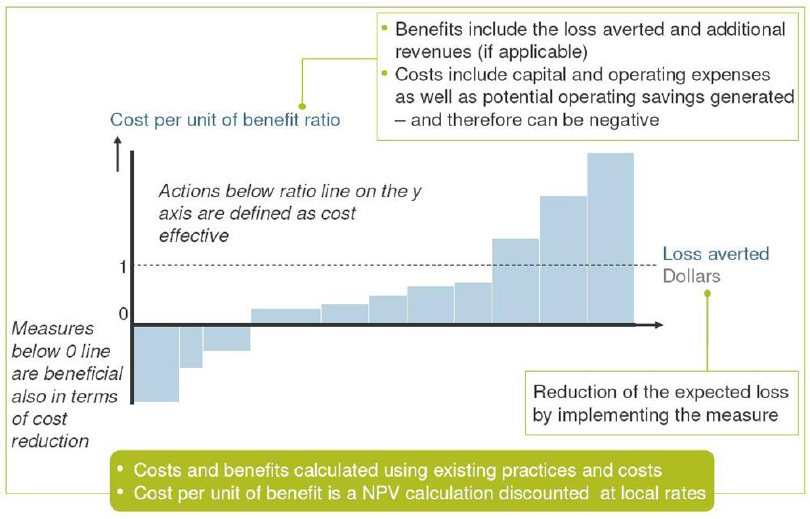

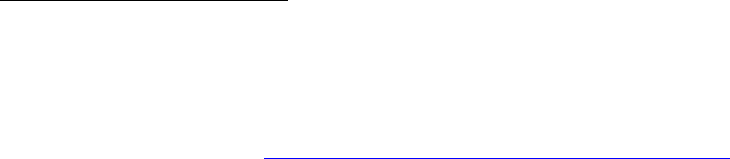

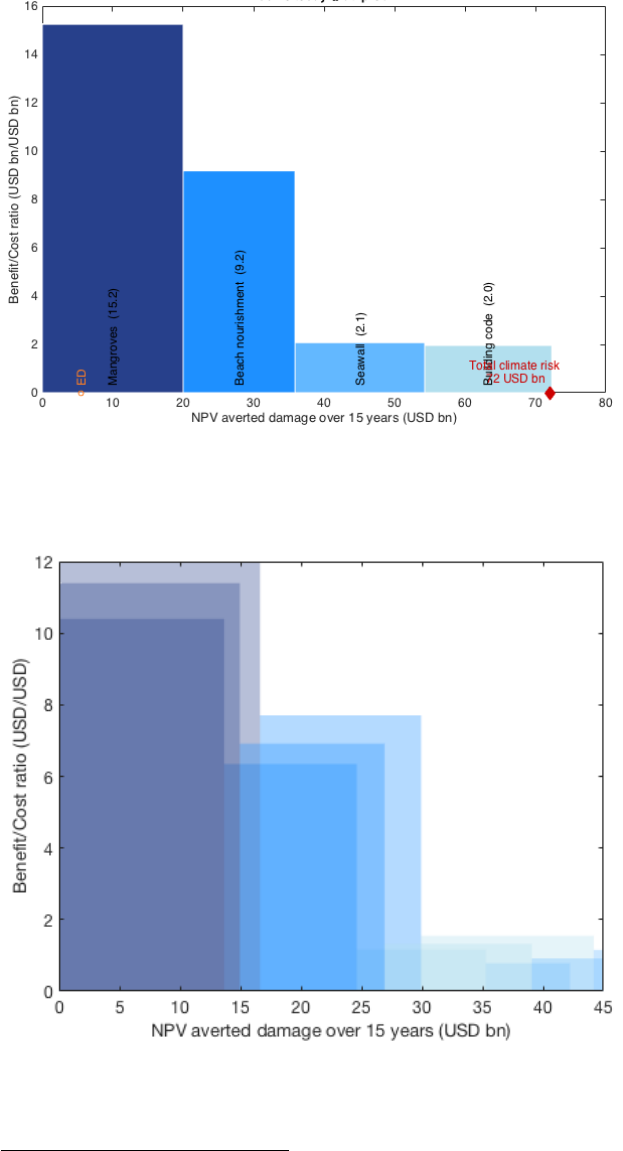

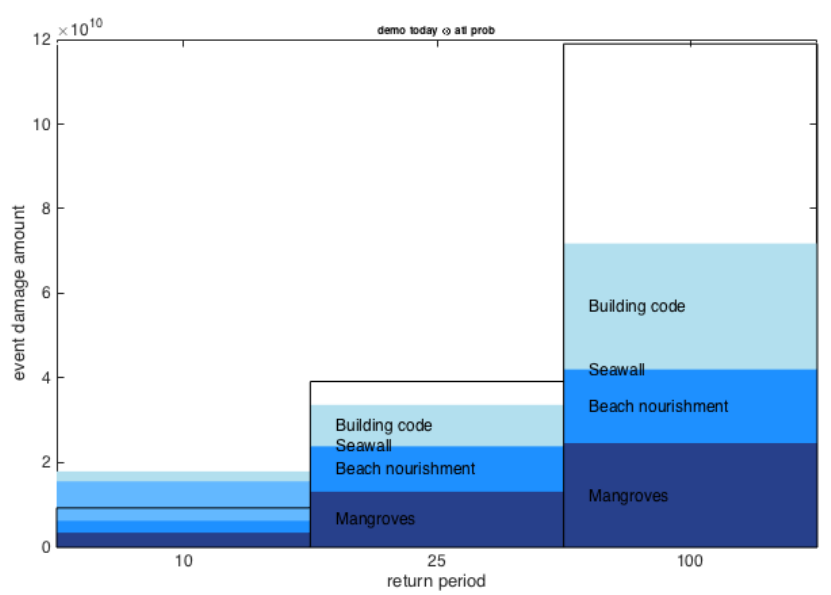

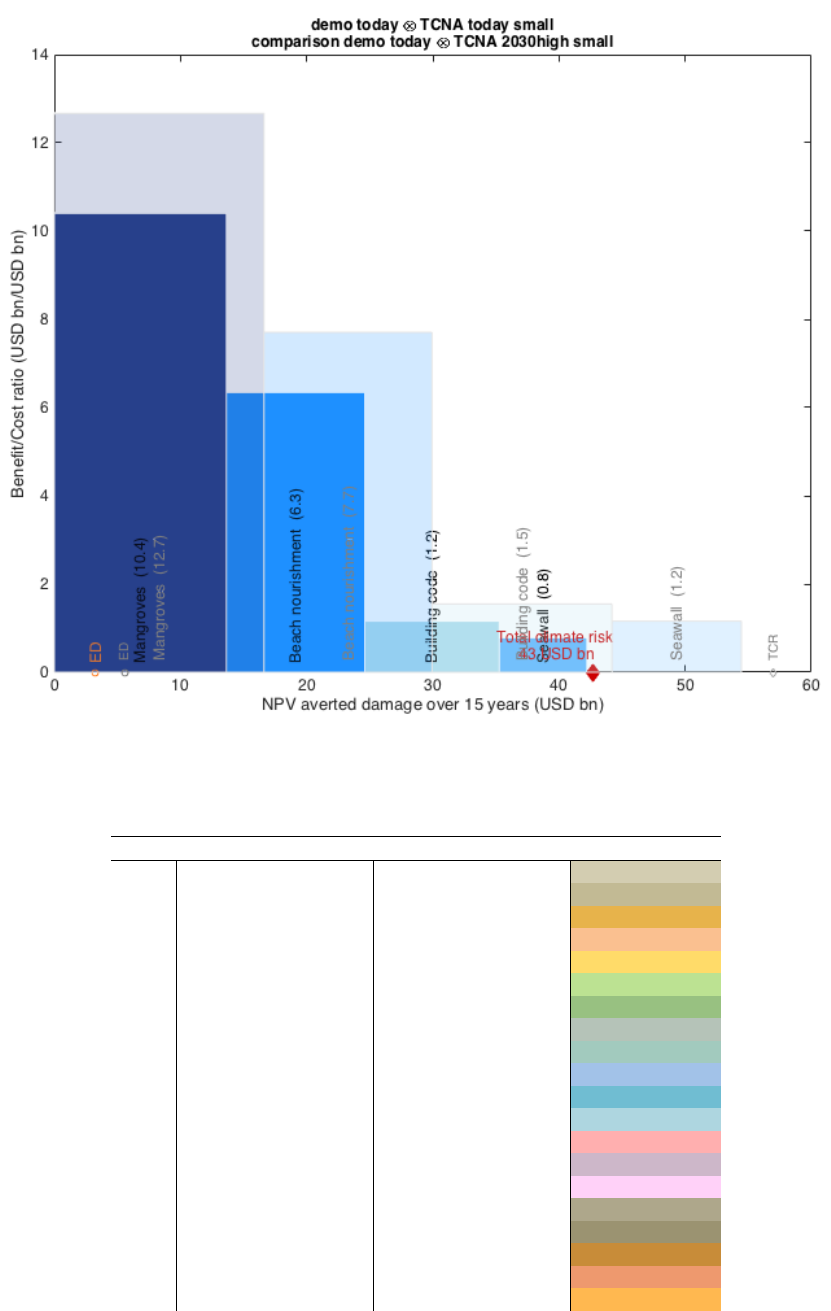

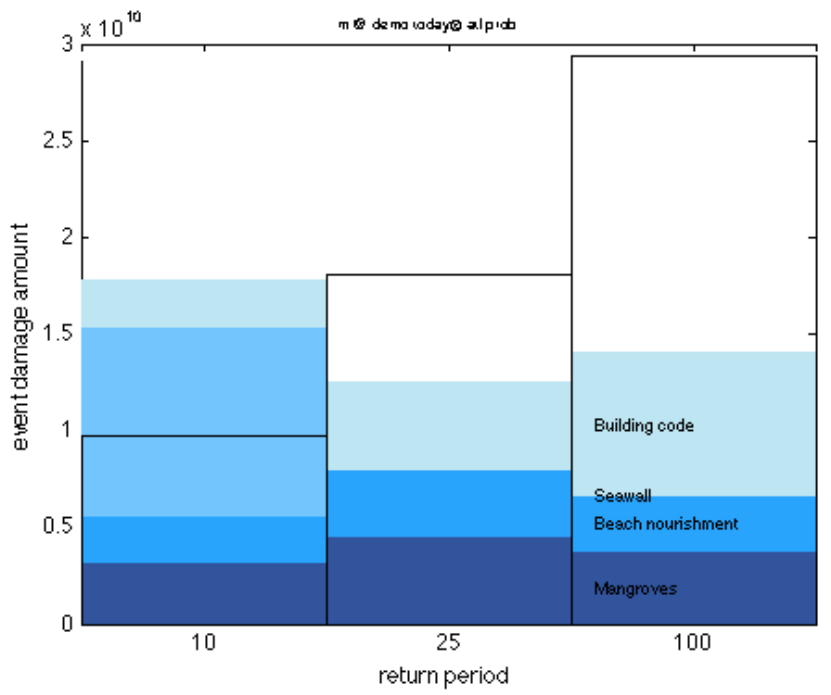

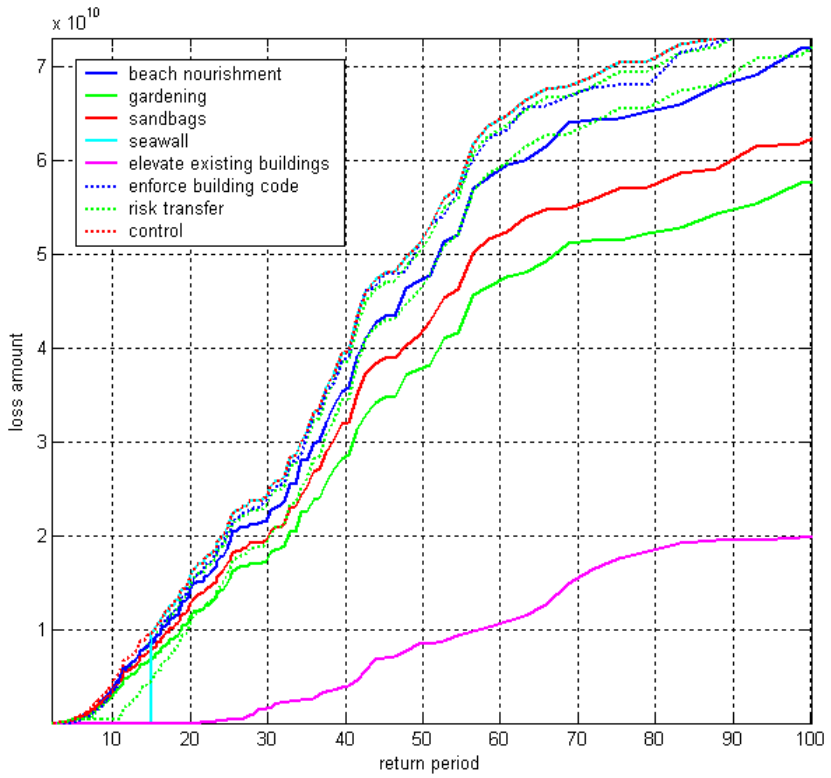

Figure: The width of each bar in a cost curve represents the cumulative potential of that measure to

reduce total expected damage up to 2030 for a given scenario. The height of each bar represents the

ratio between costs and benefits for that measure. Whether or not this ratio is attractive to a decision

maker depends on many factors, including risk appetite. After considering the other – including non-

economic – impacts and benefits related to implementing a measure, a risk-neutral decision maker

would select measures based on a sense of how much protection they offer and at what cost. The

advantage of calculating cost-benefit ratios for all measures is that doing so allows decision-makers to

compare measures using a single simple metric.

In a recipe form, the adaptation cost curve is constructed as follows (repeat for each

measure)

1. Calculate present value (PV) of costs of measure [e.g. Excel, outside of climada]

2. Risk today: import today's assets and damage functions (input via Excel) and

expose them to present hazard (part of climada)

2.1. climada calculates annual expected damage with no measures

2.2. climada calculates annual expected damage with measure applied

difference 2.1) minus 2.2) shows benefit of measure today

3. Future risk (e.g. year 2030): import future assets and damage functions (input via

Excel, damage functions likely to be unchanged) and expose them to future

hazard (part of climada)

3.1. climada calculates annual expected damage with no measures

3.2. climada calculates annual expected damage with measure applied

difference 3.1) minus 3.2) shows future benefit of measure

4. climada discounts benefits --> horizontal axis of adaptation cost curve

5. climada calculates the cost benefit ratio vertical axis of adaptation cost curve

11

A note on decision-making

While the climada tool does provide decision-makers with that a fact base, it does by

no means pre-empt any decision or constitute an adaptation strategy by itself. The

adaptation cost curve shall by no means be interpreted as a ‘recipe’ to be

implemented ‘from left to right’. Many more elements need to be considered in order

to take a decision, not least to contextualize model results.

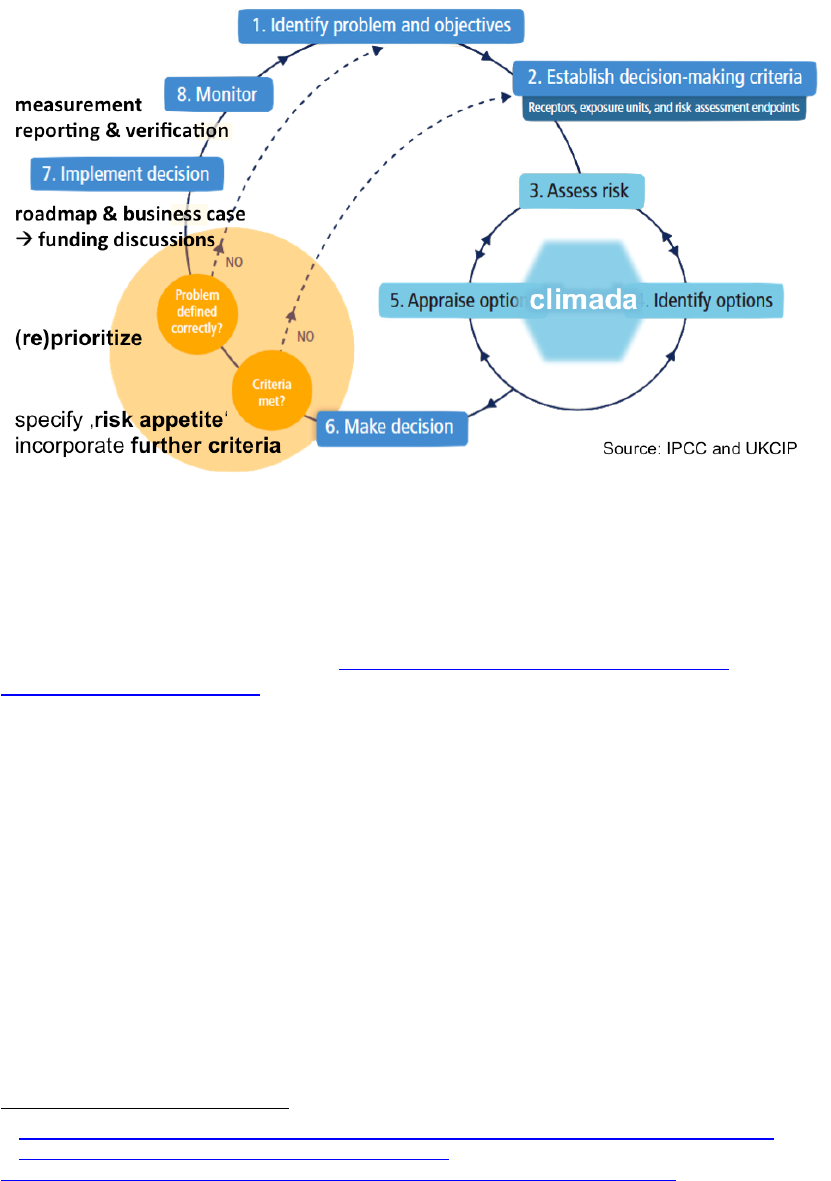

Fig: The decision-making context around climada. Note the necessary steps that precede any

application of climada – and even more so the steps that build on the modeling results.

Please refer to the descriptions of Economics of Climate Adaptation (ECA)

methodology

24

, which emphasizes mentioned context and provides a structured

approach to provide decision makers with a comprehensive fact base.

Please note that a comprehensive Economics of Climate Adaptation (ECA)

guidebook for practitioners

25

has recently been published. It describes the project

setup, the full planning cycle for an adaptation study and puts a lot of emphasis on

the stakeholder engagement. The ECA Guidebook is designed to accompany any

climate change adaptation assessment using the open-source ECA methodology and

its associated climada tool. The ECA Guidebook is particularly helpful in cases which

require an integrated approach towards the development, planning and financing of

specific adaptation measures.

24

http://media.swissre.com/documents/rethinking_shaping_climate_resilent_development_en.pdf

25

https://www.kfw-entwicklungsbank.de/PDF/Download-

Center/Materialien/2016_No6_Guidebook_Economics-of-Climate-Adaptation_EN.pdf

12

Getting started

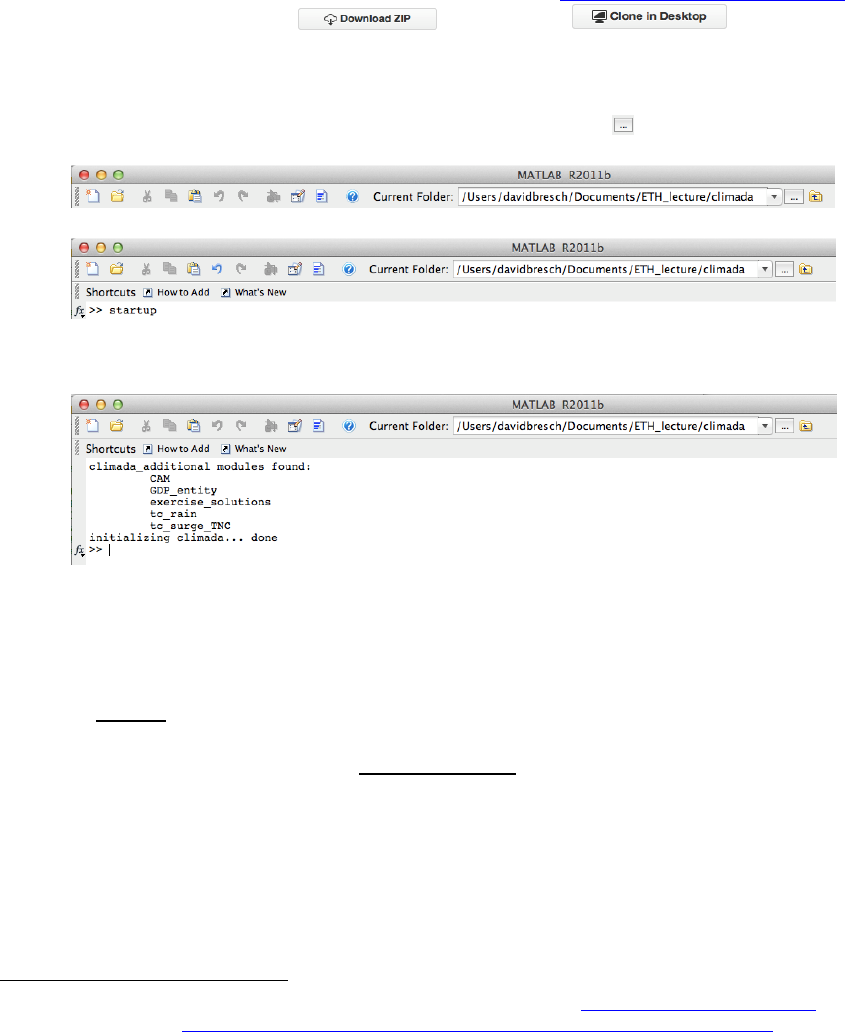

Local installation

Get the climada core module from GitHub

26

, i.e. go to https://github.com/davidnbresch/climada

and either just click on the button or on .or just type

git clone https://github.com/davidnbresch/climada.git in a shell (if you

have git installed).

• Set the MATLAB

27

Current Folder to climada

28

(use the button to browse),

e.g.:

• Enter startup in the MATLAB Command Window:

and press Enter (or Return). This initializes climada, sets some variables (e.g.

the location of the data folder

29

) and detects any additional modules

30

.

After that, the Command Window looks something like:

It’s ok if there are no further modules shown, as long as ... done appears.

• Start by just invoking the climada demonstration by entering climada_demo

in the MATLAB Command Window

31

, which is also the best way to test

whether climada works properly – you should see something as shown above

as a visual primer (see above) and be able to play with the sliders.

In Octave, use climada_demo_step_by_step, instead, as Octave does

not support guide (the interactive climada GUI has been built with).

In case you run climada on a remote machine (e.g. a cluster, if no window

system), test it by entering the following three commands:

entity=climada_entity_load('USA_UnitedStates_Florida_entity');

hazard=climada_hazard_load('USA_UnitedStates_Florida_atl_TC');

EDS=climada_EDS_calc(entity,hazard)

EDS then contains the event damage set and the variable EDS.ED should

contain a value close to 2.3824e+09 (i.e. simulated annual expected tropical

cyclone damage to Florida amounts to USD 2.4 billion).

26

About GitHub, recommended reading (especially chapters 1, 2 and 3): http://git-scm.com/book/en/v2

and directly to the pdf: https://progit2.s3.amazonaws.com/en/2015-02-21-5277c/progit-en.346.pdf

27

Same procedure in Octave, see also „Notes on Octave“ below.

28

Usually the folder you downloaded or cloned to from GitHub.

29

The global variable climada_global (a struct) contains all these variables. See the code

climada_init_vars.m which sets all these variables. Make sure you never issue a clear all command,

as this would also delete climada_global and hence climada would not find it’s stuff anymore.

30

A climada_advanced module extends the functionality of climada and allows users to further develop

climada without risking to change the core code. See further below for some examples of modules. Just

run climada_git_clone to obtain and install most modules fully automatically.

31

From now on, just type any command in Courier in the MATAB Command Window, as we will not

state this each time again.

13

• Note that the command climada allows you to run the essentials in one go,

i.e. to import assets and hazard event sets, to show a few plots for checks, to

run all calculations and to produce the final adaptation cost curve

32

.

• Further note that climada('TEST_CLIMADA')tests the full climada (very

similar to climada_demo_step_by_step), that climada('DEMO')

invokes the demo GUI (same as climada_demo) and

• climada('TC') invokes the interactive GUI to calculate the ensemble-

prediction damage for a specific tropical cyclone (automatically accesses the

web to find latest tracks etc.).

While the standard climada setup contains the data folder within climada, it is highly

recommended to create a folder named climada_data parallel to climada to allow for

your local climada data NOT being synched (only the folder ../climada/data within

core climada gets synched). Just run climada_git_clone, which installs most

modules and sets up such a local climada_data folder. This way, any data used in

climada beyond the default files will not be synchronised. Your directory tree shall

then look like

{parent_dir}/climada % contains core climada, usually no edits in there

{parent_dir}/climada_data % contains your data

Make sure at the start, the folder {parent_dir}/climada_data (your local data folder)

does at least contain the contents of {parent_dir}/climada/data (the sub-folder within

core climada). If you run climada_git_clone, this has been taken care of.

In case you do not want to (or cannot, on some systems) run climada_git_clone,

install modules yourself (skip this, if you are new to climada and come back to thism

later). In order to grant core climada access to additional modules (see

https://github.com/davidnbresch?tab=repositories and the section on climada

modules further below), create a folder ‘climada_modules’ on the same level as the

core climada folder to store any additional modules. This way, climada sources all

modules' code upon startup. Your directory tree shall then look like

{parent_dir}/climada % contains core climada, usually no edits in there

{parent_dir}/climada_data % contains your data

{parent_dir}/climada_modules % contains additional modules

Again, see the code climada_git_clone to automatically clone all modules and

climada_git_pull to automatically update all installed modules

33

.

As for a start, you can generate the asset base (so-called entity) for any country with

entity=climada_entity_country and create all standard hazard sets with

hazard_info=climada_hazards(entity). If you do not have the relevant

modules installed

34

, climada prompts you, or you might consider to run

climada_git_clone, to get all modules installed.

32

On subsequent calls, the routine suggests last inputs - and if the first file selection is the same as on

previous call, even asks to re-run with previous call's inputs without asking for each file’s confirmation. It

further checks for the entity file to have been edited since last call. If not, it does not ask for plotting

assets and damagefunctions again.

33

Proper working of these two routines depend on your operating environment (it issues system

commands to your system’s git). On latest MATLAB version, it looks as if one could use its own git (not

implemented) and for Octave, it depends again on how it is set up (access to system commands).

34

Depending on the country (i.e. its exposure to different perils), not all modules might be needed.

14

Process on one page

To cut the whole story short, CLIMADA (inter alia) produces an adaptation cost

curve, as shown in the lower right part of the visual primer (and many more nice

things). The following steps are required in order to come up with a climate

adaptation cost curve

1. Generate a hazard event set

35

a. Generate a hazard event set for today’s climate

i. Obtain historical events

ii. Produce the probabilistic events

iii. Store intensities at centroids

b. Repeat above steps for future hazard

(climate change impact scenarios, e.g. for 2030)

2. Import a list of assets and corresponding damage functions

36

(the so-called entity)

a. Read the list of today’s assets

i. Encode to centroids (to the nearest point where hazard information is

available, up to a distance threshold

37

)

ii. Read the damage functions and make sure they correspond to

assets

b. Repeat above steps for future assets (e.g. 2030)

3. Import the list of adaptation measures

(also stored into the entity structure)

a. Read the list of measures

4. Calculate the damages and benefits of measures

a. Calculate the damages

38

for the list of today’s assets, today’s hazard event

set and the list of measures

39

b. Repeat the previous step for future assets but still today’s hazard and the list

of measures

c. Finally, repeat the first step (a.) again now for future assets, the climate

change scenarios and the list of measures. Note that for this step, you need

to create the hazard event set for the climate change scenarios (e.g. 2030)

5. Display the results – e.g. in the form of an adaptation cost curve.

35

Provided for basic tropical cyclones by core climada and climada module for other (and refined)

hazards (see further below).

36

Sometimes also referred to as ‚vulnerability curves’ of just ‚vulnerabilities’. See lecture material for

proper definitions.

37

For the threshold, see the parameter climada_global.max_encoding_distance_m in

climada_init_vars, the encoding distance in meters. One theoretically could interpolate between

points where the hazard intensity is defined (in fact, technically trivial), but in order for the code to be

general, the user shall in such a case provide a higher resolution hazard set, as the interpolation

depends on the kind of hazard – and the performance would drop substantially if the interpolation is

repeated each time a damage is calculated. Note that for the full use of climada (adaptation options

appraisal), the full damage calculations are easily run hundreds of times. Hence it is much faster to

provide the hazard at the appropriate resolution. climada does not make any assumption beyond what is

provided. As we otherwise would lure the user into providing suboptimal inputs and ‘hope’ for climada to

fix it ;-)

38

In essence, we calculate damagej,k=valuek * f(intensityj,k), where valuek ist he value of asset k and

intensityj,k the hazard intensity of event j at location of asset k. f denotes the damage function, i.e. the

relation between the hazard intensity and the resulting damage (as a fraction of the asset value). See

“climada, the inner workings” further below for some more details on the damage calculation

39

climada quantifies the damage reduction benefit of each measure by comparing the damage with the

measure in place tot he (default) run with no measures in place. This is obviously done on the full event

damage set (EDS), i.e. event by event.

15

Excel interface to CLIMADA

The hazard module is usually provided by climada developers or advanced users (it

will be described in the next section “From tropical cyclone hazard generation to the

adaptation cost curve”, see also the description of the climada modules further

below). It forms an integral part of climada and can be developed for almost any

hazard (wind, flood, surge, landslides...).

The assets, the damage functions as well as the list (and costs) of adaptation

measures are defined in an Excel file which is imported into climada (for advanced

users, it is possible to import this information from any source and to modify free

within MATLAB, of course). This Excel file is referred to as ‘entity’. climada provides

several outputs, among them the adaptation cost curve (as a graphic in almost any

format). Obviously, any number calculated by climada can be exported, too.

To start with, the key interface to climada is an Excel file

40

, with three main tabs,

named ‘assets’, ‘damagefunctions’ and ‘measures’. To familiarize, one might briefly

read this section, then inspect the template (../data/entities/entity_template.xlsx), and

(if familiar with MATLAB, otherwise proceed first to “From tropical cyclone hazard

generation to the adaptation cost curve”), run

entity=climada_entity_read('entity_template','TCNA_today_small');

and inspect the content of entity in MATLAB (each field is briefly described in the

header section of climada_entity_read, too).

But first now for the contents of the entity:

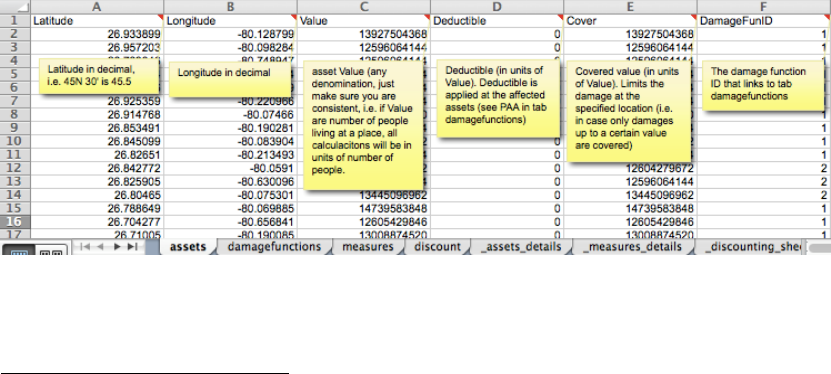

The tab 'assets' lists all exposed assets by location (Latitude/Longitude) and Value.

Please note that values do not necessarily need to be monetary values. E.g. in case

the number of exposed people is stated in the Value column, climada does calculate

the number of affected people (you will obviously use a damagefunction which

relates to people in this case). You can specify the unit of the Values in an additional

column labelled Value_unit, where you specify the unit for each Value entry, such as

‘USD’ or ‘people’ (best to start with only one value unit per Excel file, mixed use for

advanced users only). The column DamageFunID relates each asset to its

corresponding damage function (which is provided in tab damagefunctions).

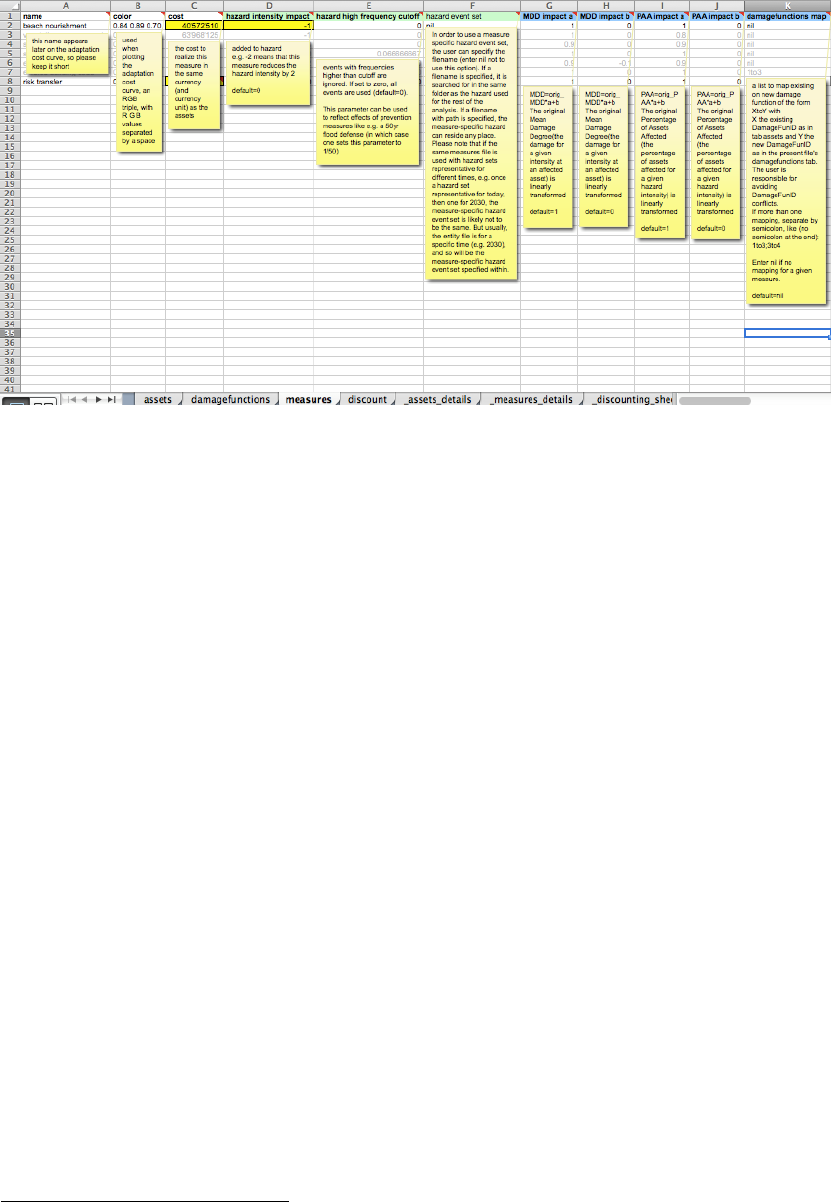

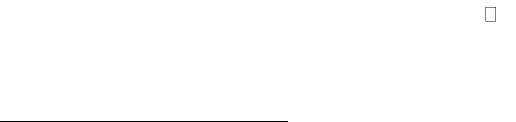

Figure: the assets tab, see ../data/entities/entity_template.xls and display the comment for each field of

the header rows (only key columns showed here). Mandatory are only Latitude, Longitude, Value

and DamageFunID (if not provided, Deductible is set to zero and Cover is set to Value).

40

Since the content of the Excel file is imported (using climada_entity_read) into MATLAB, any

other source can be used to define the content of the entity structure of climada, too. In order to

understand the entity structure, it’s in fact easiest to import the file ../data/entities/entity_template.xls

using entity=climada_entity_read and to inspect the resulting entity structure.

16

Note for advanced users: In addition to the columns visible in above figure (the essential ones, so to

say), one can add a column labelled Category_ID which allows later to select results based on a single

or a group of categories (see climada_viewer

41

). The column does contain just integer values i.e. 1

for category one which might e.g. be ‘Residential House’ and 2 for category ‘Commercial Building’. Such

names can be defined in the tab names (see the Excel template). Further, there can be a column

Region_ID, which allows to group assets into regions (if one runs a large set of assets, for example,

same rules as for Category_ID apply).

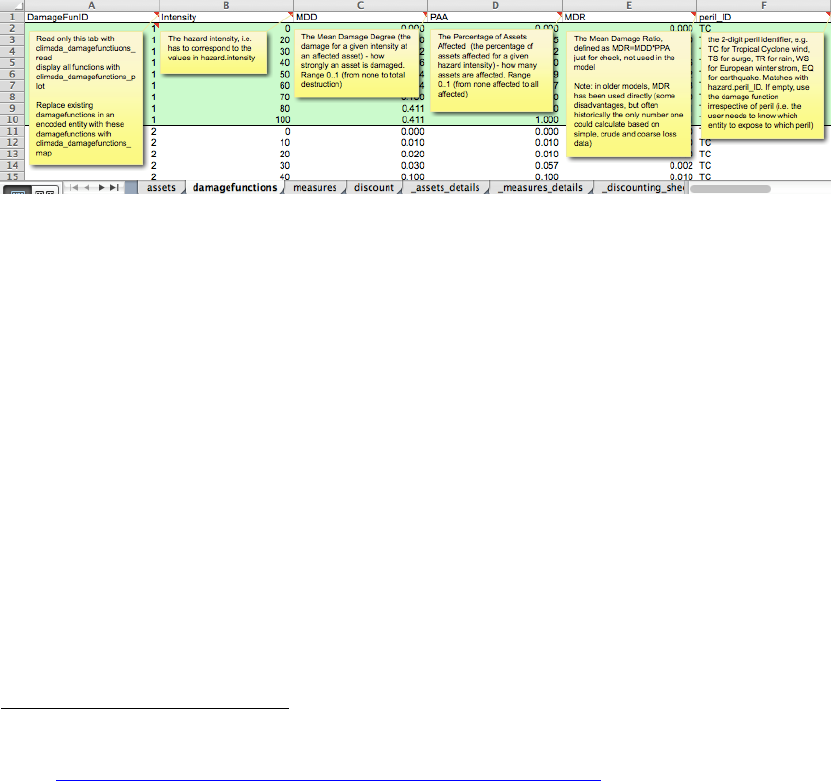

The tab 'damagefunctions' contains the relationship between the hazard intensity

(e.g. wind speed in m/s or storm surge height in meters) and the percentage of

affected assets (PAA) as well as the mean damage degree (MDD). What's called a

damage function in climada is elsewhere often also referred to as 'vulnerability

curve'. If for say a storm surge height of 1 meter, 50% of all assets are affected, and

the damage to these affected assets is 5% of their total value, the PAA is 0.5 and

MDD 0.05. In the case of value signifying exposed population, PAA is used to reflect

affected individuals, while MDD could be used to parameterize some sort of impact to

the affected individuals (e.g. using disability or quality adjusted life years,

DALY/QALY

42

). The DamageFunID is used to relate to the corresponding assets and

peril_ID to indicate for which peril/hazard the function shall apply. Please note that

there can be a damage function with DamageFunID equal to 1 for more than one

peril, say ‘TC’ and ‘EQ’. This way, one can run the same assets for more than one

hazard.

Figure: the damagefunctions tab, see ../data/entities/entity_template.xls. Mandatory fields are

DamageFunID, Intensity, MDD, PAA and peril_ID. MDR (=MDD*PAA) is ignored, just in the Excel

sheet for information.

Additional (optional) columns in the damagefunctions tab are Intensity_unit to specify

the unit of the intensity (for each entry, such as ‘m/s’) and name, a free name, eg.

'TC default'.

The tab 'measures' contains the list of climate adaptation measures

43

. It contains the

costs of the measures, i.e. the net present value of CAPEX and OPEX for each

measure. It also contains the parameterized impact of the measures on the hazard

and damage function. Imagine a coastal study region and say a mangrove forest.

Outside of climada, is has been calculated that the net present cost of this measure

amounts to 1'234'567 USD. Let's assume this mangrove forest slows down the wind

of a tropical cyclone by a certain amount, say 5 percent reduction in wind speed.

Both the cost as well as this 'parameterized' impact is hence entered in the

'measures' tab for this particular measure, cost goes into the cost column (obviously)

and the 5% windspeed reduction by putting 0.95 into the column

hazard_intensity_impact_a, since the resulting hazard intensity equals the original

hazard intensity times hazard_intensity_impact_a (plus hazard_intensity_impact_b,

41

But first make yourself familiar with climada as described on the following pages, as this result viewer

is rather for advanced use.

42

See http://www.iac.ethz.ch/edu/courses/master/modules/climate-risk.html (about lecture 9) for a

discussion of measures such as DALY and QALY.

43

note that for pure damage calculations (climada_EDS_calc), this information is not needed, i.e. one

can provide an entity Excel file with just the tabs assets and damagefunctions.

17

set to zero by default, or simply i=iorig*a+b). Note that climada can handle

parameterized impacts of higher complexity, too (please refer to the comments for

each column in the Excel template).

Figure: the measures tab, see ../data/entities/entity_template.xls. Mandatory fields are name, cost

and MDD_Impact_a and MDD_impact_b.

The (optional) tab ‘names’ contains

44

some speaking names for fields in other tabs

that are IDs, such as Category_ID and Region_ID. It just provides a name to be

shown e.g.in GUIs. There is a special use to communicate the reference year also

via this tab: If there is an entry reference_year in the Item column, a 0 in the ID

column and the reference year as a string in the name column, this value is stored

into the entity.assets.reference_year (otherwise, the default reference year

is used, see climada_init_vars). For ADVANCED users, the names tab also

allows to define global variables, such as the encoding distance, the default currency

or even folders such as hazards_dir etc., which are originally defined in

climada_init_vars (see section “Writing your own code” further below) but can

easily be redefined this way

45

.

Please note again that each column header in the Excel contains a detailed

explanation as a comment. The reference Excel sheet, called entity_template.xls can

be found in the entities sub-folder of the climada data folder

46

.

Note: If you use climada as an end-user (i.e. not developing anything, just to

‘process’ your Excel input and produce the waterfall and adaptation cost curve), the

simple call climada prompts the user to select the entity Excel file for today and in

the future as well as the hazard set for today and future, then runs all calculations

and shows the final adaptation cost curve. On subsequent calls, the routine suggests

last inputs - and if the first file selection is the same as on previous call, even asks to

re-run with previous call's inputs without asking for confirmation. This way, one can

edit the entity Excel file and then just call climada again.

44

See ../data/entities/entity_template.xlsx (there exists a .xls version for backward compatibility)

45

See See ../data/entities/entity_template_ADVANCED.xlsx, but please be aware of the impacts (as

one can re-define reference years etc. this way).

46

../data/entities/entity_template.xls

18

A note on coordinates: climada works best with standard geographical coordinates

47

(longitude -180..180, latitude -90..90). Please see climada_dateline_resolve to

resolve issues around the dateline (e.g. Fiji, where it is advisable to center the

coordinate system either somewhat left or right of the dateline in order to avoid

troubles with distance calculations).

A note on Excel and Open Office file formats and their tolerance in

MATLAB and Octave

Instead of .xls or .xlsx (use .xls 95 or 97 with MATLAB before R2015, and .xlsx with

latest MATLAB and Octave), climada also supports .ods (Open Office). If using .ods,

please avoid any cell comments, also see “Notes on Octave” further below. Please

do not use field format ‘Percentage’ in Open Office, but just ‘General’ or ‘Number’,

such that e.g. the discount_rate on tab discount is 0.02, not plain ‘2%’ in the .ods file

(2% works fine in .xls and .xlsx files). Please note further that starting Octave might

be easiest in a shell (terminal window), with path, e.g.

/Applications/Octave.app/Contents/Resources/usr/bin/octave

Constructing your own entity

A user might want to construct an entity from scratch, i.e. not import assets,

damagefunctions (and measures) from an Excel (or OpenOffice) file, but from any

other source (or define all in a MATLAB code file). In this case, it is advisable to first

familiarize with the Excel template (see above) to know the mandatory and optional

fields.

There are two options, once can either start from the entity template

48

, or really from

scratch:

▪ If one starts from the template, replace fields with your content, please make

sure all fields have the same final length (i.e. if you define 150 assets, i.e.

enter them in entity.assets.lon, entity.assets.lat,

entity.assets.Value, make sure all other fields in entity.assets are

of dimension 1x150).

▪ If one starts from scratch, populate the mandatory fields, see the comments in

the Excel template and the header section of climada_entity_read. Make

sure all fields in assets, damagefunction etc. have the same (corresponding)

length.

Once you defined your entity, please

▪ run climada_assets_complete, climada_damagefunctions_complete and

(if you define measures) climada_measures_complete to check entity

components for completeness.

▪ run entity.assets=climada_assets_encode(entity.assets) in

order to encode to a hazard.

▪ consider to run measures=climada_measures_encode(measures) in

order encode measures (i.e. convert some fields to machine-readable format

(i.e. if you populated entity.measures.damagefunctions_map, this fills

in entity.measures.damagefunctions_mapping

49

).

47

but one could use it on any other topology, as encoding does merely find nearest neighbours etc.

48

See ../data/entities/entity_template.xlsx (there exists a .xls version for backward compatibility)

49

This is the field used in climada_measures_impact (not entity.measures.damagefunctions_map)

19

Finally, test your entity by calling climada_EDS_calc and, if you defined measures,

also climada_measures_impact and carefully observe any warnings/errors

50

.

From tropical cyclone hazard generation to the adaptation

cost curve

51

In this section, we are going to illustrate the whole process step-by-step, using

tropical cyclone as the hazard and a few assets in South Florida for illustration

purposes. Note already here that climada provides global coverage for tropical

cyclone wind (often referred to as TC wind

52

) and storm surge (often referred to as

TC surge

53

) as well as other hazards, such as global earthquake

54

– see “climada

modules” section further below. For a comprehensive list of climada functions, please

refer to ../docs/code_overview.html

Instead of starting with a simple hazard set generation, the user might also jump to

the damage calculation right away, skip section “Hazard set” below and jump to the

second next section "Assets and damage functions". Please note that due to slower

processing speed of some explicit loops in Octave, the demo differs somewhat from

the MATLAB version as documented below (also with respect to certain graphics

features).

Hazard set

First, obtain the historic tracks

55

, i.e. define the name and location of the raw text file

with historical tropical cyclone tracks

56

global climada_global % to get access to climada_global

57

tc_track_file=[climada_global.data_dir filesep ...

'tc_tracks' filesep 'tracks.atl.txt'];

tc_track=climada_tc_read_unisys_database(tc_track_file,2);

% same as

58

tc_track=climada_tc_read_unisys_database('tracks.atl.txt',2);

Why 2 as last input? See help climada_tc_read_unisys_database and check the

description of the 2nd input parameter (it forces re-reading the database each time).

Let’s have a look at the output:

50

If you get really stuck, consider contacting the climada developers…

51

See the climada code climada_demo_step_by_step which performs all the steps and illustrates

the intermediate results by plots, just as shown here. Run climada_demo_step_by_step in debug

mode to follow (and understand ;-) each step.

52

Part of climada core module (i.e. the module this manual is part of)

53

Obtain it from https://github.com/davidnbresch/climada_module_tropical_cyclone and see the

manual(s) there.

54

See https://github.com/davidnbresch/climada_module_earthquake_volcano

55

See the function climada_tc_get_unisys_databases to automatically download all databases

from the internet (from weather.unisys.com/hurricane).

56

Note that the filename is defined using climada_global.data_dir in ordert to be machine and

file-system independent.

57

No need to look into this now, the structure climada_global just provides some machine and file-

system independent parameters. Later on, the advanced user might study the section about

climada_init_vars and climada_global further below.

58

Since most climada functions assume the default data (sub) folder if only the name (without path) is

passed.

20

tc_track(i) contains position tc_track(i).lon(j) and

tc_track(i).lat(j) for each timestep j as well as the corresponding intensity

tc_track(i).MaxSustainedWind. E.g. track number 1170 is hurricane Andrew:

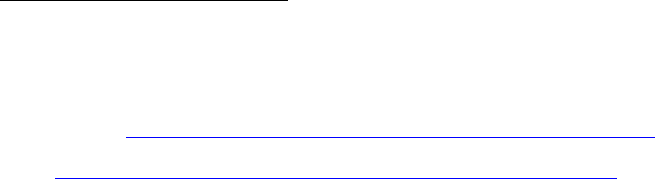

Figure: plot(tc_track(1170).lon,tc_track(1170).lat,'-r'); hold on;

set(gcf,'Color',[1 1 1]); axis equal

climada_plot_world_borders(2,'','',1) % plot world borders (for orientation)

In order to calculate the windfield of this particular single track, we first generate a

series of points on which to evaluate the windfield, we call these points centroids

59

:

centroids.lon=[];centroids.lat=[]; % init

next_centroid=1; % ugly code, but explicit for demonstration

for i=1:10

for j=1:10

centroids.lon(next_centroid)=i+(-85);

centroids.lat(next_centroid)=j+ 20;

next_centroid=next_centroid+1;

end % j

end % i

centroids.centroid_ID=1:length(centroids.lat);

Next, calculate the windfield

60

for a single track (Andrew again) as

59

Centroids are stored in a special folder ../data/centroids, see e.g. climada_centroids_read.

Please note that most routines requiring centroids are tolerant in the sense to also accept an entity

instead (where the assets with their coordinates are). See further below, just keep this in mind.

60

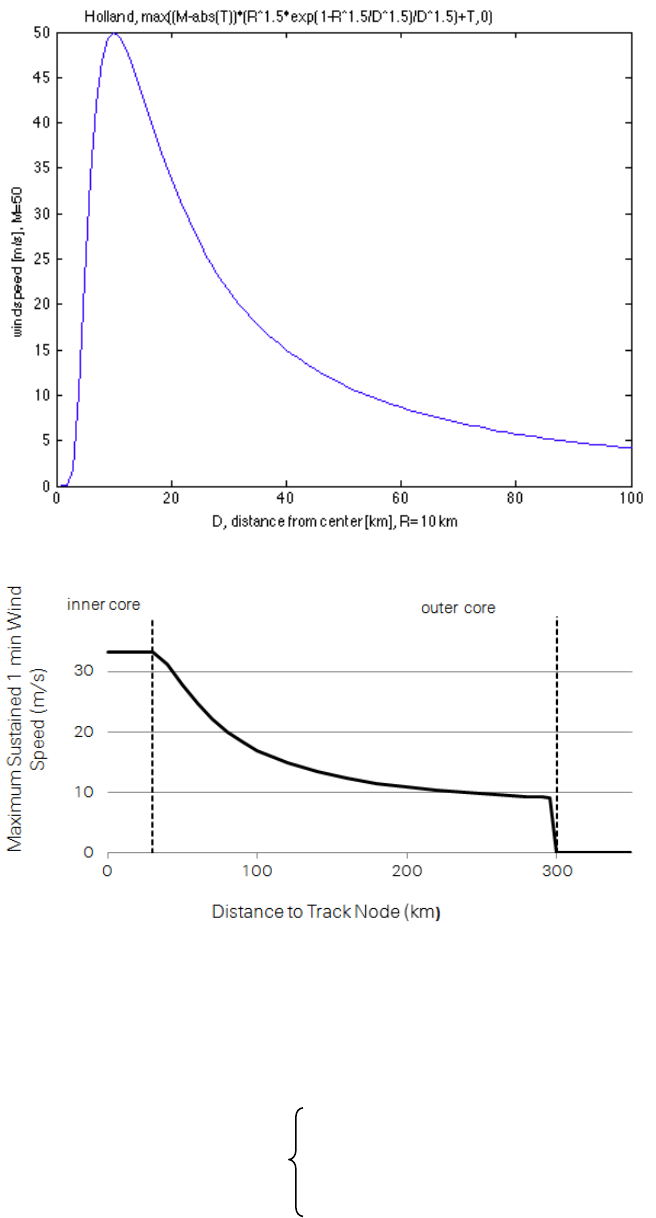

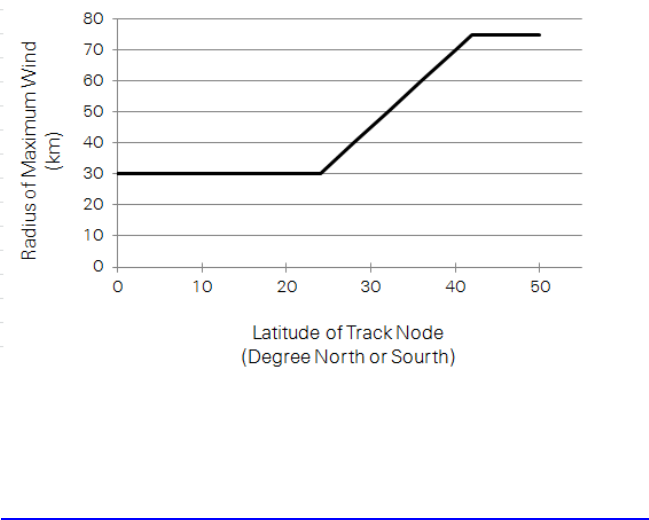

We implement a windfield according to Holland, G. J., 1980: An analytic model of the wind and

pressure profiles in hurricanes. Monthly Weather Review, 108, 1212-1218. In addition to the

axisymmetric vortex, we take forward speed into account. See also Holland, G. J., 2008: A Revised

Hurricane Pressure–Wind Model, Monthly Weather Review, 136, 3432-3445. A natural next step would

21

gust = climada_tc_windfield(climada_tc_equal_timestep(tc_track(1170)),centroids);

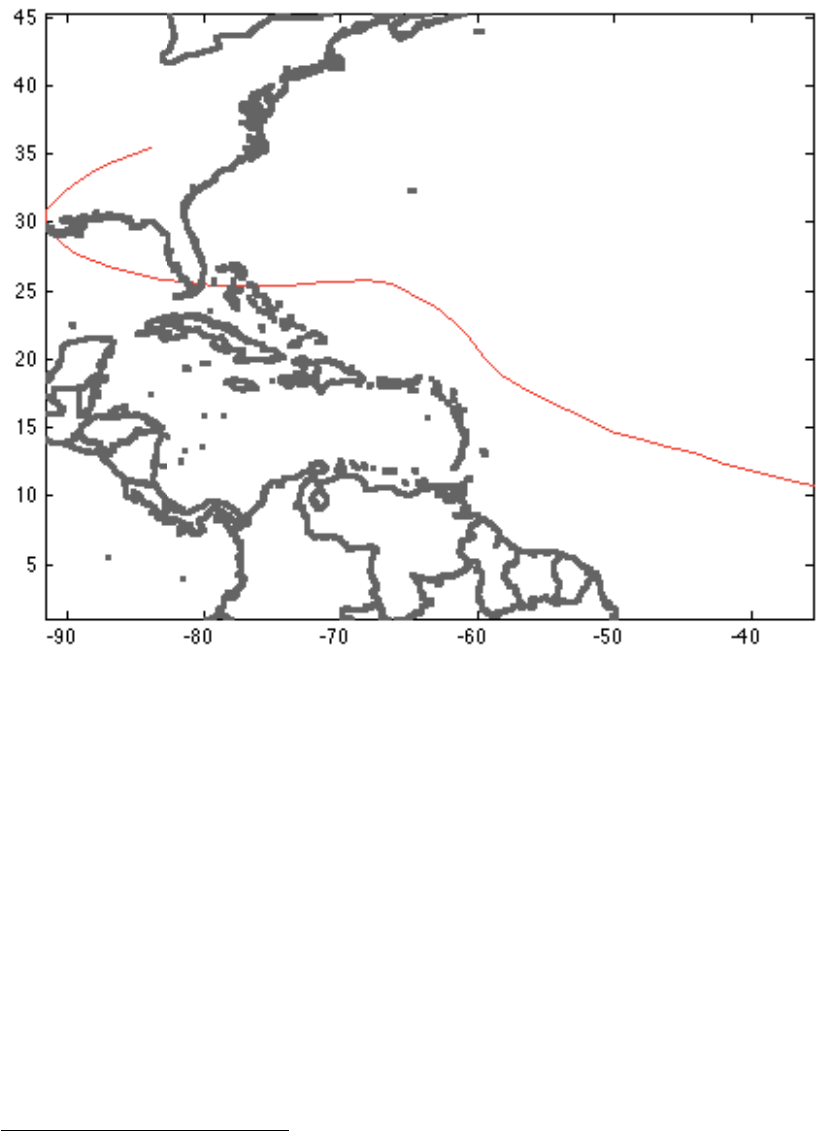

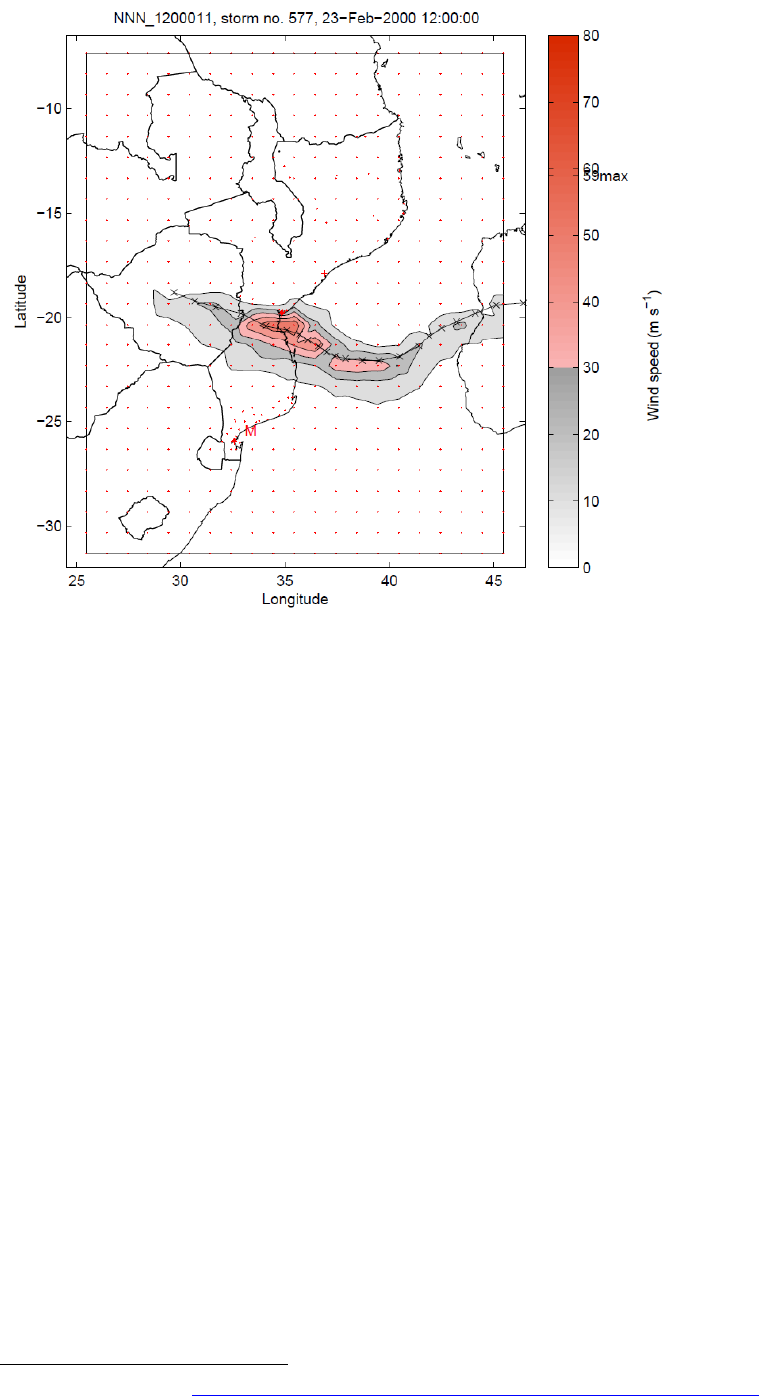

Figure: Gust wind field (in m/s) of Andrew, 1992:

climada_color_plot(gust,centroids.lon,centroids.lat)Note that without

climada_tc_equal_timestep in the call climada_tc_windfield above, we would not get a continuous

footprint due the fast forward speed of Andrew (try this by omitting climada_tc_equal_timestep in the

calculation of gust. Please consider some advice on appropriate colour schemes

61

.

We now generate the wind field not for one single hurricane, but for all events and

store them in an organized way, the so-called hazard event set:

hazard = climada_tc_hazard_set(tc_track,'atl_hist',centroids);

This hazard event set now contains the single Andrew wind field we generated

before in hazard.intensity(1170,:) and therefore we can reproduce the same

wind field with the following command (note the full(*), as we store a sparse matrix)

climada_color_plot(full(hazard.intensity(1170,:)),...

hazard.lon,hazard.lat,'none')

be the consideration of roughness (not implemented), see e.g. Vickery, P.J. et al., 2009: A Hurricane

Boundary Layer and Wind Field Model for Use in Engineering Applications. J. Appl. Meteor. Clim.

61

http://colorbrewer2.org and

http://www.personal.psu.edu/faculty/c/a/cab38/ColorBrewer/ColorBrewer_updates.html

22

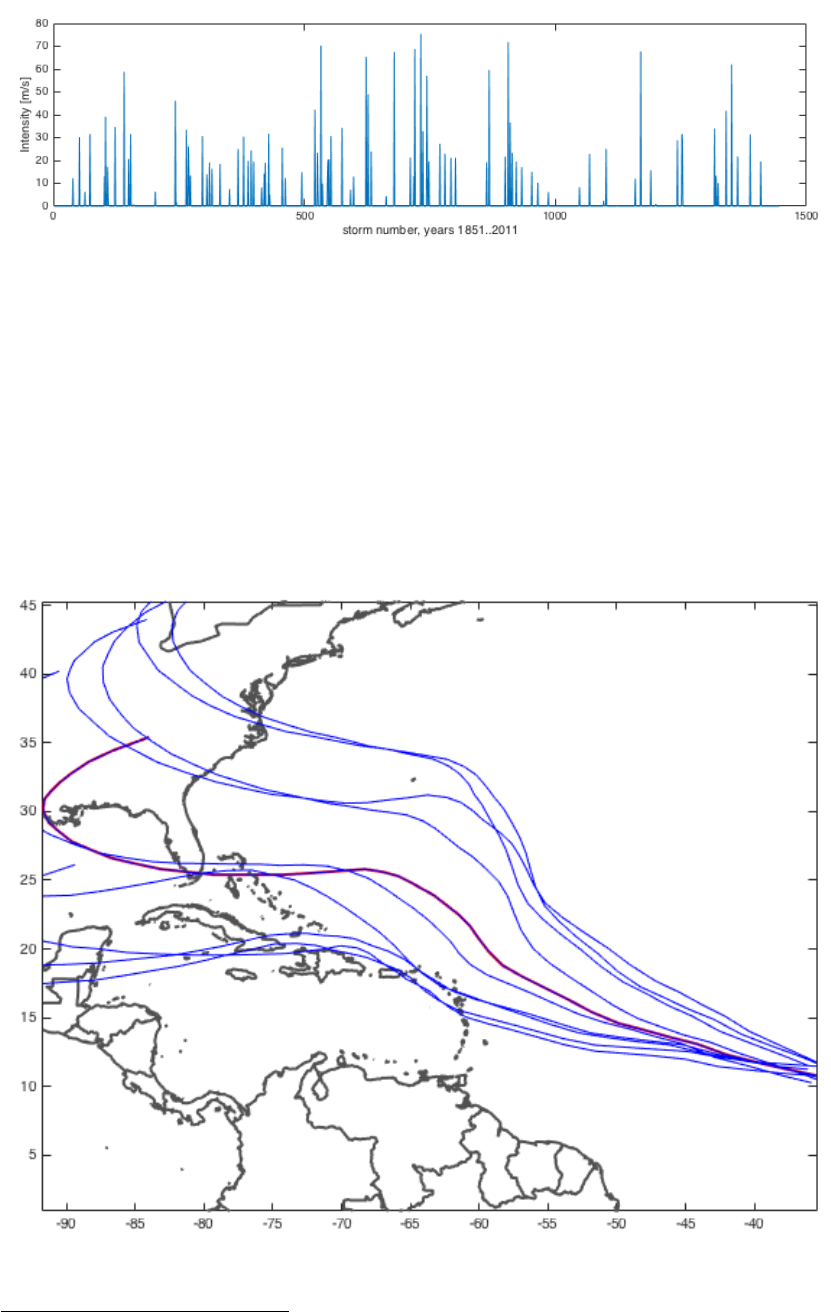

Or, instead, we can plot all hazard intensities at a given point (green circle

62

) like

Figure: figure; subplot(2,1,1) % only lower part shown here

climada_color_plot(full(hazard.intensity(1170,:)),...

hazard.lon,hazard.lat,'none'); hold on; plot(-81,26,'Og');

plot(centroids.lon(36),centroids.lat(36),'Og','MarkerSize',10);

subplot(2,1,2)

plot(full(hazard.intensity(:,36))); set(gcf,'Color',[1 1 1]);

xlabel(sprintf('storm number, years

%i..%i',tc_track(1).yyyy(1),tc_track(end).yyyy(end)))

ylabel('Intensity [m/s]')

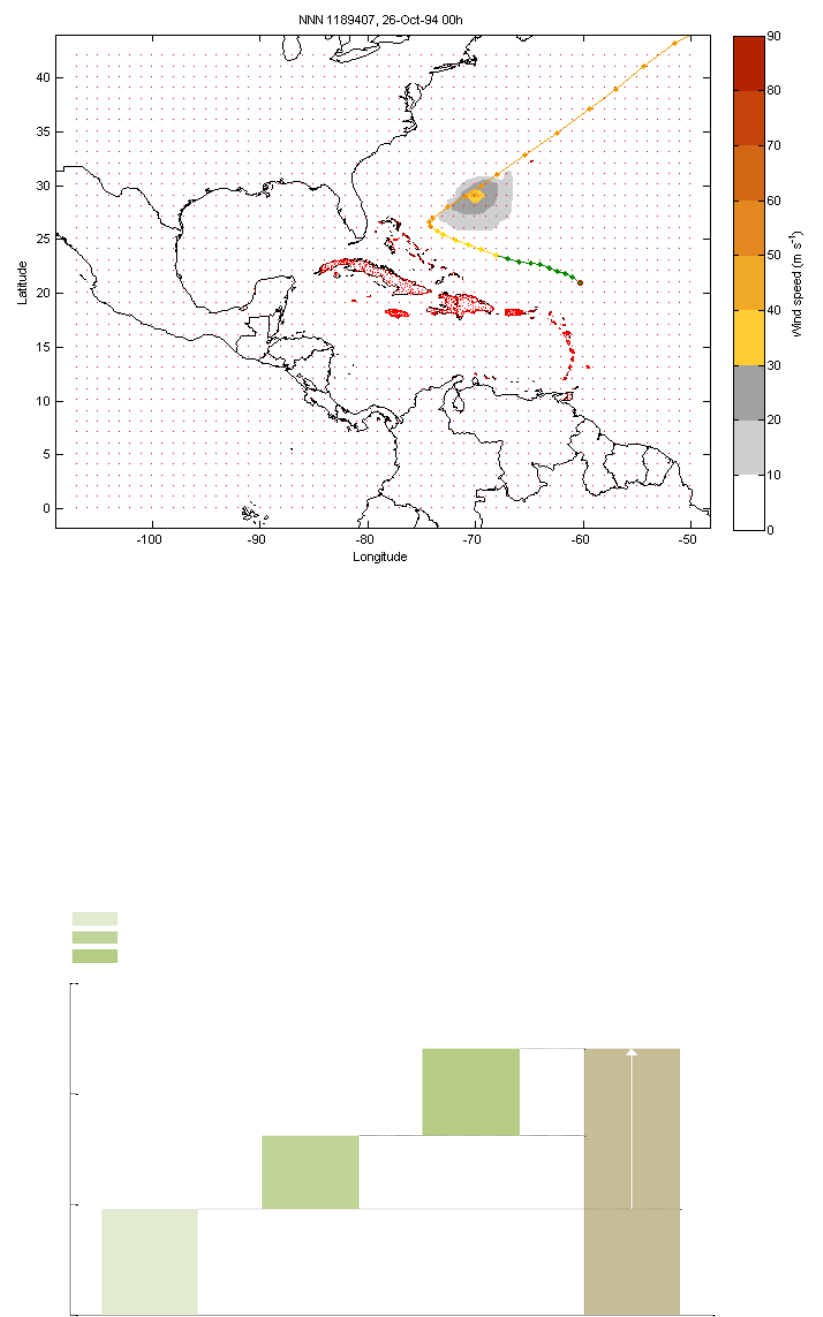

Instead of only historic tracks, we can generate artificial or probabilistic tracks, simply

by 'wiggling' the original tracks, e.g. for Andrew 1992 again:

tc_track_prob=climada_tc_random_walk(tc_track(1170));

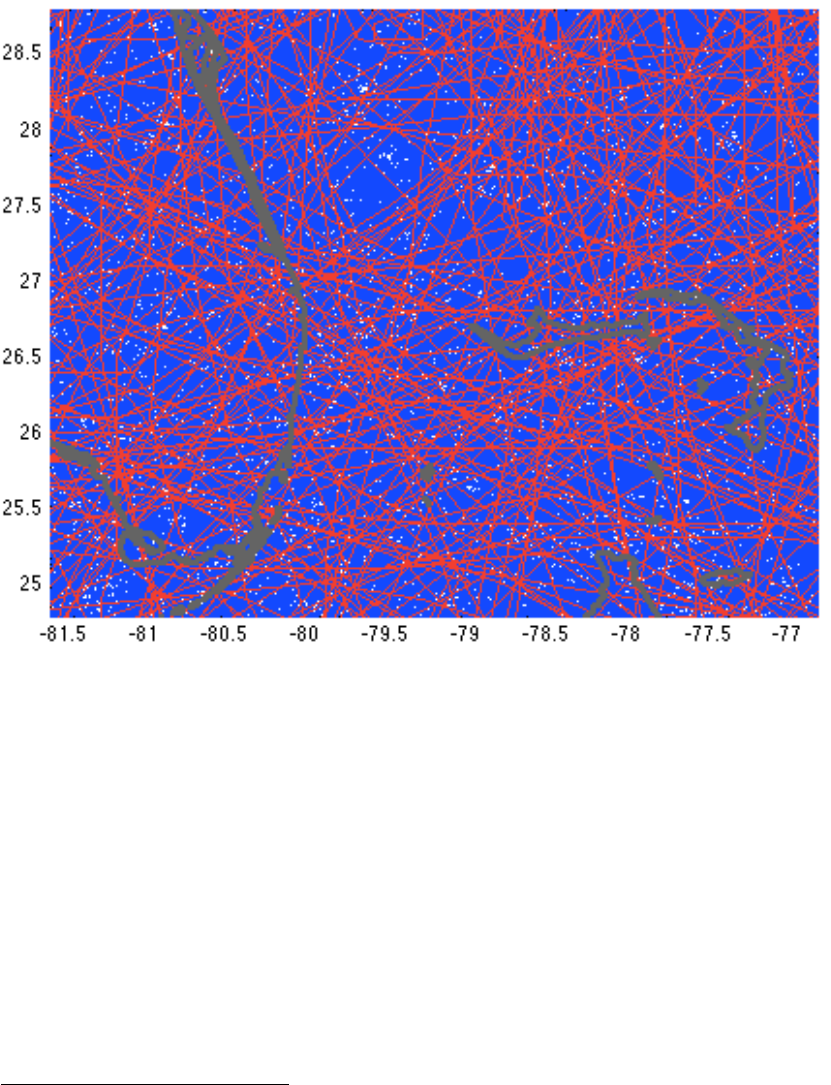

Figure: plot(tc_track(1170).lon,tc_track(1170).lat,'-r','LineWidth',2);

hold on; set(gcf,'Color',[1 1 1]); axis equal

62

Not shown here in the figure, but in the upper part of the figure when created in MATLAB.

23

climada_plot_world_borders(2,'','',1)

for track_i=1:length(tc_track_prob)

plot(tc_track_prob(track_i).lon,tc_track_prob(track_i).lat,'-b');

end

And repeated for all historic tracks, we obtain the full probabilistic track set

climada_global.waitbar=0; % switch waitbar off, speeds up

% hence the next line will take approx. 3 sec

tc_track_prob=climada_tc_random_walk(tc_track);

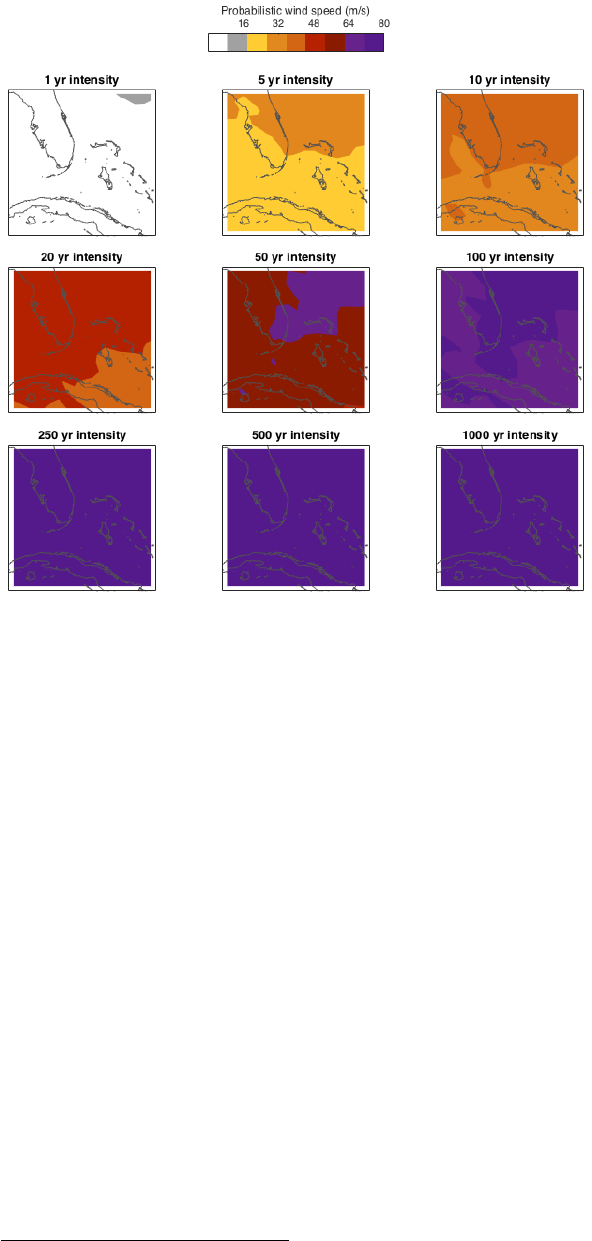

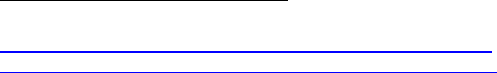

Figure (manually zoomed in, Southern tip of Florida):

for track_i=1:length(tc_track_prob)

plot(tc_track_prob(track_i).lon,tc_track_prob(track_i).lat,'-b');

hold on;end

for track_i=1:length(tc_track)

plot(tc_track(track_i).lon,tc_track(track_i).lat,'-r');end

climada_plot_world_borders(2,'','',1); set(gcf,'Color',[1 1 1]);

Note: Instead of this explicit code, consider climada_tc_info to print track information (name,

date....) to stdout and to show (nice) plots of historic (and probabilistic) tracks.

Next, we generate the wind fields for all 14’450 probabilistic tracks (takes a bit less

than 2 min on a MacBook Air

63

)

hazard=climada_tc_hazard_set(tc_track_prob,'atl_prob.mat',centroids);

63

Consider to set climada_global.parfor=1 before calling climada_tc_hazard_set . This invokes

parallel processing (parallel pool, using parfor).

24

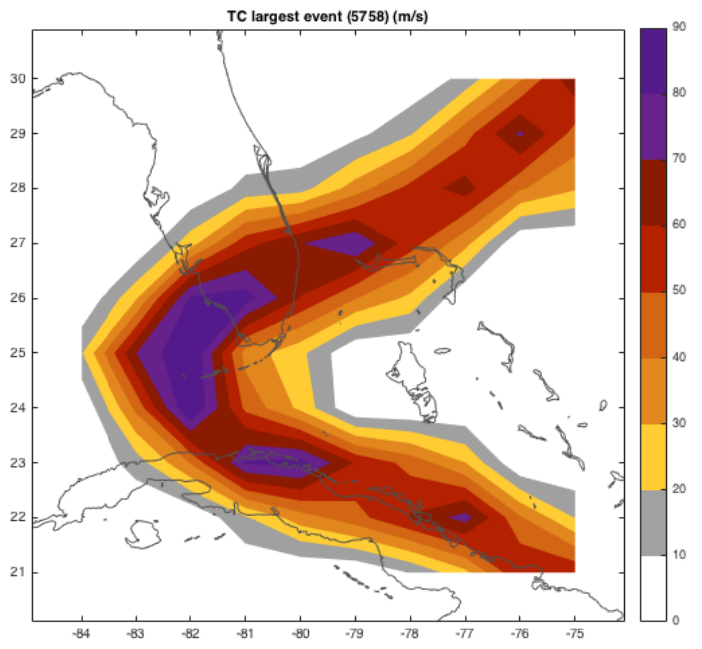

The hazard set now contains more than ten thousand (in fact 14’450) tropical cyclone

footprints, each stored at all centroids. We can for example plot the largest single

event with:

figure; climada_hazard_plot(hazard);

and generate the wind speed maps for several return periods:

25

Figure: climada_hazard_stats(hazard);

Before we move on, let’s explain the key elements of the hazard structure:

hazard.lon(i) and hazard.lat(i) contain the coordinates of centroid i, hence

hazard.intensity(j,i) contains the hazard intensity of event j at centroid i.

Further hazard.frequency(j) contains the single event frequency of event j.

These are in fact the key elements of the hazard structure; note that

hazard.intensity is a sparse array (refer to e.g. help sparse in MATLAB

64

).

You might refer to functions such as the mentioned climada_tc_hazard_set or

climada_excel_hazard_set

65

to see how a hazard event set is generated.

64

In essence, a sparse array stores the non-zero elements of an array only. Since a single event hits

only a few centroids – especially true for a hazard set covering a larger geographical region – we save a

lot of memory and speed up the calculations substantially.

65

This function generates a hazard event set based on Excel input. The Excel sheet needs to contain all

the event footprints. An easy method to use climada with a finite (small) number of predefined events

(more hazard event scenarios then a full probabilistic set). See file ../data/hazards/ Excel_hazard.xls

which contains a small example (for Mozambique).

26

Assets and damage functions

So much for the hazard event set, let’s now import an asset base (the small

asset example as used in climada_demo, the demonstration GUI as shown

above

66

). Before we do so, we load the hazard set file as used in

climada_demo, in order to later reproduce the results:

hazard=climada_hazard_load('TCNA_today_small.mat')

and are now in a position to import the Excel file with all the asset

information

67

:

entity=climada_entity_read('demo_today.xls',hazard) % note

68

Such an entity structure contains the asset, damage function and adaptation

measures information, the tabs in Excel are named accordingly, and so are

the elements of the imported structure

69

. In the asset sub-structure, we find

70

entity.assets.lat(k) and entity.assets.lon(k), the geographical

position of asset k (does not need to be the same geographic location as

centroid i, since assets are encoded to the hazard

71

)

entity.assets.Value(k) contains the Value of asset k. Please note that

Value can be a value of any kind, not necessarily a monetary one, e.g. it could

be number of people living in a given place.

entity.assets.DamageFunID(k) contains a reference ID (integer) to link

the specific asset with the corresponding damage function (see Excel tab

damagefunctions and entity.damagefunctions). Before we move on the

the damagefunctions, note that entity.assets.centroid_index(k)

contains the centroid index onto which asset k is mapped in the hazard event

set

72

.

66

One can also generate assets (value distributions) in climada, see e.g. the climada module

https://github.com/davidnbresch/climada_module_GDP_entity or

https://github.com/davidnbresch/climada_module_country_risk . Please note that the two column names

Latitude and Longitude are shortened to lat and lon in climada’s entity.assets structure – not least to

ease typing on the command line, e.g. plot(entity.assets.lon,entity.assets.lat)

67

Please have a look at the Excel file, each column header is explained by a small comment (tiny yellow

triangel in the upper right corner of the cell). Please consider (later) to use e.g.

climada_entity_country to generate a basic entity for any country worldwide (at 10 km resolution).

68

Please note that climada_entity_read stores a .mat file of the imported entity structure to speed

up re-reading. But in case you edit the original (.xls or similar) file, climada re-reads from the latest

version (i.e. overwrites the .mat file). This check is performed by climada_check_matfile, which

might be useful to the advanced (programming) user.

69

Please note that we discuss the measures information further below

70

We focus on the key content here, please inspect the structure in MATLAB yourself.

71

See function climada_assets_encode. Encoding means: map asset positions to calculation

centroids of the hazard event set. This step is required to allow the user to freely specify asset locations,

rather than stick to the centroids the hazard set has been stored at. A beginner-level user should not

need to deal with such technical details, though. See also the remark about encoding in the section

“Process on one page” above.

72

As mentioned in a previous footnote, the beginner level user does not need worry too much about,

this simply speeds up damage calculation substantially. See code climada_assets_encode_check

to (visually) check the encoding.

27

figure;climada_entity_plot(entity,4);% The asset distribution as stored in entity (read from

Excel sheet)

The damagefunctions sub-structure contains all damage function information,

i.e. entity.damagefunctions.DamageFunID contains the IDs which

refers to the asset’s DamageFunID. This way, we can provide different

damage functions for different (groups or sets of) assets.

entity.damagefunctions.Intensity contains the hazard intensity,

entity.damagefunctions.MDD the mean damage degree and

entity.damagefunctions.PAA the percentage of affected assets. Last

but not least, entity.damagefunctions.peril_ID contains the peril ID

(2-digit character) which allows to identify specific damage functions with

perils. This way, we can in fact use DamageFunID 1 in the assets to link to

damage function one, which can exist several times, one for each peril. The

damagefunctions are stored in a bit a special format, since we get the first

damagefunction as

73

pos=find(entity.damagefunctions.DamageFunID==...

entity.damagefunctions.DamageFunID(1))

plot(entity.damagefunctions.Intensity(pos),...

entity.damagefunctions.MDD(pos)) % not shown, see next figure

73

In the case there is only one perilID, see further details in climada_damagefunctions_plot

28

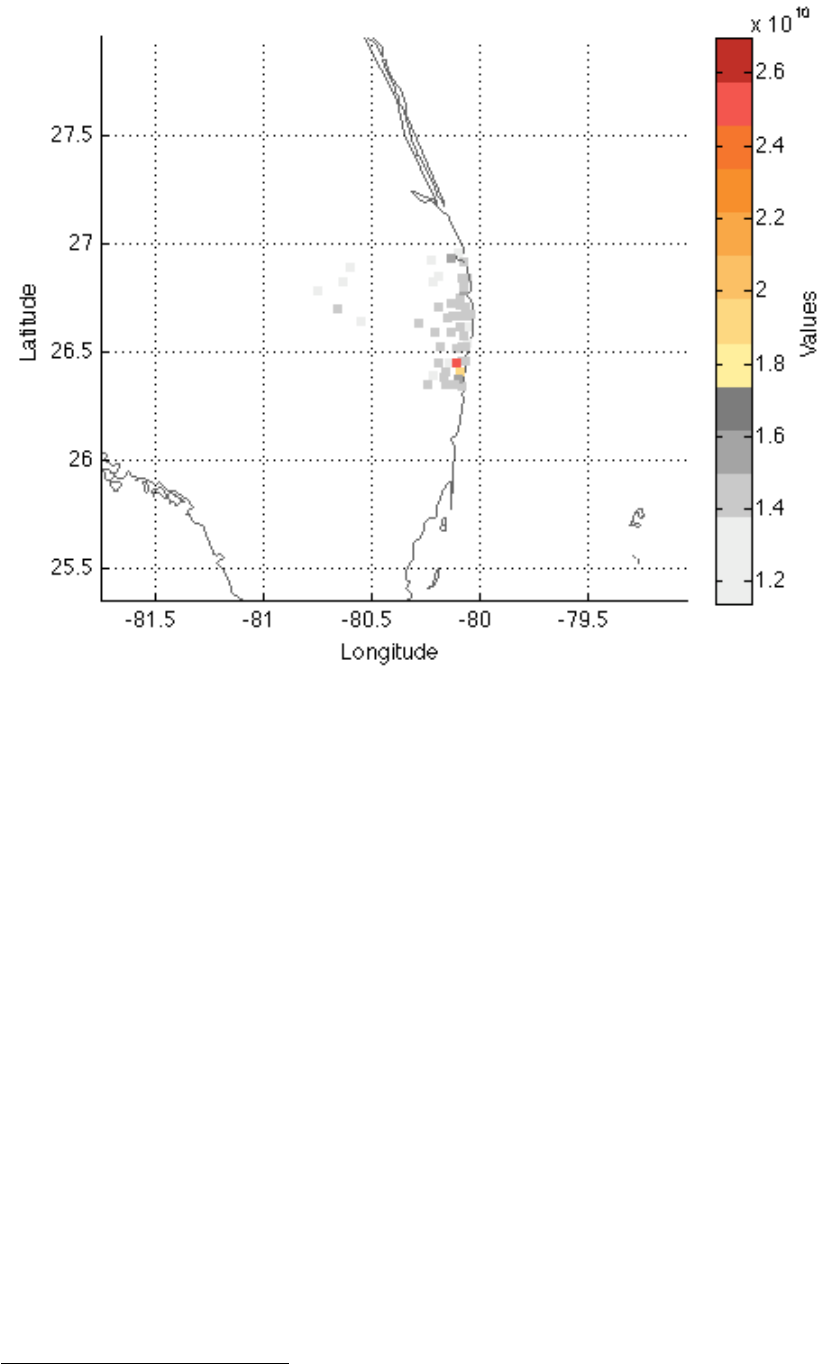

figure;climada_damagefunctions_plot(entity);% The three damage functions as defined in

the damagefunctions tab of the Excel file. TC is the peril ID and stands for tropical cyclone, while 001,

002 and 003 denote the DamageFunID. The horizontal axis denotes the hazard intensity (here tropical

cyclone windspeed, in m/s), the vertical axis is the same for MDD, PAA and MDR.

Note: Please consider using climada_nightlight_entity, which allows you to

generate an asset base on 10x10km or 1x1km resolution for any country of the

world.

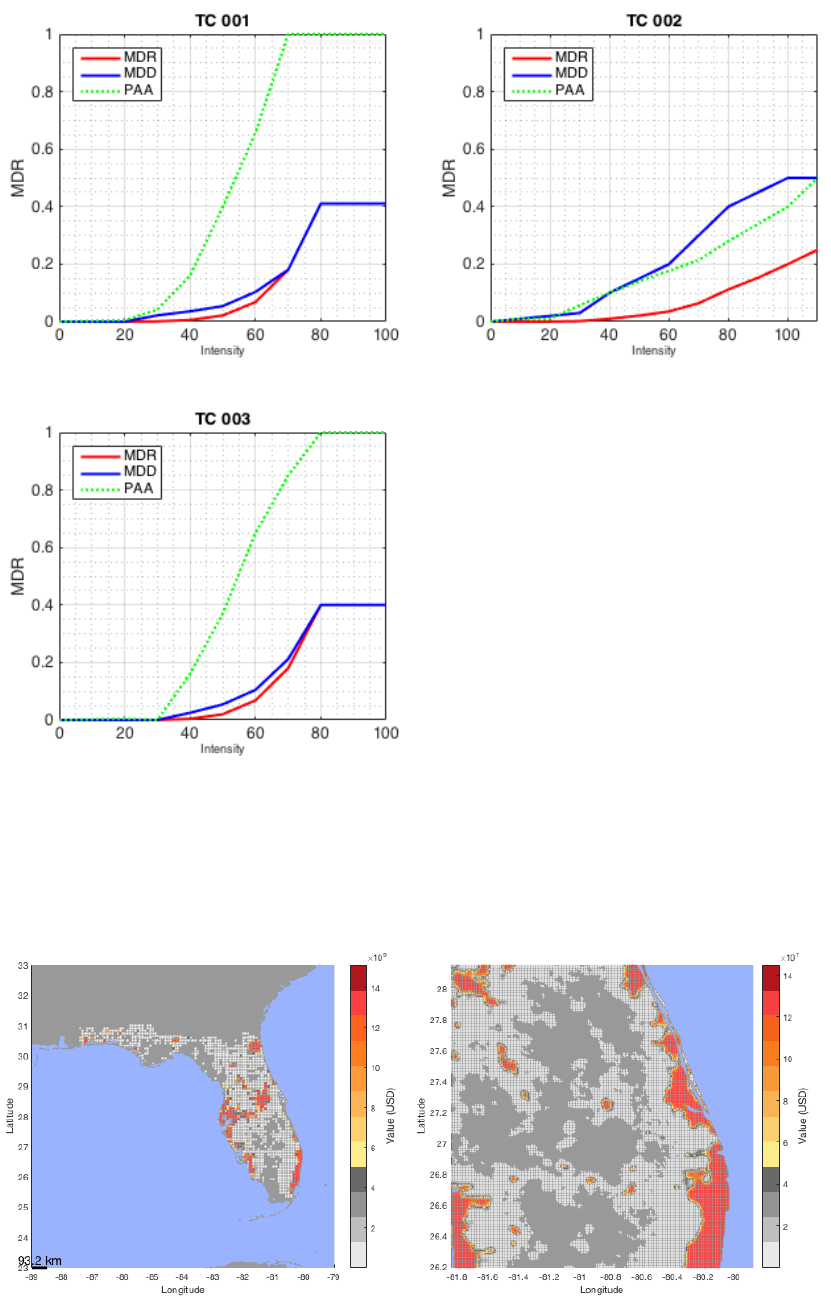

Fig: 10x10km (left) and 1x1km (right, zoomed in) default asset distribution for Florida. Generated with

entity=climada_nightlight_entity(‘USA’,’Florida’); climada_entity_plot(entity)

29

Damage calculation

And with that, we’re ready for the damage calculation, simply as:

EDS=climada_EDS_calc(entity,hazard)

Where EDS contains the event damage set, it contains the annual expected damage

in EDS.ED, the event damage for event j in EDS.damage(j), the event frequency in

EDS.frequency(j) and the event ID in EDS.event_ID(j). In further fields it

stores the link to the original assets, the damagefunctions and hazard set used.

Instead of plotting the event damage set (here a vector with 14’450 elements), one

rather refers to the damage exceedance frequency curve (DFC)

74

:

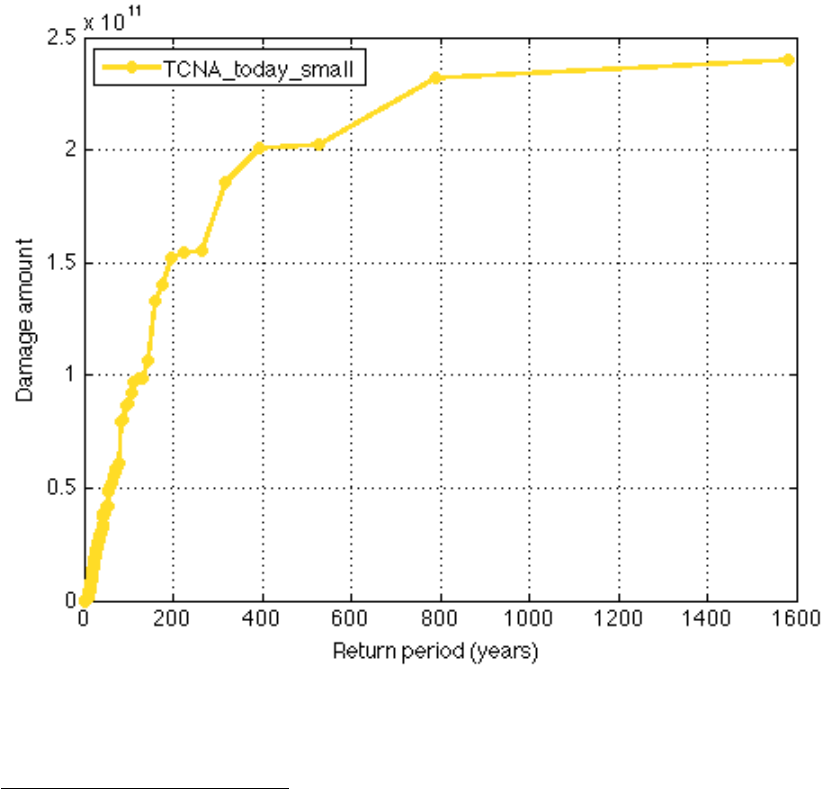

figure; climada_EDS_DFC(EDS); % show damage exceedance frequency (DEF) curve

The horizontal axis denotes the return period in years, the vertical axis the damage (in units the Values

were provided, here USD). The label of the curve denotes the hazard set used.

74

The damage (exceedance) frequency curve (DFC) is an annual per-occurrence damage exceedance

frequency curve, showing the return period of a certain damage level to be reached or exceeded for a

given return period. A DFC is constructed by sorting a per-occurrence damage event set (as shown for

historic events in Fig 2) by descending damage amount and assigning the corresponding return periods,

as given by the temporal extent of the damage event set. If the damage event set spans say 100 years

and contains for example only three damaging events of amounts a, b and c, with a>b>c, the largest

damage reached or exceed only once in these 100 years is a, while a damage level of b is reached or

exceeded twice in these 100 years, hence the return period for a damage level of b is 50 years, and c is

reached and/or exceeded three times, hence its return period is 33.3.. years.

Further, see e.g. McNeil, A.J., R. Frey, and P. Embrechts, Quantitative risk management: concepts,

techniques and tools. Vol. Revis. 2005, Princeton, N.J: Princeton Univ Press. and also Rice, J.A.,

Mathematical statistics and data analysis. Vol. 3rd. 2007, Belmont, CA: Thomson/Brooks/Cole.

30

While one would in a proper application of climada now calculate the damages of

future assets (to obtain the effect f economic growth) and then further repeat the

calculation with a future hazard set (to obtain the effect of climate change), we

illustrate the benefit of adaptation measures by simply using the assets and hazard

we have already used.

But before we do so, one remark about uncertainty – the first time reader shall either

skip or at least not spend time with the following short section. But since I’ve got so

many questions about dealing with uncertainty, I decided to cover the bare essentials

early on.

Dealing with uncertainty

In climada, we follow ‘measurement theory of probability’

75

and do not assume any

prior or suggest a possibly inappropriate approach

76

. The user is responsible for ANY

uncertainty calculation, as this way, he is in full control of the drivers (and

sensitivities). If user therefore might decide to quantify the effect of uncertainty

around damage functions, he would specify the distribution explicitly and just run his

own uncertainty assessment (from a simple trial to e.g. full Monte-Carlo approach).

Since all damage calculations a re easy to call functions, it is very easy to write a

small script that ‘samples’ e.g. several damage functions and runs the respective

statistics on the results, in the bare essence this could look something like:

Assume we have an entity with assets and damagefunctions and a hazard ready (as

at this stage the case), hence can just run the damage calculation with some

samples

77

around the original damage function:

sample_multiplier=[.7 .8 .9 1.1 1.2 1.3];

entity_orig=entity; % copy

EDS=climada_EDS_calc(entity,hazard); % the 'default' and to init EDS

for sample_i=1:length(sample_multiplier)

entity.damagefunctions.MDD= ...

entity_orig.damagefunctions.MDD*sample_multiplier(sample_i);

EDS(end+1)=climada_EDS_calc(entity,hazard);

end % sample_i

Since the annual expected damage (just to take one risk measure) is within each

EDS structure, to run some stats, we convert to an array (note that the first element

in ED is the default run, followed by the samples), e.g.

ED=[];for i=1:length(EDS),ED(end+1)=EDS(i).ED;end

And with that, one can run any statistics like mean(ED),std(ED)

Or, if one would like to run statistics on e.g. the 100 year return period damage,

simply convert the EDS into a DFC (that’s the elegance of it ;-):

75

See e.g. https://www.countbayesie.com/blog/2015/8/30/picture-guide-to-probability-spaces

76

e.g. one could (relatively easily) implement a beta-distribution on the damage uncertainty, but some

damage might be bound by an upper limit.

77

We use multipliers here to keep the code snippet simplest. But one could switch to another set of

damage functions or consider non-linear transformations, such as MDD=MDD.^2 or =MDD.^1/2 or …

(just in the e.g. MDD or PAA case make sure max(MDD) or max(PAA) do not exceed 1, e.g. use

MDD=min(MDD,1), unless you want that). One will very likely also ‘wiggle’ other parameters, such as

hazard intensity (start with simple tests such as e.g. hazard.intensity= hazard.intensity*.95).

31

DFC=climada_EDS2DFC(EDS,100); % calculate 100 year damage

damage_100=[];for i=1:length(EDS),damage_100(end+1)=DFC(i).damage;end

mean(damage_100),std(damage_100)

See also the visualization of uncertainty in the next section (climada_cost_curve)

Adaptation cost curve

As mentioned, the entity structure contains not only assets and damagefunctions, it

also holds the adaptation measures

78

. entity.measures.name{m} contains the

name of measure m, entity.measures.cost(m) the cost

79

. The following fields

allow the parameterization of the measure’s impact on both the hazard as well as the

damage function. entity.measures.hazard_intensity_impact(m) allows to

reduce the hazard intensity (e.g. -1 reduces tropical cyclone windspeed by 1 m/s) for

measure m. The hazard_high_frequency_cutoff

80

allows to specify a

frequency below which damages are suppressed due to the measures, e.g. the

construction/design level of a dam (hazard_high_frequency_cutoff=1/50

means the dam prevents damages up to the 50 year return period).

hazard_event_set allows to specify a measure-specific hazard event set, i.e. for

this particular measure, climada switches to the specified hazard event set instead of

the one used to assess the damages of the reference case. MDD_impact_a and

MDD_impact_b allow a linear transformation of the MDD (mean damage degree) of

the damage function, such that MDDeff = MDD_impact_a + MDD_impact_b * MDD.

Similarly, PAAeff = PAA_impact_a + PAA_impact_b * PAA.

damagefunctions_map allows to map to a new damage function to render the

effect of measure m, i.e. ‘1to3’ means instead of DamageFunID 1, DamageFunID 3

is used

81

. risk_transfer_attachement and risk_transfer_cover define the

attachement point and cover of a risk transfer layer

82

.

The simple call

measures_impact=climada_measures_impact(entity,hazard,'no');

does it all, e.g. it takes the entity and first calculates the EDSref using hazard in order

to create the baseline (situation with no measure applied). It then takes measure m

(m=1…), adjusts either hazard and/or damagefunctions according to the measure’s

specification and calculates a new EDSm. The difference to EDSref (i.e. EDSm-EDSref)

quantifies the benefit (averted damage) of measure m. By doing this on the event

damage set, a variety of measures can be compared, even account for measures

which for example only act on high frequency events (see

hazard_high_frequency_cutoff) or risk transfer layers (see

risk_transfer_attachement and risk_transfer_cover). This function

further handles all the measure impact discounting etc.

83

78

Please refer to the measures tab in the Excel file and the comments in each of the header fields.

79

entity.measures.color{m} contains the color (RGB) as shown in the adaptation cost curve of measure

m. colorRGB contains this converted into an RGB triple.

80

We do not repeat entity.measures.X(m) any more, just refer to X.

81

The filed entity.measures.damagefunctions_mapping contains the details, i.e. the mapping as used in

climada, a kind of ‘parsed’ version of e.g. ‘1to3’.

82

Please refer the tot he lecture, www.iac.ethz.ch/edu/courses/master/modules/climate_risk

83

See function climada_NPV

32

Since it would be quite cumbersome for the user to manually construct the adaptation

cost curve based on the detailed output provided by climada_measures_impact,

the following function

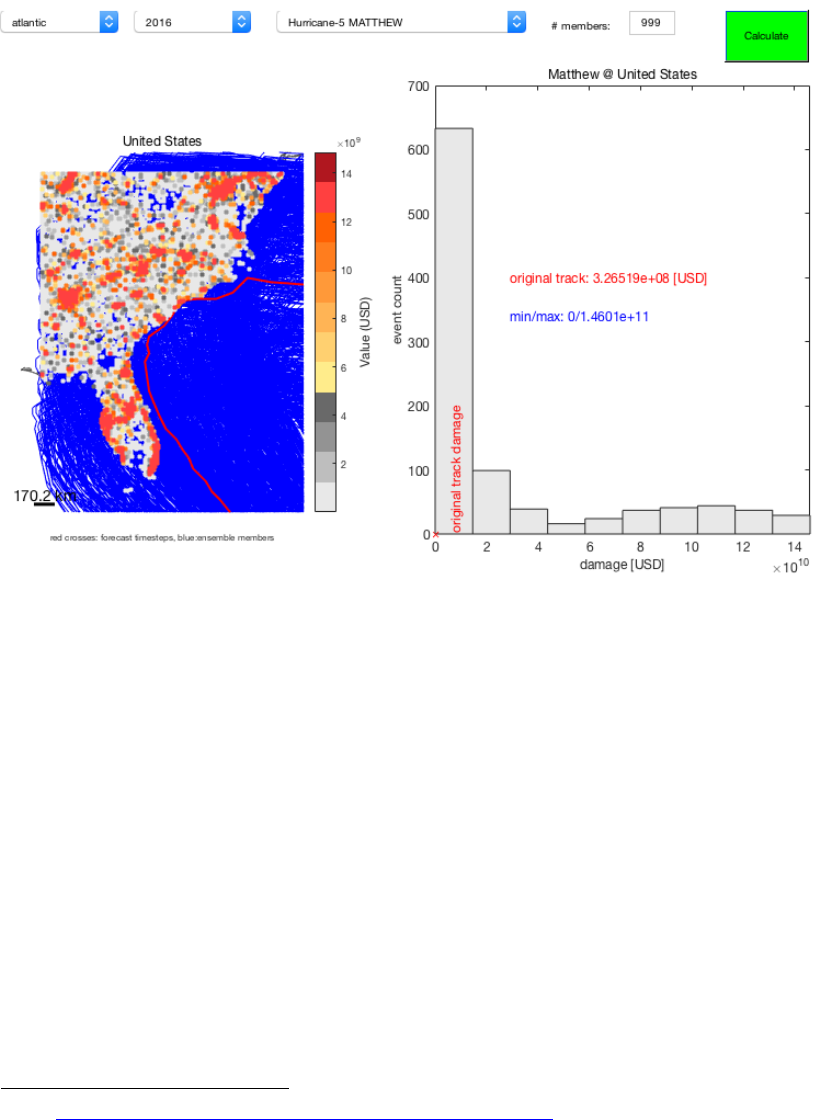

84