Ibic Make Manual

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 157 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- List of Figures

- Manual

- Introduction to make

- Running make in Context

- Running make in Parallel

- Recipes

- Practicals

- Examples

- Downloading Data From XNAT

- Running FreeSurfer

- DTI Distortion Correction with Conditionals

- Quantifying Arterial Spin Labeling Data

- Processing Scan Data for a Single Test Subject

- Preprocessing Resting State Data

- Generating A Methods Section

- Seed-based Functional Connectivity Analysis I

- Seed-based Functional Connectivity Analysis II

- Group Level Makefile - tapping/Makefile

- Subject Level Makefile - tapping/lib/resting/subject.mk

- Preprocessing - tapping/lib/resting/Preprocess.mk

- Generation of Nuisance Regressors - tapping/lib/resting/Regressors.mk

- ROI Timeseries Extraction - lib/resting/timeseries.mk

- First Level Analysis - lib/resting/fsl.mk

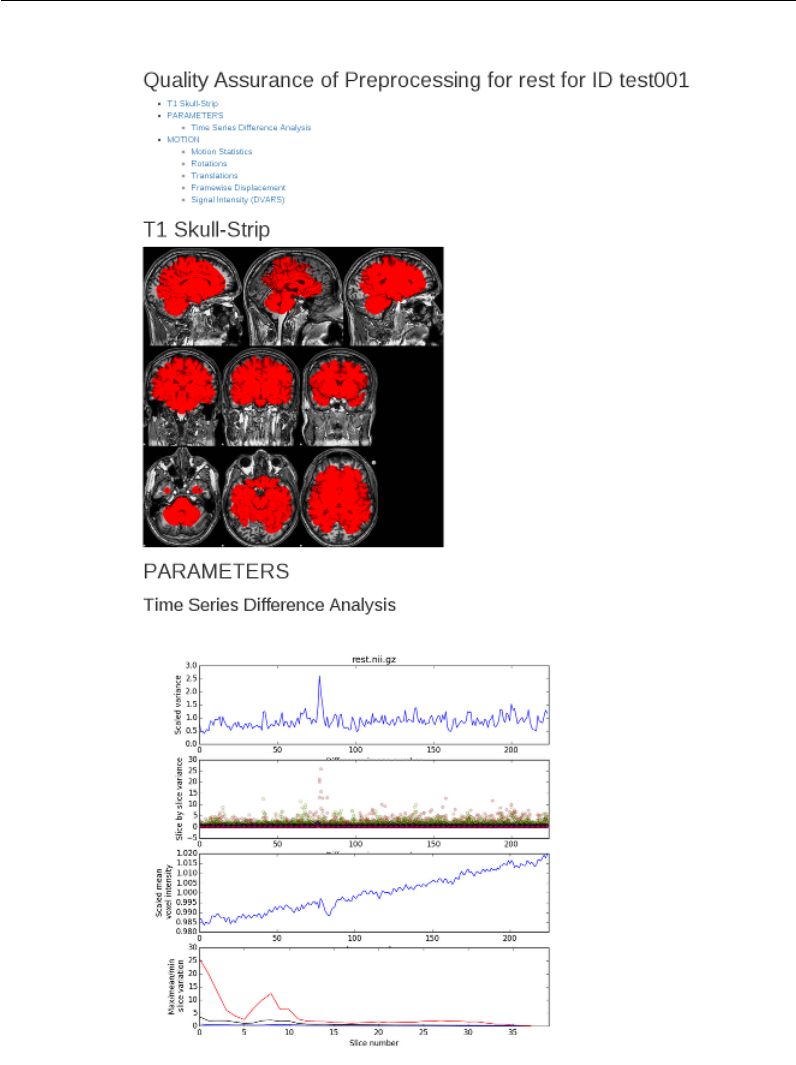

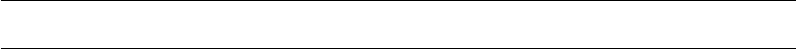

- Quality Assurance Reports - lib/resting/qa.mk

- Using ANTs Registration with FEAT

- Creating Result Tables Automatically Using Make

- Plotting Group FEAT Results Against Behavioral Measures

Using GNU Make for

Neuroimaging Workflow

University of Washington

Using GNU make for Neuroimaging Workflow

Mary K. Askren∗, Trevor K. M. Day, Natalie Koh, Zoé Mestre, Jennifer N.

Dines, Benjamin A. Korman, Susan J. Melhorn, Daniel J. Peterson, Matthew

Peverill, Swati D. Rane, Melissa A. Reilly, Maya A. Reiter, Kelly A.

Sambrook, Karl A. Woelfer, Xiaoyan Qin, Thomas J. Grabowski, and Tara

M. Madhyastha†

University of Washington, Seattle

August 18, 2017

∗askren@uw.edu

†madhyt@uw.edu

i

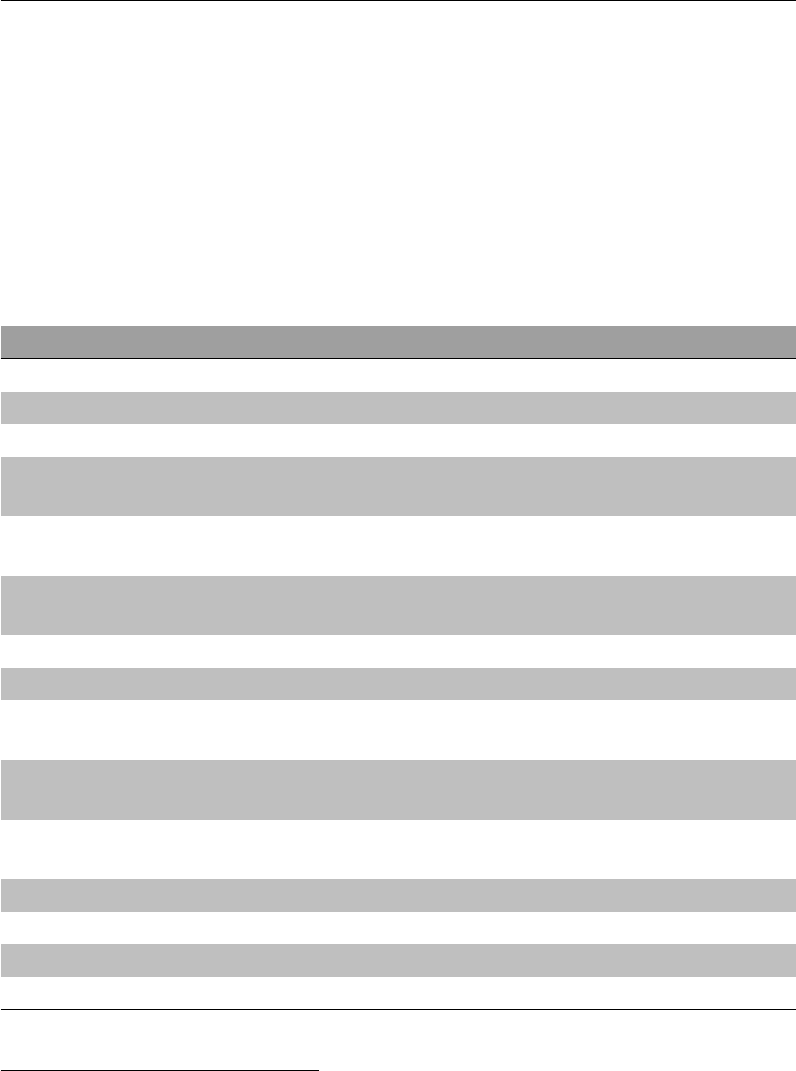

Contents

Contents ii

List of Figures vi

I Manual 1

1 Introduction to make 2

Conventions Used in This Manual ...................... 3

Quick Start Example .............................. 3

A More Realistic Example ........................... 5

What is the Difference Between a Makefile and a Shell Script? ...... 7

Make Built-In Functions ............................ 8

The shell and wildcard Functions .................. 8

Text and Filename Manipulation .................... 8

Logging Messages from make ...................... 10

Additional Resources For Learning About make ............... 11

2 Running make in Context 13

File Naming Conventions ........................... 13

Subject Identifiers ............................ 13

Filenames ................................. 15

Directory Structure ............................... 16

Setting up an Analysis Directory ....................... 18

Defining the basic directory structure ................. 18

Creating the session-level makefiles ................... 19

Creating the common subject-level makefile for each session ..... 20

Creating links to the session-level makefile ............... 21

Creating links to subject-level makefile ................. 21

Running analyses ............................. 21

Setting Important Variables .......................... 22

ii

Contents

Variables that control make’s Behavior ................. 22

Other important variables ........................ 24

Variable overrides ............................. 25

Suggested targets ............................. 25

3 Running make in Parallel 28

Guidelines for Writing Parallelizable Makefiles ............... 28

Each line in a recipe is, by default, executed in its own shell . . . . . 28

Filenames must be unique ........................ 29

Separate time-consuming tasks ..................... 30

Executing in Parallel .............................. 31

Using multiple cores ........................... 31

Using the grid engine .............................. 31

Setting FSLPARALLEL for FSL jobs ................... 32

Using qmake ................................ 32

How long will everything take? ..................... 33

Consideration of memory ........................ 33

Troubleshooting make ............................. 34

Find out what make is trying to do ................... 34

Use the trace option in make 4.1 .................... 34

Check for line continuation ....................... 35

No rule to make target! ......................... 35

Suspicious continuation in line #.................... 36

make keeps rebuilding targets ...................... 36

make doesn’t rebuild things that it should ............... 36

Using Make Functions to Save Time ..................... 37

Using Functions on Recipes ....................... 37

Using Functions to Create Dependency Lists ............. 39

4 Recipes 40

Obtaining a List of Subjects .......................... 40

Setting a variable that contains the subject id ............ 41

Using Conditional Statements ......................... 41

Setting a conditional flag ........................ 41

Using a conditional flag ......................... 42

Conditional execution based on the environment ........... 42

Conditional execution based on the Linux version ........... 43

iii

Contents

II Practicals 45

1 Overview of make 47

Lecture: The Conceptual Example of Making Breakfast .......... 47

Practical Example: Skull-stripping ...................... 49

Manipulating a single subject ...................... 49

Pattern rules and multiple subjects ................... 51

Phony targets ............................... 52

Secondary targets ............................. 53

make clean ................................ 54

2make in Context 56

Lecture: Organizing Subject Directories ................... 56

Practical Example: A More Realistic Longitudinal Directory Structure . . 57

Organizing Longitudinal Data ...................... 57

Recursive make .............................. 59

Running make over multiple subject directories ............ 60

Running FreeSurfer ............................ 61

3 Getting Down and Dirty with make 62

Practical Example: Running QMON ..................... 62

Practical Example: A Test Subject ...................... 63

Estimated total intracranial volume .................. 65

Hippocampal volumes .......................... 67

Implementing a Resting State Pipeline: Converting a Shell Script into a

Makefile .................................. 68

4 Advanced Topics & Quality Assurance with R Markdown 72

Creating a make help system ......................... 72

Makefile Directory Structure ......................... 73

The clean target ................................ 74

Creating New Makefile Rules On The Fly .................. 75

Incorporating R Markdown into make .................... 75

III Examples 81

1 Downloading Data From XNAT 83

2 Running FreeSurfer 86

3 DTI Distortion Correction with Conditionals 89

iv

Contents

4 Quantifying Arterial Spin Labeling Data 94

5 Processing Scan Data for a Single Test Subject 99

Testsubject Main Makefile ........................... 99

Testsubject FreeSurfer ............................. 103

Testsubject Transformations .......................... 105

Testsubject QA Makefile ............................ 107

6 Preprocessing Resting State Data 112

7 Generating A Methods Section 118

8 Seed-based Functional Connectivity Analysis I 121

9 Seed-based Functional Connectivity Analysis II 123

Group Level Makefile - tapping/Makefile ................. 123

Subject Level Makefile - tapping/lib/resting/subject.mk ....... 124

Preprocessing - tapping/lib/resting/Preprocess.mk .......... 125

Generation of Nuisance Regressors - tapping/lib/resting/Regressors.mk127

ROI Timeseries Extraction - lib/resting/timeseries.mk ........ 129

First Level Analysis - lib/resting/fsl.mk ................. 130

Quality Assurance Reports - lib/resting/qa.mk .............. 130

10 Using ANTs Registration with FEAT 132

Group Level Makefile .............................. 132

Subject Level Makefile ............................. 133

Preparatory Registrations - Prep.mk ..................... 134

Running Feat and Applying ANTs Registrations - Feat.mk ........ 135

11 Creating Result Tables Automatically Using Make 139

Simple Result Tables .............................. 139

Multiple Group Analyses ........................... 140

12 Plotting Group FEAT Results Against Behavioral Measures 141

Other Resources ................................ 149

v

List of Figures

1.1 Basic make syntax. ............................. 4

1.2 A very basic make recipe. .......................... 4

1.3 Automatic make variables .......................... 5

1.4 A more realistic example ........................... 5

1.5 An expansion of Figure 1.4 ......................... 6

1.6 A makefile expressed in bash ........................ 7

1.7 make filename manipulation functions ................... 9

1.8 How to use subst .............................. 9

1.9 How to use word ............................... 10

1.10 Using strip .................................. 10

1.11 make filename manipulation functions ................... 11

1.12 Extra-special variables ............................ 11

2.1 Example of good file naming conventions ................. 15

2.2 An example of a project directory. ..................... 17

2.3 A longitudinal analysis directory. ...................... 19

2.4 Session-level Makefile ............................ 20

2.5 Subject-level makefile ............................ 20

2.6 Creating symbolic links to the session-level makefile ........... 21

2.7 Making test ................................. 21

2.8 Output of recursive make .......................... 22

2.9 Setting $(SHELL) ............................... 22

2.10 Running DTIPrep in make ......................... 24

2.11 Controlling software version ......................... 24

2.12 Specifying BET flags in make ........................ 25

3.1 A non-functional multi-line recipe ..................... 29

3.2 A now-functional multi-line recipe ..................... 29

3.3 A multi-line recipe using “&&\”....................... 29

3.4 How not to name files ............................ 29

vi

List of Figures

3.5 The wrong way to run two long tasks ................... 30

3.6 The right way to run two long tasks .................... 30

3.7 Pattern-matching error handling ...................... 36

3.8 Two very similar make recipes ........................ 37

3.9 How to use the eval function ........................ 38

3.10 Using def ................................... 39

3.11 Looping over make function ......................... 39

4.1 Obtaining a list of subjects from a file. ................... 40

4.2 Obtaining a list of subjects using a wildcard. ............... 41

4.3 Determining the subject name from the current working directory and a

pattern. .................................... 41

4.4 Setting a variable to determine whether a FLAIR image has been acquired. 42

4.5 Setting a variable to indicate the study site. ................ 42

4.6 Testing the site variable to determine the name of the T1 image. . . . . 43

4.7 Testing to see whether an environment variable is undefined ....... 43

4.8 /etc/os-release .............................. 44

4.9 Testing the Linux version to determine whether to proceed ....... 44

1.1 Creating a waffwich ............................. 48

1.2 How to make a waffwich ........................... 49

1.3 Pattern-matching as a sieve ......................... 52

2.1 Script to create symbolic links within a longitudinal directory structure 58

3.1 resting-script file ............................. 70

4.1 make’s Help System ............................. 73

4.2 Example of a R Markdown File ....................... 78

4.3 QA report in R Markdown ......................... 80

1.1 XNAT access directory structure. ...................... 84

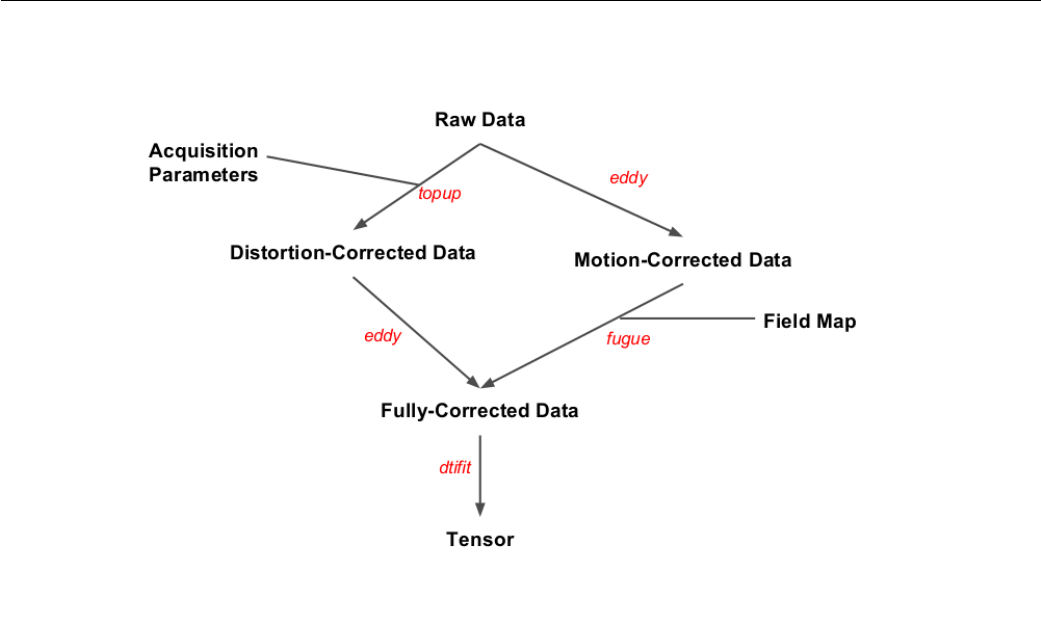

3.1 Flowchart of the Toy Makefile ........................ 91

4.1 Installing MATLAB Runtime Libraries and surround subtraction appli-

cation ..................................... 94

4.2 Entering location of installation folder for Surround Subtraction stan-

dalone MATLAB application. ........................ 95

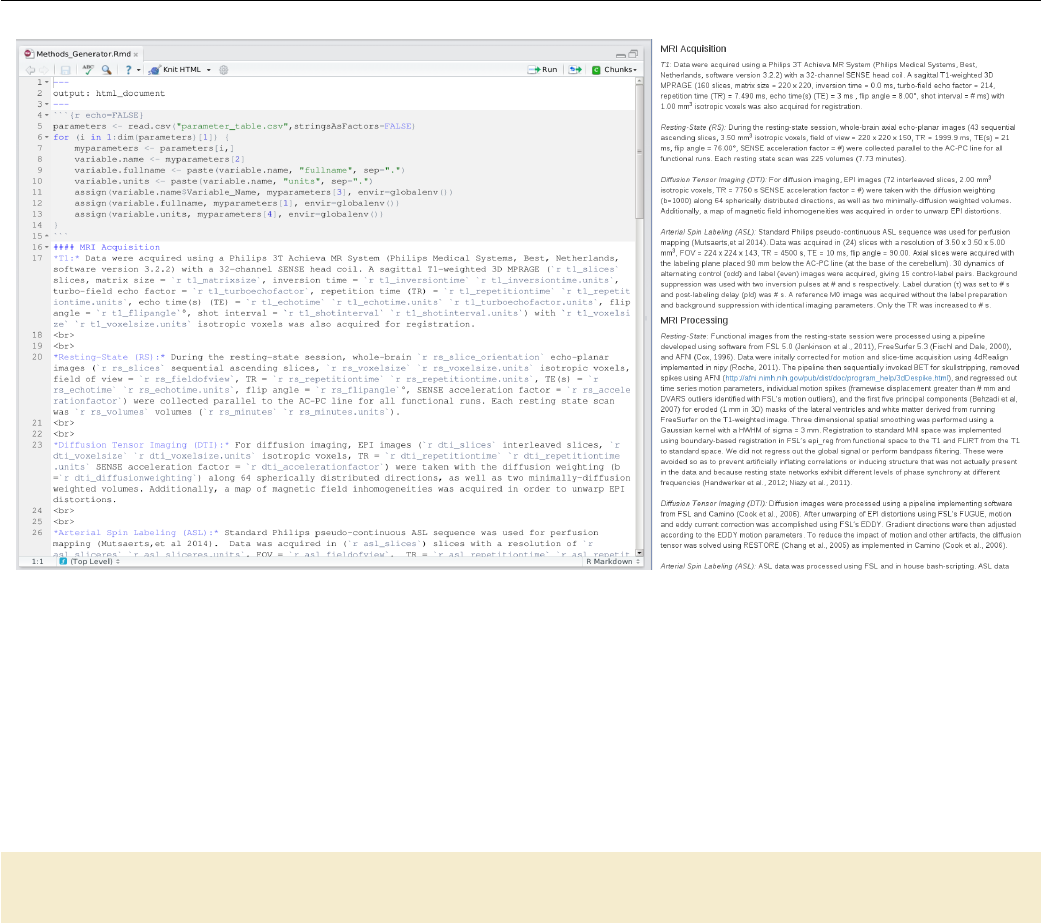

7.1 Methods generation in R Markdown .................... 120

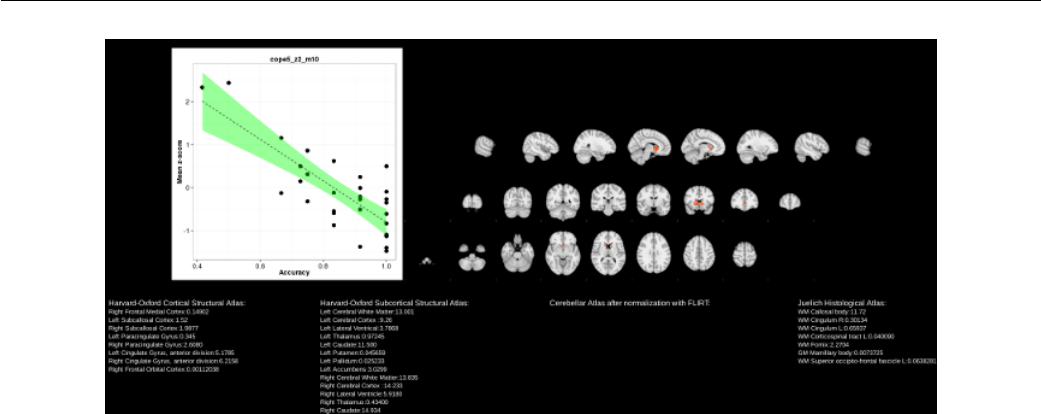

12.1 Report plotting group FEAT results against behavioral measures. . . . . 148

vii

Part I

Manual

1

Chapter 1

A Conceptual Introduction to

make for Neuroimaging Workflow

This guide is intended for scientists working with neuroimaging data (especially a

lot of it) who would like to spend less time on workflow and more time on science.

The principles of automation and parallelization, taken from computer science, can

easily be applied to neuroimaging to make certain parts of the analysis go quickly

so that the computer can do what it’s best at. This kind of automation supports

reproducible science (or at the very least, allows you to rerun your analysis extremely

quickly with a variant of the processing stream, or on a new dataset).

Over the last few years we have developed neuroimaging workflows using make,

a program from the 1970s that was originally used to describe how to compile and

link complicated software packages. It is an amazing program that is still in use

today. It is fairly easily repurposed to describe neuroimaging workflows, where you

specify how you “make” one file, which can depend on several other files, using

some set of commands. Once you have expressed these “rules” or “recipes” in a file

(a Makefile), you actually have a fully parallelizable program, which allows you to

run the Makefile over as many cores as you have (or on a Sun Grid Engine). This

has been done before: FreeSurfer incorporates make into their pipeline “behind the

scenes.” We have developed many examples of neuroimaging pipelines in multiple

domains that are written using make.

Modern tools for neuroimaging workflow (nipype, LONIPipeline) incorporate

data provenance (e.g., information about how and when the results were generated),

wrappers for arguments so that you do not need to worry about all the different

calling conventions of programs, and generation of flow graphs. However, make al-

lows expression of neuroimaging workflow with only the programming concepts of

parallelism and variables. Being able to incorporate programs from many different

packages without wrapping them saves time and effort. T. M. M. has taught sev-

2

Conventions Used in This Manual

eral undergraduates, graduates, and staff to use it to develop, debug, and execute

pipelines. These students have contributed examples to this manual. It is sim-

ple enough that the basic concepts can be understood by non-programmers. This

results in more time teaching and doing neuroimaging than teaching (and doing)

programming.

Conventions Used in This Manual

By convention, actual commands or filenames will be typeset in fixed-width (monospaced)

font (for example, ls,make, FSL’s bet, and flirt.) Makefile examples will also be

typeset in monospaced font.

Commands to be executed in a shell (we assume bash throughout this manual)

are written on a line beginning with a dollar sign ($) and displayed between two !You do not

type the $ to

execute bash

commands; it

stands for the

prompt.

horizontal lines, as follows:

$ echo Hello World!

Examples of commands from Makefiles are shown in an outlined box with no

special prompt character:

This is a make command.

Additionally, recipes are easily identified by their opening syntax, where the

recipe target is usually rendered in blue (see Figure 1.1 or Figure 1.2).

Data for the lab practicals and examples documented in this manual are available

from NITRC. We will assume that you have downloaded them into some location on

your own machines (e.g. makepipelines) and will refer to these files directly. To

run the example makefiles, you must set an environment variable, MAKEPIPELINES

to the location of this directory (see Examples). If you are at IBIC you may find

these examples on /project_space/makepipelines.

Throughout this manual we assume a UNIX style environment (e.g., Linux or

MacOS) with common neuroimaging packages installed. All examples have been

tested on Debian Wheezy using GNU Make 3.81. Please let us know of problems or

typos, as this manual is a work in progress.

Quick Start Example

!Recipes in

make need to be-

gin with a TAB

character. Not

eight spaces,

not nine, but

exactly one TAB

character.

A makefile is a text file created in your favorite text editor (e.g., txtedit,emacs,vi,

gedit –not Office or OpenOffice). By convention, it is typically called “Makefile”

or “foo.mk” (i.e., it has a .mk extension). It is like a little program that is run by the

3

Quick Start Example

program make, in the same way that a shell script is program run by the program

/bin/bash.

A makefile contains commands that describe how to create files from other files

(for example, a skull-stripped image is created from the raw T1 image, or a T1 to

standard space registration matrix is created from a standard space skull-stripped

brain and a T1 skull-stripped brain).

These commands take the form of “rules” that look like those in Figure 1.1.

target: dependencies

-4em-4emShell commands to create target from dependencies (a recipe),

beginning with a [TAB] character.

Figure 1.1: Basic make syntax.

This may be best understood by example. Figure 1.2 is a simple makefile that

executes FSL’s bet command to skull-strip a T1 NIfTI file.

s001_T1_skstrip.nii.gz: s001_T1.nii.gz

bet s001_T1.nii.gz s001_T1_skstrip.nii.gz -R

Figure 1.2: A very basic make recipe.

In Figure 1.2 the target file is s001_T1_skstrip.nii.gz, which “depends” on

s001_T1.nii.gz. More specifically, to “depend on” something means that a) it

cannot be created unless the dependency exits, and b) it must be recreated if the

dependency exists, but is newer than the target.

The “recipe” is the bet command that creates the skull-stripped image from the

T1 image. This executes in a shell, just as if you were typing it in the terminal.

If this rule is saved inside a file called Makefile in the same directory as s001_-

T1.nii.gz, then you can create the target as follows:

$ make s001_T1_skstrip.nii.gz

Alternatively, in this instance, you could call:

$ make

If you specify a target as an argument to make, it will create that target. If you

do not, make will build the first target in the file. In this case, they are the same.

4

A More Realistic Example

A More Realistic Example

In the example above, we specified exactly what file to create and what file it de-

pended upon. However, in neuroimaging analyses we normally have many subjects

and want to do the same things for all of them. It would be a royal pain to type in

every rule to create every file explicitly, although it would work. Instead we can use

the concepts of variables and patterns to help us. A variable is a name that is used

to store other things. You can set a variable to something and then refer to it later.

Figure Figure 1.3 lists the syntax for common make variables. A pattern is a sub-

string of text that can appear in different names, and is denoted using %. Suppose

we have a directory that contains the T1 images from 100 subjects with identifiers

s001 through s100. The T1 images are named s001_T1.nii.gz,s002_T1.nii.gz

and so on. This makefile will allow you to skull strip all of them (and on as many

processors as you can get a hold of (see Running make in Parallel) but I’m getting

ahead of myself here).

$@ is the target.

$< is the first dependency.

$(VAR) is a make variable.

Figure 1.3: Automatic make variables

The first two lines in Figure 1.4 set variables for Make. Yes, the syntax is icky

but it is well explained in the GNU make manual. We will summarize here. The first

variable is assigned to the result of a “wildcard” operation that expands to all files

with the pattern s???_T1.nii.gz. If you are not familiar with wildcards, if you do

a directory listing of that same pattern, it will match all files that begin with an “s,”

are followed by exactly three characters, and then “_T1.nii.gz.” In other words, the

T1files variable is set to be all T1 files belonging to those subjects in the current

directory.

T1files=$(wildcard s???_T1.nii.gz)

T1skullstrip=$(T1files:%_T1.nii.gz=%_T1_skstrip.nii.gz)

all: $(T1skullstrip)

%_T1_skstrip.nii.gz: %_T1.nii.gz

bet $< $@ -R

Figure 1.4: A more realistic example

The second variable is set using pattern substitution on the list of T1 files, sub-

stituting the file ending (_T1.nii.gz) for a new file ending (_T1_skstrip.nii.gz)

5

A More Realistic Example

to create the list of target files. Note that the percent sign (%) matches the subject

identifier. It is necessary to use the percent sign to match only the subject identifier

(and not the subject identifier plus the following underscore, or some other extension)

when matching parts of file names in this way.

We have now introduced a new type of rule (make all) which has no recipe.

Make will look for a file called all and this file will not exist. It will then try to

create all the things that all depends on (and those files, the skull stripped images,

don’t exist either). So it will then take names of each skull stripped file, one by

one, and look for a rule to make it. When it has done that, it will execute the

(nonexistent) recipe to make target all and be finished. Because the file all still

does not exist (by intention), trying to make the target will always result in trying

to make all the skull stripped files.

This brings us to the final rule. The percent sign in the target matches the same

text in the dependency. This target matches the name of each of the skull stripped

files desired by target all. So one by one, they will be created. In the recipe for

the final rule, we have used a shorthand of make to refer to both the dependency

that triggered the rule ($<) and the target ($@). We do this because since the rule

is generic, we do not know exactly what target we are making. However, we could

also write out the variables (as seen in Figure 1.5).

%_T1_skstrip.nii.gz: %_T1.nii.gz

bet $*_T1.nii.gz $*_T1_skstrip.nii.gz -R

Figure 1.5: An expansion of Figure 1.4

In this version of the rule, we use the notation $* to refer to what was matched

in the target/dependency by %. If the previous version looked to you like a sneeze,

this may be more readable.

Now you can run this makefile in many ways. As before, to create a specific file,

you can type:

$ make s001_T1_skstrip.nii.gz

To do everything, you can type: make all or just make.

Suppose you do this and later find that the T1 for subject 036 was not properly

cropped and reoriented. You regenerate this T1 image from the dicoms and put it

in this directory. Now if you type make again, it will regenerate the file s036_T1_-

skstrip.nii.gz, because the skull strip is now older than the original T1 image.

Suppose you acquire four more subjects. If you dump their T1 images into this

directory following the same naming convention and type make, off you go.

6

What is the Difference Between a Makefile and a Shell Script?

This probably seems like an enormous amount of effort to go to for some skull

stripping. However, the benefit becomes clearer as the complexity of the pipelines

increases.

What is the Difference Between a Makefile and a Shell

Script?

This is a really good question. A makefile is basically a specialized program that

is good at expressing how X depends upon Y and how to create it. The recipes in

a makefile are indeed little shell scripts, adding to the confusion. By making these

dependencies explicit, you enable the computer to execute as many of the recipes as

it can at once, finishing the work as quickly as possible. It allows the computer to

pick right back up if there is a crash or error that causes the job to die somewhere in

the middle. It allows you, the scientist, to decide that you want to change some step

three-quarters of the way down and redo everything that depends upon that step.

However, make will do no more work than it has to. It will not rebuild anything that

does not depend on anything you have changed, however indirectly. And magically,

once you have made dependencies explicit, you never need to remember what needs

to be updated following a change. The computer will do it for you.

Shell scripts are more general programs that can do all sorts of things, but

inherently do them one at a time. For example, the shell script that is the equivalent

to Figure 1.4 could be written something like Figure 1.6.

#!/bin/bash

for i in s???_T1.nii.gz

do

name=`basename $i .nii.gz`

bet $i $name_skstrip.nii.gz -R

done

Figure 1.6: A makefile expressed in bash

There is nothing in this script to explicitly tell the computer that each individual

T1 image can be processed independently of the others; the order does not matter.

So if you are on a multicore computer that can execute many processes at once, you

could not exploit this parallelism with this shell script. Furthermore, you can see

that to add a few subjects or redo a few subjects, you will need to edit the script to

avoid rerunning everyone.

7

Make Built-In Functions

If you have ever found yourself writing a shell script to do a large batch job,

and then commenting out some of the subject identifiers to redo the few that need

redoing, then commenting out some other parts and adding new lines to the program,

and so forth, you are dealing with the problems that make can help with. If you are

not convinced, see Overview of make for a more detailed explanation of how make

works.

Make Built-In Functions

make contains a number of built in functions that take the general form:

$(cmd ...)

These functions can be read about in section 8 of the GNU Make Manual, but

we will detail some of the more convenient functions here as well.

The shell and wildcard Functions

The shell and wildcard functions allows acces to the shell outside of make, they

can be thought of as performing command expansion outside of the makefile (e.g.

with the ‘cat foo‘ or $(cat foo) syntaxes). We often use the shell function to

identify subject names, for example:

$(shell cat subjects.txt)

wildcard can be used in a similar manner. It performs wildcard expansion much

in the same way as the bash shell. If all your subjects had a six-digit identifier of the

form that started with 12-, the wildcard function could be used in the following

manner to identify your subjects:

$(wildcard $(PROJECT_DIR)/subjects/12????)

In most cases, using 12* would be synonymous, but would include any file begin-

ning with 12-, for example, 129999.backup. For this reason, we recommend being

most explicit and using question marks over an asterisk.

Text and Filename Manipulation

There are several functions designed for manipulating filenames, whose name, argu-

ments, and description are given in Figure 1.7.

8

Make Built-In Functions

dir name(s) Equivalent to $ dirname name.

notdir name(s) Removes everything up to, and including, the fi-

nal /.

suffix name(s) Extracts the suffix (including the period).

basename names(s) Equivalent to $ basename name

addsuffix suffix,name(s) Appends suffix to all name(s).

addprefix prefix,name(s) Appends prefix to all name(s).

join list1,list2 Joins elements from lists pairwise.

realpath name(s) For each file, returns the absolute canonical

name. Returns empty string on failure.

abspath name(s) For reach file, return canonical name. Does not

resolve symlinks, and accepts non-extant files.

Figure 1.7: make filename manipulation functions

Additionally, make provides functionality for manipulating strings in other ways.

One function that is handy for manipulating files with the same basename but

different extensions is subst (for “substitute”). For example, if one script outputs a

.png file and your recipe calls for a .gif file1, you can refer to the .png intermediary

by calling $(subst gif,png,$@).

For example, if we take take-picture to output picture01.png to a given

directory, we could use subst in a makefile like so:

QA/images/picture01.gif: mprage/T1.nii.gz

$(BIN)/take-picture mprage/T1.nii.gz QA/images ;

convert $(subst gif,png,$@) $@

rm $(subst gif,png,$@)

Figure 1.8: How to use subst

convert is an ImageMagick function that, among its many powers, can convert

filetypes. We rm the .png file at the end, because the whole point of this filetype-

conversion exercise was to save disk space.

There also exists the patsubst function, whose functionality is mostly better

executed by using substitution references instead. See subsection 8.2 of the GNU

Make Manual for more information.

Avery handy function is word, which selects the nth word from a whitespace-

delimited string. Because dependency lists are whitespace-separated, this allows us

to select the nth dependency. For example, $(word 2,foo bar baz) would return

1.gif files are smaller.

9

Make Built-In Functions

bar. The list of dependencies can be accessed as $ˆ, in the following example, it is

mprage/T2.nii.gz that would be skull-stripped, not mprage/T1.nii.gz.

mprage/T2_brain.nii.gz: mprage/T1.nii.gz mprage/T2.nii.gz

bet $(word 2,$^) $@

Figure 1.9: How to use word

One can also use firstword but $(word 1,$ˆ

)has the advantage of being more

consistent. There is also words, which returns the number of words, and lastword,

whose functionalities seem limited in context. But perhaps there is a use for them.

The third most useful text-manipulation function is strip, which removes lead-

ing and trailing whitespace from its argument, and replaces internal whitespace

sequences with a single space.

strip’s functionality is highlighted in logic statements (read more about con-

ditionals in chapter 3), where whitespace can be introduced through things like

$(shell cat foo). Stripping knotty strings like that allows make’s limited logic to

compare all variables.

If each subject had a small text file flag in their directory that indicated their

status, say group, its contents could be read and acted up on.

GROUP=$(shell cat group)

ifeq ($(GROUP),TD)

Preprocess: Preprocess-TD QA-TD

else

Preprocess: Preprocess-ASD QA-ASD

endif

Figure 1.10: Using strip

In Figure 1.10, only the TD pipeline will be called, depending on the tag.

There are other string manipulation functions we have not found a use for, that

are documented here nonetheless for your reference.

Furthermore, there are special variables that can access the directory and file

path from the target and prerequisites.

Logging Messages from make

Further, there are three make control functions that will report a message to the

terminal, as well as additional behaviors, depending on the function.

•error generates a fatal error and exists make.

10

Additional Resources For Learning About make

findstring find,in Returns “find” if find is found in in, otherwise,

the empty string.

filter pattern,text Returns all words in text that match pattern

filter-out pattern,text Returns the inverse of filter.

sort list Sorts the list and removes duplicates.

Figure 1.11: make filename manipulation functions

$(@D) The directory part of the target, without the trailing slash.

$(@F) The file part of the target.

$(<D) The directory part of the first prerequisite.

$(<F) The file part of the first prerequisite.

$(ˆ

D) The directory parts of all prerequisites.

$(ˆ

F) The file parts of all prerequisites.

Figure 1.12: Extra-special variables

•warning prints a message to the screen with the name of the makefile and the

line number, but doesn’t stop execution.

•info prints a message, but does not print the name of the makefile or the line

number.

All these functions are called with the same syntax as the other functions,

$(func) "message...").

Additional Resources For Learning About make

The focus of this manual is on structuring neuroimaging projects using make. These

additional books and manuals will be extremely helpful for learning about make more

generally.

“GNU Make Manual,” Free Software Foundation. Last updated Oct 5, 2014.

Link to manual. Although this manual is for version 4.1, which is a somewhat newer

version than we use in our examples, most of the information here is the same. This

is an excellent reference for the syntax of make and its functionality.

“Managing Projects with GNU Make,” Third Edition by Robert Mecklenburg.

Nov 2004. Link to open book content. This O’Reilly book covers a lot of basics but

in a readable form for someone trying to manage a large scale project. Information

about directory structures is probably less relevant for neuroimaging applications.

11

Additional Resources For Learning About make

“The GNU Make Book” by John Graham-Cumming. April 2015. Link to pur-

chase. This book has an enormous amount of useful advanced information, including

details describing differences between different versions of make, many approaches

to debugging, and the help system that we use in our examples.

12

Chapter 2

Running make in Context

In the previous chapter we presented some toy examples. However, in real neu-

roimaging life one deals with hundreds of subjects with multiple types of scans and

many planned analyses. To keep this straight requires some conventions, whether

you use make or not. Here we describe some conventions that are useful for managing

realistic projects.

File Naming Conventions

File naming conventions are critical for scripting in general, and for Makefiles in par-

ticular. make is specifically designed to turn files with one extension (the extension

is the last several characters of the filename) into files with another extension. For

example, in Figure 1.2 we turned a file with an extension “T1.nii.gz” into a file with

the extension “T1_skstrip.nii.gz.” !“Extension”

often refers to

the file suffix,

e.g., “.csv,”but

there is no

reason it must

be limited to a

fixed number of

characters after

a period.

So we can use naming conventions within a project, and across projects consis-

tently to reuse rules that we write for common operations. In fact, make has many

built-in rules for compiling programs that are absolutely useless to us. But we can

write our own rules.

Thus, it is important to decide upon naming conventions.

Subject Identifiers

The first element of naming conventions is the subject identifier. This is usually the

first part of any file name that we might end up using outside of its directory. For

example, we might include the subject identifier in the final preprocessed resting

state data, allowing us to dump all those files into a new directory for subsequent

analysis with another program, without having to rename them or risk overwriting

other subject files. Some features (by no means exhaustive) that may be useful for

13

File Naming Conventions

subject identifiers are:

1. They should contain some indicator of which project the subjects

belong to.

This is particularly important if you work on multiple projects, or if you may

be pooling data from multiple studies. Typically choose a multi-letter code

that is the prefix to the subject ID.

2. If applicable, they should contain some indicator of treatment group

that the subject belongs to.

It is helpful to indicate in the subject ID (usually with another letter or digit

code) whether the subject is part of a treatment group or a control.

3. They should be short.

Short names are easier to type and to remember, and make it easier to work

with lists of directories that are named according to the subject id.

4. They should be the same length.

This is not necessary but helpful for pattern matching using wildcards in a

variety of contexts within make and without.

5. They should sort reasonably in UNIX.

Many utilities ultimately require a list of filenames, which is easy to generate

using wildcards and the treatment group code if the subject ID sort order is

reasonable. The classic problem is to name subjects S1 . . . S10, S11 – the

subjects will not be in numeric order without zero-padding to the subject

numbers.

6. They should be easy to type.

bash has many tricks to help you avoid typing, but when you have to type,

the farther you have to move the longer it takes. Do you really need capital

letters?

7. They should be easy to remember for short periods of time.

My attention span is very short when doing repetitive tasks; subject IDs that

are nine-digit random numbers are harder to remember than subject IDs that

are four letter random sequences.

8. They should be easy to divide into groups using patterns.

My favorite set of subject IDs were randomly generated four letter sequences

that sounded like fake English words, making it easy to divide the subjects

into groups of just about any size using the first letters and wildcards. This

makes it very easy to test something on a subset of subjects.

9. They should be consistently used in all data sets within the project.

If the subject identifier is “RC4101” in a directory, it should not be “RC4-101”

14

File Naming Conventions

in the corresponding REDCap database1and “101” in the neuropsychological

data. It is easier if everyone can decide upon one form of name.

Filenames

File naming conventions begin with converted raw data (NIfTI files derived from the

original DICOMs, for example), and continue on for each level of processing. We

find it helpful to give these files specific names and then to perform subject-specific

processing within the subject/session directory. The filenames for a recent active

project are shown in Figure 2.1, where the subject ID is “RTI001.”

Filename Description

RTI001_T1_brain.nii.gz Skull stripped T1 MPRAGE image

RTI001_T1.nii.gz T1 MPRAGE image

RTI001_read.nii.gz Reading task scan

RTI001_read_rest.nii.gz Resting state scan for reading ses-

sion

RTI001_read_fMRIB0_phase.nii.gz B0Field map phase image for read-

ing session

RTI001_read_fMRIB0_mag.nii.gz B0Field map magnitude image for

reading session

RTI001_read_fMRIB0_mag_brain.nii.gz Skull stripped B0magnitude image

RTI001_write.nii.gz Writing task scan

RTI001_write_rest.nii.gz Resting state scan for writing ses-

sion

RTI001_write_fMRIB0_phase.nii.gz B0Field map phase image for writ-

ing session

RTI001_write_fMRIB0_mag.nii.gz B0Field map magnitude image for

writing session

RTI001_write_fMRIB0_mag_brain.nii.gz Skull stripped B0magnitude image

RTI001_DTI.nii.gz DTI image

bvecs.txt b-vectors for DTI processing

bvals.txt b-values for DTI processing

Figure 2.1: Example of good file naming conventions

1REDCap is the Research Electronic Data Capture web application that we use for storing

subject-specific information.

15

Directory Structure

The specific file names chosen are not as important as consistency in the use

of extensions and names, and documentation of what these files are and how they

were processed. The more you can keep things the same between projects, the more

Makefiles you will be able to reuse from one study to another with minimal changes.

Directory Structure

Typically, we create a directory for each project. Within that project directory is

storage for scripts, masks, data files from other sources (e.g., cognitive or neuropsy-

chological data) and subject neuroimaging data. Subjects may have different time-

points or occasions of imaging data, as well as physiological data and task-related

behavioral data. An example hierarchy (for project Udall) is shown in Figure 2.2.

We have recreated this directory structure in $MAKEPIPELINES/Udall, without any

actual MRI data, so that you can follow the structure. You can also look at chapter 2

for a description of how to set up a similar directory structure for an actual data

set.

This directory is typically protected so that only members of the project who

are permitted by the Institutional Review Board to access the data may cd into the

directory, using a specific group related to the project. The setgid bit is set on the ?The setgid

bit on a direc-

tory ensures

that files cre-

ated here

assume the

group to which

the directory

belongs, and

not the group

to which the

person creating

them belongs.

project directory and the file permissions are set by default to permit group read,

write, and execute permission for each individual so that files people create within

the directory substructure are accessible by others in the project.

The structure is set up for a longitudinal project, where each subject is imaged

at separate time points (sessions). All subject data is stored under the directory

subjects (under subdirectories corresponding to each session). However, because

often it is convenient to conduct analyses on a single time point, we create a con-

venience directory at the top level (e.g., session3) which has symbolic links to all

of the subjects’ session 3 data. This can make it easier to perform subject-specific

processing.

Notice that in the project directory, we keep scripts that we write to process the

data in the bin directory. Although bin historically is where “binary” executable files

are kept, the distinction between executable bash scripts and compiled executables

is not terribly important. In this example we separate tcl scripts and Rscripts to

make things neater.

Other data files and miscellaneous support files for workflow are stored in the

lib/ subdirectory. Again, your naming conventions may differ and there may be

more categories than we have, but it is important to decide upon some structure

that everyone agrees upon for the project. This makes it much easier to find things.

16

Directory Structure

/project_space/Udall

bin.............................Holds bash scripts written for this project

R......................................................Holds R scripts

tcl...................................................Holds tcl scripts

data.................................Other sources of data for all subjects

lib ............... Holds things that are used by many scripts and analyses

makefiles ................................... Makefiles for this project

networkROIs.....................A set of ROI masks for fMRI analysis

tractographyMasks............A set of masks for tractography analysis

freesurfer............................ Freesurfer (cortical thickness) runs

tbss ................... Group-level Tract-Based Spatial Statistics analyses

subjects ............... All subject data collected in neuroimaging sessions

SUBJECT.............................................A specific subject

session1 ................................ Difference subject sessions

session2

session3

behavioral .................. Subject behavioral (e.g., task) data

Dicoms..........................................Original dicoms

Parrecs........PAR/REC files, the native Philips scanner format

physio ....................................... Physiological data

xfm_dir ....................................... Registration files

session3/SUBJECT ..... Symbolic link to the subject/session3 directories

incoming .................. Zip files with subject data from the scanner.

Figure 2.2: An example of a project directory.

We find it useful to locate the results of some subject-specific processing (e.g. co-

registration of files, subject-level DTI processing, subject-level task fMRI processing)

in the subject/session directories. However, other types of single-subject (and group)

analyses are more conveniently performed in directories (e.g. freesurfer/,tbss/)

that are stored at the top level of the project.

Data files that are collected from non-neuroimaging sources are usually kept in

text (tab or comma-separated) form in the data/ directory so that scripts can find

and use them (e.g., to use the subject age or some cognitive variable as a regressor

in an analysis). We have found it very useful to document, manage, and merge this

data very carefully, including the important QA variables that describe whether or

not a subject’s scan should be included in analyses.

Finally, note that we have a directory labeled incoming. This is a scratch direc-

tory (it could be a symbolic link to a scratch drive) for placing zip files that come from

the scanner, that contain the dicoms for the scan, the Philips-specific PAR/REC files,

17

Setting up an Analysis Directory

the physiological data logged (used to correct functional MRI data for heart rate and

respiration-related signal) and data from scanner tasks (e.g., EPRIME files).

Setting up an Analysis Directory

Up until this point we have only seen little examples of makefiles that process subjects

within a single directory. However, real studies include many subjects and many

analyses; each analysis often produces hundreds of files per subject. Often, several

researchers are trying to conduct different analyses on the same data set at the same

time.

For these reasons, we use a collection of makefiles to manage a project. This

section describes this basic recipe (which is a little complicated). You can also see

this recipe implemented in Udall. Typically the steps involving creating subject

directories and links are done by a script or an “incoming” makefile at the time that

the subject data appears from the scanner. Better, if you use an archival system

that you can write programs to access (for example, XNAT) a script can create all

the links and name files nicely for you (see Downloading Data From XNAT).

This recipe relies on the concept of a “symbolic link.” This is a file that points

to another file or directory. A symbolic link allows you to access a file or directory

using a different pathname. If you remove the link, you will not remove the thing

that it points to. However, if you remove the target of the link, the link won’t work

any more.

To create a symbolic link, use the ln command.

$ ln -s target linkname

target is the path of the file you’re linking to, and linkname is the name of the

new symbolic link.

Target paths can either be absolute or relative, but they must be relative to their

new location (in linkname). For example, using the directory structure in Figure 2.2,

the command to create the link session1/RC4101 would look like this (run from

Udall/session1:

$ ln -s ../subjects/RC4101/session1 RC4101

Defining the basic directory structure

Let us assume the project home (PROJHOME) is called $MAKEPIPELINES/Udall/.

There are three scans per individual. Let us also assume there are two subjects:

18

Setting up an Analysis Directory

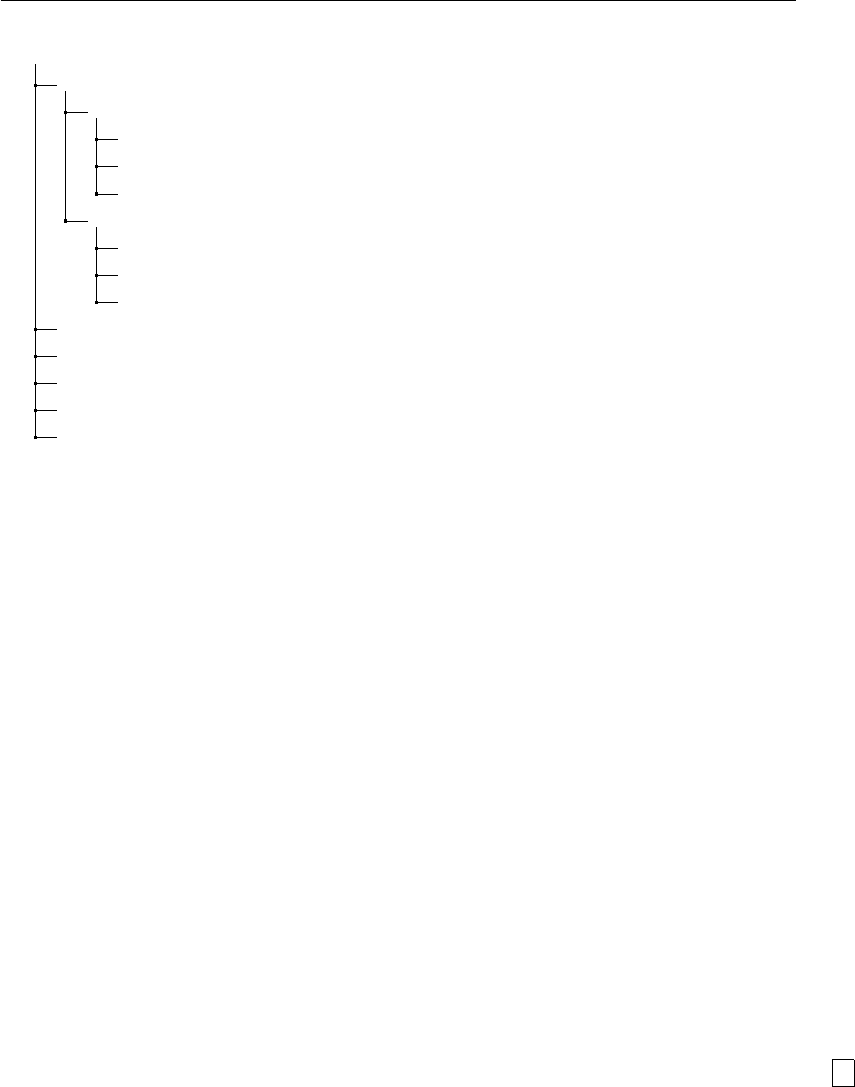

/project_space/Udall

subjects ............... All subject data collected in neuroimaging sessions

RC4101

session1.....................Subject-level processing happens here.

session2

session3

RC4103

session1

session2

session3

session1/RC4103..............Symbolic link to subjects/RC4103/session1.

session2/RC4101..............Symbolic link to subjects/RC4101/session2.

session2/RC4103..............................................and so on.

session3/RC4101

session3/RC4103

Figure 2.3: A longitudinal analysis directory.

RC4101 and RC4103. These are the directories that need to be in place. Note we

organize the actual files by subject ID and scan session. However, to make process-

ing at each cross-sectional point easier, we create directories with symbolic links to

the correct subject/session directories. This flexibility helps in many ways to make

cross-sectional and longitudinal analyses easier.

All simple subject-specific processing is well-organized within the subject/session

directories (for example, skull-stripping, possibly first level FEAT, DTI analysis, co-

registrations). We normally run FreeSurfer in a separate directory, because it likes

to put directories in a single place. Analyses that combine data across subjects or

timepoints (e.g. TBSS) best go in separate directories.

Figure 2.3 shows an example directory for a longitudinal study.

Creating the session-level makefiles

We will do all the subject-level processing from the PROJHOME/sessionN/ directories.

You will need to create a Makefile in PROJHOME/session1,PROJHOME/session2,

and PROJHOME/session3 whose only purpose is to run make within all the subject-

level directories beneath it. Figure 2.4 shows the example session-level makefile

(Udall/subjects/makefile_session.mk). ?#: the make

comment char-

acter.

This Makefile obtains the list of subjects using a wildcard (so it expects to be in

a directory where each subject has its own subdirectory). We will create symbolic

19

Setting up an Analysis Directory

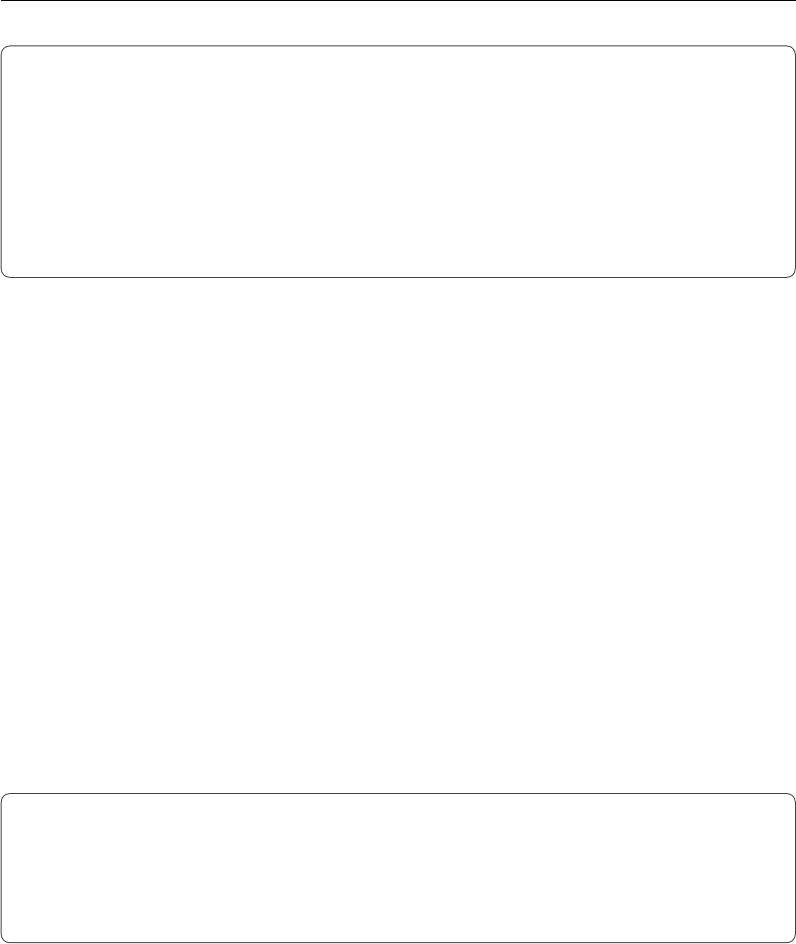

# Top level makefile

# make all will recursively make specified targets in each subject

directory.

SUBJECTS=$(wildcard RC4???)

.PHONY: all $(SUBJECTS)

all: $(SUBJECTS)

$(SUBJECTS):

$(MAKE) –directory=$@ $(TARGET)

Figure 2.4: Session-level Makefile

links to this Makefile later.

The name of the subject directory is declared to be a phony target, so even if it

exists, make will try to rebuild it. The recipe to do this is the very last line, which

calls make recursively within each subject directory. It also passes along a TARGET

variable. If unspecified, this would be the first target in the subject specific makefile.

Creating the common subject-level makefile for each session

As we described, the session-level makefile only exists to call make within all the sub-

directories (symbolic links) in each session. So, we need to create a makefile within

each subdirectory. However, we expect that the only thing that is different about

each session is session-specific processing, which can be controlled by a SESSION vari-

able. We can create a single subject-specific makefile and set up symbolic links to it

just like we intend to do with the session-level makefile. Figure 2.5 is an example of a

subject-level makefile that can be seen at Udall/subjects/makefile_subject.mk.

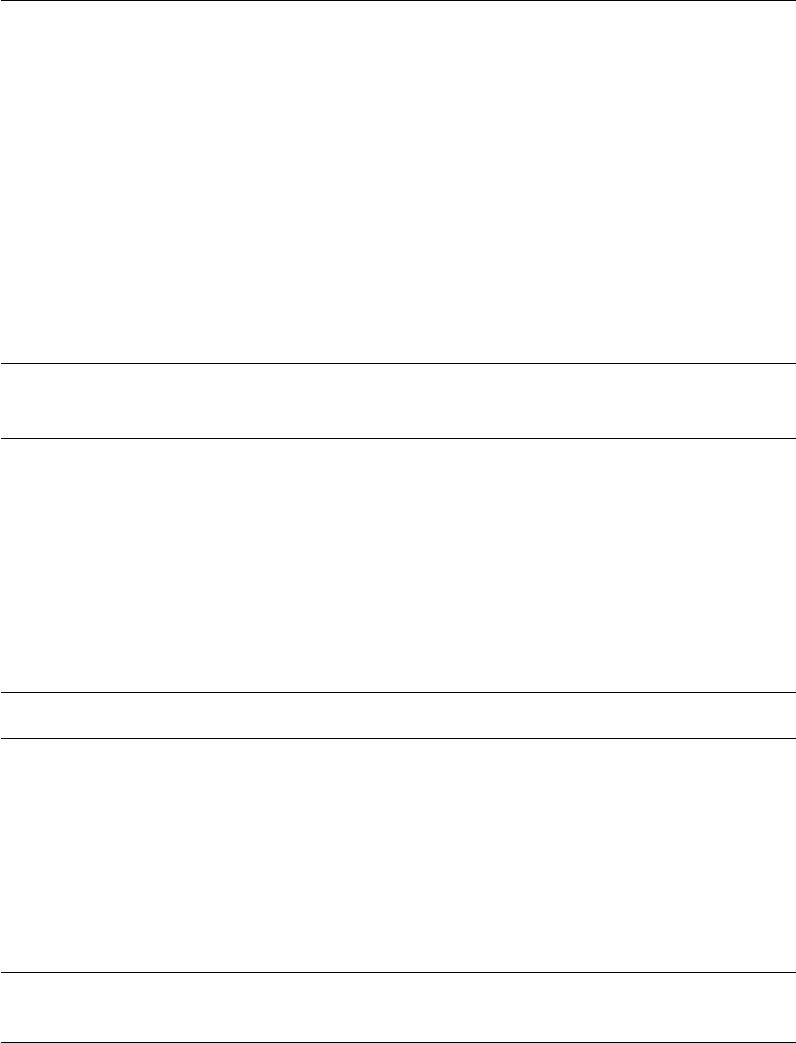

SESSION=$(shell pwd|egrep -o ’session[0-9]’|egrep -o ’[0-9]’)

subject=$(shell pwd|egrep -o ’RC4[0-9][0-9][0-9]’)

test:

@echo Testing that we are making $(subject) from session

$(SESSION)

Figure 2.5: Subject-level makefile

The subject-level makefile defines critical variables, such as the SESSION, which

it obtains from the directory path, and the subject variable, which it also obtains

from the directory path and a regular expression that matches the expected subject

name. To set these variables we use the program egrep, which allows us to extract

a specific pattern from the current working directory.

20

Setting up an Analysis Directory

The only rule is a little dummy rule (because we have no actual data in this test

directory) that ensures we have set these variables directly.

Creating links to the session-level makefile

Recall that the session-level makefile needs to be located within the directories

Udall/session1,Udall/session2 and Udall/session3. We could just copy it

there, but then if we modified it in one place we would have to remember to change

it everywhere. This would probably cause inconsistencies at some point.

Instead, we create a symbolic link to makefile_session.mk from each session

directory as follows:

cd $PROJHOME/Udall/session1

ln -s ../subjects/makefile_session.mk Makefile

Figure 2.6: Creating symbolic links to the session-level makefile

Creating links to subject-level makefile

The last step now is to create a symbolic link within each subject directory to the ap-

propriate subject-level makefile. For example, within the directory subjects/RC4101/session1/,

we can type

$ ln -s ../../makefile_subject.mk Makefile

Running analyses

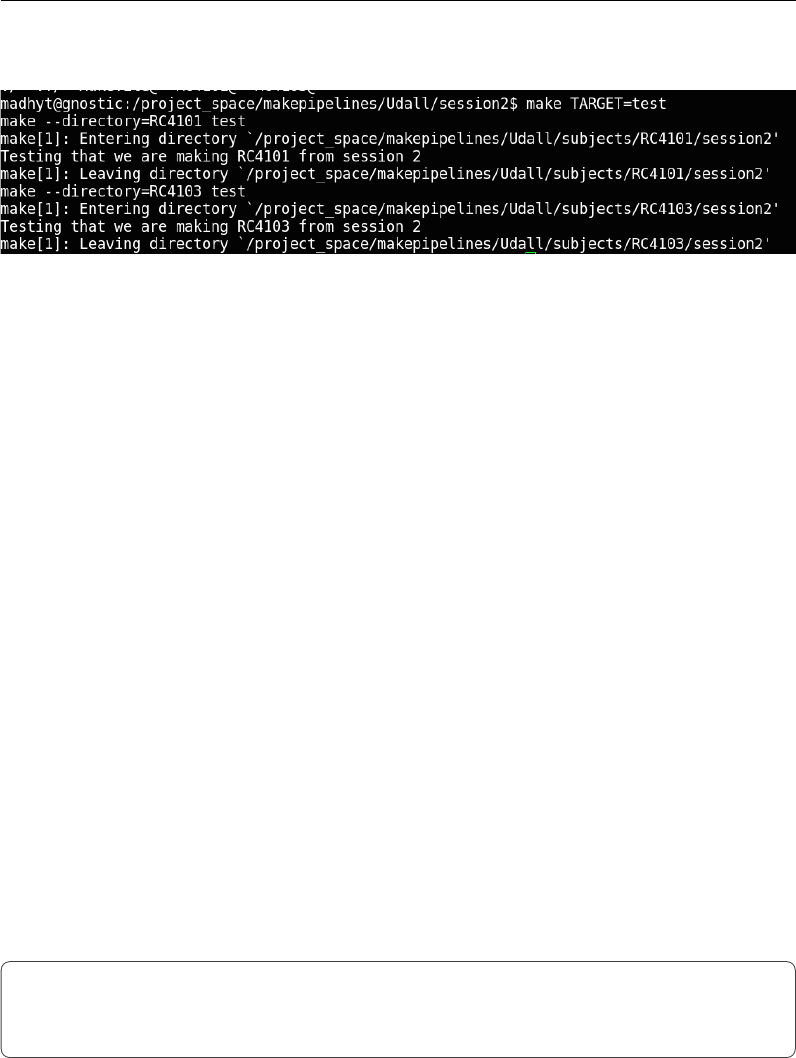

Once these steps are completed, you can conduct single-subject processing for each

session by changing directory to the correct location and issuing the specific make

command. Here, we illustrate how to make the “test” target for all subjects within

session2/.

cd $PROJHOME/Udall/session2

make TARGET=test

Figure 2.7: Making test

This should generate output similar to that shown in Figure 2.8, showing that

21

Setting Important Variables

make goes in to each of the subject directories and creates the test target.

Figure 2.8: Output of recursive make

Setting Important Variables

make uses variables to control a lot of aspects of its own behavior. We describe only

a few here; see the GNU Make manual for the full list. However, it also allows you to

set variables so that you can avoid unnecessary changes to makefiles when you move

them to different projects. This is very important, because after going through the

hassle of writing a makefile for an analysis once, we would like to reuse as much of

it as possible for subsequent studies. It is reasonable to change the recipes to reflect

the most appropriate scientific methods or new versions of software, but it’s not fun

to have to play with naming conventions, etc. We discuss some of the best practices

we have found for using variables to improve portability.

Variables that control make’s Behavior

SHELL

By default the shell used by make recipes is /bin/sh. This shell is one of many that

you can use interactively in Linux or MacOS, but it probably is not what you are

using interactively (because it lacks nice editing capabilities and is less usable than

other shells). Here, we set the default shell to /bin/bash, the same as what we use

interactively, so that we can be sure when we test something at the command line

that it will work similarly in a Makefile.

# Set the default shell

SHELL=/bin/bash

export SHELL

Figure 2.9: Setting $(SHELL)

22

Setting Important Variables

Note that a make variable is accessed within make by prefixing it with a $ sign

and surrounding it with parentheses. Therefore, SHELL is accessed within make as

$(SHELL).

TARGET

In the strategy that we outline for organizing makefiles and conducting subject-

level analyses, we run make recursively (i.e., within the subject directories) from the

session-level directory. To do this very generally, we call make from the session-level

by specifying the TARGET, or what it should “make” within the subject directory.

You do not need to do this within the subject directory itself. For example:

(in subjects/session1)

$ make TARGET=convert_dicoms

(in subjects/session1/s001)

$ make convert_dicoms

SUBJECTS

We think it makes life easier to set this variable to the list of subjects in the study

(or subjects for whom data has been collected). For example, given our directory

structure, when in the top level for a session, the subject identifiers can easily be

found with a wildcard on the directory names. The following statement sets the

variable SUBJECTS to be all the six-digit files in the current directory (i.e., all the

subject directories.)

SUBJECTS=$(wildcard [0-9][0-9][0-9][0-9][0-9][0-9])

subject

Often makefiles are intended to process a single subject. In this case, it is useful to

set a subject variable to be the subject identifier.

SESSION

When subject data is collected at multiple time points, it is useful to set a SESSION

variable that can be used to locate the correct subject files.

23

Setting Important Variables

Other important variables

Ultimately, when it comes time to publish, it is important to state what version of

the different software packages you have used. This means that you need to make

it difficult to accidentally run a job with a different version of the software. For

example, consider this rule to run DTIPrep, a program for eddy correction, motion

correction, and removal of noise from DTI images.

dtiprep/$(subject)_dwi_QCReport.txt: dtiprep$(subject)_dwi.nhdr

DTIPrep --DWINrrdFile $< -p dtiprep/default.xml --default

--check --outputFolder dtiprep/

Figure 2.10: Running DTIPrep in make

Unless you take preventative measures, the version of DTIPrep that is used de-

pends entirely on the caller’s path. So if the version of DTIPrep on one machine is

newer than that on another, results may differ. Alternatively, if my graduate student

runs this makefile, and happens to have the newest version of DTIPrep installed in

their own bin/ directory, that is the version that will be used. This is a big problem

for reproducibility.

A practical way to control for this is to specify the location of the program (if

that conveys version information) as in Figure 2.11.

DTIPREPHOME=/usr/local/DTIPrep_1.1.1_linux64/DTIPrep

dtiprep/$(subject)_dwi_QCReport.txt: dtiprep$(subject)_dwi.nhdr

$(DTIPREPHOME)/DTIPrep --DWINrrdFile $< -p

dtiprep/default.xml --default --check --outputFolder dtiprep/

Figure 2.11: Controlling software version

What about when the programs are installed in some default location, e.g. /usr/local/bin/,

with no version information? In our installation, this occurs frequently when using

other workflow scripts that express pipelines more complicated than what might

reasonably be put into a makefile.

In the case of simple scripts or statically linked programs, it is fairly easy to copy

them into a project-specific bin directory, giving them names that indicate their

versions. If you cannot do this, you need to check the version (if the program is kind

enough to provide an option that will provide the version) or to check the date that

the program was installed, to alert yourself to potential errors. It is useful to set

variables for things like reference brains, templates, and so forth.

24

Setting Important Variables

Variable overrides

It is probably a good idea not to edit a makefile too much once it works. But

sometimes, it is useful to reissue a command with different parameters. Target-

specific variables may be specified in the makefile and overridden on the command

line. In the example below, the default flags for FSL bet are specified as -f .4 -B

.

BETFLAGS = -f .4 -B

%skstrip.nii.gz: %flair.nii.gz

bet $< $@ $(BETFLAGS)

Figure 2.12: Specifying BET flags in make

However, these can be overriden from the command line as follows:

make BETFLAGS=’-f .4 -R’

Suggested targets

These suggestions come from experience building pipelines. Having conventions, so

that similarly named targets do similar kinds of things across different neuroimaging

workflows, is rather helpful and comforting, especially when you spend a lot of time

going between modalities and tools.

We propose splitting functions into multiple makefiles that can then be called

from a common makefile. It is helpful to avoid overruling target names for common

targets. See Processing Scan Data for a Single Test Subject for an example of how

this is done in practice.

all

This is the default, the first target in the file. Nothing in this target should

require human intervention.

help

We use a help system described by John Graham-Cumming, described in Ad-

vanced Topics & Quality Assurance with R Markdown, to document makefiles. You

can approach any makefile by typing make and get a list of documented targets and

their line numbers. From the programmer’s perspective, it is easy to add documen-

tation to a target, because the call to print help and the target are located right

next to each other.

25

Setting Important Variables

clean

Typically this target is used to remove all generated files (e.g., .o, dependency

lists) and clean up the directory to its original state so that one can type "make"

again and regenerate. So the idea is that “make clean” will clear the decks to allow

you to restart the pipeline from scratch. This is particularly useful if you have acci-

dentally altered some files and would like to make sure that you know exactly what

processing has occurred.

mostlyclean (or archive)

This is the same as clean, but does not remove things that are a pain to recreate

(e.g., involve hand checking, or time-consuming analysis) or are critical results for

publication. We use this because we are perpetually short of disk space, and this

helps to clean up.

.PHONY

.PHONY (the period in front is necessary) is a special target used to tell make which

targets are not real files. For example, common targets are all (to make everything)

and clean (to remove everything). If you create a file named “all” or “clean” in the

directory with the makefile, suddenly make will see that the target file “all” exists,

and will not do anything if it is newer than its dependencies.

To stop this rather unexpected behavior, list targets that are not real files as

.PHONY:

.PHONY: all clean anything_else_that_is_not_a_file

.SECONDARY

This target is used to define files that are created as intermediate products by implicit

rules, but that you don’t want deleted. This is critically important - perhaps a good

philosophy is to define here all the files that are a pain to recreate. See Overview of

make for a lesson on secondary targets.

.INTERMEDIATE

This target allows you to specify files that can be automatically deleted after the final

targets are created. For example, during resting state preprocessing (Preprocessing

Resting State Data) you create many intermediate files during the process (e.g., the

output of motion correction, despiking). These are useful to check for QA purposes

26

Setting Important Variables

but in the end you may not want to keep them. Specifying them as intermediate

targets will delete them after completion of the pipeline.

If you specify targets as intermediate, but you leave .SECONDARY blank, interme-

diates are treated as secondary and are not deleted automatically. However, if you

delete them (e.g., in a clean target), they will not be recreated when you run make

again so long as the targets depending on them already exist.

27

Chapter 3

Running make in Parallel

Although it is very powerful to be able to use makefiles to launch jobs in parallel,

some care needs to be taken when writing the makefile, as with any parallel program,

to ensure that simultaneously running jobs do not conflict with each other. In

general, it is good form to follow these rules for all makefiles, because it seems

highly likely that if you ignore them, there will come a deadline, and you will think

to yourself “I have eight cores and only a few days” and you will run make in parallel,

and all of your results will be subtly corrupted due to your lack of forethought,

something you won’t discover until you only have a few hours left. This is the way

of computers.

Guidelines for Writing Parallelizable Makefiles

There are a few key things to remember when setting running make in parallel.

Each line in a recipe is, by default, executed in its own shell

This means that any variables you set in one line won’t be “remembered” by the

next line, and so on. The best thing to do is to put all lines of a recipe on the same

line, by ending each of them with a semicolon and a backslash (;\). Similarly, when

debugging a recipe in a parallel makefile gone wrong, look first for a situation where

you have forgotten to put all lines of a recipe on the same line. For example, the

recipe shown in Figure 3.1 will not work as intended, not matter what, because the

first line of this recipe is not remembered by the second, and brain.volume.txt

will be empty.

Instead, write the script as shown in Figure 3.2. Note the ;\ in red connects the

two lines.

28

Guidelines for Writing Parallelizable Makefiles

set foo=`fslmaths brain.nii.gz -V | awk ’print $$2’`

cat $$foo > brain.volume.txt

Figure 3.1: A non-functional multi-line recipe

set foo=`fslmaths brain.nii.gz -V | awk ’print $$2’` ;\

cat $$foo > brain.volume.txt

Figure 3.2: A now-functional multi-line recipe

You can also use &&, a bash operator that executes the next command only if

the previous command was successful1. For example:

set foo=`fslmaths brain.nii.gz -V | awk ’print $$2’` &&\

cat $$foo > brain.volume.txt

Figure 3.3: A multi-line recipe using “&&\”

In this instance, bash will not attempt to cat the file if fslmaths or awk failed.

Filenames must be unique

It is very tempting to do something like the following, or the previous example, as

you would while interactively using a shell script.

some_program > foo.out ;\

do something --now --with foo.out

Figure 3.4: How not to name files

This will work great sequentially; first foo.out is created and then it is used.

You have attended to rule #1 and the commands will be executed together in one

shell. But consider what happens when four processors run this recipe independently,

in the same directory. Whichever recipe completes first will overwrite foo.out. This

could happen at any time, so it is entirely possible that process A writes foo.out

just in time for process B to use it. Meanwhile, process C can come along and rewrite

it again. You see the point. The best way to avoid this problem, no matter how

1In other words, has exited with an exit status ($?) of “0.”

29

Guidelines for Writing Parallelizable Makefiles

the makefile is used, is to always create temporary files (like foo.out) that include

a unique identifier. Alternately, you could use a subject identifier to form the name.

For longitudinal analysis we play it safe and often use a timepoint identifier as well.

Thus, whether you conduct your analysis using one subdirectory per subject or in

a single directory for the entire analysis, you can take the recipe you have written

and use it without modification. Another approach is to create unique temporary

names using the mktemp program and delete them when you are finished.

Many neuroimaging software packages use the convention that files within a

subject-specific directory that contains a subject identifier can have the same names.

In that case, just go with it: They have done that in part to avoid this confusion

while running the software in parallel on a shared filesystem.

Separate time-consuming tasks

Try to separate expensive tasks into seperate recipes. Suppose you have two time-

consuming steps: run_a and run_b.run_a takes half an hour to generate a.out and

run_b takes an hour to generate b.out. Additionally, run_a must complete before

the other can begin. Examine the rule in Figure 3.5.

a.out b.out: b.in

run_a ;\

run_b

Figure 3.5: The wrong way to run two long tasks

This sort of works, but suppose another task only needs a.out, and doesn’t

depend at all on b.out. You would spend a lot of extra time generating b.out,

especially if this was a batch job. A worse problem is that you have specified two

targets, a.out and b.out. This is like reproducing this rule twice. If you run in

parallel this rule will fire twice, once to create a.out and once to createb.out, and

you will spend twice as much effort as you need to (but in parallel). So it is better

for many reasons to write the rule in two separate lines, as in Figure 3.6.

a.out: b.in

run a

b.out: a.out

run_b

Figure 3.6: The right way to run two long tasks

30

Executing in Parallel

Executing in Parallel

Using multiple cores

Most modern computers have multiple processing elements (cores). You can use

these to execute multiple jobs simultaneously by passing the -j option to make. You

can either specify the number of jobs after -j or you can omit the argument and let

it use the maximum number of cores available.

It is good to know the number of cores on your machine. The command nproc

might work, or you can ask your system administrator.

You have to be careful not to start a lot more jobs than you have cores, other

wise the performance of all the jobs will suffer. Let us assume there are four cores

available. Pass the number of jobs to make.

$ make -j 4

Now you will execute the four cores simultaneously. This will work well if no one

else is using your machine to run jobs. For this reason, you may want to specify that

make should use fewer cores than available so that you can still get good response

time on the machine.

Recall that make will die when it encounters an error. When running jobs in

parallel, make can encounter an error that much faster. If one job dies, all the rest

will be stopped. This is rarely the behavior you want, because typically each job is

independent of the others. To tell make not to give up when the going gets tough,

use the -k (keep going!) flag.

$ make -j 4 -k

Using the grid engine

Four cores (or even eight or 12 or 24) is nice, but a cluster is even nicer. A cluster

gangs together several multi-core machines to act like one. At IBIC, our cluster

environment is the Sun Grid Engine (SGE) which is a batch queuing system. This

software layer allows you to submit jobs, queue them up, and farm them out to one

of the many machines in the cluster. Different sites may be configured differently,

so check with your system administrator. !In IBIC, you

can submit jobs

from any ma-

chine in a grid

engine. In IBIC,

pole and pons

are easy to type.

31

Using the grid engine

Setting FSLPARALLEL for FSL jobs

There are two ways to use make to run jobs in parallel on the SGE. The first is to

use the scripts for parallelism that are built in to FSL. In our configuration, all you

need to do is set the environment variable FSLPARALLEL from your shell as follows:

$ export FSLPARALLEL=true

This must be done before running make! Then, you run your makefile as you

would on a single machine, on a machine that is allowed to submit jobs to the SGE

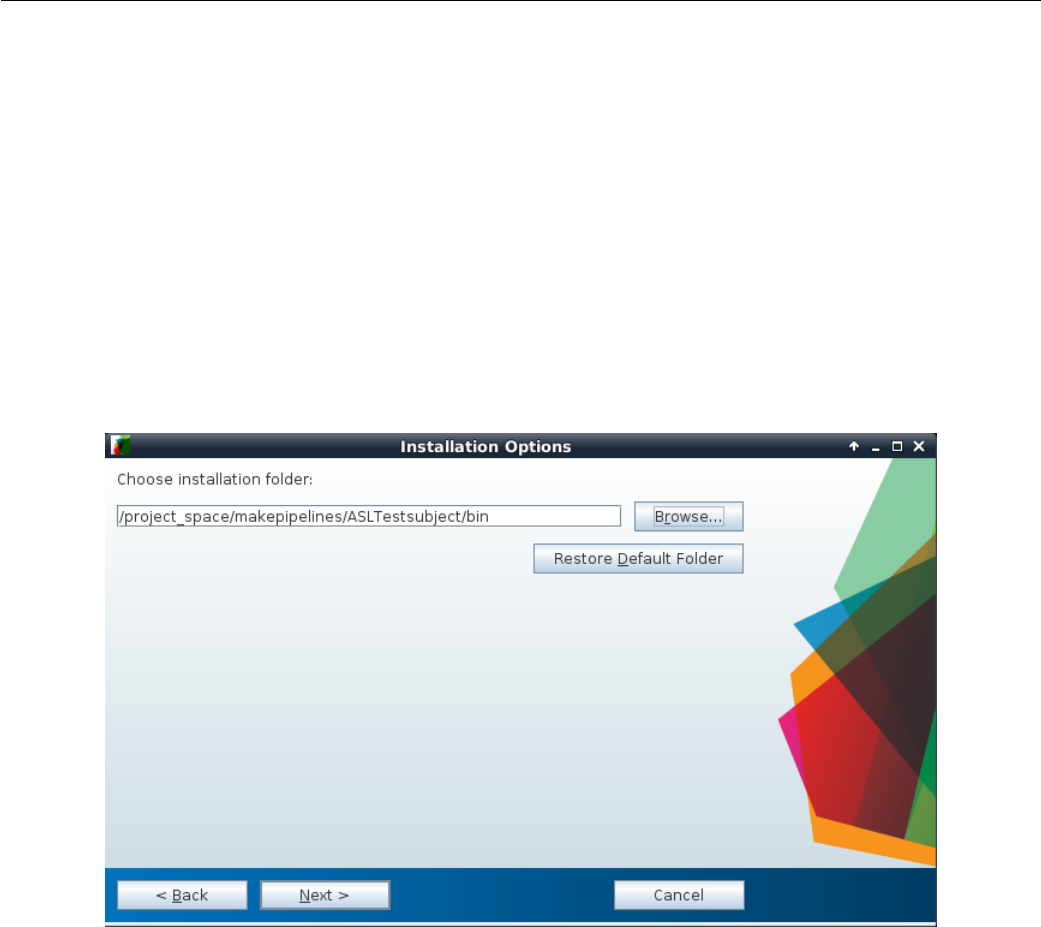

(check with your system administrator to find out what this is). What will happen

is that the FSL tools will see that this flag is set, and use the script fsl_sub to

break up the jobs and submit them to the SGE. You do not need to set the -j flag

as above, because FSL will control its own job submission and scheduling.

Note that this trick will only work if you are using primarily FSL tools that are

written to be parallel. What happens if you want to use something like bet on 900

brains (which is not parallelized), or other tools that are not from FSL?

Using qmake

By using qmake, a program that interacts with the SGE, you can automatically

parallelize jobs that are started by a makefile. This is a useful way to structure

your workflows, because you can run the same neuroimaging code on a single core,

a multicore, and the SGE simply by changing your command line. You may need

to discuss specifics of environment variables that need to be set to run qmake with

your system administrator. If you are using make in parallel, you also will probably

want to turn off FSLPARALLEL if you have enabled it by default.

There are two ways that you can execute qmake, giving you a lot of flexibility.

The first is by submitting jobs dynamically, so that each one goes into the job queue

just like a mini shell script. To do this, type

$ qmake -cwd -V -- -j 20 all

The flags that appear before the “--” are flags to qmake,and control grid

engine parameters. The -cwd flag means to start grid engine jobs from the current

directory (useful!) and -V tells it to pass all your environment variables along. If

you forget the -V, we promise you that very bad things will happen. For example,

FSL will crash because it can’t find its shared libraries. Many programs will “not

be found” because your path is not set correctly. Your jobs will crash, and that

earthquake will kill all of us.

32

Using the grid engine

On the opposite side of the “--” are flags to make.By default, just like

normal make, this will start exactly one job at a time. This is not very useful! You

probably want to specify how much parallelism you want by using the -j flag to

make (how many jobs to start at any one time). The above example runs 20 jobs

simultaneously. The last argument, all, is a target for make that is dependent upon

the particular makefile used.

One drawback of executing jobs dynamically is that make might never get enough

computer resources to finish. For this reason, there is also a parallel environment

for make that reserves some number of processors for the make process and then

manages those itself. You can specify your needs for this environment by typing

$ qmake -cwd -V -pe make 1-10 -- freesurfer

This command uses the -pe to specify the parallel environment called make and

reserves 10 nodes in this environment. The argument to make is freesurfer in this

example. Note that we do not use this environment in IBIC.

How long will everything take?

A good thing to do is to estimate how long your entire job will take by running make

on a single subject and measuring the “wall clock” time, or the time that it takes

between when you start running it and when it finishes. If you will be going home

for the night, add a command to print the system date (date) as the last line in

the recipe, or look at the timestamp of the last file created. Suppose one subject

takes 12 hours. Probably other subjects will take, on average, the same amount of

time. So you multiply the number of subjects by 12 hours, and divide by 24 to get

days. For 100 subjects, this job would take 50 days. This calculation tells you that

it would be a long time to wait for your results on your four-core workstation (and

in the meantime, it would be hard to do much else).