MOSES

Statistical

Machine Translation

System

User Manual and Code Guide

Philipp Koehn

pkoehn@inf.ed.ac.uk

University of Edinburgh

Abstract

This document serves as a user manual and code guide for the Moses machine translation decoder. The decoder was mainly developed by Hieu Hoang and Philipp Koehn at the University of Edinburgh, extended during a Johns Hopkins University Summer Workshop, and further developed under EuroMatrix and GALE project funding. The decoder (which is part of a complete statistical machine translation toolkit) is the de facto benchmark for research in the field.

This document serves two purposes: it is a user manual for the functions of the Moses decoder and a code guide for developers. In large parts, this manual is identical to documentation available at the official Moses decoder web site http://www.statmt.org/. This document does not describe the underlying methods in depth; these are described in the textbook Statistical Machine Translation (Philipp Koehn, Cambridge University Press, 2009).

February 3, 2018

Acknowledgments

The Moses decoder was supported by the European Framework 6 projects EuroMatrix, TC-Star, the European Framework 7 projects EuroMatrixPlus, Let’s MT, META-NET and MosesCore and the DARPA GALE project, as well as several universities such as the University of Edinburgh, the University of Maryland, ITC-irst, Massachusetts Institute of Technology, and others. Contributors are too many to mention, but it is important to stress the substantial contributions from Hieu Hoang, Chris Dyer, Josh Schroeder, Marcello Federico, Richard Zens, and Wade Shen. Moses is an open source project under the guidance of Philipp Koehn.

Contents

1 Introduction

1.1 Welcome to Moses!

1.2 Overview

1.2.1 Technology

1.2.2 Components

1.2.3 Development

1.2.4 Moses in Use

1.2.5 History

1.3 Get Involved

1.3.1 Mailing List

1.3.2 Suggestions

1.3.3 Development

1.3.4 Use

1.3.5 Contribute

1.3.6 Projects

2 Installation

2.1 Getting Started with Moses

2.1.1 Easy Setup on Ubuntu (on other linux systems, you’ll need to install packages that provide gcc, make, git, automake, libtool)

2.1.2 Compiling Moses directly with bjam

2.1.3 Other software to install

2.1.4 Platforms

2.1.5 OSX Installation

2.1.6 Linux Installation

2.1.7 Windows Installation

2.1.8 Run Moses for the first time

2.1.9 Chart Decoder

2.1.10 Next Steps

2.1.11 bjam options

2.2 Building with Eclipse

2.3 Baseline System

2.3.1 Overview

2.3.2 Installation

2.3.3 Corpus Preparation

2.3.4 Language Model Training

2.3.5 Training the Translation System

2.3.6 Tuning

2.3.7 Testing

2.3.8 Experiment Management System (EMS)

2.4 Releases

2.4.1 Release 4.0 (5th Oct, 2017)

2.4.2 Release 3.0 (3rd Feb, 2015)

2.4.3 Release 2.1.1 (3rd March, 2014)

2.4.4 Release 2.1 (21th Jan, 2014)

2.4.5 Release 1.0 (28th Jan, 2013)

2.4.6 Release 0.91 (12th October, 2012)

2.4.7 Status 11th July, 2012

2.4.8 Status 13th August, 2010

2.4.9 Status 9th August, 2010

2.4.10 Status 26th April, 2010

2.4.11 Status 1st April, 2010

2.4.12 Status 26th March, 2010

2.5 Work in Progress

3 Tutorials 57

3.1 Phrase-basedTutorial .................................. 57

3.1.1 A Simple Translation Model . . . . . . . . . . . . . . . . . . . . . . . . . . 57

3.1.2 RunningtheDecoder .............................. 58

3.1.3 Trace........................................ 59

3.1.4 Verbose ...................................... 60

3.1.5 TuningforQuality................................ 64

3.1.6 TuningforSpeed................................. 65

3.1.7 Limit on Distortion (Reordering) . . . . . . . . . . . . . . . . . . . . . . . . 68

3.2 Tutorial for Using Factored Models . . . . . . . . . . . . . . . . . . . . . . . . . . 69

3.2.1 Train an unfactored model . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

3.2.2 Train a model with POS tags . . . . . . . . . . . . . . . . . . . . . . . . . . 71

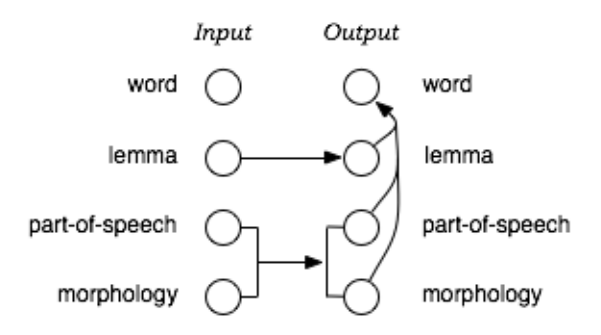

3.2.3 Train a model with generation and translation steps . . . . . . . . . . . . 74

3.2.4 Train a morphological analysis and generation model . . . . . . . . . . . 75

3.2.5 Train a model with multiple decoding paths . . . . . . . . . . . . . . . . . 76

3.3 SyntaxTutorial ...................................... 77

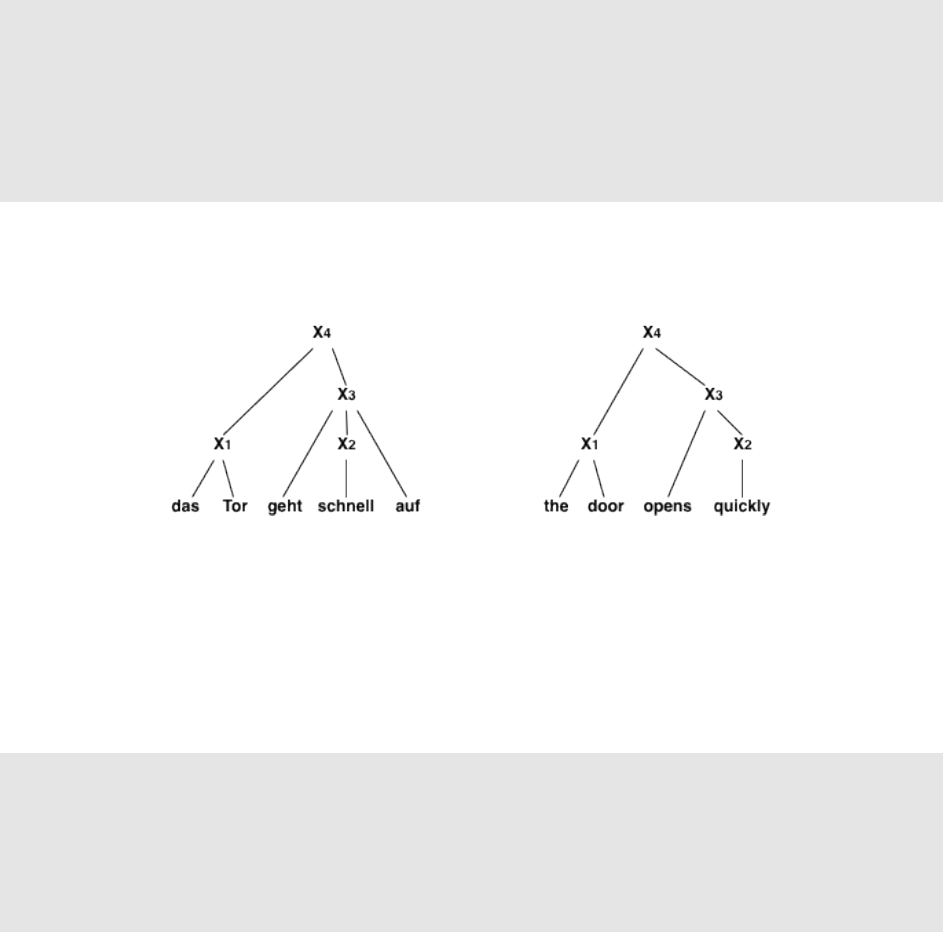

3.3.1 Tree-BasedModels................................ 77

3.3.2 Decoding ..................................... 79

3.3.3 DecoderParameters............................... 83

3.3.4 Training...................................... 85

3.3.5 Using Meta-symbols in Non-terminal Symbols (e.g., CCG) . . . . . . . . . 88

3.3.6 Different Kinds of Syntax Models . . . . . . . . . . . . . . . . . . . . . . . 89

3.3.7 Format of text rule table . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 94

3.4 OptimizingMoses .................................... 95

3.4.1 Multi-threaded Moses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

3.4.2 How much memory do I need during decoding? . . . . . . . . . . . . . . 96

3.4.3 How little memory can I get away with during decoding? . . . . . . . . . 98

3.4.4 FasterTraining.................................. 98

3.4.5 TrainingSummary................................100

3.4.6 LanguageModel.................................101

CONTENTS 5

3.4.7 Suffixarray....................................103

3.4.8 CubePruning...................................104

3.4.9 Minimizing memory during training . . . . . . . . . . . . . . . . . . . . . 104

3.4.10 Minimizing memory during decoding . . . . . . . . . . . . . . . . . . . . 104

3.4.11 Phrase-tabletypes................................106

3.5 Experiment Management System . . . . . . . . . . . . . . . . . . . . . . . . . . . . 107

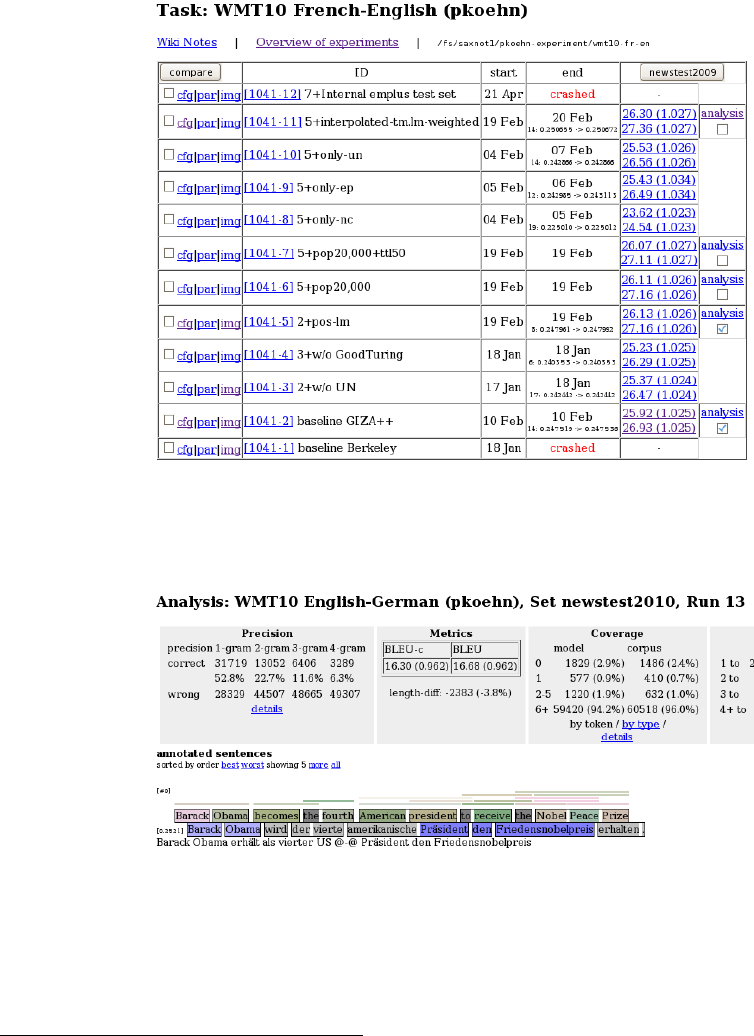

3.5.1 Introduction ...................................107

3.5.2 Requirements...................................108

3.5.3 QuickStart ....................................109

3.5.4 MoreExamples..................................112

3.5.5 Try a Few More Things . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 116

3.5.6 AShortManual .................................120

3.5.7 Analysis......................................128

4 User Guide 133

4.1 SupportTools.......................................133

4.1.1 Overview.....................................133

4.1.2 Converting Pharaoh configuration files to Moses configuration files . . . 133

4.1.3 Moses decoder in parallel . . . . . . . . . . . . . . . . . . . . . . . . . . . . 133

4.1.4 Filtering phrase tables for Moses . . . . . . . . . . . . . . . . . . . . . . . . 134

4.1.5 Reducing and Extending the Number of Factors . . . . . . . . . . . . . . . 134

4.1.6 Scoring translations with BLEU . . . . . . . . . . . . . . . . . . . . . . . . 135

4.1.7 Missing and Extra N-Grams . . . . . . . . . . . . . . . . . . . . . . . . . . 135

4.1.8 Making a Full Local Clone of Moses Model + ini File . . . . . . . . . . . . 135

4.1.9 Absolutizing Paths in moses.ini . . . . . . . . . . . . . . . . . . . . . . . . 136

4.1.10 Printing Statistics about Model Components . . . . . . . . . . . . . . . . . 136

4.1.11 Recaser ......................................137

4.1.12 Truecaser .....................................137

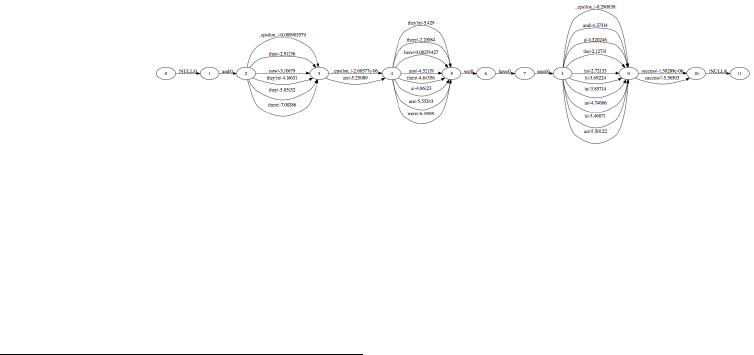

4.1.13 SearchgraphtoDOT...............................138

4.1.14 Threshold Pruning of Phrase Table . . . . . . . . . . . . . . . . . . . . . . 139

4.2 ExternalTools.......................................140

4.2.1 Word Alignment Tools . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 140

4.2.2 EvaluationMetrics................................143

4.2.3 Part-of-Speech Taggers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 144

4.2.4 SyntacticParsers.................................145

4.2.5 Other Open Source Machine Translation Systems . . . . . . . . . . . . . . 147

4.2.6 Other Translation Tools . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 148

4.3 UserDocumentation...................................149

4.4 AdvancedModels ....................................150

4.4.1 Lexicalized Reordering Models . . . . . . . . . . . . . . . . . . . . . . . . 150

4.4.2 Operation Sequence Model (OSM) . . . . . . . . . . . . . . . . . . . . . . . 153

4.4.3 Class-basedModels ...............................156

4.4.4 Multiple Translation Tables and Back-off Models . . . . . . . . . . . . . . 157

4.4.5 Global Lexicon Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 161

4.4.6 Desegmentation Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162

4.4.7 Advanced Language Models . . . . . . . . . . . . . . . . . . . . . . . . . . 163

4.5 Efficient Phrase and Rule Storage . . . . . . . . . . . . . . . . . . . . . . . . . . . . 163

4.5.1 Binary Phrase Tables with On-demand Loading . . . . . . . . . . . . . . . 163

4.5.2 Compact Phrase Table

4.5.3 Compact Lexical Reordering Table

4.5.4 Pruning the Translation Table

4.5.5 Pruning the Phrase Table based on Relative Entropy

4.5.6 Pruning Rules based on Low Scores

4.6 Search

4.6.1 Contents

4.6.2 Generating n-Best Lists

4.6.3 Minimum Bayes Risk Decoding

4.6.4 Lattice MBR and Consensus Decoding

4.6.5 Output Search Graph

4.6.6 Early Discarding of Hypotheses

4.6.7 Maintaining stack diversity

4.6.8 Cube Pruning

4.7 OOVs

4.7.1 Contents

4.7.2 Handling Unknown Words

4.7.3 Unsupervised Transliteration Model

4.8 Hybrid Translation

4.8.1 Contents

4.8.2 XML Markup

4.8.3 Specifying Reordering Constraints

4.8.4 Fuzzy Match Rule Table for Hierachical Models

4.8.5 Placeholder

4.9 Moses as a Service

4.9.1 Contents

4.9.2 Moses Server

4.9.3 Open Machine Translation Core (OMTC) - A proposed machine translation system standard

4.10 Incremental Training

4.10.1 Contents

4.10.2 Introduction

4.10.3 Initial Training

4.10.4 Virtual Phrase Tables Based on Sampling Word-aligned Bitexts

4.10.5 Updates

4.10.6 Phrase Table Features for PhraseDictionaryBitextSampling

4.10.7 Suffix Arrays for Hierarchical Models

4.11 Domain Adaptation

4.11.1 Contents

4.11.2 Translation Model Combination

4.11.3 OSM Model Combination (Interpolated OSM)

4.11.4 Online Translation Model Combination (Multimodel phrase table type)

4.11.5 Alternate Weight Settings

4.11.6 Modified Moore-Lewis Filtering

4.12 Constrained Decoding

4.12.1 Contents

4.12.2 Constrained Decoding

4.13 Cache-based Models

4.13.1 Contents

4.13.2 Dynamic Cache-Based Phrase Table

4.13.3 Dynamic Cache-Based Language Model

4.14 Pipeline Creation Language (PCL)

4.15 Obsolete Features

4.15.1 Binary Phrase table

4.15.2 Word-to-word alignment

4.15.3 Binary Reordering Tables with On-demand Loading

4.15.4 Continue Partial Translation

4.15.5 Distributed Language Model

4.15.6 Using Multiple Translation Systems in the Same Server

4.16 Sparse Features

4.16.1 Word Translation Features

4.16.2 Phrase Length Features

4.16.3 Domain Features

4.16.4 Count Bin Features

4.16.5 Bigram Features

4.16.6 Soft Matching Features

4.17 Translating Web pages with Moses

4.17.1 Introduction

4.17.2 Detailed setup instructions

5 Training Manual 241

5.1 Training ..........................................241

5.1.1 Trainingprocess .................................241

5.1.2 Running the training script . . . . . . . . . . . . . . . . . . . . . . . . . . . 242

5.2 PreparingTrainingData.................................242

5.2.1 Training data for factored models . . . . . . . . . . . . . . . . . . . . . . . 243

5.2.2 Cleaningthecorpus...............................243

5.3 FactoredTraining.....................................244

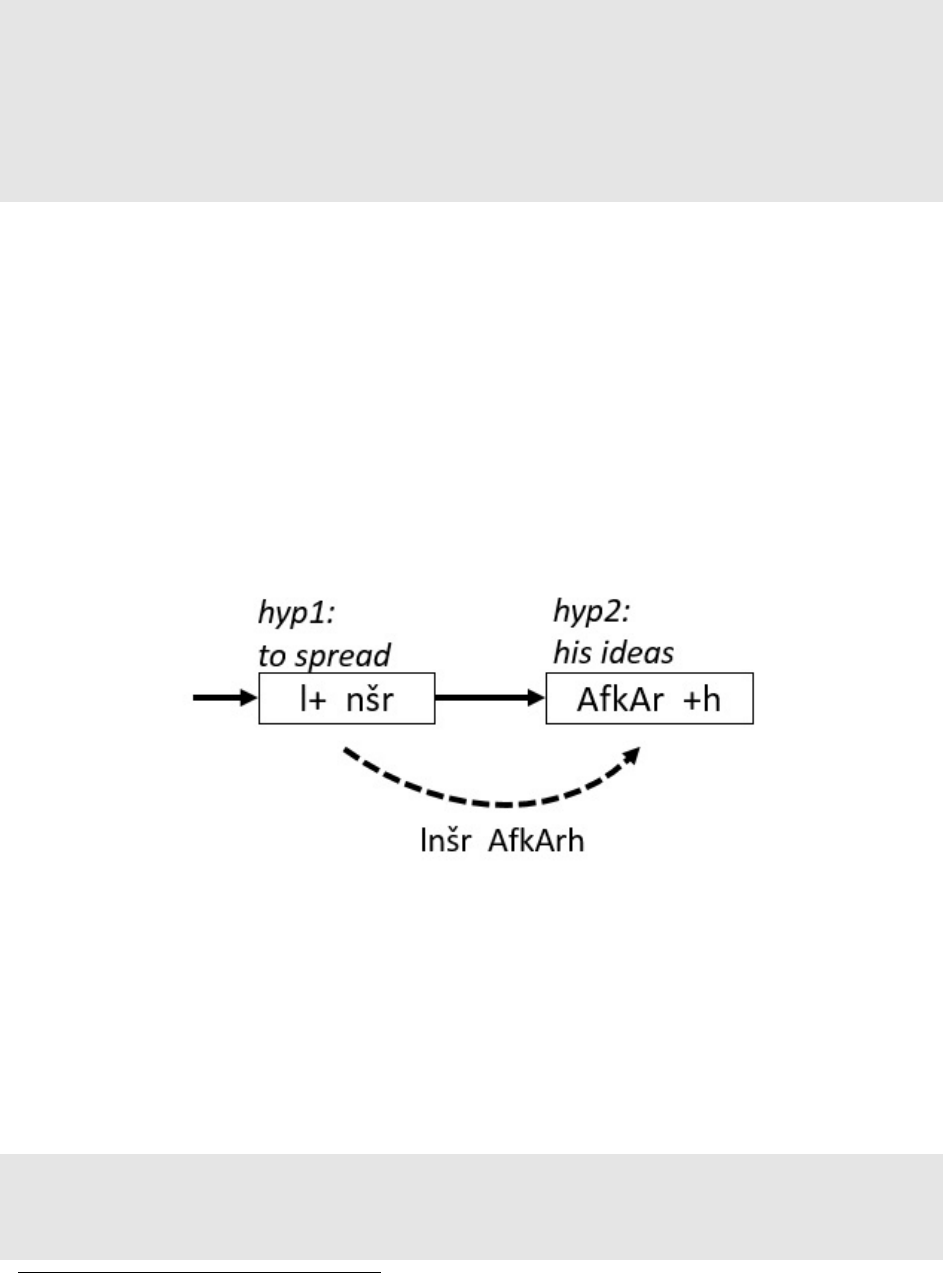

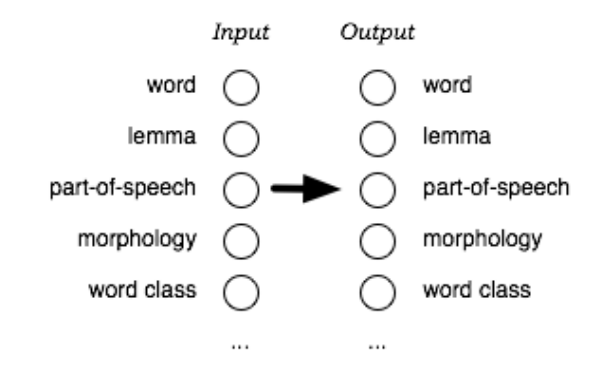

5.3.1 Translationfactors................................244

5.3.2 Reorderingfactors................................245

5.3.3 Generationfactors................................245

5.3.4 Decodingsteps..................................245

5.4 Training Step 1: Prepare Data . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 245

5.5 Training Step 2: Run GIZA++ . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 247

5.5.1 Training on really large corpora . . . . . . . . . . . . . . . . . . . . . . . . 248

5.5.2 Traininginparallel................................248

5.6 Training Step 3: Align Words . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 249

5.7 Training Step 4: Get Lexical Translation Table . . . . . . . . . . . . . . . . . . . . 252

5.8 Training Step 5: Extract Phrases . . . . . . . . . . . . . . . . . . . . . . . . . . . . 252

5.9 Training Step 6: Score Phrases . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 253

5.10 Training Step 7: Build reordering model . . . . . . . . . . . . . . . . . . . . . . . . 256

5.11 Training Step 8: Build generation model . . . . . . . . . . . . . . . . . . . . . . . . 258

5.12 Training Step 9: Create Configuration File . . . . . . . . . . . . . . . . . . . . . . . 258

5.13 Building a Language Model . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 258

5.13.1 Language Models in Moses . . . . . . . . . . . . . . . . . . . . . . . . . . . 258

5.13.2 Enabling the LM OOV Feature . . . . . . . . . . . . . . . . . . . . . . . . . 260

8CONTENTS

5.13.3 Building a LM with the SRILM Toolkit . . . . . . . . . . . . . . . . . . . . 260

5.13.4 On the IRSTLM Toolkit . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 260

5.13.5 RandLM......................................265

5.13.6 KenLM ......................................269

5.13.7 OxLM.......................................273

5.13.8 NPLM.......................................273

5.13.9 BilingualNeuralLM...............................275

5.13.10 Bilingual N-gram LM (OSM) . . . . . . . . . . . . . . . . . . . . . . . . . . 276

5.13.11 Dependency Language Model (RDLM) . . . . . . . . . . . . . . . . . . . . 278

5.14 Tuning...........................................279

5.14.1 Overview.....................................279

5.14.2 Batch tuning algorithms . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 279

5.14.3 Online tuning algorithms . . . . . . . . . . . . . . . . . . . . . . . . . . . . 280

5.14.4 Metrics ......................................281

5.14.5 TuninginPractice ................................281

6 Background 285

6.1 Background........................................285

6.1.1 Model.......................................286

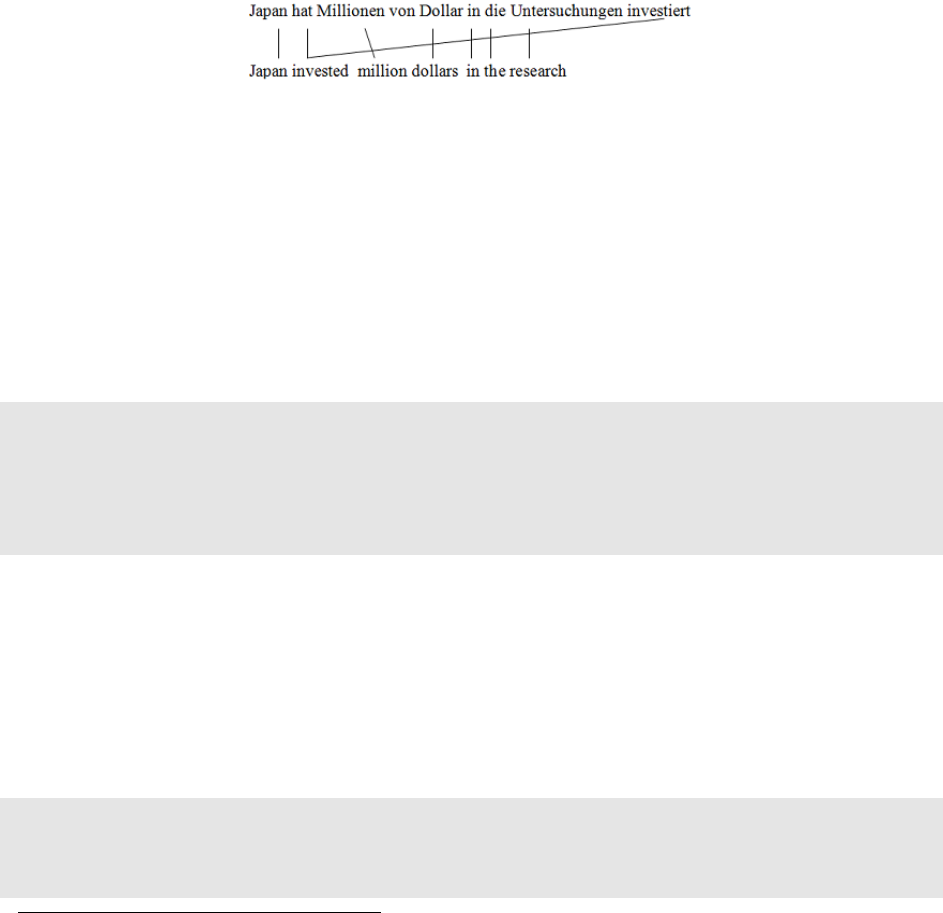

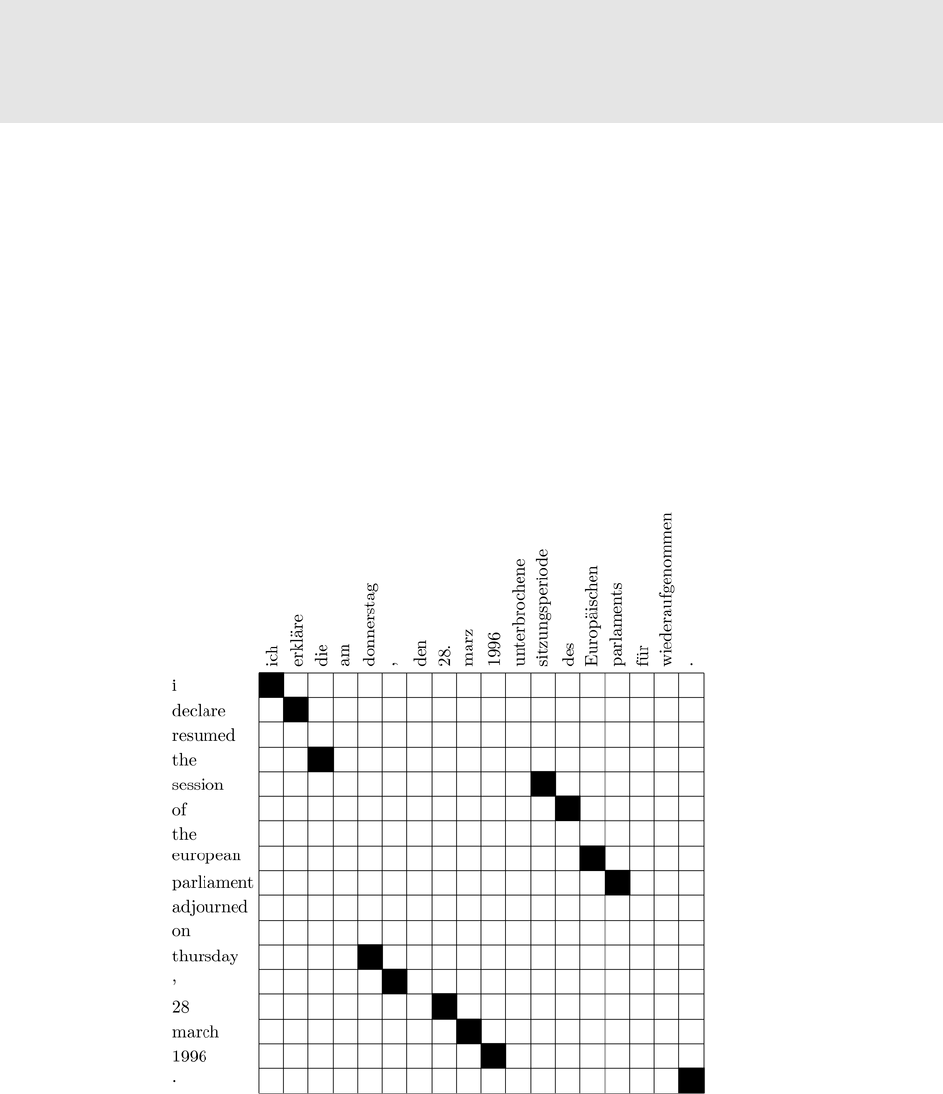

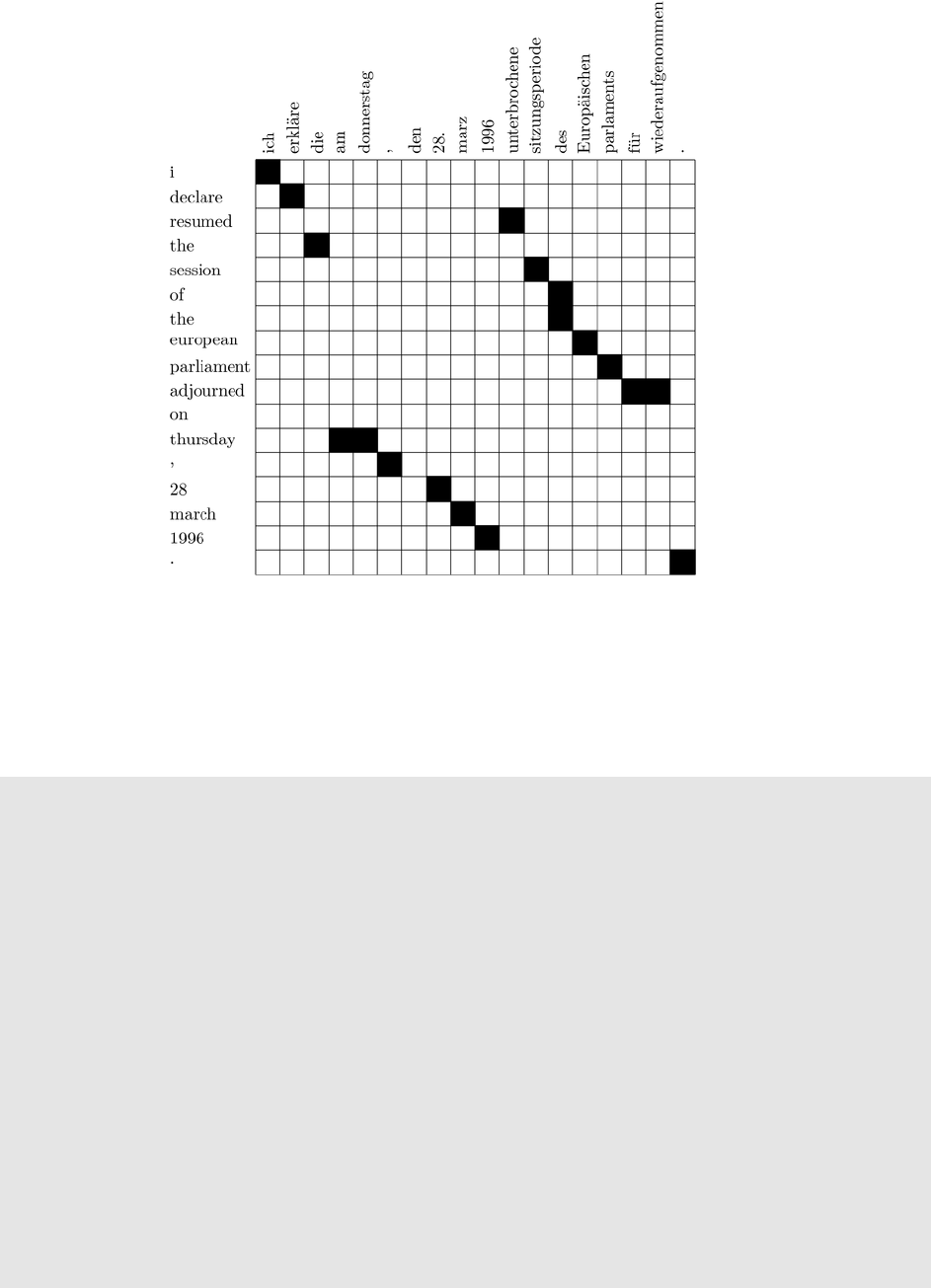

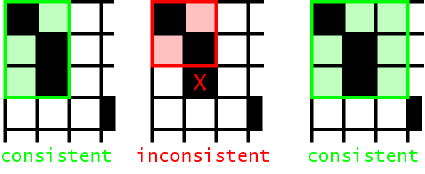

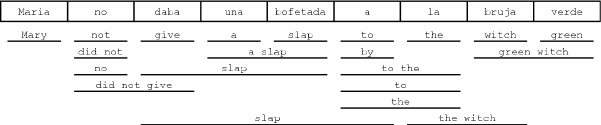

6.1.2 WordAlignment.................................287

6.1.3 Methods for Learning Phrase Translations . . . . . . . . . . . . . . . . . . 288

6.1.4 OchandNey...................................288

6.2 Decoder ..........................................291

6.2.1 TranslationOptions ...............................291

6.2.2 CoreAlgorithm .................................292

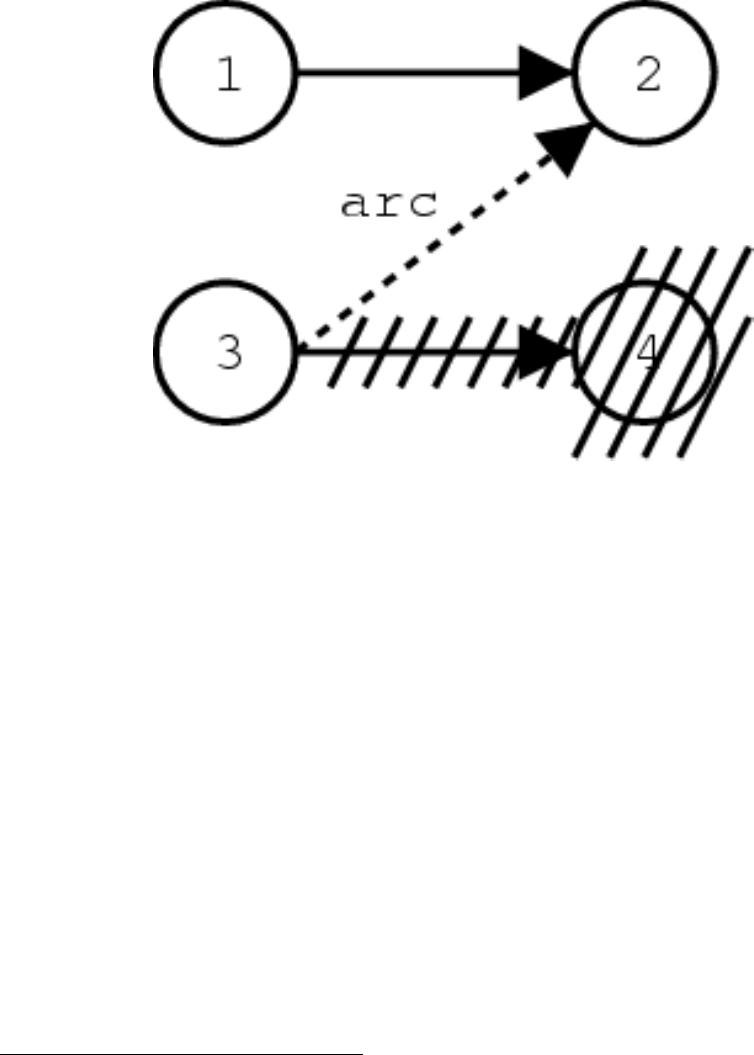

6.2.3 Recombining Hypotheses . . . . . . . . . . . . . . . . . . . . . . . . . . . . 293

6.2.4 BeamSearch ...................................293

6.2.5 Future Cost Estimation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 295

6.2.6 N-Best Lists Generation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 296

6.3 Factored Translation Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 298

6.3.1 Motivating Example: Morphology . . . . . . . . . . . . . . . . . . . . . . . 298

6.3.2 Decomposition of Factored Translation . . . . . . . . . . . . . . . . . . . . 299

6.3.3 StatisticalModel.................................300

6.4 Confusion Networks Decoding . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 303

6.4.1 ConfusionNetworks ..............................303

6.4.2 Representation of Confusion Network . . . . . . . . . . . . . . . . . . . . 304

6.5 WordLattices.......................................305

6.5.1 How to represent lattice inputs . . . . . . . . . . . . . . . . . . . . . . . . . 305

6.5.2 Configuring moses to translate lattices . . . . . . . . . . . . . . . . . . . . 306

6.5.3 Verifying PLF files with checkplf .......................306

6.5.4 Citation ......................................307

6.6 Publications........................................307

7 Code Guide

7.1 Code Guide

7.1.1 Github, branching, and merging

7.1.2 The code

7.1.3 Quick Start

7.1.4 Detailed Guides

7.2 Coding Style

7.2.1 Formatting

7.2.2 Comments

7.2.3 Data types and methods

7.2.4 Source Control Etiquette

7.3 Factors, Words, Phrases

7.3.1 Factors

7.3.2 Words

7.3.3 Factor Types

7.3.4 Phrases

7.4 Tree-Based Model Decoding

7.4.1 Looping over the Spans

7.4.2 Looking up Applicable Rules

7.4.3 Applying the Rules: Cube Pruning

7.4.4 Hypotheses and Pruning

7.5 Multi-Threading

7.5.1 Tasks

7.5.2 ThreadPool

7.5.3 OutputCollector

7.5.4 Not Deleting Threads after Execution

7.5.5 Limit the Size of the Thread Queue

7.5.6 Example

7.6 Adding Feature Functions

7.6.1 Video

7.6.2 Other resources

7.6.3 Feature Function

7.6.4 Stateless Feature Function

7.6.5 Stateful Feature Function

7.6.6 Place-holder features

7.6.7 moses.ini

7.6.8 Examples

7.7 Adding Sparse Feature Functions

7.7.1 Implementation

7.7.2 Weights

7.8 Regression Testing

7.8.1 Goals

7.8.2 Test suite

7.8.3 Running the test suite

7.8.4 Running an individual test

7.8.5 How it works

7.8.6 Writing regression tests

8 Reference

8.1 Frequently Asked Questions

8.1.1 My system is taking a really long time to translate a sentence. What can I do to speed it up?

8.1.2 The system runs out of memory during decoding.

8.1.3 I would like to point out a bug / contribute code.

8.1.4 How can I get an updated version of Moses?

8.1.5 What changed in the latest release of Moses?

8.1.6 I am an undergrad/masters student looking for a project in SMT. What should I do?

8.1.7 What do the 5 numbers in the phrase table mean?

8.1.8 What OS does Moses run on?

8.1.9 Can I use Moses on Windows?

8.1.10 Do I need a computer cluster to run experiments?

8.1.11 I have compiled Moses, but it segfaults when running.

8.1.12 How do I add a new feature function to the decoder?

8.1.13 Compiling with SRILM or IRSTLM produces errors.

8.1.14 I am trying to use Moses to create a web page to do translation.

8.1.15 How can a create a system that translate both ways, ie. X-to-Y as well as Y-to-X?

8.1.16 PhraseScore dies with signal 11 - why?

8.1.17 Does Moses do Hierarchical decoding, like Hiero etc?

8.1.18 Can I use Moses in proprietary software?

8.1.19 GIZA++ crashes with error "parameter ’coocurrencefile’ does not exist."

8.1.20 Running regenerate-makefiles.sh gives me lots of errors about *GREP and *SED macros

8.1.21 Running training I got the following error "*** buffer overflow detected ***: ../giza-pp/GIZA++-v2/GIZA++ terminated"

8.1.22 I retrained my model and got different BLEU scores. Why?

8.1.23 I specified ranges for mert weights, but it returned weights which are outwith those ranges

8.1.24 Who do I ask if my question has not been answered by this FAQ?

8.2 Reference: All Decoder Parameters

8.3 Reference: All Training Parameters

8.3.1 Basic Options

8.3.2 Factored Translation Model Settings

8.3.3 Lexicalized Reordering Model

8.3.4 Partial Training

8.3.5 File Locations

8.3.6 Alignment Heuristic

8.3.7 Maximum Phrase Length

8.3.8 GIZA++ Options

8.3.9 Dealing with large training corpora

8.4 Glossary

1 Introduction

1.1 Welcome to Moses!

Moses is a statistical machine translation system that allows you to automatically train translation models for any language pair. All you need is a collection of translated texts (parallel corpus). Once you have a trained model, an efficient search algorithm quickly finds the highest probability translation among the exponential number of choices.

1.2 Overview

1.2.1 Technology

Moses is an implementation of the statistical (or data-driven) approach to machine translation (MT). This is the dominant approach in the field at the moment, and is employed by the online translation systems deployed by the likes of Google and Microsoft. In statistical machine translation (SMT), translation systems are trained on large quantities of parallel data (from which the systems learn how to translate small segments), as well as even larger quantities of monolingual data (from which the systems learn what the target language should look like).

Parallel data is a collection of sentences in two different languages, which is sentence-aligned, in that each sentence in one language is matched with its corresponding translated sentence in the other language. It is also known as a bitext.
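As a minimal sketch of what sentence alignment means in practice, assume the bitext is held as two sequences (for example, two files with one sentence per line) in which line i on one side is the translation of line i on the other. The function name `read_bitext` and the sample sentences are illustrative assumptions, not part of Moses.

```python
from typing import Iterable, List, Tuple


def read_bitext(source_lines: Iterable[str],
                target_lines: Iterable[str]) -> List[Tuple[str, str]]:
    """Pair line i of the source side with line i of the target side."""
    source = [line.strip() for line in source_lines]
    target = [line.strip() for line in target_lines]
    if len(source) != len(target):
        # A sentence-aligned corpus has exactly one target line per source line.
        raise ValueError("both sides of a bitext need the same number of lines")
    return list(zip(source, target))


# Hypothetical two-sentence German-English bitext.
german = ["das ist ein Haus", "er hat Kuchen gegessen"]
english = ["this is a house", "he ate cake"]
pairs = read_bitext(german, english)
```

The same function works on open file handles, since iterating over a text file yields one line at a time.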

The training process in Moses takes in the parallel data and uses co-occurrences of words and segments (known as phrases) to infer translation correspondences between the two languages of interest. In phrase-based machine translation, these correspondences are simply between continuous sequences of words, whereas in hierarchical phrase-based machine translation or syntax-based translation, more structure is added to the correspondences. For instance, a hierarchical MT system could learn that the German hat X gegessen corresponds to the English ate X, where the Xs are replaced by any German-English word pair. The extra structure used in these types of systems may or may not be derived from a linguistic analysis of the parallel data.
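The hat X gegessen example can be illustrated with a toy sketch. It assumes a rule with exactly one gap X, a whole-sentence match, and a small word-for-word lexicon to fill the gap; the function `apply_rule` and the lexicon are hypothetical, and this is not how the Moses chart decoder is implemented.

```python
from typing import Dict, List, Optional


def apply_rule(tokens: List[str],
               rule_source: List[str],
               rule_target: List[str],
               lexicon: Dict[str, str]) -> Optional[List[str]]:
    """Apply a one-gap hierarchical rule to a token sequence.

    Returns the translated tokens, or None if the rule does not match
    or the gap contains a word missing from the lexicon.
    """
    gap = rule_source.index("X")
    prefix = rule_source[:gap]
    suffix = rule_source[gap + 1:]
    # The gap must cover at least one word.
    if len(tokens) < len(prefix) + len(suffix) + 1:
        return None
    if tokens[:len(prefix)] != prefix:
        return None
    if suffix and tokens[len(tokens) - len(suffix):] != suffix:
        return None
    filler = tokens[len(prefix):len(tokens) - len(suffix)]
    translated_filler = []
    for word in filler:
        if word not in lexicon:
            return None
        translated_filler.append(lexicon[word])
    output: List[str] = []
    for symbol in rule_target:
        if symbol == "X":
            output.extend(translated_filler)  # substitute the translated gap
        else:
            output.append(symbol)
    return output


rule_source = ["hat", "X", "gegessen"]
rule_target = ["ate", "X"]
lexicon = {"Kuchen": "cake"}
result = apply_rule("hat Kuchen gegessen".split(), rule_source, rule_target, lexicon)
```

Note how the rule reorders material: the German participle at the end of the clause has no counterpart position in the English output, which a purely contiguous phrase pair could only capture by memorising each filler separately.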

Moses also implements an extension of phrase-based machine translation known as factored translation, which enables extra linguistic information to be added to a phrase-based system.

For more information about the Moses translation models, please refer to the tutorials on phrase-based MT (Section 3.1), syntactic MT (Section 3.3) or factored MT (Section 3.2).

Whichever type of machine translation model you use, the key to creating a good system is lots of good quality data. There are many free sources of parallel data [1] which you can use to train sample systems, but (in general) the closer the data you use is to the type of data you want to translate, the better the results will be. This is one of the advantages of using an open-source tool like Moses: if you have your own data, then you can tailor the system to your needs and potentially get better performance than a general-purpose translation system. Moses needs sentence-aligned data for its training process, but if data is aligned at the document level, it can often be converted to sentence-aligned data using a tool like hunalign [2].

1.2.2 Components

The two main components in Moses are the training pipeline and the decoder. There are also a variety of contributed tools and utilities. The training pipeline is really a collection of tools (mainly written in Perl, with some in C++) which take the raw data (parallel and monolingual) and turn it into a machine translation model. The decoder is a single C++ application which, given a trained machine translation model and a source sentence, will translate the source sentence into the target language.

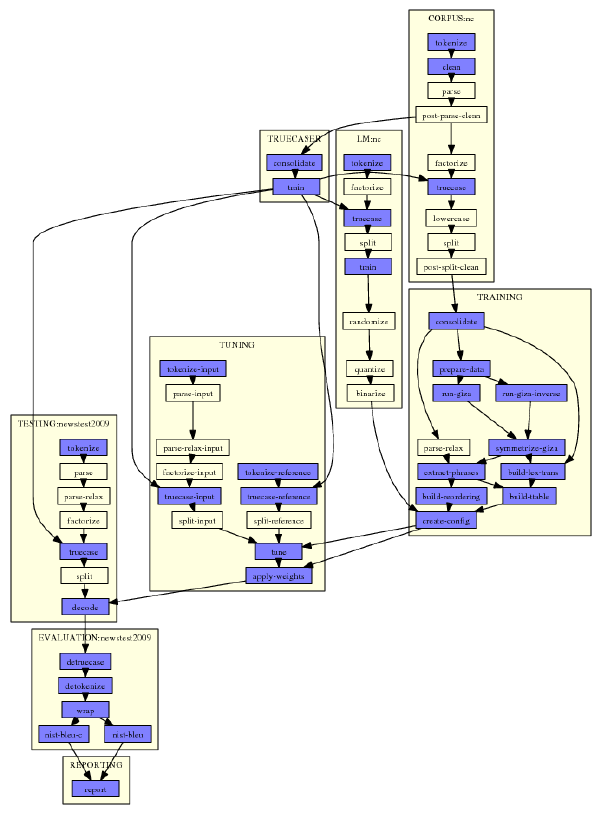

The Training Pipeline

There are various stages involved in producing a translation system from training data, which

are described in more detail in the training documentation (Section 5.1) and in the baseline

system guide (Section 2.3). These are implemented as a pipeline, which can be controlled by

the Moses experiment management system (Section 3.5), and Moses in general makes it easy

to insert different types of external tools into the training pipeline.

The data typically needs to be prepared before it is used in training, tokenising the text and con-

verting tokens to a standard case. Heuristics are used to remove sentence pairs which look to

be misaligned, and long sentences are removed. The parallel sentences are then word-aligned,

typically using GIZA++3, which implements a set of statistical models developed at IBM in the

late 1980s and early 1990s. These word alignments are used to extract phrase-phrase translations, or hierarchical rules

as required, and corpus-wide statistics on these rules are used to estimate probabilities.
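The extraction and estimation steps described above can be sketched in a few lines of Python. This is a simplified illustration, not the Moses implementation: it extracts only phrase pairs that are consistent with the word alignment, it does not extend phrases over unaligned words, and it estimates p(target | source) by plain relative frequency. The function names and the max_len limit are assumptions made for the example.

```python
from collections import Counter


def extract_phrases(src, tgt, alignment, max_len=4):
    """Return phrase pairs consistent with the word alignment.

    alignment is a set of (source_index, target_index) links.
    """
    pairs = []
    for i1 in range(len(src)):
        for i2 in range(i1, min(i1 + max_len, len(src))):
            # Target positions linked to the source span [i1, i2].
            linked = [j for (i, j) in alignment if i1 <= i <= i2]
            if not linked:
                continue
            j1, j2 = min(linked), max(linked)
            if j2 - j1 + 1 > max_len:
                continue
            # Consistency: no word in the target span links outside the source span.
            if any(j1 <= j <= j2 and not (i1 <= i <= i2) for (i, j) in alignment):
                continue
            pairs.append((" ".join(src[i1:i2 + 1]), " ".join(tgt[j1:j2 + 1])))
    return pairs


def estimate(pairs):
    """Relative-frequency estimate of p(target phrase | source phrase)."""
    joint = Counter(pairs)
    source_totals = Counter(s for s, _ in pairs)
    return {(s, t): c / source_totals[s] for (s, t), c in joint.items()}


src = "das Haus ist klein".split()
tgt = "the house is small".split()
alignment = {(0, 0), (1, 1), (2, 2), (3, 3)}
pairs = extract_phrases(src, tgt, alignment)
probs = estimate(pairs)
```

With a real corpus, the pairs from all sentences would be pooled before calling estimate, so that the counts reflect corpus-wide statistics.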

An important part of the translation system is the language model, a statistical model built

using monolingual data in the target language and used by the decoder to try to ensure the

fluency of the output. Moses relies on external tools (Section 5.13) for language model building.
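To make the role of the language model concrete, here is a toy bigram model in Python. It is a sketch under strong simplifying assumptions: add-one smoothing is used only because it is short to write, whereas the toolkits used with Moses rely on better smoothing methods, and all names in the code are invented for this example.

```python
import math
from collections import Counter


def train_bigram_lm(sentences):
    """Collect history and bigram counts from tokenised training sentences."""
    histories, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        histories.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    vocab = {w for s in sentences for w in s.split()} | {"</s>"}
    return histories, bigrams, len(vocab)


def log_prob(sentence, model):
    """log10 probability of a sentence under the add-one smoothed bigram model."""
    histories, bigrams, vocab_size = model
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    total = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        p = (bigrams[(prev, word)] + 1) / (histories[prev] + vocab_size)
        total += math.log10(p)
    return total


model = train_bigram_lm(["the house is small", "the house is big"])
fluent = log_prob("the house is small", model)
scrambled = log_prob("small is house the", model)
```

The fluent word order receives the higher score, which is the signal the decoder uses to prefer fluent output.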

The final step in the creation of the machine translation system is tuning (Section 5.14), where

the different statistical models are weighted against each other to produce the best possible

translations. Moses contains implementations of the most popular tuning algorithms.
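The effect of the weights can be seen in a small Python sketch. The feature names, feature values and weight settings below are invented for illustration. The sketch only shows how a change in weights changes which candidate wins; it does not implement any tuning algorithm, which would search for the weights that maximise a quality metric on held-out data.

```python
def score(features, weights):
    """Weighted sum of (log-scale) feature values."""
    return sum(weights[name] * value for name, value in features.items())


def best_translation(nbest, weights):
    """Return the candidate string with the highest weighted score."""
    return max(nbest, key=lambda cand: score(cand[1], weights))[0]


# Hypothetical two-entry candidate list with made-up feature values.
nbest = [
    ("the house is small", {"tm": -2.0, "lm": -4.0}),
    ("small the house is", {"tm": -1.5, "lm": -9.0}),
]

ignore_lm = {"tm": 1.0, "lm": 0.0}
use_lm = {"tm": 1.0, "lm": 0.5}

print(best_translation(nbest, ignore_lm))  # prints: small the house is
print(best_translation(nbest, use_lm))     # prints: the house is small
```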

1http://www.statmt.org/moses/?n=Moses.LinksToCorpora

2http://mokk.bme.hu/resources/hunalign/

3http://code.google.com/p/giza-pp/


The Decoder

The job of the Moses decoder is to find the highest scoring sentence in the target language

(according to the translation model) corresponding to a given source sentence. It is also pos-

sible for the decoder to output a ranked list of the translation candidates, and also to supply

various types of information about how it came to its decision (for instance the phrase-phrase

correspondences that it used).

The decoder is written in a modular fashion and allows the user to vary the decoding process

in various ways, such as:

•Input: This can be a plain sentence, or it can be annotated with xml-like elements to guide

the translation process, or it can be a more complex structure like a lattice or confusion

network (say, from the output of speech recognition)

•Translation model: This can use phrase-phrase rules, or hierarchical (perhaps syntactic)

rules. It can be compiled into a binarised form for faster loading. It can be supplemented

with features to add extra information to the translation process, for instance features

which indicate the sources of the phrase pairs in order to weight their reliability.

•Decoding algorithm: Decoding is a huge search problem, generally too big for exact

search, and Moses implements several different strategies for this search, such as stack-

based, cube-pruning, chart parsing etc.

•Language model: Moses supports several different language model toolkits (SRILM,

KenLM, IRSTLM, RandLM), each of which has its own strengths and weaknesses, and

adding a new LM toolkit is straightforward.

The Moses decoder also supports multi-threaded decoding (since translation is embarrassingly

parallelisable4), and also has scripts to enable multi-process decoding if you have access to a

cluster.
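Because each sentence is translated independently of the others, the work can be split by sentence. The Python sketch below illustrates that partitioning only; the translate function is a placeholder that upper-cases its input and stands in for a real decoder call, and the thread count is an arbitrary assumption.

```python
from concurrent.futures import ThreadPoolExecutor


def translate(sentence):
    # Placeholder for a real decoder call.
    return sentence.upper()


def translate_all(sentences, threads=4):
    """Translate sentences in parallel, one task per sentence."""
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # map() returns results in input order, whichever task finishes first.
        return list(pool.map(translate, sentences))


print(translate_all(["das ist ein haus", "das haus ist klein"]))
```

Note that with this scheme a single sentence is still handled by a single worker, so parallelism helps throughput rather than the latency of one translation.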

Contributed Tools

There are many contributed tools in Moses which supply additional functionality over and

above the standard training and decoding pipelines. These include:

•Moses server: which provides an xml-rpc interface to the decoder

•Web translation: A set of scripts to enable Moses to be used to translate web pages

•Analysis tools: Scripts to enable the analysis and visualisation of Moses output, in com-

parison with a reference.

There are also tools to evaluate translations, alternative phrase scoring methods, an implemen-

tation of a technique for weighting phrase tables, a tool to reduce the size of the phrase table,

and other contributed tools.
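As an illustration of the xml-rpc interface of the Moses server listed above, a client can be written with the Python standard library. This is a minimal sketch: the host, port and /RPC2 path are assumptions about a local setup, and the translate method with a "text" field follows the sample clients distributed with Moses as far as we are aware, so check it against the server version you run.

```python
import xmlrpc.client


def connect(url="http://localhost:8080/RPC2"):
    # Assumed address; ServerProxy does not open a connection until a call is made.
    return xmlrpc.client.ServerProxy(url)


def translate_via_server(proxy, text):
    # The request is a struct; the translation is read from its "text" field.
    response = proxy.translate({"text": text})
    return response["text"]
```

With a server running at the assumed address, translate_via_server(connect(), "das ist ein kleines haus") would return the translated string.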

1.2.3 Development

Moses is an open-source project, licensed under the LGPL5, which incorporates contributions

from many sources. There is no formal management structure in Moses, so if you want to

4http://en.wikipedia.org/wiki/Embarrassingly_parallel

5http://www.gnu.org/copyleft/lesser.html


contribute then just mail support6 and take it from there. There is a list (Section 1.3) of possible

projects on this website, but any new MT techniques are fair game for inclusion into Moses.

In general, the Moses administrators are fairly open about giving out push access to the git

repository, preferring the approach of removing/fixing bad commits, rather than vetting com-

mits as they come in. This means that trunk occasionally breaks, but given the active Moses

user community, it doesn’t stay broken for long. The nightly builds and tests of trunk are reported

on the cruise control7 web page, but if you want a more stable version then look for one

of the releases (Section 2.4).

1.2.4 Moses in Use

The liberal licensing policy in Moses, together with its wide coverage of current SMT technol-

ogy and complete tool chain, make it probably the most widely used open-source SMT system.

It is used in teaching, research, and, increasingly, in commercial settings.

Commercial use of Moses is promoted and tracked by TAUS8. The most common current use

for SMT in commercial settings is post-editing where machine translation is used as a first-

pass, with the results then being edited by human translators. This can often reduce the time

(and hence total cost) of translation. There is also work on using SMT in computer-aided

translation, which is the research topic of two current EU projects, Casmacat9 and MateCat10.

1.2.5 History

2005 Hieu Hoang (then student of Philipp Koehn) starts Moses as successor to Pharaoh

2006 Moses is the subject of the JHU workshop, first check-in to public repository

2006 Start of EuroMatrix, EU project which helps fund Moses development

2007 First machine translation marathon held in Edinburgh

2009 Moses receives support from EuroMatrixPlus, also EU-funded

2010 Moses now supports hierarchical and syntax-based models, using chart decoding

2011 Moses moves from sourceforge to github, after over 4000 sourceforge check-ins

2012 EU-funded MosesCore launched to support continued development of Moses

Subsection last modified on August 13, 2013, at 10:38 AM

1.3 Get Involved

1.3.1 Mailing List

The main forum for communication on Moses is the Moses support mailing list11.

6http://www.statmt.org/moses/?n=Moses.MailingLists

7http://www.statmt.org/moses/cruise/

8http://www.translationautomation.com/user-cases/machines-takes-center-stage.html

9http://www.casmacat.eu/

10http://www.matecat.com/

11http://www.statmt.org/moses/?n=Moses.MailingLists


1.3.2 Suggestions

We’d like to hear what you want from Moses. We can’t promise to implement the suggestions,

but they can be used as input into research and student projects, as well as Marathon12 projects.

If you have a suggestion/wish for a new feature or improvement, then either report it

via the issue tracker13, contact the mailing list, or drop Barry or Hieu a line (addresses on the

mailing list page).

1.3.3 Development

Moses is an open source project that is at home in the academic research community. There are

several venues where this community gathers, such as:

•The main conferences in the field: ACL, EMNLP, MT Summit, etc.

•The annual ACL Workshop on Statistical Machine Translation14

•The annual Machine Translation Marathon15

Moses is being developed as a reference implementation of state-of-the-art methods in statisti-

cal machine translation. Extending this implementation may be the subject of undergraduate or

graduate theses, or class projects. Typically, developers extend functionality that they require

for their projects, or to explore novel methods. Let us know if you made an improvement, no

matter how minor. Also let us know if you found or fixed a bug.

1.3.4 Use

We are aware of many commercial deployments of Moses, for instance as described by TAUS16.

Please let us know if you use Moses commercially. Do not hesitate to contact the core developers

of Moses. They are willing to answer questions and may even be available for consulting

services.

1.3.5 Contribute

There are many ways you can contribute to Moses.

•To get started, build systems with your data and get familiar with how Moses works.

•Test out alternative settings for building a system. The shared tasks organized around

the ACL Workshop on Statistical Machine Translation17 are a good forum to publish such

results on standard data conditions.

•Read the code. While you are at it, feel free to add comments or contribute to the Code Guide

(Section 7.1) to make it easier for others to understand the code.

•If you come across inefficient implementations (e.g., bad algorithms or code in Perl that

should be ported to C++), program more efficient implementations.

•If you have new ideas for features, tools, and functionality, add them.

•Help out with some of the projects listed below.

12http://www.statmt.org/moses/?n=Moses.Marathons

13https://github.com/moses-smt/mosesdecoder/issues

14http://www.statmt.org/wmt14/

15http://www.statmt.org/moses/?n=Moses.Marathons

16http://www.translationautomation.com/user-cases/machines-takes-center-stage.html

17http://www.statmt.org/wmt14/


1.3.6 Projects

If you are looking for projects to improve Moses, please consider the following list:

Front-end Projects

•OpenOffice/Microsoft Word, Excel or Access plugins: (Hieu Hoang) Create wrappers for

the Moses decoder to translate within user apps. Skills required - Windows, VBA, Moses.

(GSOC)

•Firefox, Chrome, Internet Explorer plugins: (Hieu Hoang) Create a plugin that calls the

Moses server to translate webpages. Skills required - Web design, Javascript, Moses.

(GSOC)

•Moses on the OLPC: (Hieu Hoang) Create a front-end for the decoder, and possibly the

training pipeline, so that it can be run on the OLPC. Some preliminary work has been

done here18.

•Rule-based numbers, currency, date translation: (Hieu Hoang) SMT is bad at translating

numbers and dates. Write some simple rules to identify and translate these for the lan-

guage pairs of your choice. Integrate it into Moses and combine it with the placeholder

feature19. Skills required - C++, Moses. (GSOC)

•Named entity translation: (Hieu Hoang) Text with lots of names and trademarks etc. is

difficult for SMT to translate. Integrate named entity recognition into Moses. Translate

them using the transliteration phrase-table, placeholder feature, or a secondary phrase-

table. Skills required - C++, Moses. (GSOC)

•Interactive visualization for SCFG decoding: (Hieu Hoang) Create a front-end to the

hiero/syntax decoder that enables the user to re-translate a part of the sentence, change

parameters in the decoder, add or delete translation rules etc. Skills required - C++, GUI,

Moses. (GSOC)

•Integrating the decoder with OCR/speech recognition input and speech synthesis out-

put (Hieu Hoang)

Training & Tuning

•Incremental updating of translation and language model: When you add new sentences

to the training data, you don’t want to re-run the whole training pipeline (do you?). Abby

Levenberg has implemented incremental training20 for Moses but what it lacks is a nice

How-To guide.

•Compression for lmplz: (Kenneth Heafield) lmplz trains language models on disk. The

temporary data on disk is not compressed, but it could be, especially with a fast com-

pression algorithm like zippy. This will enable us to build much larger models. Skills

required: C++. No SMT knowledge required. (GSOC)

•Faster tuning by reuse: In tuning, you constantly re-decode the same set of sentences

and this can be very time-consuming. What if you could reuse part of the calculation

each time? This has been previously proposed as a marathon project21

18http://wiki.laptop.org/go/Projects/Automatic_translation_software

19http://www.statmt.org/moses/?n=Moses.AdvancedFeatures#ntoc61

20http://www.statmt.org/moses/?n=Moses.AdvancedFeatures#ntoc36

21http://www.statmt.org/mtm12/index.php%3Fn=Projects.TargetHypergraphSerialization


•Use binary files to speed up phrase scoring: Phrase-extraction and scoring involves a lot

of processing of text files which is inefficient in both time and disk usage. Using binary

files and vocabulary ids has the potential to make training more efficient, although more

opaque.

•Lattice training: At the moment lattices can be used for decoding (Section 6.5), and also

for MERT22 but they can’t be used in training. It would be pretty cool if they could be

used for training, but this is far from trivial.

•Training via forced decoding: (Matthias Huck) Implement leave-one-out phrase model

training in Moses. Skills required - C++, SMT.

https://www-i6.informatik.rwth-aachen.de/publications/download/668/Wuebker-ACL-2010.pdf

•Faster training for the global lexicon model: Moses implements the global lexicon model

proposed by Mauser et al. (2009)23, but training features for each target word using a

maximum entropy trainer is very slow (years of CPU time). More efficient training or

accommodation of training of only frequent words would be useful.

•Letter-based TER: Implement an efficient version of letter-based TER as metric for tuning

and evaluation, geared towards morphologically complex languages.

•New Feature Functions: Many new feature functions could be implemented and tested.

For some ideas, see Green et al. (2014)24

•Character count feature: The word count feature is very valuable, but may be geared

towards producing superfluous function words. To encourage the production of longer

words, a character count feature could be useful. Maybe a unigram language model

fulfills the same purpose.

•Training with comparable corpora, related language, monolingual data: (Hieu Hoang)

High-quality parallel corpora are difficult to obtain. There is a large amount of work on using

comparable corpora, monolingual data, and parallel data in closely related languages

to create translation models. This project will re-implement and extend some of the prior

work.

Chart-based Translation

•Decoding algorithms for syntax-based models: Moses generally supports a large set

of grammar types. For some of these (for instance ones with source syntax, or a very

large set of non-terminals), the implemented CYK+ decoding algorithm is not optimal.

Implementing search algorithms for dedicated models, or just to explore alternatives,

would be of great interest.

•Source cardinality synchronous cube pruning for the chart-based decoder: (Matthias

Huck) Pooling hypotheses by amount of covered source words. Skills required - C++,

SMT.

http://www.dfki.de/~davi01/papers/vilar11:search.pdf

•Cube pruning for factored models: Complex factored models with multiple translation

and generation steps push the limits of the current factored model implementation which

exhaustively computes all translation options up front. Using ideas from cube pruning

(sorting the most likely rules and partial translation options) may be the basis for more

efficient factored model decoding.

22http://www.statmt.org/moses/?n=Moses.AdvancedFeatures#ntoc33

23http://aclweb.org/anthology/D/D09/D09-1022.pdf

24http://www.aclweb.org/anthology/W14-3360.pdf


•Missing features for chart decoder: A number of features are missing for the chart de-

coder, such as: MBR decoding (should be simple) and lattice decoding. In general, re-

porting and analysis within experiment.perl could be improved.

•More efficient rule table for chart decoder: (Marcin) The in-memory rule table for the

hierarchical decoder loads very slowly and uses a lot of RAM. An optimized implemen-

tation that is vastly more efficient on both fronts should be feasible. Skills required - C++,

NLP, Moses. (GSOC)

•More features for incremental search: Kenneth Heafield presented a faster search algorithm

for chart decoding in Grouping Language Model Boundary Words to Speed K-Best Extraction

from Hypergraphs (NAACL 2013)25. This is implemented as a separate search algorithm

in Moses (called ’incremental search’), but it lacks many features of the default

search algorithm (such as sparse feature support, or support for multiple stateful fea-

tures). Implementing these features for the incremental search would be of great interest.

•Scope-0 grammar and phrase-table: (Hieu Hoang). The most popular decoding algorithm

for syntax MT is the CYK+ algorithm. This is a parsing algorithm which is able to use de-

coding with an unnormalized, unpruned grammar. However, the disadvantage of using

such a general algorithm is its speed; Hopkins and Langmead (2010) showed that a

sentence of length n can be parsed using a scope-k grammar in O(n^k) chart updates. For

an unpruned grammar with 2 non-terminals (the usual SMT setup), the scope is 3.

This project proposes to quantify the advantages and disadvantages of scope-0 grammar. A

scope-0 grammar lacks application ambiguity, therefore, decoding can be fast and memory

efficient. However, this must be offset against potential translation quality degradation due to

the lack of coverage.

It may be that the advantages of a scope-0 grammar can only be realized through specifically

developed algorithms, such as parsing algorithms or data structures. The phrase-table lookup

for a Scope-0 grammar can be significantly simplified, made faster, and applied to much larger

span widths.

This project will also aim to explore this potentially rich research area.

http://aclweb.org/anthology//W/W12/W12-3150.pdf

http://www.sdl.com/Images/emnlp2009_tcm10-26628.pdf

Phrase-based Translation

•A better phrase table: The current binarised phrase table suffers from (i) far too many lay-

ers of indirection in the code making it hard to follow and inefficient (ii) a cache-locking

mechanism which creates excessive contention; and (iii) lack of extensibility meaning

that (e.g.) word alignments were added on by extensively duplicating code and addi-

tional phrase properties are not available. A new phrase table could make Moses faster

and more extensible.

•Multi-threaded decoding: Moses uses a simple "thread per sentence" model for multi-

threaded decoding. However this means that if you have a single sentence to decode,

then multi-threading will not get you the translation any faster. Is it possible to have a

finer-grained threading model that can use multiple threads on a single sentence? This

would call for a new approach to decoding.

25http://kheafield.com/professional/edinburgh/search_paper.pdf


•Better reordering: (Matthias Huck, Hieu Hoang) E.g. with soft constraints on reordering:

Moses currently allows you to specify hard constraints26 on reordering, but it might be

useful to have "soft" versions of these constraints. This would mean that the translation

would incur a trainable penalty for violating the constraints, implemented by adding a

feature function. Skills required - C++, SMT.

More ideas related to reordering:

http://www.spencegreen.com/pubs/green+galley+manning.naacl10.pdf

http://www.transacl.org/wp-content/uploads/2013/07/paper327.pdf

https://www-i6.informatik.rwth-aachen.de/publications/download/444/Zens-COLING-2004.pdf

https://www-i6.informatik.rwth-aachen.de/publications/download/896/Feng-ACL-2013.pdf

http://research.google.com/pubs/archive/36484.pdf

http://research.google.com/pubs/archive/37163.pdf

http://research.google.com/pubs/archive/41651.pdf

•Merging the phrase table and lexicalized reordering table: (Matthias Huck, Hieu Hoang)

They contain the same source and target phrases, but different probabilities, and how

those probabilities are applied. Merging the 2 models would halve the number of lookups.

Skills required - C++, Moses. (GSOC)

•Using artificial neural networks as memory to store the phrase table: (Hieu Hoang) ANNs

can be used as associative memory to store information in a lossy way [http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=4634358&tag=1].

It would be interesting to investigate how useful they are for storing the phrase table. Further

research can focus on how they can be used to store morphologically similar translations.

•Entropy-based pruning: (Matthias Huck) A more consistent method for pre-pruning phrase

tables. Skills required - C++, NLP.

http://research.google.com/pubs/archive/38279.pdf

•Faster phrase-based decoding by refining feature state: Implement Heafield’s Faster

Phrase-Based Decoding by Refining Feature State (ACL 2014)27.

•Multi-pass decoding: (Hieu Hoang) Some features may be too expensive to use during

decoding - maybe due to their computational cost, or due to their wider use of context

which leads to more state splitting. Think of a recurrent neural network language model

that both uses too much context (the entire output string) and is costly to compute. We

would like to use these features in a reranking phase, but dumping out the search graph,

and then re-decode it outside of Moses, creates a lot of additional overhead. So, it would

be nicer to integrate second pass decoding within the decoder. This idea is related to

coarse to fine decoding. Technically, we would like to be able to specify any feature

function as a first pass or second pass feature function. There are some major issues that

have to be tackled with multi-pass decoding:

1. A losing hypothesis which has been recombined with the winning hypothesis may now

be the new winning hypothesis. The output search graph has to be reordered to reflect

this.

26http://www.statmt.org/moses/?n=Moses.AdvancedFeatures#ntoc17

27http://kheafield.com/professional/stanford/mtplz_paper.pdf


2. The feature functions in the 2nd pass produce state information. Recombined hypotheses

may no longer be recombined and have to be split.

3. It would be useful for feature functions scores to be able to be evaluated asynchronously.

That is, a function to calculate the score is called but the score is calculated later. Skills

required - C++, NLP, Moses. (GSOC)

General Framework & Tools

•Out-of-vocabulary (OOV) word handling: Currently there are two choices for OOVs -

pass them through or drop them. Often neither is appropriate and Moses lacks good

hooks to add new OOV strategies, and lacks alternative strategies. A new phrase table

class should be created which processes OOVs. To create a new phrase-table type, make a

copy of moses/TranslationModel/SkeletonPT.*, rename the class and follow the exam-

ple in the file to implement your own code. Skills required - C++, Moses. (GSOC)

•Tokenization for your language: Tokenization is the only part of the basic SMT process

that is language-specific. You can help make translation for your language better. Make

a copy of the file scripts/share/nonbreaking_prefixes/nonbreaking_prefix.en and

replace its entries with non-breaking prefixes for your language. Skills required - SMT, Moses, lots

of human languages. (GSOC)

•Python interface: A Python interface to the decoder could enable easy experimentation

and incorporation into other tools. cdec has one28 and Moses has a Python interface to

the on-disk phrase tables (implemented by Wilker Aziz) but it would be useful to be able

to call the decoder from Python.

•Analysis of results: (Philipp Koehn) Assessing the impact of variations in the design of

a machine translation system by observing the fluctuations of the BLEU score may not

be sufficiently enlightening. Having more analysis of the types of errors a system makes

should be very useful.
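For the tokenization project above, it may help to see what a non-breaking prefix list is for. The Python sketch below is a deliberately simplified stand-in for the Moses tokenizer: it only decides whether a trailing period is split off, it assumes a prefix file with one entry per line and # for comments, and it treats any annotation after the first field as absent. The function names are invented for this example.

```python
def load_prefixes(text):
    """Read prefixes from file contents: one per line, '#' starts a comment line."""
    prefixes = set()
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            # Keep only the first field; any annotation after it is ignored here.
            prefixes.add(line.split()[0])
    return prefixes


def tokenize(sentence, prefixes):
    """Split a trailing period off each word unless the word is a known prefix."""
    tokens = []
    words = sentence.split()
    for position, word in enumerate(words):
        is_last = position == len(words) - 1
        if word.endswith(".") and len(word) > 1:
            stem = word[:-1]
            if stem in prefixes and not is_last:
                tokens.append(word)  # e.g. keep "Dr." as one token
            else:
                tokens.extend([stem, "."])
        else:
            tokens.append(word)
    return tokens


prefixes = load_prefixes("# titles\nDr\nMr\n")
print(tokenize("Dr. Smith arrived.", prefixes))
# prints: ['Dr.', 'Smith', 'arrived', '.']
```

Without the prefix list the same sentence would be tokenised as Dr . Smith arrived . which is why a good list for each language matters.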

Engineering Improvements

•Integration of sigfilter: The filtering algorithm of Johnson et al29 is available30 in Moses,

but it is not well integrated, has awkward external dependencies and so is seldom used.

At the moment the code is in the contrib directory. A useful project would be to refactor

this code to use the Moses libraries for suffix arrays, and to integrate it with the Moses

experiment management system (EMS). The goal would be to enable the filtering to be

turned on with a simple switch in the EMS config file.

•Boostification: Moses has allowed boost31 since Autumn 2011, but there are still many

areas of the code that could be improved by usage of the boost libraries, for instance using

shared pointers in collections.

•Cruise control: Moses has cruise control32 running on a server at the University of Ed-

inburgh, however this only tests one platform (Ubuntu 12.04). If you have a different

platform, and care about keeping Moses stable on that platform, then you could set up a

cruise control instance too. The code is all in the standard Moses distribution.

28http://ufal.mff.cuni.cz/pbml/98/art-chahuneau-smith-dyer.pdf

29http://aclweb.org/anthology/D/D07/D07-1103.pdf

30http://www.statmt.org/moses/?n=Moses.AdvancedFeatures#ntoc16

31http://www.boost.org

32http://www.statmt.org/moses/cruise/


Documentation

•Maintenance: The documentation always needs maintenance as new features are intro-

duced and old ones are updated. Such a large body of documentation inevitably contains

mistakes and inconsistencies, so any help in fixing these would be most welcome. If you

want to work on the documentation, just introduce yourself on the mailing list.

•Help messages: Moses has a lot of executables, and often the help messages are quite

cryptic or missing. A help message in the code is more likely to be maintained than

separate documentation, and easier to locate when you’re trying to find the right options.

Fixing the help messages would be a useful contribution to making Moses easier to use.

Subsection last modified on June 16, 2015, at 02:05 PM


2 Installation

2.1 Getting Started with Moses

This section will show you how to install and build Moses, and how to use Moses to translate

with some simple models. If you experience problems, then please check the support1 page. If

you do not want to build Moses from source, then there are packages2 available for Windows

and popular Linux distributions.

2.1.1 Easy Setup on Ubuntu (on other Linux systems, you’ll need to install packages that provide gcc, make, git, automake, libtool)

1. Install required Ubuntu packages to build Moses and its dependencies:

sudo apt-get install build-essential git-core pkg-config automake libtool wget zlib1g-dev python-dev libbz2-dev

For the regression tests, you’ll also need

sudo apt-get install libsoap-lite-perl

See below for additional packages that you’ll need to actually run Moses (especially when

you are using EMS).

2. Clone Moses from the repository and cd into the directory for building Moses

git clone https://github.com/moses-smt/mosesdecoder.git

cd mosesdecoder

3. Run the following to install a recent version of Boost (the default version on your system

might be too old), as well as cmph (for CompactPT), irstlm (language model from FBK,

required to pass the regression tests), and xmlrpc-c (for moses server). By default, these

will be installed in ./opt in your working directory:

make -f contrib/Makefiles/install-dependencies.gmake

4. To compile moses, run

./compile.sh [additional options]

1http://www.statmt.org/moses/?n=Moses.MailingLists

2http://www.statmt.org/moses/?n=Moses.Packages


Popular additional bjam options (called from within ./compile.sh and ./run-regtests.sh):

•--prefix=/destination/path --install-scripts

... to install Moses somewhere else on your system

•--with-mm

...to enable suffix array-based phrase tables3

Note that you’ll still need a word aligner; this is not built automatically

Running regression tests (Advanced; for Moses developers; normal users won’t need this)

To compile and run the regression tests all in one go, run

./run-regtests.sh [additional options]

Regression testing is only of interest for people who are actively making changes in the Moses

codebase. If you are just using Moses to run MT experiments, there’s no point in running

regression tests, unless you want to check that your current version of Moses is working as

expected. However, you can also check your version against the daily regression tests here4.

If you run your own regression tests, sometimes Moses will fail them even when everything

is working correctly, because different compilers produce slightly different executables that

might produce slightly different output because they make different kinds of rounding errors.
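The rounding issue can be illustrated with a small comparison helper in Python. This is not how the Moses regression tests compare their output; it is only a sketch of why an exact string comparison is brittle and how a numeric tolerance behaves. The function name and the tolerance values are assumptions made for the example.

```python
import math


def outputs_match(expected_line, actual_line, rel_tol=1e-5):
    """Compare two output lines field by field, allowing small numeric drift."""
    exp_fields = expected_line.split()
    act_fields = actual_line.split()
    if len(exp_fields) != len(act_fields):
        return False
    for e, a in zip(exp_fields, act_fields):
        if e == a:
            continue
        try:
            # Both fields must parse as numbers and agree within the tolerance.
            if not math.isclose(float(e), float(a), rel_tol=rel_tol, abs_tol=1e-9):
                return False
        except ValueError:
            # At least one field is not a number and the strings differ.
            return False
    return True


print(outputs_match("the house ||| -4.123456", "the house ||| -4.123457"))  # prints: True
print(outputs_match("the house ||| -4.1", "the house ||| -4.2"))            # prints: False
```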

Manually installing Boost

Boost 1.48 has a serious bug which breaks Moses compilation. Unfortunately, some Linux

distributions (e.g. Ubuntu 12.04) have broken versions of the Boost library. In these cases, you

must download and compile Boost yourself.

These are the exact commands I (Hieu) use to compile boost:

wget https://dl.bintray.com/boostorg/release/1.64.0/source/boost_1_64_0.tar.gz

tar zxvf boost_1_64_0.tar.gz

cd boost_1_64_0/

./bootstrap.sh

./b2 -j4 --prefix=$PWD --libdir=$PWD/lib64 --layout=system link=static install || echo FAILURE

This creates the library files in the directory lib64, NOT in the system directory. Therefore, you don’t

need to be system admin/root to run this. However, you will need to tell Moses where to find

boost, which is explained below.

Once boost is installed, you can then compile Moses. However, you must tell Moses where

boost is with the --with-boost flag. This is the exact command I use to compile Moses:

3https://ufal.mff.cuni.cz/pbml/104/art-germann.pdf

4http://statmt.org/moses/cruise


./bjam --with-boost=~/workspace/temp/boost_1_64_0 -j4

2.1.2 Compiling Moses directly with bjam

You may need to do this if

1. compile.sh doesn’t work for you, for example,

i. you’re using OSX

ii. you don’t have all the prerequisites installed on your system so you want to compile Moses with a reduced number of features

2. You want more control over exactly what options and features you want

To compile with the bare minimum of features:

./bjam -j4

If you have compiled boost manually, then tell bjam where it is:

./bjam --with-boost=~/workspace/temp/boost_1_64_0 -j8

If you have compiled the cmph library manually:

./bjam --with-cmph=/Users/hieu/workspace/cmph-2.0

If you have compiled the xmlrpc-c library manually:

./bjam --with-xmlrpc-c=/Users/hieu/workspace/xmlrpc-c/xmlrpc-c-1.33.17

If you have compiled the IRSTLM library manually:


./bjam --with-irstlm=/Users/hieu/workspace/irstlm/irstlm-5.80.08/trunk

This is the exact command I (Hieu) used on Linux:

./bjam --with-boost=/home/s0565741/workspace/boost/boost_1_57_0 --with-cmph=/home/s0565741/workspace/cmph-2.0 --with-irstlm=/home/s0565741/workspace/irstlm-code --with-xmlrpc-c=/home/s0565741/workspace/xmlrpc-c/xmlrpc-c-1.33.17 -j12

Compiling on OSX

Recent versions of OSX ship with the clang C/C++ compiler, rather than gcc. When compiling with

bjam, you must add the following:

./bjam toolset=clang

This is the exact command I (Hieu) use on OSX Yosemite:

./bjam --with-boost=/Users/hieu/workspace/boost/boost_1_59_0.clang/ --with-cmph=/Users/hieu/workspace/cmph-2.0 --with-xmlrpc-c=/Users/hieu/workspace/xmlrpc-c/xmlrpc-c-1.33.17 --with-irstlm=/Users/hieu/workspace/irstlm/irstlm-5.80.08/trunk --with-mm --with-probing-pt -j5 toolset=clang -q -d2

You also need to add this argument when manually compiling boost. This is the exact command I use:

./b2 -j8 --prefix=$PWD --libdir=$PWD/lib64 --layout=system link=static toolset=clang install || echo FAILURE
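If the same build script is shared between Linux and OSX machines, the toolset=clang argument can be chosen automatically. This is a small sketch under the assumption that `uname -s` reports Darwin on OSX; the function name toolset_arg is hypothetical.

```shell
# Sketch: choose the extra bjam/b2 argument from the kernel name.
# With no argument, the kernel name is taken from `uname -s`.
toolset_arg() {
    case "${1:-$(uname -s)}" in
        Darwin) echo "toolset=clang" ;;
        *)      echo "" ;;
    esac
}

# Print (rather than run) the resulting command for this machine.
echo "./bjam -j4 $(toolset_arg)"
```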

2.1.3 Other software to install

Word Alignment

Moses requires a word alignment tool, such as giza++5, mgiza6, or Fast Align7.

I (Hieu) use MGIZA because it is multi-threaded and gives generally good results; however, I’ve also heard good things about Fast Align. You can find instructions to compile them here8.

5http://code.google.com/p/giza-pp/

6https://github.com/moses-smt/mgiza

7https://github.com/clab/fast_align/blob/master/README.md

8http://www.statmt.org/moses/?n=Moses.ExternalTools#ntoc3

Language Model Creation

Moses includes the KenLM language model creation program, lmplz9.

You can also create language models with IRSTLM10 and SRILM11. Please read this12 if you

want to compile IRSTLM. Language model toolkits perform two main tasks: training and

querying. You can train a language model with any of them, produce an ARPA file, and query

with a different one. To train a model, just call the relevant script.

If you want to use SRILM or IRSTLM to query the language model, then they need to be linked

with Moses. For IRSTLM, you first need to compile IRSTLM then use the --with-irstlm switch

to compile Moses with IRSTLM. This is the exact command I used:

./bjam --with-irstlm=/home/s0565741/workspace/temp/irstlm-5.80.03 -j4

Personally, I only use IRSTLM as a query tool in this way if the LM n-gram order is over 7. In most situations, I use KenLM because KenLM is multi-threaded and faster.
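Since the n-gram order influences which toolkit is used for querying, it can help to read the order from the ARPA file itself. The following is a minimal sketch, assuming the usual ARPA header in which a \data\ section lists one "ngram N=count" line per order before the \1-grams: section; the function name arpa_order is hypothetical and not part of Moses or any of the toolkits.

```shell
# Sketch: print the highest n-gram order declared in an ARPA file header.
# Only "ngram N=count" lines before the \1-grams: section are inspected.
arpa_order() {
    awk -F'[ =]' '
        /^ngram [0-9]+=/ { if ($2 + 0 > max) max = $2 + 0 }
        /^\\1-grams:/    { exit }   # header is over, stop reading
        END              { print max + 0 }
    ' "$1"
}

# Usage (lm.arpa is a placeholder file name):
#   arpa_order lm.arpa
```

The function prints 0 if no header lines are found, for example when it is given a binary language model instead of an ARPA file.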

2.1.4 Platforms

The primary development platform for Moses is Linux, and this is the recommended platform since you will find it easier to get support for it. However, Moses does work on other platforms:

2.1.5 OSX Installation

Mac OSX is widely used by Moses developers and everything should run fine. Installation is

the same as for Linux.

Out of the box, Mac OSX lacks many programs that are critical to Moses, or ships different versions of standard GNU programs. For example, split, sort and zcat are incompatible BSD versions rather than GNU versions.

Therefore, Moses has been tested with Mac OSX with Mac Ports. Make sure you have this

installed on your machine. Success has also been reported with brew installation. Do note,

however, that you will need to install xmlrpc-c independently, and then compile with bjam

using the --with-xmlrpc-c=/usr/local flag (where /usr/local/ is the default location of the

xmlrpc-c installation).
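To see which flavour of a tool comes first on your PATH, one heuristic is to look for the string "GNU" in its --version output, which GNU coreutils programs such as sort and split print. This is only a sketch: the function names are hypothetical, and a tool that fails or prints nothing for --version is simply reported as not GNU.

```shell
# Heuristic: succeed if the version text on stdin mentions GNU.
mentions_gnu() {
    grep -q 'GNU'
}

# Report, for each named tool, whether `tool --version` mentions GNU.
# stdin is redirected so a tool that ignores --version cannot block.
report_gnu_tools() {
    for tool in "$@"; do
        if "$tool" --version 2>/dev/null </dev/null | mentions_gnu; then
            echo "$tool: GNU"
        else
            echo "$tool: not GNU (or not found)"
        fi
    done
}

report_gnu_tools sort split
```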

9http://www.statmt.org/moses/?n=FactoredTraining.BuildingLanguageModel#ntoc19

10http://sourceforge.net/projects/irstlm/

11http://www.speech.sri.com/projects/srilm/download.html

12http://www.statmt.org/moses/?n=FactoredTraining.BuildingLanguageModel#ntoc4

2.1.6 Linux Installation

Debian

Install the following packages using the command

su

apt-get install [package name]

Packages:

git

subversion

make

libtool

gcc

g++

libboost-dev

tcl-dev

tk-dev

zlib1g-dev

libbz2-dev

python-dev

Ubuntu

Install the following packages using the command

sudo apt-get install [package name]

Packages:

g++

git

subversion

automake

libtool

zlib1g-dev

libboost-all-dev

libbz2-dev

liblzma-dev

python-dev

graphviz

imagemagick

make

cmake

libgoogle-perftools-dev (for tcmalloc)

autoconf

doxygen
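apt-get accepts several package names at once, so the Ubuntu list above can be installed with a single command. The sketch below only prints that command for review; the function name ubuntu_install_cmd is hypothetical, and the package names are copied from the list above.

```shell
# Sketch: print one apt-get command covering the Ubuntu package list.
ubuntu_install_cmd() {
    # Package names copied from the Ubuntu list in this section.
    pkgs="g++ git subversion automake libtool zlib1g-dev libboost-all-dev \
libbz2-dev liblzma-dev python-dev graphviz imagemagick make cmake \
libgoogle-perftools-dev autoconf doxygen"
    echo "sudo apt-get install $pkgs"
}

ubuntu_install_cmd
```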

Fedora / Redhat / CentOS / Scientific Linux

Install the following packages using the command

su

yum install [package name]

Packages:

git

subversion

make

automake

cmake

libtool

gcc-c++

zlib-devel

python-devel

bzip2-devel

boost-devel

ImageMagick

cpan

expat-devel

In addition, you have to install some perl packages:

cpan XML::Twig

cpan Sort::Naturally

2.1.7 Windows Installation

Moses can run on Windows 10 with the Ubuntu 16.04 subsystem. Installation is exactly the same as for Ubuntu. (Are you running it on Windows? If so, please give us feedback on how it works.)

Install the following packages via Cygwin:

boost

automake

libtool

cmake

gcc-g++

python

git

subversion

openssh

make

tcl

zlib0

zlib-devel

libbz2_devel

unzip

libexpat-devel

libcrypt-devel

Also, the nist-bleu script needs a perl module called XML::Twig13. Install the following perl packages:

cpan

cpan XML::Twig

cpan Sort::Naturally

2.1.8 Run Moses for the first time

Download the sample models and extract them into your working directory:

cd ~/mosesdecoder

wget http://www.statmt.org/moses/download/sample-models.tgz

tar xzf sample-models.tgz

cd sample-models

13http://search.cpan.org/~mirod/XML-Twig-3.44/Twig.pm

Run the decoder

cd ~/mosesdecoder/sample-models

~/mosesdecoder/bin/moses -f phrase-model/moses.ini < phrase-model/in > out

If everything worked out right, this should translate the sentence "das ist ein kleines haus" (in

the file in) as "this is a small house" (in the file out).
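The result can also be checked from a script. A minimal sketch, assuming the output file holds a single line; the function name check_translation is hypothetical, and leading and trailing whitespace is trimmed before comparing in case the output is padded.

```shell
# Sketch: compare the decoder output file with the expected sentence,
# ignoring leading and trailing whitespace.
check_translation() {
    expected=$1
    file=$2
    actual=$(sed -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' "$file")
    if [ "$actual" = "$expected" ]; then
        echo "OK"
    else
        echo "MISMATCH: got '$actual'"
        return 1
    fi
}

# Usage, after running the decoder as shown above:
#   check_translation "this is a small house" out
```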

Note that the configuration file moses.ini in each directory is set to use the KenLM language model toolkit by default. If you prefer to use IRSTLM14, then edit the language model entry in moses.ini, replacing KENLM with IRSTLM. You will also have to compile with ./bjam --with-irstlm, adding the full path of your IRSTLM installation.

Moses also supports SRILM and RandLM language models. See here15 for more details.

2.1.9 Chart Decoder

The chart decoder is part of the same executable as of version 3.0.

You can run the chart demos from the sample-models directory as follows:

~/mosesdecoder/bin/moses -f string-to-tree/moses.ini < string-to-tree/in > out.stt

~/mosesdecoder/bin/moses -f tree-to-tree/moses.ini < tree-to-tree/in.xml > out.ttt

The expected result of the string-to-tree demo is

this is a small house

2.1.10 Next Steps

Why not try to build a Baseline (Section 2.3) translation system with freely available data?

2.1.11 bjam options

This is a list of options to bjam. On a system with Boost installed in a standard path, none

should be required, but you may want additional functionality or control.

14http://hlt.fbk.eu/en/irstlm

15http://www.statmt.org/moses/?n=FactoredTraining.BuildingLanguageModel#ntoc1

Optional packages

Language models

In addition to KenLM and ORLM (which are always compiled):

--with-irstlm=/path/to/irstlm Path to IRSTLM installation

--with-randlm=/path/to/randlm Path to RandLM installation

--with-nplm=/path/to/nplm Path to NPLM installation

--with-srilm=/path/to/srilm Path to SRILM installation.

If your SRILM install is non-standard, use these options:

--with-srilm-dynamic Link against srilm.so.

--with-srilm-arch=arch Override the arch setting given by /path/to/srilm/sbin/machine-type

Other packages

--with-boost=/path/to/boost If Boost is in a non-standard location, specify it here. This directory is expected to contain include and lib or lib64.

--with-xmlrpc-c=/path/to/xmlrpc-c Specify a non-standard libxmlrpc-c installation path. Used

by Moses server.

--with-cmph=/path/to/cmph Path where CMPH is installed. Used by the compact phrase table

and compact lexical reordering table.

--without-tcmalloc Disable thread-caching malloc.

--with-regtest=/path/to/moses-regression-tests Run the regression tests using data from this directory. Tests can be downloaded from https://github.com/moses-smt/moses-regression-tests.

Installation

--prefix=/path/to/prefix sets the install prefix [default is source root].

--bindir=/path/to/prefix/bin sets the bin directory [default is PREFIX/bin]

--libdir=/path/to/prefix/lib sets the lib directory [default is PREFIX/lib]

--includedir=/path/to/prefix/include installs headers. Does not install if missing. No argument defaults to PREFIX/include.

--install-scripts=/path/to/scripts copies scripts into a directory. Does not install if missing. No argument defaults to PREFIX/scripts.

--git appends the git revision to the prefix directory.

Build Options

By default, the build is multi-threaded, optimized, and statically linked.

threading=single|multi controls threading (default multi)

variant=release|debug|profile builds optimized (default), for debug, or for profiling

link=static|shared controls preferred linking (default static)

--static forces static linking (the default will fall back to shared)

debug-symbols=on|off include (default) or exclude debugging information also known as -g

--notrace compiles without TRACE macros

--enable-boost-pool uses Boost pools for the memory SCFG table

--enable-mpi switch on mpi (used for MIRA - one of the tuning algorithms)

--without-libsegfault does not link with libSegFault

--max-kenlm-order maximum ngram order that kenlm can process (default 6)

--max-factors maximum number of factors (default 4)

--unlabelled-source ignore source nonterminals (if you only use hierarchical or string-to-tree

models without source syntax)

Controlling the Build

-q quit on the first error

-a to build from scratch

-j$NCPUS to compile in parallel

--clean to clean
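The NCPUS value used with -j is not set by bjam itself, so it has to come from your shell. A small sketch for deriving it, assuming at least one of getconf, nproc or sysctl is available; the function name ncpus is hypothetical, and the value falls back to 1 if none of them works.

```shell
# Sketch: print the number of online CPUs, trying common tools in turn.
ncpus() {
    n=$(getconf _NPROCESSORS_ONLN 2>/dev/null) ||
    n=$(nproc 2>/dev/null) ||
    n=$(sysctl -n hw.ncpu 2>/dev/null) ||
    n=1
    echo "${n:-1}"
}

NCPUS=$(ncpus)
# Print (rather than run) a parallel build that stops at the first error.
echo "./bjam -j$NCPUS -q"
```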

2.2 Building with Eclipse

There is a video showing you how to set up Moses with Eclipse: How to compile Moses with Eclipse (https://vimeo.com/129306919).

Moses comes with Eclipse project files for some of the C++ executables. Currently, there are

project files for

* moses (decoder)

* moses-cmd (decoder)

* extract

* extract-rules

* extract-ghkm

* server

* ...

The Eclipse build is used primarily for development and debugging. It is not optimized and

doesn’t have many of the options available in the bjam build.

The advantage of using Eclipse is that it offers code completion and a GUI debugging environment.

NB. The recent update of Mac OSX replaces g++ with clang. Eclipse doesn’t yet fully function with clang. Therefore, you should not use the Eclipse build with any OSX version higher than 10.8 (Mountain Lion).

Follow these instructions to build with Eclipse:

* Use the version of Eclipse for C++. Works (at least) with Eclipse Kepler and Luna.

* Get the Moses source code

git clone git@github.com:moses-smt/mosesdecoder.git

cd mosesdecoder

* Create a softlink to Boost (and optionally to XMLRPC-C lib if you want to compile the moses server) in the Moses root directory

eg. ln -s ~/workspace/boost_x_xx_x boost