GStreamer Application Development Manual (1.5.0.1)

Wim Taymans

Steve Baker

Andy Wingo

Ronald S. Bultje

Stefan Kost

This material may be distributed only subject to the terms and conditions set forth in the Open Publication License, v1.0 or later (the latest version is presently available at http://www.opencontent.org/opl.shtml).

Table of Contents

Foreword.................................................................................................................................................. vii

Introduction............................................................................................................................................ viii

1. Who should read this manual? ................................................................................................... viii

2. Preliminary reading.................................................................................................................... viii

3. Structure of this manual ............................................................................................................. viii

I. About GStreamer ...................................................................................................................................x

1. What is GStreamer? .......................................................................................................................1

2. Design principles............................................................................................................................4

2.1. Clean and powerful............................................................................................................4

2.2. Object oriented ..................................................................................................................4

2.3. Extensible ..........................................................................................................................4

2.4. Allow binary-only plugins.................................................................................................4

2.5. High performance..............................................................................................................5

2.6. Clean core/plugins separation............................................................................................5

2.7. Provide a framework for codec experimentation...............................................................5

3. Foundations....................................................................................................................................6

3.1. Elements ............................................................................................................................6

3.2. Pads....................................................................................................................................6

3.3. Bins and pipelines..............................................................................................................7

3.4. Communication .................................................................................................................7

II. Building an Application .......................................................................................................................9

4. Initializing GStreamer..................................................................................................................10

4.1. Simple initialization.........................................................................................................10

4.2. The GOption interface .....................................................................................................11

5. Elements.......................................................................................................................................13

5.1. What are elements?..........................................................................................................13

5.2. Creating a GstElement ..................................................................................................15

5.3. Using an element as a GObject......................................................................................16

5.4. More about element factories ..........................................................................................17

5.5. Linking elements .............................................................................................................19

5.6. Element States .................................................................................................................20

6. Bins ..............................................................................................................................................22

6.1. What are bins...................................................................................................................22

6.2. Creating a bin ..................................................................................................................22

6.3. Custom bins .....................................................................................................................23

6.4. Bins manage states of their children................................................................................24

7. Bus ...............................................................................................................................................25

7.1. How to use a bus..............................................................................................................25

7.2. Message types..................................................................................................................28

8. Pads and capabilities ....................................................................................................................30

8.1. Pads..................................................................................................................................30

8.2. Capabilities of a pad ........................................................................................................33

8.3. What capabilities are used for .........................................................................................35

8.4. Ghost pads .......................................................................................................................38

9. Buffers and Events .......................................................................................................................41

iii

9.1. Buffers .............................................................................................................................41

9.2. Events ..............................................................................................................................41

10. Your first application..................................................................................................................43

10.1. Hello world....................................................................................................................43

10.2. Compiling and Running helloworld.c ...........................................................................46

10.3. Conclusion.....................................................................................................................47

III. Advanced GStreamer concepts........................................................................................................48

11. Position tracking and seeking ....................................................................................................49

11.1. Querying: getting the position or length of a stream.....................................................49

11.2. Events: seeking (and more) ...........................................................................................50

12. Metadata.....................................................................................................................................52

12.1. Metadata reading ...........................................................................................................52

12.2. Tag writing.....................................................................................................................54

13. Interfaces....................................................................................................................................56

13.1. The URI interface ..........................................................................................................56

13.2. The Color Balance interface ..........................................................................................56

13.3. The Video Overlay interface..........................................................................................56

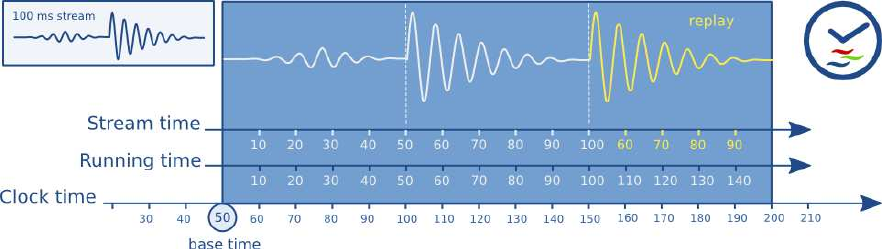

14. Clocks and synchronization in GStreamer.................................................................................58

14.1. Clock running-time........................................................................................................58

14.2. Buffer running-time.......................................................................................................59

14.3. Buffer stream-time.........................................................................................................59

14.4. Time overview ...............................................................................................................60

14.5. Clock providers .............................................................................................................60

14.6. Latency ..........................................................................................................................61

15. Buffering ....................................................................................................................................63

15.1. Stream buffering............................................................................................................64

15.2. Download buffering.......................................................................................................65

15.3. Timeshift buffering........................................................................................................65

15.4. Live buffering ................................................................................................................66

15.5. Buffering strategies........................................................................................................66

16. Dynamic Controllable Parameters .............................................................................................71

16.1. Getting Started...............................................................................................................71

16.2. Setting up parameter control .........................................................................................71

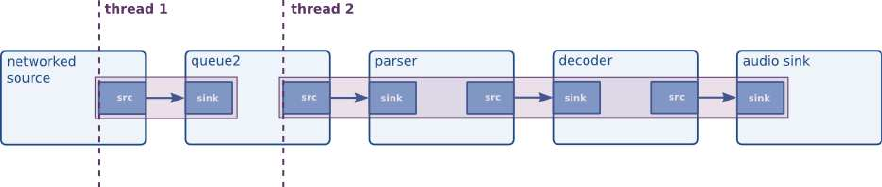

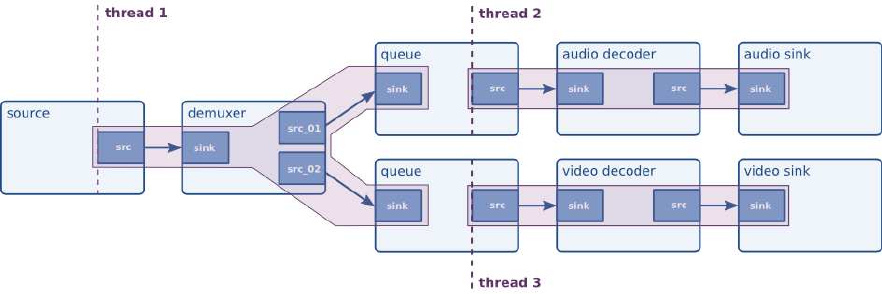

17. Threads.......................................................................................................................................73

17.1. Scheduling in GStreamer...............................................................................................73

17.2. Configuring Threads in GStreamer ...............................................................................73

17.3. When would you want to force a thread?......................................................................79

18. Autoplugging .............................................................................................................................81

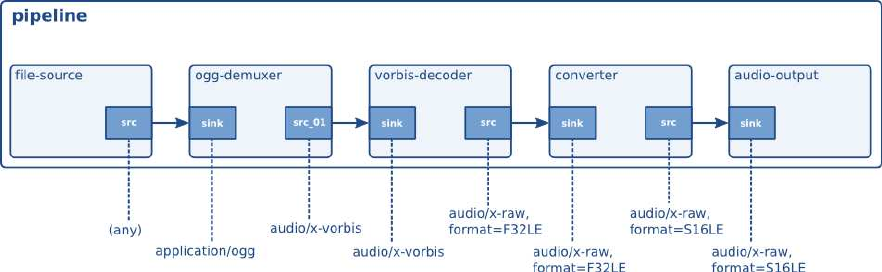

18.1. Media types as a way to identify streams ......................................................................81

18.2. Media stream type detection..........................................................................................82

18.3. Dynamically autoplugging a pipeline............................................................................84

19. Pipeline manipulation ................................................................................................................85

19.1. Using probes..................................................................................................................85

19.2. Manually adding or removing data from/to a pipeline..................................................93

19.3. Forcing a format ..........................................................................................................101

19.4. Dynamically changing the pipeline.............................................................................103

iv

IV. Higher-level interfaces for GStreamer applications.....................................................................111

20. Playback Components..............................................................................................................112

20.1. Playbin.........................................................................................................................112

20.2. Decodebin....................................................................................................................113

20.3. URIDecodebin.............................................................................................................116

20.4. Playsink .......................................................................................................................116

V. Appendices.........................................................................................................................................120

21. Programs ..................................................................................................................................121

21.1. gst-launch ...................................................................................................................121

21.2. gst-inspect...................................................................................................................124

22. Compiling.................................................................................................................................128

22.1. Embedding static elements in your application...........................................................128

23. Things to check when writing an application ..........................................................................130

23.1. Good programming habits...........................................................................................130

23.2. Debugging ...................................................................................................................130

23.3. Conversion plugins ......................................................................................................131

23.4. Utility applications provided with GStreamer.............................................................131

24. Porting 0.8 applications to 0.10 ...............................................................................................133

24.1. List of changes.............................................................................................................133

25. Porting 0.10 applications to 1.0 ...............................................................................................135

25.1. List of changes.............................................................................................................135

26. Integration ................................................................................................................................138

26.1. Linux and UNIX-like operating systems.....................................................................138

26.2. GNOME desktop .........................................................................................................138

26.3. KDE desktop ...............................................................................................................140

26.4. OS X ............................................................................................................................140

26.5. Windows......................................................................................................................140

27. Licensing advisory ...................................................................................................................143

27.1. How to license the applications you build with GStreamer ........................................143

28. Quotes from the Developers.....................................................................................................145

v

List of Figures

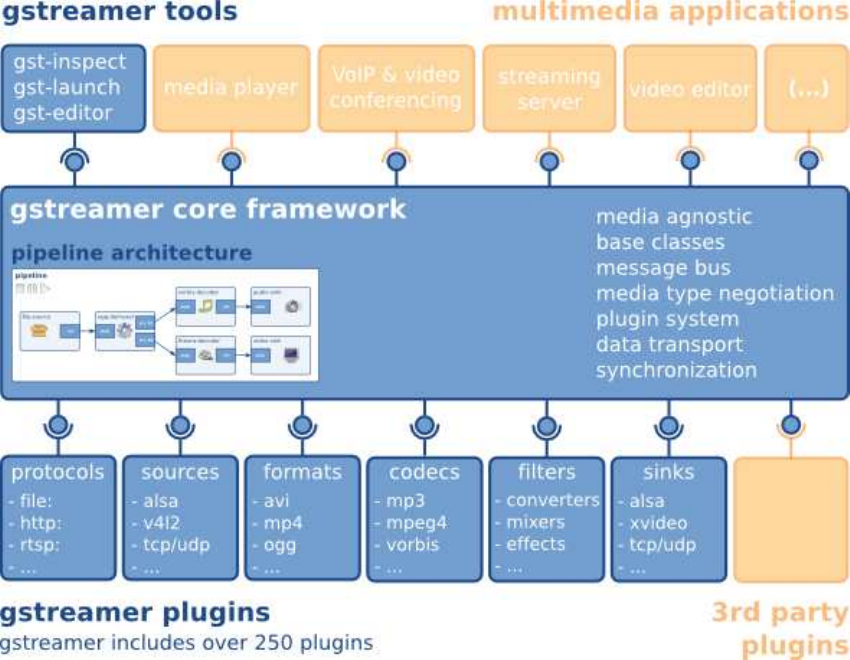

1-1. Gstreamer overview..............................................................................................................................2

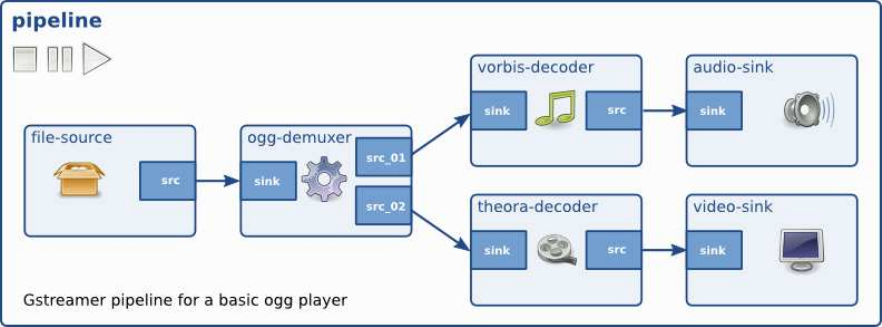

3-1. GStreamer pipeline for a simple ogg player.........................................................................................7

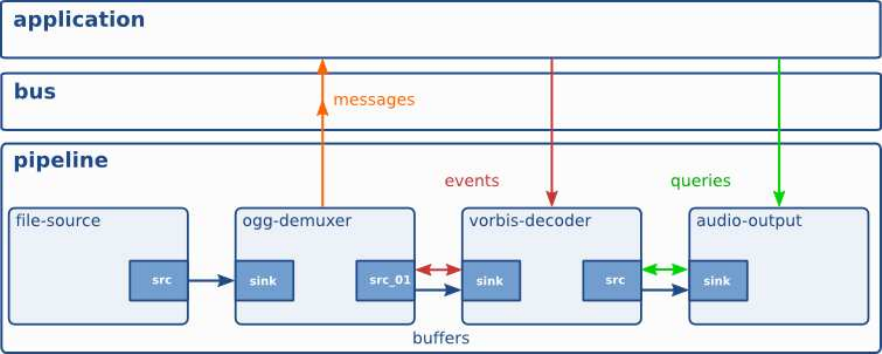

3-2. GStreamer pipeline with different communication flows.....................................................................8

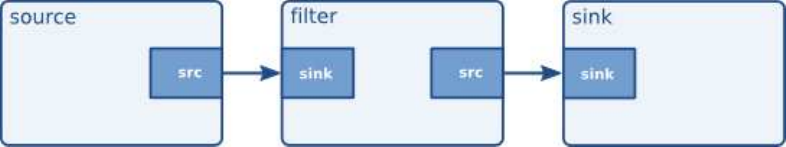

5-1. Visualisation of a source element.......................................................................................................13

5-2. Visualisation of a filter element..........................................................................................................14

5-3. Visualisation of a filter element with more than one output pad........................................................14

5-4. Visualisation of a sink element...........................................................................................................14

5-5. Visualisation of three linked elements................................................................................................19

6-1. Visualisation of a bin with some elements in it..................................................................................22

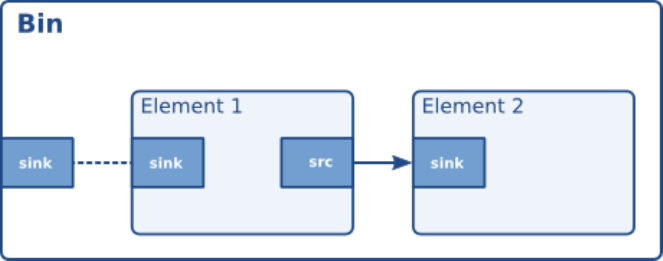

8-1. Visualisation of a GstBin (http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstBin.html)

38

8-2. Visualisation of a GstBin (http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstBin.html)

38

10-1. The "hello world" pipeline ...............................................................................................................46

14-1. GStreamer clock and various times..................................................................................................60

17-1. Data buffering, from a networked source .........................................................................................79

17-2. Synchronizing audio and video sinks...............................................................................................79

18-1. The Hello world pipeline with media types .....................................................................................81

vi

Foreword

GStreamer is an extremely powerful and versatile framework for creating streaming media applications.

Many of the virtues of the GStreamer framework come from its modularity: GStreamer can seamlessly

incorporate new plugin modules. But because modularity and power often come at a cost of greater

complexity, writing new applications is not always easy.

This guide is intended to help you understand the GStreamer framework (version 1.5.0.1) so you can

develop applications based on it. The first chapters will focus on development of a simple audio player,

with much effort going into helping you understand GStreamer concepts. Later chapters cover more advanced topics related to media playback, as well as other forms of media processing (capture, editing, etc.).

Introduction

1. Who should read this manual?

This book is about GStreamer from an application developer’s point of view; it describes how to write a

GStreamer application using the GStreamer libraries and tools. For an explanation about writing plugins,

we suggest the Plugin Writers Guide

(http://gstreamer.freedesktop.org/data/doc/gstreamer/head/pwg/html/index.html).

Also check out the other documentation available on the GStreamer web site

(http://gstreamer.freedesktop.org/documentation/).

2. Preliminary reading

In order to understand this manual, you need to have a basic understanding of the C language.

Since GStreamer adheres to the GObject programming model, this guide also assumes that you

understand the basics of GObject (http://library.gnome.org/devel/gobject/stable/) and glib

(http://library.gnome.org/devel/glib/stable/) programming, in particular:

•GObject instantiation

•GObject properties (set/get)

•GObject casting

•GObject referencing/dereferencing

•glib memory management

•glib signals and callbacks

•glib main loop

3. Structure of this manual

To help you navigate through this guide, it is divided into several large parts. Each part addresses a

particular broad topic concerning GStreamer application development. The parts of this guide are laid out

in the following order:

Part I gives you an overview of GStreamer, its design principles and foundations.

Part II covers the basics of GStreamer application programming. At the end of this part, you should be able to build your own audio player using GStreamer.

In Part III, we will move on to advanced subjects which make GStreamer stand out from its competitors. We will discuss application-pipeline interaction using dynamic parameters and interfaces, as well as threading and threaded pipelines, scheduling, and clocks (and synchronization). Most of those topics are not just there to introduce you to their API, but primarily to give a deeper insight into solving application programming problems with GStreamer and understanding their concepts.

Next, in Part IV, we will go into higher-level programming APIs for GStreamer. You don’t need to know all the details from the previous parts to understand this, but you will need to understand basic GStreamer concepts. We will discuss, among other things, XML, playbin and autopluggers.

Finally, in Part V, you will find miscellaneous information on integrating with GNOME, KDE, OS X or Windows, some debugging help and general tips to improve and simplify GStreamer programming.

I. About GStreamer

This part gives you an overview of the technologies described in this book.

Chapter 1. What is GStreamer?

GStreamer is a framework for creating streaming media applications. The fundamental design comes

from the video pipeline at Oregon Graduate Institute, as well as some ideas from DirectShow.

GStreamer’s development framework makes it possible to write any type of streaming multimedia

application. The GStreamer framework is designed to make it easy to write applications that handle audio

or video or both. It isn’t restricted to audio and video, and can process any kind of data flow. The pipeline

design is made to have little overhead above what the applied filters induce. This makes GStreamer a

good framework for designing even high-end audio applications which put high demands on latency.

One of the most obvious uses of GStreamer is using it to build a media player. GStreamer already

includes components for building a media player that can support a very wide variety of formats,

including MP3, Ogg/Vorbis, MPEG-1/2, AVI, Quicktime, mod, and more. GStreamer, however, is much

more than just another media player. Its main advantages are that the pluggable components can be

mixed and matched into arbitrary pipelines so that it’s possible to write a full-fledged video or audio

editing application.

The framework is based on plugins that will provide the various codec and other functionality. The

plugins can be linked and arranged in a pipeline. This pipeline defines the flow of the data. Pipelines can

also be edited with a GUI editor and saved as XML so that pipeline libraries can be made with a

minimum of effort.

The GStreamer core function is to provide a framework for plugins, data flow and media type

handling/negotiation. It also provides an API to write applications using the various plugins.

Specifically, GStreamer provides

•an API for multimedia applications

•a plugin architecture

•a pipeline architecture

•a mechanism for media type handling/negotiation

•a mechanism for synchronization

•over 250 plug-ins providing more than 1000 elements

•a set of tools

GStreamer plug-ins can be classified into

•protocol handling

•sources: for audio and video (involves protocol plugins)

•formats: parsers, formatters, muxers, demuxers, metadata, subtitles

•codecs: coders and decoders

•filters: converters, mixers, effects, ...

•sinks: for audio and video (involves protocol plugins)

Figure 1-1. GStreamer overview

GStreamer is packaged into

•gstreamer: the core package

•gst-plugins-base: an essential exemplary set of elements

•gst-plugins-good: a set of good-quality plug-ins under LGPL

•gst-plugins-ugly: a set of good-quality plug-ins that might pose distribution problems

•gst-plugins-bad: a set of plug-ins that need more quality

•gst-libav: a set of plug-ins that wrap libav for decoding and encoding

•a few other packages

Chapter 2. Design principles

2.1. Clean and powerful

GStreamer provides a clean interface to:

•The application programmer who wants to build a media pipeline. The programmer can use an

extensive set of powerful tools to create media pipelines without writing a single line of code.

Performing complex media manipulations becomes very easy.

•The plugin programmer. Plugin programmers are provided a clean and simple API to create

self-contained plugins. An extensive debugging and tracing mechanism has been integrated.

GStreamer also comes with an extensive set of real-life plugins that serve as examples too.

2.2. Object oriented

GStreamer adheres to GObject, the GLib 2.0 object model. A programmer familiar with GLib 2.0 or

GTK+ will be comfortable with GStreamer.

GStreamer uses the mechanism of signals and object properties.

All objects can be queried at runtime for their various properties and capabilities.

GStreamer intends to be similar in programming methodology to GTK+. This applies to the object

model, ownership of objects, reference counting, etc.

2.3. Extensible

All GStreamer Objects can be extended using the GObject inheritance methods.

All plugins are loaded dynamically and can be extended and upgraded independently.

2.4. Allow binary-only plugins

Plugins are shared libraries that are loaded at runtime. Since all the properties of the plugin can be set

using the GObject properties, there is no need (and in fact no way) to have any header files installed for

the plugins.

Special care has been taken to make plugins completely self-contained. All relevant aspects of plugins

can be queried at run-time.

2.5. High performance

High performance is obtained by:

•using GLib’s GSlice allocator

•extremely light-weight links between plugins. Data can travel the pipeline with minimal overhead.

Data passing between plugins only involves a pointer dereference in a typical pipeline.

•providing a mechanism to directly work on the target memory. A plugin can for example directly write

to the X server’s shared memory space. Buffers can also point to arbitrary memory, such as a sound

card’s internal hardware buffer.

•refcounting and copy on write minimize usage of memcpy. Sub-buffers efficiently split buffers into

manageable pieces.

•dedicated streaming threads, with scheduling handled by the kernel.

•allowing hardware acceleration by using specialized plugins.

•using a plugin registry with the specifications of the plugins so that the plugin loading can be delayed

until the plugin is actually used.
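The reference-counting and copy-on-write point in the list above can be illustrated with a small standalone sketch. This is a toy model in plain C, not GStreamer code: ToyBuffer and the toy_buffer_* functions are hypothetical names invented for this example. It only shows the general idea that sharing a buffer costs a counter increment, and that bytes are copied only when a writer does not hold the sole reference.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Toy reference-counted buffer; NOT the real GstBuffer (hypothetical type). */
typedef struct {
    int refcount;
    size_t size;
    unsigned char *data;
} ToyBuffer;

/* Allocate a zero-filled buffer owned by a single reference. */
ToyBuffer *toy_buffer_new(size_t size) {
    ToyBuffer *buf = malloc(sizeof *buf);
    assert(buf != NULL);
    buf->refcount = 1;
    buf->size = size;
    buf->data = calloc(size ? size : 1, 1);
    assert(buf->data != NULL);
    return buf;
}

/* Sharing a buffer only bumps the counter; no bytes are copied. */
ToyBuffer *toy_buffer_ref(ToyBuffer *buf) {
    buf->refcount++;
    return buf;
}

/* Drop one reference and free the buffer when the last owner lets go. */
void toy_buffer_unref(ToyBuffer *buf) {
    if (--buf->refcount == 0) {
        free(buf->data);
        free(buf);
    }
}

/* Copy on write: a sole owner may write in place; otherwise take a
 * private copy and release our share of the original. */
ToyBuffer *toy_buffer_make_writable(ToyBuffer *buf) {
    if (buf->refcount == 1)
        return buf;
    ToyBuffer *copy = toy_buffer_new(buf->size);
    memcpy(copy->data, buf->data, buf->size);
    toy_buffer_unref(buf);
    return copy;
}
```

A caller that wants to modify data it received would first call toy_buffer_make_writable() and then write through the returned pointer. GStreamer's own buffer API offers a call with a similar purpose, gst_buffer_make_writable(); see the API reference for its actual semantics, which this sketch does not try to reproduce.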

2.6. Clean core/plugins separation

The core of GStreamer is essentially media-agnostic. It only knows about bytes and blocks, and only

contains basic elements. The core of GStreamer is functional enough to even implement low-level

system tools, like cp.

All of the media handling functionality is provided by plugins external to the core. These tell the core

how to handle specific types of media.

2.7. Provide a framework for codec experimentation

GStreamer also wants to be an easy framework where codec developers can experiment with different

algorithms, speeding up the development of open and free multimedia codecs like those developed by the

Xiph.Org Foundation (http://www.xiph.org) (such as Theora and Vorbis).

Chapter 3. Foundations

This chapter introduces the basic concepts of GStreamer. The rest of this guide assumes that you understand them.

3.1. Elements

An element is the most important class of objects in GStreamer. You will usually create a chain of

elements linked together and let data flow through this chain of elements. An element has one specific

function, which can be the reading of data from a file, decoding of this data or outputting this data to

your sound card (or anything else). By chaining together several such elements, you create a pipeline that

can do a specific task, for example media playback or capture. GStreamer ships with a large collection of

elements by default, making the development of a large variety of media applications possible. If needed,

you can also write new elements. That topic is explained in great detail in the GStreamer Plugin Writer’s

Guide.
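To make the idea of a chain of single-purpose elements concrete, here is a minimal standalone sketch in plain C. It does not use the GStreamer API: ToyStage, toy_stage_link() and toy_chain_push() are hypothetical names made up for illustration, and a "sample" is reduced to a single int. The only point is that each stage does one job and hands its result to the next stage downstream.

```c
#include <assert.h>
#include <stddef.h>

/* Toy element; NOT the real GstElement (hypothetical type).
 * Each stage does one job and passes the result downstream. */
typedef struct ToyStage {
    const char *name;
    int (*process)(int sample);  /* the stage's single function */
    struct ToyStage *next;       /* downstream neighbour, NULL at the end */
} ToyStage;

/* Two trivial example "filters". */
int toy_double(int sample) { return sample * 2; }
int toy_add_one(int sample) { return sample + 1; }

/* Link upstream to downstream; returns downstream so calls can be chained. */
ToyStage *toy_stage_link(ToyStage *upstream, ToyStage *downstream) {
    assert(upstream != NULL && downstream != NULL);
    upstream->next = downstream;
    return downstream;
}

/* Push one sample into the chain starting at first and return
 * what comes out at the far end. */
int toy_chain_push(const ToyStage *first, int sample) {
    for (const ToyStage *s = first; s != NULL; s = s->next)
        sample = s->process(sample);
    return sample;
}
```

With a "gain" stage using toy_double linked to an "offset" stage using toy_add_one, pushing the value 5 into the first stage yields 11. Real GStreamer elements exchange buffers through pads and run in streaming threads, which this sketch deliberately leaves out.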

3.2. Pads

Pads are an element’s inputs and outputs, where you can connect other elements. They are used to negotiate

links and data flow between elements in GStreamer. A pad can be viewed as a “plug” or “port” on an

element where links may be made with other elements, and through which data can flow to or from those

elements. Pads have specific data handling capabilities: a pad can restrict the type of data that flows

through it. Links are only allowed between two pads when the allowed data types of the two pads are

compatible. Data types are negotiated between pads using a process called caps negotiation. Data types

are described as a GstCaps.

An analogy may be helpful here. A pad is similar to a plug or jack on a physical device. Consider, for

example, a home theater system consisting of an amplifier, a DVD player, and a (silent) video projector.

Linking the DVD player to the amplifier is allowed because both devices have audio jacks, and linking

the projector to the DVD player is allowed because both devices have compatible video jacks. Links

between the projector and the amplifier may not be made because the projector and amplifier have

different types of jacks. Pads in GStreamer serve the same purpose as the jacks in the home theater

system.

For the most part, all data in GStreamer flows one way through a link between elements. Data flows out

of one element through one or more source pads, and elements accept incoming data through one or

more sink pads. Source and sink elements have only source and sink pads, respectively. Data usually

means buffers (described by the GstBuffer

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/gstreamer-GstBuffer.html)

object) and events (described by the GstEvent

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/gstreamer-GstEvent.html)

object).

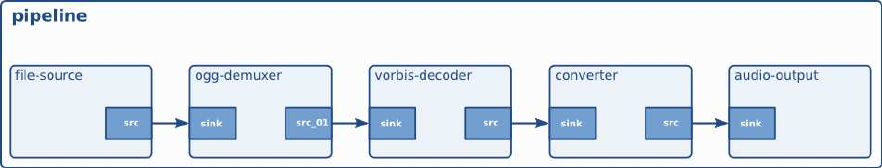

3.3. Bins and pipelines

A bin is a container for a collection of elements. Since bins are subclasses of elements themselves, you

can mostly control a bin as if it were an element, thereby abstracting away a lot of complexity for your

application. You can, for example, change the state of all elements in a bin by changing the state of that bin

itself. Bins also forward bus messages from their contained children (such as error messages, tag

messages or EOS messages).

A pipeline is a top-level bin. It provides a bus for the application and manages the synchronization for its

children. As you set it to PAUSED or PLAYING state, data flow will start and media processing will take

place. Once started, pipelines will run in a separate thread until you stop them or the end of the data

stream is reached.

Figure 3-1. GStreamer pipeline for a simple ogg player

3.4. Communication

GStreamer provides several mechanisms for communication and data exchange between the application

and the pipeline.

• buffers are objects for passing streaming data between elements in the pipeline. Buffers always travel
from sources to sinks (downstream).

• events are objects sent between elements or from the application to elements. Events can travel
upstream and downstream. Downstream events can be synchronised to the data flow.

• messages are objects posted by elements on the pipeline’s message bus, where they will be held for
collection by the application. Messages can be intercepted synchronously from the streaming thread
context of the element posting the message, but are usually handled asynchronously by the application
from the application’s main thread. Messages are used to transmit information such as errors, tags,
state changes, buffering state, redirects etc. from elements to the application in a thread-safe way.

• queries allow applications to request information such as duration or current playback position from
the pipeline. Queries are always answered synchronously. Elements can also use queries to request
information from their peer elements (such as the file size or duration). They can be used both ways
within a pipeline, but upstream queries are more common.

Figure 3-2. GStreamer pipeline with different communication flows

II. Building an Application

In these chapters, we will discuss the basic concepts of GStreamer and the most-used objects, such as

elements, pads and buffers. We will use a visual representation of these objects so that we can visualize

the more complex pipelines you will learn to build later on. You will get a first glance at the GStreamer

API, which should be enough for building elementary applications. Later on in this part, you will also

learn to build a basic command-line application.

Note that this part looks at the low-level API and concepts of GStreamer. Once you start building
applications, you might want to use higher-level APIs. Those will be discussed later on in this
manual.

Chapter 4. Initializing GStreamer

When writing a GStreamer application, you can simply include gst/gst.h to get access to the library

functions. Besides that, you will also need to initialize the GStreamer library.

4.1. Simple initialization

Before the GStreamer libraries can be used, gst_init has to be called from the main application. This

call will perform the necessary initialization of the library as well as parse the GStreamer-specific

command line options.

A typical program [1] would have code to initialize GStreamer that looks like this:

Example 4-1. Initializing GStreamer

#include <stdio.h>
#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  const gchar *nano_str;
  guint major, minor, micro, nano;

  /* initialize the library and let it parse its own command line options */
  gst_init (&argc, &argv);

  /* ask the library we are linked against for its version */
  gst_version (&major, &minor, &micro, &nano);

  if (nano == 1)
    nano_str = "(CVS)";
  else if (nano == 2)
    nano_str = "(Prerelease)";
  else
    nano_str = "";

  printf ("This program is linked against GStreamer %u.%u.%u %s\n",
          major, minor, micro, nano_str);

  return 0;
}

Use the GST_VERSION_MAJOR, GST_VERSION_MINOR and GST_VERSION_MICRO macros to

get the GStreamer version you are building against, or use the function gst_version to get the version

your application is linked against. GStreamer currently uses a scheme where versions with the same

major and minor versions are API- and ABI-compatible.

It is also possible to call the gst_init function with two NULL arguments, in which case no command

line options will be parsed by GStreamer.

4.2. The GOption interface

You can also use a GOption table to initialize your own parameters as shown in the next example:

Example 4-2. Initialisation using the GOption interface

#include <stdio.h>
#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  gboolean silent = FALSE;
  gchar *savefile = NULL;
  GOptionContext *ctx;
  GError *err = NULL;
  /* application-specific options */
  GOptionEntry entries[] = {
    { "silent", 's', 0, G_OPTION_ARG_NONE, &silent,
      "do not output status information", NULL },
    { "output", 'o', 0, G_OPTION_ARG_STRING, &savefile,
      "save xml representation of pipeline to FILE and exit", "FILE" },
    { NULL }
  };

  ctx = g_option_context_new ("- Your application");
  g_option_context_add_main_entries (ctx, entries, NULL);
  /* add the GStreamer options; parsing them also initializes GStreamer */
  g_option_context_add_group (ctx, gst_init_get_option_group ());
  if (!g_option_context_parse (ctx, &argc, &argv, &err)) {
    g_print ("Failed to initialize: %s\n", err->message);
    g_error_free (err);
    g_option_context_free (ctx);
    return 1;
  }
  g_option_context_free (ctx);

  printf ("Run me with --help to see the Application options appended.\n");

  return 0;
}

As shown in this fragment, you can use a GOption

(http://developer.gnome.org/glib/stable/glib-Commandline-option-parser.html) table to define your

application-specific command line options, and pass this table to the GLib initialization function along

with the option group returned from the function gst_init_get_option_group. Your application

options will be parsed in addition to the standard GStreamer options.

Notes

1. The code for this example is automatically extracted from the documentation and built under

tests/examples/manual in the GStreamer tarball.

Chapter 5. Elements

The most important object in GStreamer for the application programmer is the GstElement

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElement.html) object. An

element is the basic building block for a media pipeline. All the different high-level components you will

use are derived from GstElement. Every decoder, encoder, demuxer, video or audio output is in fact a

GstElement.

5.1. What are elements?

For the application programmer, elements are best visualized as black boxes. You put something in at
one end, the element does something with it, and something else comes out at the other end. For
a decoder element, for example, you’d put in encoded data, and the element would output decoded data.

In the next chapter (see Pads and capabilities), you will learn more about data input and output in

elements, and how you can set that up in your application.

5.1.1. Source elements

Source elements generate data for use by a pipeline, for example reading from disk or from a sound card.

Figure 5-1 shows how we will visualise a source element. We always draw a source pad to the right of

the element.

Figure 5-1. Visualisation of a source element

Source elements do not accept data; they only generate it. You can see this in the figure because the
element only has a source pad (on the right). A source pad can only generate data.

5.1.2. Filters, convertors, demuxers, muxers and codecs

Filters and filter-like elements have both input and output pads. They operate on data that they receive

on their input (sink) pads, and will provide data on their output (source) pads. Examples of such elements

are a volume element (filter), a video scaler (convertor), an Ogg demuxer or a Vorbis decoder.

Filter-like elements can have any number of source or sink pads. A video demuxer, for example, would

have one sink pad and several (1-N) source pads, one for each elementary stream contained in the

container format. Decoders, on the other hand, will only have one source pad and one sink pad.

Figure 5-2. Visualisation of a filter element

Figure 5-2 shows how we will visualise a filter-like element. This specific element has one source pad
and one sink pad. Sink pads, receiving input data, are depicted at the left of the element; source pads are
still on the right.

Figure 5-3. Visualisation of a filter element with more than one output pad

Figure 5-3 shows another filter-like element, this one having more than one output (source) pad. One
example of such an element is an Ogg demuxer for an Ogg stream containing both

audio and video. One source pad will contain the elementary video stream, another will contain the

elementary audio stream. Demuxers will generally fire signals when a new pad is created. The

application programmer can then handle the new elementary stream in the signal handler.

5.1.3. Sink elements

Sink elements are end points in a media pipeline. They accept data but do not produce anything. Disk

writing, soundcard playback, and video output would all be implemented by sink elements. Figure 5-4

shows a sink element.

Figure 5-4. Visualisation of a sink element

5.2. Creating a GstElement

The simplest way to create an element is to use gst_element_factory_make ()
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElementFactory.html#gst-element-factory-make).
This function takes a factory name and an element name for the newly created
element. The name of the element is something you can use later on to look up the element in a bin, for
example. The name will also be used in debug output. You can pass NULL as the name argument to get a
unique, default name.

When you don’t need the element anymore, you need to unref it using gst_object_unref ()
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstObject.html#gst-object-unref).
This decreases the reference count for the element by 1. An element has a refcount of 1 when it
gets created. An element gets destroyed completely when the refcount is decreased to 0.

The following example [1] shows how to create an element named source from the element factory named

fakesrc. It checks if the creation succeeded. After checking, it unrefs the element.

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElement *element;

  /* init GStreamer */
  gst_init (&argc, &argv);

  /* create element */
  element = gst_element_factory_make ("fakesrc", "source");
  if (!element) {
    g_print ("Failed to create element of type 'fakesrc'\n");
    return -1;
  }

  gst_object_unref (GST_OBJECT (element));

  return 0;
}

gst_element_factory_make is actually a shorthand for a combination of two functions. A GstElement
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElement.html) object is
created from a factory. To create the element, you have to get access to a GstElementFactory
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElementFactory.html)
object using a unique factory name. This is done with gst_element_factory_find ()
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElementFactory.html#gst-element-factory-find).

The following code fragment is used to get a factory that can be used to create the fakesrc element, a fake
data source. The function gst_element_factory_create ()
(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElementFactory.html#gst-element-factory-create)
will use the element factory to create an element with the given name.

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElementFactory *factory;
  GstElement *element;

  /* init GStreamer */
  gst_init (&argc, &argv);

  /* create element, method #2 */
  factory = gst_element_factory_find ("fakesrc");
  if (!factory) {
    g_print ("Failed to find factory of type 'fakesrc'\n");
    return -1;
  }
  element = gst_element_factory_create (factory, "source");
  if (!element) {
    g_print ("Failed to create element, even though its factory exists!\n");
    gst_object_unref (GST_OBJECT (factory));
    return -1;
  }

  gst_object_unref (GST_OBJECT (element));
  /* the factory returned by gst_element_factory_find () is ours to release */
  gst_object_unref (GST_OBJECT (factory));

  return 0;
}

5.3. Using an element as a GObject

A GstElement

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElement.html) can have

several properties which are implemented using standard GObject properties. The usual GObject

methods to query, set and get property values and GParamSpecs are therefore supported.

Every GstElement inherits at least one property from its parent GstObject: the "name" property. This

is the name you provide to the functions gst_element_factory_make () or

gst_element_factory_create (). You can get and set this property using the functions

gst_object_set_name and gst_object_get_name or use the GObject property mechanism as

shown below.

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElement *element;
  gchar *name;

  /* init GStreamer */
  gst_init (&argc, &argv);

  /* create element */
  element = gst_element_factory_make ("fakesrc", "source");
  if (!element) {
    g_print ("Failed to create element of type 'fakesrc'\n");
    return -1;
  }

  /* get name; g_object_get () hands back a copy that we must free */
  g_object_get (G_OBJECT (element), "name", &name, NULL);
  g_print ("The name of the element is '%s'.\n", name);
  g_free (name);

  gst_object_unref (GST_OBJECT (element));

  return 0;
}

Most plugins provide additional properties to provide more information about their configuration or to
configure the element. gst-inspect is a useful tool to query the properties of a particular element. It
also uses property introspection to give a short explanation of the function of each property and of
the parameter types and ranges it supports. See Section 23.4.2 in the appendix for details about
gst-inspect.

For more information about GObject properties we recommend you read the GObject manual

(http://developer.gnome.org/gobject/stable/rn01.html) and an introduction to The GLib Object system

(http://developer.gnome.org/gobject/stable/pt01.html).

A GstElement

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElement.html) also

provides various GObject signals that can be used as a flexible callback mechanism. Here, too, you can

use gst-inspect to see which signals a specific element supports. Together, signals and properties are the

most basic way in which elements and applications interact.

5.4. More about element factories

In the previous section, we briefly introduced the GstElementFactory

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstElementFactory.html)

object already as a way to create instances of an element. Element factories, however, are much more

than just that. Element factories are the basic types retrieved from the GStreamer registry; they describe

all plugins and elements that GStreamer can create. This means that element factories are useful for

automated element instancing, such as what autopluggers do, and for creating lists of available elements.

5.4.1. Getting information about an element using a factory

Tools like gst-inspect will provide some generic information about an element, such as the person that

wrote the plugin, a descriptive name (and a shortname), a rank and a category. The category can be used

to get the type of the element that can be created using this element factory. Examples of categories

include Codec/Decoder/Video (video decoder), Codec/Encoder/Video (video encoder),

Source/Video (a video generator), Sink/Video (a video output), and all these exist for audio as well,

of course. Then, there’s also Codec/Demuxer and Codec/Muxer and a whole lot more. gst-inspect will

give a list of all factories, and gst-inspect <factory-name> will list all of the above information, and a

lot more.

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElementFactory *factory;

  /* init GStreamer */
  gst_init (&argc, &argv);

  /* get factory */
  factory = gst_element_factory_find ("fakesrc");
  if (!factory) {
    g_print ("You don't have the 'fakesrc' element installed!\n");
    return -1;
  }

  /* display information */
  g_print ("The '%s' element is a member of the category %s.\n"
           "Description: %s\n",
           gst_plugin_feature_get_name (GST_PLUGIN_FEATURE (factory)),
           gst_element_factory_get_metadata (factory, GST_ELEMENT_METADATA_KLASS),
           gst_element_factory_get_metadata (factory, GST_ELEMENT_METADATA_DESCRIPTION));

  /* release the reference returned by gst_element_factory_find () */
  gst_object_unref (GST_OBJECT (factory));

  return 0;
}

You can use gst_registry_get_feature_list (gst_registry_get (), GST_TYPE_ELEMENT_FACTORY) to get a
list of all the element factories that GStreamer knows about.

5.4.2. Finding out what pads an element can contain

Perhaps the most powerful feature of element factories is that they contain a full description of the pads

that the element can generate, and the capabilities of those pads (in layman’s terms: what types of media

can stream over those pads), without actually having to load those plugins into memory. This can be used

to provide a codec selection list for encoders, or it can be used for autoplugging purposes for media

players. All current GStreamer-based media players and autopluggers work this way. We’ll look closer at

these features as we learn about GstPad and GstCaps in the next chapter: Pads and capabilities.

5.5. Linking elements

By linking a source element with zero or more filter-like elements and finally a sink element, you set up a

media pipeline. Data will flow through the elements. This is the basic concept of media handling in

GStreamer.

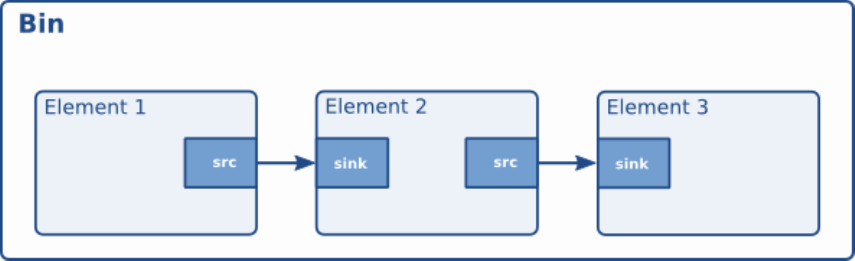

Figure 5-5. Visualisation of three linked elements

By linking these three elements, we have created a very simple chain of elements. The effect of this will

be that the output of the source element (“element1”) will be used as input for the filter-like element

(“element2”). The filter-like element will do something with the data and send the result to the final sink

element (“element3”).

Imagine the above graph as a simple Ogg/Vorbis audio decoder. The source is a disk source which reads
the file from disk. The second element is an Ogg/Vorbis audio decoder. The sink element is your

soundcard, playing back the decoded audio data. We will use this simple graph to construct an

Ogg/Vorbis player later in this manual.

In code, the above graph is written like this:

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElement *pipeline;
  GstElement *source, *filter, *sink;

  /* init */
  gst_init (&argc, &argv);

  /* create pipeline */
  pipeline = gst_pipeline_new ("my-pipeline");

  /* create elements */
  source = gst_element_factory_make ("fakesrc", "source");
  filter = gst_element_factory_make ("identity", "filter");
  sink = gst_element_factory_make ("fakesink", "sink");

  /* must add elements to pipeline before linking them */
  gst_bin_add_many (GST_BIN (pipeline), source, filter, sink, NULL);

  /* link */
  if (!gst_element_link_many (source, filter, sink, NULL)) {
    g_warning ("Failed to link elements!");
  }

[..]

}

For more specific behaviour, there are also the functions gst_element_link () and

gst_element_link_pads (). You can also obtain references to individual pads and link those using

various gst_pad_link_*() functions. See the API references for more details.

Important: you must add elements to a bin or pipeline before linking them, since adding an element to a

bin will disconnect any already existing links. Also, you cannot directly link elements that are not in the

same bin or pipeline; if you want to link elements or pads at different hierarchy levels, you will need to

use ghost pads (more about ghost pads later, see Section 8.4).

5.6. Element States

After being created, an element will not actually perform any actions yet. You need to change an element’s

state to make it do something. GStreamer knows four element states, each with a very specific meaning.

Those four states are:

• GST_STATE_NULL: this is the default state. No resources are allocated in this state, so, transitioning to
it will free all resources. The element must be in this state when its refcount reaches 0 and it is freed.

• GST_STATE_READY: in the ready state, an element has allocated all of its global resources, that is,
resources that can be kept within streams. You can think about opening devices, allocating buffers and
so on. However, the stream is not opened in this state, so the stream position is automatically zero. If
a stream was previously opened, it should be closed in this state, and position, properties and such
should be reset.

• GST_STATE_PAUSED: in this state, an element has opened the stream, but is not actively processing it.
An element is allowed to modify a stream’s position, read and process data and such to prepare for
playback as soon as state is changed to PLAYING, but it is not allowed to play the data which would
make the clock run. In summary, PAUSED is the same as PLAYING but without a running clock.
Elements going into the PAUSED state should prepare themselves for moving over to the PLAYING
state as soon as possible. Video or audio outputs would, for example, wait for data to arrive and queue
it so they can play it right after the state change. Also, video sinks can already play the first frame
(since this does not affect the clock yet). Autopluggers could use this same state transition to already
plug together a pipeline. Most other elements, such as codecs or filters, do not need to explicitly do
anything in this state, however.

• GST_STATE_PLAYING: in the PLAYING state, an element does exactly the same as in the PAUSED
state, except that the clock now runs.

You can change the state of an element using the function gst_element_set_state (). If you set an

element to another state, GStreamer will internally traverse all intermediate states. So if you set an

element from NULL to PLAYING, GStreamer will internally set the element to READY and PAUSED

in between.

When moved to GST_STATE_PLAYING, pipelines will process data automatically. They do not need to

be iterated in any form. Internally, GStreamer will start threads that take on this task. GStreamer

will also take care of switching messages from the pipeline’s thread into the application’s own thread, by

using a GstBus

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstBus.html). See Chapter 7

for details.

When you set a bin or pipeline to a certain target state, it will usually propagate the state change to all

elements within the bin or pipeline automatically, so it’s usually only necessary to set the state of the

top-level pipeline to start up the pipeline or shut it down. However, when adding elements dynamically to

an already-running pipeline, e.g. from within a "pad-added" signal callback, you need to set it to the

desired target state yourself using gst_element_set_state () or

gst_element_sync_state_with_parent ().

Notes

1. The code for this example is automatically extracted from the documentation and built under

tests/examples/manual in the GStreamer tarball.

Chapter 6. Bins

A bin is a container element. You can add elements to a bin. Since a bin is an element itself, a bin can be

handled in the same way as any other element. Therefore, the whole previous chapter (Elements) applies

to bins as well.

6.1. What are bins

Bins allow you to combine a group of linked elements into one logical element. You do not deal with the

individual elements anymore but with just one element, the bin. We will see that this is extremely

powerful when you are going to construct complex pipelines since it allows you to break up the pipeline

into smaller chunks.

The bin will also manage the elements contained in it. It will perform state changes on the elements as

well as collect and forward bus messages.

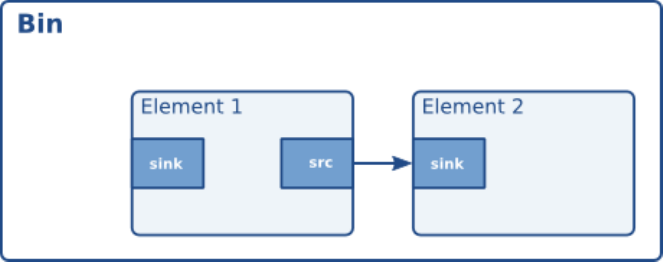

Figure 6-1. Visualisation of a bin with some elements in it

There is one specialized type of bin available to the GStreamer programmer:

• A pipeline: a generic container that manages the synchronization and bus messages of the contained
elements. The toplevel bin has to be a pipeline, so every application needs at least one of these.

6.2. Creating a bin

Bins are created in the same way that other elements are created, i.e. using an element factory. There are
also convenience functions available (gst_bin_new () and gst_pipeline_new ()). To add
elements to a bin or remove elements from a bin, you can use gst_bin_add () and gst_bin_remove ().
Note that the bin that you add an element to will take ownership of that element. If you destroy the
bin, the element will be dereferenced with it. If you remove an element from a bin, it will be
dereferenced automatically.

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElement *bin, *pipeline, *source, *sink;

  /* init */
  gst_init (&argc, &argv);

  /* create */
  pipeline = gst_pipeline_new ("my_pipeline");
  bin = gst_bin_new ("my_bin");
  source = gst_element_factory_make ("fakesrc", "source");
  sink = gst_element_factory_make ("fakesink", "sink");

  /* First add the elements to the bin */
  gst_bin_add_many (GST_BIN (bin), source, sink, NULL);
  /* add the bin to the pipeline */
  gst_bin_add (GST_BIN (pipeline), bin);

  /* link the elements */
  gst_element_link (source, sink);

[..]

}

There are various functions to look up elements in a bin. The most commonly used are

gst_bin_get_by_name () and gst_bin_get_by_interface (). You can also iterate over all

elements that a bin contains using the function gst_bin_iterate_elements (). See the API

references of GstBin

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstBin.html) for details.

6.3. Custom bins

The application programmer can create custom bins packed with elements to perform a specific task.

This allows you, for example, to write an Ogg/Vorbis decoder with just the following lines of code:

#include <gst/gst.h>

int
main (int   argc,
      char *argv[])
{
  GstElement *player;

  /* init */
  gst_init (&argc, &argv);

  /* create player */
  player = gst_element_factory_make ("oggvorbisplayer", "player");

  /* set the source audio file */
  g_object_set (player, "location", "helloworld.ogg", NULL);

  /* start playback */
  gst_element_set_state (GST_ELEMENT (player), GST_STATE_PLAYING);

[..]

}

(This is a silly example of course; there already exists a much more powerful and versatile custom bin

like this: the playbin element.)

Custom bins can be created with a plugin or from the application. You will find more information about

creating custom bins in the Plugin Writer’s Guide

(http://gstreamer.freedesktop.org/data/doc/gstreamer/head/pwg/html/index.html).

Examples of such custom bins are the playbin and uridecodebin elements from gst-plugins-base

(http://gstreamer.freedesktop.org/data/doc/gstreamer/head/gst-plugins-base-plugins/html/index.html).

6.4. Bins manage states of their children

Bins manage the state of all elements contained in them. If you set a bin (or a pipeline, which is a special

top-level type of bin) to a certain target state using gst_element_set_state (), it will make sure all

elements contained within it will also be set to this state. This means it’s usually only necessary to set the

state of the top-level pipeline to start up the pipeline or shut it down.

The bin will perform the state changes on all its children from the sink element to the source element.

This ensures that the downstream element is ready to receive data when the upstream element is brought

to PAUSED or PLAYING. Similarly when shutting down, the sink elements will be set to READY or

NULL first, which will cause the upstream elements to receive a FLUSHING error and stop the

streaming threads before the elements are set to the READY or NULL state.

Note, however, that if elements are added to a bin or pipeline that’s already running, e.g. from within a
"pad-added" signal callback, their state will not automatically be brought in line with the current state or
target state of the bin or pipeline they were added to. Instead, you need to set each one to the desired
target state yourself using gst_element_set_state () or gst_element_sync_state_with_parent ().

Chapter 7. Bus

A bus is a simple system that takes care of forwarding messages from the streaming threads to an

application in its own thread context. The advantage of a bus is that an application does not need to be

thread-aware in order to use GStreamer, even though GStreamer itself is heavily threaded.

Every pipeline contains a bus by default, so applications do not need to create one. The
only thing applications should do is set a message handler on the bus, which is similar to connecting
a signal handler to an object. When the mainloop is running, the bus will periodically be checked for
new messages, and the callback will be called when any message is available.

7.1. How to use a bus

There are two different ways to use a bus:

• Run a GLib/Gtk+ main loop (or iterate the default GLib main context yourself regularly) and attach
some kind of watch to the bus. This way the GLib main loop will check the bus for new messages and
notify you whenever there are messages.

Typically you would use gst_bus_add_watch () or gst_bus_add_signal_watch () in this
case.

To use a bus, attach a message handler to the bus of a pipeline using gst_bus_add_watch (). This
handler will be called whenever the pipeline emits a message to the bus. In this handler, check the
message type (see next section) and do something accordingly. The handler should return TRUE to
stay attached to the bus, or FALSE to be removed.

• Check for messages on the bus yourself. This can be done using gst_bus_peek () and/or
gst_bus_poll ().

#include <gst/gst.h>

static GMainLoop *loop;

static gboolean
my_bus_callback (GstBus     *bus,
                 GstMessage *message,
                 gpointer    data)
{
  g_print ("Got %s message\n", GST_MESSAGE_TYPE_NAME (message));

  switch (GST_MESSAGE_TYPE (message)) {
    case GST_MESSAGE_ERROR: {
      GError *err;
      gchar *debug;

      gst_message_parse_error (message, &err, &debug);
      g_print ("Error: %s\n", err->message);
      g_error_free (err);
      g_free (debug);

      g_main_loop_quit (loop);
      break;
    }
    case GST_MESSAGE_EOS:
      /* end-of-stream */
      g_main_loop_quit (loop);
      break;
    default:
      /* unhandled message */
      break;
  }

  /* we want to be notified again the next time there is a message
   * on the bus, so returning TRUE (FALSE means we want to stop watching
   * for messages on the bus and our callback should not be called again)
   */
  return TRUE;
}

gint
main (gint   argc,
      gchar *argv[])
{
  GstElement *pipeline;
  GstBus *bus;
  guint bus_watch_id;

  /* init */
  gst_init (&argc, &argv);

  /* create pipeline, add handler */
  pipeline = gst_pipeline_new ("my_pipeline");

  /* adds a watch for new message on our pipeline's message bus to
   * the default GLib main context, which is the main context that our
   * GLib main loop is attached to below
   */
  bus = gst_pipeline_get_bus (GST_PIPELINE (pipeline));
  bus_watch_id = gst_bus_add_watch (bus, my_bus_callback, NULL);
  gst_object_unref (bus);

[..]

  /* create a mainloop that runs/iterates the default GLib main context
   * (context NULL), in other words: makes the context check if anything
   * it watches for has happened. When a message has been posted on the
   * bus, the default main context will automatically call our
   * my_bus_callback() function to notify us of that message.
   * The main loop will be run until someone calls g_main_loop_quit()
   */
  loop = g_main_loop_new (NULL, FALSE);
  g_main_loop_run (loop);

  /* clean up */
  gst_element_set_state (pipeline, GST_STATE_NULL);
  gst_object_unref (pipeline);
  g_source_remove (bus_watch_id);
  g_main_loop_unref (loop);

  return 0;
}

It is important to know that the handler will be called in the thread context of the mainloop. This means

that the interaction between the pipeline and application over the bus is asynchronous, and thus not

suited for some real-time purposes, such as cross-fading between audio tracks, doing (theoretically)

gapless playback or video effects. All such things should be done in the pipeline context, which is easiest

by writing a GStreamer plug-in. It is very useful for its primary purpose, though: passing messages from

pipeline to application. The advantage of this approach is that all the threading that GStreamer does

internally is hidden from the application and the application developer does not have to worry about

thread issues at all.

Note that if you’re using the default GLib mainloop integration, you can, instead of attaching a watch,

connect to the “message” signal on the bus. This way you don’t have to switch() on all possible

message types; just connect to the interesting signals in form of “message::<type>”, where <type> is a

specific message type (see the next section for an explanation of message types).

The above snippet could then also be written as:

GstBus *bus;

[..]

bus = gst_pipeline_get_bus (GST_PIPELINE (pipeline));
gst_bus_add_signal_watch (bus);
g_signal_connect (bus, "message::error", G_CALLBACK (cb_message_error), NULL);
g_signal_connect (bus, "message::eos", G_CALLBACK (cb_message_eos), NULL);

[..]

If you aren’t using GLib mainloop, the asynchronous message signals won’t be available by default. You

can however install a custom sync handler that wakes up the custom mainloop and that uses

gst_bus_async_signal_func () to emit the signals. (see also documentation

(http://gstreamer.freedesktop.org/data/doc/gstreamer/stable/gstreamer/html/GstBus.html) for details)

7.2. Message types

GStreamer has a few pre-defined message types that can be passed over the bus. The messages are

extensible, however. Plug-ins can define additional messages, and applications can decide to either have

specific code for those or ignore them. All applications are strongly recommended to at least handle error

messages by providing visual feedback to the user.

All messages have a message source, type and timestamp. The message source can be used to see which

element emitted the message. For some messages, for example, only the ones emitted by the top-level

pipeline will be interesting to most applications (e.g. for state-change notifications). Below is a list of all

messages and a short explanation of what they do and how to parse message-specific content.

•Error, warning and information notifications: those are used by elements if a message should be shown

to the user about the state of the pipeline. Error messages are fatal and terminate the data-passing. The

error should be repaired to resume pipeline activity. Warnings are not fatal, but imply a problem

nevertheless. Information messages are for non-problem notifications. All those messages contain a

GError with the main error type and message, and optionally a debug string. Both can be extracted

using gst_message_parse_error (), gst_message_parse_warning () and gst_message_parse_info (). Both the

GError and the debug string should be freed after use.

•End-of-stream notification: this is emitted when the stream has ended. The state of the pipeline will

not change, but further media handling will stall. Applications can use this to skip to the next song in

their playlist. After end-of-stream, it is also possible to seek back in the stream. Playback will then

continue automatically. This message has no specific arguments.

•Tags: emitted when metadata was found in the stream. This can be emitted multiple times for a

pipeline (e.g. once for descriptive metadata such as artist name or song title, and another one for

stream-information, such as samplerate and bitrate). Applications should cache metadata internally.

gst_message_parse_tag () should be used to parse the taglist, which should be

gst_tag_list_unref ()’ed when no longer needed.

•State-changes: emitted after a successful state change. gst_message_parse_state_changed ()

can be used to parse the old and new state of this transition.

•Buffering: emitted during caching of network-streams. One can manually extract the progress (in

percent) from the message by extracting the “buffer-percent” property from the structure returned by

gst_message_get_structure (). See also Chapter 15.

•Element messages: these are special messages that are unique to certain elements and usually represent

additional features. The element’s documentation should mention in detail which element messages a

particular element may send. As an example, the ’qtdemux’ QuickTime demuxer element may send a

’redirect’ element message on certain occasions if the stream contains a redirect instruction.

•Application-specific messages: any information on those can be extracted by getting the message

structure (see above) and reading its fields. Usually these messages can safely be ignored.

Application messages are primarily meant for internal use in applications in case the application needs

to marshal information from some thread into the main thread. This is particularly useful when the

application is making use of element signals (as those signals will be emitted in the context of the

streaming thread).

Chapter 8. Pads and capabilities

As we have seen in Elements, the pads are the element’s interface to the outside world. Data streams

from one element’s source pad to another element’s sink pad. The specific type of media that the element

can handle will be exposed by the pad’s capabilities. We will talk more on capabilities later in this

chapter (see Section 8.2).

8.1. Pads

A pad type is defined by two properties: its direction and its availability. As we’ve mentioned before,

GStreamer defines two pad directions: source pads and sink pads. This terminology is defined from the

point of view of the element itself: elements receive data on their sink pads and generate data on their source

pads. Schematically, sink pads are drawn on the left side of an element, whereas source pads are drawn

on the right side of an element. In such graphs, data flows from left to right. 1

Pad directions are very simple compared to pad availability. A pad can have any of three availabilities:

always, sometimes and on request. The meaning of those three types is exactly what the names say: always pads

always exist, sometimes pads exist only in certain cases (and can disappear randomly), and on-request

pads appear only if explicitly requested by applications.

8.1.1. Dynamic (or sometimes) pads

Some elements might not have all of their pads when the element is created. This can happen, for

example, with an Ogg demuxer element. The element will read the Ogg stream and create dynamic pads

for each contained elementary stream (vorbis, theora) when it detects such a stream in the Ogg stream.

Likewise, it will delete the pad when the stream ends. This principle is very useful for demuxer elements,

for example.

Running gst-inspect oggdemux will show that the element has only one pad: a sink pad called ’sink’. The

other pads are “dormant”. You can see this in the pad template because there is an “Exists: Sometimes”

property. Depending on the type of Ogg file you play, the pads will be created. We will see that this is

very important when you are going to create dynamic pipelines. You can attach a signal handler to an

element to inform you when the element has created a new pad from one of its “sometimes” pad

templates. The following piece of code is an example of how to do this:

#include <gst/gst.h>

static void
cb_new_pad (GstElement *element,
            GstPad *pad,
            gpointer data)
{
  gchar *name;

  name = gst_pad_get_name (pad);
  g_print ("A new pad %s was created\n", name);
  g_free (name);

  /* here, you would setup a new pad link for the newly created pad */
  [..]
}

int
main (int argc,
      char *argv[])
{
  GstElement *pipeline, *source, *demux;
  GMainLoop *loop;

  /* init */
  gst_init (&argc, &argv);

  /* create elements */
  pipeline = gst_pipeline_new ("my_pipeline");
  source = gst_element_factory_make ("filesrc", "source");
  g_object_set (source, "location", argv[1], NULL);
  demux = gst_element_factory_make ("oggdemux", "demuxer");

  /* you would normally check that the elements were created properly */

  /* put together a pipeline */
  gst_bin_add_many (GST_BIN (pipeline), source, demux, NULL);
  gst_element_link_pads (source, "src", demux, "sink");

  /* listen for newly created pads */
  g_signal_connect (demux, "pad-added", G_CALLBACK (cb_new_pad), NULL);

  /* start the pipeline */
  gst_element_set_state (GST_ELEMENT (pipeline), GST_STATE_PLAYING);
  loop = g_main_loop_new (NULL, FALSE);
  g_main_loop_run (loop);

  [..]
}

It is not uncommon to add elements to the pipeline only from within the "pad-added" callback. If you do

this, don’t forget to set the state of the newly-added elements to the target state of the pipeline using

gst_element_set_state () or gst_element_sync_state_with_parent ().

8.1.2. Request pads

An element can also have request pads. These pads are not created automatically but are only created on

demand. This is very useful for multiplexers, aggregators and tee elements. Aggregators are elements

that merge the content of several input streams together into one output stream. Tee elements are the

reverse: they are elements that have one input stream and copy this stream to each of their output pads,

which are created on request. Whenever an application needs another copy of the stream, it can simply

request a new output pad from the tee element.

The following piece of code shows how you can request a new output pad from a “tee” element:

static void

some_function (GstElement *tee)

{