Pdi User Guide

pdi_user_guide

User Manual: Pdf

Open the PDF directly: View PDF ![]() .

.

Page Count: 262 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Contents

- Introduction

- Pentaho Data Integration Architecture

- Use Pentaho Data Integration

- Interface Perspectives

- Terminology and Basic Concepts

- Create Transformations

- Executing Transformations

- Working with the DI Repository

- Reusing Transformation Flows with Mapping Steps

- Arguments, Parameters, and Variables

- Rapid Analysis Schema Prototyping

- Using the SQL Editor

- Using the Database Explorer

- Unsupported Databases

- Performance Monitoring and Logging

- Working with Big Data and Hadoop in PDI

- Pentaho MapReduce Workflow

- PDI Hadoop Job Workflow

- Hadoop to PDI Data Type Conversion

- Hadoop Hive-Specific SQL Limitations

- Big Data Tutorials

- Implement Data Services with the Thin Kettle JDBC Driver

- Transactional Databases and Job Rollback

- Interacting With Web Services

- Scheduling and Scripting PDI Content

- Transformation Step Reference

- Big Data

- Input

- Cassandra Input

- CSV File Input

- Data Grid

- De-serialize From File

- Email Messages Input

- ESRI Shapefile Reader

- Fixed File Input

- Generate Random Credit Card Numbers

- Generate Random Value

- Generate Rows

- Get Data From XML

- Get File Names

- Get Files Rows Count

- Get Repository Names

- Get Subfolder Names

- Get System Info

- Get Table Names

- Google Analytics Input

- Google Docs Input

- GZIP CSV Input

- HBase Input

- HL7 Input

- JMS Consumer

- JSON Input

- LDAP Input

- LDIF Input

- Load File Content In Memory

- Microsoft Access Input

- Microsoft Excel Input

- Mondrian Input

- MongoDB Input

- OLAP Input

- OpenERP Object Input

- Palo Cell Input

- Palo Dim Input

- Property Input

- Splunk Input

- RSS Input

- S3 CSV Input

- Salesforce Input

- SAP Input

- SAS Input

- Table Input

- Text File Input

- XBase Input

- XML Input Stream (StAX)

- YAML Input

- Output

- Automatic Documentation Output

- Cassandra Output

- Delete

- HBase Output

- Insert/Update

- JMS Producer

- JSON Output

- LDAP Output

- Microsoft Access Output

- Microsoft Excel Output

- Microsoft Excel Writer

- MongoDB Output

- OpenERP Object Input

- Palo Cell Output

- Palo Dim Output

- Pentaho Reporting Output

- Properties Output

- RSS Output

- S3 File Output

- Salesforce Delete

- Salesforce Insert

- Salesforce Update

- Salesforce Upsert

- Serialize to File

- Splunk Output

- SQL File Output

- Synchronize After Merge

- Table Output

- Text File Output

- Update

- XML Output

- Transform

- Add a Checksum

- Add Constants

- Add Sequence

- Add Value Fields Changing Sequence

- Add XML

- Calculator

- Closure Generator

- Example Plugin

- Get ID From Slave Server

- Number Range

- Replace in String

- Row Denormalizer

- Row Flattener

- Row Normalizer

- Select Values

- Set Field Value

- Set Field Value to a Constant

- Sort Rows

- Split Field to Rows

- Split Fields

- String Operations

- Strings Cut

- Unique Rows

- Unique Rows (HashSet)

- Value Mapper

- XSL Transformation

- Utility

- Flow

- Scripting

- Lookup

- Joins

- Data Warehouse

- Validation

- Statistics

- Palo

- Job

- Mapping

- Bulk Loading

- Inline

- Data Mining Steps

- Job Entry Reference

- About PDI Marketplace

- Troubleshooting

- Changing the Pentaho Data Integration Home Directory Location (.kettle folder)

- Kitchen can't read KJBs from a Zip export

- Generating a DI Repository Configuration Without Running Spoon

- Unable to Get List of Repositories Exception

- Database Locks When Reading and Updating From A Single Table

- Force PDI to use DATE instead of TIMESTAMP in Parameterized SQL Queries

- PDI Does Not Recognize Changes Made To a Table

- Using ODBC

- Sqoop Import into Hive Fails

Create DI Solutions

This document supports Pentaho Business Analytics Suite 5.0 GA and Pentaho Data Integration 5.0 GA,

documentation revision February 3, 2014, copyright © 2014 Pentaho Corporation. No part may be reprinted without

written permission from Pentaho Corporation. All trademarks are the property of their respective owners.

Help and Support Resources

If you do not find answers to your quesions here, please contact your Pentaho technical support representative.

Support-related questions should be submitted through the Pentaho Customer Support Portal at

http://support.pentaho.com.

For information about how to purchase support or enable an additional named support contact, please contact your

sales representative, or send an email to sales@pentaho.com.

For information about instructor-led training, visit

http://www.pentaho.com/training.

Liability Limits and Warranty Disclaimer

The author(s) of this document have used their best efforts in preparing the content and the programs contained

in it. These efforts include the development, research, and testing of the theories and programs to determine their

effectiveness. The author and publisher make no warranty of any kind, express or implied, with regard to these

programs or the documentation contained in this book.

The author(s) and Pentaho shall not be liable in the event of incidental or consequential damages in connection

with, or arising out of, the furnishing, performance, or use of the programs, associated instructions, and/or claims.

Trademarks

Pentaho (TM) and the Pentaho logo are registered trademarks of Pentaho Corporation. All other trademarks are the

property of their respective owners. Trademarked names may appear throughout this document. Rather than list

the names and entities that own the trademarks or insert a trademark symbol with each mention of the trademarked

name, Pentaho states that it is using the names for editorial purposes only and to the benefit of the trademark

owner, with no intention of infringing upon that trademark.

Third-Party Open Source Software

For a listing of open source software used by each Pentaho component, navigate to the folder that contains the

Pentaho component. Within that folder, locate a folder named licenses. The licenses folder contains HTML.files that

list the names of open source software, their licenses, and required attributions.

Contact Us

Global Headquarters Pentaho Corporation

Citadel International, Suite 340

5950 Hazeltine National Drive

Orlando, FL 32822

Phone: +1 407 812-OPEN (6736)

Fax: +1 407 517-4575

http://www.pentaho.com

Sales Inquiries: sales@pentaho.com

| TOC | 3

Contents

Introduction..............................................................................................................................11

Pentaho Data Integration Architecture.....................................................................................12

Use Pentaho Data Integration................................................................................................. 13

Create a Connection to the DI Repository..................................................................................................13

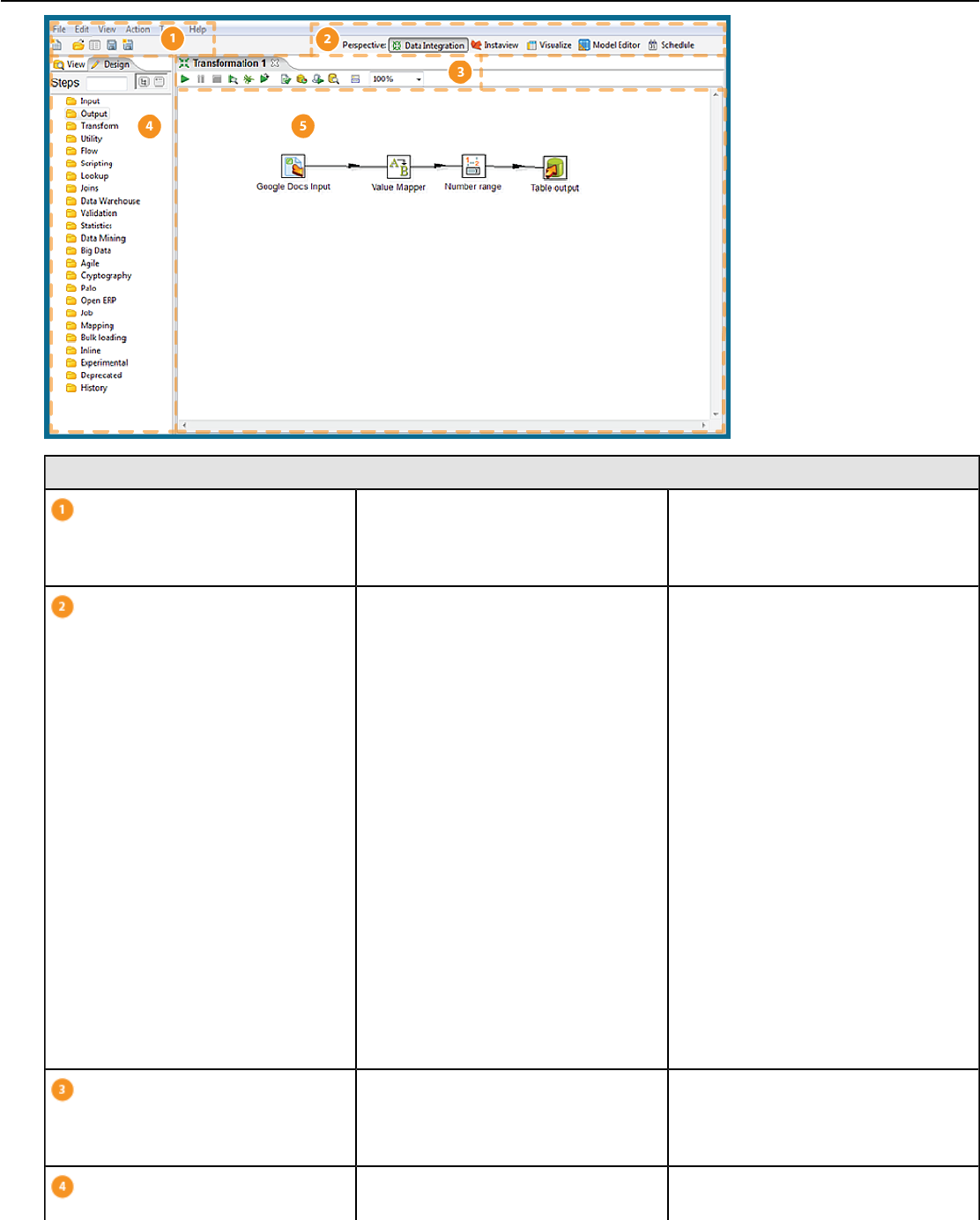

Interface Perspectives............................................................................................................. 14

Use Perspectives Within Spoon................................................................................................................. 14

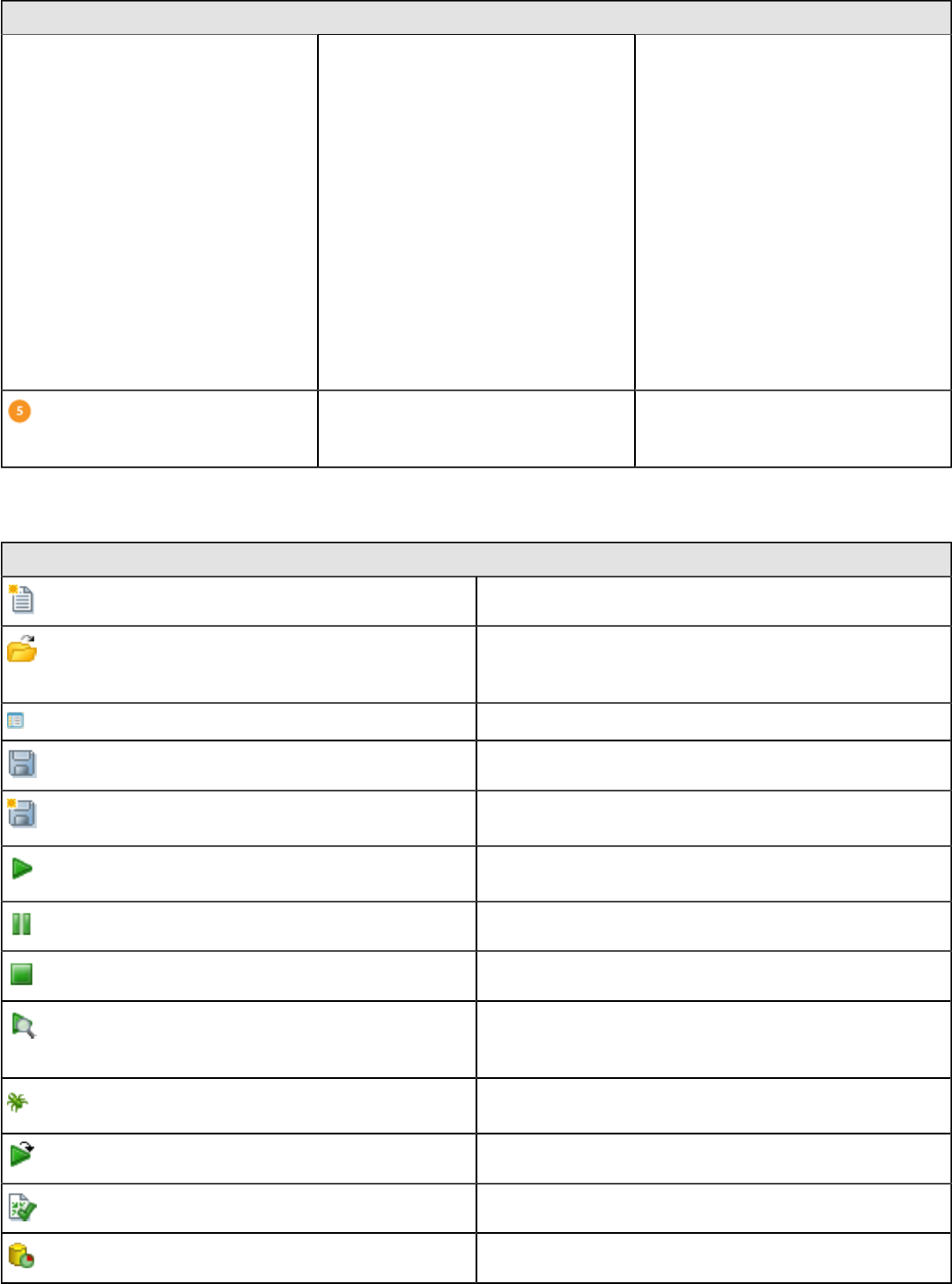

Tour Spoon.................................................................................................................................................15

VFS File Dialogues in Spoon...........................................................................................................17

Model Perspective...................................................................................................................................... 17

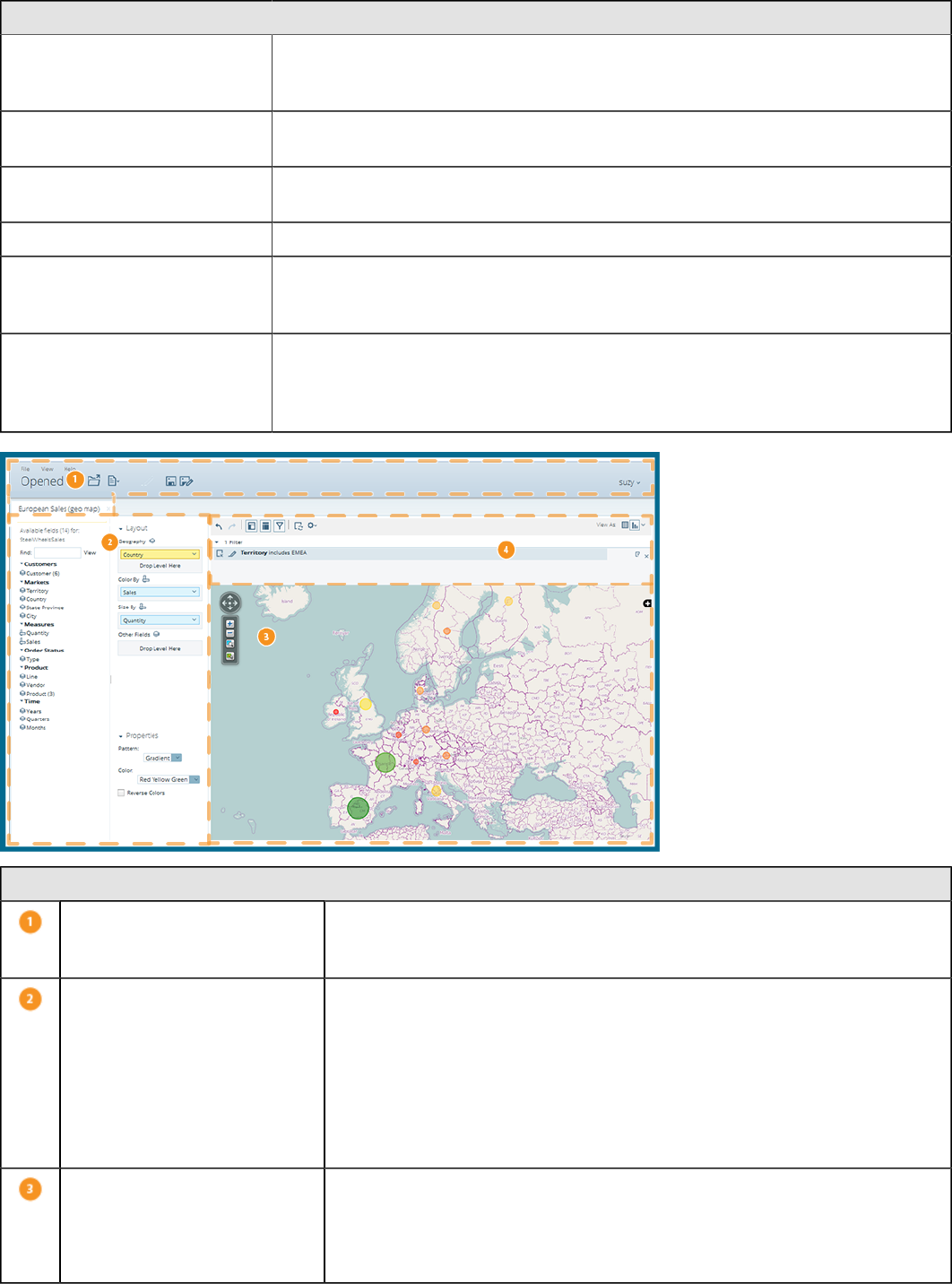

Visualization Perspective............................................................................................................................18

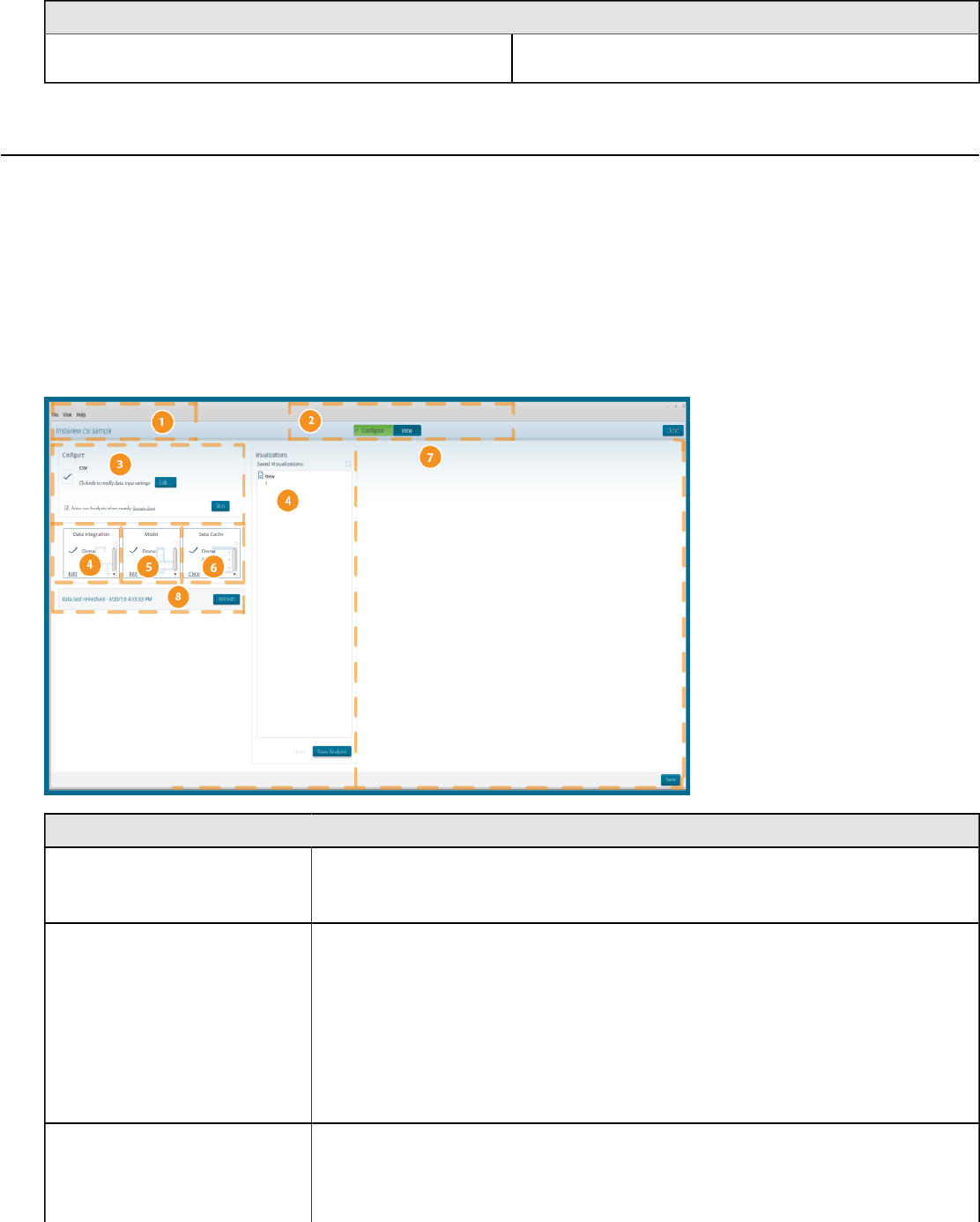

Instaview Perspective.................................................................................................................................19

Customizing the Spoon Interface................................................................................................................21

Terminology and Basic Concepts............................................................................................24

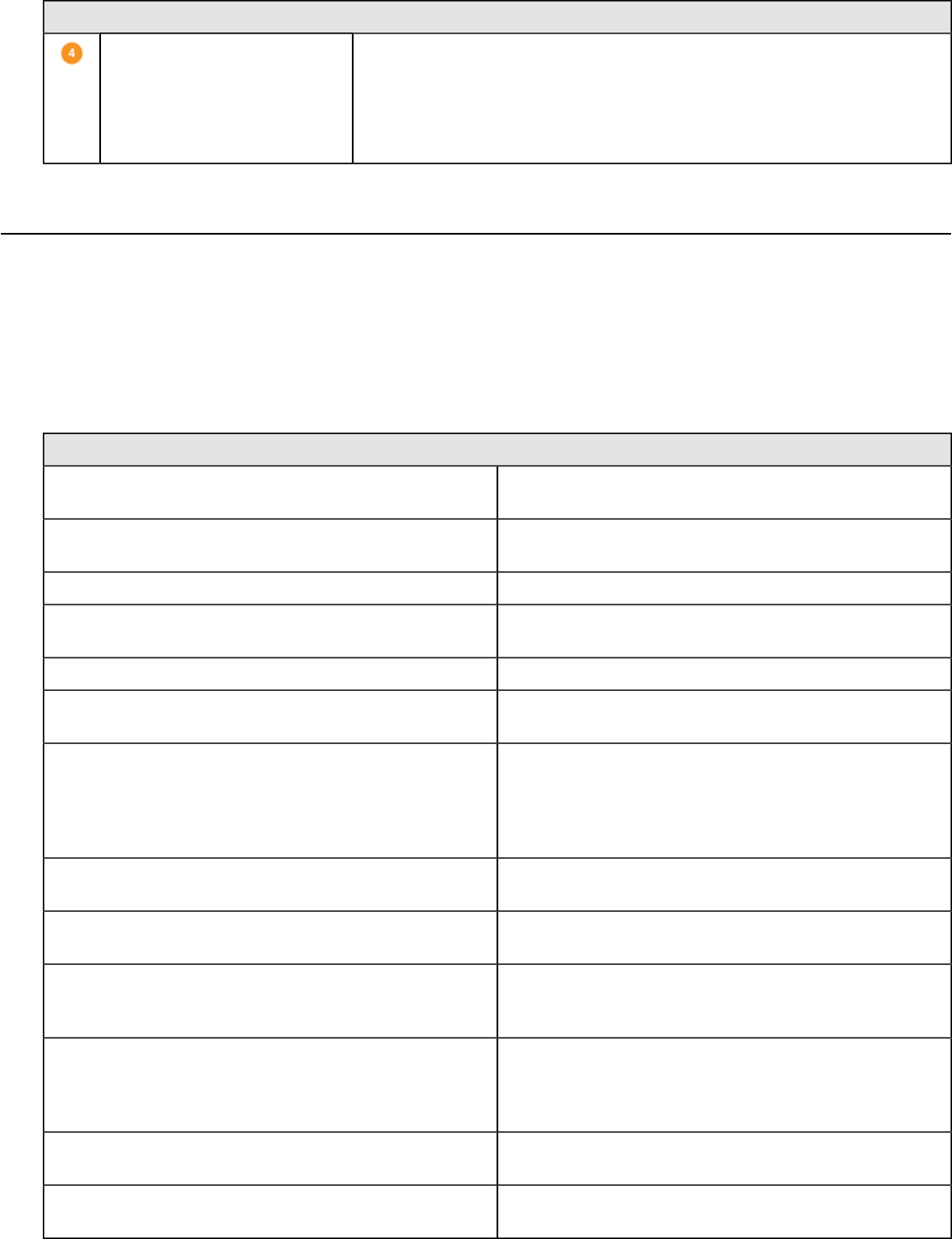

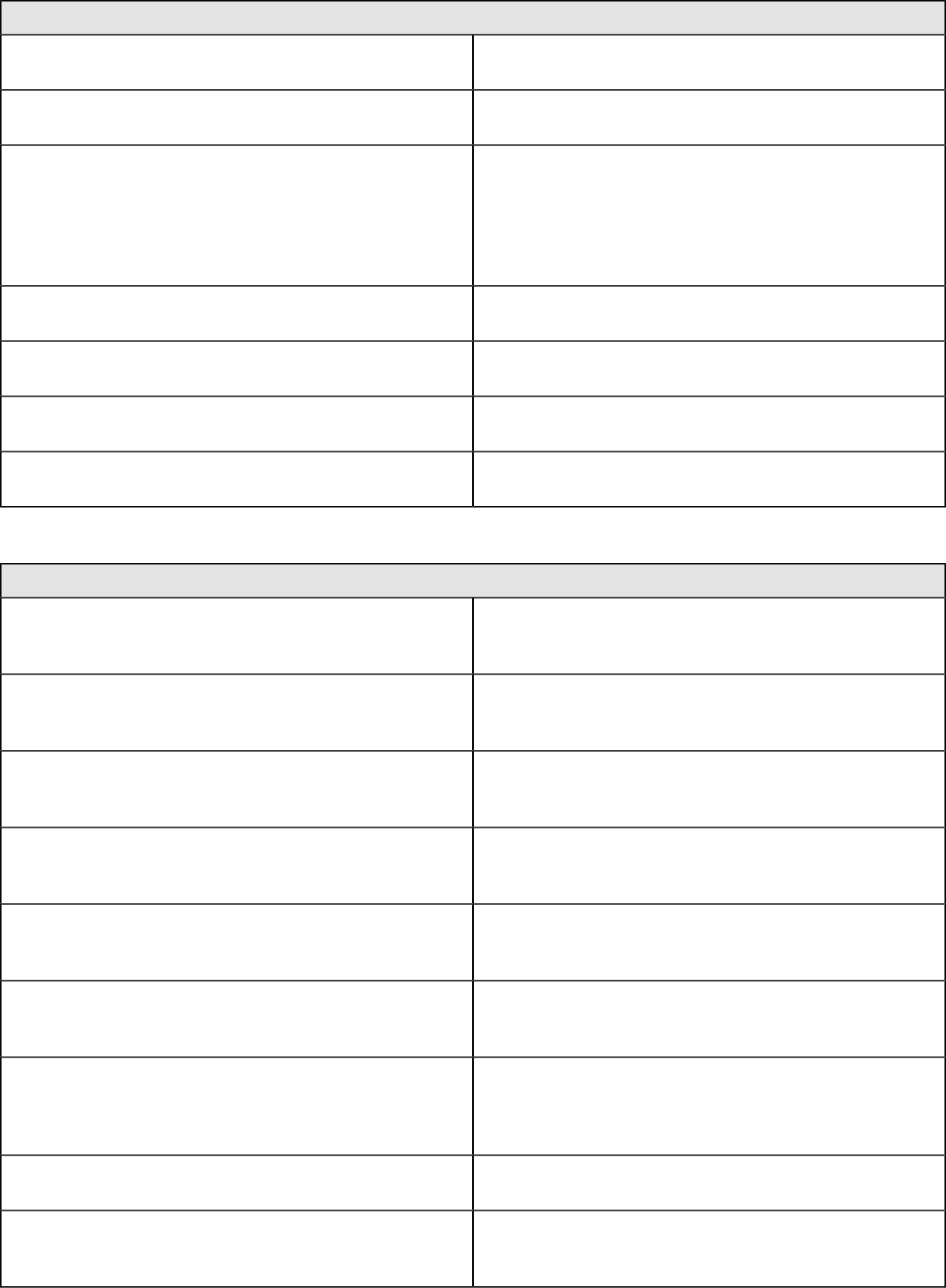

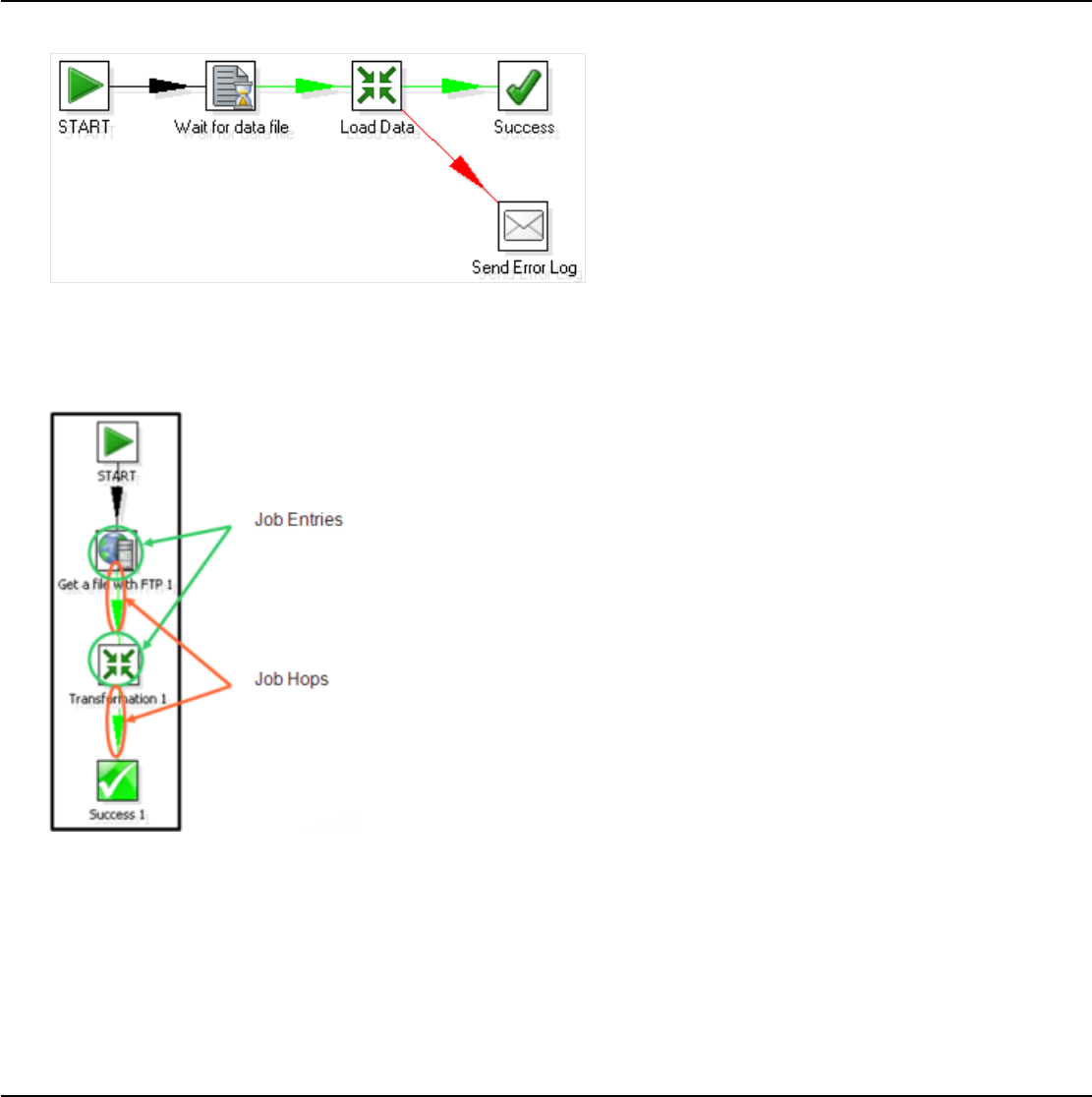

Transformations, Steps, and Hops............................................................................................................. 24

Jobs............................................................................................................................................................ 25

More About Hops........................................................................................................................................25

Create Transformations...........................................................................................................28

Get Started................................................................................................................................................. 28

Save Your Transformation..........................................................................................................................29

Run Your Transformation Locally............................................................................................................... 29

Build a Job..................................................................................................................................................30

Executing Transformations......................................................................................................31

Initialize Slave Servers in Spoon................................................................................................................ 31

Executing Jobs and Transformations from the Repository on the Carte Server.........................................32

Impact Analysis...........................................................................................................................................32

Working with the DI Repository............................................................................................... 33

Deleting a Repository................................................................................................................................. 33

Managing Content in the DI Repository......................................................................................................33

Setting Folder-Level Permissions.................................................................................................... 34

Exporting Content from Solutions Repositories with Command-Line Tools.................................... 35

Working with Version Control..................................................................................................................... 36

Examining Version History...............................................................................................................36

Restoring a Previously Saved Version of a Job or Transformation................................................. 36

Reusing Transformation Flows with Mapping Steps................................................................37

Arguments, Parameters, and Variables...................................................................................39

Arguments.................................................................................................................................................. 39

Parameters................................................................................................................................................. 39

VFS Properties.................................................................................................................................39

Variables.....................................................................................................................................................40

Variable Scope.................................................................................................................................41

Internal Variables.............................................................................................................................41

Rapid Analysis Schema Prototyping........................................................................................43

Creating a Prototype Schema With a Non-PDI Data Source......................................................................43

Creating a Prototype Schema With a PDI Data Source............................................................................. 43

Testing With Pentaho Analyzer and Report Wizard................................................................................... 44

Prototypes in Production.............................................................................................................................44

Using the SQL Editor...............................................................................................................45

Using the Database Explorer...................................................................................................46

Unsupported Databases..........................................................................................................47

Performance Monitoring and Logging......................................................................................48

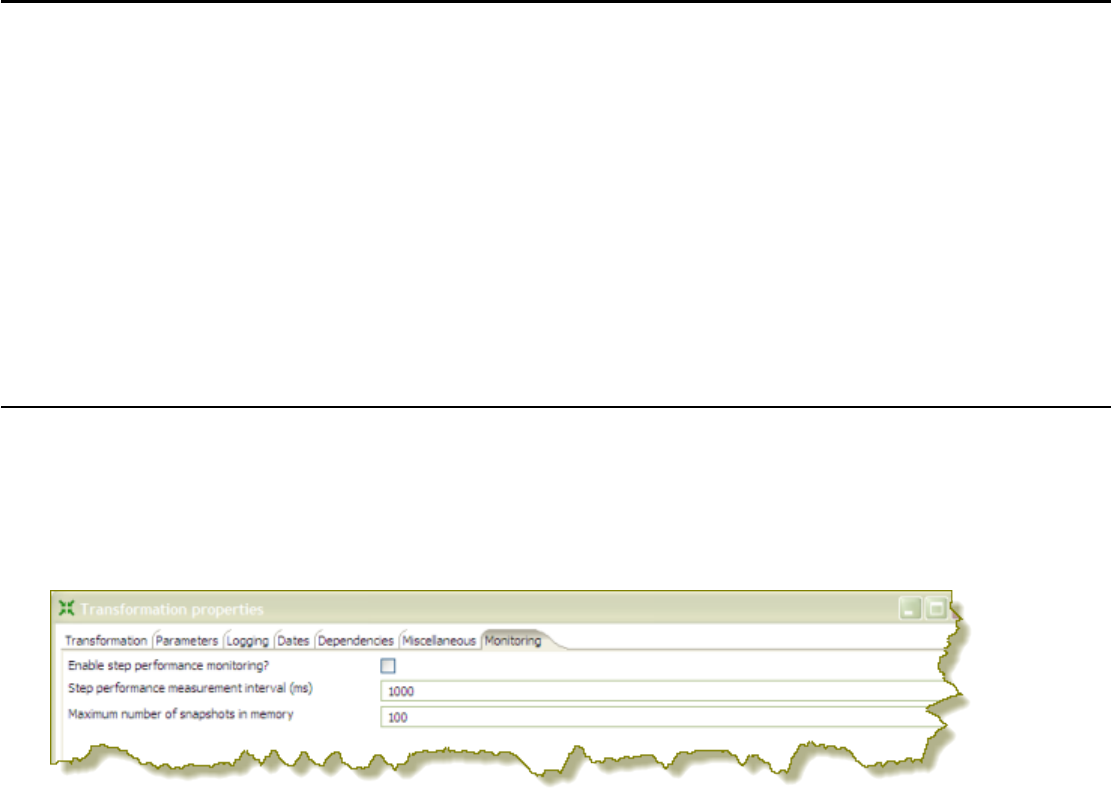

Monitoring Step Performance.....................................................................................................................48

Using Performance Graphs............................................................................................................. 48

Logging Steps.............................................................................................................................................49

| TOC | 4

Logging Transformations............................................................................................................................50

Pentaho Data Integration Performance Tuning Tips.................................................................................. 52

Working with Big Data and Hadoop in PDI..............................................................................54

Pentaho MapReduce Workflow.................................................................................................................. 54

PDI Hadoop Job Workflow..........................................................................................................................56

Hadoop to PDI Data Type Conversion....................................................................................................... 57

Hadoop Hive-Specific SQL Limitations.......................................................................................................58

Big Data Tutorials....................................................................................................................................... 58

Hadoop Tutorials..............................................................................................................................58

MapR Tutorials.................................................................................................................................66

Cassandra Tutorials.........................................................................................................................67

MongoDB Tutorials.......................................................................................................................... 67

Implement Data Services with the Thin Kettle JDBC Driver....................................................69

Transactional Databases and Job Rollback............................................................................ 70

Make a Transformation Database Transactional........................................................................................70

Make a Job Database Transactional.......................................................................................................... 70

Interacting With Web Services.................................................................................................71

Scheduling and Scripting PDI Content.................................................................................... 72

Scheduling Transformations and Jobs From Spoon...................................................................................72

Command-Line Scripting Through Pan and Kitchen.................................................................................. 73

Pan Options and Syntax.................................................................................................................. 73

Kitchen Options and Syntax.............................................................................................................74

Importing KJB or KTR Files From a Zip Archive..............................................................................76

Connecting to a DI Solution Repositories with Command-Line Tools............................................. 76

Exporting Content from Solutions Repositories with Command-Line Tools.................................... 77

Transformation Step Reference...............................................................................................79

Big Data......................................................................................................................................................79

Avro Input.........................................................................................................................................79

Cassandra Input...............................................................................................................................80

Cassandra Output............................................................................................................................82

CouchDB Input.................................................................................................................................83

Hadoop File Input.............................................................................................................................84

Hadoop File Output..........................................................................................................................89

HBase Input..................................................................................................................................... 91

HBase Output.................................................................................................................................. 93

HBase Row Decoder....................................................................................................................... 95

MapReduce Input.............................................................................................................................96

MapReduce Output..........................................................................................................................96

MongoDB Input................................................................................................................................97

MongoDB Output........................................................................................................................... 102

Splunk Input...................................................................................................................................105

Splunk Output................................................................................................................................ 107

SSTable Output............................................................................................................................. 108

Input..........................................................................................................................................................108

Cassandra Input.............................................................................................................................108

CSV File Input................................................................................................................................109

Data Grid........................................................................................................................................111

De-serialize From File....................................................................................................................111

Email Messages Input....................................................................................................................111

ESRI Shapefile Reader..................................................................................................................112

Fixed File Input.............................................................................................................................. 112

Generate Random Credit Card Numbers...................................................................................... 113

Generate Random Value............................................................................................................... 113

Generate Rows..............................................................................................................................113

Get Data From XML.......................................................................................................................114

Get File Names..............................................................................................................................114

Get Files Rows Count....................................................................................................................114

Get Repository Names...................................................................................................................115

Get Subfolder Names.................................................................................................................... 115

Get System Info............................................................................................................................. 115

| TOC | 5

Get Table Names...........................................................................................................................115

Google Analytics Input...................................................................................................................115

Google Docs Input......................................................................................................................... 117

GZIP CSV Input............................................................................................................................. 118

HBase Input................................................................................................................................... 118

HL7 Input....................................................................................................................................... 121

JMS Consumer.............................................................................................................................. 122

JSON Input.................................................................................................................................... 122

LDAP Input.....................................................................................................................................124

LDIF Input...................................................................................................................................... 124

Load File Content In Memory.........................................................................................................124

Microsoft Access Input...................................................................................................................124

Microsoft Excel Input......................................................................................................................124

Mondrian Input...............................................................................................................................127

MongoDB Input..............................................................................................................................127

OLAP Input.................................................................................................................................... 132

OpenERP Object Input.................................................................................................................. 132

Palo Cell Input................................................................................................................................132

Palo Dim Input............................................................................................................................... 132

Property Input................................................................................................................................ 132

Splunk Input...................................................................................................................................133

RSS Input.......................................................................................................................................134

S3 CSV Input................................................................................................................................. 135

Salesforce Input.............................................................................................................................136

SAP Input.......................................................................................................................................136

SAS Input.......................................................................................................................................136

Table Input.....................................................................................................................................136

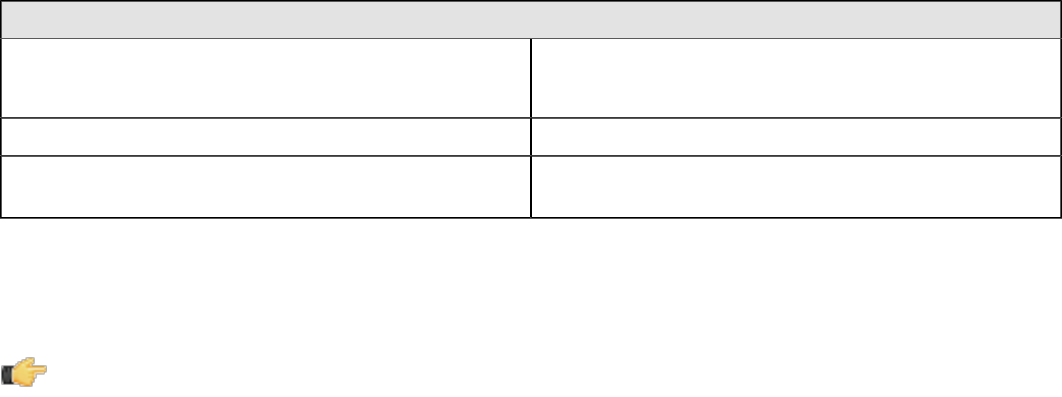

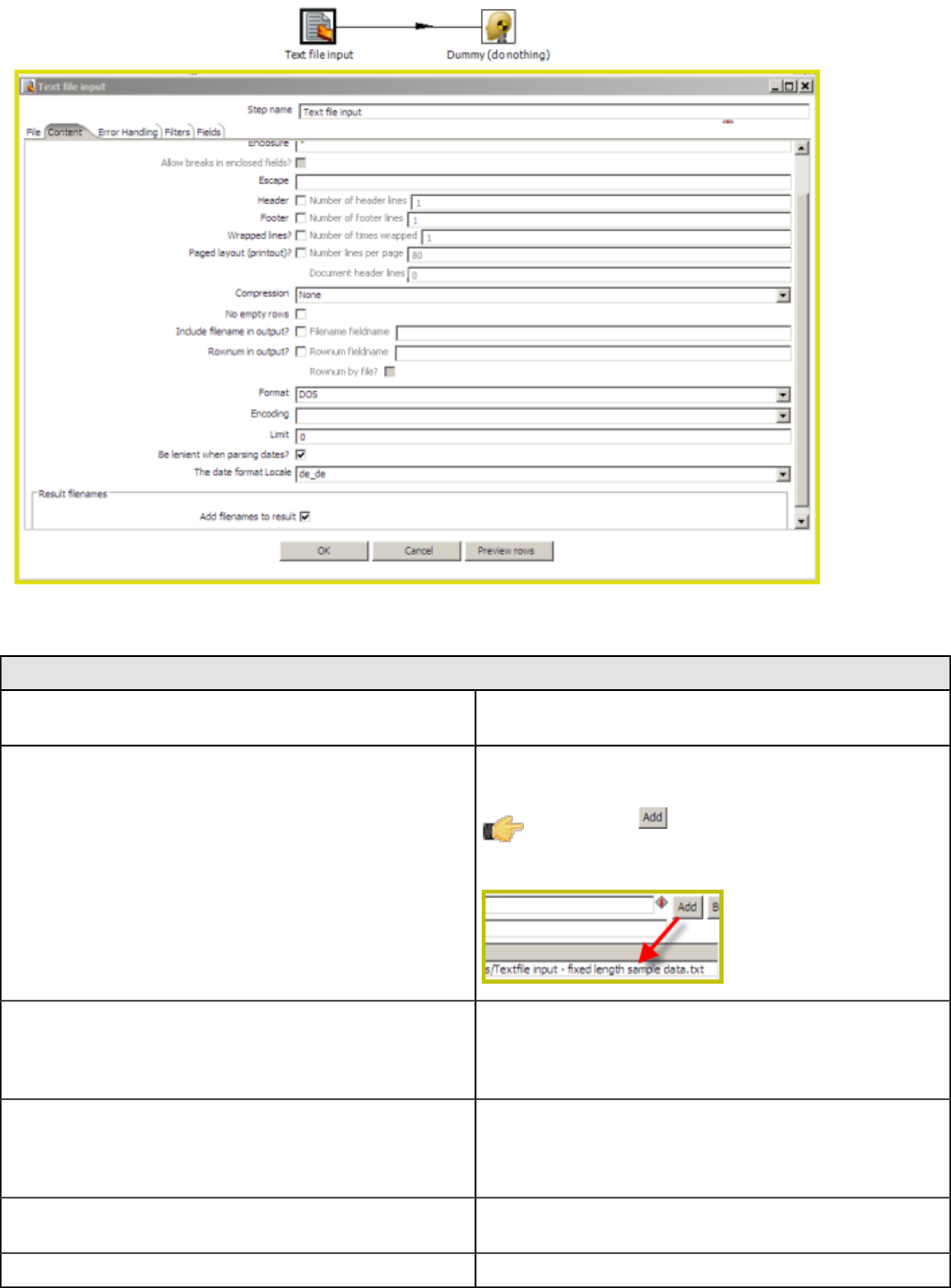

Text File Input................................................................................................................................ 137

XBase Input................................................................................................................................... 143

XML Input Stream (StAX).............................................................................................................. 143

YAML Input.................................................................................................................................... 143

Output.......................................................................................................................................................144

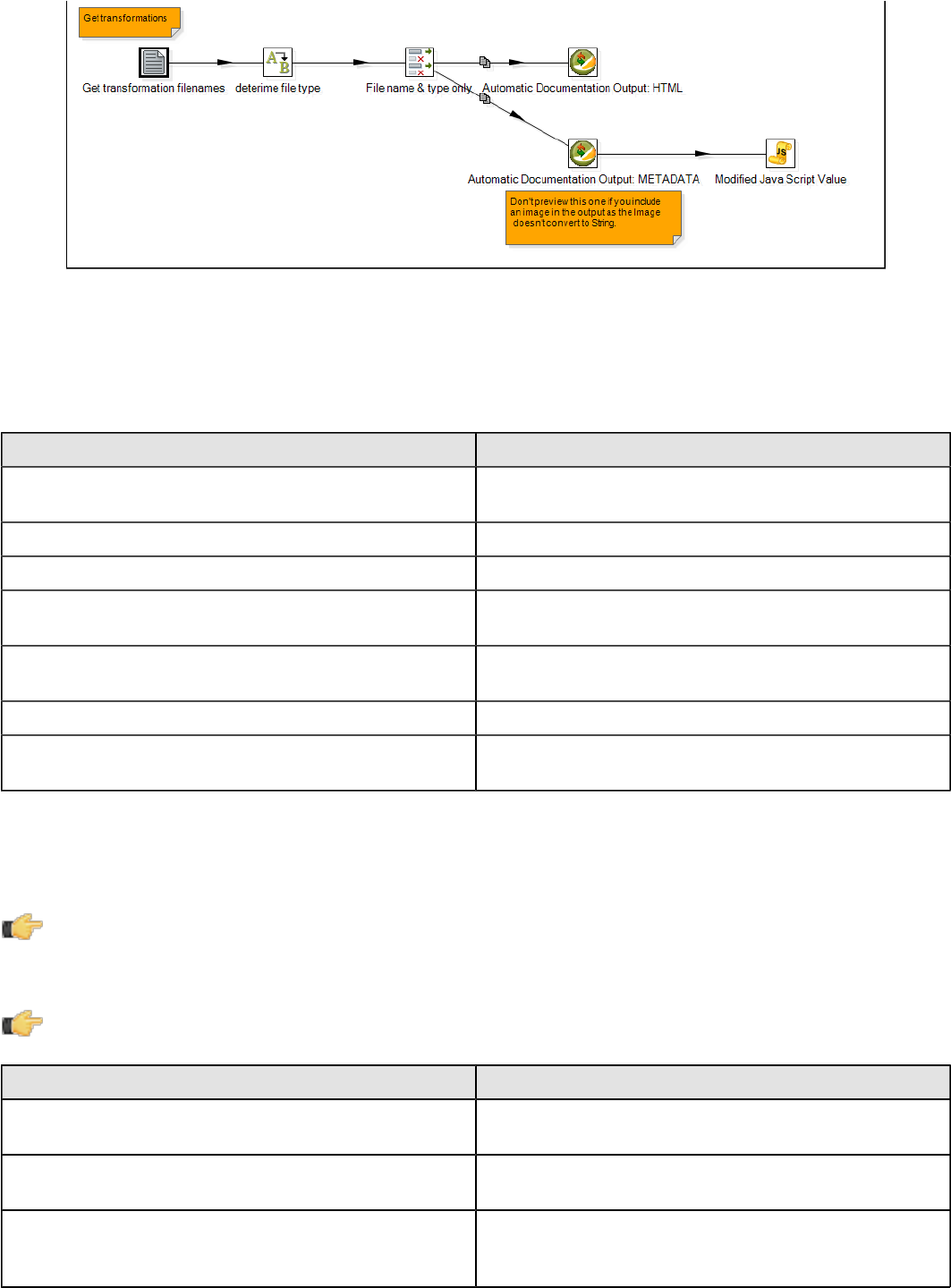

Automatic Documentation Output..................................................................................................144

Cassandra Output..........................................................................................................................145

Delete.............................................................................................................................................146

HBase Output................................................................................................................................ 146

Insert/Update................................................................................................................................. 148

JMS Producer................................................................................................................................ 148

JSON Output..................................................................................................................................149

LDAP Output..................................................................................................................................150

Microsoft Access Output................................................................................................................150

Microsoft Excel Output...................................................................................................................150

Microsoft Excel Writer....................................................................................................................151

MongoDB Output........................................................................................................................... 152

OpenERP Object Input.................................................................................................................. 155

Palo Cell Output.............................................................................................................................155

Palo Dim Output.............................................................................................................................156

Pentaho Reporting Output............................................................................................................. 156

Properties Output...........................................................................................................................156

RSS Output....................................................................................................................................157

S3 File Output................................................................................................................................157

Salesforce Delete...........................................................................................................................159

Salesforce Insert............................................................................................................................159

Salesforce Update......................................................................................................................... 159

Salesforce Upsert.......................................................................................................................... 159

Serialize to File.............................................................................................................................. 160

Splunk Output................................................................................................................................ 160

SQL File Output............................................................................................................................. 161

Synchronize After Merge............................................................................................................... 161

Table Output.................................................................................................................................. 161

Text File Output............................................................................................................................. 163

| TOC | 6

Update........................................................................................................................................... 165

XML Output....................................................................................................................................165

Transform................................................................................................................................................. 165

Add a Checksum............................................................................................................................165

Add Constants............................................................................................................................... 166

Add Sequence............................................................................................................................... 166

Add Value Fields Changing Sequence.......................................................................................... 167

Add XML........................................................................................................................................ 167

Calculator.......................................................................................................................................168

Closure Generator......................................................................................................................... 171

Example Plugin..............................................................................................................................171

Get ID From Slave Server..............................................................................................................171

Number Range...............................................................................................................................173

Replace in String............................................................................................................................174

Row Denormalizer......................................................................................................................... 174

Row Flattener.................................................................................................................................174

Row Normalizer............................................................................................................................. 174

Select Values.................................................................................................................................174

Set Field Value...............................................................................................................................176

Set Field Value to a Constant........................................................................................................ 176

Sort Rows...................................................................................................................................... 177

Split Field to Rows......................................................................................................................... 177

Split Fields..................................................................................................................................... 177

String Operations...........................................................................................................................177

Strings Cut..................................................................................................................................... 178

Unique Rows..................................................................................................................................178

Unique Rows (HashSet)................................................................................................................ 178

Value Mapper.................................................................................................................................179

XSL Transformation.......................................................................................................................179

Utility.........................................................................................................................................................179

Change File Encoding....................................................................................................................179

Clone Row..................................................................................................................................... 179

Delay Row......................................................................................................................................179

Edit to XML.................................................................................................................................... 179

Execute a Process.........................................................................................................................180

If Field Value is Null....................................................................................................................... 180

Mail................................................................................................................................................ 180

Metadata Structure of Stream........................................................................................................182

Null if..............................................................................................................................................183

Process Files................................................................................................................................. 183

Run SSH Commands.....................................................................................................................183

Send Message to Syslog............................................................................................................... 184

Write to Log....................................................................................................................................184

Flow.......................................................................................................................................................... 184

Abort.............................................................................................................................................. 184

Append Streams............................................................................................................................ 185

Block This Step Until Steps Finish.................................................................................................185

Blocking Step.................................................................................................................................185

Detect Empty Stream.....................................................................................................................186

Dummy (do nothing)...................................................................................................................... 186

ETL Metadata Injection..................................................................................................................186

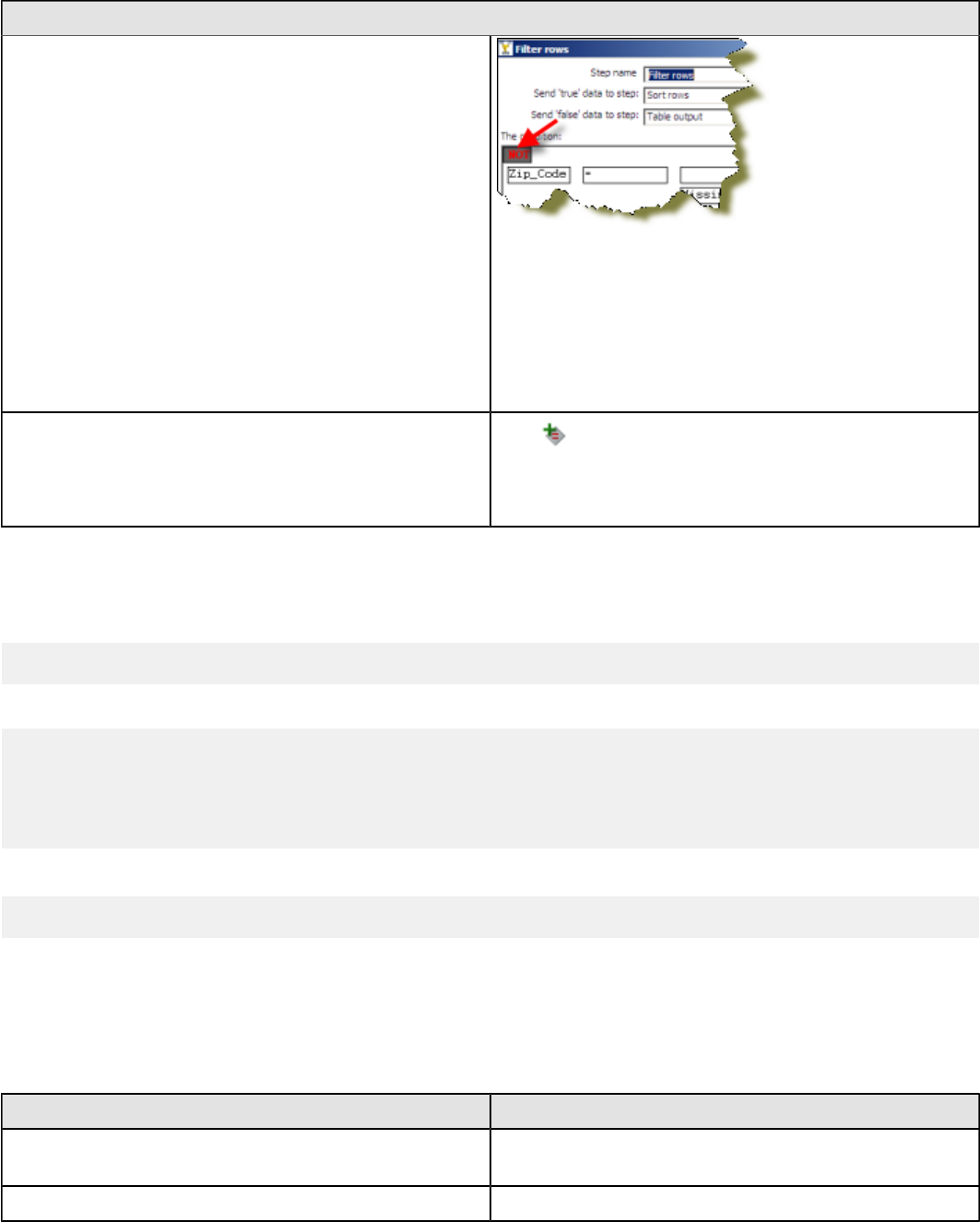

Filter Rows.....................................................................................................................................186

Identify Last Row in a Stream........................................................................................................187

Java Filter...................................................................................................................................... 187

Prioritize Streams...........................................................................................................................188

Single Threader............................................................................................................................. 188

Switch / Case.................................................................................................................................188

Scripting....................................................................................................................................................188

Execute Row SQL Script............................................................................................................... 188

Execute SQL Script........................................................................................................................188

Formula..........................................................................................................................................189

| TOC | 7

Modified JavaScript Value............................................................................................................. 189

Regex Evaluation...........................................................................................................................190

User Defined Java Class............................................................................................................... 190

User Defined Java Expression.......................................................................................................190

Lookup......................................................................................................................................................190

Call DB Procedure......................................................................................................................... 190

Check if a Column Exists...............................................................................................................191

Check if File is Locked...................................................................................................................191

Check if Webservice is Available...................................................................................................191

Database Join................................................................................................................................191

Database Lookup...........................................................................................................................191

Dynamic SQL Row.........................................................................................................................192

File Exists.......................................................................................................................................192

Fuzzy Match...................................................................................................................................192

HTTP Client................................................................................................................................... 194

HTTP Post..................................................................................................................................... 194

MaxMind GeoIP Lookup................................................................................................................ 195

RESTClient.................................................................................................................................... 195

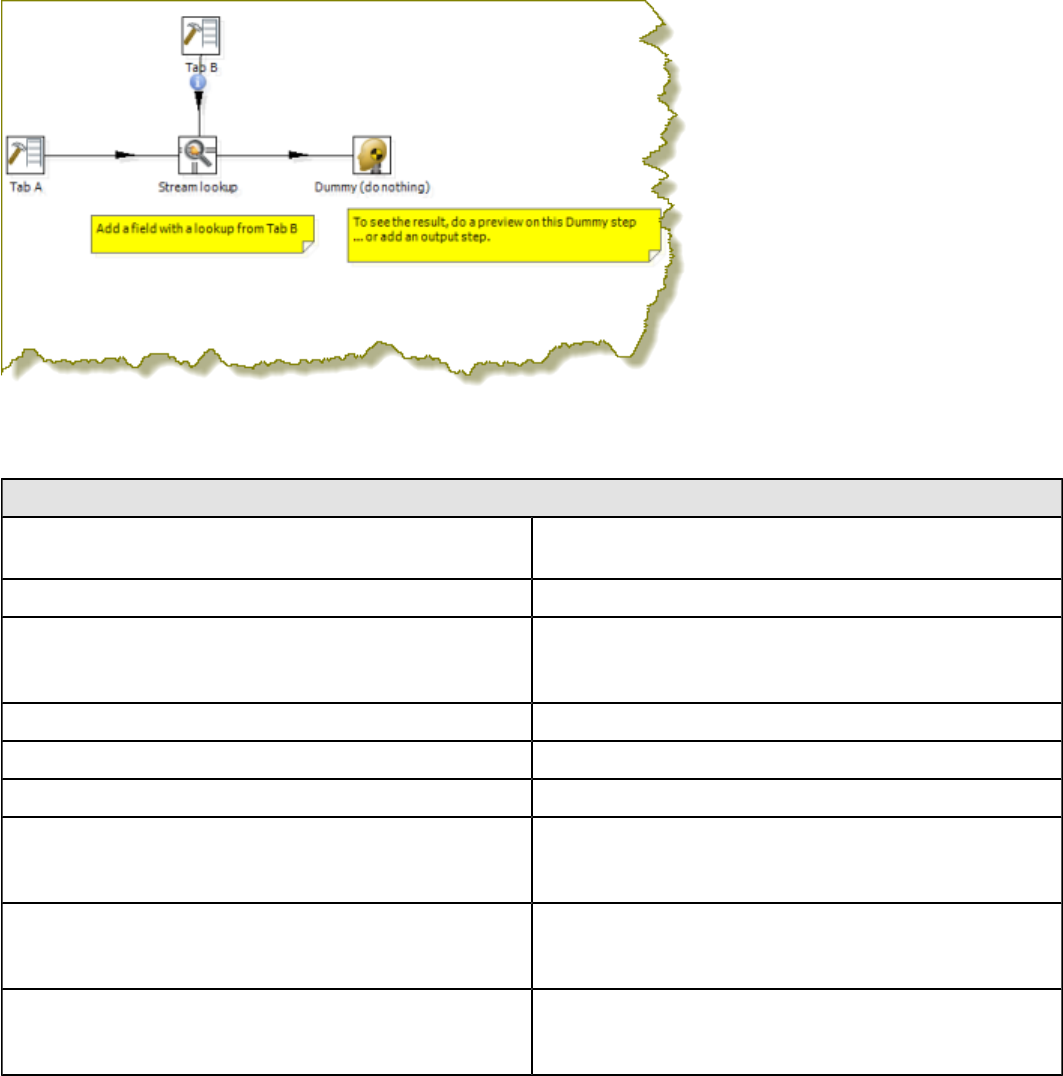

Stream Lookup...............................................................................................................................197

Table Exists................................................................................................................................... 198

Web Services Lookup....................................................................................................................198

Joins......................................................................................................................................................... 199

Join Rows (Cartesian Product)...................................................................................................... 199

Merge Join..................................................................................................................................... 199

Merge Rows (diff)...........................................................................................................................200

Sorted Merge................................................................................................................................. 200

XML Join........................................................................................................................................200

Data Warehouse.......................................................................................................................................200

Combination Lookup/Update......................................................................................................... 201

Dimension Lookup/Update.............................................................................................................203

Validation..................................................................................................................................................206

Credit Card Validator..................................................................................................................... 206

Data Validator................................................................................................................................ 207

Mail Validator................................................................................................................................. 207

XSD Validator................................................................................................................................ 207

Statistics................................................................................................................................................... 207

Analytic Query................................................................................................................................207

Group By........................................................................................................................................208

Memory Group By..........................................................................................................................209

Output Steps Metrics..................................................................................................................... 209

Reservoir Sampling........................................................................................................................209

Sample Rows.................................................................................................................................210

Univariate Statistics....................................................................................................................... 210

Palo...........................................................................................................................................................210

Palo Cell Input................................................................................................................................210

Palo Cell Output.............................................................................................................................210

Palo Dim Input............................................................................................................................... 210

Palo Dim Output.............................................................................................................................211

Job............................................................................................................................................................211

Copy Rows to Result..................................................................................................................... 211

Get Files From Result....................................................................................................................211

Get Rows From Result...................................................................................................................211

Get Variables................................................................................................................................. 212

Set Files in Result..........................................................................................................................212

Set Variables..................................................................................................................................213

Mapping....................................................................................................................................................213

Mapping (sub-transformation)........................................................................................................213

Mapping Input Specification...........................................................................................................213

Mapping Output Specification........................................................................................................213

Bulk Loading.............................................................................................................................................213

ElasticSearch Bulk Insert...............................................................................................................213

| TOC | 8

Greenplum Bulk Loader.................................................................................................................214

Greenplum Load............................................................................................................................ 214

Infobright Loader............................................................................................................................214

Ingres VectorWise Bulk Loader..................................................................................................... 215

LucidDB Streaming Loader............................................................................................................215

MonetDB Bulk Loader....................................................................................................................215

MySQL Bulk Loader.......................................................................................................................215

Oracle Bulk Loader........................................................................................................................ 215

PostgreSQL Bulk Loader............................................................................................................... 215

Teradata Fastload Bulk Loader......................................................................................................216

Inline......................................................................................................................................................... 216

Injector........................................................................................................................................... 216

Socket Reader............................................................................................................................... 216

Socket Writer................................................................................................................................. 216

Data Mining Steps.................................................................................................................................... 216

Weka Scoring.................................................................................................................................217

Reservoir Sampling........................................................................................................................217

ARFF Output..................................................................................................................................217

Univariate Statistics....................................................................................................................... 217

Knowledge Flow.............................................................................................................................217

Univariate Statistics....................................................................................................................... 217

Weka Forecasting..........................................................................................................................217

Job Entry Reference..............................................................................................................219

File Encryption..........................................................................................................................................219

Decrypt Files With PGP................................................................................................................. 219

Encrypt Files With PGP................................................................................................................. 219

Verify File Signature With PGP......................................................................................................219

Big Data....................................................................................................................................................219

Amazon EMR Job Executor...........................................................................................................219

Amazon Hive Job Executor............................................................................................................220

Hadoop Copy Files........................................................................................................................ 221

Hadoop Job Executor.................................................................................................................... 222

Oozie Job Executor........................................................................................................................223

Pentaho MapReduce..................................................................................................................... 224

Pig Script Executor........................................................................................................................ 227

Sqoop Export................................................................................................................................. 228

Sqoop Import................................................................................................................................. 228

General.....................................................................................................................................................229

Start............................................................................................................................................... 229

Dummy...........................................................................................................................................229

Example Plugin..............................................................................................................................229

Job................................................................................................................................................. 230

Set Variables..................................................................................................................................231

Success......................................................................................................................................... 231

Transformation...............................................................................................................................231

Mail........................................................................................................................................................... 233

Get Mails (POP3/IMAP).................................................................................................................233

Mail................................................................................................................................................ 233

Mail Validator................................................................................................................................. 235

File Management......................................................................................................................................235

Add Filenames to Result................................................................................................................235

Compare Folders........................................................................................................................... 235

Convert File Between DOS and Unix.............................................................................................236

Copy Files......................................................................................................................................236

Copy or Remove Result Filenames............................................................................................... 237

Create a Folder..............................................................................................................................237

Create File..................................................................................................................................... 237

Delete File......................................................................................................................................237

Delete Filenames From Result...................................................................................................... 237

Delete Files....................................................................................................................................238

Delete Folders................................................................................................................................238

| TOC | 9

File Compare................................................................................................................................. 238

HTTP..............................................................................................................................................238

Move Files......................................................................................................................................239

Unzip File.......................................................................................................................................239

Wait For File...................................................................................................................................241

Write to File....................................................................................................................................241

Zip File........................................................................................................................................... 241

Conditions.................................................................................................................................................242

Check DB Connections..................................................................................................................242

Check Files Locked........................................................................................................................242

Check If a Folder is Empty.............................................................................................................243

Check Webservice Availability.......................................................................................................243

Checks If Files Exist.......................................................................................................................243

Columns Exist in a Table............................................................................................................... 243

Evaluate Files Metrics....................................................................................................................244

Evaluate Rows Number in a Table................................................................................................ 244

File Exists.......................................................................................................................................244

Simple Evaluation.......................................................................................................................... 244

Table Exists................................................................................................................................... 245

Wait For......................................................................................................................................... 245

Scripting....................................................................................................................................................245

JavaScript...................................................................................................................................... 245

Shell...............................................................................................................................................246

SQL................................................................................................................................................247

Bulk Loading.............................................................................................................................................247

Bulkload From MySQL Into File.....................................................................................................247

Bulkload Into MSSQL.....................................................................................................................247

Bulkload Into MySQL..................................................................................................................... 248

MS Access Bulk Load....................................................................................................................248

XML.......................................................................................................................................................... 248

Check if XML FIle is Well-Formed................................................................................................. 248

DTD Validator................................................................................................................................ 249

XSD Validator................................................................................................................................ 249

XSL Transformation.......................................................................................................................249

Utility.........................................................................................................................................................249

Abort Job........................................................................................................................................250

Display Msgbox Info.......................................................................................................................250

HL7 MLLP Acknowledge................................................................................................................250

HL7 MLLP Input.............................................................................................................................250

Ping a Host.................................................................................................................................... 250

Send Information Using Syslog......................................................................................................250

Send SNMP Trap...........................................................................................................................251

Talend Job Execution.................................................................................................................... 251

Truncate Tables.............................................................................................................................251

Wait for SQL.................................................................................................................................. 251

Write to Log....................................................................................................................................252

Repository.................................................................................................................................................252

Check if Connected to Repository................................................................................................. 252

Export Repository to XML File....................................................................................................... 252

File Transfer..............................................................................................................................................252

FTP Delete.....................................................................................................................................252

Get a File with FTP........................................................................................................................ 252

Get a File With FTPS.....................................................................................................................254

Get a file with SFTP.......................................................................................................................254

Put a File With FTP........................................................................................................................255

Put a File With SFTP..................................................................................................................... 255

SSH2 Get.......................................................................................................................................255

SSH2 Put....................................................................................................................................... 255

Upload Files to FTPS.....................................................................................................................255

Palo...........................................................................................................................................................256

Palo Cube Create.......................................................................................................................... 256

| TOC | 10

Palo Cube Delete...........................................................................................................................256

About PDI Marketplace..........................................................................................................257

Troubleshooting.....................................................................................................................258

Changing the Pentaho Data Integration Home Directory Location (.kettle folder)....................................258

Changing the Kettle Home Directory within the Pentaho BI Platform............................................ 259

Kitchen can't read KJBs from a Zip export............................................................................................... 260

Generating a DI Repository Configuration Without Running Spoon.........................................................260

Connecting to a DI Solution Repositories with Command-Line Tools........................................... 260

Unable to Get List of Repositories Exception........................................................................................... 261

Executing Jobs and Transformations from the Repository on the Carte Server............................261

Database Locks When Reading and Updating From A Single Table.......................................................261

Reading and Updating Table Rows Within a Transformation........................................................261

Force PDI to use DATE instead of TIMESTAMP in Parameterized SQL Queries....................................262

PDI Does Not Recognize Changes Made To a Table.............................................................................. 262

Using ODBC............................................................................................................................................. 262

Sqoop Import into Hive Fails.....................................................................................................................262

| Introduction | 11

Introduction

Pentaho Data Integration (PDI) is a flexible tool that allows you to collect data from disparate sources such as

databases, files, and applications, and turn the data into a unified format that is accessible and relevant to end users.

PDI provides the Extraction, Transformation, and Loading (ETL) engine that facilitates the process of capturing the right

data, cleansing the data, and storing the data using a uniform and consistent format.

PDI provides support for slowly changing dimensions, and surrogate key for data warehousing, allows data migration

between databases and application, is flexible enough to load giant datasets, and can take full advantage of cloud,

clustered, and massively parallel processing environments. You can cleanse your data using transformation steps that

range from very simple to very complex. Finally, you can leverage ETL as the data source for Pentaho Reporting.

Note: Dimension is a data warehousing term that refers to logical groupings of data such as product, customer,

or geographical information. Slowly Changing Dimensions (SCD) are dimensions that contain data that

changes slowly over time. For example, in most instances, employee job titles change slowly over time.

Common Uses of Pentaho Data Integration Include:

• Data migration between different databases and applications

• Loading huge data sets into databases taking full advantage of cloud, clustered and massively parallel processing

environments

• Data Cleansing with steps ranging from very simple to very complex transformations

• Data Integration including the ability to leverage real-time ETL as a data source for Pentaho Reporting

• Data warehouse population with built-in support for slowly changing dimensions and surrogate key creation (as

described above)

Audience and Assumptions

This section is written for IT managers, database administrators, and Business Intelligence solution architects who have

intermediate to advanced knowledge of ETL and Pentaho Data Integration Enterprise Edition features and functions.

You must have installed Pentaho Data Integration to examine some of the step-related information included in this

document.

If you are novice user, Pentaho recommends that you start by following the exercises in Getting Started with Pentaho

Data Integration available in the Pentaho InfoCenter. You can return to this document when you have mastered some of

the basic skills required to work with Pentaho Data Integration.

What this Section Covers

This document provides you with information about the most commonly used steps. For more information about steps,

see Matt Caster's blog and the Pentaho Data Integration wiki.

Refer to Administer DI Server for information about administering PDI and configuring security.

| Pentaho Data Integration Architecture | 12

Pentaho Data Integration Architecture

Spoon is the design interface for building ETL jobs and transformations. Spoon provides a drag-and-drop interface that

allows you to graphically describe what you want to take place in your transformations. Transformations can then be

executed locally within Spoon, on a dedicated Data Integration Server, or a cluster of servers.

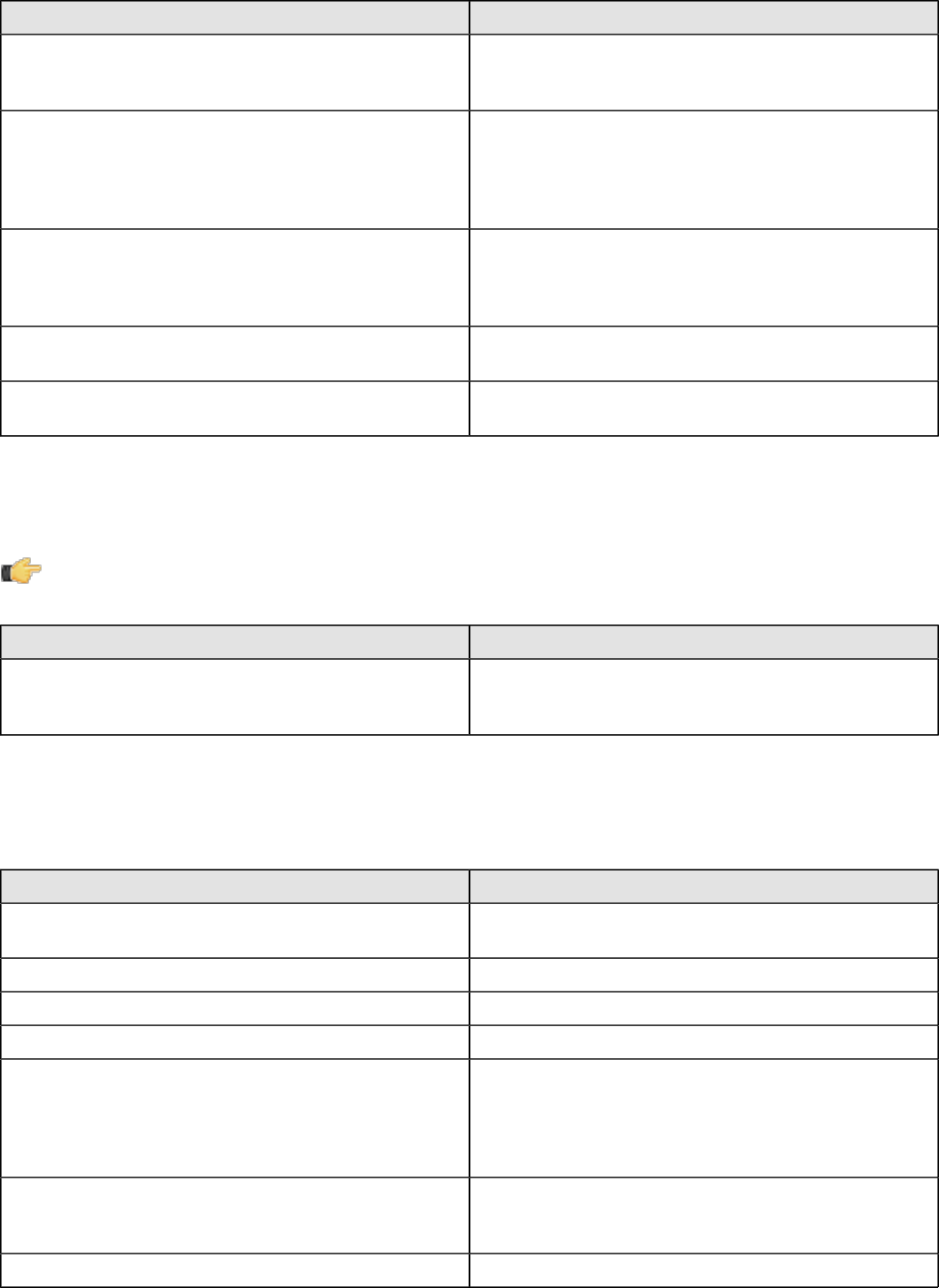

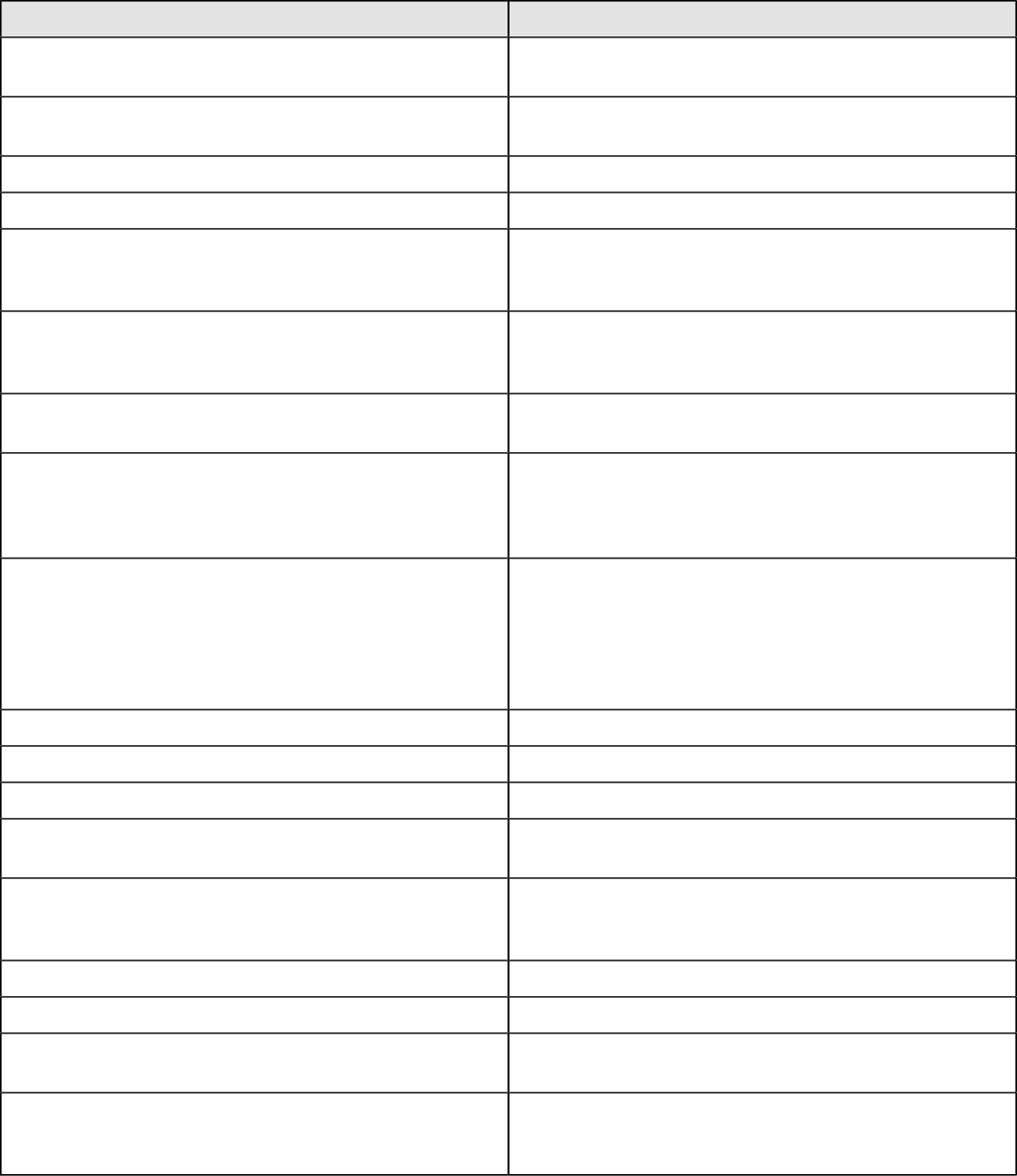

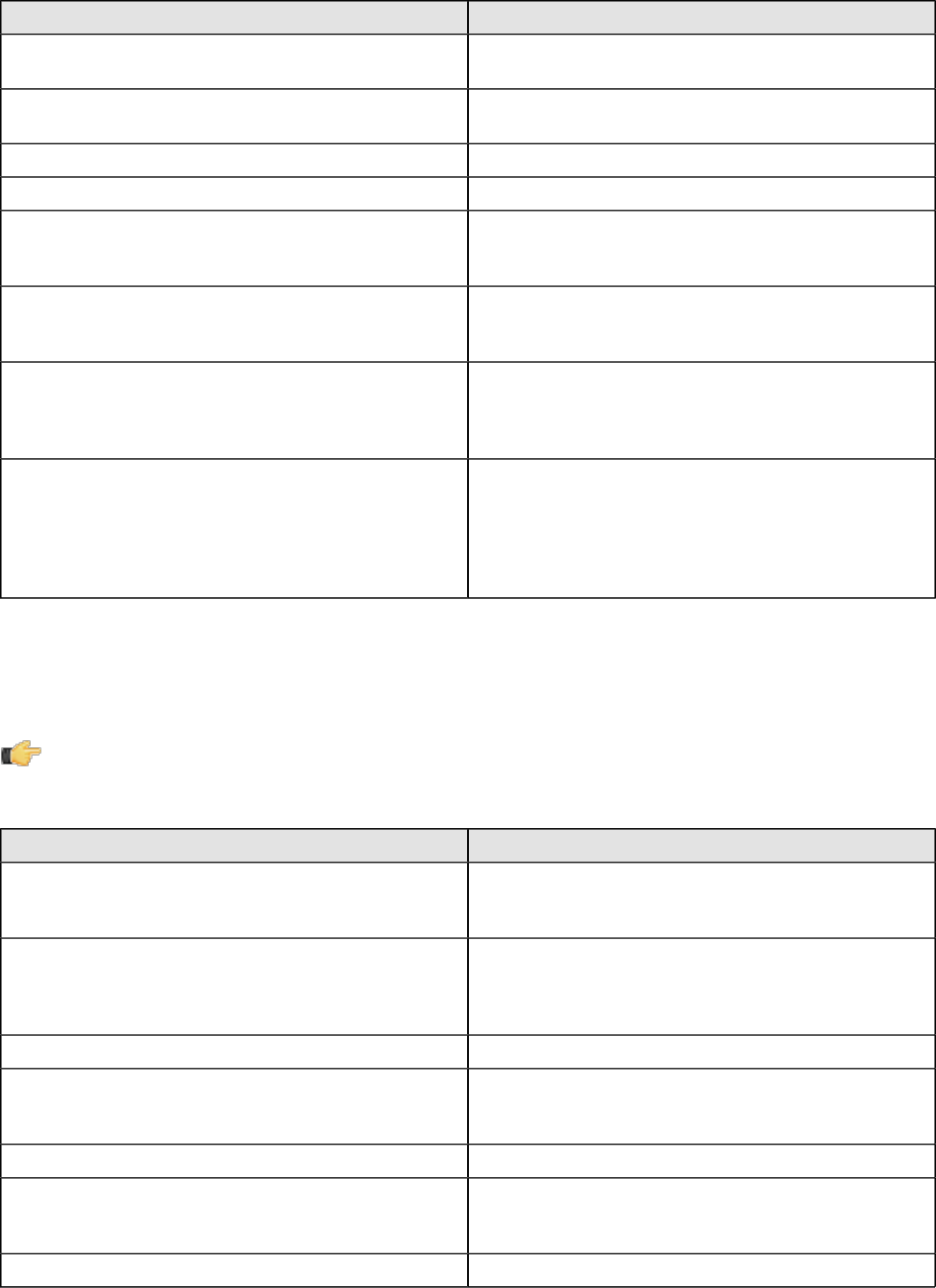

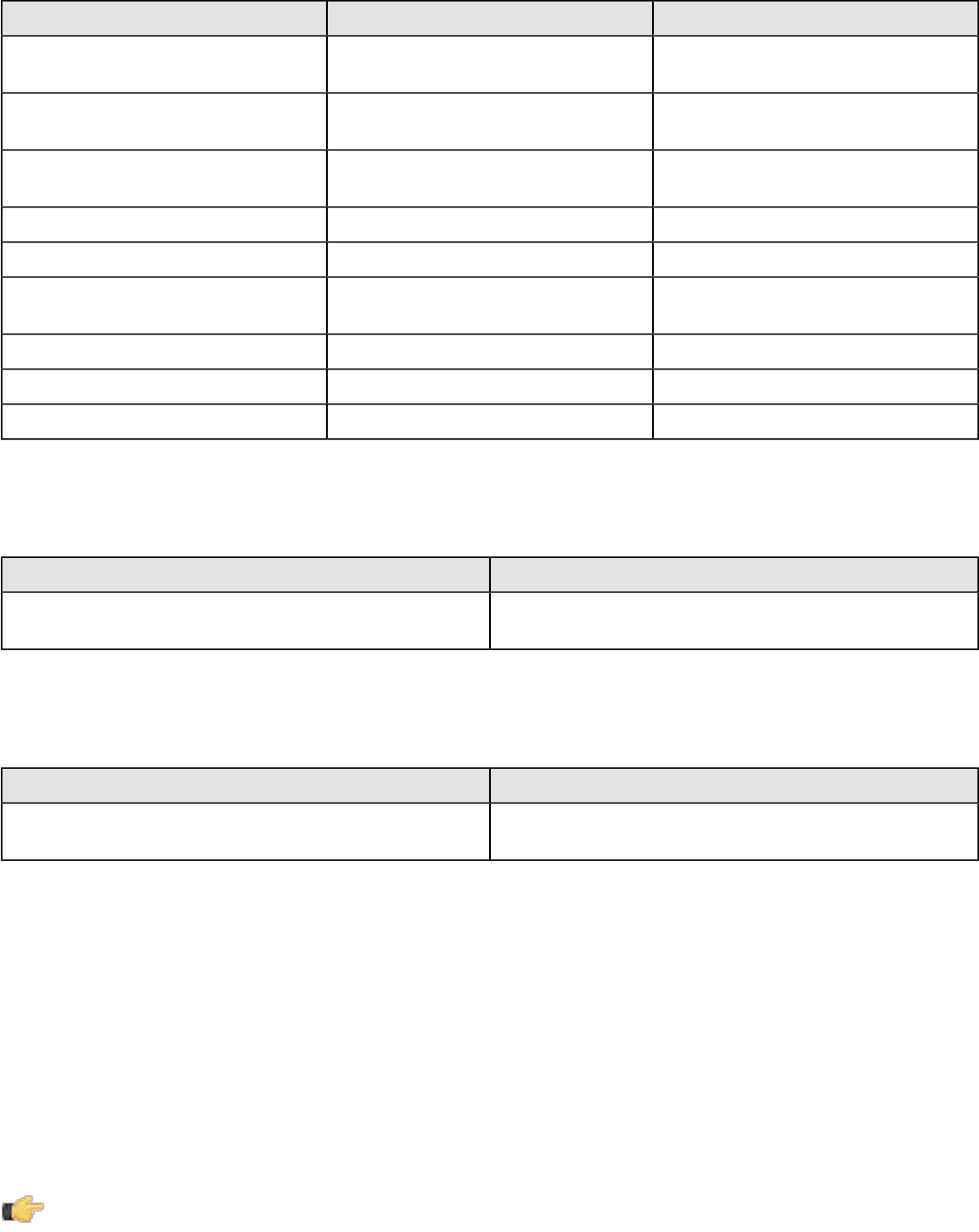

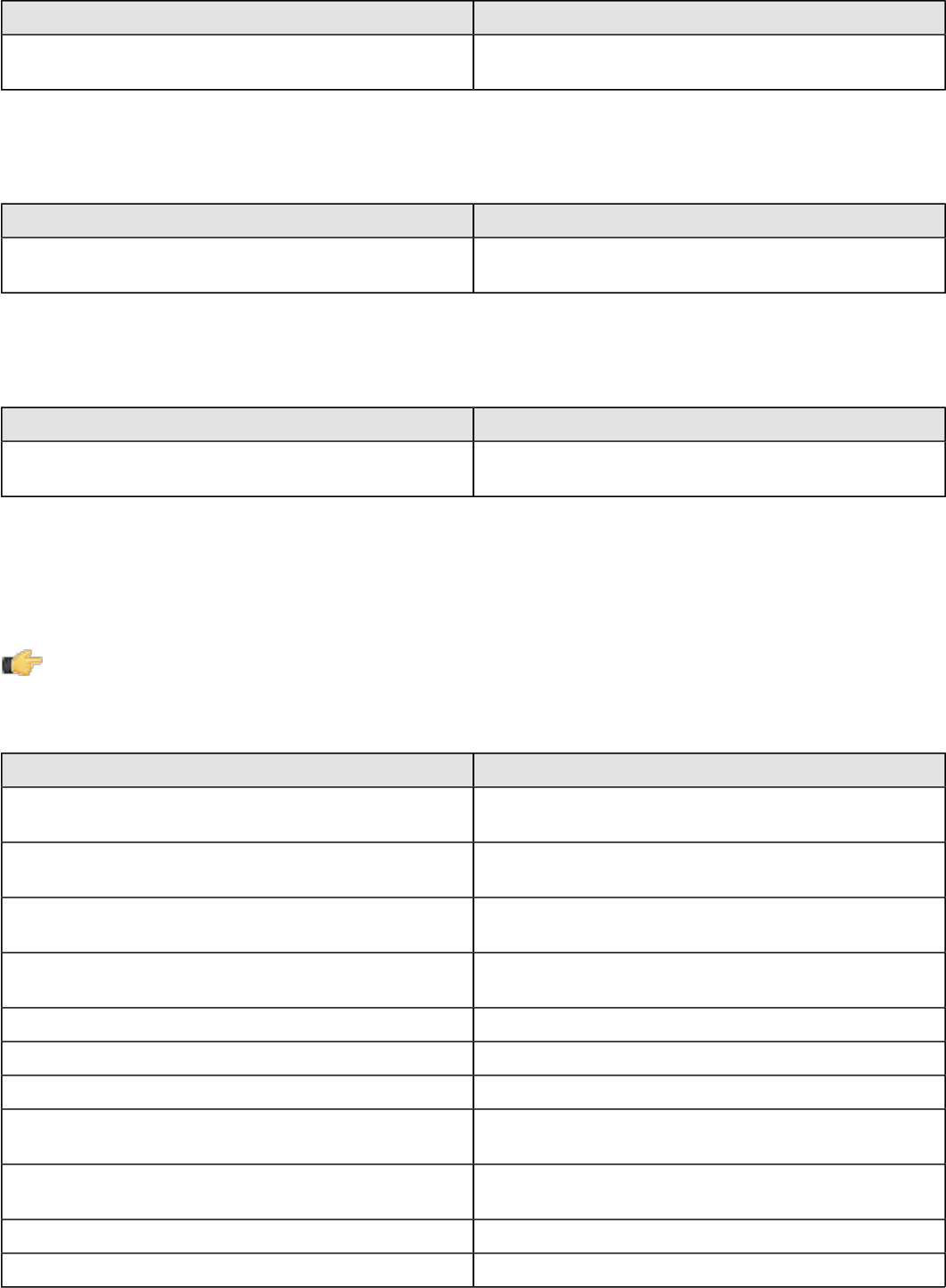

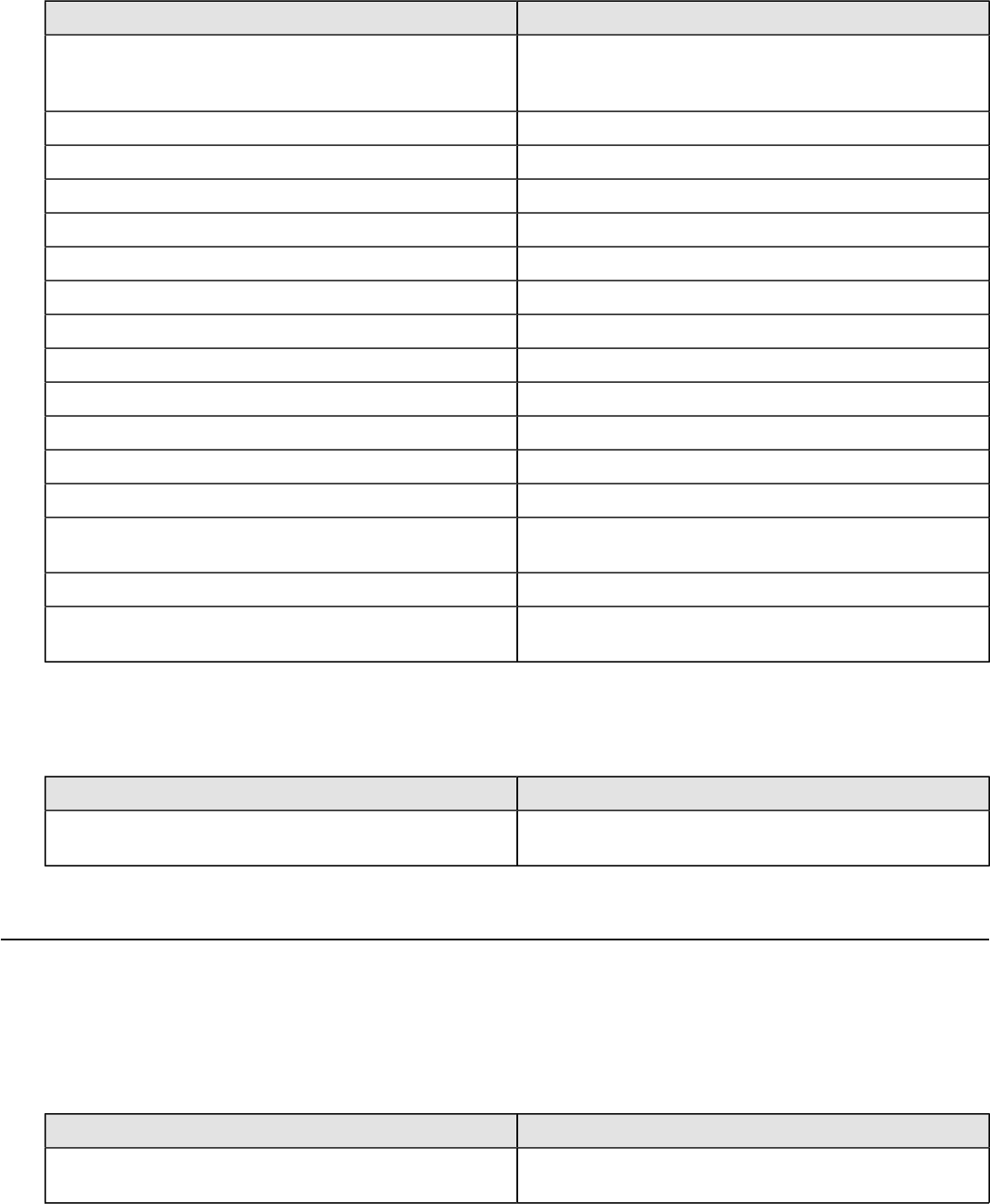

The Data Integration Server is a dedicated ETL server whose primary functions are:

Execution Executes ETL jobs and transformations using the Pentaho

Data Integration engine

Security Allows you to manage users and roles (default security) or

integrate security to your existing security provider such

as LDAP or Active Directory

Content Management Provides a centralized repository that allows you to

manage your ETL jobs and transformations. This includes

full revision history on content and features such as

sharing and locking for collaborative development

environments.

Scheduling Provides the services allowing you to schedule and

monitor activities on the Data Integration Server from

within the Spoon design environment.

Pentaho Data Integration is composed of the following primary components:

•Spoon. Introduced earlier, Spoon is a desktop application that uses a graphical interface and editor for

transformations and jobs. Spoon provides a way for you to create complex ETL jobs without having to read or

write code. When you think of Pentaho Data Integration as a product, Spoon is what comes to mind because, as a

database developer, this is the application on which you will spend most of your time. Any time you author, edit, run

or debug a transformation or job, you will be using Spoon.

•Pan. A standalone command line process that can be used to execute transformations and jobs you created in

Spoon. The data transformation engine Pan reads data from and writes data to various data sources. Pan also

allows you to manipulate data.

•Kitchen. A standalone command line process that can be used to execute jobs. The program that executes the jobs

designed in the Spoon graphical interface, either in XML or in a database repository. Jobs are usually scheduled to

run in batch mode at regular intervals.

•Carte. Carte is a lightweight Web container that allows you to set up a dedicated, remote ETL server. This provides

similar remote execution capabilities as the Data Integration Server, but does not provide scheduling, security

integration, and a content management system.

What's with all the Culinary Terms?

If you are new to Pentaho, you may sometimes see or hear Pentaho Data Integration referred to as, "Kettle." To avoid

confusion, all you must know is that Pentaho Data Integration began as an open source project called. "Kettle." The

term, K.E.T.T.L.E is a recursive that stands for Kettle Extraction Transformation Transport Load Environment. When

Pentaho acquired Kettle, the name was changed to Pentaho Data Integration. Other PDI components such as Spoon,

Pan, and Kitchen, have names that were originally meant to support a "restaurant" metaphor of ETL offerings.

| Use Pentaho Data Integration | 13

Use Pentaho Data Integration

There are several tasks that must be done first before following these tutorials. These are the tasks that must be done

first.

• Your administrator must have installed Pentaho Data Integration and configured the DI server and its client tools as

described in Configure the DI Server and Configure the PDI Tools and Utilities.

• You must also start the DI server and login to Spoon.

Create a Connection to the DI Repository

You need a place to store your work. We call this place the DI Repository. Your administrator may have created a

connection to the DI repository during the configuration process. If you need to make another repository connection or if

your administrator did not create a connection to the DI repository, you can create the connection.

1. Click on Tools > Repository > Connect.

The Repository Connection dialog box appears.

2. In the Repository Connection dialog box, click the add button (+).

3. Select DI Repository:DI Repository and click OK.

The Repository Configuration dialog box appears.

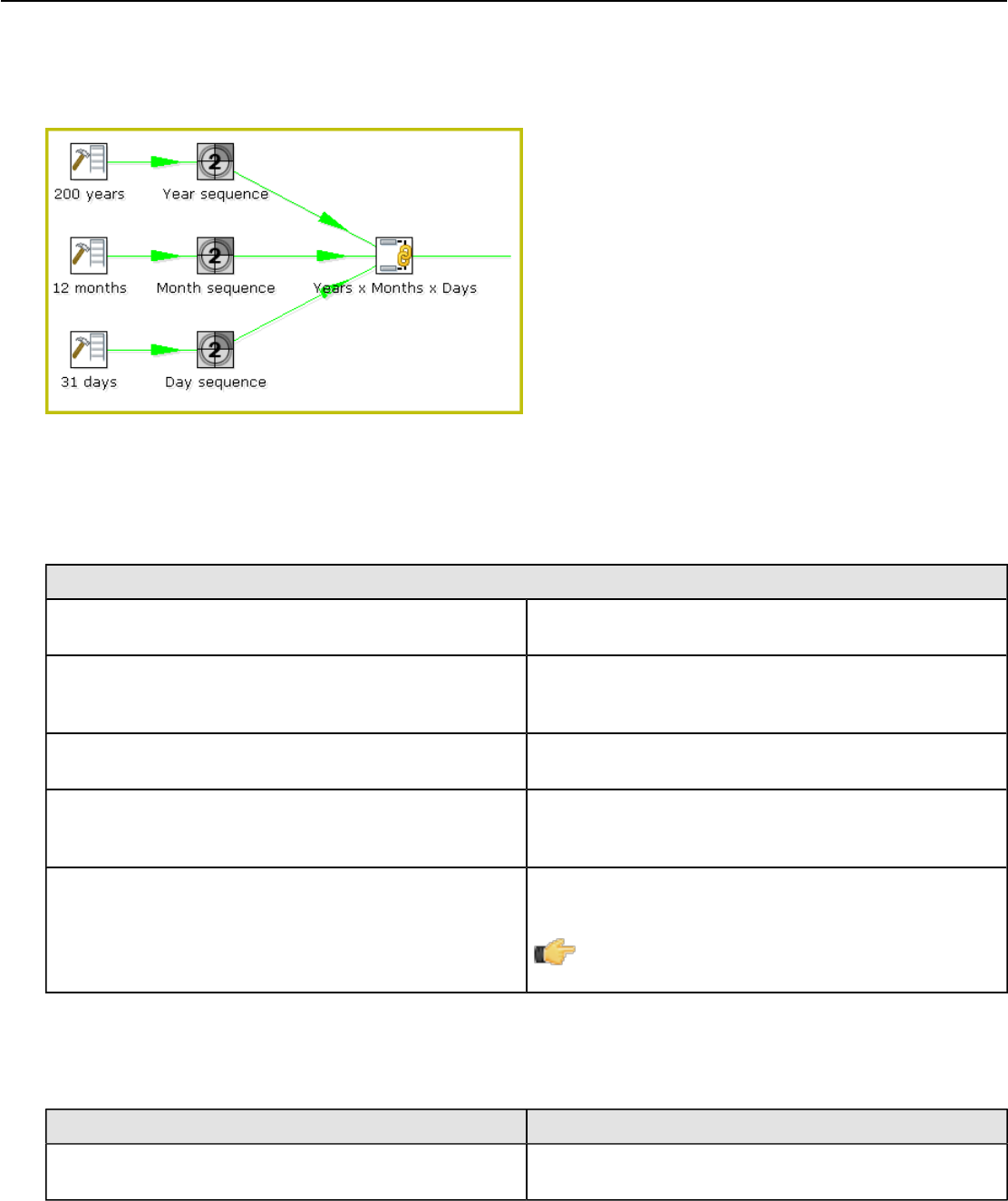

4. Enter the URL associated with your repository. Enter an ID and name for your repository.