- Important Information

- Revision History

- Part I: Installation Prerequisites

- Chapter Overview

- Before You Begin

- Network Interface Cards and Network Connections

- Accessing the MCS Server(s)

- Obtaining the Software

- Updating MediaCentral UX Licenses

- Creating User Accounts

- Adjusting Interplay Production Settings

- Adding the MediaCentral UX Version to Avid iNEWS

- Installing the MediaCentral Distribution Service

- Creating the MCS Installation USB Drive

- Part II: BIOS & RAID Configuration

- Chapter Overview

- Changing BIOS Settings

- Configuring the Onboard RAID

- Part III: Software Installation and Preparation

- Chapter Overview

- Installing RHEL and the MCS Software

- Special Instructions for Dell Servers

- MCS Software Deployment

- Booting RHEL for the First Time

- Network Configuration

- Verify DNS

- Identifying NIC Interfaces and Connecting the Network Cable

- (HP Only) Verifying the NIC Interface Name

- (HP Only) Swapping NIC Interface Names

- (HP Only) Removing the MAC Address Hardware References

- Configuring the Hostname and Static Network Route

- Verifying the hosts File Contents

- Verifying the Contents of resolv.conf and nsswitch.conf

- Ensuring the NIC Interface Comes Up at System Startup

- Verifying Hostname, Network and DNS Connectivity

- Configure Date and Time Settings

- Creating the File Cache on the RAID

- Enable / Disable 3G and Edge Streams

- Copying Software to the MCS Server

- Security Updates

- Install Software Patches

- Part IV: Configuring MCS

- Chapter Overview

- Configuring MCS for MediaCentral UX and Media Composer Cloud

- Updating the MediaCentral UX Configuration

- Logging into MediaCentral UX

- Changing the Administrator Password

- Creating a Second Administrator User

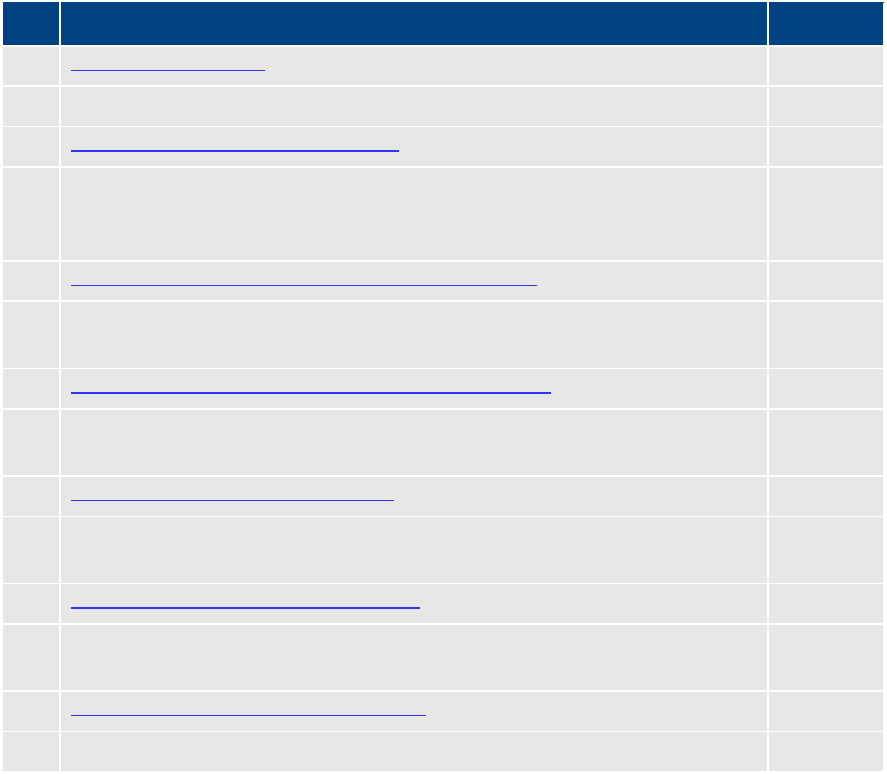

- Configuring System Settings

- Verifying the System Settings

- Configuring Send To Playback Settings

- Importing Domain Users

- Creating Local Users and Assigning Roles

- Continuing the Installation

- Configuring MCS for Interplay MAM

- Continuing the Installation

- Part V: Clustering

- Chapter Overview

- Cluster Overview

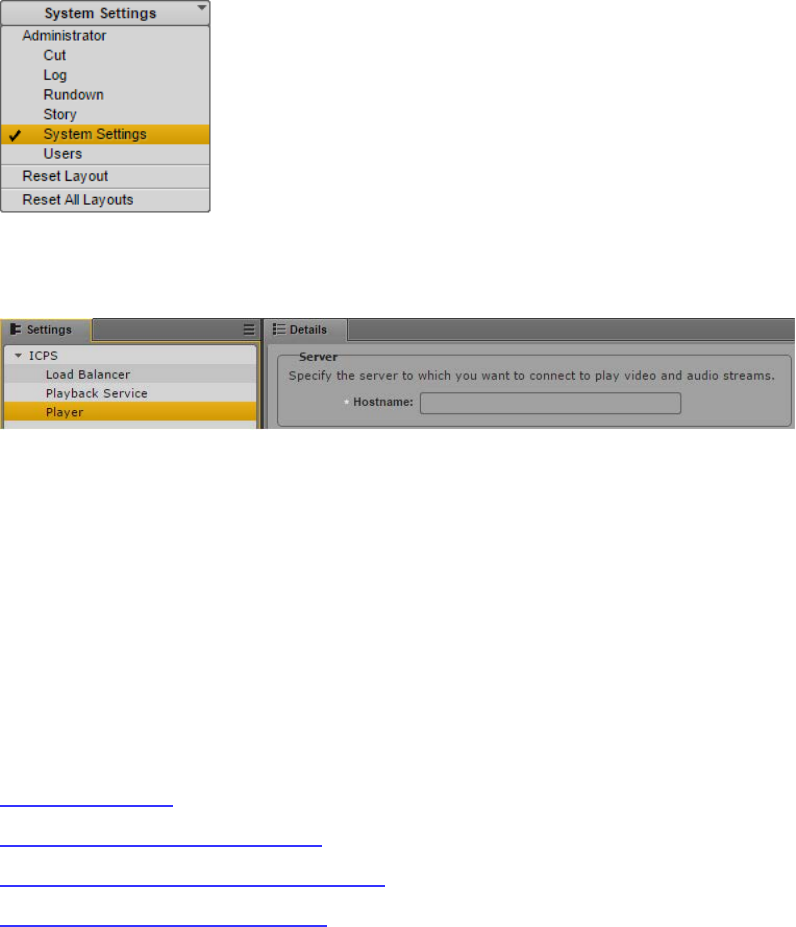

- Configuring the Player System Setting

- Configuring DRBD

- Starting the Cluster Services on the Master Node

- Adding Nodes to the Cluster

- Replicating the File Caches using GlusterFS

- Part VI: Verifying the Installation

- Chapter Overview

- Testing the Basics

- Testing the Cluster Email Service

- Testing Cluster Failover

- Verifying ACS Bus Functionality

- Verifying the Status of RabbitMQ

- Validating the FQDN for External Access

- Backing up the MCS System Settings and the MCS Database

- Part VII: Installing the Closed Captioning Service

- Chapter Overview

- Preparing the Software Package

- Installing the Closed Captioning Service on a Single Server

- Installing the Closed Captioning Service in a Cluster

- Uninstalling the Closed Captioning Service

- Part VIII: Installing the MAM Connector

- Chapter Overview

- Preparing the Software Package

- Installing the MAM Connector on a Single Server

- Installing the MAM Connector in a Cluster

- Uninstalling the MAM Connector

- Configuring the MAM Connector

- Part IX: Multi-Zone Configuration

- Chapter Overview

- Multi-Zone Overview

- Creating and Installing the RSA Keys

- Creating the Master Zone and Initiating Multi-Zone

- Adding Slave Zone(s) to the Multi-Zone Environment

- Validating Multi-Zone Functionality

- Troubleshooting the Multi-Zone Setup

- Dismantling a Multi-Zone Environment

- Appendices

- Appendix A: Overview

- Enabling the Player Demonstration Web Page

- Copying Software to the MCS Server

- Installing MCS on Non-HP / Dell Hardware for Interplay MAM

- Working with the Dell RAID Controller

- HP DL360p Gen8 Card Placement

- Contents of the MCS Installation Package

- Enabling Trusted Certificates

- Determining the Installed MCS Version

- Using SNMP Monitoring on the MCPS Server

- Log Cycling

- Retrieving MCS Logs

- Verifying Cache Directory Permissions

- Monitoring the AAF Generator Service

- Monitoring Services and Resources

- Backing up and Restoring the MCS Database

- Verifying the ISIS Mount

- Reconfiguring the ISIS Connection(s)

- Unicast Support in Clustering

- Reconfiguring MediaCentral Settings in a Cluster

- Shutting Down or Rebooting a MediaCentral Cluster

- Identifying the Master, Slave and Load-Balancing Nodes

- Monitoring MCS High-Availability

- Monitoring Load Balancing

- Changing the Cluster Administrator Email Address

- Taking a Cluster Node Off-Line Temporarily

- Permanently Removing a Node from a Cluster

- Adding a New Node to a Cluster

- Port Requirements

- Appendix B: Configuring Port Bonding for Interplay MAM

- Appendix C: Enabling MOS Active-X Plug-Ins

- Appendix D: Configuring iNEWS for Integration with MediaCentral

- Appendix E: The Avid MediaCentral UX Mobile Application

- iNEWS Configuration for Mobile Integration

- Installing Avid Central on an iOS Device

- Installing Avid Central on an Android Device

- Copyright and Disclaimer

MediaCentral® Platform Services

Installation and Configuration Guide Version 2.3

Important Information

This document provides instructions to install and configure Avid MediaCentral Platform Services (MCS) v2.3. For a complete list of qualified products, see the Avid MediaCentral Platform Services ReadMe.

For the latest information on the MediaCentral Platform Services, see the documentation available from the MediaCentral Platform Services page of the Avid Knowledge Base. Updates are occasionally issued after initial release.

For information on upgrading to MCS 2.3 from an earlier release, see the Avid MediaCentral Platform Services Upgrading Guide, available on the MediaCentral Platform Services page of the Avid Knowledge Base.

For information on configuring Media | Index, see the Avid Media | Index Configuration Guide, which is currently available only internally.

For information on installing Media | Distribute, see the Media | Distribute Installation and Configuration Guide, available on the MediaCentral Platform Services page of the Avid Knowledge Base.

Note: Throughout this document, “Avid MediaCentral Platform Services” is referred to as “MCS” and “Red Hat Enterprise Linux” as “RHEL”.

Note: The RHEL deployment used in an MCS environment is a command-line based operating system. The installation process requires editing various system files. Although multiple text editors exist, this document uses “vi” throughout. If needed, a short introduction to vi is included in the MediaCentral Platform Services Concepts and Clustering Guide.

Note: When working in Linux, this guide assumes you are logged in as the “root” user. Perform all commands and server configuration as the “root” user.

MCS 2.3 Installation and Configuration Guide

2

Revision History

| Date Revised | Changes Made |
| --- | --- |
| November 20, 2015 | • Added restart of “avid-acs-ctrl-core” to the Multi-Zone configuration process. |
| | • Minor update to Configuring Unicast Cluster Communication. |
| September 25, 2015 | • Updated Closed Captioning Service installation instructions. |
| August 27, 2015 | • Added support for GlusterFS v3.6.4. |
| | • Updated MediaCentral Distribution Service support. |
| August 14, 2015 | Added note regarding the prerequisite package for GlusterFS v3.4.4. |
| July 30, 2015 | • Added support for GlusterFS v3.4.4 and updated the process for installing GlusterFS. |
| | • Updated the process for configuring a cluster for unicast. |
| | • New uninstall process for the Closed Captioning Service. |
| | • Removed Avid Collect Suite Client from Part VI of this guide. |
| June 25, 2015 | First publication. Updates from the 2.2 Installation Guide include: |
| | • The Installation Guide has been updated to present a more streamlined installation process. |
| | • Pay particular attention to the creation of the “MCS Installation USB Drive”, as the process has changed. |
| | • Sections of the guide that contained general concepts regarding RHEL and clustering have been moved to the MediaCentral Platform Services Concepts and Clustering Guide. |
| | • Information regarding the Pre-Flight checklist has been consolidated and relocated directly to the Pre-Flight document itself. |
| | • Added the installation process for the new Closed Captioning Service. |


Contents

Important Information ....................................................................................................................... 1

Revision History .................................................................................................................................. 2

PART I: INSTALLATION PREREQUISITES .......................................................................................................... 10

Chapter Overview .................................................................................................................................... 11

Before You Begin ...................................................................................................................................... 12

Network Interface Cards and Network Connections ............................................................................... 13

Accessing the MCS Server(s) .................................................................................................................... 14

Copying Software to the MCS Server ................................................................................................... 14

Obtaining the Software ............................................................................................................................ 15

Red Hat Enterprise Linux (RHEL) .......................................................................................................... 15

RHEL Security Updates ......................................................................................................................... 15

MCS Installation Packages .................................................................................................................... 16

Storage Controller Driver for the HP ProLiant Gen9 Server ................................................................. 17

GlusterFS .............................................................................................................................................. 18

Updating MediaCentral UX Licenses ........................................................................................................ 19

Interplay Production Licensing ............................................................................................................. 19

iNEWS Licensing ................................................................................................................................... 20

Creating User Accounts ............................................................................................................................ 20

Interplay Production User .................................................................................................................... 20

Avid ISIS User ........................................................................................................................................ 21

Avid iNEWS User ................................................................................................................................... 22

Interplay MAM User ............................................................................................................................. 22

Media Composer Cloud User ............................................................................................................... 22

Adjusting Interplay Production Settings .................................................................................................. 23

Adding the MediaCentral UX Version to Avid iNEWS .............................................................................. 24

Installing the MediaCentral Distribution Service ..................................................................................... 25

Creating the MCS Installation USB Drive ................................................................................................. 26

Preparing the Installation Drive for the HP ProLiant Gen9 .................................................................. 26

Preparing the Installation Drive for HP Gen8 and Dell Servers ............................................................ 29

PART II: BIOS & RAID CONFIGURATION ....................................................................................................... 31

Chapter Overview .................................................................................................................................... 32

Changing BIOS Settings ............................................................................................................................ 33

MCS 2.3 Installation and Configuration Guide

4

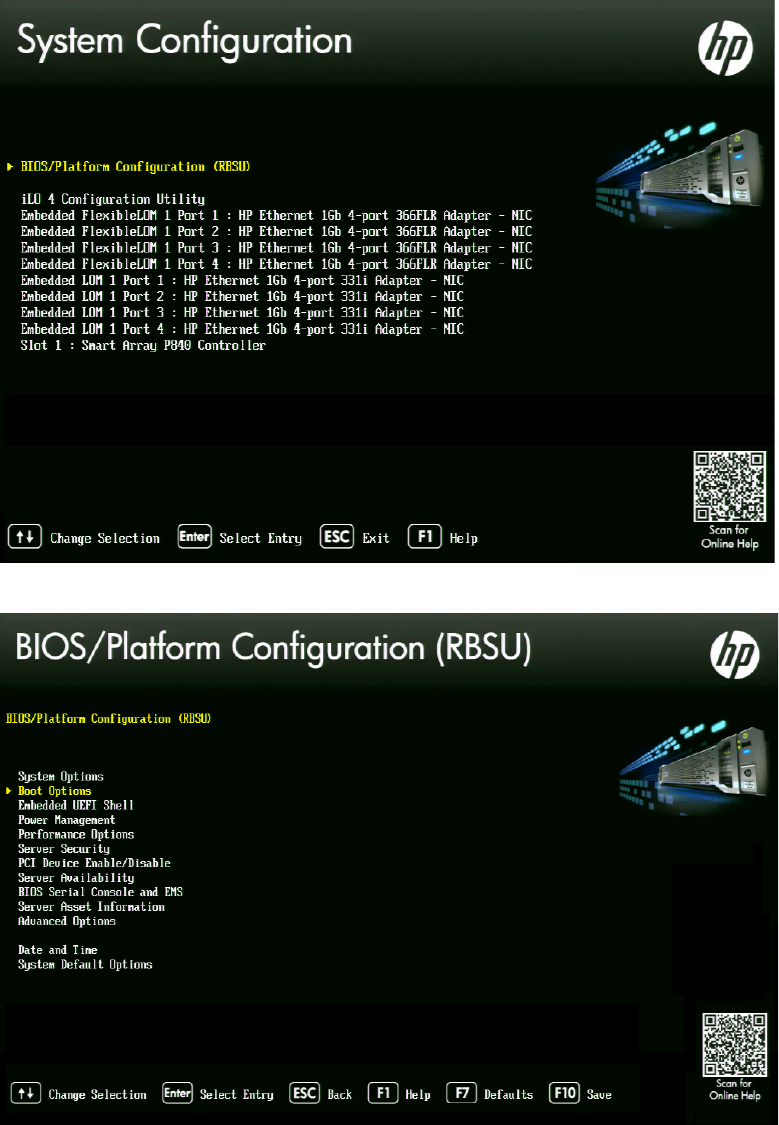

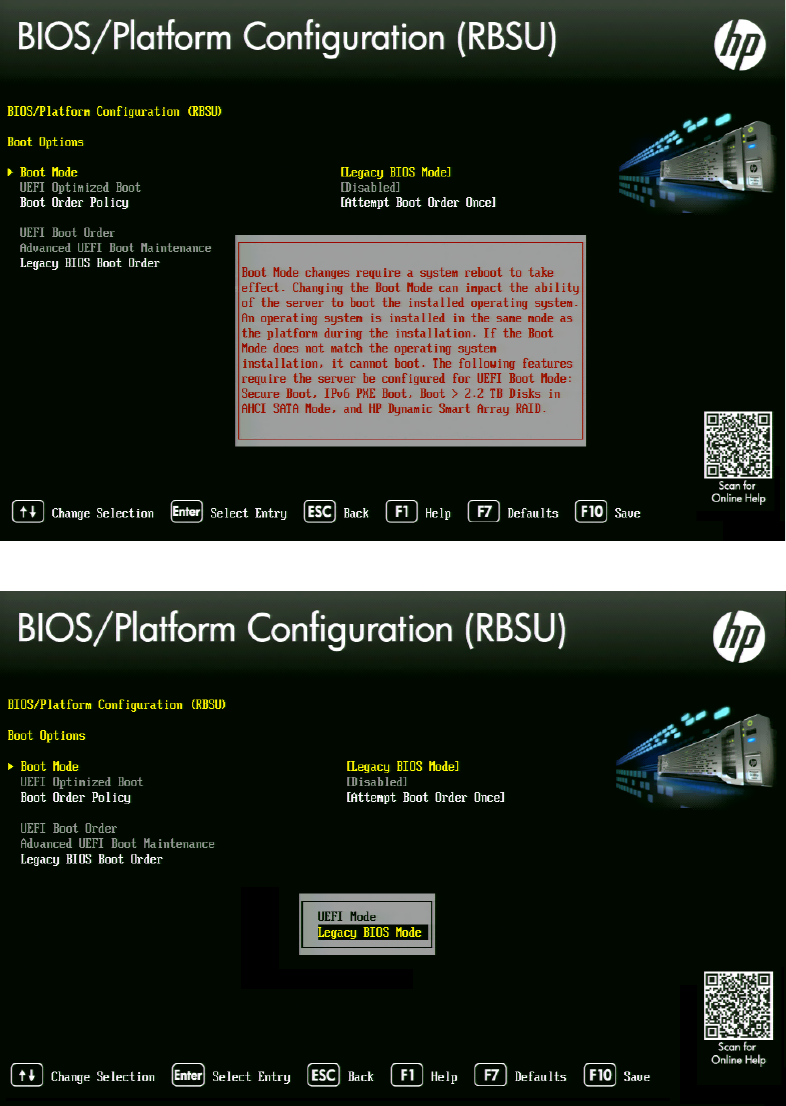

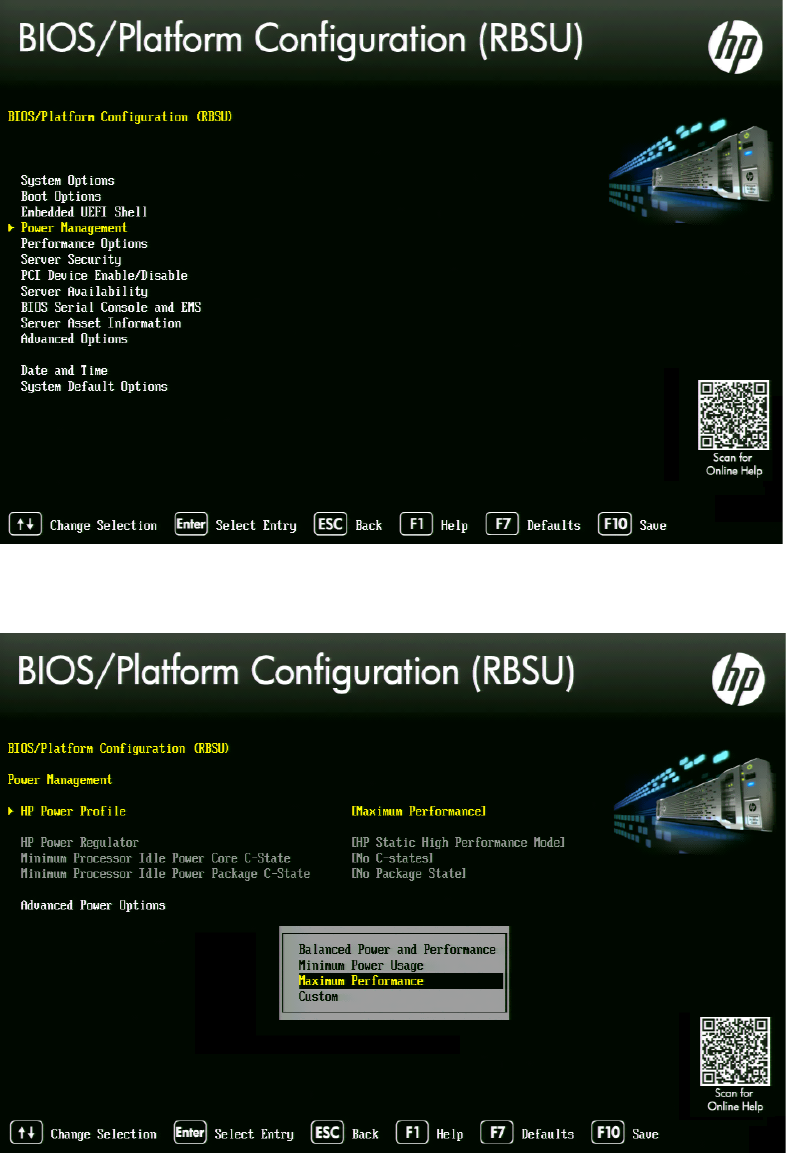

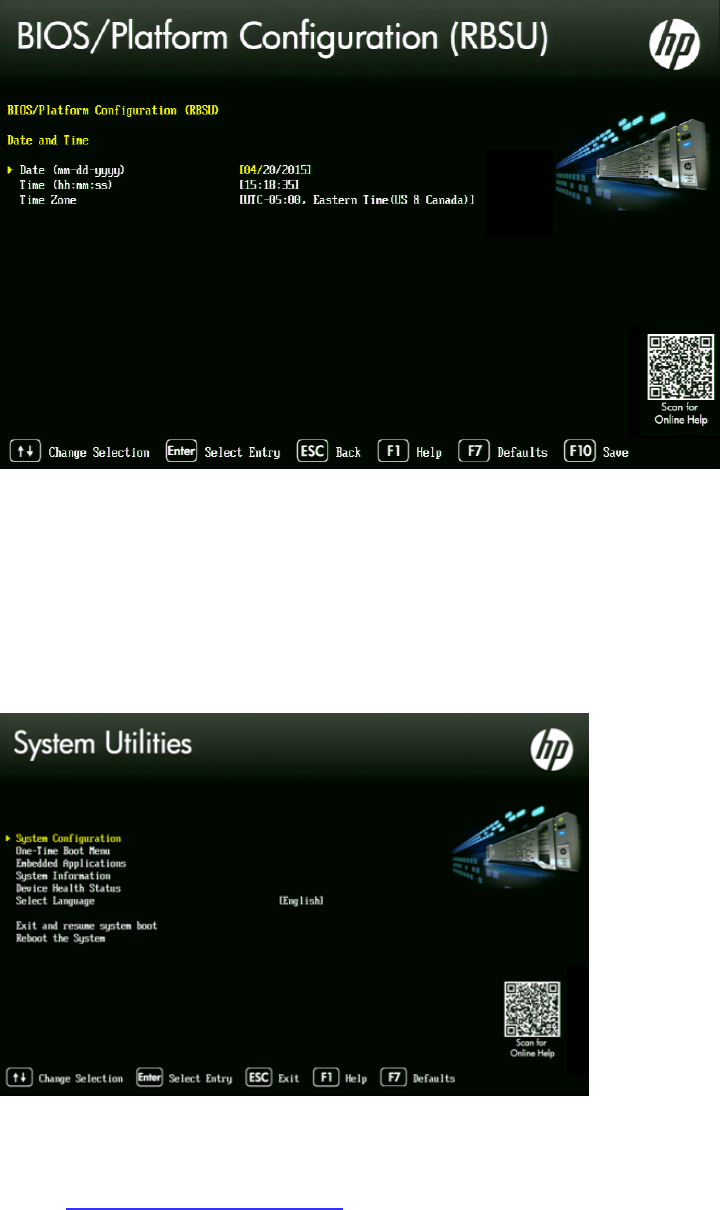

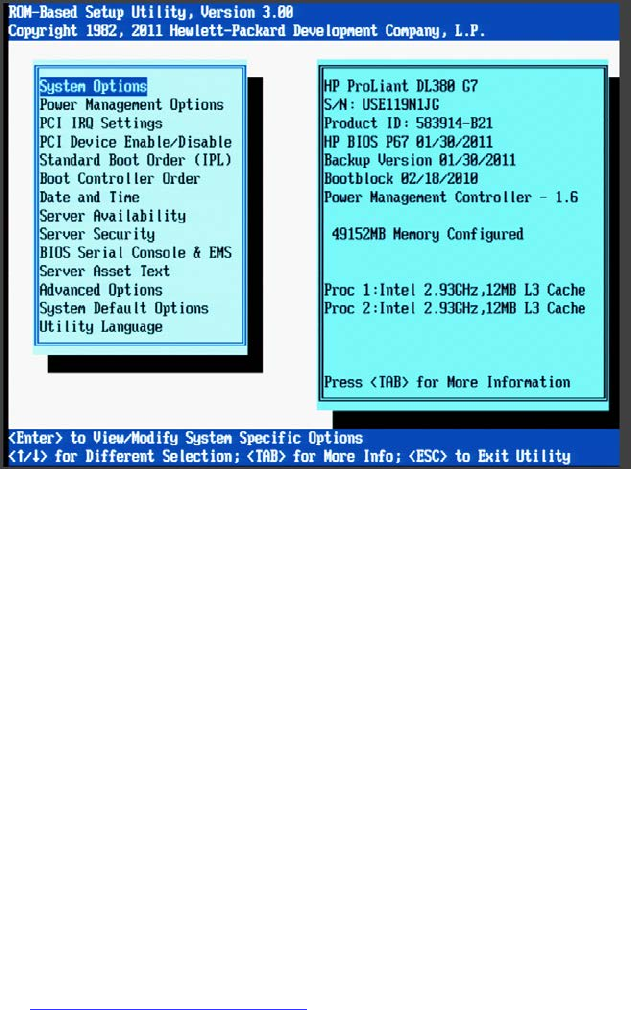

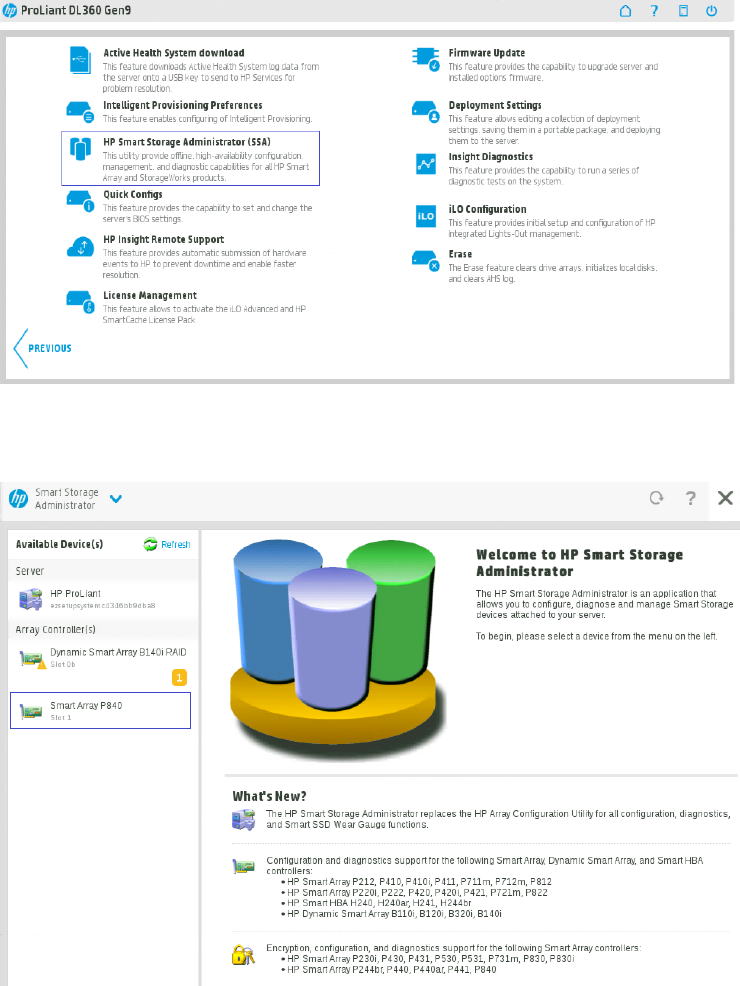

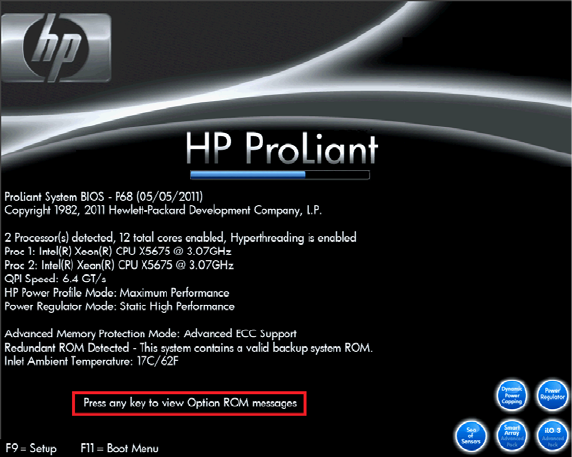

Configuring the BIOS on the HP ProLiant DL360 Gen9 ........................................................................ 33

Configuring the BIOS on the HP ProLiant DL360p Gen8 ...................................................................... 38

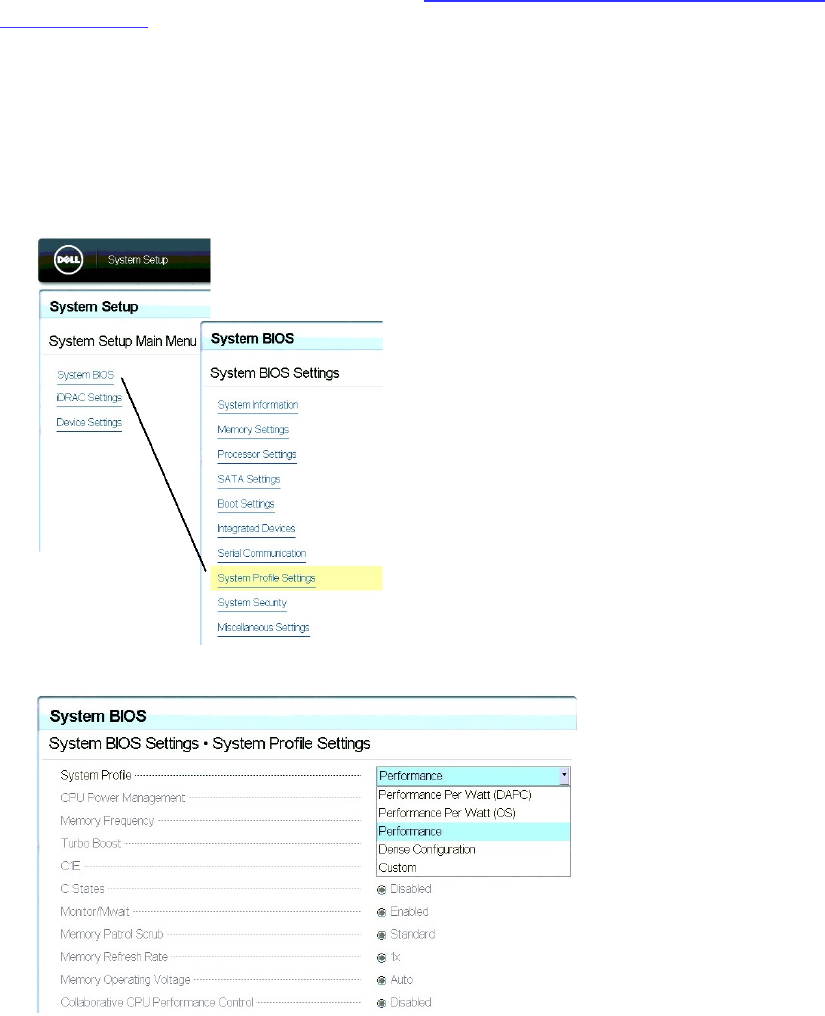

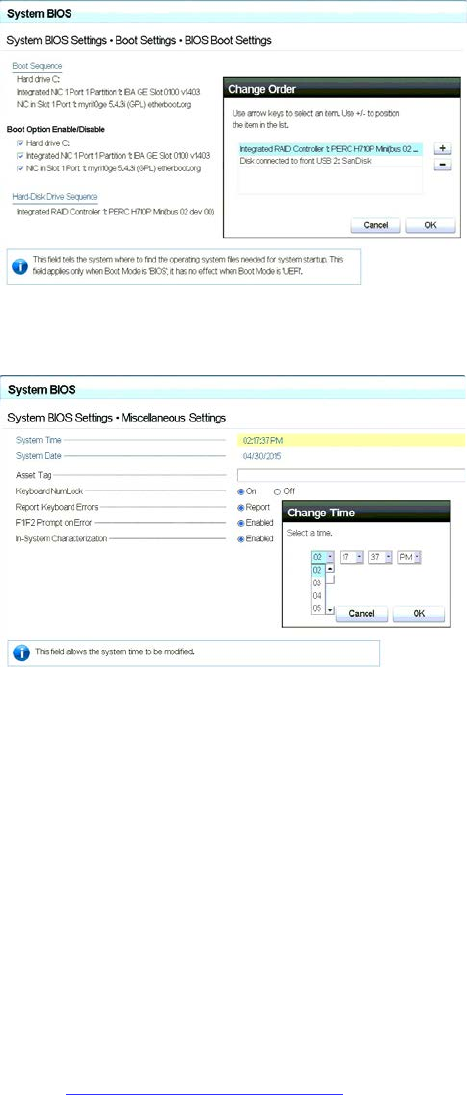

Configuring the BIOS on the Dell PowerEdge R620 / R630 .................................................................. 39

Configuring the Onboard RAID ................................................................................................................ 41

HP ProLiant DL360 Gen9 RAID Configuration ...................................................................................... 41

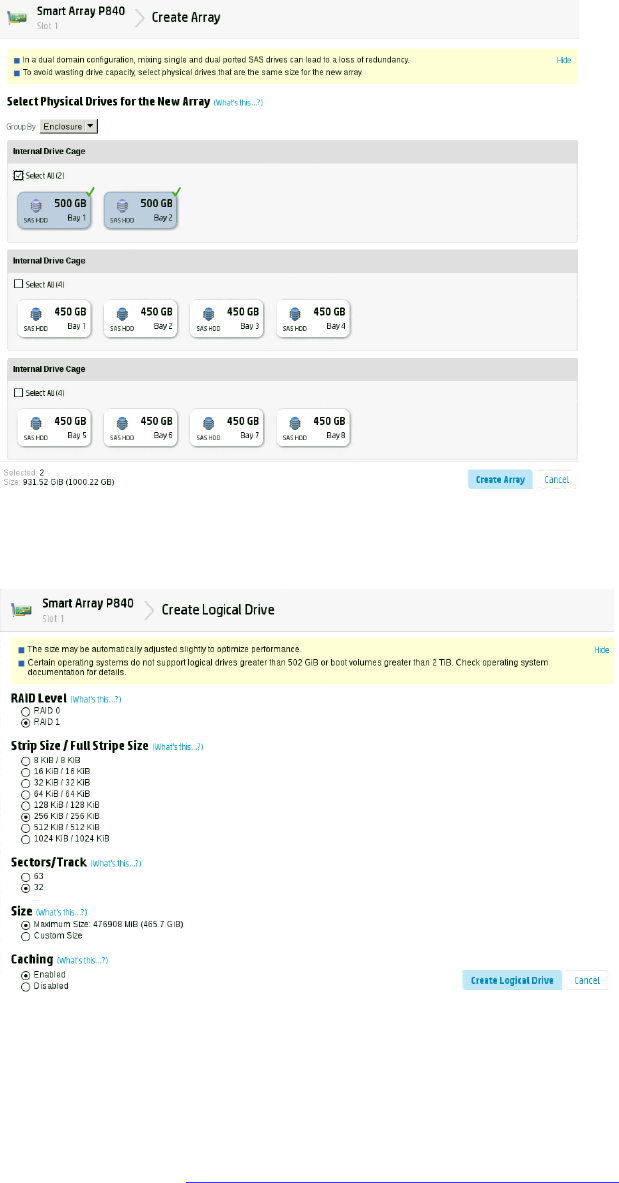

Configuring the HP ProLiant DL360 Gen9 RAID 1 ............................................................................. 42

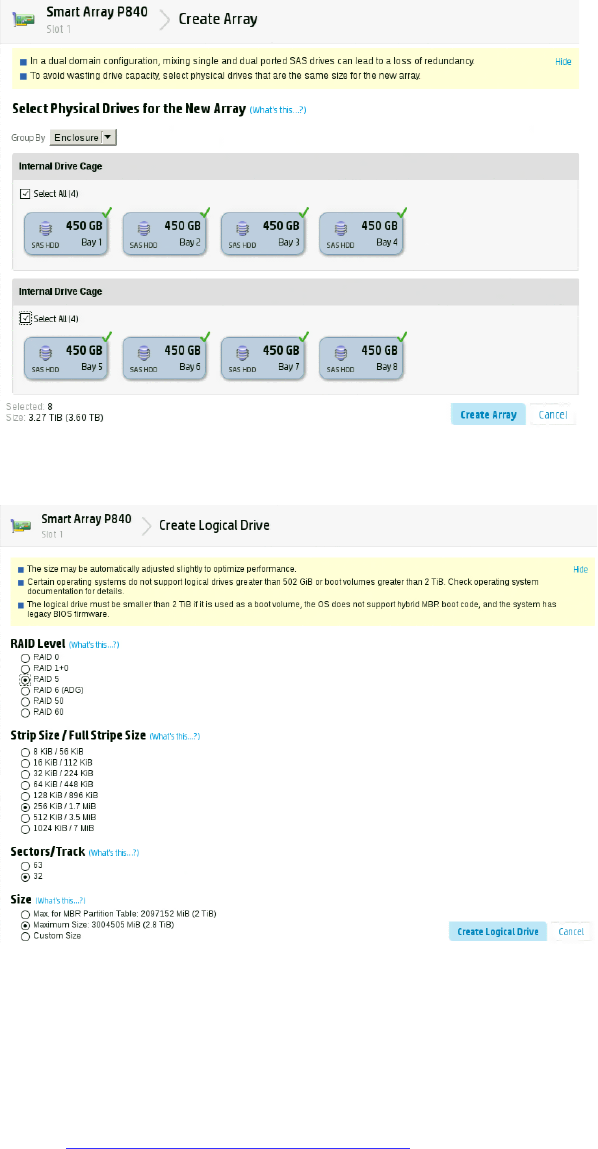

Configuring the HP ProLiant DL360 Gen9 RAID 5 ............................................................................. 44

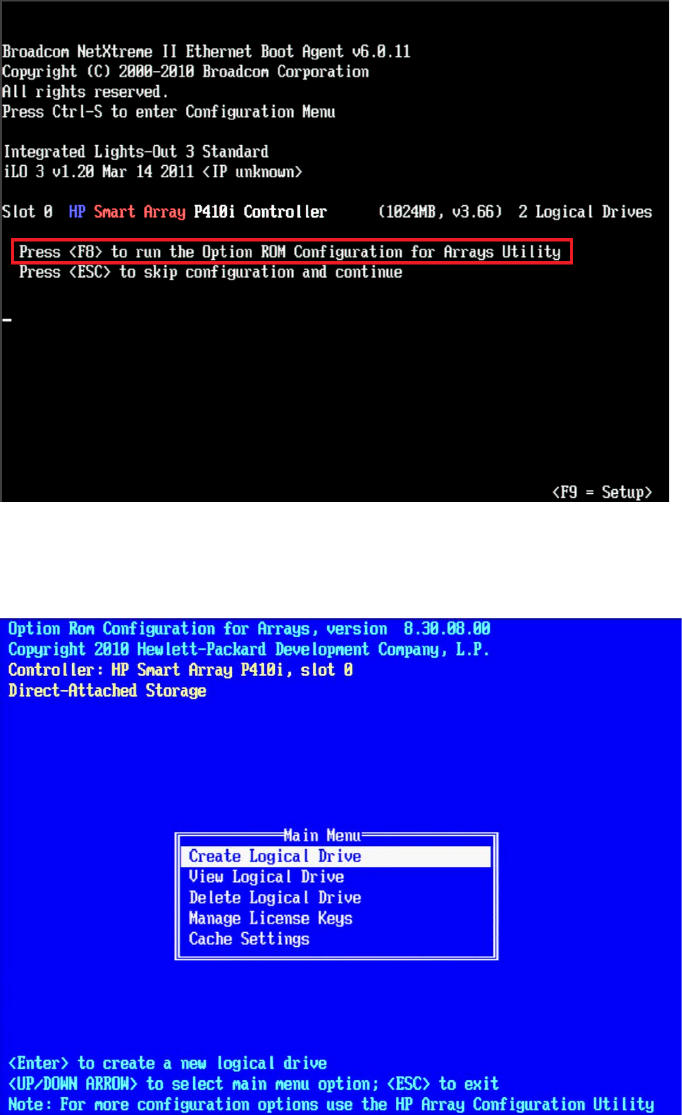

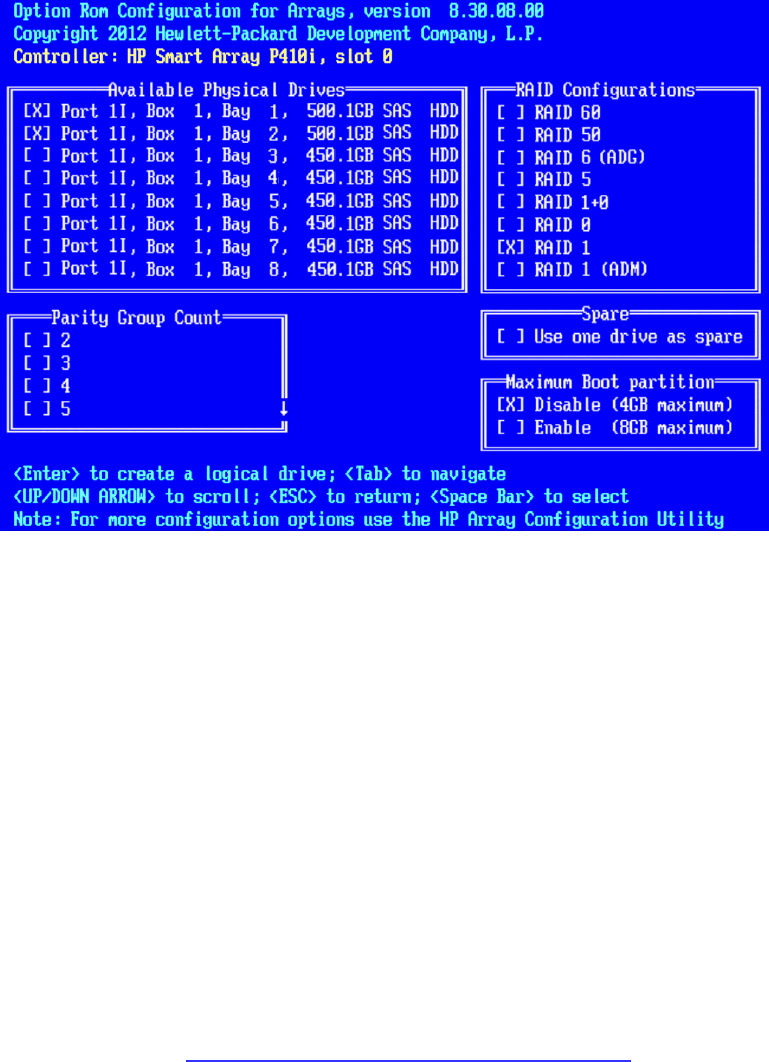

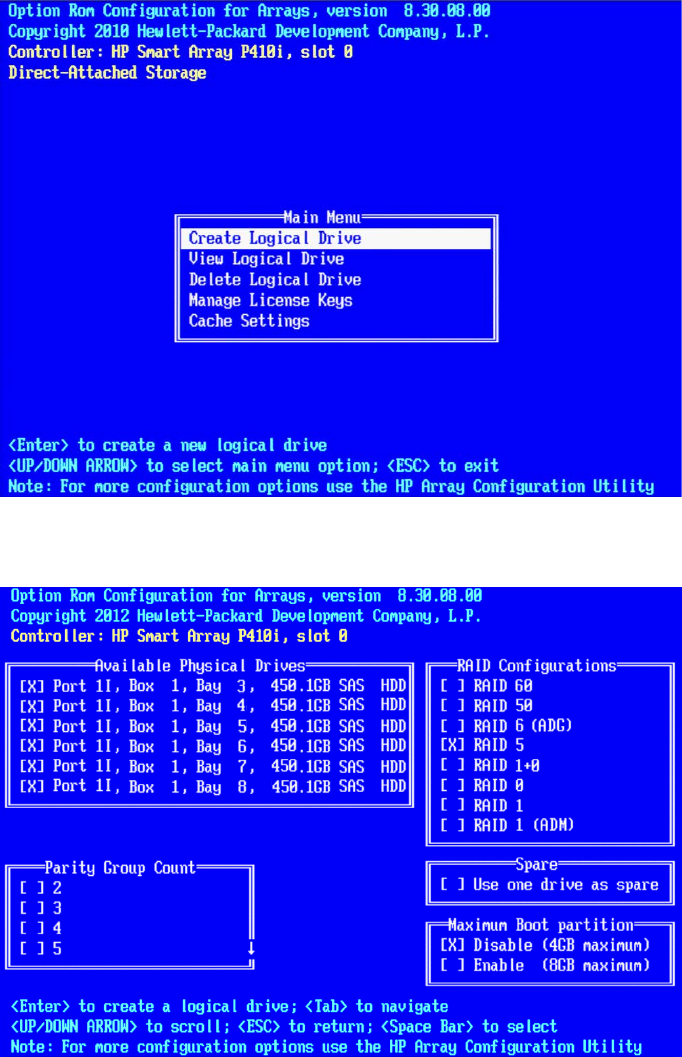

HP ProLiant DL360p Gen8 RAID Configuration .................................................................................... 45

Configuring the HP ProLiant DL360p Gen8 RAID 1 ........................................................................... 45

Configuring the HP ProLiant DL360p Gen8 RAID 5 ........................................................................... 48

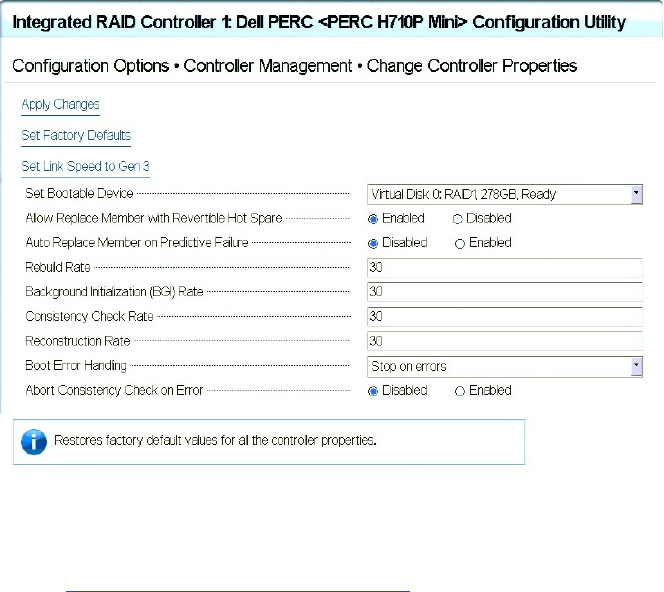

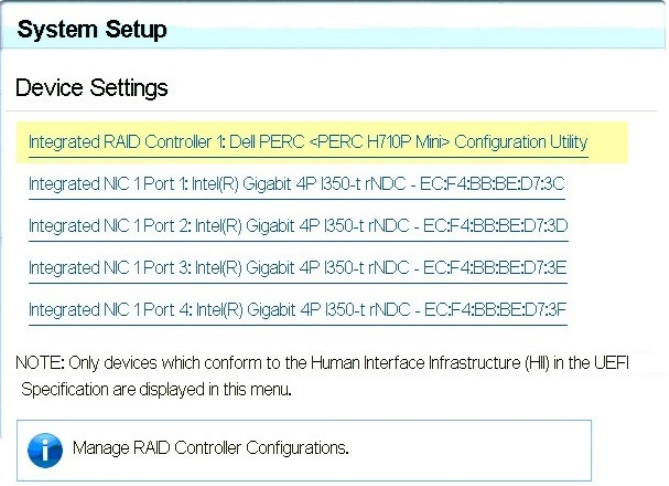

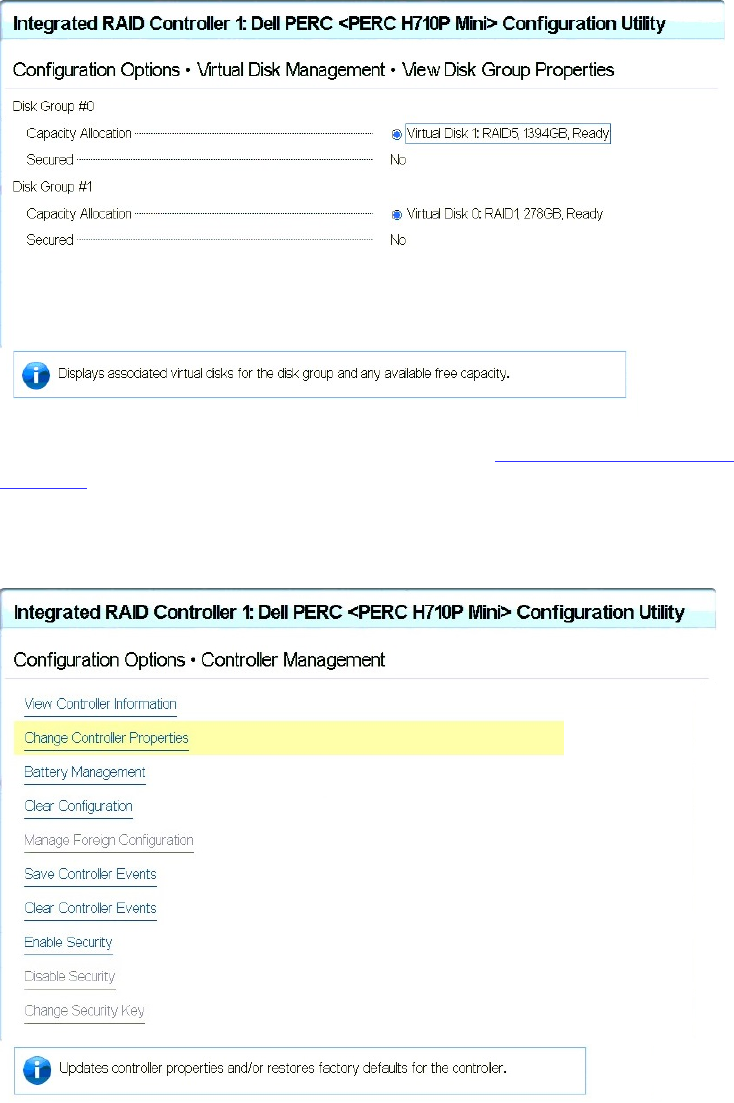

Dell PowerEdge R620 / R630 RAID Configuration ................................................................................ 50

Verifying the PowerEdge Dell R620 / 630 RAID Configuration:........................................................ 50

PART III: SOFTWARE INSTALLATION AND PREPARATION .................................................................................. 53

Chapter Overview .................................................................................................................................... 54

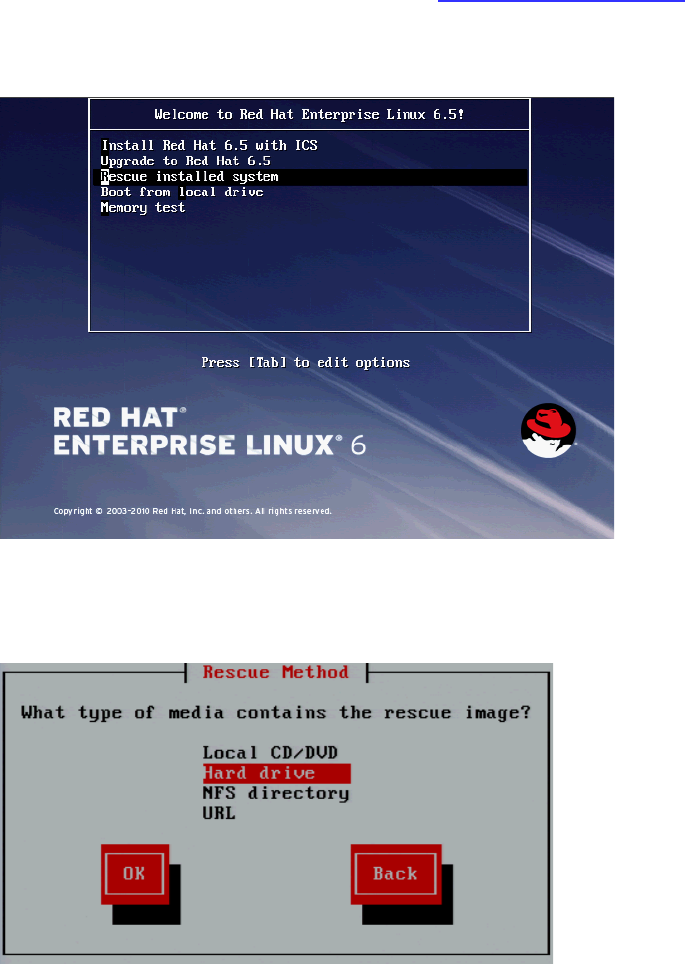

Installing RHEL and the MCS Software ..................................................................................................... 55

Special Instructions for Dell Servers ........................................................................................................ 56

MCS Software Deployment ...................................................................................................................... 60

Booting RHEL for the First Time ............................................................................................................... 63

Booting from the System Drive ............................................................................................................ 63

Changing the root Password ................................................................................................................ 64

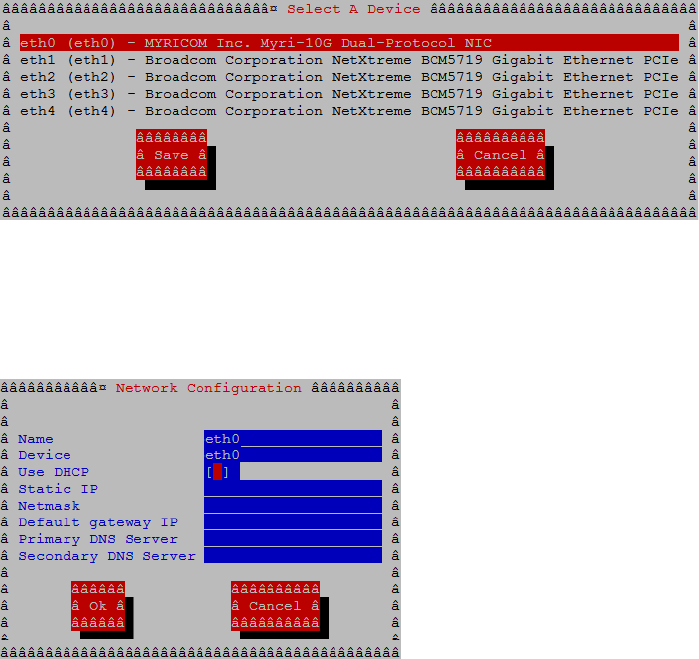

Network Configuration ............................................................................................................................ 65

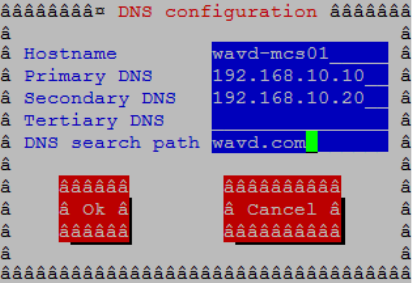

Verify DNS ............................................................................................................................................ 65

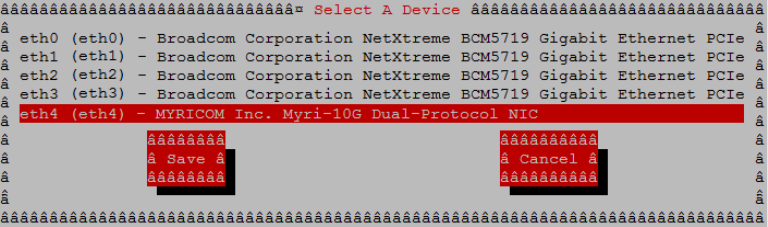

Identifying NIC Interfaces and Connecting the Network Cable ........................................................... 66

(HP Only) Verifying the NIC Interface Name ........................................................................................ 67

(HP Only) Swapping NIC Interface Names ............................................................................................ 67

(HP Only) Removing the MAC Address Hardware References ............................................................. 69

Configuring the Hostname and Static Network Route ......................................................................... 70

Verifying the hosts File Contents .......................................................................................................... 72

Verifying the Contents of resolv.conf and nsswitch.conf .................................................................... 73

Ensuring the NIC Interface Comes Up at System Startup .................................................................... 74

Verifying Hostname, Network and DNS Connectivity .......................................................................... 75

Configure Date and Time Settings ........................................................................................................... 76

Setting the Time Zone .......................................................................................................................... 76

MCS 2.3 Installation and Configuration Guide

5

Synching the System Clock ................................................................................................................... 77

Creating the File Cache on the RAID ........................................................................................................ 79

Partitioning the RAID ............................................................................................................................ 79

Creating the Logical Volume, Filesystem and Mounting the Cache ..................................................... 80

Enable / Disable 3G and Edge Streams .................................................................................................... 84

Copying Software to the MCS Server ....................................................................................................... 84

Security Updates ...................................................................................................................................... 84

Install Software Patches ........................................................................................................................... 84

PART IV: CONFIGURING MCS ...................................................................................................................... 85

Chapter Overview .................................................................................................................................... 86

Configuring MCS for MediaCentral UX and Media Composer Cloud....................................................... 88

Updating the MediaCentral UX Configuration ......................................................................................... 88

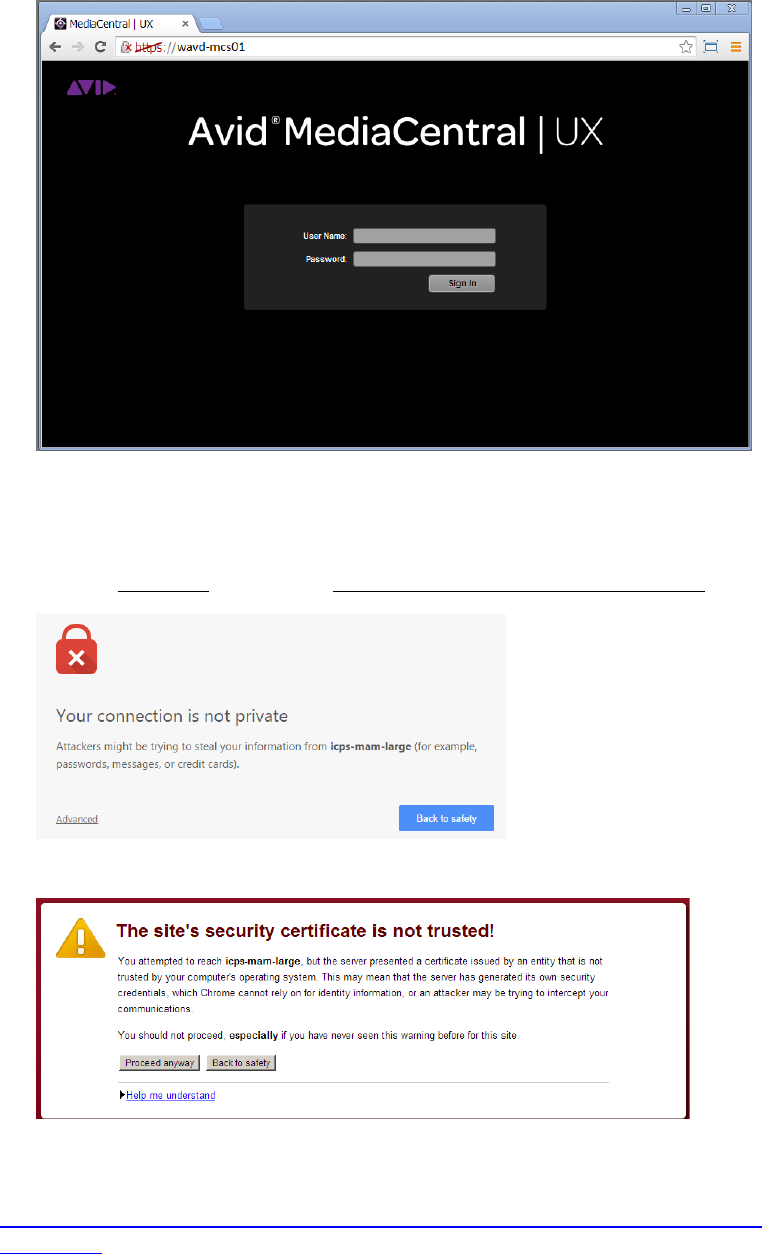

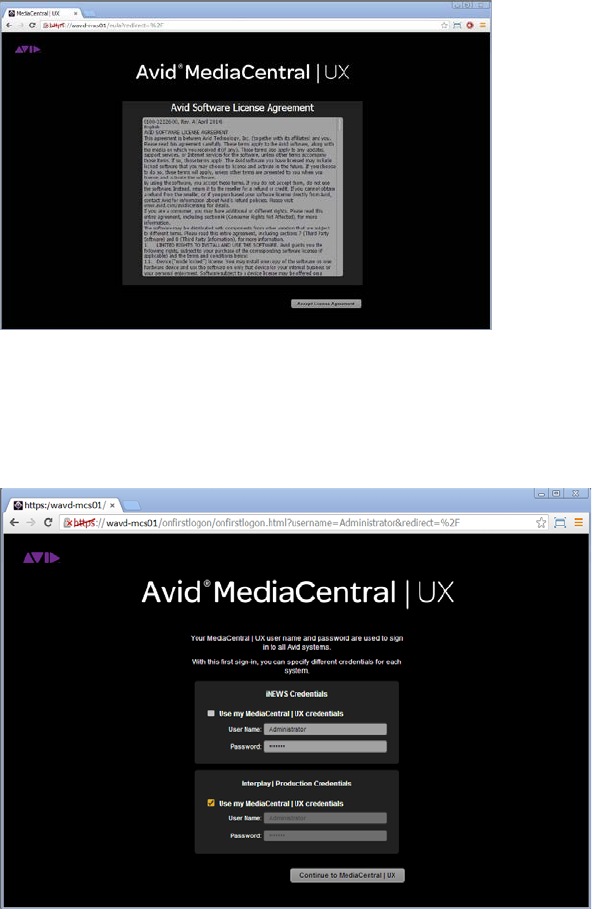

Logging into MediaCentral UX ................................................................................................................. 89

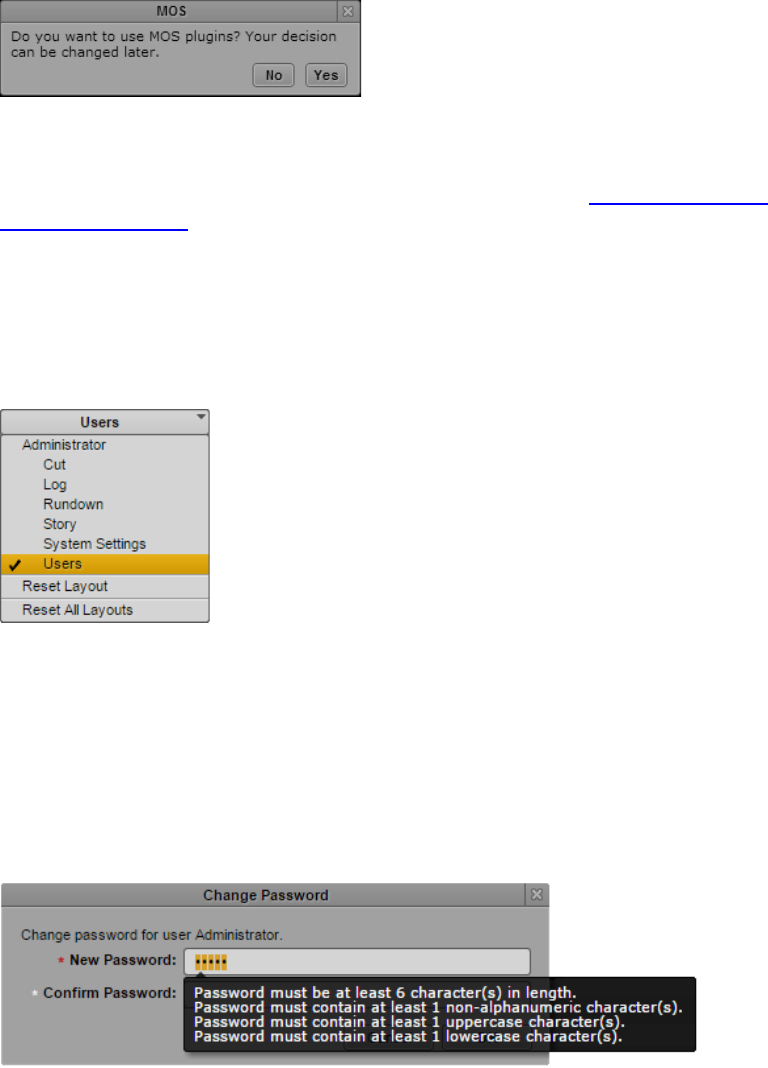

Changing the Administrator Password .................................................................................................... 92

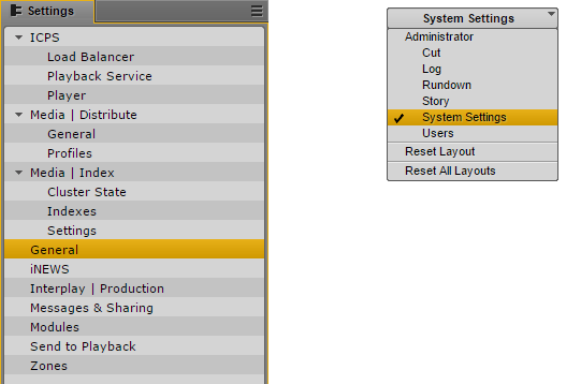

Creating a Second Administrator User .................................................................................................... 93

Configuring System Settings .................................................................................................................... 93

General Settings ................................................................................................................................... 94

iNEWS Settings ..................................................................................................................................... 94

Interplay Production Settings ............................................................................................................... 95

Messages & Sharing ............................................................................................................................. 96

Playback Service Settings ..................................................................................................................... 96

Player Settings ...................................................................................................................................... 99

Verifying the System Settings ................................................................................................................ 100

Verifying the iNEWS Connection ........................................................................................................ 100

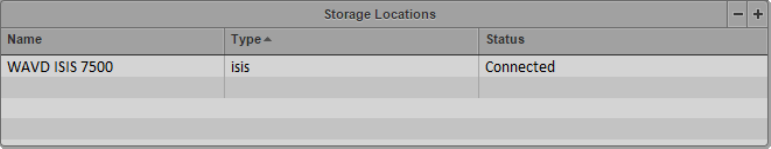

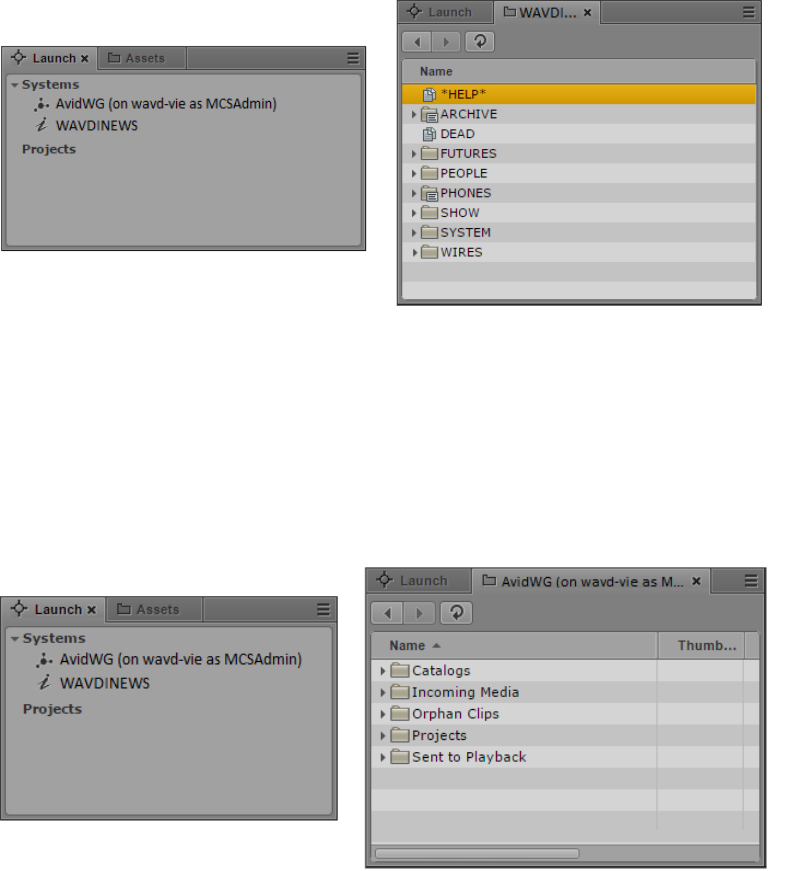

Verifying the Interplay Production and ISIS Connections .................................................................. 100

Configuring Send To Playback Settings .................................................................................................. 101

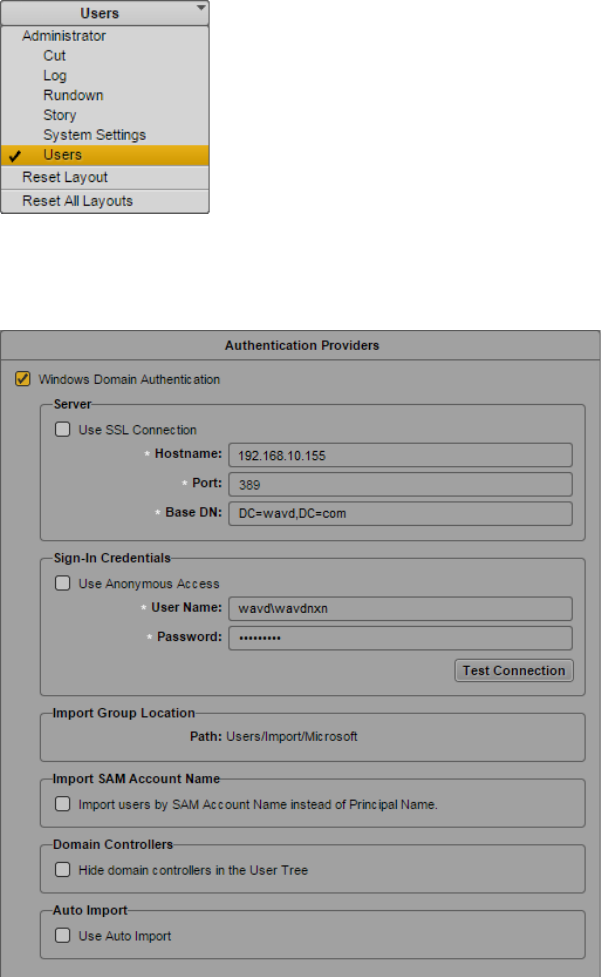

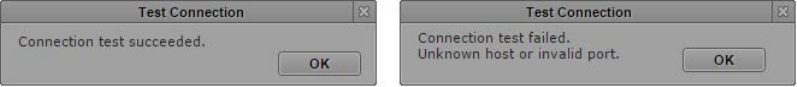

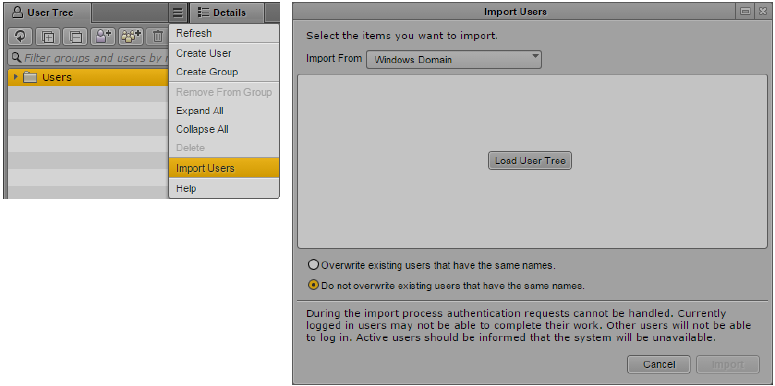

Importing Domain Users ........................................................................................................................ 103

Creating Local Users and Assigning Roles .............................................................................................. 105

Continuing the Installation .................................................................................................................... 106

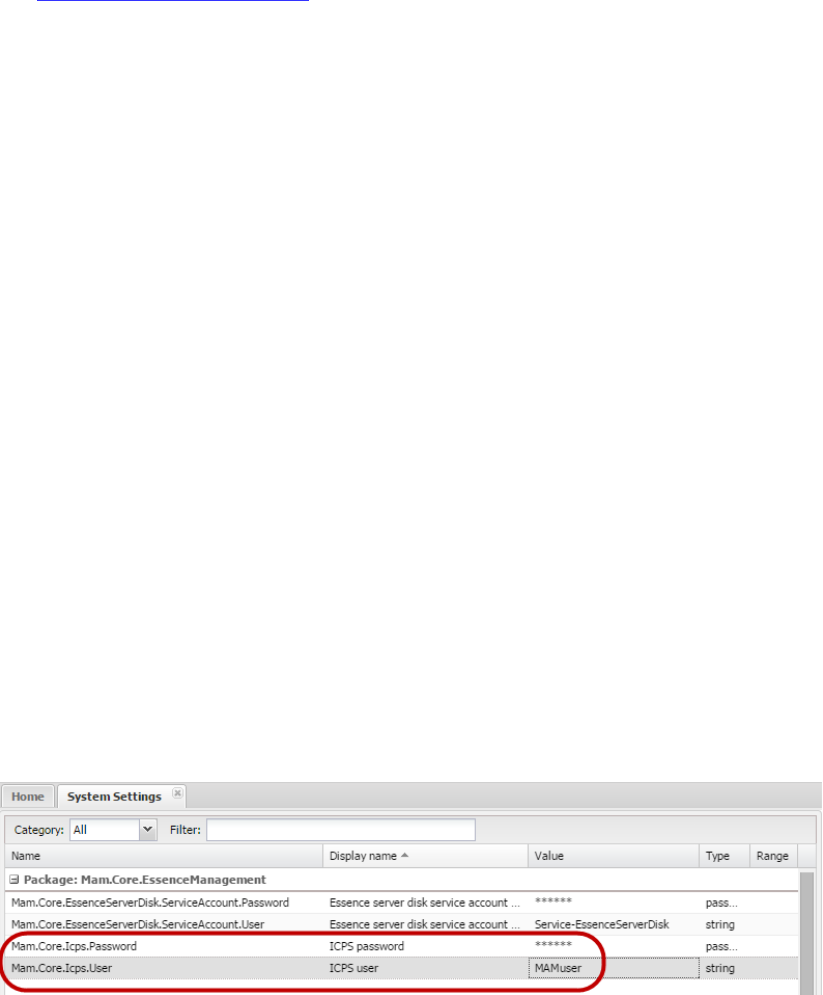

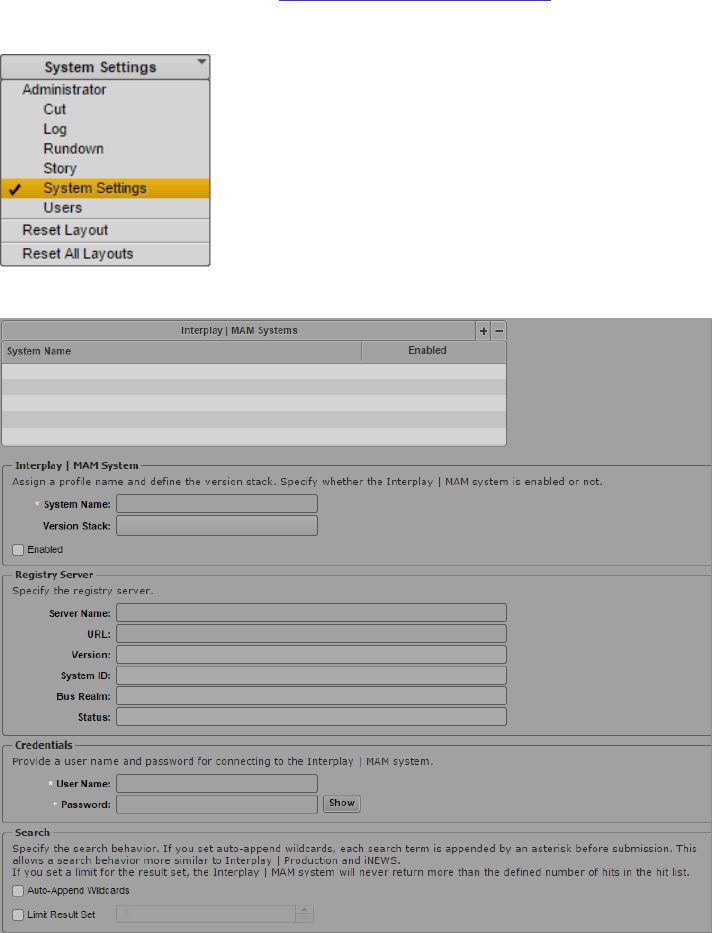

Configuring MCS for Interplay MAM ..................................................................................................... 107

Configuring the MediaCentral UI ....................................................................................................... 107

Creating the MAM System User ......................................................................................................... 108

Configuring the MCS Player ................................................................................................................ 109

MCS 2.3 Installation and Configuration Guide

6

Continuing the Installation .................................................................................................................... 109

PART V: CLUSTERING ................................................................................................................................. 110

Chapter Overview .................................................................................................................................. 111

Cluster Overview .................................................................................................................................... 112

Configuring the Player System Setting ................................................................................................... 113

Configuring DRBD ................................................................................................................................... 114

Starting the Cluster Services on the Master Node ................................................................................. 117

Adding Nodes to the Cluster .................................................................................................................. 120

Replicating the File Caches using GlusterFS ........................................................................................... 121

Installing GlusterFS ............................................................................................................................. 121

Creating the Trusted Storage Pool ..................................................................................................... 122

Configuring the GlusterFS Volumes ................................................................................................... 123

Setting Gluster Volume Ownership .................................................................................................... 125

Making the RHEL Cache Directories ................................................................................................... 127

Changing Ownership and Mounting the GlusterFS Volumes ............................................................. 128

Testing the Cache ............................................................................................................................... 130

Ensuring Gluster is On at Boot ........................................................................................................... 130

PART VI: VERIFYING THE INSTALLATION ....................................................................................................... 131

Chapter Overview .................................................................................................................................. 132

Testing the Basics ................................................................................................................................... 133

Testing the Cluster Email Service ........................................................................................................... 134

Testing Cluster Failover .......................................................................................................................... 135

Verifying ACS Bus Functionality ............................................................................................................. 138

Verifying the Status of RabbitMQ .......................................................................................................... 138

Validating the FQDN for External Access ............................................................................................... 139

Backing up the MCS System Settings and the MCS Database ............................................................... 141

PART VII: INSTALLING THE CLOSED CAPTIONING SERVICE.............................................................................. 145

Chapter Overview .................................................................................................................................. 146

Preparing the Software Package ............................................................................................................ 147

Installing the Closed Captioning Service on a Single Server .................................................................. 147

Installing the Closed Captioning Service in a Cluster ............................................................................. 148

Verifying Prerequisites ....................................................................................................................... 148

Identifying the Master, Slave and Load-Balancing Nodes .................................................................. 148

MCS 2.3 Installation and Configuration Guide

7

Taking the Cluster Offline ................................................................................................................... 149

Installing the Closed Captioning Service Software ............................................................................. 149

Bringing the Cluster Online ................................................................................................................ 150

Checking on the Cluster Status ........................................................................................................... 150

Uninstalling the Closed Captioning Service ........................................................................................... 151

PART VIII: INSTALLING THE MAM CONNECTOR ........................................................................................... 153

Chapter Overview .................................................................................................................................. 154

Preparing the Software Package ............................................................................................................ 155

Installing the MAM Connector on a Single Server ................................................................................. 155

Installing the MAM Connector in a Cluster ............................................................................................ 156

Before You Begin ................................................................................................................................ 156

Take the Cluster Offline ...................................................................................................................... 156

Install the MAM Connector Software ................................................................................................ 156

Bring the Cluster Back Online ............................................................................................................. 157

Uninstalling the MAM Connector .......................................................................................................... 158

Configuring the MAM Connector ........................................................................................................... 159

PART IX: MULTI-ZONE CONFIGURATION ..................................................................................................... 160

Chapter Overview .................................................................................................................................. 161

Multi-Zone Overview ............................................................................................................................. 162

Making Changes to a Multi-Zone Configuration ................................................................................ 162

Creating and Installing the RSA Keys ..................................................................................................... 163

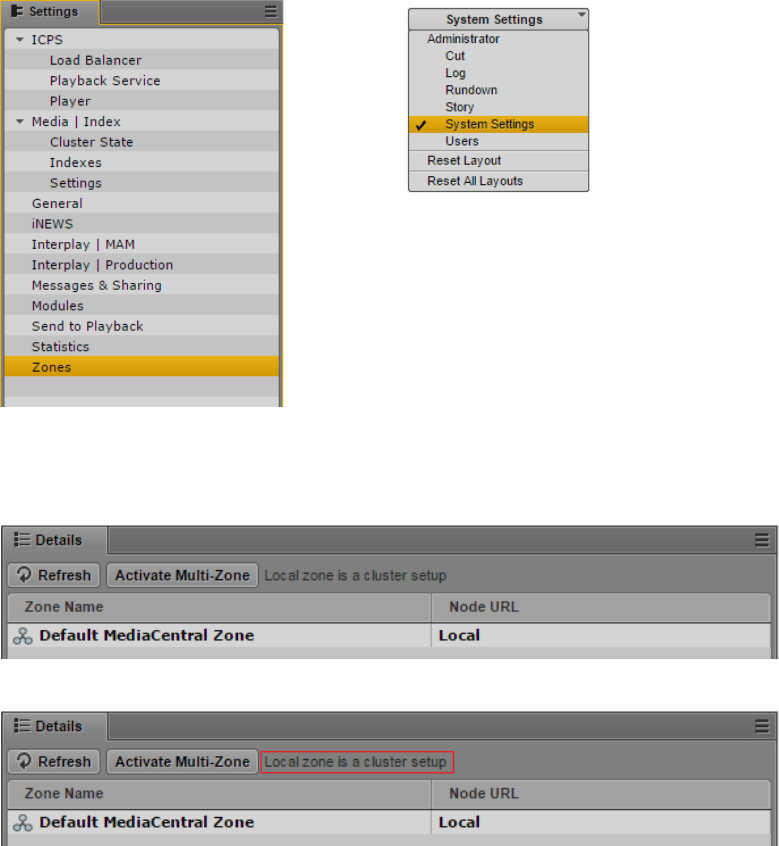

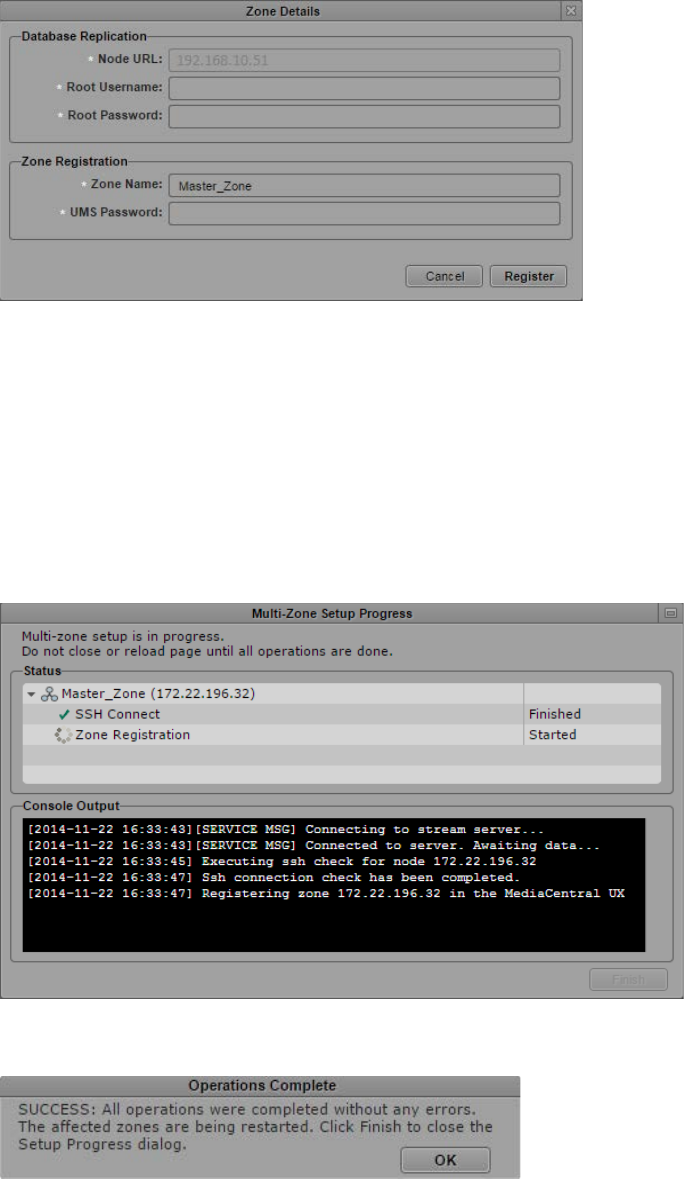

Creating the Master Zone and Initiating Multi-Zone ............................................................................. 164

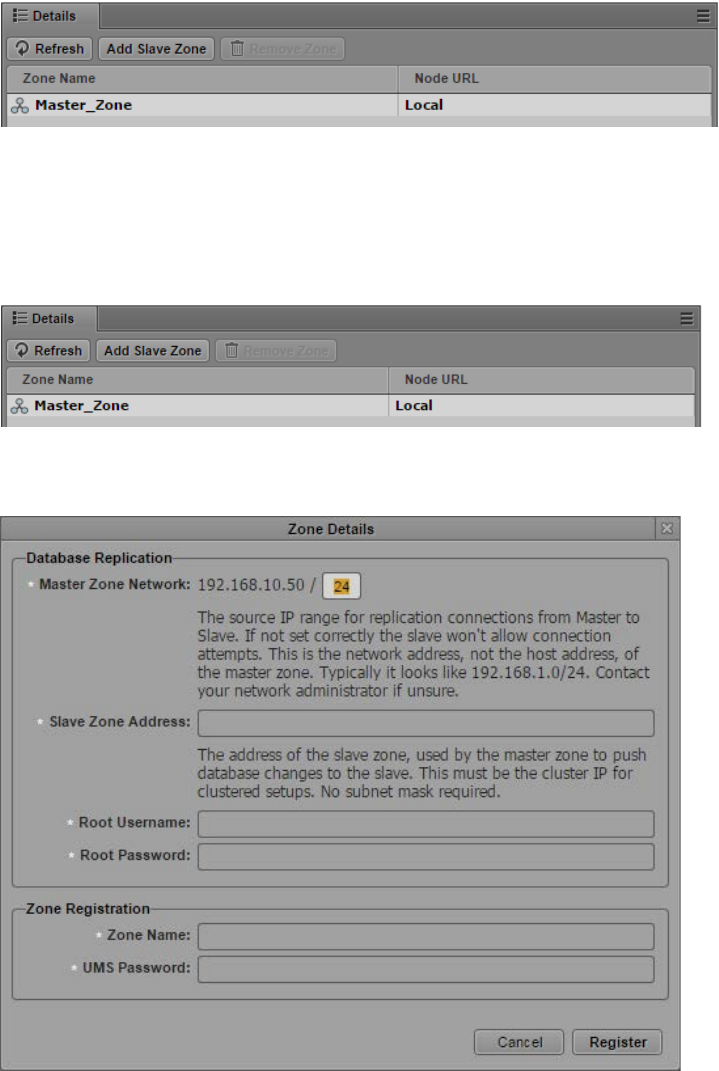

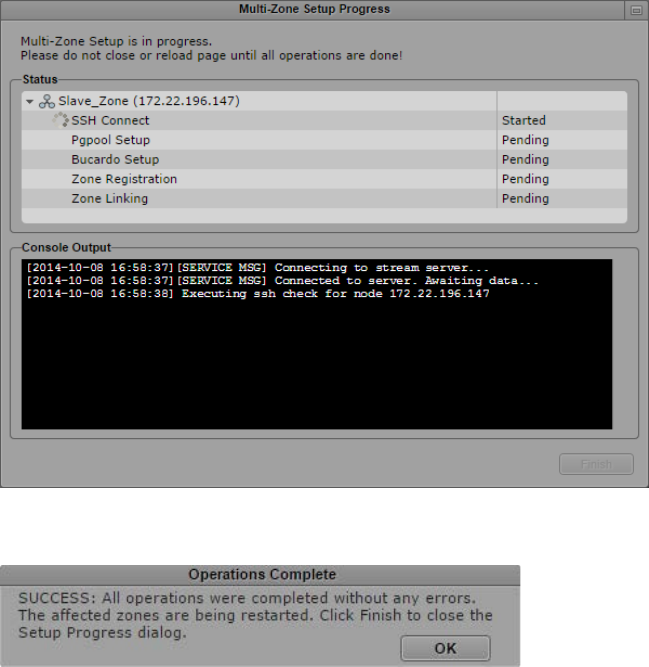

Adding Slave Zone(s) to the Multi-Zone Environment .......................................................................... 166

Validating Multi-Zone Functionality ...................................................................................................... 168

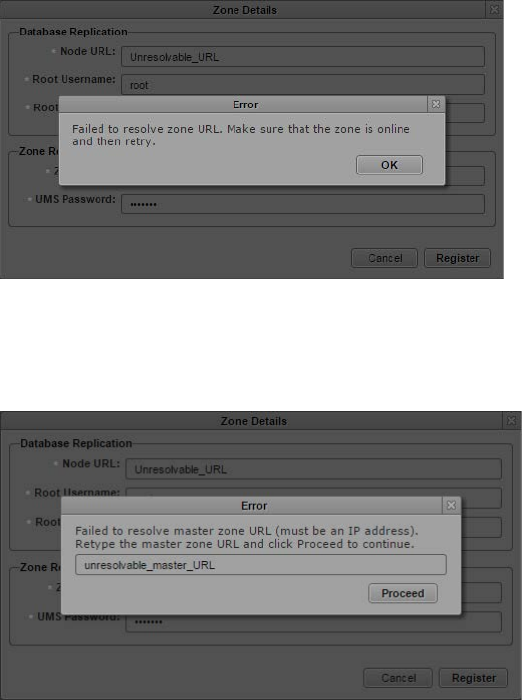

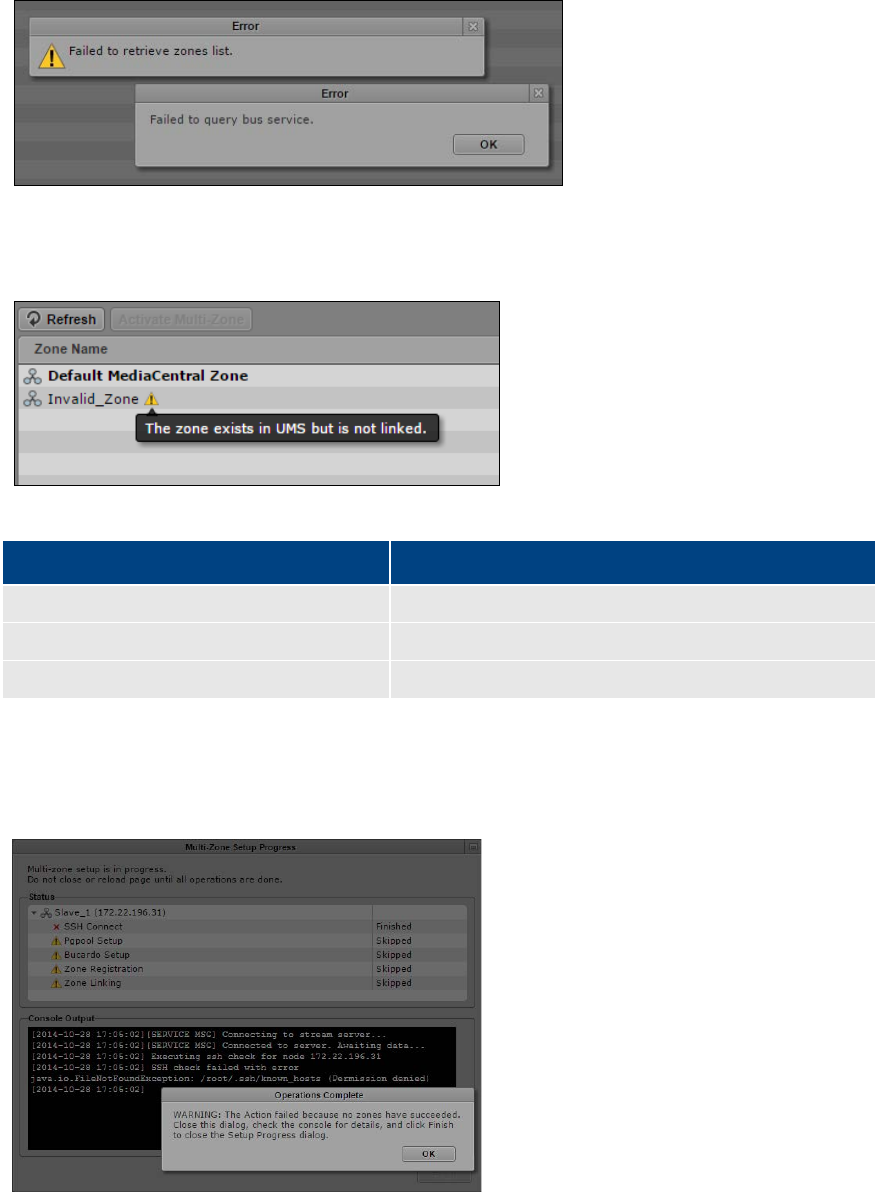

Troubleshooting the Multi-Zone Setup ................................................................................................. 169

Failed to Resolve Zone URL ............................................................................................................ 169

Bus Error ......................................................................................................................................... 170

Errors in Zone Configuration .......................................................................................................... 170

Errors During Setup ........................................................................................................................ 170

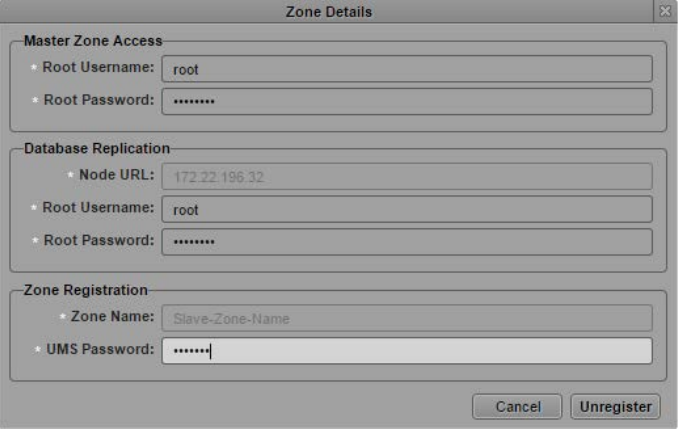

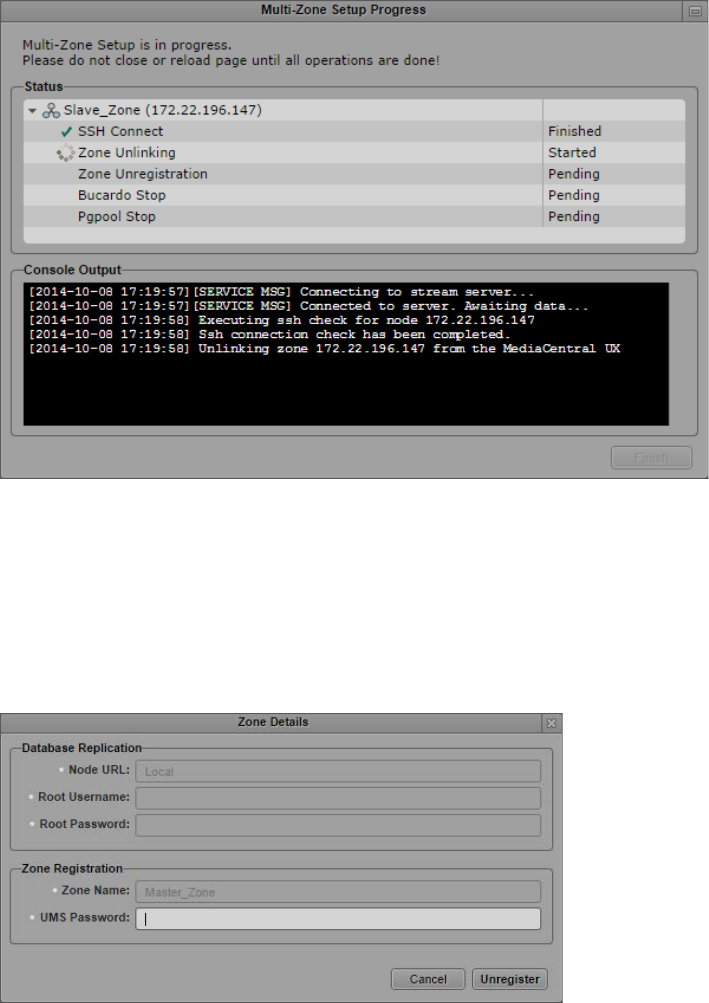

Dismantling a Multi-Zone Environment ................................................................................................. 171

APPENDICES ............................................................................................................................................. 173

Appendix A: Overview ............................................................................................................................ 174

Enabling the Player Demonstration Web Page ...................................................................................... 175

MCS 2.3 Installation and Configuration Guide

8

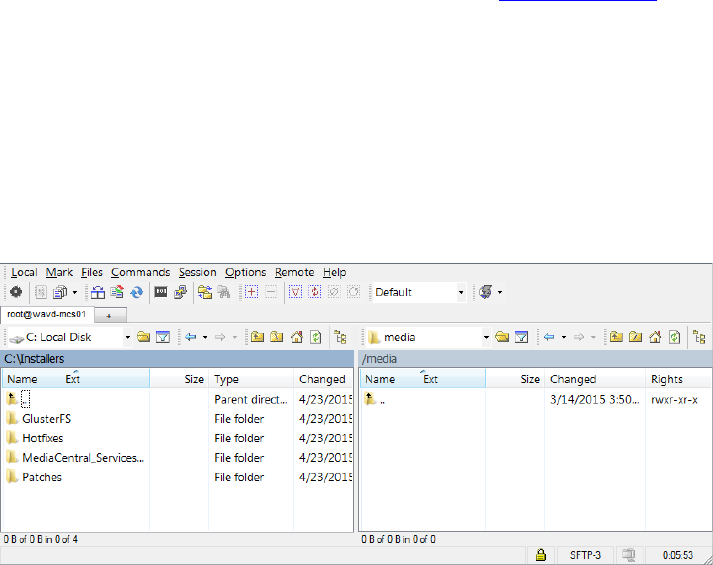

Copying Software to the MCS Server ..................................................................................................... 176

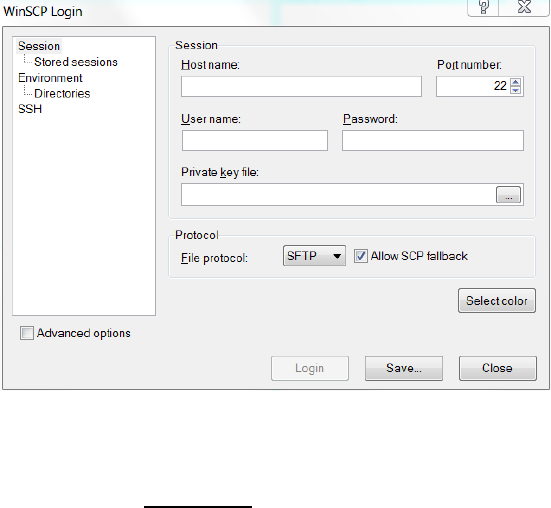

Copying Software Using WinSCP ........................................................................................................ 176

Copying Software Using a USB Drive .................................................................................................. 177

Installing MCS on Non-HP / Dell Hardware for Interplay MAM ............................................................ 179

Non-HP / Dell Installation Notes ........................................................................................................ 180

Working with the Dell RAID Controller .................................................................................................. 182

Creating the RAIDs ............................................................................................................................. 182

Deleting the RAIDs .............................................................................................................................. 183

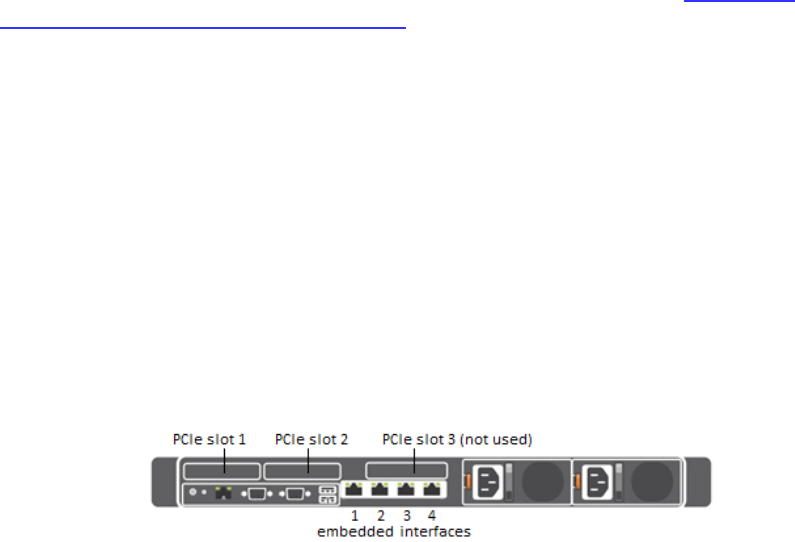

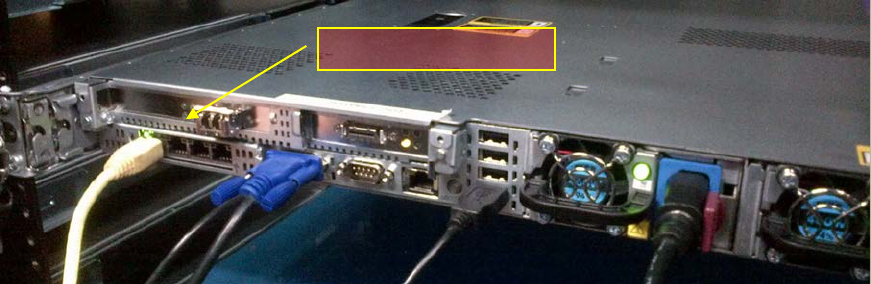

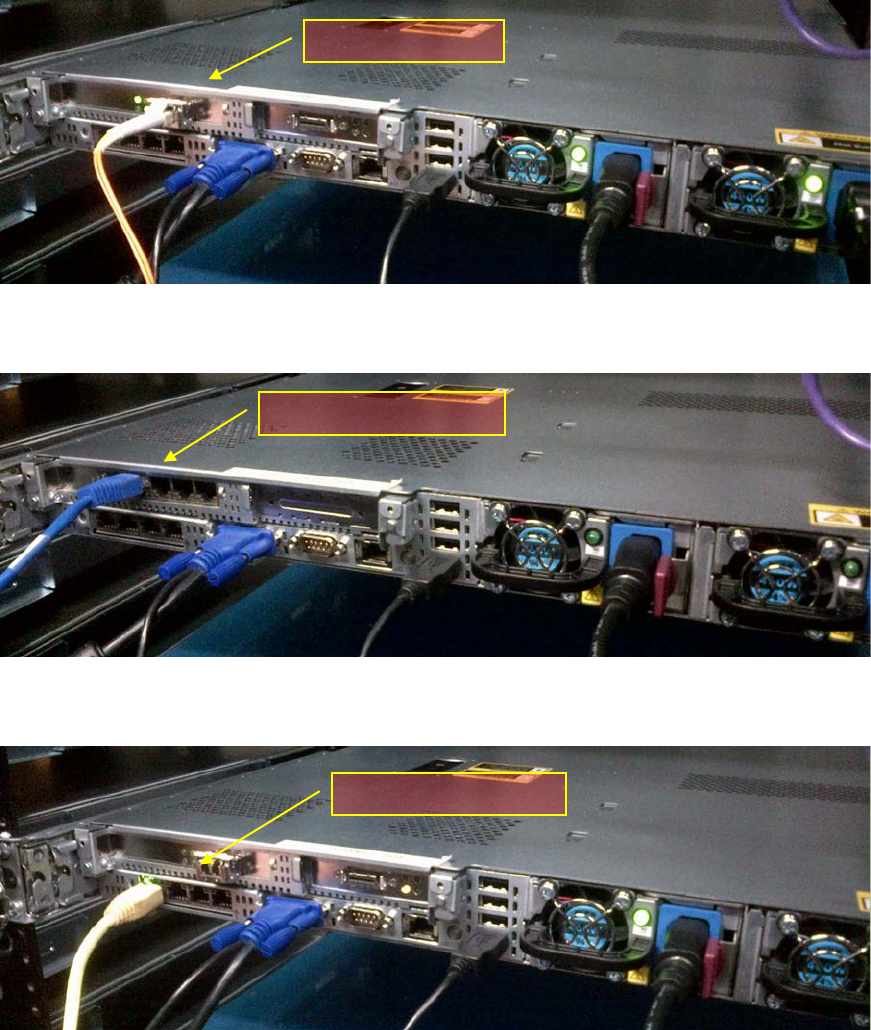

HP DL360p Gen8 Card Placement .......................................................................................................... 183

Connecting to non-ISIS Proxy Storage ................................................................................................ 183

Connecting to ISIS Proxy Storage ....................................................................................................... 184

Contents of the MCS Installation Package ............................................................................................. 185

Enabling Trusted Certificates ................................................................................................................. 186

Determining the Installed MCS Version ................................................................................................. 186

Using SNMP Monitoring on the MCPS Server........................................................................................ 186

Log Cycling ............................................................................................................................................. 187

Retrieving MCS Logs ............................................................................................................................... 187

Verifying Cache Directory Permissions .................................................................................................. 188

Monitoring the AAF Generator Service ................................................................................................. 189

Monitoring Services and Resources ....................................................................................................... 190

Backing up and Restoring the MCS Database ........................................................................................ 191

Verifying the ISIS Mount ........................................................................................................................ 193

Reconfiguring the ISIS Connection(s) ..................................................................................................... 194

Unicast Support in Clustering ................................................................................................................ 195

Configuring Unicast Cluster Communication.................................................................................. 196

Reconfiguring MediaCentral Settings in a Cluster ................................................................................. 197

Shutting Down or Rebooting a MediaCentral Cluster ........................................................................... 197

Identifying the Master, Slave and Load-Balancing Nodes ..................................................................... 198

Monitoring MCS High-Availability .......................................................................................................... 199

Monitoring Load Balancing .................................................................................................................... 200

Changing the Cluster Administrator Email Address ............................................................................... 202

Taking a Cluster Node Off-Line Temporarily .......................................................................................... 203

Permanently Removing a Node from a Cluster ..................................................................................... 203


Adding a New Node to a Cluster ............................................................................................................ 204

Port Requirements ................................................................................................................................. 206

Appendix B: Configuring Port Bonding for Interplay MAM ................................................................... 207

Verifying the Ethernet Ports ............................................................................................................... 207

Configuring the Ports ......................................................................................................................... 207

Appendix C: Enabling MOS Active-X Plug-Ins ......................................................................................... 210

Enabling MediaCentral MOS Plug-Ins in Chrome ............................................................................... 210

Setting Up Your Browser ................................................................................................................ 210

Enabling MOS.................................................................................................................................. 210

Installing Plug-Ins ............................................................................................................................ 211

Uninstalling the Chrome Extension ................................................................................................ 211

Enabling MediaCentral MOS Plug-Ins in Internet Explorer ................................................................ 211

Sample ActiveX Object in the Preferences File ............................................................................... 213

Appendix D: Configuring iNEWS for Integration with MediaCentral ..................................................... 214

Verifying MediaCentral Licenses on iNEWS ....................................................................................... 214

Editing SYSTEM.CLIENT.VERSIONS ..................................................................................................... 215

Editing SYSTEM.CLIENT.WINDOWS .................................................................................................... 216

Appendix E: The Avid MediaCentral UX Mobile Application ................................................................. 218

Before You Begin ................................................................................................................................ 218

iNEWS Configuration for Mobile Integration ......................................................................................... 219

Editing SYSTEM.CLIENT.VERSIONS ..................................................................................................... 219

Editing the iNEWS Configuration File ................................................................................................. 220

Installing Avid Central on an iOS Device ................................................................................................ 222

Installing Avid Central on an Android Device ......................................................................................... 222

Copyright and Disclaimer ....................................................................................................................... 223


PART I: INSTALLATION PREREQUISITES


Chapter Overview

This chapter guides you through preparing all materials needed for the MCS installation and pre-configuring all connected systems for integration with MCS.

The following table describes the topics covered in this chapter.

| Step | Task | Time Est. | Description |
|---|---|---|---|
| 1 | Before You Begin | varies | A quick check to make sure you have everything in place for an efficient and successful installation. |
| 2 | Network Interface Cards and Network Connections | 15 min | Network connection information for various deployment options. |
| 3 | Accessing the MCS Server(s) | 1 min | Understanding how to connect to the MCS server(s). |
| 4 | Obtaining the Software | varies | Where to find all the software necessary for the installation. |
| 5 | Updating MediaCentral UX Licenses | 15 min | Licensing requirements for Interplay Production and iNEWS. |
| 6 | Creating User Accounts | 10 min | Covers the creation of user accounts required by MCS. |
| 7 | Adjusting Interplay Production Settings | 5 min | Information on adjusting settings required by MCS. |
| 8 | Adding the MediaCentral UX Version to Avid iNEWS | 5 min | Enables MediaCentral UX users to connect to iNEWS. |
| 9 | Installing the MediaCentral Distribution Service | 10 min | Required for certain Interplay Production workflows. |
| 10 | Creating the MCS Installation USB Drive | 45 min | In this procedure, you create the USB drive you will use to install the MCS software. |


Before You Begin

A successful MCS installation begins with careful planning. It is very important to identify all installation prerequisites before you begin. Examples:

- Networking: IP addresses, hostnames, domain name, DNS, NTP, SNMP, etc.

- Cluster-specific information: additional IP addresses, e-mail address

- Users: identifying users, user groups, and passwords (both local and domain users)

- Host Solutions: identify which systems will connect to MCS. Once they are identified, verify that these systems are available and operating normally. Examples:

  - Avid | ISIS
  - Avid | iNEWS
  - Interplay | Production
  - Interplay | MAM
  - Media Composer | Cloud

  For Interplay | Production deployments, the following systems might also be required:

  - Interplay | Production Services Automation and Interplay | Consolidate (Required for certain Interplay | Delivery workflows. Media | Index is required for this functionality.)
  - Interplay | Transcode (Required for Send To Playback workflows)
  - Interplay | STP Encode (Required for Send To Playback of Long GOP media formats)
  - Interplay | Transfer (Required for Send To Playback to 3rd party playback solutions)

To help ensure that you have all the information you need before beginning the installation, Avid provides a “Pre-Flight Checklist” on the MediaCentral Services page of the Avid Knowledge Base. Completing the Pre-Flight information helps avoid delays during the installation process.

While the installation procedures for MediaCentral UX, Media Composer Cloud and Interplay MAM are very similar, the configuration steps are different. Any configuration differences between MediaCentral UX and Media Composer Cloud are identified in this document. For differences in the Interplay MAM configuration process, refer to the Interplay | MAM Installation Manual.

MCS is available in single-server and cluster configurations. A cluster is a group of MCS servers that provide redundancy, load balancing, and scale. Each server in a cluster is called a “node”. During the cluster configuration, one server is identified as the Master node. If you have multiple MCS servers in a rack, the Master node is usually the top-most server in the rack.

If you are configuring a cluster, complete Part I through Part III concurrently on all cluster nodes. Complete Part IV of this installation document on the Master node only, unless otherwise instructed.


Network Interface Cards and Network Connections

Avid supports the onboard 1 Gb NIC for each of the HP DL360 Gen8 / Gen9 and Dell R620 / R630 servers. However, certain workflows require the increased bandwidth of an add-in 10 Gb card. For example, a 10 Gb connection is required for any MCS deployment that will use 100+ Mbps video formats (e.g., AVC-I 100, DVCPro 100, DNxHD 145). 10 Gb connections may also be desirable for additional bandwidth or more playback streams.

The HP DL360p Gen8 supports additional 1 Gb network adapters. See HP DL360p Gen8 Card Placement in Appendix A for more information.

For more information on choosing between 1 Gb and 10 Gb connections, as well as information on supported adapters, see the MediaCentral Platform Services Hardware Guide located on the MediaCentral Services page of the Avid Knowledge Base.

You must also consider the Zone in which the network connection is made:

- Zone 1: Connected to ISIS VLAN(s) through an ISS 1 Gb or 10 Gb port (direct connect)
- Zone 2: Connected to ISIS VLAN(s) through a 1 Gb or 10 Gb port on an Avid-qualified layer-2 switch (non-routed)
- Zone 3: Connected to an Avid-qualified layer-3 switch (routed) with known Quality of Service (QoS); traffic is routed to ISIS (one hop) and (if applicable) load-balanced across ISIS VLANs (approximately a 60/40 ratio)

For more information on networking in an Avid environment, see “Network Requirements for ISIS and Interplay PAM and MAM” located at:

http://avid.force.com/pkb/articles/en_US/compatibility/en244197

MediaCentral UX and Media Composer Cloud

In this workflow, MCS decodes the source media format on ISIS and streams images and sound to the clients. This workflow requires MCS to connect to an Avid ISIS system.

Zone 1, Zone 2 or Zone 3 (recommended) connections are supported.

Interplay MAM

In this workflow, MCS provides playback of video assets registered as browse proxies by Interplay MAM. The connection required depends on where the browse proxies are stored.

For non-ISIS storage, the network connection is at the user’s discretion as long as it is a 1 Gb connection or better.

For ISIS storage, Zone 1, Zone 2 or Zone 3 (recommended) connections are supported.

Avid iNEWS

iNEWS-only deployments do not require an ISIS connection. The network connection is at the user’s discretion as long as it is a 1 Gb connection or better.
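The connection rules in this section can be summarized as a small lookup. The following Python sketch is illustrative only: the workflow names (`"mediacentral_ux"`, `"cloud"`, `"mam"`, `"inews"`), the `ConnectionRequirement` record and the `connection_requirement()` function are hypothetical and are not part of MCS. It encodes only what is stated above: ISIS-based workflows support Zone 1, 2 or 3 (Zone 3 recommended), non-ISIS workflows need a 1 Gb connection or better, and 100+ Mbps formats need a 10 Gb connection.

```python
from collections import namedtuple

# Hypothetical record type; the field names are illustrative, not an MCS API.
ConnectionRequirement = namedtuple(
    "ConnectionRequirement", ["needs_isis", "min_link_gb", "allowed_zones"])

ISIS_ZONES = (1, 2, 3)  # Zone 3 is the recommended option


def connection_requirement(workflow, proxies_on_isis=False, max_format_mbps=0):
    """Summarize the network rules for one MCS deployment type.

    allowed_zones is None when the connection is at the user's discretion
    (any 1 Gb or better link).
    """
    # Formats of 100 Mbps or more (AVC-I 100, DVCPro 100, DNxHD 145) need 10 Gb.
    min_link_gb = 10 if max_format_mbps >= 100 else 1

    if workflow in ("mediacentral_ux", "cloud"):
        # MCS decodes media on ISIS, so an ISIS connection is mandatory.
        return ConnectionRequirement(True, min_link_gb, ISIS_ZONES)
    if workflow == "mam":
        # The requirement depends on where the browse proxies are stored.
        if proxies_on_isis:
            return ConnectionRequirement(True, min_link_gb, ISIS_ZONES)
        return ConnectionRequirement(False, min_link_gb, None)
    if workflow == "inews":
        # iNEWS-only deployments do not need ISIS.
        return ConnectionRequirement(False, min_link_gb, None)
    raise ValueError("unknown workflow: %r" % (workflow,))


if __name__ == "__main__":
    print(connection_requirement("cloud", max_format_mbps=145))
```

Returning `None` for the zones (rather than an empty tuple) keeps "any 1 Gb link is acceptable" distinct from "no connection is possible".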


Accessing the MCS Server(s)

The initial configuration of the MCS server(s) must be completed using a monitor and keyboard connected directly to the server, or through a KVM (keyboard, video and mouse) device.

Note: Some KVMs present virtual USB devices to the operating system. RHEL might assign these devices a device name (sda, sdb) during the installation, which results in a failed installation. Disable this option on your KVM if applicable.

Once the initial configuration is complete, Avid recommends connecting to MCS indirectly through SSH (Secure Shell). SSH is preferable for the following reasons:

- Allows for an expandable view of the RHEL interface (adjustable window size)
- Allows for multiple sessions to the host server or to multiple servers
- Allows for simplified copy/paste of commands between SSH windows
- Allows for logging of all session output

On Windows, PuTTY.exe is an example of an SSH client:

http://www.chiark.greenend.org.uk/~sgtatham/putty/download.html

At the appropriate point in the installation procedure, you will be given the option to switch from a direct connection to an indirect connection.

Copying Software to the MCS Server

At various times during the installation, you will need to copy software to the MCS server. You can perform this task using one of two methods:

- Using a Windows system and an SFTP tool such as WinSCP
- Connecting a USB drive directly to the server

While the SFTP method may be preferred for ease of access, the USB method may be required for some operations, such as backing up MCS files during a system upgrade.

See Copying Software to the MCS Server in Appendix A for details on each of these methods.


Obtaining the Software

Multiple software packages are required to properly install and configure MCS. These include:

- Red Hat Enterprise Linux (RHEL)
- RHEL Security Updates
- MCS Installation Packages
  - MediaCentral Platform Services
  - (if applicable) MediaCentral Platform Services Updates
  - (if applicable) MediaCentral UX Closed Captioning Service
  - (if applicable) MediaCentral Distribution Service (MCDS)
  - (if applicable) Interplay MAM Connector
  - (if applicable) Media Composer Cloud plugin
  - (if applicable) Media Distribute
- (if applicable) Storage Controller Driver ISO for the HP ProLiant Gen9 Server
- (if applicable) GlusterFS

Red Hat Enterprise Linux (RHEL)

Due to licensing restrictions, Avid is unable to redistribute the RHEL installation media. The RHEL installation image (.iso) file is available at: http://www.redhat.com/en

Log in to your Red Hat Network account and download the DVD image (.iso) file.

Note: At the time of this document’s publication, the RHEL 6.5 ISOs were available by choosing Red Hat Enterprise Linux Server from the Red Hat Product Downloads page. Specify Red Hat Enterprise Linux Server (product variant), 6.5 (version) and x86_64 (architecture). Download the Binary DVD (rhel-server-6.5-x86_64-dvd.iso).

Important: MCS requires RHEL 6.5. Do not install any OS updates or patches unless specifically directed to do so by Avid.
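Because MCS requires exactly RHEL 6.5, it can be useful to confirm the installed release later on. The following Python sketch reads `/etc/redhat-release`, the standard RHEL release file, and compares the version it reports with 6.5. The function names are hypothetical, and the code deliberately avoids f-strings and other newer syntax on the assumption that it may be run with the Python 2.6 interpreter included with RHEL 6. Running `cat /etc/redhat-release` and reading the result by eye is an equally valid check.

```python
from __future__ import print_function

import os
import re

REQUIRED_RHEL = (6, 5)  # MCS 2.3 requires RHEL 6.5


def parse_rhel_release(text):
    """Return (major, minor) from a release string such as
    'Red Hat Enterprise Linux Server release 6.5 (Santiago)', or None."""
    match = re.search(r"release\s+(\d+)\.(\d+)", text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def is_supported_rhel(text):
    """True only when the release string reports exactly RHEL 6.5."""
    return parse_rhel_release(text) == REQUIRED_RHEL


if __name__ == "__main__":
    path = "/etc/redhat-release"
    if os.path.exists(path):
        with open(path) as handle:
            content = handle.read().strip()
        status = "OK" if is_supported_rhel(content) else "NOT the required RHEL 6.5"
        print("%s -> %s" % (content, status))
    else:
        print("%s not found; this does not appear to be a RHEL system" % path)
```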

RHEL Security Updates

Red Hat has issued various security advisories for RHEL 6.5. Avid has tested and supports the installation of specific patches for RHEL. For instructions and software download links, see the “Security Updates” section in the Avid MediaCentral Platform Services ReadMe located on the MediaCentral Services page of the Avid Knowledge Base.


MCS Installation Packages

The MCS software packages are available from the Avid Download Center.

Note: If you have not already created an Avid.com user account, you will need to do so now. This Master Account enables you to sync your Avid Video Download and Avid Video Community accounts as well as gain access to the Avid Support Center.

After you have logged into the Download Center, download the following:

- Avid MediaCentral Platform Services

  This is the primary MCS installer package. All MCS installations require this software.

- (if applicable) Avid MediaCentral Platform Services Updates

  Avid often releases updates to MCS that provide fixes and new features. Consult the ReadMe for your version of software for patch availability and specific installation instructions.

- (if applicable) Avid MediaCentral UX Closed Captioning Service

  Introduced with MCS v2.3, this service adds functionality to MediaCentral UX that enables new closed captioning workflows.

- (if applicable) MediaCentral Distribution Service (MCDS)

  MCDS is a service that resides on a Windows system and coordinates jobs with Avid Production Services for send-to-playback (STP) operations. If your installation will include an STP workflow, download this software.

- (if applicable) Interplay MAM Connector

  The MAM Connector enables Interplay MAM workflows within MediaCentral UX. If your installation includes MAM integration, download this software.

- (if applicable) Media Composer Cloud plugin

  The Media Composer Cloud software is a plugin for the Media Composer editor that enables remote editing capabilities. If your installation includes a Cloud workflow, download this software.

- (if applicable) Media Distribute

  Media Distribute links production with distribution to web, mobile, and social media outlets by orchestrating workflow and automating file preparation and transcoding. Media Distribute is not publicly available on the Avid Download Center at this time. If your installation includes a Distribute workflow, contact your Avid representative for this software.

Note: If any of these packages are not available through the Download Center, contact your Avid representative to obtain the necessary software.


Storage Controller Driver for the HP ProLiant Gen9 Server

By default, the HP ProLiant Gen9 server storage controller does not support RHEL 6.5. Manually download the following RHEL driver update disk (.iso) to enable RHEL 6.5 support:

dd-hpsa-18216-x86_64.iso

The driver update disk is available directly from Red Hat, but driver details and a link to the correct page can be found on the “HP Servers Support & Certification Matrices” “technical exceptions” web page:

http://h17007.www1.hp.com/us/en/enterprise/servers/supportmatrix/exceptions/rhel_exceptions.aspx

Note: This procedure applies to the HP ProLiant Gen9 server only.

To download the driver disk:

1. Open a web browser and navigate to the “HP Servers Support & Certification Matrices” “technical exceptions” web page:

   http://h17007.www1.hp.com/us/en/enterprise/servers/supportmatrix/exceptions/rhel_exceptions.aspx

2. Locate the link to Red Hat by searching for the words “DL360 Gen9” using the browser’s “find on this page” feature.

3. Click the RHEL6.5 x86_64 link.

   You are redirected to the Red Hat web site.

4. Log in to your Red Hat Network account.

5. On the “Download Red Hat Enterprise Linux” page, locate the driver update disk (.iso):

   dd-hpsa-18216-x86_64.iso

6. Click the “Download Now” button and save the ISO file to your computer.

You will use this driver update disk ISO file later when you create the MCS Installation USB drive.


GlusterFS

GlusterFS is an open source software package that MCS uses to automate replication of the dedicated media cache volumes (e.g. RAID 5) across all MCS servers in the cluster. Doing so increases the speed at which clients can access the media on multiple cluster nodes. If you will be installing a clustered MCS system, obtain this software.

MediaCentral Platform Services v2.3.2 introduced support for GlusterFS v3.6.4. This is the minimum version required for MCS v2.3.2 and higher.

MediaCentral Platform Services v2.3.1 introduced support for GlusterFS v3.4.4. MediaCentral Platform Services v2.0 introduced support for GlusterFS v3.4.0. GlusterFS v3.4.0 is the minimum version required for MCS v2.0 – v2.3.1.

Note: If you are installing GlusterFS v3.4.4 with MCS v2.3.1, a prerequisite package called “xfsprogs” is required. This software is not automatically installed during the RHEL and MCS install process. See the MCS 2.3.1 ReadMe for details on how to obtain and install this software.
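The version rules above amount to a small lookup table. The following Python sketch is a hypothetical planning aid (the function names are not part of MCS or GlusterFS): it returns the minimum GlusterFS version for a given MCS version and flags the MCS v2.3.1 / GlusterFS v3.4.4 combination that needs the xfsprogs package. It only covers MCS v2.0 and later, because earlier releases are not described in this guide.

```python
def _version_tuple(version):
    """'2.3' -> (2, 3, 0); pads to three numeric components for comparison."""
    parts = tuple(int(p) for p in version.split("."))
    return (parts + (0, 0, 0))[:3]


def min_glusterfs_for_mcs(mcs_version):
    """Minimum GlusterFS version for an MCS release, per this guide."""
    v = _version_tuple(mcs_version)
    if v >= (2, 3, 2):
        return "3.6.4"  # MCS v2.3.2 and higher
    if v >= (2, 0, 0):
        return "3.4.0"  # MCS v2.0 through v2.3.1
    raise ValueError("MCS versions before v2.0 are not covered by this guide")


def needs_xfsprogs(mcs_version, glusterfs_version):
    """True for the MCS v2.3.1 + GlusterFS v3.4.4 combination noted above."""
    return (_version_tuple(mcs_version) == (2, 3, 1)
            and _version_tuple(glusterfs_version) == (3, 4, 4))
```

Comparing integer tuples instead of raw strings avoids the usual pitfall where "2.10" would sort before "2.3" as text.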

Navigate to the download directory at gluster.org containing the GlusterFS version supported by MCS: http://download.gluster.org/pub/gluster/glusterfs/3.6/3.6.4/RHEL/epel-6Server/x86_64

Download the following packages:

- glusterfs-3.6.4-1.el6.x86_64.rpm
- glusterfs-api-3.6.4-1.el6.x86_64.rpm (new in GlusterFS v3.6.4)
- glusterfs-cli-3.6.4-1.el6.x86_64.rpm
- glusterfs-fuse-3.6.4-1.el6.x86_64.rpm
- glusterfs-geo-replication-3.6.4-1.el6.x86_64.rpm
- glusterfs-libs-3.6.4-1.el6.x86_64.rpm
- glusterfs-server-3.6.4-1.el6.x86_64.rpm

Note: If the specified version of GlusterFS is no longer available, contact your Avid representative.
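Because all seven packages share the same directory and version string, their download URLs can be generated rather than typed by hand. The following Python sketch simply prints the full URL for each package listed above. It assumes the files are still hosted at that gluster.org location (if they are not, the note above applies), and the names `BASE_URL`, `PACKAGE_SUFFIXES` and `package_urls()` are illustrative only. You can then fetch each URL with a browser or any download tool and copy the files to the MCS server.

```python
from __future__ import print_function

# Directory and version string taken from the text above; the packages are
# assumed to still be hosted at this location.
BASE_URL = ("http://download.gluster.org/pub/gluster/glusterfs/"
            "3.6/3.6.4/RHEL/epel-6Server/x86_64")
VERSION = "3.6.4-1.el6.x86_64"

# "" yields the core "glusterfs" package; the others become "glusterfs-<name>".
PACKAGE_SUFFIXES = ["", "-api", "-cli", "-fuse", "-geo-replication",
                    "-libs", "-server"]


def package_urls(base_url=BASE_URL, version=VERSION):
    """Return the full download URL of every required GlusterFS RPM."""
    return ["%s/glusterfs%s-%s.rpm" % (base_url, suffix, version)
            for suffix in PACKAGE_SUFFIXES]


if __name__ == "__main__":
    for url in package_urls():
        print(url)
```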


Updating MediaCentral UX Licenses

Depending upon your deployment, one or more connected systems may need licenses installed or updated to allow for integration with MCS.

- If connecting to Interplay Production, MediaCentral UX users consume Interplay Client licenses.
- If connecting to iNEWS, MediaCentral UX users consume iNEWS Client licenses.
- If connecting to Interplay Production and iNEWS, MediaCentral UX users consume both Interplay and iNEWS Client licenses.

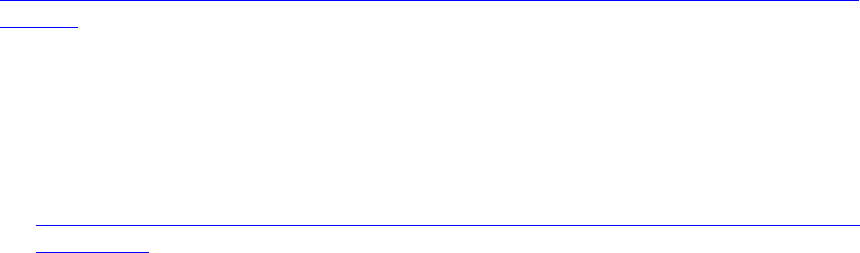

Interplay Production Licensing

If you will be connecting to an Interplay Production system, MediaCentral UX validates client licenses against the Interplay Engine. Additional client licenses might have been included with the purchase of MCS. These licenses must be added to the Interplay Engine.

Note: Interplay Production v3.3 introduced a software licensing option (no dongle). The following process applies to the original dongle licensing process. For software licensing procedures, see the Interplay | Production Software Installation and Configuration Guide.

1. Launch the Interplay Administrator on the Interplay Engine. This can be found at:

   Start>Avid>Avid Interplay Access Utilities>Avid Interplay Administrator.

2. Log in using Interplay Production’s Administrator credentials.

3. From the main menu, select Server>Licenses.

4. Click the Import License button.

5. Navigate to the location of the license file (often provided on a USB drive).

6. Select the license file and click Open.

   You should receive a message indicating that the license was successfully activated.

7. Log out and close the Interplay Administrator application.

For additional information on the Interplay Administrator, see the Interplay | Engine and Interplay | Archive Engine Administration Guide.


iNEWS Licensing

If you will be connecting to an iNEWS system, MediaCentral UX validates client licenses against the iNEWS server(s). Additional client licenses might have been included with the purchase of MCS. These licenses must be added to iNEWS.

See Appendix D: Configuring iNEWS for Integration with MediaCentral for more information.

Creating User Accounts

This section covers the creation of user accounts for use with:

- Interplay Production
- Avid ISIS
- iNEWS
- Interplay MAM
- Media Composer Cloud

Create any user accounts applicable to your installation.

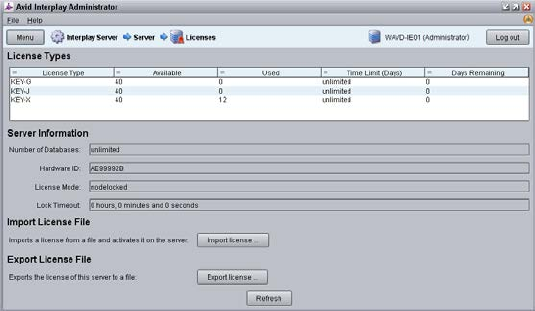

Interplay Production User

When integrating with Interplay Production, MediaCentral UX requires credentials to access the Interplay Production database. This user should have (at minimum) Read/Write privileges to the entire database. For consistency, this user and password should be the same as the user you create on the Avid ISIS system.

Decide upon the name and password for this user now. Suggested user name: MCSAdmin

1. Launch the Interplay Administrator on the Interplay Engine. This can be found at:

   Start>Avid>Avid Interplay Access Utilities>Avid Interplay Administrator.

2. Log in using Interplay Production’s Administrator credentials.

3. From the main menu, select User Management>User Management.

4. If multiple User Groups exist, highlight the User Group on the left under which you want to create your new user. Example: Administrators

5. Click the Create User button at the top of the window.

6. Enter a name and password.

7. Verify that the MediaCentral UX Admin user has at least Read/Write access to the entire database. Administrator-level access is not required, but is recommended.

8. Click Apply.

9. Close the Interplay Administrator.

For additional information on user creation in Interplay Production, see the Interplay | Engine and Interplay | Archive Engine Administration Guide.


Avid ISIS User

When integrating with Interplay Production, MediaCentral UX requires credentials to access the media on the ISIS system to enable playback and allow for the creation of voice-over media. For consistency, this user and password should be the same as the user you create on the Interplay Production system.

Decide upon the name and password for this user now. Suggested user name: MCSAdmin

1. Launch the ISIS Management Console page by opening a web browser and navigating to one of the following:

   - ISIS 5500: http://System Director hostname
   - ISIS 7500: https://System Director hostname:5015

   Note: In a failover configuration, use the virtual System Director hostname. Alternatively, you can use the IP address of the System Director (or virtual System Director).

2. Log in using the ISIS Administrator credentials.

3. From the main menu, select System>Users.

4. Click the New button to create a new user.

5. Give the user a name and password.

6. Under Workspace Access, assign privileges to all indexed workspaces. At minimum, the user needs Read access to all workspaces indexed by the Interplay Media Indexer and Read/Write access to the workspace where voice-overs will be recorded (the workspace defined in Interplay Administrator>Interplay Application Settings).

7. Click Apply to create the user account.

8. Close the ISIS Management Console.

Note: If you are connecting to multiple ISIS systems, ensure the same user/password is created on each ISIS.

For additional information on user creation in Avid ISIS, see the Avid | ISIS Administration Guide.
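The console addresses in step 1 of the ISIS procedure follow a fixed pattern per ISIS model. As a small illustration, the following Python sketch builds the URL from the model and the System Director hostname or IP address (or the virtual System Director in a failover configuration). The function name and the example host name and IP address are hypothetical.

```python
from __future__ import print_function


def isis_console_url(model, system_director):
    """Return the ISIS Management Console URL for the given ISIS model.

    system_director is the System Director hostname or IP address; in a
    failover configuration, pass the virtual System Director instead.
    """
    if model == "5500":
        return "http://%s" % system_director
    if model == "7500":
        return "https://%s:5015" % system_director
    raise ValueError("unsupported ISIS model: %r" % (model,))


if __name__ == "__main__":
    print(isis_console_url("5500", "isis-sd01"))      # hypothetical hostname
    print(isis_console_url("7500", "192.168.10.50"))  # hypothetical IP address
```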


Avid iNEWS User

When integrating with iNEWS, the MCS Administrator requires access to the iNEWS database.

This can be accomplished by creating a custom user account (superuser rights not required) or

by associating the Administrator with an existing iNEWS account.

Decide upon the name and password for this user now. Suggested user name: MCSAdmin

For instructions on creating a custom iNEWS user account for MediaCentral UX, see the Avid

iNEWS Setup and Configuration Guide.

Interplay MAM User

If you are integrating with MCS as a player for an Interplay MAM system, a specialized user must

be created within the MCS user database.

Decide upon the name of this custom user now. Suggested user name: MAMuser

For details on creating this user, see Configuring MCS for Interplay MAM on page 107.

When installing Interplay MAM, a special user account is created on the MAM system. This user

is identified as: “Service-Ics” with password “Avid123”. If you will be installing the MAM

Connector software for MediaCentral UX, this “Service-Ics” is used in the System Settings.

For details on configuring the MAM Connector System Settings, see Configuring the MAM Connector on page 159.

For more information on this user and setting, see the Avid MediaCentral | UX Administration Guide.

Media Composer Cloud User

When integrating with Media Composer Cloud, a custom, matching user account needs to be added to the Interplay Administrator (Application Database Settings) and to the MediaCentral UX System Settings (ICPS>Player tab).

When added to the MediaCentral UX System Settings, this account is automatically added as an MCS user and assigned a special “Playback-Only Client” user role. The user appears in the Users Layout under Users>Unassigned>Playback-Only.

Rules regarding the specialized user account:

• This must be a unique user created solely for this purpose. Do not use the same user you created to log into Interplay Production and Avid ISIS.

• Do not use an account that already exists as a Central User. It must be a new user.

• This user should not be created as an Interplay Production or an ISIS user.

• Remember that MCS runs on Linux. Both user names and passwords are case-sensitive.
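The rules above can be turned into a quick pre-check before you commit to a name. The following is a minimal sketch, assuming you have already gathered the existing Central, Interplay Production, and ISIS account names yourself; conflicting_accounts and credentials_match are hypothetical helpers, not part of any Avid tool. The uniqueness check is deliberately case-insensitive (a conservative assumption, since a name differing only by case is easy to confuse), while the credential comparison is case-sensitive because MCS runs on Linux.

```python
# Hypothetical pre-checks for the Media Composer Cloud playback user.
# The account lists passed in are placeholders; gather the real lists of
# Central, Interplay Production, and ISIS users from your own site.


def conflicting_accounts(candidate, existing_accounts):
    """Return existing accounts that clash with the proposed user name.

    Compared case-insensitively as a conservative assumption: a name that
    differs from an existing account only by case is treated as a clash.
    """
    folded = candidate.casefold()
    return sorted(a for a in existing_accounts if a.casefold() == folded)


def credentials_match(interplay_entry, mediacentral_entry):
    """Check that the (user, password) pair entered in the Interplay
    Administrator matches the pair entered under ICPS>Player.

    MCS runs on Linux, so both fields are compared case-sensitively.
    """
    return interplay_entry == mediacentral_entry
```

For example, conflicting_accounts("cloud", {"Administrator", "CLOUD", "MCSAdmin"}) flags "CLOUD", and credentials_match(("cloud", "Secret1"), ("Cloud", "Secret1")) is False because the user names differ in case.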

Decide upon the name of this custom user now. Suggested user name: cloud

For more information on this user, see the Media Composer | Cloud Installation and Configuration Guide.


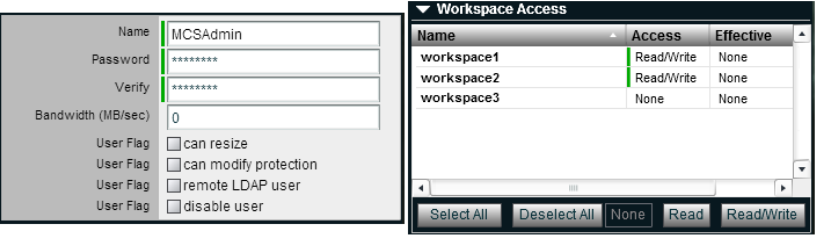

Adjusting Interplay Production Settings

When integrating with Interplay Production, MCS queries the Interplay Engine for various settings. This section is particularly important for sites requiring STP workflows or integrations with Media Composer Cloud.

1. Launch the Interplay Administrator on the Interplay Engine. This can be found at:

Start>Avid>Avid Interplay Access Utilities>Avid Interplay Administrator

2. Log in using Interplay Production’s Administrator credentials.

3. From the main menu, select Application Settings>Application Database Settings. Adjust the following:

a. Audio – General Settings: Ensure a Media Creation Workspace is selected.

b. Interplay Common Playback Service (Cloud workflow only):

i. Hostname: Enter the hostname of the MCS server. In the case of a cluster, enter the virtual MCS hostname.

ii. Username / Password: Specify a custom user and password that can be used to communicate with MediaCentral UX. This same user / password will be entered in the MediaCentral UX System Settings under the ICPS>Player tab. This must be a unique user created solely for this purpose.

c. ICS Settings – Messaging URL: This setting enables a messaging workflow between MCS and Media Composer. Enter the hostname of the MCS server in the form of a URL. In the case of a cluster, enter the virtual MCS hostname.

d. Interplay Sphere (Cloud workflow only): The proxy setting used with Media Composer Cloud is shown here for legacy purposes only. The proxy settings were moved to “Application Settings>Media Composer | Cloud Settings” in Interplay Production v3.1.

4. Click Apply.

5. If you are integrating with Media Composer Cloud, you should also configure the Application Settings>Media Composer | Cloud Settings. See the Media Composer | Cloud Installation and Configuration Guide for additional details.


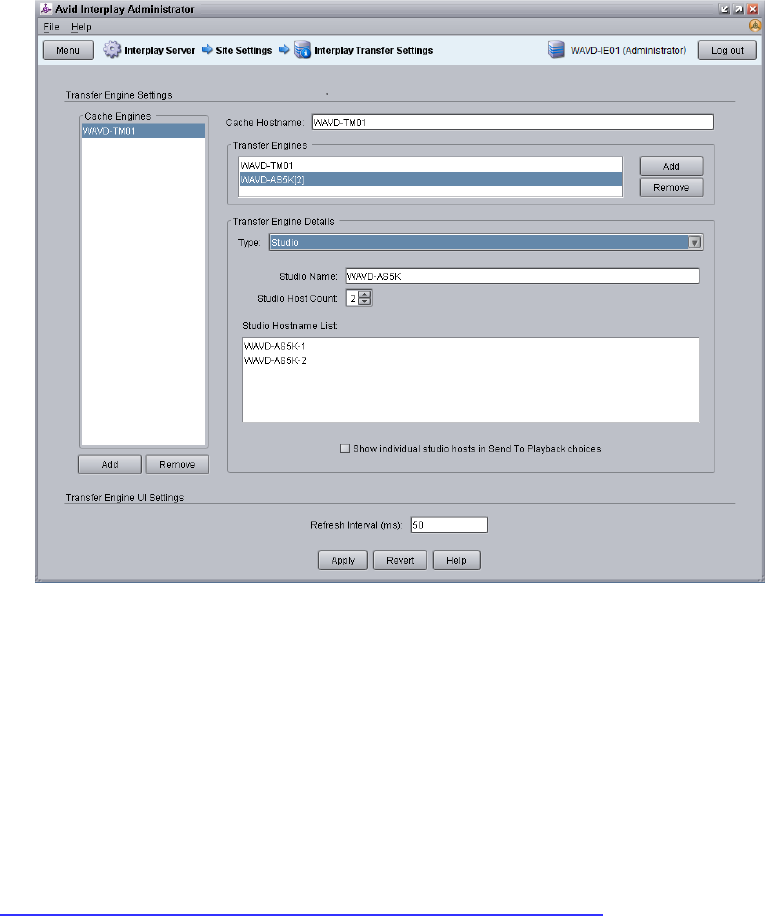

6. From the main menu, select Site Settings>Interplay Transfer Settings. MediaCentral will poll this setting for configured Transfer Engines and AirSpeed servers when creating STP profiles.

7. Click Apply.

Adding the MediaCentral UX Version to Avid iNEWS

Before connecting MediaCentral UX to iNEWS, the MediaCentral UX Client version must be added to the iNEWS SYSTEM.CLIENT.VERSIONS file.

Refer to the Avid MediaCentral Platform Services ReadMe for the correct version number for your installation.

See Appendix D: Configuring iNEWS for Integration with MediaCentral for instructions on adding the version number to iNEWS.


Installing the MediaCentral Distribution Service

The MediaCentral Distribution Service (MCDS) is a lightweight service required for Send to Playback (STP) operations. It analyzes the STP request and determines whether additional actions are required before sending the media to the playback device (AirSpeed, Transfer Engine, or other). An Interplay Transcode provider is required for STP operations that need audio mixdowns (stereo audio tracks) or video mixdowns (sequences with dissolves). An Interplay STP Encode provider is required when using Long GOP media.
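The provider requirements above can be restated as a small decision function. This is only a sketch of the documented rules, not the actual MCDS implementation; StpRequest and required_providers are hypothetical names, and the three boolean fields are an assumed simplification of what MCDS inspects in a real request.

```python
from dataclasses import dataclass


@dataclass
class StpRequest:
    """Hypothetical summary of a sequence being sent to playback."""
    audio_mixdown: bool   # e.g. stereo audio tracks must be mixed down
    video_mixdown: bool   # e.g. the sequence contains dissolves
    long_gop: bool        # the media is in a Long GOP format


def required_providers(req):
    """List the Interplay providers the guide says an STP request needs."""
    providers = []
    if req.audio_mixdown or req.video_mixdown:
        providers.append("Interplay Transcode")
    if req.long_gop:
        providers.append("Interplay STP Encode")
    return providers
```

For example, a Long GOP sequence containing dissolves needs both providers, while a request with no mixdown and no Long GOP media needs neither.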

MCDS is not used if you are sending an asset directly to Transcode or Delivery. MCDS is not used in iNEWS-only configurations.

The following guidelines apply to installing MCDS:

• Supported on Windows 7 64-bit and Windows Server 2012.

  o If you are running Windows Server 2012, you must install the Windows Desktop Experience feature. For more information and installation procedures, see the Interplay | Production Dell and HP Server Support guide at:
    http://avid.force.com/pkb/articles/en_US/readme/Avid-Interplay-Production-V3-3-x-Documentation

  o If you are running Windows 7 N, Windows Media Player must be manually installed. For more information on “N” versions of Windows, see:
    http://windows.microsoft.com/en-us/windows7/products/what-is-windows-7-n-edition

• Requires a minimum of 512MB of RAM and approximately 380MB of hard drive space on the host server.

• All enabled network adapters on both the system hosting MCDS and the Interplay Production Services Engine must be fully routable to each other.

• Can be installed on a server hosting other services or applications, such as the Interplay Production Services Engine or an Interplay Archive Provider.

• Must be installed on a system that has the ISIS Client software installed.

• Must not be installed on a system running Interplay Transcode or STP Encode. These systems share libraries with MCDS, and a co-install risks introducing incompatibilities.

• Must not be installed on an Interplay Production Engine or Interplay Archive Engine.

• As of Interplay Production 3.2, MCDS should not be installed on a Media Indexer server, because the two services risk a conflict on network port 8443.
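The placement guidelines above can be collected into a simple checklist function when planning where MCDS will live. This is a minimal sketch under stated assumptions: mcds_host_problems, SUPPORTED_OS, and CONFLICTS are hypothetical, the software labels are illustrative strings you would fill in from your own inventory, and the sketch does not check network routability or the Windows Media Player requirement on Windows 7 N.

```python
# Hypothetical labels for software already present on a candidate MCDS host.
SUPPORTED_OS = {"Windows 7 64-bit", "Windows Server 2012"}

# Software that must not share a host with MCDS, and the reason given above.
CONFLICTS = {
    "Interplay Transcode": "shares libraries with MCDS",
    "Interplay STP Encode": "shares libraries with MCDS",
    "Interplay Production Engine": "MCDS must not be installed here",
    "Interplay Archive Engine": "MCDS must not be installed here",
    "Media Indexer": "risk of a port 8443 conflict (Interplay Production 3.2+)",
}


def mcds_host_problems(os_name, installed, ram_mb, free_disk_mb):
    """Return guideline violations for a proposed MCDS host; empty means OK.

    `installed` is a set of software/feature labels present on the host.
    Network routability and Windows Media Player on Windows 7 N are not checked.
    """
    problems = []
    if os_name not in SUPPORTED_OS:
        problems.append(f"unsupported OS: {os_name}")
    if os_name == "Windows Server 2012" and "Desktop Experience" not in installed:
        problems.append("Windows Desktop Experience feature is not installed")
    if "ISIS Client" not in installed:
        problems.append("ISIS Client software is not installed")
    # Iterating a dict yields its keys, so this finds conflicting software.
    for item in sorted(installed.intersection(CONFLICTS)):
        problems.append(f"{item}: {CONFLICTS[item]}")
    if ram_mb < 512:
        problems.append("less than 512MB of RAM")
    if free_disk_mb < 380:
        problems.append("less than about 380MB of free disk space")
    return problems
```

Co-locating with the Interplay Production Services Engine is allowed by the guidelines, so a Windows Server 2012 host with the ISIS Client, Desktop Experience, and the Services Engine returns an empty list.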

In MediaCentral UX 1.x, the MCDS service used port 8080 for normal HTTP communication. In MediaCentral UX v2.0 / MCDS v3.1, the port changed to 8890. This change allows MCDS to be installed on the same server as the Production Services Engine (if desired). Port 8443 is used for secure HTTP (HTTPS) communication.
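Because the co-location rules above come down to which ports are already taken, it can help to check ports 8890 and 8443 on a candidate host before installing MCDS. The following is a minimal sketch using only Python's standard socket module; port_in_use and MCDS_PORTS are hypothetical names. It only detects services reachable on the address it probes (loopback by default), so a service bound solely to a specific network adapter may not be seen. Run it before installing MCDS, since a running MCDS will itself occupy these ports.

```python
import socket

# Ports described above for MCDS v3.1 and later.
MCDS_PORTS = {8890: "HTTP", 8443: "HTTPS"}


def port_in_use(port, host="127.0.0.1", timeout=0.5):
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.settimeout(timeout)
        # connect_ex returns 0 on success instead of raising an exception.
        return s.connect_ex((host, port)) == 0


if __name__ == "__main__":
    for port, proto in MCDS_PORTS.items():
        state = "IN USE" if port_in_use(port) else "free"
        print(f"{port} ({proto}): {state}")
```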

Versions of MCDS prior to v3.3 required the Interplay Service Framework (32- or 64-bit) software to be installed on the system hosting MCDS. As of v3.3, this is no longer a requirement.

For redundancy purposes, MCDS can be installed on two systems. Installing a second instance of MCDS does not provide load-balancing functionality. You will configure MediaCentral UX to find the installed instance(s) of MCDS later in this document.

Installing the MediaCentral Distribution Service:

1. Launch the MCDS installer on your desired system(s).


2. Proceed through the installation and accept the defaults.

You may be asked to install prerequisites such as Microsoft Visual C++.

3. Once installed, use Windows Computer Management to verify that the service is “Started” and the Startup Type is configured as “Automatic”.

Creating the MCS Installation USB Drive

The MCS installation is initiated from a bootable USB drive that contains the OS (Red Hat Enterprise Linux) and the MCS software. For this procedure you require the following items:

• A Windows-based computer

• The MCS installation package

• RHEL installation image (.iso) file

• A 16GB or larger USB drive

Note: Avid has been informed of problems using USB drives from some vendors. If the server does not boot from the USB drive, or fails to complete the boot, try using a drive from another vendor or a drive with a larger capacity (32GB).

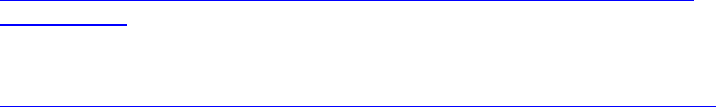

This procedure uses an application called “ISO to USB” to create a bootable USB drive containing the required RHEL operating system and MCS files. Do not simply drag and drop installation files onto the USB drive, as this will not create the correct file structure needed to successfully install MCS.

Note: Since “ISO to USB” creates a bootable drive, Avid recommends connecting only the USB drive you plan to use to the Windows system. If you have more than one USB drive inserted, make sure you choose the right one when performing this procedure.

Preparing the Installation Drive for the HP ProLiant Gen9

The procedure for creating the MCS installation drive on a ProLiant Gen9 server differs from that of other installations. Make sure you follow the customized instructions for your server type.

This section contains two procedures:

• Preparing the MCS Installation USB Drive

• Copying the Storage Controller Driver to the USB Drive

Preparing the MCS Installation USB Drive:

1. Log into a Windows system.

2. Connect the USB drive to the Windows system and give it a few moments to be recognized.

3. Use Windows Disk Management to format the USB drive as a FAT32 volume.


4. Extract the contents of the MediaCentral_Services_<version>_Linux.zip file to the desktop (or your preferred destination directory).

5. Open the newly created MediaCentral_Services_<version>_Linux folder.

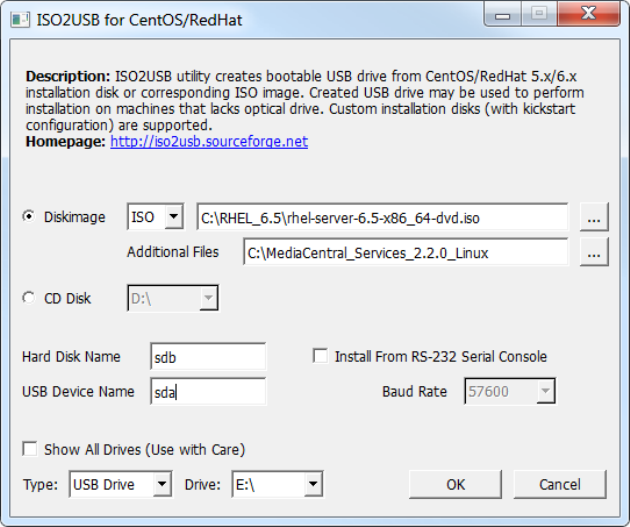

6. Double-click iso2usb.exe to launch the application.

7. Choose the Diskimage radio button, then navigate to the RHEL image (.iso) file (named rhel-server-6.5-x86_64-dvd.iso or similar).

Note: Make sure the RHEL image (.iso) file is accessible locally (preferable) or over the network from your computer.

8. In the “Additional Files” field, navigate to the MediaCentral_Services_<version>_Linux folder and click the “Select Folder” button.

9. Use the table below to verify that the Hard Disk Name and USB Device Name fields are correct for your deployment.

| RAID Configuration | RAID 1 (“Hard Disk Name”) | RAID 5 | USB (“USB Device Name”) |
|---|---|---|---|
| RAID 1 and RAID 5 | sda | sdb | sdc |
| RAID 1 only | sda | -- | sdb |

For example, for a system deploying both RAID 1 and RAID 5 volumes, enter the following values in the dialog:

• Hard Disk Name: sda

• USB Device Name: sdc

Important: For those familiar with earlier HP servers, the HP ProLiant Gen9 server identifies the RAID 1, RAID 5, and the USB drive with different device names.
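The Gen9 device-name table above can be expressed as a simple lookup for the two iso2usb.exe fields. The dictionary and function names are hypothetical; the values are copied directly from the table. The pattern is consistent with Linux assigning sdX names in detection order, so the additional RAID 5 volume (sdb) pushes the USB drive from sdb to sdc.

```python
# Device names for the HP ProLiant Gen9, copied from the table above.
# None means the volume does not exist in that configuration.
GEN9_DEVICE_NAMES = {
    "RAID 1 and RAID 5": {"hard_disk": "sda", "raid5": "sdb", "usb": "sdc"},
    "RAID 1 only": {"hard_disk": "sda", "raid5": None, "usb": "sdb"},
}


def iso2usb_fields(raid_config):
    """Return the values to enter in the iso2usb.exe dialog."""
    names = GEN9_DEVICE_NAMES[raid_config]
    return {"Hard Disk Name": names["hard_disk"], "USB Device Name": names["usb"]}
```

For example, iso2usb_fields("RAID 1 and RAID 5") returns {"Hard Disk Name": "sda", "USB Device Name": "sdc"}, matching the example values given above.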


10. Verify the USB drive letter, or use the pull-down menu to select a new drive letter.

11. Click OK in the main dialog.

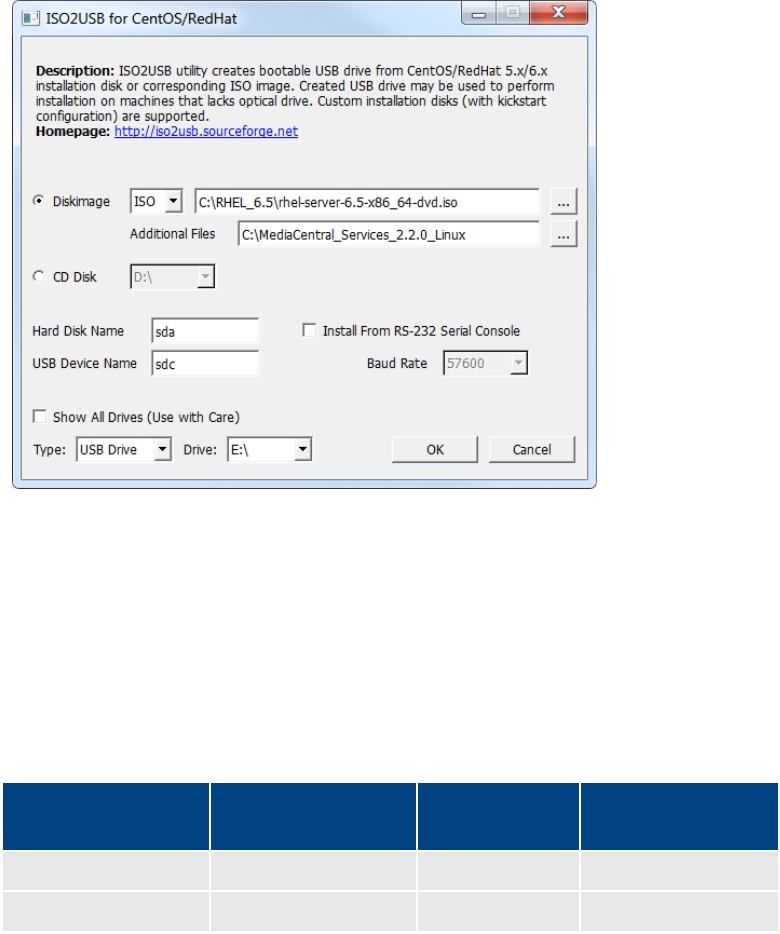

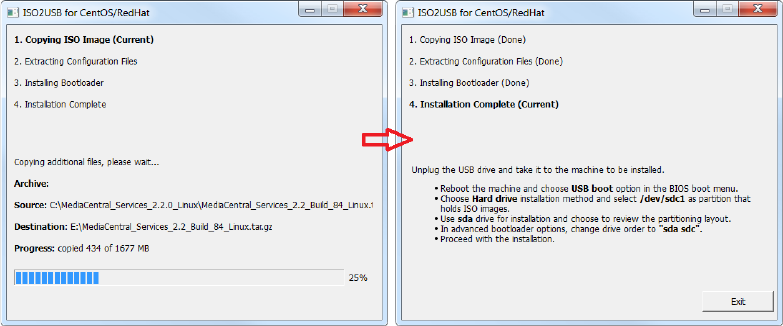

12. A process begins to copy the RHEL image (.iso) file and the MCS installation files to the USB drive.

This process takes 10-20 minutes. Once complete, the USB drive has everything it needs to complete the RHEL and MCS installation.

Note: Copying the RHEL image (.iso) file to the USB drive is a one-time process. To install MCS on more than one server, or to re-install MCS, you do not need to repeat these steps.

13. Click Exit to close the application.

Copying the Storage Controller Driver to the USB Drive:

1. With the Installation USB drive still plugged into the Windows laptop or desktop, copy the RAID controller driver ISO to the root directory on the drive:

dd-hpsa-18216-x86_64.iso

2. Rename the ISO:

• Old Name: dd-hpsa-18216-x86_64.iso

• New Name: z_dd-hpsa-18216-x86_64.iso

Renaming the driver ISO is essential, since the installation script attempts to mount the first ISO it finds as the RHEL ISO. If you do not rename it, the installation will fail.
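The guide does not state how the installation script decides which ISO it finds first. The sketch below assumes alphabetical (name) order, which is consistent with the z_ prefix moving the driver ISO behind the RHEL ISO. first_iso and prefix_driver_iso are hypothetical helpers for illustration only (the documented procedure is a rename in Windows), and the RHEL file name used in the example is an assumption; the name on your drive may differ.

```python
from pathlib import Path


def first_iso(directory):
    """Return the ISO that sorts first by name (assumed installer behavior)."""
    isos = sorted(p.name for p in Path(directory).glob("*.iso"))
    return isos[0] if isos else None


def prefix_driver_iso(usb_root, driver_name="dd-hpsa-18216-x86_64.iso"):
    """Rename the driver ISO with a z_ prefix so it sorts after the RHEL ISO."""
    src = Path(usb_root) / driver_name
    dst = src.with_name("z_" + src.name)
    src.rename(dst)
    return dst
```

With both rhel-server-6.5-x86_64-dvd.iso and dd-hpsa-18216-x86_64.iso in the drive root, the driver ISO sorts first ("d" before "r"); after the rename, z_dd-hpsa-18216-x86_64.iso sorts last and the RHEL ISO is picked.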


Preparing the Installation Drive for HP Gen8 and Dell Servers

Follow this procedure only if you are installing MCS software components on supported HP Gen8 or Dell servers.

Preparing the MCS Installation USB Drive:

1. Log into a Windows system.

2. Connect the USB drive to the Windows system and give it a few moments to be recognized.

3. Use Windows Disk Management to format the USB drive as a FAT32 volume.

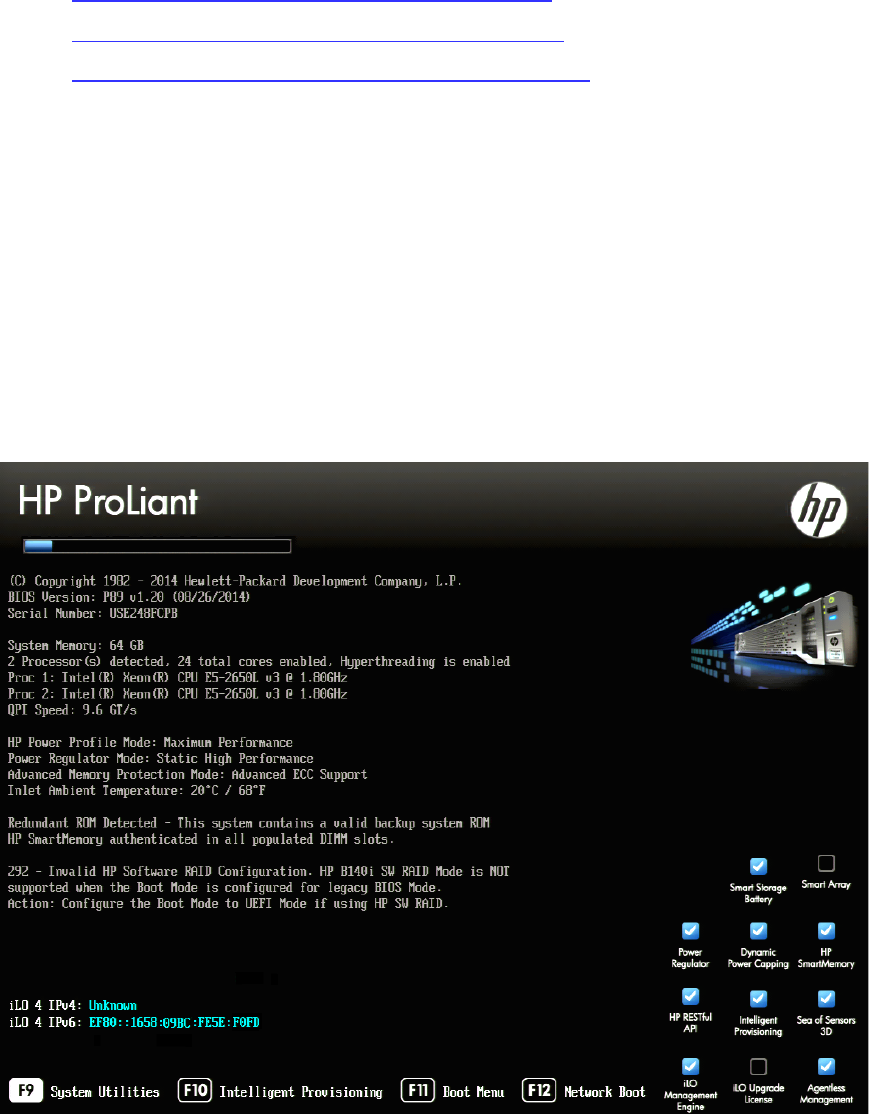

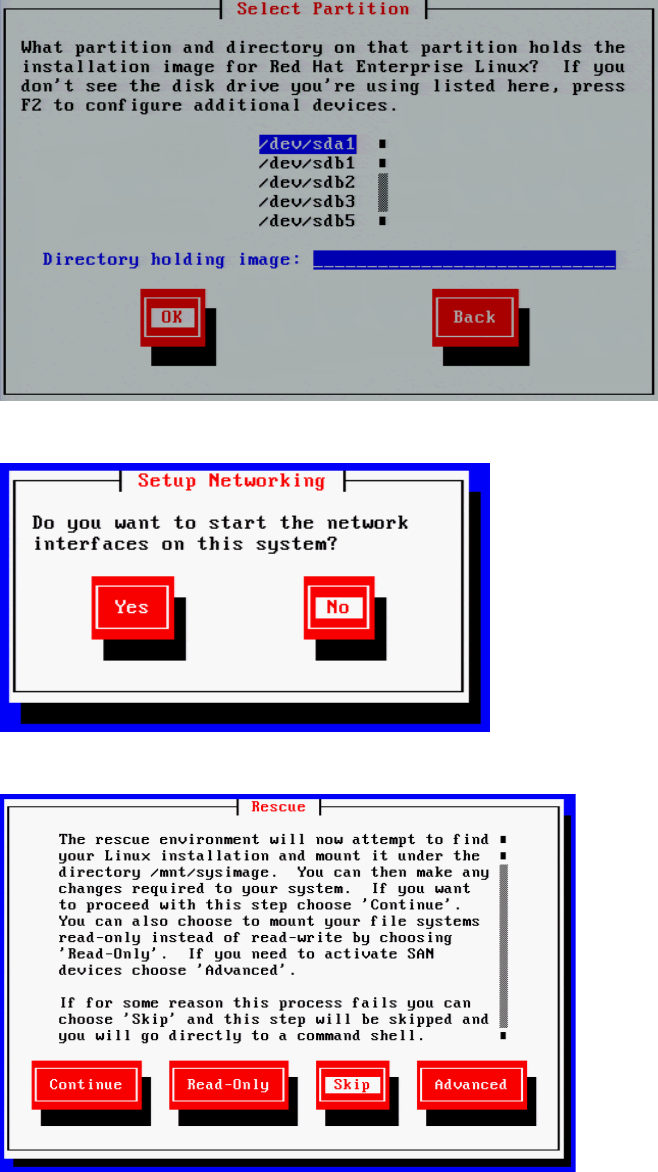

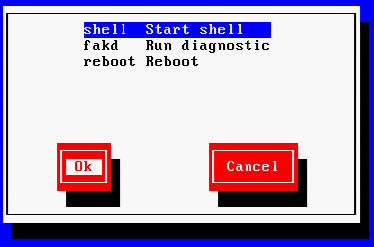

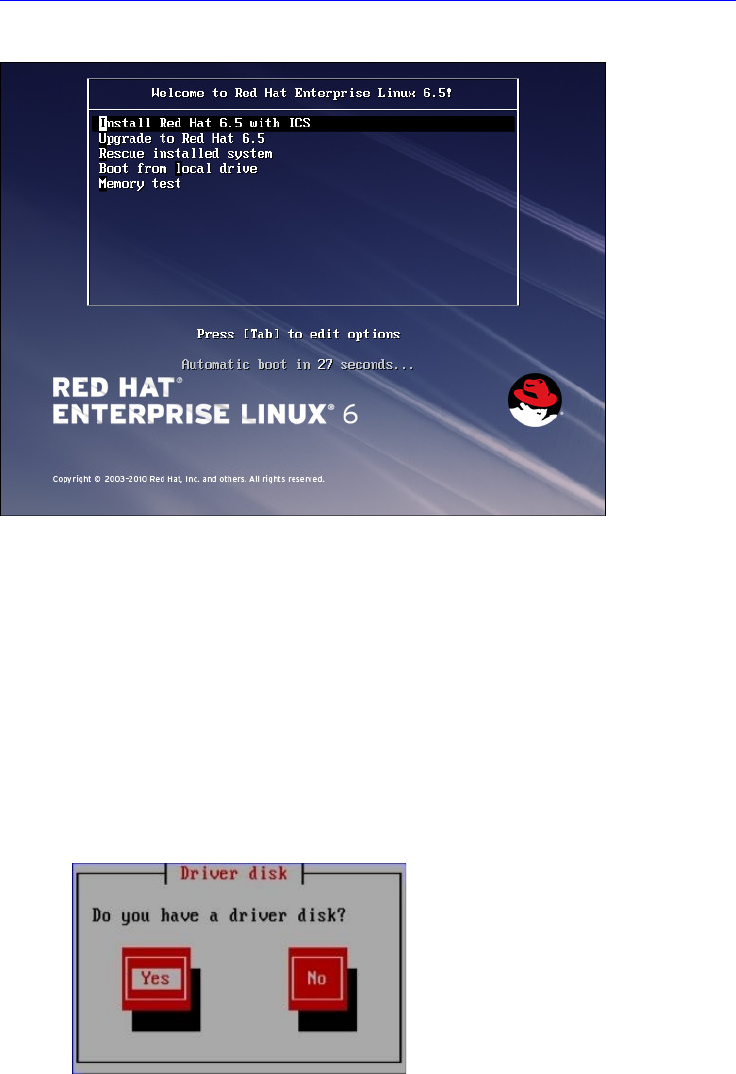

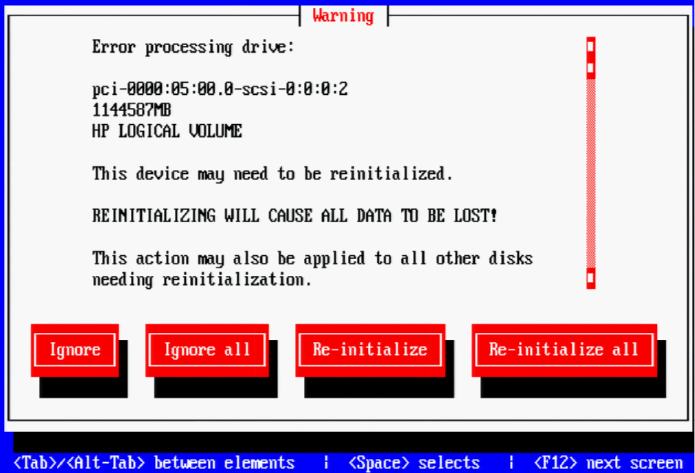

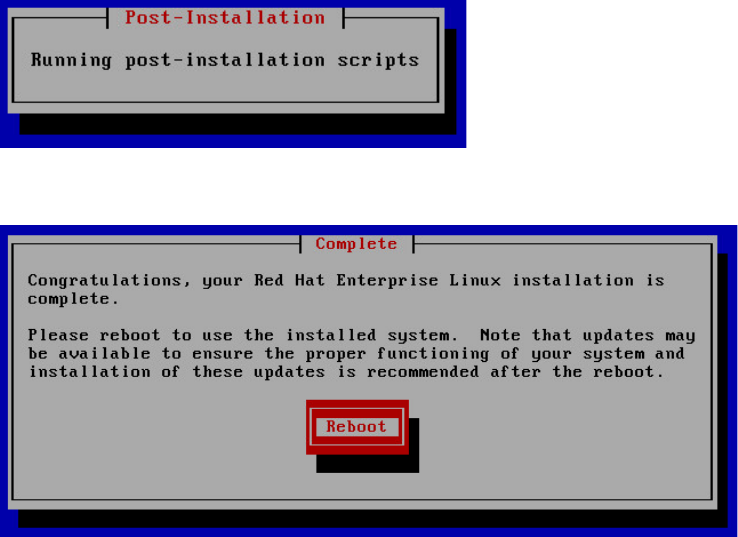

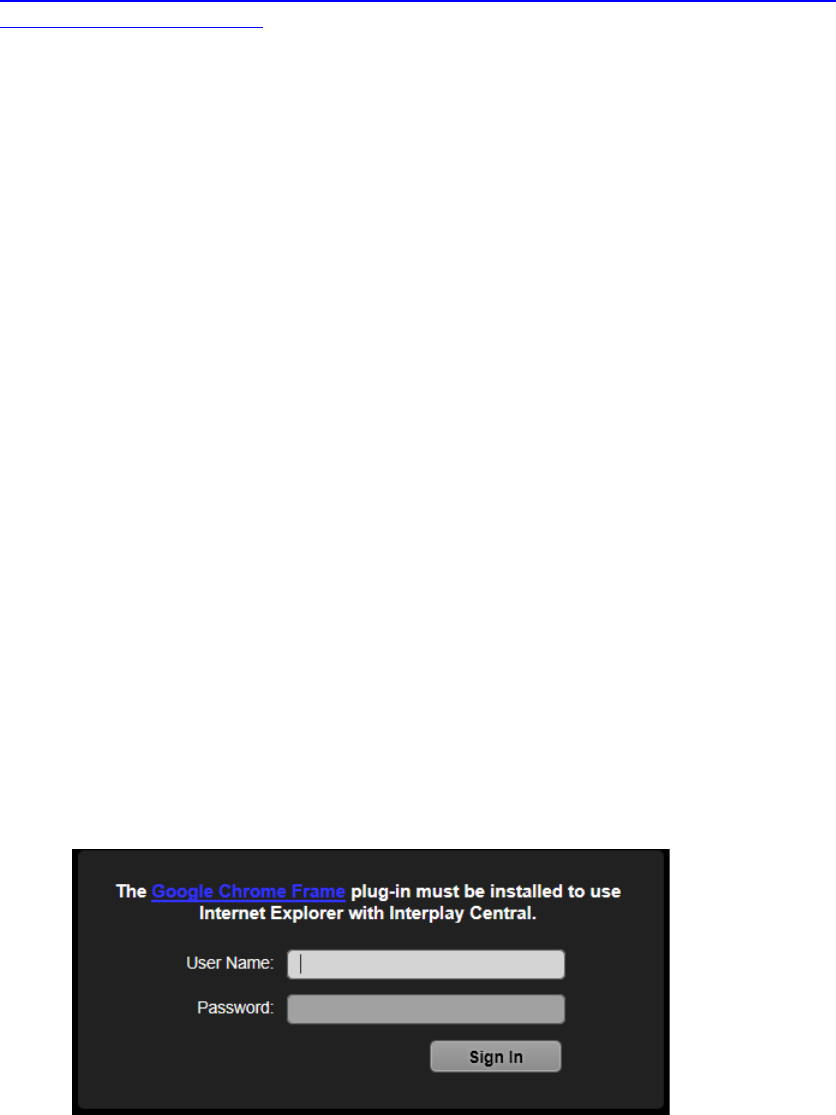

4. Extract the contents of the MediaCentral_Services_<version>_Linux.zip file to the