VMware vSphere Command-Line Interface Concepts and Examples: ESXi 6.5, vCenter Server 6.5



vSphere Command-Line Interface

Concepts and Examples

ESXi 6.5

vCenter Server 6.5

This document supports the version of each product listed and supports all subsequent versions until the document is replaced by a new edition. To check for more recent editions of this document, see http://www.vmware.com/support/pubs.

EN-002352-00

vSphere Command-Line Interface Concepts and Examples

2 VMware, Inc.

You can find the most up-to-date technical documentation on the VMware Web site at:

http://www.vmware.com/support/

The VMware Web site also provides the latest product updates.

If you have comments about this documentation, submit your feedback to:

docfeedback@vmware.com

Copyright © 2007–2017 VMware, Inc. All rights reserved. Copyright and trademark information.

VMware, Inc.

3401 Hillview Ave.

Palo Alto, CA 94304

www.vmware.com

Contents

About This Book 9

1 vSphere CLI Command Overviews 11

Introduction 11

Documentation 12

Command-Line Help 12

List of Available Host Management Commands 13

Targets and Protocols for vCLI Host Management Commands 15

Supported Platforms for vCLI Commands 15

Commands with an esxcfg Prefix 16

ESXCLI Commands Available on Different ESXi Hosts 17

Trust Relationship Requirement for ESXCLI Commands 17

Download and Install the vCenter Server Certificate 17

Using the --cacertsfile Option 18

Using the --thumbprint Option 18

Use the Credential Store 18

Using ESXCLI Output 19

Connection Options for vCLI Host Management Commands 19

Connection Options for DCLI Commands 19

vCLI Host Management Commands and Lockdown Mode 19

2 Managing Hosts 21

Stopping, Rebooting, and Examining Hosts 21

Stopping and Rebooting Hosts with ESXCLI 21

Stopping, Rebooting, and Examining Hosts with vicfg-hostops 22

Entering and Exiting Maintenance Mode 22

Enter and Exit Maintenance Mode with ESXCLI 22

Enter and Exit Maintenance Mode with vicfg-hostops 23

Backing Up Configuration Information with vicfg-cfgbackup 24

Backup Tasks 24

Backing Up Configuration Data 24

Restore Configuration Data 24

Using vicfg-cfgbackup from vMA 25

Managing VMkernel Modules 25

Manage Modules with esxcli system module 25

Manage Modules with vicfg-module 26

Using vicfg-authconfig for Active Directory Configuration 26

Prepare ESXi Hosts for Active Directory Integration 26

Set Up Active Directory to Work with ESXi 27

Updating Hosts 27


3 Managing Files 29

Introduction to Virtual Machine File Management 29

Managing the Virtual Machine File System with vmkfstools 30

Upgrading VMFS3 Volumes to VMFS5 31

Managing VMFS Volumes 31

Managing Duplicate VMFS Datastores 32

Mounting Datastores with Existing Signatures 32

Resignaturing VMFS Copies 33

Reclaiming Unused Storage Space 34

Using vifs to View and Manipulate Files on Remote ESXi Hosts 35

vifs Options 36

vifs Examples 37

4 Managing Storage 41

Introduction to Storage 42

How Virtual Machines Access Storage 42

Datastores 44

Storage Device Naming 44

Examining LUNs 45

Target and Device Representation 45

Examining LUNs with esxcli storage core 46

Examining LUNs with vicfg-scsidevs 47

Detach a Device and Remove a LUN 48

Reattach a Device 49

Working with Permanent Device Loss 49

Removing a PDL LUN 49

Reattach a PDL LUN 49

Managing Paths 50

Multipathing with Local Storage and FC SANs 50

Listing Path Information 51

Changing the State of a Path 53

Managing Path Policies 54

Multipathing Considerations 54

Changing Path Policies 55

Set Policy Details for Devices that Use Round Robin 56

Scheduling Queues for Virtual Machine I/O 57

Managing NFS/NAS Datastores 57

Capabilities Supported by NFS/NAS 57

Adding and Deleting NAS File Systems 58

Monitor and Manage FibreChannel SAN Storage 59

Monitoring and Managing Virtual SAN Storage 60

Retrieve Virtual SAN Information 60

Manage a Virtual SAN Cluster 60

Add and Remove Virtual SAN Storage 61

Monitoring vSphere Flash Read Cache 62

Monitoring and Managing Virtual Volumes 62

Migrating Virtual Machines with svmotion 63

Storage vMotion Uses 63

Storage vMotion Requirements and Limitations 63


Running svmotion in Interactive Mode 64

Running svmotion in Noninteractive Mode 64

Configuring FCoE Adapters 65

Scanning Storage Adapters 66

Retrieving SMART Information 66

5 Managing iSCSI Storage 69

iSCSI Storage Overview 69

Discovery Sessions 70

Discovery Target Names 71

Protecting an iSCSI SAN 71

Protecting Transmitted Data 71

Securing iSCSI Ports 72

Setting iSCSI CHAP 72

Command Syntax for esxcli iscsi and vicfg-iscsi 73

esxcli iscsi Command Syntax 74

Key to esxcli iscsi Short Options 74

vicfg-iscsi Command Syntax 75

iSCSI Storage Setup with ESXCLI 78

Set Up Software iSCSI with ESXCLI 78

Set Up Dependent Hardware iSCSI with ESXCLI 80

Set Up Independent Hardware iSCSI with ESXCLI 82

iSCSI Storage Setup with vicfg-iscsi 84

Set Up Software iSCSI with vicfg-iscsi 85

Set Up Dependent Hardware iSCSI with vicfg-iscsi 86

Set Up Independent Hardware iSCSI with vicfg-iscsi 87

Listing and Setting iSCSI Options 89

Listing iSCSI Options with ESXCLI 89

Setting MTU with ESXCLI 89

Listing and Setting iSCSI Options with vicfg-iscsi 89

Listing and Setting iSCSI Parameters 90

Listing and Setting iSCSI Parameters with ESXCLI 90

Returning Parameters to Default Inheritance with ESXCLI 92

Listing and Setting iSCSI Parameters with vicfg-iscsi 92

Returning Parameters to Default Inheritance with vicfg-iscsi 94

Enabling iSCSI Authentication 94

Enable iSCSI Authentication with ESXCLI 94

Enable Mutual iSCSI Authentication with ESXCLI 95

Enable iSCSI Authentication with vicfg-iscsi 96

Set Up Ports for iSCSI Multipathing 97

Managing iSCSI Sessions 98

Introduction to iSCSI Session Management 98

Listing iSCSI Sessions 98

Logging in to iSCSI Sessions 99

Removing iSCSI Sessions 99


6 Managing Third-Party Storage Arrays 101

Managing NMP with esxcli storage nmp 101

Device Management with esxcli storage nmp device 102

Listing Paths with esxcli storage nmp path 102

Managing Path Selection Policy Plug-Ins with esxcli storage nmp psp 103

Fixed Path Selection Policy Operations 104

Customizing Round Robin Setup 105

Managing SATPs 106

Path Claiming with esxcli storage core claiming 108

Using the Reclaim Troubleshooting Command 109

Unclaiming Paths or Sets of Paths 109

Managing Claim Rules 110

Change the Current Claim Rules in the VMkernel 110

Adding Claim Rules 111

Removing Claim Rules 112

Listing Claim Rules 113

Loading Claim Rules 113

Moving Claim Rules 113

Load and Apply Path Claim Rules 114

Running Path Claim Rules 114

7 Managing Users 117

Users in the vSphere Environment 117

vicfg-user Command Syntax 118

Managing Users with vicfg-user 118

Assigning Permissions with ESXCLI 120

8 Managing Virtual Machines 123

vmware-cmd Overview 123

Connection Options for vmware-cmd 124

General Options for vmware-cmd 124

Format for Specifying Virtual Machines 124

List and Register Virtual Machines 125

Retrieving Virtual Machine Attributes 125

Managing Virtual Machine Snapshots with vmware-cmd 127

Take a Virtual Machine Snapshot 127

Reverting and Removing Snapshots 128

Powering Virtual Machines On and Off 128

Connecting and Disconnecting Virtual Devices 129

Working with the AnswerVM API 130

Forcibly Stop a Virtual Machine with ESXCLI 130

9 Managing vSphere Networking 131

Introduction to vSphere Networking 131

Networking Using vSphere Standard Switches 132

Networking Using vSphere Distributed Switches 133

Retrieving Basic Networking Information 134

Troubleshoot a Networking Setup 134


Setting Up vSphere Networking with vSphere Standard Switches 136

Setting Up Virtual Switches and Associating a Switch with a Network Interface 136

Retrieving Information About Virtual Switches 137

Adding and Deleting Virtual Switches 138

Checking, Adding, and Removing Port Groups 139

Managing Uplinks and Port Groups 140

Setting the Port Group VLAN ID 141

Managing Uplink Adapters 142

Adding and Modifying VMkernel Network Interfaces 145

Setting Up vSphere Networking with vSphere Distributed Switch 148

Managing Standard Networking Services in the vSphere Environment 149

Setting the DNS Configuration 149

Setting the DNS Configuration with ESXCLI 149

Setting the DNS Configuration with vicfg-dns 151

Manage an NTP Server 152

Manage the IP Gateway 152

Setting Up IPsec 153

Using IPsec with ESXi 154

Managing Security Associations 155

Managing Security Policies 156

Manage the ESXi Firewall 157

Monitor VXLAN 158

10 Monitoring ESXi Hosts 161

Using resxtop for Performance Monitoring 161

Managing Diagnostic Partitions 161

Managing Core Dumps 162

Manage Local Core Dumps with ESXCLI 162

Manage Core Dumps with ESXi Dump Collector 163

Manage Core Dumps with vicfg-dumppart 164

Configuring ESXi Syslog Services 164

Managing ESXi SNMP Agents 166

Configuring SNMP Communities 166

Configuring the SNMP Agent to Send Traps 166

Configuring the SNMP Agent for Polling 168

Retrieving Hardware Information 169

Index 171


About This Book

vSphere Command-Line Interface Concepts and Examples explains how to use the commands in the VMware vSphere® Command-Line Interface (vCLI) and includes command overviews and examples.

Intended Audience

This book is for experienced Windows or Linux system administrators who are familiar with vSphere administration tasks and data center operations and know how to use commands in scripts.

VMware Technical Publications Glossary

VMware Technical Publications provides a glossary of terms that might be unfamiliar to you. For definitions of terms as they are used in VMware technical documentation, go to http://www.vmware.com/support/pubs.

Related Documentation

The documentation for vCLI is available in the vSphere Documentation Center and on the vCLI documentation page. Go to http://www.vmware.com/support/developer/vcli.

- Getting Started with vSphere Command-Line Interfaces includes information about available CLIs, enabling the ESXi Shell, and installing and running vCLI and DCLI commands.

- vSphere Command-Line Interface Reference is a reference to both ESXCLI commands and vicfg- commands. The vicfg- command help is generated from the POD available for each command; you can run pod2html on any vicfg- command to generate an individual HTML file. The ESXCLI reference information is generated from the ESXCLI help.

- DCLI Reference is a reference to DCLI commands for managing vCenter services.

The documentation for PowerCLI is available in the vSphere Documentation Center and on the PowerCLI documentation page.

The vSphere SDK for Perl documentation explains how you can use the vSphere SDK for Perl and related utility applications to manage your vSphere environment.

The vSphere Management Assistant Guide explains how to install and use the vSphere Management Assistant (vMA). vMA is a virtual machine that includes vCLI and other prepackaged software.

Background information for the tasks discussed in this book is available in the vSphere documentation set. The vSphere documentation consists of the combined VMware vCenter Server and ESXi documentation.


1 vSphere CLI Command Overviews

This chapter introduces the command set, presents supported commands for different versions of vSphere, lists connection options, and discusses vCLI and lockdown mode.

This chapter includes the following topics:

- “Introduction,” on page 11

- “List of Available Host Management Commands,” on page 13

- “Targets and Protocols for vCLI Host Management Commands,” on page 15

- “Supported Platforms for vCLI Commands,” on page 15

- “Commands with an esxcfg Prefix,” on page 16

- “ESXCLI Commands Available on Different ESXi Hosts,” on page 17

- “Trust Relationship Requirement for ESXCLI Commands,” on page 17

- “Using ESXCLI Output,” on page 19

- “Connection Options for vCLI Host Management Commands,” on page 19

- “Connection Options for DCLI Commands,” on page 19

- “vCLI Host Management Commands and Lockdown Mode,” on page 19

Introduction

The commands in the vSphere CLI package allow you to perform vSphere configuration tasks using commands from the vCLI package installed on a supported platform, or using commands from vMA. The package consists of several command sets.

The following table lists the components of the vSphere CLI command set.

| vCLI Commands | Description |
|---|---|
| ESXCLI commands | Manage many aspects of an ESXi host. You can run ESXCLI commands remotely or in the ESXi Shell. You can also run ESXCLI commands from the PowerCLI prompt by using the Get-EsxCli cmdlet. |
| vicfg- commands | Set of commands for many aspects of host management. Eventually, these commands will be replaced by ESXCLI commands. A set of esxcfg- commands that precisely mirrors the vicfg- commands is also included in the vCLI package. |
| Other commands (vmware-cmd, vifs, vmkfstools) | Commands implemented in Perl that do not have a vicfg- prefix. These commands are scheduled to be deprecated or replaced by ESXCLI commands. |
| DCLI commands | Manage VMware SDDC services. DCLI is a CLI client to the vSphere Automation SDK interface for managing VMware SDDC services. A DCLI command talks to a vSphere Automation API endpoint to locate relevant information, and then executes the command and displays the result to the user. |

You can install the vSphere CLI command set on a supported Linux or Windows system. See Getting Started with vSphere Command-Line Interfaces. You can also deploy the vSphere Management Assistant (vMA) to an ESXi system of your choice.

After installation, run vCLI commands from the Linux or Windows system or from vMA.

- Manage ESXi hosts with other vCLI commands by specifying connection options such as the target host, user, and password or a configuration file. See “Connection Options for vCLI Host Management Commands,” on page 19.

- Manage vCenter services with DCLI commands by specifying a target vCenter Server system and authentication options. See Getting Started with vSphere Command-Line Interfaces for a list of connection options.
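The configuration-file alternative mentioned in the first item above can be prepared with a small helper. This is an illustrative sketch, not part of vCLI: the function name, host name, and file path are hypothetical, and the one-setting-per-line `VI_NAME = value` format is an assumption based on vSphere SDK for Perl configuration files, so confirm the exact format in Getting Started with vSphere Command-Line Interfaces for your release.

```shell
#!/usr/bin/env bash
# Sketch: write a vCLI connection configuration file so that commands can use
# --config instead of repeating --server, --username, and --password.
# The "VI_NAME = value" line format is an assumption (vSphere SDK for Perl style).
write_vcli_config() {
  local file=$1 server=$2 user=$3 password=$4
  # Create the file with owner-only permissions before the password is written.
  ( umask 077; : > "$file" ) || return 1
  chmod 600 "$file" || return 1
  {
    printf 'VI_SERVER = %s\n' "$server"
    printf 'VI_USERNAME = %s\n' "$user"
    printf 'VI_PASSWORD = %s\n' "$password"
  } > "$file"
}

# Example (hypothetical host and file names):
#   write_vcli_config "$HOME/vcli-esxi01.cfg" esxi01.example.com root 'my_password'
#   vicfg-nics --config "$HOME/vcli-esxi01.cfg" -l
```

Restricting the file to its owner matters because it stores the password in clear text.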

Documentation

You can find information about different aspects of vCLI in separate publications.

Getting Started with vSphere Command-Line Interfaces includes information about available CLIs, enabling the ESXi Shell, and installing and running vCLI commands.

Reference information for vCLI and DCLI commands is available on the vCLI documentation page http://www.vmware.com/support/developer/vcli/ and in the vSphere Documentation Center for the product version that you are using.

- vSphere Command-Line Interface Reference is a reference to vicfg- and related vCLI commands and includes reference information for ESXCLI commands. All reference information is generated from the help.

- A reference to esxtop and resxtop is included in the Resource Management documentation.

- The DCLI Reference is included separately from the vSphere Command-Line Interface Reference. All reference information is generated from the help.

Command-Line Help

Available command-line help differs for the different command sets.

| Command Set | Available Command-Line Help |
|---|---|
| vicfg- commands | Run `<vicfg-cmd> --help` for an overview of each option. Run pod2html with a vicfg- command as input and redirect the output to a file for more detailed help information, for example `pod2html vicfg-authconfig.pl > vicfg-authconfig.html`. This output corresponds to the information available in the vSphere Command-Line Interface Reference. |
| ESXCLI commands | Run --help at any level of the hierarchy for information about both commands and namespaces available from that level. |
| DCLI commands | Run --help for any command or namespace to display the input options, whether the option is required, and the input option type. For namespaces, --help displays all available child namespaces and commands. Run dcli --help to display usage information for DCLI. |
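To produce HTML help for every vicfg- command instead of one at a time, the pod2html call from the table can be wrapped in a loop. This sketch assumes the scripts use the .pl extension, as in the vicfg-authconfig.pl example, and sit in a single directory; the function name and the POD2HTML override variable are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: run pod2html on each vicfg-*.pl script in a directory and write one
# HTML help file next to each script (vicfg-nics.pl -> vicfg-nics.html).
# POD2HTML is a hypothetical override for the converter; it defaults to pod2html.
gen_vicfg_help() {
  local dir=${1:-.} script
  for script in "$dir"/vicfg-*.pl; do
    [ -e "$script" ] || continue   # the glob matched nothing
    "${POD2HTML:-pod2html}" "$script" > "${script%.pl}.html" || return 1
  done
}

# Example (hypothetical path): gen_vicfg_help /path/to/vcli/scripts
```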


List of Available Host Management Commands

vCLI host management commands from earlier versions have been replaced with commands that have equivalent functionality.

The following table lists vCLI host management commands in alphabetical order and the corresponding ESXCLI command, if available. For ESXCLI, new commands and namespaces are added with each release. See the Release Notes for the corresponding release for information.

The DCLI command set, which is being added in vSphere 6.0 and later, differs in functionality from these commands and is not included in the table.

| vCLI 4.1 Command | vCLI 5.1 and Later Command | Comment |
|---|---|---|
| esxcli | esxcli (new syntax) | All vCLI 4.1 ESXCLI commands have been renamed. Significant additions have been made to ESXCLI. Many tasks previously performed with a vicfg- command are now performed with ESXCLI. |
| resxtop | resxtop (No ESXCLI equivalent). Supported only on Linux. | Monitors in real time how ESXi hosts use resources. Runs in interactive or batch mode. See “Using resxtop for Performance Monitoring,” on page 161. See the vSphere Resource Management documentation for a detailed reference. |
| svmotion | svmotion (No ESXCLI equivalent). Must run against a vCenter Server system. | Moves a virtual machine’s configuration file, and, optionally, its disks, while the virtual machine is running. See “Migrating Virtual Machines with svmotion,” on page 63. |
| vicfg-advcfg | esxcli system settings advanced | Performs advanced configuration. The advanced settings are a set of VMkernel options. These options are typically in place for specific workarounds or debugging. Use this command as instructed by VMware. |
| vicfg-authconfig | vicfg-authconfig (No ESXCLI equivalent) | Remotely configures Active Directory settings for an ESXi host. See “Using vicfg-authconfig for Active Directory Configuration,” on page 26. |
| vicfg-cfgbackup | vicfg-cfgbackup (No ESXCLI equivalent). Cannot run against a vCenter Server system. | Backs up the configuration data of an ESXi system and restores previously saved configuration data. See “Backing Up Configuration Information with vicfg-cfgbackup,” on page 24. |
| vicfg-dns | esxcli network ip dns | Specifies an ESXi host’s DNS (Domain Name System) configuration. See “Setting the DNS Configuration,” on page 149. |
| vicfg-dumppart | esxcli system coredump | Sets both the partition (esxcli system coredump partition) and the network (esxcli system coredump network) to use for core dumps. Use this command to set up ESXi Dump Collector. See “Managing Diagnostic Partitions,” on page 161. |
| vicfg-hostops | esxcli system maintenancemode, esxcli system shutdown | Manages hosts. See “Stopping, Rebooting, and Examining Hosts,” on page 21, and “Entering and Exiting Maintenance Mode,” on page 22. |
| vicfg-ipsec | esxcli network ip ipsec | Sets up IPsec (Internet Protocol Security), which secures IP communications coming from and arriving at ESXi hosts. ESXi hosts support IPsec using IPv6. See “Setting Up IPsec,” on page 153. |
| vicfg-iscsi | esxcli iscsi | Manages hardware and software iSCSI storage. See Chapter 5, “Managing iSCSI Storage,” on page 69. |
| vicfg-module | esxcli system module | Enables VMkernel options. Use this command with the options listed in this document, or as instructed by VMware. See “Managing VMkernel Modules,” on page 25. |
| vicfg-mpath, vicfg-mpath35 | esxcli storage core path | Configures storage arrays. See “Managing Paths,” on page 50. |
| vicfg-nas | esxcli storage nfs | Manages NAS/NFS file systems. See “Managing NFS/NAS Datastores,” on page 57. |
| vicfg-nics | esxcli network nic | Manages the ESXi host's uplink adapters. See “Managing Uplink Adapters,” on page 142. |
| vicfg-ntp | vicfg-ntp (No ESXCLI equivalent) | Defines the NTP (Network Time Protocol) server. See “Manage an NTP Server,” on page 152. |
| vicfg-rescan | esxcli storage core adapter rescan | Rescans the storage configuration. See “Scanning Storage Adapters,” on page 66. |
| vicfg-route | esxcli network ip route | Manages the ESXi host's route entry. See “Manage the IP Gateway,” on page 152. |
| vicfg-scsidevs | esxcli storage core device | Finds and examines available LUNs. See “Examining LUNs,” on page 45. |
| vicfg-snmp | esxcli system snmp | Manages the SNMP agent. See “Managing ESXi SNMP Agents,” on page 166. Using SNMP in a vSphere environment is discussed in detail in the vSphere Monitoring and Performance documentation. New options added in vCLI 5.0. Expanded SNMP support added in vCLI 5.1. |
| vicfg-syslog | esxcli system syslog | Specifies log settings for ESXi hosts, including local storage policies and server and port information for network logging. See “Configuring ESXi Syslog Services,” on page 164. The vCenter Server and Host Management documentation explains how to set up system logs using the vSphere Web Client. |
| vicfg-user | vicfg-user (No ESXCLI equivalent) | Creates, modifies, deletes, and lists local direct access users and groups of users. See Chapter 7, “Managing Users,” on page 117. The vSphere Security documentation discusses security implications of user management and custom roles. |
| vicfg-vmknic | esxcli network ip interface | Adds, deletes, and modifies VMkernel network interfaces. See “Adding and Modifying VMkernel Network Interfaces,” on page 145. |
| vicfg-volume | esxcli storage filesystem | Supports resignaturing the copy of a VMFS volume, and mounting and unmounting the copy. See “Managing Duplicate VMFS Datastores,” on page 32. |
| vicfg-vswitch | esxcli network vswitch | Adds or removes virtual switches or modifies virtual switch settings. See “Setting Up Virtual Switches and Associating a Switch with a Network Interface,” on page 136. |
| vifs | vifs (No ESXCLI equivalent) | Performs file system operations such as retrieving and uploading files on the ESXi system. See “Using vifs to View and Manipulate Files on Remote ESXi Hosts,” on page 35. |
| vihostupdate | esxcli software vib | Updates legacy ESXi hosts to a different version of the same major release. You cannot run vihostupdate against ESXi 5.0 and later hosts. See “Updating Hosts,” on page 27. |
| vmkfstools | vmkfstools (No ESXCLI equivalent) | Creates and manipulates virtual disks, file systems, logical volumes, and physical storage devices on an ESXi host. See “Managing the Virtual Machine File System with vmkfstools,” on page 30. |
| vmware-cmd | vmware-cmd (No ESXCLI equivalent) | Performs virtual machine operations remotely. This includes, for example, creating a snapshot, powering the virtual machine on or off, and getting information about the virtual machine. See Chapter 8, “Managing Virtual Machines,” on page 123. |
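When you port scripts, the one-to-one rows of the table above can be encoded as a lookup. This hypothetical helper is not a vCLI utility and covers only rows with a single ESXCLI replacement; it returns a nonzero status for commands without an ESXCLI equivalent and for vicfg-hostops, which maps to two namespaces. The options of each vicfg- command differ from those of its ESXCLI counterpart, so check the vSphere Command-Line Interface Reference before rewriting a call.

```shell
#!/usr/bin/env bash
# Sketch: print the ESXCLI namespace that replaces a vicfg- command, using the
# single-namespace rows of the table. Returns 1 when there is no such mapping.
esxcli_equivalent() {
  case $1 in
    vicfg-advcfg)   echo "esxcli system settings advanced" ;;
    vicfg-dns)      echo "esxcli network ip dns" ;;
    vicfg-dumppart) echo "esxcli system coredump" ;;
    vicfg-ipsec)    echo "esxcli network ip ipsec" ;;
    vicfg-iscsi)    echo "esxcli iscsi" ;;
    vicfg-module)   echo "esxcli system module" ;;
    vicfg-mpath)    echo "esxcli storage core path" ;;
    vicfg-nas)      echo "esxcli storage nfs" ;;
    vicfg-nics)     echo "esxcli network nic" ;;
    vicfg-rescan)   echo "esxcli storage core adapter rescan" ;;
    vicfg-route)    echo "esxcli network ip route" ;;
    vicfg-scsidevs) echo "esxcli storage core device" ;;
    vicfg-snmp)     echo "esxcli system snmp" ;;
    vicfg-syslog)   echo "esxcli system syslog" ;;
    vicfg-vmknic)   echo "esxcli network ip interface" ;;
    vicfg-volume)   echo "esxcli storage filesystem" ;;
    vicfg-vswitch)  echo "esxcli network vswitch" ;;
    vihostupdate)   echo "esxcli software vib" ;;
    *)              return 1 ;;   # no equivalent, or more than one namespace
  esac
}
```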

Targets and Protocols for vCLI Host Management Commands

Most vCLI commands are used to manage or retrieve information about one or more ESXi hosts. They can target an ESXi host or a vCenter Server system.

When you target a vCenter Server system, you can use --vihost to specify the ESXi host to run the command against. The svmotion command is the only command that you can run against vCenter Server systems but not against ESXi systems.

The following commands must target an ESXi system, not a vCenter Server system.

- vifs

- vicfg-user

- vicfg-cfgbackup

- vihostupdate

- vmkfstools

The resxtop command requires an HTTPS connection. All other commands support HTTP and HTTPS.
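A script that runs several vCLI commands can check the target rules above before it connects. This sketch encodes only the rules stated in this section (the five ESXi-only commands, and svmotion, which requires vCenter Server); it does not encode the per-version exceptions in the platform support table. The function name and the esxi and vcenter labels are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: refuse to run a vCLI command against the wrong kind of target.
# The second argument is a hypothetical label: "esxi" or "vcenter".
check_vcli_target() {
  local cmd=$1 target_type=$2
  case $cmd in
    vifs|vicfg-user|vicfg-cfgbackup|vihostupdate|vmkfstools)
      # These commands must target an ESXi system.
      if [ "$target_type" != esxi ]; then
        echo "$cmd must target an ESXi system" >&2
        return 1
      fi
      ;;
    svmotion)
      # svmotion runs against vCenter Server systems only.
      if [ "$target_type" != vcenter ]; then
        echo "svmotion must target a vCenter Server system" >&2
        return 1
      fi
      ;;
  esac
  return 0   # every other command may target either kind of system
}
```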

Supported Platforms for vCLI Commands

Platform support for vCLI commands differs depending on the vCenter Server and ESXi version.

You cannot run the vihostupdate command against an ESXi 5.0 or later system.

You cannot run vicfg-syslog --setserver or vicfg-syslog --setport with an ESXi 5.0 or later target.

The following table lists platform support for the different vCLI commands.

| Command | ESXi 5.x and 6.x | vCenter Server 5.x and 6.x | ESXi 4.x | ESX 4.x | vCenter Server 4.x |
|---|---|---|---|---|---|
| DCLI | No | No | No | No | No |
| esxcli | Yes | Yes | Yes | Yes | No |
| resxtop | Yes (from Linux) | Yes (from Linux) | Yes (from Linux) | Yes (from Linux) | Yes (from Linux) |
| svmotion | No | Yes | No | No | Yes |
| vicfg-advcfg | Yes | Yes | Yes | Yes | Yes |
| vicfg-authconfig | Yes | Yes | Yes | Yes | Yes |
| vicfg-cfgbackup | Yes | No | Yes | No | No |
| vicfg-dns | Yes | Yes | Yes | Yes | Yes |
| vicfg-dumppart | Yes | Yes | Yes | Yes | Yes |
| vicfg-hostops | Yes | Yes | Yes | Yes | Yes |
| vicfg-ipsec | Yes | No | Yes | Yes | No |
| vicfg-iscsi | Yes | Yes | Yes | Yes | Yes |
| vicfg-module | Yes | Yes | Yes | Yes | Yes |
| vicfg-mpath | Yes | Yes | Yes | Yes | Yes |
| vicfg-nas | Yes | Yes | Yes | Yes | Yes |
| vicfg-nics | Yes | Yes | Yes | Yes | Yes |
| vicfg-ntp | Yes | Yes | Yes | Yes | Yes |
| vicfg-rescan | Yes | Yes | Yes | Yes | Yes |
| vicfg-route | Yes | Yes | Yes | Yes | Yes |
| vicfg-scsidevs | Yes | Yes | Yes | Yes | Yes |
| vicfg-snmp | Yes | No | Yes | Yes | No |
| vicfg-syslog | No | No for 5.0 target | Yes | No | Yes |
| vicfg-user | Yes | No | Yes | Yes | No |
| vicfg-vmknic | Yes | Yes | Yes | Yes | Yes |
| vicfg-volume | Yes | Yes | Yes | Yes | Yes |
| vicfg-vswitch | Yes | Yes | Yes | Yes | Yes |
| vifs | Yes | No | Yes | Yes | No |
| vihostupdate | Use esxcli software vib instead. | Use esxcli software vib instead. | Yes | Yes | No |
| vmkfstools | Yes | No | Yes | Yes | No |
| vmware-cmd | Yes | Yes | Yes | Yes | Yes |
| vicfg-mpath35 | No | No | No | No | No |
| vihostupdate35 | No | No | No | No | No |

Commands with an esxcfg Prefix

To facilitate the migration of shell scripts that use esxcfg- commands, the vCLI package includes copies of vicfg- commands that use an esxcfg prefix.

IMPORTANT: You should use ESXCLI or the vCLI commands with the vicfg prefix. Commands with the esxcfg prefix are available mainly for compatibility reasons and are now obsolete. vCLI esxcfg- commands are equivalent to vicfg- commands, but not completely equivalent to the deprecated esxcfg- service console commands.

The following table lists all vCLI vicfg- commands for which a vCLI command with an esxcfg prefix is available.

| Command with vicfg Prefix | Command with esxcfg Prefix |
|---|---|
| vicfg-advcfg | esxcfg-advcfg |
| vicfg-cfgbackup | esxcfg-cfgbackup |
| vicfg-dns | esxcfg-dns |
| vicfg-dumppart | esxcfg-dumppart |
| vicfg-module | esxcfg-module |
| vicfg-mpath | esxcfg-mpath |
| vicfg-nas | esxcfg-nas |
| vicfg-nics | esxcfg-nics |
| vicfg-ntp | esxcfg-ntp |
| vicfg-rescan | esxcfg-rescan |
| vicfg-route | esxcfg-route |
| vicfg-scsidevs | esxcfg-scsidevs |
| vicfg-snmp | esxcfg-snmp |
| vicfg-syslog | esxcfg-syslog |
| vicfg-vmknic | esxcfg-vmknic |
| vicfg-volume | esxcfg-volume |
| vicfg-vswitch | esxcfg-vswitch |
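Because each row of the table above differs only in the prefix, an existing script can be moved to the recommended vicfg- names mechanically. The following sed-based filter is a sketch with a hypothetical function name: it rewrites only the command names listed in the table and leaves any other esxcfg- names, which are not part of the vCLI package, untouched. Review the result before running it, because vCLI esxcfg- commands are not completely equivalent to the old service console commands, and remote vicfg- calls also need connection options.

```shell
#!/usr/bin/env bash
# Sketch: stdin-to-stdout filter that renames esxcfg-<name> to vicfg-<name>
# for the commands in the table. Other esxcfg- names pass through unchanged.
# Usage: migrate_esxcfg < old-script.sh > new-script.sh
migrate_esxcfg() {
  local names='advcfg|cfgbackup|dns|dumppart|module|mpath|nas|nics|ntp|rescan|route|scsidevs|snmp|syslog|vmknic|volume|vswitch'
  # Match only at the start of a word, so a name such as myesxcfg-nics is left alone.
  sed -E "s/(^|[^[:alnum:]_-])esxcfg-($names)/\\1vicfg-\\2/g"
}
```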

ESXCLI Commands Available on Different ESXi Hosts

The available ESXCLI commands depend on the ESXi host version.

When you run an ESXCLI vCLI command, you must know the commands supported on the target host. For example, if you run commands against ESXi 5.x hosts, ESXCLI 5.x commands are supported. If you run commands against ESXi 6.x hosts, ESXCLI 6.x commands are supported.

Some commands or command outputs are determined by the host type. In addition, VMware partners might develop custom ESXCLI commands that you can run on hosts where the partner VIB has been installed.

Run `esxcli --server <target> --help` for a list of namespaces supported on the target. You can drill down into the namespaces for additional help.
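Drilling down through the namespaces can be scripted. This sketch runs --help at the top level and then at each successive level of a namespace path that you pass in, for example storage core device. It adds no connection options beyond --server, so add the ones you normally use (or a configuration file); the function name and the ESXCLI override variable are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: show ESXCLI help at the top level and then at each level of a
# namespace path, for example: esxcli_help_path myESXi storage core device
# ESXCLI is a hypothetical override that defaults to esxcli.
esxcli_help_path() {
  local target=$1 ns
  shift
  local -a path=()
  "${ESXCLI:-esxcli}" --server "$target" --help
  for ns in "$@"; do
    path+=("$ns")   # extend the namespace path by one level
    "${ESXCLI:-esxcli}" --server "$target" "${path[@]}" --help
  done
}
```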

Trust Relationship Requirement for ESXCLI Commands

Starting with vSphere 6.0, ESXCLI checks whether a trust relationship exists between the machine where you run the ESXCLI command and the ESXi host. An error results if the trust relationship does not exist.

Download and Install the vCenter Server Certificate

You can download the vCenter Server root certificate by using a Web browser and add it to the trusted certificates on the machine where you plan to run ESXCLI commands.

Procedure

1 Enter the URL of the vCenter Server system or vCenter Server Appliance into a Web browser.

2 Click the Download trusted root link.

3 Change the extension of the downloaded file to .zip. (The file is a ZIP file of all certificates in the TRUSTED_ROOTS store.)

4 Extract the ZIP file.

A certificates folder is extracted. The folder includes files with the extensions .0, .1, and so on, which are certificates, and files with the extensions .r0, .r1, and so on, which are CRL files associated with the certificates.


5 Add the trusted root certificates to the list of trusted roots.

The process differs depending on the platform that you are on.
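Before step 5, it can help to see which extracted files are certificates and which are CRLs, using the extension convention described in step 4. This is a sketch with a hypothetical function name; the folder path is whatever your extraction produced, and the platform-specific import in step 5 is not shown.

```shell
#!/usr/bin/env bash
# Sketch: list files in the extracted certificates folder by kind.
# Names ending in .0, .1, ... are certificates; names ending in .r0, .r1, ...
# are the CRL files associated with them.
# Usage: list_trusted_roots DIR cert|crl
list_trusted_roots() {
  local dir=$1 kind=$2 f name ext
  for f in "$dir"/*; do
    [ -f "$f" ] || continue
    name=${f##*/}
    ext=${name##*.}
    [ "$ext" != "$name" ] || continue          # skip names without an extension
    if [[ $ext =~ ^r[0-9]+$ ]]; then
      [ "$kind" = crl ] && printf '%s\n' "$name"
    elif [[ $ext =~ ^[0-9]+$ ]]; then
      [ "$kind" = cert ] && printf '%s\n' "$name"
    fi
  done
  return 0
}
```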

What to do next

You can now run ESXCLI commands against any host that is managed by the trusted vCenter Server system

without supplying additional information if you specify the vCenter Server system in the --server option

and the ESXi host in the --vihost option.

Using the --cacertsfile Option

Using a certificate to establish the trust relationship is the most secure option.

You can specify the certificate with the --cacertsfile parameter or the VI_CACERTFILE variable.

Using the --thumbprint Option

You can supply the thumbprint for the target ESXi host or vCenter Server system in the --thumbprint

parameter or the VI_THUMBPRINT variable.

When you run a command, ESXCLI first checks whether a certificate file is available. If not, ESXCLI checks whether a thumbprint of the target server is available. If not, you receive an error of the following type.

Connect to sof-40583-srv failed. Server SHA-1 thumbprint: 5D:01:06:63:55:9D:DF:FE:38:81:6E:2C:FA:71:BC:63:82:C5:16:51 (not trusted).

You can run the command with the thumbprint to establish the trust relationship, or add the thumbprint to

the VI_THUMBPRINT variable. For example, using the thumbprint of the ESXi host above, you can run the

following command.

esxcli --server myESXi --username user1 --password 'my_password' --thumbprint 5D:01:06:63:55:9D:DF:FE:38:81:6E:2C:FA:71:BC:63:82:C5:16:51 storage nfs list
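If you script the trust setup, you can capture the thumbprint from the error message instead of copying it by hand. The sketch assumes the message arrives on a single line, in the form shown in the error example. The helper name extract_thumbprint is hypothetical.

```shell
# Sketch: print the SHA-1 thumbprint contained in an ESXCLI
# "not trusted" error message read from standard input.
# Assumption: the message is on one line, for example
#   Connect to <host> failed. Server SHA-1 thumbprint: 5D:01:...:51 (not trusted).
extract_thumbprint() {
  sed -n 's/.*thumbprint: \([0-9A-Fa-f:]*\).*/\1/p' | head -n 1
}

# Example: capture the thumbprint and export it for later ESXCLI commands.
#   VI_THUMBPRINT=$(esxcli --server myESXi --username user1 --password 'my_password' \
#     storage nfs list 2>&1 | extract_thumbprint)
#   export VI_THUMBPRINT
```

Standard error is merged into the pipe because it is not certain which stream carries the message.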

Use the Credential Store

Your vCLI installation includes a credential store. You can establish trust for a user with the credential store.

You can manage the credential store with the credstore_admin.pl utility application, which is located in the /Perl/apps/general directory inside the VMware vSphere CLI directory.

IMPORTANT: Updating the credential store is a two-step process. First you add the user and password for the server, and then you add the thumbprint for the server.

Procedure

1 Add the user and password for the target ESXi host to the local credential store.

credstore_admin.pl add --server <esxi_HOSTNAME_OR_IP> --username <user> --password <pwd>

2 Add the thumbprint for the target ESXi host. This thumbprint was returned in the error when you attempted to connect to the host.

credstore_admin.pl add --server <esxi_HOSTNAME_OR_IP> --thumbprint <thumbprint>

3 If you are using a non-default credential store file, you must pass it in with the --credstore option.

If you do not use the --credstore option, the host becomes accessible without authentication.
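The two steps can be wrapped in a small shell function. The sketch assumes that credstore_admin.pl is on your PATH and that the password comes from a hypothetical CRED_PASSWORD environment variable. Setting the hypothetical DRY_RUN=1 switch prints the commands instead of running them. If you use a non-default credential store file, also pass --credstore as described in step 3.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch: the two-step credential store update for one ESXi host.
# Assumptions: credstore_admin.pl is on PATH and the password is taken from
# the hypothetical CRED_PASSWORD environment variable.
# Usage: credstore_add_host <esxi_host> <user> <thumbprint>
credstore_add_host() {
  host=$1
  user=$2
  thumbprint=$3
  # Step 1: user name and password.
  run credstore_admin.pl add --server "$host" --username "$user" \
    --password "${CRED_PASSWORD-}" || return 1
  # Step 2: thumbprint returned in the earlier connection error.
  run credstore_admin.pl add --server "$host" --thumbprint "$thumbprint"
}
```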


Using ESXCLI Output

Many ESXCLI commands generate output you might want to use in your application. You can run esxcli

with the --formatter dispatcher option and send the resulting output as input to a parser.

The --formatter option supports three values, csv, xml, and keyvalue, and must precede any namespace.

The following example lists all file system information in CSV format.

esxcli --formatter=csv storage filesystem list

You can redirect the output to a file.

esxcli --formatter=keyvalue storage filesystem list > myfilesystemlist.txt

IMPORTANT: You should always use a formatter for consistent output.
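CSV output has a header row, so a short awk filter can pull out one column by name. The sketch assumes that no field contains a comma or a quote character. Header names vary by command and host, so check the first line of the output before relying on a name. The helper name csv_column is hypothetical.

```shell
# Sketch: print one column, chosen by its header name, from CSV on stdin.
# Assumption: no field contains an embedded comma or quote character.
csv_column() {
  awk -F',' -v col="$1" '
    NR == 1 { for (i = 1; i <= NF; i++) if ($i == col) idx = i; next }
    idx { print $idx }
  '
}

# Example (inspect the header line first; names differ between commands):
#   esxcli <conn_options> --formatter=csv storage filesystem list | csv_column Type
```

If the requested header is not found, the function prints nothing rather than guessing a column.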

Connection Options for vCLI Host Management Commands

You can run host management commands such as ESXCLI commands, vicfg- commands, and other commands with several different connection options.

You can target hosts directly or target a vCenter Server system and specify the host you want to manage. If

you are targeting a vCenter Server system, specify the Platform Services Controller, which includes the

vCenter Single Sign-On service, for best security.

IMPORTANT: For connections to ESXi hosts version 6.0 or later, vCLI supports both the IPv4 protocol and the IPv6 protocol. For earlier versions, vCLI supports only IPv4. In all cases, you can configure IPv6 on the target host with several of the networking commands.

See the Getting Started with vSphere Command-Line Interfaces documentation for a complete list and examples.

Connection Options for DCLI Commands

DCLI is a CLI client to the vSphere Automation SDK interface for managing VMware SDDC services. A DCLI command talks to a vSphere Automation SDK endpoint to get the vSphere Automation SDK command information, executes the command, and displays the result to the user.

You can run DCLI commands locally or from an administration server.

- Run DCLI on the Linux shell of a vCenter Server Appliance.
- Install vCLI on a supported Windows or Linux system and target a vCenter Server Windows installation or a vCenter Server Appliance. You have to provide endpoint information to successfully run commands.

DCLI commands support different connection options from the other commands in the command set.

See the Getting Started with vSphere Command-Line Interfaces documentation for a complete list and examples.

vCLI Host Management Commands and Lockdown Mode

For additional security, an administrator can place one or more hosts managed by a vCenter Server system in lockdown mode. Lockdown mode affects login privileges for the ESXi host.

See the vSphere Security document in the vSphere Documentation Center for a detailed discussion of normal

lockdown mode and strict lockdown mode, and of how to enable and disable them.


To make changes to ESXi systems in lockdown mode, you must go through a vCenter Server system that

manages the ESXi system as the user vpxuser and include both the --server and --vihost parameters.

esxcli --server MyVC --vihost MyESXi storage filesystem list

The command prompts for the vCenter Server system user name and password.
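When several hosts are in lockdown mode, you can loop over them through the same vCenter Server system. In this sketch, check_hosts_via_vc and the DRY_RUN switch are hypothetical. Each esxcli invocation may prompt for the vCenter Server credentials, as described above.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch: list file systems on several lockdown-mode hosts by going through
# the vCenter Server system that manages them.
# Usage: check_hosts_via_vc <vcenter> <esxi_host>...
check_hosts_via_vc() {
  vc=$1
  shift
  for h in "$@"; do
    printf '== %s\n' "$h"
    run esxcli --server "$vc" --vihost "$h" storage filesystem list || return 1
  done
}
```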

The following commands cannot run against vCenter Server systems and are therefore not available in

lockdown mode.

- vifs
- vicfg-user
- vicfg-cfgbackup
- vihostupdate
- vmkfstools

If you have problems running a command on an ESXi host directly, without specifying a vCenter Server

target, check whether lockdown mode is enabled on that host.


Managing Hosts 2

Host management commands can stop and reboot ESXi hosts, back up configuration information, and manage host updates. You can also use a host management command to make your host join an Active Directory domain or exit from a domain.

For information on updating ESXi 5.0 hosts with the esxcli software command and on changing the host

acceptance level to match the level of a VIB that you might want to use for an update, see the vSphere

Upgrade documentation in the vSphere 5.0 Documentation Center.

This chapter includes the following topics:

- “Stopping, Rebooting, and Examining Hosts,” on page 21
- “Entering and Exiting Maintenance Mode,” on page 22
- “Backing Up Configuration Information with vicfg-cfgbackup,” on page 24
- “Managing VMkernel Modules,” on page 25
- “Using vicfg-authconfig for Active Directory Configuration,” on page 26
- “Updating Hosts,” on page 27

Stopping, Rebooting, and Examining Hosts

You can stop, reboot, and examine hosts with ESXCLI or with vicfg-hostops.

Stopping and Rebooting Hosts with ESXCLI

You can shut down or reboot an ESXi host by using the vSphere Web Client or vCLI commands, such as

ESXCLI or vicfg-hostops.

Shutting down a managed host disconnects it from the vCenter Server system, but does not remove the host

from the inventory. You can shut down a single host or all hosts in a data center or cluster. Specify one of the

options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of

<conn_options>.

To shut down a host, run esxcli system shutdown poweroff. You must specify the --reason option and supply a reason for the shutdown. A --delay option allows you to specify a delay interval, in seconds.

To reboot a host, run esxcli system shutdown reboot. You must specify the --reason option and supply a reason for the reboot. A --delay option allows you to specify a delay interval, in seconds.
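The following sketch wraps both operations so that a reason is always supplied. The esxi_shutdown function and the DRY_RUN switch are hypothetical. CONN_OPTIONS is also hypothetical and is left unquoted so that it can hold several connection options.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch: reboot or power off a host, refusing to run without a reason.
# Usage: esxi_shutdown reboot|poweroff "<reason>" [delay_seconds]
esxi_shutdown() {
  action=$1
  reason=${2-}
  delay=${3-}
  case "$action" in
    reboot|poweroff) ;;
    *) echo "usage: esxi_shutdown reboot|poweroff <reason> [delay_seconds]" >&2; return 2 ;;
  esac
  if [ -z "$reason" ]; then
    echo "esxi_shutdown: a --reason value is required" >&2
    return 2
  fi
  # CONN_OPTIONS is deliberately unquoted so that it can hold several options.
  if [ -n "$delay" ]; then
    run esxcli ${CONN_OPTIONS-} system shutdown "$action" --reason "$reason" --delay "$delay"
  else
    run esxcli ${CONN_OPTIONS-} system shutdown "$action" --reason "$reason"
  fi
}
```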


Stopping, Rebooting, and Examining Hosts with vicfg-hostops

You can shut down or reboot an ESXi host by using the vSphere Web Client, ESXCLI, or the vicfg-hostops vCLI command.

Shutting down a managed host disconnects it from the vCenter Server system, but does not remove the host

from the inventory. You can shut down a single host or all hosts in a data center or cluster. Specify one of the

options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of

<conn_options>.

- Single host - Run vicfg-hostops with --operation shutdown.

  - If the host is in maintenance mode, run the command without the --force option.

    vicfg-hostops <conn_options> --operation shutdown

  - If the host is not in maintenance mode, use --force to shut down the host and all running virtual machines.

    vicfg-hostops <conn_options> --operation shutdown --force

- All hosts in data center or cluster - To shut down all hosts in a cluster or data center, specify --cluster or --datacenter.

  vicfg-hostops <conn_options> --operation shutdown --cluster <my_cluster>

  vicfg-hostops <conn_options> --operation shutdown --datacenter <my_datacenter>

You can reboot a single host or all hosts in a data center or cluster.

- Single host - Run vicfg-hostops with --operation reboot.

  - If the host is in maintenance mode, run the command without the --force option.

    vicfg-hostops <conn_options> --operation reboot

  - If the host is not in maintenance mode, use --force to reboot the host and shut down all running virtual machines.

    vicfg-hostops <conn_options> --operation reboot --force

- All hosts in data center or cluster - You can specify --cluster or --datacenter to reboot all hosts in a cluster or data center.

  vicfg-hostops <conn_options> --operation reboot --cluster <my_cluster>

  vicfg-hostops <conn_options> --operation reboot --datacenter <my_datacenter>

You can display information about a host by running vicfg-hostops with --operation info.

vicfg-hostops <conn_options> --operation info

The command returns the host name, manufacturer, model, processor type, CPU cores, memory capacity,

and boot time. The command also returns whether vMotion is enabled and whether the host is in

maintenance mode.

Entering and Exiting Maintenance Mode

You can instruct your host to enter or exit maintenance mode with ESXCLI or with vicfg-hostops.

Enter and Exit Maintenance Mode with ESXCLI

You place a host in maintenance mode to service it, for example, to install more memory. A host enters or

leaves maintenance mode only as the result of a user request.

esxcli system maintenanceMode set allows you to enable or disable maintenance mode.


When you run the esxcli vCLI command, you can specify one of the options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 To enter maintenance mode, run the following command.

esxcli <conn_options> system maintenanceMode set --enable true

After all virtual machines on the host have been suspended or migrated, the host enters maintenance

mode.

NOTE: You cannot deploy or power on a virtual machine on hosts in maintenance mode.

2 To exit maintenance mode, run the following command.

esxcli <conn_options> system maintenanceMode set --enable false

NOTE: If you attempt to exit maintenance mode when the host is no longer in maintenance mode, an error informs you that maintenance mode is already disabled.
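A script may need to wait until the host has actually entered maintenance mode, and it can do so by polling the current state. The sketch assumes that esxcli system maintenanceMode get prints Enabled or Disabled. The names wait_for_maintenance, CONN_OPTIONS, and the overridable ESXCLI variable are hypothetical.

```shell
# Command used to call ESXCLI; override it (for example with a stub) if needed.
ESXCLI=${ESXCLI:-esxcli}

# Sketch: succeed once "esxcli system maintenanceMode get" reports Enabled.
# Assumption: the command prints Enabled or Disabled.
# Usage: wait_for_maintenance [tries] [interval_seconds]
wait_for_maintenance() {
  tries=${1:-30}
  interval=${2:-10}
  while [ "$tries" -gt 0 ]; do
    # CONN_OPTIONS is left unquoted so that it can hold several options.
    state=$($ESXCLI ${CONN_OPTIONS-} system maintenanceMode get | tr -d '[:space:]')
    if [ "$state" = Enabled ]; then
      return 0
    fi
    tries=$((tries - 1))
    if [ "$tries" -gt 0 ]; then
      sleep "$interval"
    fi
  done
  return 1
}

# Example:
#   esxcli <conn_options> system maintenanceMode set --enable true
#   wait_for_maintenance 60 10 || echo "host is not in maintenance mode yet" >&2
```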

Enter and Exit Maintenance Mode with vicfg-hostops

You place a host in maintenance mode to service it, for example, to install more memory. A host enters or

leaves maintenance mode only as the result of a user request.

vicfg-hostops suspends virtual machines by default, or powers off the virtual machines if you run vicfg-hostops --action poweroff.

NOTE: vicfg-hostops does not work with VMware DRS. Virtual machines are always suspended.

The host is in a state of Entering Maintenance Mode until all running virtual machines are suspended or

migrated. When a host is entering maintenance mode, you cannot power on virtual machines on it or

migrate virtual machines to it.

When you run the vicfg-hostops vCLI command, you can specify one of the options listed in “Connection

Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 To enter maintenance mode, run the following command.

vicfg-hostops <conn_options> --operation enter

2 To check whether the host is in maintenance mode or in the Entering Maintenance Mode state, run the

following command.

vicfg-hostops <conn_options> --operation info

After all virtual machines on the host have been suspended or migrated, the host enters maintenance mode.

You cannot deploy or power on a virtual machine on hosts in maintenance mode.

What to do next

You can put all hosts in a cluster or data center in maintenance mode by using the --cluster or --datacenter option. Use those options only if suspending all virtual machines in that cluster or data center is acceptable.

You can later run vicfg-hostops <conn_options> --operation exit to exit maintenance mode.


Backing Up Configuration Information with vicfg-cfgbackup

After you configure an ESXi host, you can back up the host configuration data. You should always back up your host configuration after you change the configuration or upgrade the ESXi image.

IMPORTANT: The vicfg-cfgbackup command is available only for ESXi hosts. The command is not available through a vCenter Server system connection. No equivalent ESXCLI command is supported.

Backup Tasks

During a configuration backup, the serial number is backed up with the configuration.

The number is restored when you restore the configuration. The number is not preserved when you run the Recovery CD (ESXi Embedded) or perform a repair operation (ESXi Installable).

You can back up and restore configuration information as follows.

1 Back up the configuration by using the vicfg-cfgbackup command.

2 Run the Recovery CD or repair operation.

3 Restore the configuration by using the vicfg-cfgbackup command.

When you restore a configuration, you must make sure that all virtual machines on the host are stopped.

Backing Up Configuration Data

You can back up configuration data by running vicfg-cfgbackup with the -s option.

The following example backs up configuration data in a temporary location.

vicfg-cfgbackup <conn_options> -s /tmp/ESXi_181842_backup.tgz

For the backup file name, include the number of the build that is running on the host that you are backing up. If you are running vCLI on vMA, the backup file is saved locally on vMA. Backup files can safely be stored locally because virtual appliances are stored in the /vmfs/volumes/<datastore> directory on the host, which is separate from the ESXi image and configuration files.
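To follow the naming advice above, a script can read the build number from the host before it takes the backup. The sketch assumes that esxcli system version get prints a line such as "Build: Releasebuild-<number>". The names build_number and backup_host are hypothetical, and CONN_OPTIONS holds any additional connection options. The script targets the ESXi host directly because vicfg-cfgbackup does not work through vCenter Server.

```shell
# Sketch: read the numeric build from "esxcli system version get" output on
# stdin. Assumption: the output has a line such as "Build: Releasebuild-4564106".
build_number() {
  sed -n 's/^[[:space:]]*Build:[^0-9]*\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Sketch: back up a host configuration to <dir>/<host>_<build>_backup.tgz.
# vicfg-cfgbackup must target the ESXi host directly, not vCenter Server.
# Usage: backup_host <esxi_host> [dir]
backup_host() {
  host=$1
  dir=${2:-.}
  build=$(esxcli --server "$host" ${CONN_OPTIONS-} system version get | build_number)
  if [ -z "$build" ]; then
    echo "backup_host: could not read the build number of $host" >&2
    return 1
  fi
  vicfg-cfgbackup --server "$host" ${CONN_OPTIONS-} -s "$dir/${host}_${build}_backup.tgz"
}
```

If the build number cannot be read, the function stops instead of writing a backup with an ambiguous name.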

Restore Configuration Data

If you have created a backup, you can later restore ESXi configuration data.

When you restore configuration data, the number of the build running on the host must be the same as the number of the build that was running when you created the backup file. To override this requirement, include the -f (force) option.

When you run the vicfg-cfgbackup vCLI command, you can specify one of the options listed in

“Connection Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 Power off all virtual machines that are running on the host that you want to restore.

2 Log in to a host on which vCLI is installed, or log in to vMA.

3 Run vicfg-cfgbackup with the -l flag to load the host configuration from the specified backup file.

- If you run the following command, you are prompted for confirmation.

  vicfg-cfgbackup <conn_options> -l /tmp/ESXi_181842_backup.tgz

- If you run the following command, you are not prompted for confirmation.

  vicfg-cfgbackup <conn_options> -l /tmp/ESXi_181842_backup.tgz -q


4 (Optional) To restore the host to factory settings, run vicfg-cfgbackup with the -r option.

vicfg-cfgbackup <conn_options> -r

Using vicfg-cfgbackup from vMA

To back up a host configuration, you can run vicfg-cfgbackup from a vMA instance. The vMA instance can run on the host that you are backing up or restoring, also referred to as the target host, or on a remote host.

To restore a host configuration, you must run vicfg-cfgbackup from a vMA instance running on a remote host. The host must be in maintenance mode, which means all virtual machines, including vMA, must be suspended on the target host.

For example, a backup operation for two ESXi hosts, host1 and host2, with vMA deployed on both hosts works as follows.

- To back up the configuration of either host, run vicfg-cfgbackup from the vMA appliance running on host1 or host2. Use the --server option to specify the host for which you want backup information. The information is stored on vMA.
- To restore the host1 configuration, run vicfg-cfgbackup from the vMA appliance running on host2. Use the --server option to point to host1 to restore the configuration to that host.
- To restore the host2 configuration, run vicfg-cfgbackup from the vMA appliance running on host1. Use the --server option to point to host2 to restore the configuration to that host.

Managing VMkernel Modules

The esxcli system module and vicfg-module commands support setting and retrieving VMkernel module options.

The vicfg-module and esxcli system module commands are implementations of the deprecated esxcfg-module service console command. The two commands support most of the options esxcfg-module supports.

vicfg-module and esxcli system module are commonly used when VMware Technical Support, a Knowledge Base article, or VMware documentation instructs you to do so.

Manage Modules with esxcli system module

Not all VMkernel modules have settable module options.

The following example illustrates how to examine and enable a VMkernel module. Specify one of the

connection options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in

place of <conn_options>.

Procedure

1 List information about the module.

esxcli <conn_options> system module list --module=module_name

The system returns the name, type, value, and description of the module.

2 (Optional) List all enabled or loaded modules.

esxcli <conn_options> system module list --enabled=true

esxcli <conn_options> system module list --loaded=true

3 Enable the module.

esxcli <conn_options> system module set --module=module_name --enabled=true


4 Set the parameter.

esxcli <conn_options> system module parameters set --module=module_name --parameter-string="parameter_string"

5 Verify that the module is configured.

esxcli <conn_options> system module parameters list --module=module_name
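Steps 3 through 5 can be chained so that the script stops at the first failure. In this sketch, configure_module, CONN_OPTIONS, and DRY_RUN are hypothetical. As with vicfg-module, the configured parameter string is not necessarily the one the module is currently using.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch of steps 3 to 5: enable a module, set its parameter string, and
# list the parameters to verify the result.
# Usage: configure_module <module_name> "<parameter_string>"
configure_module() {
  module=$1
  params=$2
  run esxcli ${CONN_OPTIONS-} system module set --module="$module" --enabled=true || return 1
  run esxcli ${CONN_OPTIONS-} system module parameters set --module="$module" \
    --parameter-string="$params" || return 1
  run esxcli ${CONN_OPTIONS-} system module parameters list --module="$module"
}
```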

Manage Modules with vicfg-module

Not all VMkernel modules have settable module options.

The following example illustrates how to examine and enable a VMkernel module. Specify one of the

connection options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in

place of <conn_options>.

Procedure

1 Run vicfg-module --list to list the modules on the host.

vicfg-module <conn_options> --list

2 Run vicfg-module --set-options with connection options, the option string to be passed to a module,

and the module name.

vicfg-module <conn_options> --set-options '<parameter_name>=<value>' <module_name>

3 (Optional) To retrieve the option string that is configured to be passed to a module when the module is loaded, run vicfg-module --get-options.

NOTE: This string is not necessarily the option string currently in use by the module.

vicfg-module <conn_options> --get-options <module_name>

The output lets you verify that a module is configured.

Using vicfg-authconfig for Active Directory Configuration

ESXi can be integrated with Active Directory. Active Directory provides authentication for all local services

and for remote access through the vSphere Web Services SDK, vSphere Web Client, PowerCLI, and vSphere

CLI.

You can configure Active Directory settings with the vSphere Web Client, as discussed in the vCenter Server and Host Management documentation, or use vicfg-authconfig.

vicfg-authconfig allows you to remotely configure Active Directory settings on ESXi hosts. You can list supported and active authentication mechanisms, list the current domain, and join or leave an Active Directory domain.

Prepare ESXi Hosts for Active Directory Integration

Before you run the vicfg-authconfig command on an ESXi host, you must prepare the host.

Procedure

1 Configure ESXi and Active Directory to use the same NTP server.

IMPORTANT: All hosts that join Active Directory must also be managed by an NTP server to avoid issues with clock skews and Kerberos tickets. You must make sure the ESXi system and the Active Directory server are using the same time zone.

The ESXi system’s time zone is always set to UTC.


2 Configure the ESXi system’s DNS to be in the Active Directory domain.

Set Up Active Directory to Work with ESXi

You can run vicfg-authconfig to add the ESXi host to the Active Directory domain. You can run the

command directly against the host or against a vCenter Server system, specifying the host with --vihost.

Prerequisites

- Verify that you have installed the ESXi host, as explained in the vSphere Installation and Setup documentation.
- Verify that you have installed Windows Active Directory on a Windows Server that runs Windows 2000 Server, Windows Server 2003, or Windows Server 2008. See the Microsoft Web site for instructions and best practices.
- Verify that you have the appropriate Active Directory permissions and administrative privileges on the ESXi host.
- Verify that time between the ESXi system and Windows Active Directory is synchronized.

Procedure

1 Test that the Windows Active Directory Server can ping the ESXi host by using the host name.

ping <ESX_hostname>

2 Run vicfg-authconfig to add the host to the Active Directory domain.

vicfg-authconfig --server=<ESXi Server IP Address> \
    --username=<ESXi Server Admin Username> \
    --password=<ESXi Server Admin User's Password> \
    --authscheme AD --joindomain <AD Domain Name> \
    --adusername=<Active Directory Administrator User Name> \
    --adpassword=<Active Directory Administrator User's Password>

The system prompts for user names and passwords if you do not specify them on the command line.

Passwords are not echoed to the screen.

3 Check that a Successfully Joined <Domain Name> message appears.

4 Verify the ESXi host is in the intended Windows Active Directory domain.

vicfg-authconfig --server XXX.XXX.XXX.XXX --authscheme AD -c

You are prompted for a user name and password for the ESXi system.
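Steps 2 and 4 can be combined into one function. The sketch omits the user names and passwords except the AD administrator name, so vicfg-authconfig prompts for the rest, as described above. The names ad_join_and_verify and DRY_RUN are hypothetical.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch of steps 2 and 4: join the host to a domain, then show the current
# domain. Passwords are omitted so that vicfg-authconfig prompts for them.
# Usage: ad_join_and_verify <esxi_host> <ad_domain> <ad_admin_user>
ad_join_and_verify() {
  host=$1
  domain=$2
  ad_user=$3
  run vicfg-authconfig --server="$host" --authscheme AD \
    --joindomain "$domain" --adusername="$ad_user" || return 1
  run vicfg-authconfig --server="$host" --authscheme AD -c
}
```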

Updating Hosts

When you add custom drivers or patches to a host, the process is called an update.

- Update ESXi 4.0 and ESXi 4.1 hosts with the vihostupdate command, as discussed in the vSphere Command-Line Interface Installation and Reference Guide included in the vSphere 4.1 documentation set.
- Update ESXi 5.0 hosts with esxcli software vib commands discussed in the vSphere Upgrade documentation included in the vSphere 5.0 documentation set. You cannot run the vihostupdate command against ESXi 5.0 or later.


- Update ESXi 5.1 hosts with esxcli software vib commands discussed in the vSphere Upgrade documentation included in the vSphere 5.1 documentation set.


- Update ESXi 5.5 hosts with esxcli software vib commands discussed in the vSphere Upgrade documentation included in the vSphere 5.5 documentation set.
- Update ESXi 6.0 hosts with esxcli software vib commands discussed in the vSphere Upgrade documentation included in the vSphere 6.0 documentation set.
- Update ESXi 6.5 hosts with esxcli software vib commands discussed in the vSphere Upgrade documentation included in the vSphere 6.5 documentation set.


Managing Files 3

The vSphere CLI includes two commands for file manipulation. vmkfstools allows you to manipulate VMFS (Virtual Machine File System) and virtual disks. vifs supports remote interaction with files on your ESXi host.

NOTE: See Chapter 4, “Managing Storage,” on page 41 for information about storage manipulation commands.

This chapter includes the following topics:

- “Introduction to Virtual Machine File Management,” on page 29
- “Managing the Virtual Machine File System with vmkfstools,” on page 30
- “Upgrading VMFS3 Volumes to VMFS5,” on page 31
- “Managing VMFS Volumes,” on page 31
- “Reclaiming Unused Storage Space,” on page 34
- “Using vifs to View and Manipulate Files on Remote ESXi Hosts,” on page 35

Introduction to Virtual Machine File Management

You can use the vSphere Web Client or vCLI commands to access different types of storage devices that your ESXi host discovers and to deploy datastores on those devices.

NOTE: Datastores are logical containers, analogous to file systems, that hide specifics of each storage device and provide a uniform model for storing virtual machine files. Datastores can be used for storing ISO images, virtual machine templates, and floppy images. The vSphere Web Client uses the term datastore exclusively. In vCLI, the terms datastore, VMFS volume, and NFS volume refer to the same logical container on the physical device.

Depending on the type of storage you use, datastores can be backed by the VMFS and NFS file system formats.

- Virtual Machine File System (VMFS) - High-performance file system that is optimized for storing virtual machines. Your host can deploy a VMFS datastore on any SCSI-based local or networked storage device, including Fibre Channel and iSCSI SAN equipment. As an alternative to using the VMFS datastore, your virtual machine can have direct access to raw devices and use a mapping file (RDM) as a proxy.

  You manage VMFS and RDMs with the vSphere Web Client or the vmkfstools command.


- Network File System (NFS) - The NFS client built into ESXi uses the NFS protocol over TCP/IP to access a designated NFS volume that is located on a NAS server. The ESXi host can mount the volume and use it for its storage needs. vSphere supports versions 3 and 4.1 of the NFS protocol. Typically, the NFS volume or directory is created by a storage administrator and is exported from the NFS server. The NFS volumes do not need to be formatted with a local file system, such as VMFS. You can mount the volumes directly and use them to store and boot virtual machines in the same way that you use VMFS datastores.

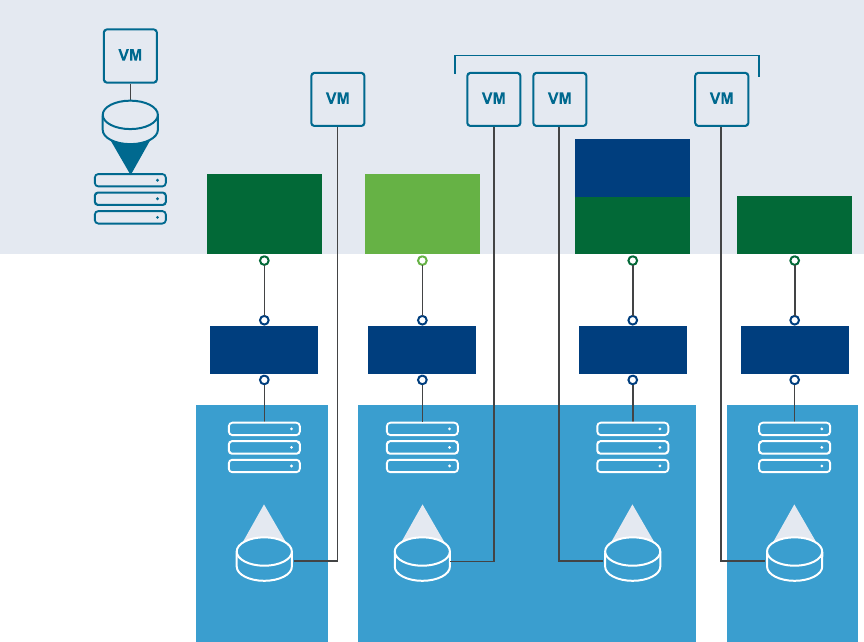

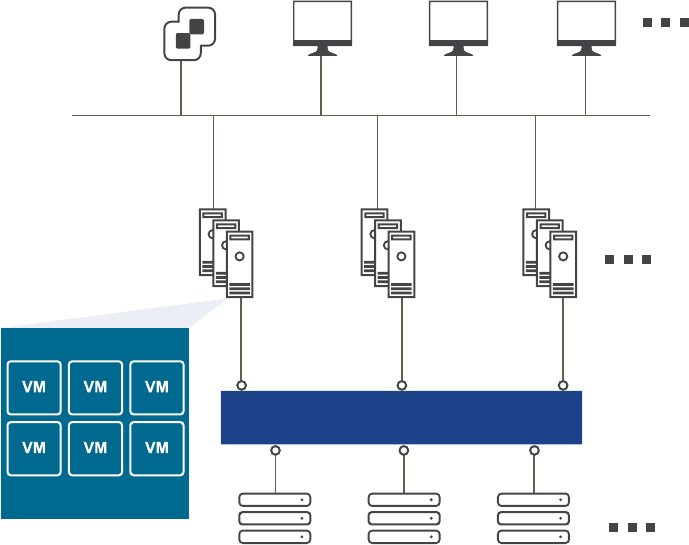

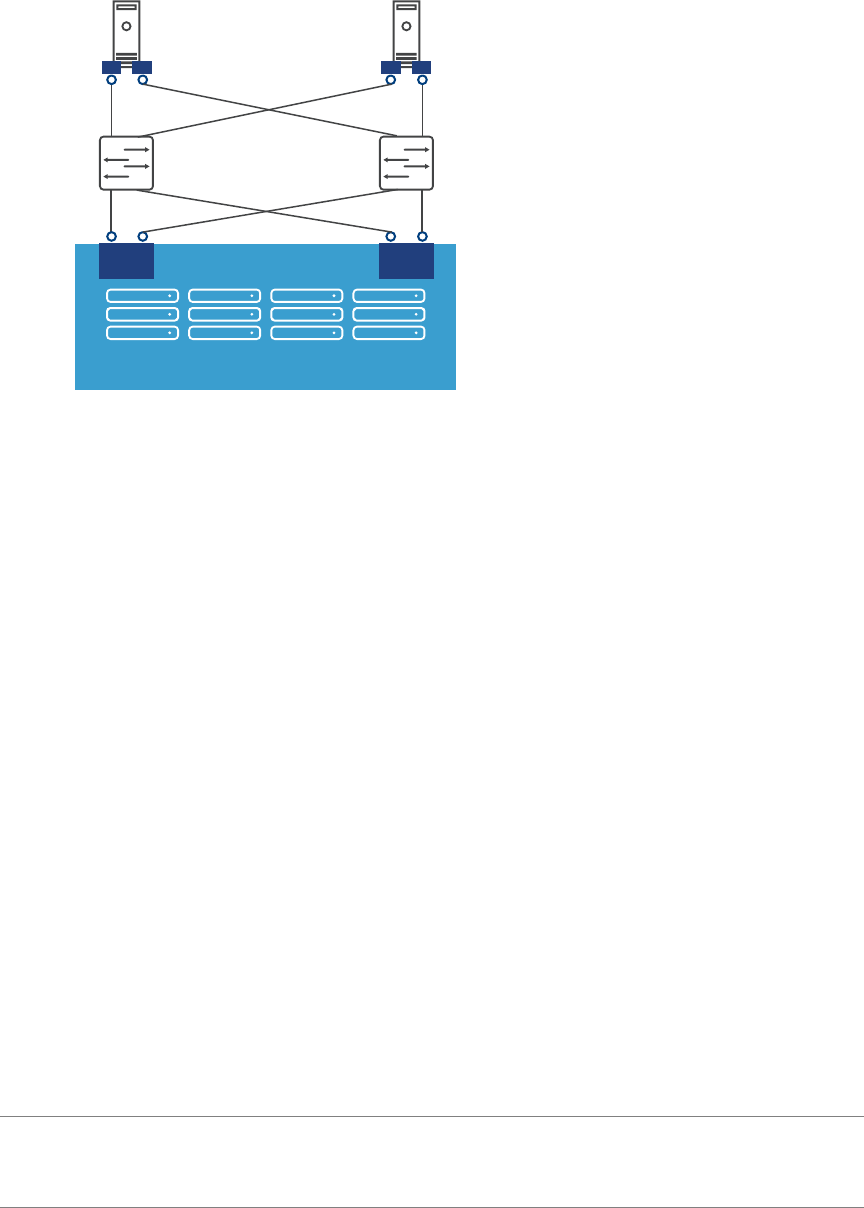

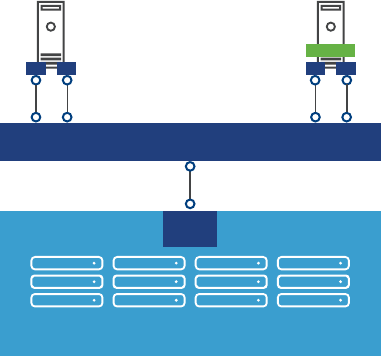

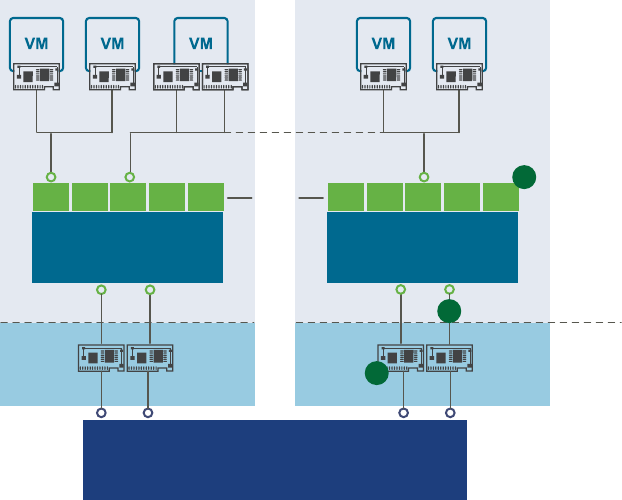

You manage NAS storage devices from the vSphere Web Client or with the esxcli storage nfs command. The diagram below illustrates different types of storage, but it is for conceptual purposes only. It is not a recommended configuration.
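As an example of the esxcli storage nfs command, a script can add an NFS datastore with esxcli storage nfs add. The sketch assumes an NFS version 3 export. NFS 4.1 datastores use the separate esxcli storage nfs41 namespace, which the sketch does not cover. The names nfs_mount, CONN_OPTIONS, and DRY_RUN are hypothetical.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Sketch: add an NFS version 3 datastore. NFS 4.1 datastores use the
# separate esxcli storage nfs41 namespace, which this sketch does not cover.
# Usage: nfs_mount <nfs_server> <export_path> <datastore_name>
nfs_mount() {
  server=$1
  share=$2
  name=$3
  case "$share" in
    /*) ;;
    *) echo "nfs_mount: the export path must be absolute" >&2; return 2 ;;
  esac
  run esxcli ${CONN_OPTIONS-} storage nfs add --host="$server" \
    --share="$share" --volume-name="$name"
}
```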

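Figure 3‑1. Virtual Machines Accessing Different Types of Storage

[Figure: a host reaches a Fibre Channel array (VMFS) through a Fibre Channel HBA, an iSCSI array (VMFS) over the LAN through a hardware iSCSI initiator or through a software initiator and an Ethernet NIC, a NAS appliance (NFS) over the LAN through an Ethernet NIC, and local SCSI storage (VMFS). The software initiator and NFS paths require TCP/IP connectivity.]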

Managing the Virtual Machine File System with vmkfstools

VMFS datastores primarily serve as repositories for virtual machines.

You can store multiple virtual machines on the same VMFS volume. Each virtual machine, encapsulated in a set of files, occupies a separate single directory. For the operating system inside the virtual machine, VMFS preserves the internal file system semantics.

In addition, you can use the VMFS datastores to store other files, such as virtual machine templates and ISO images. VMFS supports file and block sizes that enable virtual machines to run data-intensive applications, including databases, ERP, and CRM, in virtual machines. See the vSphere Storage documentation.


You use the vmkfstools vCLI command to create and manipulate virtual disks, file systems, logical volumes, and physical storage devices on an ESXi host. You can use vmkfstools to create and manage a virtual machine file system on a physical partition of a disk and to manipulate files, such as virtual disks, stored on VMFS-3 and NFS. You can also use vmkfstools to set up and manage raw device mappings (RDMs).

IMPORTANT: The vmkfstools vCLI command supports most but not all of the options that the vmkfstools ESXi Shell command supports. See VMware Knowledge Base article 1008194.

You cannot run vmkfstools with --server pointing to a vCenter Server system.

The vSphere Storage documentation includes a complete reference to the vmkfstools command that you can

use in the ESXi Shell. You can use most of the same options with the vmkfstools vCLI command. Specify one

of the connection options listed in “Connection Options for vCLI Host Management Commands,” on

page 19 in place of <conn_options>.

The following options supported by the vmkfstools ESXi Shell command are not supported by the

vmkfstools vCLI command.

- --breaklock -B
- --chainConsistent -e
- --eagerzero -k
- --fix -x
- --lock -L
- --migratevirtualdisk -M
- --parseimage -Y
- --punchzero -K
- --snapshotdisk -I
- --verbose -v
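A wrapper script can reject these options before it calls the vCLI command. The list in the sketch is copied from this section. The names vcli_vmkfstools_supports and vmkfstools_vcli are hypothetical. The check compares each argument as a plain string, so an option value that happens to match one of these flags is also rejected.

```shell
# Sketch: return 1 for options that the vmkfstools ESXi Shell command accepts
# but the vmkfstools vCLI command does not (list taken from this section).
vcli_vmkfstools_supports() {
  case "$1" in
    --breaklock|-B|--chainConsistent|-e|--eagerzero|-k) return 1 ;;
    --fix|-x|--lock|-L|--migratevirtualdisk|-M) return 1 ;;
    --parseimage|-Y|--punchzero|-K|--snapshotdisk|-I|--verbose|-v) return 1 ;;
    *) return 0 ;;
  esac
}

# Sketch: check every argument, then call the vCLI vmkfstools command.
vmkfstools_vcli() {
  for arg in "$@"; do
    if ! vcli_vmkfstools_supports "$arg"; then
      echo "vmkfstools_vcli: $arg is not supported by the vCLI command" >&2
      return 2
    fi
  done
  vmkfstools "$@"
}
```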

Upgrading VMFS3 Volumes to VMFS5

vSphere 5.0 supports VMFS5 volumes, which have improved scalability and performance.

You can upgrade from VMFS3 to VMFS5 by using the vSphere Web Client, the vmkfstools ESXi Shell

command, or the esxcli storage vmfs upgrade command. You can pass the volume label or the volume

UUID to the ESXCLI command.

IMPORTANT: You cannot upgrade VMFS3 volumes to VMFS5 with the vmkfstools command included in vSphere CLI.

Managing VMFS Volumes

Different commands are available for listing, mounting, and unmounting VMFS volumes and for listing, mounting, and unmounting VMFS snapshot volumes.

- Managing VMFS volumes

  esxcli storage filesystem list shows all volumes, mounted and unmounted, that are resolved, that is, that are not snapshot volumes.

  esxcli storage filesystem unmount unmounts a currently mounted file system. Use this command for snapshot volumes or resolved volumes.

- Managing snapshot volumes


esxcli storage vmfs snapshot commands can be used for listing, mounting, and resignaturing

snapshot volumes. See “Mounting Datastores with Existing Signatures,” on page 32 and

“Resignaturing VMFS Copies,” on page 33.

Managing Duplicate VMFS Datastores

In some cases VMFS datastores can have duplicate UUIDs.

Each VMFS datastore created in a LUN has a unique UUID that is stored in the file system superblock.

When the LUN is replicated or when a snapshot is made, the resulting LUN copy is identical, byte-for-byte,

to the original LUN. As a result, if the original LUN contains a VMFS datastore with UUID X, the LUN copy

appears to contain an identical VMFS datastore, or a VMFS datastore copy, with the same UUID X.

ESXi hosts can determine whether a LUN contains the VMFS datastore copy, and either mount the datastore

copy with its original UUID or change the UUID to resignature the datastore.

When a LUN contains a VMFS datastore copy, you can mount the datastore with the existing signature or

assign a new signature. The vSphere Storage documentation discusses volume resignaturing in detail.

Mounting Datastores with Existing Signatures

You can mount a VMFS datastore copy without changing its signature if the original is not mounted.

For example, you can maintain synchronized copies of virtual machines at a secondary site as part of a disaster recovery plan. In the event of a disaster at the primary site, you can mount the datastore copy and power on the virtual machines at the secondary site.

IMPORTANT You can mount a VMFS datastore only if it does not conflict with an already mounted VMFS datastore that has the same UUID.
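The mount-or-resignature decision described above can be sketched as a small Bash function. The function name and its inputs are hypothetical: it takes the UUID of the datastore copy followed by the UUIDs of the VMFS datastores already mounted on the host. It checks only for a UUID conflict, not whether the LUN copy is writable.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helper).
# Usage: copy_action <copy-UUID> [mounted-UUID ...]
# Prints "mount" when the copy can keep its existing signature, or
# "resignature" when a mounted datastore already uses the same UUID.
copy_action() {
  local copy_uuid="${1:-}"
  shift
  local mounted
  for mounted in "$@"; do
    if [ "$mounted" = "$copy_uuid" ]; then
      # Mounting would conflict with the datastore that has the same UUID.
      echo "resignature"
      return 0
    fi
  done
  # No conflict: the copy can be mounted with its existing signature.
  echo "mount"
}
```

For example, copy_action 515615fb-1e65c01d-b40f-001d096dbf97 followed by the UUIDs reported for mounted volumes prints resignature if the original datastore is still mounted.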

When you mount the VMFS datastore, ESXi allows both read and write operations to the datastore that resides on the LUN copy. The LUN copy must be writable. The datastore mounts are persistent and valid across system reboots.

You can mount a datastore with ESXCLI or with vicfg-volume. See “Mount a Datastore with ESXCLI,” on page 32 or “Mount a Datastore with vicfg-volume,” on page 33.

Mount a Datastore with ESXCLI

The esxcli storage filesystem commands support mounting and unmounting volumes. You can also use the --no-persist option to mount a volume so that the mount does not persist across reboots.

Use the esxcli storage filesystem command to list mounted volumes, mount new volumes, and unmount a volume. Specify one of the connection options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 List all volumes that have been detected as snapshots.

esxcli <conn_options> storage filesystem list

2 Run esxcli storage filesystem mount with the volume label or volume UUID.

esxcli <conn_options> storage filesystem mount --volume-label=<label>|--volume-uuid=<VMFS-UUID>

NOTE This command fails if the original copy is online.

vSphere Command-Line Interface Concepts and Examples

32 VMware, Inc.

What to do next

You can later run esxcli storage filesystem unmount to unmount the snapshot volume.

esxcli <conn_options> storage filesystem unmount --volume-label=<label>|--volume-uuid=<VMFS-UUID>
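As a sketch, the mount and unmount steps of this procedure can be wrapped in one Bash function that accepts either a label or a UUID, so that exactly one of --volume-label and --volume-uuid is passed. The function name and the CONN_OPTIONS and DRY_RUN environment variables are hypothetical; the esxcli storage filesystem mount and unmount commands are the ones named in the procedure above.

```bash
#!/usr/bin/env bash
# Sketch only. With DRY_RUN=1 the command is printed instead of run.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: esxcli_volume <mount|unmount> <label|uuid> <value>
esxcli_volume() {
  local action="${1:-}" kind="${2:-}" value="${3:-}" option
  case "$kind" in
    label) option="--volume-label=$value" ;;
    uuid)  option="--volume-uuid=$value" ;;
    *) echo "kind must be 'label' or 'uuid'" >&2; return 2 ;;
  esac
  case "$action" in
    mount|unmount) ;;
    *) echo "action must be 'mount' or 'unmount'" >&2; return 2 ;;
  esac
  # CONN_OPTIONS is intentionally unquoted so that it splits into separate options.
  run esxcli ${CONN_OPTIONS:-} storage filesystem "$action" "$option"
}
```

For example, DRY_RUN=1 esxcli_volume mount label datastore1 prints the mount command so that you can review it before running it for real.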

Mount a Datastore with vicfg-volume

The vicfg-volume command supports mounting and unmounting volumes.

Use the vicfg-volume command to list mounted volumes, mount new volumes, and unmount a volume. Specify one of the connection options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 List all volumes that have been detected as snapshots or replicas.

vicfg-volume <conn_options> --list

2 Run vicfg-volume --persistent-mount with the VMFS-UUID or label as an argument to mount a volume.

vicfg-volume <conn_options> --persistent-mount <VMFS-UUID|label>

NOTE This command fails if the original copy is online.

What to do next

You can later run vicfg-volume --unmount to unmount the snapshot or replica volume.

vicfg-volume <conn_options> --unmount <VMFS-UUID|label>

The vicfg-volume command supports resignaturing a snapshot volume and mounting and unmounting the volume. You can also make the mounted volume persistent across reboots and query a list of snapshot volumes and original volumes.
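The vicfg-volume operations in this section can be sketched as one Bash wrapper. It covers only the --list, --persistent-mount, and --resignature options named in this chapter; vicfg_volume_op, CONN_OPTIONS, and DRY_RUN are hypothetical names, not part of vCLI.

```bash
#!/usr/bin/env bash
# Sketch only. With DRY_RUN=1 the command is printed instead of run.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: vicfg_volume_op list
#        vicfg_volume_op mount|resignature <VMFS-UUID|label>
vicfg_volume_op() {
  local op="${1:-}" target="${2:-}"
  case "$op" in
    list)
      # List volumes detected as snapshots or replicas.
      run vicfg-volume ${CONN_OPTIONS:-} --list
      ;;
    mount|resignature)
      if [ -z "$target" ]; then
        echo "$op needs a VMFS-UUID or label" >&2
        return 2
      fi
      if [ "$op" = "mount" ]; then
        # Mount the copy persistently; fails if the original copy is online.
        run vicfg-volume ${CONN_OPTIONS:-} --persistent-mount "$target"
      else
        # Assign a new signature; the copy must not be mounted.
        run vicfg-volume ${CONN_OPTIONS:-} --resignature "$target"
      fi
      ;;
    *)
      echo "unknown operation: $op" >&2
      return 2
      ;;
  esac
}
```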

Resignaturing VMFS Copies

You can use datastore resignaturing to retain the data stored on the VMFS datastore copy.

When you resignature a VMFS copy, the ESXi host assigns a new UUID and a new label to the copy and mounts the copy as a datastore distinct from the original. Because ESXi does not allow you to resignature a mounted datastore, unmount the datastore before resignaturing it.

The default format of the new label assigned to the datastore is snap-<snapID>-<oldLabel>, where <snapID> is an integer and <oldLabel> is the label of the original datastore.
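As a sketch, a label in the default format can be split back into its parts with Bash parameter expansion, for example to find which original datastore a resignatured copy came from. The function name is hypothetical. Because <oldLabel> can itself contain hyphens, only the first hyphen after snap- is treated as the separator, and the sketch requires <snapID> to be non-empty rather than checking that it is an integer.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helper).
# Usage: parse_snap_label <label>
# Prints "<snapID> <oldLabel>", or fails if the label is not in the
# snap-<snapID>-<oldLabel> format.
parse_snap_label() {
  local label="${1:-}" rest snap_id old_label
  case "$label" in
    snap-*) ;;
    *) echo "not in snap-<snapID>-<oldLabel> format: $label" >&2; return 1 ;;
  esac
  rest="${label#snap-}"
  snap_id="${rest%%-*}"     # text before the first remaining hyphen
  old_label="${rest#*-}"    # everything after that hyphen
  if [ -z "$snap_id" ] || [ "$rest" = "$snap_id" ] || [ -z "$old_label" ]; then
    echo "missing snapID or original label: $label" >&2
    return 1
  fi
  printf '%s %s\n' "$snap_id" "$old_label"
}
```

For example, parse_snap_label snap-12345-datastore1 prints 12345 datastore1.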

When you perform datastore resignaturing, consider the following points.

- Datastore resignaturing is irreversible.

- The LUN copy that contains the VMFS datastore that you resignature is no longer treated as a LUN copy.

- A spanned datastore can be resignatured only if all its extents are online.

- The resignaturing process is crash and fault tolerant. If the process is interrupted, you can resume it later.

- You can mount the new VMFS datastore without a risk of its UUID conflicting with UUIDs of any other datastore, such as an ancestor or child in a hierarchy of LUN snapshots.


You can resignature a VMFS copy with ESXCLI or with vicfg-volume. See “Resignature a VMFS Copy with ESXCLI,” on page 34 or “Resignature a VMFS Copy with vicfg-volume,” on page 34.

Resignature a VMFS Copy with ESXCLI

The esxcli storage vmfs snapshot commands support resignaturing a snapshot volume.

Specify one of the connection options listed in “Connection Options for vCLI Host Management Commands,” on page 19 in place of <conn_options>.

Procedure

1 List unresolved snapshots or replica volumes.

esxcli <conn_options> storage vmfs snapshot list

2 (Optional) Unmount the copy.

esxcli <conn_options> storage filesystem unmount

3 Run the resignature command.

esxcli <conn_options> storage vmfs snapshot resignature --volume-label=<label>|--volume-uuid=<id>

The command returns to the prompt or signals an error.
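The three steps of the procedure above can be combined into one Bash function, sketched here under the same assumptions as the other examples: resignature_copy, CONN_OPTIONS, and DRY_RUN are hypothetical names. Because resignaturing is irreversible, running the function first with DRY_RUN=1 to review the commands is a sensible precaution.

```bash
#!/usr/bin/env bash
# Sketch only. With DRY_RUN=1 the commands are printed instead of run.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: resignature_copy <label|uuid> <value> [--unmount-first]
resignature_copy() {
  local kind="${1:-}" value="${2:-}" unmount_first="${3:-}" option
  case "$kind" in
    label) option="--volume-label=$value" ;;
    uuid)  option="--volume-uuid=$value" ;;
    *) echo "kind must be 'label' or 'uuid'" >&2; return 2 ;;
  esac
  # Step 1: show unresolved snapshot or replica volumes.
  run esxcli ${CONN_OPTIONS:-} storage vmfs snapshot list || return
  # Step 2 (optional): unmount the copy first.
  if [ "$unmount_first" = "--unmount-first" ]; then
    run esxcli ${CONN_OPTIONS:-} storage filesystem unmount "$option" || return
  fi
  # Step 3: resignature the copy. This cannot be undone.
  run esxcli ${CONN_OPTIONS:-} storage vmfs snapshot resignature "$option"
}
```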

What to do next

After resignaturing, you might have to perform the following operations.

- If the resignatured datastore contains virtual machines, update references to the original VMFS datastore in the virtual machine files, including .vmx, .vmdk, .vmsd, and .vmsn.

- To power on virtual machines, register them with the vCenter Server system.
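Before you update references, it can help to see which files still mention the original datastore. The following Bash sketch only reports matching files and never modifies them. The function name is hypothetical, and searching for a plain string such as /vmfs/volumes/<old label> is an assumption about how the references appear in your files. Virtual disk data files (*-flat.vmdk, *-delta.vmdk, *-sesparse.vmdk) are skipped because they can be very large.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helper); read-only.
# Usage: find_stale_refs <directory> <old-reference>
# Prints the sorted paths of .vmx, .vmdk, .vmsd, and .vmsn files under
# <directory> that contain <old-reference> as a fixed string.
find_stale_refs() {
  local dir="${1:-}" old_ref="${2:-}"
  find "$dir" -type f \
    \( -name '*.vmx' -o -name '*.vmdk' -o -name '*.vmsd' -o -name '*.vmsn' \) \
    ! -name '*-flat.vmdk' ! -name '*-delta.vmdk' ! -name '*-sesparse.vmdk' \
    -exec grep -l -F -- "$old_ref" {} + | sort
}
```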

Resignature a VMFS Copy with vicfg-volume

You can use vicfg-volume to mount, unmount, and resignature VMFS volumes.

Prerequisites

Verify that the VMFS copy you want to resignature is not mounted.

Procedure

Run vicfg-volume with the --resignature option.

vicfg-volume <conn_options> --resignature <VMFS-UUID|label>

The command returns to the prompt or signals an error.

Reclaiming Unused Storage Space

When VMFS datastores reside on thin-provisioned LUNs, you can use ESXCLI commands to reclaim the unused logical blocks of a thin-provisioned LUN formatted with VMFS.

When you run the command, specify either the volume label (--volume-label) or the volume UUID (--volume-uuid), but not both.

In each iteration, the command issues unmap commands for the number of file system blocks specified by the optional --reclaim-unit argument, which defaults to 200. For newly created VMFS-5 file systems, the file system block size is always 1 MB. For VMFS-3 file systems or VMFS-5 file systems that were upgraded from VMFS-3, the file system block size can be 1, 2, 4, or 8 MB.
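The space covered by one iteration is the reclaim unit multiplied by the file system block size. The following Bash sketch, with a hypothetical function name, makes that arithmetic explicit using the defaults and block sizes given above.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helper).
# Usage: unmap_mb_per_iteration [reclaim-unit] [block-size-MB]
# Defaults: 200 blocks and 1 MB blocks (newly created VMFS-5).
unmap_mb_per_iteration() {
  local reclaim_unit="${1:-200}" block_size_mb="${2:-1}"
  case "$reclaim_unit" in
    ''|*[!0-9]*) echo "reclaim unit must be a positive integer" >&2; return 2 ;;
  esac
  if (( 10#$reclaim_unit == 0 )); then
    echo "reclaim unit must be a positive integer" >&2
    return 2
  fi
  case "$block_size_mb" in
    1|2|4|8) ;;
    *) echo "block size must be 1, 2, 4, or 8 MB" >&2; return 2 ;;
  esac
  # Space covered by one iteration = number of blocks x block size (in MB).
  echo $(( 10#$reclaim_unit * block_size_mb ))
}
```

With the defaults, each iteration covers 200 x 1 MB = 200 MB. On an upgraded volume with 8 MB blocks, --reclaim-unit 100 covers 100 x 8 MB = 800 MB per iteration.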


The following examples illustrate how to use the command.

# esxcli storage vmfs unmap --volume-label datastore1 --reclaim-unit 100

# esxcli storage vmfs unmap -l datastore1 -n 100

# esxcli storage vmfs unmap --volume-uuid 515615fb-1e65c01d-b40f-001d096dbf97 --reclaim-unit 500

# esxcli storage vmfs unmap -u 515615fb-1e65c01d-b40f-001d096dbf97 -n 500

# esxcli storage vmfs unmap -l datastore1

# esxcli storage vmfs unmap -u 515615fb-1e65c01d-b40f-001d096dbf97
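The rule that the unmap command takes a volume label or a volume UUID, but not both, can be enforced before the command runs. The following Bash wrapper is a sketch: reclaim_space, CONN_OPTIONS, and DRY_RUN are hypothetical names. It passes the --volume-label, --volume-uuid, and --reclaim-unit options shown in the examples above and omits --reclaim-unit when you do not set it, so the default of 200 applies.

```bash
#!/usr/bin/env bash
# Sketch only. With DRY_RUN=1 the command is printed instead of run.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: reclaim_space (-l <label> | -u <uuid>) [-n <reclaim-unit>]
reclaim_space() {
  local label="" uuid="" unit="" opt OPTIND=1
  while getopts "l:u:n:" opt; do
    case "$opt" in
      l) label="$OPTARG" ;;
      u) uuid="$OPTARG" ;;
      n) unit="$OPTARG" ;;
      *) return 2 ;;
    esac
  done
  # The command accepts the volume label or the volume UUID, but not both.
  if [ -n "$label" ] && [ -n "$uuid" ]; then
    echo "specify either -l or -u, not both" >&2
    return 2
  fi
  if [ -z "$label" ] && [ -z "$uuid" ]; then
    echo "specify -l <label> or -u <uuid>" >&2
    return 2
  fi
  set -- storage vmfs unmap
  if [ -n "$label" ]; then
    set -- "$@" --volume-label "$label"
  else
    set -- "$@" --volume-uuid "$uuid"
  fi
  # Leave out --reclaim-unit to use the default of 200 blocks.
  if [ -n "$unit" ]; then
    set -- "$@" --reclaim-unit "$unit"
  fi
  run esxcli ${CONN_OPTIONS:-} "$@"
}
```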

Using vifs to View and Manipulate Files on Remote ESXi Hosts

You can use the vifs utility for datastore file management.

CAUTION If you manipulate files directly, your vSphere setup might end up in an inconsistent state. Use the vSphere Web Client or one of the other vCLI commands to manipulate virtual machine configuration files and virtual disks.

The vifs command performs common operations such as copy, remove, get, and put on ESXi files and directories. The command is supported against ESXi hosts but not against vCenter Server systems.

The vifs command resembles DOS and UNIX/Linux file system management utilities in some ways, but there are many differences. For example, vifs does not support wildcard characters or current directories and, as a result, does not support relative pathnames. Use vifs only as documented.
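Because vifs does not support wildcards, current directories, or relative pathnames, a script that calls vifs can reject such paths before passing them on. The following Bash check is a sketch with a hypothetical function name. Accepting absolute paths that start with / and datastore paths in the "[datastore] path" form is an assumption about vifs location syntax; check the location syntax for each file group before relying on it.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helper).
# Usage: vifs_path_ok <path>   (returns 0 if the path passes the checks)
vifs_path_ok() {
  local path="${1:-}"
  # Wildcard characters are not supported.
  case "$path" in
    *'*'*|*'?'*)
      echo "wildcards are not supported: $path" >&2
      return 1
      ;;
  esac
  # Current (.) and parent (..) directory components are not supported.
  case "$path" in
    */.|*/./*|*/..|*/../*)
      echo "current or parent directory components are not supported: $path" >&2
      return 1
      ;;
  esac
  # Only absolute or "[datastore] path" locations are accepted (assumption).
  case "$path" in
    /*|'['*']'*)
      return 0
      ;;
    *)
      echo "relative paths are not supported: $path" >&2
      return 1
      ;;
  esac
}
```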

Instead of using the vifs command, you can browse datastore contents and host files by using a Web browser. Connect to one of the following locations.

http://ESX_host_IP_Address/host

http://ESX_host_IP_Address/folder

You can view data center and datastore directories from this root URL. The following examples demonstrate the syntax that you can use.

http://<ESXi_addr>/folder?dcPath=ha-datacenter

http://<ESXi_host_name>/folder?dcPath=ha-datacenter

The ESXi host prompts for a user name and password.
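The browse URL in the examples above can also be built and fetched from a script. The following Bash sketch uses hypothetical function names; the host and user names are placeholders. Because curl is given only a user name, it prompts for the password, much as the browser does.

```bash
#!/usr/bin/env bash
# Sketch only (hypothetical helpers).

# Usage: browse_url <ESXi address or host name> [datacenter path]
# Builds http://<host>/folder?dcPath=<datacenter>, defaulting to ha-datacenter.
browse_url() {
  local host="${1:-}" dc="${2:-ha-datacenter}"
  printf 'http://%s/folder?dcPath=%s\n' "$host" "$dc"
}

# Usage: fetch_listing <ESXi address or host name> <user name>
# curl prompts for the password because only the user name is given.
# If the host redirects HTTP to HTTPS, use an https:// URL instead.
fetch_listing() {
  curl --user "$2" "$(browse_url "$1")"
}
```

For example, browse_url esxi01.example.com prints http://esxi01.example.com/folder?dcPath=ha-datacenter.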

The vifs command supports different operations for each of the following groups of files and directories, and you specify locations in each group with a different syntax. The behavior differs between vSphere 4.x and vSphere 5.0.


vSphere 4.x vSphere 5.0

Host Host configuration files. You must

specify the file’s unique name

identifier.

Specify host locations by using

the /host/<path> syntax.

Host configuration files. You must

specify the file’s unique name

identifier.

Specify host locations by using

the /host/<path> syntax.

You cannot list subdirectories

of /host.