Programming Hive Guide

User Manual:

Open the PDF directly: View PDF ![]() .

.

Page Count: 350 [warning: Documents this large are best viewed by clicking the View PDF Link!]

- Table of Contents

- Preface

- Chapter 1. Introduction

- Chapter 2. Getting Started

- Chapter 3. Data Types and File Formats

- Chapter 4. HiveQL: Data Definition

- Chapter 5. HiveQL: Data Manipulation

- Chapter 6. HiveQL: Queries

- SELECT … FROM Clauses

- WHERE Clauses

- GROUP BY Clauses

- JOIN Statements

- ORDER BY and SORT BY

- DISTRIBUTE BY with SORT BY

- CLUSTER BY

- Casting

- Queries that Sample Data

- UNION ALL

- Chapter 7. HiveQL: Views

- Chapter 8. HiveQL: Indexes

- Chapter 9. Schema Design

- Chapter 10. Tuning

- Chapter 11. Other File Formats and Compression

- Chapter 12. Developing

- Chapter 13. Functions

- Discovering and Describing Functions

- Calling Functions

- Standard Functions

- Aggregate Functions

- Table Generating Functions

- A UDF for Finding a Zodiac Sign from a Day

- UDF Versus GenericUDF

- Permanent Functions

- User-Defined Aggregate Functions

- User-Defined Table Generating Functions

- Accessing the Distributed Cache from a UDF

- Annotations for Use with Functions

- Macros

- Chapter 14. Streaming

- Chapter 15. Customizing Hive File and Record Formats

- Chapter 16. Hive Thrift Service

- Chapter 17. Storage Handlers and NoSQL

- Chapter 18. Security

- Chapter 19. Locking

- Chapter 20. Hive Integration with Oozie

- Chapter 21. Hive and Amazon Web Services (AWS)

- Why Elastic MapReduce?

- Instances

- Before You Start

- Managing Your EMR Hive Cluster

- Thrift Server on EMR Hive

- Instance Groups on EMR

- Configuring Your EMR Cluster

- Persistence and the Metastore on EMR

- HDFS and S3 on EMR Cluster

- Putting Resources, Configs, and Bootstrap Scripts on S3

- Logs on S3

- Spot Instances

- Security Groups

- EMR Versus EC2 and Apache Hive

- Wrapping Up

- Chapter 22. HCatalog

- Chapter 23. Case Studies

- m6d.com (Media6Degrees)

- Outbrain

- NASA’s Jet Propulsion Laboratory

- Photobucket

- SimpleReach

- Experiences and Needs from the Customer Trenches

- Glossary

- Appendix. References

- Index

Programming Hive

Edward Capriolo, Dean Wampler, and Jason Rutherglen

Beijing

•

Cambridge

•

Farnham

•

Köln

•

Sebastopol

•

Tokyo

Downloa d f r o m W o w ! e B o o k < w w w.woweb o o k . c o m >

Programming Hive

by Edward Capriolo, Dean Wampler, and Jason Rutherglen

Copyright © 2012 Edward Capriolo, Aspect Research Associates, and Jason Rutherglen. All rights re-

served.

Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions

are also available for most titles (http://my.safaribooksonline.com). For more information, contact our

corporate/institutional sales department: 800-998-9938 or corporate@oreilly.com.

Editors: Mike Loukides and Courtney Nash

Production Editors: Iris Febres and Rachel Steely

Proofreaders: Stacie Arellano and Kiel Van Horn

Indexer: Bob Pfahler

Cover Designer: Karen Montgomery

Interior Designer: David Futato

Illustrator: Rebecca Demarest

October 2012: First Edition.

Revision History for the First Edition:

2012-09-17 First release

See http://oreilly.com/catalog/errata.csp?isbn=9781449319335 for release details.

Nutshell Handbook, the Nutshell Handbook logo, and the O’Reilly logo are registered trademarks of

O’Reilly Media, Inc. Programming Hive, the image of a hornet’s hive, and related trade dress are trade-

marks of O’Reilly Media, Inc.

Many of the designations used by manufacturers and sellers to distinguish their products are claimed as

trademarks. Where those designations appear in this book, and O’Reilly Media, Inc., was aware of a

trademark claim, the designations have been printed in caps or initial caps.

While every precaution has been taken in the preparation of this book, the publisher and authors assume

no responsibility for errors or omissions, or for damages resulting from the use of the information con-

tained herein.

ISBN: 978-1-449-31933-5

[LSI]

1347905436

Table of Contents

Preface .................................................................... xiii

1. Introduction ........................................................... 1

An Overview of Hadoop and MapReduce 3

Hive in the Hadoop Ecosystem 6

Pig 8

HBase 8

Cascading, Crunch, and Others 9

Java Versus Hive: The Word Count Algorithm 10

What’s Next 13

2. Getting Started ........................................................ 15

Installing a Preconfigured Virtual Machine 15

Detailed Installation 16

Installing Java 16

Installing Hadoop 18

Local Mode, Pseudodistributed Mode, and Distributed Mode 19

Testing Hadoop 20

Installing Hive 21

What Is Inside Hive? 22

Starting Hive 23

Configuring Your Hadoop Environment 24

Local Mode Configuration 24

Distributed and Pseudodistributed Mode Configuration 26

Metastore Using JDBC 28

The Hive Command 29

Command Options 29

The Command-Line Interface 30

CLI Options 31

Variables and Properties 31

Hive “One Shot” Commands 34

iii

Executing Hive Queries from Files 35

The .hiverc File 36

More on Using the Hive CLI 36

Command History 37

Shell Execution 37

Hadoop dfs Commands from Inside Hive 38

Comments in Hive Scripts 38

Query Column Headers 38

3. Data Types and File Formats ............................................. 41

Primitive Data Types 41

Collection Data Types 43

Text File Encoding of Data Values 45

Schema on Read 48

4. HiveQL: Data Definition ................................................. 49

Databases in Hive 49

Alter Database 52

Creating Tables 53

Managed Tables 56

External Tables 56

Partitioned, Managed Tables 58

External Partitioned Tables 61

Customizing Table Storage Formats 63

Dropping Tables 66

Alter Table 66

Renaming a Table 66

Adding, Modifying, and Dropping a Table Partition 66

Changing Columns 67

Adding Columns 68

Deleting or Replacing Columns 68

Alter Table Properties 68

Alter Storage Properties 68

Miscellaneous Alter Table Statements 69

5. HiveQL: Data Manipulation .............................................. 71

Loading Data into Managed Tables 71

Inserting Data into Tables from Queries 73

Dynamic Partition Inserts 74

Creating Tables and Loading Them in One Query 75

Exporting Data 76

iv | Table of Contents

6. HiveQL: Queries ........................................................ 79

SELECT … FROM Clauses 79

Specify Columns with Regular Expressions 81

Computing with Column Values 81

Arithmetic Operators 82

Using Functions 83

LIMIT Clause 91

Column Aliases 91

Nested SELECT Statements 91

CASE … WHEN … THEN Statements 91

When Hive Can Avoid MapReduce 92

WHERE Clauses 92

Predicate Operators 93

Gotchas with Floating-Point Comparisons 94

LIKE and RLIKE 96

GROUP BY Clauses 97

HAVING Clauses 97

JOIN Statements 98

Inner JOIN 98

Join Optimizations 100

LEFT OUTER JOIN 101

OUTER JOIN Gotcha 101

RIGHT OUTER JOIN 103

FULL OUTER JOIN 104

LEFT SEMI-JOIN 104

Cartesian Product JOINs 105

Map-side Joins 105

ORDER BY and SORT BY 107

DISTRIBUTE BY with SORT BY 107

CLUSTER BY 108

Casting 109

Casting BINARY Values 109

Queries that Sample Data 110

Block Sampling 111

Input Pruning for Bucket Tables 111

UNION ALL 112

7. HiveQL: Views ........................................................ 113

Views to Reduce Query Complexity 113

Views that Restrict Data Based on Conditions 114

Views and Map Type for Dynamic Tables 114

View Odds and Ends 115

Table of Contents | v

8. HiveQL: Indexes ...................................................... 117

Creating an Index 117

Bitmap Indexes 118

Rebuilding the Index 118

Showing an Index 119

Dropping an Index 119

Implementing a Custom Index Handler 119

9. Schema Design ....................................................... 121

Table-by-Day 121

Over Partitioning 122

Unique Keys and Normalization 123

Making Multiple Passes over the Same Data 124

The Case for Partitioning Every Table 124

Bucketing Table Data Storage 125

Adding Columns to a Table 127

Using Columnar Tables 128

Repeated Data 128

Many Columns 128

(Almost) Always Use Compression! 128

10. Tuning .............................................................. 131

Using EXPLAIN 131

EXPLAIN EXTENDED 134

Limit Tuning 134

Optimized Joins 135

Local Mode 135

Parallel Execution 136

Strict Mode 137

Tuning the Number of Mappers and Reducers 138

JVM Reuse 139

Indexes 140

Dynamic Partition Tuning 140

Speculative Execution 141

Single MapReduce MultiGROUP BY 142

Virtual Columns 142

11. Other File Formats and Compression . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 145

Determining Installed Codecs 145

Choosing a Compression Codec 146

Enabling Intermediate Compression 147

Final Output Compression 148

Sequence Files 148

vi | Table of Contents

Compression in Action 149

Archive Partition 152

Compression: Wrapping Up 154

12. Developing .......................................................... 155

Changing Log4J Properties 155

Connecting a Java Debugger to Hive 156

Building Hive from Source 156

Running Hive Test Cases 156

Execution Hooks 158

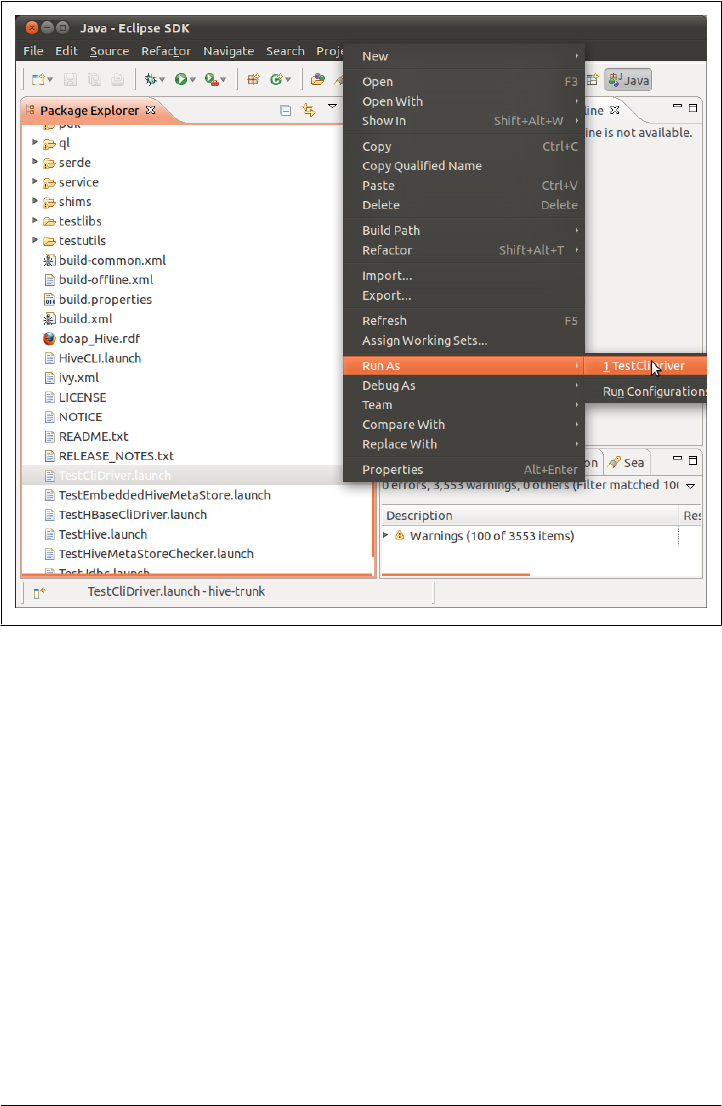

Setting Up Hive and Eclipse 158

Hive in a Maven Project 158

Unit Testing in Hive with hive_test 159

The New Plugin Developer Kit 161

13. Functions ............................................................ 163

Discovering and Describing Functions 163

Calling Functions 164

Standard Functions 164

Aggregate Functions 164

Table Generating Functions 165

A UDF for Finding a Zodiac Sign from a Day 166

UDF Versus GenericUDF 169

Permanent Functions 171

User-Defined Aggregate Functions 172

Creating a COLLECT UDAF to Emulate GROUP_CONCAT 172

User-Defined Table Generating Functions 177

UDTFs that Produce Multiple Rows 177

UDTFs that Produce a Single Row with Multiple Columns 179

UDTFs that Simulate Complex Types 179

Accessing the Distributed Cache from a UDF 182

Annotations for Use with Functions 184

Deterministic 184

Stateful 184

DistinctLike 185

Macros 185

14. Streaming ........................................................... 187

Identity Transformation 188

Changing Types 188

Projecting Transformation 188

Manipulative Transformations 189

Using the Distributed Cache 189

Table of Contents | vii

Producing Multiple Rows from a Single Row 190

Calculating Aggregates with Streaming 191

CLUSTER BY, DISTRIBUTE BY, SORT BY 192

GenericMR Tools for Streaming to Java 194

Calculating Cogroups 196

15. Customizing Hive File and Record Formats . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 199

File Versus Record Formats 199

Demystifying CREATE TABLE Statements 199

File Formats 201

SequenceFile 201

RCFile 202

Example of a Custom Input Format: DualInputFormat 203

Record Formats: SerDes 205

CSV and TSV SerDes 206

ObjectInspector 206

Think Big Hive Reflection ObjectInspector 206

XML UDF 207

XPath-Related Functions 207

JSON SerDe 208

Avro Hive SerDe 209

Defining Avro Schema Using Table Properties 209

Defining a Schema from a URI 210

Evolving Schema 210

Binary Output 211

16. Hive Thrift Service .................................................... 213

Starting the Thrift Server 213

Setting Up Groovy to Connect to HiveService 214

Connecting to HiveServer 214

Getting Cluster Status 215

Result Set Schema 215

Fetching Results 215

Retrieving Query Plan 216

Metastore Methods 216

Example Table Checker 216

Administrating HiveServer 217

Productionizing HiveService 217

Cleanup 218

Hive ThriftMetastore 219

ThriftMetastore Configuration 219

Client Configuration 219

viii | Table of Contents

17. Storage Handlers and NoSQL ............................................ 221

Storage Handler Background 221

HiveStorageHandler 222

HBase 222

Cassandra 224

Static Column Mapping 224

Transposed Column Mapping for Dynamic Columns 224

Cassandra SerDe Properties 224

DynamoDB 225

18. Security ............................................................. 227

Integration with Hadoop Security 228

Authentication with Hive 228

Authorization in Hive 229

Users, Groups, and Roles 230

Privileges to Grant and Revoke 231

Partition-Level Privileges 233

Automatic Grants 233

19. Locking . ............................................................ 235

Locking Support in Hive with Zookeeper 235

Explicit, Exclusive Locks 238

20. Hive Integration with Oozie . ........................................... 239

Oozie Actions 239

Hive Thrift Service Action 240

A Two-Query Workflow 240

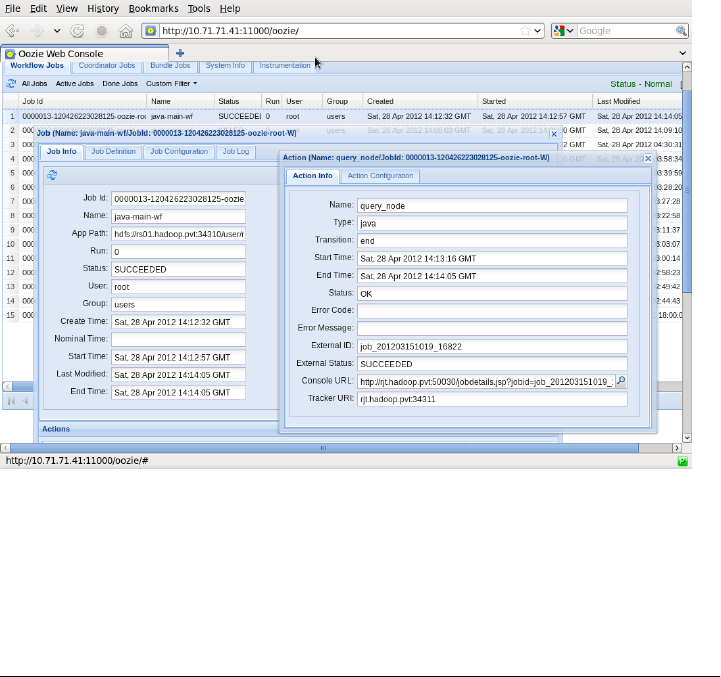

Oozie Web Console 242

Variables in Workflows 242

Capturing Output 243

Capturing Output to Variables 243

21. Hive and Amazon Web Services (AWS) . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 245

Why Elastic MapReduce? 245

Instances 245

Before You Start 246

Managing Your EMR Hive Cluster 246

Thrift Server on EMR Hive 247

Instance Groups on EMR 247

Configuring Your EMR Cluster 248

Deploying hive-site.xml 248

Deploying a .hiverc Script 249

Table of Contents | ix

Downloa d f r o m W o w ! e B o o k < w w w.woweb o o k . c o m >

Setting Up a Memory-Intensive Configuration 249

Persistence and the Metastore on EMR 250

HDFS and S3 on EMR Cluster 251

Putting Resources, Configs, and Bootstrap Scripts on S3 252

Logs on S3 252

Spot Instances 252

Security Groups 253

EMR Versus EC2 and Apache Hive 254

Wrapping Up 254

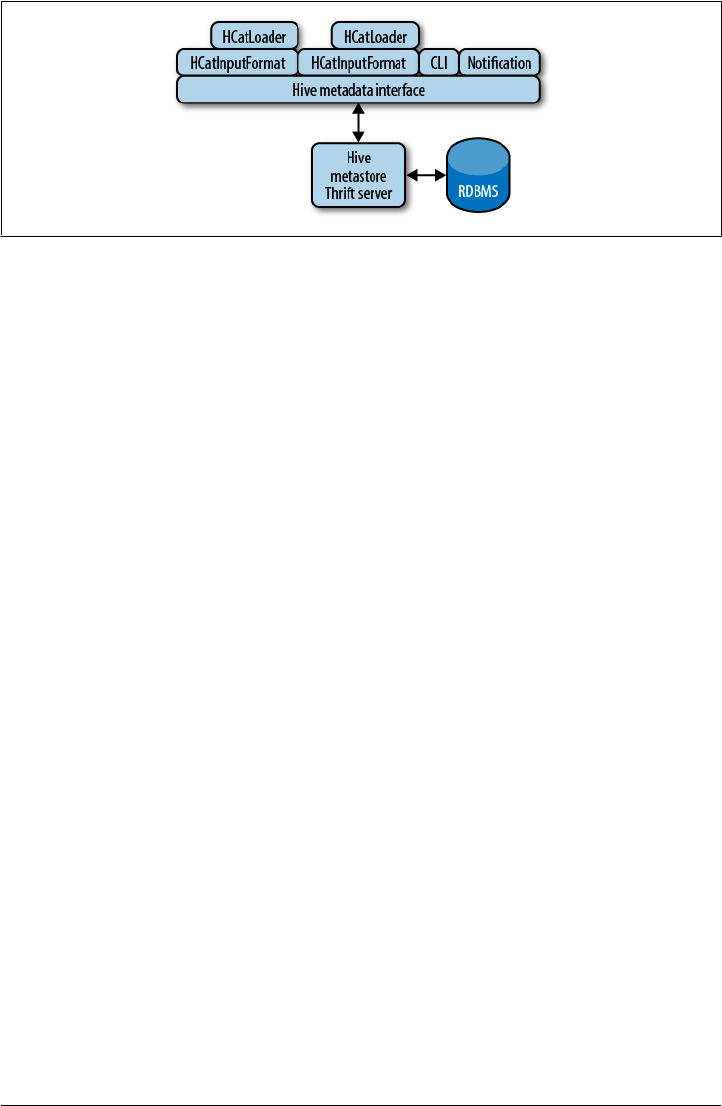

22. HCatalog ............................................................ 255

Introduction 255

MapReduce 256

Reading Data 256

Writing Data 258

Command Line 261

Security Model 261

Architecture 262

23. Case Studies ......................................................... 265

m6d.com (Media6Degrees) 265

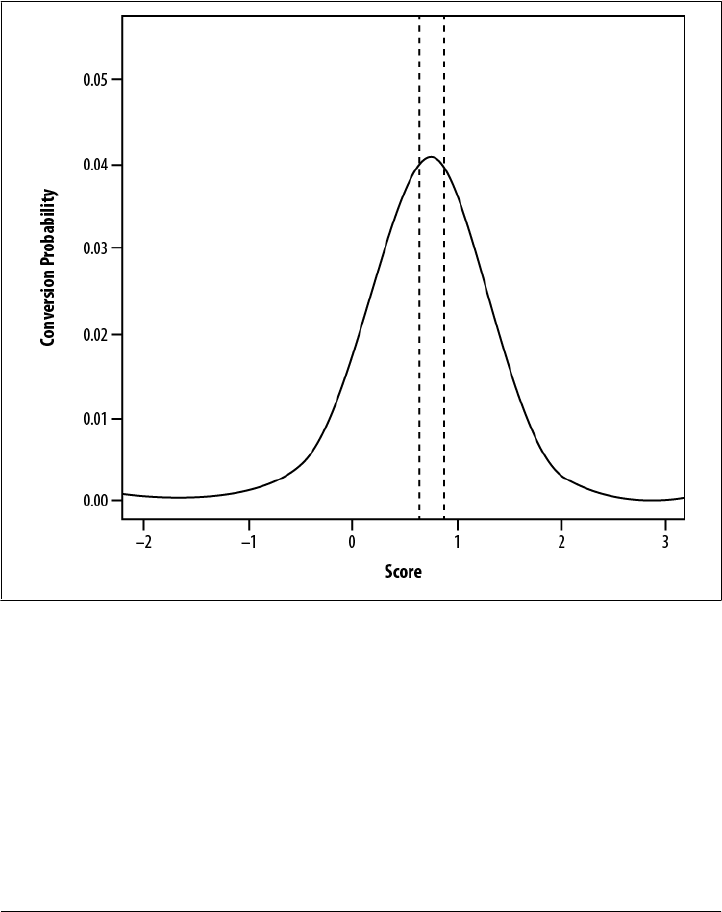

Data Science at M6D Using Hive and R 265

M6D UDF Pseudorank 270

M6D Managing Hive Data Across Multiple MapReduce Clusters 274

Outbrain 278

In-Site Referrer Identification 278

Counting Uniques 280

Sessionization 282

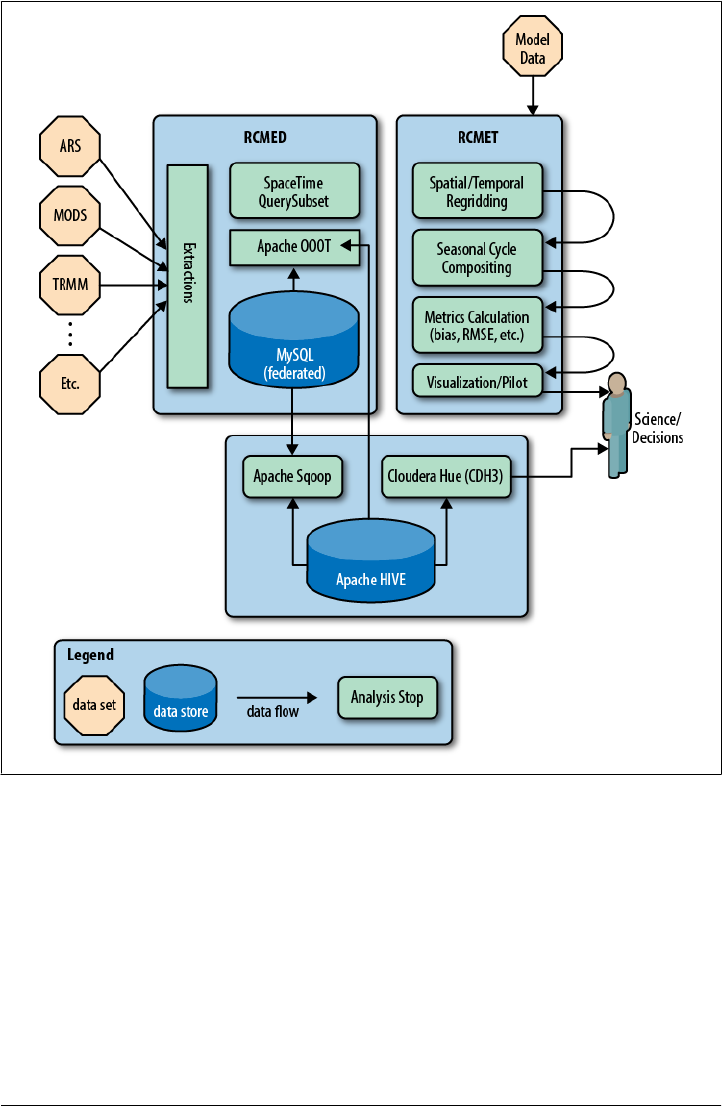

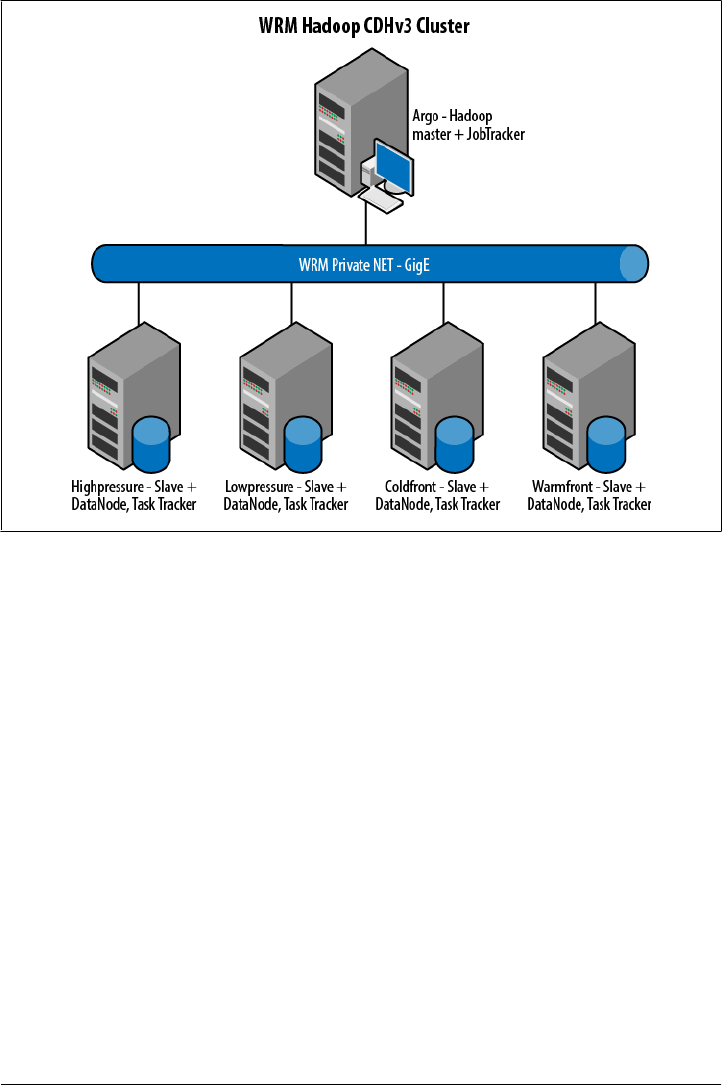

NASA’s Jet Propulsion Laboratory 287

The Regional Climate Model Evaluation System 287

Our Experience: Why Hive? 290

Some Challenges and How We Overcame Them 291

Photobucket 292

Big Data at Photobucket 292

What Hardware Do We Use for Hive? 293

What’s in Hive? 293

Who Does It Support? 293

SimpleReach 294

Experiences and Needs from the Customer Trenches 296

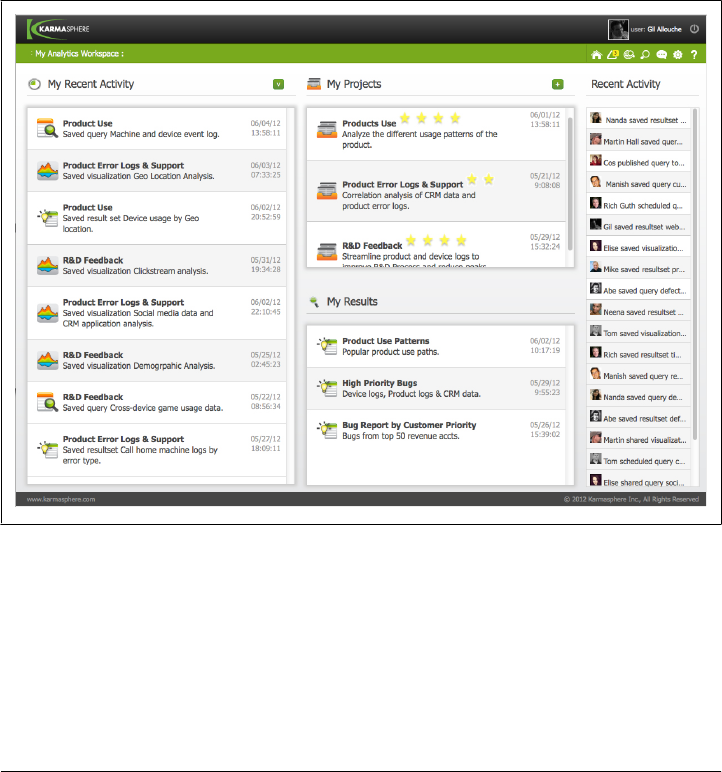

A Karmasphere Perspective 296

Introduction 296

Use Case Examples from the Customer Trenches 297

x | Table of Contents

Preface

Programming Hive introduces Hive, an essential tool in the Hadoop ecosystem that

provides an SQL (Structured Query Language) dialect for querying data stored in the

Hadoop Distributed Filesystem (HDFS), other filesystems that integrate with Hadoop,

such as MapR-FS and Amazon’s S3 and databases like HBase (the Hadoop database)

and Cassandra.

Most data warehouse applications are implemented using relational databases that use

SQL as the query language. Hive lowers the barrier for moving these applications to

Hadoop. People who know SQL can learn Hive easily. Without Hive, these users must

learn new languages and tools to become productive again. Similarly, Hive makes it

easier for developers to port SQL-based applications to Hadoop, compared to other

tool options. Without Hive, developers would face a daunting challenge when porting

their SQL applications to Hadoop.

Still, there are aspects of Hive that are different from other SQL-based environments.

Documentation for Hive users and Hadoop developers has been sparse. We decided

to write this book to fill that gap. We provide a pragmatic, comprehensive introduction

to Hive that is suitable for SQL experts, such as database designers and business ana-

lysts. We also cover the in-depth technical details that Hadoop developers require for

tuning and customizing Hive.

You can learn more at the book’s catalog page (http://oreil.ly/Programming_Hive).

Conventions Used in This Book

The following typographical conventions are used in this book:

Italic

Indicates new terms, URLs, email addresses, filenames, and file extensions. Defi-

nitions of most terms can be found in the Glossary.

Constant width

Used for program listings, as well as within paragraphs to refer to program elements

such as variable or function names, databases, data types, environment variables,

statements, and keywords.

xiii

Constant width bold

Shows commands or other text that should be typed literally by the user.

Constant width italic

Shows text that should be replaced with user-supplied values or by values deter-

mined by context.

This icon signifies a tip, suggestion, or general note.

This icon indicates a warning or caution.

Using Code Examples

This book is here to help you get your job done. In general, you may use the code in

this book in your programs and documentation. You do not need to contact us for

permission unless you’re reproducing a significant portion of the code. For example,

writing a program that uses several chunks of code from this book does not require

permission. Selling or distributing a CD-ROM of examples from O’Reilly books does

require permission. Answering a question by citing this book and quoting example

code does not require permission. Incorporating a significant amount of example code

from this book into your product’s documentation does require permission.

We appreciate, but do not require, attribution. An attribution usually includes the title,

author, publisher, and ISBN. For example: “Programming Hive by Edward Capriolo,

Dean Wampler, and Jason Rutherglen (O’Reilly). Copyright 2012 Edward Capriolo,

Aspect Research Associates, and Jason Rutherglen, 978-1-449-31933-5.”

If you feel your use of code examples falls outside fair use or the permission given above,

feel free to contact us at permissions@oreilly.com.

Safari® Books Online

Safari Books Online (www.safaribooksonline.com) is an on-demand digital

library that delivers expert content in both book and video form from the

world’s leading authors in technology and business.

Technology professionals, software developers, web designers, and business and cre-

ative professionals use Safari Books Online as their primary resource for research,

problem solving, learning, and certification training.

xiv | Preface

Safari Books Online offers a range of product mixes and pricing programs for organi-

zations, government agencies, and individuals. Subscribers have access to thousands

of books, training videos, and prepublication manuscripts in one fully searchable da-

tabase from publishers like O’Reilly Media, Prentice Hall Professional, Addison-Wesley

Professional, Microsoft Press, Sams, Que, Peachpit Press, Focal Press, Cisco Press, John

Wiley & Sons, Syngress, Morgan Kaufmann, IBM Redbooks, Packt, Adobe Press, FT

Press, Apress, Manning, New Riders, McGraw-Hill, Jones & Bartlett, Course Tech-

nology, and dozens more. For more information about Safari Books Online, please visit

us online.

How to Contact Us

Please address comments and questions concerning this book to the publisher:

O’Reilly Media, Inc.

1005 Gravenstein Highway North

Sebastopol, CA 95472

800-998-9938 (in the United States or Canada)

707-829-0515 (international or local)

707-829-0104 (fax)

We have a web page for this book, where we list errata, examples, and any additional

information. You can access this page at http://oreil.ly/Programming_Hive.

To comment or ask technical questions about this book, send email to

bookquestions@oreilly.com.

For more information about our books, courses, conferences, and news, see our website

at http://www.oreilly.com.

Find us on Facebook: http://facebook.com/oreilly

Follow us on Twitter: http://twitter.com/oreillymedia

Watch us on YouTube: http://www.youtube.com/oreillymedia

What Brought Us to Hive?

The three of us arrived here from different directions.

Edward Capriolo

When I first became involved with Hadoop, I saw the distributed filesystem and Map-

Reduce as a great way to tackle computer-intensive problems. However, programming

in the MapReduce model was a paradigm shift for me. Hive offered a fast and simple

way to take advantage of MapReduce in an SQL-like world I was comfortable in. This

approach also made it easy to prototype proof-of-concept applications and also to

Preface | xv

champion Hadoop as a solution internally. Even though I am now very familiar with

Hadoop internals, Hive is still my primary method of working with Hadoop.

It is an honor to write a Hive book. Being a Hive Committer and a member of the

Apache Software Foundation is my most valued accolade.

Dean Wampler

As a “big data” consultant at Think Big Analytics, I work with experienced “data people”

who eat and breathe SQL. For them, Hive is a necessary and sufficient condition for

Hadoop to be a viable tool to leverage their investment in SQL and open up new op-

portunities for data analytics.

Hive has lacked good documentation. I suggested to my previous editor at O’Reilly,

Mike Loukides, that a Hive book was needed by the community. So, here we are…

Jason Rutherglen

I work at Think Big Analytics as a software architect. My career has involved an array

of technologies including search, Hadoop, mobile, cryptography, and natural language

processing. Hive is the ultimate way to build a data warehouse using open technologies

on any amount of data. I use Hive regularly on a variety of projects.

Acknowledgments

Everyone involved with Hive. This includes committers, contributors, as well as end

users.

Mark Grover wrote the chapter on Hive and Amazon Web Services. He is a contributor

to the Apache Hive project and is active helping others on the Hive IRC channel.

David Ha and Rumit Patel, at M6D, contributed the case study and code on the Rank

function. The ability to do Rank in Hive is a significant feature.

Ori Stitelman, at M6D, contributed the case study, Data Science using Hive and R,

which demonstrates how Hive can be used to make first pass on large data sets and

produce results to be used by a second R process.

David Funk contributed three use cases on in-site referrer identification, sessionization,

and counting unique visitors. David’s techniques show how rewriting and optimizing

Hive queries can make large scale map reduce data analysis more efficient.

Ian Robertson read the entire first draft of the book and provided very helpful feedback

on it. We’re grateful to him for providing that feedback on short notice and a tight

schedule.

xvi | Preface

John Sichi provided technical review for the book. John was also instrumental in driving

through some of the newer features in Hive like StorageHandlers and Indexing Support.

He has been actively growing and supporting the Hive community.

Alan Gates, author of Programming Pig, contributed the HCatalog chapter. Nanda

Vijaydev contributed the chapter on how Karmasphere offers productized enhance-

ments for Hive. Eric Lubow contributed the SimpleReach case study. Chris A. Matt-

mann, Paul Zimdars, Cameron Goodale, Andrew F. Hart, Jinwon Kim, Duane Waliser,

and Peter Lean contributed the NASA JPL case study.

Preface | xvii

CHAPTER 1

Introduction

From the early days of the Internet’s mainstream breakout, the major search engines

and ecommerce companies wrestled with ever-growing quantities of data. More re-

cently, social networking sites experienced the same problem. Today, many organiza-

tions realize that the data they gather is a valuable resource for understanding their

customers, the performance of their business in the marketplace, and the effectiveness

of their infrastructure.

The Hadoop ecosystem emerged as a cost-effective way of working with such large data

sets. It imposes a particular programming model, called MapReduce, for breaking up

computation tasks into units that can be distributed around a cluster of commodity,

server class hardware, thereby providing cost-effective, horizontal scalability. Under-

neath this computation model is a distributed file system called the Hadoop Distributed

Filesystem (HDFS). Although the filesystem is “pluggable,” there are now several com-

mercial and open source alternatives.

However, a challenge remains; how do you move an existing data infrastructure to

Hadoop, when that infrastructure is based on traditional relational databases and the

Structured Query Language (SQL)? What about the large base of SQL users, both expert

database designers and administrators, as well as casual users who use SQL to extract

information from their data warehouses?

This is where Hive comes in. Hive provides an SQL dialect, called Hive Query Lan-

guage (abbreviated HiveQL or just HQL) for querying data stored in a Hadoop cluster.

SQL knowledge is widespread for a reason; it’s an effective, reasonably intuitive model

for organizing and using data. Mapping these familiar data operations to the low-level

MapReduce Java API can be daunting, even for experienced Java developers. Hive does

this dirty work for you, so you can focus on the query itself. Hive translates most queries

to MapReduce jobs, thereby exploiting the scalability of Hadoop, while presenting a

familiar SQL abstraction. If you don’t believe us, see “Java Versus Hive: The Word

Count Algorithm” on page 10 later in this chapter.

1

Hive is most suited for data warehouse applications, where relatively static data is an-

alyzed, fast response times are not required, and when the data is not changing rapidly.

Hive is not a full database. The design constraints and limitations of Hadoop and HDFS

impose limits on what Hive can do. The biggest limitation is that Hive does not provide

record-level update, insert, nor delete. You can generate new tables from queries or

output query results to files. Also, because Hadoop is a batch-oriented system, Hive

queries have higher latency, due to the start-up overhead for MapReduce jobs. Queries

that would finish in seconds for a traditional database take longer for Hive, even for

relatively small data sets.1 Finally, Hive does not provide transactions.

So, Hive doesn’t provide crucial features required for OLTP, Online Transaction Pro-

cessing. It’s closer to being an OLAP tool, Online Analytic Processing, but as we’ll see,

Hive isn’t ideal for satisfying the “online” part of OLAP, at least today, since there can

be significant latency between issuing a query and receiving a reply, both due to the

overhead of Hadoop and due to the size of the data sets Hadoop was designed to serve.

If you need OLTP features for large-scale data, you should consider using a NoSQL

database. Examples include HBase, a NoSQL database integrated with Hadoop,2 Cas-

sandra,3 and DynamoDB, if you are using Amazon’s Elastic MapReduce (EMR) or

Elastic Compute Cloud (EC2).4 You can even integrate Hive with these databases

(among others), as we’ll discuss in Chapter 17.

So, Hive is best suited for data warehouse applications, where a large data set is main-

tained and mined for insights, reports, etc.

Because most data warehouse applications are implemented using SQL-based rela-

tional databases, Hive lowers the barrier for moving these applications to Hadoop.

People who know SQL can learn Hive easily. Without Hive, these users would need to

learn new languages and tools to be productive again.

Similarly, Hive makes it easier for developers to port SQL-based applications to

Hadoop, compared with other Hadoop languages and tools.

However, like most SQL dialects, HiveQL does not conform to the ANSI SQL standard

and it differs in various ways from the familiar SQL dialects provided by Oracle,

MySQL, and SQL Server. (However, it is closest to MySQL’s dialect of SQL.)

1. However, for the big data sets Hive is designed for, this start-up overhead is trivial compared to the actual

processing time.

2. See the Apache HBase website, http://hbase.apache.org, and HBase: The Definitive Guide by Lars George

(O’Reilly).

3. See the Cassandra website, http://cassandra.apache.org/, and High Performance Cassandra Cookbook by

Edward Capriolo (Packt).

4. See the DynamoDB website, http://aws.amazon.com/dynamodb/.

2 | Chapter 1: Introduction

So, this book has a dual purpose. First, it provides a comprehensive, example-driven

introduction to HiveQL for all users, from developers, database administrators and

architects, to less technical users, such as business analysts.

Second, the book provides the in-depth technical details required by developers and

Hadoop administrators to tune Hive query performance and to customize Hive with

user-defined functions, custom data formats, etc.

We wrote this book out of frustration that Hive lacked good documentation, especially

for new users who aren’t developers and aren’t accustomed to browsing project artifacts

like bug and feature databases, source code, etc., to get the information they need. The

Hive Wiki5 is an invaluable source of information, but its explanations are sometimes

sparse and not always up to date. We hope this book remedies those issues, providing

a single, comprehensive guide to all the essential features of Hive and how to use them

effectively.6

An Overview of Hadoop and MapReduce

If you’re already familiar with Hadoop and the MapReduce computing model, you can

skip this section. While you don’t need an intimate knowledge of MapReduce to use

Hive, understanding the basic principles of MapReduce will help you understand what

Hive is doing behind the scenes and how you can use Hive more effectively.

We provide a brief overview of Hadoop and MapReduce here. For more details, see

Hadoop: The Definitive Guide by Tom White (O’Reilly).

MapReduce

MapReduce is a computing model that decomposes large data manipulation jobs into

individual tasks that can be executed in parallel across a cluster of servers. The results

of the tasks can be joined together to compute the final results.

The MapReduce programming model was developed at Google and described in an

influential paper called MapReduce: simplified data processing on large clusters (see the

Appendix) on page 309. The Google Filesystem was described a year earlier in a paper

called The Google filesystem on page 310. Both papers inspired the creation of Hadoop

by Doug Cutting.

The term MapReduce comes from the two fundamental data-transformation operations

used, map and reduce. A map operation converts the elements of a collection from one

form to another. In this case, input key-value pairs are converted to zero-to-many

5. See https://cwiki.apache.org/Hive/.

6. It’s worth bookmarking the wiki link, however, because the wiki contains some more obscure information

we won’t cover here.

An Overview of Hadoop and MapReduce | 3

output key-value pairs, where the input and output keys might be completely different

and the input and output values might be completely different.

In MapReduce, all the key-pairs for a given key are sent to the same reduce operation.

Specifically, the key and a collection of the values are passed to the reducer. The goal

of “reduction” is to convert the collection to a value, such as summing or averaging a

collection of numbers, or to another collection. A final key-value pair is emitted by the

reducer. Again, the input versus output keys and values may be different. Note that if

the job requires no reduction step, then it can be skipped.

An implementation infrastructure like the one provided by Hadoop handles most of

the chores required to make jobs run successfully. For example, Hadoop determines

how to decompose the submitted job into individual map and reduce tasks to run, it

schedules those tasks given the available resources, it decides where to send a particular

task in the cluster (usually where the corresponding data is located, when possible, to

minimize network overhead), it monitors each task to ensure successful completion,

and it restarts tasks that fail.

The Hadoop Distributed Filesystem, HDFS, or a similar distributed filesystem, manages

data across the cluster. Each block is replicated several times (three copies is the usual

default), so that no single hard drive or server failure results in data loss. Also, because

the goal is to optimize the processing of very large data sets, HDFS and similar filesys-

tems use very large block sizes, typically 64 MB or multiples thereof. Such large blocks

can be stored contiguously on hard drives so they can be written and read with minimal

seeking of the drive heads, thereby maximizing write and read performance.

To make MapReduce more clear, let’s walk through a simple example, the Word

Count algorithm that has become the “Hello World” of MapReduce.7 Word Count

returns a list of all the words that appear in a corpus (one or more documents) and the

count of how many times each word appears. The output shows each word found and

its count, one per line. By common convention, the word (output key) and count (out-

put value) are usually separated by a tab separator.

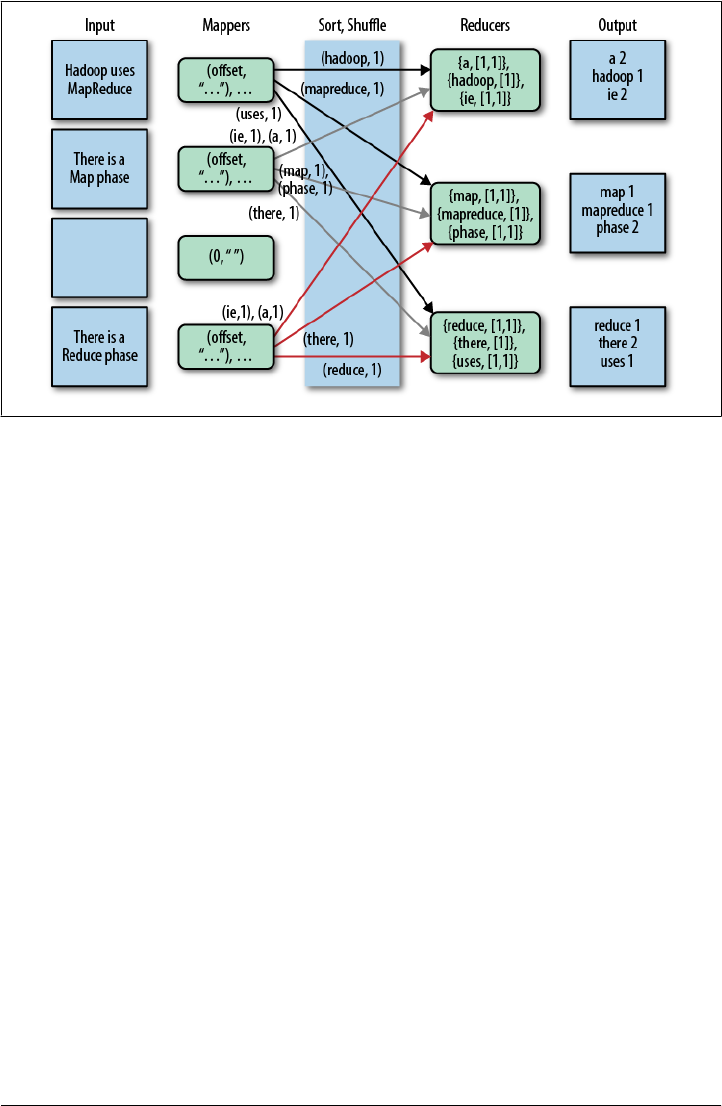

Figure 1-1 shows how Word Count works in MapReduce.

There is a lot going on here, so let’s walk through it from left to right.

Each Input box on the left-hand side of Figure 1-1 is a separate document. Here are

four documents, the third of which is empty and the others contain just a few words,

to keep things simple.

By default, a separate Mapper process is invoked to process each document. In real

scenarios, large documents might be split and each split would be sent to a separate

Mapper. Also, there are techniques for combining many small documents into a single

split for a Mapper. We won’t worry about those details now.

7. If you’re not a developer, a “Hello World” program is the traditional first program you write when learning

a new language or tool set.

4 | Chapter 1: Introduction

The fundamental data structure for input and output in MapReduce is the key-value

pair. After each Mapper is started, it is called repeatedly for each line of text from the

document. For each call, the key passed to the mapper is the character offset into the

document at the start of the line. The corresponding value is the text of the line.

In Word Count, the character offset (key) is discarded. The value, the line of text, is

tokenized into words, using one of several possible techniques (e.g., splitting on white-

space is the simplest, but it can leave in undesirable punctuation). We’ll also assume

that the Mapper converts each word to lowercase, so for example, “FUN” and “fun”

will be counted as the same word.

Finally, for each word in the line, the mapper outputs a key-value pair, with the word

as the key and the number 1 as the value (i.e., the count of “one occurrence”). Note

that the output types of the keys and values are different from the input types.

Part of Hadoop’s magic is the Sort and Shuffle phase that comes next. Hadoop sorts

the key-value pairs by key and it “shuffles” all pairs with the same key to the same

Reducer. There are several possible techniques that can be used to decide which reducer

gets which range of keys. We won’t worry about that here, but for illustrative purposes,

we have assumed in the figure that a particular alphanumeric partitioning was used. In

a real implementation, it would be different.

For the mapper to simply output a count of 1 every time a word is seen is a bit wasteful

of network and disk I/O used in the sort and shuffle. (It does minimize the memory

used in the Mappers, however.) One optimization is to keep track of the count for each

word and then output only one count for each word when the Mapper finishes. There

Figure 1-1. Word Count algorithm using MapReduce

An Overview of Hadoop and MapReduce | 5

are several ways to do this optimization, but the simple approach is logically correct

and sufficient for this discussion.

The inputs to each Reducer are again key-value pairs, but this time, each key will be

one of the words found by the mappers and the value will be a collection of all the counts

emitted by all the mappers for that word. Note that the type of the key and the type of

the value collection elements are the same as the types used in the Mapper’s output.

That is, the key type is a character string and the value collection element type is an

integer.

To finish the algorithm, all the reducer has to do is add up all the counts in the value

collection and write a final key-value pair consisting of each word and the count for

that word.

Word Count isn’t a toy example. The data it produces is used in spell checkers, language

detection and translation systems, and other applications.

Hive in the Hadoop Ecosystem

The Word Count algorithm, like most that you might implement with Hadoop, is a

little involved. When you actually implement such algorithms using the Hadoop Java

API, there are even more low-level details you have to manage yourself. It’s a job that’s

only suitable for an experienced Java developer, potentially putting Hadoop out of

reach of users who aren’t programmers, even when they understand the algorithm they

want to use.

In fact, many of those low-level details are actually quite repetitive from one job to the

next, from low-level chores like wiring together Mappers and Reducers to certain data

manipulation constructs, like filtering for just the data you want and performing SQL-

like joins on data sets. There’s a real opportunity to eliminate reinventing these idioms

by letting “higher-level” tools handle them automatically.

That’s where Hive comes in. It not only provides a familiar programming model for

people who know SQL, it also eliminates lots of boilerplate and sometimes-tricky

coding you would have to do in Java.

This is why Hive is so important to Hadoop, whether you are a DBA or a Java developer.

Hive lets you complete a lot of work with relatively little effort.

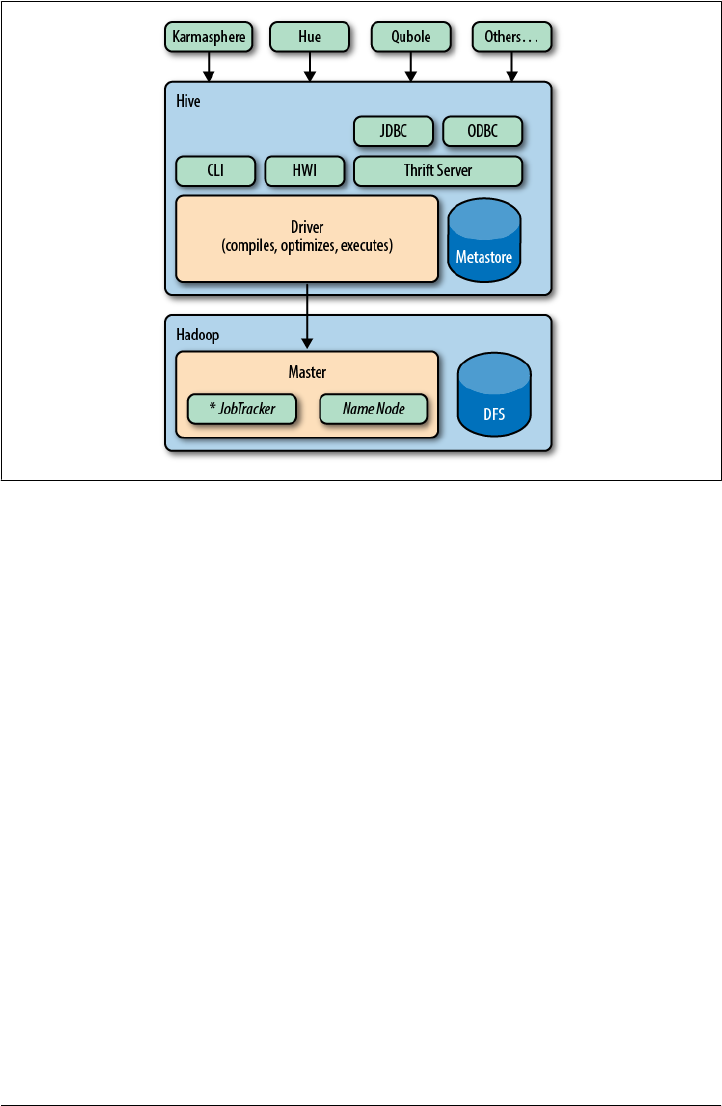

Figure 1-2 shows the major “modules” of Hive and how they work with Hadoop.

There are several ways to interact with Hive. In this book, we will mostly focus on the

CLI, command-line interface. For people who prefer graphical user interfaces, com-

mercial and open source options are starting to appear, including a commercial product

from Karmasphere (http://karmasphere.com), Cloudera’s open source Hue (https://git

hub.com/cloudera/hue), a new “Hive-as-a-service” offering from Qubole (http://qubole

.com), and others.

6 | Chapter 1: Introduction

Bundled with the Hive distribution is the CLI, a simple web interface called Hive web

interface (HWI), and programmatic access through JDBC, ODBC, and a Thrift server

(see Chapter 16).

All commands and queries go to the Driver, which compiles the input, optimizes the

computation required, and executes the required steps, usually with MapReduce jobs.

When MapReduce jobs are required, Hive doesn’t generate Java MapReduce programs.

Instead, it uses built-in, generic Mapper and Reducer modules that are driven by an

XML file representing the “job plan.” In other words, these generic modules function

like mini language interpreters and the “language” to drive the computation is encoded

in XML.

Hive communicates with the JobTracker to initiate the MapReduce job. Hive does not

have to be running on the same master node with the JobTracker. In larger clusters,

it’s common to have edge nodes where tools like Hive run. They communicate remotely

with the JobTracker on the master node to execute jobs. Usually, the data files to be

processed are in HDFS, which is managed by the NameNode.

The Metastore is a separate relational database (usually a MySQL instance) where Hive

persists table schemas and other system metadata. We’ll discuss it in detail in Chapter 2.

While this is a book about Hive, it’s worth mentioning other higher-level tools that you

should consider for your needs. Hive is best suited for data warehouse applications,

where real-time responsiveness to queries and record-level inserts, updates, and deletes

Figure 1-2. Hive modules

Hive in the Hadoop Ecosystem | 7

Downloa d f r o m W o w ! e B o o k < w w w.woweb o o k . c o m >

are not required. Of course, Hive is also very nice for people who know SQL already.

However, some of your work may be easier to accomplish with alternative tools.

Pig

The best known alternative to Hive is Pig (see http://pig.apache.org), which was devel-

oped at Yahoo! about the same time Facebook was developing Hive. Pig is also now a

top-level Apache project that is closely associated with Hadoop.

Suppose you have one or more sources of input data and you need to perform a complex

set of transformations to generate one or more collections of output data. Using Hive,

you might be able to do this with nested queries (as we’ll see), but at some point it will

be necessary to resort to temporary tables (which you have to manage yourself) to

manage the complexity.

Pig is described as a data flow language, rather than a query language. In Pig, you write

a series of declarative statements that define relations from other relations, where each

new relation performs some new data transformation. Pig looks at these declarations

and then builds up a sequence of MapReduce jobs to perform the transformations until

the final results are computed the way that you want.

This step-by-step “flow” of data can be more intuitive than a complex set of queries.

For this reason, Pig is often used as part of ETL (Extract, Transform, and Load) pro-

cesses used to ingest external data into a Hadoop cluster and transform it into a more

desirable form.

A drawback of Pig is that it uses a custom language not based on SQL. This is appro-

priate, since it is not designed as a query language, but it also means that Pig is less

suitable for porting over SQL applications and experienced SQL users will have a larger

learning curve with Pig.

Nevertheless, it’s common for Hadoop teams to use a combination of Hive and Pig,

selecting the appropriate tool for particular jobs.

Programming Pig by Alan Gates (O’Reilly) provides a comprehensive introduction to

Pig.

HBase

What if you need the database features that Hive doesn’t provide, like row-level

updates, rapid query response times, and transactions?

HBase is a distributed and scalable data store that supports row-level updates, rapid

queries, and row-level transactions (but not multirow transactions).

HBase is inspired by Google’s Big Table, although it doesn’t implement all Big Table

features. One of the important features HBase supports is column-oriented storage,

where columns can be organized into column families. Column families are physically

8 | Chapter 1: Introduction

stored together in a distributed cluster, which makes reads and writes faster when the

typical query scenarios involve a small subset of the columns. Rather than reading entire

rows and discarding most of the columns, you read only the columns you need.

HBase can be used like a key-value store, where a single key is used for each row to

provide very fast reads and writes of the row’s columns or column families. HBase also

keeps a configurable number of versions of each column’s values (marked by time-

stamps), so it’s possible to go “back in time” to previous values, when needed.

Finally, what is the relationship between HBase and Hadoop? HBase uses HDFS (or

one of the other distributed filesystems) for durable file storage of data. To provide

row-level updates and fast queries, HBase also uses in-memory caching of data and

local files for the append log of updates. Periodically, the durable files are updated with

all the append log updates, etc.

HBase doesn’t provide a query language like SQL, but Hive is now integrated with

HBase. We’ll discuss this integration in “HBase” on page 222.

For more on HBase, see the HBase website, and HBase: The Definitive Guide by Lars

George.

Cascading, Crunch, and Others

There are several other “high-level” languages that have emerged outside of the Apache

Hadoop umbrella, which also provide nice abstractions on top of Hadoop to reduce

the amount of low-level boilerplate code required for typical jobs. For completeness,

we list several of them here. All are JVM (Java Virtual Machine) libraries that can be

used from programming languages like Java, Clojure, Scala, JRuby, Groovy, and Jy-

thon, as opposed to tools with their own languages, like Hive and Pig.

Using one of these programming languages has advantages and disadvantages. It makes

these tools less attractive to nonprogrammers who already know SQL. However, for

developers, these tools provide the full power of a Turing complete programming lan-

guage. Neither Hive nor Pig are Turing complete. We’ll learn how to extend Hive with

Java code when we need additional functionality that Hive doesn’t provide (Table 1-1).

Table 1-1. Alternative higher-level libraries for Hadoop

Name URL Description

Cascading http://cascading.org Java API with Data Processing abstractions. There are now

many Domain Specific Languages (DSLs) for Cascading in other

languages, e.g., Scala, Groovy, JRuby, and Jython.

Cascalog https://github.com/nathanmarz/casca

log

A Clojure DSL for Cascading that provides additional function-

ality inspired by Datalog for data processing and query ab-

stractions.

Crunch https://github.com/cloudera/crunch A Java and Scala API for defining data flow pipelines.

Hive in the Hadoop Ecosystem | 9

Because Hadoop is a batch-oriented system, there are tools with different distributed

computing models that are better suited for event stream processing, where closer to

“real-time” responsiveness is required. Here we list several of the many alternatives

(Table 1-2).

Table 1-2. Distributed data processing tools that don’t use MapReduce

Name URL Description

Spark http://www.spark-project.org/ A distributed computing framework based on the idea of dis-

tributed data sets with a Scala API. It can work with HDFS files

and it offers notable performance improvements over Hadoop

MapReduce for many computations. There is also a project to

port Hive to Spark, called Shark (http://shark.cs.berkeley.edu/).

Storm https://github.com/nathanmarz/storm A real-time event stream processing system.

Kafka http://incubator.apache.org/kafka/in

dex.html

A distributed publish-subscribe messaging system.

Finally, it’s important to consider when you don’t need a full cluster (e.g., for smaller

data sets or when the time to perform a computation is less critical). Also, many alter-

native tools are easier to use when prototyping algorithms or doing exploration with a

subset of data. Some of the more popular options are listed in Table 1-3.

Table 1-3. Other data processing languages and tools

Name URL Description

Rhttp://r-project.org/ An open source language for statistical analysis and graphing

of data that is popular with statisticians, economists, etc. It’s

not a distributed system, so the data sizes it can handle are

limited. There are efforts to integrate R with Hadoop.

Matlab http://www.mathworks.com/products/

matlab/index.html

A commercial system for data analysis and numerical methods

that is popular with engineers and scientists.

Octave http://www.gnu.org/software/octave/ An open source clone of MatLab.

Mathematica http://www.wolfram.com/mathema

tica/

A commercial data analysis, symbolic manipulation, and nu-

merical methods system that is also popular with scientists and

engineers.

SciPy, NumPy http://scipy.org Extensive software package for scientific programming in

Python, which is widely used by data scientists.

Java Versus Hive: The Word Count Algorithm

If you are not a Java programmer, you can skip to the next section.

If you are a Java programmer, you might be reading this book because you’ll need to

support the Hive users in your organization. You might be skeptical about using Hive

for your own work. If so, consider the following example that implements the Word

10 | Chapter 1: Introduction

Count algorithm we discussed above, first using the Java MapReduce API and then

using Hive.

It’s very common to use Word Count as the first Java MapReduce program that people

write, because the algorithm is simple to understand, so you can focus on the API.

Hence, it has become the “Hello World” of the Hadoop world.

The following Java implementation is included in the Apache Hadoop distribution.8 If

you don’t know Java (and you’re still reading this section), don’t worry, we’re only

showing you the code for the size comparison:

package org.myorg;

import java.io.IOException;

import java.util.*;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.conf.*;

import org.apache.hadoop.io.*;

import org.apache.hadoop.mapreduce.*;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

public class WordCount {

public static class Map extends Mapper<LongWritable, Text, Text, IntWritable> {

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String line = value.toString();

StringTokenizer tokenizer = new StringTokenizer(line);

while (tokenizer.hasMoreTokens()) {

word.set(tokenizer.nextToken());

context.write(word, one);

}

}

}

public static class Reduce extends Reducer<Text, IntWritable, Text, IntWritable> {

public void reduce(Text key, Iterable<IntWritable> values, Context context)

throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

context.write(key, new IntWritable(sum));

}

8. Apache Hadoop word count: http://wiki.apache.org/hadoop/WordCount.

Java Versus Hive: The Word Count Algorithm | 11

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

Job job = new Job(conf, "wordcount");

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

job.setMapperClass(Map.class);

job.setReducerClass(Reduce.class);

job.setInputFormatClass(TextInputFormat.class);

job.setOutputFormatClass(TextOutputFormat.class);

FileInputFormat.addInputPath(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

job.waitForCompletion(true);

}

}

That was 63 lines of Java code. We won’t explain the API details.9 Here is the same

calculation written in HiveQL, which is just 8 lines of code, and does not require com-

pilation nor the creation of a “JAR” (Java ARchive) file:

CREATE TABLE docs (line STRING);

LOAD DATA INPATH 'docs' OVERWRITE INTO TABLE docs;

CREATE TABLE word_counts AS

SELECT word, count(1) AS count FROM

(SELECT explode(split(line, '\s')) AS word FROM docs) w

GROUP BY word

ORDER BY word;

We’ll explain all this HiveQL syntax later on.

9. See Hadoop: The Definitive Guide by Tom White for the details.

12 | Chapter 1: Introduction

In both examples, the files were tokenized into words using the simplest possible ap-

proach; splitting on whitespace boundaries. This approach doesn’t properly handle

punctuation, it doesn’t recognize that singular and plural forms of words are the same

word, etc. However, it’s good enough for our purposes here.10

The virtue of the Java API is the ability to customize and fine-tune every detail of an

algorithm implementation. However, most of the time, you just don’t need that level

of control and it slows you down considerably when you have to manage all those

details.

If you’re not a programmer, then writing Java MapReduce code is out of reach. How-

ever, if you already know SQL, learning Hive is relatively straightforward and many

applications are quick and easy to implement.

What’s Next

We described the important role that Hive plays in the Hadoop ecosystem. Now let’s

get started!

10. There is one other minor difference. The Hive query hardcodes a path to the data, while the Java code

takes the path as an argument. In Chapter 2, we’ll learn how to use Hive variables in scripts to avoid

hardcoding such details.

What’s Next | 13

CHAPTER 2

Getting Started

Let’s install Hadoop and Hive on our personal workstation. This is a convenient way

to learn and experiment with Hadoop. Then we’ll discuss how to configure Hive for

use on Hadoop clusters.

If you already use Amazon Web Services, the fastest path to setting up Hive for learning

is to run a Hive-configured job flow on Amazon Elastic MapReduce (EMR). We discuss

this option in Chapter 21.

If you have access to a Hadoop cluster with Hive already installed, we encourage

you to skim the first part of this chapter and pick up again at “What Is Inside

Hive?” on page 22.

Installing a Preconfigured Virtual Machine

There are several ways you can install Hadoop and Hive. An easy way to install a com-

plete Hadoop system, including Hive, is to download a preconfigured virtual ma-

chine (VM) that runs in VMWare1 or VirtualBox2. For VMWare, either VMWare

Player for Windows and Linux (free) or VMWare Fusion for Mac OS X (inexpensive)

can be used. VirtualBox is free for all these platforms, and also Solaris.

The virtual machines use Linux as the operating system, which is currently the only

recommended operating system for running Hadoop in production.3

Using a virtual machine is currently the only way to run Hadoop on

Windows systems, even when Cygwin or similar Unix-like software is

installed.

1. http://vmware.com.

2. https://www.virtualbox.org/.

3. However, some vendors are starting to support Hadoop on other systems. Hadoop has been used in

production on various Unix systems and it works fine on Mac OS X for development use.

15

Most of the preconfigured virtual machines (VMs) available are only designed for

VMWare, but if you prefer VirtualBox you may find instructions on the Web that

explain how to import a particular VM into VirtualBox.

You can download preconfigured virtual machines from one of the websites given in

Table 2-1.4 Follow the instructions on these web sites for loading the VM into VMWare.

Table 2-1. Preconfigured Hadoop virtual machines for VMWare

Provider URL Notes

Cloudera, Inc. https://ccp.cloudera.com/display/SUPPORT/Clou

dera’s+Hadoop+Demo+VM

Uses Cloudera’s own distribution

of Hadoop, CDH3 or CDH4.

MapR, Inc. http://www.mapr.com/doc/display/MapR/Quick

+Start+-+Test+Drive+MapR+on+a+Virtual

+Machine

MapR’s Hadoop distribution,

which replaces HDFS with the

MapR Filesystem (MapR-FS).

Hortonworks,

Inc.

http://docs.hortonworks.com/HDP-1.0.4-PREVIEW

-6/Using_HDP_Single_Box_VM/HDP_Single_Box

_VM.htm

Based on the latest, stable Apache

releases.

Think Big An-

alytics, Inc.

http://thinkbigacademy.s3-website-us-east-1.ama

zonaws.com/vm/README.html

Based on the latest, stable Apache

releases.

Next, go to “What Is Inside Hive?” on page 22.

Detailed Installation

While using a preconfigured virtual machine may be an easy way to run Hive, installing

Hadoop and Hive yourself will give you valuable insights into how these tools work,

especially if you are a developer.

The instructions that follow describe the minimum necessary Hadoop and Hive

installation steps for your personal Linux or Mac OS X workstation. For production

installations, consult the recommended installation procedures for your Hadoop

distributor.

Installing Java

Hive requires Hadoop and Hadoop requires Java. Ensure your system has a recent

v1.6.X or v1.7.X JVM (Java Virtual Machine). Although the JRE (Java Runtime Envi-

ronment) is all you need to run Hive, you will need the full JDK (Java Development

Kit) to build examples in this book that demonstrate how to extend Hive with Java

code. However, if you are not a programmer, the companion source code distribution

for this book (see the Preface) contains prebuilt examples.

4. These are the current URLs at the time of this writing.

16 | Chapter 2: Getting Started

After the installation is complete, you’ll need to ensure that Java is in your path and

the JAVA_HOME environment variable is set.

Linux-specific Java steps

On Linux systems, the following instructions set up a bash file in the /etc/profile.d/

directory that defines JAVA_HOME for all users. Changing environmental settings in

this folder requires root access and affects all users of the system. (We’re using $ as the

bash shell prompt.) The Oracle JVM installer typically installs the software in /usr/java/

jdk-1.6.X (for v1.6) and it creates sym-links from /usr/java/default and /usr/java/latest

to the installation:

$ /usr/java/latest/bin/java -version

java version "1.6.0_23"

Java(TM) SE Runtime Environment (build 1.6.0_23-b05)

Java HotSpot(TM) 64-Bit Server VM (build 19.0-b09, mixed mode)

$ sudo echo "export JAVA_HOME=/usr/java/latest" > /etc/profile.d/java.sh

$ sudo echo "PATH=$PATH:$JAVA_HOME/bin" >> /etc/profile.d/java.sh

$ . /etc/profile

$ echo $JAVA_HOME

/usr/java/latest

If you’ve never used sudo (“super user do something”) before to run a

command as a “privileged” user, as in two of the commands, just type

your normal password when you’re asked for it. If you’re on a personal

machine, your user account probably has “sudo rights.” If not, ask your

administrator to run those commands.

However, if you don’t want to make permanent changes that affect all

users of the system, an alternative is to put the definitions shown for

PATH and JAVA_HOME in your $HOME/.bashrc file:

export JAVA_HOME=/usr/java/latest

export PATH=$PATH:$JAVA_HOME/bin

Mac OS X−specific Java steps

Mac OS X systems don’t have the /etc/profile.d directory and they are typically

single-user systems, so it’s best to put the environment variable definitions in your

$HOME/.bashrc. The Java paths are different, too, and they may be in one of several

places.5

Here are a few examples. You’ll need to determine where Java is installed on your Mac

and adjust the definitions accordingly. Here is a Java 1.6 example for Mac OS X:

$ export JAVA_HOME=/System/Library/Frameworks/JavaVM.framework/Versions/1.6/Home

$ export PATH=$PATH:$JAVA_HOME/bin

5. At least that’s the current situation on Dean’s Mac. This discrepancy may actually reflect the fact that

stewardship of the Mac OS X Java port is transitioning from Apple to Oracle as of Java 1.7.

Detailed Installation | 17

Here is a Java 1.7 example for Mac OS X:

$ export JAVA_HOME=/Library/Java/JavaVirtualMachines/1.7.0.jdk/Contents/Home

$ export PATH=$PATH:$JAVA_HOME/bin

OpenJDK 1.7 releases also install under /Library/Java/JavaVirtualMachines.

Installing Hadoop

Hive runs on top of Hadoop. Hadoop is an active open source project with many re-

leases and branches. Also, many commercial software companies are now producing

their own distributions of Hadoop, sometimes with custom enhancements or replace-

ments for some components. This situation promotes innovation, but also potential

confusion and compatibility issues.

Keeping software up to date lets you exploit the latest performance enhancements and

bug fixes. However, sometimes you introduce new bugs and compatibility issues. So,

for this book, we’ll show you how to install the Apache Hadoop release v0.20.2. This

edition is not the most recent stable release, but it has been the reliable gold standard

for some time for performance and compatibility.

However, you should be able to choose a different version, distribution, or release

without problems for learning and using Hive, such as the Apache Hadoop v0.20.205

or 1.0.X releases, Cloudera CDH3 or CDH4, MapR M3 or M5, and the forthcoming

Hortonworks distribution. Note that the bundled Cloudera, MapR, and planned

Hortonworks distributions all include a Hive release.

However, we don’t recommend installing the new, alpha-quality, “Next Generation”

Hadoop v2.0 (also known as v0.23), at least for the purposes of this book. While this

release will bring significant enhancements to the Hadoop ecosystem, it is too new for

our purposes.

To install Hadoop on a Linux system, run the following commands. Note that we

wrapped the long line for the wget command:

$ cd ~ # or use another directory of your choice.

$ wget \

http://www.us.apache.org/dist/hadoop/common/hadoop-0.20.2/hadoop-0.20.2.tar.gz

$ tar -xzf hadoop-0.20.2.tar.gz

$ sudo echo "export HADOOP_HOME=$PWD/hadoop-0.20.2" > /etc/profile.d/hadoop.sh

$ sudo echo "PATH=$PATH:$HADOOP_HOME/bin" >> /etc/profile.d/hadoop.sh

$ . /etc/profile

To install Hadoop on a Mac OS X system, run the following commands. Note that we

wrapped the long line for the curl command:

$ cd ~ # or use another directory of your choice.

$ curl -o \

http://www.us.apache.org/dist/hadoop/common/hadoop-0.20.2/hadoop-0.20.2.tar.gz

$ tar -xzf hadoop-0.20.2.tar.gz

$ echo "export HADOOP_HOME=$PWD/hadoop-0.20.2" >> $HOME/.bashrc

18 | Chapter 2: Getting Started

$ echo "PATH=$PATH:$HADOOP_HOME/bin" >> $HOME/.bashrc

$ . $HOME/.bashrc

In what follows, we will assume that you added $HADOOP_HOME/bin to your path, as in

the previous commands. This will allow you to simply type the hadoop command

without the path prefix.

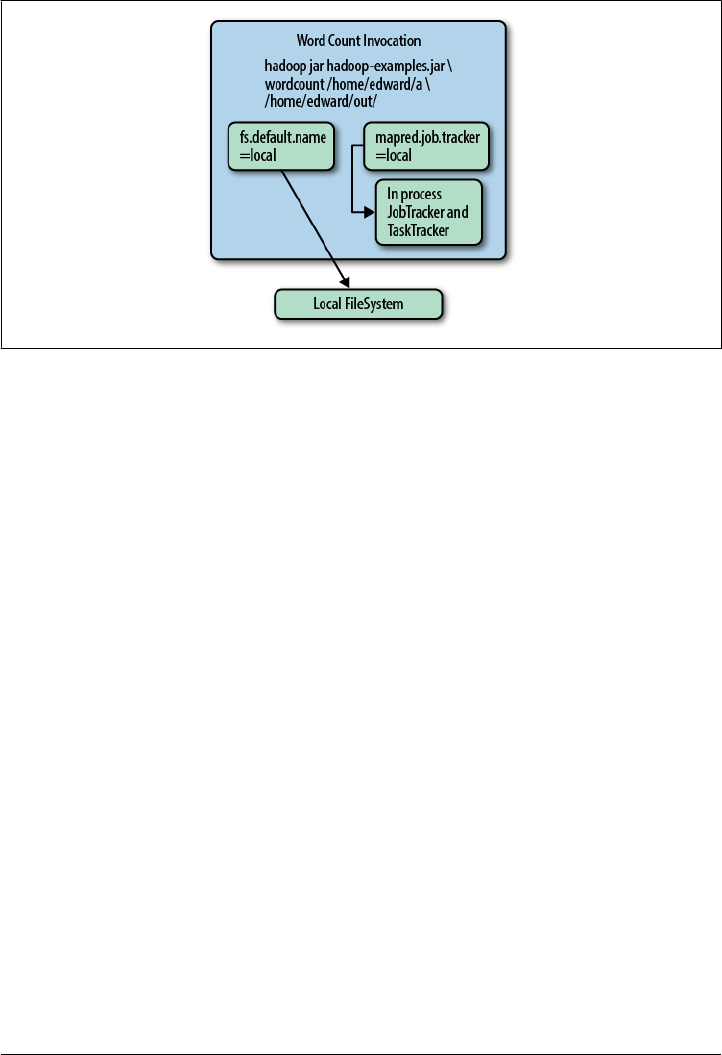

Local Mode, Pseudodistributed Mode, and Distributed Mode

Before we proceed, let’s clarify the different runtime modes for Hadoop. We mentioned

above that the default mode is local mode, where filesystem references use the local

filesystem. Also in local mode, when Hadoop jobs are executed (including most Hive

queries), the Map and Reduce tasks are run as part of the same process.

Actual clusters are configured in distributed mode, where all filesystem references that

aren’t full URIs default to the distributed filesystem (usually HDFS) and jobs are man-

aged by the JobTracker service, with individual tasks executed in separate processes.

A dilemma for developers working on personal machines is the fact that local mode

doesn’t closely resemble the behavior of a real cluster, which is important to remember

when testing applications. To address this need, a single machine can be configured to

run in pseudodistributed mode, where the behavior is identical to distributed mode,

namely filesystem references default to the distributed filesystem and jobs are managed

by the JobTracker service, but there is just a single machine. Hence, for example, HDFS

file block replication is limited to one copy. In other words, the behavior is like a single-

node “cluster.” We’ll discuss these configuration options in “Configuring Your Ha-

doop Environment” on page 24.

Because Hive uses Hadoop jobs for most of its work, its behavior reflects the Hadoop

mode you’re using. However, even when running in distributed mode, Hive can decide

on a per-query basis whether or not it can perform the query using just local mode,

where it reads the data files and manages the MapReduce tasks itself, providing faster

turnaround. Hence, the distinction between the different modes is more of an

execution style for Hive than a deployment style, as it is for Hadoop.

For most of the book, it won’t matter which mode you’re using. We’ll assume you’re

working on a personal machine in local mode and we’ll discuss the cases where the

mode matters.

When working with small data sets, using local mode execution

will make Hive queries much faster. Setting the property set

hive.exec.mode.local.auto=true; will cause Hive to use this mode more

aggressively, even when you are running Hadoop in distributed or pseu-

dodistributed mode. To always use this setting, add the command to

your $HOME/.hiverc file (see “The .hiverc File” on page 36).

Detailed Installation | 19

Testing Hadoop

Assuming you’re using local mode, let’s look at the local filesystem two different ways.

The following output of the Linux ls command shows the typical contents of the “root”

directory of a Linux system:

$ ls /

bin cgroup etc lib lost+found mnt opt root selinux sys user var

boot dev home lib64 media null proc sbin srv tmp usr

Hadoop provides a dfs tool that offers basic filesystem functionality like ls for the

default filesystem. Since we’re using local mode, the default filesystem is the local file-

system:6

$ hadoop dfs -ls /

Found 26 items

drwxrwxrwx - root root 24576 2012-06-03 14:28 /tmp

drwxr-xr-x - root root 4096 2012-01-25 22:43 /opt

drwx------ - root root 16384 2010-12-30 14:56 /lost+found

drwxr-xr-x - root root 0 2012-05-11 16:44 /selinux

dr-xr-x--- - root root 4096 2012-05-23 22:32 /root

...

If instead you get an error message that hadoop isn’t found, either invoke the command

with the full path (e.g., $HOME/hadoop-0.20.2/bin/hadoop) or add the bin directory to

your PATH variable, as discussed in “Installing Hadoop” on page 18 above.

If you find yourself using the hadoop dfs command frequently, it’s

convenient to define an alias for it (e.g., alias hdfs="hadoop dfs").

Hadoop offers a framework for MapReduce. The Hadoop distribution contains an

implementation of the Word Count algorithm we discussed in Chapter 1. Let’s run it!

Start by creating an input directory (inside your current working directory) with files

to be processed by Hadoop:

$ mkdir wc-in

$ echo "bla bla" > wc-in/a.txt

$ echo "bla wa wa " > wc-in/b.txt

Use the hadoop command to launch the Word Count application on the input directory

we just created. Note that it’s conventional to always specify directories for input and

output, not individual files, since there will often be multiple input and/or output files

per directory, a consequence of the parallelism of the system.

6. Unfortunately, the dfs -ls command only provides a “long listing” format. There is no short format, like

the default for the Linux ls command.

20 | Chapter 2: Getting Started

If you are running these commands on your local installation that was configured to

use local mode, the hadoop command will launch the MapReduce components in the

same process. If you are running on a cluster or on a single machine using pseudodis-

tributed mode, the hadoop command will launch one or more separate processes using

the JobTracker service (and the output below will be slightly different). Also, if you are

running with a different version of Hadoop, change the name of the examples.jar as

needed:

$ hadoop jar $HADOOP_HOME/hadoop-0.20.2-examples.jar wordcount wc-in wc-out

12/06/03 15:40:26 INFO input.FileInputFormat: Total input paths to process : 2

...

12/06/03 15:40:27 INFO mapred.JobClient: Running job: job_local_0001

12/06/03 15:40:30 INFO mapred.JobClient: map 100% reduce 0%

12/06/03 15:40:41 INFO mapred.JobClient: map 100% reduce 100%

12/06/03 15:40:41 INFO mapred.JobClient: Job complete: job_local_0001

The results of the Word count application can be viewed through local filesystem

commands:

$ ls wc-out/*

part-r-00000

$ cat wc-out/*

bla 3

wa 2

They can also be viewed by the equivalent dfs command (again, because we assume

you are running in local mode):

$ hadoop dfs -cat wc-out/*

bla 3

wa 2

For very big files, if you want to view just the first or last parts, there is

no -more, -head, nor -tail subcommand. Instead, just pipe the output

of the -cat command through the shell’s more, head, or tail. For exam-

ple: hadoop dfs -cat wc-out/* | more.

Now that we have installed and tested an installation of Hadoop, we can install Hive.

Installing Hive

Installing Hive is similar to installing Hadoop. We will download and extract a tarball

for Hive, which does not include an embedded version of Hadoop. A single Hive binary

is designed to work with multiple versions of Hadoop. This means it’s often easier and

less risky to upgrade to newer Hive releases than it is to upgrade to newer Hadoop

releases.

Hive uses the environment variable HADOOP_HOME to locate the Hadoop JARs and con-

figuration files. So, make sure you set that variable as discussed above before proceed-

ing. The following commands work for both Linux and Mac OS X:

Detailed Installation | 21

$ cd ~ # or use another directory of your choice.

$ curl -o http://archive.apache.org/dist/hive/hive-0.9.0/hive-0.9.0-bin.tar.gz

$ tar -xzf hive-0.9.0.tar.gz

$ sudo mkdir -p /user/hive/warehouse

$ sudo chmod a+rwx /user/hive/warehouse

As you can infer from these commands, we are using the latest stable release of Hive

at the time of this writing, v0.9.0. However, most of the material in this book works

with Hive v0.7.X and v0.8.X. We’ll call out the differences as we come to them.

You’ll want to add the hive command to your path, like we did for the hadoop command.

We’ll follow the same approach, by first defining a HIVE_HOME variable, but unlike

HADOOP_HOME, this variable isn’t really essential. We’ll assume it’s defined for some ex-

amples later in the book.

For Linux, run these commands:

$ sudo echo "export HIVE_HOME=$PWD/hive-0.9.0" > /etc/profile.d/hive.sh

$ sudo echo "PATH=$PATH:$HIVE_HOME/bin >> /etc/profile.d/hive.sh

$ . /etc/profile

For Mac OS X, run these commands:

$ echo "export HIVE_HOME=$PWD/hive-0.9.0" >> $HOME/.bashrc

$ echo "PATH=$PATH:$HIVE_HOME/bin" >> $HOME/.bashrc

$ . $HOME/.bashrc

What Is Inside Hive?

The core of a Hive binary distribution contains three parts. The main part is the Java

code itself. Multiple JAR (Java archive) files such as hive-exec*.jar and hive-meta

store*.jar are found under the $HIVE_HOME/lib directory. Each JAR file implements

a particular subset of Hive’s functionality, but the details don’t concern us now.

The $HIVE_HOME/bin directory contains executable scripts that launch various Hive

services, including the hive command-line interface (CLI). The CLI is the most popular

way to use Hive. We will use hive (in lowercase, with a fixed-width font) to refer to the

CLI, except where noted. The CLI can be used interactively to type in statements one

at a time or it can be used to run “scripts” of Hive statements, as we’ll see.

Hive also has other components. A Thrift service provides remote access from other

processes. Access using JDBC and ODBC are provided, too. They are implemented on

top of the Thrift service. We’ll describe these features in later chapters.

All Hive installations require a metastore service, which Hive uses to store table schemas

and other metadata. It is typically implemented using tables in a relational database.

By default, Hive uses a built-in Derby SQL server, which provides limited, single-

process storage. For example, when using Derby, you can’t run two simultaneous in-

stances of the Hive CLI. However, this is fine for learning Hive on a personal machine

22 | Chapter 2: Getting Started

and some developer tasks. For clusters, MySQL or a similar relational database is

required. We will discuss the details in “Metastore Using JDBC” on page 28.

Finally, a simple web interface, called Hive Web Interface (HWI), provides remote

access to Hive.

The conf directory contains the files that configure Hive. Hive has a number of con-

figuration properties that we will discuss as needed. These properties control features

such as the metastore (where data is stored), various optimizations, and “safety

controls,” etc.

Starting Hive

Let’s finally start the Hive command-line interface (CLI) and run a few commands!

We’ll briefly comment on what’s happening, but save the details for discussion later.

In the following session, we’ll use the $HIVE_HOME/bin/hive command, which is a

bash shell script, to start the CLI. Substitute the directory where Hive is installed on

your system whenever $HIVE_HOME is listed in the following script. Or, if you added

$HIVE_HOME/bin to your PATH, you can just type hive to run the command. We’ll make

that assumption for the rest of the book.

As before, $ is the bash prompt. In the Hive CLI, the hive> string is the hive prompt,

and the indented > is the secondary prompt. Here is a sample session, where we have

added a blank line after the output of each command, for clarity:

$ cd $HIVE_HOME

$ bin/hive

Hive history file=/tmp/myname/hive_job_log_myname_201201271126_1992326118.txt

hive> CREATE TABLE x (a INT);

OK

Time taken: 3.543 seconds

hive> SELECT * FROM x;

OK

Time taken: 0.231 seconds

hive> SELECT *

> FROM x;

OK

Time taken: 0.072 seconds

hive> DROP TABLE x;

OK

Time taken: 0.834 seconds

hive> exit;

$

The first line printed by the CLI is the local filesystem location where the CLI writes