Vivado Design Suite: AXI Reference Guide (UG1037)



Vivado AXI Reference

[optional]

UG1037 (v4.0) July 15, 2017 [optional]

Vivado Design

Suite

AXI Reference Guide

UG1037 (v4.0) July 15, 2017

Vivado AXI Reference Guide www.xilinx.com 2

UG1037 (v4.0) July 15, 2017

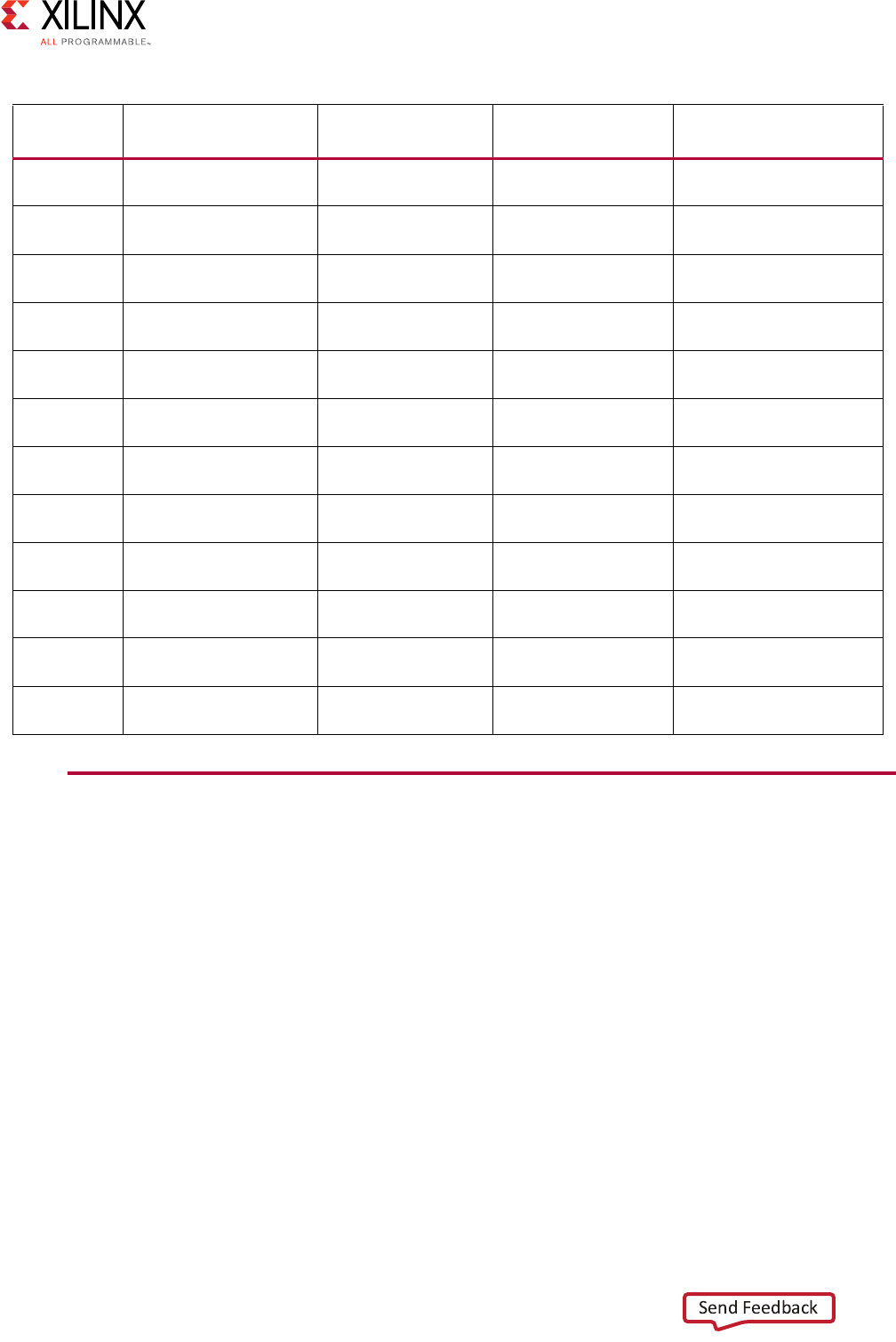

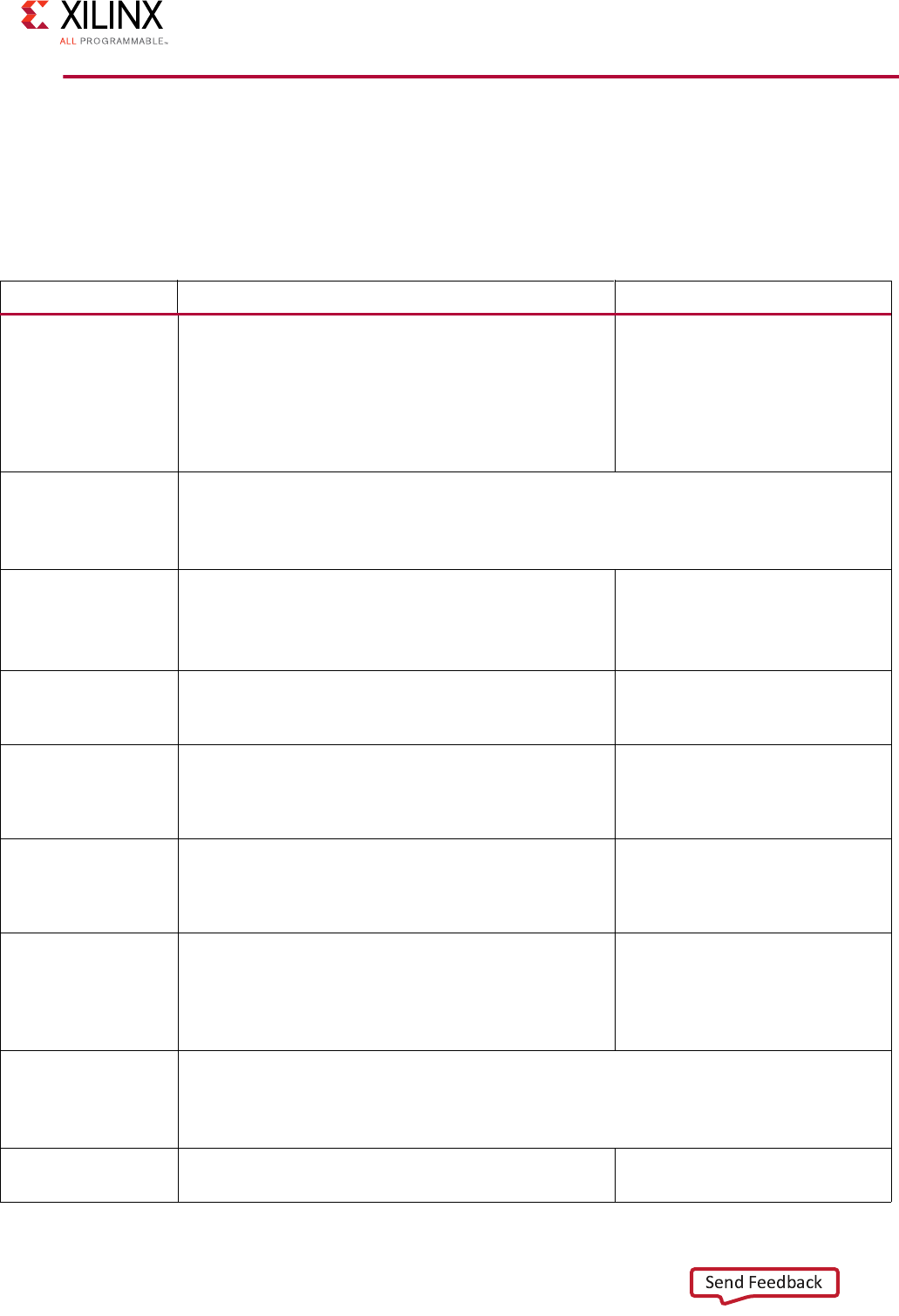

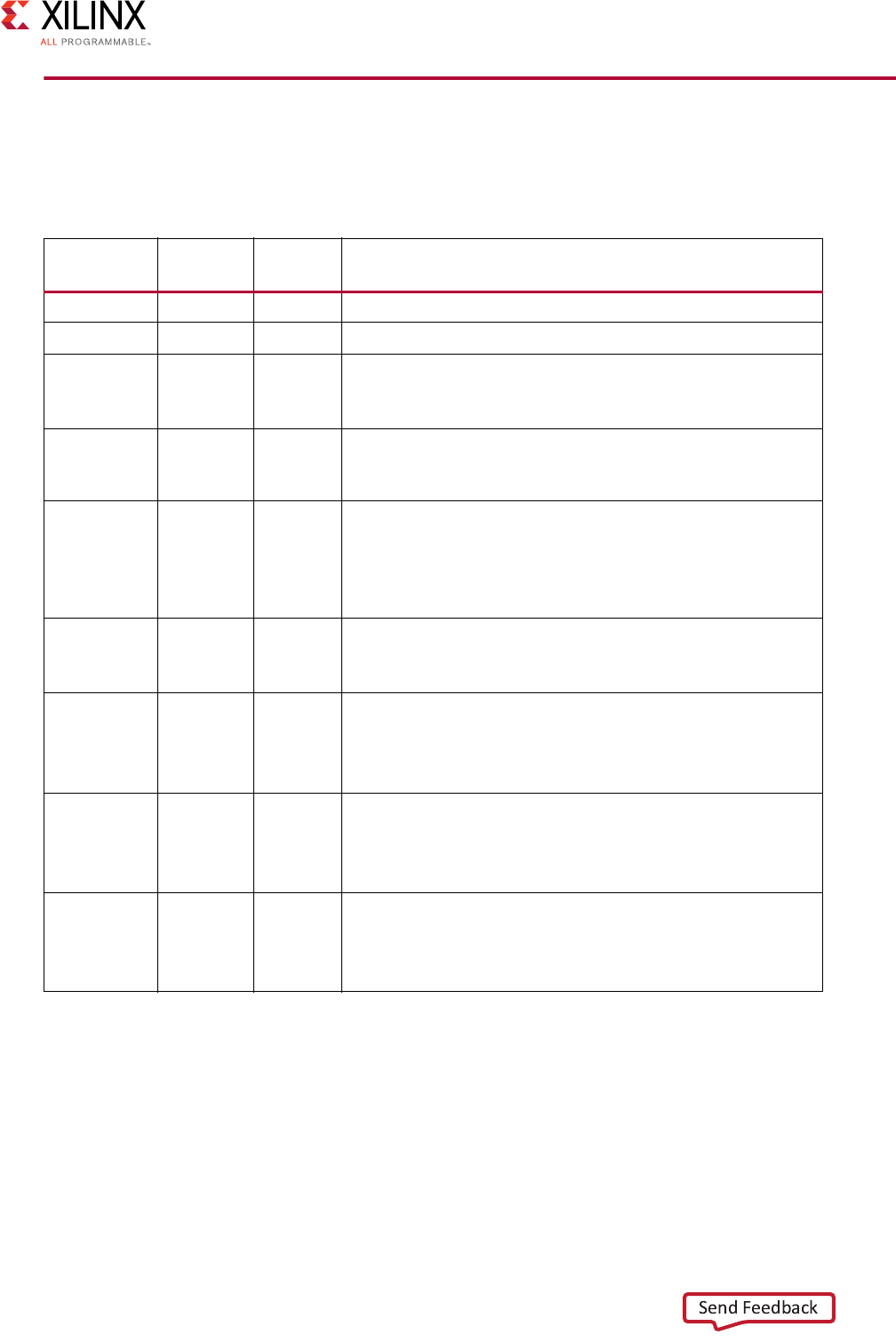

Revision History

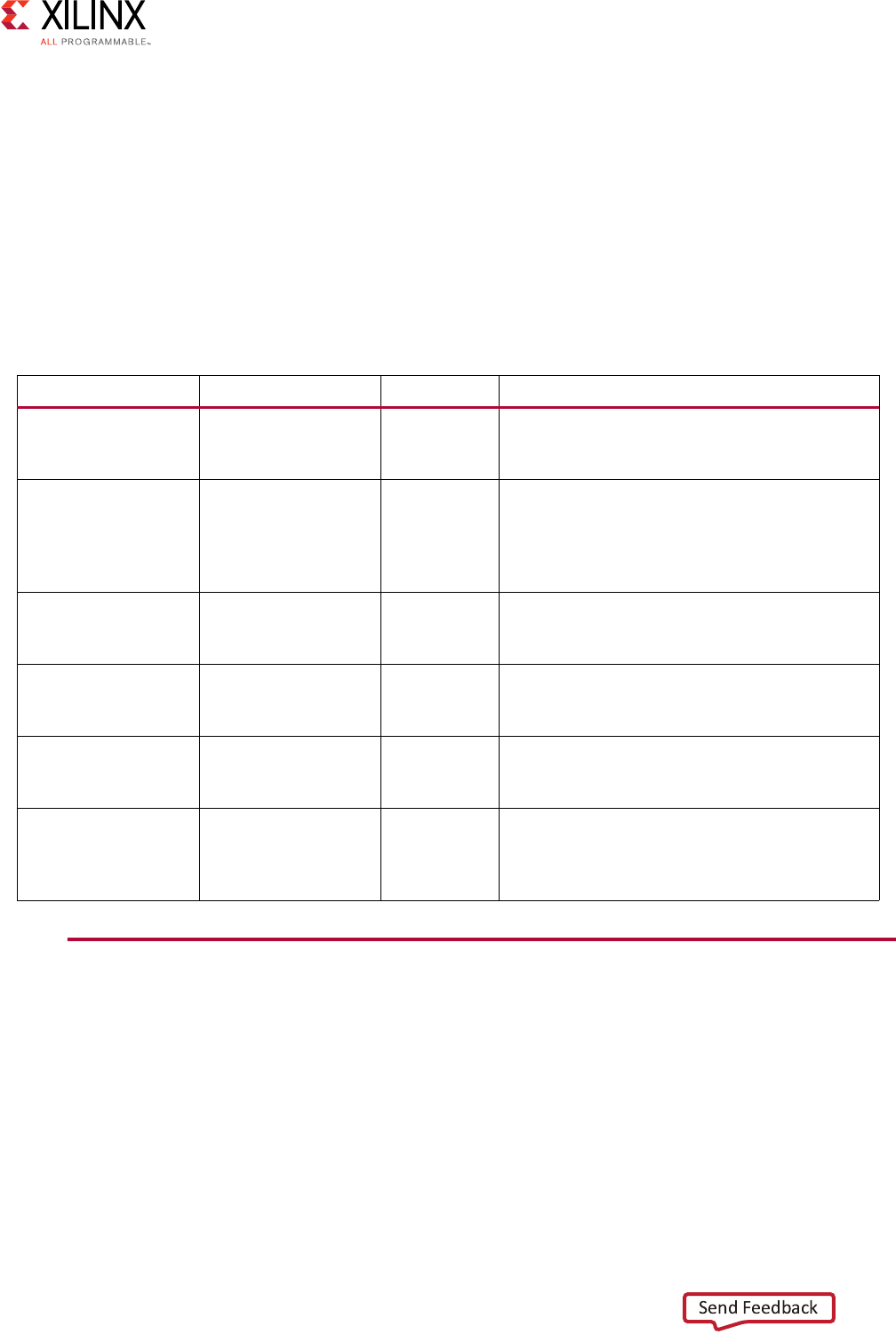

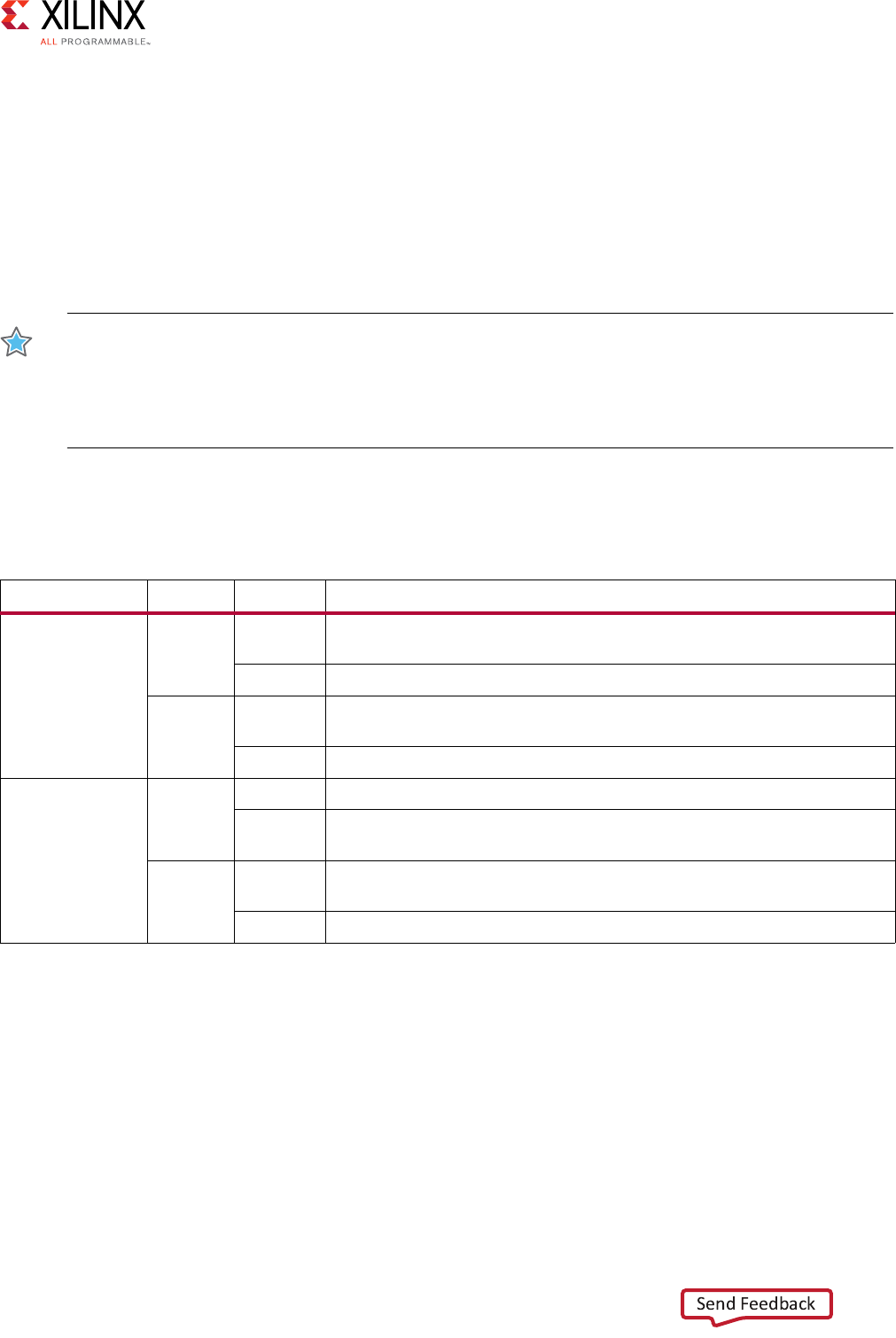

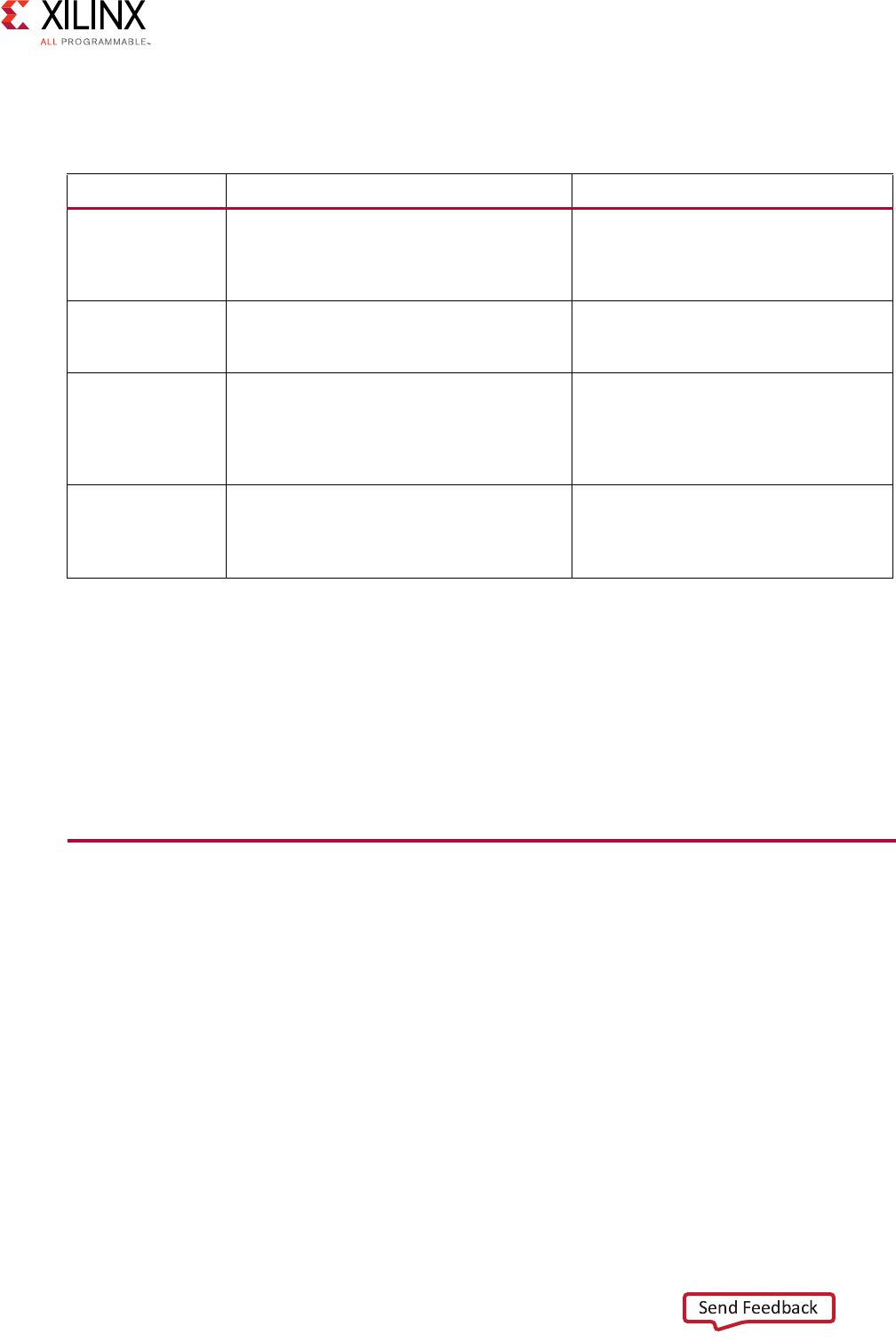

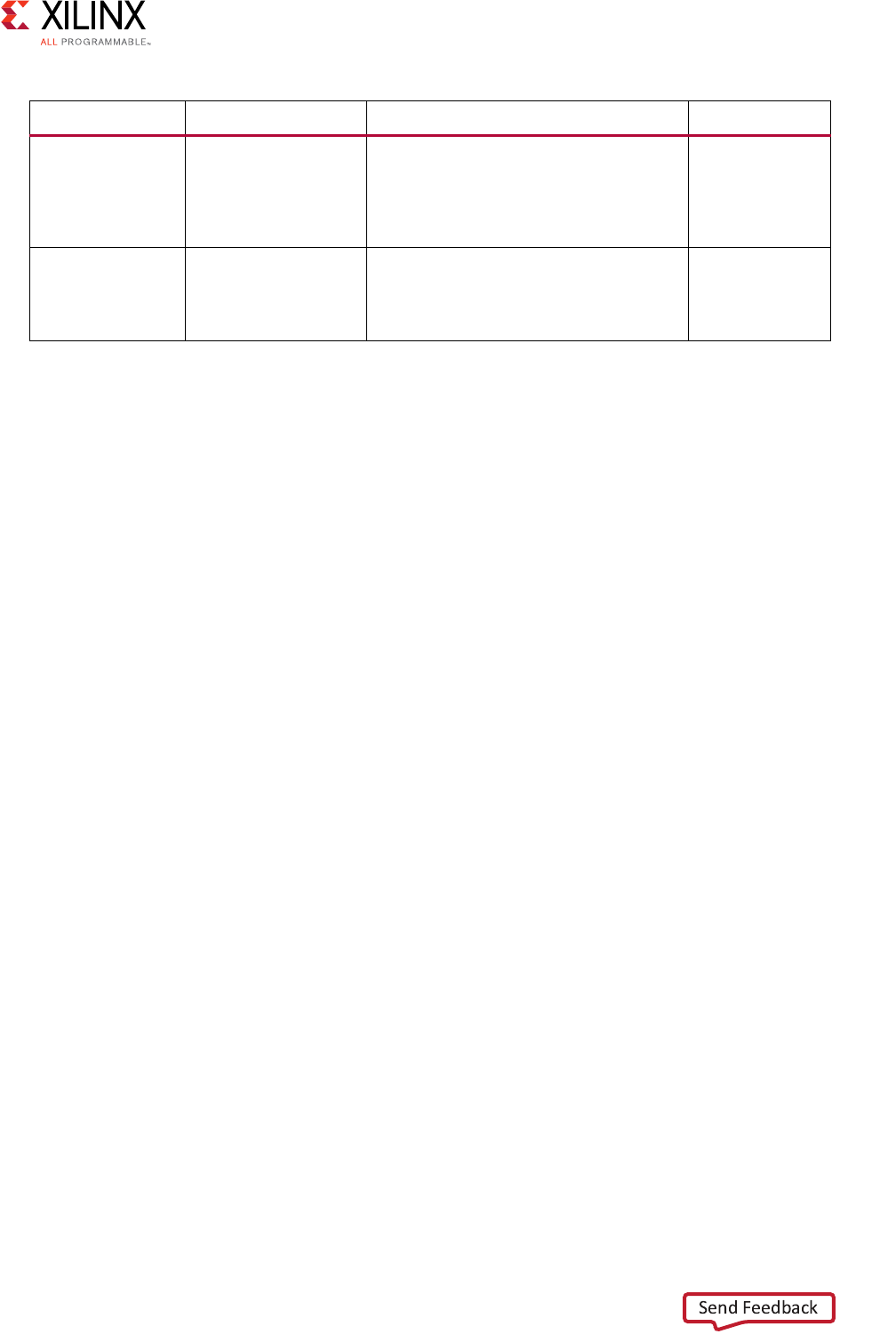

The following table shows the revision history for this document.

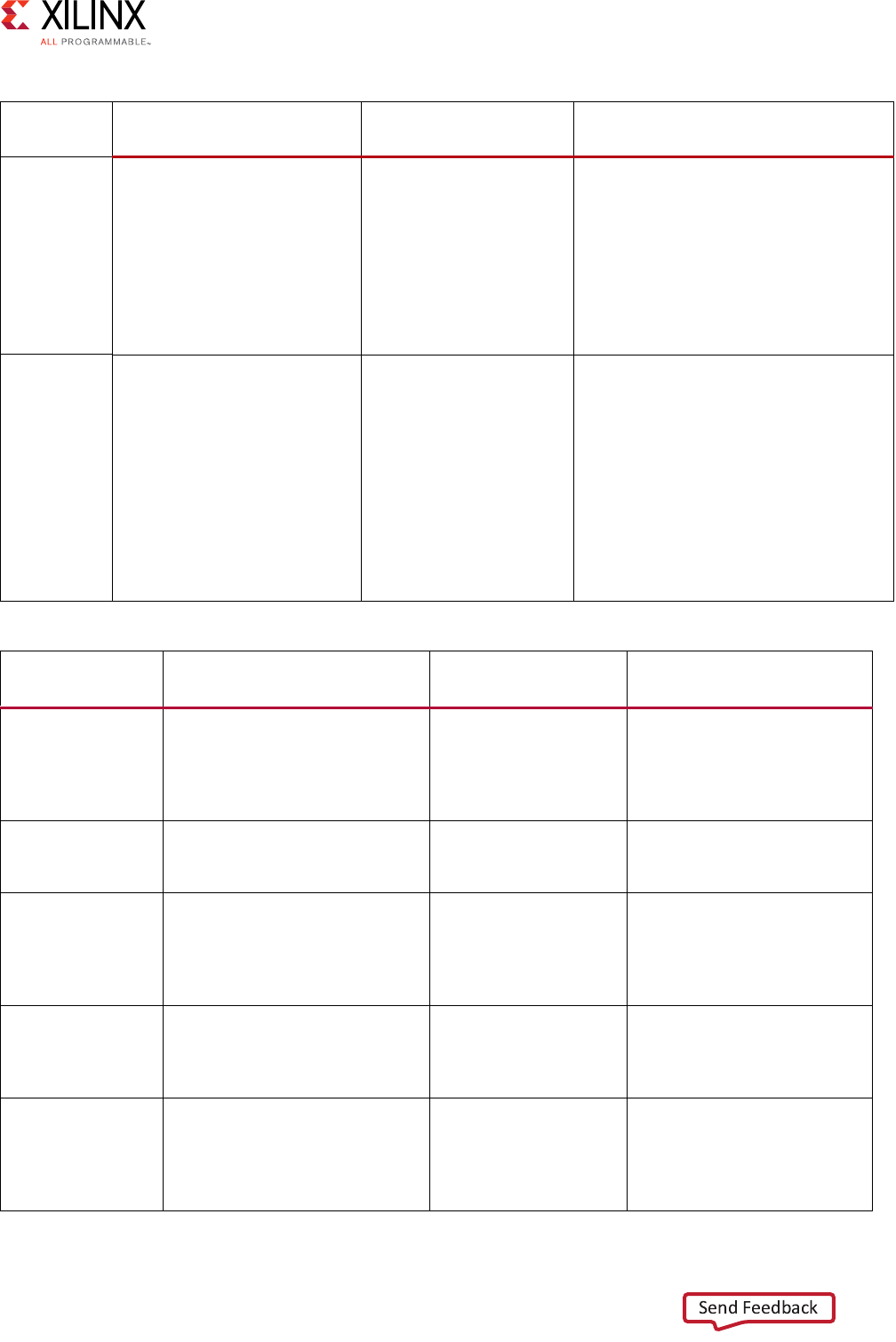

Date Version Revision

07/15/2017 4.0 Updated to match the new Vivado “Look and Feel”.

Updated all IP to reflect current features.

Added Zynq UltraScale+ MPSoC Processor Device in Chapter 3.

Added AXI SmartConnect IP in Chapter 3, and mention of SmartConnect IP

capabilities throughout the document.

Added AXI Verification IP in Chapter 3.

Added AXI4-Stream Verification IP in Chapter 3.

Added Zynq-7000 AP SoC Verification IP in Chapter 3.

06/24/2015 3.0 Added Quick Take Videos.

Updated the AXI IP Catalog Figure 2-1.

Updated the IP Project Settings Packaging tab in Figure 2-6.

Changed Features and Limitations in AXI Infrastructure IP Cores.

Updated Vivado Lab Tools to Vivado Lab Edition throughout the document.

Added XAPP1231 document reference to additional resources.

Added direct links to destinations.

11/20/2014 2.1 Corrected AWCACHE and ARCACHE for AXI4-Lite to “Signal not present” in

Appendix A, Write Data Channel Signals and Appendix A, Read Data Channel Signals.

11/19/2014 2.0 Changed:

IP Interoperability. Using Vivado AXI IP in RTL Projects.

Using the Create and Package IP Wizard for AXI IP.

Using Vivado IP Integrator to Assemble AXI IP.

Adding AXI IP to the IP Catalog Using Vivado IP Packager.

Using AXI IP in System Generator for DSP.

Added:

Adding AXI Interfaces Using High Level Synthesis.

AXI Virtual FIFO Controller. DataMover

Simulating IP. Using Debug and IP.

Performance Monitor IP. AXI BFM.

Bus Functional Models.

Choosing a Programmable Logic Interface.

Zynq-7000 All Programmable SoC Processor IP.

MicroBlaze Processor.

Added: Migrating to AXI for IP Cores.

Migrating to AXI for IP Cores.

Migrating HDL Designs to use DSP IP with AXI4-Stream.

Migrating IP Using the Vivado Create and Package Wizard.

High End Verification Solutions.

Added Optimizing AXI on Zynq-7000 AP SoC Processors.

04/02/2014 1.0 Initial release of Vivado AXI Reference Guide.

Vivado AXI Reference Guide www.xilinx.com 3

UG1037 (v4.0) July 15, 2017

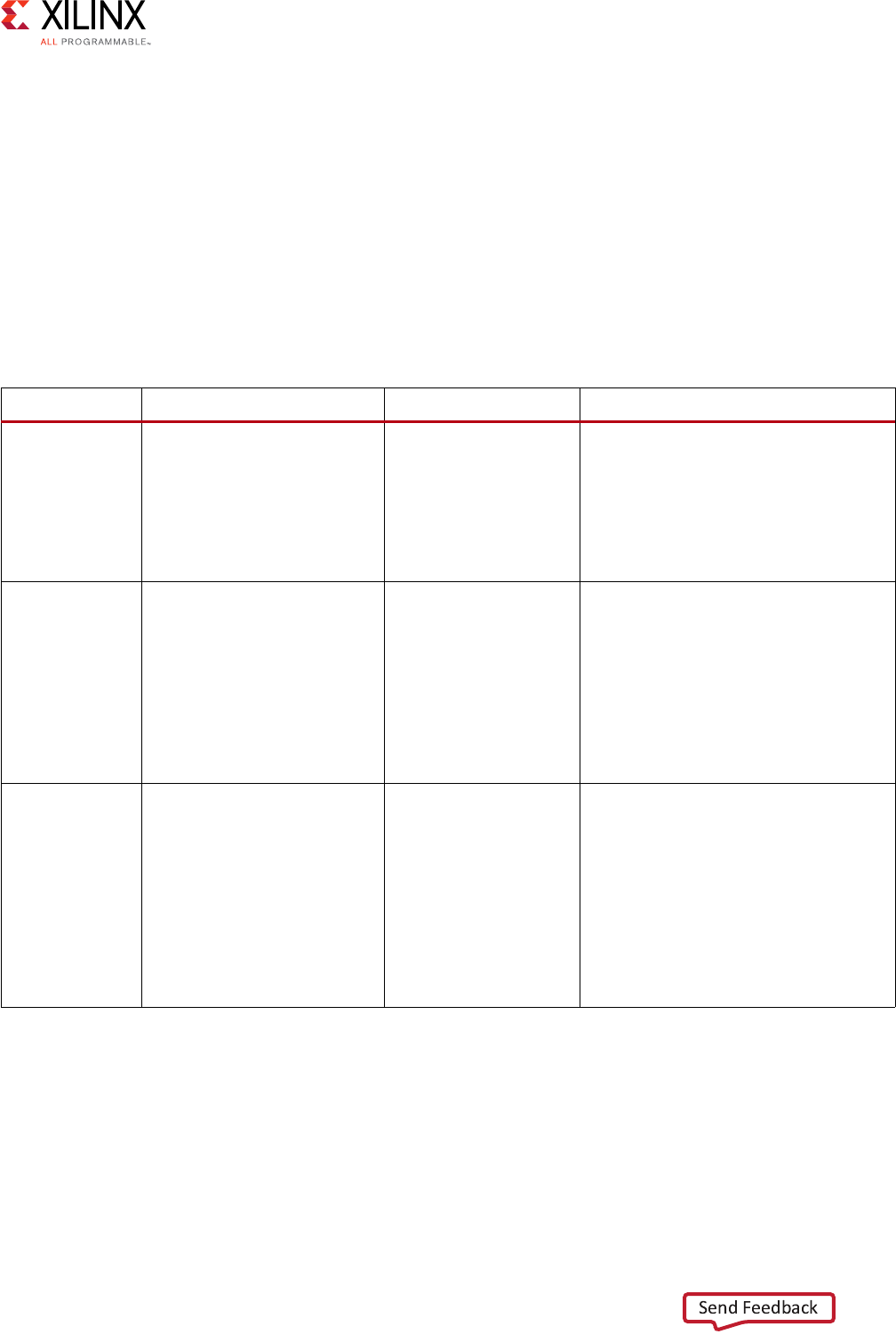

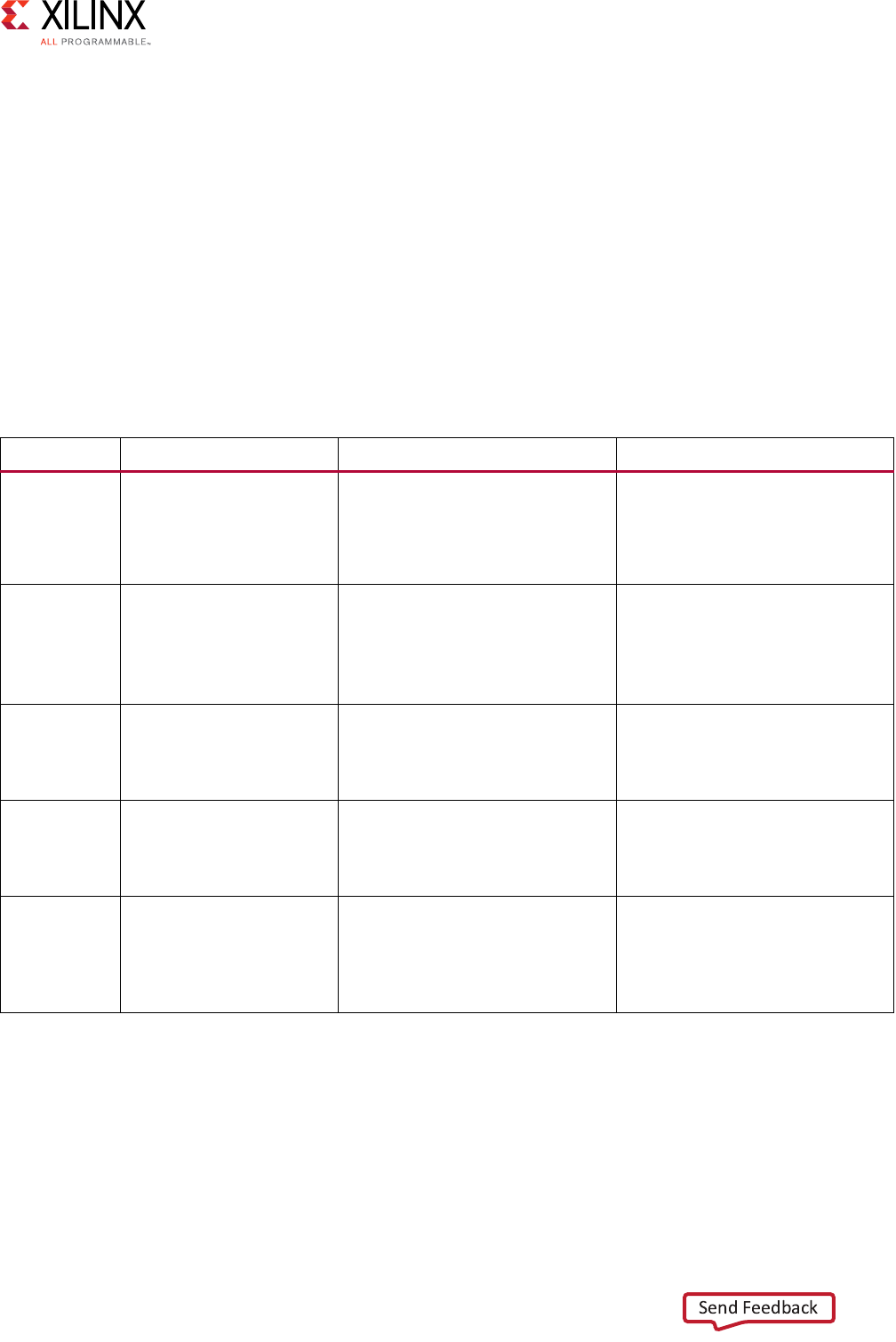

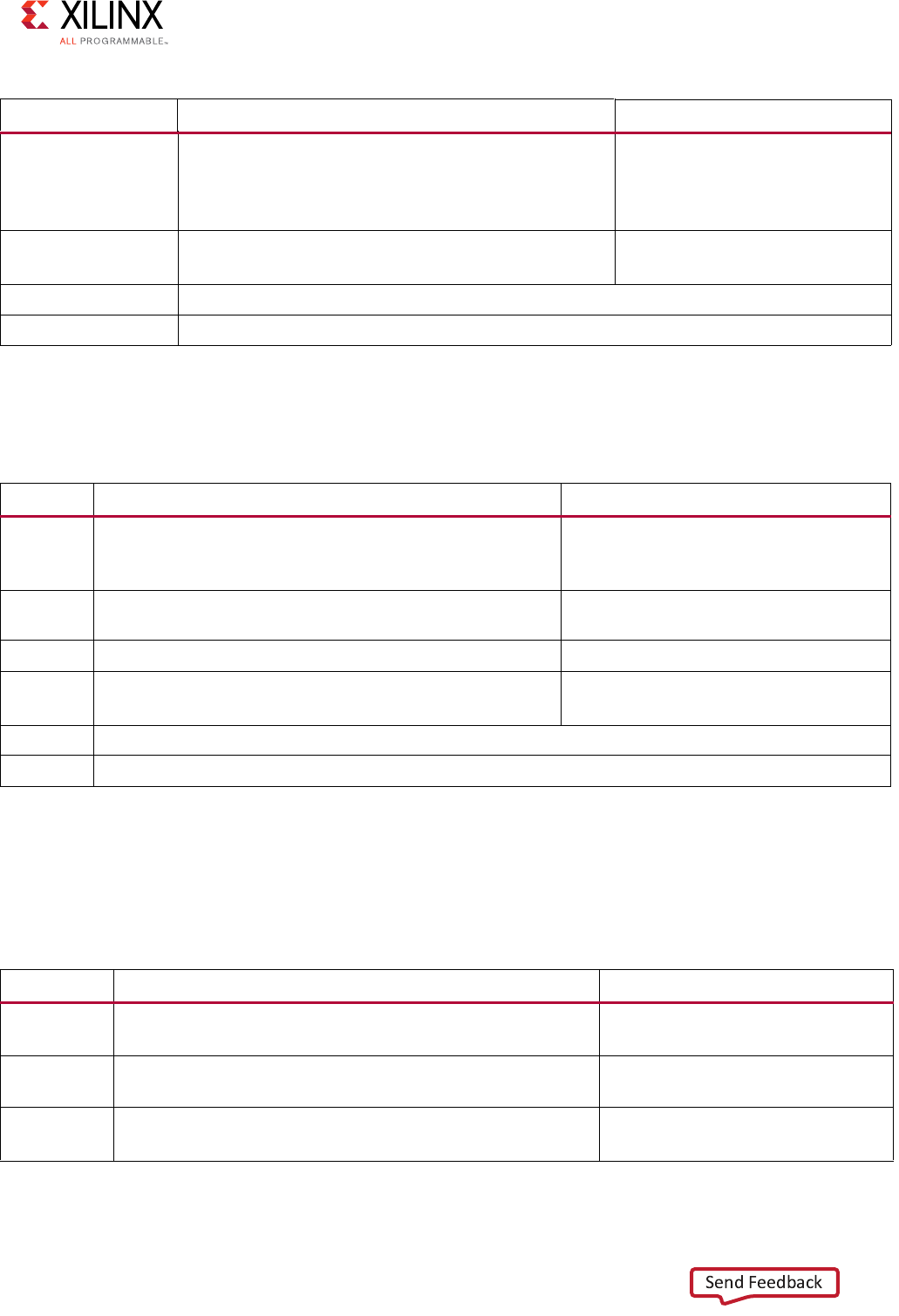

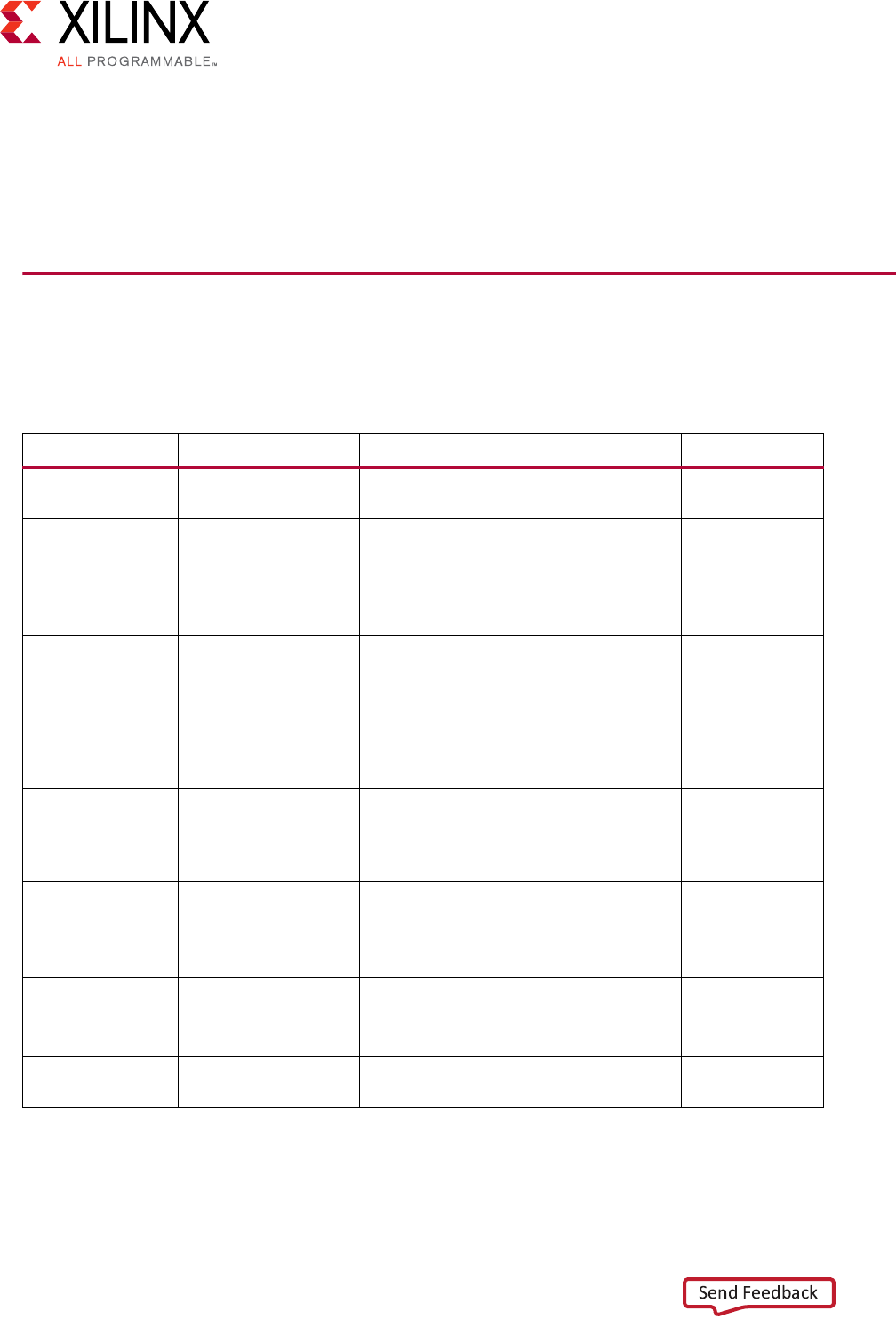

Table of Contents

Chapter 1: Introducing AXI for Vivado

Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 5

What is AXI? . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 5

Summary of AXI4 Benefits. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

How AXI Works . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

IP Interoperability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

Quick Take Videos . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

Chapter 2: AXI Support in Xilinx Tools and IP

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 12

Using Vivado AXI IP in RTL Projects . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 12

Using the Create and Package IP Wizard for AXI IP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 14

Adding AXI IP to the IP Catalog Using Vivado IP Packager . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

Using Vivado IP Integrator to Assemble AXI IP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

Using AXI IP in System Generator for DSP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 22

Adding AXI Interfaces Using High Level Synthesis . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 25

Chapter 3: Samples of Vivado AXI IP and Xilinx Processors

Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 30

AXI Infrastructure IP Cores . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 30

AXI4 DMA . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

Simulating IP. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

Using Debug and IP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 56

Zynq UltraScale+ MPSoC Processor Device . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 67

Zynq-7000 All Programmable SoC Processor IP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

MicroBlaze Processor. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 72

Chapter 4: AXI Feature Adoption in Xilinx Devices

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

Memory-Mapped IP Feature Adoption and Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80

AXI4-Stream Adoption and Support . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

DSP and Wireless IP: AXI Feature Adoption. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 94

Video IP: AXI Feature Adoption . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

Vivado AXI Reference Guide www.xilinx.com 4

UG1037 (v4.0) July 15, 2017

Chapter 5: Migrating to Xilinx AXI Protocols

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

Migrating to AXI for IP Cores. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 110

Migrating IP Using the Vivado Create and Package Wizard . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 111

Using System Generator for DSP for Migrating IP . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 111

Migrating a Fast Simplex Link to AXI4-Stream . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 112

Migrating HDL Designs to use DSP IP with AXI4-Stream . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 114

High End Verification Solutions. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 117

Chapter 6: AXI System Optimization: Tips and Hints

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 118

AXI System Optimization. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 122

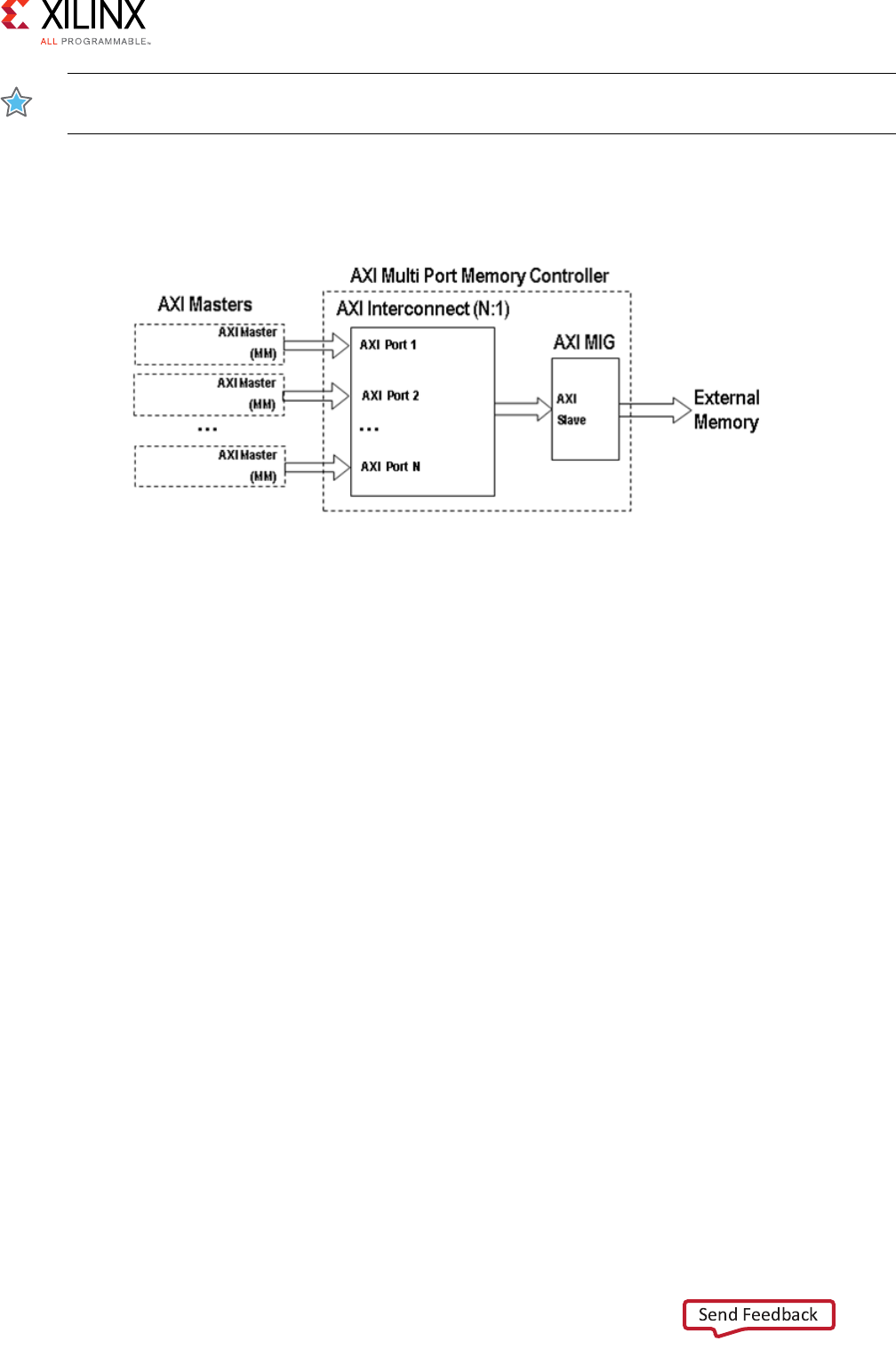

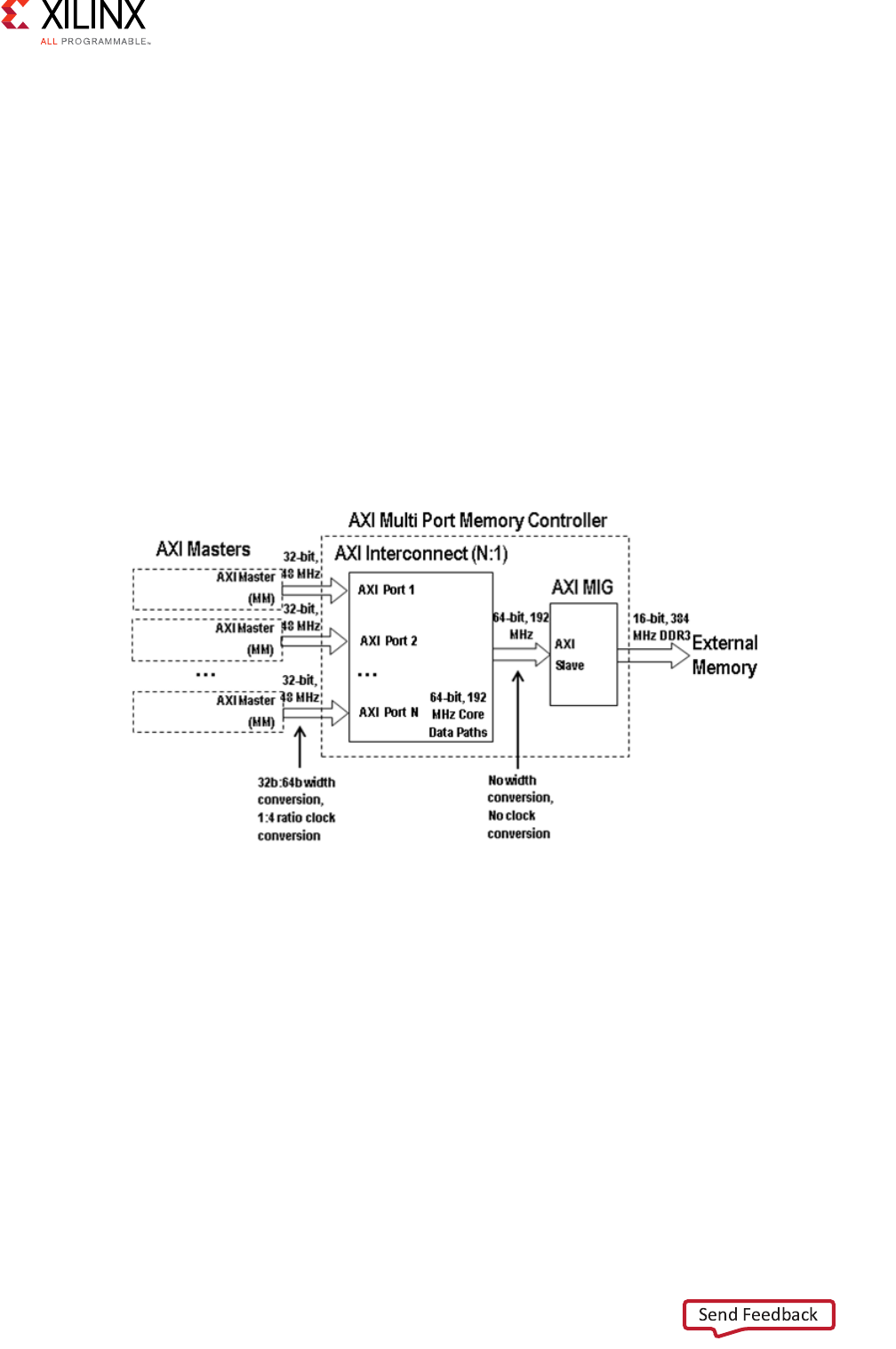

AXI4-based Vivado Multi-Ported Memory Controller: AXI4 System Optimization Example . . . . 126

Common Pitfalls Leading to AXI Systems of Poor Quality Results . . . . . . . . . . . . . . . . . . . . . . . . . 142

Optimizing AXI on Zynq-7000 AP SoC Processors . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 145

Chapter 7: AXI4-Stream IP Interoperability: Tips and Hints

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 148

Key Considerations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 148

Domain Usage Guidelines and Conventions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 151

Domain-Specific Data Interpretation and Interoperability Guidelines . . . . . . . . . . . . . . . . . . . . . 155

Appendix A: AXI Adoption Summary

Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162

Global Signals . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 162

AXI4 and AXI4-Lite Signals. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 163

AXI4-Stream Signal Summary . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 167

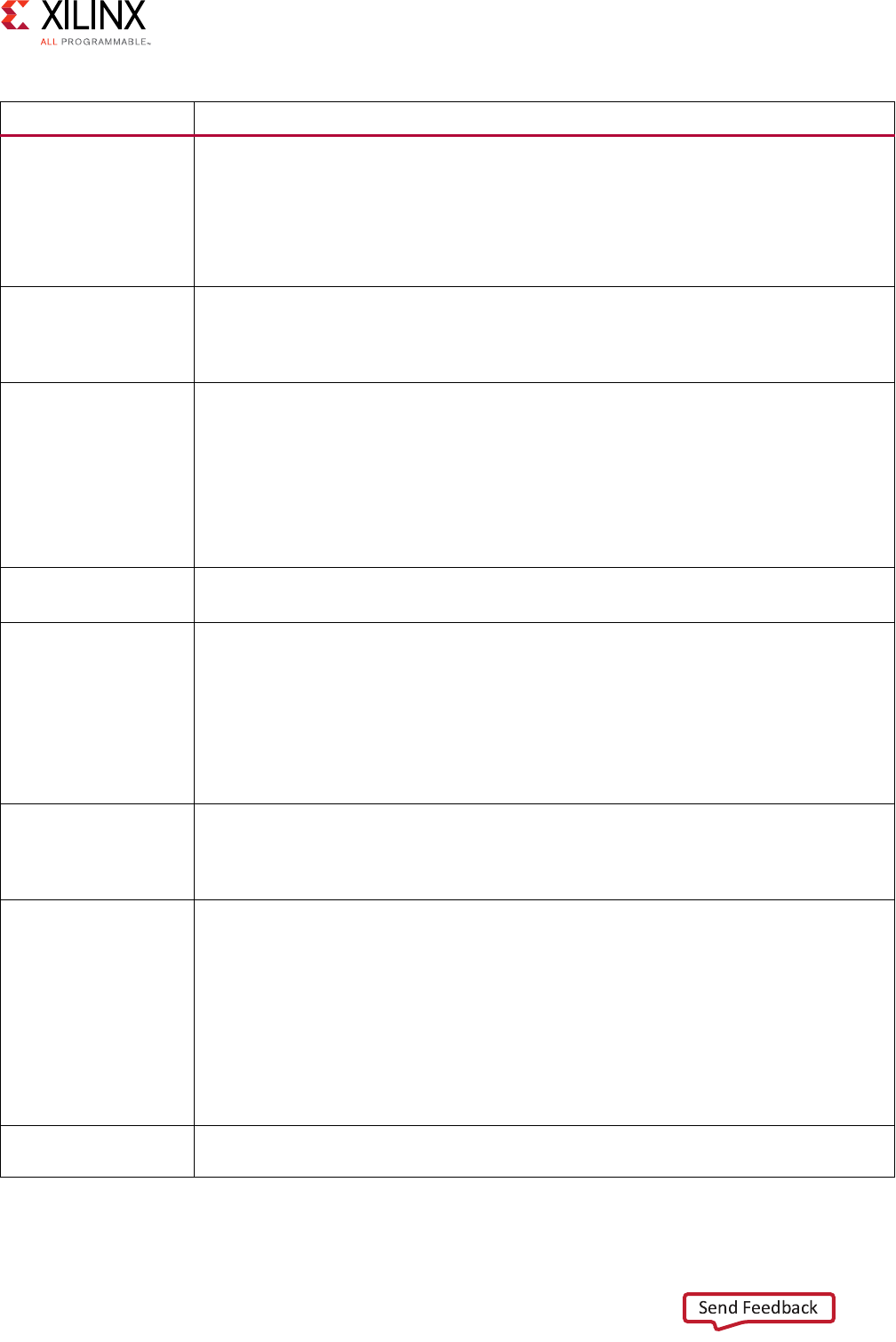

Appendix B: AXI Terminology

Terminology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 168

Appendix C: Additional Resources and Legal Notices

Xilinx Resources . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

Solution Centers. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

Documentation Navigator and Design Hubs . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 172

Third-Party Documentation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 173

Xilinx Documentation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 173

Vivado Design Suite Video Tutorials. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 175

Please Read: Important Legal Notices . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 175


Chapter 1

Introducing AXI for Vivado

Overview

Xilinx adopted the Advanced eXtensible Interface (AXI) protocol for Intellectual Property (IP) cores beginning with the Xilinx® Spartan®-6 and Virtex®-6 devices. Xilinx continues to use the AXI protocol for IP targeting the UltraScale™ architecture, 7 series, and Zynq®-7000 All Programmable (AP) SoC devices.

This document is intended to:

• Introduce key concepts of the AXI protocol.

• Give an overview of the Xilinx tools you can use to create AXI-based IP.

• Explain which features of AXI Xilinx has adopted.

• Provide guidance on how to migrate your existing design to AXI.

Note: This document is not intended to replace the Advanced Microcontroller Bus Architecture (AMBA®) ARM® AXI4 specifications. Before beginning an AXI design, you need to download, read, and understand the AMBA AXI and ACE Protocol Specification, along with the AMBA4 AXI4-Stream Protocol. You might need to fill out a brief registration before downloading the documents. See the AMBA website [Ref 1].

Note: The ACE portion of the AMBA specification is generally not used, except in special cases such as the connection between a MicroBlaze™ processor and its associated system cache block.

What is AXI?

AXI is part of ARM AMBA, a family of microcontroller buses first introduced in 1996. The first version of AXI was included in AMBA 3.0, released in 2003. AMBA 4.0, released in 2010, includes the second major version of AXI, AXI4.

There are three types of AXI4 interfaces:

• AXI4: For high-performance memory-mapped requirements.

• AXI4-Lite: For simple, low-throughput memory-mapped communication (for example, to and from control and status registers).

• AXI4-Stream: For high-speed streaming data.


Xilinx introduced these interfaces in the ISE® Design Suite, release 12.3. Xilinx continues to use and support AXI and AXI4 interfaces in the Vivado® Design Suite.

Summary of AXI4 Benefits

AXI4 is widely adopted in Xilinx product offerings, providing benefits in productivity, flexibility, and availability:

• Productivity: By standardizing on the AXI interface, developers need to learn only a single protocol for IP.

• Flexibility: Providing the right protocol for the application:

° AXI4 is for memory-mapped interfaces and allows high-throughput bursts of up to 256 data transfer cycles with just a single address phase.

° AXI4-Lite is a lightweight, single-transaction memory-mapped interface. It has a small logic footprint and is a simple interface to work with in both design and usage.

° AXI4-Stream removes the requirement for an address phase altogether and allows unlimited data burst size. AXI4-Stream interfaces and transfers do not have address phases and are therefore not considered to be memory-mapped.

• Availability: By moving to an industry standard, you have access not only to the Vivado IP Catalog, but also to a worldwide community of ARM partners.

° Many IP providers support the AXI protocol.

° A robust collection of third-party AXI tool vendors provides many verification, system development, and performance characterization tools. As you begin developing higher performance AXI-based systems, the availability of these tools is essential.

How AXI Works

This section provides a brief overview of how the AXI interface works. Consult the AMBA AXI specifications [Ref 1] for the complete details on AXI operation.

The AXI specifications describe an interface between a single AXI master and AXI slave, representing IP cores that exchange information with each other. Multiple memory-mapped AXI masters and slaves can be connected together using AXI infrastructure IP blocks. The Xilinx AXI Interconnect IP and the newer AXI SmartConnect IP contain a configurable number of AXI-compliant master and slave interfaces, and can be used to route transactions between one or more AXI masters and slaves.


The AXI Interconnect is architected using a traditional, monolithic crossbar approach, described in AXI Infrastructure IP Cores in Chapter 3. The newer SmartConnect IP, which was production released in 2017.1, contains a more scalable and flexible Network-on-Chip (NoC) architecture and is described in Xilinx AXI SmartConnect and AXI Interconnect IP in Chapter 3.

Both AXI4 and AXI4-Lite interfaces consist of five different channels:

• Read Address Channel

• Write Address Channel

• Read Data Channel

• Write Data Channel

• Write Response Channel

Data can move in both directions between the master and slave simultaneously, and data transfer sizes can vary. The limit in AXI4 is a burst transaction of up to 256 data transfers. AXI4-Lite allows only one data transfer per transaction.
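The burst limits above can be illustrated with a small sketch. The following Python fragment is not taken from this guide; it is a minimal model, assuming a transfer is measured in data transfers (beats), that splits a long transfer into transactions that respect the 256-transfer AXI4 limit or the single-transfer AXI4-Lite limit. The names `Burst` and `split_into_bursts` are hypothetical. The sketch ignores address alignment and the other burst rules in the AMBA specification.

```python
from dataclasses import dataclass
from typing import List

MAX_AXI4_BEATS = 256      # AXI4: up to 256 data transfers per burst
MAX_AXI4_LITE_BEATS = 1   # AXI4-Lite: one data transfer per transaction


@dataclass(frozen=True)
class Burst:
    """Hypothetical record describing one transaction of a longer transfer."""
    start_beat: int  # index of the first data transfer covered by this burst
    beats: int       # number of data transfers in this burst

    @property
    def axlen(self) -> int:
        # AXI encodes the burst length as (number of transfers - 1).
        return self.beats - 1


def split_into_bursts(total_beats: int,
                      max_beats: int = MAX_AXI4_BEATS) -> List[Burst]:
    """Split total_beats data transfers into bursts of at most max_beats."""
    if total_beats < 0 or max_beats < 1:
        raise ValueError("total_beats must be >= 0 and max_beats >= 1")
    bursts = []
    start = 0
    while start < total_beats:
        beats = min(max_beats, total_beats - start)
        bursts.append(Burst(start, beats))
        start += beats
    return bursts
```

Under these assumptions, a 600-transfer AXI4 operation needs three address phases (256, 256, and 88 transfers), whereas the same data moved over AXI4-Lite would need one address phase per transfer.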

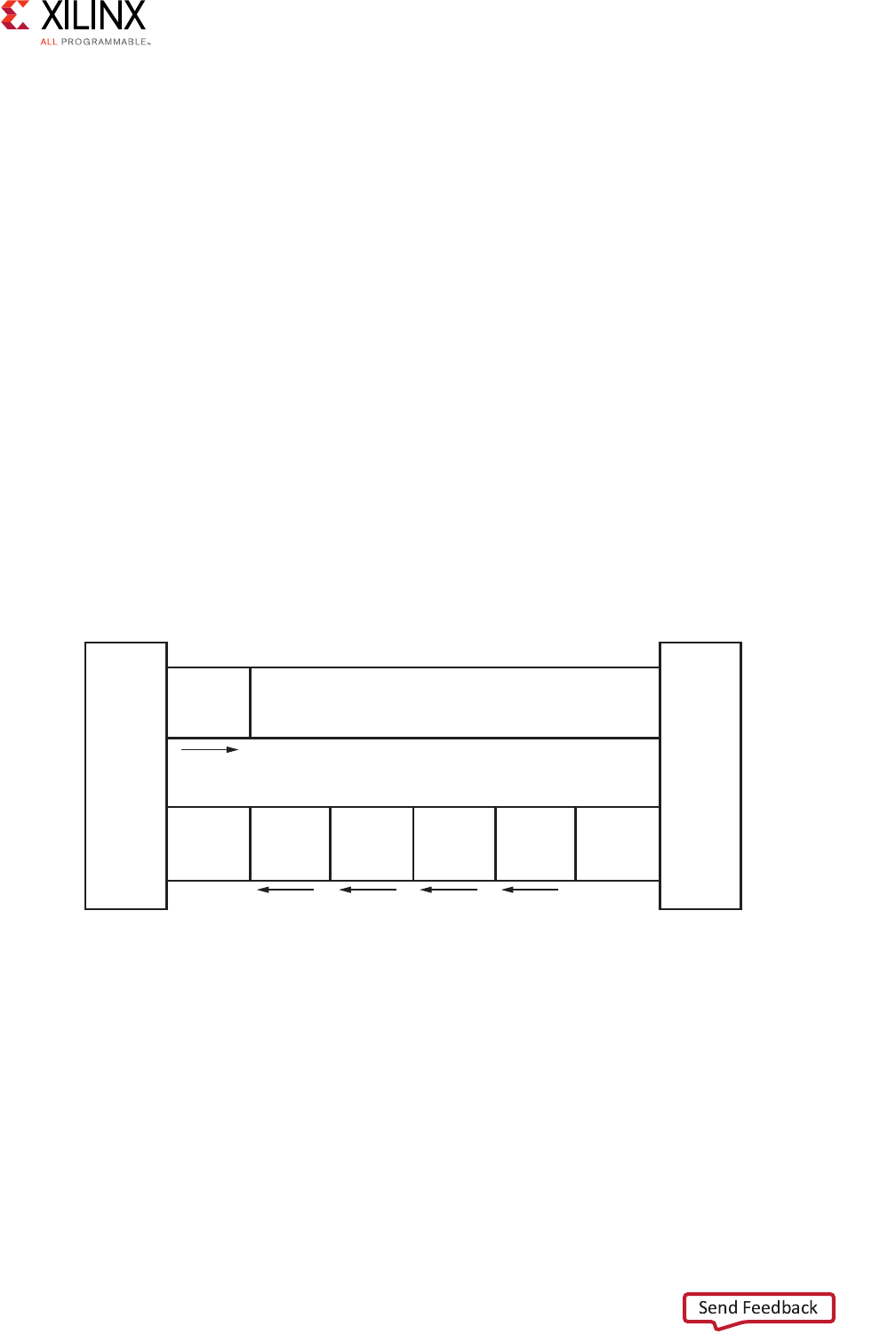

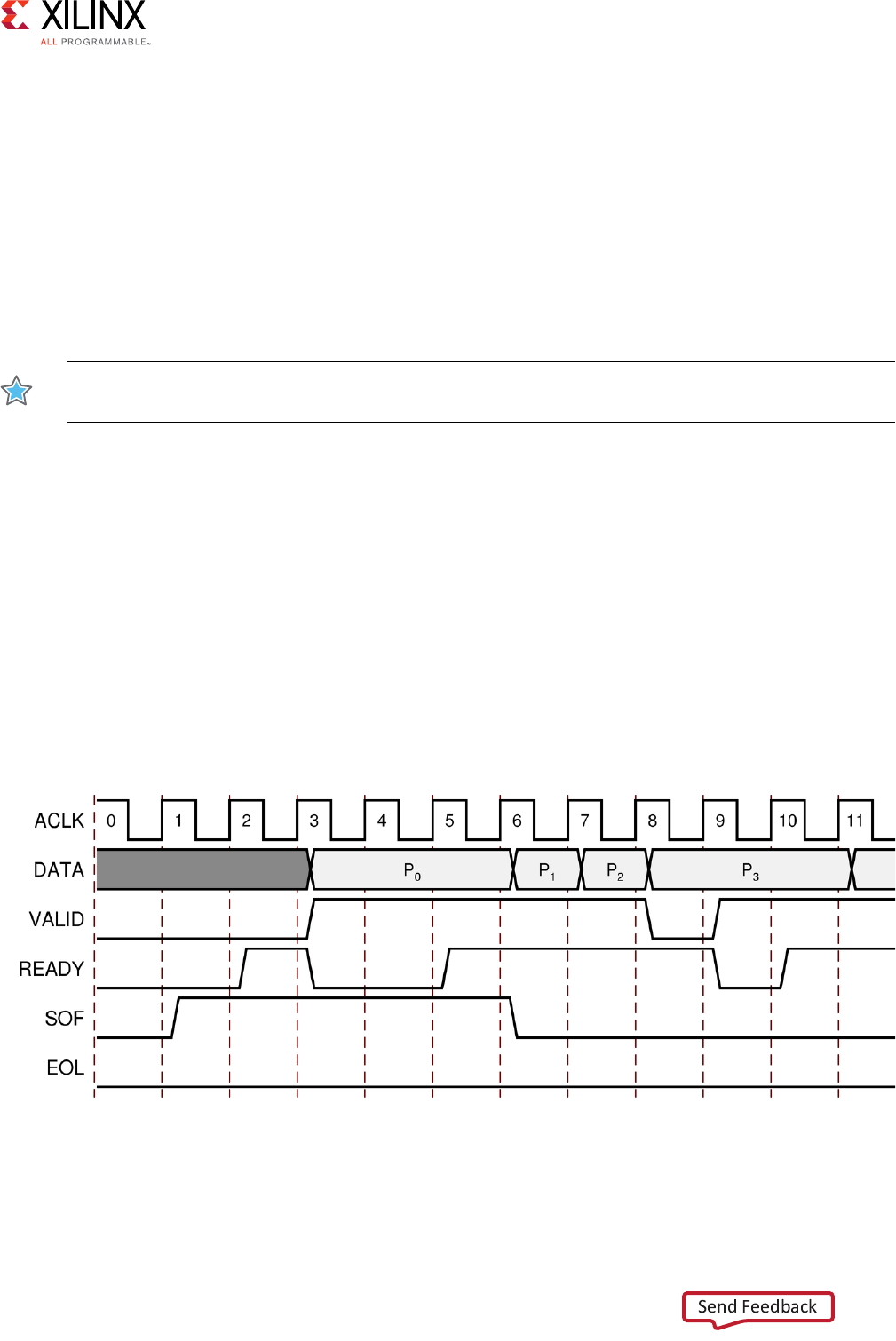

The following figure shows how an AXI4 read transaction uses the read address and read

data channels.

X-Ref Target - Figure 1-1

Figure 1-2 shows how a write transaction uses the write address, write data, and write

response channels.

Figure 1-1: Channel Architecture of Reads

Master

interface

Read address channel

Address

and

control

Read data channel

Read

data

Read

data

Read

data

Read

data

Slave

interface

X12076


As shown in the preceding figures, AXI4:

• Provides separate data and address connections for reads and writes, which allows simultaneous, bidirectional data transfer.

• Requires a single address and then bursts up to 256 words of data.
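The channel separation described above can be summarized in a small data model. This Python sketch is illustrative only. The signal prefixes (AR, R, AW, W, B) follow the naming used in the AMBA AXI specification, while the `Direction` enum and the helper function are hypothetical names introduced for this example.

```python
from enum import Enum


class Direction(Enum):
    MASTER_TO_SLAVE = "master -> slave"
    SLAVE_TO_MASTER = "slave -> master"


# Channel name -> (conventional signal prefix, direction of the payload).
CHANNELS = {
    "read address": ("AR", Direction.MASTER_TO_SLAVE),
    "read data": ("R", Direction.SLAVE_TO_MASTER),
    "write address": ("AW", Direction.MASTER_TO_SLAVE),
    "write data": ("W", Direction.MASTER_TO_SLAVE),
    "write response": ("B", Direction.SLAVE_TO_MASTER),
}

# Channels used by each transaction type, as shown in Figures 1-1 and 1-2.
READ_CHANNELS = ("read address", "read data")
WRITE_CHANNELS = ("write address", "write data", "write response")


def shared_channels(first, second):
    """Return the channels that two transaction types have in common."""
    return set(first) & set(second)
```

Because `shared_channels(READ_CHANNELS, WRITE_CHANNELS)` is empty, a read and a write never compete for the same channel, which is what allows the simultaneous, bidirectional transfer noted above.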

The AXI4 protocol describes options that allow AXI4-compliant systems to achieve very high data throughput. Some of these features, in addition to bursting, are data upsizing and downsizing, multiple outstanding addresses, and out-of-order transaction processing.

At a hardware level, AXI4 allows systems to be built with a different clock for each AXI master-slave pair. In addition, the AXI4 protocol allows the insertion of register slices (often called pipeline stages) to aid in timing closure.

AXI4-Lite is similar to AXI4 with some exceptions, the most notable being that bursting is not supported. The AXI4-Lite chapter of the ARM AMBA AXI Protocol Specification [Ref 1] describes the AXI4-Lite protocol in more detail.

The AXI4-Stream protocol defines a single channel for transmission of streaming data. The AXI4-Stream channel is modeled after the write data channel of AXI4. Unlike AXI4, AXI4-Stream interfaces can burst an unlimited amount of data. There are additional, optional capabilities described in the AMBA4 AXI4-Stream Protocol Specification [Ref 1]. The specification describes how you can split, merge, interleave, upsize, and downsize AXI4-Stream-compliant interfaces.

IMPORTANT: Unlike AXI4, you cannot reorder AXI4-Stream transfers.
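The single-channel, in-order behavior of AXI4-Stream can be sketched with a cycle-by-cycle model of the TVALID/TREADY handshake defined in the AMBA4 AXI4-Stream specification. This Python fragment is a simplified illustration, not a description of any Xilinx IP. The function name `simulate_stream` is hypothetical, and the model assumes the source asserts TVALID whenever it still has data.

```python
def simulate_stream(data, tready_pattern):
    """Model a source sending data over one AXI4-Stream channel.

    data: values the source wants to send, in order.
    tready_pattern: one boolean per clock cycle, the sink's TREADY value.
    Returns (received_values, cycles_simulated).
    """
    received = []
    index = 0    # next value the source will present
    cycles = 0
    for tready in tready_pattern:
        if index >= len(data):
            break                 # everything has been sent
        cycles += 1
        tvalid = True             # assumption: source always has data ready
        if tvalid and tready:     # a transfer happens only when both are high
            received.append(data[index])
            index += 1
        # Otherwise the source holds the same value for the next cycle.
    return received, cycles
```

For example, `simulate_stream([10, 20, 30], [True, False, False, True, True, True])` delivers the three values in their original order over five cycles. Back-pressure from the sink delays transfers but cannot reorder them.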

Figure 1-2: Channel Architecture of Writes. The master interface sends address and control information on the write address channel and a sequence of write data transfers on the write data channel; the slave interface returns a write response on the write response channel.


• Memory-Mapped Protocols: In memory-mapped protocols (AXI3, AXI4, and AXI4-Lite), all transactions involve the concept of transferring a target address within a system memory space and data.

Memory-mapped systems often provide a more homogeneous way to view the system, because the IP operates around a defined memory map.

AXI3-based IP can be integrated into AXI4-based systems for interoperability; however, most Xilinx IP natively adopt AXI4, which contains protocol enhancements compared to AXI3.

Note: The processing system block in the Zynq-7000 AP SoC devices uses AXI3 memory-mapped interfaces. AXI3 is a subset of AXI4, and Xilinx tools automatically insert the necessary adaptation logic to translate between AXI3 and AXI4.

• AXI4-Stream Protocol: Use the AXI4-Stream protocol for applications that typically focus on a data-centric and data-flow paradigm where the concept of an address is not present or not required. Each AXI4-Stream acts as a single unidirectional channel with a handshaking data flow.

At this lower level of operation (compared to the memory-mapped protocol types), the mechanism to move data between IP is defined and efficient, but there is no unifying address context between IP. The AXI4-Stream IP can be better optimized for performance in data flow applications, but also tends to be more specialized around a given application space.

• Infrastructure IP: An infrastructure IP is a building block used to help assemble systems. Infrastructure IP tends to be a generic IP that moves or transforms data around the system using general-purpose AXI4 interfaces and does not interpret data.

Examples of infrastructure IP are:

° AXI Register slices (for pipelining)

° AXI FIFOs (for buffering/clock conversion)

° AXI Interconnect IP and AXI SmartConnect IP (for connecting memory-mapped IP together)

° AXI Direct Memory Access (DMA) engines (for memory-mapped to stream conversion)

° AXI Performance Monitors and Protocol Checkers (for analysis and debug)

° AXI Verification IP (for simulation-based verification and performance analysis)

These IP are useful for connecting IP together into a system, but are not generally endpoints for data.


Combining AXI4-Stream and Memory-Mapped Protocols

A common approach is to build systems that combine AXI4-Stream and AXI memory-mapped IP together. Often a DMA engine can be used to move streams in and out of memory.

For example, a processor can work with DMA engines to decode packets or implement a protocol stack on top of the streaming data to build more complex systems where data moves between different application spaces or different IP.
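The role a DMA engine plays between the two protocol families can be sketched in software. The following Python model is a conceptual illustration only and does not describe the Xilinx AXI DMA registers or behavior. The function names, the `beat_bytes` parameter, and the use of a byte array as "memory" are all assumptions. TLAST is the AXI4-Stream signal that marks the final transfer of a packet; real hardware would also use byte qualifiers such as TKEEP for a partial final beat, which this sketch represents simply as a shorter chunk.

```python
from typing import Iterable, Iterator, Tuple


def memory_to_stream(memory: bytes, address: int, length: int,
                     beat_bytes: int = 4) -> Iterator[Tuple[bytes, bool]]:
    """Read length bytes at address and yield (tdata, tlast) stream beats."""
    if length <= 0 or address < 0 or address + length > len(memory):
        raise ValueError("requested range is outside the memory")
    end = address + length
    for offset in range(address, end, beat_bytes):
        chunk = memory[offset:min(offset + beat_bytes, end)]
        tlast = offset + beat_bytes >= end   # true only on the final beat
        yield chunk, tlast


def stream_to_memory(memory: bytearray, address: int,
                     beats: Iterable[Tuple[bytes, bool]]) -> int:
    """Write stream beats into memory at address; return bytes written."""
    cursor = address
    for tdata, tlast in beats:
        if cursor + len(tdata) > len(memory):
            raise ValueError("stream does not fit in the memory")
        memory[cursor:cursor + len(tdata)] = tdata
        cursor += len(tdata)
        if tlast:
            break                            # end of packet
    return cursor - address
```

The memory-mapped side needs an address and a length, while the stream side carries only data and a packet boundary. That contrast is the conversion a DMA engine performs.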

IP Interoperability

The AXI specification provides a framework that defines protocols for moving data between IP using a defined signaling standard. This standard ensures that IP can exchange data with each other and that data can be moved across a system.

AXI IP interoperability affects:

• The IP application space

• How the IP interprets data

• Which AXI interface protocol is used (AXI4, AXI4-Lite, or AXI4-Stream)

The AXI protocol defines how data is exchanged, transferred, and transformed. The AXI protocol also ensures an efficient, flexible, and predictable means for transferring data.

Data Interpretation

IMPORTANT: The AXI protocol does not specify or enforce the interpretation of data; therefore, you need to understand the data contents, and the different IP must have a compatible interpretation of the data.

For IP such as a general purpose processor with an AXI4 memory-mapped interface, there is a great degree of flexibility in how to program a processor to format and interpret data as required by the Endpoint IP.

IP Compatibility

For more application-specific IP, like an Ethernet MAC (EMAC) or a Video Display IP using AXI4-Stream, the compatibility of the IP is more limited to their respective application spaces. For example, directly connecting an Ethernet MAC to the Video Display IP is not feasible.


Note: Even though two IP, such as EMAC and Video Streaming, can theoretically exchange data with each other, they would not function together because the two IP interpret bit fields and data packets in a completely different manner.
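The point about incompatible interpretation can be made concrete with a short sketch. In the following Python fragment, the same four bytes of stream data are decoded in two different ways. Both layouts are hypothetical and chosen only for illustration; they are not the actual data formats of any Xilinx Ethernet or video IP.

```python
import struct

# One 32-bit stream beat as it might appear on TDATA (arbitrary example value).
beat = bytes([0x12, 0x34, 0x56, 0x78])


def as_pixel(raw: bytes) -> dict:
    """Hypothetical video-style view: one padding byte, then R, G, B."""
    _pad, red, green, blue = struct.unpack("4B", raw)
    return {"r": red, "g": green, "b": blue}


def as_header(raw: bytes) -> dict:
    """Hypothetical network-style view: two big-endian 16-bit fields."""
    kind, length = struct.unpack(">HH", raw)
    return {"type": kind, "length": length}
```

Both functions accept the beat without error, so the transfer itself succeeds, but `as_pixel(beat)` and `as_header(beat)` return unrelated values. The protocol moves the bits; agreement on their meaning has to come from the IP on each end.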

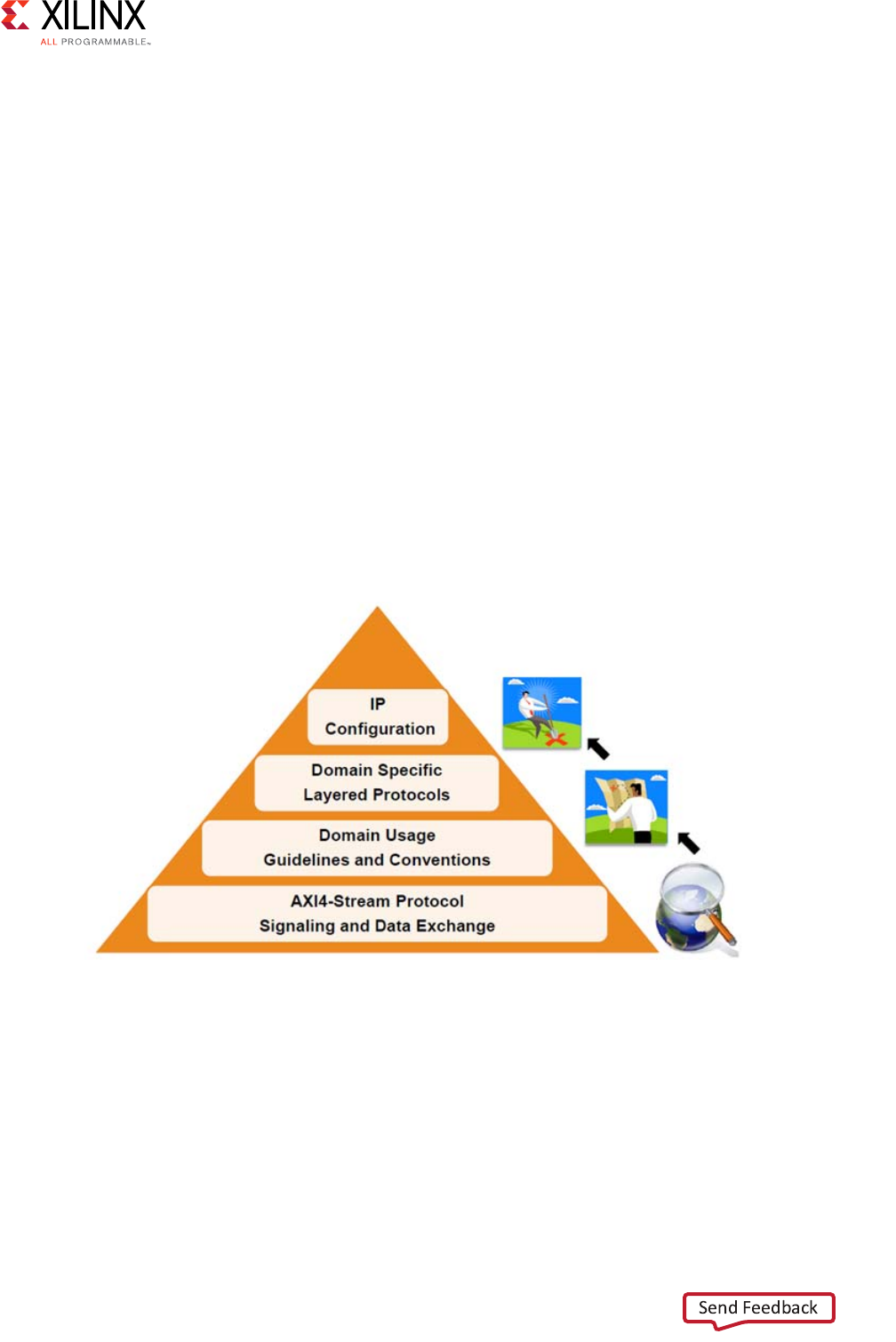

AXI4-Stream IP Interoperability

Chapter 7, “AXI4-Stream IP Interoperability: Tips and Hints,” provides an overview of the

main elements and steps for building an AXI4-Stream system with interoperable IP. These

key elements include understanding the AXI protocol, learning domain specific usage

guidelines, using AXI infrastructure IP as system building blocks, and validating the final

result. You can be most effective if you follow these steps:

1. Review the AXI4 documents:

°AMBA4 AXI4-Stream Protocol Specification [Ref 1]

°LogiCORE IP AXI Interconnect IP Product Guide (PG059) [Ref 12]

°LogicCORE IP AXI SmartConnect Product Guide (PG247) [Ref 23]

°Chapter 7, “AXI4-Stream IP Interoperability: Tips and Hints.”

°LogicCore IP AXI4-Stream Interconnect Product Guide (PG085) [Ref 14]

2. Understand IP domains:

°Review data types and layered protocols in Chapter 7, “AXI4-Stream IP

Interoperability: Tips and Hints.”

°Review the list of AXI IP available at: the Xilinx IP Center website [Ref 3].

°Understand the domain-level guidelines described in Domain Usage Guidelines and

Conventions in Chapter 7.

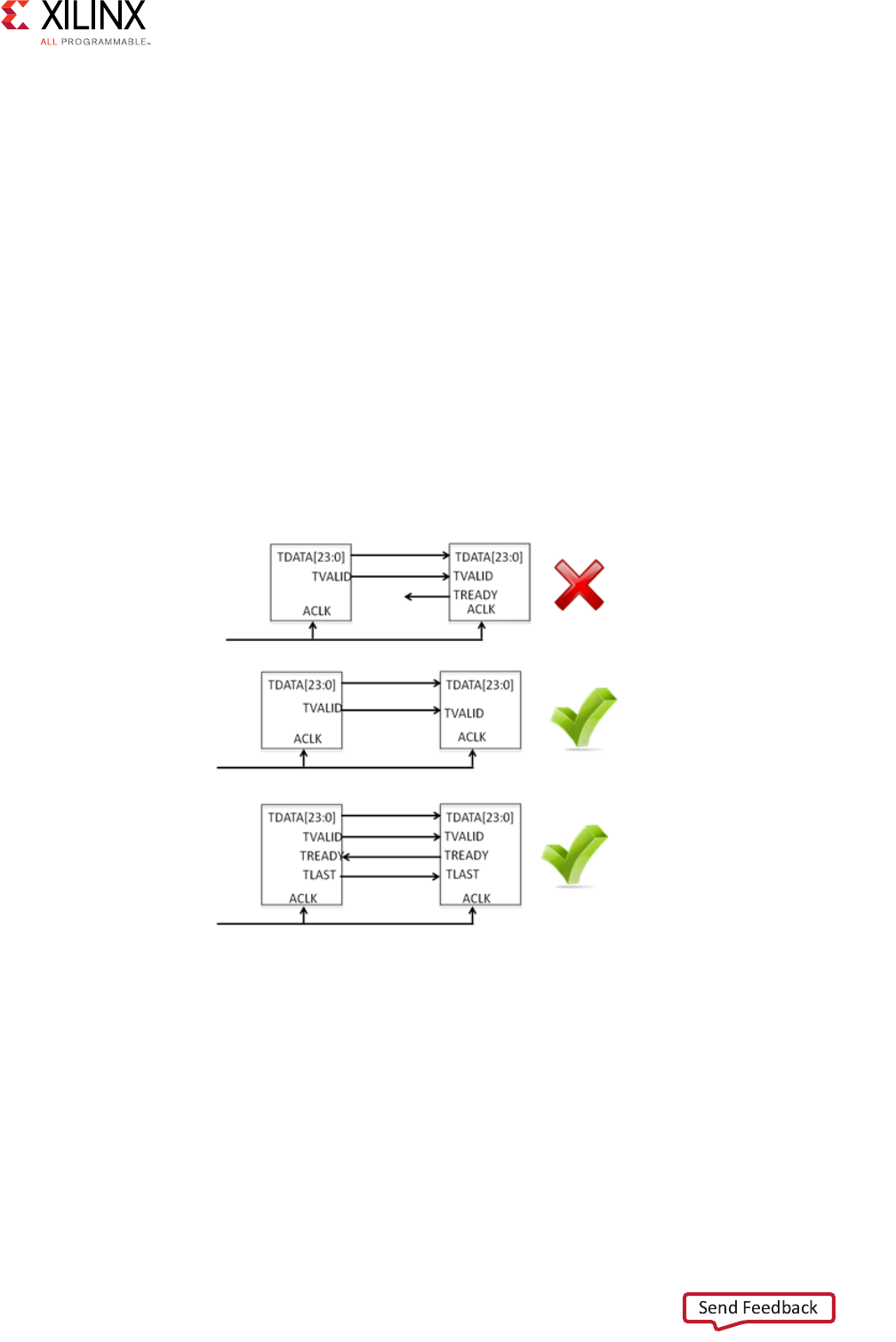

3. Use the following steps when creating your system:

a. Configure IP to share compatible data types and protocols.

b. Use Infrastructure IP or converters where necessary.

c. Validate the system.

Quick Take Videos

The following quick take videos provide more information about using the AXI protocol with the Vivado Design Suite and other Xilinx development tools:

VIDEOS:

Vivado Design Suite QuickTake Video: Targeting Zynq Devices Using Vivado IP Integrator

Vivado Design Suite QuickTake Video: Designing with Vivado IP Integrator



Chapter 2

AXI Support in Xilinx Tools and IP

Introduction

This chapter describes how you can use Xilinx® tools to deploy individual pieces of AXI IP or to build systems of interconnected Xilinx AXI IP (using the Vivado® IP integrator in the Vivado® Design Suite).

Using Vivado AXI IP in RTL Projects

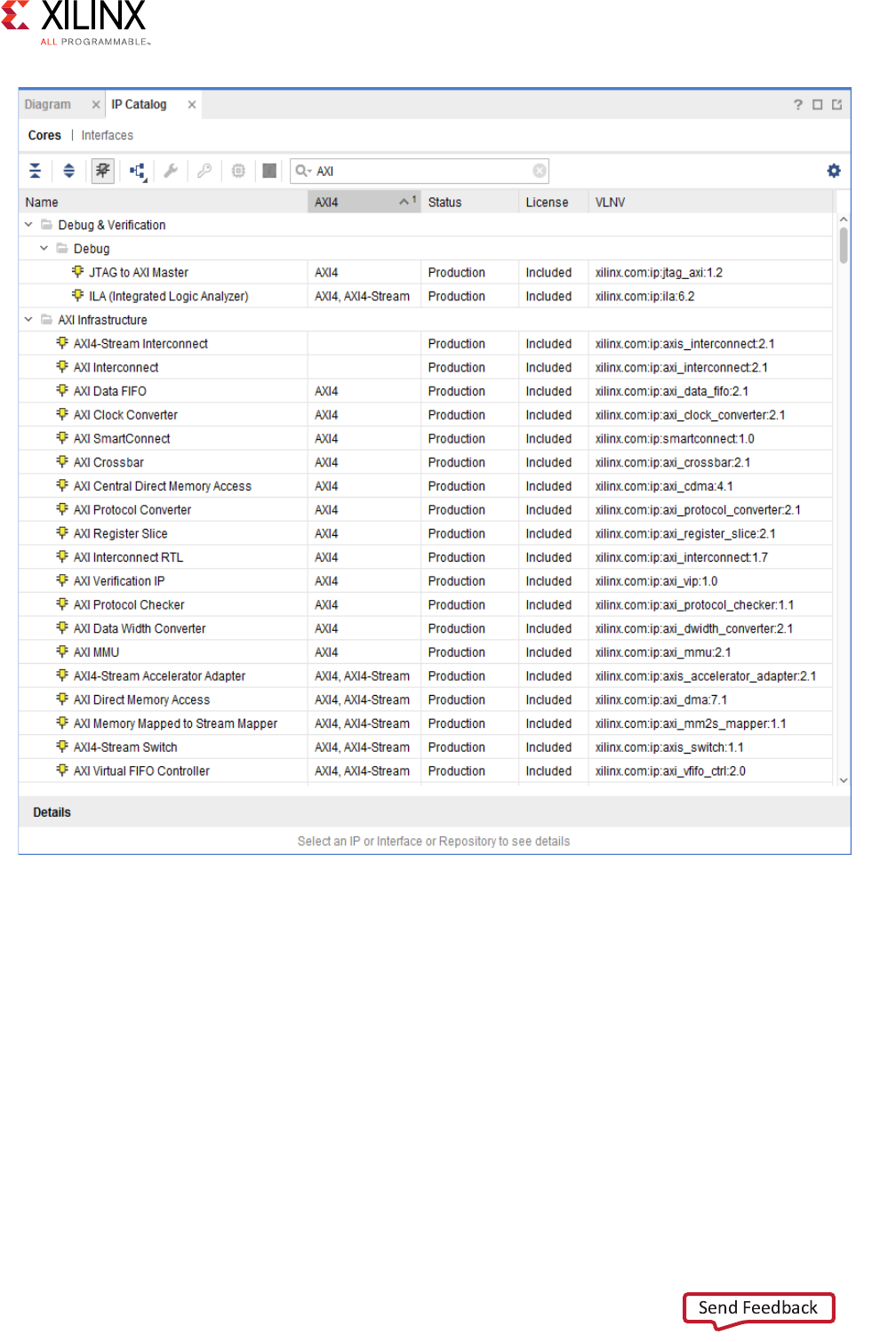

In the Vivado Integrated Development Environment (IDE), you can access Xilinx IP with an

AXI4 interface directly from the Vivado IP Catalog and instantiate that IP directly into an

register transfer logic (RTL) design (Figure 2-1).


In the IP catalog, the AXI4 column shows which AXI4 interfaces each IP supports: AXI4 (memory-mapped), AXI4-Stream, or none.

Xilinx IP are designed to support AXI where applicable. For more information about using IP from the Vivado IP catalog in an RTL flow, see the Vivado Design Suite User Guide: Designing with IP (UG896) [Ref 30].

Figure 2-1: IP Catalog in Xilinx Tools


Using the Create and Package IP Wizard for AXI IP

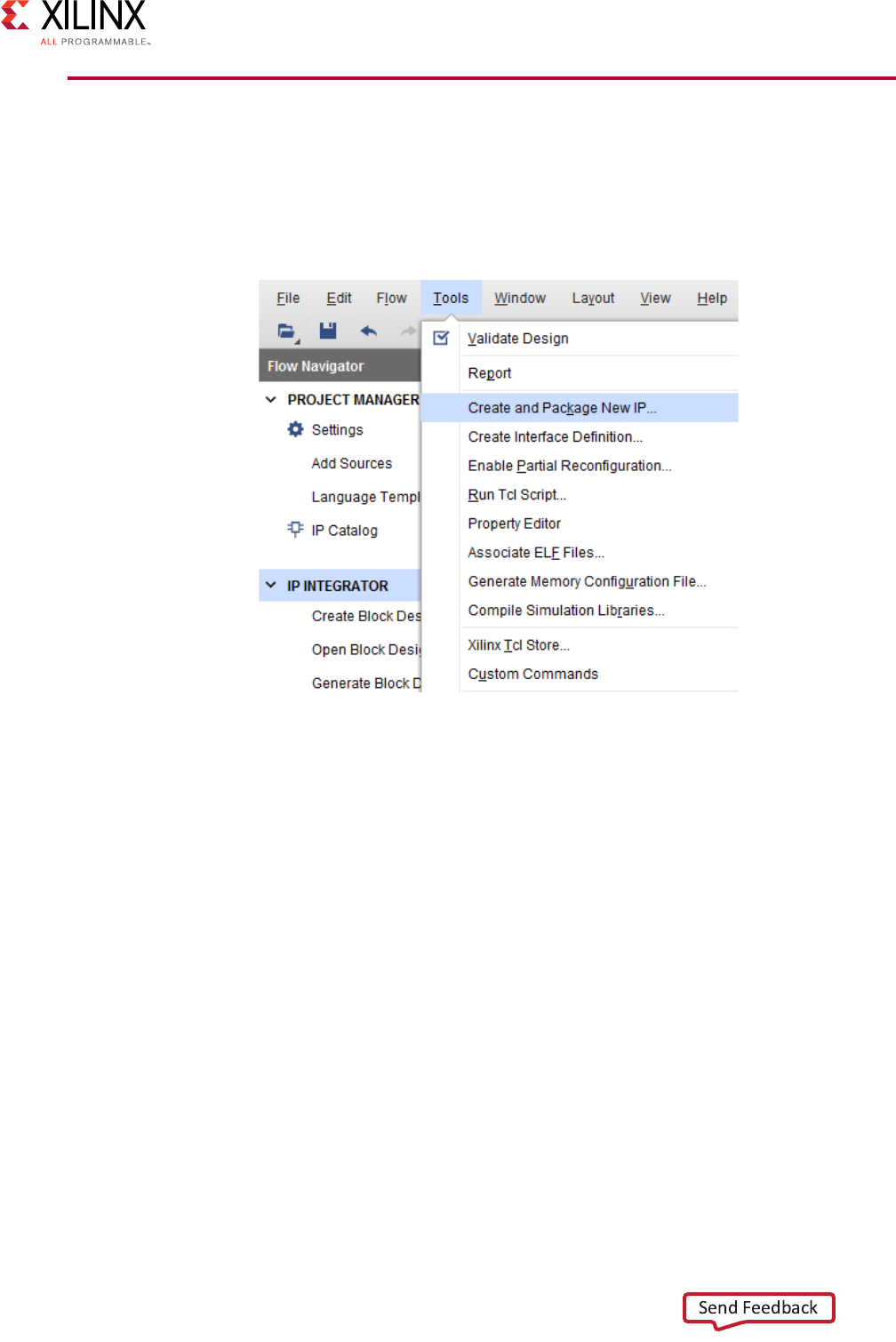

The Vivado IDE provides a Create and Package IP wizard that takes you through all the

required steps and settings for AXI IP. To create and package new IP:

1. From the Tools menu, select Create and Package IP, as shown in the following figure.

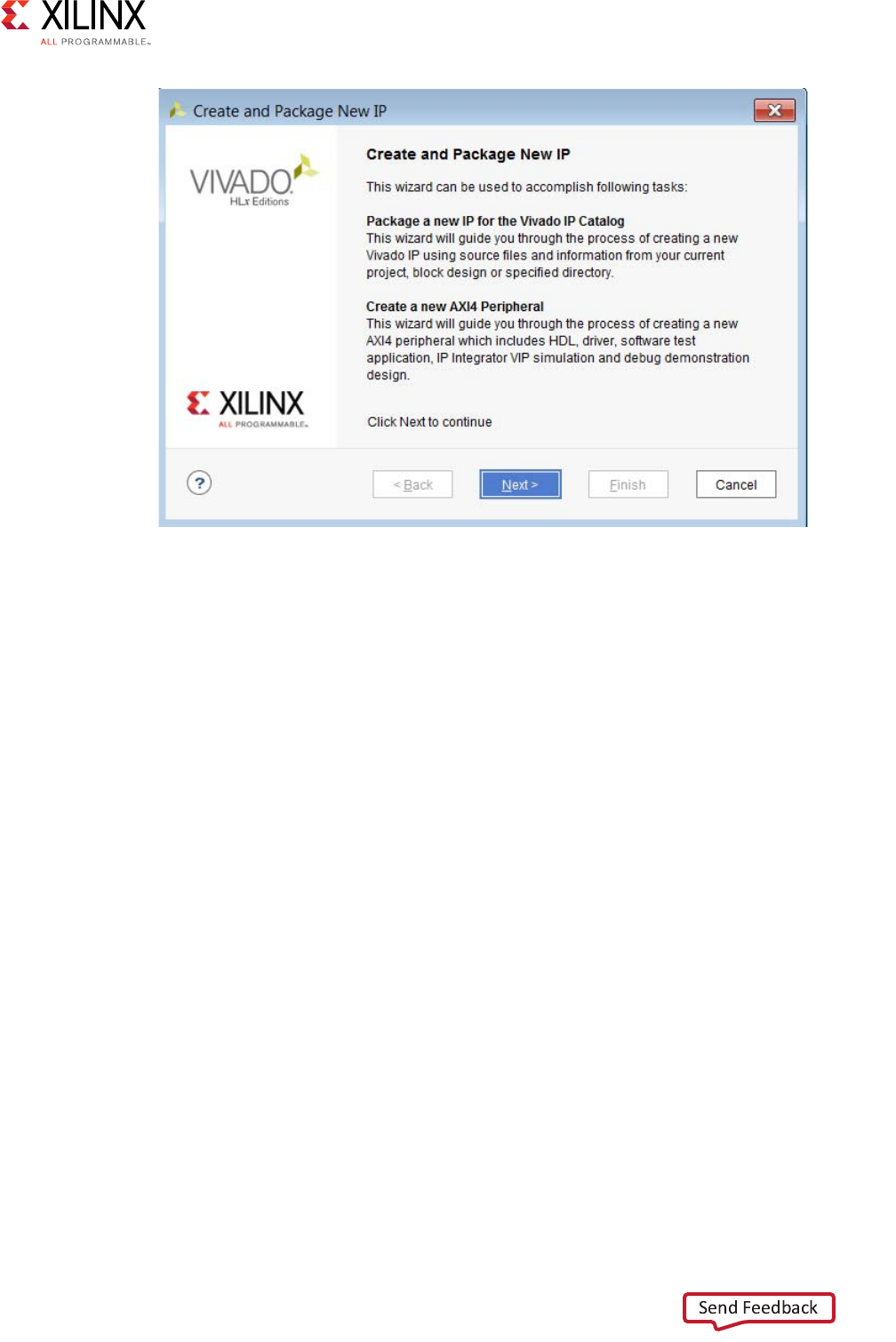

The Create And Package IP wizard opens, as shown in Figure 2-3.

X-Ref Target - Figure 2-2

Figure 2-2: Create and Package IP Option

Vivado AXI Reference Guide www.xilinx.com 15

UG1037 (v4.0) July 15, 2017

Chapter 2: AXI Support in Xilinx Tools and IP

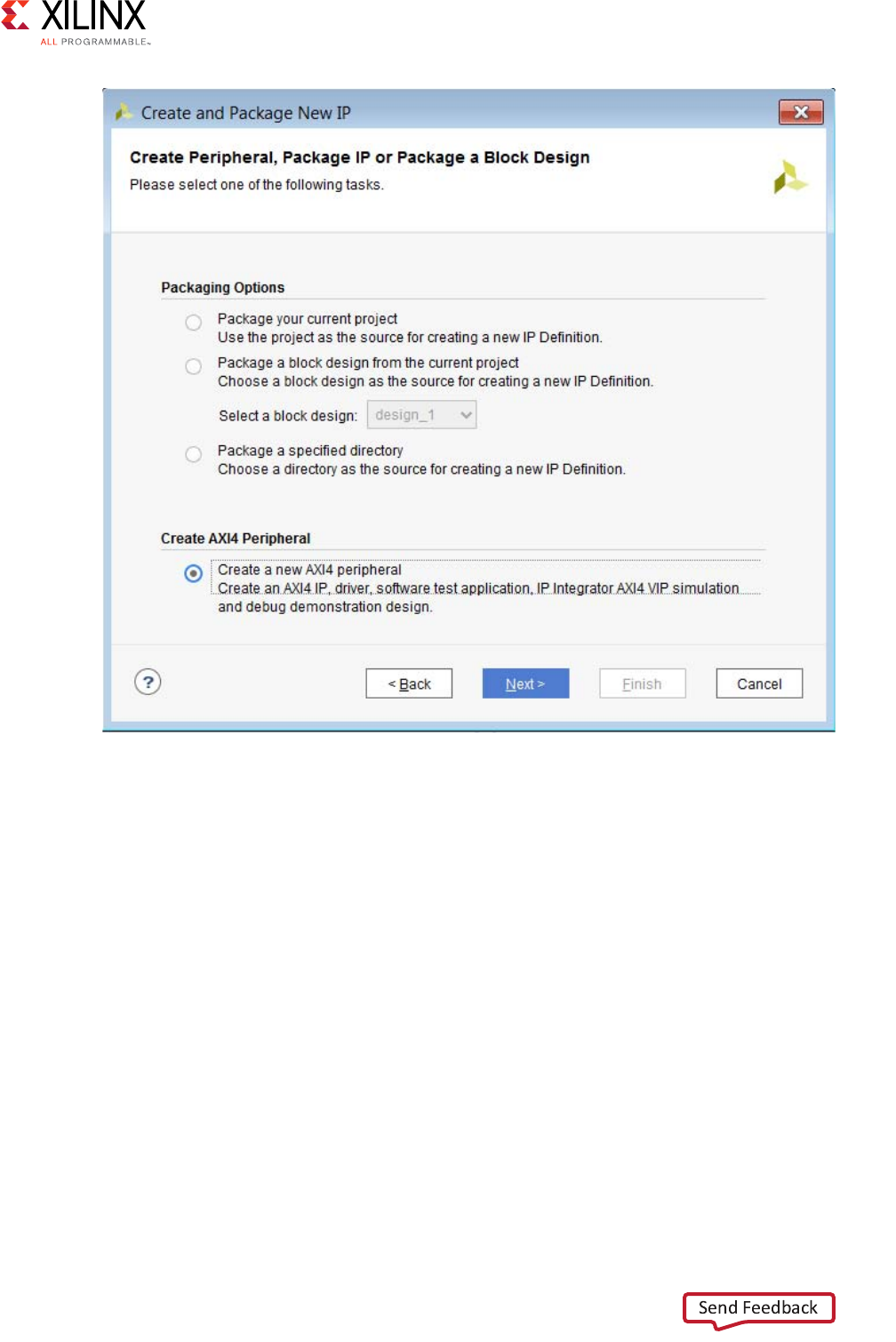

2. Select Create a new AXI4 Peripheral to create a template AXI4 peripheral that includes HDL, drivers, a test application, and a BFM example template.

3. Click Next.

The Choose Create Peripheral or Package IP page opens, as shown in Figure 2-4.

Figure 2-3: Create and Package IP Dialog Box


4. Click Next.

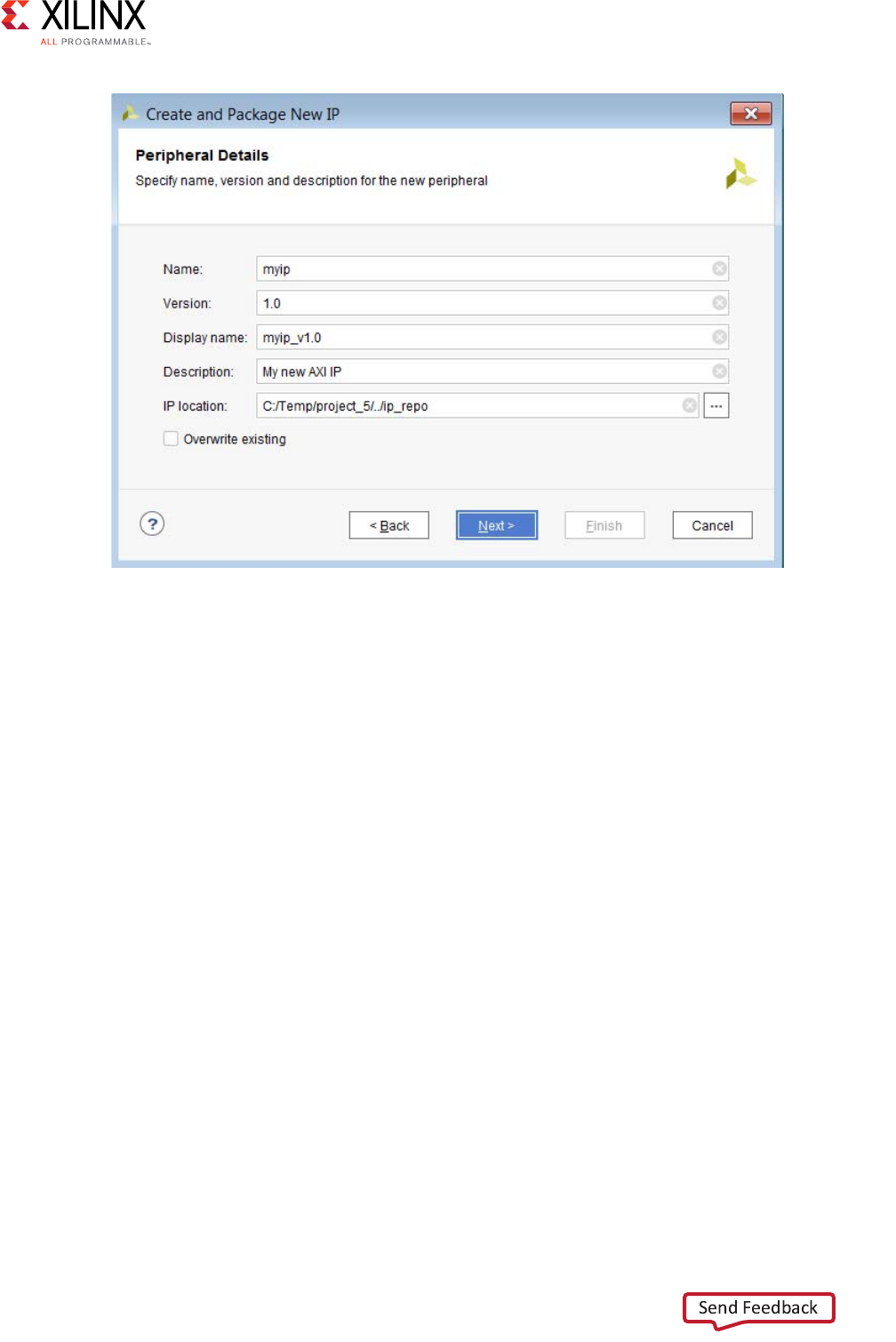

The Peripheral Details page opens.

5. Enter the IP details in the Peripheral Details page, as shown in Figure 2-5.

X-Ref Target - Figure 2-4

Figure 2-4: Choose Create or Package IP Dialog Box


The Display Name you provide is shown in the Vivado IP catalog.

You can have different names in the Name and Display Name fields; any change in Name is reflected automatically in the Display Name, which is concatenated with the Version field.

6. Click Next.

7. Select the location where you want to store the IP.

The Vivado IP tool adds the location automatically to the IP repository list.

8. Click Next.

Figure 2-5: IP Details

Vivado AXI Reference Guide www.xilinx.com 18

UG1037 (v4.0) July 15, 2017

Chapter 2: AXI Support in Xilinx Tools and IP

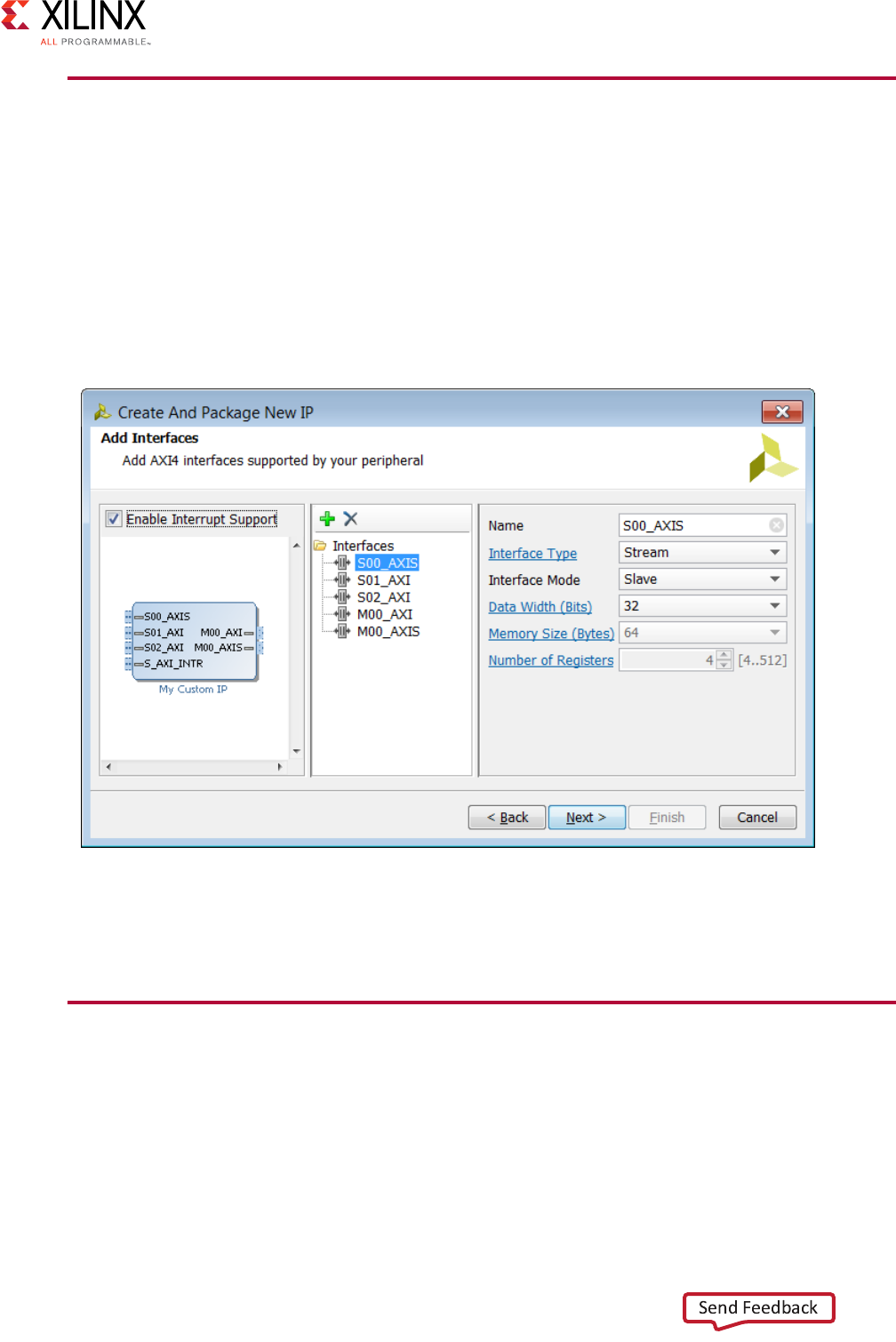

9. Add interfaces to your IP based on the functionality and the required AXI type, as shown

in the following figure.

10. To include interrupts to be available in your IP, check the Enable Interrupt Support

check box.

The IP that this example shows would support edge or level interrupt (generated locally

on the counter), which can be extended to input ports by user and IRQ output.

The data width and the number of registers vary, based on the AXI4 selection type.

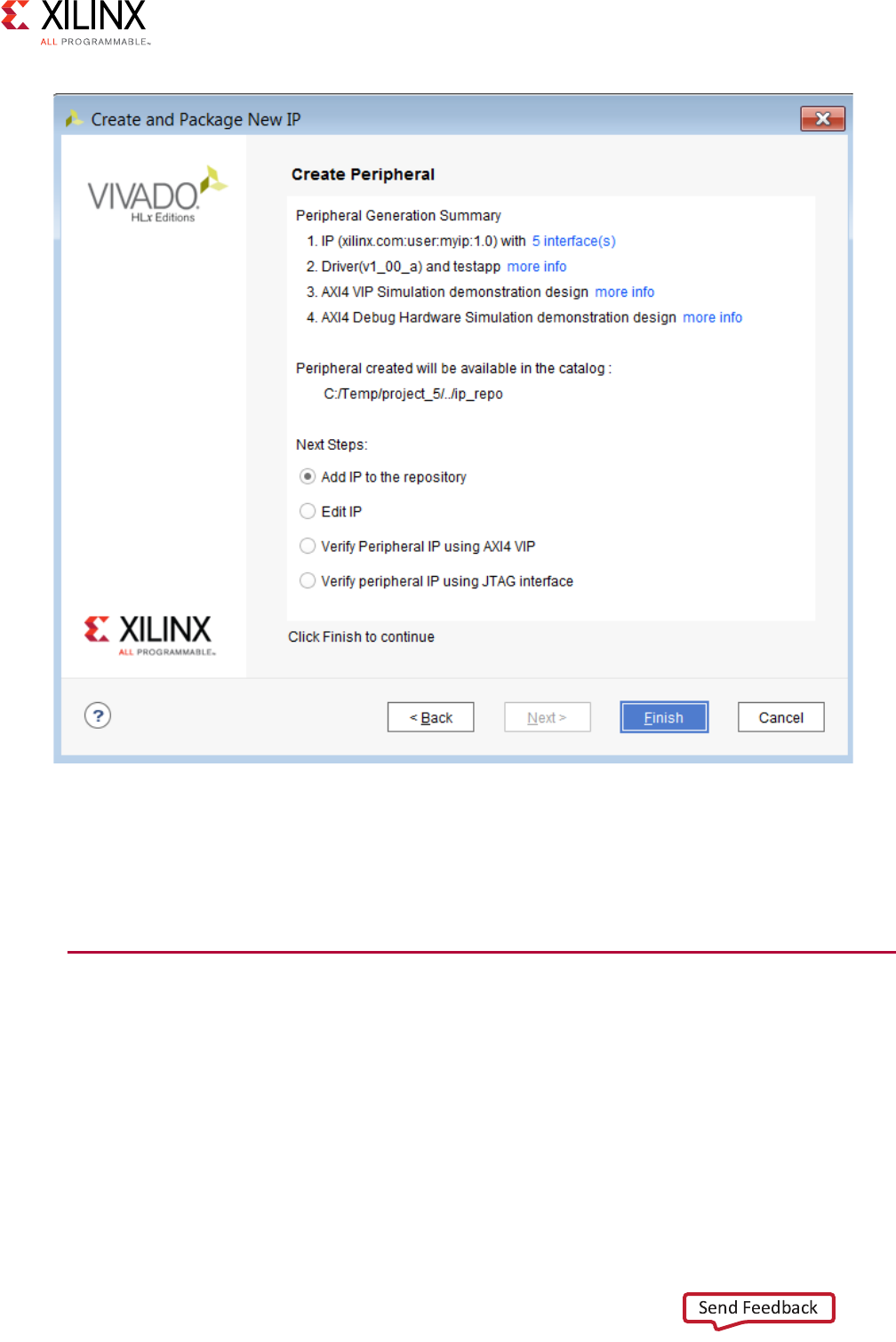

11. Click Next and review your selections.

The final page of the wizard lists the details of your IP, as shown in Figure 2-7.

X-Ref Target - Figure 2-6

Figure 2-6: Create and Package New IP


After you generate the IP, additional options are available.

See the Vivado Design Suite User Guide: Creating and Packaging Custom IP (UG1118) [Ref 41] for more information regarding creating and packaging AXI IP.
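As a rough illustration of how the data width and register count chosen in the wizard interact in a memory-mapped register interface, the following Python sketch computes register byte offsets and the minimum number of address bits needed to reach them. It assumes registers are packed back to back at data-width-aligned offsets, which is a common convention but is not confirmed here as the wizard's actual output. The function names are hypothetical.

```python
from typing import List


def register_offsets(num_registers: int, data_width_bits: int = 32) -> List[int]:
    """Byte offset of each register, assuming back-to-back aligned packing."""
    if num_registers < 1:
        raise ValueError("at least one register is required")
    if data_width_bits % 8 != 0:
        raise ValueError("data width must be a whole number of bytes")
    stride = data_width_bits // 8          # bytes occupied by one register
    return [index * stride for index in range(num_registers)]


def min_address_bits(num_registers: int, data_width_bits: int = 32) -> int:
    """Smallest address width that can reach every byte of the register block."""
    offsets = register_offsets(num_registers, data_width_bits)
    last_byte = offsets[-1] + data_width_bits // 8 - 1
    return max(1, last_byte.bit_length())
```

Under this assumption, four 32-bit registers sit at byte offsets 0, 4, 8, and 12 and need a 4-bit address, while widening the data bus or adding registers increases the address range accordingly.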

Adding AXI IP to the IP Catalog Using Vivado IP Packager

The Vivado IP packager tool, shown in Figure 2-8, lets you prepare a design for use in the Vivado IP catalog. You can then instantiate this IP into a design in the Vivado Design Suite using the Vivado IP integrator.

Figure 2-7: Create Peripheral Summary Page


When you use the Vivado Design Suite IP packaging flow for IP development, you get a more consistent user experience, better tool integration, and easier reuse of the IP design in the Vivado IDE. The IP packager is AXI-aware and can auto-recognize AXI signals. See Appendix A, AXI Adoption Summary, for a summary of the Xilinx AXI adoption per signal.

Figure 2-8: Vivado IP Packager Tool


See the following documents for more information:

•Vivado Design Suite User Guide: Designing IP Subsystems Using IP Integrator (UG994)

[Ref 38]

•Vivado Design Suite User Guide: Creating and Packaging Custom IP (UG1118) [Ref 41]

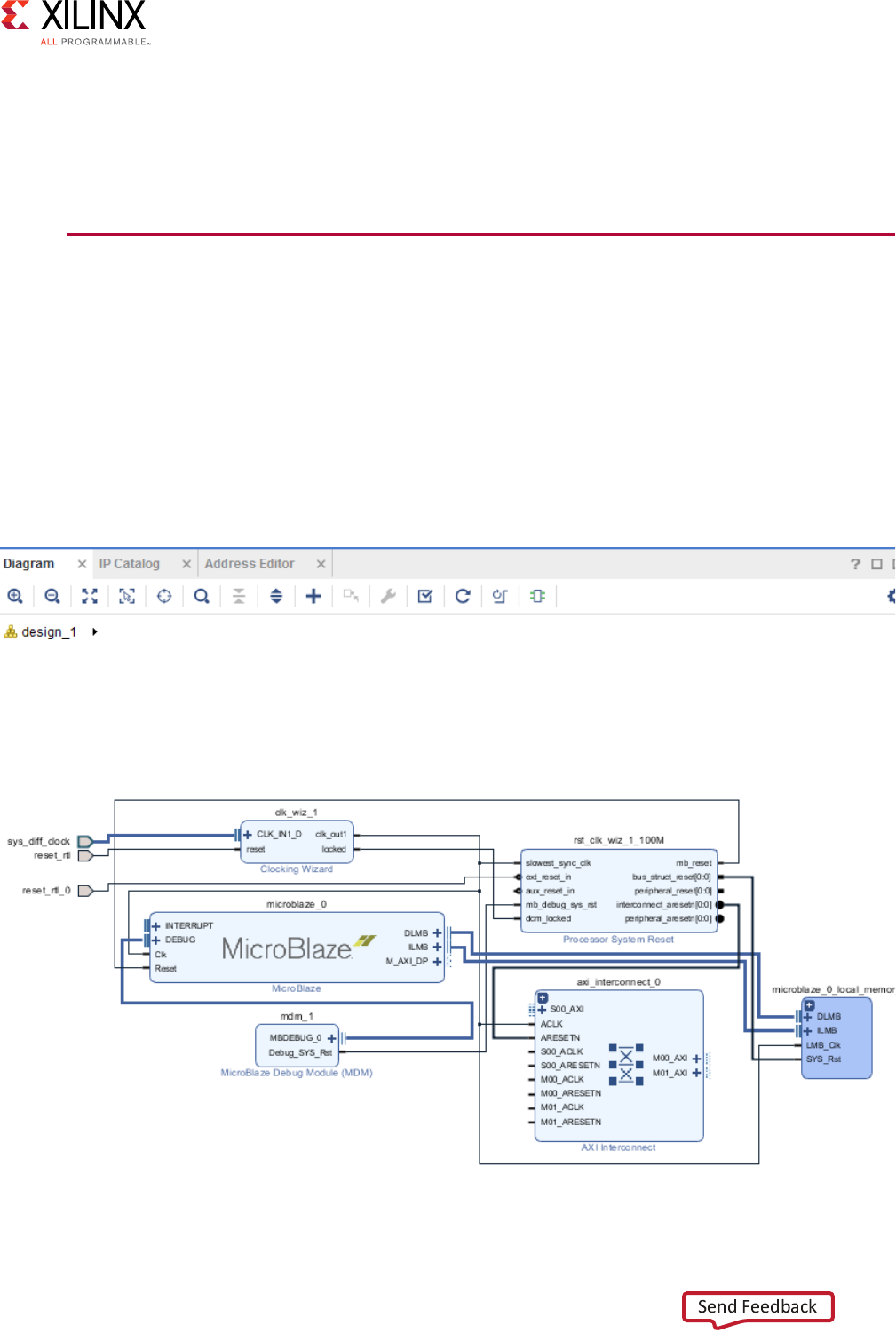

Using Vivado IP Integrator to Assemble AXI IP

The Vivado IP integrator feature lets you create complex system designs by instantiating

and interconnecting IP from the Vivado IP Catalog on a design block. You can create

designs interactively through the IP integrator GUI or programmatically through a Tcl

programming interface.

The Vivado IP integrator interprets AXI4 and AXI4-Stream interfaces, allowing you to

construct designs at the interface level (for enhanced productivity). See the following

figure.


Figure 2-9: Vivado IP Integrator Diagram and AXI Interface Level Connections


An interface is a grouping of signals that share a common function. An AXI4 master

interface, for example, contains a large number of individual signals which are required to

be wired correctly to make a connection.

If each signal in an interface is presented separately, the IP symbol is visually very complex

and requires more effort to connect. By grouping these signals into a single AXI interface,

a single Tcl command or GUI connection can be made using a higher level of abstraction.

The Vivado IP integrator contains design rule checks (DRCs) and automation facilities that
are aware of each specific AXI interface to help ensure that the required signals are
connected and configured properly. IP integrator offers design assistance as you develop
your design.

For more information about the Vivado IP integrator, see Vivado Design Suite User Guide:

Designing IP Subsystems Using IP Integrator (UG994) [Ref 38].

Using AXI IP in System Generator for DSP

System Generator for DSP® is a design tool that lets you use the MathWorks
model-based Simulink® design environment for FPGA design. Designs are
captured in a DSP-friendly Simulink modeling environment using an optimized
Xilinx-specific blockset.

System Generator supports the following:

• AXI4-Lite and AXI4-Stream interfaces which move data in and out of the DSP design

created with System Generator. By using AXI4-Lite and AXI4-Stream for data transfer, it

is easier to integrate a DSP design into a broader system using the Vivado Design Suite.

• Libraries of IP that users connect together using their AXI4-Stream interfaces. The DSP

designs created using System Generator can be created using AXI4-Stream based DSP

blocks.

For more information on using this tool to create AXI-based IP, see Vivado Design Suite User

Guide: Model-Based DSP Design using System Generator (UG897) [Ref 31]. Also, see the

System Generator for DSP website [Ref 49].


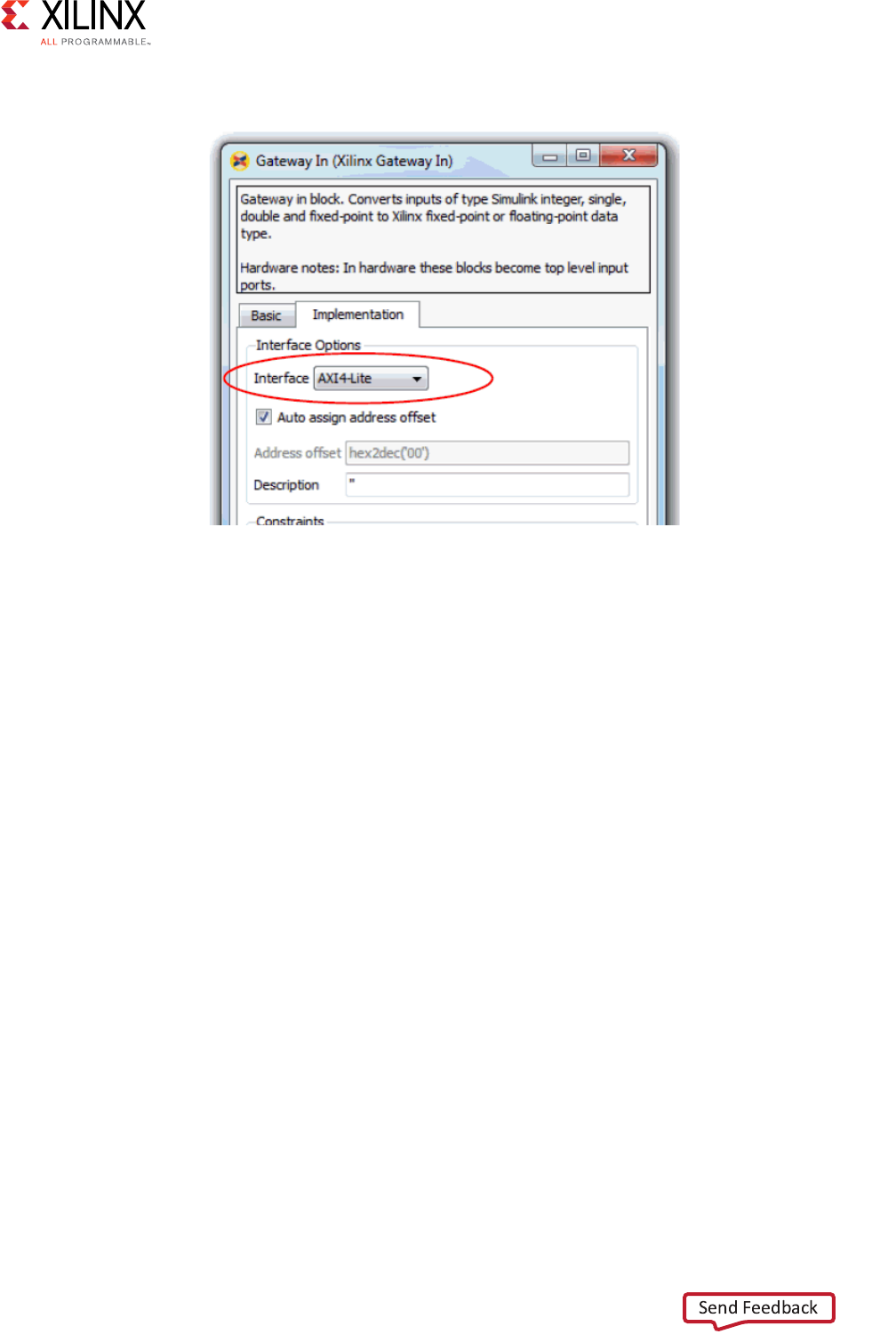

The following figure shows how to specify an AXI4-Lite interface on a Gateway In block.

Port Name Truncation

System Generator shortens the AXI4-Stream signal names to improve readability on the

block; this is cosmetic and the complete AXI4-Stream name is used in the netlist.

The name truncation is turned on by default; uncheck the Display shortened port names

option in the block parameter dialog box to see the full name.

Port Groupings

System Generator groups together and color-codes blocks of AXI4-Stream channel signals.

In the example illustrated in the following figure, the top-most input port, data_tready,

and the top two output ports, data_tvalid and data_tdata belong in the same

AXI4-Stream channel, as well as phase_tready, phase_tvalid, and phase_tdata.


Figure 2-10: Specifying an AXI4-Lite Interface in System Generator for DSP


System Generator gives signals that are not part of any AXI4-Stream channel the same
background color as the block; the aresetn signal, shown in the following figure, is an
example.

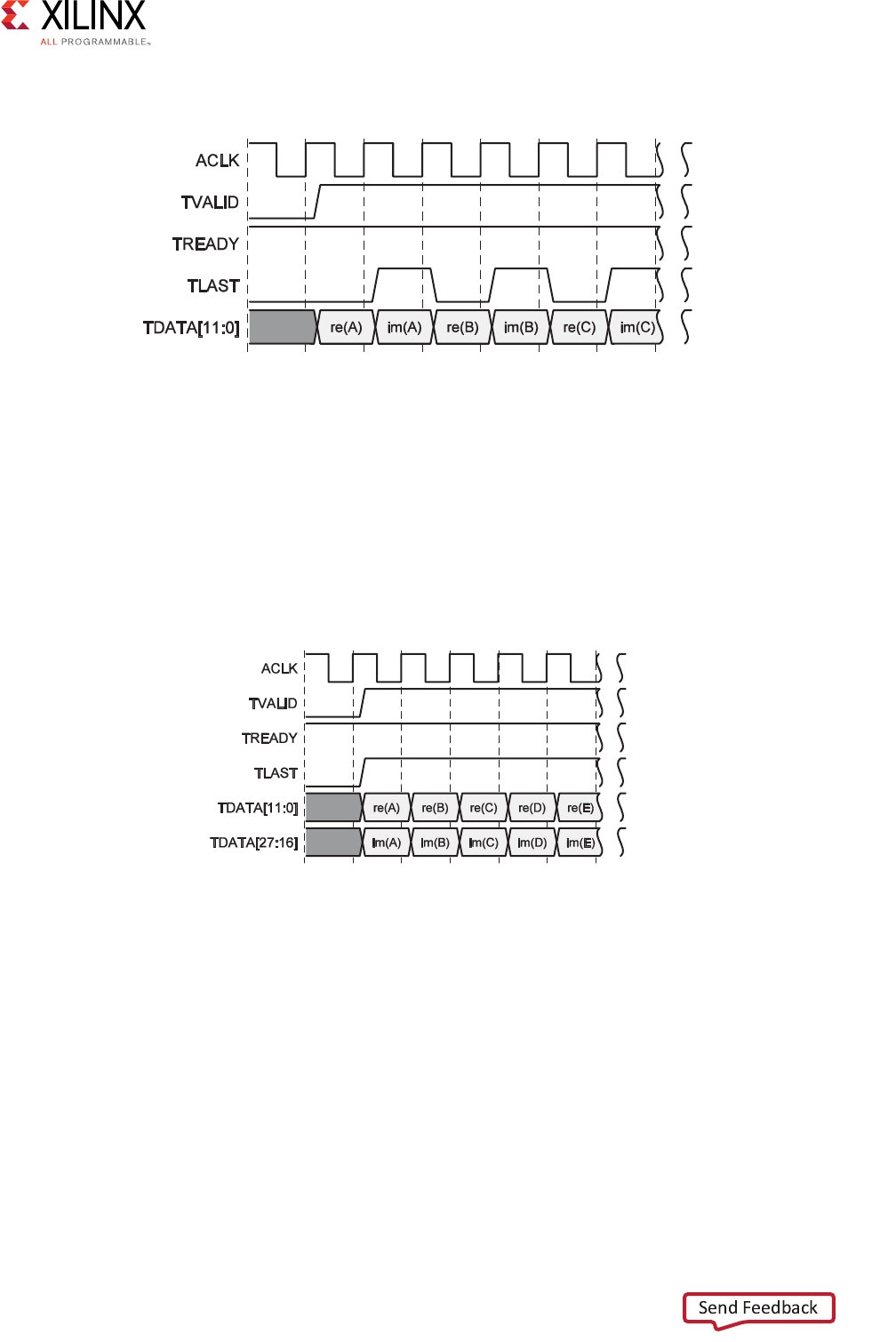

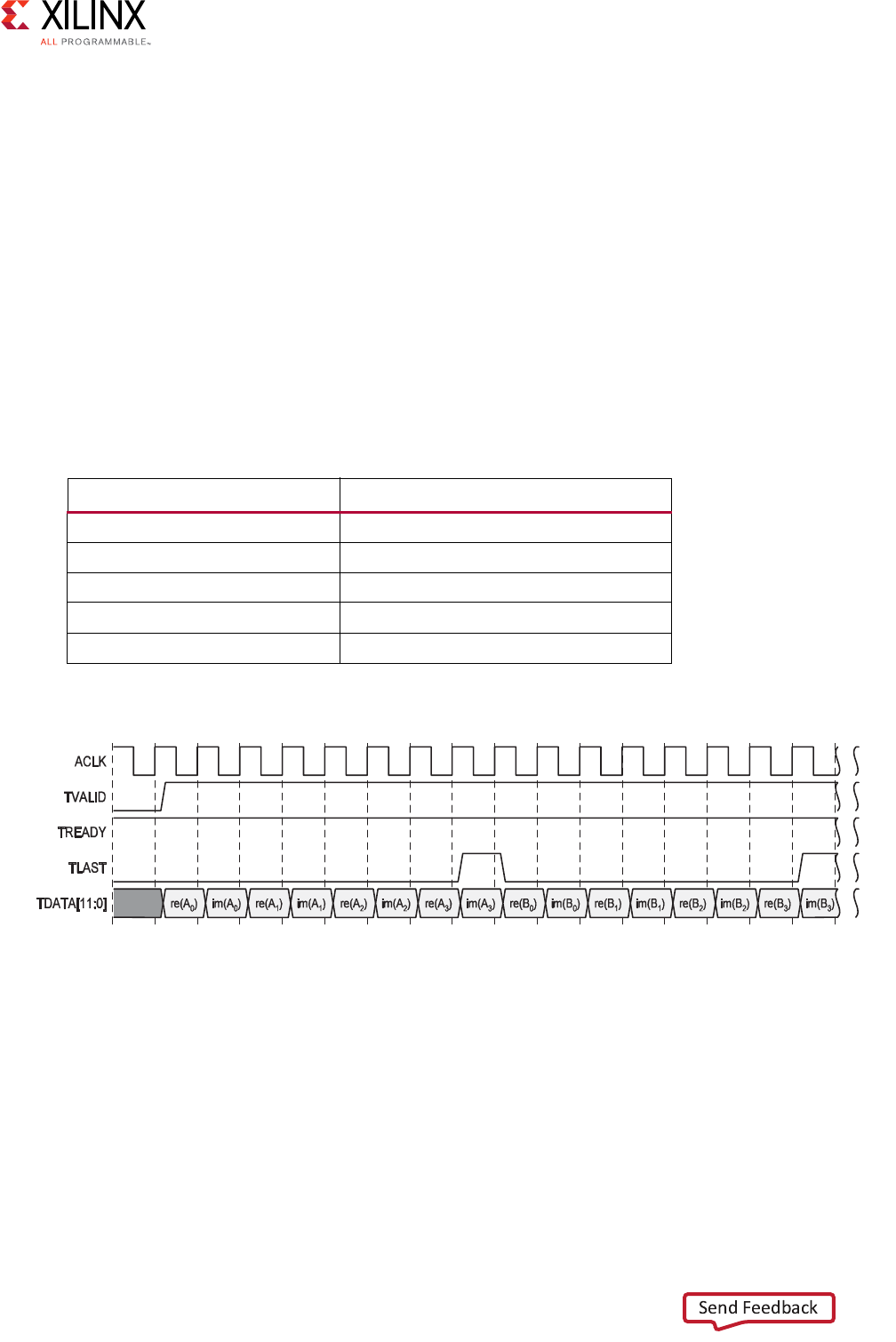

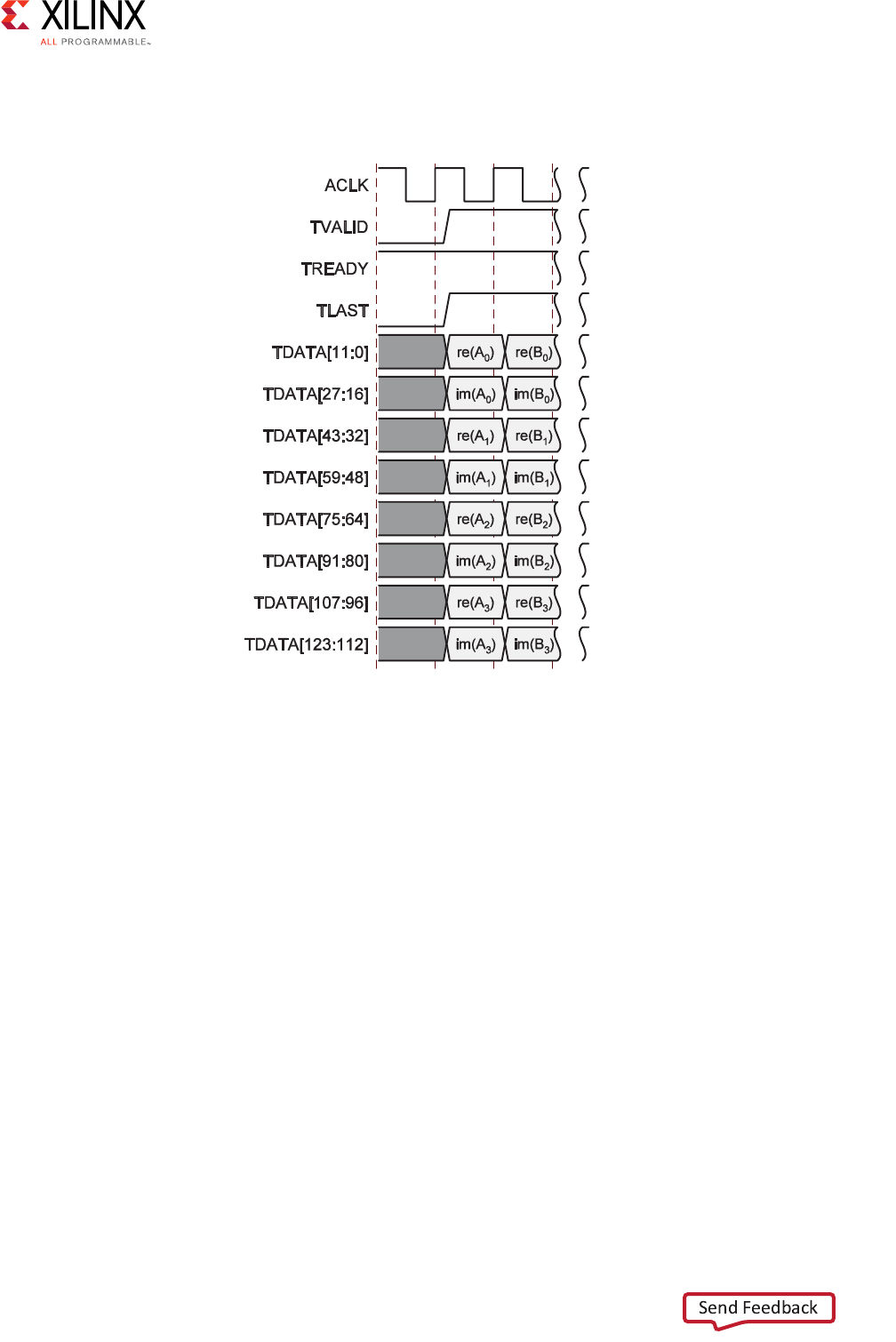

Breaking Out Multichannel TDATA

The tdata signal in an AXI4-Stream can contain multiple channels of data. In System

Generator, the individual channels for tdata are broken out; for example, in the complex

multiplier shown in the following figure, the tdata for the dout port contains both the

imaginary and the real number components.

Figure 2-11: Block Signal Groupings

Figure 2-12: Multi-Channel TDATA

Note: Breaking out multichannel tdata does not add logic to the design. The data is
also correctly byte-aligned.
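As a minimal sketch of what breaking out a multichannel tdata means, the following plain C model packs two channels into one tdata word and extracts them again. The function names, the 16-bit channel width, and the placement of the real component in the low half are assumptions for illustration only; the actual layout is defined by the block you use.

```c
#include <assert.h>
#include <stdint.h>

#define CHANNEL_BITS 16u  /* assumed byte-aligned channel width */
#define NUM_CHANNELS 2u   /* assumed: channel 0 = real, channel 1 = imaginary */

/* Pack two signed 16-bit samples into one 32-bit tdata word. */
static uint32_t pack_tdata(int16_t re, int16_t im)
{
    return (uint32_t)(uint16_t)re | ((uint32_t)(uint16_t)im << CHANNEL_BITS);
}

/* Break one channel back out of the packed word. */
static int16_t tdata_channel(uint32_t tdata, unsigned index)
{
    assert(index < NUM_CHANNELS);
    return (int16_t)(uint16_t)(tdata >> (index * CHANNEL_BITS));
}
```

Calling tdata_channel(word, 0) and tdata_channel(word, 1) on the same word returns the two components separately, which mirrors how the block exposes them as separate ports.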


Adding AXI Interfaces Using High Level Synthesis

The Vivado High Level Synthesis (HLS) tool transforms a C, C++, SystemC, or OpenCL
design specification into a Register Transfer Level (RTL) implementation that you can
then synthesize into an FPGA device.

Vivado HLS supports adding AXI interfaces through which you can transfer information
into and out of a design synthesized from the C, C++, SystemC, or OpenCL description.
This feature combines the ability to implement algorithms using a higher level of
abstraction with the plug-and-play benefits of the AXI protocol to integrate that design
into a system with ease.

You can integrate Vivado HLS designs with AXI interfaces through Vivado IP integrator, or

instantiate directly into RTL designs.

C-based designs perform I/O operations in zero time, through formal function arguments.
In an RTL design, you perform I/O operations through ports in the design interface, which
typically operate using a specific I/O protocol.

Vivado HLS supports the automatic synthesis of function arguments into the following AXI4

interfaces:

•AXI4-Stream (axis)

• AXI4-Lite slave (s_axilite)

• AXI4 master (m_axi)

The following subsections provide a brief description of the Vivado HLS AXI functionality.

For more information, see the Vivado Design Suite User Guide: High-Level Synthesis (UG902)

[Ref 32].

HLS AXI4-Stream Interface

You can apply an AXI4-Stream interface (axis mode) to any input argument and any array

or pointer output argument. Because the AXI4-Stream interface transfers data in a

sequential streaming manner it cannot be used with arguments which are both read and

written.

You can use an AXI4-Stream interface in your design with or without side-channels.

•With side-channels: Using AXI4-Stream interface with side-channels provides

additional functionality, allowing the optional side-channels which are part of the

AXI4-Stream standard, to be used directly in the C code.

•Without side-channels: Use the AXI4-Stream when the data type does not contain any

AXI4 side-channel elements.
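To make the idea of side-channels concrete, the following is a minimal sketch of one AXI4-Stream beat that carries TKEEP and TLAST alongside the data. The struct and function names are hypothetical and this is a plain C model, not the data type that the Vivado HLS libraries provide; see UG902 for the types the tool actually expects.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical model of one AXI4-Stream beat; not a Vivado HLS library type. */
typedef struct {
    uint32_t tdata;  /* payload */
    uint8_t  tkeep;  /* one bit per valid payload byte */
    uint8_t  tlast;  /* 1 on the final beat of a packet */
} axis_beat_t;

/* Fill n beats from src and flag only the last one with TLAST. */
static void make_packet(axis_beat_t *beats, const uint32_t *src, size_t n)
{
    assert(beats != NULL && src != NULL);
    for (size_t i = 0; i < n; i++) {
        beats[i].tdata = src[i];
        beats[i].tkeep = 0xF;             /* all four bytes valid */
        beats[i].tlast = (i == n - 1);    /* only the final beat */
    }
}
```

Without side-channels, only the tdata member would remain, which is the case the plain int example in this section covers.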


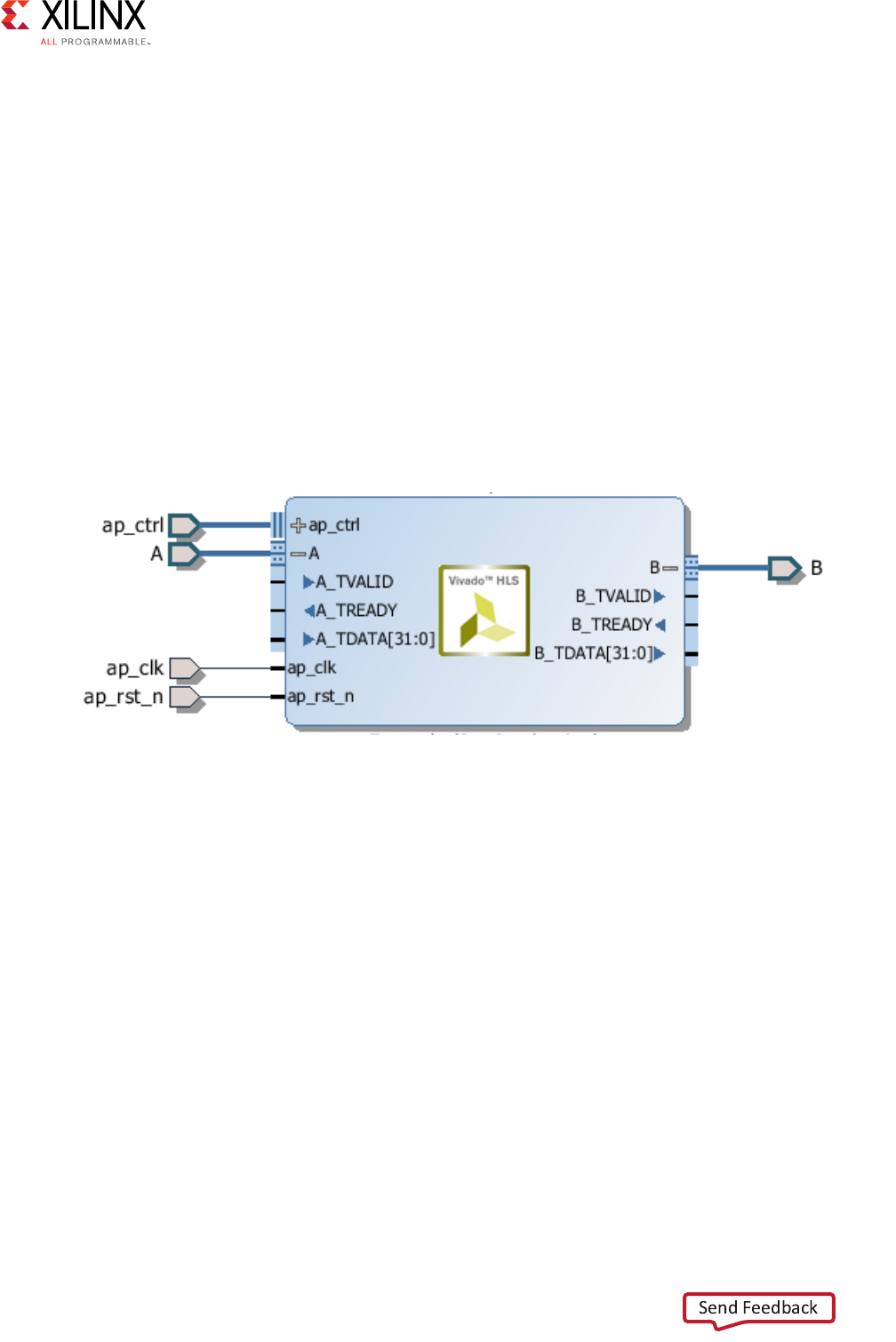

The following example shows a design where the data type is a standard C int type. In this

example, both interfaces are implemented using AXI4-Stream:

    void example(int A[50], int B[50]) {
      // Set the HLS native interface types
    #pragma HLS INTERFACE axis port=A
    #pragma HLS INTERFACE axis port=B
      int i;
      for (i = 0; i < 50; i++) {
        B[i] = A[i] + 5;
      }
    }

After you synthesize the code in the previous example, HLS implements both arguments

with a data port and the standard AXI4-Stream TVALID and TREADY protocol ports, as

shown in the following figure.
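The TVALID and TREADY ports follow the usual AXI handshake rule: a data beat moves only in a cycle where both signals are high. The sketch below models that rule in plain C over arrays of per-cycle signal values; the function name and the array-based representation are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Count the cycles in which a transfer occurs: TVALID and TREADY both high. */
static size_t count_transfers(const int *tvalid, const int *tready, size_t cycles)
{
    assert(tvalid != NULL && tready != NULL);
    size_t transfers = 0;
    for (size_t c = 0; c < cycles; c++) {
        if (tvalid[c] && tready[c]) {
            transfers++;
        }
    }
    return transfers;
}
```

A cycle with TVALID high and TREADY low is a stall: the source must hold its data until the destination is ready.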

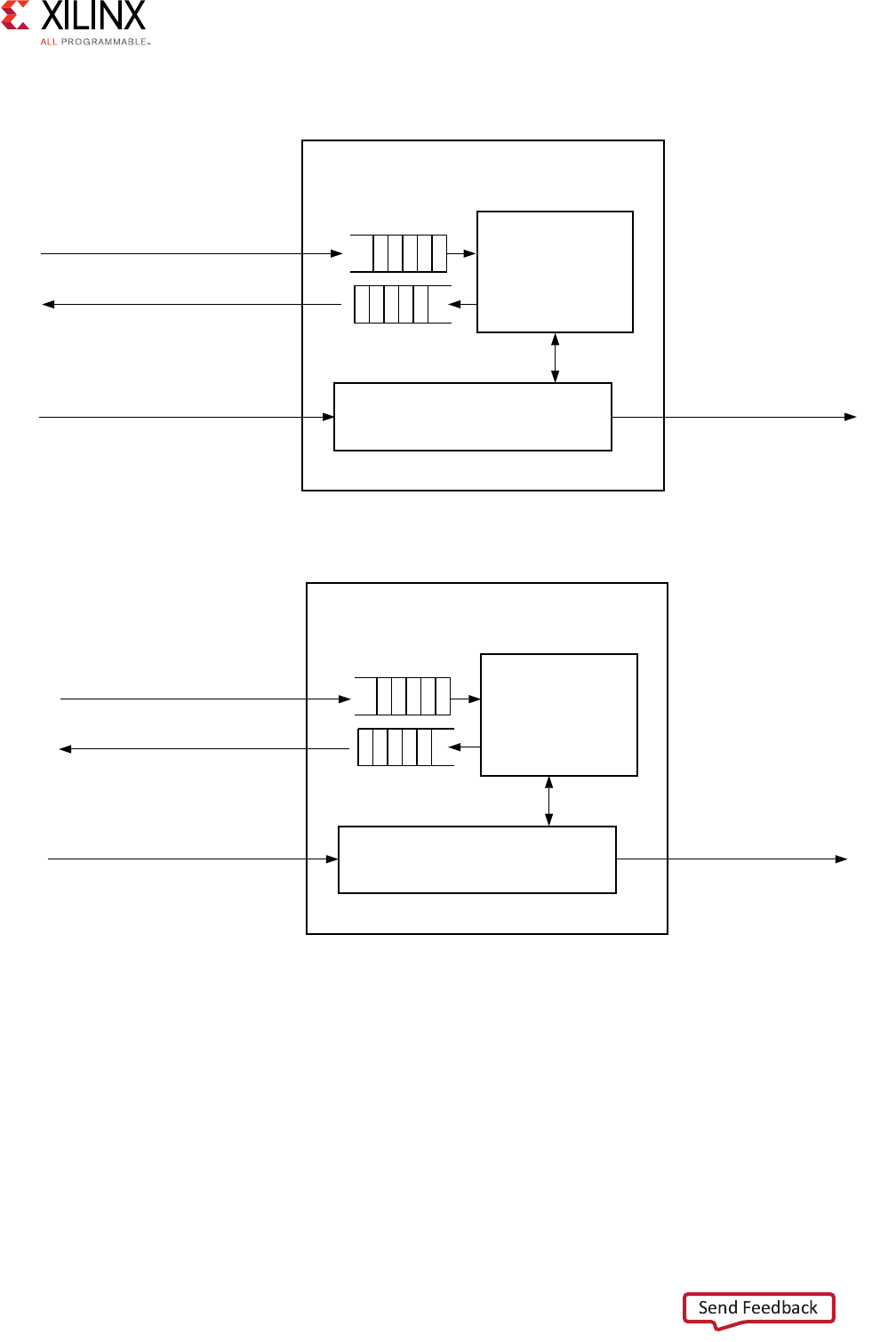

HLS AXI4-Lite Interface

An AXI4-Lite slave interface is typically used to allow a design to be controlled by some

form of CPU or microcontroller. The Vivado HLS features of the AXI4-Lite slave interface,

(s_axilite mode) are:

• Grouping multiple ports into the same AXI4-Lite slave interface.

• Outputting C function and header files for use with the code running on a processor

when you export the design to the Vivado IP catalog.

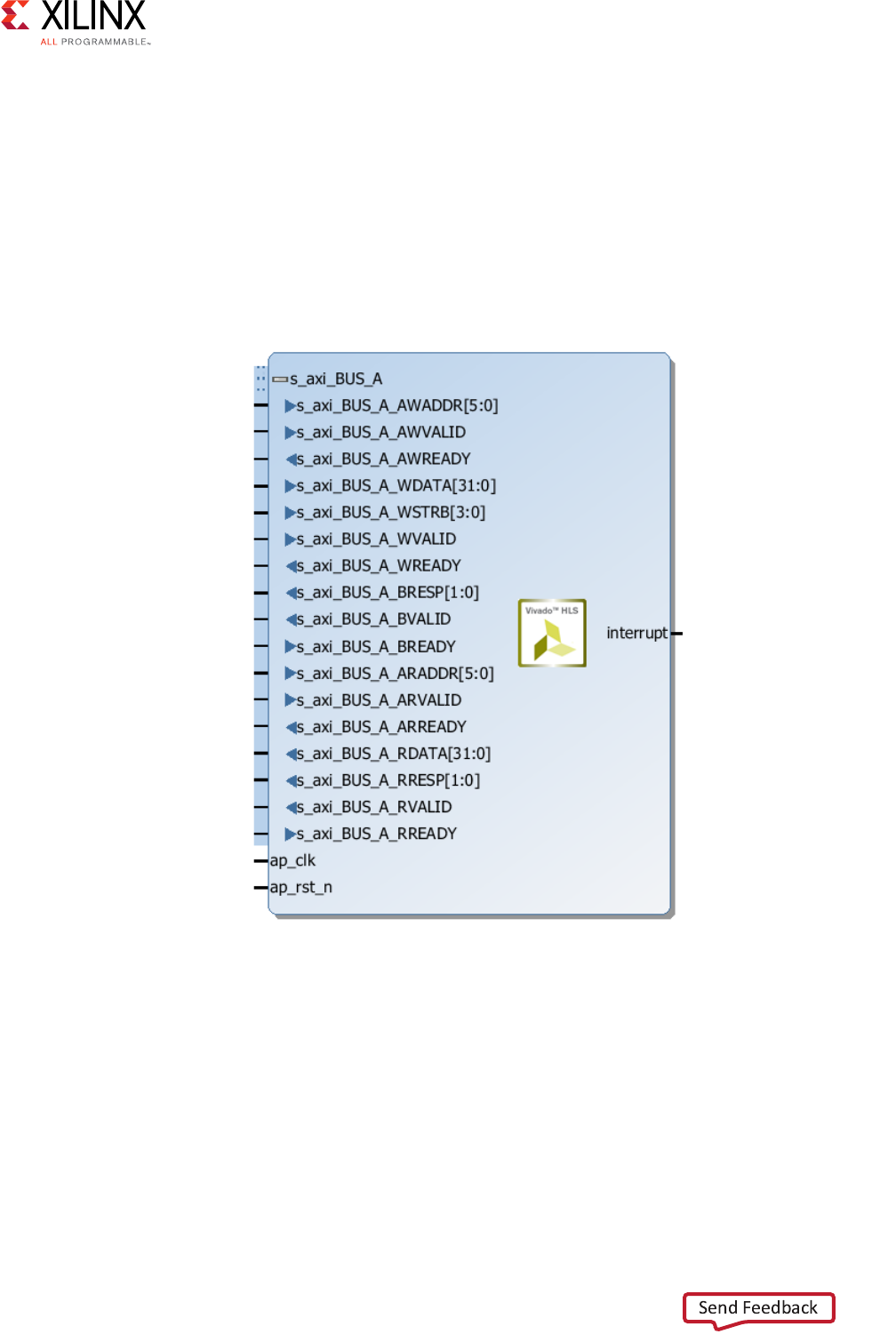

The following code example shows an implementation of multiple arguments, including the

function return, as AXI4-Lite slave interfaces in bundle=BUS_A. Because each interface

uses the same name for the bundle option, the HLS tool groups each of the ports into the

same AXI4-Lite interface:

void example(char *a, char *b, char *c)

{

#pragma HLS INTERFACE s_axilite port=return bundle=BUS_A

X-Ref Target - Figure 2-13

Figure 2-13: HLS AXI4-Stream Interfaces without Side-Channels

Vivado AXI Reference Guide www.xilinx.com 27

UG1037 (v4.0) July 15, 2017

Chapter 2: AXI Support in Xilinx Tools and IP

#pragma HLS INTERFACE s_axilite port=a bundle=BUS_A

#pragma HLS INTERFACE s_axilite port=b bundle=BUS_A

#pragma HLS INTERFACE s_axilite port=c bundle=BUS_A

*c += *a + *b;

}

After synthesizing the previous example code, HLS implements the ports, with the AXI4-Lite

slave port expanded, as shown in the following figure. The interrupt port is created by

including the function return in the AXI4-Lite slave interface. The block-level protocol port,

ap_done, drives the interrupt, which indicates when the function has completed operation.
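As one alternative to servicing the interrupt, processor code could poll the block-level status through the AXI4-Lite interface. The sketch below assumes a control register at word offset 0 with ap_start in bit 0 and ap_done in bit 1; that layout, the function names, and the bounded polling loop are assumptions for illustration, so check the header files that HLS generates for your design before relying on any offset.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed control-register layout; check the generated driver header. */
#define CTRL_REG_INDEX 0u          /* word offset of the control register */
#define AP_START_BIT   (1u << 0)
#define AP_DONE_BIT    (1u << 1)

/* Decode the done flag from a control register value. */
static int ctrl_is_done(uint32_t ctrl)
{
    return (ctrl & AP_DONE_BIT) != 0;
}

/* Start the block, then poll ap_done at most max_polls times.
 * Returns 0 when done is seen, -1 on timeout. */
static int start_and_wait(volatile uint32_t *regs, unsigned max_polls)
{
    assert(regs != 0);
    regs[CTRL_REG_INDEX] = AP_START_BIT;
    for (unsigned i = 0; i < max_polls; i++) {
        if (ctrl_is_done(regs[CTRL_REG_INDEX])) {
            return 0;
        }
    }
    return -1;
}
```

On hardware, regs would point at the base address that the address editor assigns to the AXI4-Lite interface; the bounded loop keeps the caller from hanging if the block never finishes.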


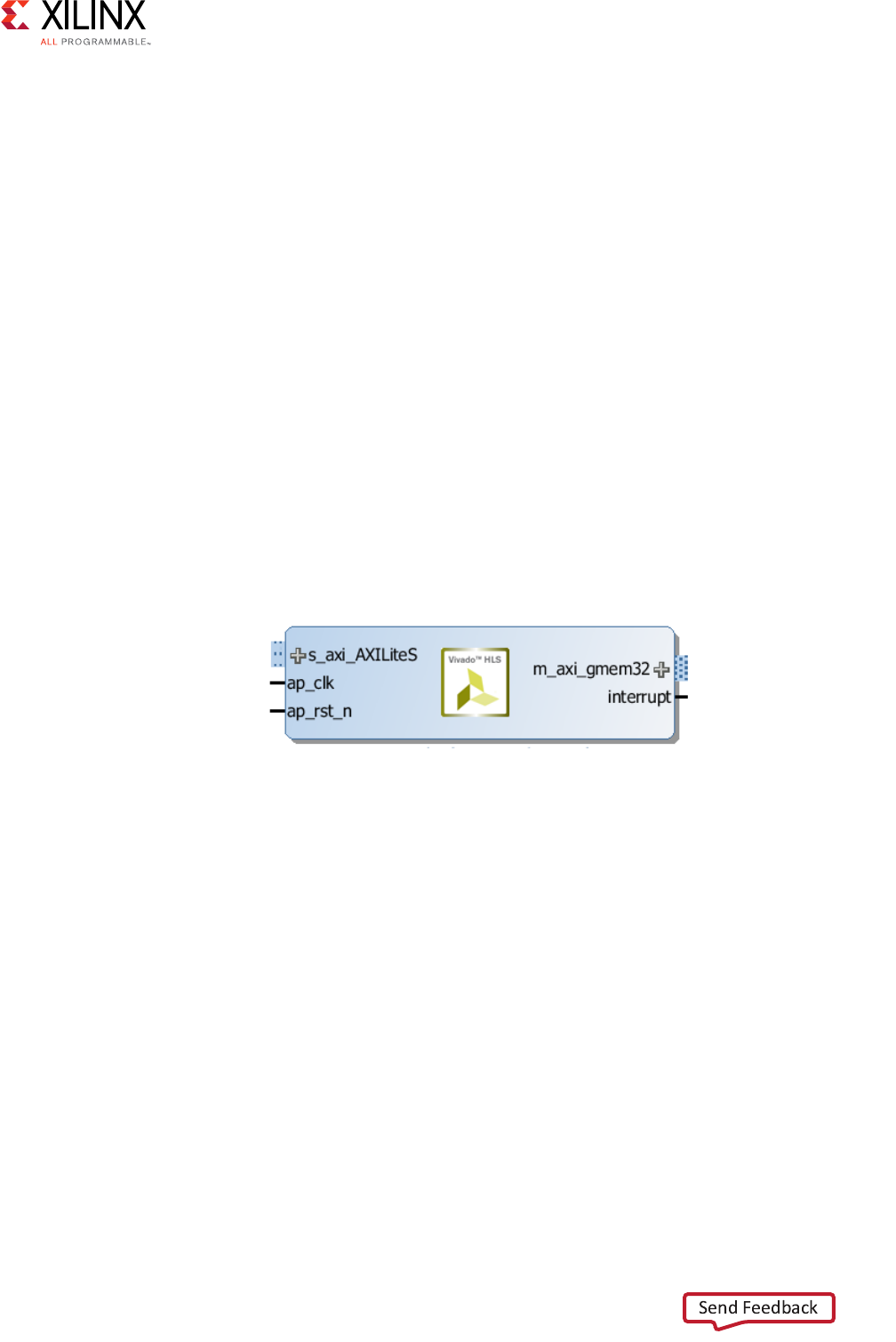

HLS AXI4 Master Interface

You can implement the HLS AXI4 master interface (m_axi mode) on any array or
pointer/reference argument. The interface can be used in two modes: individual data
transfers, and burst-mode data transfers using the C memcpy function.


Figure 2-14: HLS AXI4-Lite Slave Interfaces with Grouped RTL Ports


Individual Data Transfers

Individual data transfers are those in which a single data value is read from or written to
the top-level function argument. The following code snippets show examples:

Example 1:

    void bus (int *d) {
      static int acc = 0;
      acc += *d;
      *d = acc;
    }

Example 2:

    void bus (int *d) {
      static int acc = 0;
      int i;
      for (i = 0; i < 4; i++) {
        acc += d[i];
        d[i] = acc;
      }
    }

In both cases, the data transfers over the AXI4 master interface as simple read or write
operations: one address and one data value at a time.

Burst-Mode Transfers

Burst-mode transfers send data using a single base address followed by multiple
sequential data samples, and are capable of higher data throughput. Burst mode is possible
only when you use the C memcpy function to read data into, or write data out of, the
top-level function for synthesis.
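The throughput difference comes largely from how many address phases are needed. As a rough sketch, the following functions compare the two modes, assuming one word per data beat; the function names are hypothetical, and the maximum burst length is a parameter (AXI4 allows up to 256 beats for INCR bursts, AXI3 up to 16).

```c
#include <assert.h>

/* Address phases needed when every word is a separate transfer. */
static unsigned individual_address_phases(unsigned words)
{
    return words;
}

/* Address phases needed when words are grouped into bursts
 * of up to max_len beats each. */
static unsigned burst_address_phases(unsigned words, unsigned max_len)
{
    assert(max_len > 0);
    return (words + max_len - 1) / max_len;  /* ceiling division */
}
```

For a 50-word buffer, individual transfers need 50 address phases, while a single AXI4 burst covers all 50 words with one.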


The following example shows a burst-mode AXI4 master interface transfer. The
top-level function argument, a, is specified as the AXI4 master interface:

    #include <string.h> // memcpy

    void example(volatile int *a){
    #pragma HLS INTERFACE m_axi depth=50 port=a
    #pragma HLS INTERFACE s_axilite port=return bundle=AXILiteS
      // Port a is assigned to an AXI4 master interface
      int i;
      int buff[50];
      // memcpy creates a burst read from memory
      memcpy(buff,(const int*)a,50*sizeof(int));
      for(i=0; i < 50; i++){
        buff[i] = buff[i] + 100;
      }
      // memcpy creates a burst write back to memory
      memcpy((int *)a,buff,50*sizeof(int));
    }

When you synthesize a design with the previous example, it results in an interface as shown

in the following figure (the AXI interfaces are shown collapsed):


Figure 2-15: HLS AXI Master Interface


Chapter 3

Samples of Vivado AXI IP and Xilinx Processors

Overview

Xilinx offers a suite of AXI Infrastructure IP that serve as system building blocks. These IP

provide the ability to move data, operate on data, and measure, test, and debug AXI

transactions. For a more complete list of AXI IP, see the Xilinx® IP Center website [Ref 3].

The following IP descriptions are samples of the common AXI IP developed by Xilinx,
along with brief descriptions of the Xilinx processors that include AXI IP: the Zynq®
UltraScale+™ MPSoC processor, the Zynq-7000 All Programmable SoC processor, and the
MicroBlaze™ processor.

AXI Infrastructure IP Cores

The AXI Infrastructure is a collection of the following IP cores:

• AXI Crossbar: Connects one or more similar AXI memory-mapped masters to one or
more similar memory-mapped slaves.

• AXI Data Width Converter: Connects one AXI memory-mapped master to one AXI
memory-mapped slave having a wider or narrower data path.

• AXI Clock Converter: Connects one AXI memory-mapped master to one AXI
memory-mapped slave operating in a different clock domain.

• AXI Protocol Converter: Connects one AXI4, AXI3, or AXI4-Lite master to one AXI slave
of a different AXI memory-mapped protocol.

• AXI Data FIFO: Connects one AXI memory-mapped master to one AXI
memory-mapped slave through a set of FIFO buffers.

• AXI Register Slice: Connects one AXI memory-mapped master to one AXI
memory-mapped slave through a set of pipeline registers, typically to break a critical
timing path.

• AXI MMU: Provides address range decoding services for AXI Interconnect.


AXI Interconnect is available as a standalone IP in the Xilinx IP catalog for use in an RTL

design. The standalone AXI Interconnect has a reduced feature set, mainly suitable for

connecting multiple AXI4 masters to a single AXI4 slave. See the LogiCORE IP AXI

Interconnect IP Product Guide (PG059) [Ref 5] for more information.

The IP integrator lets you choose between the AXI Interconnect and the new AXI
SmartConnect when the endpoints being connected are AXI4 memory-mapped endpoints.

The IP integrator tool provides enhanced features, greater ease of use, and automation

services. See the following documents for more information:

°Vivado Design Suite User Guide: Designing IP Subsystems Using IP Integrator

(UG994) [Ref 38]

°Vivado Design Suite Tutorial: Designing IP Subsystems Using IP Integrator (UG995)

[Ref 39]

Xilinx AXI SmartConnect and AXI Interconnect IP

The Xilinx LogiCORE IP AXI Interconnect and LogiCORE IP AXI SmartConnect cores both
connect one or more AXI memory-mapped master devices to one or more
memory-mapped slave devices; however, the SmartConnect is more tightly integrated into
the Vivado design environment to automatically configure and adapt to connected AXI
master and slave IP with minimal user intervention. The AXI Interconnect can be used in all
memory-mapped designs. In certain high-bandwidth applications, using a SmartConnect
provides better optimization. The AXI SmartConnect IP delivers the maximum system
throughput at low latency by synthesizing a low-area custom interconnect that is
optimized for important interfaces.

The AXI Interconnect core IP (axi_interconnect) connects one or more AXI

memory-mapped master devices to one or more memory-mapped slave devices.

The AXI interfaces conform to the AMBA® AXI4 specification from ARM®, including the

AXI4-Lite control register interface subset.

IMPORTANT: The AXI Interconnect and the AXI SmartConnect core IP are intended for

memory-mapped transfers only; AXI4-Stream transfers are not applicable, but instead can use the

AXI4-Stream Interconnect core IP (axis_interconnect). IP with AXI4-Stream interfaces are

generally connected to one another, to DMA IP, or to the AXI4-Stream Interconnect IP.

RECOMMENDED: For new medium to high performance designs, the AXI SmartConnect IP is

recommended as it offers better upward scaling in area and timing. For low performance (AXI4-Lite) or

small to medium complexity designs, AXI Interconnect may be more area efficient.


The following section is extracted from the LogiCORE IP AXI Interconnect IP Product Guide
(PG059) [Ref 12]. See the AXI SmartConnect IP section for more information on that IP.

AXI Interconnect Core Features

The AXI Interconnect IP contains the following features:

• AXI protocol compliant (AXI3, AXI4, and AXI4-Lite), which includes:

°Burst lengths up to 256 for incremental (INCR) bursts

°Converts AXI4 bursts >16 beats when targeting AXI3 slave devices by splitting

transactions.

°Propagates USER signals on each channel, if any; independent USER signal width per

channel (optional)

°Propagates Quality of Service (QoS) signals, if any; not used by the AXI Interconnect

core (optional)

• Interface data widths:

°AXI4: 32, 64, 128, 256, 512, or 1024 bits.

°AXI4-Lite: 32 bits and 64 bits

• Address width: Up to 64 bits

• ID width: Up to 32 bits

• Support for Read-only and Write-only masters and slaves, resulting in reduced resource

utilization.

Note: When used in a standalone mode, the AXI Interconnect core connects multiple masters to one

slave, which is typically a memory controller.

• Built-in data-width conversion:

°Each master and slave connection can independently use data widths of 32, 64, 128,

256, 512, or 1024 bits wide:

- The internal crossbar can be configured to have a native data-width of 32, 64,

128, 256, 512, or 1024 bits.

- Data-width conversion is performed for each master and slave connection that

does not match the crossbar native data-width.

°When converting to a wider interface (upsizing), data is packed (merged) optionally,

when permitted by address channel control signals (CACHE modifiable bit is

asserted).

°When converting to a narrower interface (downsizing), burst transactions can be

split into multiple transactions if the maximum burst length would otherwise be

exceeded.

• Built-in clock-rate conversion:

°Each master and slave connection can use independent clock rates.


°Synchronous integer-ratio (N:1 and 1:N) conversion to the internal crossbar native

clock-rate.

°Asynchronous clock conversion (uses more storage and incurs more latency than

synchronous conversion).

°The AXI Interconnect core exports reset signals re-synchronized to the clock input

associated with each SI and MI slot.

• Built-in AXI4-Lite protocol conversion:

°The AXI Interconnect core can connect to any mixture of AXI4 and AXI4-Lite masters

and slaves.

°The AXI Interconnect core saves transaction IDs and restores them during response

transfers, when connected to an AXI4-Lite slave.

- AXI4-Lite slaves do not need to sample or store IDs.

°The AXI Interconnect core detects illegal AXI4-Lite transactions from AXI4 masters,

such as any transaction that accesses more than one word.

It generates a protocol-compliant error response to the master, and does not

propagate the illegal transaction to the AXI4-Lite slave.

• Built-in AXI3 protocol conversion:

°The AXI Interconnect core splits burst transactions of more than 16 beats from AXI4

masters into multiple transactions of no more than 16 beats when connected to an

AXI3 slave.

• Optional register-slice pipelining:

°Available on each AXI channel connecting to each master and each slave.

°Facilitates timing closure by trading-off frequency versus latency.

°One latency cycle per register-slice, with no loss in data throughput under all AXI

handshaking conditions.

• Optional data path FIFO buffering:

°Available on write and read data paths connecting to each master and each slave.

°32-deep LUT-RAM based.

°512-deep block RAM based.

°Option to delay assertion of:

-AWVALID until the complete burst is stored in the W-channel FIFO

-ARVALID until the R-channel FIFO has enough vacancy to store the entire burst

length

• Selectable Interconnect Architecture:

°Shared-Address, Multiple-Data (SAMD) crossbar (Performance Optimized):

- Parallel crossbar pathways for write data and read data channels.


- When more than one write or read data source has data to send to different
destinations, data transfers can occur independently and concurrently, provided AXI
ordering rules are met.

°Shared-Address, Shared-Data (SASD) mode (Area optimized):

- Shared write data, shared read data, and single shared address pathways.

- Issues one outstanding transaction at a time.

- Minimizes resource utilization.

• Supports multiple outstanding transactions:

°Supports masters with multiple reordering depth (ID threads).

°Supports up to 16-bit wide ID signals (system-wide).

°Supports write response re-ordering, read data re-ordering, and read data

interleaving.

°Configurable write and read transaction acceptance limits for each connected

master.

°Configurable write and read transaction issuing limits for each connected slave.

• “Single-Slave per ID” method of cyclic dependency (deadlock) avoidance:

°For each ID thread issued by a connected master, the master can have outstanding

transactions to only one slave for writes and one slave for reads, at any time.

• Fixed priority and round-robin arbitration:

°16 configurable levels of static priority.

°Round-robin arbitration is used among all connected masters configured with the

lowest priority setting (priority 0), when no higher priority master is requesting.

°Any SI slot that has reached its acceptance limit, or is targeting an MI slot that has

reached its issuing limit, or is trying to access an MI slot in a manner that risks

deadlock, is temporarily disqualified from arbitration, so that other SI slots can be

granted arbitration.

• Supports TrustZone security for each connected slave as a whole:

- If configured as a secure slave, only secure AXI accesses are permitted

- Any non-secure accesses are blocked and the AXI Interconnect core returns a

DECERR response to the master

• Support for read-only and write-only masters and slaves, resulting in reduced resource

utilization.
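The "Single-Slave per ID" rule in the feature list above can be sketched as a small tracker: a thread may issue to a slave only if it has nothing outstanding or if its outstanding transactions already target that same slave. The type and function names and the fixed number of ID threads are hypothetical, and this models the rule only, not the core's implementation. One tracker instance would be kept for writes and another for reads.

```c
#include <assert.h>

#define NUM_IDS 4u   /* hypothetical number of ID threads tracked */

typedef struct {
    int      slave[NUM_IDS];        /* target slave of outstanding transactions */
    unsigned outstanding[NUM_IDS];  /* outstanding transaction count per ID */
} id_tracker_t;

/* Try to issue a transaction on thread id to slave.
 * Returns 1 if issued, 0 if it must wait. */
static int try_issue(id_tracker_t *t, unsigned id, int slave)
{
    assert(t != 0 && id < NUM_IDS);
    if (t->outstanding[id] > 0 && t->slave[id] != slave) {
        return 0;  /* thread already has traffic in flight to another slave */
    }
    t->slave[id] = slave;
    t->outstanding[id]++;
    return 1;
}

/* Retire one completed transaction on thread id. */
static void complete(id_tracker_t *t, unsigned id)
{
    assert(t != 0 && id < NUM_IDS && t->outstanding[id] > 0);
    t->outstanding[id]--;
}
```

Because responses for one ID can then only come from one slave at a time, they cannot return out of order across slaves, which is what removes the cyclic dependency.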


AXI Interconnect Core Limitations

These limitations apply to the AXI Interconnect core and all applicable infrastructure cores:

• The AXI Interconnect core does not support discontinued AXI3 features:

°Atomic locked transactions. This feature was retracted by AXI4 protocol. A locked

transaction is changed to a non-locked transaction and propagated by the MI.

°Write interleaving. This feature was retracted by AXI4 protocol. AXI3 master devices

must be configured as if connected to a slave with a write interleaving depth of

one.

• AXI4 Quality of Service (QoS) signals do not influence arbitration priority in AXI

Crossbar. QoS signals are propagated from SI to MI.

• AXI Interconnect and the Vivado IDE do not support the use of AXI AWREGION and

ARREGION signals.

• Interconnect cores do not support low-power mode or propagate the AXI C-channel

signals.

• AXI Interconnect cores do not time out if the destination of any AXI channel transfer

stalls indefinitely. All connected AXI slaves must respond to all received transactions, as

required by AXI protocol.

• AXI Interconnect (including AXI Crossbar and AXI MMU cores) provides no address

remapping.

• AXI Interconnect sub-cores do not include conversion or bridging to non-AXI

protocols, such as APB.

• AXI Interconnect cores do not have clock-enable (aclken) inputs. Consequently, the

use of aclken is not supported among memory-mapped AXI interfaces in Xilinx

systems.

Note: The aclken signal is supported for Xilinx AXI4-Stream interfaces.

The following subsection describes the use models for the AXI Interconnect core.

AXI Interconnect Core Use Models

The AXI Interconnect IP core connects one or more AXI memory-mapped master devices to

one or more memory-mapped slave devices. The following subsections describe the

possible use cases:

•Conversion Only

•N-to-1 Interconnect

•1-to-N Interconnect

•N-to-M Interconnect (Sparse Crossbar Mode)


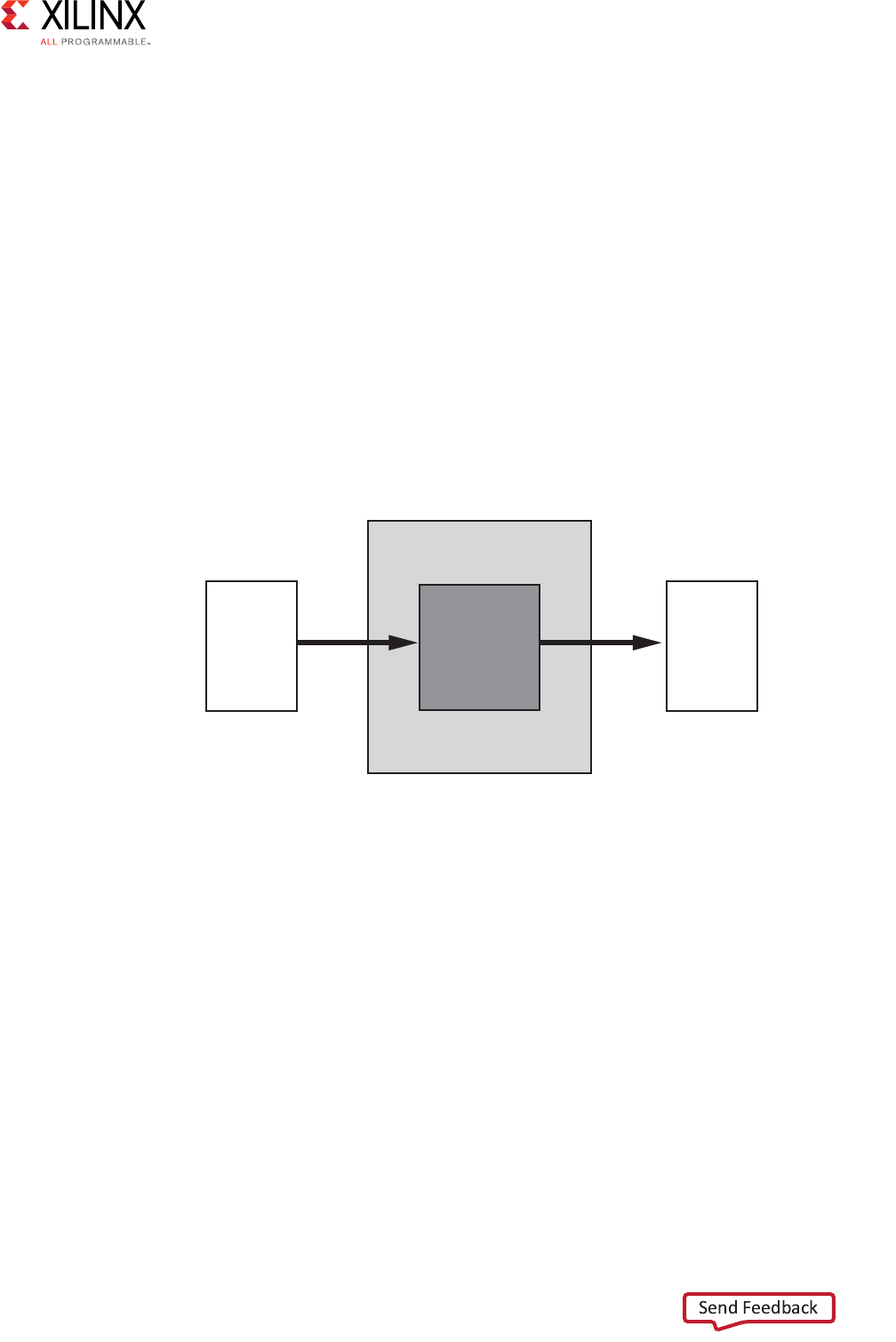

Conversion Only

The AXI Interconnect core can perform various conversion and pipelining functions when

connecting one master device to one slave device. These are:

• Data width conversion

• Clock rate conversion

• AXI4-Lite slave adaptation

• AXI3 slave adaptation

• Pipelining, such as a register slice or data channel FIFO

In these cases, the AXI Interconnect core contains no arbitration, decoding, or routing logic.

Latency might be incurred, depending on the conversion being performed.
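As an illustration of AXI3 slave adaptation, the following sketch splits a long burst into chunks that fit the 16-beat AXI3 limit. It models only the beat counts; address and ID handling are omitted, and the function name and output-array interface are assumptions.

```c
#include <assert.h>

#define AXI3_MAX_BEATS 16u  /* AXI3 burst length limit cited in this guide */

/* Split a burst of total_beats into chunks of at most max_beats.
 * Writes chunk lengths into out (capacity out_cap) and returns the
 * number of chunks written. */
static unsigned split_burst(unsigned total_beats, unsigned max_beats,
                            unsigned *out, unsigned out_cap)
{
    assert(max_beats > 0 && out != 0);
    unsigned count = 0;
    while (total_beats > 0 && count < out_cap) {
        unsigned chunk = total_beats < max_beats ? total_beats : max_beats;
        out[count++] = chunk;
        total_beats -= chunk;
    }
    return count;
}
```

A 40-beat AXI4 burst, for example, becomes three transactions of 16, 16, and 8 beats.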

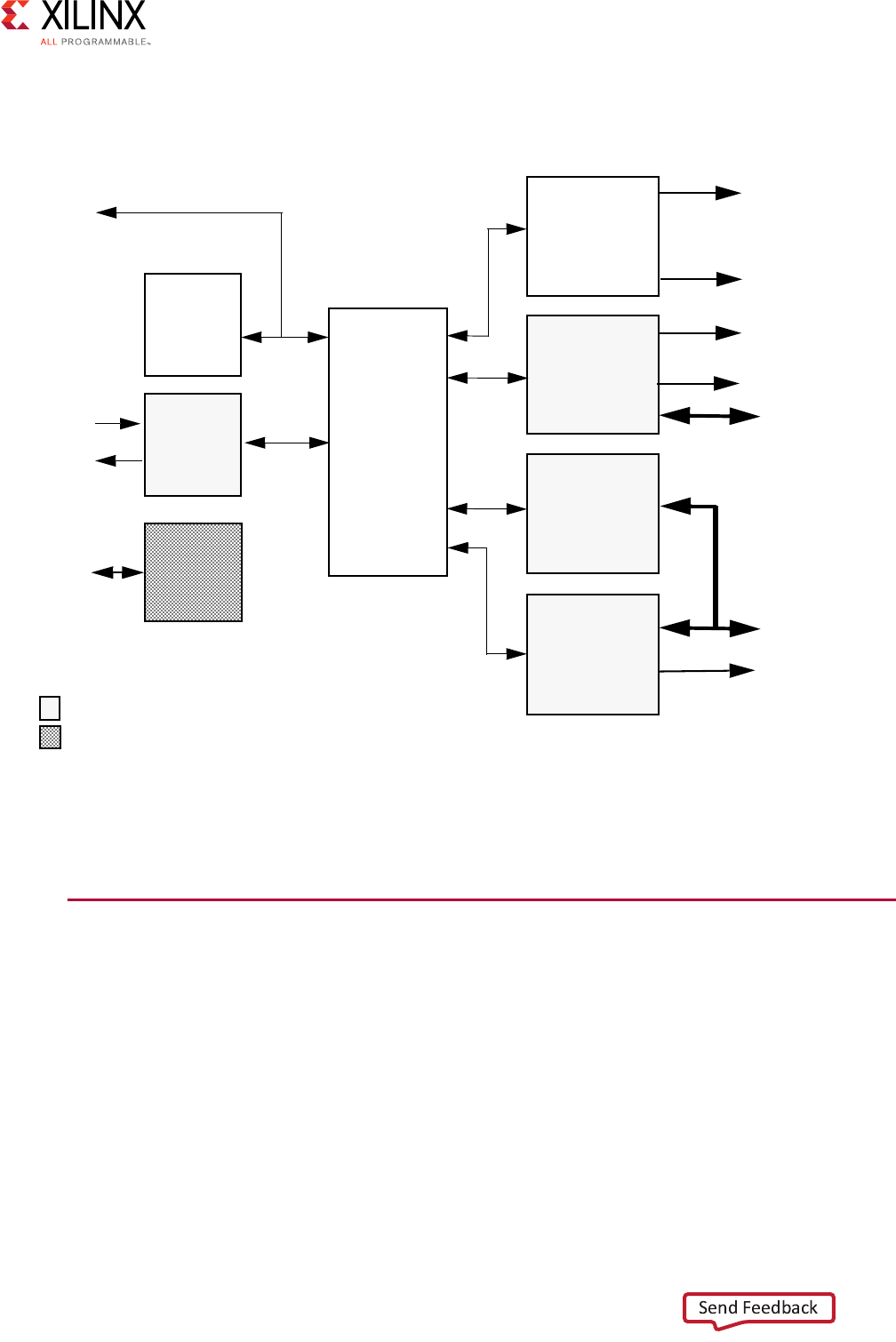

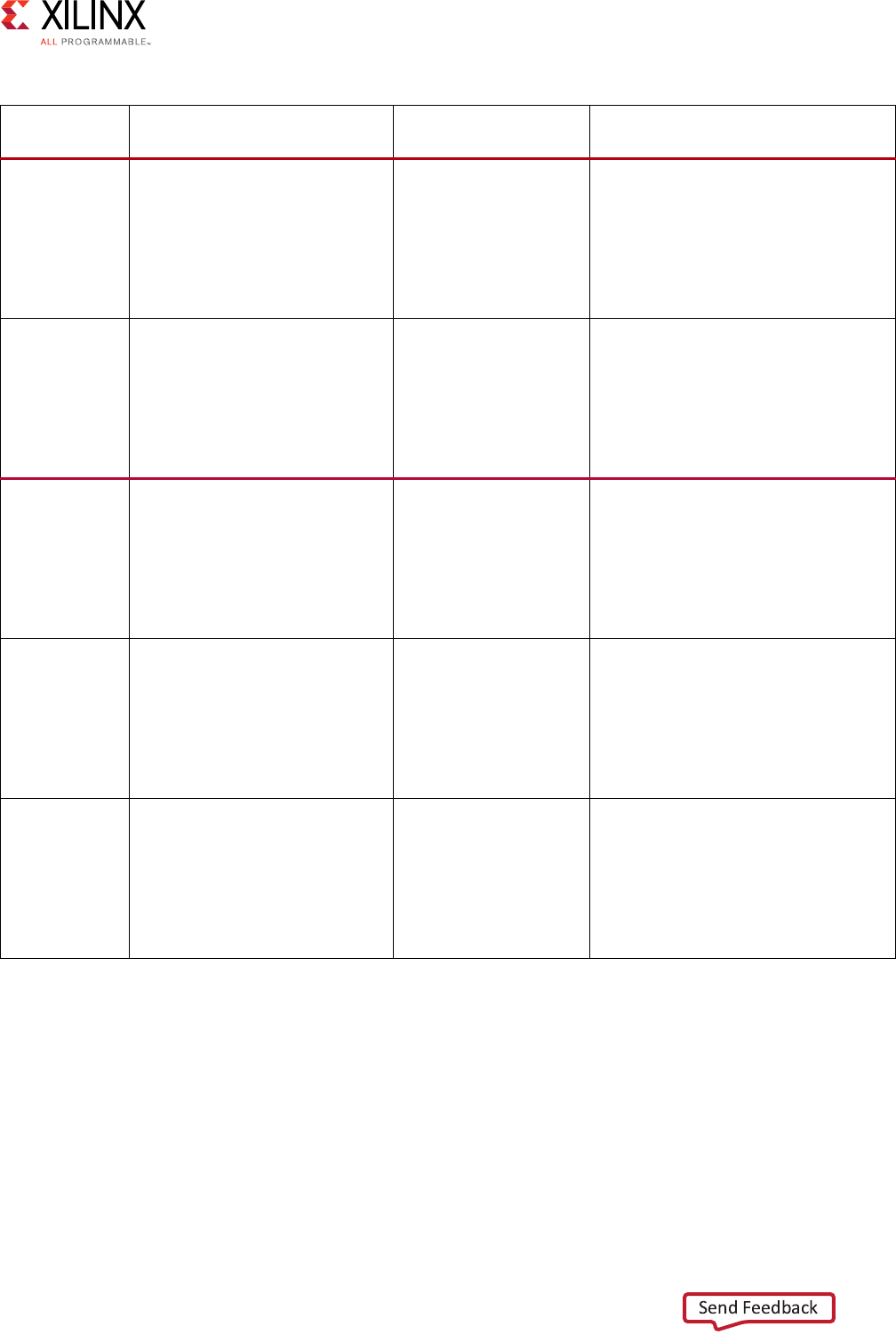

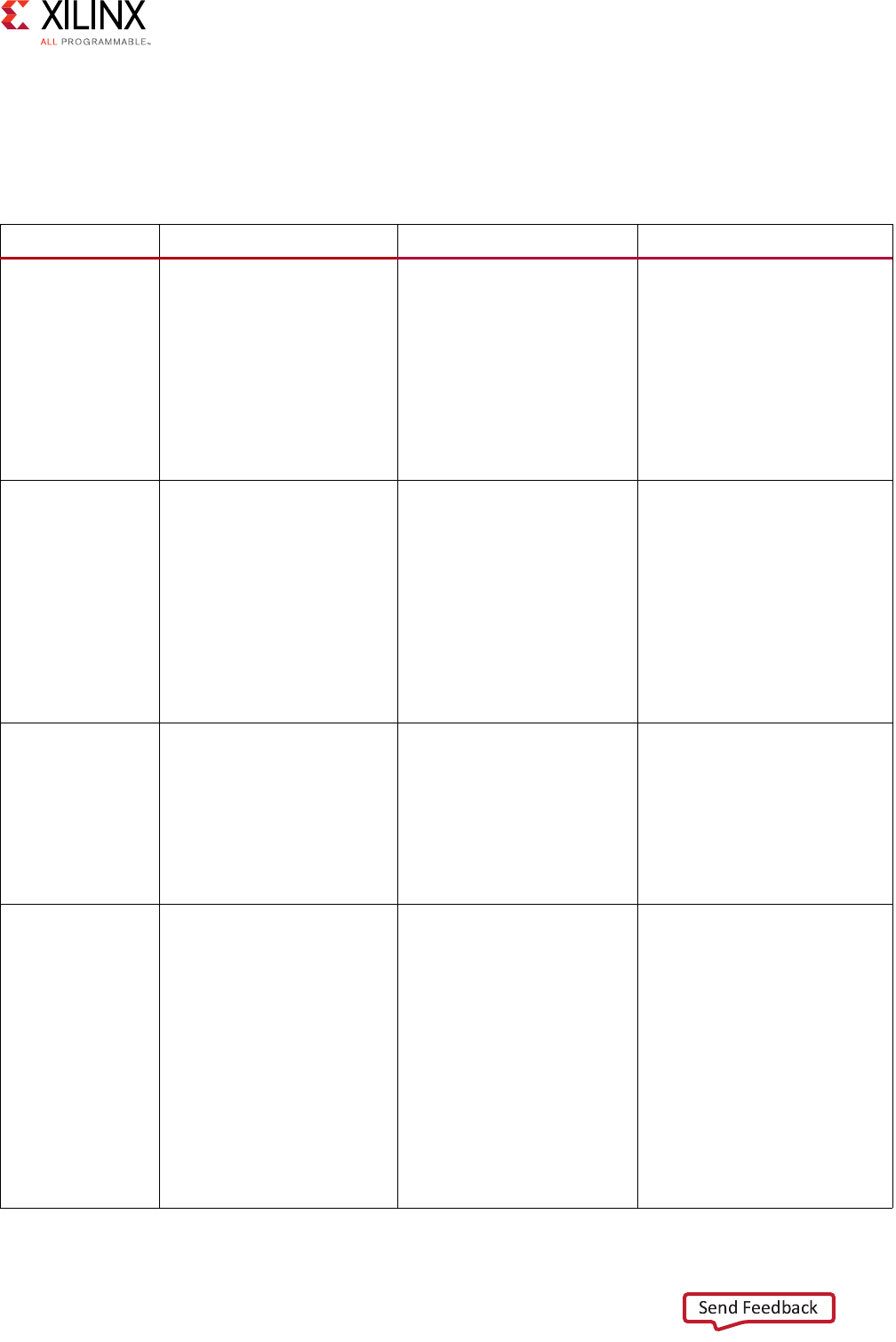

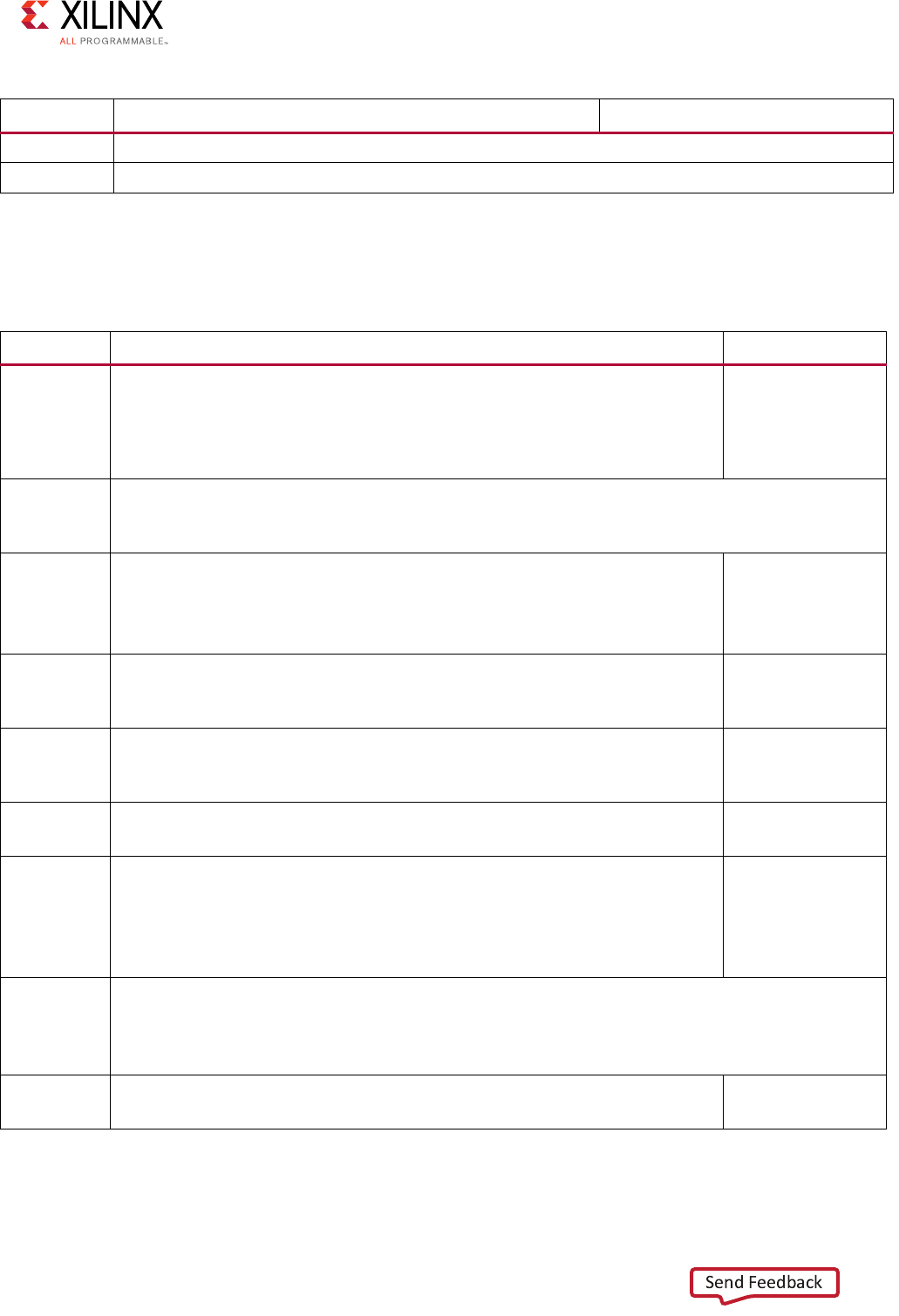

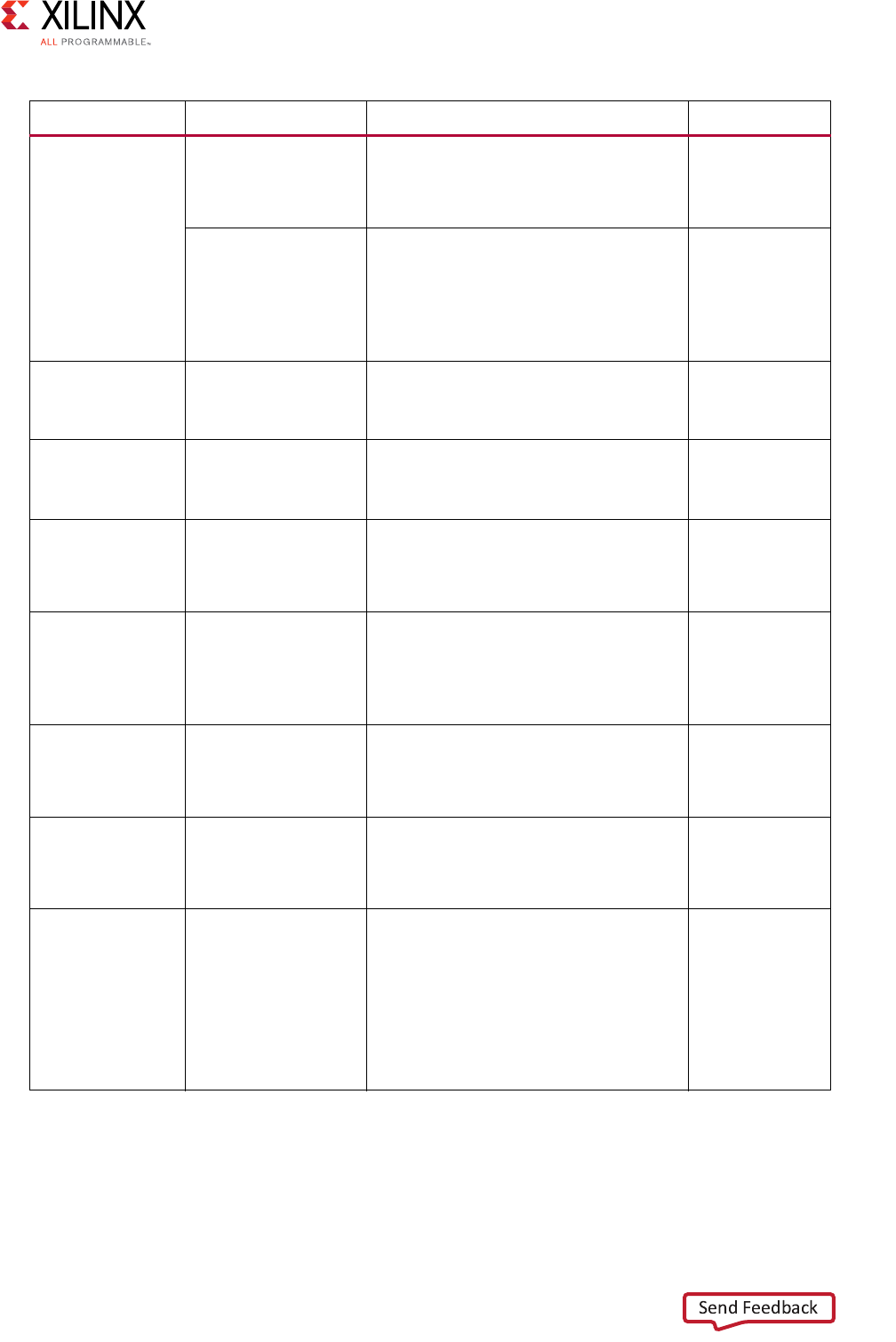

The following figure shows the one-to-one or conversion use case.

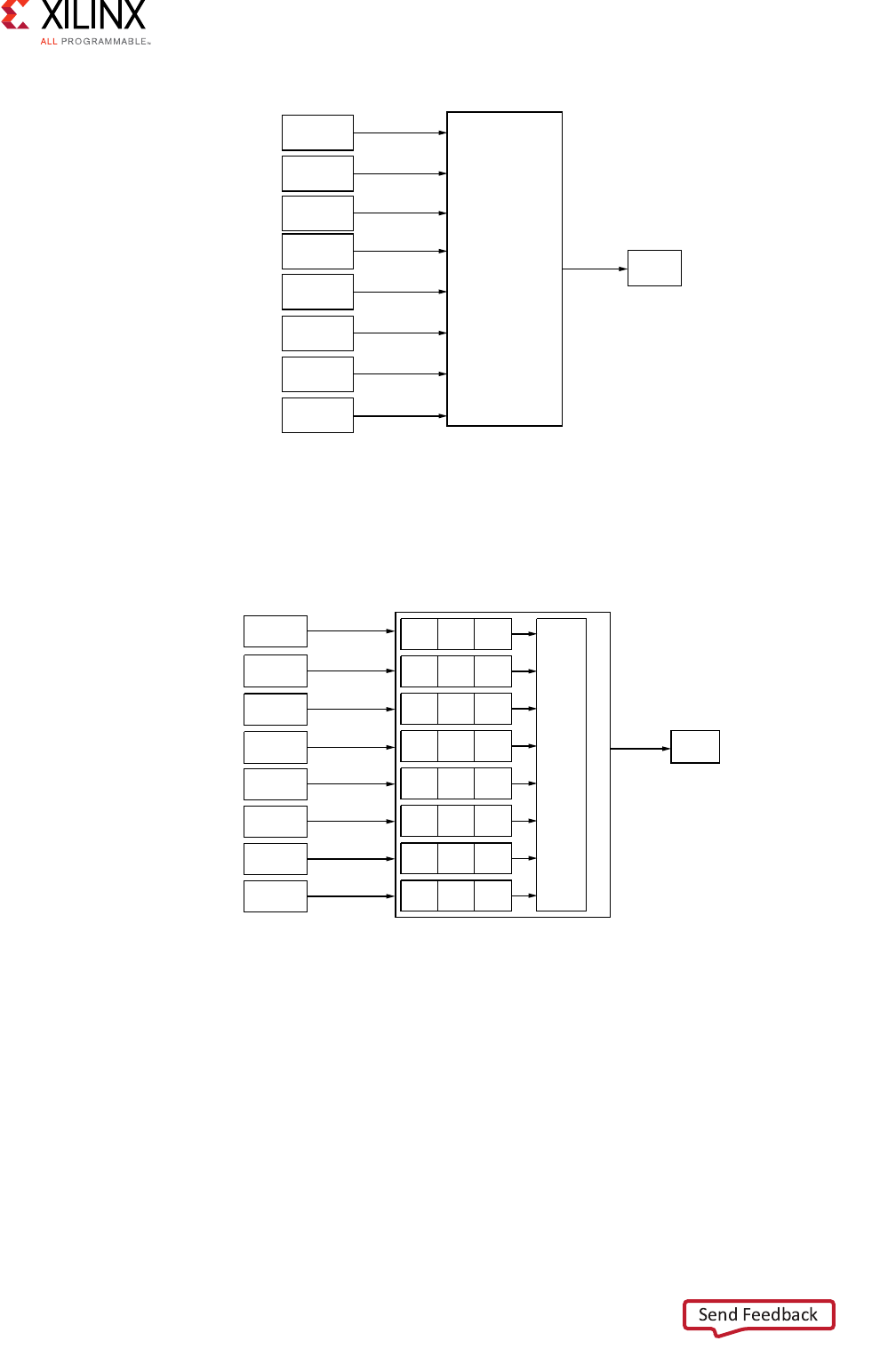

N-to-1 Interconnect

A common degenerate configuration of the AXI Interconnect core is when multiple master
devices arbitrate for access to a single slave device, typically a memory controller. In these
cases, address decoding logic might be unnecessary and is omitted from the AXI
Interconnect core (unless address range validation is needed).

Conversion functions, such as data width and clock rate conversion, can also be performed

in this configuration. The following figure shows the N-to-1 AXI interconnection use case.
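The arbitration among masters can be sketched in plain C using the scheme from the feature list: fixed priority first, then round-robin among masters at priority 0. The function name, the four-master limit, and the tie-break among equal nonzero priorities (lowest index wins) are assumptions for illustration, not a description of the core's internals.

```c
#include <assert.h>

#define NUM_MASTERS 4u  /* hypothetical number of connected masters */

/* Pick the next master to grant. request[i] is nonzero when master i
 * requests; priority[i] is 0 (round-robin pool) up to 15 (highest).
 * *rr_next is the round-robin pointer, advanced on a priority-0 grant.
 * Returns the granted master index, or -1 if nobody requests. */
static int arbitrate(const int *request, const unsigned *priority,
                     unsigned *rr_next)
{
    assert(request != 0 && priority != 0 && rr_next != 0);
    int winner = -1;
    unsigned best = 0;
    /* Fixed priority: highest nonzero priority wins
     * (ties go to the lowest index, an assumption). */
    for (unsigned i = 0; i < NUM_MASTERS; i++) {
        if (request[i] && priority[i] > best) {
            best = priority[i];
            winner = (int)i;
        }
    }
    if (winner >= 0) {
        return winner;
    }
    /* No higher-priority request: rotate among priority-0 masters. */
    for (unsigned n = 0; n < NUM_MASTERS; n++) {
        unsigned i = (*rr_next + n) % NUM_MASTERS;
        if (request[i] && priority[i] == 0) {
            *rr_next = (i + 1) % NUM_MASTERS;
            return (int)i;
        }
    }
    return -1;
}
```

Calling arbitrate once per arbitration cycle with the current request vector yields the grant sequence; the round-robin pointer persists between calls so that priority-0 masters take turns.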

Figure 3-1: 1-to-1 Conversion AXI Interconnect Use Case (Master 0 connects to Slave 0
through an Interconnect that performs conversion and/or pipelining)

1-to-N Interconnect

Another degenerate configuration of the AXI Interconnect core is when a single master
device, typically a processor, accesses multiple memory-mapped slave peripherals. In these
cases, arbitration (in the address and write data paths) is not performed. The following
figure shows the 1-to-N Interconnect use case.
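In the 1-to-N case the interconnect's main job is address decoding. The following minimal sketch selects a slave from a table of base/size ranges; the struct, the function name, and the example addresses used with it are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical address-map entry for one slave. */
typedef struct {
    uint64_t base;  /* first address of the range */
    uint64_t size;  /* range length in bytes */
} addr_range_t;

/* Return the index of the slave whose range contains addr, or -1 if
 * none does (an interconnect would typically answer such an access
 * with a decode error rather than forward it). */
static int decode_slave(const addr_range_t *map, size_t n, uint64_t addr)
{
    assert(map != NULL);
    for (size_t i = 0; i < n; i++) {
        if (addr >= map[i].base && addr - map[i].base < map[i].size) {
            return (int)i;
        }
    }
    return -1;
}
```

The subtraction form of the range check avoids overflow when a range ends at the top of the address space.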

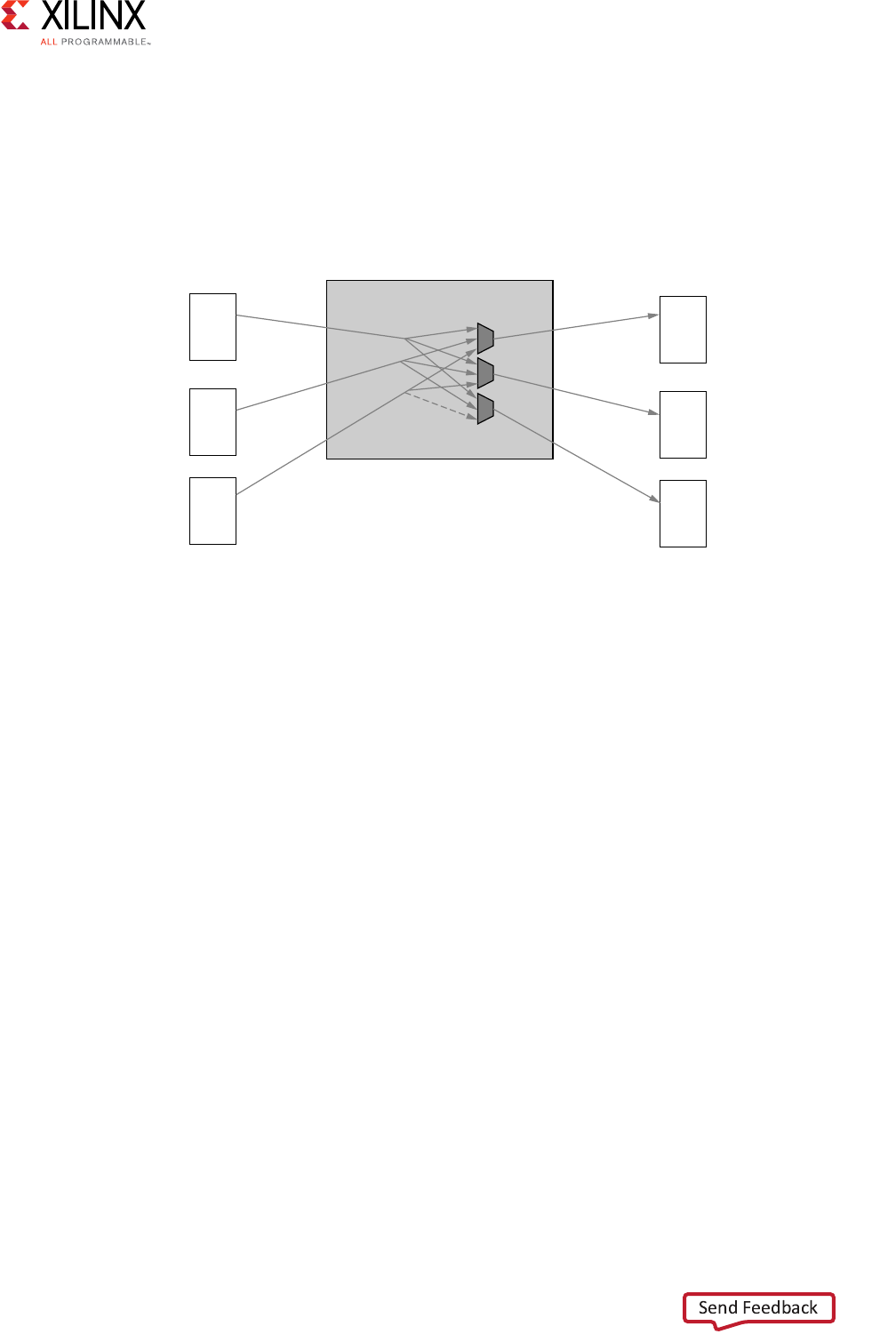

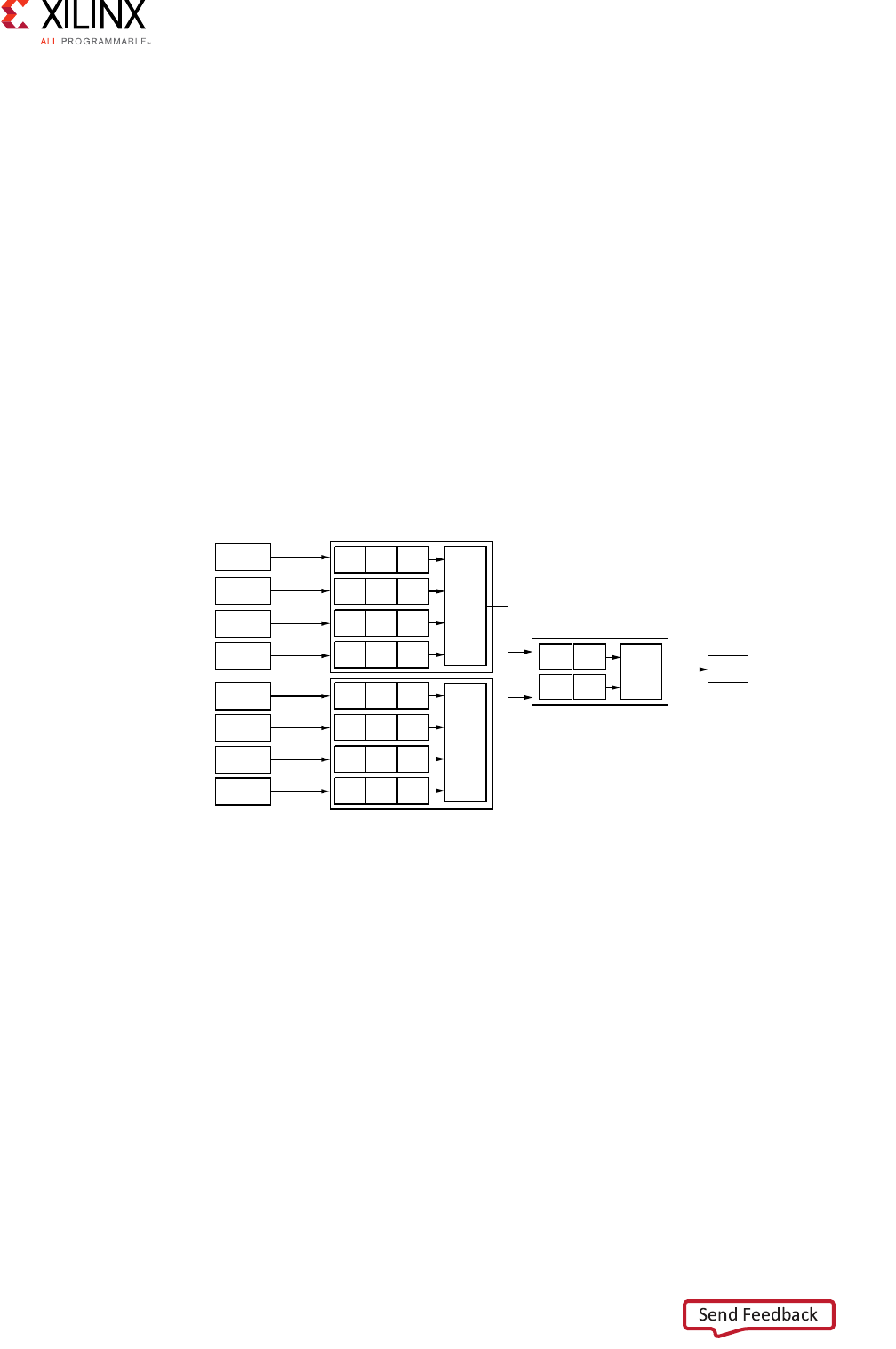

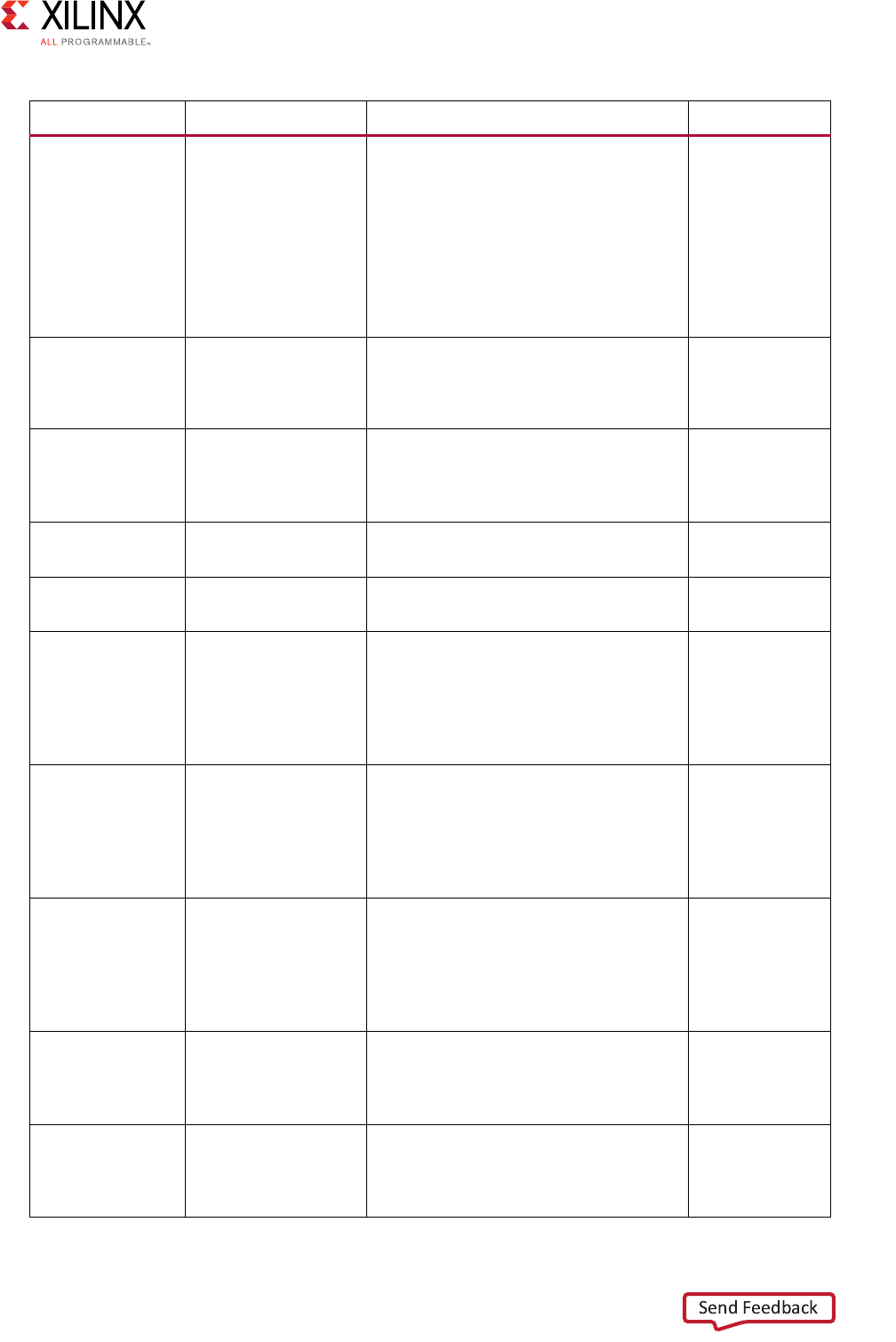

N-to-M Interconnect (Sparse Crossbar Mode)

The N-to-M use case of the AXI Interconnect features a Shared-Address Multiple-Data

(SAMD) topology, consisting of sparse data crossbar connectivity, with single-threaded

write and read address arbitration, as shown in Figure 3-4.

Figure 3-2: N-to-1 AXI Interconnect (Master 0 and Master 1 connect through the
Interconnect arbiter to Slave 0)

Figure 3-3: 1-to-N AXI Interconnect Use Case (Master 0 connects through the
Interconnect decoder/router to Slave 0 and Slave 1)

Figure 3-4: Shared Write and Read Address Arbitrations (Masters 0 to 2 and Slaves 0 to 2;
the AW and AR channels pass through a write transaction arbiter and a read transaction
arbiter, each followed by a router)

The following figure shows the sparse crossbar write and read data pathways.

Figure 3-5: Sparse Crossbar Write and Read Pathways (Masters 0 to 2 and Slaves 0 to 2;
the W and R channels pass through a write data crossbar and a read data crossbar)

Parallel write and read data pathways connect each SI slot (attached to AXI masters on the

left) to all the MI slots (attached to AXI slaves on the right) that it can access, according to

the configured sparse connectivity map.

When more than one source has data to send to different destinations, data transfers can

occur independently and concurrently, provided AXI ordering rules are met.

The write address channels among all SI slots (if > 1) feed into a central address arbiter,

which grants access to one SI slot at a time, as is also the case for the read address channels.

The winner of each arbitration cycle transfers its address information to the targeted MI slot

and pushes an entry into the appropriate command queue(s) that enable various data

pathways to route data to the proper destination while enforcing AXI ordering rules.

Cascading AXI Interconnect Cores Together

You can connect the slave interface of one AXI Interconnect core module to the master
interface of another AXI Interconnect core with no intervening logic. Cascading multiple AXI
Interconnects allows systems to be partitioned and potentially better optimized.

AXI SmartConnect IP

The following information is extracted from the LogiCORE IP AXI SmartConnect Product
Guide (PG247) [Ref 23].

Feature Summary

• Up to 16 Slave Interfaces (SI) and up to 16 Master Interfaces (MI) per instance.

• Instances of SmartConnect can be cascaded to interconnect a larger number of

masters/slaves or for organizing the interconnect topology.

• AXI Protocol compliant. Each SI and MI of SmartConnect can be connected to a master

or slave IP interface of type AXI3, AXI4 or AXI4-Lite.

• Transactions between interfaces of different protocol types are automatically converted

by SmartConnect.

• Burst transactions are automatically split, as needed, to remain AXI compliant.

• Interface Data Widths (bits):

°AXI4 and AXI3: 32, 64, 128, 256, 512, or 1024.

°AXI4-Lite: 32 or 64.

• Transactions between interfaces of different data widths are automatically converted by

SmartConnect.

• Supports multiple clock domains (the IP provides one clock pin per domain).

• Transactions between interfaces in different clock domains are automatically converted

by SmartConnect.


• Address width: Up to 64 bits:

°SmartConnect decodes up to 256 total address range segments.

• User defined signals up to 512 bits wide per channel.

°User signals on any AXI channel are propagated regardless of internal transaction

conversions.

• ID width: Up to 32 bits

°Automatic re-mapping/compression of wide input ID signals.

• Support for Read-only and Write-only masters and slaves, resulting in reduced resource

utilization.

• Supports multiple outstanding transactions:

°Supports connected masters with multiple reordering depth (ID threads).

°Supports write response re-ordering, Read data re-ordering, and Read Data

interleaving.

°Multi-threaded traffic (propagation of ID signals) is supported regardless of internal

transaction conversions, including data width conversion and transaction splitting.

°Optional single ordering mode (per SI and MI). Stores ID values internally instead of

propagating to the slave, resulting in reduced resource utilization.

• “Single-Slave per ID” method of cyclic dependency (deadlock) avoidance.

°For each ID thread issued by a connected master, the SmartConnect allows one or

more outstanding transactions to only one slave device for Writes and one slave

device for Reads, at a time.

• Multiple parallel pathways along all AXI channels when connected to multiple
masters and multiple slaves:

°Each AXI channel has independent destination-side arbitration. Transfers from two

or more source endpoints to separate destination endpoints can occur concurrently,

for any AXI channel.

°Round-robin arbitration for each of the AW, AR, R and B channels. (W-channel

transfers follow the same order as AW-channel arbitration, per AXI protocol rules.)

• Supports back-to-back transfers (100% duty cycle) on any AXI channel:

°Single data-beat transactions can traverse the SmartConnect at the same

bandwidth as multi-beat bursts.

• Supports TrustZone security for each connected slave:

°If configured as a secure address segment, only secure AXI accesses are permitted

according to the AXI arprot or awprot signal.

°Any non-secure accesses are blocked and the AXI SmartConnect core returns a

decerr response to the connected master.


• Internally resynchronized reset:

°One aresetn input per IP.
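The "Single-Slave per ID" rule listed above can be illustrated with a small behavioral model. This is only a sketch, not the SmartConnect implementation: the class name `SingleSlavePerIdTracker`, its methods, and the choice to refuse (rather than queue) a conflicting transaction are assumptions made for illustration. One tracker instance models one direction (Reads or Writes), because the rule applies to each direction separately.

```python
class SingleSlavePerIdTracker:
    """Hypothetical model of the "Single-Slave per ID" rule for one direction."""

    def __init__(self):
        # Maps an ID thread to [target slave, outstanding transaction count].
        self._threads = {}

    def try_issue(self, thread_id, slave):
        """Return True if the transaction may be issued now, else False."""
        entry = self._threads.get(thread_id)
        if entry is None:
            # No outstanding transactions on this ID thread: any slave is allowed.
            self._threads[thread_id] = [slave, 1]
            return True
        if entry[0] == slave:
            # Same slave as the outstanding transactions: allowed.
            entry[1] += 1
            return True
        # Different slave while the thread is still busy: not allowed yet.
        return False

    def complete(self, thread_id):
        """Record that one outstanding transaction on this ID thread finished."""
        entry = self._threads[thread_id]
        entry[1] -= 1
        if entry[1] == 0:
            del self._threads[thread_id]


reads = SingleSlavePerIdTracker()            # one tracker per direction
same_slave_ok = reads.try_issue(3, "S0") and reads.try_issue(3, "S0")
other_slave_blocked = not reads.try_issue(3, "S1")
other_thread_ok = reads.try_issue(4, "S1")   # a different ID thread is independent
```

In this model, ID thread 3 can target slave S1 only after both of its outstanding transactions to S0 have completed, which is what prevents responses from two slaves from arriving out of order on the same thread.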

AXI SmartConnect Core Limitations

These limitations apply to the AXI SmartConnect core:

• SmartConnect unconditionally packs all multi-beat bursts to fill the interface

data-width.

• SmartConnect SI interfaces accept “narrow” bursts, in which the arsize or awsize

signal indicates data units which are smaller than the interface data-width. But such

bursts are always propagated through the SmartConnect and its MI interfaces fully

packed. The “modifiable bit” of the AXI arcache or awcache signal does not prevent

packing.

• SmartConnect converts all WRAP type bursts into INCR type. SmartConnect SI

interfaces accept all protocol-compliant WRAP bursts, beginning at any target address.

But such bursts are always converted to a single INCR burst beginning at the “wrap

address”. This may increase response latency of unaligned read wrap bursts.

• SmartConnect does not support FIXED type bursts. Any FIXED burst transaction

received at the SmartConnect SI is blocked and a DECERR response is returned to the

master.

• SmartConnect does not propagate original ID values from endpoint masters. IDs

received at an SI interface are re-mapped to a smaller (or equal) number of bits for

more resource-efficient management of multi-threaded traffic.

• SmartConnect appends ID bits to differentiate among multiple masters, when

propagating transactions to the MI. Values of master identification bits are assigned by

IP Integrator and cannot be controlled or predicted by the user.

• The AXI SmartConnect core does not support discontinued AXI3 features:

°Atomic locked transactions. This feature was retracted by AXI4 protocol. A locked

transaction is changed to a non-locked transaction and propagated by the MI.

°Write interleaving. This feature was retracted by AXI4 protocol. AXI3 master

devices must be configured as if connected to a slave with a Write interleaving

depth of one.

• All arbitration on all AXI channels is round-robin. SmartConnect does not support fixed

priority arbitration.

• AXI4 Quality of Service (arqos and awqos) signals do not influence arbitration

priority. QoS signals are propagated from SI to MI.

• SmartConnect neither propagates nor generates the AXI4 arregion or awregion

signal.


• SmartConnect does not support independent reset domains. If any master or slave

device connected to SmartConnect is reset, then all connected devices must be reset

concurrently.

• AXI SmartConnect core does not support low-power mode or propagate the AXI C

channel signals.

• AXI SmartConnect core does not time out if the destination of any AXI channel

transfer stalls indefinitely. All connected AXI slaves must respond to all received

transactions, as required by AXI protocol.

• AXI SmartConnect provides no address remapping. AXI SmartConnect core does not

include conversion or bridging to non-AXI protocols, such as APB.
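The WRAP-to-INCR conversion listed among the limitations above can be made concrete with a short address calculation. This is a sketch under one assumption: that the "wrap address" is the wrap boundary defined by the AXI protocol (the start address rounded down to a multiple of the total burst size). The function names are hypothetical.

```python
def wrap_boundary(start_addr, bytes_per_beat, burst_len):
    """Lowest address of a WRAP burst, per the AXI wrap-boundary formula."""
    if burst_len not in (2, 4, 8, 16):
        raise ValueError("AXI WRAP bursts must be 2, 4, 8, or 16 beats long")
    if start_addr % bytes_per_beat:
        raise ValueError("AXI WRAP bursts must start at a size-aligned address")
    total_bytes = bytes_per_beat * burst_len
    return (start_addr // total_bytes) * total_bytes


def wrap_beat_addresses(start_addr, bytes_per_beat, burst_len):
    """Beat addresses in the order the master requested them (WRAP)."""
    low = wrap_boundary(start_addr, bytes_per_beat, burst_len)
    total_bytes = bytes_per_beat * burst_len
    return [low + (start_addr - low + i * bytes_per_beat) % total_bytes
            for i in range(burst_len)]


def incr_beat_addresses(start_addr, bytes_per_beat, burst_len):
    """Beat addresses of an INCR burst."""
    return [start_addr + i * bytes_per_beat for i in range(burst_len)]


# A 4-beat WRAP burst of 4-byte transfers that starts at 0x34.
requested = wrap_beat_addresses(0x34, 4, 4)
converted = incr_beat_addresses(wrap_boundary(0x34, 4, 4), 4, 4)
```

Here `requested` is `[0x34, 0x38, 0x3C, 0x30]` and `converted` is `[0x30, 0x34, 0x38, 0x3C]`. The same four words are covered, but the word the master asked for first (0x34) is the second beat of the converted burst, which is one plausible reason for the added read latency noted above.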

AXI4-Stream Interconnect Core IP

The AXI4-Stream Interconnect core IP (axis_interconnect) connects one or more

AXI4-Stream master devices to one or more stream slave devices. The AXI interfaces

conform to the ARM® AMBA® AXI4-Stream Product Guide (PG035) [Ref 9].

Note: The AXI4-Stream Interconnect core IP is intended for AXI4-Stream transfers only; AXI

memory-mapped transfers are not applicable.

The AXI4-Stream Interconnect IP is available in IP integrator and as standalone IP:

• The Infrastructure version for IP integrator has enhanced features, greater ease of use,

and automation services. See the AXI4-Stream Infrastructure IP Suite: Product Guide for

Vivado Design Suite (PG035) [Ref 9] for more information.

• AXI4-Stream Interconnect is also available standalone directly from the IP catalog for

use in an RTL design. See the AXI4-Stream Interconnect IP Product Guide (PG085)

[Ref 14] for more information. The following information is extracted from the

AXI4-Stream Interconnect IP Product Guide.

AXI4-Stream Interconnect Core Features

The AXI4-Stream Interconnect IP contains the following features:

• AXI4-Stream compliant:

°Supports all AXI4-Stream defined signals: TVALID, TREADY, TDATA, TSTRB, TKEEP,

TLAST, TID, TDEST, and TUSER.

- TDATA, TSTRB, TKEEP, TLAST, TID, TDEST, and TUSER are optional.

- Programmable TDATA, TID, TDEST, and TUSER widths (TSTRB and TKEEP width

is TDATA width/8).

°Per port ACLK/ARESETn inputs (supports clock domain crossing).

°Per port ACLKEN inputs (optional).


• Core switch:

°1-16 masters.

°1-16 slaves.

°Full slave-side arbitrated crossbar switch.

°Slave input to master output routing based on TDEST value decoding and

comparison against base and high value range settings.

°Round-Robin and Priority arbitration.

- Arbitration suppress capability to prevent head-of-line blocking.

- Native switch data width 8, 16, 24, 32, 48, ... 4096 bits (any byte width up to 512

bytes).

- Arbitration tuning parameters to arbitrate on TLAST boundaries, after a set

number of transfers, and/or after a certain number of idle clock cycles.

°Optional pipeline stages after internal TDEST decoder and arbiter functional blocks.

°Programmable connectivity map to specify full or sparse crossbar connectivity.

• Built-in data width conversion:

°Each master and slave connection can independently use data widths of 8, 16, 24,

32, 48, ... 4096 bits (any byte width up to 512 bytes).

• Built-in clock-rate conversion:

°Each master and slave connection can use independent clock rates.

°Synchronous integer-ratio (N:1 and 1:N) conversion to the internal crossbar native

clock rate.

°Asynchronous clock conversion (uses more storage and incurs more latency than

synchronous conversion).

• Optional register-slice pipelining:

°Available on each AXI4-Stream channel connecting to each master and slave device.

°Facilitates timing closure by trading off latency for frequency.

°One latency cycle per register-slice, with no loss in data throughput in the register

slice under all AXI4-Stream handshake conditions.

• Optional data path FIFO buffering:

°Available on data paths connecting to each master and each slave.

°FIFO depths of 16, 32, 64, 128, and so on up to 32768 (16-deep and 32-deep FIFOs

are LUT-RAM based; deeper FIFOs are block RAM based).

°Normal and Packet FIFO modes. (Packet FIFO mode, also known as

store-and-forward, stores a packet and releases it downstream only after a

TLAST packet boundary is detected.)


°FIFO data count outputs to report FIFO occupancy.

• Additional error flags to detect conditions such as TDEST decode error, sparse TKEEP

removal, and packer error.
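The TDEST-based routing listed under the core switch features (decoding against base and high value range settings) can be sketched as a simple range lookup. This is an illustrative model only: the names `TdestRange` and `route_tdest`, the inclusive bounds, the first-match rule, and the use of `None` to stand for a TDEST decode error are all assumptions, not details taken from the product guide.

```python
from typing import NamedTuple, Optional, Sequence


class TdestRange(NamedTuple):
    """Hypothetical per-output routing entry: inclusive TDEST bounds."""
    base: int
    high: int


def route_tdest(tdest: int, ranges: Sequence[TdestRange]) -> Optional[int]:
    """Return the index of the first output whose range contains tdest.

    Returns None when no range matches, which models a decode error.
    """
    for port, entry in enumerate(ranges):
        if entry.base <= tdest <= entry.high:
            return port
    return None


# Two outputs: output 0 takes TDEST 0-3, output 1 takes TDEST 4-7.
routing_table = [TdestRange(0, 3), TdestRange(4, 7)]
hit = route_tdest(5, routing_table)    # routed to output 1
miss = route_tdest(9, routing_table)   # no matching range
```

A transfer with TDEST 5 is routed to output 1, while TDEST 9 matches no range and would correspond to the decode error condition that the core can flag.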

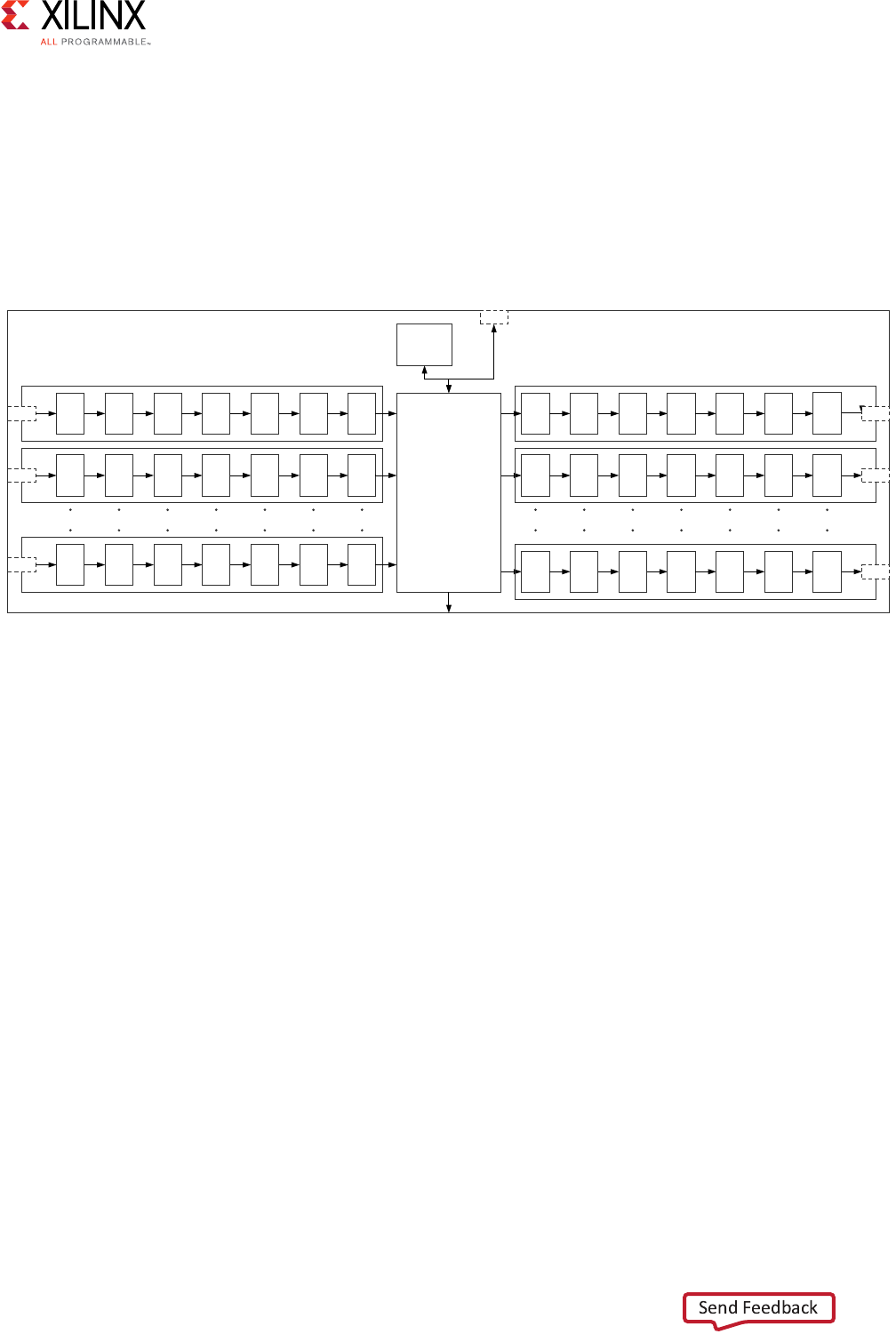

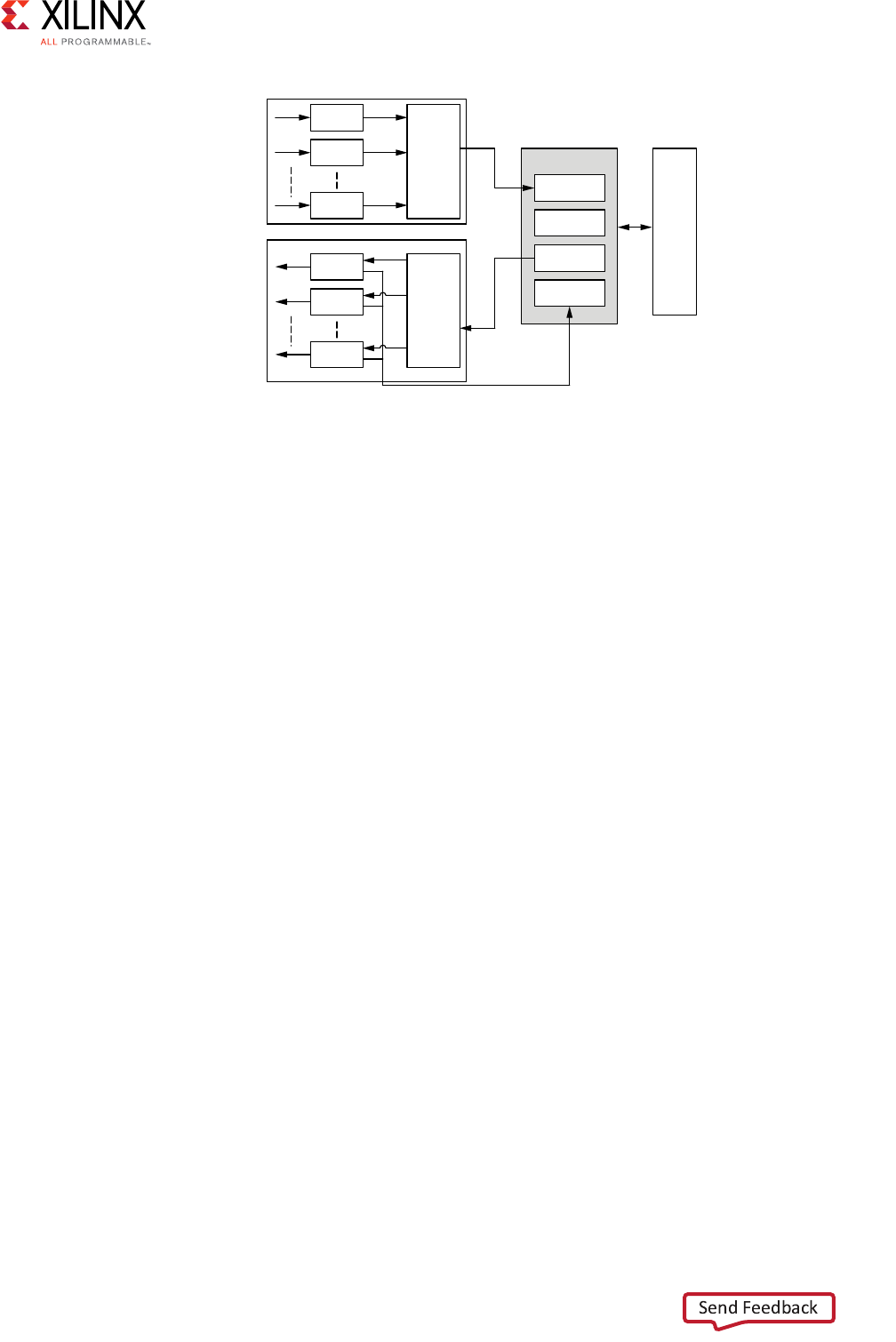

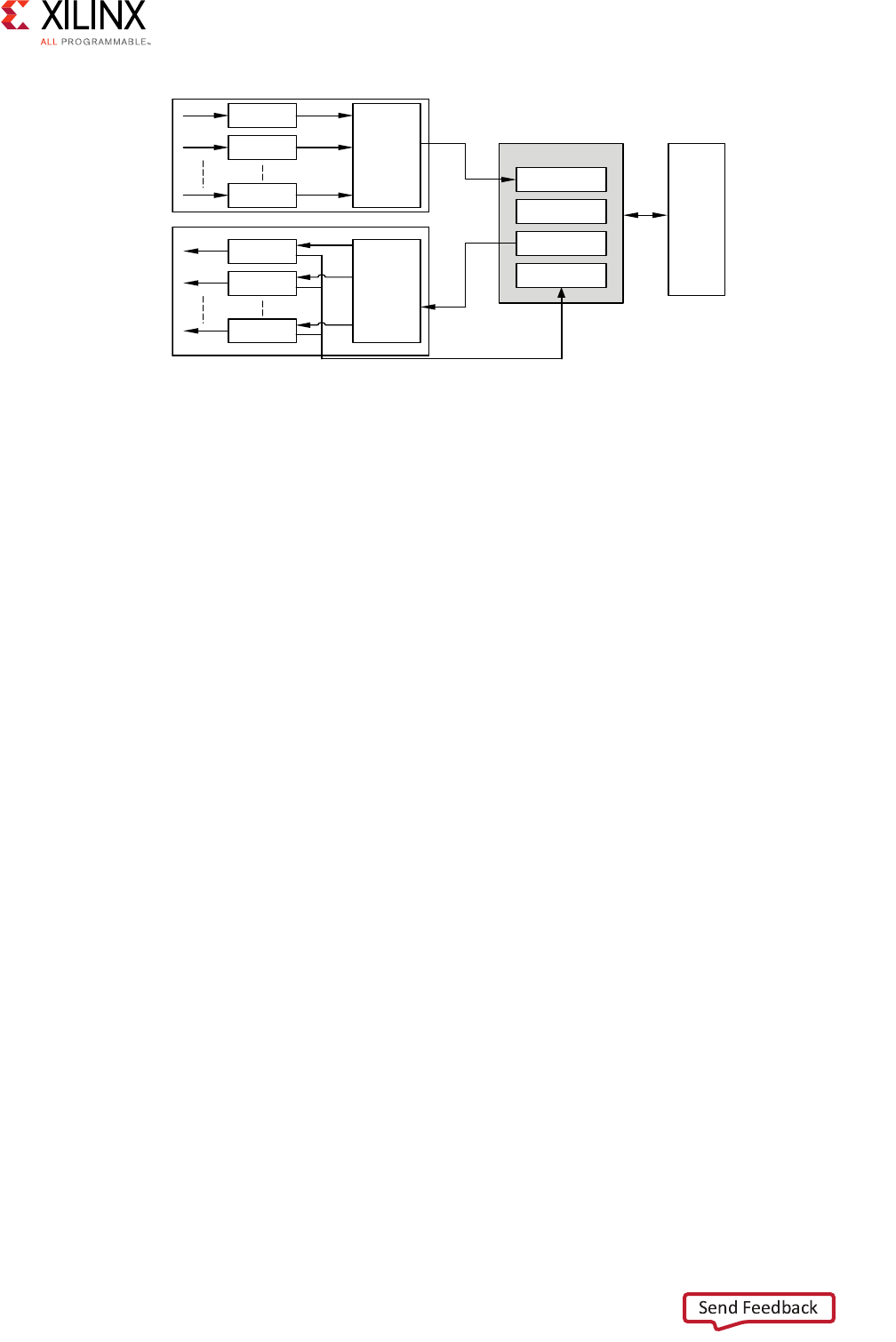

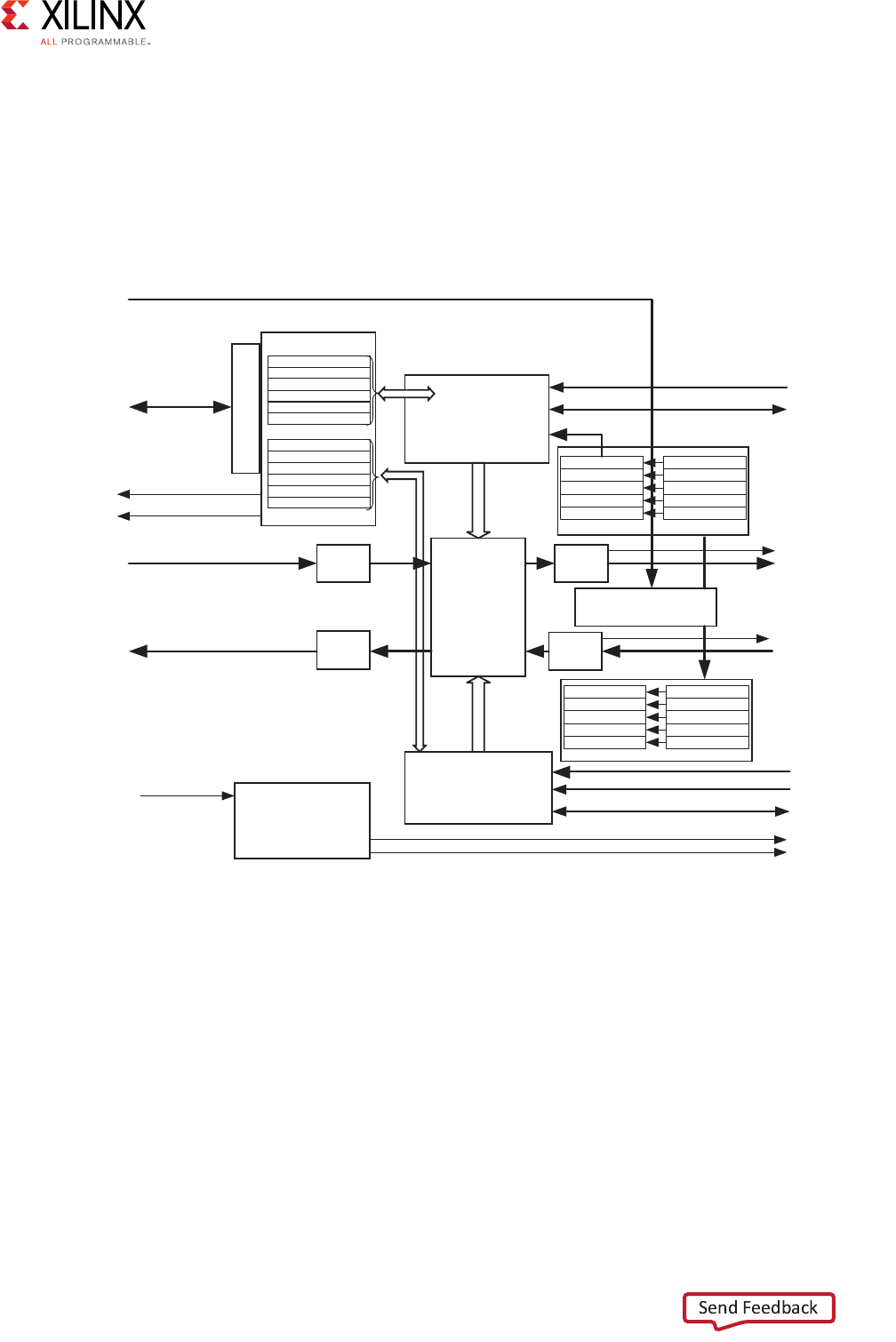

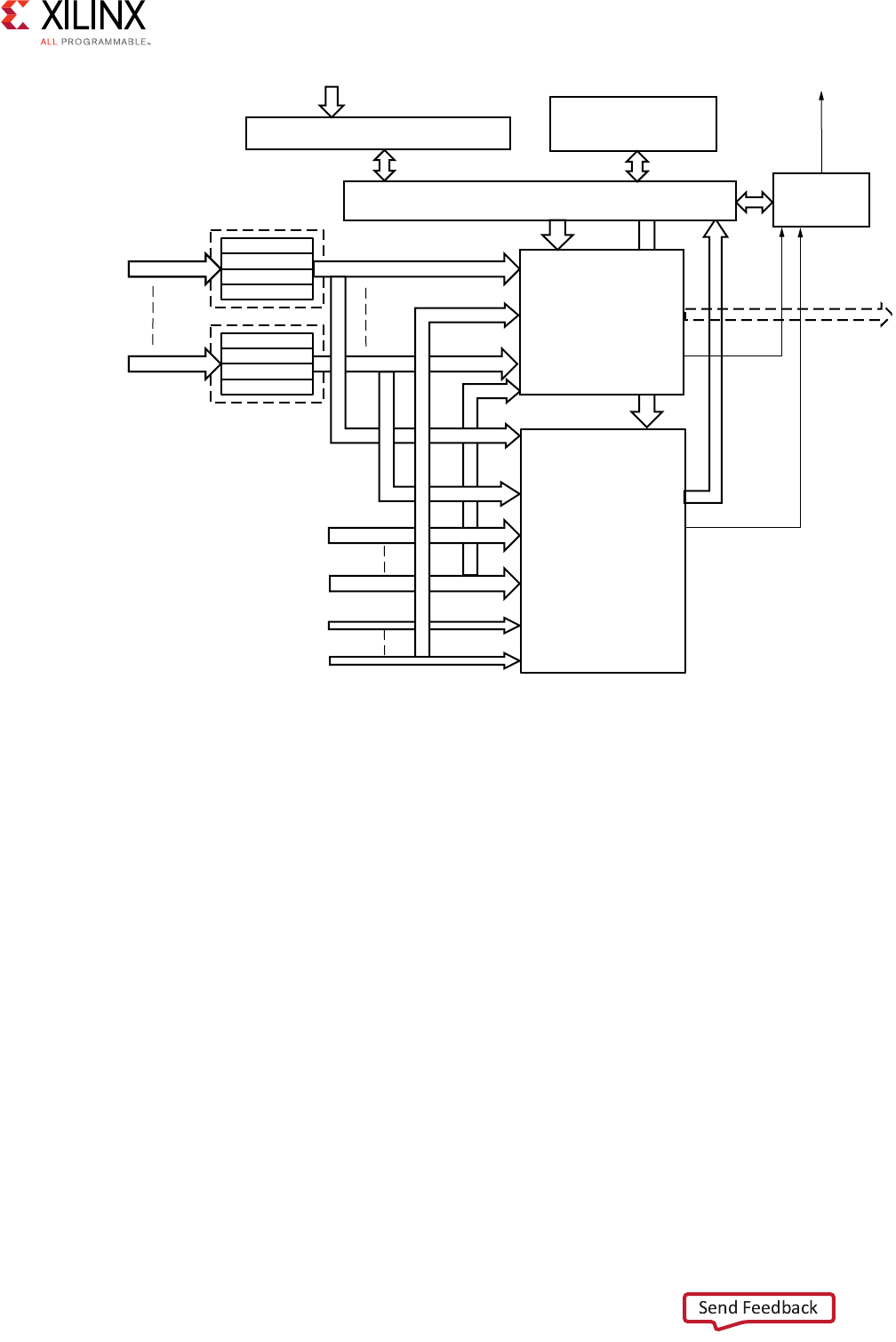

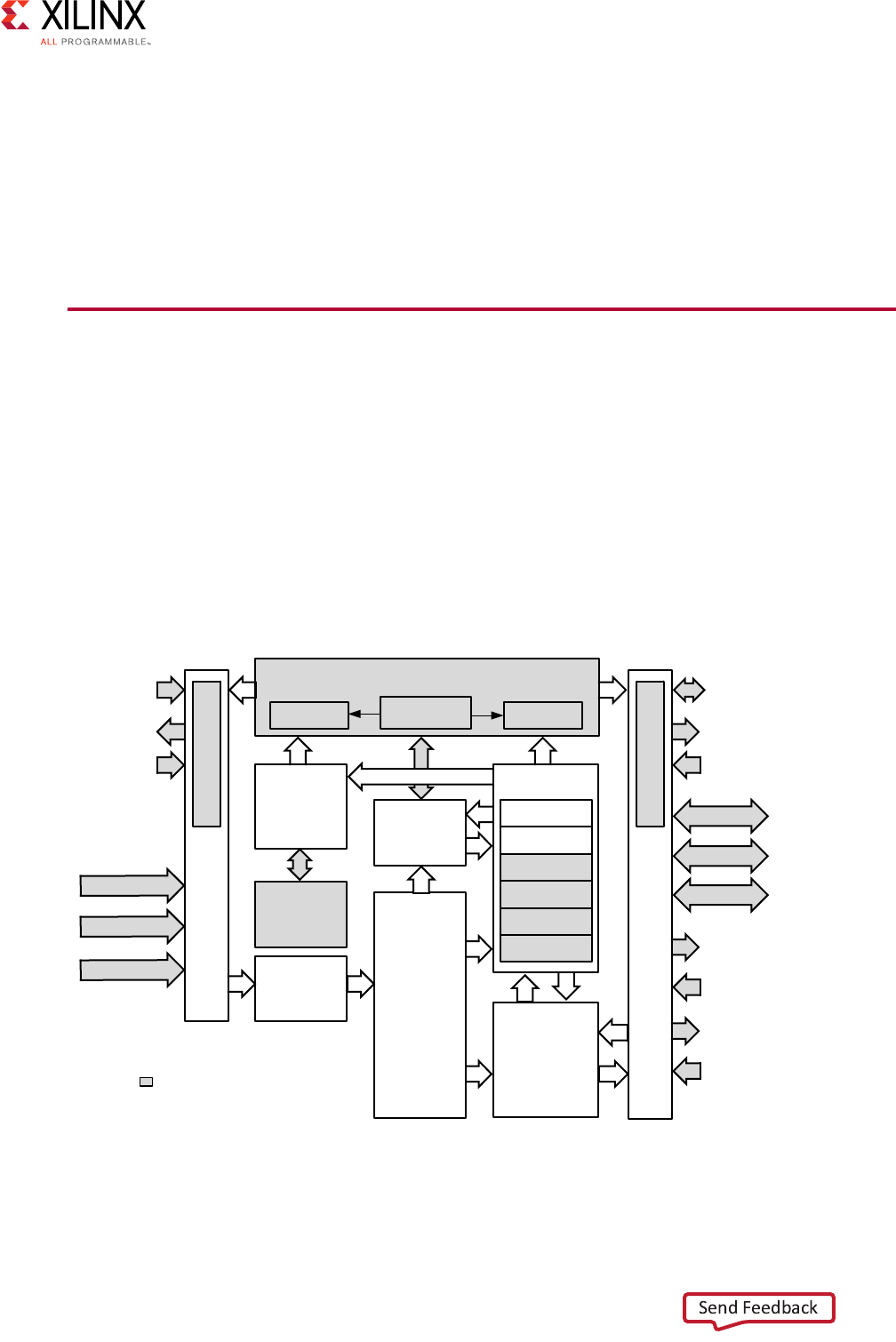

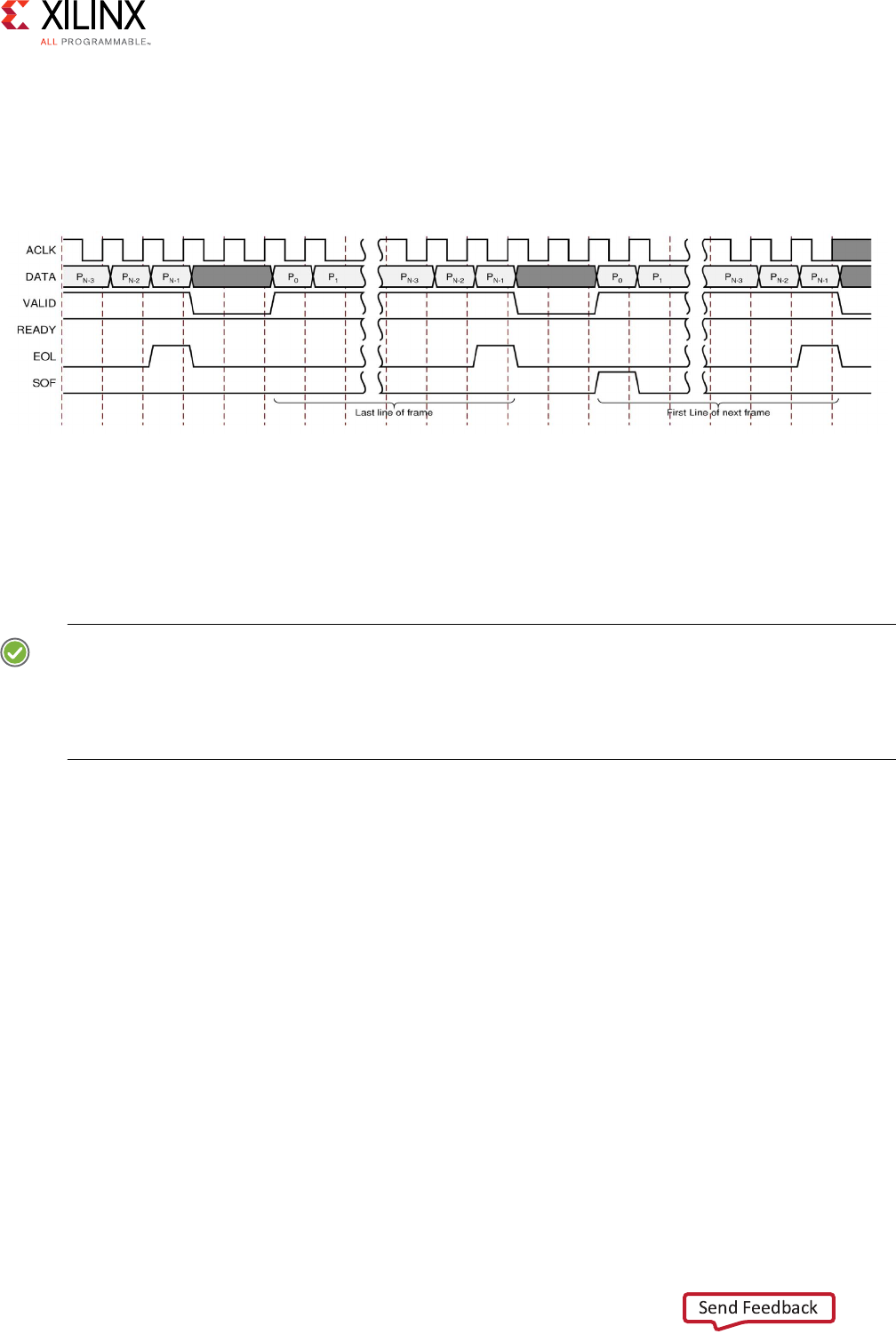

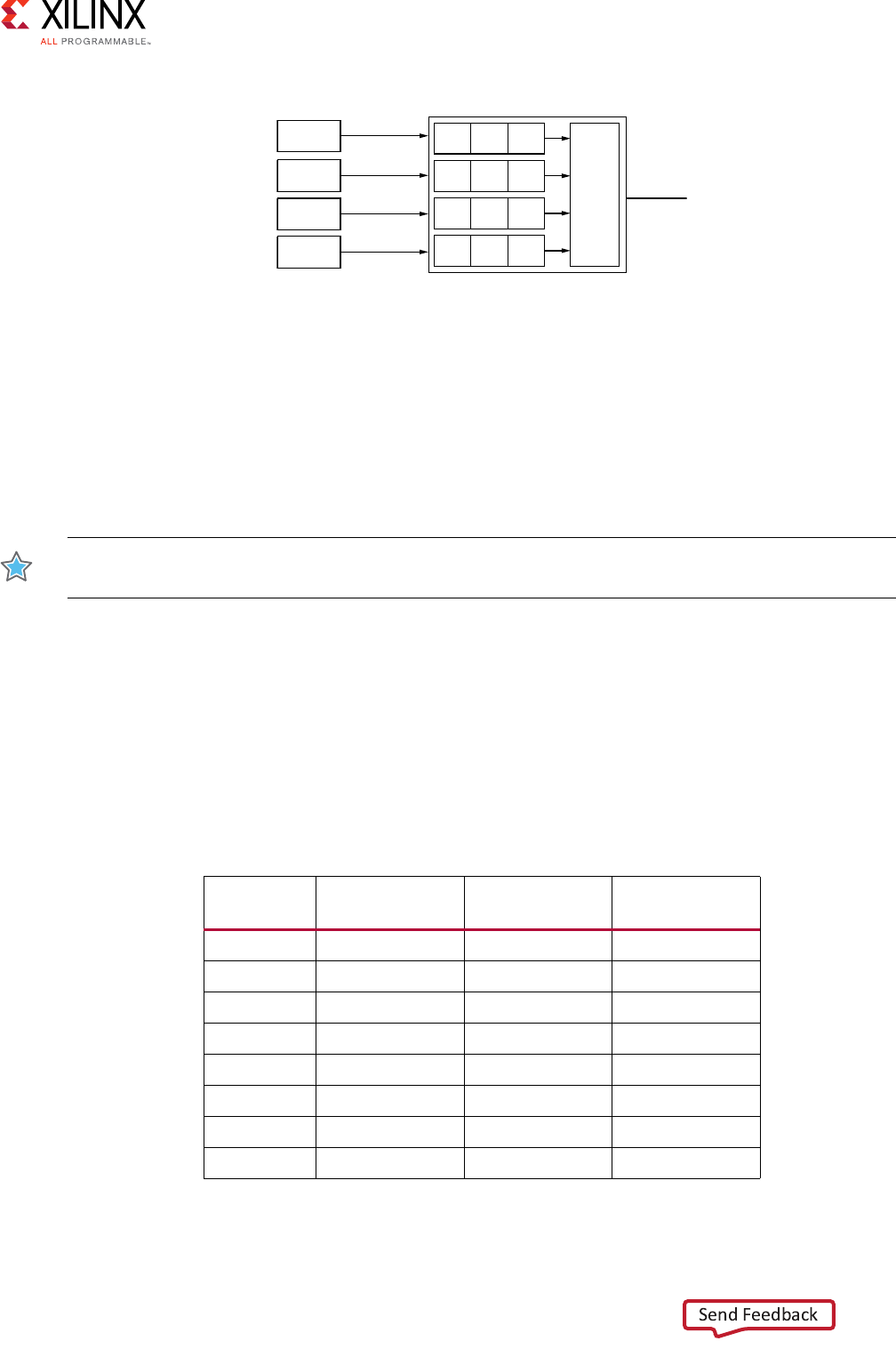

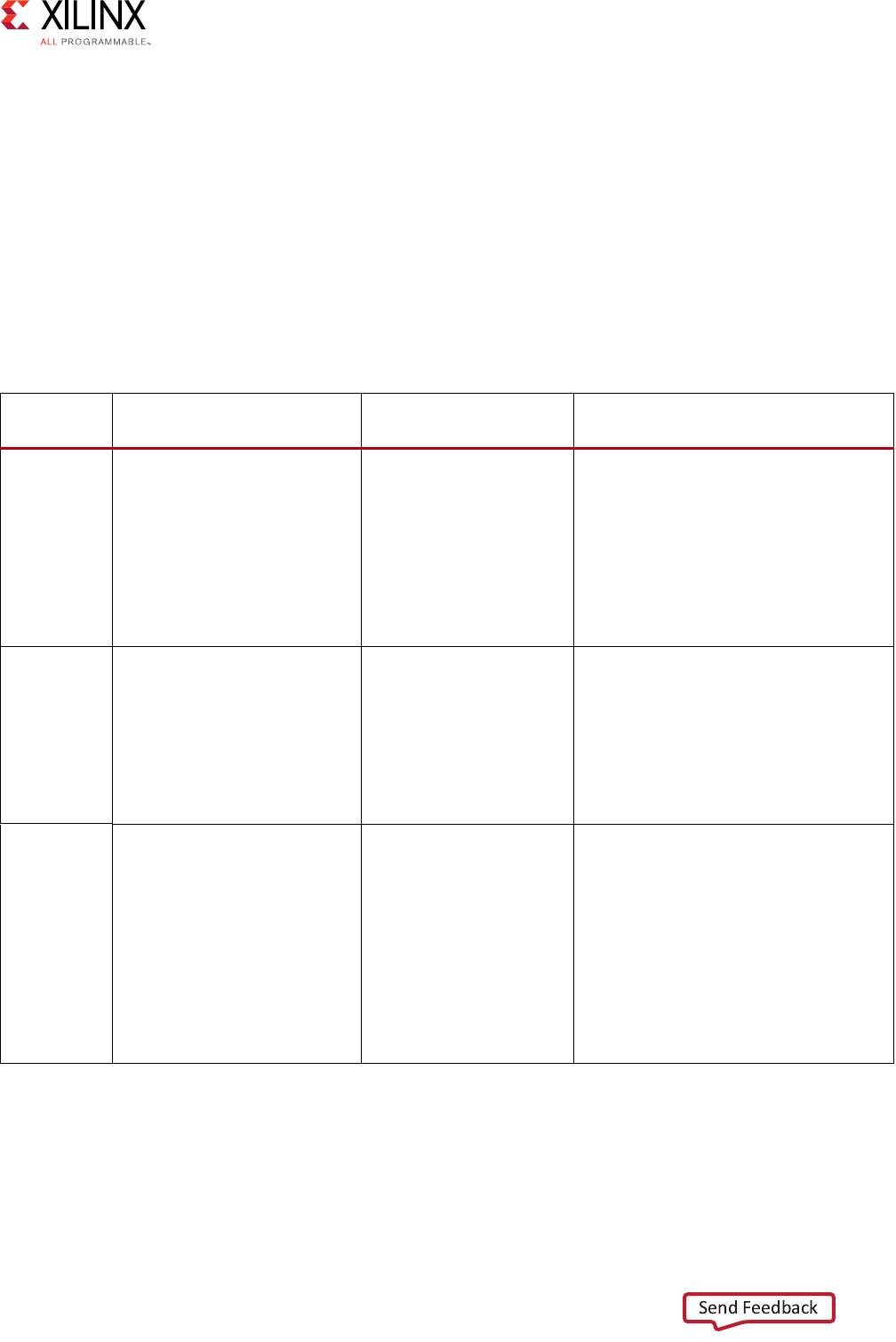

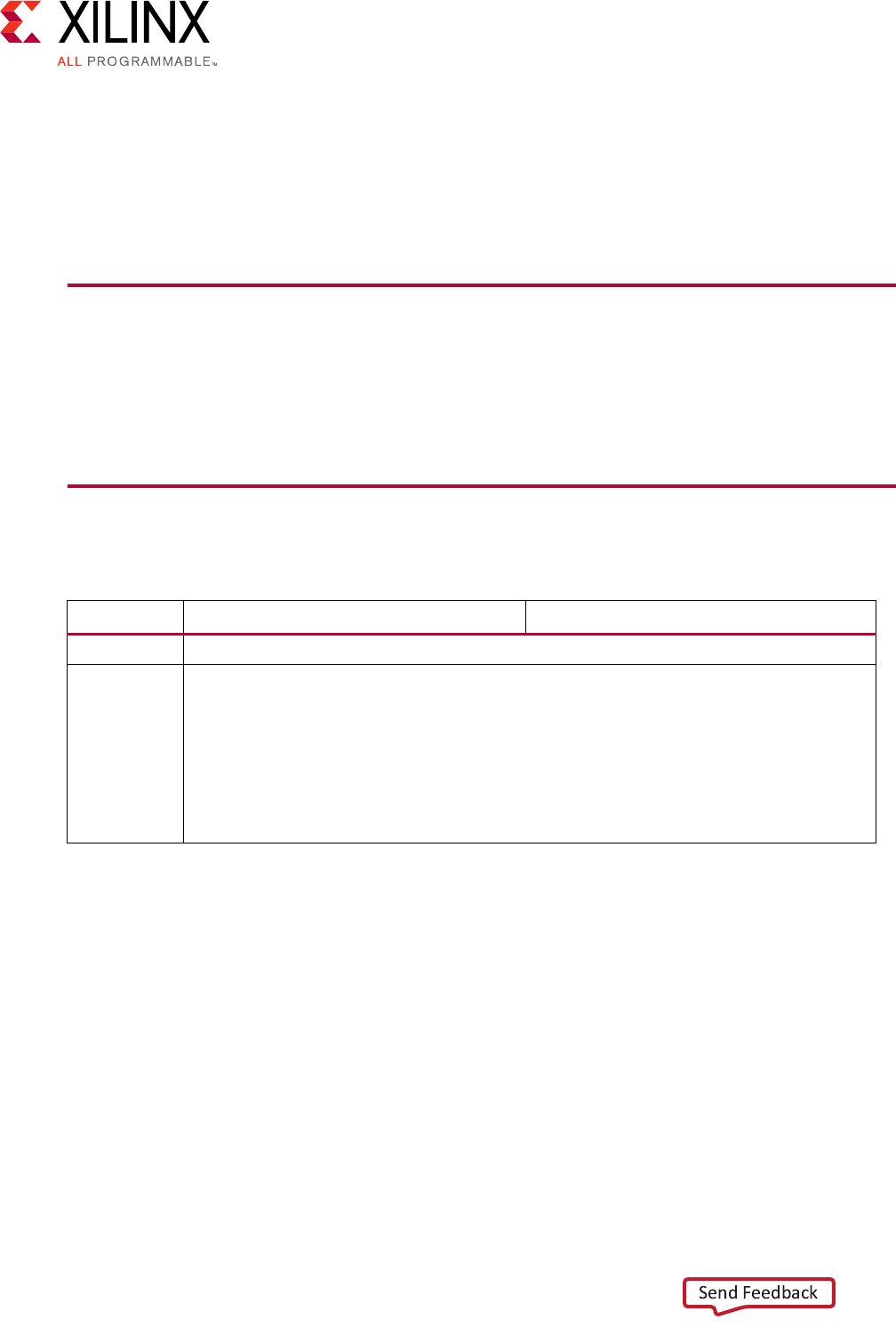

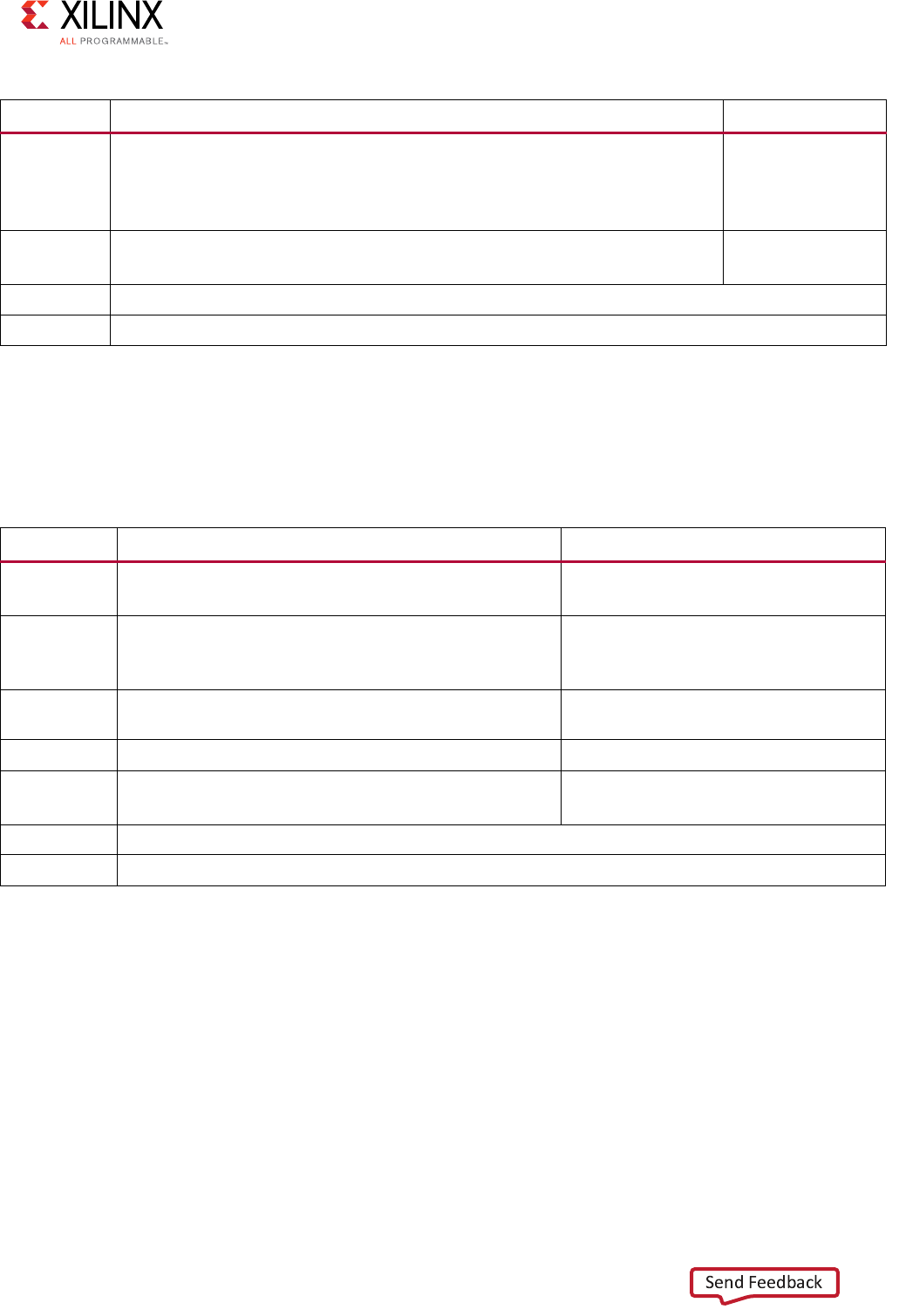

AXI4-Stream Interconnect Core Diagram

The following figure illustrates a top-level AXI4-Stream Interconnect architecture.

The AXI4-Stream Interconnect core consists of the SI, the MI, and the functional units that

include the AXI channel pathways between them.

• The SI accepts transaction requests from connected master devices.

• The MI issues transactions to slave devices.

• At the center is the switch that arbitrates and routes traffic between the various devices

connected to the SI and MI.

The AXI4-Stream Interconnect core also includes other functional units located between the

switch and each of the SI and MI interfaces that optionally perform various conversion and

storage functions. The switch effectively splits the AXI4-Stream Interconnect core down the

middle between the SI-related functional units (SI hemisphere) and the MI-related units

(MI hemisphere). This architecture is similar to that of the AXI Interconnect.

AXI4-Stream Interconnect Core Use Models

The AXI4-Stream Interconnect IP core connects one or more AXI4-Stream master devices to

one or more AXI4-Stream slave devices. The following subsections describe the possible use

cases:

• Streaming data routing and switching

• Stream multiplexing and de-multiplexing

Figure 3-6: Top-Level AXI4-Stream Interconnect Architecture

[Figure: block diagram of the AXIS Interconnect. Labeled blocks: SI, MI, AXIS Switch, Fixed Priority / Round Robin Arbiter, DECERR, AXIS Register Slice, AXIS Upsizer, AXIS Clock Conv, AXIS Downsizer, AXIS FIFO, AXIS Packer, and AXIS Subset Conv.]
